Neural Network vs Traditional Algorithms: Processing Speed

FEB 27, 20269 MIN READ

Generate Your Research Report Instantly with AI Agent

Patsnap Eureka helps you evaluate technical feasibility & market potential.

Neural Network vs Traditional Algorithm Speed Background

The evolution of computational algorithms has undergone a dramatic transformation over the past several decades, fundamentally reshaping how we approach complex problem-solving across diverse industries. Traditional algorithms, rooted in classical computer science principles, dominated the computational landscape for over half a century, providing deterministic and well-understood solutions to a wide range of mathematical and logical problems.

Traditional algorithms emerged from the foundational work of pioneers like Alan Turing and John von Neumann in the 1940s and 1950s. These algorithms, including sorting methods, search techniques, and optimization procedures, were designed with explicit programming logic and clear computational pathways. Their development followed a linear progression, with each algorithm carefully crafted to solve specific problem types through predetermined steps and decision trees.

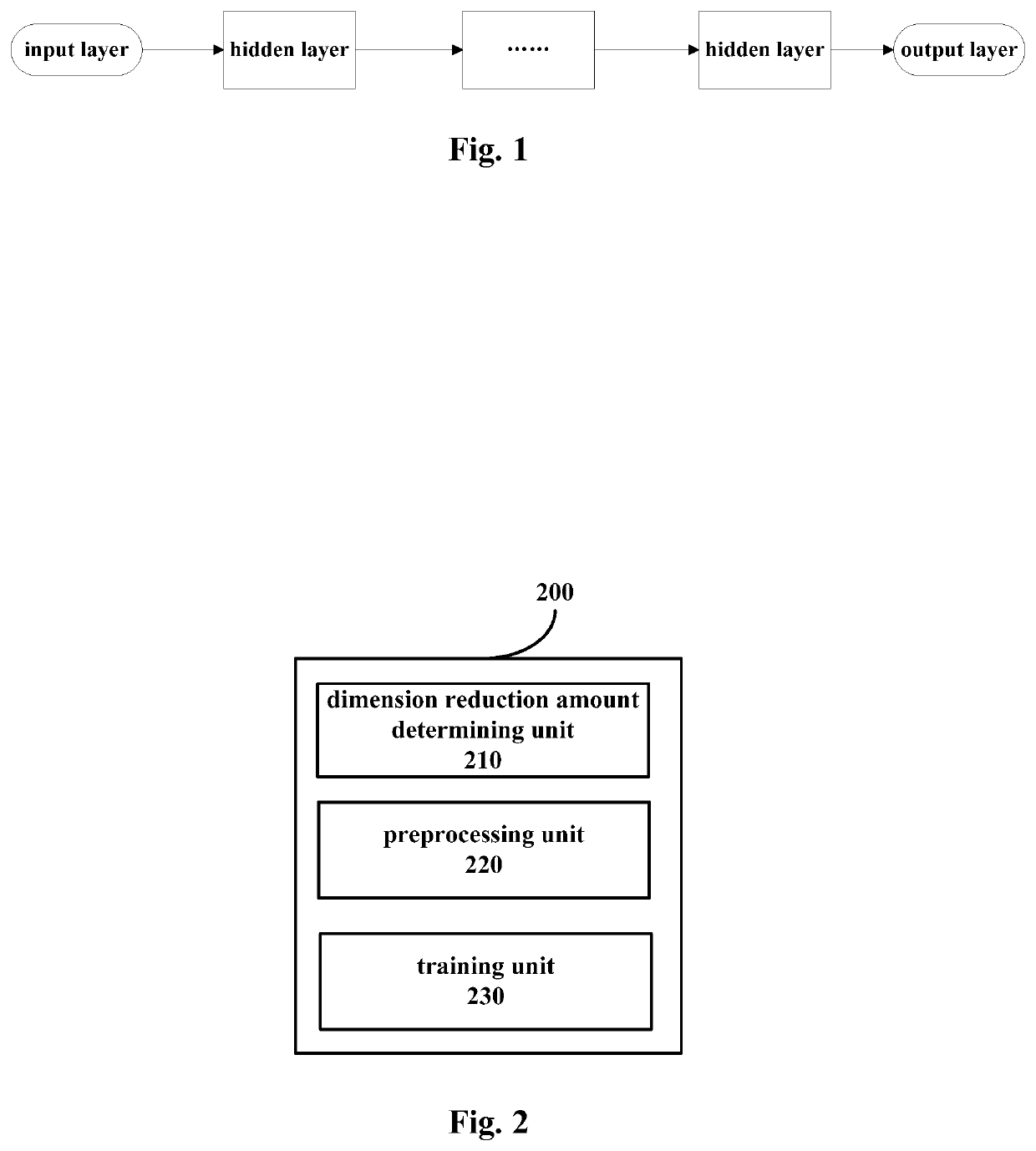

The advent of neural networks marked a paradigmatic shift in computational thinking. Inspired by biological neural systems, these algorithms introduced a fundamentally different approach to information processing. Unlike traditional algorithms that follow explicit instructions, neural networks learn patterns through iterative training processes, adjusting internal parameters to minimize error rates across large datasets.

Processing speed has emerged as a critical differentiator between these two computational paradigms. Traditional algorithms typically exhibit predictable performance characteristics, with execution times that can be mathematically analyzed and optimized through algorithmic complexity theory. Their speed advantages often manifest in scenarios requiring precise logical operations, mathematical calculations, and structured data manipulation.

Neural networks present a more complex speed profile. While individual inference operations may appear slower due to matrix multiplications and activation functions, their parallel processing capabilities and pattern recognition efficiency can dramatically outperform traditional methods in specific domains. The introduction of specialized hardware like GPUs and TPUs has further accelerated neural network processing, creating new performance benchmarks.

The speed comparison between these approaches has become increasingly relevant as organizations seek to optimize computational resources while maintaining accuracy and reliability. Understanding the historical context and technological evolution of both paradigms provides essential foundation for evaluating their respective strengths in contemporary applications.

Traditional algorithms emerged from the foundational work of pioneers like Alan Turing and John von Neumann in the 1940s and 1950s. These algorithms, including sorting methods, search techniques, and optimization procedures, were designed with explicit programming logic and clear computational pathways. Their development followed a linear progression, with each algorithm carefully crafted to solve specific problem types through predetermined steps and decision trees.

The advent of neural networks marked a paradigmatic shift in computational thinking. Inspired by biological neural systems, these algorithms introduced a fundamentally different approach to information processing. Unlike traditional algorithms that follow explicit instructions, neural networks learn patterns through iterative training processes, adjusting internal parameters to minimize error rates across large datasets.

Processing speed has emerged as a critical differentiator between these two computational paradigms. Traditional algorithms typically exhibit predictable performance characteristics, with execution times that can be mathematically analyzed and optimized through algorithmic complexity theory. Their speed advantages often manifest in scenarios requiring precise logical operations, mathematical calculations, and structured data manipulation.

Neural networks present a more complex speed profile. While individual inference operations may appear slower due to matrix multiplications and activation functions, their parallel processing capabilities and pattern recognition efficiency can dramatically outperform traditional methods in specific domains. The introduction of specialized hardware like GPUs and TPUs has further accelerated neural network processing, creating new performance benchmarks.

The speed comparison between these approaches has become increasingly relevant as organizations seek to optimize computational resources while maintaining accuracy and reliability. Understanding the historical context and technological evolution of both paradigms provides essential foundation for evaluating their respective strengths in contemporary applications.

Market Demand for High-Speed Processing Solutions

The global demand for high-speed processing solutions has experienced unprecedented growth across multiple industries, driven by the exponential increase in data generation and the need for real-time decision-making capabilities. Financial services, autonomous vehicles, healthcare diagnostics, and telecommunications represent the primary sectors fueling this demand surge. These industries require processing systems capable of handling massive datasets while maintaining microsecond-level response times.

Enterprise applications increasingly demand sophisticated processing capabilities that can adapt to varying computational workloads. Traditional rule-based systems continue to dominate scenarios requiring deterministic outcomes and regulatory compliance, particularly in banking fraud detection and industrial control systems. However, the market shows growing preference for hybrid approaches that combine the reliability of conventional algorithms with the adaptive capabilities of neural networks.

Cloud computing and edge computing architectures have fundamentally transformed processing requirements, creating distinct market segments with different performance expectations. Edge applications prioritize low-latency processing for IoT devices and mobile applications, while cloud-based solutions focus on scalable throughput for batch processing and training operations. This bifurcation has generated specialized demand patterns for different algorithmic approaches.

The artificial intelligence revolution has created substantial market pressure for processing solutions that can efficiently execute both training and inference workloads. Organizations seek systems capable of seamlessly transitioning between neural network computations and traditional algorithmic processing without performance degradation. This requirement has sparked innovation in hybrid processing architectures and specialized hardware accelerators.

Market research indicates strong growth trajectories in sectors requiring real-time analytics, including autonomous systems, high-frequency trading, and predictive maintenance applications. These applications demand processing solutions that can deliver consistent performance under varying computational loads while maintaining energy efficiency constraints.

The competitive landscape reflects increasing investment in processing optimization technologies, with organizations prioritizing solutions that offer measurable performance improvements over existing implementations. Market adoption patterns suggest preference for processing frameworks that provide flexibility in algorithm selection based on specific use case requirements rather than monolithic approaches.

Enterprise applications increasingly demand sophisticated processing capabilities that can adapt to varying computational workloads. Traditional rule-based systems continue to dominate scenarios requiring deterministic outcomes and regulatory compliance, particularly in banking fraud detection and industrial control systems. However, the market shows growing preference for hybrid approaches that combine the reliability of conventional algorithms with the adaptive capabilities of neural networks.

Cloud computing and edge computing architectures have fundamentally transformed processing requirements, creating distinct market segments with different performance expectations. Edge applications prioritize low-latency processing for IoT devices and mobile applications, while cloud-based solutions focus on scalable throughput for batch processing and training operations. This bifurcation has generated specialized demand patterns for different algorithmic approaches.

The artificial intelligence revolution has created substantial market pressure for processing solutions that can efficiently execute both training and inference workloads. Organizations seek systems capable of seamlessly transitioning between neural network computations and traditional algorithmic processing without performance degradation. This requirement has sparked innovation in hybrid processing architectures and specialized hardware accelerators.

Market research indicates strong growth trajectories in sectors requiring real-time analytics, including autonomous systems, high-frequency trading, and predictive maintenance applications. These applications demand processing solutions that can deliver consistent performance under varying computational loads while maintaining energy efficiency constraints.

The competitive landscape reflects increasing investment in processing optimization technologies, with organizations prioritizing solutions that offer measurable performance improvements over existing implementations. Market adoption patterns suggest preference for processing frameworks that provide flexibility in algorithm selection based on specific use case requirements rather than monolithic approaches.

Current Processing Speed Challenges and Bottlenecks

The processing speed comparison between neural networks and traditional algorithms reveals several critical bottlenecks that significantly impact computational efficiency across different application domains. These challenges stem from fundamental architectural differences and computational requirements that each approach demands.

Neural networks face substantial computational overhead during both training and inference phases. The forward propagation process requires extensive matrix multiplications across multiple layers, with each neuron performing weighted sum calculations followed by activation function evaluations. This becomes particularly pronounced in deep architectures where hundreds or thousands of layers must process data sequentially. The backpropagation algorithm further compounds these challenges by requiring gradient calculations through the entire network structure, creating memory bandwidth limitations and computational bottlenecks.

Memory access patterns represent another significant constraint for neural network implementations. The irregular memory access patterns during weight updates and the need to store intermediate activations create cache misses and memory latency issues. Large model architectures often exceed available GPU memory, necessitating complex memory management strategies that introduce additional overhead and reduce overall throughput.

Traditional algorithms encounter different but equally challenging bottlenecks. Iterative optimization methods suffer from convergence speed limitations, particularly when dealing with high-dimensional problems or poorly conditioned datasets. Sequential processing requirements in many classical algorithms prevent effective parallelization, creating fundamental scalability constraints that become apparent when processing large datasets.

Data preprocessing and feature engineering stages in traditional approaches introduce significant computational overhead. Complex feature extraction pipelines, normalization procedures, and dimensionality reduction techniques can consume substantial processing time before the core algorithm execution begins. These preprocessing bottlenecks often dominate the total execution time, particularly in real-time applications.

Hardware utilization efficiency varies dramatically between approaches. Neural networks can leverage parallel processing capabilities of modern GPUs effectively, but traditional algorithms often struggle to fully utilize available computational resources due to their sequential nature. This hardware-software mismatch creates performance gaps that become more pronounced as parallel processing capabilities continue to advance.

The scalability bottleneck emerges when processing requirements exceed available computational resources. Neural networks face exponential growth in computational complexity with model size increases, while traditional algorithms often encounter polynomial or linear scaling challenges that become prohibitive for large-scale applications.

Neural networks face substantial computational overhead during both training and inference phases. The forward propagation process requires extensive matrix multiplications across multiple layers, with each neuron performing weighted sum calculations followed by activation function evaluations. This becomes particularly pronounced in deep architectures where hundreds or thousands of layers must process data sequentially. The backpropagation algorithm further compounds these challenges by requiring gradient calculations through the entire network structure, creating memory bandwidth limitations and computational bottlenecks.

Memory access patterns represent another significant constraint for neural network implementations. The irregular memory access patterns during weight updates and the need to store intermediate activations create cache misses and memory latency issues. Large model architectures often exceed available GPU memory, necessitating complex memory management strategies that introduce additional overhead and reduce overall throughput.

Traditional algorithms encounter different but equally challenging bottlenecks. Iterative optimization methods suffer from convergence speed limitations, particularly when dealing with high-dimensional problems or poorly conditioned datasets. Sequential processing requirements in many classical algorithms prevent effective parallelization, creating fundamental scalability constraints that become apparent when processing large datasets.

Data preprocessing and feature engineering stages in traditional approaches introduce significant computational overhead. Complex feature extraction pipelines, normalization procedures, and dimensionality reduction techniques can consume substantial processing time before the core algorithm execution begins. These preprocessing bottlenecks often dominate the total execution time, particularly in real-time applications.

Hardware utilization efficiency varies dramatically between approaches. Neural networks can leverage parallel processing capabilities of modern GPUs effectively, but traditional algorithms often struggle to fully utilize available computational resources due to their sequential nature. This hardware-software mismatch creates performance gaps that become more pronounced as parallel processing capabilities continue to advance.

The scalability bottleneck emerges when processing requirements exceed available computational resources. Neural networks face exponential growth in computational complexity with model size increases, while traditional algorithms often encounter polynomial or linear scaling challenges that become prohibitive for large-scale applications.

Existing Speed Optimization Solutions and Frameworks

01 Hardware acceleration architectures for neural networks

Specialized hardware architectures designed to accelerate neural network computations through dedicated processing units, optimized data paths, and parallel processing capabilities. These architectures include custom chips, accelerators, and processing elements specifically designed to handle the computational demands of neural network operations more efficiently than general-purpose processors.- Hardware acceleration architectures for neural networks: Specialized hardware architectures designed to accelerate neural network computations through dedicated processing units, optimized data paths, and parallel processing capabilities. These architectures include custom chips, accelerators, and processing elements specifically designed to handle the computational demands of neural network operations more efficiently than general-purpose processors.

- Memory optimization and data management techniques: Methods for improving neural network processing speed through efficient memory access patterns, data caching strategies, and bandwidth optimization. These techniques focus on reducing memory bottleneck issues by implementing smart data prefetching, compression, and storage hierarchies that minimize latency during neural network inference and training operations.

- Parallel processing and distributed computing methods: Approaches that leverage multiple processing units or distributed systems to execute neural network operations concurrently. These methods include techniques for partitioning neural network layers, distributing workloads across multiple processors, and synchronizing results to achieve faster overall processing times through parallelization.

- Neural network model optimization and compression: Techniques for reducing the computational complexity of neural networks through model pruning, quantization, and architecture optimization. These methods aim to decrease the number of operations required during inference while maintaining acceptable accuracy levels, thereby improving processing speed without requiring hardware changes.

- Specialized instruction sets and processing pipelines: Custom instruction set architectures and optimized processing pipelines designed specifically for neural network operations. These innovations include specialized commands for matrix operations, activation functions, and convolution operations that enable faster execution of common neural network computations through streamlined processing flows.

02 Memory optimization and data management techniques

Methods for improving neural network processing speed through efficient memory access patterns, data caching strategies, and bandwidth optimization. These techniques focus on reducing memory bottleneck issues by implementing smart data prefetching, compression, and storage hierarchies that minimize latency during neural network inference and training operations.Expand Specific Solutions03 Quantization and precision reduction methods

Techniques that enhance processing speed by reducing the numerical precision of neural network parameters and computations. These methods convert high-precision floating-point operations to lower-precision fixed-point or integer operations, significantly reducing computational complexity and memory requirements while maintaining acceptable accuracy levels.Expand Specific Solutions04 Parallel processing and distributed computing frameworks

Systems and methods that leverage multiple processing units, cores, or devices to execute neural network operations concurrently. These frameworks distribute computational workloads across various processing resources, enabling faster execution through parallelization of matrix operations, layer computations, and batch processing.Expand Specific Solutions05 Network architecture optimization and pruning

Approaches that improve processing speed by optimizing the neural network structure itself, including removing redundant connections, reducing layer complexity, and streamlining computational graphs. These methods focus on maintaining model performance while significantly reducing the number of operations required during inference, resulting in faster execution times.Expand Specific Solutions

Key Players in AI Processing and Algorithm Optimization

The neural network versus traditional algorithms processing speed landscape represents a rapidly evolving competitive arena currently in its growth phase, with the global AI chip market projected to reach $227 billion by 2030. Technology maturity varies significantly across players, with established giants like Huawei, Samsung, and Sony leveraging extensive R&D capabilities alongside specialized AI chip companies such as Cambricon and Furiosa AI driving innovation in neural processing units. Traditional semiconductor leaders including Fujitsu, Hitachi, and NEC are adapting their architectures for AI workloads, while emerging players like LeapMind and Beijing Lingxi Technology focus on edge computing optimization. The competitive dynamics show a clear bifurcation between companies developing general-purpose processors with AI acceleration capabilities and those creating dedicated neural processing architectures, indicating the market is transitioning from experimental to commercial deployment phases.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed the Ascend series AI processors with dedicated neural processing units (NPUs) that achieve up to 320 TOPS performance for neural network acceleration. Their MindSpore framework optimizes neural network inference through graph optimization and operator fusion, reducing computational overhead by 30-40% compared to traditional algorithms. The company implements mixed-precision computing and model compression techniques to enhance processing speed while maintaining accuracy. Their HiSilicon Kirin chips integrate NPUs directly into mobile processors, enabling real-time neural network processing for applications like image recognition and natural language processing with significantly faster inference times than CPU-based traditional algorithms.

Strengths: Integrated hardware-software optimization, high-performance NPU architecture, comprehensive AI ecosystem. Weaknesses: Limited global market access due to trade restrictions, dependency on proprietary frameworks.

Cambricon Technologies Corp. Ltd.

Technical Solution: Cambricon specializes in AI chip design with their MLU (Machine Learning Unit) series processors specifically optimized for neural network workloads. Their MLU370 delivers up to 256 TOPS INT8 performance, significantly outperforming traditional CPU-based algorithms in deep learning tasks. The company's BANG programming language and CNToolkit provide optimized libraries for neural network operations, achieving 5-10x speedup over traditional algorithmic approaches in computer vision and natural language processing tasks. Their architecture features dedicated tensor processing units and optimized memory hierarchies designed specifically for neural network computation patterns, enabling efficient batch processing and parallel execution.

Strengths: Specialized AI chip architecture, optimized software stack for neural networks, strong performance in inference tasks. Weaknesses: Limited ecosystem compared to established players, primarily focused on Chinese market.

Core Innovations in Neural Network Acceleration

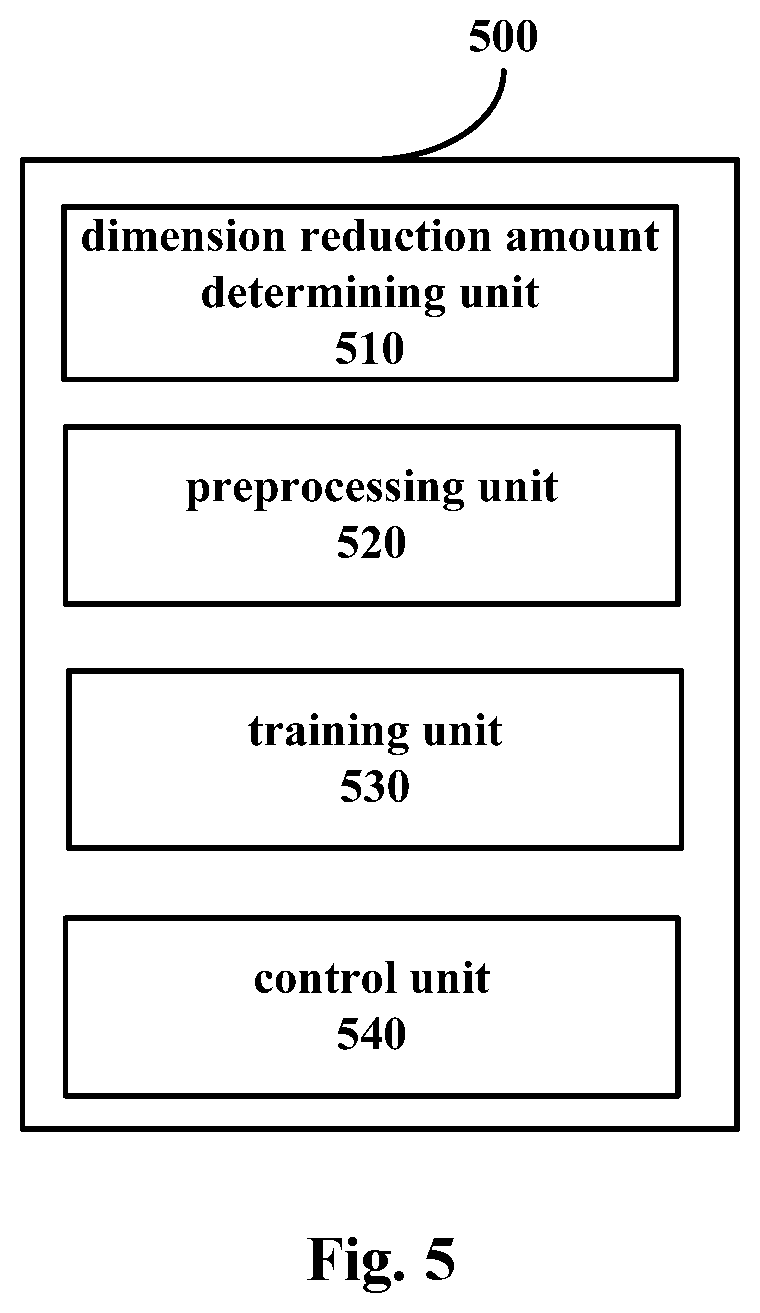

Device and method for improving processing speed of neural network

PatentActiveUS11227213B2

Innovation

- A device and method that reduce the dimensionality of parameter matrices through column dimension reduction, involving preprocessing to zero columns with low scores and retraining the network to maintain performance, thereby reducing the computational load in matrix multiplication.

Neural network processor

PatentWO2019194465A1

Innovation

- A neural network processing unit and system that combines data flow and control flow methods, allowing tasks to proceed when data is ready, regardless of other tasks, and using a dynamic memory allocation system to optimize data management and processing.

Hardware Infrastructure Requirements and Constraints

The hardware infrastructure requirements for neural networks and traditional algorithms differ significantly in terms of computational architecture, memory specifications, and processing capabilities. Neural networks, particularly deep learning models, demand specialized hardware configurations that can handle massive parallel computations efficiently. Graphics Processing Units (GPUs) have become the cornerstone of neural network processing due to their thousands of cores designed for simultaneous operations, making them ideal for matrix multiplications and tensor operations that form the backbone of neural network computations.

Traditional algorithms typically operate effectively on Central Processing Units (CPUs) with their optimized sequential processing capabilities and sophisticated branch prediction mechanisms. These algorithms often require less specialized hardware and can achieve optimal performance on standard server configurations with adequate RAM and storage systems. The memory requirements for traditional algorithms are generally more predictable and manageable, often fitting within conventional system specifications.

Memory bandwidth emerges as a critical constraint for neural network implementations. Large models require substantial memory capacity, with some state-of-the-art models demanding hundreds of gigabytes of high-speed memory. The memory hierarchy becomes crucial, as frequent data transfers between different memory levels can create significant bottlenecks. High Bandwidth Memory (HBM) and specialized memory architectures have been developed to address these constraints, though they substantially increase infrastructure costs.

Power consumption represents another fundamental constraint differentiating these approaches. Neural network processing, especially during training phases, requires substantial electrical power and generates significant heat, necessitating robust cooling systems and power distribution infrastructure. Data centers supporting neural network workloads often require specialized power management systems and enhanced cooling capabilities compared to traditional computing environments.

Scalability constraints also vary considerably between the two approaches. Neural networks benefit from distributed computing architectures, requiring high-speed interconnects and sophisticated networking infrastructure to enable efficient communication between processing nodes. Traditional algorithms may not always benefit from such distributed approaches and might achieve better cost-effectiveness through vertical scaling rather than horizontal expansion.

Storage infrastructure requirements differ substantially as well. Neural networks often require high-throughput storage systems capable of feeding massive datasets continuously to processing units, while traditional algorithms typically have more modest and predictable storage access patterns that can be satisfied with conventional storage solutions.

Traditional algorithms typically operate effectively on Central Processing Units (CPUs) with their optimized sequential processing capabilities and sophisticated branch prediction mechanisms. These algorithms often require less specialized hardware and can achieve optimal performance on standard server configurations with adequate RAM and storage systems. The memory requirements for traditional algorithms are generally more predictable and manageable, often fitting within conventional system specifications.

Memory bandwidth emerges as a critical constraint for neural network implementations. Large models require substantial memory capacity, with some state-of-the-art models demanding hundreds of gigabytes of high-speed memory. The memory hierarchy becomes crucial, as frequent data transfers between different memory levels can create significant bottlenecks. High Bandwidth Memory (HBM) and specialized memory architectures have been developed to address these constraints, though they substantially increase infrastructure costs.

Power consumption represents another fundamental constraint differentiating these approaches. Neural network processing, especially during training phases, requires substantial electrical power and generates significant heat, necessitating robust cooling systems and power distribution infrastructure. Data centers supporting neural network workloads often require specialized power management systems and enhanced cooling capabilities compared to traditional computing environments.

Scalability constraints also vary considerably between the two approaches. Neural networks benefit from distributed computing architectures, requiring high-speed interconnects and sophisticated networking infrastructure to enable efficient communication between processing nodes. Traditional algorithms may not always benefit from such distributed approaches and might achieve better cost-effectiveness through vertical scaling rather than horizontal expansion.

Storage infrastructure requirements differ substantially as well. Neural networks often require high-throughput storage systems capable of feeding massive datasets continuously to processing units, while traditional algorithms typically have more modest and predictable storage access patterns that can be satisfied with conventional storage solutions.

Energy Efficiency Considerations in Speed Optimization

Energy efficiency has emerged as a critical consideration in the optimization of processing speed when comparing neural networks and traditional algorithms. The computational intensity of neural networks, particularly deep learning models, often results in significantly higher energy consumption compared to traditional algorithmic approaches. This energy-speed trade-off presents unique challenges for organizations seeking to balance performance requirements with operational sustainability and cost-effectiveness.

Modern neural network architectures, especially transformer-based models and convolutional neural networks, require substantial computational resources that translate directly into energy consumption. Graphics Processing Units (GPUs) and specialized accelerators like Tensor Processing Units (TPUs) used for neural network inference can consume hundreds of watts during operation, while traditional algorithms running on standard CPUs typically require significantly less power. This disparity becomes particularly pronounced in large-scale deployment scenarios where thousands of inference operations occur simultaneously.

The relationship between processing speed and energy efficiency varies significantly across different implementation strategies. Hardware-specific optimizations, such as quantization techniques that reduce model precision from 32-bit to 8-bit or 16-bit representations, can achieve substantial energy savings while maintaining acceptable processing speeds. Similarly, model pruning and knowledge distillation techniques enable the creation of smaller, more energy-efficient neural networks that retain much of their larger counterparts' performance capabilities.

Traditional algorithms demonstrate superior energy efficiency in scenarios where their computational complexity remains manageable. Algorithms with linear or logarithmic time complexity typically maintain consistent energy consumption patterns that scale predictably with input size. However, when traditional algorithms encounter exponential complexity problems, their energy consumption can exceed that of neural network solutions designed for the same tasks.

Edge computing environments particularly highlight the importance of energy-efficient speed optimization. Battery-powered devices and IoT applications require careful consideration of the energy-performance trade-off, often favoring lightweight traditional algorithms or highly optimized neural network models. Emerging neuromorphic computing architectures promise to bridge this gap by mimicking biological neural processes that achieve remarkable energy efficiency while maintaining high processing capabilities.

The development of specialized hardware accelerators continues to reshape the energy efficiency landscape for neural networks. Application-Specific Integrated Circuits (ASICs) designed specifically for neural network operations can achieve orders of magnitude improvements in energy efficiency compared to general-purpose processors, making neural network solutions increasingly viable for energy-constrained applications where processing speed remains paramount.

Modern neural network architectures, especially transformer-based models and convolutional neural networks, require substantial computational resources that translate directly into energy consumption. Graphics Processing Units (GPUs) and specialized accelerators like Tensor Processing Units (TPUs) used for neural network inference can consume hundreds of watts during operation, while traditional algorithms running on standard CPUs typically require significantly less power. This disparity becomes particularly pronounced in large-scale deployment scenarios where thousands of inference operations occur simultaneously.

The relationship between processing speed and energy efficiency varies significantly across different implementation strategies. Hardware-specific optimizations, such as quantization techniques that reduce model precision from 32-bit to 8-bit or 16-bit representations, can achieve substantial energy savings while maintaining acceptable processing speeds. Similarly, model pruning and knowledge distillation techniques enable the creation of smaller, more energy-efficient neural networks that retain much of their larger counterparts' performance capabilities.

Traditional algorithms demonstrate superior energy efficiency in scenarios where their computational complexity remains manageable. Algorithms with linear or logarithmic time complexity typically maintain consistent energy consumption patterns that scale predictably with input size. However, when traditional algorithms encounter exponential complexity problems, their energy consumption can exceed that of neural network solutions designed for the same tasks.

Edge computing environments particularly highlight the importance of energy-efficient speed optimization. Battery-powered devices and IoT applications require careful consideration of the energy-performance trade-off, often favoring lightweight traditional algorithms or highly optimized neural network models. Emerging neuromorphic computing architectures promise to bridge this gap by mimicking biological neural processes that achieve remarkable energy efficiency while maintaining high processing capabilities.

The development of specialized hardware accelerators continues to reshape the energy efficiency landscape for neural networks. Application-Specific Integrated Circuits (ASICs) designed specifically for neural network operations can achieve orders of magnitude improvements in energy efficiency compared to general-purpose processors, making neural network solutions increasingly viable for energy-constrained applications where processing speed remains paramount.

Unlock deeper insights with Patsnap Eureka Quick Research — get a full tech report to explore trends and direct your research. Try now!

Generate Your Research Report Instantly with AI Agent

Supercharge your innovation with Patsnap Eureka AI Agent Platform!