Optimize Synaptic Weight Distribution in Spiking Models

APR 24, 2026 · 9 MIN READ

Spiking Neural Network Weight Optimization Background and Goals

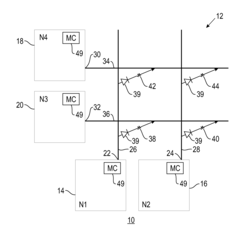

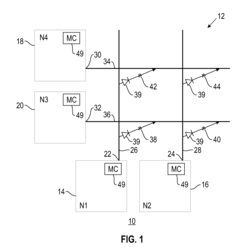

Spiking Neural Networks represent a paradigm shift from traditional artificial neural networks by incorporating temporal dynamics and event-driven computation that more closely mimics biological neural processing. Unlike conventional neural networks that process information through continuous activation functions, SNNs communicate through discrete spikes or action potentials, enabling them to capture the precise timing of neural events. This temporal precision offers significant advantages in processing spatiotemporal data and achieving energy-efficient computation, making SNNs particularly attractive for neuromorphic computing applications.

The evolution of spiking neural networks has been driven by advances in computational neuroscience and the growing demand for brain-inspired computing architectures. The Hodgkin-Huxley model of the early 1950s laid the foundation for understanding neural dynamics, and simplified neuron models such as the integrate-and-fire and leaky integrate-and-fire neurons (whose roots go back to Lapicque's work in 1907) became the standard tools for large-scale simulation. The field gained momentum in the late 1990s, when Maass characterized SNNs as the "third generation" of neural network models, and again in the early 2000s with his work on liquid state machines.

Contemporary research has increasingly focused on optimizing synaptic weight distributions within spiking models to enhance learning efficiency and network performance. Traditional weight initialization and optimization strategies developed for artificial neural networks often prove inadequate for spiking architectures due to their fundamentally different information processing mechanisms. The discrete, event-driven nature of spike communication creates unique challenges in gradient computation and weight update procedures.

The primary technical objectives in optimizing synaptic weight distribution span three dimensions. First, stable learning dynamics require weight initialization schemes that prevent vanishing or exploding gradients in the temporal domain. Second, training algorithms must propagate error signals through time efficiently while preserving the biological plausibility of spike-based computation. Third, weight distribution patterns should maximize information capacity while minimizing energy consumption.
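As a minimal sketch of the first objective, assume a feedforward layer driven by Bernoulli input spikes with firing probability `p_spike`. Drawing weights with a standard deviation of `gain / sqrt(fan_in)` keeps the variance of the summed synaptic input roughly independent of layer width, so the chance of crossing a fixed threshold does not drift as layers grow. The helper names, `gain`, and `p_spike` are illustrative assumptions, not a prescribed scheme.

```python
import numpy as np

def init_weights(fan_in, fan_out, gain=1.0, seed=0):
    """Gaussian weights with std = gain / sqrt(fan_in) (illustrative helper)."""
    rng = np.random.default_rng(seed)
    return rng.normal(0.0, gain / np.sqrt(fan_in), size=(fan_in, fan_out))

def input_current_std(weights, p_spike=0.2, n_trials=500, seed=1):
    """Empirical std of the summed synaptic input for Bernoulli input spikes."""
    rng = np.random.default_rng(seed)
    spikes = (rng.random((n_trials, weights.shape[0])) < p_spike).astype(float)
    currents = spikes @ weights          # shape (n_trials, fan_out)
    return currents.std()

for fan_in in (100, 1000, 4000):
    w = init_weights(fan_in, fan_out=50)
    print(fan_in, round(float(input_current_std(w)), 3))
```

Under these assumptions the printed values should stay near `sqrt(p_spike) * gain` (about 0.45) for every fan-in, whereas a fixed weight standard deviation would make the input spread grow with the square root of fan-in.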

Recent technological advances have highlighted the importance of addressing weight optimization challenges to unlock the full potential of neuromorphic computing systems. The integration of SNNs with emerging hardware platforms, including memristive devices and dedicated neuromorphic chips, demands sophisticated weight management strategies that can leverage hardware-specific characteristics while maintaining computational efficiency and learning performance across diverse application domains.

Market Demand for Efficient Neuromorphic Computing Solutions

The neuromorphic computing market is growing rapidly, driven by increasing demand for energy-efficient artificial intelligence solutions. Traditional von Neumann architectures face significant limitations in processing the massive parallel computations required for modern AI applications, creating substantial market opportunities for brain-inspired computing paradigms. Spiking neural networks, which form the foundation of neuromorphic systems, require sophisticated synaptic weight optimization techniques to achieve competitive performance levels.

Edge computing applications represent a primary driver for neuromorphic solutions, where power constraints and real-time processing requirements make conventional processors inadequate. Internet of Things devices, autonomous vehicles, and mobile robotics increasingly demand intelligent processing capabilities that can operate within strict energy budgets. The optimization of synaptic weight distribution directly addresses these market needs by enabling more efficient information encoding and processing in spiking models.

The artificial intelligence accelerator market continues expanding as organizations seek alternatives to power-hungry GPU-based solutions. Neuromorphic processors offer inherent advantages in sparse computation and event-driven processing, but their commercial viability depends heavily on algorithmic improvements in synaptic weight management. Industries ranging from healthcare monitoring to industrial automation are actively seeking neuromorphic solutions that can deliver real-time intelligence while minimizing energy consumption.

Data center operators face mounting pressure to reduce computational energy costs, particularly for inference workloads, which account for a large share of modern AI deployments. Optimized synaptic weight distribution techniques can help neuromorphic systems achieve better energy efficiency than traditional deep learning accelerators on suitable sparse, event-driven workloads. This efficiency advantage becomes increasingly valuable as regulatory frameworks and corporate sustainability initiatives prioritize reduced carbon footprints in computing infrastructure.

The convergence of 5G networks and edge AI creates additional market demand for neuromorphic computing solutions. Network operators require distributed intelligence capabilities that can process sensor data locally while maintaining minimal latency and power consumption. Advanced synaptic weight optimization algorithms enable these systems to adapt dynamically to changing environmental conditions while preserving computational efficiency.

Research institutions and technology companies are investing heavily in neuromorphic computing platforms, recognizing the potential for breakthrough applications in cognitive computing, sensory processing, and adaptive control systems. The market demand extends beyond traditional computing applications to include novel use cases such as neuromorphic sensors, brain-computer interfaces, and bio-inspired robotics systems.

Current Synaptic Weight Distribution Challenges in SNNs

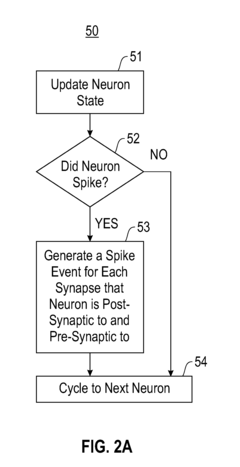

Spiking Neural Networks face significant challenges in achieving optimal synaptic weight distribution, which directly impacts their computational efficiency and biological plausibility. The primary constraint stems from the discrete, event-driven nature of spike-based communication, where traditional gradient-based optimization methods encounter substantial difficulties due to the non-differentiable spike generation process.
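A widely used workaround is the surrogate gradient: the forward pass keeps the hard threshold, while the backward pass substitutes a smooth pseudo-derivative for the step function's derivative, which is zero almost everywhere. The sketch below uses a fast-sigmoid surrogate, one of several common choices; the threshold value, the slope `beta`, and the single leaky integrate-and-fire (LIF) step are illustrative assumptions.

```python
import numpy as np

THRESHOLD = 1.0  # assumed firing threshold

def spike_forward(v):
    """Heaviside step: emit a spike where membrane potential reaches threshold."""
    return (v >= THRESHOLD).astype(float)

def spike_surrogate_grad(v, beta=10.0):
    """Fast-sigmoid pseudo-derivative used in place of the true (Dirac) derivative."""
    return 1.0 / (beta * np.abs(v - THRESHOLD) + 1.0) ** 2

def lif_step_grad(w, x, v_prev, decay=0.9):
    """One LIF step; returns output spikes and d(spike)/d(w) via the surrogate."""
    v = decay * v_prev + x @ w              # membrane update, shape (n_out,)
    s = spike_forward(v)
    # Chain rule: ds_j/dw_ij = surrogate(v_j) * dv_j/dw_ij = surrogate(v_j) * x_i
    grad_w = np.outer(x, spike_surrogate_grad(v))
    return s, grad_w

x = np.array([1.0, 0.0, 1.0])               # input spikes
w = np.array([[0.4, 0.1], [0.9, 0.9], [0.5, 0.2]])
s, g = lif_step_grad(w, x, v_prev=np.zeros(2))
print(s)   # spikes per output neuron
print(g)   # non-zero only in rows whose input spiked
```

In this example neither output neuron crosses threshold, yet weights from active inputs still receive a non-zero gradient, which is what allows error signals to shape the weight distribution.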

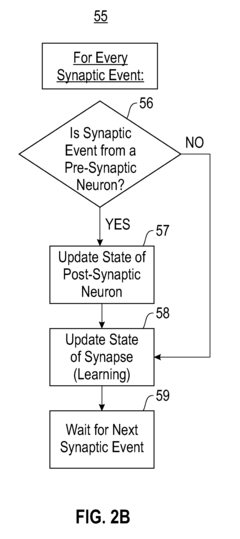

The temporal dynamics inherent in SNNs create complex interdependencies between synaptic weights and spike timing patterns. Unlike conventional artificial neural networks where weight adjustments follow straightforward backpropagation rules, SNNs must account for precise temporal relationships between pre- and post-synaptic spikes. This temporal coupling makes it challenging to determine optimal weight distributions that maintain stable network dynamics while preserving learning capabilities.
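This dependence on pre/post spike timing is often captured by pair-based spike-timing-dependent plasticity (STDP) with exponential windows: a presynaptic spike shortly before a postsynaptic spike potentiates the synapse, and the reverse order depresses it. The amplitudes, time constants, all-to-all pairing, and hard weight bounds below are typical illustrative choices, not values prescribed by this report.

```python
import math

def stdp_dw(t_pre, t_post, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Weight change for one pre/post spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:      # pre before post: causal pairing -> potentiation
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:      # post before pre: anti-causal pairing -> depression
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0

def apply_stdp(w, pre_times, post_times, w_min=0.0, w_max=1.0):
    """All-to-all pairing of spike times, then clip the weight to its bounds."""
    for t_pre in pre_times:
        for t_post in post_times:
            w += stdp_dw(t_pre, t_post)
    return min(max(w, w_min), w_max)

print(apply_stdp(0.5, pre_times=[10.0], post_times=[15.0]))   # potentiated
print(apply_stdp(0.5, pre_times=[15.0], post_times=[10.0]))   # depressed
```

Setting `a_minus` slightly larger than `a_plus` is a common way to keep uncorrelated activity from driving all weights to the upper bound.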

Hardware implementation constraints further complicate synaptic weight distribution optimization. Neuromorphic chips typically support limited weight precision, often restricted to 4-8 bits, creating quantization challenges that affect network performance. The mismatch between theoretical continuous weight values and practical discrete implementations introduces approximation errors that accumulate across network layers.
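The quantization error can be measured directly. The sketch below applies symmetric uniform quantization with one scale per tensor, a common generic scheme rather than the format of any particular chip, to Gaussian weights at 4, 6, and 8 bits; the weight scale and bit-widths are assumptions.

```python
import numpy as np

def quantize_symmetric(w, n_bits):
    """Map weights to signed integers in [-(2^(b-1)-1), 2^(b-1)-1]."""
    q_max = 2 ** (n_bits - 1) - 1
    scale = np.max(np.abs(w)) / q_max       # one scale for the whole tensor
    q = np.clip(np.round(w / scale), -q_max, q_max).astype(np.int32)
    return q, scale

def relative_error(w, n_bits):
    """Relative L2 error after quantizing and dequantizing."""
    q, scale = quantize_symmetric(w, n_bits)
    w_hat = q * scale
    return np.linalg.norm(w - w_hat) / np.linalg.norm(w)

rng = np.random.default_rng(42)
weights = rng.normal(0.0, 0.1, size=10_000)
for bits in (4, 6, 8):
    print(bits, f"{relative_error(weights, bits):.4f}")
```

Each extra bit roughly halves the step size, so the error shrinks quickly with bit-width; layers whose errors compound across depth are usually the first candidates for higher precision.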

Biological realism requirements impose additional constraints on weight distribution patterns. Real neural networks exhibit specific statistical properties in their synaptic strength distributions, including heavy-tailed distributions and sparse connectivity patterns. Achieving these characteristics while maintaining computational functionality requires careful balance between biological accuracy and performance optimization.
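Such statistics can be imposed at initialization. Assuming independent connections with probability `p_connect` and log-normal strengths (a heavy-tailed form often reported for cortical synapses), a sparse excitatory weight matrix can be sampled as follows; `mu`, `sigma`, and `p_connect` are illustrative values, not fitted to data.

```python
import numpy as np

def lognormal_sparse_weights(n_pre, n_post, p_connect=0.1, mu=-1.0, sigma=1.0, seed=0):
    """Sparse excitatory weights: Bernoulli connectivity times log-normal strengths."""
    rng = np.random.default_rng(seed)
    mask = rng.random((n_pre, n_post)) < p_connect      # which synapses exist
    strengths = rng.lognormal(mean=mu, sigma=sigma, size=(n_pre, n_post))
    return np.where(mask, strengths, 0.0)

w = lognormal_sparse_weights(500, 500)
nonzero = w[w > 0]
density = nonzero.size / w.size
# Heavy tail: the strongest 10% of synapses carry a disproportionate share of weight
top = np.sort(nonzero)[-(nonzero.size // 10):]
share = top.sum() / nonzero.sum()
print("connection density:", round(density, 3))
print("share of total weight in top 10%:", round(float(share), 2))
```

With `sigma=1.0`, roughly 40% of the total weight sits in the strongest tenth of synapses; a Gaussian distribution of the same mean would be far less concentrated.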

Scalability issues emerge in large-scale SNN architectures. As networks grow, the cost of weight optimization rises steeply: in densely connected layers the number of synapses scales with the product of adjacent layer widths, and training by backpropagation through time multiplies both computation and memory by the number of simulated time steps, making traditional optimization approaches computationally prohibitive. The sparse firing patterns characteristic of SNNs, while energy-efficient, create irregular gradient flow that complicates weight update procedures.
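A back-of-the-envelope estimate makes this scaling concrete. Assuming fully connected layers, one multiply-accumulate per synapse per time step, and backpropagation through time (BPTT) that stores one 4-byte state value per neuron per step for a single sample, synapse count, state memory, and compute can be computed as below; the layer sizes and step count are assumptions.

```python
def snn_training_cost(layer_sizes, time_steps, bytes_per_value=4):
    """Rough per-sample synapse count, BPTT state memory, and MACs for a dense SNN."""
    synapses = sum(a * b for a, b in zip(layer_sizes[:-1], layer_sizes[1:]))
    neurons = sum(layer_sizes[1:])                  # neurons carrying membrane state
    # BPTT keeps every neuron's state at every step for the backward pass
    state_bytes = neurons * time_steps * bytes_per_value
    # One multiply-accumulate per synapse per step (dense, ignores spike sparsity)
    macs_per_sample = synapses * time_steps
    return synapses, state_bytes, macs_per_sample

for width in (256, 1024, 4096):
    syn, mem, macs = snn_training_cost([784, width, width, 10], time_steps=100)
    print(f"width={width}: synapses={syn:,} state={mem / 1e6:.2f} MB macs={macs:,}")
```

Quadrupling the hidden width raises the synapse count by roughly an order of magnitude here, and every extra time step adds the full synaptic workload again.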

Current learning algorithms struggle with the credit assignment problem in deep SNNs, where determining the contribution of individual synaptic weights to network output becomes increasingly difficult across multiple layers. This challenge is exacerbated by the temporal nature of spike-based processing, where the timing of weight updates significantly affects learning outcomes and network stability.
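One family of approaches to temporal credit assignment keeps a decaying eligibility trace at each synapse and converts it into a weight change only when a global third factor, such as a reward or error signal, arrives. The sketch below is a generic three-factor rule with an assumed trace time constant `tau_e`, unit time step, and learning rate; it is not a specific published algorithm.

```python
import numpy as np

def three_factor_update(pre_spikes, post_spikes, reward, w, lr=0.01, tau_e=20.0):
    """Accumulate Hebbian eligibility over time; gate weight changes by reward.

    pre_spikes: (T, n_pre) binary, post_spikes: (T, n_post) binary,
    reward: (T,) global third-factor signal (mostly zero).
    """
    decay = np.exp(-1.0 / tau_e)            # per-step trace decay (dt = 1)
    elig = np.zeros_like(w)
    for t in range(pre_spikes.shape[0]):
        # Eligibility decays and is bumped by coincident pre/post activity
        elig = decay * elig + np.outer(pre_spikes[t], post_spikes[t])
        # Weights change only when the third factor is non-zero
        w = w + lr * reward[t] * elig
    return w

T = 50
pre = np.zeros((T, 2))
post = np.zeros((T, 1))
r = np.zeros(T)
pre[10, 0] = 1.0
post[10, 0] = 1.0                           # synapse 0 is co-active at t=10
r[30] = 1.0                                 # reward arrives 20 steps later
w_new = three_factor_update(pre, post, r, w=np.zeros((2, 1)))
print(w_new.ravel())  # synapse 0 credited despite the delay; synapse 1 unchanged
```

The trace bridges the gap between a synapse's contribution and the delayed feedback, which is the core of the credit assignment problem described above.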

Existing Synaptic Weight Optimization Algorithms

01 Synaptic weight initialization and distribution methods in spiking neural networks

Various methods for initializing and distributing synaptic weights in spiking neural networks are disclosed. These approaches focus on establishing initial weight values and their statistical distributions to optimize network performance. The weight distribution can follow specific probability distributions or be determined based on network topology and connectivity patterns. Proper initialization of synaptic weights is crucial for efficient learning and convergence in spiking neural network models.

- Synaptic weight initialization and distribution methods in spiking neural networks: Various methods for initializing and distributing synaptic weights in spiking neural networks are disclosed. These approaches focus on establishing initial weight values and their statistical distributions to optimize network performance. The techniques include random initialization schemes, normalized distributions, and adaptive initialization strategies that consider network topology and neuron connectivity patterns. These methods aim to improve learning convergence and network stability during training phases.

- Dynamic synaptic weight adjustment using spike-timing-dependent plasticity: Techniques for dynamically adjusting synaptic weights based on spike timing relationships between pre-synaptic and post-synaptic neurons are described. These methods implement plasticity rules that modify connection strengths according to the temporal correlation of neural firing events. The approaches enable learning and adaptation in neuromorphic systems by strengthening or weakening synaptic connections based on causal relationships between spike events, facilitating unsupervised learning and pattern recognition capabilities.

- Hardware implementation of synaptic weight storage and update mechanisms: Hardware architectures and circuits for storing and updating synaptic weights in neuromorphic computing systems are disclosed. These implementations include memory structures, crossbar arrays, and specialized circuits that efficiently maintain weight values and perform update operations. The designs address challenges such as weight precision, storage density, and update speed while minimizing power consumption. Various memory technologies and circuit topologies are employed to achieve scalable and energy-efficient synaptic weight management.

- Probabilistic and stochastic synaptic weight distribution models: Probabilistic approaches to modeling synaptic weight distributions in spiking neural networks are presented. These methods incorporate stochastic elements and statistical models to represent weight variability and uncertainty. The techniques include Gaussian distributions, log-normal distributions, and other probability density functions that capture biological realism and improve network robustness. Such models enable more flexible learning algorithms and better generalization performance by accounting for inherent variability in neural systems.

- Optimization and training algorithms for synaptic weight configuration: Advanced optimization and training algorithms specifically designed for configuring synaptic weights in spiking neural networks are described. These methods include gradient-based learning approaches, evolutionary algorithms, and reinforcement learning techniques adapted for spike-based computation. The algorithms address challenges unique to spiking networks such as non-differentiable activation functions and temporal credit assignment. Various strategies for weight regularization, pruning, and compression are also incorporated to achieve efficient network configurations.
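Of the compression strategies listed above, magnitude pruning is among the simplest: synapses whose absolute weight falls below a quantile threshold are removed. The sketch assumes a single global threshold and an 80% target sparsity, both illustrative, and reports how much of the squared-weight mass the surviving synapses retain.

```python
import numpy as np

def magnitude_prune(w, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of weights."""
    threshold = np.quantile(np.abs(w), sparsity)
    mask = np.abs(w) > threshold
    return w * mask, mask

rng = np.random.default_rng(3)
w = rng.normal(0.0, 0.1, size=(256, 256))
w_pruned, mask = magnitude_prune(w, sparsity=0.8)
kept = mask.mean()
# Fraction of the original squared-weight mass carried by surviving synapses
mass_kept = np.sum(w_pruned ** 2) / np.sum(w ** 2)
print(f"kept {kept:.1%} of synapses, {mass_kept:.1%} of squared-weight mass")
```

For Gaussian weights, keeping one synapse in five retains roughly two thirds of the squared-weight mass, which is one reason pruned networks often need only brief fine-tuning.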

02 Adaptive synaptic weight adjustment using spike-timing-dependent plasticity

Techniques for dynamically adjusting synaptic weights based on spike-timing-dependent plasticity (STDP) rules are provided. These methods modify connection strengths between neurons according to the relative timing of pre-synaptic and post-synaptic spikes. The weight updates follow specific learning rules that strengthen or weaken synapses depending on spike correlations. This plasticity mechanism enables the network to learn temporal patterns and adapt to input stimuli over time.

03 Hardware implementation of synaptic weight storage and update mechanisms

Systems and circuits for physically implementing synaptic weight storage and update operations in neuromorphic hardware are described. These implementations utilize various memory technologies and circuit designs to store weight values and perform weight modifications efficiently. The hardware architectures support parallel weight updates and low-power operation suitable for large-scale spiking neural networks. Specialized memory cells and update circuits enable fast and energy-efficient synaptic operations.

04 Synaptic weight quantization and compression techniques

Methods for reducing the precision and memory requirements of synaptic weights through quantization and compression are disclosed. These techniques represent weight values using reduced bit-widths or sparse representations while maintaining network accuracy. Weight compression schemes can include pruning, clustering, or encoding methods that reduce storage and computational costs. Such approaches enable deployment of spiking neural networks on resource-constrained devices.

05 Training algorithms for optimizing synaptic weight distributions

Training methodologies specifically designed to optimize the distribution of synaptic weights in spiking neural networks are presented. These algorithms employ supervised, unsupervised, or reinforcement learning approaches to adjust weight values for specific tasks. The training processes consider the temporal dynamics of spiking neurons and aim to achieve desired weight distributions that maximize network performance. Various optimization objectives and constraints can be incorporated into the training procedures.
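One training route that directly reshapes the weight distribution is converting a trained non-spiking network into a rate-coded SNN with data-based weight normalization: each layer's weights are rescaled by the ratio of the previous and current layers' maximum activations on sample data, so the largest activation maps to the maximum firing rate. The sketch assumes bias-free ReLU layers, inputs in [0, 1], and random stand-in weights and data; it is one technique among those the paragraph covers.

```python
import numpy as np

def normalize_weights(weights, data):
    """Layer-wise data-based normalization for bias-free ReLU networks."""
    normalized = []
    prev_scale = 1.0                      # assumes inputs already lie in [0, 1]
    act = data
    for w in weights:
        act = np.maximum(act @ w, 0.0)    # ReLU activations of the original net
        layer_max = act.max()
        # Rescale so this layer's largest activation maps to 1.0
        normalized.append(w * prev_scale / layer_max)
        prev_scale = layer_max
    return normalized

rng = np.random.default_rng(0)
data = rng.random((200, 20))
weights = [rng.normal(0.0, 0.5, (20, 30)), rng.normal(0.0, 0.5, (30, 5))]
norm_w = normalize_weights(weights, data)

act = data
for w in norm_w:
    act = np.maximum(act @ w, 0.0)
    print("max activation after normalization:", round(float(act.max()), 6))
```

Because ReLU commutes with positive scaling, the normalized network computes the same function up to a per-layer scale, while every layer's peak activation becomes 1.0.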

Key Players in Neuromorphic Computing and SNN Hardware

The optimization of synaptic weight distribution in spiking models represents an emerging field within neuromorphic computing, currently in its early-to-mid development stage with significant growth potential. The market remains relatively nascent but shows promising expansion driven by AI acceleration demands and energy-efficient computing needs. Technology maturity varies considerably across industry players, with established semiconductor giants like Intel, Qualcomm, Samsung, and IBM leading hardware development through their neuromorphic chip initiatives. Companies such as Huawei and Beijing Lingxi Technology are advancing brain-inspired computing architectures, while research institutions including Zhejiang University, Nara Institute of Science & Technology, and CEA contribute fundamental algorithmic innovations. The competitive landscape features a mix of hardware manufacturers, software developers like SAP, and academic institutions, creating a diverse ecosystem where traditional computing paradigms are being challenged by bio-inspired approaches that promise superior energy efficiency and real-time processing capabilities.

International Business Machines Corp.

Technical Solution: IBM's TrueNorth chip addresses synaptic weight distribution through constrained, sparse connectivity and aggressive weight quantization. Each of its 4,096 digital neurosynaptic cores connects 256 input axons to 256 neurons through a binary crossbar, and each neuron takes its synaptic strengths from a small set of signed integer weights selected by axon type. This design minimizes power consumption while maintaining useful accuracy, but TrueNorth does not learn on-chip: weights are trained offline and then mapped onto the hardware. In separate research, IBM has explored crossbar arrays of memristive devices, such as phase-change memory, that store synaptic weights in analog form and reduce the need for frequent digital conversions.

Strengths: Proven neuromorphic hardware expertise with TrueNorth architecture, strong research foundation in memristive devices. Weaknesses: Limited commercial availability of neuromorphic solutions, high development costs for specialized hardware implementations.
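The analog crossbar idea can be sketched generically: a signed weight is stored as the difference between two device conductances, and column currents follow from Ohm's and Kirchhoff's laws. The `g_max` value, the 32 evenly spaced programmable levels, and the linear weight-to-conductance mapping are assumptions for illustration, not parameters of IBM's devices or any specific memory technology.

```python
import numpy as np

def weights_to_conductance_pairs(w, g_max=100e-6, n_levels=32):
    """Map signed weights to (G_plus, G_minus) snapped to discrete conductance levels."""
    scale = g_max / np.max(np.abs(w))              # siemens per unit weight
    step = g_max / (n_levels - 1)                  # spacing of programmable states
    g_mag = np.round(np.abs(w) * scale / step) * step
    g_plus = np.where(w > 0, g_mag, 0.0)           # positive part on one device
    g_minus = np.where(w < 0, g_mag, 0.0)          # negative part on the other
    return g_plus, g_minus, scale

def crossbar_output(v_in, g_plus, g_minus):
    """Column currents: I = V @ (G_plus - G_minus)."""
    return v_in @ (g_plus - g_minus)

w = np.array([[0.5, -0.2], [-1.0, 0.8]])
gp, gm, scale = weights_to_conductance_pairs(w)
v = np.array([0.1, 0.2])                            # read voltages (V)
print(crossbar_output(v, gp, gm) / scale)           # approximates v @ w
print(v @ w)
```

The gap between the two printed vectors comes only from snapping to conductance levels; real devices add noise and drift on top of this.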

Intel Corp.

Technical Solution: Intel's Loihi neuromorphic processor implements sophisticated synaptic weight optimization through adaptive learning rules and sparse coding techniques. The architecture features 128 neuromorphic cores with distributed weight storage and on-chip learning capabilities. Intel's approach focuses on event-driven computation where synaptic weights are updated only when spikes occur, significantly reducing power consumption. The system employs hierarchical weight compression algorithms and supports multiple plasticity rules including STDP (Spike-Timing-Dependent Plasticity) for dynamic weight adjustment. Their solution integrates seamlessly with conventional computing systems, enabling hybrid neural-digital processing architectures.

Strengths: Loihi hardware available to researchers through the Intel Neuromorphic Research Community, strong integration with existing computing infrastructure, comprehensive development tools. Weaknesses: Limited scalability compared to biological systems, requires specialized programming expertise for optimal utilization.

Core Innovations in Synaptic Plasticity and Weight Tuning

Synaptic weight training method, target identification method, electronic device and medium

Patent · Active · US11954579B2

Innovation

- A method that combines back propagation and synaptic plasticity rules to quickly train and update synaptic weights in spiking neural networks, enabling rapid target identification by generating spike sequences from images and inputting them into a trained network for efficient processing.
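This summary mentions generating spike sequences from images without specifying the encoding. A common generic choice is rate coding with Bernoulli (Poisson-like) spike trains, sketched below under assumed parameters: pixel intensities in [0, 1], a per-step spike probability capped at `max_rate`, and `n_steps` time steps. It is not the patent's method.

```python
import numpy as np

def rate_encode(image, n_steps=100, max_rate=0.5, seed=0):
    """Bernoulli spike trains whose per-step probability scales with intensity."""
    rng = np.random.default_rng(seed)
    p = np.clip(image, 0.0, 1.0) * max_rate            # spike probability per step
    return (rng.random((n_steps,) + image.shape) < p).astype(np.uint8)

image = np.array([[0.0, 0.5], [1.0, 0.25]])             # toy 2x2 "image"
spikes = rate_encode(image, n_steps=5000)
print(spikes.shape)                                     # (time, height, width)
print(spikes.mean(axis=0))                              # close to image * max_rate
```

Longer spike trains give more faithful rates but cost more time steps, which is the usual latency/accuracy trade-off of rate coding.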

Synaptic weight normalized spiking neuronal networks

Patent · Active · US20120173471A1

Innovation

- Implementing synaptic weight normalization by maintaining total synaptic weights within a predetermined range through dynamic adjustment, using techniques like dendritic and axonal normalization, and incorporating spike-timing dependent plasticity (STDP) to stabilize the neuronal network.
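A simple way to keep total synaptic weight within a range is multiplicative rescaling of each neuron's incoming weights after every plasticity update, one possible reading of the dendritic normalization mentioned above. The bounds `total_min` and `total_max` and the toy potentiation are illustrative; this is a generic construction, not the mechanism claimed in the patent.

```python
import numpy as np

def normalize_incoming(w, total_min=0.8, total_max=1.2):
    """Rescale each column (one neuron's incoming weights) so its sum lies in range."""
    totals = w.sum(axis=0)
    target = np.clip(totals, total_min, total_max)
    # Multiplicative scaling preserves relative synaptic strengths
    scale = np.divide(target, totals, out=np.ones_like(totals), where=totals > 0)
    return w * scale

rng = np.random.default_rng(5)
w = rng.random((50, 4)) * 0.04                  # column sums near 1.0
w[:, 0] += 0.02                                 # neuron 0 strongly potentiated, e.g. by STDP
w_norm = normalize_incoming(w)
print(w.sum(axis=0).round(3))
print(w_norm.sum(axis=0).round(3))              # every column within [0.8, 1.2]
```

Because the scaling is multiplicative, the pattern learned by STDP survives while runaway growth of any single neuron's input is prevented.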

Hardware Implementation Constraints for SNN Deployment

The deployment of Spiking Neural Networks (SNNs) in hardware environments presents significant constraints that directly impact synaptic weight distribution optimization strategies. These limitations stem from the fundamental differences between biological neural computation and digital hardware architectures, creating bottlenecks that must be carefully considered during the design phase.

Memory bandwidth represents one of the most critical constraints in SNN hardware implementation. Traditional von Neumann architectures suffer from the memory wall problem, where frequent weight access creates substantial latency. For SNNs with optimized synaptic weight distributions, this constraint becomes particularly pronounced when dealing with sparse connectivity patterns that require irregular memory access patterns, potentially negating the computational benefits of sparsity.
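Storing only existing synapses in a compressed sparse row (CSR) layout, indexed by presynaptic neuron, lets an event-driven simulator touch weights only for neurons that spiked; the row lookups and scattered writes are exactly the irregular, data-dependent accesses described above. A minimal sketch with plain Python lists, assuming no sparse-matrix library:

```python
def to_csr(dense):
    """Convert a dense pre x post weight matrix to CSR arrays (row = presynaptic)."""
    row_ptr, col_idx, values = [0], [], []
    for row in dense:
        for j, w in enumerate(row):
            if w != 0.0:
                col_idx.append(j)
                values.append(w)
        row_ptr.append(len(values))
    return row_ptr, col_idx, values

def propagate_spikes(spiking_pre, csr, n_post):
    """Accumulate input current only along synapses of neurons that spiked."""
    row_ptr, col_idx, values = csr
    current = [0.0] * n_post
    for i in spiking_pre:                        # one row lookup per spike event
        for k in range(row_ptr[i], row_ptr[i + 1]):
            current[col_idx[k]] += values[k]     # scattered write: irregular access
    return current

dense = [[0.0, 0.5, 0.0],
         [0.2, 0.0, 0.0],
         [0.0, 0.3, 0.7]]
csr = to_csr(dense)
print(propagate_spikes([0, 2], csr, n_post=3))
```

Work scales with the number of spikes times their fan-out rather than with the full matrix size, but the target columns differ from row to row, which is what defeats caches and burst-oriented memory.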

Precision limitations in hardware platforms significantly influence weight distribution strategies. Fixed-point arithmetic implementations typically support 8-bit to 16-bit precision, constraining the granularity of weight values. This quantization effect can severely impact carefully optimized weight distributions, particularly those relying on subtle weight variations to achieve desired network dynamics. The trade-off between precision and hardware efficiency often forces compromises in weight representation fidelity.
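One mitigation for losing subtle weight variations is stochastic rounding: a value is rounded up with probability equal to its fractional distance above the lower grid point, so updates smaller than one quantization step survive on average instead of always rounding away. The sketch assumes a fixed-point grid with step 2^-6 (6 fractional bits) and ignores overflow and saturation; both are illustrative.

```python
import numpy as np

STEP = 2.0 ** -6   # assumed fixed-point resolution (6 fractional bits)

def round_nearest(x):
    """Deterministic round-to-nearest onto the fixed-point grid."""
    return np.round(x / STEP) * STEP

def round_stochastic(x, rng):
    """Round down or up at random so that the expected result equals x."""
    scaled = np.asarray(x, dtype=float) / STEP
    floor = np.floor(scaled)
    round_up = rng.random(scaled.shape) < (scaled - floor)
    return (floor + round_up) * STEP

rng = np.random.default_rng(0)
w_nearest = w_stochastic = 0.5
update = 0.002                         # smaller than STEP / 2 (about 0.0078)
for _ in range(500):
    w_nearest = round_nearest(w_nearest + update)
    w_stochastic = round_stochastic(w_stochastic + update, rng)
print(w_nearest)      # stays at 0.5: every small update is rounded away
print(w_stochastic)   # drifts toward 0.5 + 500 * 0.002 = 1.5 on average
```

The price is extra randomness per update, which is why hardware proposals typically apply stochastic rounding only to learning updates, not to inference.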

Power consumption constraints impose additional restrictions on weight distribution optimization. Neuromorphic chips and edge computing devices operate under strict power budgets, limiting the complexity of weight update mechanisms and storage systems. Dynamic weight adaptation, while beneficial for learning, may be prohibitively expensive in terms of energy consumption, forcing designers to favor static or infrequently updated weight distributions.

Parallelization challenges arise from the temporal nature of spiking computations combined with hardware resource limitations. Many hardware platforms cannot efficiently support the massive parallelism required for large-scale SNNs with complex weight distributions. This constraint often necessitates weight distribution strategies that can be effectively mapped onto available processing units while maintaining computational efficiency.

Communication overhead between processing elements becomes critical when implementing distributed weight storage schemes. Inter-chip communication latency and bandwidth limitations can significantly impact the effectiveness of weight distribution optimizations, particularly in scenarios requiring frequent weight sharing or synchronization across multiple processing nodes.

Energy Efficiency Standards for Neuromorphic Systems

The optimization of synaptic weight distribution in spiking neural networks presents unique opportunities for establishing comprehensive energy efficiency standards in neuromorphic systems. Current neuromorphic architectures lack standardized metrics for evaluating power consumption relative to computational performance, particularly when implementing dynamic weight adjustment mechanisms.

Energy efficiency standards must address the fundamental trade-off between synaptic precision and power consumption. Higher resolution weight representations enable more accurate neural computations but significantly increase memory access energy and storage requirements. Establishing quantitative benchmarks for acceptable energy-per-operation thresholds becomes critical when synaptic weights undergo continuous optimization processes.

The temporal dynamics inherent in spiking models introduce additional complexity to energy standardization. Unlike traditional neural networks with static weight matrices, spiking systems require standards that account for event-driven computation patterns and sparse activation regimes. Energy metrics must capture both active computation phases during weight updates and idle power consumption during sparse firing periods.
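A minimal energy model that separates the two phases charges a fixed cost per synaptic operation (one spike delivered across one synapse) plus constant static power over the run time. The per-operation energy, static power, spike count, fan-out, and duration below are placeholder assumptions, not measurements of any chip.

```python
def inference_energy(n_spikes, mean_fanout, e_per_sop, p_static, duration_s):
    """Split total energy (J) into dynamic (event-driven) and static parts."""
    synaptic_ops = n_spikes * mean_fanout
    e_dynamic = synaptic_ops * e_per_sop
    e_static = p_static * duration_s
    return e_dynamic, e_static

# Placeholder numbers for illustration only
e_dyn, e_stat = inference_energy(
    n_spikes=50_000, mean_fanout=100, e_per_sop=20e-12,   # 20 pJ per synaptic op (assumed)
    p_static=0.01, duration_s=0.1)                        # 10 mW static for 100 ms (assumed)
print(f"dynamic {e_dyn * 1e6:.1f} uJ, static {e_stat * 1e6:.1f} uJ")
```

With these assumed numbers the static term is ten times the dynamic term, illustrating why a benchmark that counts only synaptic operations would misjudge systems operating in sparse firing regimes.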

Memory hierarchy optimization represents a crucial component of energy efficiency standards. Synaptic weight distribution strategies directly impact cache utilization, memory bandwidth requirements, and data movement energy costs. Standards should define maximum acceptable energy overhead for weight storage and retrieval operations across different memory tiers.

Standardized power profiling methodologies must encompass both hardware-specific implementations and algorithm-level optimizations. This includes establishing baseline energy consumption models for various synaptic plasticity rules, weight quantization schemes, and network topologies. Reference implementations should provide comparative benchmarks for evaluating the energy impact of different weight distribution approaches.

Adaptive power management protocols should be integrated into efficiency standards, enabling dynamic adjustment of computational precision based on available energy budgets. These standards must define minimum acceptable performance thresholds while maximizing energy conservation through intelligent weight pruning and selective synapse activation strategies.
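As a sketch of budget-driven precision selection, assume each candidate bit-width has a known energy cost per synaptic operation and a measured task accuracy. A controller can then pick the most accurate mode whose projected energy fits the current budget while still meeting a minimum accuracy floor. The `PrecisionMode` table and all its numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class PrecisionMode:
    bits: int
    energy_per_sop_pj: float   # hypothetical cost per synaptic operation
    accuracy: float            # hypothetical task accuracy at this precision

MODES = [
    PrecisionMode(2, 2.0, 0.81),
    PrecisionMode(4, 5.0, 0.90),
    PrecisionMode(8, 12.0, 0.93),
]

def select_mode(budget_pj, expected_sops, min_accuracy) -> Optional[PrecisionMode]:
    """Most accurate mode that fits the energy budget and accuracy floor, or None."""
    feasible = [m for m in MODES
                if m.energy_per_sop_pj * expected_sops <= budget_pj
                and m.accuracy >= min_accuracy]
    return max(feasible, key=lambda m: m.accuracy, default=None)

print(select_mode(budget_pj=2e7, expected_sops=1e6, min_accuracy=0.85))  # 8-bit fits
print(select_mode(budget_pj=6e6, expected_sops=1e6, min_accuracy=0.85))  # falls back to 4-bit
print(select_mode(budget_pj=3e6, expected_sops=1e6, min_accuracy=0.85))  # None: floor unmet
```

Returning `None` rather than silently choosing the cheapest mode makes the violated performance threshold explicit, so the caller can defer work or request more energy.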
