Optimize Texture Mapping in AI Rendering for Detail

APR 7, 2026 | 9 MIN READ

Generate Your Research Report Instantly with AI Agent

PatSnap Eureka helps you evaluate technical feasibility & market potential.

AI Texture Mapping Background and Optimization Goals

Texture mapping has been a cornerstone of computer graphics since its introduction in the 1970s, serving as the primary method for applying surface detail to 3D models. Traditional texture mapping techniques relied heavily on pre-computed textures and manual artist intervention to achieve realistic surface appearances. However, the emergence of artificial intelligence in rendering has fundamentally transformed this landscape, introducing unprecedented capabilities for dynamic texture generation, enhancement, and optimization.

The evolution of AI-driven texture mapping began with early neural network applications in the 2010s, where machine learning algorithms were first employed to automate texture synthesis and pattern recognition. This progression accelerated dramatically with the advent of deep learning architectures, particularly Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs), which enabled the creation of highly detailed, contextually appropriate textures from minimal input data.

Contemporary AI rendering systems face increasing demands for photorealistic detail while maintaining real-time performance constraints. The challenge lies in balancing computational efficiency with visual fidelity, particularly when dealing with complex surface materials, dynamic lighting conditions, and varying levels of detail across different viewing distances. Modern applications span from gaming and virtual reality to architectural visualization and film production, each requiring distinct optimization approaches.

The primary technical objectives in AI texture mapping optimization center on achieving superior detail preservation while minimizing computational overhead. Key goals include developing adaptive resolution systems that dynamically adjust texture quality based on viewing distance and importance, implementing intelligent compression algorithms that preserve critical visual information while reducing memory footprint, and creating real-time texture synthesis capabilities that can generate missing or enhanced details on-demand.
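Adaptive resolution usually starts from mip level selection. The renderer measures how many texels one screen pixel covers and picks the level whose texel density matches. The sketch below is a simplified, isotropic version of the level-of-detail formula used by graphics APIs (λ = log2 ρ, where ρ is the larger of the two screen-axis footprints). The function names are hypothetical, and real GPUs use approximations and add LOD bias, clamping, and anisotropic filtering.

```python
import math


def mip_level(du_dx, dv_dx, du_dy, dv_dy, tex_width, tex_height, max_level):
    """Fractional mip level from screen-space UV derivatives (hypothetical helper).

    Derivatives are in normalized UV units per pixel; they are scaled to texel
    units so the pixel footprint is measured in texels.
    """
    # Length of one pixel's footprint along screen x and screen y, in texels.
    len_x = math.hypot(du_dx * tex_width, dv_dx * tex_height)
    len_y = math.hypot(du_dy * tex_width, dv_dy * tex_height)
    rho = max(len_x, len_y, 1e-12)  # guard against log2(0)
    # lambda = log2(rho); negative values mean magnification, so clamp to level 0.
    lod = math.log2(rho)
    return min(max(lod, 0.0), float(max_level))


def trilinear_levels(lod, max_level):
    """Return the two mip levels to blend and the weight of the coarser one."""
    lower = min(math.floor(lod), max_level)
    upper = min(lower + 1, max_level)
    return lower, upper, lod - lower
```

For a 1024×1024 texture whose UVs advance by 4/1024 per pixel, the footprint is 4 texels and `mip_level` returns 2.0, selecting the 256×256 level; a viewer moving closer shrinks the footprint and pushes selection toward level 0.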

Performance optimization targets include reducing GPU memory bandwidth requirements, minimizing texture streaming latency, and achieving consistent frame rates across diverse hardware configurations. Temporal coherence mechanisms, in turn, aim to smooth transitions and suppress flickering artifacts in dynamic scenes without sacrificing the visual quality that modern rendering applications demand.

Market Demand for Enhanced AI Rendering Detail Quality

The gaming industry represents the largest market segment driving demand for enhanced AI rendering detail quality, with modern AAA titles requiring increasingly sophisticated texture mapping capabilities to meet consumer expectations for photorealistic visuals. Game developers are under constant pressure to deliver immersive experiences that can compete in a saturated market, where visual fidelity often serves as a key differentiator. The rise of high-resolution displays and next-generation gaming consoles has amplified this demand, as players expect textures that maintain clarity and detail even under close inspection.

Virtual reality and augmented reality applications constitute another rapidly expanding market segment with stringent requirements for texture mapping optimization. These immersive technologies demand ultra-low latency rendering while maintaining high visual quality, as any degradation in texture detail can break the sense of presence and cause user discomfort. The proximity of VR displays to users' eyes makes texture artifacts particularly noticeable, creating a critical need for advanced AI rendering solutions that can deliver consistent detail quality across varying viewing distances and angles.

The architectural visualization and real estate sectors have emerged as significant consumers of enhanced AI rendering technologies, driven by the need to create compelling virtual property tours and design presentations. Professional clients in these industries require texture mapping solutions that can accurately represent material properties such as fabric weaves, wood grains, and surface imperfections with minimal computational overhead. The ability to generate high-quality renderings quickly has become essential for maintaining competitive advantage in project bidding and client presentations.

Film and animation studios represent a premium market segment where texture mapping quality directly impacts production value and audience engagement. The transition toward real-time rendering workflows in content creation has intensified demand for AI-powered solutions that can match traditional offline rendering quality while enabling interactive creative processes. Studios are increasingly seeking texture mapping technologies that can handle complex material interactions and maintain consistency across different lighting conditions.

The automotive and product design industries have shown growing interest in AI rendering solutions that can accelerate the visualization of design concepts and marketing materials. These sectors require texture mapping capabilities that can accurately represent various materials and finishes, enabling designers and marketers to create compelling visual content without extensive manual texture work. The ability to rapidly iterate on design variations while maintaining photorealistic quality has become a crucial competitive factor in product development cycles.

Current Challenges in AI-Based Texture Mapping Systems

AI-based texture mapping systems face significant computational bottlenecks when processing high-resolution textures in real-time rendering scenarios. The primary challenge stems from the intensive memory bandwidth requirements needed to sample and filter large texture datasets while maintaining interactive frame rates. Current GPU architectures struggle to efficiently handle the massive data throughput required for detailed texture operations, particularly when multiple texture layers are applied simultaneously to complex geometric surfaces.
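A back-of-the-envelope estimate shows why bandwidth dominates. The sketch below counts raw texel traffic per frame; every parameter (resolution, layer count, eight texels per trilinear sample, 4-byte texels, cache hit rate) is an illustrative assumption, and it ignores overdraw, block compression, and multisampling.

```python
def texture_traffic_gb_s(width, height, fps, layers, texels_per_sample,
                         bytes_per_texel, cache_hit_rate):
    """Approximate DRAM traffic from texture sampling, in GB/s (1 GB = 1e9 bytes)."""
    # One sample per layer per pixel; trilinear filtering reads 8 texels per sample.
    texel_reads = width * height * layers * texels_per_sample
    # Only cache misses reach DRAM.
    dram_bytes = texel_reads * bytes_per_texel * (1.0 - cache_hit_rate)
    return dram_bytes * fps / 1e9


# 4K at 60 fps, 4 texture layers, trilinear, uncompressed RGBA8.
uncached = texture_traffic_gb_s(3840, 2160, 60, 4, 8, 4, cache_hit_rate=0.0)
cached = texture_traffic_gb_s(3840, 2160, 60, 4, 8, 4, cache_hit_rate=0.9)
print(f"{uncached:.1f} GB/s without caching, {cached:.1f} GB/s at 90% hit rate")
```

Under these assumptions the uncached figure is about 63.7 GB/s for a single pass, and a 90% cache hit rate cuts it to about 6.4 GB/s, which is why texture cache efficiency matters as much as raw bandwidth.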

Memory management presents another critical obstacle in AI-driven texture mapping implementations. Traditional texture caching mechanisms prove inadequate when dealing with dynamically generated or AI-enhanced textures that require frequent updates. The unpredictable memory access patterns characteristic of neural network-based texture generation create cache misses and memory fragmentation, leading to performance degradation and increased latency in rendering pipelines.
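The effect of frequent updates on caching can be seen in a software tile cache of the kind texture streaming systems maintain. The sketch below is a minimal LRU cache; the class name and the `invalidate` hook for AI-regenerated tiles are hypothetical, keys are short strings for brevity (a real system might key by texture, mip level, and tile coordinates), and hardware texture caches work at a much finer granularity.

```python
from collections import OrderedDict


class TileCache:
    """Minimal LRU cache of texture tiles (hypothetical streaming-system component)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.tiles = OrderedDict()  # key -> tile data, least recently used first
        self.hits = 0
        self.misses = 0

    def fetch(self, key, load_tile):
        if key in self.tiles:
            self.tiles.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self.tiles[key]
        self.misses += 1
        tile = load_tile(key)  # e.g. read from disk or run a generator network
        self.tiles[key] = tile
        if len(self.tiles) > self.capacity:
            self.tiles.popitem(last=False)  # evict the least recently used tile
        return tile

    def invalidate(self, key):
        """Drop a tile whose contents were regenerated, forcing a reload."""
        self.tiles.pop(key, None)

    @property
    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def load(key):
    return f"texels for {key}"


cache = TileCache(capacity=2)
for key in ["a", "b", "a", "c", "b"]:
    cache.fetch(key, load)
print(cache.hits, cache.misses, f"{cache.hit_rate:.0%}")
```

Each call to `invalidate` after a tile is regenerated guarantees that the next access is a miss, so textures that change every few frames erode the hit rate regardless of cache size.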

Quality consistency across different viewing distances and angles remains a persistent technical challenge. AI texture mapping systems often exhibit artifacts such as temporal flickering, spatial aliasing, and inconsistent detail levels when textures are viewed from varying perspectives. These issues become particularly pronounced in dynamic scenes where lighting conditions change rapidly or when objects undergo complex transformations that require real-time texture adaptation.
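One widely used way to suppress temporal flickering is to blend each frame's result into an accumulated history, as temporal anti-aliasing does. The sketch below shows only the exponential blend on per-texel values; production implementations also reproject the history with motion vectors and clamp it to the current neighborhood to avoid ghosting (both omitted here), and `alpha` is an assumed tuning value.

```python
def temporal_blend(history, current, alpha=0.1):
    """Exponential moving average: move each history value a fraction alpha toward the new frame."""
    return [h + alpha * (c - h) for h, c in zip(history, current)]


def peak_to_peak(values):
    return max(values) - min(values)


# A texel whose raw value flickers between 1.0 and 0.0 every frame.
raw = [1.0 if frame % 2 == 0 else 0.0 for frame in range(200)]
history = [0.0]
smoothed = []
for value in raw:
    history = temporal_blend(history, [value], alpha=0.1)
    smoothed.append(history[0])

print(peak_to_peak(raw), round(peak_to_peak(smoothed[-20:]), 3))
```

The trade-off is responsiveness: a smaller `alpha` suppresses more flicker but makes genuine changes, such as a lighting switch, take longer to appear.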

Integration complexity with existing rendering pipelines poses substantial implementation barriers for many organizations. Legacy graphics systems lack the architectural flexibility needed to accommodate AI-enhanced texture processing workflows, requiring significant infrastructure modifications. The incompatibility between traditional rasterization-based rendering approaches and modern neural network inference engines creates technical debt and increases development costs.

Scalability limitations emerge when deploying AI texture mapping solutions across diverse hardware configurations. The heterogeneous nature of consumer graphics hardware makes it challenging to optimize texture mapping algorithms for consistent performance across different GPU architectures. Power consumption constraints on mobile and embedded devices further complicate the deployment of computationally intensive AI texture enhancement techniques.

Training data quality and availability continue to constrain the effectiveness of AI-based texture mapping systems. Insufficient high-quality texture datasets limit the ability of neural networks to generate realistic and contextually appropriate surface details. The lack of standardized texture quality metrics makes it difficult to evaluate and compare different AI texture mapping approaches objectively.

Current AI Texture Mapping Optimization Solutions

01 Texture coordinate generation and mapping techniques

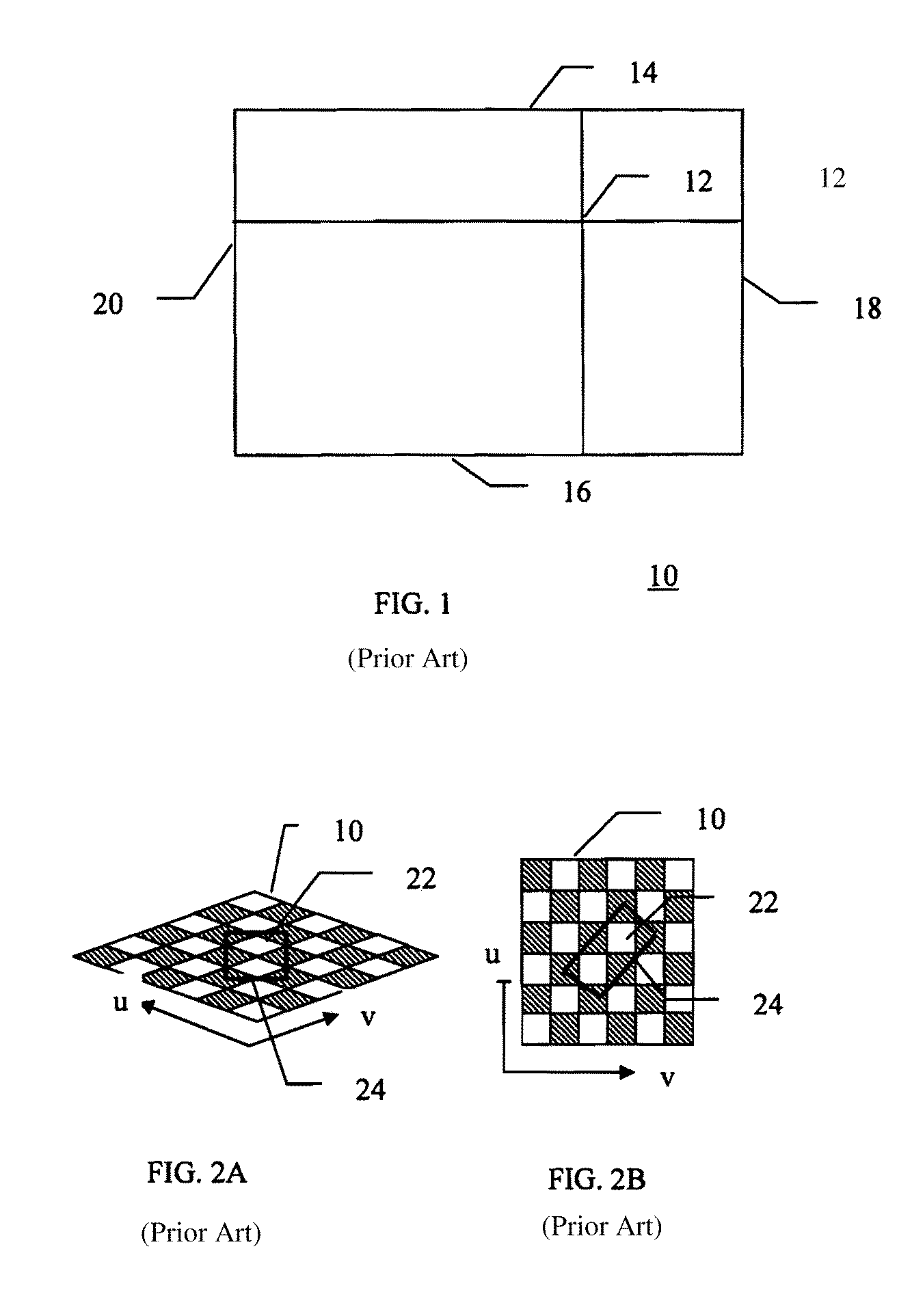

Methods for generating and applying texture coordinates to 3D objects involve calculating appropriate mapping parameters to ensure accurate texture placement on polygon surfaces. These techniques include automatic coordinate generation, perspective correction, and adaptive mapping strategies that account for object geometry and viewing angles. The approaches enable realistic texture application while maintaining computational efficiency.

- Texture coordinate generation and mapping techniques: Methods for generating and applying texture coordinates to 3D objects involve calculating appropriate mapping parameters to ensure accurate texture placement on polygon surfaces. These techniques include automatic coordinate generation, perspective correction, and adaptive mapping strategies that account for surface curvature and viewing angles. The approaches enable realistic texture application across complex geometric shapes.

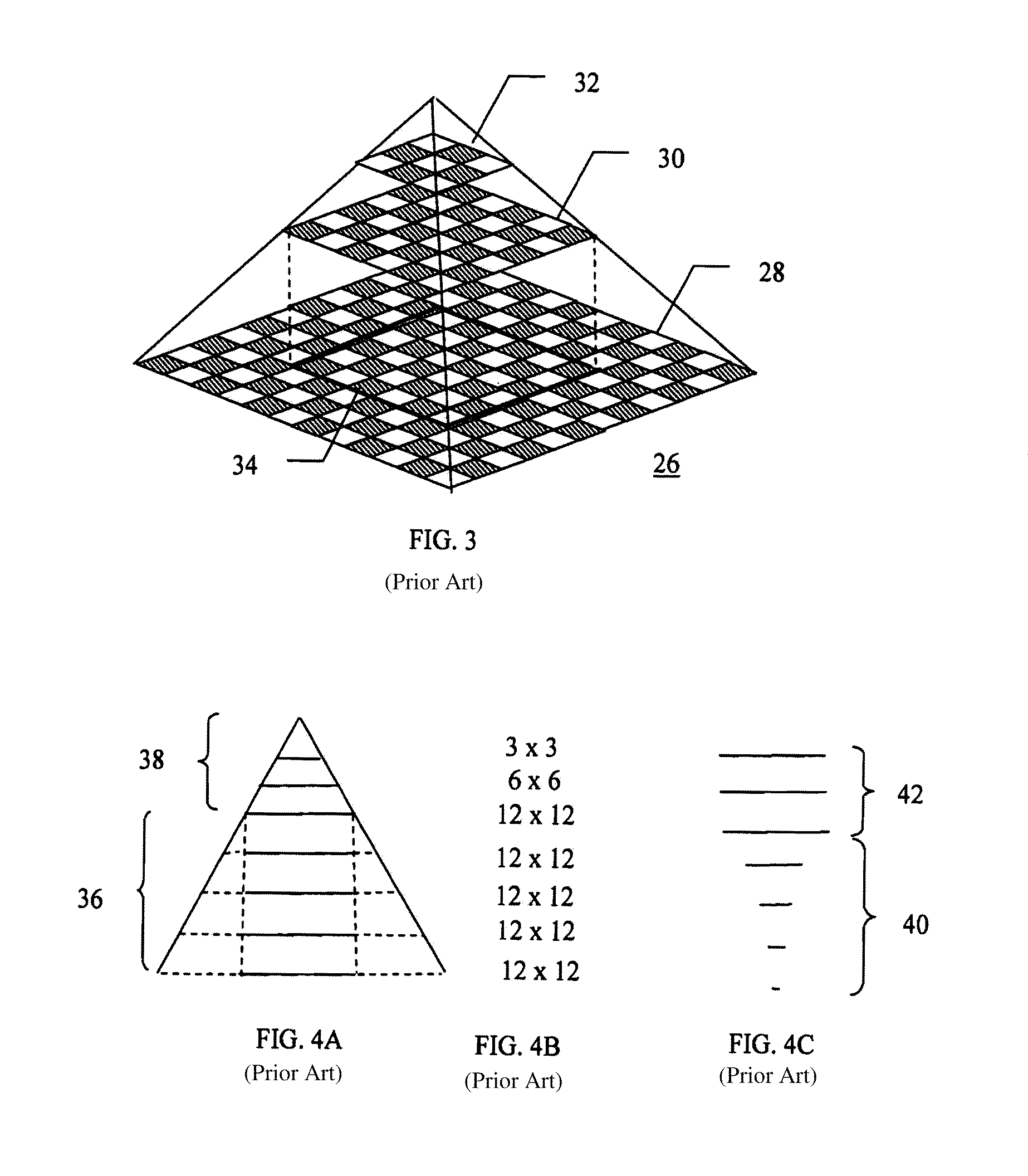

- Multi-resolution and level-of-detail texture mapping: Texture mapping systems that utilize multiple resolution levels to optimize rendering performance and visual quality. These methods involve creating and selecting from various texture detail levels based on viewing distance, screen space coverage, or computational resources. The techniques include mipmapping, texture filtering, and dynamic level-of-detail selection to balance image quality with processing efficiency.

- Texture compression and memory optimization: Approaches for reducing texture memory requirements while maintaining visual fidelity through compression algorithms and efficient storage formats. These methods enable handling of high-resolution textures within limited memory constraints by employing block-based compression, palette techniques, or adaptive encoding schemes. The solutions facilitate real-time decompression during rendering operations.

- Bump mapping and surface detail enhancement: Techniques for simulating surface irregularities and fine geometric details through texture-based methods without increasing polygon count. These approaches manipulate lighting calculations, normal vectors, or displacement values to create the appearance of complex surface features. The methods enhance visual realism by adding perceived depth and texture variation to rendered surfaces.

- Hardware-accelerated texture processing: Specialized graphics processing architectures and circuits designed to accelerate texture mapping operations. These implementations include dedicated texture units, parallel processing pipelines, and optimized memory access patterns for high-performance texture filtering and application. The hardware solutions enable real-time texture mapping for interactive graphics applications with minimal CPU overhead.
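The memory side of the multi-resolution and compression items can be quantified directly. BC1 stores each 4×4 texel block in 8 bytes and BC7 in 16 bytes, versus 4 bytes per texel for uncompressed RGBA8, and a full mip chain adds roughly one third to the base level. The helper below is a sketch (its name and format table are illustrative) that sums a full mip chain for a given format.

```python
import math

# Bytes per 4x4 block for two Direct3D block-compression formats.
BLOCK_BYTES = {"BC1": 8, "BC7": 16}


def mip_chain_bytes(width, height, fmt):
    """Total bytes for a texture plus its full mip chain down to 1x1."""
    total = 0
    w, h = width, height
    while True:
        if fmt == "RGBA8":
            total += w * h * 4  # 4 bytes per texel, uncompressed
        else:
            # Block formats round each level up to whole 4x4 blocks.
            total += math.ceil(w / 4) * math.ceil(h / 4) * BLOCK_BYTES[fmt]
        if w == 1 and h == 1:
            break
        w, h = max(w // 2, 1), max(h // 2, 1)
    return total


for fmt in ("RGBA8", "BC7", "BC1"):
    print(fmt, f"{mip_chain_bytes(4096, 4096, fmt) / 2**20:.1f} MiB")
```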

02 Multi-resolution and level-of-detail texture mapping

Techniques for managing texture detail at different viewing distances and resolutions utilize hierarchical texture representations and mipmapping strategies. These methods dynamically select appropriate texture resolution based on screen space coverage and distance from the viewer, optimizing both visual quality and rendering performance. The approaches include filtering techniques and blending between detail levels to avoid visual artifacts.

03 Texture filtering and anti-aliasing methods

Advanced filtering techniques improve texture quality by reducing aliasing artifacts and enhancing visual smoothness. These methods include bilinear, trilinear, and anisotropic filtering approaches that sample and blend texture pixels appropriately. The techniques address issues such as texture magnification, minification, and perspective distortion to produce high-quality rendered images.

04 Bump mapping and surface detail enhancement

Methods for adding surface detail without increasing geometric complexity utilize texture-based techniques to simulate surface irregularities and fine details. These approaches modify lighting calculations based on texture information to create the appearance of depth and surface variation. The techniques include normal mapping, displacement mapping, and parallax mapping for enhanced visual realism.

05 Hardware-accelerated texture processing

Specialized hardware architectures and processing pipelines optimize texture mapping operations for real-time rendering applications. These systems implement dedicated texture caching, memory management, and parallel processing capabilities to accelerate texture fetch and filtering operations. The implementations include efficient memory access patterns and optimized data structures for high-performance graphics rendering.
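One memory-access optimization such hardware relies on is storing texels in a tiled or swizzled order so that a filter footprint falls into few cache lines. Actual GPU layouts are vendor-specific; the sketch below uses Morton (Z-order) indexing, a common textbook example, to show that a 2×2 bilinear footprint maps to adjacent addresses where row-major order scatters it.

```python
def part1by1(n):
    """Spread the low 16 bits of n into the even bit positions of a 32-bit value."""
    n &= 0x0000FFFF
    n = (n | (n << 8)) & 0x00FF00FF
    n = (n | (n << 4)) & 0x0F0F0F0F
    n = (n | (n << 2)) & 0x33333333
    n = (n | (n << 1)) & 0x55555555
    return n


def morton_index(x, y):
    """Z-order index: bits of x in even positions, bits of y in odd positions."""
    return part1by1(x) | (part1by1(y) << 1)


# Row-major vs Morton addresses of a 2x2 bilinear footprint in a 256-texel-wide texture.
footprint = [(2, 2), (3, 2), (2, 3), (3, 3)]
print([y * 256 + x for x, y in footprint])         # row-major: two far-apart pairs
print([morton_index(x, y) for x, y in footprint])  # Morton: four consecutive indices
```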

Major Players in AI Rendering and Graphics Technology

Texture mapping optimization in AI rendering is a rapidly evolving competitive landscape characterized by significant technological advancement and substantial market growth potential. The industry is currently in an accelerated development phase, driven by increasing demand for high-quality visual content across gaming, entertainment, and enterprise applications. NVIDIA, AMD, and Intel dominate the hardware acceleration segment and Microsoft shapes the software layer through its DirectX graphics APIs, while companies like Tencent, NetEase, and specialized firms such as Shenzhen Rayvision Technology focus on software solutions and cloud-based rendering services. Technology maturity varies significantly across approaches: established GPU manufacturers like NVIDIA and AMD lead in hardware-accelerated solutions, while emerging players like MetaX Integrated Circuits are developing specialized AI rendering chips. Academic institutions including Zhejiang University and Beihang University contribute fundamental research, indicating strong theoretical foundations supporting commercial applications.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft has developed DirectML-based texture optimization solutions that leverage machine learning for intelligent texture mapping in AI rendering pipelines. Their approach includes neural texture compression algorithms that maintain visual fidelity while reducing memory bandwidth requirements. The company's Azure cloud rendering services incorporate AI-driven level-of-detail systems that dynamically adjust texture resolution based on viewing distance and importance. The DirectX 12 Ultimate feature set includes variable rate shading, mesh shaders, and sampler feedback; sampler feedback records which texture regions and mip levels were actually sampled, information that can guide texture streaming and AI-driven detail allocation.

Strengths: Strong software ecosystem integration, cloud-based scalability, cross-platform compatibility. Weaknesses: Dependency on cloud infrastructure, limited hardware-specific optimizations.

NVIDIA Corp.

Technical Solution: NVIDIA develops GPU architectures with dedicated texture units, RT cores for hardware ray tracing, and Tensor Cores for AI inference. Its RTX series GPUs combine hardware-accelerated ray tracing with DLSS, which uses AI upscaling to reconstruct high-resolution frames (and, from DLSS 3.5, AI-based denoising through Ray Reconstruction), enabling real-time rendering that preserves texture detail. The OptiX AI-Accelerated Denoiser uses deep learning models to remove noise from ray-traced images, helping preserve texture detail at low sample counts while reducing computational overhead. NVIDIA's Omniverse platform integrates AI-driven texture synthesis and mapping algorithms that can generate high-resolution textures from low-resolution inputs, improving rendering quality and performance in real-time applications.

Strengths: Industry-leading GPU performance, comprehensive AI rendering ecosystem, strong developer support. Weaknesses: High power consumption, premium pricing limits accessibility.

Core Innovations in Texture Mapping Patents

Apparatus and method for texture mapping using multiple levels of detail

Patent | Inactive | US6373495B1

Innovation

- The method converts the standard formula for multiple levels of detail into an equivalent formula using the plane equation of a triangle, allowing hardware implementation and utilizing a log2 look-up table for accurate computation, enabling high-precision texture mapping.
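A log2 look-up table suits hardware LOD computation because a floating-point value already carries its integer log2 in the exponent, so only the mantissa needs a small table. The sketch below illustrates that general idea; it is not the circuit described in US6373495B1, and the 6-bit table size is an arbitrary assumption that bounds the error below log2(1 + 1/64) ≈ 0.022.

```python
import math

LUT_BITS = 6
# log2(1 + i / 64) for every 6-bit mantissa prefix i.
LOG2_LUT = [math.log2(1.0 + i / (1 << LUT_BITS)) for i in range(1 << LUT_BITS)]


def log2_lut(x):
    """Approximate log2(x) for x > 0 as exponent + table[mantissa prefix]."""
    if x <= 0:
        raise ValueError("log2 is undefined for x <= 0")
    m, e = math.frexp(x)      # x = m * 2**e with 0.5 <= m < 1
    m, e = 2.0 * m, e - 1     # renormalize so that 1 <= m < 2
    index = int((m - 1.0) * (1 << LUT_BITS))  # keep the top 6 fraction bits
    return e + LOG2_LUT[index]


for x in (0.3, 1.7, 8.0, 123.4):
    print(x, round(log2_lut(x), 4), round(math.log2(x), 4))
```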

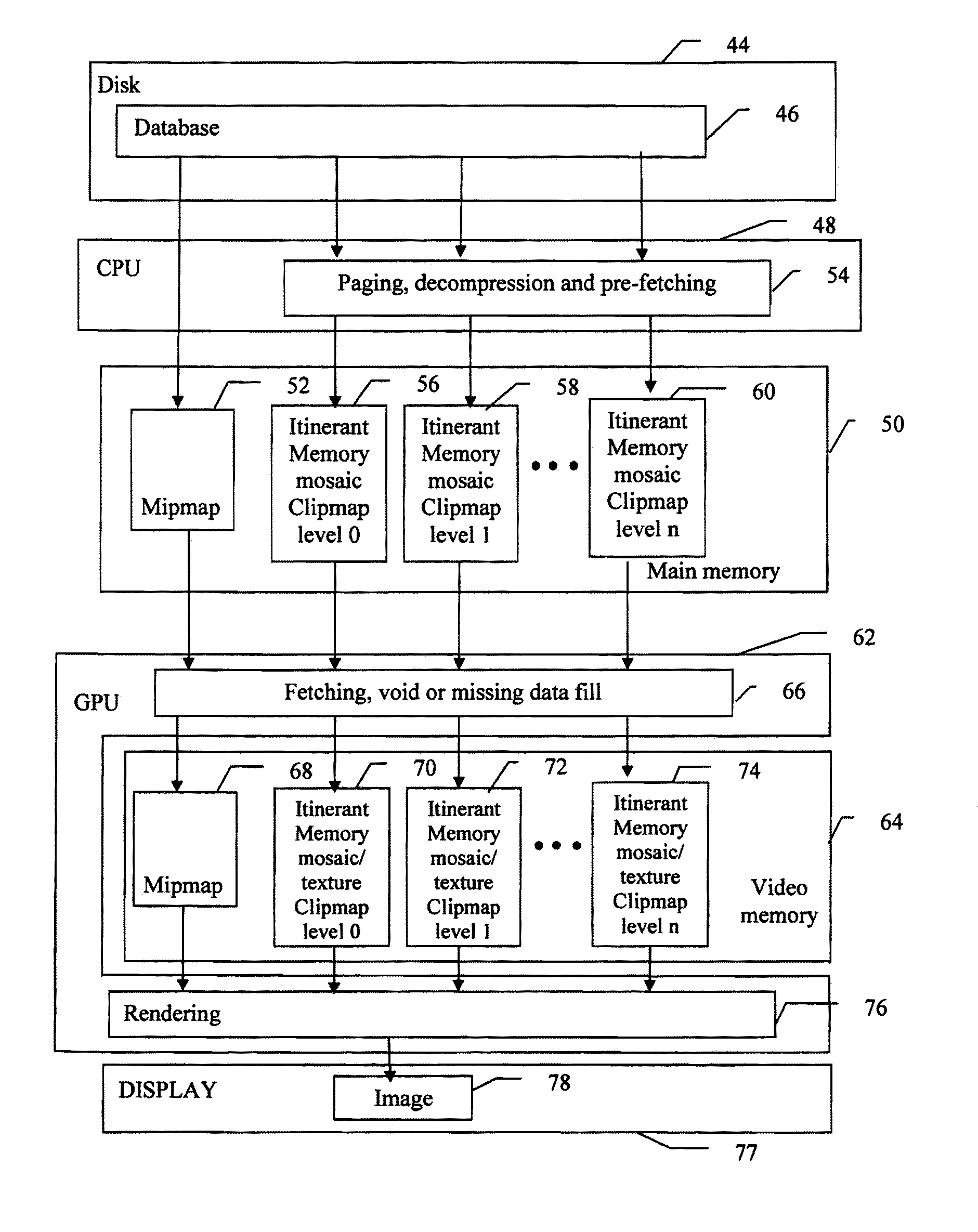

System and method for asynchronous continuous-level-of-detail texture mapping for large-scale terrain rendering

Patent | Inactive | US7626591B2

Innovation

- Implementing asynchronous multi-resolution texture mapping that allows independent updating of each clip-map level, using commodity GPUs with vertex and fragment shaders for efficient texture management, and employing toroidal mapping to reduce texel updates and optimize memory usage by interpolating between clip-map levels.
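Toroidal mapping can be sketched in a few lines: each clip-map level stores its window of the full texture with wrap-around addressing, so when the window moves only the texels entering it are uploaded, and they overwrite the slots of the texels that left. The function names and the set-based bookkeeping are illustrative assumptions, not the patented implementation.

```python
def toroidal_slot(u, v, size):
    """Physical slot of world texel (u, v) in a size x size clip-map level."""
    return u % size, v % size


def window(origin, size):
    """All world texels covered by a size x size window at the given origin."""
    ox, oy = origin
    return {(x, y) for x in range(ox, ox + size) for y in range(oy, oy + size)}


def texels_to_upload(old_origin, new_origin, size):
    """World texels that enter the window when its origin moves."""
    return window(new_origin, size) - window(old_origin, size)


# Moving a 4x4 window one texel to the right uploads a single column.
entering = texels_to_upload((0, 0), (1, 0), 4)
print(sorted(entering))
print(sorted({toroidal_slot(u, v, 4) for u, v in entering}))
```

A one-texel shift rewrites only `size` texels, all landing in the column just vacated, instead of re-copying the whole `size × size` level.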

Hardware Requirements for Real-Time AI Texture Processing

Real-time AI texture processing for optimized texture mapping demands sophisticated hardware architectures capable of handling intensive computational workloads while maintaining low latency performance. The primary hardware requirements center around specialized processing units, high-bandwidth memory systems, and efficient data transfer mechanisms that can support the complex algorithms involved in AI-driven texture enhancement and detail optimization.

Graphics Processing Units remain the cornerstone of real-time AI texture processing; professional applications typically call for at least 16GB of VRAM, and enterprise-level implementations for 24GB or more. The GPU should provide dedicated hardware for tensor operations, such as NVIDIA's Tensor Cores; NVIDIA's RT cores and the ray accelerators in AMD's RDNA 2 and later architectures accelerate ray tracing rather than neural-network math. Memory bandwidth requirements typically exceed 500 GB/s to keep texture and network data flowing between processing units and memory.

Central Processing Unit specifications play a crucial supporting role, requiring multi-core architectures with at least 16 cores operating at frequencies above 3.5 GHz. The CPU handles preprocessing tasks, scene management, and coordination between different processing units. Cache hierarchies must be optimized for frequent texture data access patterns, with L3 cache sizes of 32MB or larger proving beneficial for texture streaming operations.

Memory architecture represents a critical bottleneck in real-time AI texture processing systems. DDR5 system memory rated above 5600 MT/s provides the bandwidth needed for texture data streaming on the CPU side, while high-bandwidth memory such as HBM3, used on GPU and accelerator packages, offers far higher bandwidth for GPU-intensive operations. Total system memory requirements range from 64GB for development environments to 128GB or more for production systems handling complex scenes with multiple texture layers.
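Peak memory bandwidth follows from transfer rate times bus width. DDR5-5600 performs 5600 MT/s (megatransfers per second) over a 64-bit channel; the GPU configuration below (16 Gbps GDDR6 on a 256-bit bus) is an illustrative example. Both results are theoretical peaks, and sustained bandwidth is lower.

```python
def peak_bandwidth_gb_s(mega_transfers_per_s, bus_width_bits, channels=1):
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return mega_transfers_per_s * 1e6 * bytes_per_transfer * channels / 1e9


system = peak_bandwidth_gb_s(5600, 64, channels=2)  # dual-channel DDR5-5600
gpu = peak_bandwidth_gb_s(16000, 256)               # 16 Gbps GDDR6, 256-bit bus
print(f"system RAM {system:.1f} GB/s, GPU memory {gpu:.0f} GB/s")
```

The roughly sixfold gap is one reason frequently sampled texture data is kept resident in GPU memory rather than fetched from system RAM each frame.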

Storage subsystems must accommodate rapid texture asset loading and caching requirements. NVMe SSD arrays with sequential read speeds exceeding 7000 MB/s ensure minimal texture streaming delays. For large-scale operations, distributed storage solutions with parallel access capabilities become essential to maintain consistent performance across multiple concurrent rendering tasks.

Thermal management and power delivery systems require careful consideration due to the sustained high-performance demands of AI texture processing. Cooling solutions must handle thermal design power ratings of 400W or higher for GPU subsystems, while power supply units need sufficient capacity and clean power delivery to maintain stable operation under varying computational loads.

Performance Metrics for AI Rendering Quality Assessment

Establishing comprehensive performance metrics for AI rendering quality assessment requires a multi-dimensional evaluation framework that addresses both objective technical parameters and subjective visual quality indicators. Traditional rendering metrics often fall short when applied to AI-enhanced texture mapping systems, necessitating specialized measurement approaches that account for the unique characteristics of machine learning-driven rendering processes.

Objective quality metrics form the foundation of AI rendering assessment, encompassing traditional measures such as Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM), and Mean Squared Error (MSE). However, these conventional metrics must be supplemented with AI-specific measurements including texture coherence scores, detail preservation ratios, and temporal consistency indices. Advanced metrics like Learned Perceptual Image Patch Similarity (LPIPS) and Feature Similarity Index (FSIM) provide more nuanced evaluations that better correlate with human visual perception.
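PSNR is defined from MSE as 10·log10(MAX²/MSE), where MAX is the largest possible pixel value (255 for 8-bit channels). The minimal sketch below operates on flattened grayscale pixel lists with placeholder values; SSIM, LPIPS, and FSIM require more involved implementations and are usually taken from established libraries.

```python
import math


def mse(reference, candidate):
    """Mean squared error between two equally sized, flattened images."""
    if len(reference) != len(candidate) or not reference:
        raise ValueError("images must be non-empty and the same size")
    return sum((r - c) ** 2 for r, c in zip(reference, candidate)) / len(reference)


def psnr(reference, candidate, max_value=255.0):
    """Peak signal-to-noise ratio in dB; infinite for identical images."""
    error = mse(reference, candidate)
    if error == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / error)


# Placeholder 8-bit pixel rows: a reference render and an AI-upscaled result.
reference = [52, 55, 61, 66, 70, 61, 64, 73]
upscaled = [54, 55, 60, 67, 69, 62, 64, 71]
print(f"MSE {mse(reference, upscaled):.3f}, PSNR {psnr(reference, upscaled):.2f} dB")
```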

Performance efficiency metrics constitute another critical dimension, measuring computational overhead, memory utilization, and rendering throughput. Key indicators include texture cache hit rates, GPU memory bandwidth utilization, and frame generation latency. These metrics become particularly important when evaluating real-time AI rendering systems where performance constraints directly impact user experience.
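Frame-generation latency is usually summarized with both an average and a tail statistic, because occasional texture-streaming stalls barely move the average. The sketch below reports average FPS and a nearest-rank 99th-percentile frame time; percentile and "1% low" definitions differ between benchmarking tools, so this is one assumed convention, and the frame times are synthetic.

```python
def frame_time_stats(frame_times_ms):
    """Average FPS and nearest-rank 99th-percentile frame time."""
    ordered = sorted(frame_times_ms)
    n = len(ordered)
    avg_ms = sum(ordered) / n
    rank = max(1, (99 * n + 99) // 100)  # integer ceil(0.99 * n)
    return {"avg_fps": 1000.0 / avg_ms, "p99_ms": ordered[rank - 1]}


# 98 smooth frames at 10 ms plus two 50 ms streaming hitches.
stats = frame_time_stats([10.0] * 98 + [50.0] * 2)
print(stats)
```

The average stays near 93 FPS, while the 99th-percentile frame time exposes the 50 ms hitches.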

Perceptual quality assessment introduces subjective evaluation methodologies that capture human visual preferences and quality judgments. This includes conducting controlled user studies, implementing Mean Opinion Score (MOS) evaluations, and utilizing crowdsourced quality assessments. Advanced perceptual metrics leverage deep learning models trained on human visual system responses to predict subjective quality scores automatically.
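A Mean Opinion Score is the mean of participants' ratings on a fixed scale (commonly 1 to 5), and it is more informative when reported with a confidence interval. The sketch below uses a normal approximation (z = 1.96 for 95%); for small panels a Student t critical value is more appropriate, and the ratings shown are placeholder values, not study data.

```python
import math
import statistics


def mos_with_ci(ratings, z=1.96):
    """Mean opinion score with a normal-approximation confidence interval."""
    mean = statistics.mean(ratings)
    if len(ratings) < 2:
        return mean, (mean, mean)
    half_width = z * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return mean, (mean - half_width, mean + half_width)


# Placeholder 1-5 ratings for one rendering condition.
score, (low, high) = mos_with_ci([4, 5, 3, 4, 4, 5, 3, 4])
print(f"MOS {score:.2f} (95% CI {low:.2f} to {high:.2f})")
```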

Specialized metrics for texture mapping evaluation focus on detail fidelity, aliasing artifacts, and texture streaming efficiency. These include measurements of texture resolution scaling accuracy, mipmap transition smoothness, and anisotropic filtering effectiveness. AI-specific texture metrics evaluate the consistency of generated details across different viewing angles and lighting conditions.

Benchmark standardization ensures reproducible and comparable quality assessments across different AI rendering systems. This involves establishing standardized test scenes, reference datasets, and evaluation protocols that enable fair comparison between various texture mapping optimization approaches and facilitate systematic performance tracking over time.
