Optimizing Calibration Processes in Specialized Analog Applications

MAR 31, 2026 | 9 MIN READ

Analog Calibration Background and Technical Objectives

Analog calibration has become a cornerstone technology in precision electronic systems, with origins in the measurement instruments of the 1940s and 1950s. Initially developed for military and aerospace applications, analog calibration processes have been transformed by increasing demands for accuracy, reliability, and automation across diverse industrial sectors.

The historical progression of analog calibration reflects the broader evolution of electronic systems. Early calibration methods relied heavily on manual adjustments and reference standards, requiring skilled technicians to perform time-intensive procedures. The introduction of digital control systems in the 1980s marked a pivotal shift, enabling more sophisticated calibration algorithms and automated correction mechanisms.

Contemporary analog calibration encompasses multiple specialized domains, including precision instrumentation, automotive sensors, medical devices, and telecommunications infrastructure. Each application domain presents unique challenges related to environmental conditions, accuracy requirements, and operational constraints. The automotive industry, for instance, demands calibration solutions that maintain precision across extreme temperature variations and mechanical stress, while medical applications require exceptional stability and traceability to regulatory standards.

Current technological trends indicate a convergence toward intelligent calibration systems that incorporate machine learning algorithms and adaptive correction mechanisms. These systems can dynamically adjust calibration parameters based on real-time performance data, environmental conditions, and aging characteristics of analog components. The integration of Internet of Things connectivity further enables remote calibration monitoring and predictive maintenance capabilities.

The primary technical objectives driving modern analog calibration optimization focus on achieving higher accuracy levels while reducing calibration time and complexity. Target specifications increasingly demand sub-percent accuracy across extended temperature ranges, with calibration procedures that can be executed in production environments without specialized equipment or extensive operator training.

Automation represents another critical objective, as manufacturers seek to eliminate human error and reduce labor costs associated with calibration processes. Advanced calibration systems aim to achieve fully automated operation with minimal human intervention, incorporating self-diagnostic capabilities and automatic compensation for component variations and environmental factors.

Long-term strategic goals include developing calibration methodologies that can adapt to emerging analog technologies, such as advanced sensor fusion systems and next-generation power management circuits. These objectives require fundamental advances in calibration theory, measurement techniques, and correction algorithms to address the increasing complexity of modern analog systems.

Market Demand for Precision Analog Calibration Solutions

The precision analog calibration market is experiencing unprecedented growth driven by the increasing complexity and performance requirements of modern electronic systems. Industries such as aerospace, defense, telecommunications, and medical devices demand exceptional accuracy and reliability from their analog components, creating substantial market opportunities for advanced calibration solutions.

Automotive electronics represents one of the fastest-growing segments, particularly with the proliferation of electric vehicles and autonomous driving systems. These applications require precise sensor calibration for critical functions including battery management systems, motor control units, and safety-critical sensors. The stringent automotive qualification standards and zero-defect requirements have elevated the importance of robust calibration processes throughout the product lifecycle.

The medical device sector continues to drive significant demand for precision calibration solutions. Diagnostic equipment, patient monitoring systems, and therapeutic devices must maintain exceptional accuracy to ensure patient safety and regulatory compliance. The trend toward portable and wearable medical devices has intensified the need for efficient calibration processes that can accommodate miniaturized analog circuits while maintaining measurement precision.

Industrial automation and process control applications constitute another major market driver. Manufacturing facilities increasingly rely on precise analog measurements for quality control, process optimization, and predictive maintenance. The Industry 4.0 transformation has amplified the demand for calibration solutions that can integrate seamlessly with digital manufacturing systems while providing real-time calibration status and traceability.

Telecommunications infrastructure, particularly with the deployment of advanced wireless technologies, requires sophisticated calibration approaches for RF and mixed-signal components. Base stations, network equipment, and test instrumentation demand calibration solutions that can handle wide frequency ranges and complex modulation schemes while maintaining cost-effectiveness in high-volume production environments.

The market landscape reveals a clear preference for automated calibration solutions that reduce manual intervention and improve repeatability. Companies are increasingly seeking calibration systems that can adapt to different product variants, support remote monitoring capabilities, and provide comprehensive data logging for quality assurance and regulatory compliance purposes.

Emerging applications in renewable energy systems, particularly solar inverters and wind turbine control systems, are creating new market segments for precision analog calibration. These applications require long-term stability and accuracy under harsh environmental conditions, driving demand for advanced calibration methodologies that can ensure sustained performance over extended operational periods.

Current Calibration Challenges in Specialized Analog Systems

Specialized analog systems face unprecedented calibration challenges as performance requirements continue to escalate across critical applications. Traditional calibration methodologies, originally designed for general-purpose analog circuits, prove inadequate when confronted with the stringent accuracy demands of precision instrumentation, high-frequency communications, and safety-critical automotive systems. The complexity of modern analog designs, incorporating multiple signal paths and sophisticated compensation mechanisms, creates calibration bottlenecks that significantly impact manufacturing throughput and product reliability.

Temperature-dependent variations represent one of the most persistent calibration challenges in specialized analog applications. Advanced analog circuits exhibit complex thermal behaviors that cannot be adequately addressed through conventional single-point or linear compensation techniques. The interaction between temperature coefficients of different circuit elements creates non-linear drift patterns that require sophisticated multi-dimensional calibration approaches, often necessitating extensive characterization across wide temperature ranges and multiple operating conditions.
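One common step beyond single-point or linear compensation is a multi-point lookup table with interpolation between characterized temperatures. The sketch below is a minimal illustration of that idea, not a method taken from any specific device: the table values, the names `CAL_TABLE`, `offset_error_mv`, and `compensate`, and the choice to clamp outside the characterized range are all assumptions made for the example.

```python
from bisect import bisect_right

# Hypothetical characterization data: (temperature in deg C, offset error in mV).
# The values are illustrative only, not measurements from a real device.
CAL_TABLE = [(-40.0, 1.8), (0.0, 0.6), (25.0, 0.0), (85.0, 0.9), (125.0, 2.4)]


def offset_error_mv(temp_c, table=CAL_TABLE):
    """Interpolate the expected offset error at temp_c from the table."""
    temps = [t for t, _ in table]
    # Clamp outside the characterized range instead of extrapolating.
    if temp_c <= temps[0]:
        return table[0][1]
    if temp_c >= temps[-1]:
        return table[-1][1]
    i = bisect_right(temps, temp_c)
    (t0, e0), (t1, e1) = table[i - 1], table[i]
    return e0 + (e1 - e0) * (temp_c - t0) / (t1 - t0)


def compensate(reading_mv, temp_c):
    """Subtract the interpolated offset error from a raw reading."""
    return reading_mv - offset_error_mv(temp_c)
```

A piecewise-linear table follows a curved drift profile more closely than a single slope, at the cost of characterizing each device (or lot) at several temperatures. Circuits with stronger non-linearity may need denser tables or a polynomial model.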

Process variation compensation has emerged as another critical challenge, particularly in high-volume manufacturing environments. Modern semiconductor processes exhibit statistical variations that affect key analog parameters such as threshold voltages, transconductance, and parasitic capacitances. These variations manifest differently across various circuit topologies, making it extremely difficult to develop universal calibration strategies that maintain consistent performance across production lots while minimizing calibration time and complexity.

Aging-related parameter drift poses long-term calibration challenges that extend beyond initial manufacturing calibration. Specialized analog systems deployed in harsh environments or mission-critical applications must maintain performance specifications over extended operational lifetimes. Traditional calibration approaches fail to account for time-dependent degradation mechanisms, necessitating the development of adaptive calibration strategies that can compensate for gradual parameter shifts without requiring system downtime or manual intervention.
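As a rough illustration of an adaptive strategy for gradual drift, the sketch below tracks a slowly changing offset by blending periodic measurements of a known reference into a running estimate (an exponential moving average). The class name `OffsetTracker`, the `alpha` smoothing factor, and the assumption that a trusted reference can be sampled in the background are all hypothetical choices for the example.

```python
class OffsetTracker:
    """Track a slowly drifting offset from periodic reference measurements."""

    def __init__(self, initial_offset=0.0, alpha=0.1):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.offset = initial_offset
        self.alpha = alpha  # smaller values reject more noise but adapt slower

    def update(self, measured_reference, known_reference):
        """Blend the newly observed offset error into the running estimate."""
        observed = measured_reference - known_reference
        self.offset += self.alpha * (observed - self.offset)
        return self.offset

    def correct(self, reading):
        """Apply the current offset estimate to a raw reading."""
        return reading - self.offset
```

Because each update is a single multiply-add, a tracker of this kind can run between normal conversions without taking the system offline. It only compensates offset, though; gain drift would need a second reference point.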

The increasing integration density of modern analog systems introduces cross-coupling effects that complicate calibration procedures. Signal interference between adjacent circuits, substrate coupling, and power supply interactions create interdependencies that make sequential calibration approaches ineffective. These coupling effects often require simultaneous multi-parameter optimization techniques that significantly increase calibration complexity and computational requirements, challenging existing calibration infrastructure and methodologies.
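To make the difference between sequential and simultaneous calibration concrete, here is a minimal sketch for two cross-coupled channels. It assumes a linear model, measured = G * input + offset, where G is a 2x2 matrix whose off-diagonal terms represent coupling; that model, the three-stimulus procedure, and the function names are illustrative assumptions rather than a description of any particular product.

```python
def fit_coupled_model(m_zero, m_ch0, m_ch1, full_scale=1.0):
    """Estimate the offset vector and 2x2 gain/coupling matrix.

    m_zero: readings (ch0, ch1) with both inputs at zero
    m_ch0:  readings with full_scale applied to input 0 only
    m_ch1:  readings with full_scale applied to input 1 only
    """
    offset = m_zero
    # Column j of the matrix is the response of both channels to input j.
    g00 = (m_ch0[0] - offset[0]) / full_scale
    g10 = (m_ch0[1] - offset[1]) / full_scale
    g01 = (m_ch1[0] - offset[0]) / full_scale
    g11 = (m_ch1[1] - offset[1]) / full_scale
    return offset, ((g00, g01), (g10, g11))


def correct(reading, offset, gain):
    """Invert measured = G * input + offset for a two-channel reading."""
    (g00, g01), (g10, g11) = gain
    det = g00 * g11 - g01 * g10
    if abs(det) < 1e-12:
        raise ValueError("coupling matrix is singular; model cannot be inverted")
    d0 = reading[0] - offset[0]
    d1 = reading[1] - offset[1]
    x0 = (g11 * d0 - g01 * d1) / det
    x1 = (-g10 * d0 + g00 * d1) / det
    return x0, x1
```

Calibrating each channel on its own would recover only the diagonal gains and leave the coupling terms as residual error. Solving for the full matrix removes them, but the number of terms grows with the square of the channel count, which is where the added computational cost comes from.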

Bandwidth and speed requirements in high-performance analog applications create additional calibration constraints. Fast settling times and wide bandwidth specifications limit the available calibration windows, making it difficult to perform comprehensive parameter adjustments without impacting system performance. The need for real-time calibration in dynamic operating environments further complicates the challenge, requiring calibration algorithms that can operate within strict timing constraints while maintaining calibration accuracy and stability.

Existing Calibration Optimization Solutions

01 Automated calibration using machine learning and artificial intelligence

Advanced calibration processes utilize machine learning algorithms and artificial intelligence to automatically optimize calibration parameters. These systems can analyze historical calibration data, identify patterns, and predict optimal calibration settings. The AI-driven approach reduces manual intervention, improves accuracy, and adapts to changing conditions over time. Neural networks and deep learning models are employed to continuously refine calibration procedures based on real-time feedback and performance metrics.

- Real-time adaptive calibration methods: Dynamic calibration techniques that continuously monitor system performance and adjust calibration parameters in real-time without interrupting operations. These methods employ sensors and feedback loops to detect deviations from optimal performance and automatically compensate for environmental changes, component aging, or operational variations. The adaptive approach ensures sustained accuracy and reduces the frequency of manual recalibration cycles.

- Multi-parameter simultaneous calibration optimization: Comprehensive calibration strategies that optimize multiple parameters concurrently rather than sequentially. This approach considers interdependencies between different calibration factors and uses mathematical modeling to find optimal solutions across all parameters simultaneously. The method significantly reduces calibration time and improves overall system performance by accounting for complex interactions between various calibration elements.

- Cloud-based calibration data management and optimization: Calibration systems that leverage cloud computing infrastructure to store, analyze, and optimize calibration data across multiple devices or locations. These platforms enable centralized management of calibration procedures, facilitate data sharing between systems, and provide advanced analytics for identifying optimization opportunities. Remote calibration capabilities and version control ensure consistency and traceability across distributed operations.

- Statistical process control for calibration optimization: Application of statistical methods and process control techniques to monitor and optimize calibration procedures. These approaches use statistical analysis to identify trends, detect anomalies, and establish optimal calibration intervals. Control charts, variance analysis, and uncertainty quantification methods are employed to minimize calibration errors and ensure measurement reliability while reducing unnecessary calibration frequency.
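The control-chart idea in the statistical process control item above can be sketched in a few lines. This is a simplified Shewhart-style check that derives limits from the sample standard deviation of an in-control baseline; many individuals charts estimate spread from the moving range instead, so treat the function names and the `k=3.0` default as assumptions for illustration.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits from in-control baseline data."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline points")
    center = mean(baseline)
    spread = stdev(baseline)  # sample standard deviation of the baseline
    return center - k * spread, center, center + k * spread


def out_of_control(values, limits):
    """Return the indices of points that fall outside the control limits."""
    lower, _, upper = limits
    return [i for i, v in enumerate(values) if v < lower or v > upper]
```

Applied to periodic check-standard readings, a result with no flagged points supports keeping or lengthening the current calibration interval, while flagged points are a signal to investigate or recalibrate early.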

02 Multi-sensor fusion calibration techniques

Optimization of calibration processes through the integration of multiple sensors and measurement devices. This approach involves cross-referencing data from various sensors to achieve higher precision and reliability. The fusion technique compensates for individual sensor limitations and environmental variations by combining complementary measurement sources. Advanced algorithms process the multi-sensor data to generate unified calibration parameters that enhance overall system performance.

03 Real-time adaptive calibration systems

Dynamic calibration methods that continuously monitor system performance and adjust calibration parameters in real-time. These systems detect drift, environmental changes, and operational variations, automatically triggering recalibration when necessary. The adaptive approach minimizes downtime and maintains optimal accuracy throughout the operational lifecycle. Feedback loops and closed-loop control mechanisms ensure that calibration remains current without requiring scheduled maintenance intervals.

04 Statistical process control for calibration optimization

Application of statistical methods and process control techniques to optimize calibration procedures. This includes the use of control charts, variance analysis, and uncertainty quantification to identify optimal calibration intervals and parameters. Statistical models help determine the minimum calibration frequency required to maintain specified accuracy levels while reducing unnecessary calibration cycles. Quality metrics and performance indicators guide continuous improvement of calibration processes.

05 Cloud-based calibration management and optimization

Centralized calibration management systems utilizing cloud computing infrastructure to optimize calibration processes across multiple devices and locations. These platforms enable remote calibration, data storage, and analysis of calibration records. Cloud-based systems facilitate standardization of calibration procedures, enable predictive maintenance, and provide comprehensive traceability. The networked approach allows for sharing of calibration data and best practices across distributed operations.

Key Players in Analog Calibration Technology Market

Optimizing calibration processes for specialized analog applications is a mature yet rapidly evolving market segment, driven by rising precision demands across the automotive, industrial, and IoT sectors. The competitive landscape is dominated by established semiconductor companies, including Analog Devices, Texas Instruments, Infineon Technologies, and Microchip Technology, which bring decades of analog expertise and broad calibration IP portfolios. These market leaders compete alongside specialized players such as Rambus and Omni Design Technologies, while foundry partners such as Taiwan Semiconductor Manufacturing enable implementations on advanced process nodes. Technology maturity varies significantly: companies such as Siemens and ABB focus on industrial automation calibration, while newer entrants from China, including Huawei and Origin Quantum, explore next-generation approaches. Market consolidation continues, as evidenced by acquisitions such as Infineon's purchase of Cypress Semiconductor, indicating strong growth potential despite technical complexity barriers.

Analog Devices, Inc.

Technical Solution: ADI provides comprehensive calibration solutions for specialized analog applications through their precision reference voltage sources, high-resolution ADCs, and automated calibration algorithms. Their approach includes factory calibration using laser trimming techniques to achieve sub-0.1% accuracy, runtime self-calibration capabilities through built-in reference circuits, and temperature compensation algorithms that maintain performance across -40°C to +125°C operating ranges. The company's calibration methodology incorporates machine learning algorithms to predict and compensate for component aging effects, ensuring long-term stability in critical applications such as medical instrumentation and aerospace systems.

Strengths: Industry-leading precision with sub-0.1% accuracy, comprehensive temperature compensation, advanced aging prediction algorithms. Weaknesses: Higher cost compared to standard solutions, complex implementation requirements for full feature utilization.

Microchip Technology, Inc.

Technical Solution: Microchip's calibration strategy emphasizes embedded calibration solutions integrated within their microcontroller and analog IC portfolios. Their approach includes factory calibration using automated test equipment to characterize device parameters, software-based calibration routines that can be customized for specific applications, and non-volatile memory storage for calibration constants. The solution features temperature-aware calibration algorithms that adjust parameters based on integrated temperature sensors, and supports field calibration capabilities allowing end-users to perform recalibration procedures. Their calibration framework is designed for cost-sensitive applications while maintaining adequate precision for industrial and automotive requirements.

Strengths: Cost-effective solutions suitable for volume production, flexible software-based calibration, integrated temperature sensing. Weaknesses: Lower precision compared to specialized calibration ICs, limited performance in extreme environmental conditions.
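The factory-calibration flow described for embedded devices (characterize the part, then store constants for software to apply) often reduces to a two-point gain and offset fit. The sketch below shows that generic technique only; it is not Microchip's implementation, and the function names and example values are hypothetical.

```python
def two_point_constants(raw_low, raw_high, ref_low, ref_high):
    """Derive gain and offset so that corrected = gain * raw + offset."""
    if raw_high == raw_low:
        raise ValueError("calibration points must differ")
    gain = (ref_high - ref_low) / (raw_high - raw_low)
    offset = ref_low - gain * raw_low
    return gain, offset


def apply_calibration(raw, gain, offset):
    """Convert a raw reading to engineering units using stored constants."""
    return gain * raw + offset
```

For example, if raw ADC codes of 100 and 3900 were recorded while applying reference inputs of 0.1 V and 3.0 V, the two constants returned here would be the values written to non-volatile memory, and `apply_calibration` would run on every reading in the field. A two-point fit corrects gain and offset errors but not non-linearity.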

Core Patents in Advanced Analog Calibration Techniques

Analog preamplifier calibration

Patent (inactive): US20070096815A1

Innovation

- A combined analog and digital calibration circuit that uses a digitally controlled voltage divider with a controllable current source and a diode element to continuously adjust the back gate control voltage, allowing for precise and efficient control of output offset voltage in differential amplifier circuits.

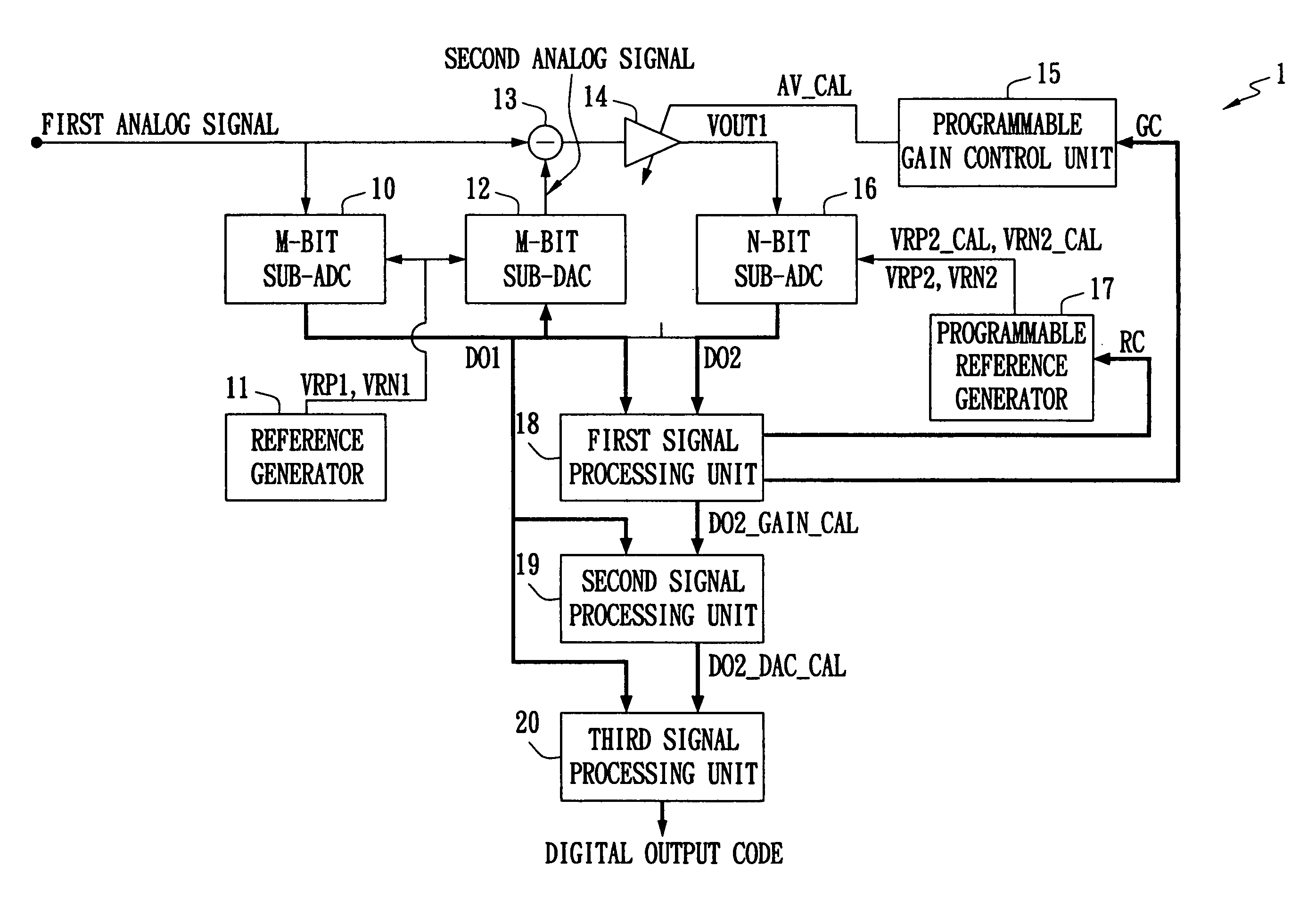

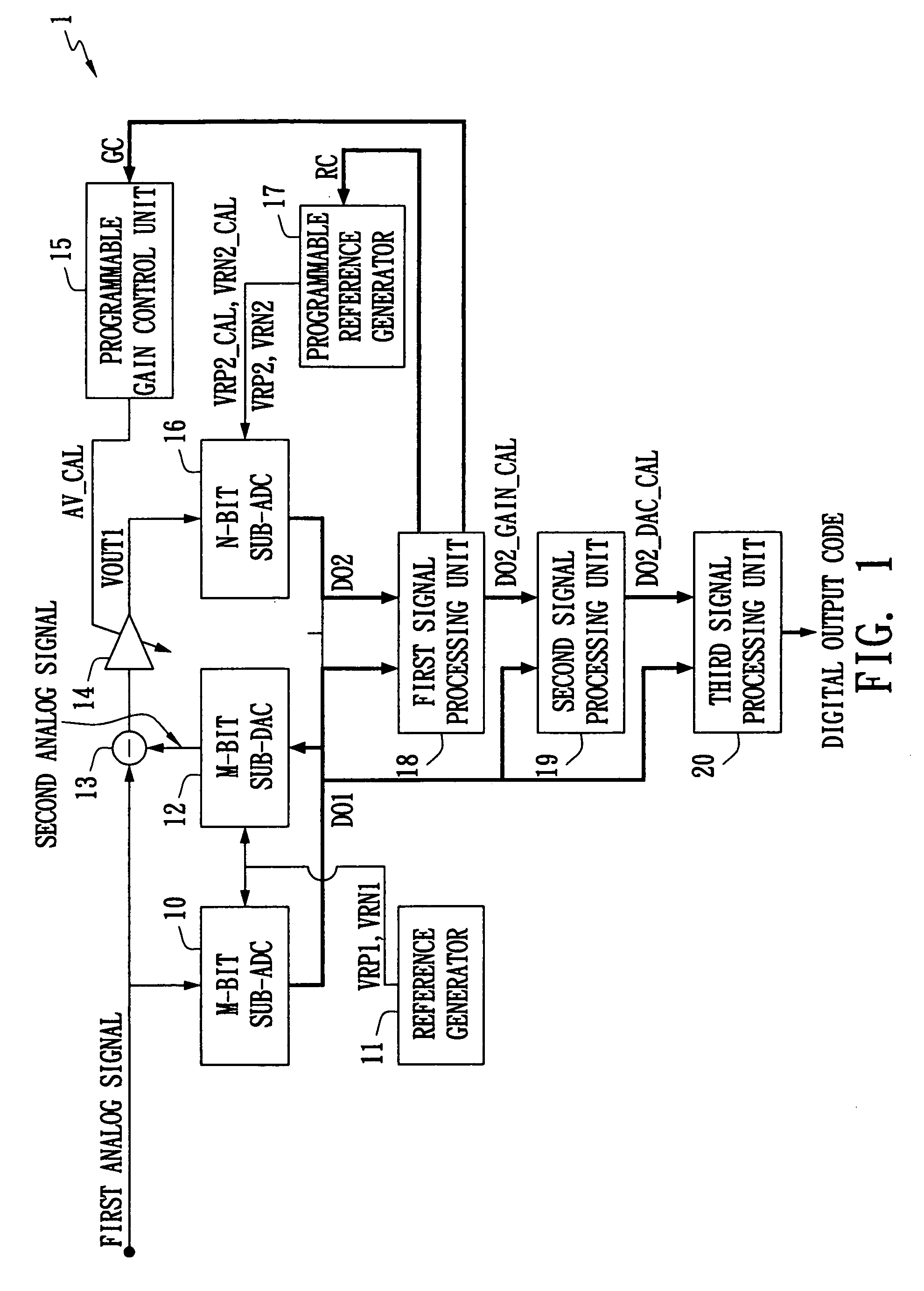

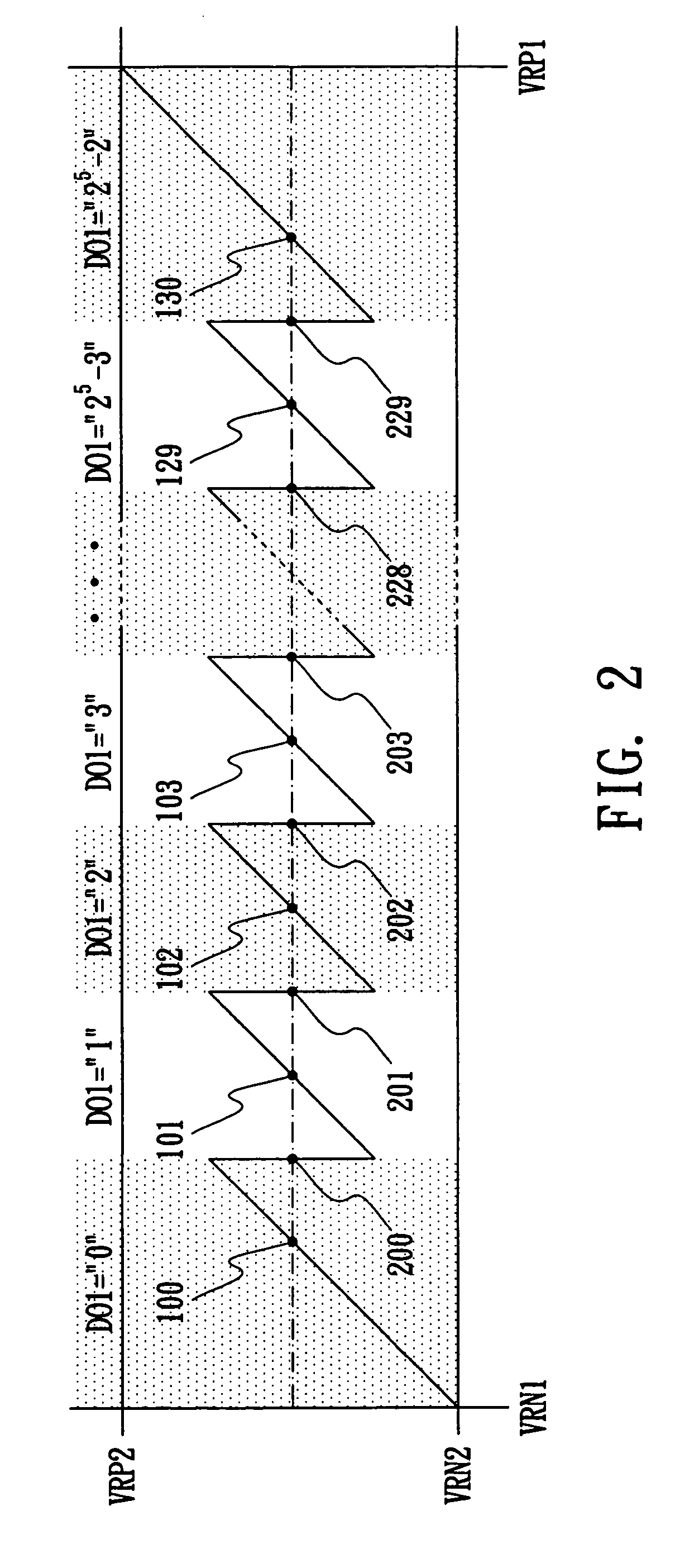

Multi-step analog/digital converter and on-line calibration method thereof

Patent (inactive): US7142138B2

Innovation

- A multi-step ADC with an on-line calibration method that uses two background calibration algorithms to perform residue amplifier gain error and DAC non-linearity calibrations, reducing the need for complex digital signal processing and additional structures by integrating a first signal processing unit, a second signal processing unit, a programmable gain control unit, and a programmable reference voltage generator.

Standards and Compliance for Analog Calibration

Standards and compliance frameworks form the backbone of reliable analog calibration processes, establishing the fundamental requirements that ensure measurement accuracy, traceability, and consistency across different applications and industries. The regulatory landscape for analog calibration is primarily governed by international standards organizations, with ISO/IEC 17025 serving as the cornerstone standard for testing and calibration laboratories. This standard defines the general requirements for competence, impartiality, and consistent operation of laboratories performing calibration activities.

The IEEE standards family, particularly IEEE 1057 and IEEE 1241, provides specific guidelines for digitizing waveform recorders and analog-to-digital converter testing, establishing critical parameters for dynamic performance measurements. These standards define test methods, performance specifications, and uncertainty calculations that are essential for specialized analog applications requiring high precision and reliability.
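One dynamic performance figure commonly reported in ADC testing is the effective number of bits (ENOB). As a hedged illustration, the sketch below uses the widely quoted approximation for a full-scale sine-wave input, ENOB = (SINAD - 1.76) / 6.02 with SINAD in dB; the function names are hypothetical, and the standards themselves should be consulted for the exact test conditions and definitions.

```python
def enob_from_sinad(sinad_db):
    """Approximate effective number of bits from SINAD (dB), full-scale sine input."""
    return (sinad_db - 1.76) / 6.02


def ideal_sinad_db(bits):
    """SINAD (dB) of an ideal quantizer with the given resolution."""
    return 6.02 * bits + 1.76
```

Comparing a measured SINAD against `ideal_sinad_db` for the converter's nominal resolution gives a quick view of how much performance is lost to noise and distortion, which is the kind of gap calibration aims to narrow.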

Industry-specific compliance requirements add additional layers of complexity to analog calibration processes. The aerospace sector mandates adherence to AS9100 quality management systems, while medical device manufacturers must comply with FDA 21 CFR Part 820 and ISO 13485 standards. These regulations impose stringent documentation requirements, validation protocols, and risk management procedures that directly impact calibration methodologies and frequency intervals.

Metrological traceability represents a fundamental compliance requirement, demanding that all calibration measurements be traceable to national or international measurement standards through an unbroken chain of comparisons. This requirement necessitates the use of certified reference standards and participation in proficiency testing programs to demonstrate measurement capability and maintain accreditation status.

Documentation and record-keeping compliance requirements mandate comprehensive calibration certificates that include measurement uncertainty statements, environmental conditions, and calibration procedures used. The growing emphasis on digital transformation has led to increased adoption of electronic calibration records and automated compliance reporting systems, which must still meet the same rigorous standards for data integrity and audit trails established by traditional paper-based systems.
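The measurement uncertainty statement on a calibration certificate is typically built from an uncertainty budget. A minimal sketch of the usual combination rule follows, assuming the components are independent standard uncertainties already expressed in the same unit with unit sensitivity coefficients; the function names and the default coverage factor `k=2.0` are assumptions for illustration.

```python
import math


def combined_standard_uncertainty(components):
    """Root-sum-square of independent standard uncertainty components."""
    return math.sqrt(sum(u * u for u in components))


def expanded_uncertainty(components, k=2.0):
    """Expanded uncertainty U = k * u_c (k = 2 is a common coverage factor)."""
    return k * combined_standard_uncertainty(components)
```

With components of 0.3 mV and 0.4 mV, for instance, the combined standard uncertainty is 0.5 mV and the expanded uncertainty at k = 2 is 1.0 mV. Correlated components or non-unit sensitivity coefficients need the fuller treatment described in the GUM.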

Cost-Benefit Analysis of Calibration Optimization

The economic evaluation of calibration optimization in specialized analog applications reveals significant financial implications across multiple operational dimensions. Initial implementation costs typically range from $50,000 to $500,000 depending on system complexity and automation level. These investments encompass hardware upgrades, software licensing, training programs, and integration services. However, the return on investment becomes apparent through reduced calibration cycle times, which can decrease from hours to minutes in automated systems.

Labor cost reduction represents the most substantial benefit category. Traditional manual calibration requires a skilled technician to spend 2-4 hours per device, while optimized automated systems can reduce this to 15-30 minutes. For facilities processing 1,000 units monthly, this translates to annual savings of $200,000-$400,000 in direct labor costs. Reduced human intervention also lowers the calibration error rate, cutting the costly rework cycles that typically affect 3-5% of manually calibrated devices.
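The figures quoted so far can be combined into a rough payback estimate. The Python sketch below uses only the ranges stated in this section (implementation cost, annual labor savings, per-device calibration time, and monthly volume). The function names are hypothetical, and the calculation is a simple undiscounted payback that ignores the other benefit categories, so it is an illustration rather than a financial model.

```python
def payback_months(investment, annual_savings):
    """Simple undiscounted payback period in months."""
    return 12.0 * investment / annual_savings

def annual_hours_saved(units_per_month, manual_hours, automated_hours):
    """Technician hours freed per year for a given per-device time reduction."""
    return 12 * units_per_month * (manual_hours - automated_hours)

# Ranges taken from the text above.
best_case = payback_months(investment=50_000, annual_savings=400_000)
worst_case = payback_months(investment=500_000, annual_savings=200_000)

# Smallest saving: fastest manual time (2 h) vs slowest automated time (0.5 h).
# Largest saving: slowest manual time (4 h) vs fastest automated time (0.25 h).
hours_low = annual_hours_saved(1_000, manual_hours=2.0, automated_hours=0.5)
hours_high = annual_hours_saved(1_000, manual_hours=4.0, automated_hours=0.25)

print(f"Payback on labor savings alone: {best_case:.1f} to {worst_case:.1f} months")
print(f"Technician hours freed per year: {hours_low:,.0f} to {hours_high:,.0f}")
```

The spread between the best and worst cases shows how strongly the outcome depends on where a facility falls within the quoted cost and savings ranges.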

Equipment utilization improvements generate secondary benefits through increased throughput capacity. Optimized calibration processes enable 40-60% higher device processing rates without additional capital investment in test equipment. This enhanced efficiency allows manufacturers to defer equipment purchases, representing avoided costs of $100,000-$300,000 per postponed acquisition.

Quality-related cost benefits emerge from improved measurement accuracy and repeatability. Enhanced calibration precision reduces field failures by 20-35%, translating to decreased warranty claims, reduced customer support costs, and improved brand reputation. For high-volume applications, warranty cost reductions alone can justify optimization investments within 18-24 months.

Long-term operational benefits include reduced maintenance requirements for calibration equipment due to more controlled and consistent usage patterns. Predictive maintenance capabilities enabled by optimization systems can extend equipment lifespan by 15-25%, while reducing unplanned downtime costs that typically average $5,000-$15,000 per incident in specialized analog manufacturing environments.
