Progressive Activation Function Testing in Multilayer Perceptron Layers

APR 2, 2026 · 9 MIN READ

Progressive Activation Function Development Background and Objectives

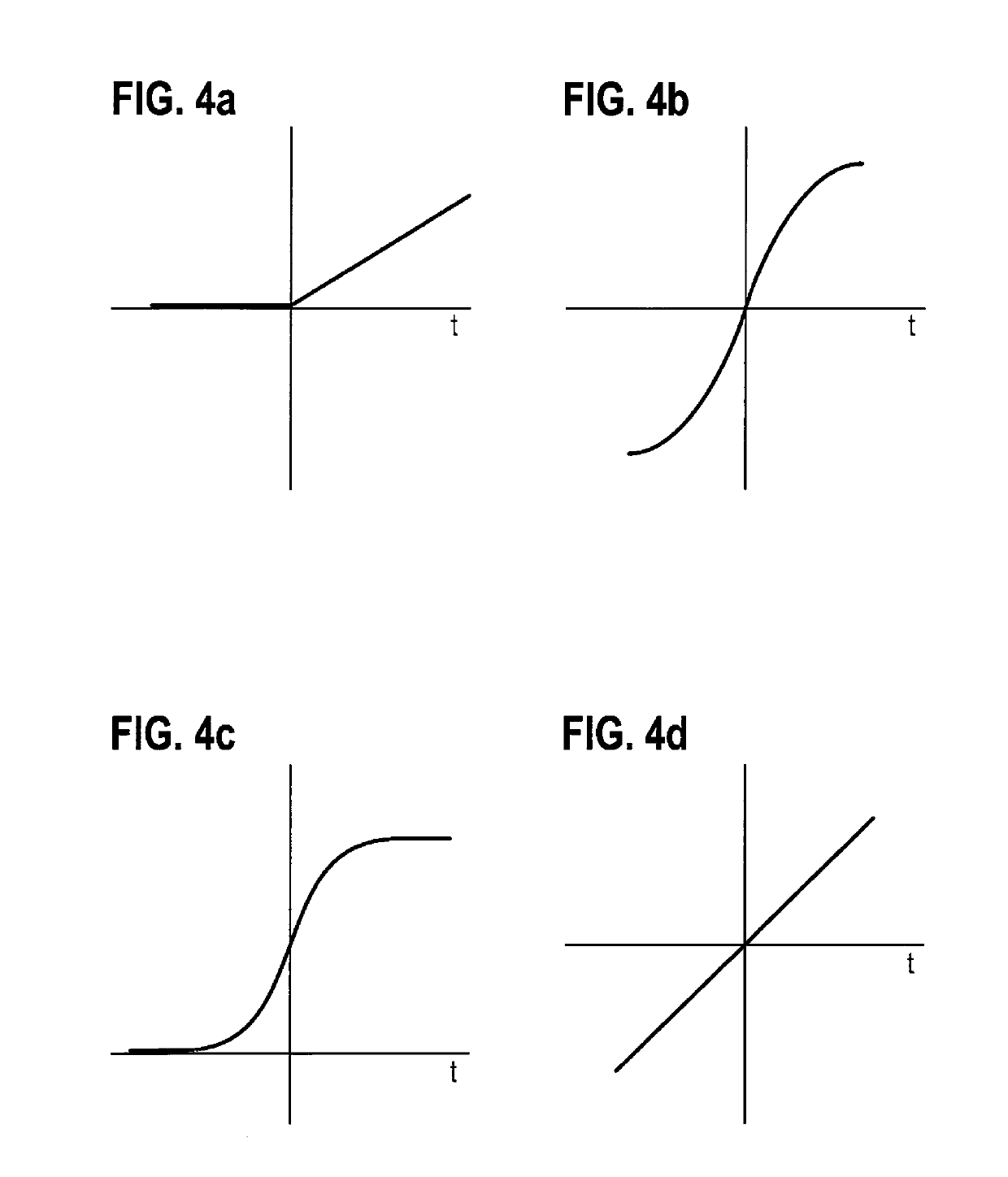

The evolution of activation functions in neural networks has been a cornerstone of deep learning advancement since the inception of artificial neural networks. Traditional activation functions such as sigmoid and tanh dominated early neural network architectures but suffered from significant limitations including vanishing gradient problems and computational inefficiencies. The introduction of ReLU (Rectified Linear Unit) marked a pivotal breakthrough, addressing many of these issues and enabling the training of deeper networks.

However, as neural network architectures have grown increasingly complex and diverse, the limitations of static activation functions have become more apparent. Different layers within a multilayer perceptron often require distinct activation characteristics to optimize feature extraction and representation learning. Early layers may benefit from smooth, differentiable functions for gradient flow, while deeper layers might require more aggressive non-linearities for complex pattern recognition.

Progressive activation functions represent an innovative approach that dynamically adapts activation behavior across different layers or training phases. Unlike traditional static functions, progressive activation mechanisms can modify their mathematical properties based on layer depth, training iteration, or learned parameters. This adaptive nature allows networks to automatically optimize their activation landscape for specific tasks and data distributions.
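
As a rough illustration of the idea, the sketch below blends a smooth function (tanh) with a sharper one (ReLU), weighting the blend by layer depth and training progress. The function name `progressive_activation`, the linear blending rule, and the `progress` parameter are assumptions made for this example; the article does not prescribe a specific formula.

```python
import math


def relu(x: float) -> float:
    """Standard rectified linear unit."""
    return max(0.0, x)


def progressive_activation(x: float, layer_index: int, num_layers: int,
                           progress: float = 1.0) -> float:
    """Blend tanh and ReLU according to depth and training progress.

    Hypothetical rule: alpha = depth_fraction * progress, where
    alpha = 0 gives pure tanh and alpha = 1 gives pure ReLU.
    `progress` is the fraction of training completed, in [0, 1].
    """
    depth_fraction = layer_index / max(1, num_layers - 1)
    alpha = depth_fraction * progress
    return (1.0 - alpha) * math.tanh(x) + alpha * relu(x)


# The first layer stays smooth; the last layer becomes ReLU by the end of training.
first_layer = progressive_activation(2.0, layer_index=0, num_layers=4)
last_layer = progressive_activation(2.0, layer_index=3, num_layers=4)
```

A learned variant would replace the fixed `alpha` with a trainable per-layer parameter; the fixed schedule here only shows the mechanism.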

The development of progressive activation functions has been driven by several key observations from recent deep learning research. Studies have demonstrated that optimal activation functions vary significantly across different network depths and application domains. Additionally, research has shown that activation function choice can dramatically impact convergence speed, final model performance, and generalization capabilities.

The primary objective of progressive activation function development is to create adaptive mechanisms that can automatically determine optimal activation characteristics for each layer in a multilayer perceptron. This involves developing mathematical frameworks that allow activation functions to evolve during training, implementing efficient computational methods for dynamic activation evaluation, and establishing robust testing methodologies to validate performance improvements.

Secondary objectives include reducing manual hyperparameter tuning related to activation function selection, improving gradient flow characteristics across deep networks, and enhancing model interpretability through activation function analysis. The ultimate goal is to create self-optimizing neural networks that can adapt their internal activation mechanisms to maximize performance for specific tasks while maintaining computational efficiency and training stability.

Market Demand for Advanced MLP Activation Functions

The market demand for advanced multilayer perceptron activation functions has experienced substantial growth driven by the increasing complexity of machine learning applications across diverse industries. Traditional activation functions like sigmoid and ReLU, while foundational, have demonstrated limitations in handling sophisticated neural network architectures that require more nuanced gradient flow and feature representation capabilities.

Enterprise adoption of deep learning solutions has created significant demand for activation functions that can address vanishing gradient problems, improve convergence rates, and enhance model performance. Financial services organizations implementing fraud detection systems require activation functions that maintain stable gradients across deep networks. Healthcare institutions developing diagnostic AI systems need functions that preserve information integrity through multiple processing layers.

The autonomous vehicle industry represents a particularly demanding market segment, where neural networks must process complex sensor data through numerous layers while maintaining real-time performance. Progressive activation function testing has become essential for validating performance across varying network depths and computational constraints. These applications require activation functions that adapt dynamically to different layers and learning phases.

Cloud computing platforms and AI-as-a-Service providers have emerged as major market drivers, seeking activation functions that optimize computational efficiency while maintaining accuracy. The demand extends beyond performance metrics to include energy efficiency considerations, particularly relevant for edge computing deployments and mobile applications.

Research institutions and technology companies are increasingly investing in activation function innovation to gain competitive advantages in AI model performance. The market shows strong preference for functions that demonstrate superior performance in progressive testing scenarios, where activation behavior is evaluated across multiple network configurations and training stages.

The growing emphasis on explainable AI has created additional demand for activation functions that provide interpretable behavior patterns during progressive testing phases. Organizations require functions that not only perform well but also offer insights into model decision-making processes through their activation patterns across different network layers.

Current State and Challenges in Activation Function Testing

The current landscape of activation function testing in multilayer perceptrons reveals a complex ecosystem of methodologies and approaches, yet significant gaps remain in systematic evaluation frameworks. Traditional testing methods primarily focus on static performance metrics such as convergence speed, gradient flow characteristics, and final accuracy measurements. However, these conventional approaches often fail to capture the dynamic behavior of activation functions throughout the training process, particularly in deep network architectures where layer-specific performance variations become critical.

Existing testing frameworks predominantly rely on benchmark datasets and standardized neural network architectures to evaluate activation function performance. Popular approaches include comparative analysis using datasets like MNIST, CIFAR-10, and ImageNet, where different activation functions are substituted while maintaining identical network structures. While these methods provide valuable baseline comparisons, they lack the granularity needed to understand how activation functions perform across different layers and training phases.
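
The substitution step described above can be sketched in a few lines: the weights and layer sizes are fixed once, and only the activation function changes between runs. This is a forward-pass-only illustration using the standard library; the layer sizes, the seed, and the helper names `init_weights` and `forward` are assumptions for the example, and a real comparison would train each variant on a benchmark dataset.

```python
import math
import random

# Candidate activations to substitute into the same network.
ACTIVATIONS = {
    "sigmoid": lambda x: 1.0 / (1.0 + math.exp(-x)),
    "tanh": math.tanh,
    "relu": lambda x: max(0.0, x),
}


def init_weights(layer_sizes, seed):
    """Create one weight matrix per layer from a fixed seed (no biases)."""
    rng = random.Random(seed)
    weights = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        weights.append([[rng.gauss(0.0, scale) for _ in range(n_in)]
                        for _ in range(n_out)])
    return weights


def forward(weights, inputs, activation):
    """Forward pass applying the same activation after every layer."""
    values = list(inputs)
    for layer in weights:
        values = [activation(sum(w * v for w, v in zip(row, values)))
                  for row in layer]
    return values


# Identical structure and weights; only the activation differs.
weights = init_weights([4, 8, 8, 2], seed=0)
sample = [0.5, -1.0, 0.25, 2.0]
outputs = {name: forward(weights, sample, fn) for name, fn in ACTIVATIONS.items()}
```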

The progressive nature of modern deep learning training introduces unique challenges that current testing methodologies struggle to address. Most evaluation frameworks treat activation functions as static components, failing to account for their evolving behavior as network weights adjust during training. This limitation becomes particularly pronounced in multilayer perceptrons where early layers may require different activation characteristics compared to deeper layers, yet current testing approaches rarely capture these layer-specific requirements.

Computational complexity represents another significant challenge in comprehensive activation function testing. Evaluating multiple activation functions across various network depths, layer positions, and training scenarios requires substantial computational resources. Current testing frameworks often compromise between thoroughness and computational feasibility, leading to incomplete assessments that may miss critical performance characteristics or optimal configuration scenarios.

The lack of standardized metrics for progressive evaluation further complicates the testing landscape. While traditional metrics like accuracy, loss convergence, and gradient magnitude provide useful insights, they fail to capture the temporal dynamics of activation function performance. Existing frameworks rarely incorporate metrics that track activation function behavior evolution, gradient flow stability across training epochs, or layer-specific contribution analysis throughout the learning process.
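
One candidate for such a metric is the per-layer gradient magnitude, logged at each epoch. The sketch below computes it for a deliberately simplified network with one unit per layer, which is enough to show how gradients shrink toward early layers with sigmoid and not with ReLU. The width-1 chain and the function name `layer_gradient_magnitudes` are assumptions for illustration, not a metric defined by the article.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x):
    s = sigmoid(x)
    return s * (1.0 - s)


def relu(x):
    return max(0.0, x)


def relu_grad(x):
    return 1.0 if x > 0 else 0.0


def layer_gradient_magnitudes(x, weights, act, act_grad):
    """Return |d output / d z_l| for each layer l of a width-1 chain.

    Each layer computes z_l = w_l * h_{l-1} and h_l = act(z_l).
    """
    pre_activations = []
    h = x
    for w in weights:
        z = w * h
        pre_activations.append(z)
        h = act(z)

    grads = [0.0] * len(weights)
    upstream = 1.0  # d output / d h_L
    for l in reversed(range(len(weights))):
        dz = upstream * act_grad(pre_activations[l])  # d output / d z_l
        grads[l] = abs(dz)
        upstream = dz * weights[l]                    # d output / d h_{l-1}
    return grads


sigmoid_profile = layer_gradient_magnitudes(1.0, [1.0] * 6, sigmoid, sigmoid_grad)
relu_profile = layer_gradient_magnitudes(1.0, [1.0] * 6, relu, relu_grad)
```

Recording a profile like this after every epoch gives a layer-by-epoch trace that can be compared across activation functions.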

Reproducibility and consistency issues plague current testing methodologies, particularly when comparing results across different research groups or implementation frameworks. Variations in initialization schemes, optimization algorithms, and hardware configurations can significantly impact activation function performance assessments, yet standardized protocols for controlling these variables remain underdeveloped. This inconsistency hampers the development of reliable, generalizable insights about activation function effectiveness in progressive training scenarios.

Existing Progressive Activation Testing Solutions

01 Neural network activation function testing methodologies

Methods and systems for testing activation functions in neural networks through progressive evaluation techniques. These approaches involve systematic testing of different activation function behaviors across network layers, enabling optimization of network performance. The testing methodologies include validation of activation function responses under various input conditions and progressive refinement of activation parameters.

- Neural network activation function testing methods: Methods and systems for testing activation functions in neural networks through progressive evaluation techniques. These approaches involve systematically testing different activation function parameters and configurations to optimize network performance. The testing process includes monitoring output responses and adjusting activation thresholds progressively to achieve desired neural network behavior.

- Progressive functional testing in semiconductor devices: Techniques for conducting progressive activation and functional testing of semiconductor components and integrated circuits. The methods involve step-by-step activation of circuit elements while monitoring their functional responses. This progressive approach allows for identification of defects and performance issues at various stages of device operation.

- Automated test systems with progressive activation protocols: Automated testing systems that implement progressive activation sequences for evaluating electronic components and systems. These systems utilize controlled activation patterns to systematically test functionality across multiple operational states. The progressive nature allows for comprehensive coverage while minimizing test time and resource usage.

- Software and firmware progressive testing frameworks: Frameworks and methodologies for progressive testing of software functions and firmware activation sequences. These approaches enable incremental validation of software components through staged activation and testing procedures. The progressive testing strategy helps identify issues early in the development cycle and ensures proper function integration.

- Biological and medical device activation testing: Methods for progressive activation testing in biological systems and medical devices. These techniques involve gradual activation of therapeutic functions or diagnostic capabilities while monitoring system responses. The progressive approach ensures safe and effective operation by validating each activation stage before proceeding to the next level.

02 Automated testing frameworks for activation functions

Automated systems and frameworks designed to progressively test and validate activation functions in computational models. These frameworks provide tools for systematic evaluation of activation function performance, including automated test case generation and result analysis. The systems enable efficient testing across multiple activation function types and configurations.

03 Progressive validation of biological activation processes

Testing methodologies for biological activation processes that involve progressive monitoring and validation. These techniques include step-wise evaluation of cellular or molecular activation states, enabling detailed analysis of activation kinetics and mechanisms. The methods support comprehensive assessment of activation function in biological systems.

04 Hardware-based activation function testing systems

Hardware implementations and testing systems specifically designed for evaluating activation functions in processing circuits. These systems provide dedicated hardware components for progressive testing of activation function implementations, including verification of computational accuracy and performance metrics. The hardware-based approaches enable real-time testing and validation.

05 Multi-stage activation function optimization and testing

Techniques for multi-stage optimization and testing of activation functions through progressive refinement processes. These methods involve iterative testing cycles that progressively improve activation function characteristics based on performance feedback. The approaches include adaptive testing strategies that adjust evaluation parameters based on intermediate results.

Key Players in Deep Learning Framework and MLP Research

Progressive activation function testing in multilayer perceptron layers is an emerging research area within the broader field of neural network optimization. It is at an early developmental stage but has significant academic momentum. The market for advanced neural network architectures is growing rapidly, driven by increasing demand for more efficient deep learning models across industries. Leading Chinese institutions including Tsinghua University, Xidian University, Zhejiang University, and Huazhong University of Science & Technology are spearheading fundamental research in this domain, while international players such as the University of Tokyo, Hokkaido University, and the University of Pennsylvania contribute theoretical frameworks. The technology remains in the experimental phase, with most innovations concentrated in academic settings. Companies such as NUCTECH and various biotechnology firms including Amgen and Bristol Myers Squibb are beginning to explore practical applications, particularly in specialized domains requiring adaptive neural architectures for complex pattern recognition tasks.

Tsinghua University

Technical Solution: Tsinghua University has developed comprehensive frameworks for progressive activation function testing in multilayer perceptron architectures. Their approach involves systematic evaluation of activation functions including ReLU, Leaky ReLU, ELU, and Swish across different network depths. The methodology incorporates gradient flow analysis, convergence rate monitoring, and performance benchmarking on standard datasets. Their research demonstrates that progressive testing can identify optimal activation combinations for specific layer configurations, leading to improved training stability and model performance. The university's framework includes automated hyperparameter tuning and cross-validation protocols specifically designed for activation function selection in deep neural networks.

Strengths: Strong theoretical foundation and comprehensive testing methodology. Weaknesses: Limited focus on computational efficiency optimization during testing phases.

Zhejiang University

Technical Solution: Zhejiang University has pioneered adaptive activation function testing methodologies for multilayer perceptrons, focusing on dynamic selection mechanisms during training. Their approach utilizes reinforcement learning algorithms to progressively evaluate and select optimal activation functions for each layer based on real-time performance metrics. The system incorporates novel gradient-based selection criteria and implements efficient testing protocols that minimize computational overhead. Their research demonstrates significant improvements in model convergence rates and final accuracy through intelligent activation function progression, particularly effective for complex classification tasks and deep network architectures.

Strengths: Innovative adaptive selection mechanisms and efficient computational implementation. Weaknesses: Complexity in implementation may require specialized expertise for practical deployment.

Core Innovations in Progressive Activation Function Patents

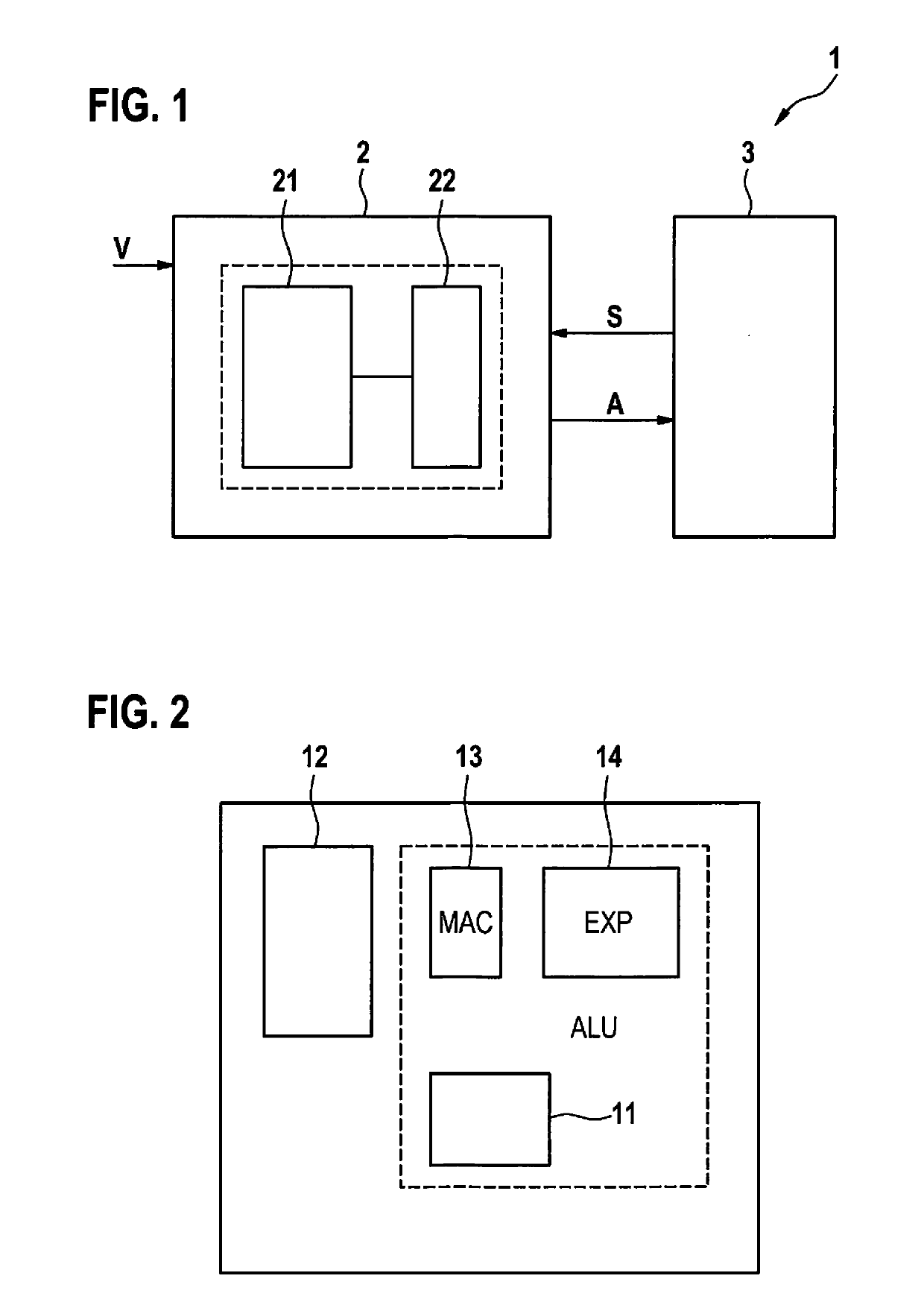

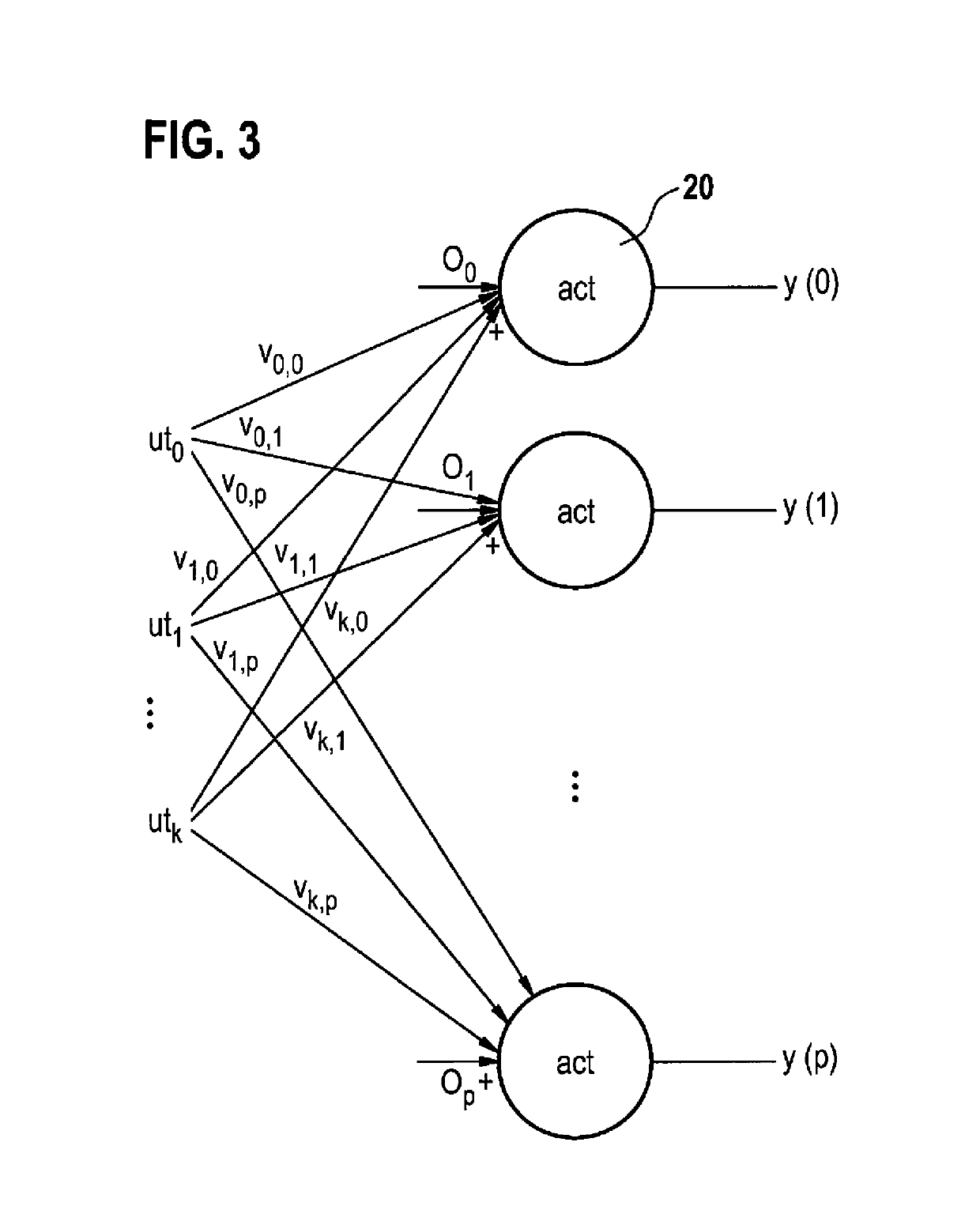

Method for calculating a neuron layer of a multi-layer perceptron model with simplified activation function

Patent · Active · US20190205734A1

Innovation

- A method for calculating a neuron layer of a multi-layer perceptron model using a permanently hardwired processor core configured in hardware, employing simplified sigmoid and tanh functions based on zero-point mirroring of the exponential function, avoiding division and utilizing only multiplications and additions for resource-efficient calculation.

Activation function processing method, activation function processing circuit, and neural network system including the same

Patent · Active · US11928575B2

Innovation

- Implementing a shared lookup table that processes multiple activation functions by converting input values and function values between different activation functions using predetermined address and function value conversion rules, reducing the need for separate lookup tables and thus lowering memory costs.
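
To make the shared-table idea concrete, the sketch below serves both sigmoid and tanh from a single sigmoid table using the standard identity tanh(x) = 2·sigmoid(2x) − 1. The input is converted before the lookup (x to 2x) and the looked-up value is converted afterwards (y to 2y − 1). This identity is one plausible example of an address and function-value conversion rule; it is not taken from the patent text, and the table size and input range are assumptions.

```python
import math

# Assumed table parameters for illustration only.
TABLE_SIZE = 1024
X_MIN, X_MAX = -8.0, 8.0
STEP = (X_MAX - X_MIN) / (TABLE_SIZE - 1)

# Single stored table: sigmoid sampled on a uniform grid.
SIGMOID_TABLE = [1.0 / (1.0 + math.exp(-(X_MIN + i * STEP)))
                 for i in range(TABLE_SIZE)]


def lut_sigmoid(x: float) -> float:
    """Nearest-entry lookup; inputs outside the range are clamped."""
    clamped = min(max(x, X_MIN), X_MAX)
    index = min(TABLE_SIZE - 1, round((clamped - X_MIN) / STEP))
    return SIGMOID_TABLE[index]


def lut_tanh(x: float) -> float:
    """tanh via the sigmoid table.

    Address conversion: x -> 2x. Value conversion: y -> 2y - 1.
    """
    return 2.0 * lut_sigmoid(2.0 * x) - 1.0
```

With these settings the nearest-entry error stays within about 0.01 of `math.tanh`, and only one table needs to be stored for both functions.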

Computational Efficiency Standards for MLP Testing

Establishing robust computational efficiency standards for progressive activation function testing in multilayer perceptron layers requires comprehensive benchmarking frameworks that address both performance metrics and resource utilization patterns. Current industry practices lack standardized methodologies for evaluating the computational overhead introduced by dynamic activation function switching mechanisms, creating significant challenges in comparing different progressive testing approaches across various hardware architectures and deployment scenarios.

The fundamental efficiency metrics must encompass forward propagation latency, backward propagation computational complexity, and memory footprint variations during progressive testing phases. Standard benchmarking protocols should measure floating-point operation counts (FLOPs) and achieved throughput in floating-point operations per second (FLOPS), along with cache utilization efficiency and memory bandwidth requirements across different activation function combinations. These metrics become particularly critical when evaluating the trade-offs between testing thoroughness and computational resource consumption in production environments.
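
Two of these measurements are easy to sketch with the standard library: an approximate operation count for a dense MLP forward pass and a median wall-clock latency. The helper names `dense_flops` and `median_latency`, the 2·n_in·n_out cost model (which ignores biases and the activation itself), and the repeat counts are assumptions for illustration.

```python
import time


def dense_flops(layer_sizes):
    """Approximate FLOPs for one forward pass of a dense MLP.

    Counts one multiply and one add per weight (2 * n_in * n_out per layer);
    bias additions and activation cost are ignored.
    """
    return sum(2 * n_in * n_out
               for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))


def median_latency(fn, repeats=30, warmup=3):
    """Median wall-clock time of fn() in seconds, after a few warm-up calls."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    samples.sort()
    return samples[len(samples) // 2]
```

Dividing `dense_flops(...)` by the measured latency of the corresponding forward pass gives an achieved-throughput estimate that can be compared across activation function combinations.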

Hardware-specific optimization standards play a crucial role in defining acceptable performance thresholds for progressive activation testing implementations. GPU-accelerated environments require different efficiency criteria compared to CPU-based systems, with particular attention to parallel processing capabilities and memory coalescing patterns. Standards must account for varying computational architectures, including specialized AI accelerators and edge computing devices with limited processing power.

Scalability benchmarks represent another essential component of computational efficiency standards, addressing how progressive testing performance degrades with increasing network depth and width. Standard evaluation protocols should define acceptable performance scaling factors for networks ranging from shallow configurations to deep architectures with hundreds of layers. These standards must also consider batch size dependencies and their impact on overall testing efficiency.

Real-time performance requirements necessitate establishing latency thresholds for different application domains, from high-frequency trading systems requiring microsecond response times to autonomous vehicle applications with millisecond constraints. Efficiency standards should provide clear guidelines for determining when progressive activation testing overhead becomes prohibitive for specific use cases, enabling practitioners to make informed decisions about implementation feasibility.

Energy consumption metrics have become increasingly important in modern computational efficiency standards, particularly for mobile and embedded applications. Progressive testing implementations must be evaluated against power consumption benchmarks, considering both peak power draw during intensive testing phases and average energy usage across complete testing cycles.

Benchmarking Protocols for Progressive Activation Validation

Establishing robust benchmarking protocols for progressive activation validation requires a systematic approach that addresses the unique characteristics of adaptive activation functions in multilayer perceptron architectures. Unlike traditional static activation functions, progressive activation mechanisms demand specialized evaluation frameworks that can capture their dynamic behavior across different training phases and network configurations.

The foundation of effective benchmarking lies in developing standardized datasets that represent diverse computational scenarios. These datasets should encompass varying complexity levels, from simple classification tasks to complex regression problems, ensuring that progressive activation functions are evaluated across their full operational spectrum. Each dataset must be carefully curated to include edge cases and boundary conditions that typically challenge adaptive mechanisms.

Performance metrics for progressive activation validation extend beyond conventional accuracy measurements. The benchmarking protocol must incorporate temporal stability indicators that track activation function behavior throughout the training process. These metrics should quantify convergence rates, gradient flow efficiency, and computational overhead introduced by the progressive mechanisms. Additionally, robustness measures are essential to evaluate how well these functions maintain performance under different initialization conditions and hyperparameter configurations.

Standardization of experimental conditions forms a critical component of the benchmarking framework. This includes defining consistent network architectures, training procedures, and evaluation methodologies that enable fair comparisons between different progressive activation approaches. The protocol should specify minimum requirements for statistical significance, including the number of independent runs and appropriate statistical tests for performance validation.
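
As one example of such a test, Welch's t-test compares the final scores of two activation configurations across independent runs without assuming equal variances. The sketch below computes the t statistic and the Welch-Satterthwaite degrees of freedom with the standard library. The choice of Welch's test is an assumption for illustration; the article does not name a specific test.

```python
import math
import statistics


def welch_t(sample_a, sample_b):
    """Return (t statistic, degrees of freedom) for Welch's t-test.

    Each sample holds one score per independent run and needs at least
    two values with non-zero variance.
    """
    n_a, n_b = len(sample_a), len(sample_b)
    mean_a, mean_b = statistics.mean(sample_a), statistics.mean(sample_b)
    # Squared standard errors of each sample mean.
    se_a = statistics.variance(sample_a) / n_a
    se_b = statistics.variance(sample_b) / n_b
    t_stat = (mean_a - mean_b) / math.sqrt(se_a + se_b)
    dof = (se_a + se_b) ** 2 / (se_a ** 2 / (n_a - 1) + se_b ** 2 / (n_b - 1))
    return t_stat, dof
```

The returned statistic is then compared against the critical value of the t distribution for the computed degrees of freedom at the significance level the protocol specifies.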

Cross-validation strategies specifically tailored for progressive activation functions must account for their adaptive nature. Traditional k-fold validation may not adequately capture the temporal dependencies inherent in these systems. Therefore, the benchmarking protocol should incorporate time-aware validation techniques that preserve the sequential nature of the learning process while maintaining statistical rigor.
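
One common time-aware scheme is forward chaining with an expanding window, where every test block comes strictly after the data used for training. The sketch below generates such splits and assumes the samples are already ordered (for example by time or training step). The function name `expanding_window_splits` is hypothetical, and this is only one of several possible time-aware schemes.

```python
def expanding_window_splits(n_samples, n_folds):
    """Yield (train_indices, test_indices) pairs in forward-chaining order.

    The training window grows by one block per fold, and each test block
    immediately follows its training window. The last fold absorbs any
    remainder samples.
    """
    block = n_samples // (n_folds + 1)
    if block == 0:
        raise ValueError("not enough samples for the requested number of folds")
    for fold in range(1, n_folds + 1):
        train_end = fold * block
        test_end = n_samples if fold == n_folds else train_end + block
        yield list(range(train_end)), list(range(train_end, test_end))


splits = list(expanding_window_splits(n_samples=10, n_folds=4))
```

Unlike shuffled k-fold validation, no fold here evaluates on samples that precede its training data.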

The benchmarking framework should also establish baseline comparisons with conventional activation functions under identical conditions. This comparative analysis enables researchers to quantify the specific advantages and potential drawbacks of progressive activation mechanisms, providing clear evidence of their practical utility in real-world applications.
