Quantify System Reliability in World Models for Autonomous Robots

APR 13, 2026 · 9 MIN READ

World Model Reliability Background and Objectives

World models have emerged as a fundamental component in autonomous robotics, representing an internal computational framework that enables robots to understand, predict, and interact with their environment. These models serve as digital twins of the physical world, incorporating spatial relationships, object dynamics, and environmental constraints that govern robotic operations. The evolution of world models has progressed from simple geometric representations to sophisticated neural architectures capable of handling complex, dynamic scenarios.

The reliability of world models has become increasingly critical as autonomous robots are deployed in safety-critical applications such as autonomous vehicles, medical robotics, and industrial automation. Traditional approaches to robotics relied heavily on pre-programmed behaviors and reactive control systems, but modern autonomous systems require predictive capabilities that are only as good as the accuracy and reliability of their world models. This shift has created an urgent need for systematic approaches to quantify and ensure the reliability of these computational representations.

Current challenges in world model reliability stem from the inherent uncertainty in sensor data, the complexity of real-world environments, and the limitations of existing modeling techniques. Autonomous robots must operate in environments with incomplete information, sensor noise, and dynamic changes that can significantly impact the accuracy of their world models. The consequences of unreliable world models can range from mission failure to catastrophic safety incidents, making reliability quantification a paramount concern.

The primary objective of quantifying system reliability in world models is to establish measurable metrics and methodologies that can assess the trustworthiness of robotic perception and prediction systems. This involves developing frameworks that can evaluate model accuracy under various operating conditions, uncertainty propagation mechanisms, and failure detection capabilities. The goal extends beyond simple accuracy measurements to encompass robustness, adaptability, and graceful degradation under adverse conditions.

Furthermore, the reliability quantification effort aims to enable real-time assessment of world model performance, allowing autonomous systems to adapt their behavior based on confidence levels in their environmental understanding. This capability is essential for achieving truly autonomous operation while maintaining safety standards required for deployment in human-centric environments.

Market Demand for Reliable Autonomous Robot Systems

The global autonomous robotics market is experiencing unprecedented growth driven by increasing demand for reliable and dependable robotic systems across multiple industries. Manufacturing sectors are particularly driving this demand as companies seek to enhance operational efficiency while maintaining consistent quality standards. The automotive industry has emerged as a leading adopter, requiring autonomous systems that can operate with near-perfect reliability in production environments where even minor failures can result in significant financial losses.

Healthcare applications represent another critical growth area where system reliability is paramount. Surgical robots, rehabilitation devices, and patient care systems must demonstrate exceptional reliability metrics to gain regulatory approval and market acceptance. The aging global population is creating sustained demand for autonomous healthcare solutions that can operate independently while maintaining safety standards that exceed human-operated alternatives.

Logistics and warehousing sectors are rapidly adopting autonomous mobile robots for inventory management, order fulfillment, and material handling operations. These applications require systems capable of continuous operation with minimal downtime, driving demand for advanced reliability quantification methods. E-commerce growth has intensified this need, with companies requiring autonomous systems that can handle peak operational loads while maintaining consistent performance metrics.

The defense and aerospace industries present specialized market segments where reliability requirements reach critical levels. Autonomous systems deployed in these environments must operate in unpredictable conditions while maintaining mission-critical functionality. This has created demand for sophisticated world model reliability assessment techniques that can predict system behavior under extreme operational scenarios.

Agricultural automation represents an emerging market where reliability directly impacts crop yields and operational costs. Autonomous farming equipment must operate reliably across diverse environmental conditions, creating demand for robust reliability quantification frameworks that can account for variable operational parameters.

Current market trends indicate that customers are increasingly prioritizing reliability metrics over pure performance capabilities when selecting autonomous robotic solutions. This shift reflects growing awareness that system failures can result in safety risks, operational disruptions, and significant financial consequences. Consequently, manufacturers are investing heavily in developing comprehensive reliability assessment methodologies to meet evolving market expectations and regulatory requirements.

Current State of World Model Reliability Quantification

The current landscape of world model reliability quantification for autonomous robots presents a fragmented yet rapidly evolving field. Most existing approaches focus on isolated aspects of reliability assessment rather than comprehensive system-wide evaluation. Traditional methods primarily rely on statistical confidence measures and uncertainty propagation techniques, which provide limited insight into the dynamic nature of real-world environments.

Contemporary research predominantly employs Monte Carlo simulations and Bayesian inference frameworks to estimate prediction uncertainties in world models. These approaches typically quantify epistemic and aleatoric uncertainties separately, using techniques such as dropout-based uncertainty estimation, ensemble methods, and variational inference. However, these methods often fail to capture the complex interdependencies between different components of the world model, leading to incomplete reliability assessments.
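The ensemble-based separation mentioned above can be sketched as follows. This is a minimal illustration, assuming each ensemble member outputs a Gaussian mean and variance for one scalar quantity (for example a predicted next-step position); the function name and the sample numbers are hypothetical. It uses the law of total variance: the average of the members' variances is read as aleatoric uncertainty, and the spread of their means as epistemic uncertainty.

```python
from statistics import fmean, pvariance


def decompose_uncertainty(member_means, member_variances):
    """Split the predictive variance of a Gaussian ensemble into two parts.

    aleatoric = average of the members' predicted variances (data noise)
    epistemic = variance of the members' predicted means (model disagreement)
    """
    if not member_means or len(member_means) != len(member_variances):
        raise ValueError("need one (mean, variance) pair per ensemble member")
    aleatoric = fmean(member_variances)
    epistemic = pvariance(member_means)
    return {
        "aleatoric": aleatoric,
        "epistemic": epistemic,
        "total": aleatoric + epistemic,
    }


# Hypothetical predictions from a 4-member ensemble (illustrative values only)
means = [1.0, 1.2, 0.8, 1.0]
variances = [0.04, 0.05, 0.03, 0.04]
result = decompose_uncertainty(means, variances)
```

For these illustrative inputs the aleatoric part is about 0.04 and the epistemic part about 0.02. A large epistemic share would suggest the model is operating outside the conditions it was trained on, which more data could in principle reduce.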

The field currently lacks standardized metrics and benchmarking protocols for reliability quantification. Different research groups employ varying evaluation criteria, making it difficult to compare and validate different approaches. Most studies focus on specific domains such as autonomous driving or robotic manipulation, with limited cross-domain applicability of proposed reliability measures.

Recent developments have introduced more sophisticated approaches, including temporal consistency analysis and multi-modal uncertainty fusion. Some researchers have begun exploring information-theoretic measures to quantify model reliability, utilizing concepts such as mutual information and entropy to assess the quality of world model predictions. These approaches show promise but remain largely experimental and lack widespread adoption.
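As a rough sketch of the information-theoretic measures mentioned above, the following computes predictive entropy and mutual information from an ensemble of categorical predictions. It assumes, purely for illustration, that each member outputs a probability distribution over discrete outcomes such as "free" versus "occupied" for one map cell; the function names and inputs are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy in nats; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def ensemble_information(member_probs):
    """Return (predictive entropy, expected entropy, mutual information).

    member_probs holds one categorical distribution per ensemble member.
    Mutual information is high when members are individually confident
    but disagree with each other.
    """
    n = len(member_probs)
    k = len(member_probs[0])
    mean_probs = [sum(m[i] for m in member_probs) / n for i in range(k)]
    total = entropy(mean_probs)
    expected = sum(entropy(m) for m in member_probs) / n
    return total, expected, total - expected


# Hypothetical two-member ensembles over {free, occupied}
agree = [[0.9, 0.1], [0.9, 0.1]]
disagree = [[0.9, 0.1], [0.1, 0.9]]
_, _, mi_agree = ensemble_information(agree)
total_disagree, _, mi_disagree = ensemble_information(disagree)
```

When the members agree, the mutual information is zero even though each prediction carries some entropy; when they disagree, it rises, which is one way to flag predictions the model as a whole does not support.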

A significant gap exists in real-time reliability assessment capabilities. While offline evaluation methods have shown considerable progress, the computational requirements for continuous reliability monitoring during robot operation remain prohibitive for most practical applications. Current systems typically rely on simplified heuristics or threshold-based approaches that may not capture the full complexity of reliability dynamics.
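To make the threshold-based heuristic concrete, here is a minimal sketch of a constant-time monitor that smooths the gap between predicted and observed values and raises a flag when the smoothed error crosses a fixed threshold. The class name, the smoothing factor, and the threshold are assumptions chosen for illustration, not values from any cited system.

```python
class ResidualMonitor:
    """Tracks an exponentially weighted moving average of prediction error."""

    def __init__(self, alpha=0.2, threshold=0.5):
        self.alpha = alpha          # smoothing factor in (0, 1]; assumed value
        self.threshold = threshold  # error level treated as unreliable; assumed
        self.ewma = 0.0

    def update(self, predicted, observed):
        """Fold in one observation; return True if the model looks degraded."""
        error = abs(predicted - observed)
        self.ewma = self.alpha * error + (1.0 - self.alpha) * self.ewma
        return self.ewma > self.threshold


# Hypothetical stream of (predicted, observed) scalar values
monitor = ResidualMonitor()
stream = [(1.0, 1.0), (1.0, 1.1), (1.0, 3.0), (1.0, 3.2), (1.0, 3.1)]
flags = [monitor.update(p, o) for p, o in stream]
```

With these inputs the flag stays off for the first three samples and turns on for the last two. The check is cheap enough to run every control cycle, but it reduces reliability to a single scalar, which is the kind of simplification the paragraph above cautions against.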

The integration of domain knowledge and physics-based constraints into reliability quantification frameworks represents an emerging trend. Some recent works have attempted to incorporate physical plausibility checks and causal reasoning to enhance reliability assessment accuracy. However, these approaches are still in early development stages and face challenges in scalability and generalization across different robotic platforms and environments.

Existing Reliability Quantification Solutions

01 Reliability assessment and prediction methods for complex systems

Methods and systems for assessing and predicting the reliability of complex world models through statistical analysis, simulation techniques, and predictive modeling. These approaches enable evaluation of system performance under various conditions and identification of potential failure modes before they occur in real-world scenarios.

- Reliability assessment and prediction methods for complex systems: Methods and systems for assessing and predicting the reliability of complex world models through statistical analysis, simulation techniques, and predictive modeling. These approaches enable evaluation of system performance under various conditions and identification of potential failure modes before they occur in real-world applications.

- Fault detection and diagnostic systems for model reliability: Systems that implement fault detection, isolation, and diagnostic capabilities to monitor world model performance and identify anomalies or degradation in system reliability. These systems utilize sensor data, pattern recognition, and machine learning algorithms to detect deviations from expected behavior and trigger appropriate responses.

- Redundancy and fail-safe mechanisms for system robustness: Implementation of redundant components, backup systems, and fail-safe mechanisms to enhance the overall reliability of world models. These approaches ensure continued operation even when individual components fail, through techniques such as parallel processing, data replication, and automatic failover protocols.

- Validation and verification frameworks for model accuracy: Comprehensive frameworks for validating and verifying world models to ensure their accuracy and reliability across different scenarios and operating conditions. These frameworks incorporate testing protocols, benchmarking standards, and continuous monitoring to maintain model fidelity and trustworthiness throughout the system lifecycle.

- Adaptive and self-healing systems for maintaining reliability: Advanced systems that incorporate adaptive algorithms and self-healing capabilities to automatically adjust parameters, reconfigure components, and recover from failures to maintain optimal reliability. These systems learn from operational data and environmental changes to continuously improve performance and resilience.

02 Fault detection and diagnostic systems for model reliability

Systems that implement fault detection, isolation, and diagnostic capabilities to monitor the health and reliability of world models. These systems continuously analyze model outputs, detect anomalies, and provide diagnostic information to maintain system reliability and prevent failures.

03 Redundancy and fail-safe mechanisms in system architecture

Implementation of redundant components, backup systems, and fail-safe mechanisms to enhance the reliability of world models. These architectural approaches ensure continued operation even when individual components fail, improving overall system dependability and availability.

04 Validation and verification frameworks for model accuracy

Frameworks and methodologies for validating and verifying the accuracy and reliability of world models through testing protocols, benchmarking procedures, and quality assurance measures. These frameworks ensure that models meet specified reliability standards and perform consistently across different scenarios.

05 Adaptive and self-healing systems for maintaining reliability

Adaptive systems that can self-monitor, self-diagnose, and self-repair to maintain reliability over time. These systems incorporate machine learning and artificial intelligence techniques to automatically adjust parameters, compensate for degradation, and recover from errors without human intervention.

Key Players in Autonomous Robotics and World Modeling

The autonomous robotics world model reliability sector represents an emerging market at the intersection of AI, robotics, and safety-critical systems. The industry is in its early-to-mid development stage, with significant growth potential driven by increasing demand for reliable autonomous systems across automotive, industrial, and service robotics applications. Market size is expanding rapidly as companies like NVIDIA, Intel, and Google advance foundational AI technologies, while automotive leaders including Bosch, DENSO, and Volvo Autonomous Solutions focus on safety-critical implementations. Technology maturity varies significantly across players: established tech giants like Huawei and Siemens leverage existing infrastructure capabilities, while specialized firms such as Aurora Operations and Zoox pioneer domain-specific solutions. Academic institutions including KAIST, Tongji University, and Worcester Polytechnic Institute contribute fundamental research. Industrial automation companies like FANUC and KUKA integrate reliability frameworks into robotic systems, indicating a convergence toward standardized reliability quantification methods essential for widespread autonomous system deployment.

Robert Bosch GmbH

Technical Solution: Bosch implements reliability quantification in world models through their automotive safety expertise, focusing on functional safety standards and probabilistic risk assessment frameworks. Their approach combines traditional automotive safety methodologies with modern machine learning uncertainty quantification techniques. The system employs multi-layered validation processes including simulation-based testing, hardware-in-the-loop verification, and statistical reliability modeling to ensure autonomous system dependability. Bosch integrates ISO 26262 functional safety standards with probabilistic model checking and formal verification methods to provide quantitative reliability assessments for autonomous robot applications.

Strengths: Deep automotive safety expertise and established industry partnerships, strong regulatory compliance knowledge. Weaknesses: Conservative approach may limit adoption of cutting-edge AI techniques, traditional automotive focus may not fully address broader robotics applications.

Siemens AG

Technical Solution: Siemens approaches world model reliability through their industrial automation and digital twin expertise, implementing comprehensive system-level reliability assessment frameworks for autonomous industrial robots. Their methodology integrates physics-based modeling with data-driven approaches to create hybrid world models that incorporate both mechanistic understanding and learned behaviors. Siemens utilizes advanced simulation environments and digital twin technologies to validate reliability metrics across diverse operational scenarios. Their approach emphasizes industrial-grade reliability standards, incorporating predictive maintenance algorithms and fault detection mechanisms to ensure continuous system dependability in manufacturing and industrial automation applications.

Strengths: Extensive industrial automation experience and robust digital twin technologies, strong focus on industrial-grade reliability standards. Weaknesses: Primarily focused on industrial applications rather than general autonomous robotics, potentially slower adoption of latest AI research developments.

Core Innovations in System Reliability Metrics

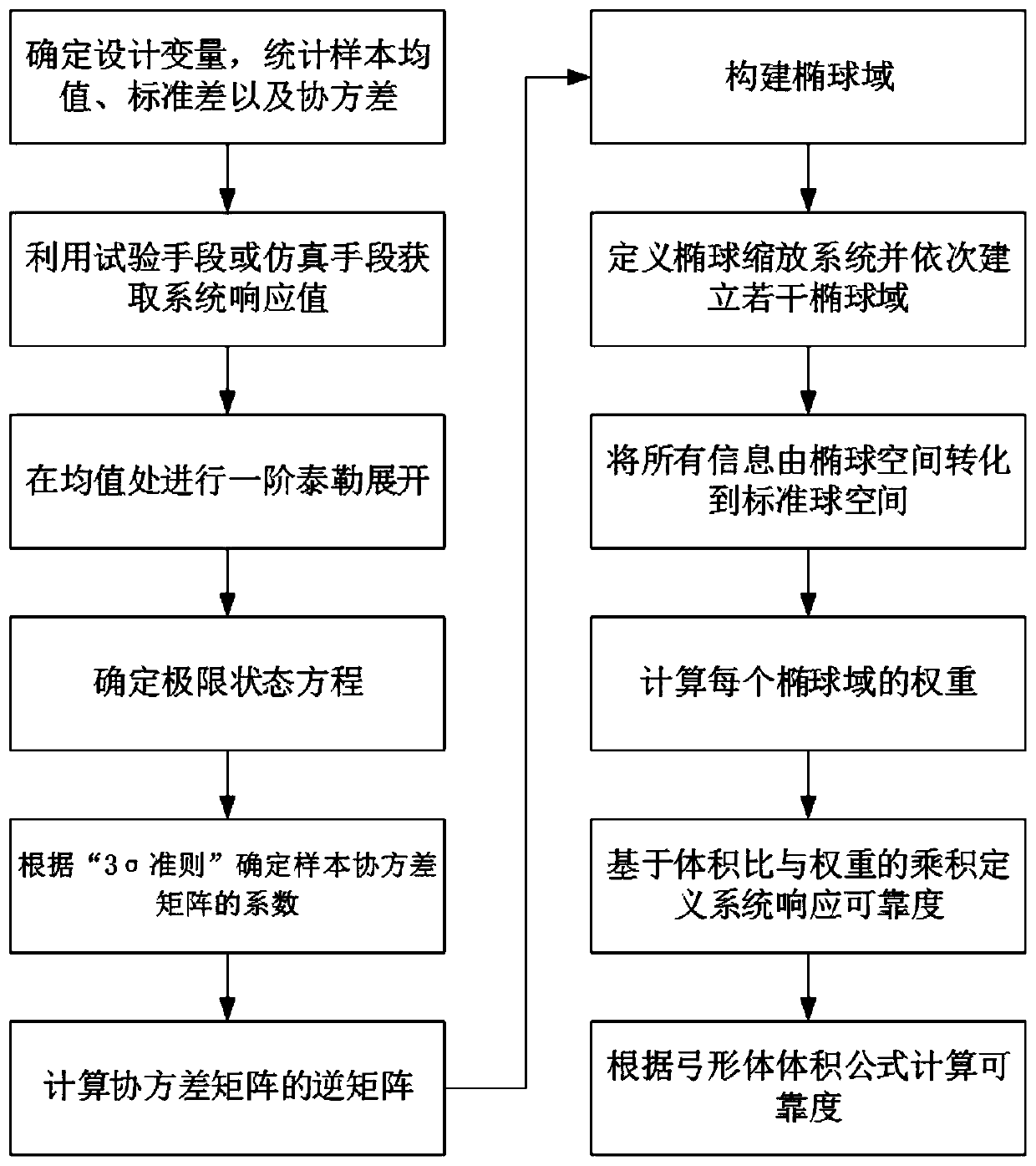

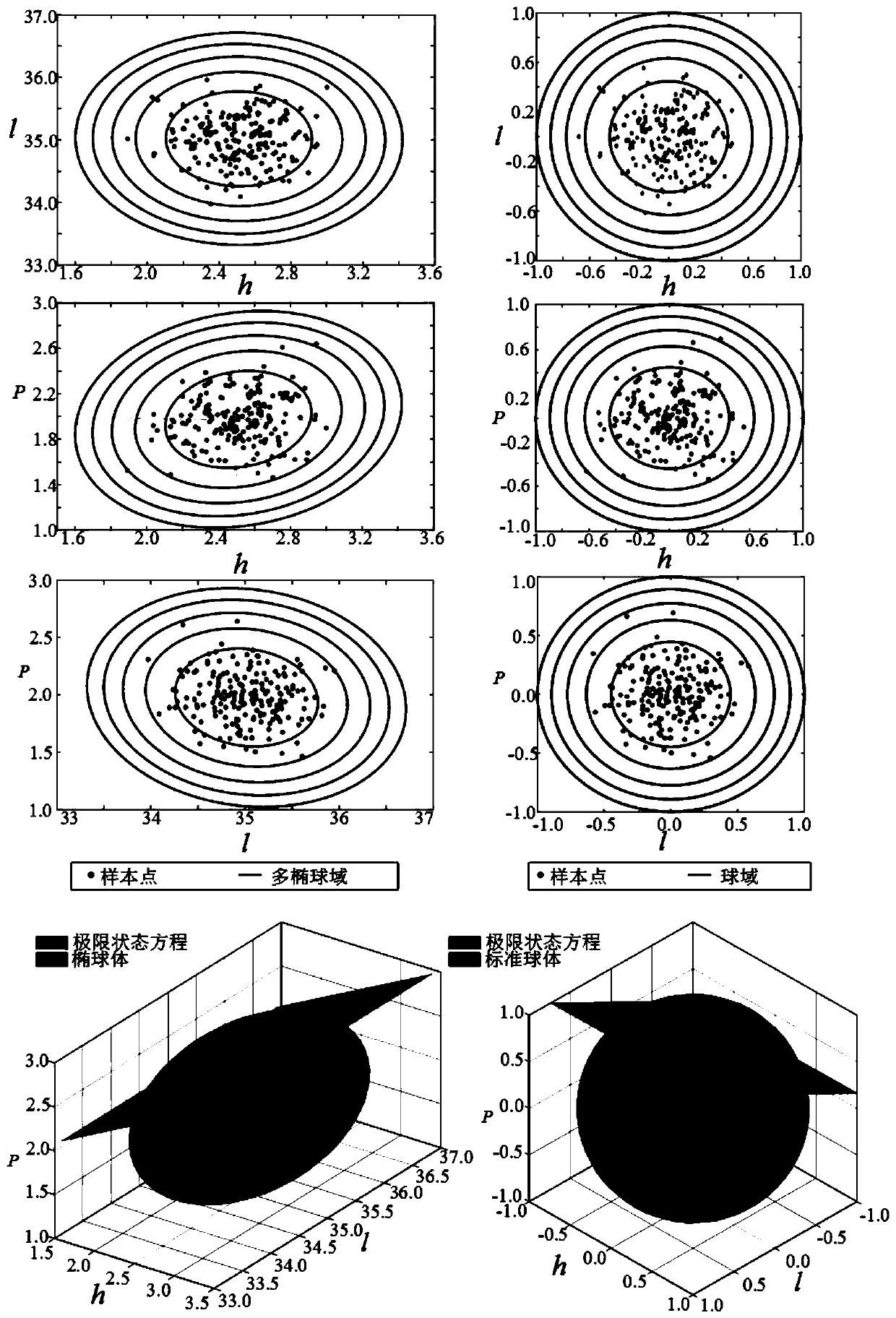

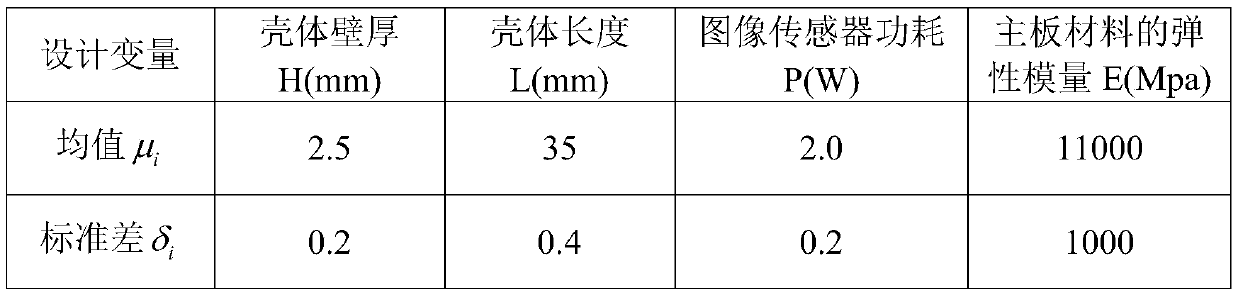

Robot system reliability analysis method based on Gaussian multi-ellipsoid model

Patent · Active · CN110895639A

Innovation

- A Gaussian multi-ellipsoid model is used to define robot system reliability by measuring uncertainty parameters, establishing a response surface model, performing a first-order Taylor expansion, determining the limit state equation, constructing the ellipsoid domain, and calculating the ellipsoid weight and volume.
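
The role of a first-order Taylor expansion around a limit state equation can be illustrated with a much simpler, standard technique. The sketch below is the classical probabilistic first-order second-moment (FOSM) approximation for independent Gaussian inputs; it is not the patent's Gaussian multi-ellipsoid construction, whose details the summary above does not give. The limit state function, the means, and the standard deviations are hypothetical.

```python
import math


def fosm_reliability(limit_state, means, stds, step=1e-6):
    """First-order second-moment estimate for independent Gaussian inputs.

    limit_state(x) > 0 is taken to mean the system is within tolerance.
    The function is linearised at the mean with forward differences.
    """
    g0 = limit_state(means)
    variance = 0.0
    for i, (mu, sigma) in enumerate(zip(means, stds)):
        shifted = list(means)
        shifted[i] = mu + step
        gradient = (limit_state(shifted) - g0) / step
        variance += (gradient * sigma) ** 2
    beta = g0 / math.sqrt(variance)  # reliability index
    reliability = 0.5 * (1.0 + math.erf(beta / math.sqrt(2.0)))  # Phi(beta)
    return beta, reliability


# Hypothetical limit state: end-effector positioning error must stay under 1.0
def g(x):
    joint_error, link_error = x
    return 1.0 - (2.0 * joint_error + link_error)


beta, reliability = fosm_reliability(g, means=[0.2, 0.1], stds=[0.1, 0.1])
```

Because this example limit state is linear, the linearisation is exact up to floating-point error; for a nonlinear response surface the result would only be a first-order approximation.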

System, method, and processor-readable medium for autonomous vehicle reliability assessment

Patent · Active · US20190064799A1

Innovation

- A system and method for assessing the reliability of vehicle systems using data from sensors and diagnostic sensors, allowing the autonomous driving module to adjust its operation based on the reliability data, potentially using a more modest set of sensors and adapting driving modes to adverse conditions.

Safety Standards for Autonomous Robot Systems

Safety standards for autonomous robot systems represent a critical framework for ensuring reliable operation in complex environments. These standards establish comprehensive guidelines that address the unique challenges posed by autonomous systems operating with varying degrees of independence from human oversight. The development of such standards requires careful consideration of both deterministic safety requirements and probabilistic reliability metrics.

Current safety standards for autonomous robots are primarily derived from existing industrial automation frameworks, including ISO 10218 for industrial robots and ISO 13849 for safety-related parts of control systems. However, these traditional standards face significant limitations when applied to autonomous systems that must operate in unstructured environments with dynamic world models. The integration of machine learning components and real-time decision-making algorithms introduces new categories of potential failures that existing standards do not adequately address.

The functional safety approach, as outlined in IEC 61508, provides a foundation for quantifying acceptable risk levels through Safety Integrity Levels (SIL). For autonomous robots utilizing world models, these standards must be extended to encompass model uncertainty, prediction accuracy degradation, and sensor fusion reliability. The challenge lies in establishing quantifiable metrics that can assess the reliability of probabilistic world representations while maintaining compliance with deterministic safety requirements.
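As a small illustration of how SIL ties a quantitative failure measure to a discrete level, the sketch below maps an average frequency of dangerous failure per hour (PFH) to a SIL using the IEC 61508 bands for high-demand or continuous mode. The function and constant names are hypothetical, and how a world model's probabilistic errors should be converted into a PFH figure is exactly the open question the paragraph above describes, so that step is left out.

```python
# Exclusive upper PFH bound for each SIL (high-demand or continuous mode).
# SIL 4: [1e-9, 1e-8), SIL 3: [1e-8, 1e-7), SIL 2: [1e-7, 1e-6), SIL 1: [1e-6, 1e-5)
SIL_BANDS = [(1e-8, 4), (1e-7, 3), (1e-6, 2), (1e-5, 1)]


def sil_for_pfh(pfh):
    """Return the SIL whose band contains pfh, or None if no band is met.

    Values below 1e-9 are capped at SIL 4, the highest level defined.
    """
    if pfh <= 0:
        raise ValueError("PFH must be a positive rate per hour")
    for upper, sil in SIL_BANDS:
        if pfh < upper:
            return sil
    return None  # 1e-5 per hour or worse: below SIL 1


# Hypothetical dangerous-failure rates per hour
levels = [sil_for_pfh(x) for x in (5e-9, 5e-8, 1e-7, 5e-6, 1e-4)]
```

A rate on a band boundary, such as 1e-7, falls into the lower level (SIL 2 here) because each band includes its lower bound and excludes its upper bound.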

Newer standards such as ISO 21448 (SOTIF, Safety of the Intended Functionality), which was written for road vehicles, address hazards that arise in the absence of traditional malfunctions. This standard is particularly relevant for world model reliability, as it focuses on performance limitations and foreseeable misuse scenarios that could compromise system safety even when all components function as designed.

The automotive industry has pioneered advanced safety standards through ISO 26262, which incorporates hazard analysis and risk assessment methodologies applicable to autonomous robot systems. These approaches emphasize the importance of systematic safety lifecycle management, from concept phase through decommissioning, with particular attention to software-intensive systems that rely on environmental perception and prediction capabilities.

Certification processes for autonomous robot safety standards require extensive validation and verification procedures that can accommodate the stochastic nature of world model predictions. This includes establishing test protocols that can evaluate system performance across diverse operational scenarios while maintaining traceability to specific safety requirements and reliability targets.

Risk Assessment Framework for Autonomous Operations

Establishing a comprehensive risk assessment framework for autonomous operations requires systematic evaluation of multiple failure modes and their cascading effects within robotic systems. The framework must address both probabilistic and deterministic failure scenarios, incorporating uncertainty quantification methods to evaluate world model accuracy under varying operational conditions. This approach enables proactive identification of potential system vulnerabilities before they manifest in critical operational scenarios.

The foundation of effective risk assessment lies in developing hierarchical risk taxonomies that categorize threats based on their origin, severity, and temporal characteristics. Environmental risks encompass sensor degradation, weather-induced perception failures, and dynamic obstacle interactions that challenge world model fidelity. System-level risks include computational resource limitations, communication disruptions, and hardware component failures that directly impact model updating capabilities. Human-robot interaction risks emerge from unpredictable human behavior patterns that may not be adequately represented in training datasets.

Quantitative risk metrics must integrate real-time performance indicators with historical reliability data to provide actionable insights for autonomous decision-making systems. Key performance indicators include model prediction accuracy rates, confidence interval bounds, and temporal consistency measures across different operational scenarios. These metrics enable dynamic risk threshold adjustments based on current system state and environmental conditions, ensuring adaptive response capabilities during mission execution.
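One of these indicators, the quality of confidence interval bounds, can be checked by counting how often observed values fall inside the predicted interval. The sketch below computes that empirical coverage and then applies a simple proportional rule to tighten a risk threshold when coverage falls short of its nominal level. The adjustment rule, the function names, and the numbers are assumptions for illustration only.

```python
def interval_coverage(lower, upper, observed):
    """Fraction of observations that fall inside their predicted interval."""
    hits = sum(lo <= y <= hi for lo, hi, y in zip(lower, upper, observed))
    return hits / len(observed)


def adjusted_risk_threshold(base_threshold, coverage, nominal=0.9):
    """Tighten the threshold in proportion to the coverage shortfall.

    This proportional rule is a hypothetical example, not an established one.
    """
    shortfall = max(0.0, nominal - coverage)
    return base_threshold * (1.0 - shortfall / nominal)


# Hypothetical 90% prediction intervals and the values later observed
lower = [0.0, 0.0, 0.0, 0.0, 0.0]
upper = [1.0, 1.0, 1.0, 1.0, 1.0]
observed = [0.5, 0.2, 1.4, 0.9, 1.2]

coverage = interval_coverage(lower, upper, observed)
threshold = adjusted_risk_threshold(1.0, coverage)
```

Here only three of five observations land inside their intervals, so the coverage of 0.6 is well under the nominal 0.9 and the threshold shrinks to about two thirds of its base value, making the system more conservative while the model is overconfident.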

Implementation strategies should incorporate multi-layered validation approaches combining simulation-based testing, controlled field trials, and continuous monitoring during operational deployment. Monte Carlo simulation techniques provide statistical confidence bounds for risk probability distributions, while formal verification methods ensure compliance with safety-critical operational requirements. Real-time monitoring systems must track deviation patterns between predicted and observed outcomes, triggering appropriate mitigation responses when those deviations exceed reliability thresholds.
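A minimal sketch of the Monte Carlo step is shown below: it simulates a hypothetical failure condition many times and puts a Wilson score interval around the estimated failure probability. The failure model (Gaussian prediction error exceeding a tolerance), the parameter values, and the function names are assumptions; a real study would replace `simulate_failures` with runs of the actual world model in simulation.

```python
import math
import random


def wilson_interval(failures, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 for ~95%)."""
    p = failures / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def simulate_failures(trials, noise_std, tolerance, seed=0):
    """Count trials where a zero-mean Gaussian error exceeds the tolerance."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    return sum(abs(rng.gauss(0.0, noise_std)) > tolerance for _ in range(trials))


# Hypothetical setup: prediction error ~ N(0, 0.1), failure if |error| > 0.25
trials = 10_000
failures = simulate_failures(trials, noise_std=0.1, tolerance=0.25)
low, high = wilson_interval(failures, trials)
```

The Wilson interval is used here rather than the plain normal approximation because it stays inside [0, 1] and behaves sensibly when failures are rare, which is the usual situation in safety assessments.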

The framework must also address regulatory compliance requirements and industry safety standards specific to autonomous robotic applications. Integration with existing safety management systems ensures seamless adoption within established operational protocols while maintaining traceability for audit and certification purposes. This comprehensive approach enables organizations to deploy autonomous systems with quantified confidence levels and well-defined operational boundaries.
