Scene Abstractions through Informed Frame Methodologies

MAR 30, 2026 · 9 MIN READ

Scene Abstraction Technology Background and Objectives

Scene abstraction technology has emerged as a critical component in computer vision and artificial intelligence systems, fundamentally addressing the challenge of extracting meaningful representations from complex visual environments. This technology domain focuses on developing methodologies that can intelligently process and interpret visual scenes by identifying key elements, relationships, and contextual information while filtering out irrelevant details.

The historical development of scene abstraction can be traced back to early computer vision research in the 1970s, when initial attempts focused on basic object recognition and edge detection. The field has since moved through several paradigm shifts, from rule-based approaches to statistical methods and, more recently, to deep learning architectures. The introduction of convolutional neural networks marked a pivotal moment, enabling more sophisticated feature extraction and hierarchical representation learning.

Informed frame methodologies represent a contemporary approach within this domain, emphasizing the strategic selection and processing of temporal sequences in video data or multi-frame scenarios. These methodologies leverage contextual information across frames to enhance abstraction quality, moving beyond single-frame analysis to incorporate temporal dynamics and inter-frame relationships.
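To make "strategic selection" of frames concrete, the following is a minimal sketch that keeps a frame only when it differs enough from the last kept frame. The function names, the flattened-list frame format, and the `threshold` value are assumptions chosen for illustration; the article does not prescribe a specific selection rule.

```python
from typing import List, Sequence

Frame = Sequence[float]  # hypothetical format: flattened grayscale frame in [0, 1]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two equally sized frames."""
    if len(a) != len(b):
        raise ValueError("frames must have the same number of pixels")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_keyframes(frames: List[Frame], threshold: float = 0.1) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame."""
    if not frames:
        return []
    kept = [0]  # always keep the first frame as the initial reference
    for i in range(1, len(frames)):
        if mean_abs_diff(frames[i], frames[kept[-1]]) > threshold:
            kept.append(i)
    return kept


frames = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.05, 0.0, 0.0],   # nearly identical to frame 0
    [0.5, 0.5, 0.5, 0.5],    # large change
    [0.5, 0.5, 0.55, 0.5],   # nearly identical to frame 2
    [1.0, 1.0, 1.0, 1.0],    # large change
]
keyframe_indices = select_keyframes(frames, threshold=0.1)
print(keyframe_indices)  # [0, 2, 4]
```

Comparing against the last kept frame, rather than the immediately preceding one, means slow gradual drift eventually triggers a new keyframe instead of going unnoticed.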

Current technological objectives center on achieving robust scene understanding that can adapt to diverse environmental conditions, lighting variations, and scene complexities. The primary goal involves developing systems capable of generating semantically meaningful abstractions that preserve essential scene characteristics while reducing computational overhead and data dimensionality.

The evolution trajectory shows a clear progression toward more intelligent, context-aware systems. Early approaches relied heavily on handcrafted features and predetermined rules, limiting their adaptability and generalization capabilities. Modern methodologies increasingly incorporate machine learning techniques that can automatically learn optimal abstraction strategies from large-scale datasets.

Contemporary research objectives emphasize real-time processing capabilities, scalability across different application domains, and improved accuracy in dynamic environments. The integration of multi-modal information sources, including depth data, motion vectors, and semantic annotations, represents a key advancement direction. These developments aim to create more comprehensive scene understanding systems that can support applications ranging from autonomous navigation to augmented reality platforms.

The technological landscape continues to evolve toward more sophisticated abstraction mechanisms that can handle increasing scene complexity while maintaining computational efficiency and practical deployment feasibility.

Market Demand for Intelligent Scene Understanding Systems

The market demand for intelligent scene understanding systems has experienced unprecedented growth across multiple industry verticals, driven by the increasing need for automated visual perception and contextual analysis capabilities. Organizations across sectors including autonomous vehicles, robotics, surveillance, augmented reality, and smart city infrastructure are actively seeking advanced solutions that can interpret complex visual environments with human-level comprehension.

Autonomous vehicle manufacturers represent one of the most significant demand drivers, requiring sophisticated scene understanding systems capable of real-time interpretation of dynamic traffic environments. These systems must process multiple visual inputs simultaneously, identifying pedestrians, vehicles, road conditions, and traffic signals while making split-second decisions. The complexity of urban driving scenarios has intensified the need for more robust scene abstraction methodologies that can handle edge cases and unpredictable situations.

The robotics industry demonstrates substantial appetite for intelligent scene understanding technologies, particularly in warehouse automation, manufacturing, and service robotics applications. Modern robotic systems require advanced visual perception capabilities to navigate complex environments, manipulate objects, and interact safely with human workers. The demand extends beyond basic object detection to comprehensive spatial understanding and predictive modeling of dynamic scenes.

Smart city initiatives worldwide are creating substantial market opportunities for scene understanding systems in traffic management, public safety, and urban planning applications. Municipal governments are investing heavily in intelligent surveillance networks that can automatically detect incidents, monitor crowd dynamics, and optimize traffic flow through real-time scene analysis.

The entertainment and media industry has emerged as another significant market segment, with growing demand for automated content analysis, virtual production technologies, and immersive experience creation. Streaming platforms and content creators require sophisticated scene understanding capabilities for automated video editing, content recommendation systems, and augmented reality applications.

Healthcare and medical imaging sectors are increasingly adopting intelligent scene understanding technologies for diagnostic imaging, surgical planning, and patient monitoring applications. The ability to automatically analyze complex medical imagery and extract meaningful insights is driving substantial investment in advanced visual perception systems.

Market growth is further accelerated by the proliferation of edge computing capabilities and improved hardware performance, enabling deployment of sophisticated scene understanding algorithms in resource-constrained environments. The convergence of artificial intelligence, computer vision, and real-time processing technologies has created favorable conditions for widespread adoption across diverse application domains.

Current State and Challenges in Frame-based Scene Analysis

Frame-based scene analysis has emerged as a fundamental approach in computer vision and artificial intelligence, representing scenes through structured data frameworks that capture spatial, temporal, and semantic relationships. Current methodologies predominantly rely on hierarchical decomposition strategies, where complex scenes are broken down into manageable components through various framing techniques including bounding boxes, semantic segmentation masks, and temporal windows.

The state-of-the-art approaches leverage deep learning architectures, particularly convolutional neural networks and transformer-based models, to extract meaningful representations from visual data. These systems typically employ multi-scale feature extraction, where different resolution levels capture varying degrees of detail and context. Recent advances have integrated attention mechanisms to dynamically focus on relevant scene regions, improving the quality of frame-based abstractions.
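As a simple illustration of multi-scale processing, the sketch below builds an image pyramid by repeated 2x2 average pooling. This is a classical stand-in for the learned multi-scale features described above, not a neural network; the helper names and the nested-list image format are assumptions.

```python
from typing import List

Image = List[List[float]]  # hypothetical format: rows of grayscale values


def downsample_2x(image: Image) -> Image:
    """Halve resolution by averaging each non-overlapping 2x2 block."""
    rows, cols = len(image) // 2, len(image[0]) // 2
    return [
        [
            (image[2 * r][2 * c] + image[2 * r][2 * c + 1]
             + image[2 * r + 1][2 * c] + image[2 * r + 1][2 * c + 1]) / 4.0
            for c in range(cols)
        ]
        for r in range(rows)
    ]


def build_pyramid(image: Image, levels: int) -> List[Image]:
    """Return up to `levels` images, finest first, each half the previous size."""
    pyramid = [image]
    for _ in range(levels - 1):
        current = pyramid[-1]
        if len(current) < 2 or len(current[0]) < 2:
            break  # cannot downsample further
        pyramid.append(downsample_2x(current))
    return pyramid


image = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
pyramid = build_pyramid(image, levels=3)
shapes = [(len(level), len(level[0])) for level in pyramid]
print(shapes)  # [(4, 4), (2, 2), (1, 1)]
```

Fine levels retain detail, while coarse levels summarize context over a larger area at lower cost.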

However, significant challenges persist in achieving robust and generalizable scene understanding. One primary obstacle is the semantic gap between low-level visual features and high-level conceptual understanding. Current frame methodologies often struggle with ambiguous scenes where multiple interpretations are valid, leading to inconsistent abstractions across similar contexts.

Computational complexity represents another critical challenge, as real-time processing requirements conflict with the need for comprehensive scene analysis. Existing algorithms face scalability issues when dealing with high-resolution imagery or extended temporal sequences, particularly in resource-constrained environments such as mobile devices or embedded systems.

The integration of multi-modal information sources poses additional difficulties. While combining visual, textual, and sensor data can enhance scene understanding, current frameworks lack standardized approaches for effectively fusing these heterogeneous information streams. This limitation restricts the development of truly informed frame methodologies that can leverage diverse data sources.

Geographical and domain-specific variations in scene characteristics create further complications. Models trained on specific datasets often exhibit poor generalization when applied to different geographical regions or application domains, highlighting the need for more adaptive and robust framing approaches that can accommodate diverse visual environments and cultural contexts.

Existing Frame-based Scene Abstraction Solutions

01 Scene recognition and classification methods

Technologies for automatically recognizing and classifying different types of scenes in images or videos using machine learning algorithms, neural networks, and feature extraction techniques. These methods analyze visual characteristics to categorize scenes into predefined classes such as indoor, outdoor, natural, urban, or specific environments.

- Video scene segmentation and abstraction techniques: Methods for automatically segmenting video content into meaningful scenes and generating abstractions or summaries. These techniques analyze temporal and spatial features to identify scene boundaries and extract representative frames or segments that capture the essence of each scene. The approaches enable efficient video browsing and content understanding by creating compact representations of video sequences.

- Hierarchical scene representation and modeling: Systems that create multi-level abstractions of scenes by organizing visual information in hierarchical structures. These methods build scene representations at different levels of detail, from low-level features to high-level semantic concepts. The hierarchical approach facilitates scene understanding, object recognition, and enables efficient querying and retrieval of visual content based on abstract scene properties.

- Semantic scene understanding and classification: Techniques for analyzing and classifying scenes based on semantic content and contextual information. These methods extract meaningful features from visual data to categorize scenes into predefined classes or generate semantic descriptions. The approaches leverage machine learning and pattern recognition to identify scene types, objects, and their relationships, enabling automated scene interpretation and content-based organization.

- Interactive scene abstraction and visualization: Systems providing user interfaces and tools for creating, manipulating, and visualizing scene abstractions. These solutions allow users to interactively define abstraction levels, select relevant scene elements, and generate customized visual summaries. The methods support various visualization techniques to present abstracted scene information in intuitive and meaningful ways for different applications and user needs.

- Scene abstraction for augmented and virtual reality: Methods for generating simplified scene representations specifically designed for augmented reality and virtual reality applications. These techniques create abstract scene models that balance visual fidelity with computational efficiency, enabling real-time rendering and interaction. The approaches focus on extracting essential scene geometry and appearance information while reducing complexity to support immersive experiences on resource-constrained devices.

02 Scene abstraction through semantic segmentation

Techniques for abstracting scenes by segmenting images into meaningful regions and identifying semantic components. This involves partitioning visual data into distinct areas representing objects, backgrounds, and contextual elements to create simplified representations of complex scenes.

03 3D scene reconstruction and modeling

Methods for creating three-dimensional abstract representations of scenes from two-dimensional images or sensor data. These approaches involve depth estimation, spatial mapping, and geometric modeling to generate simplified 3D scene structures that capture essential spatial relationships and layouts.

04 Scene understanding through object detection and relationship analysis

Systems that abstract scenes by identifying objects within them and analyzing their spatial and contextual relationships. This includes detecting multiple objects, determining their positions, interactions, and hierarchical structures to create high-level scene descriptions.

05 Scene representation using graph-based and hierarchical structures

Approaches for abstracting scenes using graph-based representations, hierarchical models, or structured frameworks that capture scene components and their interconnections. These methods organize visual information into abstract structures that facilitate scene understanding, retrieval, and manipulation.
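A graph-based scene representation can be sketched as a small data structure in which nodes are detected objects and edges are labeled relationships. The `SceneGraph` class, its method names, and the example labels below are hypothetical and serve only to illustrate the idea.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class SceneGraph:
    # node id -> object label
    nodes: Dict[int, str] = field(default_factory=dict)
    # (subject id, relation, object id) triples
    edges: List[Tuple[int, str, int]] = field(default_factory=list)

    def add_object(self, node_id: int, label: str) -> None:
        self.nodes[node_id] = label

    def relate(self, subject_id: int, relation: str, object_id: int) -> None:
        if subject_id not in self.nodes or object_id not in self.nodes:
            raise KeyError("both objects must be added before relating them")
        self.edges.append((subject_id, relation, object_id))

    def neighbors(self, node_id: int) -> List[str]:
        """Labels of objects that the given node points to."""
        return [self.nodes[o] for s, _, o in self.edges if s == node_id]

    def describe(self) -> List[str]:
        """Render each edge as a short 'subject relation object' phrase."""
        return [f"{self.nodes[s]} {rel} {self.nodes[o]}" for s, rel, o in self.edges]


graph = SceneGraph()
graph.add_object(0, "person")
graph.add_object(1, "bicycle")
graph.add_object(2, "road")
graph.relate(0, "riding", 1)
graph.relate(1, "on", 2)
sentences = graph.describe()
print(sentences)  # ['person riding bicycle', 'bicycle on road']
```

Storing relations as explicit triples keeps the abstraction queryable: retrieval and manipulation operate on a handful of nodes and edges rather than on pixels.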

Key Players in Computer Vision and Scene Analysis Industry

The competitive landscape for Scene Abstractions through Informed Frame Methodologies reflects an emerging technology sector in early-to-mid development stages, with significant market potential driven by applications in computer vision, autonomous systems, and multimedia processing. The market encompasses diverse players from established semiconductor giants like QUALCOMM, Intel, and Sony Semiconductor Solutions, to specialized perception technology companies such as Summer Robotics and iniVation AG. Technology maturity varies considerably across participants, with traditional tech leaders like Siemens AG, Mitsubishi Electric, and Adobe leveraging existing capabilities, while innovative startups like Rembrand focus on AI-powered video solutions. Research institutions including Zhejiang University and Fraunhofer-Gesellschaft contribute foundational advances, indicating strong academic-industry collaboration. The presence of automotive manufacturers like Toyota and drone specialists like DJI suggests broad cross-industry adoption potential, positioning this as a rapidly evolving competitive space with substantial growth opportunities.

Toyota Motor Corp.

Technical Solution: Toyota has developed scene abstraction technologies as part of their autonomous driving research and Toyota Safety Sense systems. Their informed frame methodologies focus on understanding complex traffic scenarios, pedestrian behavior, and road conditions through advanced computer vision and sensor fusion techniques. The company's approach integrates camera data with LiDAR and radar information to create comprehensive scene representations that enable safe autonomous navigation. Toyota's technology emphasizes robust performance in diverse weather conditions and lighting scenarios, incorporating machine learning models trained on extensive real-world driving data. Their system can identify and track multiple objects simultaneously while predicting potential collision scenarios and planning appropriate vehicle responses. The implementation is designed for automotive-grade reliability and real-time processing requirements.

Strengths: Extensive automotive expertise, large-scale real-world testing data, focus on safety and reliability. Weaknesses: Primarily automotive-focused applications, conservative approach to technology deployment may limit innovation speed.

SZ DJI Technology Co., Ltd.

Technical Solution: DJI has implemented sophisticated scene abstraction technologies primarily for aerial robotics and autonomous flight systems. Their informed frame methodologies focus on real-time environmental understanding for obstacle avoidance, path planning, and intelligent flight control. The company's approach combines multi-sensor fusion with advanced computer vision algorithms to create comprehensive scene representations that enable safe autonomous navigation in complex environments. DJI's technology incorporates depth estimation, semantic segmentation, and temporal tracking to maintain consistent scene understanding during dynamic flight conditions. Their system is optimized for power-efficient processing on embedded platforms while maintaining high accuracy in scene interpretation for critical safety applications in unmanned aerial vehicles.

Strengths: Market leadership in drone technology, proven real-world deployment, specialized expertise in aerial scene understanding. Weaknesses: Limited application scope beyond aerial systems, regulatory constraints in some markets.

Core Innovations in Informed Frame Processing Technologies

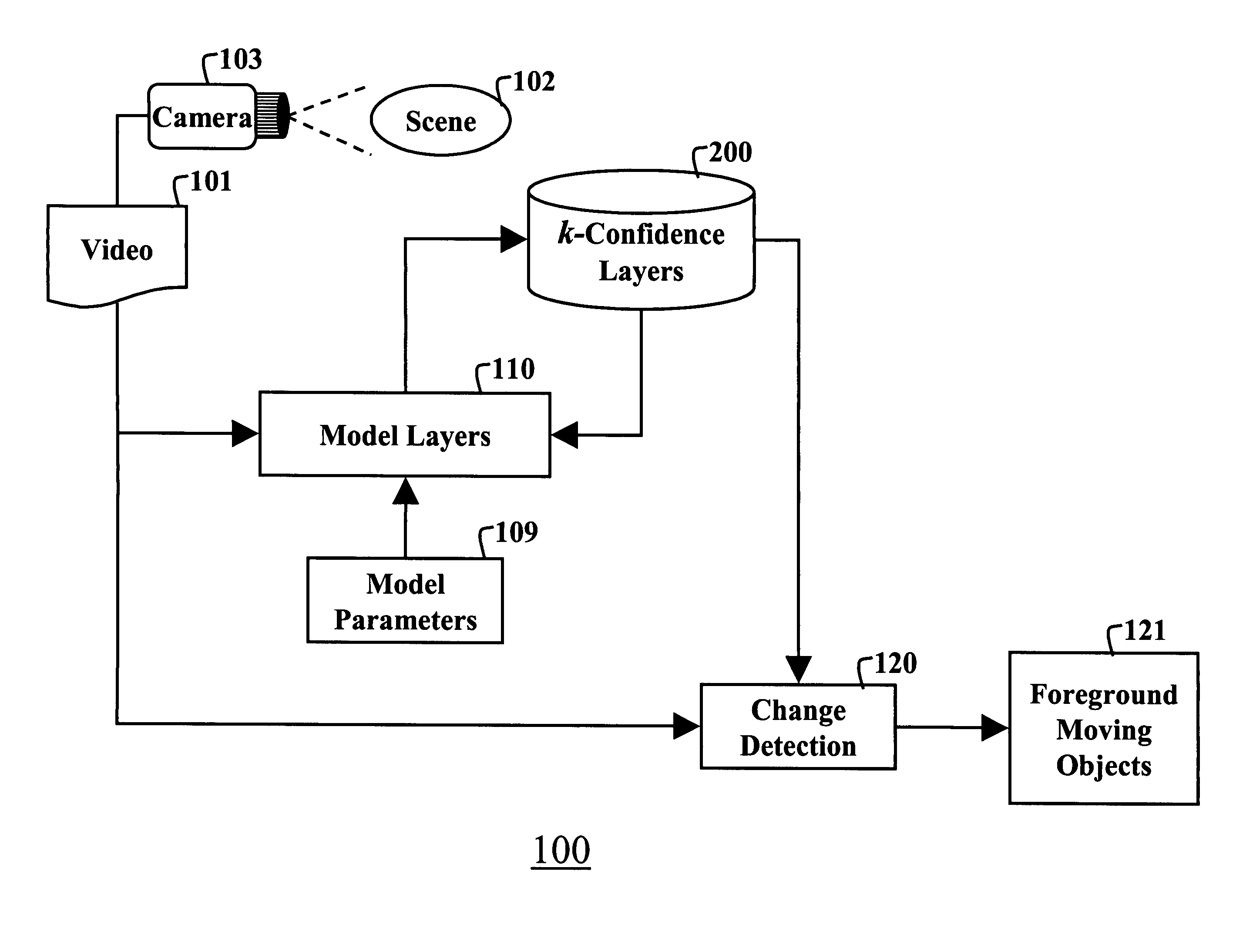

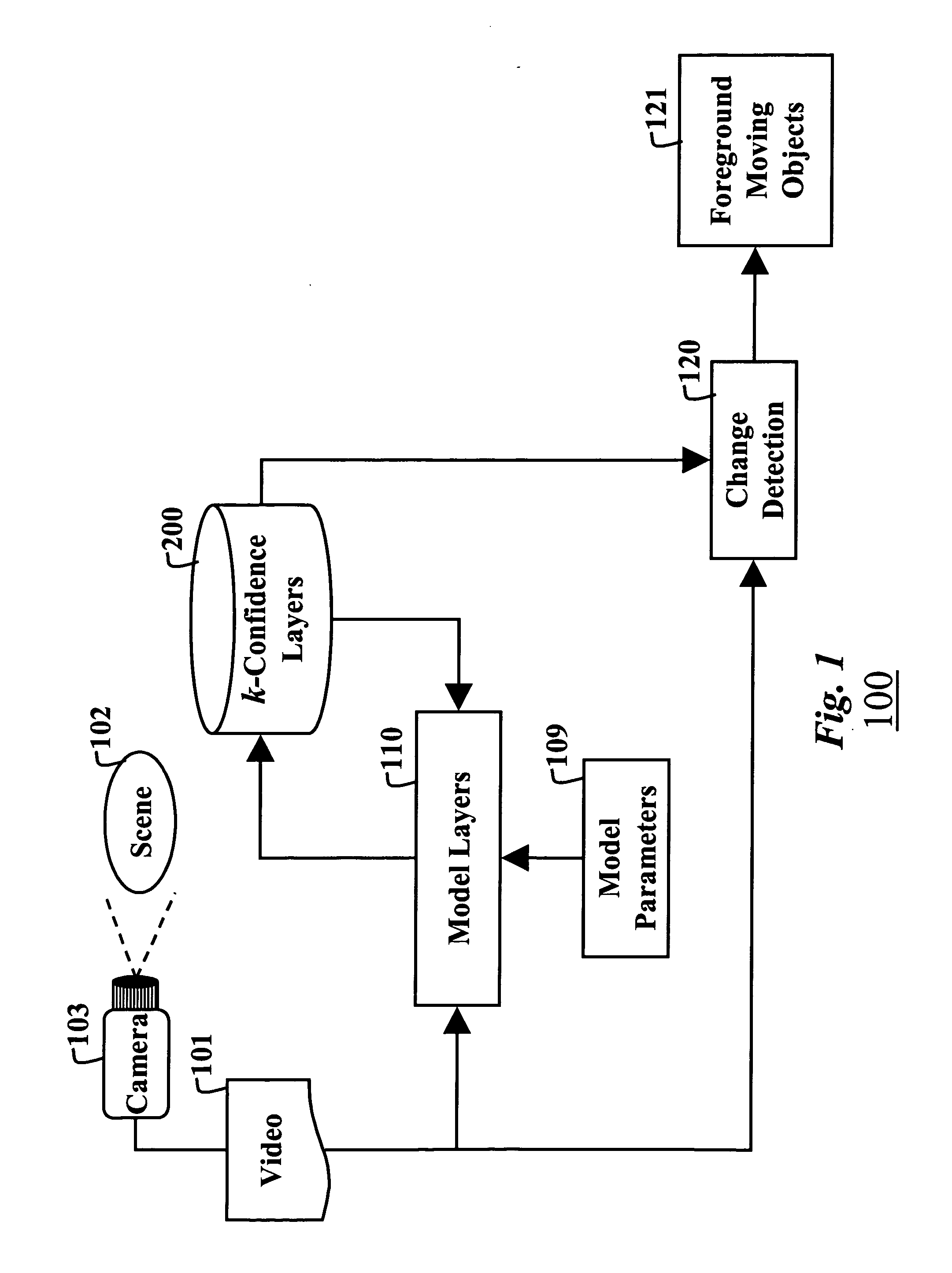

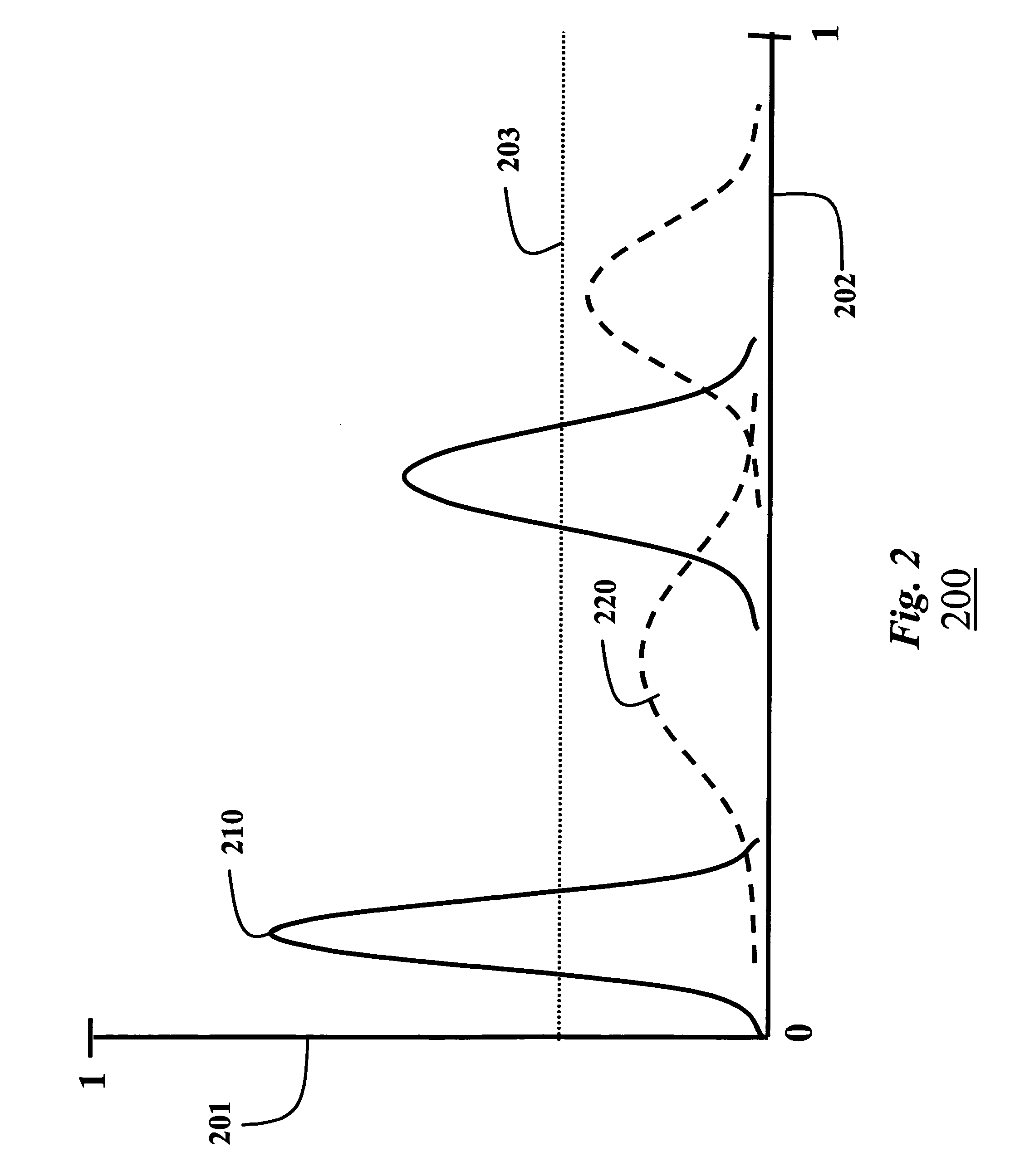

Modeling low frame rate videos with Bayesian estimation

Patent: US20060262959A1 (Inactive)

Innovation

- A method using multiple layers of Gaussian distributions to model each pixel, updated recursively with Bayesian estimation, which maintains multi-modality and adapts to changing backgrounds, allowing for efficient detection of moving objects even at low frame rates.
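The recursive Bayesian idea can be illustrated with a deliberately simplified sketch: one Gaussian per pixel, with an observation noise variance assumed to be known. This is not the patented method, which uses multiple layers per pixel; the class name, the `noise_var` value, and the `k` threshold are assumptions for illustration only.

```python
import math
from dataclasses import dataclass


@dataclass
class PixelModel:
    mean: float      # current estimate of the background intensity
    variance: float  # uncertainty of that estimate


def bayes_update(model: PixelModel, observation: float, noise_var: float) -> PixelModel:
    """Conjugate Gaussian update of the background mean given one observation."""
    posterior_var = 1.0 / (1.0 / model.variance + 1.0 / noise_var)
    posterior_mean = posterior_var * (
        model.mean / model.variance + observation / noise_var
    )
    return PixelModel(posterior_mean, posterior_var)


def is_foreground(model: PixelModel, observation: float,
                  noise_var: float, k: float = 3.0) -> bool:
    """Flag the pixel if it lies more than k predictive standard deviations away."""
    predictive_std = math.sqrt(model.variance + noise_var)
    return abs(observation - model.mean) > k * predictive_std


model = PixelModel(mean=100.0, variance=25.0)
noise_var = 25.0  # assumed sensor noise variance
for value in (102.0, 98.0, 101.0):
    # Only background-like observations refine the background model.
    if not is_foreground(model, value, noise_var):
        model = bayes_update(model, value, noise_var)

moving_object = is_foreground(model, 180.0, noise_var)
print(round(model.mean, 2), round(model.variance, 2), moving_object)
```

With a pure conjugate update the variance only shrinks, so a practical system would also need some form of forgetting to keep adapting to a changing background.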

Methods and System for Predicting Driver Awareness of a Feature in a Scene

Patent: US20210303888A1 (Active)

Innovation

- A method and system that present a video with scene and gaze representations to a neural network, which generates awareness predictions based on driver gaze location, and trains on user-provided awareness indications to improve prediction accuracy.

Privacy and Data Protection in Scene Analysis Systems

Privacy and data protection represent critical considerations in scene analysis systems that employ informed frame methodologies for scene abstraction. As these systems increasingly process visual data from real-world environments, they inevitably capture sensitive information including personal identities, behavioral patterns, and location-specific details that require comprehensive protection frameworks.

The fundamental privacy challenge stems from the inherent nature of scene analysis systems to extract meaningful abstractions from visual frames containing potentially identifiable information. Modern informed frame methodologies often rely on deep learning architectures that process raw visual data to generate semantic representations, creating multiple points where personal data exposure can occur. These systems must balance the need for accurate scene understanding with stringent privacy preservation requirements.

Data minimization principles play a crucial role in privacy-preserving scene analysis implementations. Effective approaches include on-device processing architectures that perform initial scene abstraction locally, transmitting only anonymized feature representations rather than raw visual frames. This methodology significantly reduces privacy risks while maintaining the analytical capabilities necessary for comprehensive scene understanding.

Differential privacy techniques have emerged as promising solutions for protecting individual privacy in scene analysis datasets. By introducing carefully calibrated noise into the abstraction process, these methods enable statistical analysis of scene patterns while preventing the identification of specific individuals or sensitive locations. The challenge lies in optimizing noise parameters to preserve analytical utility while ensuring robust privacy guarantees.
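One standard way to add calibrated noise is the Laplace mechanism, sketched here for a simple count (for example, the number of people detected in a zone). The function name and the example values are assumptions; the sensitivity of 1 holds only if each individual changes the count by at most one.

```python
import random


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Release a count with Laplace noise of scale sensitivity / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scale = sensitivity / epsilon
    # The difference of two i.i.d. exponential samples is Laplace-distributed.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


rng = random.Random(0)  # seeded only to make the illustration repeatable
noisy = private_count(true_count=42, epsilon=1.0, rng=rng)
print(noisy)
```

A smaller `epsilon` gives a stronger privacy guarantee but a larger noise scale, which is the utility trade-off described above.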

Federated learning frameworks offer additional privacy protection by enabling distributed training of scene analysis models without centralizing sensitive visual data. Participating nodes contribute locally computed model updates based on their scene abstraction results, allowing collective learning while maintaining data locality and reducing exposure risks.
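The aggregation step can be sketched as a weighted average of client model weights, in the style of federated averaging. The function name, the flat-list weight format, and the sample counts are assumptions; a real deployment would add secure transport and, typically, further protections on the updates themselves.

```python
from typing import List, Sequence, Tuple


def federated_average(updates: Sequence[Tuple[List[float], int]]) -> List[float]:
    """Average client weight vectors, weighted by each client's sample count."""
    if not updates:
        raise ValueError("at least one client update is required")
    total = sum(n for _, n in updates)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(updates[0][0])
    averaged = [0.0] * dim
    for weights, n in updates:
        if len(weights) != dim:
            raise ValueError("all weight vectors must have the same length")
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Each tuple is (locally trained weights, number of local samples).
client_updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
global_weights = federated_average(client_updates)
print(global_weights)  # [2.5, 3.5]
```

Only the weight vectors and sample counts leave each node; the raw frames used for local training stay where they were captured.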

Regulatory compliance considerations, particularly regarding GDPR and similar privacy legislation, necessitate implementing explicit consent mechanisms, data retention policies, and user rights management within scene analysis systems. These requirements often conflict with the continuous processing nature of real-time scene abstraction, demanding innovative technical solutions that satisfy both operational needs and legal obligations.

Emerging privacy-preserving technologies, including homomorphic encryption and secure multi-party computation, present opportunities for conducting scene analysis operations on encrypted data, though computational overhead remains a significant implementation challenge for real-time applications.

Computational Efficiency Optimization for Real-time Processing

Computational efficiency is a critical bottleneck in implementing scene abstraction through informed frame methodologies for real-time applications. Current processing pipelines struggle to maintain sub-millisecond response times while preserving abstraction quality. The computational overhead of frame-informed decision making adds substantial latency, particularly in scenarios requiring immediate visual feedback or autonomous system responses.

Modern real-time processing demands have intensified the need for algorithmic optimization strategies that can reduce computational complexity without compromising abstraction accuracy. Traditional approaches often rely on brute-force processing methods that scale poorly with increasing scene complexity and frame resolution. The integration of informed frame methodologies introduces additional computational layers that must be carefully optimized to maintain real-time performance standards.

Hardware acceleration techniques have emerged as essential components for achieving optimal computational efficiency. GPU-based parallel processing architectures demonstrate significant performance improvements when properly leveraged for scene abstraction tasks. Specialized tensor processing units and dedicated AI accelerators offer promising pathways for reducing processing latency while maintaining high-quality abstraction results.

Algorithmic optimization strategies focus on reducing redundant computations through intelligent caching mechanisms and predictive processing approaches. Temporal coherence exploitation allows systems to minimize recalculation of stable scene elements across consecutive frames. Adaptive quality scaling techniques dynamically adjust abstraction complexity based on available computational resources and real-time constraints.
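Temporal coherence can be exploited with a per-tile cache that recomputes an abstraction only when the tile has changed beyond a tolerance. The `TileCache` class, the tuple-based tile format, and the tolerance value are hypothetical; the mean is used as a placeholder for an expensive abstraction function.

```python
from typing import Callable, Dict, List, Tuple

Tile = Tuple[float, ...]  # hypothetical format: non-empty flattened tile


class TileCache:
    def __init__(self, abstract_fn: Callable[[Tile], float], tolerance: float = 0.01):
        self.abstract_fn = abstract_fn
        self.tolerance = tolerance
        self._cache: Dict[int, Tuple[Tile, float]] = {}
        self.recomputed = 0  # counts how often abstract_fn actually ran

    def _is_stable(self, old: Tile, new: Tile) -> bool:
        """True if no pixel moved more than the tolerance since the cached tile."""
        return len(old) == len(new) and max(
            abs(a - b) for a, b in zip(old, new)
        ) <= self.tolerance

    def process(self, tiles: List[Tile]) -> List[float]:
        results = []
        for idx, tile in enumerate(tiles):
            cached = self._cache.get(idx)
            if cached is not None and self._is_stable(cached[0], tile):
                results.append(cached[1])  # reuse the earlier result
                continue
            value = self.abstract_fn(tile)
            self._cache[idx] = (tile, value)
            self.recomputed += 1
            results.append(value)
        return results


cache = TileCache(lambda t: sum(t) / len(t))
frame_a = [(0.1, 0.1), (0.5, 0.5), (0.9, 0.9)]
frame_b = [(0.1, 0.1), (0.2, 0.8), (0.9, 0.9)]  # only the middle tile changed
cache.process(frame_a)
cache.process(frame_b)
print(cache.recomputed)  # 4 (three for the first frame, one for the second)
```

Because each tile is compared with the version stored at its last recomputation, small changes that accumulate over many frames still trigger a refresh eventually.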

Memory management optimization plays a crucial role in maintaining consistent real-time performance. Efficient data structure design and memory allocation strategies prevent bottlenecks that commonly occur during intensive scene processing operations. Stream processing architectures enable continuous data flow optimization, reducing memory access latency and improving overall system throughput.

The implementation of multi-threaded processing frameworks allows for parallel execution of abstraction algorithms across multiple computational cores. Load balancing mechanisms ensure optimal resource utilization while preventing processing bottlenecks that could compromise real-time performance requirements. These optimization strategies collectively enable practical deployment of scene abstraction methodologies in time-critical applications.
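A minimal sketch of parallel tile processing using Python's standard `concurrent.futures` thread pool is shown below. The `abstract_tile` function is a placeholder for real per-tile work, and the worker count is an assumption. In CPython, threads speed up CPU-bound work only when that work releases the global interpreter lock (as many native libraries do); otherwise a process pool is the usual alternative.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def abstract_tile(tile: Sequence[float]) -> float:
    """Placeholder abstraction: the mean intensity of a tile."""
    return sum(tile) / len(tile)


def abstract_frame_parallel(tiles: List[Sequence[float]],
                            max_workers: int = 4) -> List[float]:
    """Process tiles concurrently; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(abstract_tile, tiles))


tiles = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
results = abstract_frame_parallel(tiles)
print(results)  # [1.0, 2.0, 3.0]
```

The executor hands tiles to idle workers as they become free, which provides a basic form of load balancing when tiles vary in cost.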
