World Models vs. Evolutionary Algorithms: Robustness Metrics

APR 13, 2026 · 9 MIN READ

World Models vs EA Robustness Background and Objectives

The intersection of artificial intelligence and autonomous systems has witnessed remarkable evolution over the past decade, with two distinct paradigms emerging as prominent approaches for developing robust decision-making systems. World Models, rooted in predictive modeling and representation learning, have gained significant traction since their conceptual introduction, offering a framework for agents to learn compressed spatial and temporal representations of their environment. Simultaneously, Evolutionary Algorithms have continued to mature as a bio-inspired optimization methodology, demonstrating exceptional adaptability across diverse problem domains.

The convergence of these methodologies has created unprecedented opportunities for addressing one of the most critical challenges in modern AI systems: robustness. As autonomous systems increasingly operate in unpredictable, dynamic environments ranging from autonomous vehicles navigating complex traffic scenarios to robotic systems performing delicate manufacturing tasks, the ability to maintain consistent performance under uncertainty has become paramount.

World Models approach robustness through predictive accuracy and environmental modeling, enabling systems to anticipate and prepare for potential scenarios through learned representations. This methodology emphasizes the importance of understanding environmental dynamics and leveraging this understanding to make informed decisions. The approach has demonstrated particular strength in scenarios where environmental patterns can be learned and extrapolated.

Evolutionary Algorithms tackle robustness from a fundamentally different angle, utilizing population-based search and selection mechanisms that naturally promote solutions capable of handling diverse conditions. Through iterative refinement and survival-of-the-fittest principles, these algorithms tend to discover solutions that exhibit inherent resilience across varying operational parameters.

The primary objective of comparing these approaches centers on establishing comprehensive robustness metrics that can effectively evaluate and quantify system performance under adversarial conditions, environmental variations, and operational uncertainties. This comparison aims to identify the fundamental strengths and limitations of each methodology, ultimately contributing to the development of hybrid approaches that leverage the complementary advantages of both paradigms.

Understanding the robustness characteristics of these distinct approaches is crucial for advancing the field toward more reliable and dependable autonomous systems capable of operating safely in real-world environments.

Market Demand for Robust AI Decision Systems

The market for robust AI decision systems is growing as automation expands across critical industries. Organizations are recognizing that conventional AI systems often fail when confronted with unexpected scenarios, adversarial attacks, or distribution shifts in real-world data. This recognition has created demand for AI solutions that can maintain reliable performance under uncertainty and stress conditions.

Financial services represent one of the largest market segments demanding robust AI decision systems. Banks and investment firms require algorithms that can adapt to volatile market conditions while maintaining risk management protocols. The comparison between World Models and Evolutionary Algorithms becomes particularly relevant here, as financial institutions need systems that can both predict market dynamics and evolve trading strategies in response to changing conditions.

Autonomous vehicle manufacturers constitute another major market driver, where robustness metrics directly translate to safety requirements. These companies are actively seeking AI frameworks that can handle edge cases and unexpected road scenarios. The debate between World Models' predictive capabilities and Evolutionary Algorithms' adaptive resilience directly addresses industry needs for systems that can navigate both predicted and unpredicted driving situations.

Healthcare organizations are increasingly demanding robust AI for diagnostic and treatment recommendation systems. Medical AI must demonstrate consistent performance across diverse patient populations and clinical environments. The robustness comparison between these two approaches is crucial for healthcare providers who need systems that can maintain accuracy while adapting to new medical knowledge and patient demographics.

Manufacturing and supply chain management sectors are driving demand for AI systems that can maintain operational efficiency despite disruptions. Companies require decision-making algorithms that can handle supply chain volatility, equipment failures, and demand fluctuations. The evolutionary nature of some algorithms appeals to manufacturers seeking adaptive systems, while others prefer the predictive stability offered by world model approaches.

Cybersecurity markets are experiencing rapid growth in demand for robust AI systems capable of detecting novel threats and adapting to evolving attack patterns. Security firms need AI that can maintain effectiveness against adversarial examples and zero-day exploits, making robustness metrics a critical evaluation criterion for procurement decisions.

Current Robustness Challenges in World Models and EA

World models face significant robustness challenges when deployed in dynamic and uncertain environments. These neural network-based systems, designed to learn compressed representations of environmental dynamics, often struggle with distribution shifts between training and deployment scenarios. The primary vulnerability lies in their reliance on historical data patterns, which may not adequately capture rare events or novel situations that occur in real-world applications.

Generalization remains a critical weakness for world models, particularly when encountering out-of-distribution states or actions. The learned latent representations can become unstable when the model encounters scenarios that deviate from its training distribution, leading to cascading errors in downstream decision-making processes. This brittleness is especially pronounced in safety-critical applications where robust performance is paramount.

Evolutionary algorithms encounter distinct robustness challenges centered around convergence stability and solution quality consistency. The stochastic nature of evolutionary processes introduces inherent variability in performance across different runs, making it difficult to guarantee consistent results. Population diversity management becomes crucial, as premature convergence can trap the algorithm in suboptimal regions, while excessive diversity may prevent convergence altogether.

Environmental noise and parameter sensitivity pose additional challenges for evolutionary algorithms. Small perturbations in fitness evaluation or algorithmic parameters can significantly impact the evolutionary trajectory, leading to dramatically different outcomes. This sensitivity is particularly problematic in noisy optimization landscapes where fitness evaluations are corrupted by measurement errors or environmental uncertainties.
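The run-to-run variability and noise sensitivity described above can be made concrete with a small experiment. The following is a minimal sketch, not a reference implementation: it assumes a toy sphere objective, a simple (1+lambda) evolution strategy, and additive Gaussian noise on every fitness evaluation. All names (`sphere`, `run_es`, `run_to_run_spread`) and parameter values are illustrative assumptions.

```python
import random
import statistics


def sphere(x):
    """Noise-free objective: sum of squares, with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


def run_es(seed, noise_sd=0.5, dim=5, offspring=10, generations=100, step=0.3):
    """Run a toy (1+lambda) evolution strategy that only observes noisy fitness."""
    rng = random.Random(seed)
    parent = [rng.uniform(-3.0, 3.0) for _ in range(dim)]
    parent_fit = sphere(parent) + rng.gauss(0.0, noise_sd)
    for _ in range(generations):
        scored = []
        for _ in range(offspring):
            child = [v + rng.gauss(0.0, step) for v in parent]
            scored.append((sphere(child) + rng.gauss(0.0, noise_sd), child))
        best_fit, best = min(scored, key=lambda pair: pair[0])
        if best_fit < parent_fit:  # selection sees only the noisy values
            parent, parent_fit = best, best_fit
    return sphere(parent)  # true quality of the surviving parent


def run_to_run_spread(seeds, **kwargs):
    """Mean and standard deviation of the final true fitness across seeds."""
    finals = [run_es(seed, **kwargs) for seed in seeds]
    return statistics.mean(finals), statistics.stdev(finals)


if __name__ == "__main__":
    for noise_sd in (0.0, 0.5, 2.0):
        mean, spread = run_to_run_spread(range(20), noise_sd=noise_sd)
        print(f"noise_sd={noise_sd}: mean={mean:.3f} stdev={spread:.3f}")
```

Reporting the spread across seeds alongside the mean is one simple way to quantify the consistency concern raised here; other dispersion measures would serve equally well.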

Both paradigms struggle with scalability-related robustness issues. World models face computational constraints when scaling to high-dimensional state spaces, often requiring architectural compromises that may reduce robustness. Similarly, evolutionary algorithms encounter population size and generation number trade-offs that directly impact their ability to maintain robust search behavior in complex optimization landscapes.

The temporal aspect presents unique challenges for both approaches. World models must maintain predictive accuracy over extended time horizons while accumulating minimal error, whereas evolutionary algorithms must balance exploration and exploitation throughout the evolutionary process to maintain robust search dynamics across varying problem phases.
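To illustrate why long-horizon prediction is hard for a learned model, the sketch below rolls out a deliberately simple scalar system. It assumes true dynamics of the form x[t+1] = a * x[t] and a hypothetical learned model whose coefficient is off by 1%. This is a toy stand-in, not a neural world model, but it shows how a small one-step error compounds when the model is rolled forward on its own predictions.

```python
def rollout(a, x0, horizon):
    """Iterate the scalar dynamics x[t+1] = a * x[t] for `horizon` steps."""
    states = [x0]
    for _ in range(horizon):
        states.append(a * states[-1])
    return states


def open_loop_error(a_true, a_model, x0, horizon):
    """Absolute gap between the true trajectory and the model's imagined one."""
    true_states = rollout(a_true, x0, horizon)
    imagined = rollout(a_model, x0, horizon)
    return [abs(t - m) for t, m in zip(true_states, imagined)]


if __name__ == "__main__":
    # Assumed values: the model overestimates the true coefficient by 0.01.
    errors = open_loop_error(a_true=1.0, a_model=1.01, x0=1.0, horizon=50)
    for t in (1, 10, 25, 50):
        print(f"step {t:2d}: error = {errors[t]:.4f}")
```

In this toy setting the error grows geometrically with the horizon, which is why horizon-dependent error curves are often more informative than a single one-step accuracy figure.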

Existing Robustness Metrics and Evaluation Methods

01 Evolutionary algorithms for model optimization and parameter tuning

Evolutionary algorithms can be employed to optimize world models by evolving parameters and structures that enhance robustness. These algorithms use selection, mutation, and crossover operations to iteratively improve model performance across varying conditions. The evolutionary approach allows exploration of large parameter spaces and enables automatic discovery of robust configurations that can withstand perturbations and uncertainties in the environment. It is particularly effective for tuning complex models where traditional optimization methods may struggle.

02 Robustness testing through adversarial perturbations

World models can be tested for robustness by introducing adversarial perturbations and noise during training and evaluation phases. Evolutionary algorithms can generate diverse test scenarios that challenge model stability and expose vulnerabilities. Combining systematic perturbation testing with evolutionary search helps identify and strengthen weak points in model architectures, so that models maintain performance under unexpected or hostile conditions.

03 Multi-objective evolutionary optimization for robust model design

Multi-objective evolutionary algorithms can simultaneously optimize multiple criteria such as accuracy, computational efficiency, and robustness. This approach balances trade-offs between different performance metrics to create world models that are both effective and resilient. The evolutionary process maintains a population of diverse solutions representing different trade-offs and explores the solution space to find Pareto-optimal configurations that satisfy competing objectives, so that robustness is not sacrificed for other performance characteristics.

04 Ensemble methods with evolutionary selection

Evolutionary algorithms can be used to select and combine multiple world models into robust ensembles. The selection process identifies complementary models that collectively provide better generalization and fault tolerance, optimizing both individual model parameters and ensemble composition strategies. This ensemble approach leverages diversity among models to provide redundancy and error correction, improving overall system robustness against various failure modes and environmental changes.

05 Adaptive learning mechanisms for dynamic robustness

World models can incorporate adaptive learning mechanisms guided by evolutionary algorithms to maintain robustness in changing environments. These mechanisms enable continuous model refinement and self-adjustment during deployment, based on feedback from real-world interactions. The evolutionary framework combines online learning with evolutionary principles, supporting adaptation that preserves model stability while accommodating new patterns, conditions, and previously unseen scenarios.
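The Pareto-optimality idea from the multi-objective approach above can be sketched in a few lines. The example assumes each candidate model has already been scored on two objectives where higher is better (here labeled accuracy and robustness). The candidate names and scores are made-up illustrative values, not measurements.

```python
def dominates(a, b):
    """True if score tuple `a` is at least as good as `b` everywhere and better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return [
        c for c in candidates
        if not any(dominates(o["scores"], c["scores"]) for o in candidates if o is not c)
    ]


# Hypothetical (accuracy, robustness) scores for four candidate models.
CANDIDATES = [
    {"name": "A", "scores": (0.90, 0.60)},
    {"name": "B", "scores": (0.85, 0.80)},
    {"name": "C", "scores": (0.80, 0.70)},  # worse than B on both objectives
    {"name": "D", "scores": (0.70, 0.90)},
]

if __name__ == "__main__":
    print([c["name"] for c in pareto_front(CANDIDATES)])  # prints ['A', 'B', 'D']
```

A multi-objective evolutionary algorithm would apply a filter like this (or a ranked variant of it) during selection; the sketch covers only the dominance check, not the search itself.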

Key Players in World Models and EA Research

The World Models vs. Evolutionary Algorithms robustness metrics field represents an emerging intersection of AI methodologies currently in early development stages. The market remains nascent with limited commercial deployment, primarily driven by research institutions and technology giants exploring hybrid optimization approaches. Technology maturity varies significantly across players, with established corporations like Google LLC, IBM, and Siemens AG leveraging substantial R&D capabilities to advance theoretical frameworks, while specialized entities such as Umnai Ltd. focus on explainable AI solutions. Academic institutions including Shanghai Jiao Tong University, Zhejiang University, and Beijing Institute of Technology contribute foundational research in evolutionary computation and world modeling. The competitive landscape shows fragmented development with no dominant standard, as companies like Honda Research Institute Europe, NEC Corp., and Microsoft Technology Licensing explore applications across autonomous systems, industrial automation, and cognitive computing, indicating significant growth potential despite current technological immaturity.

Siemens AG

Technical Solution: Siemens has implemented world model technologies in industrial automation and digital twin applications, developing robustness comparison frameworks that evaluate predictive models against evolutionary optimization approaches. Their methodology focuses on industrial-grade reliability metrics, including system uptime, prediction accuracy under operational stress, and adaptive performance in dynamic manufacturing environments. The company has established evaluation protocols that compare world model effectiveness with evolutionary algorithm robustness across process optimization, predictive maintenance, and quality control applications. Their robustness metrics emphasize operational continuity, scalability across industrial systems, and integration reliability for complex manufacturing processes and infrastructure management scenarios.

Strengths: Industrial automation expertise, proven deployment in complex systems, comprehensive operational metrics. Weaknesses: Industry-specific focus, limited academic research contributions, potential constraints from industrial requirements and legacy system integration.

Robert Bosch GmbH

Technical Solution: Bosch has developed practical world model implementations for automotive and industrial applications, focusing on robustness metrics that compare predictive modeling approaches with evolutionary optimization strategies. Their framework emphasizes real-world validation scenarios, implementing safety-critical robustness measures including fault tolerance, environmental adaptation, and performance consistency under varying operational conditions. The company has established comparative evaluation protocols that assess both world models and evolutionary algorithms for control systems, sensor fusion, and autonomous decision-making applications. Their robustness metrics prioritize reliability, safety margins, and operational stability across diverse industrial environments and automotive scenarios.

Strengths: Real-world application focus, safety-critical system expertise, industrial validation experience. Weaknesses: Domain-specific limitations, conservative approach to experimental techniques, potential constraints from regulatory requirements.

Core Innovations in AI System Robustness Assessment

Evolutionary search for robust solutions

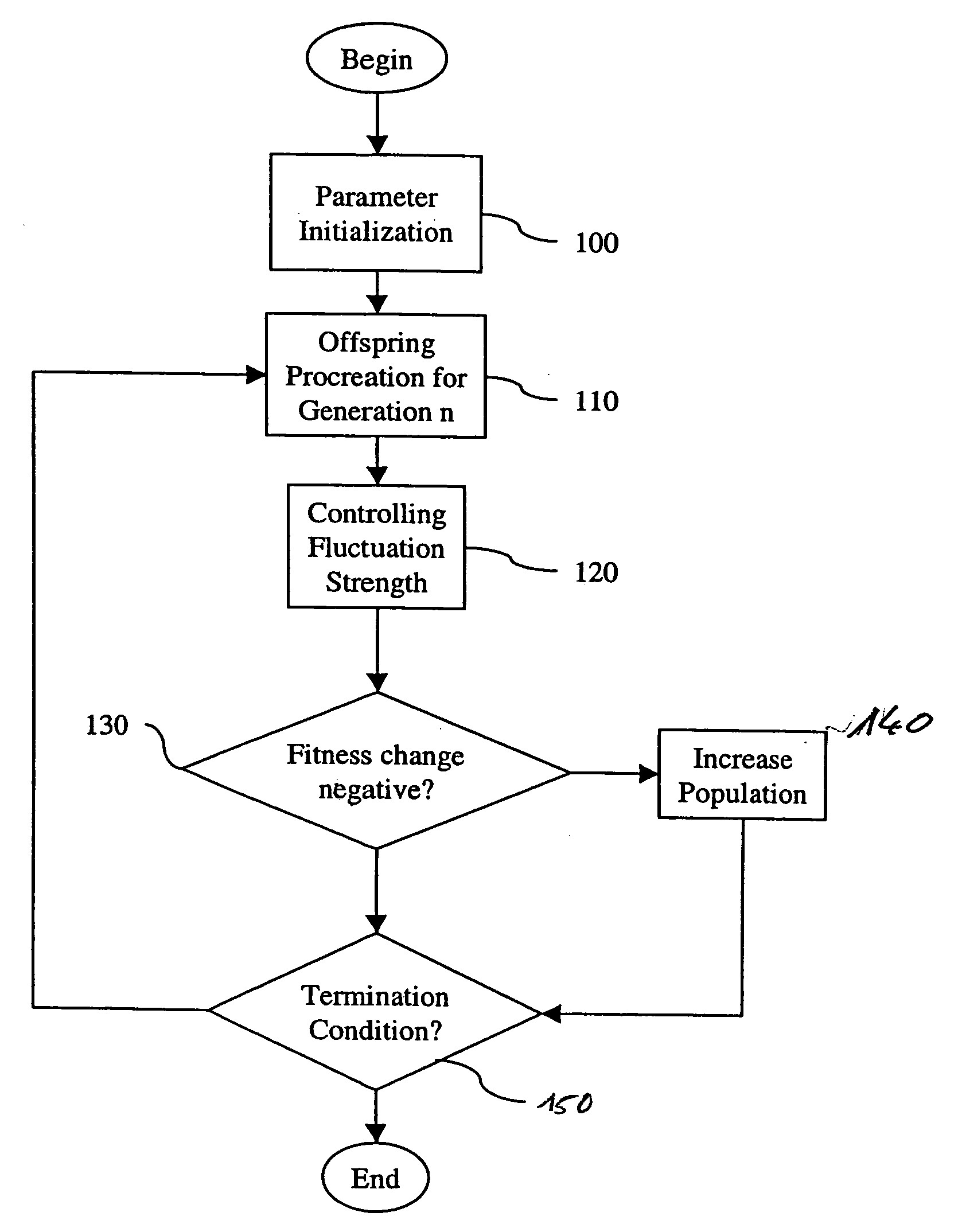

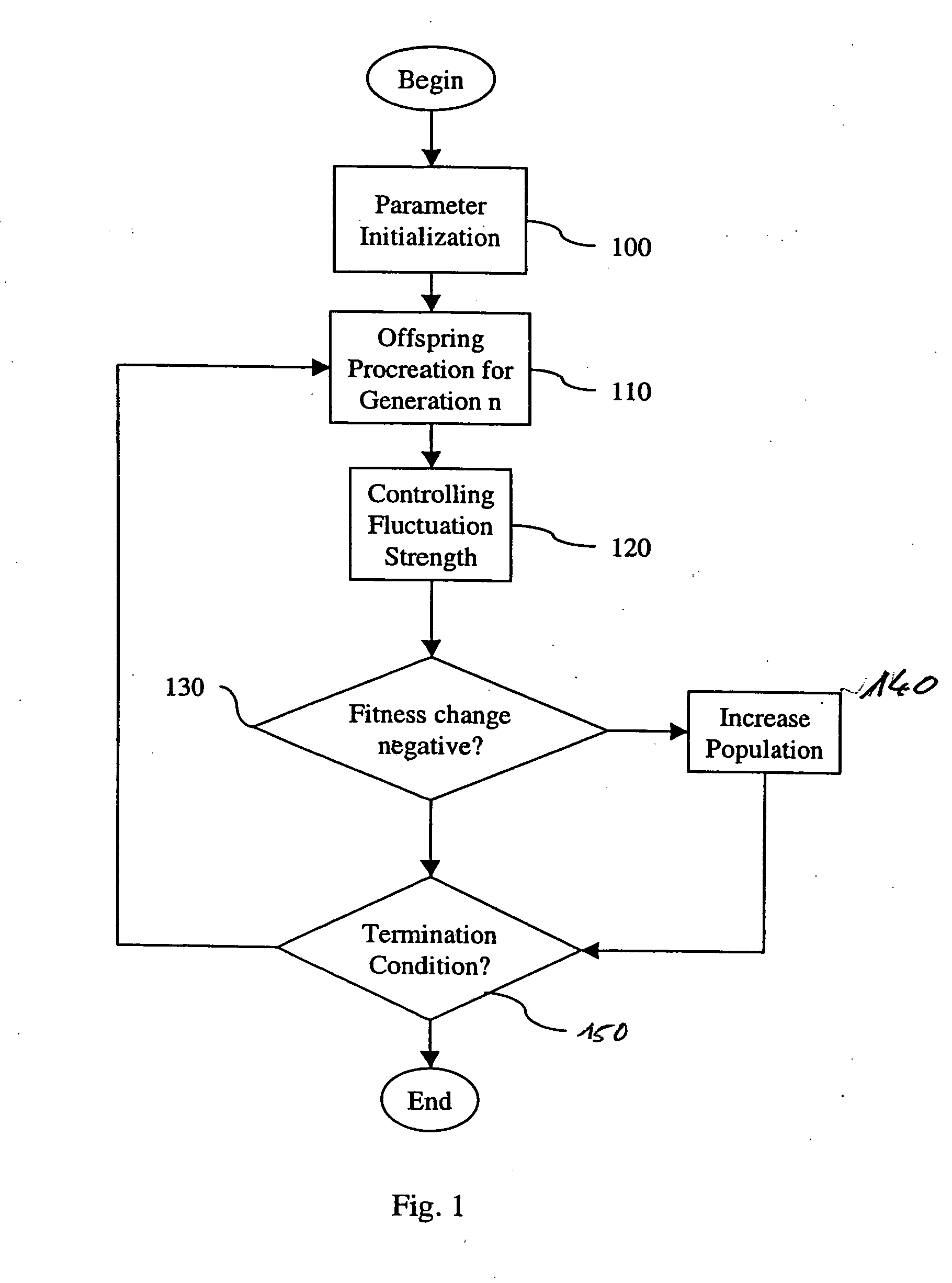

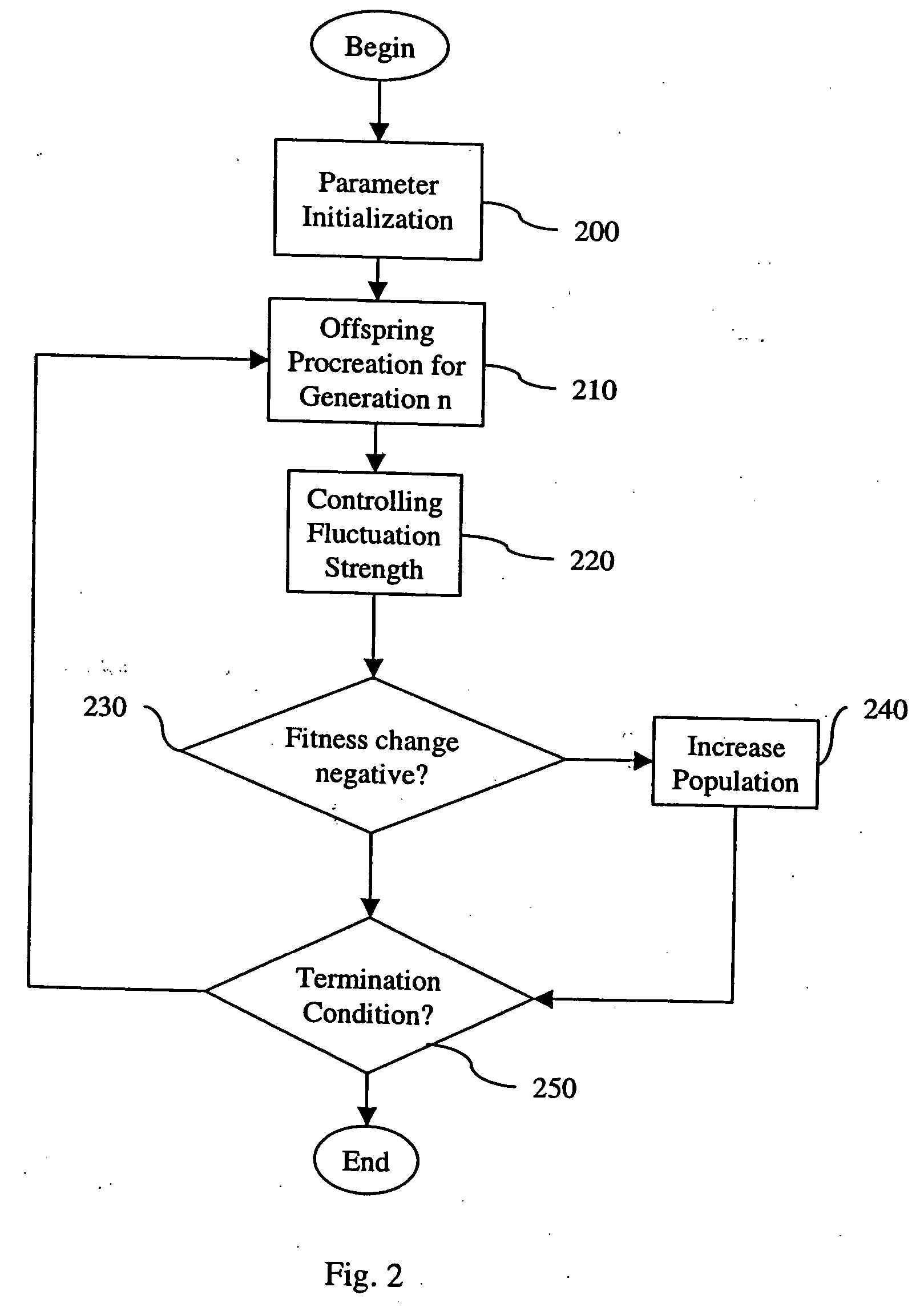

Patent (inactive): US20070094167A1

Innovation

- The method involves creating an initial population of parameter sets, mutating and recombining them with enhanced noise contributions to improve robustness, and controlling mutation strength and population size to reduce residual distance to the optimizer while minimizing fitness evaluations.

Evolutionary search for robust solutions

Patent (inactive): EP1768053A1

Innovation

- The method involves controlling observed parental variance to correctly test robustness and using mutations with increased strength to serve as a robustness tester, adjusting mutation strength based on parental population variance and actuator noise, and dynamically adapting population size to reduce residual distance to the optimizer while minimizing fitness evaluations.
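The general idea behind searching for robust rather than merely optimal solutions can be illustrated with a toy example. The sketch below is not a reconstruction of the patented methods. It only shows, under assumed values, how evaluating a design under Gaussian perturbation of its parameter (standing in for actuator noise) can reverse the ranking of two candidate optima. The landscape, noise level, and function names are all hypothetical.

```python
import math
import random


def nominal_fitness(x):
    """Toy landscape: a tall narrow peak at x=0 and a lower, broad peak at x=3."""
    narrow = 1.0 * math.exp(-((x / 0.1) ** 2))
    broad = 0.8 * math.exp(-((x - 3.0) ** 2))
    return narrow + broad


def robust_fitness(x, noise_sd=0.5, samples=2000, seed=0):
    """Monte Carlo estimate of expected fitness when x is perturbed by Gaussian noise."""
    rng = random.Random(seed)
    total = sum(nominal_fitness(x + rng.gauss(0.0, noise_sd)) for _ in range(samples))
    return total / samples


if __name__ == "__main__":
    for x in (0.0, 3.0):
        print(f"x={x}: nominal={nominal_fitness(x):.3f} robust={robust_fitness(x):.3f}")
```

Without noise the narrow peak scores higher, but once the design variable is perturbed the broad peak has the higher expected fitness, which is the kind of solution a robustness-aware search is meant to prefer.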

Standardization Framework for AI Robustness Testing

The establishment of a comprehensive standardization framework for AI robustness testing has become increasingly critical as artificial intelligence systems are deployed across diverse domains with varying reliability requirements. Current robustness evaluation practices suffer from fragmentation, with different research communities and industrial sectors employing disparate metrics and methodologies that lack interoperability and comparative validity.

A unified standardization framework must address the fundamental challenge of defining consistent robustness metrics that can effectively evaluate both world model-based approaches and evolutionary algorithm implementations. This framework should encompass standardized test suites, benchmark datasets, and evaluation protocols that enable systematic comparison across different AI architectures and problem domains.

The framework architecture should incorporate multi-dimensional robustness assessment criteria, including adversarial resilience, distributional shift tolerance, noise immunity, and temporal stability. Each dimension requires specific measurement protocols and threshold definitions that can be universally applied regardless of the underlying algorithmic approach, whether utilizing world models or evolutionary strategies.

Implementation standards must define precise experimental conditions, including data preprocessing requirements, perturbation generation methods, and statistical significance testing procedures. The framework should specify minimum sample sizes, cross-validation protocols, and reporting formats to ensure reproducible and comparable results across different research groups and industrial applications.

Certification processes within this framework should establish tiered robustness levels corresponding to different application criticality requirements. Safety-critical applications would demand higher robustness thresholds and more comprehensive testing protocols compared to general-purpose AI systems, with clear guidelines for transitioning between certification levels.
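One way such tiered levels could be encoded is sketched below. The four score fields mirror the assessment dimensions named earlier in this section. The tier names, the threshold values, and the rule of gating on the weakest dimension are hypothetical choices made for illustration and do not come from any existing standard.

```python
from dataclasses import astuple, dataclass


@dataclass(frozen=True)
class RobustnessReport:
    """Scores in [0, 1] for each assessment dimension (higher is better)."""
    adversarial_resilience: float
    shift_tolerance: float
    noise_immunity: float
    temporal_stability: float


# Hypothetical tiers, strictest first: (tier name, minimum score on every dimension).
TIERS = [
    ("safety-critical", 0.95),
    ("industrial", 0.85),
    ("general-purpose", 0.70),
]


def certify(report):
    """Return the strictest tier whose minimum is met by the weakest dimension."""
    weakest = min(astuple(report))
    for name, minimum in TIERS:
        if weakest >= minimum:
            return name
    return "uncertified"


if __name__ == "__main__":
    print(certify(RobustnessReport(0.97, 0.90, 0.99, 0.95)))  # prints industrial
```

Gating on the weakest dimension prevents a strong score in one area from masking a failure in another; a weighted average would be a more lenient alternative.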

The standardization framework must also incorporate continuous evolution mechanisms to accommodate emerging robustness challenges and novel attack vectors. Regular review cycles should update testing methodologies and metric definitions based on evolving threat landscapes and technological advances in both world modeling and evolutionary computation approaches.

International coordination efforts are essential for framework adoption, requiring collaboration between standards organizations, academic institutions, and industry stakeholders to ensure global compatibility and mutual recognition of robustness certifications across different regulatory jurisdictions.

Benchmarking Protocols for Model Comparison Studies

Establishing standardized benchmarking protocols for comparing World Models and Evolutionary Algorithms in robustness assessment requires a comprehensive framework that addresses the unique characteristics of both paradigms. The fundamental challenge lies in creating evaluation methodologies that can fairly assess model-based approaches against population-based optimization techniques while maintaining scientific rigor and reproducibility.

The core benchmarking framework should incorporate multi-dimensional evaluation criteria encompassing computational efficiency, convergence stability, and generalization capability across diverse problem domains. For World Models, protocols must evaluate their ability to learn accurate environment representations and maintain performance under distributional shifts. Evolutionary Algorithms require assessment of population diversity maintenance, selection pressure optimization, and adaptive parameter tuning effectiveness.

Standardized test suites should include both synthetic and real-world scenarios with varying complexity levels and noise characteristics. The protocol design must account for the stochastic nature inherent in both approaches, requiring multiple independent runs with statistical significance testing. Cross-validation methodologies should be adapted to handle temporal dependencies in sequential decision-making tasks while ensuring fair comparison across different algorithmic families.

Evaluation metrics standardization presents critical considerations for meaningful comparisons. Robustness metrics should encompass performance degradation rates under adversarial conditions, recovery capabilities after perturbations, and consistency across different initialization seeds. The benchmarking protocol must define clear baseline establishment procedures, hyperparameter optimization guidelines, and computational resource allocation constraints to ensure equitable comparison conditions.
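The three metric families named above (degradation under perturbation, recovery after perturbation, and consistency across seeds) could be computed along the following lines. This is a minimal sketch that assumes higher scores are better and a positive baseline. The function names and the 5% recovery tolerance are illustrative assumptions, not established definitions.

```python
import statistics


def degradation_rate(clean_score, perturbed_score):
    """Relative performance drop caused by a perturbation (0.2 means a 20% drop)."""
    if clean_score == 0:
        raise ValueError("clean_score must be non-zero")
    return (clean_score - perturbed_score) / abs(clean_score)


def recovery_time(trace, baseline, tolerance=0.05):
    """First step after which performance stays within `tolerance` of baseline.

    `trace` holds scores recorded after the perturbation. Returns None if the
    system never recovers within the recorded window.
    """
    threshold = baseline * (1.0 - tolerance)
    for t in range(len(trace)):
        if all(score >= threshold for score in trace[t:]):
            return t
    return None


def seed_consistency(scores):
    """Coefficient of variation across seeds (lower means more consistent)."""
    return statistics.stdev(scores) / abs(statistics.mean(scores))


if __name__ == "__main__":
    print(degradation_rate(100.0, 80.0))
    print(recovery_time([0.5, 0.7, 0.96, 0.94, 0.97, 0.99], baseline=1.0))
    print(seed_consistency([0.91, 0.88, 0.93, 0.90]))
```

Because all three functions take plain scores as input, the same code can be applied to a world-model agent and to an evolved policy without knowing how either was trained.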

Implementation guidelines should specify minimum sample sizes, confidence interval requirements, and statistical testing procedures for significance determination. The protocol framework must also address reproducibility concerns through detailed documentation requirements, code availability standards, and result verification procedures. These standardized approaches enable systematic evaluation of relative strengths and limitations between World Models and Evolutionary Algorithms in robustness-critical applications.
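As one possible way to meet the confidence interval requirement, the sketch below uses a percentile bootstrap on the difference in mean score between two methods evaluated over independent runs. The choice of bootstrap, the number of resamples, and the sample scores are assumptions for illustration. A real protocol would fix these in advance and might prefer a different test.

```python
import random
import statistics


def bootstrap_diff_ci(scores_a, scores_b, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for mean(scores_a) - mean(scores_b)."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        resample_a = [rng.choice(scores_a) for _ in scores_a]
        resample_b = [rng.choice(scores_b) for _ in scores_b]
        diffs.append(statistics.mean(resample_a) - statistics.mean(resample_b))
    diffs.sort()
    lower = diffs[int((alpha / 2) * n_boot)]
    upper = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


if __name__ == "__main__":
    # Hypothetical per-run scores for two methods (five independent runs each).
    world_model_runs = [0.90, 0.92, 0.88, 0.91, 0.93]
    evolutionary_runs = [0.50, 0.52, 0.49, 0.51, 0.48]
    low, high = bootstrap_diff_ci(world_model_runs, evolutionary_runs)
    print(f"95% CI for the difference in means: [{low:.3f}, {high:.3f}]")
```

If the interval excludes zero, the difference is unlikely to be an artifact of run-to-run randomness at the chosen level. With only a handful of runs per method the interval is crude, which is one reason protocols specify minimum sample sizes.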
