World Models vs. Physical Models: Scalability and Accuracy

APR 13, 2026 · 9 MIN READ

World vs Physical Models Background and Objectives

The evolution of computational modeling has reached a critical juncture where two distinct paradigms compete for dominance in understanding and predicting complex systems. World models, emerging from the artificial intelligence and machine learning communities, represent data-driven approaches that learn patterns and relationships directly from observational data without explicit incorporation of underlying physical principles. These models leverage deep learning architectures, neural networks, and statistical methods to capture system behaviors through pattern recognition and correlation analysis.

Physical models, conversely, are grounded in fundamental scientific principles, mathematical equations, and domain-specific knowledge accumulated over centuries of scientific research. These models explicitly encode physical laws, conservation principles, and causal relationships that govern natural phenomena. Traditional engineering and scientific disciplines have long relied on such physics-based approaches for system analysis, prediction, and design optimization.

The contemporary technological landscape presents unprecedented challenges that expose the limitations of both approaches when applied independently. Modern applications in autonomous systems, climate modeling, robotics, and industrial automation demand models that can simultaneously achieve high accuracy in complex scenarios while maintaining computational efficiency at scale. The tension between these requirements has intensified as system complexity increases and real-time decision-making becomes critical.

Scalability concerns arise as physical models often require extensive computational resources for high-fidelity simulations, particularly when dealing with multi-scale phenomena or systems with numerous interacting components. World models, while potentially more computationally efficient during inference, face challenges in generalization beyond training data distributions and may lack interpretability in critical applications.

The accuracy dimension presents equally complex trade-offs. Physical models provide theoretical guarantees and interpretable results but may suffer from modeling assumptions, parameter uncertainties, and computational approximations. World models can capture complex nonlinear relationships and emergent behaviors but may exhibit unpredictable failure modes and limited extrapolation capabilities.

The primary objective of this investigation is to establish a framework for evaluating the scalability-accuracy trade-offs inherent in both modeling paradigms. The analysis aims to identify the application domains best suited to each approach, explore hybrid methodologies that combine the strengths of both paradigms, and develop guidelines for model selection based on specific performance requirements and constraints.

Market Demand for Scalable AI Modeling Solutions

Demand for AI modeling solutions is growing rapidly, and much of it is shaped by the tension between World Models and Physical Models over scalability and accuracy. Organizations across industries increasingly recognize that traditional physical modeling approaches, while often highly accurate, face significant computational and resource constraints when scaled to complex real-world applications. This recognition has fueled demand for more scalable AI modeling solutions that maintain acceptable accuracy while processing large datasets and complex scenarios.

Enterprise demand for scalable AI modeling solutions spans multiple critical sectors. Autonomous vehicle manufacturers require models capable of processing real-time environmental data while maintaining safety-critical accuracy standards. Financial institutions seek modeling frameworks that can analyze market dynamics across global scales without compromising predictive precision. Manufacturing companies demand solutions that can optimize production processes across multiple facilities while accounting for local variations and constraints.

The healthcare and pharmaceutical industries represent particularly compelling market segments for advanced modeling solutions. Drug discovery processes require models that can simulate molecular interactions at unprecedented scales while maintaining chemical accuracy. Medical imaging applications demand solutions capable of processing massive datasets while preserving diagnostic precision. These requirements highlight the critical need for modeling approaches that transcend traditional scalability-accuracy limitations.

Cloud computing and edge computing infrastructure developments have significantly expanded market opportunities for scalable AI modeling solutions. Organizations can now deploy hybrid modeling architectures that leverage both centralized computational resources and distributed processing capabilities. This infrastructure evolution enables new business models and applications that were previously computationally infeasible.

The convergence of Internet of Things devices, 5G networks, and advanced computing architectures has created market conditions favoring models that can operate effectively across diverse computational environments. Smart city initiatives, industrial automation projects, and environmental monitoring systems all require modeling solutions that can scale from local sensor networks to global analytical frameworks while maintaining operational accuracy.

Investment patterns indicate strong market confidence in scalable AI modeling technologies. Venture capital funding, corporate research investments, and government initiatives are increasingly focused on solutions that address the fundamental scalability-accuracy challenge. This investment momentum suggests sustained market growth and continued technological advancement in addressing the World Models versus Physical Models paradigm.

Current State of World and Physical Modeling Approaches

World modeling and physical modeling represent two fundamental paradigms in computational simulation, each addressing different aspects of system representation and prediction. World models, primarily developed within the artificial intelligence and machine learning communities, focus on learning compressed representations of environments through data-driven approaches. These models typically employ neural networks, particularly recurrent neural networks, variational autoencoders, and transformer architectures, to capture temporal dynamics and spatial relationships from observational data.
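The learn-then-imagine loop at the core of a world model can be shown without any neural network. The sketch below is a deliberately simplified stand-in, assuming a hypothetical 1-D corridor environment and a count-based lookup table in place of the recurrent, variational, or transformer components named above. It learns transitions purely from logged data, rolls out trajectories "in imagination," and declines to predict for states it never observed.

```python
from collections import Counter, defaultdict
from typing import Optional


def true_env_step(state: int, action: int) -> int:
    """Hypothetical hidden environment: a corridor of cells 0..9, clipped at the walls."""
    return max(0, min(9, state + action))


class TabularWorldModel:
    """Learns p(next_state | state, action) from observed transitions only."""

    def __init__(self) -> None:
        self.counts: defaultdict = defaultdict(Counter)

    def observe(self, state: int, action: int, next_state: int) -> None:
        self.counts[(state, action)][next_state] += 1

    def predict(self, state: int, action: int) -> Optional[int]:
        """Most frequently observed next state, or None for unseen (state, action) pairs."""
        seen = self.counts.get((state, action))
        if not seen:
            return None
        return seen.most_common(1)[0][0]

    def imagine(self, state: int, actions: list) -> list:
        """Roll out a trajectory inside the learned model, never querying the environment."""
        trajectory = [state]
        for action in actions:
            nxt = self.predict(state, action)
            if nxt is None:  # outside the training distribution: stop instead of guessing
                break
            trajectory.append(nxt)
            state = nxt
        return trajectory


# Logged experience covers only cells 0..5, so cells 6..9 are out of distribution.
model = TabularWorldModel()
for s in range(6):
    for a in (-1, 1):
        model.observe(s, a, true_env_step(s, a))
```

Here `model.imagine(2, [1, 1, 1])` reproduces the true path `[2, 3, 4, 5]`, while `model.imagine(4, [1, 1, 1, 1])` stops at `[4, 5, 6]` because no transition out of cell 6 was ever observed: a small-scale version of the generalization limit beyond the training distribution.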

Physical models, conversely, are grounded in established scientific principles and mathematical formulations derived from physics, chemistry, and engineering disciplines. They typically express a system as differential equations and solve them numerically, for example with finite element or finite volume methods in structural analysis and computational fluid dynamics, to simulate real-world phenomena from fundamental laws of nature. Current physical modeling approaches include molecular dynamics simulations, climate models, and structural analysis systems that have been refined over decades of scientific research.
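As a minimal illustration of the equation-driven approach, the sketch below advances the 1-D heat equation u_t = α·u_xx with an explicit finite-difference scheme (a simpler relative of the finite element methods mentioned above). The rod size, initial hot spot, and step count are illustrative assumptions. Two properties typical of physical models are visible: insulated ends make total heat a conserved quantity that the scheme preserves exactly, and the stability limit r = α·Δt/Δx² ≤ 1/2 ties the time step to the grid spacing, so halving Δx doubles the cells and quadruples the steps, roughly an 8x cost increase in 1-D.

```python
def heat_step(u: list, r: float) -> list:
    """One explicit (FTCS) step of u_t = alpha * u_xx, where r = alpha * dt / dx**2.

    Insulated (zero-flux) ends are imposed by mirroring the edge cell, which makes
    the discrete total heat sum(u) exactly conserved up to round-off.
    """
    if r > 0.5:
        raise ValueError("explicit scheme is unstable for r > 0.5")
    n = len(u)
    new_u = []
    for i in range(n):
        left = u[i - 1] if i > 0 else u[i]
        right = u[i + 1] if i < n - 1 else u[i]
        new_u.append(u[i] + r * (left - 2.0 * u[i] + right))
    return new_u


def simulate(u0: list, r: float, steps: int) -> list:
    """Apply heat_step repeatedly, starting from the initial profile u0."""
    u = list(u0)
    for _ in range(steps):
        u = heat_step(u, r)
    return u


u0 = [0.0] * 20
u0[10] = 100.0  # hypothetical hot spot in the middle of the rod
u_final = simulate(u0, r=0.4, steps=2000)
```

After 2,000 steps the profile has relaxed to a nearly uniform 5.0 (the initial 100 units spread over 20 cells), and `sum(u_final)` still equals 100 up to round-off.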

The contemporary landscape reveals a growing convergence between these traditionally separate domains. Hybrid approaches are emerging that combine the interpretability and theoretical foundation of physical models with the adaptive learning capabilities of world models. Physics-informed neural networks exemplify this trend, incorporating physical constraints and conservation laws into machine learning architectures to improve both accuracy and generalizability.
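The composite loss behind physics-informed neural networks can be sketched without a neural network. The example below keeps the PINN structure, a data-misfit term plus a weighted residual of the governing equation at collocation points, but swaps the network for a cubic polynomial so that training reduces to a small linear least-squares problem solvable with the standard library. The decay law u' = -k·u, the value of k, the cubic ansatz, the sample points, and the weight λ (`lam`) are all illustrative assumptions.

```python
import math

K = 1.5      # assumed governing law: u'(t) = -K * u(t)
DEGREE = 3   # hypothetical cubic ansatz u(t) = c0 + c1*t + c2*t**2 + c3*t**3


def basis(t: float) -> list:
    return [t**j for j in range(DEGREE + 1)]


def dbasis(t: float) -> list:
    return [j * t ** (j - 1) if j > 0 else 0.0 for j in range(DEGREE + 1)]


def solve(a: list, b: list) -> list:
    """Gaussian elimination with partial pivoting for a small dense system a @ x = b."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit(data: list, collocation: list, lam: float) -> list:
    """Minimize sum (u(t_i) - y_i)^2 + lam * sum (u'(t_j) + K*u(t_j))^2 via normal equations."""
    rows, targets, weights = [], [], []
    for t, y in data:  # data-misfit rows
        rows.append(basis(t))
        targets.append(y)
        weights.append(1.0)
    for t in collocation:  # physics-residual rows, target 0
        rows.append([d + K * b for d, b in zip(dbasis(t), basis(t))])
        targets.append(0.0)
        weights.append(lam)
    n = DEGREE + 1
    ata = [[sum(w * row[i] * row[j] for row, w in zip(rows, weights)) for j in range(n)]
           for i in range(n)]
    atb = [sum(w * row[i] * y for row, y, w in zip(rows, targets, weights)) for i in range(n)]
    return solve(ata, atb)


def physics_residual(c: list, collocation: list) -> float:
    """Sum of squared ODE residuals u'(t) + K*u(t) at the collocation points."""
    total = 0.0
    for t in collocation:
        u = sum(ci * bi for ci, bi in zip(c, basis(t)))
        du = sum(ci * di for ci, di in zip(c, dbasis(t)))
        total += (du + K * u) ** 2
    return total


# Observations only on [0, 0.5]; the physics term is enforced on the wider range [0, 2].
data = [(t, math.exp(-K * t)) for t in (0.0, 0.1, 0.2, 0.3, 0.4, 0.5)]
collocation = [0.1 * i for i in range(21)]
c_data_only = fit(data, collocation, lam=0.0)
c_physics = fit(data, collocation, lam=1.0)
```

Because `c_physics` minimizes the combined loss, its ODE residual on [0, 2] can be no larger than that of the data-only fit, at the price of a somewhat larger misfit on the six observations. How much this improves extrapolation depends on the ansatz and on λ.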

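Recent developments in world modeling have demonstrated remarkable progress in game environments and robotic control tasks. MuZero, which plans with a learned model of its environment, matched superhuman performance in Go, chess, and shogi and set a new state of the art on Atari, while DreamerV3 learns a latent world model that performs well across diverse benchmarks with a single configuration, including collecting diamonds in Minecraft from scratch. These systems excel in settings with well-defined rules and cheap, unlimited simulation but face challenges when scaling to real-world applications with high-dimensional state spaces, complex dynamics, and costly data collection.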

Physical modeling continues to dominate in engineering applications, scientific research, and safety-critical systems where interpretability and theoretical validation are paramount. Advanced computational methods such as multi-scale modeling and adaptive mesh refinement have enhanced the accuracy and efficiency of traditional physical simulations, enabling more detailed representations of complex phenomena.

The current state reflects a technological inflection point where both approaches are being evaluated for their respective strengths in scalability and accuracy across different application domains.

Existing World Model and Physical Simulation Solutions

01 Hybrid modeling approaches combining world models and physical models

Systems that integrate data-driven world models with physics-based models to leverage the scalability of learned representations while maintaining the accuracy and interpretability of physical principles. This approach allows for improved generalization across different scenarios while ensuring consistency with known physical laws. The hybrid framework can dynamically balance between model types based on data availability and computational constraints.
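One common way to realize such a hybrid is residual learning: keep the physics model and train a data-driven component only on the part of the observations the physics misses. The sketch below assumes a drag-free free-fall law as the physics model, synthetic observations that include linear air drag, and a one-parameter correction a·t². The drag coefficient, the correction form, and all function names are illustrative assumptions, not details of the systems summarized here.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2
K = 0.05  # hypothetical linear-drag coefficient, 1/s (unknown to the physics model)


def physics_model(t: float) -> float:
    """Drag-free free fall: v = g * t."""
    return G * t


def observed_velocity(t: float) -> float:
    """Synthetic 'ground truth' with linear drag: v = (g/k) * (1 - exp(-k t))."""
    return (G / K) * (1.0 - math.exp(-K * t))


def fit_residual_coefficient(times: list) -> float:
    """Least-squares fit of the residual r(t) = a * t**2 (a line through the origin in t**2)."""
    num = sum((observed_velocity(t) - physics_model(t)) * t**2 for t in times)
    den = sum(t**4 for t in times)
    return num / den


train_times = [0.5 * i for i in range(1, 11)]  # 0.5 s ... 5.0 s
a = fit_residual_coefficient(train_times)


def hybrid_model(t: float) -> float:
    """Physics prediction plus the learned residual correction."""
    return physics_model(t) + a * t**2


def max_abs_error(model, times: list) -> float:
    return max(abs(model(t) - observed_velocity(t)) for t in times)


physics_error = max_abs_error(physics_model, train_times)
hybrid_error = max_abs_error(hybrid_model, train_times)
```

On the training window the learned coefficient `a` comes out negative (drag slows the fall) and `hybrid_error` is well below `physics_error`. Outside that window the t² correction is itself an extrapolation and should be checked before it is trusted.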

02 Scalable world model architectures for large-scale simulations

Neural network-based world models designed to scale efficiently with increasing data and computational resources. These architectures employ techniques such as hierarchical representations, attention mechanisms, and distributed computing to handle complex environments. The models can learn compressed representations of high-dimensional state spaces, enabling faster inference and training on large datasets while maintaining predictive accuracy.

03 Physics-informed neural networks for enhanced accuracy

Methods that incorporate physical constraints and domain knowledge directly into neural network architectures to improve prediction accuracy. These approaches embed differential equations, conservation laws, and other physical principles as regularization terms or architectural constraints. This helps learned models respect fundamental physical relationships (exactly when a constraint is built into the architecture, approximately when it enters as a penalty term) while benefiting from the flexibility of data-driven learning. Incorporating physics-based priors can also reduce the amount of training data required and improve robustness in extrapolation scenarios.

04 Uncertainty quantification and validation frameworks

Techniques for assessing and comparing the reliability of world models versus physical models through systematic uncertainty quantification. These frameworks provide metrics for evaluating model confidence, identifying failure modes, and determining when each modeling approach is most appropriate. Methods include ensemble techniques, Bayesian inference, and sensitivity analysis to characterize prediction uncertainty and validate model outputs against ground truth data. The resulting confidence metrics and error bounds help guide model selection and identify the regions where each model type performs best.

05 Adaptive model selection and switching mechanisms

Systems that automatically select between world models and physical models based on runtime conditions, data quality, and accuracy requirements. These mechanisms monitor prediction performance and computational efficiency to dynamically switch between modeling approaches or blend their outputs. The adaptive framework optimizes the trade-off between scalability and accuracy by leveraging the strengths of each model type in different operational contexts. Some mechanisms use meta-learning or reinforcement learning to decide which model type to apply to a given task or region of the state space.
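A runtime switch of this kind can be reduced to a simple guard. The sketch below assumes a surrogate that reports its own uncertainty, a known training domain, and a fallback physics solver. The threshold values, the toy models, and the names `select_and_predict`, `toy_surrogate`, and `toy_physics_solver` are hypothetical; a real system might replace the fixed thresholds with a learned or tuned selection policy.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class SurrogateResult:
    value: float
    std: float  # the surrogate's own uncertainty estimate


def select_and_predict(
    x: float,
    surrogate: Callable[[float], SurrogateResult],
    physics_solver: Callable[[float], float],
    train_lo: float,
    train_hi: float,
    max_std: float,
) -> Tuple[float, str]:
    """Use the cheap surrogate only inside its training domain and when it is confident;
    otherwise fall back to the slower but trusted physics solver."""
    if train_lo <= x <= train_hi:
        result = surrogate(x)
        if result.std <= max_std:
            return result.value, "surrogate"
    return physics_solver(x), "physics"


def toy_surrogate(x: float) -> SurrogateResult:
    # Hypothetical learned model: confident near x = 0, less certain toward the edges.
    return SurrogateResult(value=x * x, std=0.01 + 0.05 * abs(x))


def toy_physics_solver(x: float) -> float:
    # Stands in for an expensive but trusted simulation.
    return x * x
```

With `train_lo=-2.0`, `train_hi=2.0`, and `max_std=0.05`, a query at x = 0.5 is answered by the surrogate, while x = 1.5 (inside the domain but too uncertain) and x = 3.0 (outside the domain) are routed to the physics solver.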

Key Players in AI Modeling and Simulation Industry

The competitive landscape for World Models versus Physical Models in terms of scalability and accuracy reflects an industry in rapid transition, characterized by substantial market growth and diverse technological maturity levels across key players. Major technology companies like NVIDIA, Google, Apple, and Huawei are driving significant advances in AI-powered world models, leveraging their computational infrastructure and machine learning expertise. Meanwhile, traditional engineering firms such as Siemens, Boeing, and Robert Bosch maintain strong positions in physics-based modeling through decades of domain expertise. The market demonstrates a bifurcated maturity pattern where established physical modeling approaches offer proven accuracy in specific domains, while emerging world model technologies show superior scalability potential but varying accuracy depending on application context. Companies like IBM and Tencent are bridging both approaches, while specialized firms and research institutions continue advancing hybrid methodologies that combine the precision of physical models with the adaptability of learned world representations.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed world models for autonomous systems and smart city applications, integrating them with their Atlas AI computing platform. Their approach combines edge computing capabilities with centralized world model training, enabling scalable deployment across distributed systems. Huawei's world models focus on real-time processing for autonomous vehicles and IoT applications, utilizing their proprietary Ascend AI processors for efficient inference. The company emphasizes hybrid modeling approaches that balance accuracy with computational constraints in resource-limited environments.

Strengths: Strong edge computing integration, optimized for resource-constrained environments, comprehensive IoT ecosystem. Weaknesses: Limited global market access, dependency on proprietary hardware platforms.

The Boeing Co.

Technical Solution: Boeing utilizes world models for aerospace simulation and digital twin applications, focusing on high-fidelity physics-based modeling combined with machine learning components. Their world models integrate aerodynamics, structural mechanics, and system dynamics to create comprehensive digital representations of aircraft systems. Boeing's approach emphasizes accuracy over pure scalability, utilizing high-performance computing clusters to run detailed simulations that inform design decisions and operational optimization. Their models incorporate real-world flight data to continuously improve prediction accuracy and system understanding.

Strengths: Extremely high accuracy requirements, extensive domain expertise, robust validation processes. Weaknesses: Limited scalability due to computational complexity, specialized application domain.

Core Innovations in Scalable AI World Modeling

Method and apparatus for modeling devices having different geometries

Patent · Inactive · US20050027501A1

Innovation

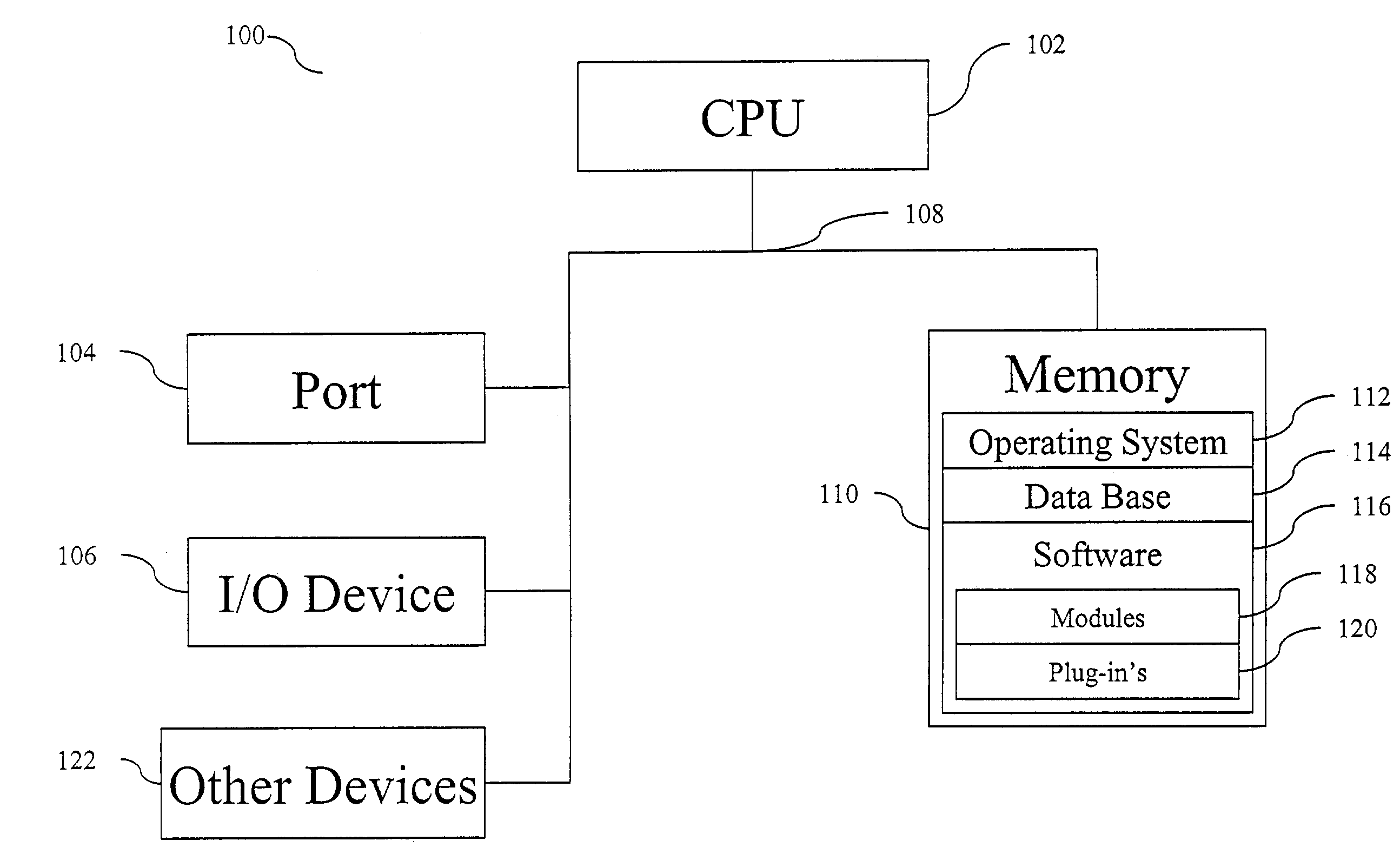

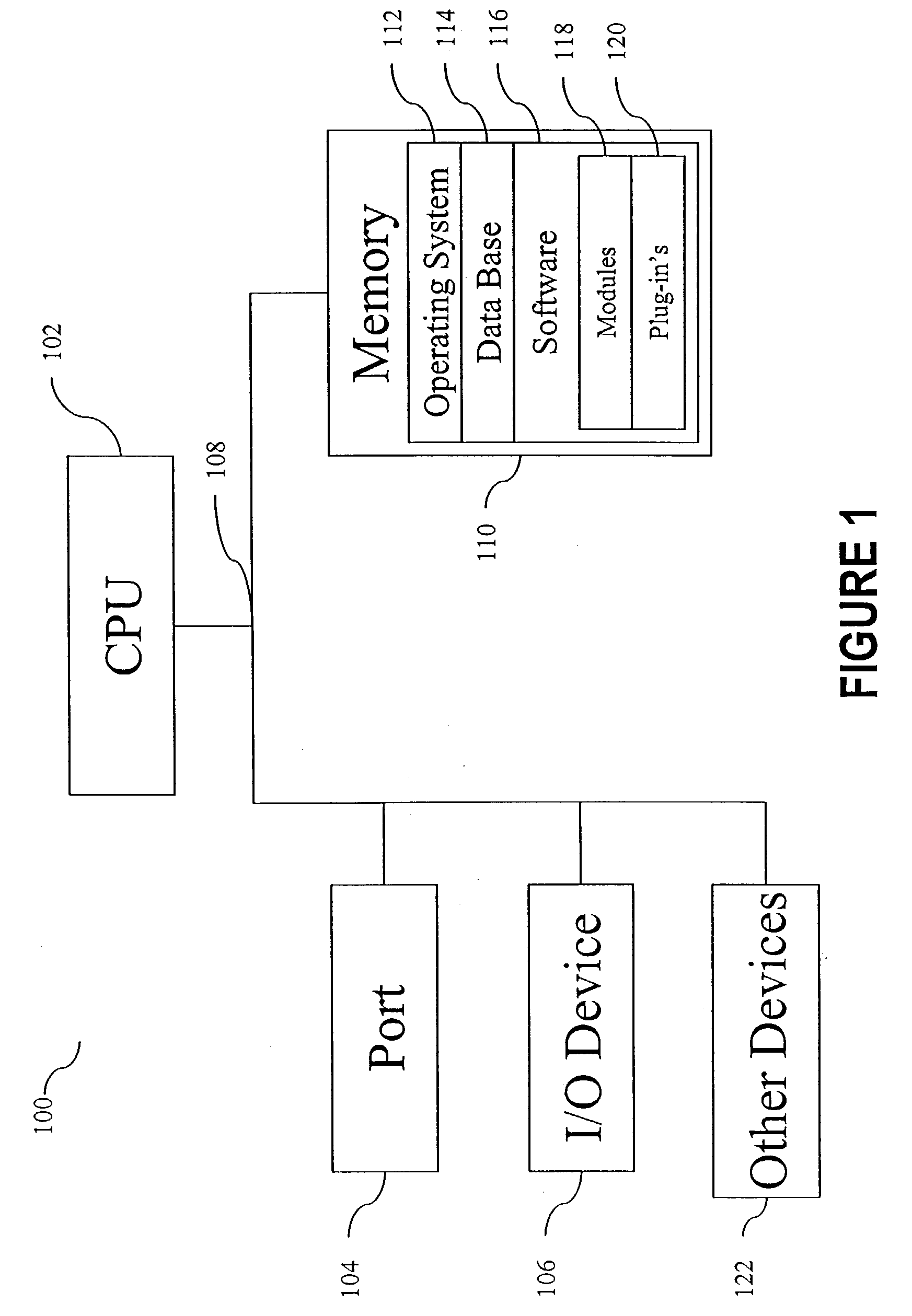

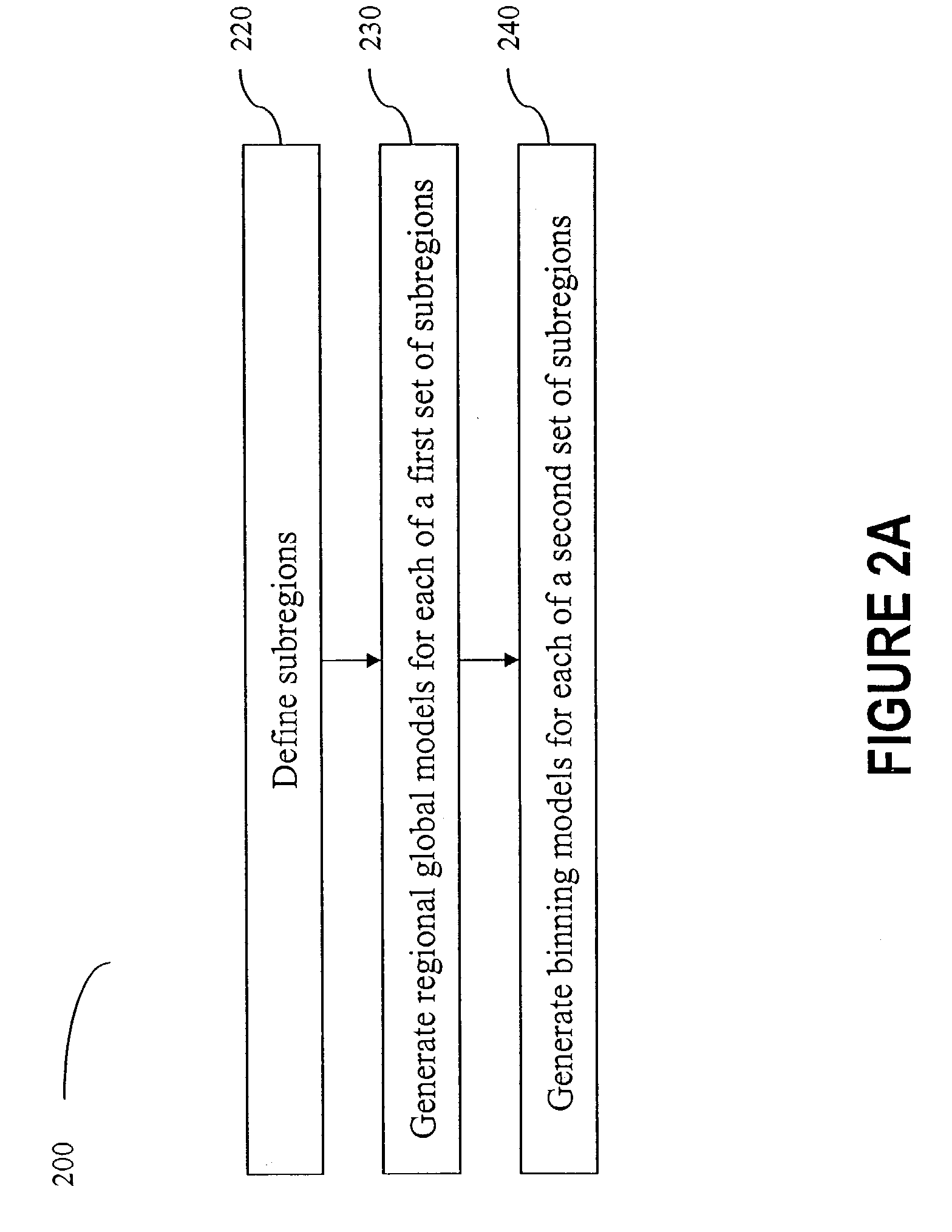

- A method is introduced that divides the geometrical space of semiconductor devices into subregions, generating regional global models for each subregion using model equations and measurement data, and binning models to ensure continuity across geometry variations, allowing for the use of a single set of model parameters to model a wide range of geometrical variations.

Information processing apparatus, information processing method, and non-transitory computer-readable medium

Patent · Pending · US20250391037A1

Innovation

- A regression model is used to derive relationships between physical quantities from pairs of latent variables output from a world model, approximating the correspondence between these variables and physical quantities to stabilize the conversion process.

Computational Resource Requirements and Constraints

The computational resource requirements for World Models and Physical Models present fundamentally different scaling characteristics and operational constraints. World Models, particularly those based on neural networks and deep learning architectures, demand substantial GPU memory and processing power, above all during training. Large-scale training typically runs on high-performance computing clusters with specialized hardware accelerators, and such clusters can draw megawatt-scale power.

Physical Models exhibit more predictable computational scaling, with resource requirements tied directly to simulation complexity and spatial-temporal resolution. Traditional physics-based simulations can use CPU-based parallel computing architectures effectively, though high-fidelity simulations still require substantial computational resources. Their memory requirements are generally easier to predict: for explicit solvers on a fixed mesh, memory grows roughly linearly with the number of cells or degrees of freedom, although refining the resolution by a factor r in d spatial dimensions multiplies the cell count by r^d. Neural network memory, by contrast, grows with parameter count and activation size rather than exponentially, and in attention-based architectures activation memory can grow quadratically with sequence length.

Memory bandwidth and capacity emerge as critical constraints for both approaches, though they manifest differently. World Models face challenges with parameter storage and activation memory during forward passes, particularly in transformer-based architectures, where standard attention materializes a score matrix whose size grows quadratically with sequence length (memory-efficient attention kernels avoid storing this matrix, although compute remains quadratic). Physical Models encounter memory bottlenecks primarily in data-intensive operations such as mesh refinement and boundary condition handling in finite element simulations.
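The two memory behaviors can be compared with back-of-the-envelope arithmetic. The sketch assumes a standard attention implementation that stores the full n×n score matrix for one layer in 16-bit precision, and a physics solver that stores a handful of double-precision fields per cell. Both ignore model weights, solver workspace, and other buffers, so the numbers are illustrative only.

```python
def attention_score_bytes(seq_len: int, num_heads: int, batch: int = 1, bytes_per_elem: int = 2) -> int:
    """Bytes for one layer's materialized attention scores: batch * heads * n * n elements."""
    return batch * num_heads * seq_len * seq_len * bytes_per_elem


def grid_field_bytes(cells_per_axis: int, dims: int, fields: int, bytes_per_elem: int = 8) -> int:
    """Bytes for `fields` double-precision variables on a uniform grid of cells_per_axis**dims cells."""
    return fields * cells_per_axis**dims * bytes_per_elem


GIB = 2**30
print(f"attention, n=4096, 32 heads: {attention_score_bytes(4096, 32) / GIB:.3f} GiB")  # 1.000 GiB
print(f"grid, 256^3 cells, 5 fields: {grid_field_bytes(256, 3, 5) / GIB:.3f} GiB")      # 0.625 GiB
```

Doubling the sequence length quadruples the attention figure, whereas the grid figure grows linearly in the total cell count; doubling the resolution along each of three axes, however, multiplies it by 8.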

Real-time performance constraints significantly affect deployment feasibility. Once trained, World Models can achieve fast inference on optimized hardware, making them suitable for real-time applications. Their training phase, however, remains computationally expensive and, for large models, can take weeks or months on distributed computing systems. Physical Models offer more consistent performance characteristics but may struggle to meet real-time requirements for complex multi-physics simulations.

The scalability ceiling differs markedly between approaches. World Models face fundamental limitations related to model size, training data requirements, and diminishing returns from increased parameters. Physical Models encounter scalability constraints primarily from numerical stability, computational complexity of governing equations, and the curse of dimensionality in high-dimensional parameter spaces.

Energy considerations cut both ways. Physical Models have relatively stable, predictable power consumption profiles, but every prediction pays the full cost of a simulation run. World Models concentrate their energy cost in training; once deployed, each prediction can be cheap, so their total energy use can become lower when the training cost is amortized over large volumes of similar prediction tasks across distributed inference systems.
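The amortization argument can be made concrete as a break-even count: a trained surrogate uses less total energy than repeated simulation once E_train + N·e_sur < N·e_sim, that is, for N > E_train / (e_sim - e_sur). The helper below evaluates that threshold. The energy figures in the example are hypothetical, and the formula ignores retraining, validation runs, and hardware costs.

```python
import math


def break_even_queries(train_kwh: float, surrogate_kwh: float, simulation_kwh: float) -> float:
    """Query count N at which E_train + N*e_sur equals N*e_sim.

    Returns math.inf when the surrogate is not cheaper per query than the simulation,
    because training can then never pay for itself.
    """
    saving_per_query = simulation_kwh - surrogate_kwh
    if saving_per_query <= 0:
        return math.inf
    return train_kwh / saving_per_query


# Hypothetical figures: 10,000 kWh to train, 0.5 kWh per surrogate query, 10.5 kWh per simulation.
n_star = break_even_queries(10_000, 0.5, 10.5)
```

With these assumed figures `n_star` is 1000.0: beyond a thousand queries the trained model is the more energy-efficient option, below that the simulation is.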

Model Interpretability and Validation Frameworks

Model interpretability and validation frameworks represent critical components in evaluating the comparative effectiveness of world models versus physical models, particularly when assessing their scalability and accuracy trade-offs. These frameworks provide systematic approaches to understand model behavior, validate predictions, and establish confidence bounds for different modeling paradigms.

Traditional validation frameworks for physical models rely heavily on established mathematical principles and domain-specific knowledge. These frameworks typically employ analytical validation methods, including dimensional analysis, conservation law verification, and boundary condition testing. Physical models benefit from well-established theoretical foundations that enable direct interpretation of model parameters and their physical significance. Validation often involves comparing model outputs against controlled experimental data and known analytical solutions.
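A standard check of this kind compares a numerical solver with a known analytical solution and confirms that the error shrinks at the rate the method promises. The sketch assumes the decay equation u' = -k·u, whose exact solution is u0·exp(-k·t), with forward Euler as the solver under test. Because Euler is first-order, halving the time step should roughly halve the error, giving an observed order near 1.

```python
import math


def euler_decay(k: float, u0: float, t_end: float, steps: int) -> float:
    """Forward Euler for u' = -k*u from t = 0 to t_end with a uniform step."""
    dt = t_end / steps
    u = u0
    for _ in range(steps):
        u += dt * (-k * u)
    return u


def observed_order(k: float = 1.0, u0: float = 1.0, t_end: float = 1.0, steps: int = 100) -> float:
    """Estimate the convergence order p from errors at step sizes dt and dt/2: p = log2(e1/e2)."""
    exact = u0 * math.exp(-k * t_end)
    e_coarse = abs(euler_decay(k, u0, t_end, steps) - exact)
    e_fine = abs(euler_decay(k, u0, t_end, 2 * steps) - exact)
    return math.log2(e_coarse / e_fine)
```

An observed order far from the theoretical value usually points to a bug in the solver or an inconsistency in the boundary or initial conditions, which is exactly what this style of validation is meant to catch.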

World models present unique challenges for interpretability due to their data-driven nature and complex internal representations. Validation frameworks for these models must address the black-box characteristics inherent in neural network architectures. Emerging approaches include attention visualization, feature attribution methods, and latent space analysis to understand how world models encode environmental dynamics. Cross-validation techniques, uncertainty quantification, and robustness testing become essential components of comprehensive validation protocols.
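Ensembles are one of the simplest uncertainty-quantification tools for data-driven models: train several models on resampled data and read their disagreement as an uncertainty signal. The sketch below assumes a linear model, synthetic noisy quadratic data on [0, 1], and a 50-member bootstrap ensemble, all illustrative choices, and shows the spread growing when the model is queried outside its training range.

```python
import random
import statistics


def fit_line(xs: list, ys: list) -> tuple:
    """Ordinary least squares for y = a + b*x; returns (a, b)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    return my - b * mx, b


def bootstrap_ensemble(xs: list, ys: list, members: int = 50, seed: int = 0) -> list:
    """Fit one line per bootstrap resample of the training data."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    while len(models) < members:
        idx = [rng.randrange(n) for _ in range(n)]
        bx = [xs[i] for i in idx]
        if len(set(bx)) < 2:  # degenerate resample: slope undefined, draw again
            continue
        models.append(fit_line(bx, [ys[i] for i in idx]))
    return models


def predict_with_spread(models: list, x: float) -> tuple:
    """Ensemble mean and standard deviation of the members' predictions at x."""
    preds = [a + b * x for a, b in models]
    return statistics.fmean(preds), statistics.stdev(preds)


rng = random.Random(42)
xs = [i / 19 for i in range(20)]                    # training inputs cover [0, 1]
ys = [x**2 + rng.gauss(0.0, 0.05) for x in xs]      # hypothetical noisy observations
ensemble = bootstrap_ensemble(xs, ys)
_, spread_inside = predict_with_spread(ensemble, 0.5)
_, spread_outside = predict_with_spread(ensemble, 3.0)
```

The spread reflects only variance across resamples; the systematic error of fitting a line to quadratic data is invisible to it, which is one reason robustness testing is still needed alongside uncertainty estimates.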

Hybrid validation frameworks are emerging to address scenarios where both modeling approaches are employed simultaneously. These frameworks incorporate multi-scale validation strategies that can assess model performance across different temporal and spatial scales. They integrate physics-informed constraints with data-driven validation metrics, enabling comprehensive evaluation of model accuracy while maintaining interpretability requirements.

The scalability aspect introduces additional complexity to validation frameworks, as traditional statistical validation methods may not adequately capture performance degradation in high-dimensional spaces. Advanced validation techniques include progressive validation strategies, where models are tested across increasing complexity levels, and distributed validation protocols that can handle large-scale model deployments. These frameworks must also address computational efficiency considerations while maintaining validation rigor.
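A progressive validation loop can be as simple as testing a model on increasingly demanding inputs and recording the last level it passes. In the sketch below, the "model" is a hypothetical cubic Taylor approximation of sin(x), the "complexity levels" are assumed to be widening input ranges [-w, w], and the tolerance is an arbitrary choice.

```python
import math
from typing import Optional


def surrogate(x: float) -> float:
    """Hypothetical cheap model: cubic Taylor approximation of sin(x)."""
    return x - x**3 / 6


def max_error_on_level(half_width: float, samples: int = 101) -> float:
    """Worst-case error against the reference sin(x) on an even grid over [-w, w]."""
    xs = [-half_width + 2 * half_width * i / (samples - 1) for i in range(samples)]
    return max(abs(surrogate(x) - math.sin(x)) for x in xs)


def progressive_validation(levels: list, tol: float) -> Optional[float]:
    """Test on increasingly demanding input ranges; return the last level that passes."""
    passed = None
    for level in levels:
        if max_error_on_level(level) > tol:
            break
        passed = level
    return passed
```

With levels `[0.25, 0.5, 1.0, 2.0, 4.0]` and a tolerance of 0.01, the loop returns 1.0: the approximation's error on [-1, 1] is about 0.008, but on [-2, 2] it exceeds 0.24.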

Future validation frameworks are evolving toward automated interpretability assessment and real-time validation capabilities, enabling continuous model monitoring and adaptive validation strategies that can dynamically adjust validation criteria based on operational conditions and performance requirements.
