Bridge Rectifier vs Memory Buffer: Managing Data Bursts

MAR 24, 2026 · 9 MIN READ

Bridge Rectifier Memory Buffer Background and Objectives

The convergence of analog circuit design principles with digital memory management represents a fascinating intersection in modern electronics engineering. Bridge rectifiers, fundamental components in power conversion circuits, have traditionally operated in the analog domain to convert alternating current to direct current. However, the increasing complexity of electronic systems and the exponential growth in data processing requirements have created new challenges that demand innovative approaches to managing data bursts and memory buffering.

The evolution of electronic systems has witnessed a significant shift from purely analog or digital solutions toward hybrid architectures that leverage the strengths of both domains. In high-performance computing environments, data processing units frequently encounter irregular data flows characterized by sudden bursts of information that can overwhelm conventional memory management systems. These data bursts present unique challenges in terms of power consumption, signal integrity, and system reliability.

Traditional memory buffer architectures have relied primarily on digital switching mechanisms and software-based management protocols. While effective for steady-state operations, these approaches often struggle with the dynamic nature of burst data patterns, leading to bottlenecks, increased latency, and potential data loss. The integration of bridge rectifier principles into memory buffer design offers a novel approach to address these limitations by providing more efficient power management and signal conditioning capabilities.

The primary objective of this technological investigation centers on developing hybrid memory buffer systems that incorporate bridge rectifier functionality to enhance data burst management capabilities. This approach aims to achieve superior power efficiency during peak data processing periods while maintaining signal integrity across varying load conditions. The integration seeks to minimize power consumption fluctuations that typically accompany sudden changes in data throughput.

Furthermore, the research objectives encompass the development of adaptive buffering mechanisms that can dynamically adjust their operational characteristics based on incoming data patterns. A bridge rectifier by itself only converts AC to DC and does not regulate voltage, so any stabilizing effect would come from pairing it with filtering and regulation stages. With such a supply, these systems can potentially provide more stable operating conditions for memory components during high-stress data processing scenarios.

The ultimate goal involves creating a new paradigm for memory management that combines the reliability and efficiency of analog circuit principles with the flexibility and programmability of digital memory systems, thereby establishing a foundation for next-generation data processing architectures capable of handling increasingly complex computational workloads.

Market Demand for Data Burst Management Solutions

The global data management market is experiencing unprecedented growth driven by the exponential increase in data generation across industries. Organizations worldwide are grappling with the challenge of handling sudden data influxes that can overwhelm traditional processing systems. This surge in data volume, velocity, and variability has created a critical need for robust data burst management solutions that can maintain system stability and performance during peak loads.

Enterprise applications across sectors including telecommunications, financial services, healthcare, and e-commerce are particularly vulnerable to data burst scenarios. Network infrastructure providers face constant pressure to manage traffic spikes during peak usage periods, while financial institutions must process massive transaction volumes during market volatility. Healthcare systems require reliable data handling for real-time patient monitoring, and e-commerce platforms must accommodate shopping surges during promotional events.

The Internet of Things ecosystem has significantly amplified market demand for effective data burst management. Connected devices generate intermittent but intensive data streams that traditional buffering mechanisms struggle to accommodate efficiently. Smart city initiatives, industrial automation systems, and autonomous vehicle networks all require sophisticated solutions that can seamlessly handle unpredictable data patterns without compromising system integrity.

Cloud computing adoption has further intensified the need for advanced data burst management capabilities. As organizations migrate to hybrid and multi-cloud environments, they encounter complex data flow patterns that require intelligent buffering and processing strategies. The shift toward edge computing has created additional demand for localized data burst management solutions that can operate effectively in resource-constrained environments.

Emerging technologies such as artificial intelligence, machine learning, and real-time analytics are driving new requirements for data burst management solutions. These applications demand low-latency processing capabilities that can handle sudden computational workloads without degrading performance. The growing emphasis on real-time decision-making across industries has elevated data burst management from a technical consideration to a business-critical requirement.

Market demand is increasingly focused on solutions that offer adaptive capacity scaling, intelligent load balancing, and predictive burst detection capabilities. Organizations seek integrated approaches that combine hardware optimization with software intelligence to create resilient data processing architectures capable of maintaining consistent performance under varying load conditions.

Current State and Challenges in Data Buffer Technologies

Data buffer technologies currently face significant challenges in managing burst traffic patterns, with traditional memory buffer architectures struggling to maintain optimal performance under varying load conditions. Contemporary buffer systems predominantly rely on SRAM-based solutions for high-speed applications and DRAM for larger capacity requirements, yet both approaches exhibit limitations when handling unpredictable data bursts that characterize modern network and computing environments.

The primary technical challenge lies in the fundamental trade-off between buffer depth, access speed, and power consumption. Current FIFO buffer implementations typically operate with fixed memory allocation strategies, leading to either over-provisioning during low-traffic periods or buffer overflow during peak demand. This static approach results in suboptimal resource utilization and increased latency variability, particularly problematic in real-time applications requiring consistent performance guarantees.
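The trade-off between a fixed buffer depth and burst loss can be made concrete with a small simulation. The sketch below is illustrative only: the `FixedFifo` class, the tail-drop policy, the traffic pattern, and the drain rate are all assumptions chosen for the example, not a description of any specific product.

```python
from collections import deque


class FixedFifo:
    """Fixed-depth FIFO that rejects new items when full (tail drop)."""

    def __init__(self, depth):
        self.depth = depth
        self.items = deque()
        self.dropped = 0  # items rejected because the buffer was full
        self.peak = 0     # highest occupancy observed

    def push(self, item):
        if len(self.items) >= self.depth:
            self.dropped += 1
            return False
        self.items.append(item)
        self.peak = max(self.peak, len(self.items))
        return True

    def pop(self):
        return self.items.popleft() if self.items else None


def simulate(depth, arrivals, drain_per_tick):
    """Push arrivals[t] items in tick t, then drain up to drain_per_tick."""
    fifo = FixedFifo(depth)
    for tick, count in enumerate(arrivals):
        for i in range(count):
            fifo.push((tick, i))
        for _ in range(drain_per_tick):
            fifo.pop()
    return fifo.dropped, fifo.peak


# Hypothetical traffic: quiet, a three-tick burst, then quiet again.
traffic = [1, 1, 8, 8, 8, 1, 1, 0, 0, 0]
print(simulate(depth=8, arrivals=traffic, drain_per_tick=2))   # shallow buffer
print(simulate(depth=32, arrivals=traffic, drain_per_tick=2))  # deep buffer
```

With this traffic, the shallow buffer loses items during the burst, while the deep buffer absorbs it but never uses more than about two thirds of its capacity, which is the over-provisioning cost described above.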

Power management represents another critical constraint in existing buffer architectures. Traditional designs maintain constant power consumption regardless of actual data throughput, creating inefficiencies in battery-powered devices and contributing to thermal management challenges in high-density systems. The lack of dynamic power scaling mechanisms limits the deployment of buffer-intensive applications in energy-constrained environments.

Geographically, advanced buffer technology development concentrates primarily in established semiconductor hubs including Silicon Valley, Taiwan, South Korea, and specific regions in Europe. This concentration creates supply chain vulnerabilities and limits innovation diversity, as emerging markets struggle to contribute meaningfully to buffer technology advancement due to high research and development costs.

Current buffer implementations also face scalability challenges when integrated into multi-core and distributed computing architectures. Coherency maintenance across multiple buffer instances introduces complexity and performance overhead, while traditional centralized buffer management approaches create bottlenecks that limit system-wide throughput improvements.

The emergence of new application domains, including edge computing, autonomous vehicles, and IoT devices, demands buffer solutions capable of handling diverse data patterns with minimal latency and power consumption. However, existing technologies lack the adaptive capabilities necessary to optimize performance across such varied operational contexts, highlighting the need for innovative approaches that can dynamically adjust buffer behavior based on real-time traffic characteristics and system requirements.

Existing Solutions for Data Burst Handling

01 Bridge rectifier circuits for power conversion and management

Bridge rectifier circuits are fundamental components used to convert alternating current (AC) to direct current (DC) in various electronic systems. These circuits typically employ diode configurations arranged in a bridge topology to achieve full-wave rectification. Advanced implementations may include synchronous rectification techniques, active control mechanisms, and integrated power management features to improve efficiency and reduce power losses. The rectifier circuits can be optimized for different voltage and current requirements, incorporating protection mechanisms and thermal management solutions.

- Memory buffer architecture and data flow control: Memory buffer systems are designed to temporarily store data during transfer operations between components operating at different speeds or protocols. These architectures implement various buffering strategies including FIFO (First-In-First-Out), circular buffers, and multi-level cache structures. The buffer management includes mechanisms for handling data overflow, underflow conditions, and maintaining data integrity during high-speed operations. Advanced buffer designs incorporate dynamic allocation, priority-based queuing, and adaptive sizing to optimize performance across varying workload conditions.

- Burst mode data transfer and bandwidth optimization: Burst mode data transfer techniques enable efficient transmission of multiple data units in rapid succession without intermediate delays. These methods maximize bus utilization and memory bandwidth by grouping related data transactions together. Implementation strategies include burst length configuration, address auto-increment mechanisms, and pipeline optimization to reduce latency. The systems incorporate arbitration logic, burst scheduling algorithms, and flow control protocols to manage multiple burst requests and prevent conflicts while maintaining data coherence.

- Memory controller and buffer coordination mechanisms: Memory controllers coordinate data movement between processors, memory devices, and peripheral components through sophisticated buffer management. These controllers implement command queuing, request reordering, and priority scheduling to optimize memory access patterns. The coordination mechanisms handle multiple concurrent data streams, manage buffer allocation dynamically, and ensure proper synchronization between different clock domains. Advanced features include predictive prefetching, write combining, and adaptive refresh scheduling to enhance overall system performance.

- Power management integration with data buffering systems: Integrated power management solutions combine rectification, voltage regulation, and buffer control to optimize energy efficiency in data transfer operations. These systems implement dynamic power scaling based on buffer occupancy levels and data transfer rates. Power-aware buffer management includes techniques such as selective buffer activation, clock gating during idle periods, and voltage-frequency scaling coordinated with burst activity. The integration ensures stable power delivery during high-current burst operations while minimizing energy consumption during low-activity periods.
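The occupancy-driven power scaling mentioned in the last item can be sketched as a small state machine. This is a minimal illustration under stated assumptions: the three power states, the threshold values, and the use of hysteresis (separate thresholds for stepping up and stepping down, so the state does not oscillate around a single boundary) are choices made for the example and are not taken from the source.

```python
from enum import Enum


class PowerState(Enum):
    LOW = "low"
    NOMINAL = "nominal"
    BOOST = "boost"


# Hypothetical thresholds, expressed as a fraction of buffer capacity.
RAISE_TO_NOMINAL = 0.30
RAISE_TO_BOOST = 0.75
DROP_TO_NOMINAL = 0.50
DROP_TO_LOW = 0.10


def next_state(state, occupancy):
    """Choose the next power state from the current state and occupancy."""
    if state is PowerState.LOW:
        if occupancy >= RAISE_TO_BOOST:
            return PowerState.BOOST
        if occupancy >= RAISE_TO_NOMINAL:
            return PowerState.NOMINAL
        return PowerState.LOW
    if state is PowerState.NOMINAL:
        if occupancy >= RAISE_TO_BOOST:
            return PowerState.BOOST
        if occupancy <= DROP_TO_LOW:
            return PowerState.LOW
        return PowerState.NOMINAL
    # state is BOOST: step down only once occupancy has clearly fallen
    if occupancy <= DROP_TO_LOW:
        return PowerState.LOW
    if occupancy <= DROP_TO_NOMINAL:
        return PowerState.NOMINAL
    return PowerState.BOOST


def trace(occupancies, start=PowerState.LOW):
    """Return the sequence of states visited for a series of occupancy samples."""
    state = start
    visited = []
    for occupancy in occupancies:
        state = next_state(state, occupancy)
        visited.append(state)
    return visited


for occ, st in zip([0.05, 0.35, 0.80, 0.60, 0.45], trace([0.05, 0.35, 0.80, 0.60, 0.45])):
    print(f"occupancy={occ:.2f} -> {st.value}")
```

Note that an occupancy of 0.60 keeps the buffer in the boost state when it is already boosted, but would not have triggered boost from the nominal state; that gap is the hysteresis band.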

02 Memory buffer architecture and data flow control

Memory buffer systems are designed to temporarily store data during transfer operations between different components or subsystems operating at varying speeds. These architectures implement sophisticated buffering schemes including FIFO structures, multi-level caching, and intelligent data staging mechanisms. The buffer management includes address generation, pointer control, and status monitoring to ensure efficient data flow. Advanced implementations feature configurable buffer sizes, priority-based access schemes, and error detection capabilities to maintain data integrity during high-speed operations.

03 Data burst transfer protocols and timing control

Data burst transfer mechanisms enable efficient high-speed data transmission by grouping multiple data units into sequential bursts. These protocols implement precise timing control, synchronization signals, and handshaking mechanisms to coordinate burst operations between transmitting and receiving devices. The systems incorporate burst length configuration, interleaving capabilities, and adaptive timing adjustments to optimize throughput. Control logic manages burst initiation, continuation, and termination while maintaining data coherency and minimizing latency during transfer operations.

04 Memory arbitration and bandwidth allocation for burst operations

Memory arbitration systems manage concurrent access requests from multiple sources competing for shared memory resources during burst operations. These mechanisms implement priority schemes, round-robin algorithms, or weighted fair queuing to allocate bandwidth efficiently among competing data streams. The arbitration logic includes request queuing, grant generation, and conflict resolution to prevent data collisions and ensure fair resource distribution. Advanced implementations feature dynamic priority adjustment, quality-of-service guarantees, and latency-sensitive scheduling to optimize overall system performance during intensive burst traffic.

05 Integrated power and data management systems

Integrated systems combine power conversion circuitry with data buffering and transfer control to create unified management solutions. These implementations coordinate power delivery with data flow requirements, implementing power-aware buffering strategies and dynamic voltage scaling based on data traffic patterns. The systems feature cross-domain synchronization between power and data domains, incorporating power state transitions that preserve data integrity. Advanced designs include predictive power management based on burst activity patterns, energy-efficient buffer operation modes, and coordinated shutdown sequences to minimize power consumption while maintaining data availability.
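To illustrate the arbitration policies listed under item 04, the following sketch shows a simple weighted round-robin arbiter. It is one possible reading of "round-robin with weights", not a model of any particular memory controller; the class name, the requester names, and the weights are hypothetical.

```python
from collections import deque


class WeightedRoundRobinArbiter:
    """Grants queued burst requests in rounds, up to `weight` grants per requester."""

    def __init__(self, weights):
        # weights: mapping of requester name -> maximum grants per round
        self.weights = dict(weights)
        self.queues = {name: deque() for name in self.weights}

    def request(self, name, burst):
        """Queue a burst request from the named requester."""
        self.queues[name].append(burst)

    def grant_round(self):
        """Run one arbitration round and return the grants in order."""
        grants = []
        for name, weight in self.weights.items():
            queue = self.queues[name]
            for _ in range(weight):
                if not queue:
                    break  # an idle requester does not hold up the others
                grants.append((name, queue.popleft()))
        return grants


# Hypothetical requesters: the CPU gets twice the bandwidth share of the DMA engine.
arbiter = WeightedRoundRobinArbiter({"cpu": 2, "dma": 1})
for burst in ("c0", "c1", "c2"):
    arbiter.request("cpu", burst)
for burst in ("d0", "d1"):
    arbiter.request("dma", burst)

print(arbiter.grant_round())
print(arbiter.grant_round())
```

Because every requester with pending work is visited in each round, a low-weight requester is never starved, which is the fairness property the arbitration paragraph refers to.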

Key Players in Memory Management and Buffer Industry

The bridge rectifier versus memory buffer technology landscape represents a mature semiconductor market experiencing significant growth driven by increasing data processing demands and IoT proliferation. The market, valued in billions globally, demonstrates strong expansion potential as digital transformation accelerates across industries. Technology maturity varies significantly among key players, with established giants like Samsung Electronics, Micron Technology, and SK Hynix leading in advanced memory solutions and manufacturing capabilities. Companies such as AMD, Qualcomm, and Intel (through its acquisition of Altera) showcase sophisticated processor-memory integration technologies. Emerging players like Yangtze Memory Technologies and specialized firms including Rambus and Avalanche Technology contribute innovative approaches to data burst management. The competitive landscape reflects a mix of vertically integrated manufacturers and specialized design houses, indicating a well-developed ecosystem with both incremental improvements and breakthrough innovations in memory buffer architectures and power management solutions.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung implements advanced memory buffer architectures in their DRAM and SSD controllers to manage data bursts effectively. Their solutions include multi-level buffering systems with intelligent burst detection algorithms that can dynamically adjust buffer allocation based on incoming data patterns. The company utilizes high-speed SRAM buffers combined with sophisticated queue management to handle peak data loads while maintaining consistent throughput. Their memory controllers feature adaptive burst management that can predict and pre-allocate buffer space for anticipated data surges, reducing latency and improving overall system performance in high-bandwidth applications.

Strengths: Industry-leading memory technology expertise, extensive R&D resources, proven scalability in consumer and enterprise markets.

Weaknesses: Higher cost solutions, complex integration requirements for specialized applications.

Micron Technology, Inc.

Technical Solution: Micron develops comprehensive memory buffer solutions that leverage their expertise in DRAM and NAND flash technologies. Their approach focuses on hierarchical buffer management systems that can efficiently handle varying burst sizes and frequencies. The company's solutions incorporate predictive algorithms that analyze data access patterns to optimize buffer allocation and minimize overflow conditions. Micron's memory controllers feature advanced error correction and data integrity mechanisms specifically designed to maintain reliability during high-burst scenarios, making them suitable for enterprise storage and data center applications where consistent performance is critical.

Strengths: Strong memory technology foundation, robust error correction capabilities, excellent enterprise market presence.

Weaknesses: Limited customization options for specialized applications, dependency on memory market cycles.

Core Innovations in Bridge Rectifier Buffer Design

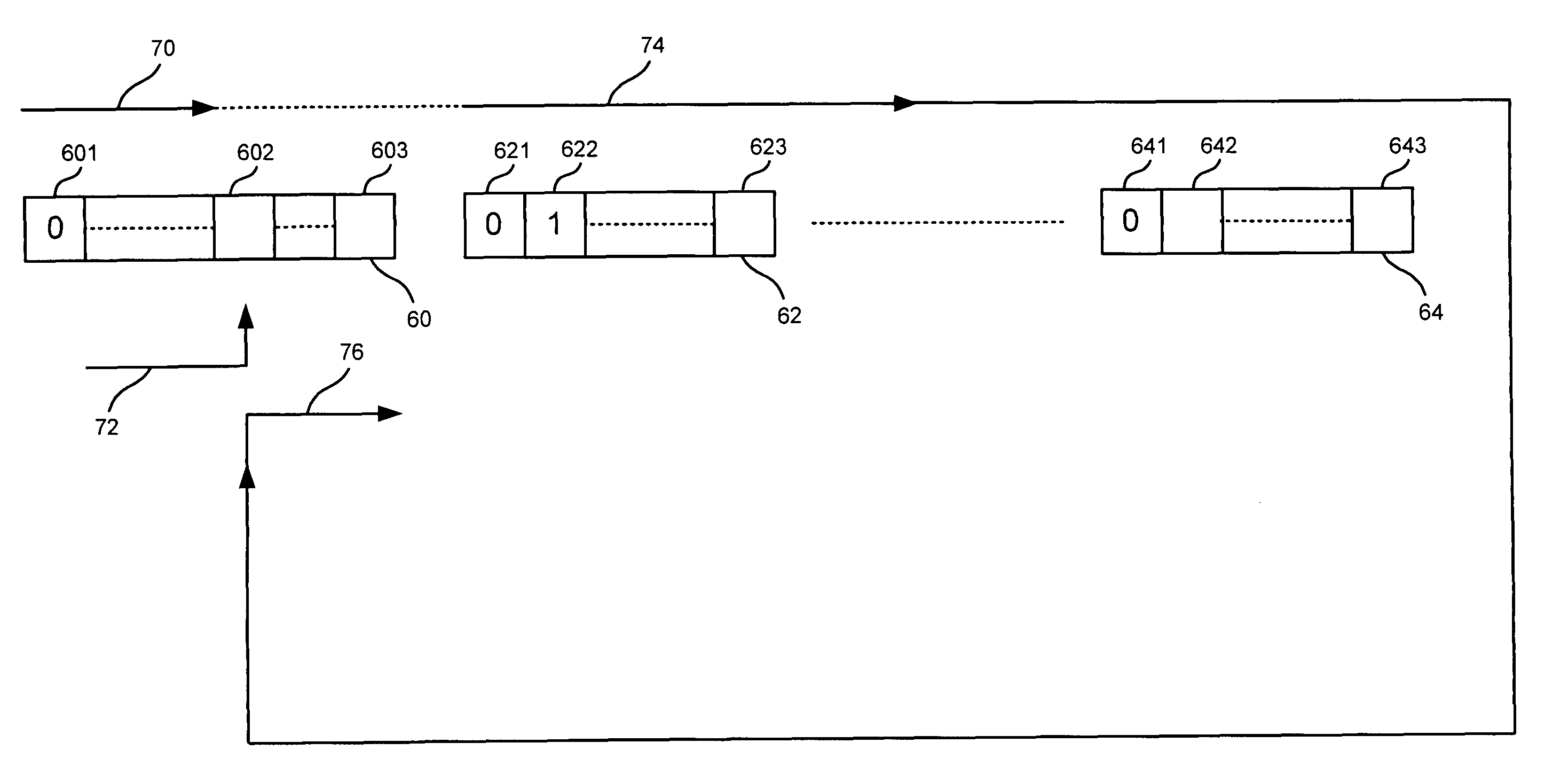

Bridges capable of controlling data flushing and methods for flushing data

Patent · Active · US7822905B2

Innovation

- A flush request control circuit that records the buffer write pointer and compares it with the buffer read pointer to generate a flush acknowledgement signal, ensuring the buffering unit is empty before outputting the signal, thereby preventing false acknowledgments and allowing continuous data transfer.
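The pointer comparison described here can be sketched in software. This is only an interpretation of the one-sentence summary above, not the patented circuit: the class and method names are hypothetical, the pointers are modeled as ever-increasing counters rather than wrapped hardware pointers, and the acknowledgement is assumed to fire once everything written before the flush request has been read out.

```python
class FlushTrackingBuffer:
    """Circular buffer that acknowledges a flush once pre-flush data has drained."""

    def __init__(self, depth):
        self.depth = depth
        self.slots = [None] * depth
        self.write_ptr = 0      # total items written so far
        self.read_ptr = 0       # total items read so far
        self.flush_mark = None  # write pointer recorded at flush request time

    def write(self, item):
        if self.write_ptr - self.read_ptr >= self.depth:
            raise BufferError("buffer full")
        self.slots[self.write_ptr % self.depth] = item
        self.write_ptr += 1

    def read(self):
        if self.read_ptr == self.write_ptr:
            return None  # buffer empty
        item = self.slots[self.read_ptr % self.depth]
        self.read_ptr += 1
        return item

    def request_flush(self):
        """Record the current write pointer as the flush boundary."""
        self.flush_mark = self.write_ptr

    def flush_acknowledged(self):
        """Return True once the read pointer has reached the recorded boundary.

        The pending request is cleared when it is acknowledged.
        """
        if self.flush_mark is None:
            return False
        if self.read_ptr >= self.flush_mark:
            self.flush_mark = None
            return True
        return False


buf = FlushTrackingBuffer(depth=4)
buf.write("a")
buf.write("b")
buf.request_flush()
buf.write("c")                   # later traffic keeps flowing
print(buf.flush_acknowledged())  # pre-flush data still pending
buf.read()
buf.read()
print(buf.flush_acknowledged())  # "a" and "b" have drained
```

Recording the boundary at request time is what avoids a false acknowledgement: data that arrives after the request does not delay the signal, and data that arrived before it cannot be skipped.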

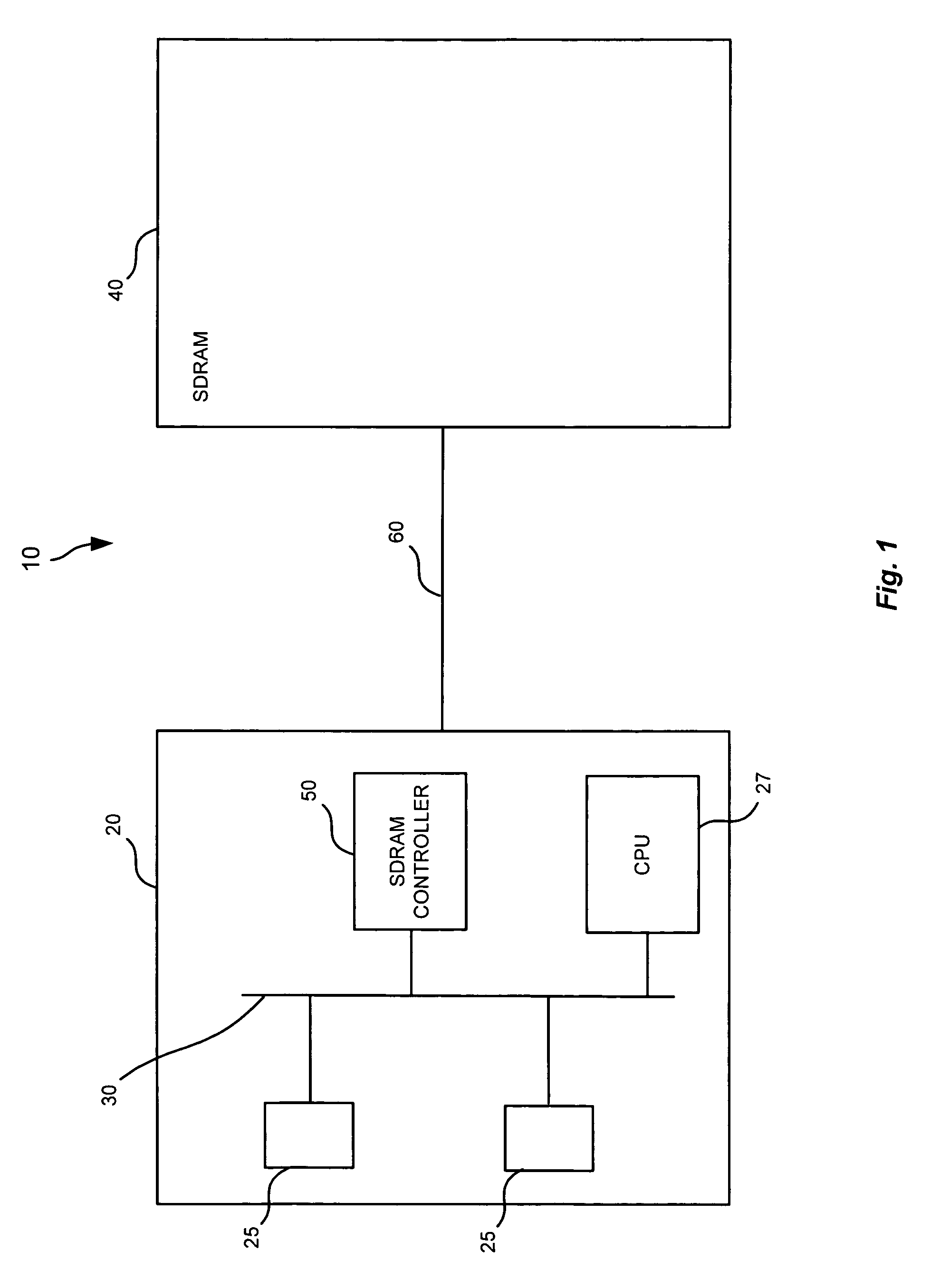

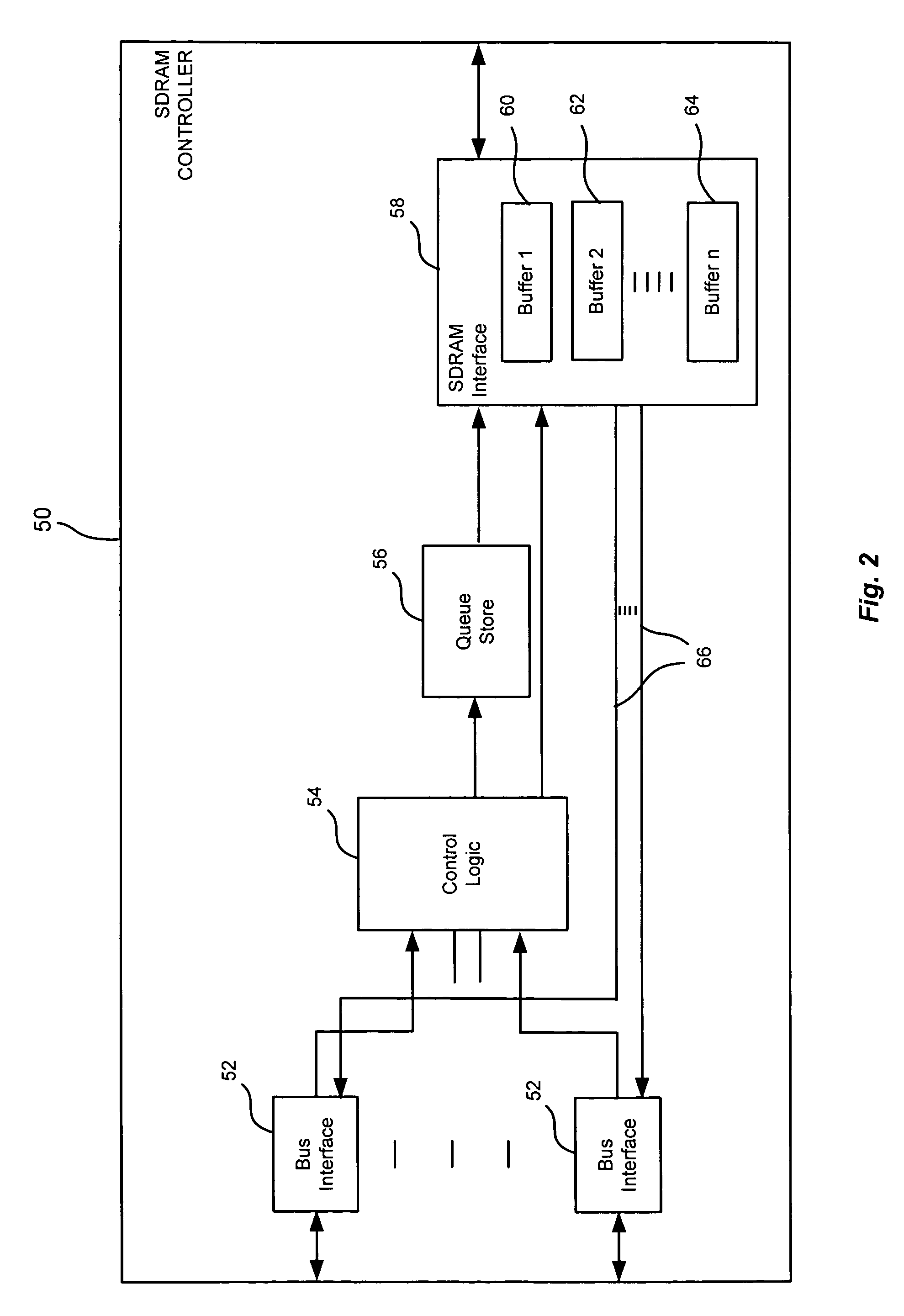

Memory controller having a buffer for providing beginning and end data

Patent · Inactive · US7899957B1

Innovation

- The SDRAM controller employs multiple buffers to store data from multiple bursts, allowing data to be retrieved and returned efficiently without additional bus occupancy by caching remaining data in buffers until needed.
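The general idea of keeping leftover burst data in controller-side buffers can be illustrated as follows. This sketch is not the patented design: the burst length, the number of buffers, the oldest-first replacement policy, and all names are assumptions made for the example.

```python
BURST_WORDS = 4  # hypothetical burst length, in words


class BurstBufferedReader:
    """Serves word reads from cached bursts and counts bus-level burst fetches."""

    def __init__(self, memory, num_buffers=2):
        self.memory = memory
        self.num_buffers = num_buffers
        self.buffers = {}    # burst base address -> list of words (insertion ordered)
        self.bus_bursts = 0  # number of bursts actually issued on the bus

    def _fetch_burst(self, base):
        self.bus_bursts += 1
        if len(self.buffers) >= self.num_buffers:
            oldest = next(iter(self.buffers))  # evict the oldest buffered burst
            del self.buffers[oldest]
        self.buffers[base] = self.memory[base:base + BURST_WORDS]

    def read_word(self, addr):
        base = addr - (addr % BURST_WORDS)  # align down to the burst boundary
        if base not in self.buffers:
            self._fetch_burst(base)
        return self.buffers[base][addr - base]


memory = list(range(100, 116))  # stand-in for SDRAM contents
reader = BurstBufferedReader(memory)
values = [reader.read_word(a) for a in (2, 3, 4, 5, 3)]
print(values, reader.bus_bursts)
```

Five word reads are served with two bus bursts here, because the remaining words of each burst stay in a buffer until a later request needs them.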

Power Efficiency Standards for Memory Systems

Power efficiency standards for memory systems have become increasingly critical as data centers and computing infrastructure face mounting pressure to reduce energy consumption while maintaining high performance. The growing demand for data processing capabilities, coupled with environmental sustainability requirements, has driven the development of comprehensive efficiency frameworks that govern how memory systems manage power during various operational states.

Current industry standards primarily focus on establishing baseline power consumption metrics for different memory technologies, including DDR4, DDR5, and emerging non-volatile memory solutions. These standards define specific power states such as active, idle, and standby modes, with particular attention to dynamic power scaling capabilities that allow memory systems to adjust consumption based on workload demands. The JEDEC organization has been instrumental in developing these specifications, creating standardized testing methodologies that ensure consistent power measurement across different manufacturers and implementations.

The challenge of managing data bursts within these efficiency frameworks requires sophisticated power management strategies that can rapidly transition between different power states without compromising system performance. Modern standards incorporate provisions for burst-mode operations, where memory systems must deliver peak performance while maintaining overall energy efficiency targets. This involves implementing intelligent power gating mechanisms that can selectively activate memory banks and channels based on immediate data access requirements.

Emerging standards are increasingly focusing on holistic system-level efficiency rather than component-level optimization alone. These frameworks consider the entire data path from storage to processing, establishing metrics that account for the energy cost of data movement and temporary buffering operations. Advanced power management protocols now include provisions for predictive power scaling, where memory controllers can anticipate data burst patterns and pre-emptively adjust power states to optimize both performance and efficiency.

The integration of artificial intelligence and machine learning algorithms into power management standards represents a significant evolution in efficiency optimization. These intelligent systems can analyze historical data access patterns to develop more sophisticated power management strategies that adapt to specific application workloads. Future standards are expected to incorporate these adaptive capabilities as mandatory features, enabling memory systems to achieve optimal efficiency across diverse operational scenarios while maintaining the responsiveness required for handling unpredictable data burst events.

Latency Optimization in High-Speed Data Processing

Latency optimization in high-speed data processing systems requires sophisticated approaches to minimize delays between data input and output operations. The fundamental challenge lies in balancing processing speed with system stability, particularly when managing irregular data bursts that can overwhelm traditional processing architectures.

Modern high-speed data processing environments often demand microsecond or even sub-microsecond response times, necessitating optimization strategies that address both hardware and software bottlenecks. Critical latency sources include memory access delays, context switching overhead, interrupt handling latencies, and data transfer inefficiencies across system buses. These costs weigh more heavily as data rates rise into the multi-gigabit range, because the time budget available for each packet or transaction shrinks accordingly.

Pipeline optimization represents a cornerstone approach for latency reduction: successive processing stages overlap so that several data items are in flight at once, while output order is preserved. Advanced implementations utilize predictive prefetching algorithms that anticipate data requirements based on historical access patterns, which can reduce cache miss penalties by up to 40% in typical burst scenarios. Hardware-accelerated processing units, including FPGAs and specialized ASICs, can provide the deterministic timing characteristics essential for consistent low-latency performance.
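As a rough illustration of pattern-based prefetching, the sketch below simulates a stride prefetcher: once the same address stride has been seen twice in a row, the next address in the sequence is fetched ahead of time. The function name `count_misses` and the unbounded cache are simplifying assumptions; real prefetchers work with finite caches and more elaborate predictors.

```python
def count_misses(addresses, prefetch=False):
    """Count cache misses for an access trace, optionally with stride prefetching."""
    cached = set()   # unbounded cache, a simplification for the example
    misses = 0
    last = None      # previous address
    stride = None    # previously observed stride
    for addr in addresses:
        if addr not in cached:
            misses += 1
            cached.add(addr)
        if prefetch and last is not None:
            new_stride = addr - last
            # The same non-zero stride seen twice in a row: fetch one step ahead.
            if new_stride == stride and stride != 0:
                cached.add(addr + stride)
            stride = new_stride
        last = addr
    return misses
```

On a regular trace such as `list(range(0, 80, 8))`, only the first three accesses miss when prefetching is enabled, since the stride must be observed twice before the prefetcher starts running ahead. Irregular traces see far less benefit.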

Memory hierarchy optimization plays a crucial role in minimizing data access latencies. Multi-level caching strategies, combined with intelligent data placement algorithms, keep frequently accessed information in high-speed memory tiers. Non-uniform memory access (NUMA) architectures require careful attention to data locality, because accesses that cross nodes are slower than local ones and can introduce significant latency variation.
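The idea of keeping hot data in a fast tier can be sketched with a small least-recently-used (LRU) cache in front of a slower backing store. The class name `TwoTierCache` and the choice of LRU eviction are assumptions for illustration; the text above does not prescribe a particular placement policy.

```python
from collections import OrderedDict


class TwoTierCache:
    """Small LRU 'fast tier' in front of a slower backing store."""

    def __init__(self, fast_capacity, backing):
        self.fast = OrderedDict()        # fast tier, ordered oldest to newest use
        self.fast_capacity = fast_capacity
        self.backing = backing           # any mapping standing in for slow memory
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.fast:
            self.fast.move_to_end(key)   # mark as most recently used
            self.hits += 1
            return self.fast[key]
        self.misses += 1
        value = self.backing[key]        # slow-path lookup
        self.fast[key] = value           # promote into the fast tier
        if len(self.fast) > self.fast_capacity:
            self.fast.popitem(last=False)  # evict the least recently used entry
        return value
```

Tracking `hits` and `misses` makes it easy to compare placement policies against a recorded access trace before committing to one.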

Real-time scheduling algorithms specifically designed for burst traffic management employ adaptive priority mechanisms that dynamically adjust processing queues based on incoming data characteristics. These systems utilize machine learning techniques to predict burst patterns and preemptively allocate resources, reducing average processing latency by 25-35% compared to static scheduling approaches.
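The sketch below illustrates one simple form of adaptive prioritization: always serve the stream with the deepest backlog, so a bursting source is drained before its queue overflows. This longest-queue-first heuristic is swapped in for illustration and is not the machine learning approach mentioned above; the class and method names are hypothetical.

```python
from collections import deque


class BacklogScheduler:
    """Serve whichever stream currently has the most queued items."""

    def __init__(self):
        self.queues = {}   # stream name -> deque of pending items

    def submit(self, stream, item):
        self.queues.setdefault(stream, deque()).append(item)

    def next_item(self):
        """Return (stream, item) from the deepest queue, or None if all are empty."""
        ready = [s for s, q in self.queues.items() if q]
        if not ready:
            return None
        # Priority adapts to current backlog; ties go to the stream registered first.
        stream = max(ready, key=lambda s: len(self.queues[s]))
        return stream, self.queues[stream].popleft()
```

A production scheduler would normally add an aging or fairness term so that a persistently busy stream cannot starve quieter ones.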

Network interface optimization through kernel bypass techniques removes most operating system overhead from the data path by giving user-space code direct access to network hardware. Technologies such as the Data Plane Development Kit (DPDK) and remote direct memory access (RDMA) provide microsecond-level latency improvements by circumventing traditional network stack processing, which is particularly beneficial for high-frequency data burst scenarios that require an immediate response.
