Evaluate Digital Tools for Real-Time Data Analytics

FEB 25, 2026 · 9 MIN READ

Digital Analytics Background and Objectives

Digital analytics has emerged as a cornerstone of modern business intelligence, fundamentally transforming how organizations collect, process, and interpret data to drive strategic decision-making. The evolution from traditional batch processing systems to sophisticated real-time analytics platforms represents a paradigm shift that enables businesses to respond instantaneously to market changes, customer behaviors, and operational anomalies.

The historical trajectory of digital analytics began with basic reporting tools in the 1990s, progressed through business intelligence platforms in the 2000s, and has now reached the era of artificial intelligence-powered real-time analytics. This evolution has been driven by exponential data growth, increased computational power, and the demand for immediate insights in fast-paced business environments.

Real-time data analytics encompasses the continuous ingestion, processing, and analysis of data streams as they are generated, enabling organizations to detect patterns, identify opportunities, and mitigate risks within milliseconds or seconds of data creation. This capability has become essential across industries, from financial trading and fraud detection to supply chain optimization and customer experience management.

The primary objective of evaluating digital tools for real-time data analytics centers on identifying solutions that can handle high-velocity data streams while maintaining accuracy, scalability, and cost-effectiveness. Organizations seek platforms capable of processing diverse data types including structured transactional data, semi-structured logs, and unstructured social media feeds simultaneously.

Key technical objectives include achieving sub-second latency for critical business processes, ensuring horizontal scalability to accommodate growing data volumes, and maintaining data integrity throughout the processing pipeline. Additionally, organizations prioritize tools that offer intuitive visualization capabilities, enabling business users to interpret complex analytical results without extensive technical expertise.

Strategic objectives encompass enhancing competitive advantage through faster decision-making, improving operational efficiency by automating response mechanisms, and enabling predictive capabilities that anticipate future trends rather than merely reacting to historical patterns. The ultimate goal involves creating a data-driven culture where real-time insights seamlessly integrate into daily business operations, transforming raw data into actionable intelligence that drives measurable business outcomes.

Market Demand for Real-Time Data Analytics Solutions

The global market for real-time data analytics solutions has experienced unprecedented growth driven by the digital transformation initiatives across industries. Organizations increasingly recognize that traditional batch processing methods cannot meet the demands of modern business environments where millisecond decisions can determine competitive advantage. This shift has created substantial market opportunities for vendors offering real-time analytics capabilities.

The financial services sector is one of the largest demand drivers: institutions require instantaneous fraud detection, algorithmic trading, and risk management capabilities. High-frequency trading firms and payment processors were early adopters, establishing benchmark requirements for latency and throughput that influence broader market expectations. Similarly, e-commerce platforms demand real-time recommendation engines and inventory management systems to optimize customer experiences and operational efficiency.

Manufacturing industries are embracing real-time analytics for predictive maintenance and quality control applications. The Industrial Internet of Things has generated massive data streams from sensors and equipment, creating urgent needs for immediate processing and analysis capabilities. Supply chain optimization has emerged as another critical use case, where real-time visibility into logistics and inventory levels directly impacts operational costs and customer satisfaction.

Healthcare organizations increasingly require real-time patient monitoring and diagnostic support systems. The proliferation of wearable devices and connected medical equipment has created continuous data streams that demand immediate analysis for critical care applications. Telemedicine expansion has further accelerated demand for real-time data processing capabilities in healthcare settings.

The telecommunications sector faces growing pressure to implement real-time network optimization and customer experience management solutions. Network operators must process massive volumes of traffic data instantaneously to maintain service quality and prevent outages. Customer churn prediction and personalized service offerings have become essential competitive differentiators requiring real-time analytics capabilities.

Cloud computing adoption has democratized access to real-time analytics tools, enabling smaller organizations to implement sophisticated data processing capabilities without substantial infrastructure investments. This trend has expanded the addressable market beyond large enterprises to include mid-market companies and specialized applications.

Regulatory compliance requirements across industries have created additional demand drivers, particularly in financial services and healthcare where real-time monitoring and reporting capabilities are increasingly mandated. Data privacy regulations have simultaneously created challenges and opportunities for real-time analytics vendors to develop compliant solutions.

Current State and Challenges of Digital Analytics Tools

The current landscape of digital analytics tools for real-time data processing is a complex ecosystem characterized by rapid technological advancement and diverse implementation approaches. Modern organizations increasingly rely on platforms that can handle massive data volumes while delivering low-latency insights. Leading solutions include Apache Kafka for event streaming, Elasticsearch for search and analytics, and cloud-native services like Amazon Kinesis and Google Cloud Dataflow.

Contemporary real-time analytics architectures typically employ distributed computing frameworks such as Apache Spark Streaming and Apache Flink, which enable processing of continuous data streams across multiple nodes. These systems integrate with various data sources including IoT sensors, web applications, social media feeds, and enterprise databases. The technology stack often incorporates in-memory computing capabilities to minimize latency and maximize throughput.
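To make the idea of continuous stream processing concrete, the following is a minimal, single-process sketch of a tumbling-window aggregation, one of the basic operations that frameworks such as Spark Streaming and Flink distribute across nodes. It does not use either framework's API; the `Event` type, its field names, and the sample data are assumptions made purely for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Tuple


class Event(NamedTuple):
    """Hypothetical event shape, assumed for this sketch."""
    timestamp: float  # event time in seconds
    key: str          # e.g. a sensor or user identifier
    value: float


def tumbling_window_sums(
    events: Iterable[Event], window_seconds: float
) -> Dict[Tuple[float, str], float]:
    """Sum values per (window_start, key) over fixed, non-overlapping windows."""
    sums: Dict[Tuple[float, str], float] = defaultdict(float)
    for event in events:
        # Align the timestamp to the start of the window that contains it.
        window_start = (event.timestamp // window_seconds) * window_seconds
        sums[(window_start, event.key)] += event.value
    return dict(sums)


# Illustrative data only.
events = [
    Event(0.5, "sensor-a", 1.0),
    Event(3.2, "sensor-a", 2.0),
    Event(5.1, "sensor-a", 4.0),
    Event(6.0, "sensor-b", 3.0),
]
result = tumbling_window_sums(events, window_seconds=5.0)
for (window_start, key), total in sorted(result.items()):
    print(f"window starting at {window_start:>4}s  {key}: {total}")
```

A production engine additionally has to handle late or out-of-order events, state checkpointing, and partitioning across nodes, none of which this sketch attempts.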

Despite significant technological progress, several critical challenges persist in the real-time analytics domain. Latency requirements continue to push the boundaries of existing infrastructure, particularly when sub-second response times are mandatory for applications like fraud detection or algorithmic trading. Data quality and consistency issues become amplified in streaming environments where traditional batch validation processes are impractical.

Scalability remains a fundamental concern as data volumes grow exponentially. Many organizations struggle with the complexity of managing distributed systems that must automatically scale resources based on fluctuating workloads. The integration of multiple data sources with varying formats, schemas, and update frequencies creates additional technical hurdles that require sophisticated data engineering solutions.

Cost optimization presents another significant challenge, as real-time processing typically demands more computational resources than batch processing alternatives. Organizations must balance performance requirements against infrastructure expenses, particularly in cloud environments where resource consumption directly impacts operational costs. The shortage of skilled professionals capable of designing and maintaining complex real-time analytics systems further constrains adoption and implementation success rates.

Security and compliance considerations add layers of complexity, especially when processing sensitive data streams that must adhere to regulations like GDPR or HIPAA. Ensuring data privacy while maintaining real-time processing capabilities requires careful architectural planning and robust governance frameworks.

Current Digital Tools for Real-Time Analytics

01 Real-time data processing and streaming analytics platforms

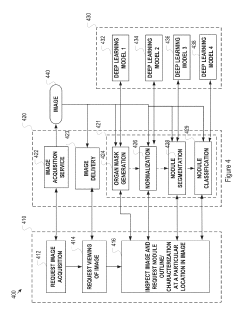

Digital tools that enable continuous data ingestion, processing, and analysis as data is generated. These platforms support high-velocity data streams and provide immediate insights through streaming analytics engines. The systems are designed to handle large volumes of data with minimal latency, allowing organizations to make time-sensitive decisions based on current information. Advanced algorithms and distributed computing architectures enable parallel processing of multiple data streams simultaneously.

- Real-time data processing and streaming analytics platforms: Digital tools that enable continuous processing and analysis of data streams as they are generated, allowing organizations to derive immediate insights from incoming data. These platforms support high-velocity data ingestion, processing, and visualization capabilities to facilitate instant decision-making. The systems typically incorporate distributed computing architectures and in-memory processing to handle large volumes of streaming data with minimal latency.

- Cloud-based analytics infrastructure and services: Scalable cloud computing platforms designed specifically for real-time data analytics workloads. These services provide on-demand computational resources, storage, and analytical capabilities that can dynamically scale based on data volume and processing requirements. The infrastructure supports distributed data processing frameworks and enables organizations to perform complex analytics without maintaining physical hardware.

- Interactive dashboards and visualization tools: User interface systems that present real-time data analytics results through dynamic visual representations including charts, graphs, and interactive displays. These tools enable users to monitor key performance indicators, identify trends, and explore data relationships as information updates continuously. The visualization components support customizable views and drill-down capabilities for detailed analysis.

- Machine learning integration for predictive analytics: Systems that incorporate artificial intelligence and machine learning algorithms into real-time data analytics workflows to enable predictive modeling and automated pattern recognition. These tools can identify anomalies, forecast trends, and generate actionable insights from streaming data without manual intervention. The integration allows for continuous model training and refinement based on incoming data.

- Data integration and ETL tools for real-time pipelines: Software solutions that facilitate the extraction, transformation, and loading of data from multiple sources into analytics platforms in real-time. These tools handle data quality, normalization, and enrichment processes while maintaining low latency to ensure timely availability of information for analysis. The systems support various data formats and protocols to enable seamless integration across heterogeneous data sources.
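The machine learning item in the list above mentions identifying anomalies in streaming data without manual intervention. As a deliberately simple stand-in for a trained model, the sketch below flags values that deviate strongly from a running mean, using Welford's online algorithm so that no history has to be stored. The class name, the threshold of 3 standard deviations, and the warm-up length are assumptions chosen for illustration, not settings taken from any product.

```python
import math


class RunningZScore:
    """Flags values far from the running mean (Welford's online algorithm)."""

    def __init__(self, threshold: float = 3.0, warmup: int = 5):
        self.threshold = threshold  # z-score above which a value is flagged
        self.warmup = warmup        # observations required before flagging
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations

    def update(self, x: float) -> bool:
        """Score x against the values seen so far, then absorb x."""
        is_anomaly = False
        if self.count >= self.warmup:
            std = math.sqrt(self.m2 / (self.count - 1))
            if std > 0 and abs(x - self.mean) / std > self.threshold:
                is_anomaly = True
        # Welford update of mean and squared deviations.
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)
        return is_anomaly


detector = RunningZScore(threshold=3.0, warmup=5)
stream = [10.0, 10.5, 9.8, 10.2, 10.1, 9.9, 50.0, 10.0]  # illustrative values
flags = [detector.update(x) for x in stream]
print(flags)  # only the 50.0 reading is flagged
```

Note that this sketch absorbs flagged values into its statistics, which inflates the variance afterwards; a real deployment might exclude or down-weight them, or use a windowed or learned model instead.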

02 Interactive dashboards and visualization tools for real-time monitoring

Solutions that provide dynamic visual representations of data as it changes in real-time. These tools offer customizable interfaces with charts, graphs, and metrics that update automatically to reflect current conditions. Users can interact with visualizations to drill down into specific data points, apply filters, and generate custom views. The systems support multiple data sources and enable stakeholders to monitor key performance indicators and operational metrics continuously.

03 Cloud-based analytics infrastructure for scalable data processing

Infrastructure solutions that leverage cloud computing resources to provide elastic scalability for real-time analytics workloads. These platforms automatically adjust computing capacity based on data volume and processing demands. The architecture supports distributed data storage and parallel processing across multiple nodes, enabling organizations to handle varying workloads without infrastructure constraints. Integration with cloud services provides flexibility in deployment and resource management.

04 Machine learning integration for predictive real-time analytics

Tools that incorporate machine learning algorithms to analyze streaming data and generate predictive insights in real-time. These systems can identify patterns, detect anomalies, and forecast trends as data flows through the analytics pipeline. Automated model training and deployment capabilities enable continuous improvement of analytical accuracy. The integration allows organizations to move beyond descriptive analytics to predictive and prescriptive decision-making.

05 Mobile and edge computing solutions for distributed real-time analytics

Digital tools that enable real-time data analysis at the edge of networks or on mobile devices, reducing latency and bandwidth requirements. These solutions process data closer to its source, providing immediate insights without requiring transmission to centralized servers. The architecture supports offline capabilities and synchronization when connectivity is restored. Mobile interfaces allow users to access real-time analytics from any location, enabling field operations and remote decision-making.

Major Players in Digital Analytics Platform Market

The real-time data analytics market is experiencing rapid growth as organizations increasingly demand immediate insights from their data streams. The industry has evolved from traditional batch processing to sophisticated real-time analytics platforms, driven by digital transformation initiatives and the need for instant decision-making capabilities. Market leaders like Oracle International Corp., IBM, and Accenture Global Services Ltd. demonstrate mature technology offerings with comprehensive enterprise solutions. Technology giants including Tencent Technology and established consulting firms like Tata Consultancy Services showcase the global competitive landscape spanning multiple regions. Specialized players such as Gathr Data Inc., Datorama Technologies Ltd., and Joulica Ltd. represent emerging innovation in cloud-native and zero-code analytics platforms. The technology maturity varies significantly, with established enterprise vendors offering proven solutions while newer entrants focus on next-generation architectures and specialized use cases, indicating a dynamic market transitioning toward more accessible and intelligent analytics capabilities.

Oracle International Corp.

Technical Solution: Oracle provides comprehensive real-time analytics solutions through Oracle Cloud Infrastructure (OCI) and Oracle Analytics Cloud. Their platform integrates streaming data processing with machine learning capabilities, enabling organizations to process millions of events per second with sub-second latency. The solution includes Oracle Stream Analytics for real-time event processing, Oracle Autonomous Data Warehouse for high-performance analytics, and built-in AI/ML algorithms for predictive insights. Oracle's architecture supports both batch and streaming analytics workloads, with automatic scaling and self-tuning capabilities that optimize performance without manual intervention.

Strengths: Enterprise-grade scalability, integrated AI/ML capabilities, autonomous database management. Weaknesses: High licensing costs, complex implementation for smaller organizations, vendor lock-in concerns.

Accenture Global Services Ltd.

Technical Solution: Accenture delivers real-time analytics solutions through their Applied Intelligence platform, combining cloud-native architectures with advanced analytics frameworks. Their approach leverages Apache Kafka for data streaming, Apache Spark for real-time processing, and custom-built visualization dashboards for immediate insights. Accenture's solutions typically integrate with major cloud providers (AWS, Azure, GCP) and include industry-specific analytics models for sectors like finance, healthcare, and retail. They emphasize edge computing integration for IoT scenarios and provide end-to-end implementation services from data ingestion to actionable insights delivery.

Strengths: Industry expertise, multi-cloud flexibility, comprehensive consulting services. Weaknesses: High service costs, dependency on external consultants, longer implementation timelines.

Core Technologies in Real-Time Data Processing

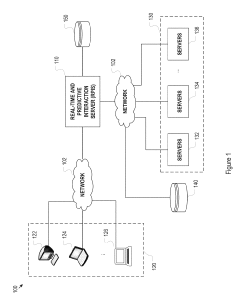

Method and system for facilitating real-time automated data analytics

Patent (Pending): US20240311681A1

Innovation

- A method utilizing artificial intelligence and machine learning to aggregate raw data in real-time, transform it using lambda functions, generate structured datasets, and create predictive outputs, which are then used to generate dashboards and alert clients via a graphical user interface, compatible with mobile devices, while ensuring data validation and entitlement rules.

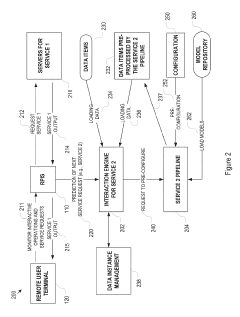

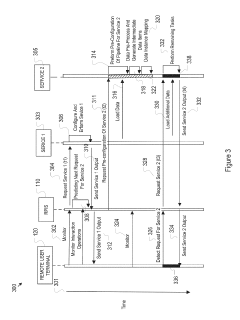

Enhancement of real-time response to request for detached data analytics

Patent (Inactive): US10467142B1

Innovation

- A system and method that predictively pre-configure and pre-process data analytics pipelines based on user interactive operations, caching intermediate data for speedy access, allowing only real-time additional processing to complete the service upon actual request, thereby reducing user-perceived delay.
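The general idea described above (do the expensive part of a pipeline ahead of time when user behavior suggests a request is coming, cache the intermediate result, and leave only a cheap final step for request time) can be sketched as follows. This is an illustration of the caching pattern only, not the patented method; the class, method names, and the stand-in pipeline stage are all hypothetical.

```python
from typing import Callable, Dict, List


class PrecomputeCache:
    """Caches the output of an expensive pipeline stage ahead of a request."""

    def __init__(self, expensive_stage: Callable[[str], List[float]]):
        self._expensive_stage = expensive_stage
        self._cache: Dict[str, List[float]] = {}
        self.expensive_calls = 0  # counter kept only to show the effect

    def _run_expensive(self, dataset_id: str) -> List[float]:
        self.expensive_calls += 1
        return self._expensive_stage(dataset_id)

    def on_user_hint(self, dataset_id: str) -> None:
        """Called when a UI interaction suggests this dataset will be requested."""
        if dataset_id not in self._cache:
            self._cache[dataset_id] = self._run_expensive(dataset_id)

    def on_request(self, dataset_id: str,
                   final_stage: Callable[[List[float]], float]) -> float:
        """Serve the request, running only the cheap final stage on a cache hit."""
        intermediate = self._cache.get(dataset_id)
        if intermediate is None:  # cache miss: fall back to the full pipeline
            intermediate = self._run_expensive(dataset_id)
            self._cache[dataset_id] = intermediate
        return final_stage(intermediate)


def load_and_clean(dataset_id: str) -> List[float]:
    """Stand-in for a slow load-and-transform stage (hypothetical)."""
    return [float(n) for n in range(1, 6)]


cache = PrecomputeCache(load_and_clean)
cache.on_user_hint("sales")              # e.g. the user hovers over a report tile
total = cache.on_request("sales", sum)   # only the final aggregation runs now
print(total, cache.expensive_calls)
```

A real system would also need cache invalidation when the underlying data changes and a bound on cache size, both omitted here.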

Data Privacy and Security Regulatory Framework

The regulatory landscape for data privacy and security in real-time analytics has evolved significantly, driven by increasing concerns over data protection and the proliferation of digital tools processing sensitive information. Major regulatory frameworks such as the General Data Protection Regulation (GDPR) in Europe, the California Consumer Privacy Act (CCPA) in the United States, and emerging legislation in Asia-Pacific regions establish comprehensive requirements for organizations implementing real-time data analytics solutions.

GDPR is widely regarded as the most stringent of these frameworks. It requires a lawful basis for data processing (consent being one such basis), embeds privacy-by-design principles, and mandates data protection impact assessments for high-risk processing activities. Real-time analytics tools must incorporate mechanisms for data subject rights, including the right to erasure and data portability, which presents technical challenges for streaming data architectures. The regulation's extraterritorial scope means it can apply to organizations outside the EU when they offer goods or services to, or monitor the behavior of, individuals in the EU.

In the United States, sector-specific regulations complement state-level privacy laws. The Health Insurance Portability and Accountability Act (HIPAA) governs healthcare data analytics, while the Gramm-Leach-Bliley Act addresses financial services. These regulations require specific technical safeguards, audit trails, and breach notification procedures that real-time analytics platforms must support natively.

Emerging regulatory trends focus on algorithmic transparency and automated decision-making oversight. The EU's AI Act, adopted in 2024 with obligations phasing in over the following years, and similar initiatives in other jurisdictions impose additional requirements on real-time analytics systems that incorporate machine learning components. These regulations emphasize explainability, bias detection, and human oversight mechanisms.

Cross-border data transfer regulations significantly impact real-time analytics architectures. Adequacy decisions, Standard Contractual Clauses, and certification mechanisms under various frameworks determine permissible data flows. Organizations must implement technical measures such as encryption, pseudonymization, and data localization to ensure compliance while maintaining analytics performance.
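Of the technical measures named above, pseudonymization is straightforward to illustrate. The sketch below replaces direct identifiers in an event with a keyed hash (HMAC-SHA256 from the Python standard library), so that the same input always maps to the same token and records can still be joined and counted without exposing the raw value. The field names and the inline key are assumptions for illustration; in practice the key would come from a secrets manager, and whether this approach satisfies a given regulation is a legal question this sketch does not answer.

```python
import hashlib
import hmac
from typing import Any, Dict, Iterable


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Return a stable keyed hash of an identifier (HMAC-SHA256, hex-encoded)."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def pseudonymize_event(event: Dict[str, Any], fields: Iterable[str],
                       secret_key: bytes) -> Dict[str, Any]:
    """Copy the event, replacing the named fields with pseudonyms."""
    sensitive = set(fields)
    return {
        name: pseudonymize(str(value), secret_key) if name in sensitive else value
        for name, value in event.items()
    }


# Illustrative only: never hard-code a real key.
KEY = b"example-key-for-illustration-only"
event = {"user_id": "alice@example.com", "amount": 42.5, "country": "DE"}
safe_event = pseudonymize_event(event, fields=["user_id"], secret_key=KEY)
print(safe_event)
```

Because the mapping is keyed, rotating or destroying the key changes or severs the link between tokens and original identifiers, which is one reason a keyed hash is often preferred over a plain hash for this purpose.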

Industry-specific compliance requirements add further layers of complexity. Financial services must meet standards such as PCI DSS for payment card data, while telecommunications companies must comply with data retention and lawful interception requirements. These sector-specific mandates can conflict with privacy regulations, for example when retention obligations collide with data minimization, so system design and implementation strategies for real-time analytics deployments must balance the two carefully.

Performance Evaluation Metrics for Analytics Tools

Performance evaluation metrics serve as the foundation for assessing real-time data analytics tools, providing quantitative measures to compare capabilities across different platforms. These metrics enable organizations to make informed decisions when selecting analytics solutions that align with their operational requirements and performance expectations.

Latency represents the most critical performance indicator for real-time analytics systems. This metric measures the time elapsed between data ingestion and result availability, typically expressed in milliseconds or microseconds. Sub-second latency is essential for applications requiring immediate responses, such as fraud detection or automated trading systems. Evaluation should consider both average latency and tail latency percentiles to understand system behavior under varying load conditions.
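The difference between average and tail latency is easy to see with a small calculation. The sketch below computes the mean alongside the 50th, 95th, and 99th percentiles using the nearest-rank method; the sample values are invented for illustration, and other percentile definitions (for example, interpolating ones) would give slightly different numbers.

```python
import math
from statistics import mean
from typing import Dict, Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def latency_summary(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize end-to-end latencies given in milliseconds."""
    return {
        "mean": mean(samples_ms),
        "p50": percentile(samples_ms, 50),
        "p95": percentile(samples_ms, 95),
        "p99": percentile(samples_ms, 99),
    }


# Illustrative measurements: nine fast responses and one slow outlier.
latencies_ms = [12.0, 15.0, 11.0, 14.0, 13.0, 250.0, 12.0, 16.0, 13.0, 14.0]
summary = latency_summary(latencies_ms)
print(summary)
```

With these samples the median is 13 ms while the mean is 37 ms and the tail percentiles sit at 250 ms, which is why a single average can hide the behavior that matters most to latency-sensitive applications.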

Throughput capacity determines the volume of data an analytics tool can process within a specific timeframe, usually measured in events per second or gigabytes per hour. This metric directly impacts scalability and cost-effectiveness, as higher throughput capabilities reduce infrastructure requirements and operational expenses. Organizations must evaluate throughput under different data complexity scenarios to ensure consistent performance.
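A rough way to measure throughput for a single processing function is to time it over a batch of events and divide the count by the elapsed time, as sketched below. The function names and the toy workload are assumptions for illustration; a meaningful benchmark would use representative data, warm-up runs, and repeated trials.

```python
import time
from typing import Any, Callable, Iterable


def events_per_second(event_count: int, elapsed_seconds: float) -> float:
    """Throughput as events processed per second of wall-clock time."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return event_count / elapsed_seconds


def measure_throughput(process: Callable[[Any], Any],
                       events: Iterable[Any]) -> float:
    """Run `process` over every event and report observed events per second."""
    count = 0
    start = time.perf_counter()
    for event in events:
        process(event)
        count += 1
    elapsed = time.perf_counter() - start
    # Guard against a zero reading from the timer on extremely small batches.
    return events_per_second(count, max(elapsed, 1e-9))


def parse_and_score(event: str) -> int:
    """Toy stand-in for per-event work (hypothetical)."""
    return sum(ord(ch) for ch in event)


observed = measure_throughput(parse_and_score, (f"event-{i}" for i in range(50_000)))
print(f"{observed:,.0f} events/s")
```

Repeating the measurement with simple and complex payloads gives a first indication of how throughput varies with data complexity.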

Resource utilization efficiency encompasses CPU, memory, and network consumption patterns during analytics operations. Efficient tools minimize resource overhead while maintaining performance levels, enabling cost-effective scaling and reducing infrastructure footprint. Memory usage patterns are particularly important for in-memory analytics platforms, where excessive consumption can lead to performance degradation or system failures.

Accuracy and precision metrics evaluate the correctness of analytical results, which is especially important for machine learning-based analytics tools. These measurements include overall accuracy, false positive and false negative rates, and confidence intervals. Real-time constraints often force trade-offs between speed and accuracy, so these metrics are essential for defining acceptable performance boundaries.
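The rates named above follow from the four cells of a confusion matrix, as this Python sketch shows. The counts are invented for illustration, for example a fraud detector scored against 1,000 labeled transactions.

```python
def classification_metrics(tp, fp, tn, fn):
    """Derive common correctness metrics from confusion-matrix counts."""
    total = tp + fp + tn + fn
    if total == 0:
        raise ValueError("no observations")
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
        "false_negative_rate": fn / (fn + tp) if fn + tp else 0.0,
    }

# Hypothetical counts: 100 true fraud cases among 1,000 transactions.
metrics = classification_metrics(tp=80, fp=20, tn=880, fn=20)
print(metrics)
```

With these counts overall accuracy is 96 percent even though one in five fraud cases is missed, which is why the false negative rate should be reported alongside accuracy.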

Scalability metrics assess how performance characteristics change as data volumes, user concurrency, or computational complexity increase. Linear scalability indicates proportional performance improvement with additional resources, while sub-linear scaling may suggest architectural limitations. Horizontal and vertical scaling capabilities should be evaluated separately to understand deployment flexibility.
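One common way to quantify the distinction between linear and sub-linear scaling is a scaling efficiency ratio, sketched below in Python. The function name and the throughput figures are assumptions made for illustration; a value near 1.0 indicates near-linear scaling.

```python
def scaling_efficiency(base_throughput, base_nodes,
                       scaled_throughput, scaled_nodes):
    """Ratio of observed speedup to the increase in resources."""
    if min(base_throughput, base_nodes, scaled_nodes) <= 0:
        raise ValueError("inputs must be positive")
    speedup = scaled_throughput / base_throughput
    resource_ratio = scaled_nodes / base_nodes
    return speedup / resource_ratio

# Hypothetical horizontal scaling test: 2 nodes to 8 nodes.
efficiency = scaling_efficiency(
    base_throughput=50_000, base_nodes=2,
    scaled_throughput=170_000, scaled_nodes=8,
)
print(f"scaling efficiency: {efficiency:.2f}")
```

Here four times the nodes yields 3.4 times the throughput, an efficiency of 0.85. The same ratio can be computed separately for vertical scaling by substituting cores or memory for node counts.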

Reliability and availability metrics measure system uptime, fault tolerance, and recovery capabilities. Mean time between failures, recovery time objectives, and data consistency guarantees are critical factors for mission-critical applications. These metrics help organizations understand the operational risks associated with different analytics platforms.
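Steady-state availability is conventionally estimated from mean time between failures and mean time to repair. The Python sketch below applies that standard formula. Note that mean time to repair is an assumption introduced here and is not the same as a recovery time objective, which is a target rather than a measurement. The figures are illustrative.

```python
HOURS_PER_YEAR = 8760  # non-leap year

def availability(mtbf_hours, mttr_hours):
    """Steady-state availability = MTBF / (MTBF + MTTR)."""
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("MTBF must be positive and MTTR non-negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)

def annual_downtime_hours(avail):
    """Expected downtime per year implied by an availability fraction."""
    return (1 - avail) * HOURS_PER_YEAR

# Hypothetical platform: fails every 999 hours, takes 1 hour to recover.
avail = availability(mtbf_hours=999, mttr_hours=1)
downtime = annual_downtime_hours(avail)
print(f"availability {avail:.3%}, about {downtime:.2f} hours down per year")
```

Translating an availability percentage into expected hours of downtime often makes the operational risk easier to compare against business requirements.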
