How To Deploy AI Tools For Electron Beam Lithography Process Optimization

APR 28, 2026 · 9 MIN READ

AI-Driven EBL Process Optimization Background and Objectives

Electron Beam Lithography (EBL) has been a critical nanofabrication technique since its inception in the 1960s, evolving from simple pattern-writing systems to sophisticated multi-beam platforms capable of sub-10 nm resolution. Its development shows continuous improvements in throughput, accuracy, and process control, driven by demands from semiconductor manufacturing, photomask production, and advanced research applications in quantum devices and photonics.

The integration of artificial intelligence into EBL processes represents a paradigm shift from traditional rule-based optimization approaches to data-driven methodologies. Historical EBL process optimization relied heavily on empirical parameter adjustment and operator expertise, resulting in lengthy development cycles and suboptimal performance. The exponential growth in computational power and machine learning algorithms now enables real-time process monitoring, predictive modeling, and autonomous parameter optimization.

Current technological trends indicate a convergence of AI capabilities with advanced lithography systems, particularly in areas such as proximity effect correction, dose optimization, and defect prediction. Machine learning algorithms demonstrate significant potential in handling the complex, multi-dimensional parameter spaces inherent in EBL processes, where traditional optimization methods often fail to capture intricate relationships between process variables and output quality.

The primary objective of deploying AI tools for EBL process optimization centers on achieving autonomous, intelligent process control that maximizes throughput while maintaining pattern fidelity. This involves developing predictive models for dose distribution, implementing real-time feedback systems for beam drift correction, and establishing adaptive algorithms for dynamic process parameter adjustment based on substrate variations and environmental conditions.

Secondary objectives include reducing development time for new processes, minimizing material waste through improved first-pass success rates, and enabling consistent performance across different operators and equipment configurations. The ultimate goal encompasses creating a self-learning system capable of continuous improvement through accumulated process knowledge and pattern recognition.

These objectives align with broader industry requirements for increased manufacturing efficiency, reduced cost-per-feature, and enhanced capability for next-generation device architectures requiring unprecedented precision and complexity in nanoscale patterning applications.

Market Demand for AI-Enhanced Semiconductor Manufacturing

The semiconductor manufacturing industry is experiencing unprecedented demand for advanced lithography solutions, driven by the pursuit of smaller node technologies and higher device performance. As chip manufacturers push toward sub-3 nm processes, traditional electron beam lithography faces significant challenges in throughput, precision, and yield optimization that conventional process control methods struggle to address.

Market drivers for AI-enhanced semiconductor manufacturing stem from multiple converging factors. The exponential growth in artificial intelligence applications, autonomous vehicles, 5G infrastructure, and edge computing devices has created substantial pressure on semiconductor foundries to deliver higher-performance chips with improved power efficiency. This demand translates directly into requirements for more sophisticated lithography processes that can achieve precise pattern definition at atomic scales.

The economic imperative for AI integration in electron beam lithography becomes evident when considering the cost implications of process variations and defects. Advanced semiconductor fabrication facilities represent multi-billion dollar investments, where even minor improvements in yield and throughput can generate substantial returns. AI-driven process optimization offers the potential to reduce manufacturing costs while simultaneously improving product quality and production speed.

Industry adoption patterns indicate strong momentum toward intelligent manufacturing systems. Leading semiconductor manufacturers are increasingly investing in machine learning capabilities to enhance their lithography processes, recognizing that traditional rule-based control systems cannot adequately handle the complexity of modern nanoscale manufacturing. The integration of real-time data analytics, predictive modeling, and automated process adjustments has become essential for maintaining competitive advantage.

The market opportunity extends beyond established semiconductor giants to include emerging players in specialized applications such as quantum computing, advanced sensors, and next-generation memory devices. These applications often require unique lithography patterns and specifications that benefit significantly from AI-powered optimization algorithms capable of adapting to novel design requirements.

Supply chain considerations further amplify market demand, as geopolitical tensions and trade restrictions have heightened the importance of manufacturing efficiency and self-sufficiency. AI-enhanced lithography systems offer the potential to maximize output from existing equipment while reducing dependence on external process expertise, making them strategically valuable for regional semiconductor manufacturing initiatives.

Current EBL Challenges and AI Integration Status

Electron beam lithography faces several critical challenges that significantly impact manufacturing efficiency and yield rates. Pattern placement accuracy remains a primary concern, with thermal drift and charging effects causing systematic errors that can exceed tolerance limits for advanced node fabrication. Beam drift compensation requires continuous calibration, often interrupting production workflows and reducing throughput by 15-20% in typical manufacturing environments.

Proximity effect correction represents another substantial challenge, where electron scattering in resist materials creates unwanted exposure variations. Current correction algorithms rely on pre-calculated models that struggle with complex geometries and varying substrate conditions. This limitation becomes particularly pronounced in multi-layer device fabrication, where accumulated errors can render entire wafers unusable.
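To make the scattering problem concrete, the sketch below uses the double-Gaussian point spread function that is commonly used to approximate forward scattering and backscattering, reduced here to one dimension. It computes the absorbed exposure as a convolution of the written dose with that kernel, then applies a simple iterative dose rescaling. The parameter values (`alpha`, `beta`, `eta`), the grid, and the test pattern are illustrative assumptions, not values from any specific resist or tool, and the multiplicative update is one basic correction scheme rather than a production algorithm.

```python
import numpy as np

def double_gaussian_psf(x, alpha, beta, eta):
    """1D double-Gaussian kernel, normalized to unit sum on the grid.

    alpha: forward-scattering range, beta: backscattering range,
    eta: ratio of backscattered to forward-scattered energy.
    """
    forward = np.exp(-(x / alpha) ** 2) / alpha
    back = eta * np.exp(-(x / beta) ** 2) / beta
    psf = forward + back
    return psf / psf.sum()

def exposure(dose, psf):
    """Absorbed exposure: written dose convolved with the scattering kernel."""
    return np.convolve(dose, psf, mode="same")

def correct_dose(pattern, psf, iterations=30):
    """Rescale the dose inside the pattern so its exposure approaches 1."""
    dose = pattern.astype(float).copy()
    mask = pattern > 0
    for _ in range(iterations):
        e = exposure(dose, psf)
        dose[mask] /= e[mask]  # raise dose where under-exposed, lower where over-exposed
    return dose

def max_deviation(exposed, mask, target=1.0):
    """Largest departure from the target exposure inside the pattern."""
    return float(np.max(np.abs(exposed[mask] - target)))

# Assumed grid: 10 nm pixels, kernel spanning +/- 2000 nm.
x = np.arange(-200, 201) * 10.0
psf = double_gaussian_psf(x, alpha=30.0, beta=500.0, eta=0.7)

# Assumed test pattern: one large pad and one isolated narrow line.
pattern = np.zeros(600)
pattern[100:300] = 1.0
pattern[400:405] = 1.0

uncorrected = exposure(pattern, psf)
corrected_dose = correct_dose(pattern, psf)
corrected = exposure(corrected_dose, psf)

mask = pattern > 0
print("max deviation before:", max_deviation(uncorrected, mask))
print("max deviation after: ", max_deviation(corrected, mask))
```

With a uniform dose, the isolated line receives far less exposure than the centre of the pad, because it lacks backscattered contributions from neighbouring features. The correction compensates by assigning higher doses to isolated features and pattern edges. A fixed kernel like this one is exactly the kind of pre-calculated model that the paragraph above describes as struggling with varying substrate conditions.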

Throughput optimization continues to constrain EBL adoption in high-volume manufacturing. Traditional approaches focus on beam current optimization and writing strategy improvements, but these methods often reach physical limitations imposed by space charge effects and resist sensitivity constraints. The trade-off between resolution and speed remains a fundamental bottleneck that conventional optimization techniques cannot adequately address.

AI integration in EBL processes currently exists in early developmental stages across the industry. Machine learning algorithms have shown promise in predictive maintenance applications, where pattern recognition techniques identify equipment degradation before critical failures occur. Several leading semiconductor manufacturers have implemented neural networks for real-time dose correction, achieving 30-40% improvement in critical dimension uniformity compared to conventional feedback systems.

Deep learning models are being explored for automated defect classification and root cause analysis. These systems can process scanning electron microscope images to identify pattern defects and correlate them with process parameters, enabling faster troubleshooting and yield improvement. However, training data requirements and model interpretability remain significant barriers to widespread adoption.

Current AI deployment efforts focus primarily on post-process analysis rather than real-time optimization. Edge computing integration faces challenges related to computational latency and hardware constraints within existing EBL systems. Most implementations rely on cloud-based processing, which introduces data security concerns and limits real-time decision-making capabilities essential for dynamic process control.

Existing AI Tools for EBL Process Control

01 Machine Learning Algorithm Optimization for AI Tools

Advanced machine learning techniques and algorithms are employed to enhance the performance and efficiency of AI tools. These methods focus on improving model accuracy, reducing computational overhead, and optimizing training processes through various algorithmic improvements and neural network architectures.

- Machine Learning Algorithm Optimization for AI Tools: Advanced machine learning techniques are employed to optimize AI tool performance through algorithmic improvements, neural network architecture refinement, and automated parameter tuning. These methods enhance processing speed, accuracy, and resource utilization in AI applications by implementing sophisticated optimization algorithms that adapt to different computational environments and workload requirements.

- Automated Workflow Management and Process Scheduling: Intelligent workflow management systems that automatically schedule, prioritize, and execute AI processes based on resource availability and task complexity. These systems implement dynamic load balancing, task queue optimization, and real-time process monitoring to maximize throughput and minimize processing delays in AI tool operations.

- Resource Allocation and Performance Monitoring: Sophisticated resource management techniques that optimize computational resource distribution across AI tools, including memory management, CPU utilization optimization, and distributed computing coordination. These approaches incorporate real-time performance monitoring and predictive analytics to ensure optimal system performance and prevent resource bottlenecks.

- Data Processing Pipeline Optimization: Streamlined data processing methodologies that enhance the efficiency of data ingestion, transformation, and analysis within AI tools. These techniques include parallel processing implementations, data compression algorithms, and intelligent caching mechanisms that reduce processing time and improve overall system responsiveness for large-scale data operations.

- Adaptive Learning and Self-Optimization Systems: Self-improving AI systems that continuously learn from operational patterns and automatically adjust their processes for enhanced performance. These systems implement feedback loops, performance analytics, and adaptive algorithms that enable AI tools to evolve and optimize their operations based on historical data and changing requirements without manual intervention.

02 Data Processing and Analytics Enhancement

Optimization techniques for data processing workflows in AI systems, including data preprocessing, feature extraction, and real-time analytics capabilities. These approaches improve data handling efficiency and enable better decision-making processes through enhanced data analysis methodologies.

03 Automated Workflow and Process Management

Systems and methods for automating complex workflows and managing AI-driven processes. These solutions focus on streamlining operations, reducing manual intervention, and creating intelligent process orchestration that adapts to changing requirements and optimizes resource utilization.

04 Performance Monitoring and Resource Optimization

Technologies for monitoring AI tool performance and optimizing computational resources. These systems provide real-time performance metrics, identify bottlenecks, and implement dynamic resource allocation strategies to maximize efficiency and minimize operational costs.

05 Integration and Scalability Solutions

Methods for integrating AI tools with existing systems and ensuring scalable deployment across different environments. These approaches address interoperability challenges, provide seamless integration capabilities, and enable horizontal and vertical scaling of AI applications.

Key Players in AI-Powered EBL Solutions Industry

The electron beam lithography (EBL) process optimization market represents a mature yet rapidly evolving sector within the semiconductor manufacturing ecosystem. The industry is currently in a growth phase driven by increasing demand for advanced node semiconductors and AI applications. Major equipment manufacturers like ASML Netherlands BV, NuFlare Technology, and IMS Nanofabrication GmbH lead in lithography systems development, while foundries including Taiwan Semiconductor Manufacturing Co. and GLOBALFOUNDRIES drive implementation. Technology maturity varies significantly across players: established companies like Applied Materials, Tokyo Electron, and Carl Zeiss SMT demonstrate high technical readiness in traditional EBL systems, whereas emerging players like Multibeam Corp. and Integral Geometry Science are pioneering next-generation multi-beam and AI-enhanced solutions. Research institutions including MIT and the Institute of Microelectronics of the Chinese Academy of Sciences contribute fundamental innovations, while integrated device manufacturers like Samsung Electronics and Intel are advancing process optimization capabilities to meet escalating performance requirements in AI and high-performance computing applications.

ASML Netherlands BV

Technical Solution: ASML has developed advanced AI-driven process control systems for electron beam lithography that integrate machine learning algorithms with their existing EUV and e-beam platforms. Their AI tools focus on real-time dose correction, pattern fidelity optimization, and predictive maintenance of electron beam systems. The company employs deep learning models to analyze exposure patterns and automatically adjust beam parameters to compensate for proximity effects and charging phenomena. Their AI framework includes automated defect detection algorithms that can identify and classify lithographic errors in real-time, enabling immediate process corrections. The system also incorporates predictive analytics to forecast equipment maintenance needs and optimize throughput by minimizing downtime.

Strengths: Market-leading lithography expertise, comprehensive AI integration across multiple platforms, strong R&D capabilities. Weaknesses: High system complexity, significant capital investment requirements, limited accessibility for smaller manufacturers.

Taiwan Semiconductor Manufacturing Co., Ltd.

Technical Solution: TSMC has implemented AI-powered electron beam lithography optimization through their advanced process control (APC) systems that utilize machine learning for dose modulation and pattern placement accuracy. Their AI deployment includes neural network-based models for proximity effect correction, automated recipe optimization, and real-time process monitoring. The company has developed proprietary algorithms that analyze historical lithography data to predict optimal exposure parameters for new patterns, reducing development time and improving yield. Their AI tools also incorporate computer vision techniques for automated pattern inspection and defect classification, enabling rapid feedback loops for process improvement. TSMC's approach emphasizes integration with their existing fab automation systems to ensure seamless workflow optimization.

Strengths: Extensive manufacturing experience, proven scalability across high-volume production, strong data analytics capabilities. Weaknesses: Proprietary solutions may limit technology transfer, high implementation costs, complex integration requirements.

Core AI Algorithms for EBL Parameter Optimization

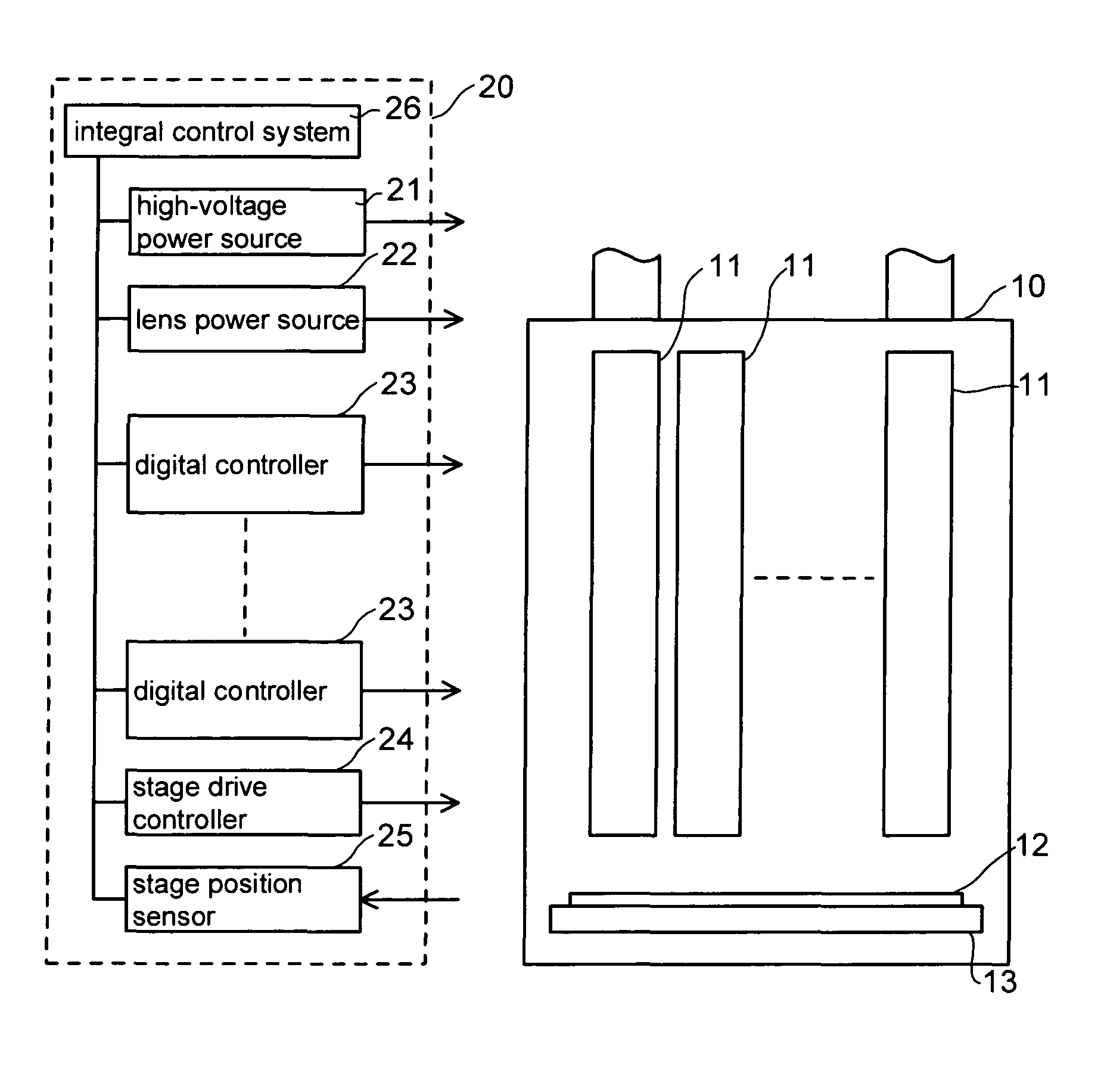

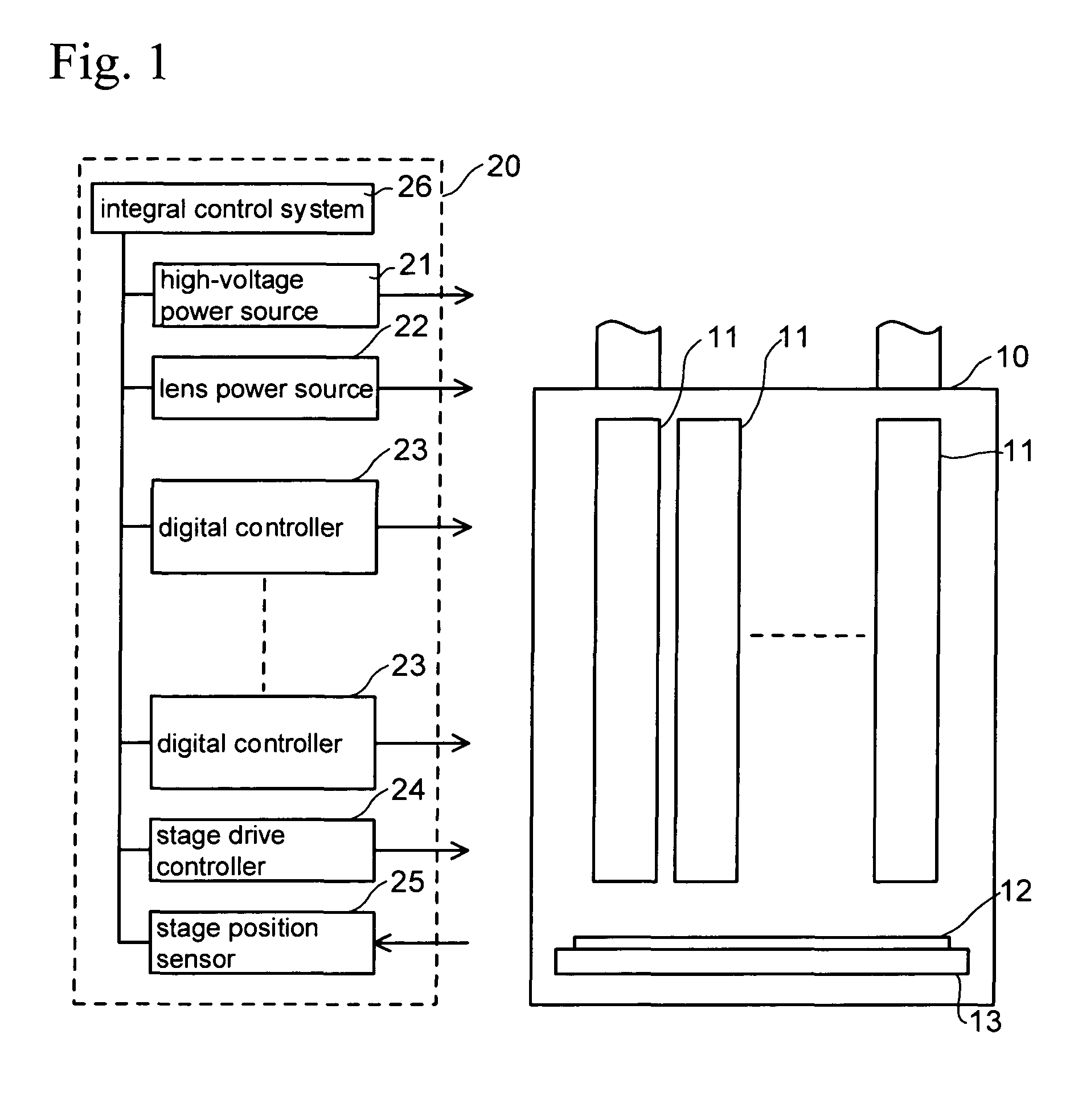

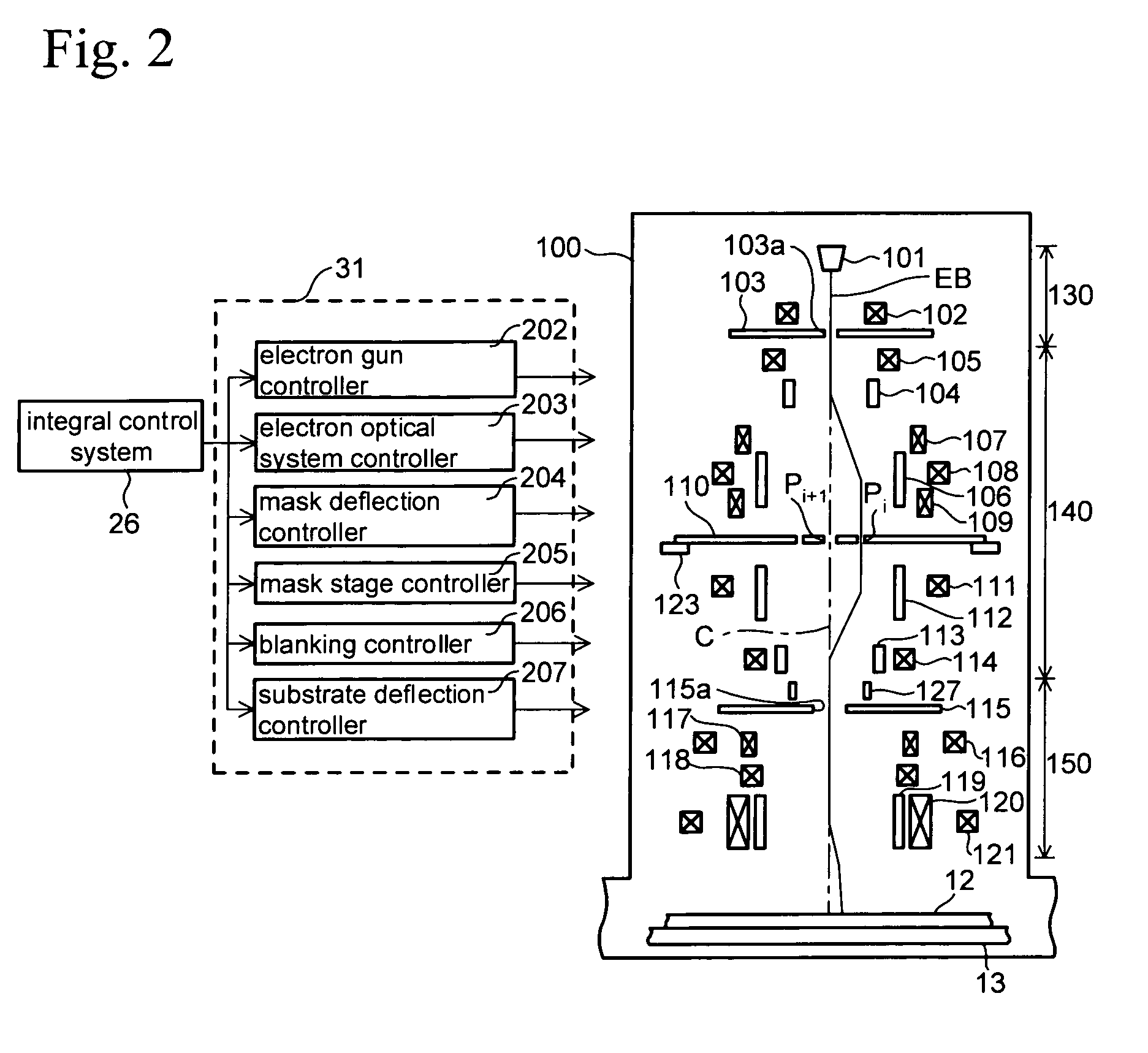

Projection electron beam lithography apparatus and method employing an estimator

Patent (Inactive): US7305333B2

Innovation

- An adaptive Kalman filter with multi-model adaptation combines predictive models with real-time measurements to correct wafer heating and beam drift errors, improving placement accuracy by reducing noise and uncertainty.
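As a rough illustration of the estimator idea, the sketch below runs a plain linear Kalman filter over noisy beam-offset measurements, using a constant-drift-rate model. It is not the patented method: the adaptive and multi-model parts are omitted, and the function name `kalman_drift_tracker`, the noise variances, and the simulated drift are all assumptions chosen for the example.

```python
import numpy as np

def kalman_drift_tracker(measurements, dt=1.0, process_var=1e-4, meas_var=4.0):
    """Estimate beam offset from noisy readings with a 2-state Kalman filter.

    State is [offset, drift_rate]; only the offset is measured.
    """
    F = np.array([[1.0, dt], [0.0, 1.0]])           # offset grows by drift_rate * dt
    H = np.array([[1.0, 0.0]])                      # measurement picks out the offset
    Q = process_var * np.array([[dt**3 / 3, dt**2 / 2],
                                [dt**2 / 2, dt]])   # process noise on the drift rate
    R = np.array([[meas_var]])                      # measurement noise variance
    x = np.zeros((2, 1))                            # initial state guess
    P = np.eye(2) * 100.0                           # large initial uncertainty
    estimates = []
    for z in measurements:
        # Predict: propagate the state and its covariance through the model.
        x = F @ x
        P = F @ P @ F.T + Q
        # Update: blend the prediction with the new measurement.
        y = np.array([[z]]) - H @ x                 # innovation
        S = H @ P @ H.T + R                         # innovation covariance
        K = P @ H.T @ np.linalg.inv(S)              # Kalman gain
        x = x + K @ y
        P = (np.eye(2) - K @ H) @ P
        estimates.append(x[0, 0])
    return np.array(estimates)

# Simulated data (assumed): linear drift of 0.05 nm per step, 2 nm measurement noise.
rng = np.random.default_rng(0)
t = np.arange(200)
true_drift = 0.05 * t
noisy = true_drift + rng.normal(0.0, 2.0, size=t.size)
est = kalman_drift_tracker(noisy)

def rms(a):
    return float(np.sqrt(np.mean(a ** 2)))

print("raw measurement RMS error:", rms(noisy[100:] - true_drift[100:]))
print("filtered RMS error:       ", rms(est[100:] - true_drift[100:]))
```

The filter output can feed a deflection correction between calibration marks. An adaptive variant would additionally tune `process_var` and `meas_var` online or switch between several candidate models.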

Electron beam lithography apparatus and electron beam lithography method

Patent (Active): US8384052B2

Innovation

- An electron beam lithography apparatus that incorporates a controller to calculate and apply both constant and fluctuating correction coefficients, based on measured environmental factors, to adjust exposure data and ensure accurate beam deflection and focus correction, thereby maintaining high accuracy continuously after initial calibration.

Data Security and IP Protection in AI-EBL Systems

The integration of artificial intelligence tools into electron beam lithography systems introduces significant data security and intellectual property protection challenges that require comprehensive strategic approaches. As AI-EBL systems process highly sensitive semiconductor manufacturing data, including proprietary design patterns, process parameters, and optimization algorithms, establishing robust security frameworks becomes paramount for maintaining competitive advantages and protecting valuable trade secrets.

Data encryption represents the foundational layer of security architecture in AI-EBL deployments. Advanced encryption protocols must be implemented across multiple touchpoints, including data transmission between AI processing units and lithography controllers, storage of training datasets containing proprietary patterns, and communication channels linking cloud-based AI services with on-premise manufacturing equipment. End-to-end encryption ensures that sensitive lithography parameters and design geometries remain protected throughout the entire AI optimization workflow.

Access control mechanisms require sophisticated implementation to balance operational efficiency with security requirements. Multi-factor authentication systems, role-based access privileges, and time-limited session management become essential components for controlling who can interact with AI-EBL optimization tools. These systems must accommodate various user categories, from process engineers requiring full parameter access to maintenance personnel needing limited diagnostic capabilities.
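A minimal sketch of how role-based privileges, a multi-factor check, and time-limited sessions might fit together is shown below. The role names, permission strings, and the 15-minute default lifetime are hypothetical. A real deployment would delegate authentication to an identity provider rather than trust a boolean flag.

```python
import time
from dataclasses import dataclass

# Hypothetical role-to-permission mapping for an AI-EBL optimization tool.
ROLE_PERMISSIONS = {
    "process_engineer": {"read_parameters", "write_parameters", "run_diagnostics"},
    "maintenance": {"run_diagnostics"},
}

@dataclass(frozen=True)
class Session:
    user: str
    role: str
    mfa_verified: bool
    expires_at: float  # seconds on the same clock as the `now` arguments

def open_session(user, role, mfa_verified, now, ttl_seconds=900.0):
    """Create a session that expires ttl_seconds after `now`."""
    if role not in ROLE_PERMISSIONS:
        raise ValueError(f"unknown role: {role}")
    return Session(user, role, mfa_verified, now + ttl_seconds)

def is_allowed(session, permission, now):
    """Allow an action only for MFA-verified, unexpired sessions with the right role."""
    if not session.mfa_verified:
        return False
    if now >= session.expires_at:
        return False
    return permission in ROLE_PERMISSIONS[session.role]

now = time.time()
engineer = open_session("alice", "process_engineer", mfa_verified=True, now=now)
technician = open_session("bob", "maintenance", mfa_verified=True, now=now)

print(is_allowed(engineer, "write_parameters", now))          # full parameter access
print(is_allowed(technician, "write_parameters", now))        # diagnostics only
print(is_allowed(engineer, "write_parameters", now + 3600))   # session has expired
```

Passing `now` explicitly keeps the expiry logic testable without waiting on the wall clock.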

Intellectual property protection extends beyond traditional data security to encompass AI model protection and algorithmic trade secrets. Proprietary machine learning models developed for specific lithography optimization tasks represent significant competitive assets that require specialized protection strategies. Techniques such as model watermarking, federated learning approaches, and secure multi-party computation enable organizations to leverage AI capabilities while maintaining control over their algorithmic innovations.
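Of the techniques named above, federated learning is the simplest to sketch: each site trains on its own lithography data and shares only model parameters, which a coordinator combines by a sample-weighted average (the federated averaging step). The function name and the two-site example are assumptions for illustration. Real deployments typically add secure aggregation so that individual site updates are not visible to the coordinator.

```python
import numpy as np

def federated_average(site_weights, site_sample_counts):
    """Combine per-site parameter vectors, weighted by each site's sample count."""
    counts = np.asarray(site_sample_counts, dtype=float)
    stacked = np.stack(site_weights)  # shape: (n_sites, n_params)
    return (counts[:, None] * stacked).sum(axis=0) / counts.sum()

# Two hypothetical fabs with locally trained parameter vectors.
fab_a = np.array([1.0, 2.0])   # trained on 100 exposures
fab_b = np.array([3.0, 6.0])   # trained on 300 exposures
global_model = federated_average([fab_a, fab_b], [100, 300])
print(global_model)
```

Raw exposure data and design patterns never leave the site. Only the parameter vectors are exchanged.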

Compliance frameworks must address both semiconductor industry standards and emerging AI governance requirements. Organizations deploying AI-EBL systems must navigate complex regulatory landscapes including export control regulations, data localization requirements, and industry-specific security standards. Regular security audits, vulnerability assessments, and compliance monitoring ensure that AI-EBL implementations maintain adherence to evolving regulatory expectations while supporting continuous process optimization objectives.

Implementation Strategies for AI-EBL Integration

The successful deployment of AI tools in electron beam lithography requires a systematic approach that addresses both technical integration challenges and operational workflow modifications. Organizations must establish a comprehensive framework that encompasses data infrastructure, algorithm deployment, and process validation protocols to ensure seamless AI-EBL integration.

Data pipeline establishment forms the foundation of effective AI deployment in EBL systems. Real-time data collection mechanisms must be implemented to capture critical process parameters including beam current stability, substrate temperature variations, resist exposure characteristics, and pattern fidelity metrics. These data streams require standardized formatting and preprocessing protocols to ensure compatibility with machine learning algorithms while maintaining the high-speed processing requirements of production environments.
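The standardized formatting step could look something like the following sketch, which converts one raw telemetry record into a fixed schema with consistent units and rejects malformed values before they reach a model. The raw field names (`ts`, `beam_current_pA`, `temp_K`, `dose_uC_cm2`, `cd_error_nm`) and the `ProcessSample` schema are hypothetical. Actual tool logs will use vendor-specific names and units.

```python
import math
from dataclasses import dataclass
from typing import Mapping, Optional

@dataclass(frozen=True)
class ProcessSample:
    timestamp_s: float
    beam_current_na: float
    substrate_temp_c: float
    dose_uc_per_cm2: float
    cd_error_nm: Optional[float]  # pattern fidelity metric; may arrive after metrology

def _finite(raw: Mapping[str, object], key: str) -> float:
    """Read a required numeric field; raises KeyError if missing, ValueError if not finite."""
    value = float(raw[key])
    if not math.isfinite(value):
        raise ValueError(f"{key} is not finite: {value!r}")
    return value

def normalize(raw: Mapping[str, object]) -> ProcessSample:
    """Convert a raw telemetry record to the standardized schema and units."""
    current_na = _finite(raw, "beam_current_pA") / 1000.0   # pA -> nA
    temp_c = _finite(raw, "temp_K") - 273.15                # K -> degrees C
    dose = _finite(raw, "dose_uC_cm2")
    if current_na <= 0 or dose <= 0:
        raise ValueError("beam current and dose must be positive")
    cd = raw.get("cd_error_nm")
    return ProcessSample(
        timestamp_s=_finite(raw, "ts"),
        beam_current_na=current_na,
        substrate_temp_c=temp_c,
        dose_uc_per_cm2=dose,
        cd_error_nm=None if cd is None else float(cd),
    )

sample = normalize(
    {"ts": 10, "beam_current_pA": 2000.0, "temp_K": 295.15, "dose_uC_cm2": 350.0}
)
print(sample)
```

Rejecting bad records at ingestion keeps unit mix-ups and sensor dropouts out of the training set, where they are much harder to trace.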

Algorithm deployment strategies should prioritize modular implementation approaches that allow for incremental integration without disrupting existing production workflows. Cloud-based AI platforms offer scalability advantages for complex pattern recognition and optimization tasks, while edge computing solutions provide low-latency responses for real-time process adjustments. Hybrid architectures combining both approaches enable organizations to balance computational power with response time requirements.

Process validation protocols must be established to verify AI-driven optimization recommendations before implementation. This includes developing benchmark testing procedures, establishing confidence thresholds for automated adjustments, and creating fallback mechanisms for situations where AI predictions fall outside acceptable parameters. Continuous monitoring systems should track performance improvements and identify potential drift in AI model accuracy over time.
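One way to express these checks in code is a small gate that every AI recommendation passes through before it reaches the tool, plus a simple accuracy-drift check. The parameter names, hard limits, confidence threshold, and step limit below are placeholder assumptions. Real values would come from the qualified process window.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Recommendation:
    parameter: str
    value: float
    confidence: float  # model-reported confidence in [0, 1]

# Hypothetical hard limits for each adjustable parameter.
LIMITS = {
    "dose_uc_per_cm2": (100.0, 1000.0),
    "beam_current_na": (0.1, 50.0),
}

def gate(rec, current_value, min_confidence=0.9, max_relative_step=0.05):
    """Return (value_to_apply, decision) for one AI recommendation."""
    lo, hi = LIMITS[rec.parameter]
    if not (lo <= rec.value <= hi):
        return current_value, "fallback:out_of_limits"    # keep the known-good setting
    if rec.confidence < min_confidence:
        return current_value, "escalate:low_confidence"   # hand over to an engineer
    if abs(rec.value - current_value) > max_relative_step * abs(current_value):
        return current_value, "escalate:step_too_large"
    return rec.value, "apply"

def accuracy_drifted(recent_abs_errors, baseline_abs_error, tolerance=1.5):
    """Flag drift when recent mean error exceeds the benchmark error by `tolerance` times."""
    mean_recent = sum(recent_abs_errors) / len(recent_abs_errors)
    return mean_recent > tolerance * baseline_abs_error

print(gate(Recommendation("dose_uc_per_cm2", 410.0, 0.95), current_value=400.0))
print(gate(Recommendation("dose_uc_per_cm2", 450.0, 0.95), current_value=400.0))
print(gate(Recommendation("dose_uc_per_cm2", 410.0, 0.60), current_value=400.0))
print(gate(Recommendation("dose_uc_per_cm2", 5000.0, 0.99), current_value=400.0))
```

Every rejected recommendation leaves the current setting untouched, so the fallback path is always the last validated recipe.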

Training and change management initiatives are essential for successful adoption. Technical staff require comprehensive education on AI system operation, interpretation of algorithmic outputs, and troubleshooting procedures. Clear protocols must define when human intervention is necessary and establish escalation procedures for complex optimization scenarios.

Integration with existing manufacturing execution systems ensures seamless data flow and maintains traceability requirements. API development and standardized communication protocols enable AI tools to interface effectively with legacy EBL equipment while preserving existing quality control frameworks and regulatory compliance measures.
