How to Implement Optical Burst Switching in Data Centers

MAR 2, 2026 · 9 MIN READ

OBS Technology Background and Data Center Goals

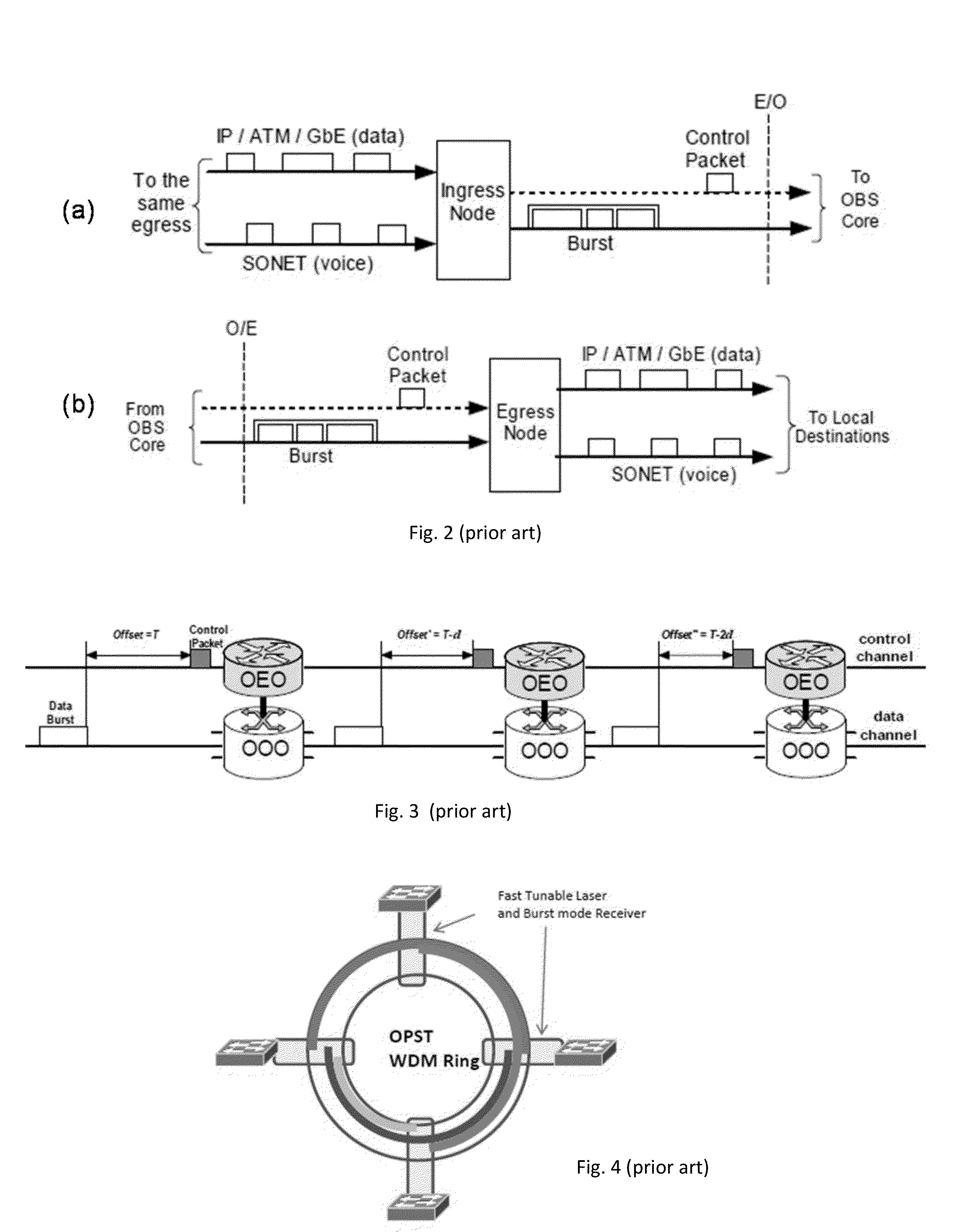

Optical Burst Switching (OBS) is a networking paradigm, first proposed in the late 1990s and studied intensively through the 2000s, that sits between optical circuit switching and optical packet switching. It was conceived to relieve the electronic processing bottleneck in high-speed optical networks, where converting between the optical and electronic domains at every hop adds latency and limits throughput.

The fundamental principle of OBS involves transmitting data in variable-length bursts through all-optical networks without requiring optical-to-electronic conversion at intermediate nodes. Unlike traditional packet switching, OBS separates control information from data payload, with control packets sent ahead of data bursts to reserve network resources along the transmission path. This approach enables efficient bandwidth utilization while maintaining the speed advantages of optical transmission.
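The "control packet ahead of the burst" idea can be made concrete with a small calculation. The sketch below assumes a just-enough-time style scheme, which the text does not name: the source delays each burst by an offset that covers control-packet processing at every hop plus one switch reconfiguration time. The function names and all numeric values are illustrative assumptions.

```python
def min_offset_us(hops: int, per_hop_processing_us: float, switch_setup_us: float) -> float:
    """Smallest offset (microseconds) that keeps the burst behind its control packet."""
    if hops < 1:
        raise ValueError("a path needs at least one hop")
    return hops * per_hop_processing_us + switch_setup_us


def remaining_offset_us(initial_offset_us: float, hops_done: int,
                        per_hop_processing_us: float) -> float:
    """Gap left between control packet and burst after `hops_done` nodes processed it."""
    return initial_offset_us - hops_done * per_hop_processing_us


# Assumed values: 3 hops, 2 us of control processing per hop, 1 us switch setup.
offset = min_offset_us(hops=3, per_hop_processing_us=2.0, switch_setup_us=1.0)
print(offset)                                # 7.0
print(remaining_offset_us(offset, 3, 2.0))   # 1.0
```

The gap shrinks at each hop because the control packet is processed electronically while the burst stays in the optical domain. With these numbers, the gap left after the last node has processed the control packet is exactly the switch setup time.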

The evolution of OBS technology has been driven by the exponential growth in data traffic and the increasing demand for low-latency, high-bandwidth communication systems. Early research focused on burst assembly algorithms, scheduling mechanisms, and contention resolution strategies. Subsequent developments addressed quality of service provisioning, traffic engineering, and integration with existing network infrastructures.

In the context of modern data centers, OBS technology presents compelling opportunities to address critical performance challenges. Data centers face unprecedented demands for east-west traffic handling, where massive volumes of data flow between servers within the same facility. Traditional electronic switching architectures struggle with power consumption, heat generation, and processing delays when managing these traffic patterns at scale.

The primary goals for implementing OBS in data centers are lower latency between compute nodes, lower power consumption than electronic switching, and the ability to scale with growing bandwidth requirements. OBS also aims to minimize buffering at switching nodes, reduce congestion through efficient burst scheduling, and offer performance predictable enough for high-performance computing applications.

Furthermore, OBS technology in data centers seeks to enable flexible resource allocation, support for heterogeneous traffic patterns, and improved fault tolerance through dynamic path selection capabilities. These objectives align with the broader industry trend toward software-defined networking and the need for more agile, efficient data center architectures capable of supporting emerging applications such as artificial intelligence, machine learning, and real-time analytics workloads.

Market Demand for High-Speed Data Center Networks

The exponential growth of cloud computing, artificial intelligence, and big data analytics has created unprecedented demands for high-speed data center networks. Modern data centers must handle massive volumes of traffic with ultra-low latency requirements, driving the need for advanced networking technologies that can efficiently manage bandwidth-intensive applications and real-time processing workloads.

Traditional electronic switching architectures face significant bottlenecks when dealing with the scale and speed requirements of contemporary data centers. The increasing deployment of bandwidth-hungry applications such as machine learning training, high-frequency trading, and real-time video processing has exposed the limitations of conventional packet-switched networks, particularly in terms of latency, power consumption, and scalability.

The market demand for optical burst switching solutions stems from the critical need to overcome these electronic switching limitations. Data center operators are actively seeking technologies that can provide transparent, high-bandwidth connectivity while reducing the complexity and power consumption associated with electronic packet processing. The ability to handle bursty traffic patterns efficiently has become a key differentiator in data center network design.

Enterprise customers are driving demand for networks capable of supporting emerging technologies such as edge computing, Internet of Things deployments, and distributed artificial intelligence workloads. These applications require networks that can dynamically allocate bandwidth resources and provide predictable performance characteristics across varying traffic patterns and geographical distributions.

The growing adoption of virtualization and containerization technologies has further intensified the need for flexible, high-performance networking solutions. Data centers must support rapid provisioning and scaling of virtual resources while maintaining consistent network performance and reliability standards across diverse application workloads.

Financial institutions, content delivery networks, and scientific computing organizations represent key market segments demanding ultra-low latency networking solutions. These sectors require networks capable of handling burst traffic patterns while maintaining strict performance guarantees and minimizing jitter in data transmission.

The market opportunity for optical burst switching technologies continues to expand as data center consolidation trends accelerate and organizations seek to maximize infrastructure efficiency while meeting increasingly stringent performance requirements for next-generation applications and services.

Current State and Challenges of OBS Implementation

Optical Burst Switching technology in data center environments currently exists primarily in research and experimental phases, with limited commercial deployment despite decades of development. The fundamental architecture involves edge nodes that aggregate packets into bursts, control channels for reservation signaling, and core optical switches that forward bursts without electronic processing. Current implementations face significant scalability challenges when adapting laboratory prototypes to the massive traffic volumes and stringent latency requirements of modern hyperscale data centers.

The most prominent technical challenge is burst contention resolution at optical switches. Electronic switches can queue packets in memory, but optical buffers remain prohibitively expensive and technically complex, which forces reliance on deflection routing, wavelength conversion, or burst dropping. These mechanisms introduce unpredictable latency variations that conflict with data center applications requiring consistent microsecond-level response times. Current contention resolution algorithms struggle to maintain acceptable performance under the bursty, correlated traffic patterns typical of data center workloads.
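The three fallbacks named above can be chained into a simple decision procedure. The following is a minimal sketch, not a description of any particular switch. It assumes each output fiber tracks a per-wavelength "busy until" horizon, with no void filling, and it tries the burst's own wavelength first, then wavelength conversion, then deflection to one alternate port, and finally drops the burst. `OutputPort`, `free_wavelength`, and `resolve` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class OutputPort:
    """One output fiber; busy_until[w] is when wavelength w next becomes idle."""
    busy_until: List[float]


def free_wavelength(port: OutputPort, preferred: int, start: float) -> Optional[int]:
    """Prefer the burst's own wavelength, else any idle one (needs a converter)."""
    candidates = sorted(range(len(port.busy_until)), key=lambda w: w != preferred)
    for w in candidates:
        if port.busy_until[w] <= start:
            return w
    return None


def resolve(primary: OutputPort, alternate: Optional[OutputPort],
            wavelength: int, start: float, duration: float) -> str:
    """Return 'forwarded', 'converted', 'deflected', or 'dropped'."""
    w = free_wavelength(primary, wavelength, start)
    if w is not None:
        primary.busy_until[w] = start + duration
        return "forwarded" if w == wavelength else "converted"
    if alternate is not None:
        w = free_wavelength(alternate, wavelength, start)
        if w is not None:
            alternate.busy_until[w] = start + duration
            return "deflected"
    return "dropped"


# Four overlapping bursts, all arriving on wavelength 0 and lasting 5 time units.
primary, alternate = OutputPort([0.0, 0.0]), OutputPort([0.0])
outcomes = [resolve(primary, alternate, 0, t, 5.0) for t in (10.0, 12.0, 13.0, 14.0)]
print(outcomes)  # ['forwarded', 'converted', 'deflected', 'dropped']
```

Each successive burst lands on a different outcome, which is the source of the latency variation described above: a deflected burst takes a longer path, and a dropped burst must be retransmitted by a higher layer.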

Synchronization represents another critical bottleneck in OBS implementation. Existing protocols require precise timing coordination between burst assembly at edge nodes and reservation setup at core switches. The distributed nature of data center architectures, combined with varying propagation delays across different optical paths, makes maintaining this synchronization increasingly difficult as network scale grows. Current timing mechanisms lack the robustness needed for reliable operation in dynamic data center environments.

Control plane overhead poses substantial limitations for practical deployment. Traditional OBS control protocols generate significant signaling traffic for burst reservation and teardown, consuming valuable bandwidth and processing resources. In data center contexts where millions of short-lived flows require rapid establishment and termination, this overhead becomes prohibitive. Existing control plane architectures have not adequately addressed the scalability requirements of contemporary data center traffic patterns.
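A back-of-envelope estimate shows how signaling load grows as bursts get shorter. The sketch assumes one control packet per burst (a one-way reservation with no explicit teardown) and uses illustrative values for link rate, utilization, and control packet size. None of these figures come from the text.

```python
def control_load_bps(data_rate_bps: float, utilization: float,
                     mean_burst_bytes: float, control_packet_bytes: float) -> float:
    """Control-channel bit rate needed when every burst costs one control packet."""
    bursts_per_second = data_rate_bps * utilization / (mean_burst_bytes * 8)
    return bursts_per_second * control_packet_bytes * 8


# Assumed: 100 Gb/s data channel at 50% load, 64-byte control packets.
for burst_bytes in (1_500, 15_000, 150_000):
    load = control_load_bps(100e9, 0.5, burst_bytes, 64)
    print(f"{burst_bytes:>7} B bursts -> {load / 1e6:,.1f} Mb/s of control traffic")
```

The control load is inversely proportional to the mean burst size, so a workload dominated by short flows pushes the assembler toward small bursts and a correspondingly heavier control channel.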

Integration challenges with existing data center infrastructure represent a major deployment barrier. Current OBS implementations require specialized optical hardware and control software that cannot easily interface with standard Ethernet-based data center networks. The lack of standardized protocols and interoperability frameworks limits adoption, as operators cannot incrementally deploy OBS technology without significant infrastructure overhaul. Additionally, network management and monitoring tools for OBS remain immature compared to established electronic switching platforms.

Performance predictability issues further constrain OBS viability in production data center environments. The probabilistic nature of burst contention and variable assembly delays make it difficult to provide the service level guarantees required for critical applications. Current implementations lack sophisticated traffic engineering capabilities needed to optimize performance across diverse workload requirements simultaneously.
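The probabilistic nature of contention can be quantified with a standard first-order model. Under the simplifying assumptions of Poisson burst arrivals, full wavelength conversion, and no buffering, an output fiber with W wavelengths behaves like a loss system and the burst blocking probability follows the Erlang B formula. Real data center traffic is burstier than Poisson, so this sketch should be read as an optimistic baseline rather than a prediction.

```python
def erlang_b(offered_load_erlangs: float, wavelengths: int) -> float:
    """Blocking probability for `wavelengths` servers, via the stable recurrence."""
    blocking = 1.0  # B(A, 0) = 1
    for k in range(1, wavelengths + 1):
        blocking = offered_load_erlangs * blocking / (k + offered_load_erlangs * blocking)
    return blocking


# Same 50% load per wavelength, increasing wavelength count (assumed values).
for w in (4, 16, 64):
    print(f"W={w:>2}  burst loss ~ {erlang_b(0.5 * w, w):.2e}")
```

At a fixed per-wavelength load, loss falls quickly as the number of wavelengths grows, which is why wavelength conversion and dense WDM are usually treated as prerequisites for acceptable OBS loss rates.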

Existing OBS Solutions for Data Center Networks

01 Burst assembly and scheduling mechanisms

Optical burst switching networks require efficient mechanisms for assembling data packets into bursts and scheduling their transmission. Various algorithms and methods have been developed to optimize burst assembly based on factors such as burst size, timeout values, and traffic characteristics. These mechanisms aim to improve network throughput and reduce delay by efficiently grouping packets and determining optimal transmission times.
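A common way to combine the burst-size and timeout factors is a hybrid assembler that releases a burst when either threshold is hit first. The class below is a minimal sketch for a single destination queue. `BurstAssembler`, its fields, and the threshold values are hypothetical, and time is passed in explicitly so the logic stays deterministic.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class BurstAssembler:
    max_bytes: int      # size threshold
    timeout_us: float   # maximum wait for the oldest queued packet
    _packets: List[bytes] = field(default_factory=list)
    _size: int = 0
    _first_arrival_us: Optional[float] = None

    def add(self, packet: bytes, now_us: float) -> Optional[List[bytes]]:
        """Queue a packet; return a finished burst if the size threshold is reached."""
        if self._first_arrival_us is None:
            self._first_arrival_us = now_us
        self._packets.append(packet)
        self._size += len(packet)
        return self._flush() if self._size >= self.max_bytes else None

    def poll(self, now_us: float) -> Optional[List[bytes]]:
        """Return a burst if the oldest queued packet has waited past the timeout."""
        if self._first_arrival_us is None:
            return None
        if now_us - self._first_arrival_us >= self.timeout_us:
            return self._flush()
        return None

    def _flush(self) -> List[bytes]:
        burst, self._packets = self._packets, []
        self._size = 0
        self._first_arrival_us = None
        return burst


asm = BurstAssembler(max_bytes=3000, timeout_us=50.0)
asm.add(b"x" * 1500, now_us=0.0)
print(len(asm.add(b"x" * 1500, now_us=10.0)))  # 2  (size threshold hit)
asm.add(b"x" * 500, now_us=20.0)
print(asm.poll(now_us=60.0))                   # None (only 40 us elapsed)
print(len(asm.poll(now_us=70.0)))              # 1  (timeout hit)
```

The size threshold bounds burst length under heavy load, while the timeout bounds assembly delay under light load. Tuning the two against each other is the trade-off between throughput and latency that the paragraph above describes.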

02 Contention resolution and resource allocation

When multiple bursts compete for the same resources in optical burst switching networks, contention resolution techniques are essential. Methods include wavelength conversion, fiber delay lines, burst segmentation, and deflection routing. These approaches help minimize burst loss and improve network performance by managing conflicts when bursts arrive simultaneously at network nodes. Resource allocation strategies ensure efficient utilization of available bandwidth and wavelengths.

03 Control packet processing and signaling protocols

Optical burst switching relies on control packets sent ahead of data bursts to reserve resources along the transmission path. Various signaling protocols and control packet processing methods have been developed to establish burst transmission paths, configure optical switches, and manage network resources. These protocols handle offset time management, resource reservation, acknowledgment, and coordination between network nodes to ensure successful burst delivery.

04 Quality of service and traffic differentiation

Implementing quality of service mechanisms in optical burst switching networks enables differentiated treatment of traffic with varying priority levels and service requirements. Techniques include priority-based scheduling, offset time differentiation, selective burst dropping, and resource reservation strategies that ensure high-priority bursts receive preferential treatment. These methods support diverse application requirements and improve overall network performance for critical traffic.

05 Network architecture and node design

The physical architecture and design of optical burst switching nodes significantly impact network performance. Key components include optical cross-connects, wavelength routers, and control units. Various node architectures have been proposed, including different switch fabric designs, wavelength converter configurations, and buffer management systems. These designs address challenges such as switching speed, scalability, and cost-effectiveness while supporting the unique requirements of burst-switched optical networks.

Key Players in OBS and Data Center Industry

Optical burst switching (OBS) technology for data centers is at an early stage of development, with growth potential driven by increasing demand for high-bandwidth, low-latency networking. The market remains nascent but is expanding as cloud computing and big data applications intensify bandwidth requirements. Technology maturity varies considerably across key players. Established technology and telecommunications vendors such as Huawei Technologies, Samsung Electronics, Intel Corp., and ZTE Corp. lead commercial development efforts, while Nokia Solutions & Networks and NEC Corp. contribute substantial infrastructure expertise. Academic institutions including Beijing University of Posts & Telecommunications, Shanghai Jiao Tong University, and Korea Advanced Institute of Science & Technology are advancing fundamental research and prototype development. The result is a hybrid ecosystem in which commercial entities focus on practical implementation while research institutions drive theoretical work and proof-of-concept demonstrations.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed comprehensive optical burst switching solutions for data center networks, focusing on high-speed optical packet switching architectures. Their approach integrates wavelength division multiplexing (WDM) with burst switching protocols to achieve microsecond-level switching latency. The company's OBS implementation features advanced buffer management algorithms and contention resolution mechanisms using fiber delay lines and wavelength conversion. Their solution supports burst assembly algorithms that optimize traffic aggregation from multiple input ports, enabling efficient bandwidth utilization in large-scale data center environments. Huawei's OBS technology incorporates machine learning-based traffic prediction to minimize burst collisions and improve overall network throughput performance.

Strengths: Strong integration capabilities with existing data center infrastructure, advanced traffic prediction algorithms, comprehensive end-to-end solutions. Weaknesses: High implementation complexity, significant capital investment requirements for full deployment.

Alcatel-Lucent S.A.

Technical Solution: Alcatel-Lucent has pioneered optical burst switching technologies with focus on contention resolution and burst scheduling algorithms for data center networks. Their OBS solution implements sophisticated wavelength assignment strategies and utilizes optical buffering through fiber delay lines to handle traffic bursts efficiently. The company's approach includes adaptive burst assembly mechanisms that dynamically adjust burst sizes based on network conditions and traffic patterns. Their implementation features advanced signaling protocols for burst reservation and supports both one-way and two-way reservation schemes. Alcatel-Lucent's OBS architecture incorporates deflection routing capabilities to handle network congestion and provides quality-of-service differentiation for various data center applications requiring different latency and bandwidth guarantees.

Strengths: Extensive optical networking experience, proven contention resolution algorithms, strong research and development capabilities. Weaknesses: Legacy system integration challenges, higher maintenance complexity compared to traditional switching solutions.

Core Innovations in Optical Burst Switching

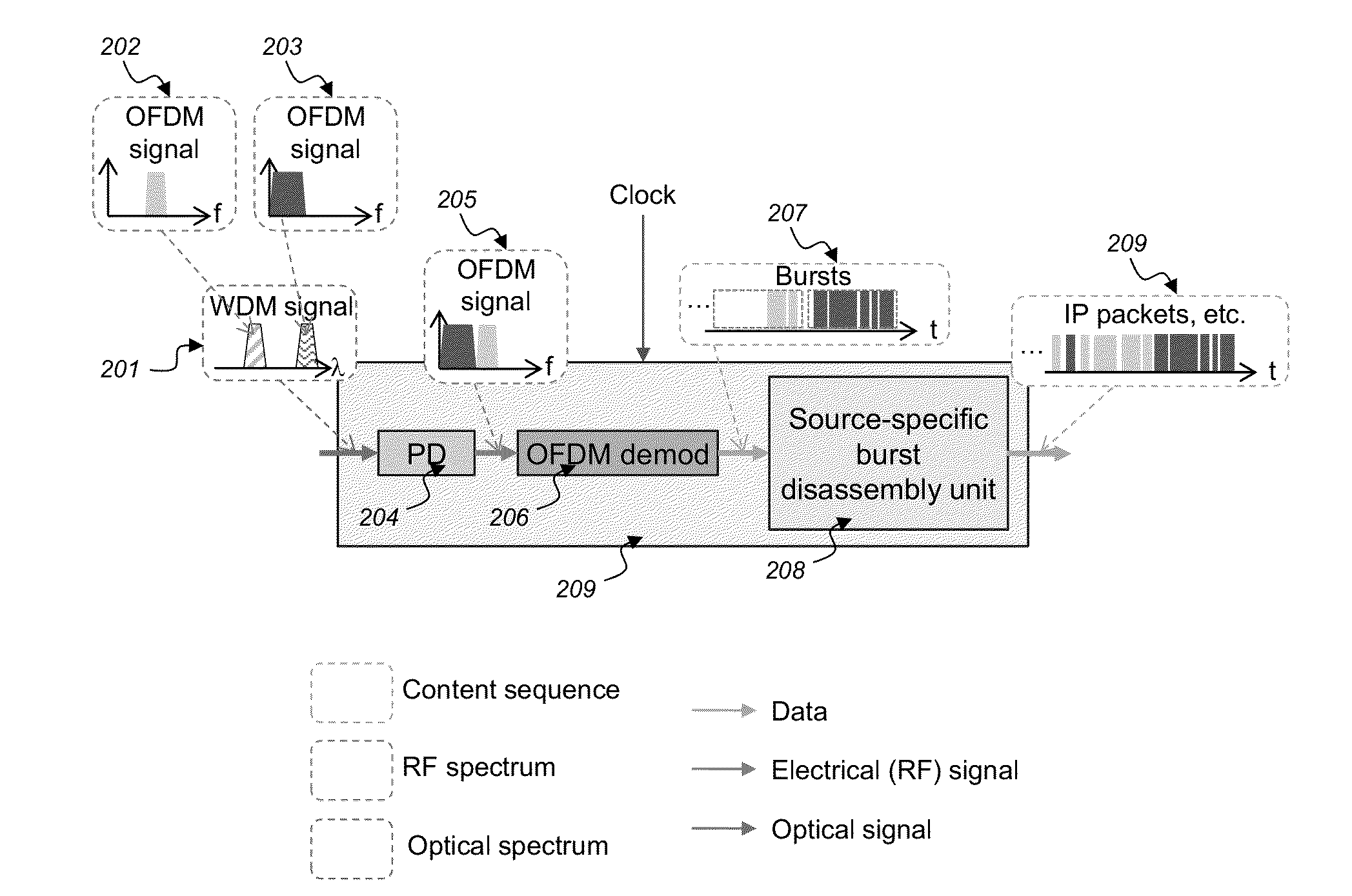

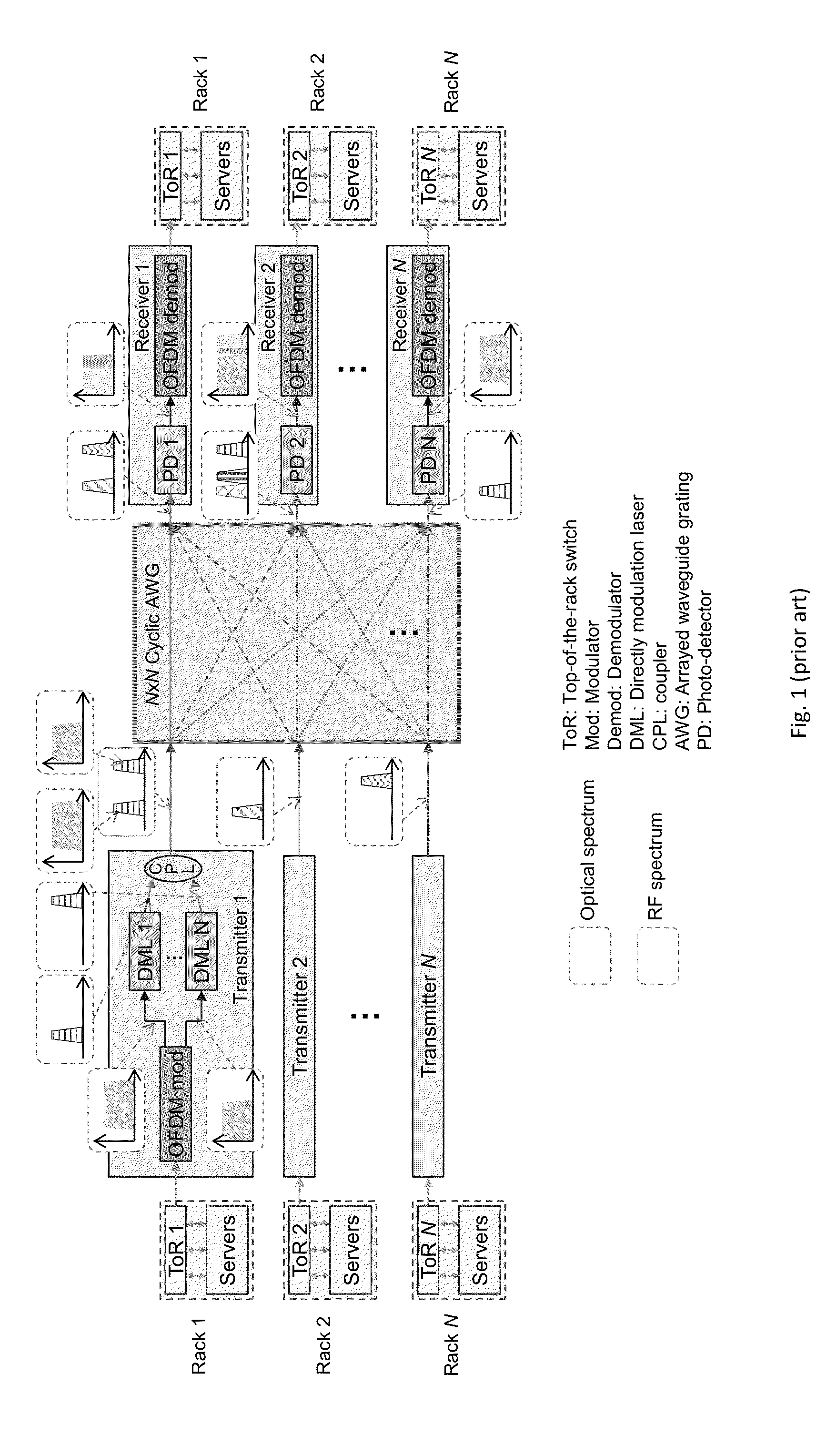

Switching for a MIMO-OFDM Based Flexible Rate Intra-Data Center Network

Patent · Active · US20140212135A1

Innovation

- A MIMO-OFDM based flexible rate intra-data center network with optical burst switching (OBS) capability and a centralized control configuration. It enables efficient sub-wavelength switching and software-defined networking (SDN) functionality, and allows concurrent burst assembly and wavelength-division-multiplexed (WDM) OFDM signal generation without requiring hardware modifications.

System and method for photonic switching

Patent · WO2016184345A1

Innovation

- Multi-packet container aggregation mechanism that batches packets from source TOR to destination TOR, enabling efficient optical burst transmission across photonic switches in data center environments.

- Integration of photonic switching technology to overcome electrical packet switch capacity limitations in high-throughput data center environments (5-10 terabytes per second).

- Transport container optimization for handling fragmented traffic scenarios where traditional burst mode switches experience slow container filling in TOR-to-TOR communications.

Energy Efficiency Standards for Data Centers

The implementation of Optical Burst Switching (OBS) in data centers presents significant opportunities for improving energy efficiency, necessitating the establishment of comprehensive energy efficiency standards. Current data center energy consumption patterns reveal that traditional electronic switching architectures consume substantial power through continuous packet processing and buffering mechanisms. OBS technology offers a paradigm shift by reducing electronic processing overhead and enabling more efficient optical path utilization.

Energy efficiency standards for OBS-enabled data centers must address multiple operational parameters. Power consumption metrics should encompass both static and dynamic energy usage, including optical transmitter efficiency, electronic control plane overhead, and burst assembly processing requirements. Standards should define baseline energy consumption thresholds for different traffic loads and establish measurement methodologies for optical switching equipment.

Thermal management standards become particularly critical in OBS implementations due to the heat generation characteristics of high-power optical components. Temperature regulation requirements must account for wavelength stability in optical burst transmissions and the thermal sensitivity of photonic switching elements. Cooling efficiency metrics should incorporate the unique thermal profiles of hybrid optical-electronic architectures.

Performance-to-power ratio standards should establish benchmarks for OBS systems, measuring throughput capacity against energy consumption. These standards must consider burst assembly delays, optical switching reconfiguration times, and the energy cost of maintaining optical paths. Comparative metrics should evaluate OBS efficiency against traditional electronic switching under various traffic patterns.
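One plausible form for such a benchmark is energy per delivered bit, with throughput discounted by the time lost to switch reconfiguration and by burst loss. The sketch below is an assumption about how the metric could be defined, not an existing standard, and the example figures are made up for illustration.

```python
def goodput_bps(line_rate_bps: float, burst_duration_us: float,
                guard_us: float, loss_probability: float) -> float:
    """Delivered bit rate after reconfiguration guard time and burst loss."""
    duty_cycle = burst_duration_us / (burst_duration_us + guard_us)
    return line_rate_bps * duty_cycle * (1.0 - loss_probability)


def energy_per_bit_pj(power_watts: float, throughput_bps: float) -> float:
    """Picojoules spent per delivered bit."""
    if throughput_bps <= 0:
        raise ValueError("throughput must be positive")
    return power_watts / throughput_bps * 1e12


# Assumed: 100 Gb/s port, 9 us bursts, 1 us reconfiguration gap, 1% burst loss, 10 W.
rate = goodput_bps(100e9, burst_duration_us=9.0, guard_us=1.0, loss_probability=0.01)
print(f"{energy_per_bit_pj(10.0, rate):.1f} pJ/bit")
```

Measuring against goodput rather than line rate keeps the comparison with electronic switching fair, since a slow optical switch or a high loss rate shows up directly as a worse energy figure.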

Sustainability standards should address the lifecycle energy impact of OBS infrastructure, including manufacturing energy costs of photonic components and end-of-life recycling considerations. Standards must also define energy reporting requirements for OBS deployments, ensuring transparent measurement of power usage effectiveness and enabling accurate assessment of environmental impact across different implementation scales.

Network Architecture Optimization Strategies

Implementing Optical Burst Switching in data centers requires comprehensive network architecture optimization strategies that address both physical infrastructure and logical network design considerations. The optimization approach must balance performance requirements with cost-effectiveness while ensuring scalability for future growth demands.

The foundational strategy involves establishing a hierarchical network topology that leverages OBS capabilities at different network layers. Core switching infrastructure should be designed with high-capacity optical switches capable of handling burst traffic patterns, while edge nodes require sophisticated buffering mechanisms to aggregate electronic packets into optical bursts. This tiered approach enables efficient traffic flow management and reduces the complexity of burst scheduling algorithms.

Traffic engineering represents a critical optimization dimension, requiring dynamic bandwidth allocation mechanisms that can adapt to varying workload patterns. The architecture must incorporate intelligent burst assembly algorithms that optimize burst size based on real-time traffic characteristics and destination requirements. Load balancing strategies should distribute bursts across multiple optical paths to prevent congestion and maximize network utilization efficiency.
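The load-balancing idea can be illustrated with a scheduler that sends each burst on whichever path frees up first. This is a minimal sketch under simplifying assumptions: one channel per path, equal path lengths, and the edge node holding a burst electronically until its start time. `pick_path` is a hypothetical helper, not part of any OBS stack.

```python
from typing import List, Tuple


def pick_path(path_free_at: List[float], now: float, duration: float) -> Tuple[int, float]:
    """Reserve the earliest-available path; return (path index, burst start time)."""
    index = min(range(len(path_free_at)), key=lambda i: path_free_at[i])
    start = max(now, path_free_at[index])
    path_free_at[index] = start + duration  # record the reservation
    return index, start


paths = [0.0, 0.0, 0.0]  # three parallel optical paths, all idle
for _ in range(4):
    print(pick_path(paths, now=0.0, duration=10.0))
# (0, 0.0), (1, 0.0), (2, 0.0), then (0, 10.0) once every path is busy
```

The first three bursts spread across the three paths and start immediately. The fourth has to wait, and that waiting time is the quantity a traffic engineering policy would try to keep small by adjusting burst sizes or adding paths.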

Network resilience optimization involves implementing redundant optical paths and fast failover mechanisms to maintain service continuity during component failures. The architecture should include distributed control plane designs that eliminate single points of failure while maintaining low-latency burst reservation protocols. Protection switching capabilities must be integrated at both the optical and electronic layers to ensure comprehensive fault tolerance.

Quality of Service optimization requires sophisticated traffic classification and prioritization mechanisms that can differentiate between various application requirements. The network architecture should support multiple service classes with distinct burst handling policies, enabling guaranteed bandwidth allocation for critical applications while maintaining best-effort service for background traffic.
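Distinct burst handling policies per class can be expressed as a small table. The sketch below combines two techniques from the OBS literature: offset time differentiation, where higher classes get extra offset so their reservations are made earlier, and selective dropping, where the lowest-priority contender loses a collision. The class names, priorities, and offsets are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ServiceClass:
    name: str
    priority: int           # larger means more important
    extra_offset_us: float  # added on top of the base offset


CLASSES: Dict[str, ServiceClass] = {
    "critical": ServiceClass("critical", priority=2, extra_offset_us=20.0),
    "standard": ServiceClass("standard", priority=1, extra_offset_us=5.0),
    "background": ServiceClass("background", priority=0, extra_offset_us=0.0),
}


def burst_offset_us(base_offset_us: float, cls: ServiceClass) -> float:
    """Offset time differentiation: important bursts reserve further in advance."""
    return base_offset_us + cls.extra_offset_us


def choose_victim(contending: List[ServiceClass]) -> ServiceClass:
    """Selective dropping: the lowest-priority contender loses the collision."""
    return min(contending, key=lambda c: c.priority)


print(burst_offset_us(7.0, CLASSES["critical"]))                        # 27.0
print(choose_victim([CLASSES["critical"], CLASSES["background"]]).name)  # background
```

The extra offset buys lower loss for critical traffic at the cost of added delay before transmission, so the offsets need to stay small relative to the latency budget of the applications they protect.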

Scalability optimization strategies focus on modular network designs that can accommodate incremental capacity expansion without requiring complete infrastructure overhauls. The architecture should support seamless integration of additional optical switching nodes and wavelength channels while maintaining backward compatibility with existing network elements and protocols.
