How to Maximize Data Flow in Digital Platforms

FEB 24, 2026 · 9 MIN READ

Digital Platform Data Flow Optimization Background and Goals

Digital platforms have fundamentally transformed how organizations collect, process, and leverage data assets in the modern economy. The exponential growth of digital interactions, IoT devices, and cloud-based services has created unprecedented volumes of data flowing through enterprise systems. This technological evolution has shifted the competitive landscape, where organizations that can effectively harness and optimize their data flows gain significant advantages in decision-making, customer experience, and operational efficiency.

The historical development of digital platforms reveals a clear trajectory from simple data storage systems to complex, interconnected ecosystems capable of real-time data processing and analytics. Early platforms focused primarily on data collection and basic reporting, but contemporary systems demand sophisticated architectures that can handle multi-directional data streams, ensure low-latency processing, and maintain data integrity across distributed networks.

Current market dynamics indicate that data flow optimization has become a critical differentiator for digital platform success. Organizations are increasingly recognizing that the velocity and quality of data movement directly impact their ability to respond to market changes, personalize user experiences, and drive innovation. The emergence of edge computing, 5G networks, and advanced analytics has further amplified the importance of optimizing data pathways within digital ecosystems.

The primary technical objectives for maximizing data flow in digital platforms encompass several key dimensions. Performance optimization aims to minimize latency and maximize throughput across all data processing stages, from ingestion to analysis and output. Scalability targets ensure that platforms can accommodate growing data volumes and user demands without degrading performance or requiring complete architectural overhauls.

Reliability and consistency objectives focus on maintaining data integrity and ensuring continuous availability of data services, even during peak usage periods or system failures. Additionally, cost efficiency goals seek to optimize resource utilization and reduce operational expenses while maintaining or improving data flow performance.

The strategic importance of addressing data flow optimization extends beyond immediate technical benefits. Organizations that successfully implement optimized data flows can achieve faster time-to-market for new products, improve customer satisfaction through real-time personalization, and enhance operational agility. These capabilities are becoming essential for maintaining competitive positioning in increasingly data-driven markets, making data flow optimization a critical component of long-term digital transformation strategies.

Market Demand for High-Performance Data Processing Platforms

The global demand for high-performance data processing platforms has experienced unprecedented growth driven by the exponential increase in data generation across industries. Organizations worldwide are grappling with massive volumes of structured and unstructured data that require real-time processing capabilities to extract actionable insights and maintain competitive advantages.

Enterprise sectors including financial services, telecommunications, healthcare, and e-commerce represent the primary demand drivers for advanced data processing solutions. Financial institutions require ultra-low-latency processing for algorithmic trading and fraud detection systems, while telecommunications companies need robust platforms to handle network traffic analytics and customer behavior analysis in real time.

The proliferation of Internet of Things devices and edge computing applications has created new market segments demanding distributed data processing capabilities. Manufacturing industries increasingly rely on real-time data analytics for predictive maintenance and quality control, generating substantial demand for platforms capable of processing streaming data from thousands of sensors simultaneously.

Cloud service providers have emerged as significant consumers of high-performance data processing technologies, seeking to offer enhanced analytics services to their enterprise clients. The shift toward hybrid and multi-cloud architectures has intensified the need for platforms that can seamlessly integrate and process data across diverse infrastructure environments.

Market dynamics reveal a growing preference for platforms offering horizontal scalability and elastic resource allocation. Organizations demand solutions that can dynamically adjust processing capacity based on workload fluctuations while maintaining consistent performance levels. This requirement has driven innovation in distributed computing architectures and containerized processing frameworks.

The regulatory landscape, particularly data privacy regulations and compliance requirements, has influenced market demand patterns. Organizations seek platforms that incorporate built-in security features and audit capabilities while maintaining high throughput rates. This dual requirement for performance and compliance has created opportunities for specialized solutions targeting regulated industries.

Emerging technologies such as artificial intelligence and machine learning workloads have introduced new performance requirements, driving demand for platforms optimized for parallel processing and GPU acceleration. The integration of real-time analytics with predictive modeling capabilities represents a key market trend shaping platform development priorities.

Current State and Bottlenecks in Digital Data Flow Systems

Digital platforms today face unprecedented challenges in managing massive data volumes while maintaining optimal performance. Current data flow systems operate across complex multi-layered architectures that include data ingestion layers, processing engines, storage systems, and delivery networks. These systems must handle diverse data types ranging from structured transactional data to unstructured multimedia content, creating inherent complexity in flow optimization.

The primary bottleneck in contemporary digital data flow systems stems from network bandwidth limitations and latency constraints. Despite advances in fiber optic technology and 5G networks, the physical limitations of data transmission continue to create significant delays, particularly in geographically distributed systems. Edge computing has emerged as a partial solution, but implementation remains inconsistent across different platform architectures.
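
A rough back-of-the-envelope estimate shows why distance alone sets a floor on latency. The sketch below assumes that light travels through optical fiber at about two thirds of its vacuum speed (a common rule of thumb) and ignores routing, queuing, and processing delays. The function name and the example distance are illustrative and do not come from any specific platform.

```python
# Rough lower bound on network round-trip time imposed by distance alone.
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum
FIBER_VELOCITY_FACTOR = 2 / 3       # assumed rule of thumb for optical fiber


def min_round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip time in milliseconds over a fiber path."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_S * FIBER_VELOCITY_FACTOR)
    return 2 * one_way_s * 1000


# Hypothetical 6,000 km path between two regions: roughly 60 ms before any
# switching or processing time is added.
rtt = min_round_trip_ms(6_000)
print(f"{rtt:.1f} ms")
```

No amount of added bandwidth lowers this figure, which is one reason processing closer to the data source is attractive for latency-sensitive workloads.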

Storage system inefficiencies represent another critical constraint affecting data flow maximization. Traditional database architectures struggle with concurrent read-write operations at scale, leading to queue formations and processing delays. While distributed storage solutions like Apache Cassandra and MongoDB have addressed some scalability issues, they introduce new challenges related to data consistency and synchronization across nodes.

Processing bottlenecks occur frequently at the computational layer, where CPU and memory limitations restrict the speed of data transformation and analysis operations. Modern platforms often experience resource contention when multiple data-intensive processes compete for the same computational resources. This is particularly evident in real-time analytics scenarios where streaming data requires immediate processing while batch operations continue in parallel.

Data serialization and deserialization processes create additional friction points in the flow pipeline. The overhead associated with converting data between different formats for transmission and storage can consume significant computational resources, especially when dealing with complex data structures or large binary objects.
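
The size side of this overhead can be illustrated with a minimal sketch that encodes the same record as self-describing text and as a fixed binary layout. The record fields and the layout are hypothetical. Real platforms typically use schema-based formats rather than hand-written `struct` layouts, so this is only meant to show where the bytes go.

```python
import json
import struct

# Hypothetical sensor reading used only for illustration.
reading = {"sensor_id": 42, "timestamp": 1_700_000_000, "value": 21.5}

# Text encoding: self-describing, but field names repeat in every message.
as_json = json.dumps(reading).encode("utf-8")

# Fixed binary layout: unsigned 32-bit id, unsigned 64-bit timestamp,
# 64-bit float. "<" selects little-endian with no padding, so 4 + 8 + 8 bytes.
RECORD = struct.Struct("<IQd")
as_binary = RECORD.pack(reading["sensor_id"], reading["timestamp"], reading["value"])

print(len(as_json), len(as_binary))

# Round trip to confirm nothing is lost in the binary form.
sensor_id, timestamp, value = RECORD.unpack(as_binary)
```

The binary record is 20 bytes, while the JSON form is noticeably larger because it carries the field names. The trade-off is that the binary layout requires both sides to agree on the schema in advance.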

Current monitoring and optimization tools provide limited visibility into end-to-end data flow performance. Most platforms rely on fragmented monitoring solutions that track individual components rather than providing holistic flow analysis. This limitation makes it difficult to identify cascade effects where bottlenecks in one system component create performance degradation throughout the entire data pipeline.
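
One way to move from component-level to flow-level visibility is to time every stage of a pipeline under a shared set of names, so the slowest stage can be identified directly. The following is a minimal sketch under that assumption. The three stages and their bodies are hypothetical stand-ins, and a production system would export these timings to a tracing or metrics backend rather than keep them in memory.

```python
import time
from collections import defaultdict
from contextlib import contextmanager

# Accumulated wall-clock seconds per pipeline stage.
stage_seconds = defaultdict(float)


@contextmanager
def timed(stage: str):
    """Record how long the enclosed block takes under the given stage name."""
    start = time.perf_counter()
    try:
        yield
    finally:
        stage_seconds[stage] += time.perf_counter() - start


def run_pipeline(records):
    # Hypothetical three-stage pipeline; each body is a placeholder.
    with timed("ingest"):
        parsed = [int(r) for r in records]
    with timed("transform"):
        squared = [x * x for x in parsed]
    with timed("deliver"):
        total = sum(squared)
    return total


result = run_pipeline(["1", "2", "3"])
slowest = max(stage_seconds, key=stage_seconds.get)
print(result, slowest)
```

Because all stages report into one structure, a slowdown that starts in one stage and propagates downstream shows up as a shift in the relative timings rather than as unrelated alerts.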

Security and compliance requirements further constrain data flow optimization efforts. Encryption, authentication, and audit logging processes, while essential for data protection, introduce additional processing overhead and latency that must be balanced against performance objectives in modern digital platform architectures.

Existing Solutions for Data Flow Maximization

01 Data flow management and routing in digital platforms

Digital platforms require sophisticated mechanisms to manage and route data flows between different components, services, and users. This involves implementing routing protocols, load balancing techniques, and traffic management systems to ensure efficient data transmission. The systems can dynamically adjust data paths based on network conditions, user requirements, and platform resources to optimize performance and reliability.

- Data flow management and routing in digital platforms: Digital platforms require sophisticated mechanisms to manage and route data flows between different components, services, and users. This involves implementing routing protocols, load balancing techniques, and traffic management systems to ensure efficient data transmission. The systems can dynamically adjust data paths based on network conditions, user demands, and platform requirements to optimize performance and reduce latency.

- Data flow security and access control mechanisms: Protecting data flows within digital platforms requires implementing comprehensive security measures including encryption, authentication, and authorization protocols. These mechanisms ensure that data transmitted across the platform is protected from unauthorized access and tampering. Access control systems can define permissions and policies to regulate which users or services can access specific data streams, maintaining data integrity and confidentiality throughout the flow process.

- Real-time data streaming and processing architectures: Modern digital platforms employ real-time data streaming architectures to handle continuous data flows from multiple sources. These systems utilize stream processing engines and event-driven architectures to process data as it arrives, enabling immediate insights and actions. The architectures support high-throughput data ingestion, transformation, and distribution while maintaining low latency and ensuring data consistency across the platform.

- Data flow monitoring and analytics systems: Comprehensive monitoring and analytics capabilities are essential for understanding and optimizing data flows in digital platforms. These systems track data movement patterns, identify bottlenecks, and provide visibility into data flow performance metrics. Analytics tools can detect anomalies, predict potential issues, and generate insights that help platform operators make informed decisions about infrastructure scaling and optimization.

- Cross-platform data integration and interoperability: Digital platforms often need to integrate data flows from heterogeneous sources and ensure interoperability with external systems. This involves implementing standardized data formats, APIs, and protocols that facilitate seamless data exchange. Integration frameworks enable platforms to aggregate data from various sources, transform it into compatible formats, and distribute it to different destinations while maintaining data quality and consistency throughout the integration process.
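
The dynamic routing described for data flow management can be sketched as a least-loaded selection among healthy backends. This is one common load balancing policy, chosen here for illustration. The `Backend` type, its fields, and the pool contents are assumptions, and the text above does not prescribe a particular algorithm.

```python
from dataclasses import dataclass


@dataclass
class Backend:
    name: str
    in_flight: int = 0     # requests currently being handled
    healthy: bool = True   # would be set by a health check in practice


def pick_backend(backends: list[Backend]) -> Backend:
    """Route to the healthy backend with the fewest in-flight requests."""
    candidates = [b for b in backends if b.healthy]
    if not candidates:
        raise RuntimeError("no healthy backend available")
    chosen = min(candidates, key=lambda b: b.in_flight)
    chosen.in_flight += 1   # the caller decrements when the request completes
    return chosen


# Hypothetical pool: "c" is idle but unhealthy, so it is never selected.
pool = [Backend("a", in_flight=3), Backend("b", in_flight=1), Backend("c", healthy=False)]
first = pick_backend(pool).name   # "b"
```

Compared with round-robin, this policy adapts to uneven request costs, at the price of tracking per-backend state.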

02 Data flow security and access control mechanisms

Protecting data flows within digital platforms requires implementing comprehensive security measures including encryption, authentication, and authorization protocols. These mechanisms ensure that data transmitted across the platform is protected from unauthorized access and tampering. Access control systems can define permissions and policies to regulate which users or services can access specific data streams, maintaining data integrity and confidentiality throughout the flow process.

03 Real-time data flow monitoring and analytics

Digital platforms implement monitoring systems to track data flows in real time, collecting metrics on throughput, latency, and error rates. Analytics capabilities process this monitoring data to identify patterns, detect anomalies, and generate insights about platform performance. These systems enable operators to visualize data flow patterns, troubleshoot issues, and make informed decisions about resource allocation and optimization strategies.

04 Data flow orchestration and workflow automation

Orchestration systems coordinate complex data flows across multiple platform components by defining workflows and automating data processing pipelines. These systems manage dependencies between different data processing stages, handle error recovery, and ensure data consistency. Workflow automation reduces manual intervention, improves efficiency, and enables scalable processing of large data volumes across distributed platform architectures.

05 Cross-platform data integration and interoperability

Digital platforms need to support data flows between heterogeneous systems and external platforms through standardized interfaces and protocols. Integration mechanisms enable data exchange across different platforms while maintaining data format compatibility and semantic consistency. These solutions facilitate seamless data sharing, support multi-platform ecosystems, and enable platforms to leverage external data sources and services to enhance functionality.
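
Format compatibility across heterogeneous sources is often handled with small adapters that map each source into one shared record shape. The sketch below assumes two hypothetical sources, one using camelCase keys with epoch timestamps and one using snake_case keys with ISO 8601 strings. The source names and fields are invented for illustration.

```python
from datetime import datetime, timezone


def from_crm(row: dict) -> dict:
    """Adapter for hypothetical source A: camelCase keys, epoch seconds."""
    return {
        "customer_id": str(row["customerId"]),
        "seen_at": datetime.fromtimestamp(row["lastSeen"], tz=timezone.utc),
    }


def from_web(row: dict) -> dict:
    """Adapter for hypothetical source B: snake_case keys, ISO 8601 strings."""
    return {
        "customer_id": str(row["customer_id"]),
        "seen_at": datetime.fromisoformat(row["seen_at"]),
    }


# Both inputs describe the same moment and normalize to the same record.
a = from_crm({"customerId": 7, "lastSeen": 0})
b = from_web({"customer_id": "7", "seen_at": "1970-01-01T00:00:00+00:00"})
```

Keeping the adapters separate from downstream logic means a new source only requires one new function, not changes throughout the pipeline.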

Key Players in Digital Platform and Data Processing Industry

The digital platform data flow optimization landscape is in a mature growth phase, driven by exponential data generation and cloud adoption demands. The market spans multiple billion-dollar segments including cloud storage, data analytics, and enterprise software solutions. Technology maturity varies significantly across players, with established leaders like Microsoft Technology Licensing LLC, Oracle International Corp., and Snowflake Inc. offering sophisticated cloud-native architectures and real-time processing capabilities. Pure Storage Inc. and CTERA Networks Ltd. focus on storage optimization, while Splunk LLC specializes in operational intelligence. Telecommunications giants China Mobile Communications Group and Orange SA provide infrastructure backbone solutions. Emerging players like Yangtze Memory Technologies and Suzhou Inspur Intelligent Technology represent growing Asian market capabilities, particularly in hardware acceleration and integrated cloud services, indicating a competitive landscape with both horizontal platform providers and vertical solution specialists.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft employs a comprehensive data flow optimization strategy through Azure Data Factory and Azure Synapse Analytics, utilizing intelligent data pipelines with automated scaling capabilities. Their approach includes real-time stream processing through Azure Stream Analytics, enabling organizations to process millions of events per second with sub-second latency. The platform integrates machine learning algorithms for predictive data routing and implements advanced compression techniques that reduce data transfer costs by up to 60%. Microsoft's hybrid cloud architecture allows seamless data movement between on-premises and cloud environments, supporting over 200 data connectors for diverse enterprise systems.

Strengths: Comprehensive ecosystem integration, enterprise-grade security, scalable architecture. Weaknesses: High licensing costs, complexity in initial setup, vendor lock-in concerns.

Pure Storage, Inc.

Technical Solution: Pure Storage optimizes data flow through its FlashArray and FlashBlade platforms, leveraging NVMe-based all-flash architecture that delivers consistent sub-millisecond latency and eliminates performance bottlenecks. Their DirectFlash technology bypasses traditional storage controllers, providing direct data paths that increase throughput by up to 10x compared to conventional storage systems. The platform includes AI-driven predictive analytics through Pure1 Meta, which automatically optimizes data placement and predicts performance issues before they impact operations. Pure's data reduction technologies achieve 5:1 average compression ratios while maintaining real-time processing capabilities across multi-petabyte environments.

Strengths: Ultra-low latency performance, predictive analytics capabilities, simplified management. Weaknesses: Higher initial investment costs, limited hybrid cloud integration, dependency on flash storage technology.

Core Innovations in Data Stream Processing Technologies

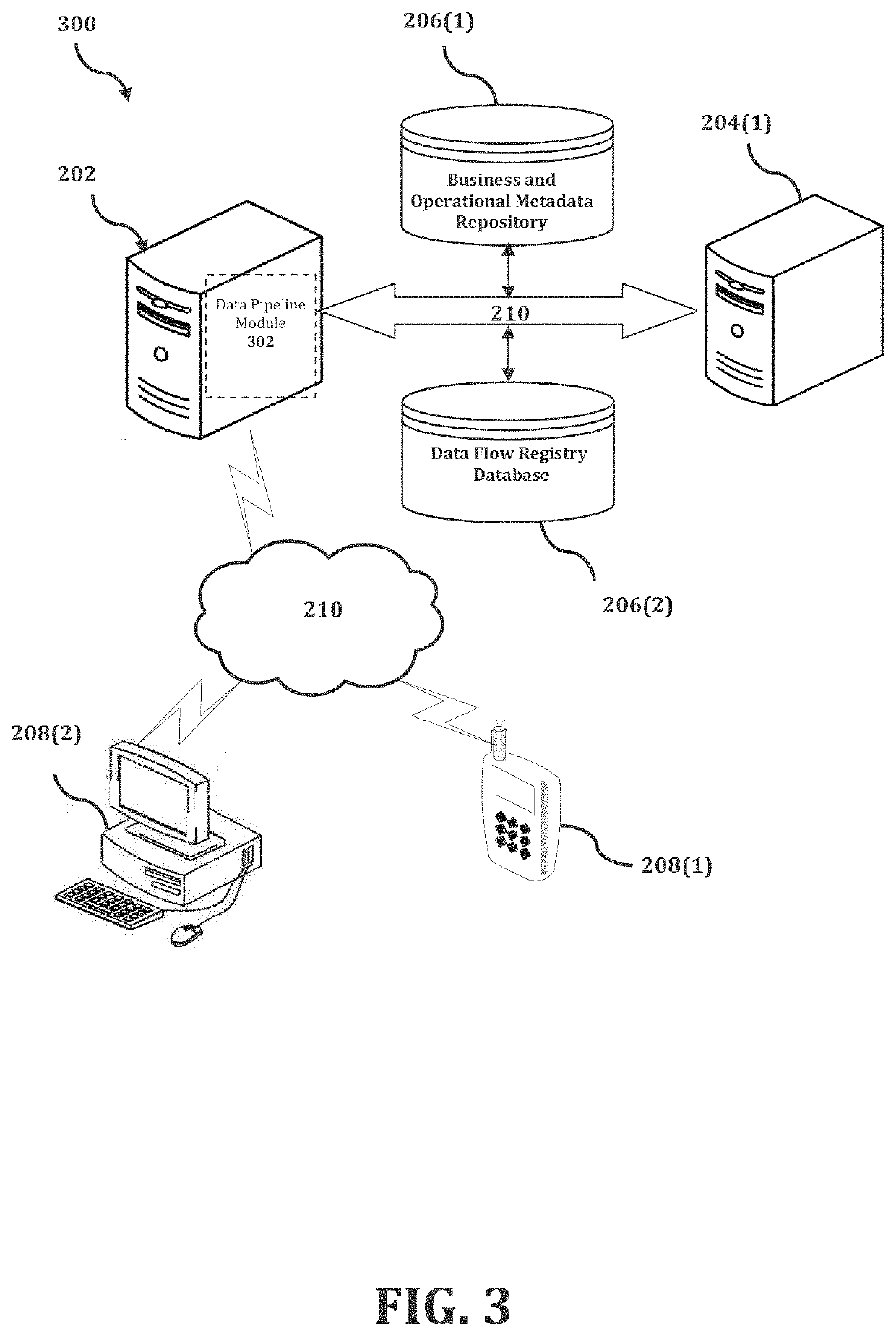

Data pipeline architecture

Patent · Active · US20220103618A1

Innovation

- A method and system utilizing microservice applications for coordinating data flows and processing operations across a distributed hybrid cloud environment, including inbound, in-place, and outbound data flows, with metadata processing and control services, to manage data flows and ensure scalability, resilience, and compliance, leveraging machine learning for workload balancing and data gravity optimization.

Mechanism to synchronize, control, and merge data streams of disparate flow characteristics

Patent · Active · US20210240714A1

Innovation

- A flow control mechanism that monitors and synchronizes the throughput of data streams using a shared control channel, allowing for throttling or withholding of data flow to coordinate processing across related streams, thereby enabling efficient merging and synchronization without the need for large caches or storage.
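
The general idea of throttling a faster stream so that related streams can be merged without large caches can be illustrated with bounded queues. This sketch is not the patented mechanism and does not model its shared control channel. It only shows ordinary backpressure, where a producer blocks once its small buffer is full. The buffer size and stream contents are assumptions.

```python
import queue
import threading

BUFFER_SIZE = 2   # small on purpose: a fast stream blocks instead of piling up


def producer(out: queue.Queue, items) -> None:
    for item in items:
        out.put(item)   # blocks while the buffer is full (backpressure)
    out.put(None)       # sentinel: stream finished


def merge_in_lockstep(left: queue.Queue, right: queue.Queue) -> list:
    """Pair items from two streams, consuming both at the pace of the slower one."""
    merged = []
    while True:
        a, b = left.get(), right.get()
        if a is None or b is None:
            return merged
        merged.append((a, b))


left_q, right_q = queue.Queue(BUFFER_SIZE), queue.Queue(BUFFER_SIZE)
threads = [
    threading.Thread(target=producer, args=(left_q, range(5)), daemon=True),
    threading.Thread(target=producer, args=(right_q, "abcde"), daemon=True),
]
for t in threads:
    t.start()
merged = merge_in_lockstep(left_q, right_q)
for t in threads:
    t.join()
```

At no point does either queue hold more than `BUFFER_SIZE` items, so memory use stays constant regardless of how far apart the two production rates are. The example uses streams of equal length, and a stream with leftover items would need explicit draining.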

Data Privacy and Security Compliance Framework

Digital platforms operating at scale must establish comprehensive data privacy and security compliance frameworks to maximize data flow while adhering to increasingly stringent regulatory requirements. The regulatory landscape has evolved significantly with the implementation of GDPR, CCPA, and emerging data protection laws across various jurisdictions, creating a complex web of compliance obligations that directly impact data processing capabilities.

Modern compliance frameworks require a multi-layered approach that integrates privacy-by-design principles into the core architecture of data flow systems. This involves implementing granular consent management systems that can dynamically adjust data collection and processing based on user preferences and regulatory requirements. Advanced consent platforms now utilize machine learning algorithms to optimize consent rates while maintaining full compliance, enabling platforms to maximize legitimate data collection.

Data minimization strategies have become critical components of compliance frameworks, requiring platforms to implement intelligent data filtering mechanisms that collect only necessary information for specific business purposes. These systems employ real-time data classification engines that automatically categorize incoming data streams based on sensitivity levels and regulatory requirements, ensuring optimal data flow while maintaining compliance boundaries.
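
At its simplest, purpose-based filtering reduces to an allowlist of fields per processing purpose. The sketch below assumes hypothetical purposes and field names. A real deployment would derive the allowlists from a reviewed policy and would likely add classification of field contents, which is not shown here.

```python
# Hypothetical per-purpose allowlists, for illustration only.
ALLOWED_FIELDS = {
    "billing": {"user_id", "amount", "currency"},
    "analytics": {"event", "timestamp"},
}


def minimize(record: dict, purpose: str) -> dict:
    """Keep only the fields approved for the stated processing purpose."""
    allowed = ALLOWED_FIELDS.get(purpose, set())   # unknown purpose keeps nothing
    return {k: v for k, v in record.items() if k in allowed}


event = {"user_id": 7, "email": "a@example.com", "event": "click", "timestamp": 1700000000}
print(minimize(event, "analytics"))   # {'event': 'click', 'timestamp': 1700000000}
```

Defaulting to an empty allowlist for unknown purposes means a misconfigured consumer receives no data, not all of it.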

Cross-border data transfers represent a significant challenge in maximizing global data flow. Compliance frameworks must combine recognized transfer mechanisms, including binding corporate rules, standard contractual clauses, and adequacy decisions, with data localization where a jurisdiction requires it. Advanced platforms are deploying edge computing architectures that process data locally while maintaining centralized analytics capabilities through privacy-preserving techniques.

Automated compliance monitoring systems have emerged as essential infrastructure components, utilizing continuous auditing mechanisms that track data lineage, processing activities, and consent status in real time. These systems integrate with data flow optimization engines to automatically adjust processing parameters when compliance violations are detected, keeping operations running while restoring regulatory adherence.

The integration of privacy-enhancing technologies such as differential privacy, homomorphic encryption, and secure multi-party computation into compliance frameworks enables platforms to maximize data utility while meeting strict privacy requirements. These technologies allow for advanced analytics and machine learning operations on encrypted or anonymized data streams, significantly expanding the scope of permissible data processing activities within regulatory constraints.
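
As a concrete instance of one of these techniques, the Laplace mechanism adds calibrated noise to an aggregate so that any single individual's presence has a bounded effect on the output. The sketch below is a minimal illustration for a counting query. The function names are assumptions, and it uses Python's general-purpose random generator, which is not suitable for production privacy guarantees.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw from Laplace(0, scale) as the difference of two exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    A counting query has sensitivity 1 (one person changes it by at most 1),
    so the noise scale is 1 / epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)


rng = random.Random()
noisy = private_count(1_000, epsilon=0.5, rng=rng)
```

A smaller `epsilon` gives stronger privacy and noisier results. Choosing its value is a policy decision, not a purely technical one.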

Scalability and Performance Benchmarking Standards

Establishing robust scalability and performance benchmarking standards is fundamental to maximizing data flow in digital platforms. These standards provide quantifiable metrics that enable organizations to measure, compare, and optimize their platform capabilities systematically. Industry-standard benchmarks such as transactions per second (TPS), throughput capacity, latency measurements, and concurrent user handling serve as critical performance indicators that guide architectural decisions and resource allocation strategies.

Performance benchmarking frameworks must encompass multiple dimensions of platform operation, including data ingestion rates, processing throughput, storage efficiency, and network bandwidth utilization. Modern digital platforms require standardized testing methodologies that simulate real-world traffic patterns and data volumes. Load testing protocols should incorporate gradual scaling scenarios, peak traffic simulations, and sustained high-volume operations to validate platform resilience under various operational conditions.
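
Two of the most commonly reported figures from a load test, throughput and tail latency, can be derived from raw per-request timings. The sketch below assumes the nearest-rank definition of a percentile, which is one of several in use, and hypothetical test data. Dedicated load testing tools report these figures directly.

```python
import math


def percentile(sorted_values: list[float], p: float) -> float:
    """Nearest-rank percentile of an ascending list, with 0 < p <= 100."""
    rank = math.ceil(p * len(sorted_values) / 100)
    return sorted_values[rank - 1]


def summarize(latencies_ms: list[float], duration_s: float) -> dict:
    """Throughput and latency percentiles for one load-test run."""
    ordered = sorted(latencies_ms)
    return {
        "throughput_rps": len(ordered) / duration_s,
        "p50_ms": percentile(ordered, 50),
        "p99_ms": percentile(ordered, 99),
    }


# Hypothetical run: 100 requests completed in 2 seconds, latencies of 1..100 ms.
report = summarize([float(i) for i in range(1, 101)], duration_s=2.0)
# report == {"throughput_rps": 50.0, "p50_ms": 50.0, "p99_ms": 99.0}
```

Reporting a high percentile alongside the median matters because averages hide the slow requests that users notice most.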

Scalability benchmarks need to address both horizontal and vertical scaling capabilities. Horizontal scaling metrics focus on the platform's ability to distribute workloads across multiple nodes, measuring how closely performance gains track the resources added. Vertical scaling benchmarks evaluate how effectively platforms utilize increased computational resources within individual nodes. These measurements help determine optimal scaling strategies and identify potential bottlenecks in system architecture.
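
Horizontal scaling results are easier to compare when expressed as an efficiency relative to ideal linear scaling. The following sketch assumes throughput has been measured at two cluster sizes. The function name and the measurements are hypothetical.

```python
def scaling_efficiency(base_throughput: float, base_nodes: int,
                       scaled_throughput: float, scaled_nodes: int) -> float:
    """Fraction of ideal linear speedup achieved when adding nodes (1.0 = linear)."""
    speedup = scaled_throughput / base_throughput
    ideal = scaled_nodes / base_nodes
    return speedup / ideal


# Hypothetical measurements: 4 nodes handle 10,000 events/s, 8 nodes handle 17,000.
eff = scaling_efficiency(10_000, 4, 17_000, 8)
print(f"{eff:.2f}")   # 0.85
```

An efficiency well below 1.0 suggests a shared bottleneck, such as coordination overhead or a contended storage layer, that additional nodes do not relieve.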

Industry-recognized benchmarking tools and frameworks, such as ApacheBench (ab), Apache JMeter, and specialized cloud-native testing suites, provide standardized approaches to performance evaluation. These tools enable consistent measurement across different platform configurations and facilitate meaningful comparisons between alternative solutions. Automated benchmarking pipelines integrated into continuous integration workflows help ensure that performance standards are maintained throughout the development lifecycle.

Establishing baseline performance metrics and setting progressive improvement targets creates accountability frameworks for platform optimization efforts. Regular benchmarking cycles, typically conducted quarterly or during major system updates, help track performance trends and identify degradation patterns before they impact user experience. These standardized measurements form the foundation for data-driven decisions regarding infrastructure investments and architectural modifications.
