How to Use Active Memory Expansion for Real-Time Data Analysis

MAR 19, 2026 | 9 MIN READ

Active Memory Expansion Background and Objectives

Active Memory Expansion represents a significant shift in memory management, changing how computing systems handle dynamic memory allocation for data-intensive applications. The technology emerged from the growing gap between processor speeds and memory access latencies, which is felt most acutely in workloads that must process data in real time. The concept builds on traditional virtual memory systems but adds predictive allocation mechanisms that expand the memory available to an application based on its demands and data flow patterns.

The evolution of Active Memory Expansion stems from decades of research in memory hierarchy optimization, beginning with virtual memory (first implemented on the Atlas computer in the early 1960s) and hardware caches (first offered commercially in the late 1960s) and continuing through later refinements in paging and cache management. The technology gained significant momentum in the 2000s as big data analytics and real-time processing requirements intensified across industries. Some modern implementations use machine learning algorithms to predict memory usage patterns, enabling proactive memory allocation rather than reactive expansion.

Real-time data analysis applications present unique challenges that traditional memory management systems struggle to address effectively. These applications require consistent, low-latency access to large datasets while maintaining predictable performance characteristics. Active Memory Expansion addresses these challenges by implementing intelligent prefetching mechanisms, dynamic memory pool management, and adaptive allocation strategies that respond to changing data processing requirements in microsecond timeframes.

The primary technical objectives of Active Memory Expansion in real-time data analysis contexts include achieving sub-millisecond memory allocation latencies, maintaining consistent throughput under varying workload conditions, and optimizing memory utilization efficiency. These systems aim to eliminate memory-related bottlenecks that traditionally constrain real-time analytics performance, particularly in high-frequency trading, industrial IoT monitoring, and streaming data processing applications.

Contemporary implementations focus on developing hybrid memory architectures that seamlessly integrate traditional DRAM with emerging memory technologies such as persistent memory and high-bandwidth memory modules. The objective extends beyond simple capacity expansion to encompass intelligent data placement, predictive caching strategies, and adaptive memory compression techniques that maximize effective memory bandwidth while minimizing access latencies for time-critical analytical workloads.
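
The intelligent data placement idea above can be sketched as a two-tier store. This is a minimal illustration under stated assumptions: TwoTierStore and its methods are hypothetical names, the "fast" and "slow" tiers are ordinary Python containers standing in for DRAM/HBM and persistent memory, and placement uses plain least-recently-used (LRU) recency rather than the predictive policies the text describes.

```python
from collections import OrderedDict


class TwoTierStore:
    """Hypothetical two-tier store: a small 'fast' tier (standing in for DRAM or
    HBM) in front of a larger 'slow' tier (standing in for persistent memory).
    Placement is plain LRU: recently used items stay fast, cold items are demoted."""

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # key -> value, least recently used first
        self.slow = {}             # key -> value, unbounded in this sketch

    def put(self, key, value):
        self.slow.pop(key, None)   # a key lives in exactly one tier
        self.fast[key] = value
        self.fast.move_to_end(key)
        self._demote_cold_items()

    def get(self, key):
        if key in self.fast:
            self.fast.move_to_end(key)  # refresh recency on a fast-tier hit
            return self.fast[key]
        value = self.slow.pop(key)      # slow-tier hit: promote the item
        self.put(key, value)
        return value

    def _demote_cold_items(self):
        while len(self.fast) > self.fast_capacity:
            key, value = self.fast.popitem(last=False)  # evict the LRU item
            self.slow[key] = value


store = TwoTierStore(fast_capacity=2)
for k, v in (("a", b"1"), ("b", b"2"), ("c", b"3")):
    store.put(k, v)
print(sorted(store.fast), sorted(store.slow))  # ['b', 'c'] ['a']
store.get("a")                                 # promotes "a", demotes "b"
print(sorted(store.fast), sorted(store.slow))  # ['a', 'c'] ['b']
```

A predictive policy would replace the LRU ordering with a score (for example, forecast access frequency) while keeping the same promote/demote structure.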

Real-Time Analytics Market Demand Analysis

The real-time analytics market has experienced unprecedented growth driven by the exponential increase in data generation and the critical need for instantaneous decision-making across industries. Organizations are generating massive volumes of data from IoT devices, social media platforms, financial transactions, and operational systems, creating an urgent demand for technologies that can process and analyze this information without delay.

Financial services represent one of the most demanding sectors for real-time analytics, where millisecond delays in fraud detection, algorithmic trading, and risk assessment can result in significant financial losses. The industry requires sophisticated memory management solutions to handle high-frequency trading data, real-time credit scoring, and instant payment processing systems that operate continuously without performance degradation.

E-commerce and digital advertising platforms have emerged as major drivers of real-time analytics demand, requiring immediate processing of user behavior data, personalized recommendation engines, and dynamic pricing algorithms. These applications necessitate active memory expansion capabilities to maintain large datasets in accessible memory while performing complex analytical computations on streaming data.

Manufacturing and industrial IoT applications are increasingly adopting real-time analytics for predictive maintenance, quality control, and supply chain optimization. These use cases demand robust memory architectures capable of handling sensor data streams while maintaining historical context for pattern recognition and anomaly detection.

The telecommunications sector faces growing pressure to implement real-time network optimization, customer experience monitoring, and security threat detection systems. Network operators require memory expansion solutions that can accommodate massive call detail records and network performance metrics while enabling instant analysis for service quality improvements.

Healthcare and life sciences industries are driving demand for real-time patient monitoring systems, clinical decision support tools, and pharmaceutical research analytics. These applications require memory architectures that can handle continuous data streams from medical devices while maintaining compliance with strict regulatory requirements.

The convergence of artificial intelligence and machine learning with real-time analytics has created new market opportunities, particularly in autonomous systems, smart cities, and edge computing environments. These emerging applications require innovative memory expansion approaches to support complex algorithms operating on continuous data streams with minimal latency constraints.

Current Memory Limitations in Real-Time Processing

Real-time data processing systems face significant memory constraints that fundamentally limit their ability to handle large-scale analytical workloads. Traditional memory architectures rely heavily on static allocation schemes where memory resources are predetermined and fixed during system initialization. This approach creates bottlenecks when processing volumes exceed initial capacity estimates, leading to system failures or severe performance degradation.

The primary limitation stems from the finite physical RAM available to processing nodes. Modern real-time systems typically operate with 16 GB to 512 GB of memory per node, which becomes insufficient when streaming data rates exceed several terabytes per hour. In garbage-collected runtimes, overhead rises sharply once heap utilization approaches 80-90%: each collection reclaims less free space, so collections run more often while each still has to trace the same live data. The result is unpredictable latency spikes that violate real-time processing requirements.
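
The growth in collector overhead near full occupancy can be made concrete with a common first-order model, used here as an assumption rather than a measurement of any particular runtime: a tracing collector traces the live set L on every cycle and runs once per H - L bytes allocated (H is heap size), so GC work per allocated byte is L/(H - L) = u/(1 - u), where u = L/H is heap occupancy.

```python
def gc_work_per_allocated_byte(occupancy: float) -> float:
    """First-order cost model for a tracing collector (an assumption, not a
    measurement): each cycle traces the live set L and is triggered after the
    free space H - L has been allocated, so work per allocated byte is
    L / (H - L) = u / (1 - u), with u = L / H."""
    if not 0.0 <= occupancy < 1.0:
        raise ValueError("occupancy must be in [0, 1)")
    return occupancy / (1.0 - occupancy)


for u in (0.5, 0.8, 0.9, 0.95):
    work = gc_work_per_allocated_byte(u)
    print(f"occupancy {u:.0%}: {work:.1f} units of GC work per byte allocated")
```

Under this model the cost is about 1 unit at 50% occupancy, 4 at 80%, 9 at 90%, and 19 at 95%, so moving from 80% to 90% occupancy more than doubles the collector's work per allocated byte.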

Memory fragmentation presents another critical challenge in sustained real-time operations. As data structures are continuously allocated and deallocated during stream processing, free memory becomes split into non-contiguous blocks. A large allocation can then fail even when total free memory would be sufficient, which reduces effective memory utilization and forces allocators or managed runtimes to perform expensive compaction operations that introduce processing delays and compromise real-time guarantees.
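
External fragmentation can be reproduced with a toy first-fit allocator. FirstFitArena is a hypothetical sketch (sizes are abstract units, and free space is tracked as sorted, coalesced holes): after freeing every other block, half the arena is free, yet no single hole can satisfy a request twice the block size.

```python
class FirstFitArena:
    """Toy first-fit allocator over a fixed-size arena (hypothetical; sizes in
    abstract units). Free space is tracked as sorted (offset, size) holes."""

    def __init__(self, size):
        self.holes = [(0, size)]

    def alloc(self, size):
        for i, (off, hole) in enumerate(self.holes):
            if hole >= size:
                # Carve the request from the front of the first hole that fits.
                if hole == size:
                    del self.holes[i]
                else:
                    self.holes[i] = (off + size, hole - size)
                return off
        return None  # no single hole is large enough

    def free(self, off, size):
        # Re-insert the hole, then coalesce it with adjacent neighbours.
        self.holes.append((off, size))
        self.holes.sort()
        merged = []
        for o, s in self.holes:
            if merged and merged[-1][0] + merged[-1][1] == o:
                merged[-1] = (merged[-1][0], merged[-1][1] + s)
            else:
                merged.append((o, s))
        self.holes = merged

    def total_free(self):
        return sum(s for _, s in self.holes)

    def largest_hole(self):
        return max((s for _, s in self.holes), default=0)


arena = FirstFitArena(100)
blocks = [arena.alloc(10) for _ in range(10)]  # fill the arena completely
for off in blocks[::2]:                         # free every other block
    arena.free(off, 10)
print(arena.total_free(), arena.largest_hole(), arena.alloc(20))  # 50 10 None
```

Compaction would move the live blocks together to rebuild one 50-unit hole, which is exactly the pause a real-time system wants to avoid.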

Current memory management strategies struggle with the dynamic nature of real-time workloads. Static partitioning schemes cannot adapt to varying data characteristics or processing demands, while traditional virtual memory systems introduce unacceptable latency through page fault handling. The mismatch between rigid memory allocation policies and fluid data processing requirements creates performance bottlenecks that limit analytical throughput.

Cache coherency issues compound these limitations in distributed real-time systems. When multiple processing nodes access shared data structures, maintaining memory consistency requires expensive synchronization operations. These coordination overheads scale poorly with system size and data complexity, creating scalability barriers that prevent horizontal expansion of real-time analytical capabilities.

The temporal constraints of real-time processing further exacerbate memory limitations. Unlike batch processing systems that can tolerate memory pressure through spilling to disk, real-time systems must maintain all active data in fast-access memory to meet latency requirements. This constraint severely limits the complexity and scope of analytical operations that can be performed within real-time processing windows.

Existing Active Memory Expansion Approaches

01 Memory expansion through external storage interfaces

Memory capacity can be expanded by utilizing external storage interfaces such as USB ports, SD card slots, or other removable storage media connections. This approach allows users to add additional storage capacity without modifying the internal hardware architecture. The system can dynamically recognize and integrate the external storage devices, providing flexible memory expansion options for various applications.

- Memory expansion through external storage devices: Memory capacity can be expanded by connecting external storage devices to the system. This approach allows users to increase available memory without modifying internal hardware components. The expansion can be achieved through various interfaces and connection methods, providing flexible and scalable memory solutions for different computing needs.

- Virtual memory management and address mapping: Active memory expansion can be achieved through virtual memory management techniques that map physical memory addresses to extended address spaces. This method utilizes address translation mechanisms and memory management units to create the appearance of larger memory capacity than physically available, enabling efficient utilization of limited memory resources.

- Memory module expansion slots and interfaces: Physical memory expansion is facilitated through dedicated expansion slots and standardized interfaces on computing devices. These hardware solutions allow users to install additional memory modules to increase total system memory capacity. The designs include mechanical and electrical connections for modular memory upgrades, and some server platforms also support hot-swapping.

- Dynamic memory allocation and compression techniques: Memory capacity can be effectively expanded through dynamic allocation algorithms and data compression methods. These techniques optimize memory usage by compressing stored data and intelligently managing memory allocation based on real-time system demands, thereby increasing the effective available memory without adding physical hardware.

- Multi-tier memory architecture and caching systems: Active memory expansion utilizes hierarchical memory architectures that combine different types of memory technologies with varying speeds and capacities. This approach employs caching mechanisms and tiered storage systems to create an expanded memory pool that balances performance and capacity requirements, effectively extending usable memory space.
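
The virtual memory and address mapping approach in the list above can be tried from user space with a file-backed memory mapping. The sketch below is a minimal illustration that assumes a POSIX or Windows host and uses only Python's standard mmap, os, and tempfile modules; the 1 MiB region size and the temporary backing file are arbitrary choices for the demo. Writes use ordinary slice syntax, and the operating system pages data between RAM and the backing file on demand.

```python
import mmap
import os
import tempfile

# Back a 1 MiB "memory" region with a file on secondary storage. The OS pages
# data in and out on demand, so the process can address more data than it
# keeps resident in RAM. (Region size and file location are arbitrary.)
REGION_SIZE = 1 << 20

fd, path = tempfile.mkstemp()
try:
    os.ftruncate(fd, REGION_SIZE)                        # size the backing file
    with mmap.mmap(fd, REGION_SIZE) as region:
        region[0:5] = b"hello"                           # ordinary slice writes...
        region[REGION_SIZE - 5:REGION_SIZE] = b"world"   # ...anywhere in the mapping
        region.flush()                                   # write dirty pages to the file
        head = region[0:5]
        tail = region[REGION_SIZE - 5:REGION_SIZE]
finally:
    os.close(fd)
    os.remove(path)

print(head, tail)  # b'hello' b'world'
```

Because pages are faulted in on first touch, access latency depends on whether a page is resident, which is the page-fault cost that makes plain virtual memory risky on real-time paths.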

02 Virtual memory and memory mapping techniques

Active memory expansion can be achieved through virtual memory management and memory mapping technologies. These techniques allow the system to use secondary storage devices as an extension of physical memory, creating a larger addressable memory space. The memory management unit handles the translation between virtual and physical addresses, enabling applications to access more memory than physically available in the system.

03 Memory compression and deduplication

Memory capacity can be effectively expanded through compression algorithms and data deduplication techniques. By compressing data stored in memory or eliminating redundant data blocks, the system can store more information within the same physical memory space. This approach is particularly useful for systems with limited memory resources, allowing for improved memory utilization without additional hardware.

04 Multi-tier memory architecture

Implementing a multi-tier memory architecture enables active memory expansion by combining different types of memory technologies with varying speeds and capacities. This hierarchical approach typically includes fast cache memory, main memory, and slower but larger storage tiers. The system intelligently manages data placement across these tiers based on access patterns, effectively expanding the usable memory capacity while maintaining performance.

05 Dynamic memory allocation and management

Active memory expansion can be realized through advanced dynamic memory allocation and management strategies. These methods involve intelligent algorithms that optimize memory usage by reallocating unused memory blocks, implementing garbage collection, and managing memory pools efficiently. The system can adaptively adjust memory allocation based on application requirements and system load, maximizing the effective memory capacity available to running processes.
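
One concrete form of the memory pool management mentioned above is a fixed-size block pool. The sketch below is hypothetical (BlockPool and its methods are illustrative names, not a library API): it allocates one buffer up front and recycles equal-sized blocks through a free list, trading flexibility for predictable, allocation-free reuse on hot paths.

```python
class BlockPool:
    """Hypothetical fixed-size block pool: one large buffer is allocated up
    front and carved into equal blocks that are recycled through a free list,
    so steady-state acquire/release never calls the general-purpose allocator."""

    def __init__(self, block_size, block_count):
        self.block_size = block_size
        self._buffer = bytearray(block_size * block_count)
        self._view = memoryview(self._buffer)
        self._free = list(range(block_count))  # indices of unused blocks

    def acquire(self):
        if not self._free:
            raise MemoryError("pool exhausted")  # a real system might grow the pool here
        return self._free.pop()                  # LIFO: reuse the most recently freed block

    def block(self, index):
        start = index * self.block_size
        return self._view[start:start + self.block_size]  # zero-copy, writable view

    def release(self, index):
        self._free.append(index)  # contents are not cleared in this sketch

    @property
    def available(self):
        return len(self._free)


pool = BlockPool(block_size=64, block_count=4)
i = pool.acquire()
pool.block(i)[:3] = b"abc"
pool.release(i)
j = pool.acquire()  # the LIFO free list hands back the same block
print(i == j, bytes(pool.block(j)[:3]), pool.available)  # True b'abc' 3
```

Handing back the most recently released block tends to reuse cache-warm memory; because released blocks are not zeroed, a pool that holds sensitive data should clear them on release.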

Leading Players in Memory and Analytics Solutions

The active memory expansion technology for real-time data analysis represents an emerging market in the early growth stage, driven by increasing demands for high-performance computing and AI applications. The market demonstrates significant potential with diverse players spanning semiconductor manufacturers, cloud service providers, and research institutions. Technology maturity varies considerably across participants, with established companies like IBM, Qualcomm, and SK Hynix leading in hardware innovation, while specialized firms such as Shanghai Biren Technology and MetaX Integrated Circuits focus on GPU-based solutions. Chinese technology giants including Baidu, Ping An Technology, and Inspur contribute software and cloud infrastructure capabilities. The competitive landscape shows strong collaboration between academic institutions like Zhejiang University, USC, and EPFL with industry players, indicating active research and development. Market fragmentation suggests the technology is still consolidating, with opportunities for breakthrough innovations in memory architecture and real-time processing capabilities across various application domains.

International Business Machines Corp.

Technical Solution: IBM develops comprehensive active memory expansion solutions through their Power Systems architecture and z/OS mainframe technology. Their approach uses compressed memory techniques and intelligent data tiering to expand effective memory capacity by a reported 2-3x for real-time analytics workloads, with the achievable factor depending on how compressible the data is. The solution incorporates machine learning algorithms to predict data access patterns and proactively move frequently accessed data to faster memory tiers while compressing less critical data. IBM's Memory Inception technology enables memory pooling across multiple nodes, providing elastic memory scaling for demanding real-time data processing applications. Their implementation targets the sub-millisecond latency requirements of financial trading systems and real-time fraud detection.

Strengths: Enterprise-grade reliability and proven scalability in mission-critical environments. Weaknesses: High implementation costs and complexity requiring specialized expertise for deployment and maintenance.
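
The capacity claim above can be reasoned about with simple arithmetic. The sketch uses a simplified model of compression-based expansion that is an assumption, not IBM's sizing method: true memory T is split into an uncompressed pool U and a compressed pool C that stores data at compression ratio r, and the goal is an effective capacity of E times T. From U + C = T and U + rC = ET it follows that C = (E - 1)T / (r - 1). The function name ame_pool_split is hypothetical.

```python
def ame_pool_split(true_gib, expansion_factor, compression_ratio):
    """Simplified model (an assumption, not a vendor sizing tool): true memory T
    is split into an uncompressed pool U and a compressed pool C holding data at
    ratio r. Exposing E * T of effective memory requires
        U + C = T   and   U + r * C = E * T,
    so C = (E - 1) * T / (r - 1) and U = T - C."""
    if compression_ratio <= 1:
        raise ValueError("compression ratio must exceed 1")
    compressed = (expansion_factor - 1) * true_gib / (compression_ratio - 1)
    if compressed > true_gib:
        raise ValueError("expansion factor not reachable at this compression ratio")
    return true_gib - compressed, compressed


print(ame_pool_split(64, 1.5, 3.0))  # (48.0, 16.0)
print(ame_pool_split(64, 2.0, 3.0))  # (32.0, 32.0)
```

Under this model E can never exceed r (at E = r every byte of true memory sits in the compressed pool), so a 2-3x expansion presupposes data that compresses at least that well. The model ignores the CPU cost of compression and any metadata overhead.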

SK hynix, Inc.

Technical Solution: SK hynix focuses on hardware-level active memory expansion through its Computational Storage Devices (CSD) and Processing-in-Memory (PIM) technologies. Its solution integrates AI accelerators directly into memory modules, enabling real-time data processing without traditional CPU bottlenecks. The company's CXL-based memory expansion technology allows dynamic memory allocation across distributed systems, with reported memory bandwidth improvements of up to 10x for analytics workloads. Its GDDR6 and HBM3 memory solutions incorporate intelligent caching mechanisms that identify and prioritize critical data streams for real-time analysis. Hardware-accelerated compression and decompression reportedly reduce memory footprint by 40-60% while maintaining microsecond-level access times for time-sensitive applications.

Strengths: Hardware-level optimization provides superior performance and energy efficiency compared to software-only solutions. Weaknesses: Limited flexibility for customization and requires compatible hardware infrastructure for full benefits.

Core Patents in Dynamic Memory Management

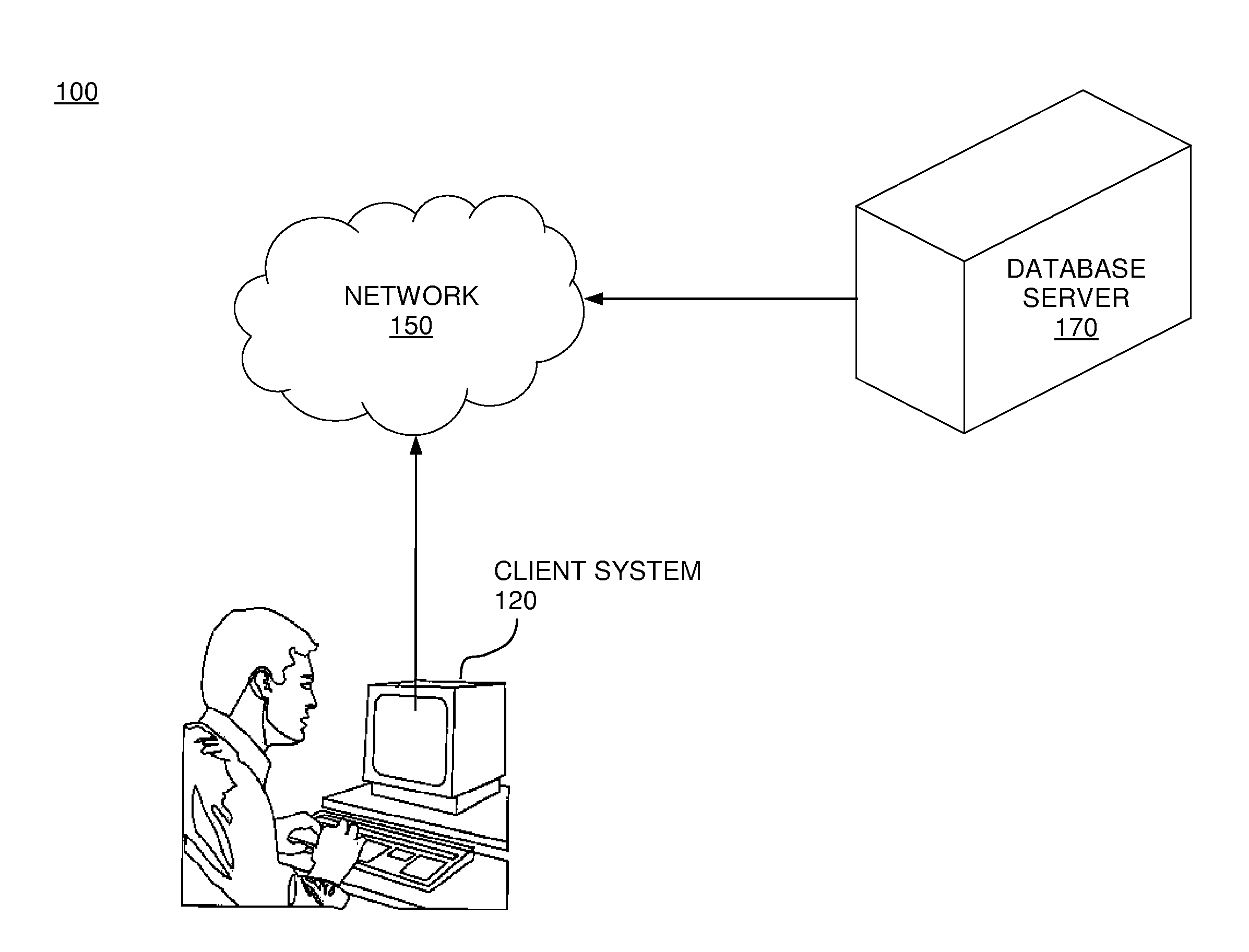

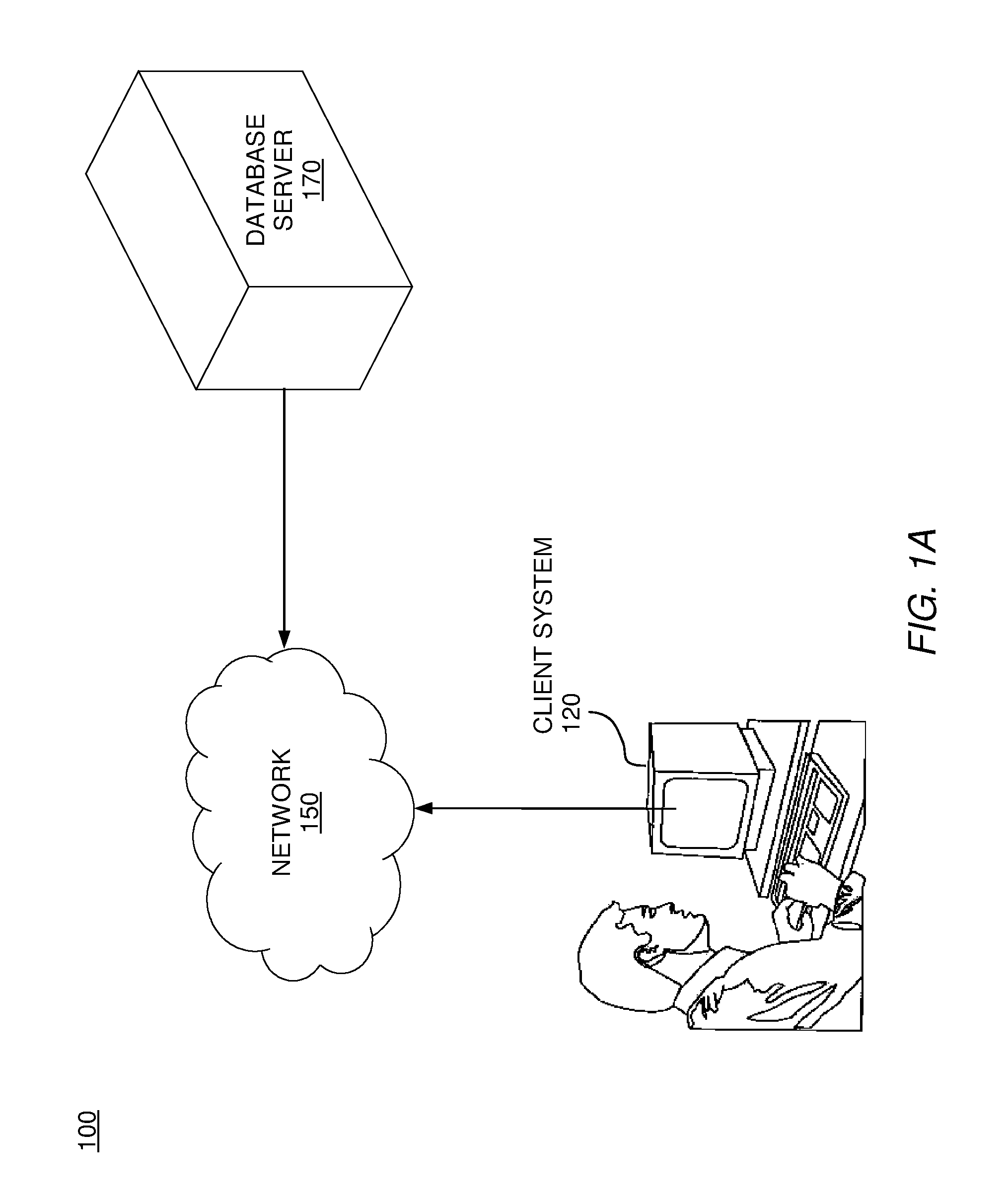

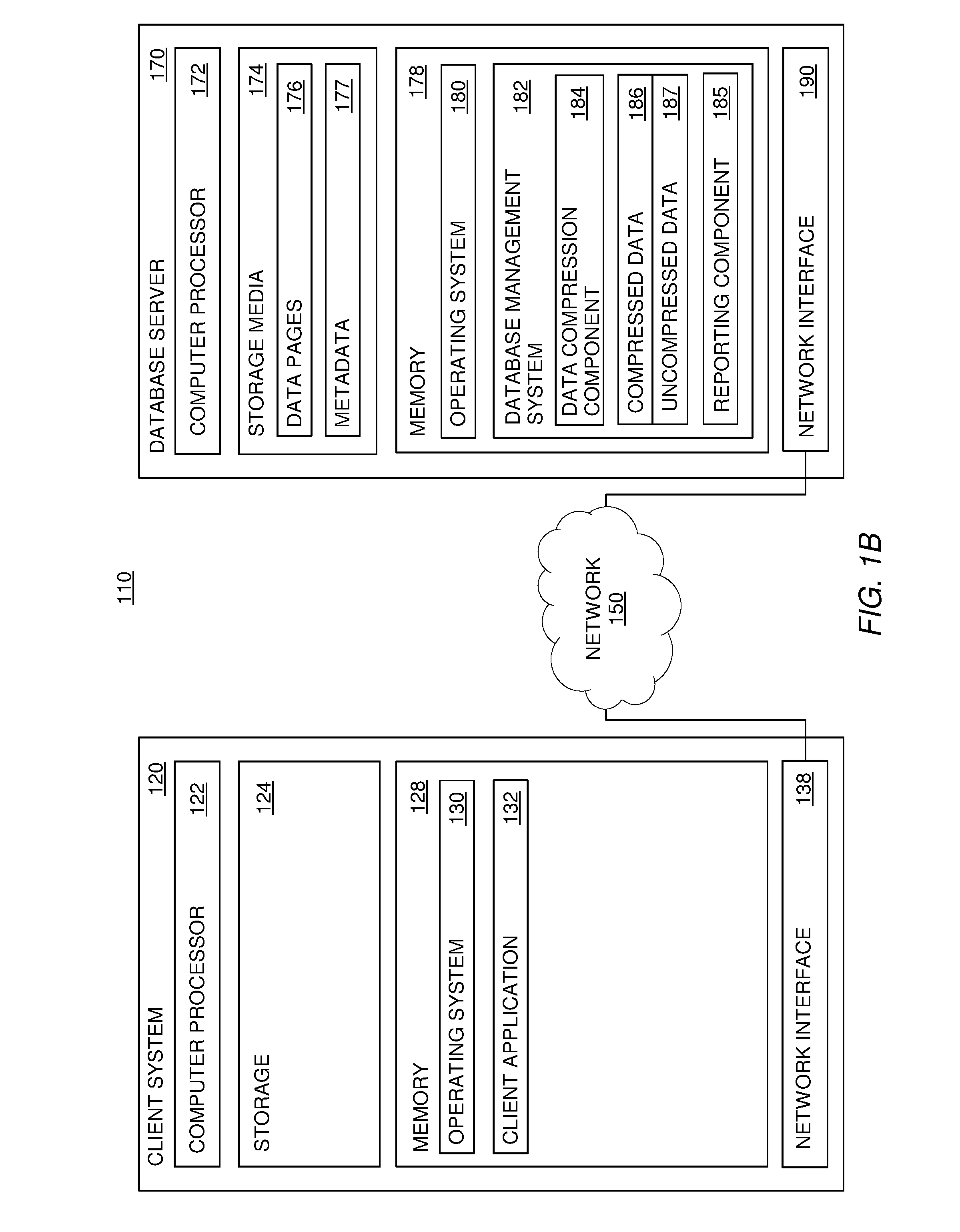

Active memory expansion and rdbms meta data and tooling

Patent (inactive): US20120109908A1

Innovation

- A method that identifies indicatory data associated with retrieved data and applies compression criteria, based on metadata, query types, and access frequencies, to decide whether to compress that data, thereby optimizing memory usage and reducing processing time.
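
A selective-compression decision of the kind the abstract describes might look like the following. This is an illustrative policy only, not the patented method: BlockStats, should_compress, the query-type labels, and the 100-accesses-per-minute threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BlockStats:
    # Hypothetical metadata a database might keep per cached block.
    table: str
    accesses_per_min: float
    query_type: str  # e.g. "point_lookup" or "scan"


def should_compress(stats: BlockStats, hot_threshold: float = 100.0) -> bool:
    """Illustrative policy (not the patent's claims): keep frequently accessed
    blocks and point-lookup targets uncompressed, and compress colder blocks
    that are mostly read by scans."""
    if stats.accesses_per_min >= hot_threshold:
        return False  # hot data: decompression would sit on the critical path
    if stats.query_type == "point_lookup":
        return False  # latency-sensitive single-row reads
    return True


print(should_compress(BlockStats("orders", 500.0, "scan")))   # False
print(should_compress(BlockStats("audit_log", 2.0, "scan")))  # True
```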

Memory expansion device performing near data processing function and accelerator system including the same

Patent (active): US20230195660A1

Innovation

- A memory expansion device with an expansion control circuit that receives near-data processing requests and performs memory operations, including reads and writes, on a remote memory device. Offloading computation from the GPU to the memory expansion device reduces the need for frequent data transfers and improves the overall efficiency of deep neural network operations.
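
The data-movement saving behind near-data processing can be shown with a toy model. ToyMemoryExpander is hypothetical and runs entirely on the host; it only counts the bytes that would cross the link between an expansion device and the GPU or CPU when the whole buffer is shipped versus when a reduction runs next to the data and returns one 8-byte result.

```python
from array import array


class ToyMemoryExpander:
    """Hypothetical model of a memory-expansion device that can run simple
    reductions next to the data. It counts bytes that cross the link to the host."""

    def __init__(self, values):
        self.data = array("d", values)  # 8-byte doubles resident on the "device"
        self.bytes_transferred = 0

    def read_all(self):
        # Conventional path: ship the whole buffer to the host or GPU.
        self.bytes_transferred += len(self.data) * self.data.itemsize
        return array("d", self.data)

    def near_data_sum(self):
        # Near-data path: reduce on the device, ship back one 8-byte result.
        result = sum(self.data)
        self.bytes_transferred += 8
        return result


host_side = ToyMemoryExpander(range(100_000))
total_host = sum(host_side.read_all())

device_side = ToyMemoryExpander(range(100_000))
total_ndp = device_side.near_data_sum()

print(total_host == total_ndp, host_side.bytes_transferred, device_side.bytes_transferred)
# True 800000 8
```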

Performance Optimization Strategies

Performance optimization in active memory expansion for real-time data analysis requires a multi-layered approach that addresses both hardware utilization and software efficiency. The primary strategy involves implementing intelligent memory allocation algorithms that can dynamically adjust memory pools based on real-time workload characteristics. These algorithms must balance between memory expansion speed and system stability, ensuring that memory scaling operations do not introduce latency spikes that could compromise real-time processing requirements.
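
One common way to balance expansion speed against stability is a watermark controller with hysteresis and a cooldown. The sketch below is hypothetical: ExpansionController, the 85%/40% watermarks, the 50% growth step, and the three-sample cooldown are illustrative values, not tuned recommendations. Separate grow and shrink thresholds, plus a wait after every resize, keep the pool from oscillating around a single threshold.

```python
class ExpansionController:
    """Hypothetical watermark controller: grow the pool when utilization exceeds
    a high watermark, shrink it when utilization drops below a low watermark,
    and wait a cooldown period after every resize so the pool does not thrash."""

    def __init__(self, capacity, high=0.85, low=0.40, step=0.5, cooldown_ticks=3):
        self.capacity = capacity
        self.high, self.low, self.step = high, low, step
        self.cooldown_ticks = cooldown_ticks
        self._ticks_since_resize = cooldown_ticks  # permit a resize on the first sample

    def observe(self, used):
        """Feed one utilization sample and return the (possibly new) capacity."""
        self._ticks_since_resize += 1
        if self._ticks_since_resize <= self.cooldown_ticks:
            return self.capacity  # still cooling down after the last resize
        utilization = used / self.capacity
        if utilization > self.high:
            self.capacity = int(self.capacity * (1 + self.step))
            self._ticks_since_resize = 0
        elif utilization < self.low:
            # Never shrink below what is currently in use (or below one unit).
            self.capacity = max(used, 1, int(self.capacity / (1 + self.step)))
            self._ticks_since_resize = 0
        return self.capacity


ctl = ExpansionController(capacity=1000)
print([ctl.observe(used) for used in (900, 1400, 1400, 1400, 1400)])
# [1500, 1500, 1500, 1500, 2250]
```

The trade-off is visible in the output: after the first expansion, the controller waits out its cooldown before growing again even though utilization stays above the high watermark. A production controller might let a separate emergency threshold bypass the cooldown.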

Cache and locality optimization represents another critical performance dimension. Cache management techniques such as predictive prefetching (and, in research designs, adaptive cache line sizing) can reduce memory access latencies during expansion operations. Making memory expansion algorithms aware of non-uniform memory access (NUMA) preserves data locality, which matters most in multi-socket systems, where memory attached to a remote socket's memory controller has higher latency and lower bandwidth than memory attached to the local one.
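
Predictive prefetching can be illustrated with a stride prefetcher, a classic technique in CPU hardware prefetchers. This minimal sketch is hypothetical (StridePrefetcher and its depth parameter are illustrative names): once the same address delta is observed twice in a row, it predicts the next depth addresses along that stride so they can be fetched before they are demanded.

```python
class StridePrefetcher:
    """Minimal stride prefetcher: once the same address delta is seen twice in
    a row, predict the next `depth` addresses along that stride."""

    def __init__(self, depth=2):
        self.depth = depth
        self.last_addr = None
        self.last_stride = None

    def access(self, addr):
        predictions = []
        if self.last_addr is not None:
            stride = addr - self.last_addr
            if stride != 0 and stride == self.last_stride:
                # Confirmed stride: prefetch ahead of the demand stream.
                predictions = [addr + stride * k for k in range(1, self.depth + 1)]
            self.last_stride = stride
        self.last_addr = addr
        return predictions


pf = StridePrefetcher(depth=2)
for a in (1000, 1064, 1128, 1192):
    print(a, pf.access(a))
# 1000 []
# 1064 []
# 1128 [1192, 1256]
# 1192 [1256, 1320]
```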

Parallel processing optimization strategies focus on minimizing memory contention during concurrent data analysis operations. Lock-free data structures and atomic operations let multiple processing threads access expanded memory regions without mutex-based synchronization, although contended atomics still pay for cache-line transfers between cores. Memory-mapped files can further improve performance by avoiding copies between kernel and user-space buffers during memory expansion events.
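
CPython offers no portable user-level atomic operations, so rather than a lock-free structure the sketch below shows a related way to cut contention: thread-local partial aggregation. Each worker aggregates into a private Counter and touches shared state only once, so lock acquisitions scale with the number of threads rather than the number of events. The function name count_events and the event labels are hypothetical, and under CPython's global interpreter lock the example illustrates the structure rather than a parallel speedup.

```python
import threading
from collections import Counter


def count_events(partitions):
    """Count event types across threads. Each worker aggregates into a private
    Counter (no shared writes on the hot path) and merges into the shared
    result exactly once, inside a short critical section."""
    totals = Counter()
    merge_lock = threading.Lock()

    def worker(events):
        local = Counter(events)  # thread-private partial aggregate
        with merge_lock:         # one lock acquisition per thread
            totals.update(local)

    threads = [threading.Thread(target=worker, args=(p,)) for p in partitions]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return totals


parts = [["click", "view"] * 1000, ["view"] * 500, ["buy", "click"]]
print(count_events(parts))
```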

Workload-aware optimization involves implementing machine learning-based prediction models that anticipate memory expansion needs before they become critical. These predictive systems analyze historical memory usage patterns, data ingestion rates, and processing complexity to proactively trigger memory expansion operations. This approach minimizes the performance impact of reactive memory scaling and maintains consistent processing throughput.
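
The prediction step can be sketched without any ML library by fitting a straight line to recent usage samples and extrapolating to a threshold. This swaps a least-squares linear trend in for the machine learning models the text mentions; seconds_until_threshold, the 90% default threshold, and the sample data are hypothetical.

```python
def seconds_until_threshold(samples, capacity, threshold=0.9):
    """Fit a least-squares line to (time_s, used) samples and estimate how long
    until usage crosses threshold * capacity. Returns None when there is too
    little data or usage is flat or falling."""
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in samples) / n
    mean_u = sum(u for _, u in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return None
    slope = sum((t - mean_t) * (u - mean_u) for t, u in samples) / var_t
    if slope <= 0:
        return None
    intercept = mean_u - slope * mean_t
    t_cross = (threshold * capacity - intercept) / slope
    return max(0.0, t_cross - samples[-1][0])  # time remaining after the last sample


# Usage grows by 100 MB every 10 s; capacity is 2000 MB, so 90% is 1800 MB.
history = [(0, 1000), (10, 1100), (20, 1200), (30, 1300)]
print(seconds_until_threshold(history, capacity=2000))  # 50.0
```

A controller could trigger expansion whenever the estimate drops below the time an expansion takes to complete, which turns reactive scaling into proactive scaling.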

Hardware-specific optimizations leverage modern processor features such as Intel's Advanced Vector Extensions (AVX), whose wide registers speed up bulk copy, scan, and compression routines during expansion phases. (Intel's Memory Protection Extensions (MPX), sometimes listed alongside AVX, is a bounds-checking feature rather than a performance feature and has since been deprecated.) Additionally, memory compression can increase effective memory capacity without physical expansion, providing an intermediate optimization layer that reduces how often actual memory scaling operations are needed while preserving real-time performance characteristics.
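
The compression layer described above, combined with the deduplication approach listed among the existing approaches, can be sketched with the standard library's zlib and hashlib modules. CompressedPageStore and the 4096-byte page size are assumptions for illustration; a production system would compress in the kernel or in hardware and would reclaim orphaned blobs, which this sketch does not.

```python
import hashlib
import zlib

PAGE_SIZE = 4096  # assumed page size for the illustration


class CompressedPageStore:
    """Hypothetical in-memory page store that deduplicates identical pages by
    content hash and keeps each unique page zlib-compressed."""

    def __init__(self):
        self._blobs = {}  # content digest -> compressed bytes
        self._pages = {}  # page number -> content digest

    def write(self, page_no, data):
        if len(data) != PAGE_SIZE:
            raise ValueError("pages must be exactly PAGE_SIZE bytes")
        digest = hashlib.sha256(data).digest()
        if digest not in self._blobs:  # deduplication: store each content once
            self._blobs[digest] = zlib.compress(data)
        self._pages[page_no] = digest  # overwritten blobs are not reclaimed here

    def read(self, page_no):
        return zlib.decompress(self._blobs[self._pages[page_no]])

    def resident_bytes(self):
        return sum(len(b) for b in self._blobs.values())


store = CompressedPageStore()
zero_page = bytes(PAGE_SIZE)
log_page = (b"ts=1700000000 level=INFO msg=ok\n" * 200)[:PAGE_SIZE]
for n in range(8):
    store.write(n, zero_page)  # eight identical pages share one stored blob
store.write(8, log_page)
logical = 9 * PAGE_SIZE
print(store.read(8) == log_page, store.resident_bytes() < logical // 10)  # True True
```

Highly repetitive pages like these compress far better than typical analytics data, so the ratio here says nothing about real workloads; it only shows where the savings come from.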

Integration Challenges and Solutions

The integration of active memory expansion technologies into real-time data analysis systems presents several critical challenges that organizations must address to achieve successful implementation. These challenges span multiple dimensions including architectural compatibility, performance optimization, and operational complexity.

System architecture compatibility represents one of the most significant integration hurdles. Legacy data analysis infrastructures often lack the necessary interfaces and protocols to seamlessly communicate with modern active memory expansion solutions. The mismatch between traditional storage hierarchies and dynamic memory allocation mechanisms requires substantial architectural modifications. Organizations frequently encounter difficulties in establishing efficient data pathways between existing analytical engines and expanded memory pools, necessitating comprehensive system redesigns.

Performance synchronization poses another complex challenge during integration processes. Real-time data analysis demands consistent low-latency operations, while active memory expansion systems may introduce variable response times during memory allocation and deallocation cycles. The temporal misalignment between analytical processing requirements and memory management operations can create bottlenecks that compromise overall system performance.

To address architectural compatibility issues, organizations are implementing hybrid integration approaches that utilize middleware solutions and API abstraction layers. These intermediary components facilitate communication between disparate system components while maintaining operational independence. Containerization technologies and microservices architectures have proven particularly effective in creating modular integration frameworks that can accommodate both legacy systems and modern memory expansion capabilities.
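
An API abstraction layer of the kind described above can be as small as one interface that the analytics code depends on. In this sketch, MemoryBackend, LocalBackend, ExpandedPoolBackend, and cache_window are hypothetical names; ExpandedPoolBackend only simulates a remote or pooled memory tier by counting the round trips a real adapter would make. typing.Protocol provides structural typing, so existing classes satisfy the interface without inheriting from it.

```python
from typing import Dict, Protocol


class MemoryBackend(Protocol):
    """Hypothetical interface the analytics engine codes against, so local DRAM
    and an expanded or pooled memory tier can be swapped without changing callers."""

    def put(self, key: str, value: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class LocalBackend:
    def __init__(self) -> None:
        self._data: Dict[str, bytes] = {}

    def put(self, key: str, value: bytes) -> None:
        self._data[key] = value

    def get(self, key: str) -> bytes:
        return self._data[key]


class ExpandedPoolBackend:
    """Stand-in for a remote or pooled tier; it counts the round trips a real
    adapter (for example, one talking to middleware) would make."""

    def __init__(self) -> None:
        self._data: Dict[str, bytes] = {}
        self.round_trips = 0

    def put(self, key: str, value: bytes) -> None:
        self.round_trips += 1
        self._data[key] = value

    def get(self, key: str) -> bytes:
        self.round_trips += 1
        return self._data[key]


def cache_window(backend: MemoryBackend, window_id: str, payload: bytes) -> bytes:
    # Caller logic is identical whichever backend is plugged in.
    backend.put(window_id, payload)
    return backend.get(window_id)


expanded = ExpandedPoolBackend()
print(cache_window(LocalBackend(), "w1", b"x"), cache_window(expanded, "w1", b"y"), expanded.round_trips)
# b'x' b'y' 2
```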

Performance optimization solutions focus on implementing intelligent caching mechanisms and predictive memory allocation algorithms. Advanced scheduling systems now incorporate machine learning models that anticipate memory requirements based on historical usage patterns and real-time workload characteristics. These predictive capabilities enable proactive memory expansion before performance degradation occurs.

Operational complexity is being mitigated through automated orchestration platforms that manage the entire integration lifecycle. These platforms provide centralized monitoring, automated failover mechanisms, and dynamic resource allocation capabilities that reduce manual intervention requirements while ensuring system reliability and performance consistency across integrated environments.
