Improving Frame Generation Algorithms for Animation Quality

MAR 30, 2026 · 9 MIN READ

Patsnap Eureka helps you evaluate technical feasibility & market potential.

Frame Generation in Animation: Background and Objectives

Frame generation in animation has undergone significant transformation since the early days of hand-drawn animation, where skilled artists manually created each intermediate frame between key poses. The advent of computer graphics in the 1970s and 1980s introduced mathematical interpolation methods, fundamentally changing how animated sequences were produced. Traditional techniques relied heavily on linear interpolation and basic spline curves, which often resulted in mechanical-looking motion that lacked the organic fluidity of hand-crafted animation.
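
To make the contrast concrete, the sketch below in-betweens a single keyframed channel (for example, an x position) in two ways: plain linear interpolation, and a uniform Catmull-Rom spline, which is one common spline choice that uses the neighbouring keys to set tangents. It is an illustrative sketch rather than a production rig evaluator; the function names and the rule of repeating the end keys as tangent neighbours are assumptions.

```python
from __future__ import annotations


def lerp(p0: float, p1: float, t: float) -> float:
    """Linear in-between: constant velocity from p0 to p1 for t in [0, 1]."""
    return (1.0 - t) * p0 + t * p1


def catmull_rom(p_prev: float, p0: float, p1: float, p_next: float, t: float) -> float:
    """Uniform Catmull-Rom segment between p0 and p1.

    The neighbouring keys p_prev and p_next set the tangents, so velocity
    stays continuous across keyframes instead of jumping as it does with lerp.
    """
    t2, t3 = t * t, t * t * t
    return 0.5 * (
        2.0 * p0
        + (-p_prev + p1) * t
        + (2.0 * p_prev - 5.0 * p0 + 4.0 * p1 - p_next) * t2
        + (-p_prev + 3.0 * p0 - 3.0 * p1 + p_next) * t3
    )


def inbetween(keys: list[float], frames_per_segment: int, spline: bool = True) -> list[float]:
    """Generate every frame of a 1-D keyframed channel, including the keys."""
    out = []
    n = len(keys)
    for i in range(n - 1):
        p_prev = keys[max(i - 1, 0)]      # assumption: repeat the end keys
        p_next = keys[min(i + 2, n - 1)]  # when a neighbour is missing
        for f in range(frames_per_segment):
            t = f / frames_per_segment
            if spline:
                out.append(catmull_rom(p_prev, keys[i], keys[i + 1], p_next, t))
            else:
                out.append(lerp(keys[i], keys[i + 1], t))
    out.append(keys[-1])
    return out


keys = [0.0, 10.0, 0.0]  # x position at three key poses
print([round(v, 3) for v in inbetween(keys, 4, spline=False)])
print([round(v, 3) for v in inbetween(keys, 4, spline=True)])
```

With linear interpolation, velocity is constant within each segment and changes abruptly at every key, which produces the mechanical look described above; the spline keeps velocity continuous across keys, so the motion eases through the pose instead of snapping.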

The evolution accelerated dramatically with the introduction of sophisticated algorithms in the 1990s and 2000s. Motion blur simulation, temporal coherence maintenance, and advanced interpolation techniques emerged as critical components. The development of physics-based animation systems and procedural generation methods marked a pivotal shift toward more realistic and dynamic frame generation capabilities.

Contemporary frame generation faces unprecedented challenges driven by rising quality expectations across entertainment, gaming, and virtual reality applications. Modern audiences expect frame rates of 60 fps or higher, particularly in gaming and virtual reality, along with photorealistic rendering quality and natural motion that closely mimics real-world physics. The proliferation of high-resolution displays and immersive technologies has intensified these requirements further.

Current algorithmic approaches struggle with several persistent issues. Motion artifacts, including ghosting and temporal inconsistencies, remain problematic in fast-moving sequences. Computational efficiency presents another significant challenge, as real-time applications require algorithms that balance quality with processing speed constraints. Additionally, maintaining visual coherence across generated frames while preserving artistic intent continues to challenge existing methodologies.

The primary objective centers on developing next-generation algorithms that achieve superior animation quality through enhanced temporal consistency, reduced computational overhead, and improved motion fidelity. These algorithms must seamlessly integrate with existing production pipelines while supporting diverse animation styles, from stylized cartoon aesthetics to photorealistic cinematography.

Secondary objectives include establishing robust quality metrics for automated evaluation, enabling real-time performance optimization, and creating adaptive systems that intelligently adjust generation parameters based on content characteristics. The ultimate goal involves democratizing high-quality animation production by making advanced frame generation accessible to smaller studios and independent creators through efficient, user-friendly algorithmic solutions.

Market Demand for Enhanced Animation Quality Solutions

The global animation industry has experienced unprecedented growth, driven by expanding entertainment consumption, digital transformation across industries, and increasing demand for high-quality visual content. Traditional animation production faces significant challenges in meeting modern quality expectations while maintaining cost-effectiveness and production timelines. Frame generation algorithms represent a critical bottleneck in achieving superior animation quality, as current methods often struggle with motion blur, temporal inconsistency, and computational efficiency.

Entertainment and media sectors demonstrate the strongest demand for enhanced frame generation solutions. Streaming platforms require high-quality animated content to compete effectively, while gaming companies seek smoother frame rates and more realistic character animations. The rise of virtual reality and augmented reality applications has created additional pressure for real-time, high-fidelity animation rendering capabilities.

Corporate training and educational technology markets increasingly rely on animated content for engagement and knowledge retention. These sectors demand cost-effective solutions that can produce professional-quality animations without extensive manual intervention. Medical visualization, architectural rendering, and industrial design applications also require precise frame generation algorithms to accurately represent complex movements and transformations.

The advertising and marketing industry represents another significant demand driver, as brands increasingly utilize animated content across digital platforms. Social media marketing particularly benefits from smooth, high-quality animations that capture audience attention in competitive environments. Short-form video content creation tools require automated frame generation capabilities to enable non-technical users to produce professional-quality animations.

Emerging markets in Asia-Pacific and Latin America show accelerating adoption of animation technologies, driven by growing digital infrastructure and content localization needs. These regions present substantial opportunities for scalable frame generation solutions that can adapt to diverse cultural and linguistic requirements while maintaining consistent quality standards.

Technical requirements continue evolving toward real-time processing capabilities, cross-platform compatibility, and integration with existing production workflows. Market demand increasingly favors solutions that combine artificial intelligence with traditional animation techniques, enabling both automated enhancement and creative control. The convergence of cloud computing and edge processing creates opportunities for distributed frame generation systems that can scale dynamically based on project requirements.

Current State and Challenges in Frame Generation Algorithms

Frame generation algorithms have evolved significantly over the past decade, transitioning from traditional interpolation methods to sophisticated deep learning approaches. Current state-of-the-art solutions primarily rely on neural networks, including convolutional neural networks (CNNs), recurrent neural networks (RNNs), and more recently, transformer-based architectures. These algorithms aim to synthesize intermediate frames between existing keyframes or generate entirely new frames based on learned motion patterns.

The geographical distribution of frame generation technology development shows concentrated advancement in North America, particularly in Silicon Valley and Seattle, where major tech companies invest heavily in animation and gaming technologies. Europe contributes significantly through research institutions in the UK and Germany, while Asia-Pacific regions, especially Japan, South Korea, and China, drive innovation through their robust gaming and animation industries.

Contemporary algorithms face substantial challenges in maintaining temporal consistency across generated sequences. Flickering artifacts, motion blur inconsistencies, and object boundary preservation remain persistent issues that degrade animation quality. The computational complexity of real-time frame generation presents another significant constraint, particularly for high-resolution content and complex scenes with multiple moving objects.

Memory requirements pose additional limitations, as current deep learning models demand substantial GPU resources for training and inference. This creates barriers for smaller studios and independent developers seeking to implement advanced frame generation capabilities. The trade-off between processing speed and output quality continues to challenge algorithm designers.
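
A rough worked estimate shows why resolution drives memory use. Assume, hypothetically, a single convolutional layer that keeps 64 feature channels at full output resolution in 16-bit floating point (2 bytes per value); the figures below are illustrative and are not taken from any specific model.

```python
GIB = 1024 ** 3


def feature_map_bytes(width: int, height: int, channels: int, bytes_per_value: int) -> int:
    """Bytes needed for one dense activation tensor with batch size 1."""
    return width * height * channels * bytes_per_value


# Hypothetical layer: 64 channels at full output resolution, fp16 (2 bytes).
uhd = feature_map_bytes(3840, 2160, 64, 2)
fhd = feature_map_bytes(1920, 1080, 64, 2)
print(f"3840x2160: {uhd:,} bytes ({uhd / GIB:.2f} GiB)")
print(f"1920x1080: {fhd:,} bytes ({fhd / GIB:.2f} GiB)")
```

One such tensor needs about 0.99 GiB at 3840 × 2160 and exactly a quarter of that at 1920 × 1080. A network keeps several such tensors alive during inference, and training additionally stores activations for backpropagation, which multiplies the footprint.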

Training data quality and diversity represent critical bottlenecks in algorithm development. Existing datasets often lack sufficient variety in motion types, lighting conditions, and scene complexity, leading to models that perform well on specific content types but struggle with generalization. The scarcity of high-quality, annotated training data particularly affects specialized animation domains such as fluid dynamics, particle effects, and complex character interactions.

Evaluation metrics for frame generation quality remain inconsistent across the industry. Full-reference metrics such as PSNR, SSIM, and the learned perceptual metric LPIPS provide quantitative assessments, but because they score frames individually, they often fail to capture the subjective animation quality factors that human viewers prioritize, such as motion smoothness and temporal stability. This measurement gap complicates algorithm comparison and optimization efforts.
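
PSNR, the simplest full-reference metric, illustrates this gap. The sketch below computes it for small grayscale frames stored as nested lists (an assumption made to keep the example dependency-free) and compares two candidate in-between frames against a reference edge.

```python
import math


def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equally sized grayscale frames."""
    if len(reference) != len(generated) or any(
        len(a) != len(b) for a, b in zip(reference, generated)
    ):
        raise ValueError("frames must have the same shape")
    squared_error, count = 0.0, 0
    for row_ref, row_gen in zip(reference, generated):
        for a, b in zip(row_ref, row_gen):
            squared_error += (a - b) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0.0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)


reference = [[0, 0, 255, 255]]
shifted = [[0, 255, 255, 255]]  # sharp edge, one pixel out of place
blurred = [[0, 64, 191, 255]]   # smeared edge in the right place
print(round(psnr(reference, shifted), 2), round(psnr(reference, blurred), 2))
```

The blurred frame scores higher than the sharp but slightly misplaced one, even though a smeared edge is a typical interpolation artifact. Because pixel-wise error rewards averaging, learned metrics and subjective viewing studies are used alongside it.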

Current solutions also struggle to handle occlusions and disocclusions effectively, often producing ghosting artifacts or unrealistic object appearances when elements move behind or emerge from other scene components. The challenge intensifies in scenarios involving transparent or semi-transparent objects, where traditional optical flow methods, which assign a single motion vector to each pixel, cannot represent the overlapping motions and therefore prove insufficient.
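
A common way to detect occlusions from optical flow is a forward-backward consistency check: following a pixel's forward flow and then the backward flow at its destination should bring it back to where it started, and pixels where this round trip fails are flagged as likely occluded. The sketch below uses a 1-D scanline, nearest-neighbour lookup, and a fixed tolerance `tol`; all three are simplifying assumptions, and practical systems typically use 2-D flow, bilinear sampling, and thresholds that scale with flow magnitude.

```python
def occlusion_mask(flow_fw, flow_bw, tol=0.5):
    """Flag pixels of frame 0 whose forward flow is not undone by the backward flow.

    flow_fw[x]: displacement of pixel x from frame 0 to frame 1 (1-D scanline).
    flow_bw[x]: displacement of pixel x from frame 1 back to frame 0.
    Returns True where pixel x is likely occluded in, or leaves, frame 1.
    """
    width = len(flow_fw)
    mask = []
    for x in range(width):
        xi = round(x + flow_fw[x])  # nearest-neighbour lookup for brevity
        if xi < 0 or xi >= width:
            mask.append(True)       # moved out of view
            continue
        round_trip = flow_fw[x] + flow_bw[xi]  # close to 0 if the flows agree
        mask.append(abs(round_trip) > tol)
    return mask


# An object at pixels 2-3 moves two pixels right over a static background.
fw = [0, 0, 2, 2, 0, 0, 0, 0]
bw = [0, 0, 0, 0, -2, -2, 0, 0]
print(occlusion_mask(fw, bw))
```

Only background pixels 4 and 5, which the moving object covers in the second frame, are flagged.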

Existing Frame Generation Algorithm Solutions

01 Motion interpolation and frame rate conversion techniques

Frame generation algorithms utilize motion interpolation and frame rate conversion techniques to create intermediate frames between existing frames in an animation sequence. These methods analyze motion vectors and pixel movements to generate smooth transitions, effectively increasing the frame rate and improving the perceived fluidity of animations. Advanced interpolation algorithms can predict object trajectories and apply temporal filtering to reduce artifacts while maintaining visual coherence.

- Motion interpolation and frame rate conversion techniques: Frame generation algorithms utilize motion interpolation methods to create intermediate frames between existing frames, effectively increasing the frame rate of animations. These techniques analyze motion vectors and pixel movements to generate smooth transitions, improving the perceived fluidity of animations. Advanced interpolation methods can adapt to different motion patterns and scene complexities to maintain visual quality.

- Neural network and machine learning based frame synthesis: Modern frame generation approaches employ neural networks and deep learning models to synthesize high-quality intermediate frames. These algorithms learn from large datasets to predict and generate frames that maintain temporal consistency and visual fidelity. The machine learning models can handle complex scenarios including occlusions, lighting changes, and non-linear motion patterns to produce realistic animation sequences.

- Temporal coherence and artifact reduction methods: Quality enhancement in frame generation focuses on maintaining temporal coherence across generated frames while minimizing visual artifacts such as ghosting, blurring, and flickering. These methods implement sophisticated filtering and post-processing techniques to ensure smooth transitions and consistent visual appearance. Advanced algorithms detect and correct potential artifacts before they become visible in the final animation output.

- Adaptive frame generation based on content analysis: Content-aware frame generation algorithms analyze the characteristics of input frames to adaptively adjust generation parameters. These systems identify regions of interest, motion complexity, and scene changes to optimize the frame generation process. By tailoring the algorithm behavior to specific content types, these methods achieve better quality results across diverse animation scenarios while efficiently managing computational resources.

- Real-time frame generation optimization for interactive applications: Optimization techniques enable frame generation algorithms to operate in real-time for interactive applications such as gaming and live video processing. These approaches balance computational efficiency with output quality through parallel processing, hardware acceleration, and algorithmic simplifications. The methods ensure consistent performance while maintaining acceptable animation quality standards for time-sensitive applications.
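
Frame rate conversion starts by working out where each output frame falls on the source timeline. The sketch below does this with exact fractions; the function name and the choice to return a phase `t` in [0, 1) are assumptions for illustration. Output frames with t = 0 can be copied directly, and all others must be synthesized by an interpolator at phase t between two source frames.

```python
from __future__ import annotations

from fractions import Fraction


def conversion_plan(src_fps: int, dst_fps: int, n_out: int) -> list[tuple[int, Fraction]]:
    """For each output frame k, return (earlier source frame i, phase t in [0, 1))."""
    plan = []
    for k in range(n_out):
        i = (k * src_fps) // dst_fps              # earlier source frame
        t = Fraction(k * src_fps, dst_fps) - i    # position between frames i and i + 1
        plan.append((i, t))
    return plan


for k, (i, t) in enumerate(conversion_plan(24, 60, 6)):
    action = "copy source frame" if t == 0 else f"interpolate {i}->{i + 1} at t={t}"
    print(k, action)
```

When converting 24 fps to 60 fps, only every fifth output frame lines up with a source frame; the other four need interpolation at phases 2/5, 4/5, 1/5, and 3/5. Repeating source frames instead (3:2 pulldown) shows them for alternately three and two output frames, an uneven cadence that viewers perceive as judder.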

02 Neural network and machine learning based frame synthesis

Modern frame generation approaches employ neural networks and deep learning models to synthesize high-quality intermediate frames. These systems are trained on large datasets to understand motion patterns, object deformation, and scene dynamics. The machine learning models can intelligently predict frame content by learning complex temporal relationships, resulting in more natural-looking animations with reduced motion blur and improved detail preservation compared to traditional interpolation methods.

03 Optical flow estimation for frame generation

Optical flow algorithms calculate the motion field between consecutive frames to guide the generation of intermediate frames. These techniques analyze pixel displacement patterns and velocity vectors to create accurate motion representations. By computing dense or sparse optical flow fields, the algorithms can warp and blend source frames to produce temporally consistent intermediate frames that maintain object boundaries and handle occlusions effectively.

04 Artifact reduction and quality enhancement methods

Frame generation systems incorporate various techniques to minimize visual artifacts such as ghosting, halos, and motion judder that can degrade animation quality. These methods include adaptive blending strategies, edge-aware processing, and temporal consistency checks. Quality enhancement algorithms also address issues like flickering and unnatural motion by applying post-processing filters and implementing feedback mechanisms that adjust generation parameters based on content characteristics.

05 Real-time frame generation and rendering optimization

Real-time frame generation algorithms focus on computational efficiency to enable smooth playback in interactive applications and live rendering scenarios. These approaches utilize hardware acceleration, parallel processing architectures, and optimized data structures to reduce latency. The systems balance quality and performance by implementing adaptive algorithms that adjust complexity based on available computational resources, ensuring consistent frame delivery rates while maintaining acceptable visual quality.

Key Players in Animation Software and Algorithm Industry

The market for frame generation algorithms that improve animation quality is a rapidly evolving technological landscape, currently in its growth phase and driven by increasing demand for high-quality visual content across gaming, entertainment, and digital media. Its global value is estimated in the billions, with significant expansion potential as content creators seek higher visual fidelity and smoother animation. Technology maturity varies considerably among key players: NVIDIA Corp. leads with GPU-accelerated solutions and AI-driven rendering technologies, while Samsung Electronics Co., Ltd. and Sony Group Corp. contribute display and hardware innovations. Tencent Technology and NetEase pursue gaming-focused implementations, whereas Intel Corp. and Qualcomm Inc. provide foundational processing capabilities. Emerging players such as Miris offer specialized 3D streaming platforms, and academic institutions such as Zhejiang University and Beihang University drive fundamental research. Established semiconductor companies compete alongside specialized software companies and content platforms, indicating a maturing but still fragmented market with substantial room for innovation.

Tencent Technology (Shenzhen) Co., Ltd.

Technical Solution: Tencent has invested heavily in AI-driven frame generation for gaming and video applications, developing machine learning models that can predict and generate high-quality intermediate frames for real-time applications. Their approach focuses on lightweight neural networks optimized for mobile and cloud gaming platforms, utilizing temporal consistency algorithms and motion vector prediction to maintain visual quality while reducing computational overhead. The technology is integrated into their gaming engines and video streaming services to enhance user experience across different devices and network conditions.

Strengths: Large-scale deployment experience, mobile optimization expertise, integration with gaming platforms. Weaknesses: Less specialized hardware acceleration compared to GPU manufacturers, dependency on cloud infrastructure for complex processing.

NVIDIA Corp.

Technical Solution: NVIDIA has developed frame generation technology within its DLSS (Deep Learning Super Sampling) framework. DLSS 3 Frame Generation combines game-engine motion vectors with optical flow fields computed by the dedicated hardware Optical Flow Accelerator in Ada Lovelace (RTX 40 Series) GPUs, and runs a neural network on the GPU's Tensor Cores to generate an intermediate frame between each pair of traditionally rendered frames. NVIDIA has cited frame rate gains of up to 4x over native rendering when Frame Generation is combined with DLSS Super Resolution. The technology employs convolutional neural networks trained on high-quality reference data to predict pixel motion and generate temporally coherent frames with minimal artifacts.

Strengths: Industry-leading AI acceleration hardware, extensive training datasets, real-time performance optimization. Weaknesses: Limited to NVIDIA hardware ecosystem, high computational requirements for training models.

Core Innovations in Advanced Frame Generation Techniques

Motion prediction using one or more neural networks

Patent Pending · US20220230376A1

Innovation

- A recurrent, conditional generative adversarial network (GAN) with a Phase-Functioned Neural Network (PFNN) or Mode-Adaptive Neural Network (MANN) backbone, which enables autoregressive training and the correction of pose estimation errors without manual parameter tuning, and which uses an adversarial loss to improve animation quality.

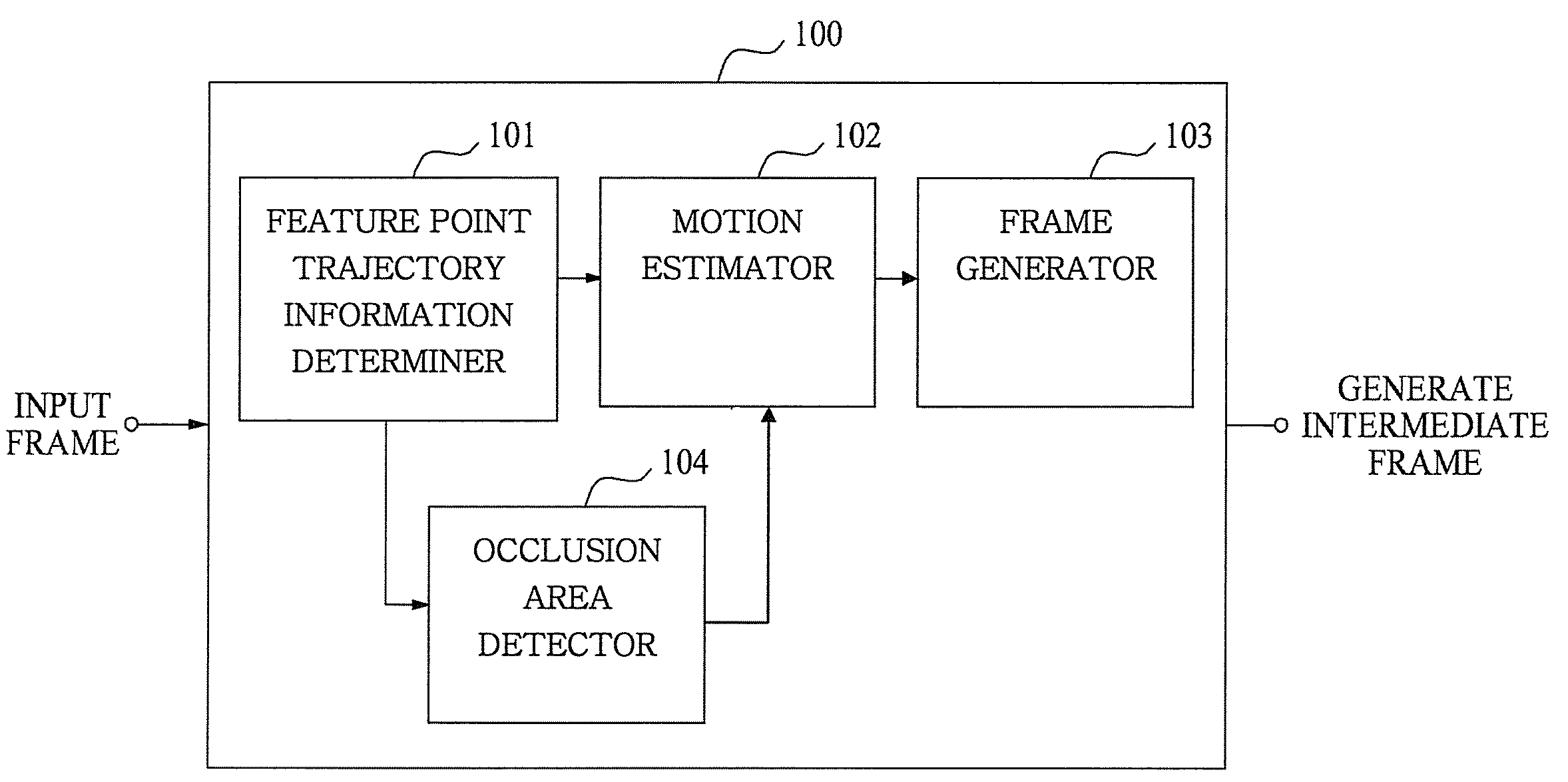

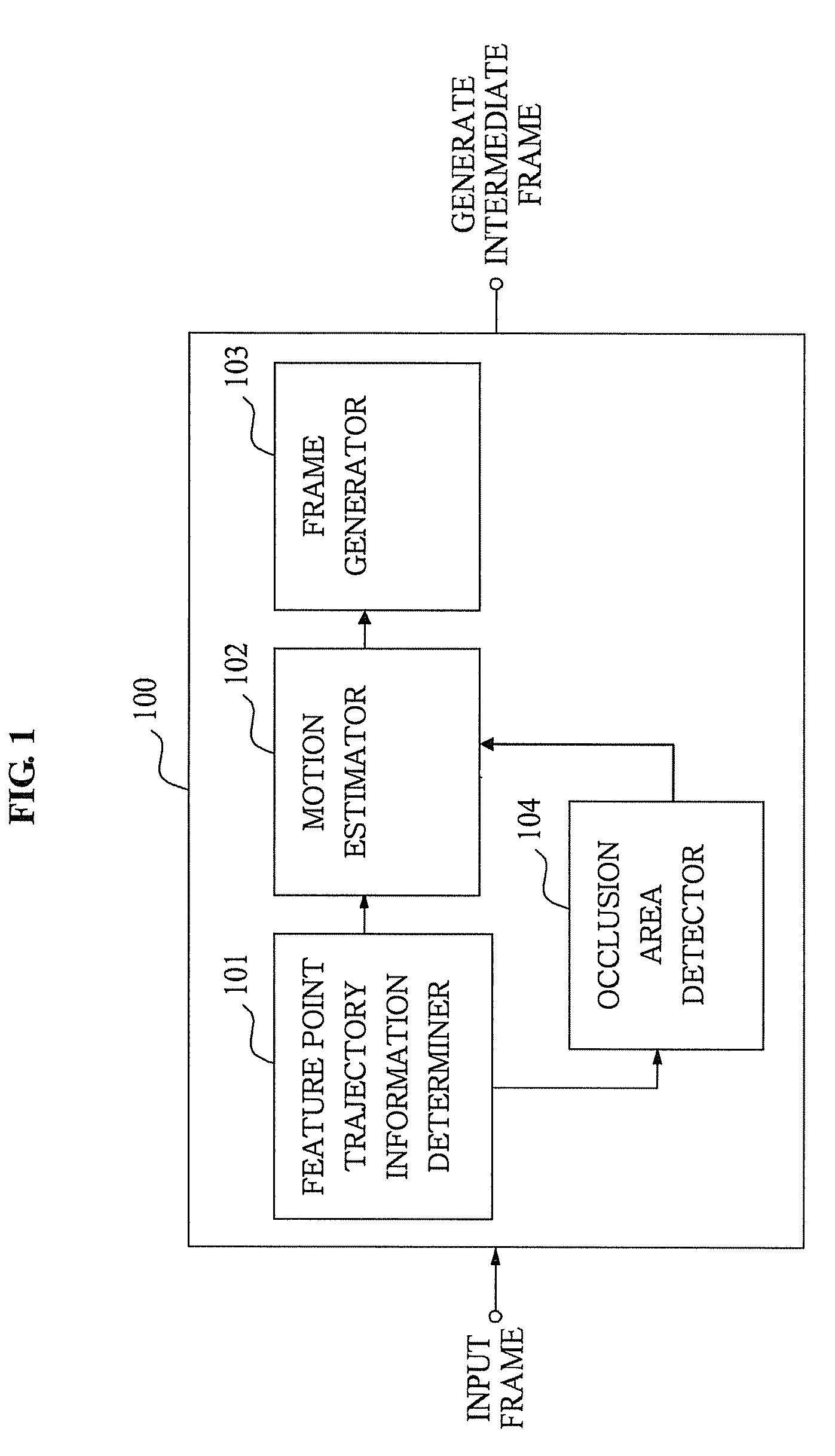

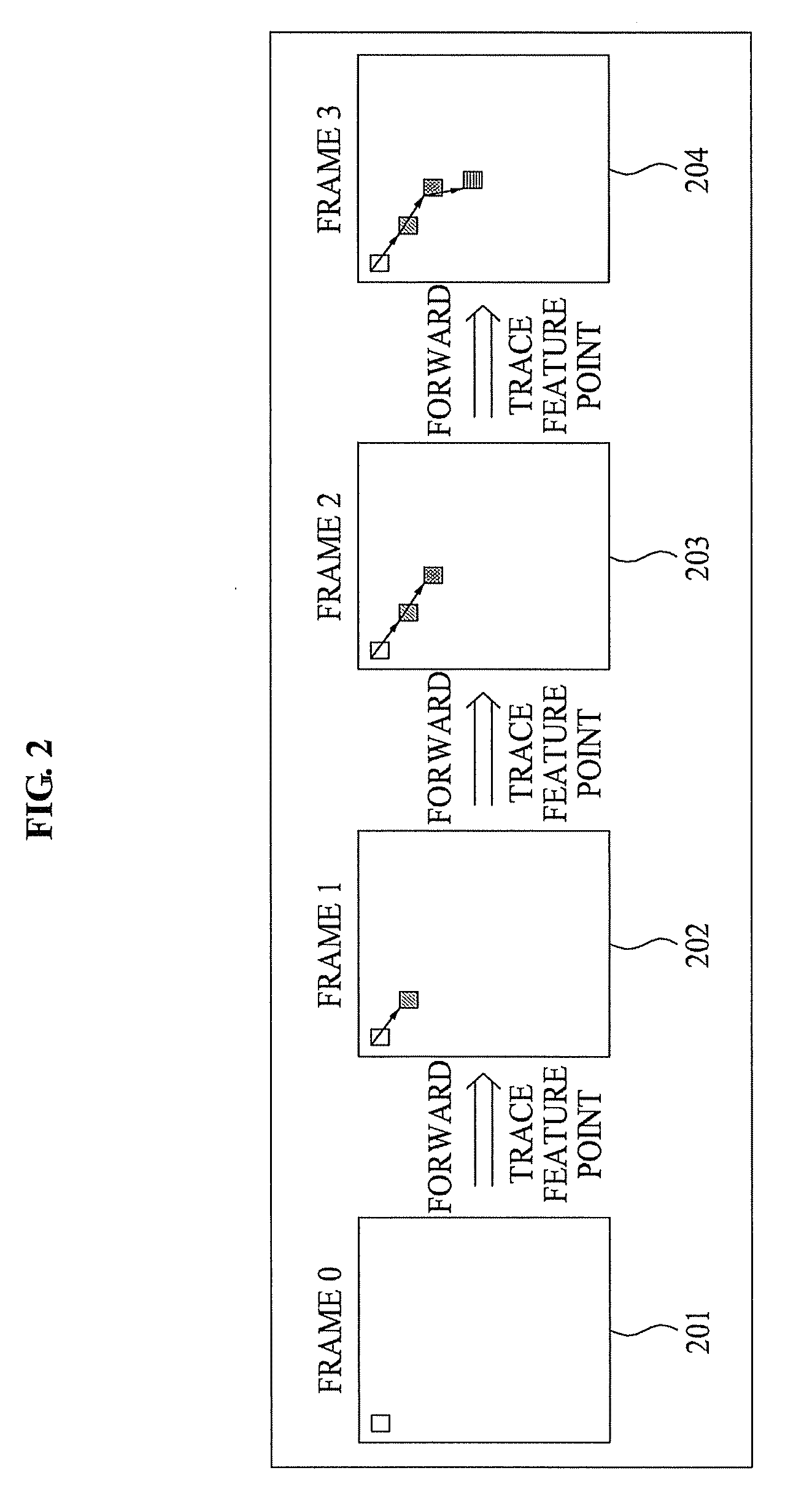

Apparatus and method for improving frame rate using motion trajectory

Patent Active · US20100104140A1

Innovation

- A method that determines forward feature point trajectory information, performs block-based backward motion estimation, and generates intermediate frames using a motion vector, with additional feature point extraction in cases of scene changes or insufficient feature points, and detects occlusion areas using Sum of Absolute Differences (SAD) to enhance frame rate and image quality.
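
The block-matching and SAD steps in this description can be illustrated with a generic exhaustive-search sketch. It is not the patented method: feature-point trajectory tracking is omitted, and the block size, search range, and per-pixel occlusion threshold are hypothetical values. Each block of the current frame is matched against displaced blocks of the previous frame, and a block whose best SAD is still large is treated as likely occluded or newly revealed.

```python
def sad(cur, ref, bx, by, dx, dy, block):
    """Sum of absolute differences between the block of `cur` with top-left
    corner (bx, by) and the block of `ref` displaced by (dx, dy)."""
    return sum(
        abs(cur[by + y][bx + x] - ref[by + dy + y][bx + dx + x])
        for y in range(block)
        for x in range(block)
    )


def backward_match(cur, ref, bx, by, block=2, search=2):
    """Match a current-frame block against the previous frame by exhaustive
    search. Returns ((dx, dy), sad) for the lowest-SAD candidate."""
    height, width = len(ref), len(ref[0])
    best = None
    for dy in range(-search, search + 1):
        for dx in range(-search, search + 1):
            top, left = by + dy, bx + dx
            if top < 0 or left < 0 or top + block > height or left + block > width:
                continue  # candidate block would fall outside the previous frame
            cost = sad(cur, ref, bx, by, dx, dy, block)
            if best is None or cost < best[1]:
                best = ((dx, dy), cost)
    return best


def likely_occluded(best_sad, block, per_pixel_threshold=16):
    """Heuristic: if even the best match differs by more than the threshold
    per pixel on average, the block probably shows uncovered content."""
    return best_sad > per_pixel_threshold * block * block


# A 2x2 patch moves one pixel to the right between the previous and current frame.
ref = [[0] * 6 for _ in range(6)]
cur = [[0] * 6 for _ in range(6)]
for y, row in enumerate([[10, 20], [30, 40]]):
    for x, v in enumerate(row):
        ref[1 + y][1 + x] = v
        cur[1 + y][2 + x] = v
print(backward_match(cur, ref, 2, 1))
```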

Real-time Rendering Performance Optimization Strategies

Real-time rendering performance optimization represents a critical intersection with frame generation algorithms, as both domains share fundamental computational constraints and quality objectives. The pursuit of enhanced animation quality through improved frame generation must be balanced against the stringent performance requirements of real-time applications, creating a complex optimization landscape that demands sophisticated strategic approaches.

Modern real-time rendering systems employ multi-threaded architectures to maximize hardware utilization while maintaining consistent frame rates. CPU-GPU parallelization strategies have evolved to overlap computation phases, where frame generation algorithms can leverage dedicated compute shaders running concurrently with traditional graphics pipeline operations. This architectural approach enables sophisticated interpolation and extrapolation techniques without compromising overall system responsiveness.

Temporal coherence optimization has emerged as a cornerstone strategy, exploiting frame-to-frame similarities to reduce computational overhead. Advanced motion vector estimation techniques enable predictive rendering approaches, where frame generation algorithms can anticipate pixel movements and pre-compute intermediate states. These methods significantly reduce redundant calculations while maintaining visual fidelity across animation sequences.
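
One widely used way to exploit frame-to-frame similarity is reprojection caching: pixels whose content was already visible in the previous frame reuse last frame's result, and only disoccluded pixels are recomputed. The 1-D sketch below assumes integer motion vectors and a precomputed disocclusion mask, and uses a hypothetical `shade` callback to stand in for expensive per-pixel work; real renderers use sub-pixel motion and depth tests to validate the history.

```python
from __future__ import annotations

from typing import Callable


def shade_with_reprojection(
    prev_frame: list[float],
    motion: list[int],
    disoccluded: list[bool],
    shade: Callable[[int], float],
) -> tuple[list[float], int]:
    """Build the current 1-D frame, reusing last frame's results where possible.

    motion[x]: integer screen-space motion, so pixel x now shows what was at
    x - motion[x] in the previous frame. disoccluded[x]: True where that
    content was not visible last frame. Returns the frame and the number of
    expensive shade() calls made.
    """
    width = len(prev_frame)
    frame, shade_calls = [], 0
    for x in range(width):
        src = x - motion[x]
        if not disoccluded[x] and 0 <= src < width:
            frame.append(prev_frame[src])  # reuse: history is valid
        else:
            frame.append(shade(x))         # recompute: no usable history
            shade_calls += 1
    return frame, shade_calls


frame, calls = shade_with_reprojection([1.0, 2.0, 3.0, 4.0], [1, 1, 1, 1], [False] * 4, lambda x: 100.0 + x)
print(frame, calls)
```

Here the whole scanline scrolls one pixel, so only the pixel entering at the left edge needs fresh shading.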

Memory bandwidth optimization plays a crucial role in real-time frame generation performance. Efficient data structures such as hierarchical Z-buffers and compressed texture formats minimize memory transfer bottlenecks that traditionally constrain animation quality improvements. Cache-friendly algorithms that maximize spatial and temporal locality have demonstrated substantial performance gains in complex animation scenarios.
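
A hierarchical Z-buffer reduces memory traffic by letting a visibility test read one coarse texel instead of many full-resolution depth samples. The sketch below builds such a pyramid from a square, power-of-two depth buffer held in nested lists, assuming the convention that smaller depth values are closer to the camera; with reversed-Z depth the max reduction becomes a min. GPU implementations usually build the levels on the GPU itself.

```python
def build_hiz(depth):
    """Build a max-depth mip pyramid from a square, power-of-two depth buffer.

    Each texel of level k+1 stores the farthest (max) depth of its 2x2
    children in level k, so it is a conservative bound for that region.
    """
    levels = [depth]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        n = len(prev) // 2
        levels.append([
            [max(prev[2 * y][2 * x], prev[2 * y][2 * x + 1],
                 prev[2 * y + 1][2 * x], prev[2 * y + 1][2 * x + 1])
             for x in range(n)]
            for y in range(n)
        ])
    return levels


def occluded(levels, level, x, y, nearest_depth):
    """Conservative test: an object whose nearest point lies behind the
    farthest depth stored for this texel cannot be visible there."""
    return nearest_depth > levels[level][y][x]


depth = [
    [0.1, 0.2, 0.5, 0.6],
    [0.3, 0.4, 0.7, 0.8],
    [0.9, 0.1, 0.2, 0.2],
    [0.3, 0.35, 0.2, 0.25],
]
pyramid = build_hiz(depth)
print(pyramid[1], pyramid[2])
```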

Adaptive quality scaling represents an emerging optimization paradigm, where frame generation algorithms dynamically adjust computational complexity based on scene complexity and available processing resources. This approach enables consistent performance targets while maximizing visual quality during less demanding rendering phases, creating a responsive balance between animation fidelity and real-time constraints.
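
Adaptive quality scaling is often implemented as a feedback loop on measured frame time. The sketch below is a simple proportional controller for a render-resolution scale factor; the gain, the clamping range, and the reliance on a single frame's timing are assumptions, and practical systems usually smooth timings over several frames to avoid oscillation.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveScaler:
    """Proportional controller for a per-axis render-resolution scale."""

    target_ms: float         # frame-time budget, e.g. 1000 / 60
    scale: float = 1.0       # fraction of native resolution per axis
    min_scale: float = 0.5
    max_scale: float = 1.0
    gain: float = 0.5        # how strongly to react to the budget error

    def update(self, frame_ms: float) -> float:
        # Relative error: positive when the last frame finished under budget.
        error = (self.target_ms - frame_ms) / self.target_ms
        new_scale = self.scale * (1.0 + self.gain * error)
        self.scale = min(self.max_scale, max(self.min_scale, new_scale))
        return self.scale


scaler = AdaptiveScaler(target_ms=16.0)
for measured in (24.0, 8.0, 8.0):  # a heavy frame, then two light ones
    print(measured, scaler.update(measured))
```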

Hardware-specific optimizations leverage modern GPU architectures' specialized units, including tensor cores for AI-enhanced frame interpolation and variable rate shading capabilities for selective quality enhancement. These optimizations enable sophisticated frame generation techniques previously considered computationally prohibitive for real-time applications.

AI-Driven Frame Interpolation and Generation Methods

Artificial intelligence has fundamentally transformed frame interpolation and generation methodologies, introducing neural network architectures that significantly outperform classical approaches built on hand-engineered optical flow estimation. Deep learning models, particularly convolutional neural networks and generative adversarial networks, have emerged as the dominant paradigm for creating intermediate frames with enhanced temporal coherence and visual fidelity.

Contemporary AI-driven interpolation systems leverage advanced architectures such as Video Frame Interpolation Networks (VFIN) and Super SloMo algorithms, which utilize U-Net structures combined with optical flow estimation modules. These networks learn complex motion patterns from extensive training datasets, enabling them to generate plausible intermediate frames even in challenging scenarios involving occlusions, non-linear motion, and complex lighting conditions.
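
The flow-warping and fusion step at the heart of Super SloMo can be sketched without the networks. Given bidirectional flows F0→1 and F1→0, Super SloMo approximates the flows from the intermediate time t as Ft→0 = −(1−t)t·F0→1 + t²·F1→0 and Ft→1 = (1−t)²·F0→1 − t(1−t)·F1→0, warps both input frames toward t, and blends them with weights (1−t)·V0 and t·V1, where V0 and V1 are visibility maps. In the actual model, U-Nets estimate the flows, refine these approximations, and predict the visibility maps; in this sketch the flows and visibility are supplied as inputs, and signals are 1-D scanlines to keep the example short.

```python
def warp_1d(frame, flow):
    """Backward warp: out[x] = frame[x + flow[x]], linearly interpolated and
    clamped to the frame borders."""
    width = len(frame)
    out = []
    for x in range(width):
        pos = min(max(x + flow[x], 0.0), width - 1.0)
        x0 = int(pos)               # floor, since pos >= 0
        x1 = min(x0 + 1, width - 1)
        a = pos - x0
        out.append((1.0 - a) * frame[x0] + a * frame[x1])
    return out


def intermediate_flows(f01, f10, t):
    """Super SloMo's approximation of the flows from time t to frames 0 and 1."""
    ft0 = [-(1 - t) * t * a + t * t * b for a, b in zip(f01, f10)]
    ft1 = [(1 - t) ** 2 * a - t * (1 - t) * b for a, b in zip(f01, f10)]
    return ft0, ft1


def synthesize(i0, i1, f01, f10, t, v0=None, v1=None, eps=1e-8):
    """Fuse the two warped inputs, weighted by temporal distance and visibility.

    v0[x] / v1[x]: visibility of pixel x in frame 0 / frame 1 (1 = visible).
    They default to all ones here, i.e. no occlusion handling.
    """
    ft0, ft1 = intermediate_flows(f01, f10, t)
    g0, g1 = warp_1d(i0, ft0), warp_1d(i1, ft1)
    n = len(i0)
    v0 = v0 if v0 is not None else [1.0] * n
    v1 = v1 if v1 is not None else [1.0] * n
    frame = []
    for x in range(n):
        w0, w1 = (1 - t) * v0[x], t * v1[x]
        frame.append((w0 * g0[x] + w1 * g1[x]) / (w0 + w1 + eps))
    return frame


# A spike moves two pixels to the right between frame 0 and frame 1.
i0 = [0, 0, 10, 0, 0, 0, 0, 0]
i1 = [0, 0, 0, 0, 10, 0, 0, 0]
mid = synthesize(i0, i1, [2.0] * 8, [-2.0] * 8, 0.5)
print([round(v, 3) for v in mid])
```

For this uniform motion the approximated flows are exact, and the synthesized midpoint frame places the spike halfway, at pixel 3.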

Transformer-based architectures have recently gained prominence in frame generation tasks, offering superior long-range dependency modeling compared to traditional convolutional approaches. Vision Transformers adapted for temporal interpolation demonstrate remarkable capability in understanding global motion patterns and maintaining consistency across extended sequences, particularly beneficial for animation workflows requiring smooth character movements and scene transitions.

Generative adversarial networks for video, such as TecoGAN, incorporate temporal consistency losses and perceptual quality metrics to produce visually compelling results. TecoGAN was developed for temporally coherent video super-resolution, while image-oriented models such as ESRGAN contribute perceptual and adversarial losses that frame-synthesis pipelines can borrow. These models use discriminator networks that evaluate spatial quality and, in temporal variants, temporal coherence, encouraging generated frames to keep realistic motion characteristics while preserving the fine-grained detail essential for high-quality animation output.
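A generic warping-based temporal consistency term, of the kind many video-generation methods add to their adversarial and perceptual losses, can be sketched as follows: the previous output frame is motion-compensated with optical flow and compared with the current output. This is not TecoGAN's exact formulation, which also relies on a spatio-temporal discriminator and a "ping-pong" loss; the flow convention, nearest-neighbour warping, and optional occlusion mask are simplifying assumptions.

```python
import numpy as np


def warp_nearest(img, flow):
    """Backward-warp img by flow (H, W, 2) with nearest-neighbour sampling and border clamping."""
    h, w = img.shape
    ys, xs = np.mgrid[0:h, 0:w]
    sx = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    sy = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return img[sy, sx]


def temporal_consistency_loss(prev_out, cur_out, flow_cur_to_prev, mask=None):
    """Mean L1 difference between the current output and the motion-compensated previous output.

    flow_cur_to_prev points from each pixel of the current frame to where it
    came from in the previous frame. mask (optional) is 1 where the comparison
    is valid and 0 where pixels should be ignored, e.g. occlusions.
    """
    warped_prev = warp_nearest(prev_out, flow_cur_to_prev)
    diff = np.abs(cur_out - warped_prev)
    if mask is not None:
        return float((diff * mask).sum() / max(mask.sum(), 1.0))
    return float(diff.mean())
```

In training, this term would be computed on differentiable tensors and weighted against the adversarial and perceptual terms; NumPy is used here only to show the arithmetic.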

Diffusion models have recently introduced new approaches to frame generation, using iterative denoising to synthesize intermediate frames of high perceptual quality. Because they model a distribution over plausible frames rather than regressing a single averaged prediction, these probabilistic models tend to handle complex textures well and to maintain artistic style consistency, making them particularly valuable for stylized animation content where traditional interpolation methods often fail to preserve aesthetic integrity.
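The iterative denoising process can be written, in the standard DDPM formulation, as the reverse update below. Conditioning the noise-prediction network $\epsilon_\theta$ on the two key frames $I_0$ and $I_1$ is an assumption about how an interpolation variant might be set up; published methods differ, and some denoise in a learned latent space rather than in pixels. Here $\beta_k$ is the noise schedule, $\sigma_k$ the sampling noise scale (one common choice is $\sigma_k^2 = \beta_k$), and $k$ indexes diffusion steps, not animation time.

```latex
x_{k-1} = \frac{1}{\sqrt{\alpha_k}}\left(x_k - \frac{\beta_k}{\sqrt{1-\bar{\alpha}_k}}\,\epsilon_\theta\!\left(x_k, k;\, I_0, I_1\right)\right) + \sigma_k z,
\qquad z \sim \mathcal{N}(0, \mathbf{I}), \quad \alpha_k = 1 - \beta_k, \quad \bar{\alpha}_k = \prod_{s=1}^{k} \alpha_s
```

Sampling starts from pure noise $x_K \sim \mathcal{N}(0, \mathbf{I})$ and applies the update for $k = K, \dots, 1$ (with $z = 0$ at the last step), yielding $x_0$ as the estimate of the intermediate frame. Because every step requires a full network evaluation, and the original DDPM used 1,000 steps, diffusion-based interpolation is usually far slower than single-pass CNN interpolators.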

Multi-scale processing techniques integrated within AI frameworks enable hierarchical frame generation, where coarse motion estimation guides fine-detail synthesis. Because a large displacement at full resolution becomes a small one at a coarse scale, each level only needs to search or refine a narrow range of motion, which improves computational efficiency while maintaining output quality. This addresses practical deployment requirements in production animation pipelines, where processing speed remains a critical consideration alongside visual fidelity.
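The coarse-to-fine idea can be illustrated with a deliberately simple estimator. The sketch below builds image pyramids by 2x2 averaging, finds a single global translation by brute-force search on the coarsest level, and then doubles and refines that estimate at each finer level. Learned interpolators (PWC-Net-style flow estimators, for example) apply the same principle to dense per-pixel flow with learned features and cost volumes; the global-shift motion model, the L1 matching error, and the search radii here are assumptions chosen for clarity.

```python
import numpy as np


def downsample(img):
    """Halve resolution by averaging 2x2 blocks (odd edges are cropped)."""
    h, w = img.shape[0] // 2 * 2, img.shape[1] // 2 * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))


def best_shift(a, b, center, radius):
    """Search integer shifts within `radius` of `center` minimising mean |a - unshifted b|."""
    best, best_err = center, np.inf
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            err = np.abs(a - np.roll(b, (-dy, -dx), axis=(0, 1))).mean()
            if err < best_err:
                best, best_err = (dy, dx), err
    return best


def coarse_to_fine_shift(a, b, levels=3, coarse_radius=4, fine_radius=1):
    """Estimate the global translation (dy, dx) such that b(y, x) ≈ a(y - dy, x - dx)."""
    pyr_a, pyr_b = [a], [b]
    for _ in range(levels - 1):
        pyr_a.append(downsample(pyr_a[-1]))
        pyr_b.append(downsample(pyr_b[-1]))
    # Coarsest level: wider search, but on a much smaller image.
    shift = best_shift(pyr_a[-1], pyr_b[-1], (0, 0), coarse_radius)
    # Finer levels: double the previous estimate and refine in a small window.
    for la, lb in zip(reversed(pyr_a[:-1]), reversed(pyr_b[:-1])):
        shift = best_shift(la, lb, (2 * shift[0], 2 * shift[1]), fine_radius)
    return shift
```

On a 64 x 64 frame with three levels, this evaluates 81 candidate shifts on a 16 x 16 image plus 9 at each finer level; searching a comparable ±16-pixel range directly at full resolution would require 33 x 33 = 1,089 candidates on the 64 x 64 image.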
