Integrating Active Memory in Virtual Network Functions

MAR 7, 2026 · 9 MIN READ

Active Memory VNF Integration Background and Objectives

Network infrastructure has undergone a fundamental transformation from traditional hardware-based appliances to software-defined architectures. Virtual Network Functions (VNFs) emerged as a cornerstone of Network Functions Virtualization (NFV), enabling network operators to deploy network services as software instances running on commodity hardware. This shift has improved deployment flexibility, operational efficiency, and cost-effectiveness across telecommunications and enterprise networks.

Traditional VNF implementations have primarily relied on conventional memory architectures, where data processing follows established patterns of fetching, processing, and storing information. However, the exponential growth in network traffic, the proliferation of IoT devices, and the demanding requirements of 5G and edge computing applications have exposed significant limitations in current VNF performance capabilities.

Active memory technology represents a paradigm shift in memory system design, where memory modules incorporate processing capabilities directly within the memory subsystem. Unlike passive memory that merely stores and retrieves data, active memory can perform computational operations on data in-situ, reducing data movement overhead and enabling more efficient processing workflows.
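
The difference between passive and active memory can be made concrete with a toy model. The sketch below is only an illustration under stated assumptions: `MemoryBank`, `read_all`, `filter_in_place`, and the `is_flagged` rule are hypothetical names, not part of any real active memory API, and the "bus" is simulated by counting bytes returned to the caller.

```python
from dataclasses import dataclass


@dataclass
class MemoryBank:
    """Hypothetical memory bank holding packet records (illustrative only)."""
    records: list[bytes]

    def read_all(self) -> tuple[list[bytes], int]:
        """Passive path: every record crosses the memory bus to the host."""
        moved = sum(len(r) for r in self.records)
        return list(self.records), moved

    def filter_in_place(self, predicate) -> tuple[list[bytes], int]:
        """Active path: the predicate runs next to the data; only matches move."""
        matches = [r for r in self.records if predicate(r)]
        moved = sum(len(r) for r in matches)
        return matches, moved


def is_flagged(record: bytes) -> bool:
    # Assumed rule for the example: a first byte of 0xFF marks a record of interest.
    return record[0] == 0xFF


# Four 64-byte records, one of which is flagged.
bank = MemoryBank([bytes([0xFF]) + bytes(63), bytes(64), bytes(64), bytes(64)])

# Passive memory: fetch everything, then filter on the host.
all_records, passive_bytes = bank.read_all()
host_matches = [r for r in all_records if is_flagged(r)]

# Active memory: filter where the data lives, return only the matches.
active_matches, active_bytes = bank.filter_in_place(is_flagged)

print(passive_bytes, active_bytes)
```

Both paths produce the same matches; the only difference in this model is how many bytes are moved to obtain them, which is the overhead the paragraph above describes.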

The integration of active memory into VNF architectures addresses critical performance bottlenecks that have constrained traditional implementations. Conventional VNFs often suffer from memory bandwidth limitations, high latency in data access patterns, and inefficient utilization of processing resources due to frequent data transfers between memory and processing units.

The primary objective of integrating active memory in VNFs is to achieve substantial performance improvements in packet processing, traffic analysis, and network function execution. This integration aims to reduce processing latency by minimizing data movement, increase throughput capacity through parallel in-memory processing, and enhance energy efficiency by eliminating redundant data transfers.

Furthermore, this technological convergence seeks to enable more sophisticated network functions that were previously computationally prohibitive. Advanced deep packet inspection, real-time traffic analytics, and complex security processing can benefit significantly from the computational capabilities embedded within active memory systems.

The strategic goal extends beyond mere performance enhancement to fundamentally reimagining how network functions can be architected and deployed. By leveraging active memory capabilities, VNFs can achieve greater scalability, improved resource utilization, and enhanced responsiveness to dynamic network conditions, ultimately supporting the next generation of network service requirements.

Market Demand for Memory-Enhanced Virtual Network Functions

The telecommunications industry is experiencing unprecedented demand for network virtualization solutions that can handle increasingly complex workloads while maintaining high performance standards. Traditional Virtual Network Functions (VNFs) face significant limitations in memory management, creating bottlenecks that impact service delivery and operational efficiency. This has generated substantial market interest in memory-enhanced VNF solutions that integrate active memory technologies to address these performance constraints.

Enterprise customers across various sectors are driving demand for VNFs with enhanced memory capabilities, particularly in cloud computing, content delivery networks, and real-time communication services. Organizations require network functions that can dynamically adapt to varying traffic patterns while maintaining consistent performance levels. The growing adoption of edge computing architectures has further intensified this demand, as edge deployments require VNFs capable of processing data with minimal latency.

Service providers are increasingly seeking VNF solutions that offer improved resource utilization and reduced operational costs. Memory-enhanced VNFs present opportunities to consolidate multiple network functions onto fewer hardware platforms while maintaining service quality. This consolidation potential represents significant cost savings in both capital expenditure and operational overhead, making it an attractive proposition for telecommunications operators facing margin pressures.

The emergence of 5G networks has created new market requirements for VNFs that can support ultra-low latency applications and massive device connectivity. These next-generation networks demand memory architectures capable of handling diverse traffic types simultaneously while providing deterministic performance guarantees. Network slice management, a key 5G capability, particularly benefits from active memory integration that enables dynamic resource allocation across different service categories.

Financial services, healthcare, and industrial automation sectors represent high-value market segments with specific requirements for memory-enhanced VNFs. These industries demand network functions with predictable performance characteristics and the ability to handle mission-critical applications. The regulatory compliance requirements in these sectors also drive demand for VNF solutions that can provide detailed performance monitoring and audit capabilities.

Market research indicates strong growth potential for memory-enhanced VNF solutions, driven by the convergence of cloud-native architectures and network function virtualization. The increasing complexity of modern applications and the need for real-time data processing capabilities continue to expand the addressable market for these advanced VNF implementations.

Current State and Challenges of Active Memory in VNF

The integration of active memory technologies within Virtual Network Functions represents a significant paradigm shift in network infrastructure design, yet the current implementation landscape reveals substantial disparities in technological maturity and deployment readiness. Active memory systems, which enable dynamic data processing and computation directly within memory subsystems, have shown promising potential in traditional computing environments but face unique challenges when adapted to the virtualized networking domain.

Current VNF implementations predominantly rely on conventional memory architectures that separate processing and storage functions, creating inherent bottlenecks in data-intensive network operations. The existing memory subsystems in virtualized environments typically operate as passive storage components, requiring frequent data transfers between memory and processing units. This traditional approach significantly impacts performance in scenarios requiring real-time packet processing, deep packet inspection, and complex network analytics.

The technological readiness of active memory solutions varies considerably across different implementation approaches. Processing-in-memory technologies have demonstrated feasibility in laboratory environments, with several prototype systems achieving notable performance improvements in specific networking workloads. However, the transition from proof-of-concept implementations to production-ready VNF deployments remains incomplete, with most solutions still in early development phases.

Geographic distribution of active memory research and development shows concentrated efforts in North America, Europe, and East Asia, with significant investments from both academic institutions and industry players. The United States leads in fundamental research and patent development, while European initiatives focus on standardization and interoperability frameworks. Asian markets, particularly in South Korea and Japan, emphasize commercial applications and manufacturing capabilities.

Several critical technical challenges impede widespread adoption of active memory in VNF environments. Memory coherency issues arise when multiple processing elements within active memory systems attempt simultaneous access to shared data structures, which is particularly problematic in multi-tenant virtualized environments. Power consumption and thermal management present additional constraints, as active memory systems typically require higher energy budgets than conventional memory architectures.

Programming model complexity represents another significant barrier, as existing VNF development frameworks lack native support for active memory paradigms. Current software development tools and libraries require substantial modifications to effectively leverage active memory capabilities, creating steep learning curves for network function developers. The absence of standardized APIs and programming interfaces further complicates integration efforts across different vendor platforms.

Scalability concerns emerge when considering large-scale VNF deployments, as active memory systems must maintain performance characteristics across varying workload intensities and tenant requirements. Current implementations struggle with dynamic resource allocation and load balancing, particularly in cloud-native environments where VNF instances frequently scale up or down based on demand patterns.

Existing Active Memory Integration Solutions for VNF

01 Memory management and allocation for virtual network functions

Virtual network functions require efficient memory management techniques to optimize resource utilization. This includes dynamic memory allocation, memory pooling, and memory sharing mechanisms that allow VNFs to scale and adapt to varying workload demands. Advanced memory management strategies enable better performance isolation and resource efficiency in virtualized network environments.

02 Active memory monitoring and optimization for VNF performance

Active memory monitoring systems track memory usage patterns and performance metrics in real-time for virtual network functions. These systems employ intelligent algorithms to detect memory bottlenecks, predict resource requirements, and trigger optimization actions. The monitoring framework enables proactive memory management to maintain service quality and prevent performance degradation.

03 Memory virtualization and abstraction layers for network functions

Memory virtualization technologies provide abstraction layers that decouple physical memory resources from virtual network functions. These layers enable memory overcommitment, flexible memory provisioning, migration, and consolidation across multiple VNF instances and different hardware platforms. The virtualization framework supports dynamic scaling of network services while maintaining isolation and security boundaries.

04 Memory caching and buffering mechanisms for VNF data processing

Caching and buffering strategies optimize data flow and processing efficiency in virtual network functions by storing frequently accessed data in high-speed memory. These mechanisms include multi-level cache hierarchies, intelligent prefetching algorithms, and adaptive buffer management that reduce latency and improve throughput. Advanced caching algorithms determine optimal data placement and eviction policies based on access patterns and priority levels. The implementation considers both packet processing requirements and state management needs.

05 Memory security and isolation for multi-tenant VNF environments

Security mechanisms ensure memory isolation and protection in multi-tenant virtual network function deployments. These include memory encryption, access control policies, and secure memory allocation techniques that prevent unauthorized access and data leakage between different VNF instances. The security framework addresses both hardware-based and software-based protection methods, maintaining data integrity and confidentiality while supporting efficient resource sharing.

Key Players in Active Memory and VNF Industry

The integration of active memory in virtual network functions represents an emerging technology area in the early-to-mid development stage, driven by increasing demands for network performance optimization and edge computing capabilities. The market shows significant growth potential as enterprises seek more efficient virtualized infrastructure solutions. Technology maturity varies considerably across key players, with established technology giants like IBM, Intel, and VMware leading in foundational virtualization and memory technologies, while telecommunications leaders such as Huawei, Ericsson, and Nokia focus on network function implementations. Cloud providers including Google and Amazon Technologies contribute through infrastructure platforms, while memory specialists like Micron Technology and SK Hynix advance the underlying hardware components. Academic institutions like Peking University and Beihang University drive research innovation, creating a competitive landscape where hardware, software, and telecommunications expertise converge to address complex memory integration challenges in virtualized network environments.

International Business Machines Corp.

Technical Solution: IBM has developed comprehensive active memory integration solutions for VNFs through their Cloud Pak for Network Automation platform. Their approach leverages intelligent memory management with real-time data caching and predictive analytics to optimize VNF performance. The solution incorporates machine learning algorithms to predict memory usage patterns and automatically adjust memory allocation based on network traffic demands. IBM's active memory system includes distributed memory pools that can be dynamically shared across multiple VNF instances, enabling efficient resource utilization. The technology supports both persistent and volatile memory types, with automatic data migration between different memory tiers based on access frequency and criticality. Their implementation includes advanced memory compression techniques and intelligent prefetching mechanisms to reduce latency in network function processing.

Strengths: Strong enterprise integration capabilities, comprehensive AI-driven memory optimization, robust scalability across hybrid cloud environments.

Weaknesses: High implementation complexity, significant licensing costs, requires extensive technical expertise for deployment and maintenance.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei's active memory integration for VNFs is delivered through their CloudFabric solution and Intelligent Cloud Network architecture, featuring distributed active memory management across edge and core network infrastructure. Their approach utilizes AI-driven memory orchestration that automatically provisions and manages memory resources based on real-time network conditions and VNF requirements. The solution incorporates Huawei's proprietary memory acceleration technology that enables ultra-low latency access to frequently used network function data and state information. Their implementation includes cross-domain memory sharing capabilities that allow VNFs to access memory resources across different network domains and geographic locations. The technology supports both traditional DRAM and emerging memory technologies like Storage Class Memory (SCM), with intelligent data placement algorithms that optimize performance and cost. Huawei's solution also includes advanced memory security features and integration with their 5G core network functions for enhanced mobile network performance.

Strengths: Strong 5G and telecommunications focus, AI-driven optimization, comprehensive end-to-end network solution, competitive pricing.

Weaknesses: Limited market presence in some regions, geopolitical concerns affecting adoption, less mature ecosystem compared to established vendors.

Core Patents in Active Memory VNF Technologies

Chaining Virtual Network Function Services via Remote Memory Sharing

Patent: US20190052735A1 (Active)

Innovation

- Implementing a distributed memory sharing technique using remote direct memory access (RDMA) to enable direct data transfer between network function host computers, allowing local and remote network functions to share processing state and metadata, thereby reducing latency and increasing data packet processing performance.

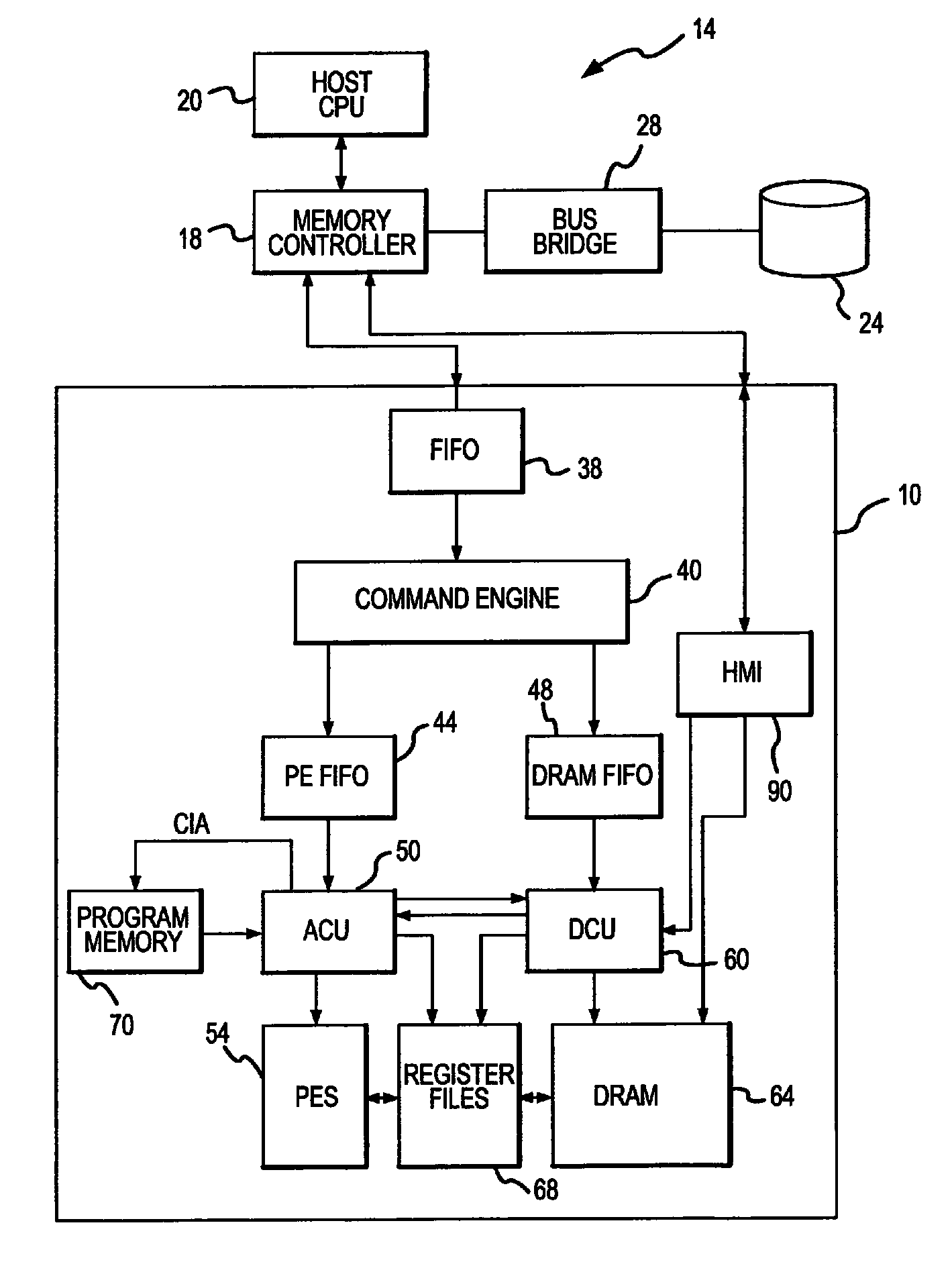

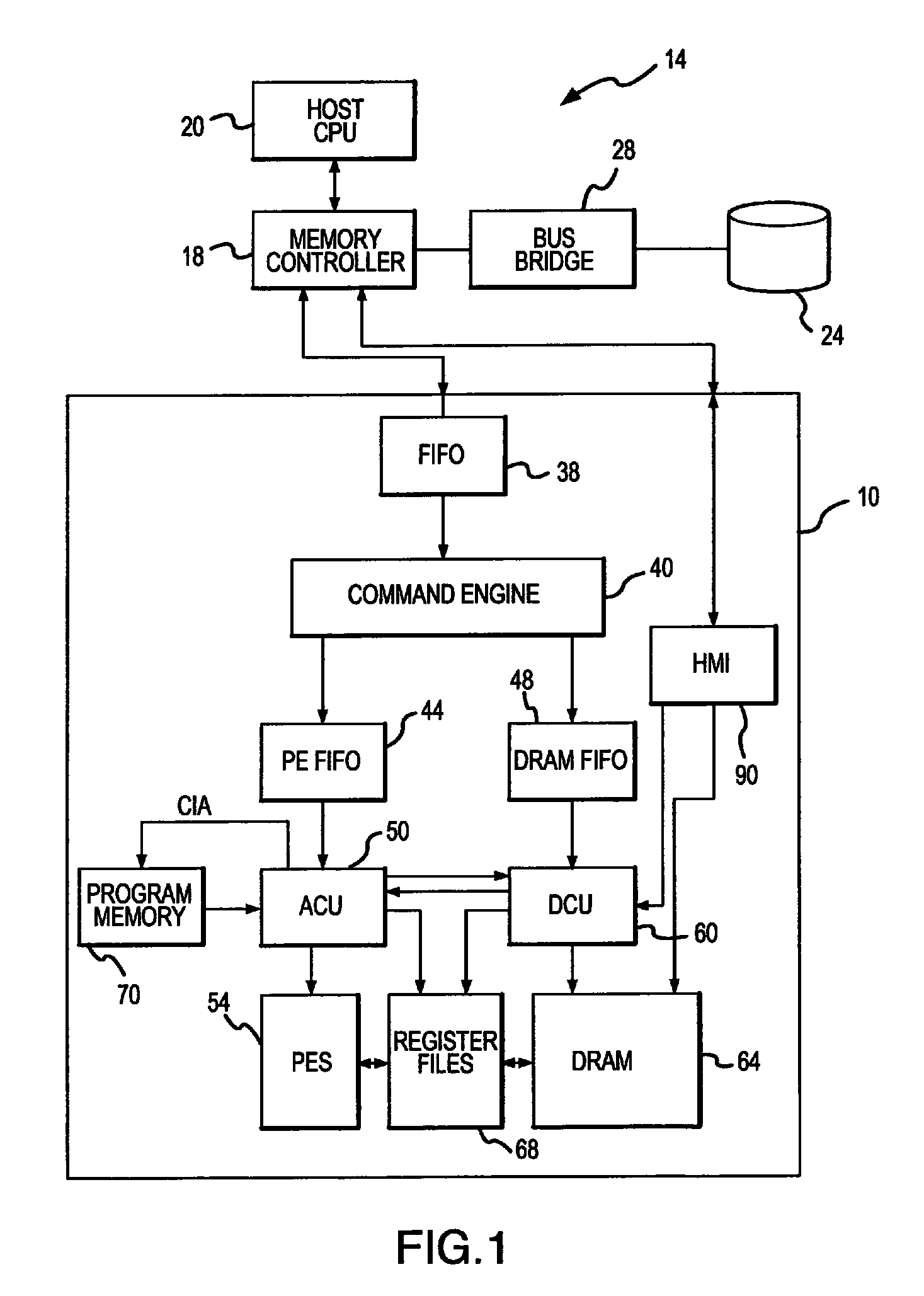

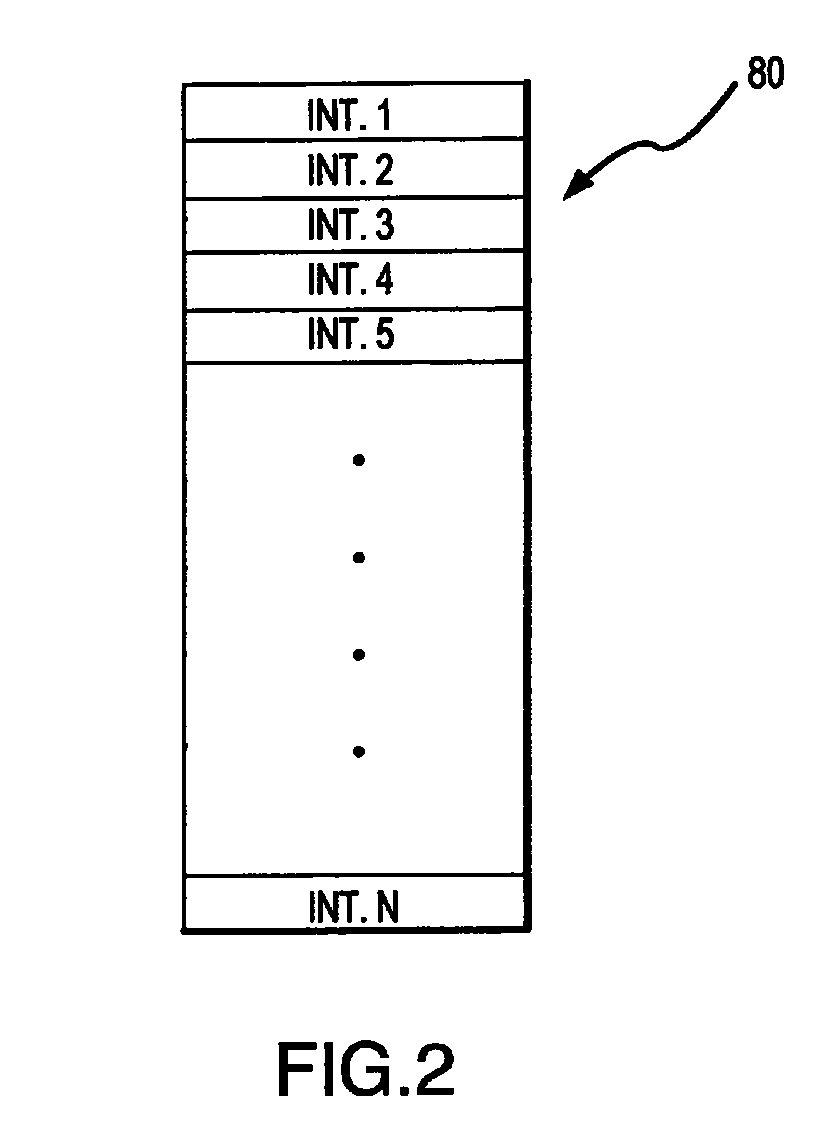

Active memory data compression system and method

Patent: US9015390B2 (Active)

Innovation

- An integrated circuit active memory device with an array of processing elements, such as SIMD or MIMD processors, compresses and decompresses data through a host/memory interface port, enhancing data bandwidth by executing compression and decompression algorithms stored in program memory, thereby increasing data transfer efficiency.

Performance Optimization Strategies for Active Memory VNF

Performance optimization in Active Memory Virtual Network Functions requires a multi-layered approach that addresses both hardware-level memory management and software-level algorithmic efficiency. The integration of active memory capabilities into VNF architectures presents unique optimization opportunities that traditional passive memory systems cannot provide.

Memory access pattern optimization represents a fundamental strategy for enhancing Active Memory VNF performance. By implementing intelligent prefetching algorithms that leverage the computational capabilities within active memory modules, VNFs can significantly reduce memory latency bottlenecks. These algorithms analyze traffic patterns and predict future memory access requirements, enabling proactive data placement and reducing cache misses by up to 40% in network processing scenarios.
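
One simple way to predict future memory accesses from observed traffic is a first-order successor table: remember which key most often followed each key, and prefetch that one. The sketch below assumes this technique purely for illustration; the source does not specify the prediction algorithm, and `NextAccessPredictor` is a hypothetical name. It makes no claim about the miss-rate figures quoted above.

```python
from collections import Counter, defaultdict


class NextAccessPredictor:
    """Hypothetical first-order predictor over a stream of accessed keys."""

    def __init__(self):
        # For each key, count which keys were accessed immediately after it.
        self._successors = defaultdict(Counter)
        self._last = None

    def record(self, key):
        """Observe one access and update the successor counts."""
        if self._last is not None:
            self._successors[self._last][key] += 1
        self._last = key

    def predict(self, key):
        """Return the most frequent successor of `key`, or None if unseen."""
        followers = self._successors.get(key)
        if not followers:
            return None
        return followers.most_common(1)[0][0]


predictor = NextAccessPredictor()
# Assumed access trace, e.g. flow-table buckets touched by consecutive packets.
for key in ["a", "b", "a", "b", "a", "c"]:
    predictor.record(key)

print(predictor.predict("a"))  # candidate to prefetch after an access to "a"
```

In an active memory setting the table update and lookup would run inside the memory module itself, so the prediction does not cost a round trip to the host; here it is ordinary host-side Python.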

Dynamic workload balancing across active memory units constitutes another critical optimization dimension. Advanced load distribution algorithms can monitor real-time processing demands and redistribute computational tasks among available active memory resources. This approach prevents hotspot formation and ensures optimal utilization of distributed processing capabilities, particularly beneficial for high-throughput network functions such as deep packet inspection and traffic shaping.
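
As a rough sketch of how tasks might be spread to avoid hotspots, the example below uses greedy least-loaded placement with a min-heap. This is one common heuristic chosen for illustration, not the algorithm of any particular product; `assign_tasks` and the task costs are assumptions, and a real balancer would re-run on live load measurements rather than a fixed list.

```python
import heapq


def assign_tasks(task_costs, unit_count):
    """Place each task on the currently lightest unit (largest tasks first)."""
    # Heap entries are (accumulated load, unit id); the lightest unit pops first.
    heap = [(0, unit) for unit in range(unit_count)]
    heapq.heapify(heap)
    placement = {unit: [] for unit in range(unit_count)}

    # Handling large tasks first tends to give a more even final spread.
    for task_id, cost in sorted(enumerate(task_costs), key=lambda t: -t[1]):
        load, unit = heapq.heappop(heap)
        placement[unit].append(task_id)
        heapq.heappush(heap, (load + cost, unit))

    loads = {
        unit: sum(task_costs[t] for t in tasks)
        for unit, tasks in placement.items()
    }
    return placement, loads


# Assumed per-task costs (arbitrary units) spread over three memory units.
placement, loads = assign_tasks([7, 3, 5, 2, 4, 3], unit_count=3)
print(loads)
```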

Cache coherency optimization specifically tailored for active memory architectures delivers substantial performance improvements. Traditional cache coherency protocols often introduce unnecessary overhead when applied to active memory systems. Specialized protocols that account for the computational nature of active memory can reduce coherency traffic by implementing selective invalidation strategies and distributed consistency models.
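
Selective invalidation can be sketched with a sharer directory: the system tracks which units hold a copy of each line and, on a write, notifies only those units instead of broadcasting to all of them. The code below is a simplified model under that assumption; `SharerDirectory` and its methods are hypothetical, and it ignores the ordering and acknowledgement details a real protocol needs.

```python
from collections import defaultdict


class SharerDirectory:
    """Hypothetical directory tracking which units cache each memory line."""

    def __init__(self, unit_count):
        self.unit_count = unit_count
        self._sharers = defaultdict(set)
        self.invalidations_sent = 0

    def read(self, unit, line):
        """A unit reads a line and becomes one of its sharers."""
        self._sharers[line].add(unit)

    def write(self, unit, line):
        """A unit writes a line; only the other current sharers are invalidated."""
        targets = self._sharers[line] - {unit}
        self.invalidations_sent += len(targets)
        self._sharers[line] = {unit}  # the writer now holds the only valid copy
        return targets

    def broadcast_cost(self):
        """Messages a naive broadcast would send for a single write."""
        return self.unit_count - 1


directory = SharerDirectory(unit_count=8)
directory.read(1, 0x40)
directory.read(3, 0x40)
directory.read(5, 0x80)
targets = directory.write(1, 0x40)

print(targets, directory.invalidations_sent, directory.broadcast_cost())
```

In this trace a single invalidation replaces the seven messages a broadcast would need, which is the kind of coherency traffic reduction the paragraph refers to.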

Parallel processing optimization leverages the inherent parallelism available in active memory architectures. By decomposing network processing tasks into smaller, independent operations that can execute simultaneously across multiple active memory units, VNFs achieve significant throughput improvements. This strategy proves particularly effective for stateless network functions where operations can be easily parallelized without complex synchronization requirements.
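
The decomposition described above can be sketched for a stateless function as follows. The thread pool only stands in for independent active memory units (CPython threads do not give true CPU parallelism), and `classify`, `process_batch`, and `BLOCKED_PORTS` are hypothetical names chosen for the example.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumed policy for the example: drop traffic to these destination ports.
BLOCKED_PORTS = {23, 445}


def classify(packet: dict) -> str:
    """Stateless rule: the verdict depends only on the packet itself."""
    return "drop" if packet["dst_port"] in BLOCKED_PORTS else "forward"


def process_batch(packets, workers=4):
    """Split a batch into chunks, classify chunks concurrently, keep order."""
    chunk = max(1, -(-len(packets) // workers))  # ceiling division
    chunks = [packets[i:i + chunk] for i in range(0, len(packets), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() yields results in the same order as the input chunks.
        parts = list(pool.map(lambda c: [classify(p) for p in c], chunks))
    return [verdict for part in parts for verdict in part]


packets = [{"dst_port": p} for p in [80, 23, 443, 445, 22]]
verdicts = process_batch(packets)
print(verdicts)
```

Because no chunk reads or writes shared state, no locks are needed; a stateful function such as connection tracking would have to partition packets by flow so that each flow's state stays on one unit.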

Energy-aware optimization strategies focus on balancing performance gains with power consumption considerations. Active memory systems can dynamically adjust their computational intensity based on current workload demands, implementing power scaling techniques that maintain performance while minimizing energy overhead. These strategies become increasingly important as VNF deployments scale and energy efficiency directly impacts operational costs.
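
A minimal sketch of demand-based power scaling is shown below: pick the lowest power level whose capacity still covers the current demand plus some headroom. The level names, capacities, wattages, and the `select_power_level` helper are all made-up values for illustration, not measurements of any device.

```python
# (name, capacity in packets per second, power in watts); illustrative values only.
POWER_LEVELS = [
    ("low", 1_000_000, 2.0),
    ("medium", 4_000_000, 5.0),
    ("high", 10_000_000, 11.0),
]


def select_power_level(demand_pps, headroom=0.2):
    """Return the lowest level that covers demand plus a safety margin."""
    required = demand_pps * (1 + headroom)
    for name, capacity, _watts in POWER_LEVELS:  # ordered from lowest power up
        if capacity >= required:
            return name
    # Demand exceeds every level: run at the highest one available.
    return POWER_LEVELS[-1][0]


for demand in (500_000, 3_000_000, 3_500_000, 20_000_000):
    print(demand, select_power_level(demand))
```

The headroom parameter trades energy for safety: a larger margin switches to a higher level earlier, which costs power but reduces the risk of dropping packets during a burst.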

Security Implications of Active Memory in Virtual Networks

Integrating active memory into virtual network functions introduces security risks that require careful evaluation and mitigation. Active memory systems execute computation inside the memory subsystem and may adapt their behavior based on network traffic patterns and historical data, which expands the attack surface beyond what traditional security frameworks were designed to address.

Memory-based attacks are the most immediate concern, because active memory components store sensitive network state information, routing decisions, and traffic analytics. Adversaries could exploit buffer overflows and other memory corruption vulnerabilities, or use side-channel attacks, to extract confidential data or manipulate network behavior. Because active memory holds long-lived state, these systems are particularly exposed to advanced persistent threats that could remain dormant within memory structures.

Data integrity becomes critically important when active memory systems make autonomous decisions based on stored information. Malicious actors could inject false data into memory structures, leading to incorrect routing decisions, traffic misclassification, or denial of service conditions. The cascading effects of compromised memory data could propagate throughout the virtual network infrastructure, amplifying the impact of successful attacks.
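
One common defence against injected or altered entries is to attach a keyed authentication tag to each stored record and verify it before the record is used. The sketch below does this with HMAC-SHA256 from the Python standard library. The helper names `seal` and `open_sealed`, the demo key, and the sample entry are hypothetical, and key management is out of scope.

```python
import hashlib
import hmac

TAG_LENGTH = 32  # size of a SHA-256 digest in bytes


def seal(key, entry):
    """Append an HMAC-SHA256 tag to a state entry before storing it."""
    tag = hmac.new(key, entry, hashlib.sha256).digest()
    return entry + tag


def open_sealed(key, sealed):
    """Verify the tag and return the entry, or raise if it was modified."""
    entry, tag = sealed[:-TAG_LENGTH], sealed[-TAG_LENGTH:]
    expected = hmac.new(key, entry, hashlib.sha256).digest()
    # compare_digest runs in constant time to avoid leaking tag bytes.
    if not hmac.compare_digest(tag, expected):
        raise ValueError("state entry failed integrity check")
    return entry


key = b"demo-key-not-for-production"  # assumption: a real key comes from a key store
entry = b"10.0.0.0/8 -> port 3"
sealed = seal(key, entry)
recovered = open_sealed(key, sealed)
```

An attacker who can write to memory but does not hold the key cannot produce a valid tag, so a forged routing entry is rejected before it influences a decision. The tag does not prevent replay of an old valid entry, which would need a version counter or similar freshness check.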

Access control mechanisms must be redesigned to accommodate the dynamic nature of active memory systems. Traditional role-based access controls may prove insufficient when memory components require real-time access to diverse data sources and network functions. Multi-tenant environments face additional challenges, as memory isolation between different virtual network functions becomes crucial to prevent cross-tenant data leakage or unauthorized access.
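
The isolation requirement can be pictured as a default-deny ownership check on every access, as in the sketch below. It models memory as non-overlapping address ranges, each owned by one tenant. The class `TenantRegions`, the tenant names, and the region sizes are hypothetical, and a real system would enforce this in hardware or in the hypervisor rather than in application code.

```python
class TenantRegions:
    """Maps address ranges to owning tenants (hypothetical sketch)."""

    def __init__(self):
        self.regions = []  # list of (start, end_exclusive, tenant_id)

    def allocate(self, start, size, tenant_id):
        """Reserve [start, start + size) for a tenant, rejecting overlaps."""
        end = start + size
        for s, e, _ in self.regions:
            if start < e and s < end:
                raise ValueError("region overlaps an existing allocation")
        self.regions.append((start, end, tenant_id))

    def check_access(self, tenant_id, address, length=1):
        """Allow access only if the whole span lies in a region the tenant owns."""
        for s, e, owner in self.regions:
            if s <= address and address + length <= e:
                return owner == tenant_id
        return False  # default deny: unallocated or boundary-crossing spans


regions = TenantRegions()
regions.allocate(0, 4096, "tenant-a")
regions.allocate(4096, 4096, "tenant-b")

own_access = regions.check_access("tenant-a", 100)
cross_access = regions.check_access("tenant-a", 5000)
straddling_access = regions.check_access("tenant-a", 4090, length=16)
```

The check rejects a span that crosses a region boundary even when it starts inside the caller's own region, which is the case that most often leaks a neighbouring tenant's data.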

The distributed nature of virtual network functions compounds security challenges, as active memory components may be replicated across multiple nodes or data centers. Ensuring consistent security policies and synchronized threat detection across distributed memory instances requires sophisticated coordination mechanisms and encrypted inter-node communication protocols.

Emerging threats include machine learning poisoning attacks targeting active memory systems that utilize artificial intelligence for network optimization. Adversaries could manipulate training data or exploit model vulnerabilities to compromise the decision-making capabilities of active memory components, potentially leading to systematic network performance degradation or security policy violations.
