Optimizing Frame Creation Processes for Richer Scene Environments

MAR 30, 2026 | 9 MIN READ

Patsnap Eureka helps you evaluate technical feasibility & market potential.

Frame Creation Optimization Background and Technical Goals

Frame creation optimization has emerged as a critical technological frontier in computer graphics and real-time rendering, driven by the exponential growth in demand for immersive digital experiences. The evolution from simple 2D graphics to complex 3D environments has fundamentally transformed how visual content is generated, processed, and displayed across various platforms including gaming, virtual reality, augmented reality, and digital cinematography.

The historical trajectory of frame creation technology began with basic rasterization techniques in the 1970s and has progressively advanced through hardware acceleration, programmable shaders, and modern GPU architectures. Early rendering pipelines focused primarily on geometric accuracy and basic lighting models, but contemporary applications demand photorealistic rendering with complex material properties, dynamic lighting, and sophisticated post-processing effects.

Current technological trends indicate a paradigm shift toward real-time ray tracing, machine learning-assisted rendering, and adaptive quality systems. The integration of artificial intelligence in frame generation processes has opened new possibilities for temporal upsampling, denoising algorithms, and predictive rendering techniques. These developments are particularly significant as content creators increasingly require higher frame rates, greater visual fidelity, and more complex scene compositions.

The primary technical objectives in frame creation optimization center on achieving maximum visual quality while maintaining computational efficiency. Key performance metrics include frame rate consistency, latency reduction, power consumption optimization, and scalability across diverse hardware configurations. Modern applications target at least 60 FPS on standard displays. VR applications require 90-120 FPS, and competitive gaming scenarios target up to 240 FPS.
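The frame-rate targets above translate directly into per-frame time budgets. Every pipeline stage, from CPU scene updates to GPU post-processing, has to fit inside 1000 / FPS milliseconds. The following is a minimal Python sketch of that arithmetic. The function name `frame_budget_ms` is illustrative and does not come from any library.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Return the time available to produce one frame, in milliseconds."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


# Budgets for the display classes discussed above.
for fps in (60, 90, 120, 240):
    print(f"{fps:>3} FPS -> {frame_budget_ms(fps):.2f} ms per frame")
```

At 240 FPS the whole pipeline has roughly 4.2 ms per frame. That is a quarter of the 16.7 ms available at 60 FPS, so an optimization that looks marginal at 60 FPS can become decisive at higher refresh rates.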

Advanced scene environments present unique challenges including dynamic object management, complex lighting calculations, particle system optimization, and multi-layered transparency handling. The technical goals encompass developing algorithms that can efficiently process millions of polygons, handle real-time global illumination, and maintain visual coherence across rapidly changing scene conditions while minimizing computational overhead and memory bandwidth requirements.

Market Demand for Rich Scene Rendering Solutions

The global demand for rich scene rendering solutions has grown rapidly across multiple industry verticals, driven by the convergence of advanced computing capabilities and evolving consumer expectations. The gaming industry represents the largest market segment, where developers continuously push boundaries to deliver photorealistic environments that enhance player immersion. Modern AAA titles require sophisticated rendering pipelines capable of handling complex lighting, detailed textures, and dynamic environmental effects in real time.

Entertainment and media production sectors demonstrate substantial appetite for enhanced frame creation technologies. Film studios and streaming content producers increasingly rely on real-time rendering solutions to reduce production costs while maintaining cinematic quality. Virtual production techniques, popularized by major studios, necessitate seamless integration between physical and digital environments, creating significant demand for optimized frame generation processes.

The automotive industry is emerging as a rapidly expanding market for rich scene rendering applications. Advanced driver assistance systems and autonomous vehicle development require high-fidelity environmental simulation capabilities. Real-time rendering of complex traffic scenarios, weather conditions, and urban landscapes is critical for training machine learning algorithms and validating safety systems.

Architecture, engineering, and construction sectors increasingly adopt immersive visualization technologies for project planning and client presentations. Building information modeling platforms integrate sophisticated rendering engines to provide stakeholders with realistic previews of proposed structures. This trend accelerates demand for efficient frame creation processes that can handle large-scale architectural datasets.

Virtual and augmented reality applications across healthcare, education, and training domains drive additional market expansion. Medical simulation platforms require anatomically accurate rendering for surgical training, while educational institutions seek immersive learning environments. These applications demand consistent frame rates and visual fidelity to prevent user discomfort and maintain engagement.

Enterprise collaboration tools incorporating spatial computing capabilities represent an emerging market segment. Remote work trends accelerate adoption of virtual meeting spaces and collaborative design environments, requiring robust rendering solutions that support multiple concurrent users while maintaining visual quality across diverse hardware configurations.

Current State and Bottlenecks in Frame Generation Pipelines

The current frame generation pipeline for rich scene environments operates through a multi-stage process that encompasses geometry processing, texture mapping, lighting calculations, and post-processing effects. Many modern rendering engines employ deferred rendering architectures. In these, geometric and material information is first written to multiple render targets (collectively, the G-buffer), and lighting calculations are applied afterward. This approach supports complex lighting scenarios but introduces significant memory bandwidth requirements and storage overhead.
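The two-stage structure can be made concrete with a toy Python sketch. A geometry pass depth-tests fragments and stores surface attributes per pixel (a G-buffer). A separate lighting pass then shades each covered pixel once per light. This is a CPU illustration under simplifying assumptions (scalar albedo, directional lights, Lambertian diffuse only), not how a GPU engine is implemented, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Fragment:
    """One rasterized sample: pixel position, depth, and surface attributes."""
    x: int
    y: int
    depth: float
    normal: Vec3
    albedo: float


@dataclass
class GBufferTexel:
    depth: float
    normal: Vec3
    albedo: float


def geometry_pass(fragments: List[Fragment], width: int, height: int):
    """Depth-test fragments and keep the nearest surface's attributes per pixel."""
    gbuffer: List[List[Optional[GBufferTexel]]] = [[None] * width for _ in range(height)]
    for f in fragments:
        texel = gbuffer[f.y][f.x]
        if texel is None or f.depth < texel.depth:
            gbuffer[f.y][f.x] = GBufferTexel(f.depth, f.normal, f.albedo)
    return gbuffer


def lighting_pass(gbuffer, light_dirs: List[Vec3]):
    """Shade every covered pixel once per directional light (Lambertian diffuse).

    Cost is proportional to covered pixels x lights, not to the number of
    fragments that the geometry pass discarded through overdraw.
    """
    image = []
    for row in gbuffer:
        out_row = []
        for texel in row:
            if texel is None:
                out_row.append(0.0)  # background pixel
                continue
            nx, ny, nz = texel.normal
            radiance = sum(max(0.0, nx * lx + ny * ly + nz * lz) for lx, ly, lz in light_dirs)
            out_row.append(texel.albedo * radiance)
        image.append(out_row)
    return image
```

The `gbuffer` grid is the cost of this design. Every attribute the lighting pass needs must be stored per pixel, and that storage is the source of the memory and bandwidth overhead described in the paragraph above.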

Contemporary pipelines heavily rely on rasterization-based rendering, which processes triangular primitives through vertex shading, primitive assembly, rasterization, and fragment shading stages. While this approach provides predictable performance characteristics, it struggles with complex geometric details and requires substantial preprocessing for level-of-detail management. The integration of ray tracing capabilities has introduced hybrid rendering approaches, but these systems face challenges in balancing quality and performance across diverse hardware configurations.
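The rasterization stage itself can be illustrated with the common edge-function formulation. A pixel is covered when its center lies on the inner side of all three triangle edges. The Python sketch below assumes counter-clockwise winding (in a y-up convention) and tests only pixel centers. It omits the top-left fill rule and sub-pixel precision that real hardware applies, and the function names are illustrative.

```python
import math


def edge(ax, ay, bx, by, px, py):
    """Edge function: > 0 when P is to the left of the directed edge A->B."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize_triangle(v0, v1, v2, width, height):
    """Return (x, y) pixels whose centers lie inside a counter-clockwise triangle."""
    xs = (v0[0], v1[0], v2[0])
    ys = (v0[1], v1[1], v2[1])
    # Only visit pixels inside the triangle's bounding box, clamped to the screen.
    min_x = max(0, math.floor(min(xs)))
    max_x = min(width - 1, math.floor(max(xs)))
    min_y = max(0, math.floor(min(ys)))
    max_y = min(height - 1, math.floor(max(ys)))
    covered = []
    for y in range(min_y, max_y + 1):
        for x in range(min_x, max_x + 1):
            px, py = x + 0.5, y + 0.5  # sample at the pixel center
            w0 = edge(*v1, *v2, px, py)
            w1 = edge(*v2, *v0, px, py)
            w2 = edge(*v0, *v1, px, py)
            if w0 >= 0 and w1 >= 0 and w2 >= 0:
                covered.append((x, y))
    return covered
```

Dividing each of the three edge values by their sum gives the barycentric weights used to interpolate vertex attributes for fragment shading.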

Memory bandwidth emerges as a critical bottleneck in current frame generation systems. High-resolution textures, complex geometry buffers, and multiple render targets create substantial data movement requirements between GPU memory and processing units. This limitation becomes particularly pronounced when handling large-scale environments with diverse material properties and lighting conditions. The situation is exacerbated by the need to maintain multiple resolution levels and temporal data for advanced rendering techniques.
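A back-of-the-envelope estimate shows the scale of the problem. The sketch below assumes a hypothetical G-buffer of four 32-bit render targets plus a 32-bit depth buffer (20 bytes per pixel). It also assumes each target is written once by the geometry pass and read once by the lighting pass. It ignores caches, compression, overdraw, and texture fetches, so treat the result as an order-of-magnitude estimate rather than a measurement.

```python
def gbuffer_traffic_gb_per_s(width, height, bytes_per_pixel, accesses_per_frame, fps):
    """Estimated G-buffer traffic in GB/s (1 GB = 1e9 bytes), ignoring caches and compression."""
    bytes_per_frame = width * height * bytes_per_pixel * accesses_per_frame
    return bytes_per_frame * fps / 1e9


BYTES_PER_PIXEL = 4 * 4 + 4  # assumed layout: four 32-bit targets + 32-bit depth
ACCESSES = 2                 # one write in the geometry pass, one read in lighting

for label, (w, h) in {"1080p": (1920, 1080), "4K": (3840, 2160)}.items():
    rate = gbuffer_traffic_gb_per_s(w, h, BYTES_PER_PIXEL, ACCESSES, fps=60)
    print(f"{label} @ 60 FPS: {rate:.1f} GB/s")
```

Under these assumptions, 4K at 60 FPS moves roughly 19.9 GB/s for the G-buffer alone. That is four times the 1080p figure, and it does not yet count textures or the temporal data that advanced techniques keep between frames.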

Computational complexity represents another significant constraint, particularly in lighting and shading calculations. Real-time approximations of global illumination, such as screen-space reflections and ambient occlusion, require extensive per-pixel sampling and filtering, so their cost rises quickly with output resolution and sample count. The computational overhead of these techniques often forces developers to adopt aggressive optimization strategies that compromise visual fidelity or limit scene complexity.
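The cost structure of screen-space effects is easiest to see in a deliberately crude ambient-occlusion pass. The Python sketch below works only on a 2D depth buffer. For each pixel, it samples random neighbors within a radius and darkens the pixel by the fraction of samples that sit noticeably closer to the camera. Typical production SSAO uses view-space positions, normal-oriented hemisphere kernels, and a blur pass. Those are omitted here, and all names and parameters are illustrative.

```python
import random


def screen_space_ao(depth, radius=2, samples=8, bias=0.01, seed=0):
    """Crude depth-only ambient occlusion over a 2D depth buffer.

    Smaller depth means closer to the camera. Each pixel samples `samples` random
    neighbors within `radius` pixels and is darkened by the fraction that lie in
    front of it by more than `bias`. Work is O(width * height * samples) and does
    not depend on how many triangles produced the depth buffer.
    """
    rng = random.Random(seed)
    h, w = len(depth), len(depth[0])
    ao = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            occluded = 0
            for _ in range(samples):
                # Clamp sample coordinates to the buffer edges.
                sx = min(w - 1, max(0, x + rng.randint(-radius, radius)))
                sy = min(h - 1, max(0, y + rng.randint(-radius, radius)))
                if depth[sy][sx] < depth[y][x] - bias:
                    occluded += 1
            ao[y][x] = 1.0 - occluded / samples
    return ao
```

Work grows as width x height x samples. Doubling resolution in each dimension quadruples the cost, and adding samples to reduce noise multiplies it again. For this reason, such passes are commonly run at reduced resolution and filtered afterward.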

Pipeline synchronization issues create additional performance barriers, especially when integrating CPU-driven scene management with GPU-accelerated rendering. The asynchronous nature of modern graphics APIs introduces latency concerns and resource management complexities that impact overall system efficiency. These synchronization challenges become more pronounced when implementing dynamic scene updates and procedural content generation within the rendering pipeline.
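A common way to keep the CPU and GPU working in parallel without corrupting shared data uses a small ring of per-frame resources, often two or three "frames in flight". The CPU waits on a fence before reusing a slot. The Python sketch below models that pattern with a thread standing in for the GPU queue and `threading.Event` standing in for a fence. It illustrates only the synchronization logic, and the class and function names are hypothetical, not part of any graphics API.

```python
import queue
import threading


class FrameSlot:
    """Per-frame resources (e.g. a uniform buffer) guarded by a fence-like event."""

    def __init__(self, index):
        self.index = index
        self.fence = threading.Event()
        self.fence.set()  # signalled at start: the slot is free for the CPU
        self.data = None


def run_pipeline(num_frames, frames_in_flight=2):
    """Simulate a CPU producer and a GPU consumer sharing a ring of frame slots."""
    slots = [FrameSlot(i) for i in range(frames_in_flight)]
    submissions = queue.Queue()
    completed = []

    def gpu_worker():
        while True:
            item = submissions.get()
            if item is None:  # shutdown sentinel
                break
            frame_id, slot = item
            completed.append((frame_id, slot.data))  # "execute" the recorded work
            slot.fence.set()  # signal the fence: the CPU may now reuse this slot

    gpu = threading.Thread(target=gpu_worker)
    gpu.start()
    for frame_id in range(num_frames):
        slot = slots[frame_id % frames_in_flight]
        slot.fence.wait()   # block until the GPU has finished with this slot
        slot.fence.clear()
        slot.data = f"scene-state-{frame_id}"  # safe: the GPU no longer reads this slot
        submissions.put((frame_id, slot))
    submissions.put(None)
    gpu.join()
    return completed
```

With `frames_in_flight=2`, the CPU can prepare frame N+1 while the GPU executes frame N. It blocks before reusing that slot for frame N+2 until frame N has finished. Raising the count hides more stalls, at the cost of extra memory and added input latency.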

Current solutions also face scalability limitations when handling diverse content types within a single scene. The pipeline architecture struggles to process mixed content efficiently, such as static geometry, animated characters, particle systems, and volumetric effects rendered together. This often requires separate rendering passes, which increase overall frame generation time.
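One standard mitigation is to bucket draw calls by pass and sort within each bucket. Opaque geometry renders roughly front to back to maximize early depth rejection, with material state changes grouped together. Blended content renders back to front for correct compositing. The following is a minimal Python sketch, with hypothetical pass names and a simplified sort key.

```python
from dataclasses import dataclass

# Hypothetical pass order: opaque first, blended passes last.
PASS_ORDER = {"opaque": 0, "particles": 1, "transparent": 2}
BLENDED_PASSES = {"particles", "transparent"}


@dataclass
class DrawCall:
    name: str
    pass_name: str
    material_id: int
    view_depth: float  # distance from the camera along the view axis


def sort_key(draw: DrawCall):
    order = PASS_ORDER[draw.pass_name]
    if draw.pass_name in BLENDED_PASSES:
        # Back to front so alpha blending composites correctly.
        return (order, -draw.view_depth, draw.material_id)
    # Group by material to reduce state changes, then front to back for early-z.
    return (order, draw.material_id, draw.view_depth)


def build_render_queue(draws):
    """Return draw calls ordered by pass, then by the per-pass sort rule."""
    return sorted(draws, key=sort_key)
```

Placing material before depth in the opaque key is a trade-off: it favors fewer state changes over strict front-to-back order. Engines tune this key to whichever cost dominates for their content.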

Existing Frame Optimization Solutions for Complex Scenes

01 Frame creation using molding and casting processes

Frame structures can be created through molding and casting techniques where materials are poured or injected into molds to form the desired frame shape. These processes allow for precise control over frame dimensions and can accommodate various materials including metals, plastics, and composite materials. The molding process may involve heating, cooling, and curing stages to achieve the final frame structure with desired mechanical properties.

- Digital and automated frame manufacturing systems: Modern frame creation utilizes computer-controlled and automated manufacturing systems that enable precise fabrication based on digital designs. These systems incorporate robotics, CNC machining, and automated assembly lines to produce frames with high accuracy and repeatability. The digital workflow allows for rapid prototyping and customization while maintaining consistent quality standards.

- Frame formation through additive manufacturing and layering: Frames can be created using additive manufacturing techniques where material is deposited layer by layer to build up the frame structure. This method enables the production of complex geometries that would be difficult or impossible to achieve with traditional manufacturing. The process offers flexibility in material selection and allows for internal structures and features to be integrated during the build process.

- Frame construction using composite and hybrid material systems: Frame creation can involve the use of composite materials and hybrid construction methods that combine different materials to optimize strength, weight, and performance characteristics. These processes may include layering, reinforcement integration, and multi-material bonding techniques. The resulting frames benefit from the complementary properties of different materials while maintaining structural efficiency.

02 Frame assembly through joining and welding techniques

Frame creation can be accomplished by assembling multiple components through various joining methods such as welding, bonding, or mechanical fastening. This approach allows for the construction of complex frame geometries by combining simpler elements. The joining processes ensure structural integrity and can be optimized for different material combinations and load-bearing requirements.

03 Digital and automated frame manufacturing systems

Modern frame creation utilizes computer-controlled and automated manufacturing systems that enable precise fabrication with minimal human intervention. These systems incorporate digital design data to control cutting, forming, and assembly operations. Automation improves consistency, reduces production time, and allows for customization while maintaining quality standards across multiple frame units.

04 Frame formation through material deformation and shaping

Frames can be created by mechanically deforming raw materials through processes such as bending, stamping, forging, or extrusion. These techniques reshape the material without removing substance, maintaining material integrity while achieving desired frame configurations. The deformation processes can be performed at various temperatures and pressures depending on material properties and final frame specifications.

05 Composite and multi-material frame construction

Frame creation can involve combining different materials to achieve enhanced properties such as improved strength-to-weight ratios, corrosion resistance, or thermal characteristics. This approach integrates materials with complementary properties through layering, embedding, or co-processing techniques. The resulting composite frames offer performance advantages over single-material constructions for specific applications.

Key Players in Graphics Engine and Rendering Industry

Frame creation optimization technology for richer scene environments is a rapidly evolving sector within the broader graphics and visual computing industry. The market is currently in a growth phase, driven by increasing demand for immersive experiences across gaming, entertainment, and enterprise applications. Major technology companies including NVIDIA, Apple, Intel, and Qualcomm lead hardware acceleration developments, while companies such as Tencent, Disney, and Sony Interactive Entertainment focus on content creation applications. Technology maturity varies significantly across segments. Established players such as Microsoft, IBM, and Samsung have mature foundational technologies, while specialized firms such as V-Nova International and IKIN are advancing compression and holographic solutions. Academic institutions such as ETH Zurich contribute fundamental research, indicating a strong innovation pipeline for next-generation frame optimization capabilities.

Apple, Inc.

Technical Solution: Apple's approach focuses on optimizing frame creation through its custom silicon, particularly the M-series chips with their unified memory architecture and on-chip GPU cores. The Metal Performance Shaders framework provides optimized compute and image-processing kernels. MetalKit simplifies common Metal tasks such as view management, texture loading, and mesh handling. Apple's Reality Composer and RealityKit frameworks enable developers to create rich AR/VR environments with advanced lighting models, particle systems, and physically based rendering. The Neural Engine in Apple silicon accelerates machine learning-based rendering optimizations, including intelligent upscaling and dynamic level-of-detail adjustments for complex scenes.

Strengths: Tight hardware-software integration, energy efficiency, seamless cross-device compatibility. Weaknesses: Limited to Apple ecosystem, restricted customization options for developers.

NVIDIA Corp.

Technical Solution: NVIDIA leverages its RTX GPU architecture, with dedicated RT cores for real-time ray tracing, and delivers AI-accelerated frame generation through DLSS (Deep Learning Super Sampling) technology. Its Omniverse platform provides comprehensive tools for creating photorealistic 3D environments with advanced lighting, materials, and physics simulation. The company's OptiX ray tracing engine enables developers to build complex scene rendering pipelines with support for global illumination, reflections, and shadows. NVIDIA's Ada Lovelace architecture incorporates third-generation RT cores that deliver up to 2.8x ray-triangle intersection throughput compared to previous generations, significantly accelerating frame creation for rich scene environments.

Strengths: Industry-leading GPU performance, comprehensive development ecosystem, advanced AI acceleration. Weaknesses: High power consumption, premium pricing limits accessibility.

Core Innovations in Advanced Frame Creation Algorithms

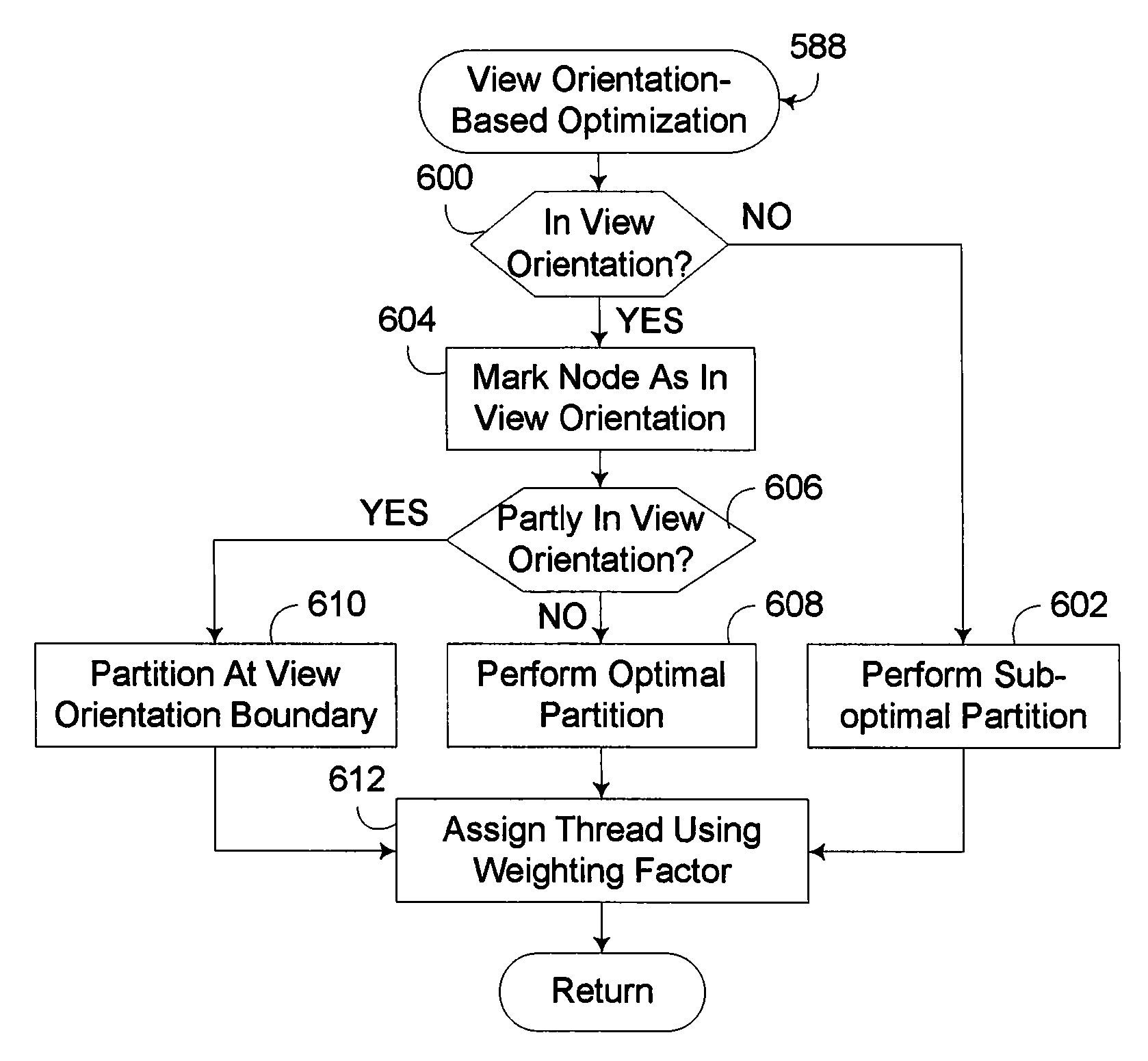

Accelerated Data Structure Optimization Based Upon View Orientation

Patent (Inactive): US20100239185A1

Innovation

- Optimizing the generation and use of Accelerated Data Structures (ADS) by utilizing the known view orientation to allocate processing resources more efficiently, such as using different geometry placement algorithms and workload balancing based on the location of primitives relative to the view orientation, thereby reducing the processing burden on primitives outside the view orientation.
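The idea of spending acceleration-structure build effort according to view orientation can be sketched in Python under explicit assumptions. Primitives are reduced to centroids, and "in view" means the centroid falls inside a cone around the view direction. In-view primitives get a finer bounding volume hierarchy (smaller leaves) than primitives outside the cone. This is one illustrative interpretation, not the method claimed in US20100239185A1, and every name and parameter is hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class BVHNode:
    prims: list                                   # centroids stored in a leaf
    children: list = field(default_factory=list)  # empty for leaves


def in_view(centroid, eye, view_dir, half_angle_deg):
    """True if the centroid lies inside a cone of half_angle_deg around the unit view_dir."""
    d = [c - e for c, e in zip(centroid, eye)]
    length = math.sqrt(sum(x * x for x in d))
    if length == 0.0:
        return True
    cos_angle = sum(a * b for a, b in zip(d, view_dir)) / length
    return cos_angle >= math.cos(math.radians(half_angle_deg))


def build_bvh(centroids, max_leaf):
    """Median-split BVH on the axis of largest centroid extent."""
    if len(centroids) <= max_leaf:
        return BVHNode(prims=list(centroids))
    axis = max(range(3), key=lambda a: max(c[a] for c in centroids) - min(c[a] for c in centroids))
    ordered = sorted(centroids, key=lambda c: c[axis])
    mid = len(ordered) // 2
    return BVHNode(prims=[], children=[build_bvh(ordered[:mid], max_leaf),
                                       build_bvh(ordered[mid:], max_leaf)])


def count_leaves(node):
    return 1 if not node.children else sum(count_leaves(c) for c in node.children)


def build_view_aware(centroids, eye, view_dir, half_angle_deg=45.0, fine_leaf=2, coarse_leaf=16):
    """Build a fine BVH for in-view primitives and a coarse one for the rest."""
    if fine_leaf < 1 or coarse_leaf < 1:
        raise ValueError("leaf sizes must be at least 1")
    visible, hidden = [], []
    for c in centroids:
        (visible if in_view(c, eye, view_dir, half_angle_deg) else hidden).append(c)
    return build_bvh(visible, fine_leaf), build_bvh(hidden, coarse_leaf)
```

A builder could go further and assign the out-of-view group to fewer worker threads or defer its rebuild. That would cover the workload-balancing side of the same idea.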

Apparatus and method

Patent (Active): US20210287326A1

Innovation

- Incorporating prediction and allocation circuitry to dynamically adjust the initial rendering stage time allocations for ongoing rendering stages, allowing for real-time rendering of image frames by predicting time overruns and adjusting allocations to ensure completion within the allotted time.
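A software analogue of this prediction-and-allocation idea can be sketched as follows, assuming a per-frame budget split across named stages. An exponential moving average predicts each stage's duration. When the predicted or actual time would overrun the budget, the allocations of the stages still to run are scaled down proportionally. This is an illustrative Python sketch, not the circuitry described in US20210287326A1, and the stage names and parameters are hypothetical.

```python
class StagePredictor:
    """Exponential moving average of observed stage durations (ms)."""

    def __init__(self, alpha=0.3):
        self.alpha = alpha
        self.estimates = {}

    def observe(self, stage, duration_ms):
        prev = self.estimates.get(stage, duration_ms)
        self.estimates[stage] = self.alpha * duration_ms + (1 - self.alpha) * prev

    def predict(self, stage, default_ms):
        return self.estimates.get(stage, default_ms)


def predicts_overrun(predictor, budget_ms, elapsed_ms, remaining_stages, allocations):
    """True if the predicted durations of the remaining stages would exceed the budget."""
    predicted = sum(predictor.predict(s, allocations[s]) for s in remaining_stages)
    return elapsed_ms + predicted > budget_ms


def reallocate(budget_ms, allocations, elapsed_ms, completed):
    """Scale down allocations of unfinished stages so the frame still fits in budget_ms."""
    remaining = [s for s in allocations if s not in completed]
    remaining_time = max(0.0, budget_ms - elapsed_ms)
    planned = sum(allocations[s] for s in remaining)
    new_alloc = dict(allocations)
    if planned == 0 or planned <= remaining_time:
        return new_alloc  # already fits: leave allocations unchanged
    scale = remaining_time / planned
    for s in remaining:
        new_alloc[s] = allocations[s] * scale
    return new_alloc
```

Consider a 16 ms budget split as geometry 4 ms, lighting 8 ms, and post-processing 4 ms. If the geometry stage actually takes 7 ms, 9 ms remain for 12 ms of planned work, so lighting and post-processing are scaled to 6 ms and 3 ms. A renderer would then lower sample counts or resolution in those stages to meet the new targets.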

Hardware Acceleration Standards for Graphics Processing

Hardware acceleration standards for graphics processing have evolved significantly to address the computational demands of optimizing frame creation processes for richer scene environments. The establishment of unified standards has become crucial as modern graphics applications require consistent performance across diverse hardware platforms while maintaining compatibility and efficiency.

OpenGL and DirectX are foundational APIs that define how software interfaces with graphics hardware. OpenGL's cross-platform approach provides standardized access to GPU capabilities, enabling developers to implement frame optimization techniques consistently across hardware vendors. DirectX 12, Microsoft's low-level API for Windows and Xbox, provides more granular control over frame creation processes. This control is essential for managing complex scene environments with multiple light sources, high-polygon models, and advanced shader effects.

Vulkan has emerged as a next-generation standard that addresses the limitations of previous APIs in handling modern graphics workloads. Its explicit multi-threading support and reduced driver overhead enable more efficient frame creation processes, particularly beneficial for scenes with rich environmental details. The standard's design philosophy emphasizes developer control over hardware resources, allowing for optimized memory management and command buffer execution that directly impacts frame generation efficiency.

The Khronos Group's standardization efforts extend beyond rendering APIs to include compute standards like OpenCL and SYCL, which facilitate hybrid rendering approaches. These standards enable developers to leverage both traditional graphics pipelines and general-purpose GPU computing for frame optimization tasks such as culling, level-of-detail calculations, and procedural content generation within rich scene environments.
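The per-object work that such compute passes perform is simple enough to sketch on the CPU. The Python example below shows a conservative bounding-sphere frustum test and a level-of-detail choice based on approximate projected size in pixels. On the GPU, the same logic would typically run once per instance in a compute kernel. The plane conventions, thresholds, and names are assumptions for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Plane:
    normal: tuple  # unit normal pointing toward the inside of the frustum
    d: float       # plane equation: dot(normal, p) + d = 0


@dataclass
class Instance:
    center: tuple
    radius: float


def sphere_visible(inst, planes):
    """Conservative cull: reject only if the sphere is entirely outside some plane."""
    for p in planes:
        dist = sum(n * c for n, c in zip(p.normal, inst.center)) + p.d
        if dist < -inst.radius:
            return False
    return True


def select_lod(inst, eye, fov_y_rad, screen_height_px, thresholds_px=(200, 60, 15)):
    """Return an LOD index (0 = full detail) from the sphere's approximate projected diameter."""
    dist = math.dist(inst.center, eye)
    if dist <= inst.radius:
        return 0  # camera inside the bounding sphere: use full detail
    diameter_px = inst.radius * screen_height_px / (dist * math.tan(fov_y_rad / 2))
    for lod, min_px in enumerate(thresholds_px):
        if diameter_px >= min_px:
            return lod
    return len(thresholds_px)
```

Because the sphere test only rejects objects that are entirely outside a plane, it never culls a visible object. Its drawback is that some invisible objects near frustum corners still pass the test.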

Vendor-specific compute platforms, including NVIDIA's CUDA and AMD's ROCm, have also gained prominence. They are not cross-vendor standards, but they provide deep integration with each vendor's hardware for general-purpose GPU work within the frame, and NVIDIA's OptiX ray tracing engine is built on CUDA. Rasterization features such as variable rate shading and mesh shaders, however, are exposed through graphics APIs rather than these compute platforms, notably through the DirectX 12 Ultimate feature set and Vulkan extensions.

The emergence of ray tracing acceleration standards, particularly through DirectX Raytracing (DXR) and Vulkan Ray Tracing extensions, represents a paradigm shift in frame creation for rich environments. These standards define hardware abstraction layers for dedicated ray tracing units, enabling real-time global illumination and reflection effects that significantly enhance scene visual fidelity while maintaining acceptable frame rates through optimized acceleration structures and denoising algorithms.
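Dedicated ray tracing units accelerate two core operations: bounding-volume traversal of the acceleration structure and the ray-triangle intersection test. The Python sketch below implements the widely used Möller-Trumbore algorithm to show what the per-ray, per-triangle work involves. It is a scalar reference version and says nothing about how any vendor's hardware, DXR, or the Vulkan ray tracing extensions implement the test.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle(orig, direction, v0, v1, v2, eps=1e-9):
    """Möller-Trumbore intersection. Returns distance t along the ray, or None on a miss."""
    e1 = sub(v1, v0)
    e2 = sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle plane
    inv_det = 1.0 / det
    s = sub(orig, v0)
    u = dot(s, p) * inv_det  # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv_det
    return t if t > eps else None  # ignore hits behind the ray origin
```

Hardware performs this test, or an equivalent, for every candidate triangle that survives traversal. The quality of the acceleration structure therefore matters as much as raw intersection throughput.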

Performance Benchmarking Frameworks for Frame Creation

Performance benchmarking frameworks for frame creation in rich scene environments have become critical evaluation tools as rendering complexity continues to escalate. These frameworks provide standardized methodologies to assess the efficiency, quality, and scalability of frame generation processes across diverse hardware configurations and software implementations. The establishment of comprehensive benchmarking protocols enables developers to make informed decisions about optimization strategies and resource allocation.

Contemporary benchmarking frameworks typically incorporate multi-dimensional evaluation metrics that extend beyond traditional frame rate measurements. These include memory utilization patterns, GPU occupancy rates, thermal performance characteristics, and power consumption profiles. Advanced frameworks also integrate perceptual quality assessments through automated visual fidelity scoring systems that correlate with human perception studies, ensuring that performance gains do not compromise visual integrity.
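Even frame rate is more informative as a distribution than as an average, because a few long frames are perceived as stutter. The following is a minimal Python sketch of common summary statistics. Tools define "1% low" differently. This version averages the slowest 1% of frame times, so any reported figure should state which definition it uses.

```python
import math
import statistics


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an ascending list, pct in (0, 100]."""
    rank = math.ceil(pct * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


def frame_time_report(frame_times_ms):
    """Summarize a capture of per-frame times (ms) into common benchmark figures."""
    if len(frame_times_ms) < 2:
        raise ValueError("need at least two frames")
    ordered = sorted(frame_times_ms)
    slowest = ordered[-max(1, len(ordered) // 100):]  # slowest 1% of frames (at least one)
    return {
        "avg_fps": len(ordered) / (sum(ordered) / 1000.0),
        "p99_frame_ms": percentile(ordered, 99),
        "one_percent_low_fps": 1000.0 / statistics.fmean(slowest),
        "stdev_ms": statistics.stdev(ordered),
    }
```

Take a capture of 99 frames at 10 ms and one frame at 50 ms. The average is about 96 FPS while the 1% low is 20 FPS, which exposes a hitch that the average hides.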

The architectural design of modern benchmarking frameworks emphasizes modularity and extensibility to accommodate emerging rendering techniques and hardware innovations. Standardized test suites encompass various scene complexity levels, from simple geometric primitives to photorealistic environments with dynamic lighting, particle systems, and complex material interactions. These frameworks often implement automated workload scaling mechanisms that progressively increase scene complexity to identify performance bottlenecks and optimization thresholds.
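The automated workload-scaling loop can be sketched in two phases. An exponential search finds a complexity level that exceeds a frame-time budget, and a binary search then finds the largest scene that still meets it. In the Python sketch below, `measure_frame_ms` is a hypothetical hook. In a real framework it would build a scene at the given complexity level and return a measured (ideally median) frame time. The search assumes that frame time grows monotonically with complexity.

```python
def find_max_complexity(measure_frame_ms, budget_ms, start=1_000, max_level=10_000_000):
    """Largest complexity level whose frame time stays within budget_ms, or 0 if none does.

    measure_frame_ms(level) -> frame time in ms (hypothetical benchmark hook).
    Assumes frame time is monotonically non-decreasing in level.
    """
    if measure_frame_ms(start) > budget_ms:
        return 0
    lo, hi = start, start
    # Exponential phase: double the workload until the budget is exceeded.
    while hi < max_level and measure_frame_ms(hi) <= budget_ms:
        lo, hi = hi, min(hi * 2, max_level)
    if measure_frame_ms(hi) <= budget_ms:
        return hi  # budget never exceeded up to max_level
    # Binary phase. Invariant: lo is within budget, hi is over budget.
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if measure_frame_ms(mid) <= budget_ms:
            lo = mid
        else:
            hi = mid
    return lo
```

As an example, take a synthetic cost model of 2 ms fixed overhead plus 1 µs per object, with a 16 ms budget. The search settles on 14,000 objects after roughly twenty measurements instead of a linear sweep.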

Cross-platform compatibility represents a fundamental requirement for effective benchmarking frameworks, necessitating abstraction layers that normalize performance metrics across different operating systems, graphics APIs, and hardware architectures. Leading frameworks incorporate statistical analysis engines that provide confidence intervals, variance measurements, and trend analysis capabilities to ensure reproducible and meaningful performance comparisons.
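The statistical side can be sketched with a Python function that summarizes repeated runs of one benchmark as a mean with a confidence interval. It uses a normal approximation for simplicity. With few runs, a Student's t interval is more appropriate. The non-overlap rule in `runs_differ` is a conservative heuristic rather than a formal significance test, and both function names are illustrative.

```python
import math
import statistics


def mean_with_ci(samples, confidence=0.95):
    """Mean and normal-approximation confidence interval for repeated benchmark runs."""
    if len(samples) < 2:
        raise ValueError("need at least two runs")
    mean = statistics.fmean(samples)
    sem = statistics.stdev(samples) / math.sqrt(len(samples))  # standard error of the mean
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2)
    return mean, mean - z * sem, mean + z * sem


def runs_differ(a, b, confidence=0.95):
    """Report a difference only when the two confidence intervals do not overlap."""
    _, lo_a, hi_a = mean_with_ci(a, confidence)
    _, lo_b, hi_b = mean_with_ci(b, confidence)
    return hi_a < lo_b or hi_b < lo_a
```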

Real-time profiling integration within benchmarking frameworks enables granular analysis of frame creation pipelines, identifying specific rendering stages that contribute to performance limitations. These systems typically feature hierarchical profiling capabilities that drill down from high-level frame timing to individual draw call performance, shader execution efficiency, and memory transfer bottlenecks. Such detailed analysis facilitates targeted optimization efforts and validates the effectiveness of performance enhancement strategies.
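The hierarchical drill-down can be sketched with nested timing scopes. The Python class below records CPU wall-clock time per named scope in a tree. GPU stages would need GPU timestamp queries (for example `vkCmdWriteTimestamp` in Vulkan) rather than CPU timers. The class itself is a hypothetical helper, not part of any profiling tool.

```python
import time
from contextlib import contextmanager


class HierarchicalProfiler:
    """Accumulates CPU wall-clock time for nested, named scopes as a tree."""

    def __init__(self):
        self.root = {"name": "frame", "time": 0.0, "children": {}}
        self._stack = [self.root]

    @contextmanager
    def scope(self, name):
        parent = self._stack[-1]
        node = parent["children"].setdefault(name, {"name": name, "time": 0.0, "children": {}})
        self._stack.append(node)
        start = time.perf_counter()
        try:
            yield node
        finally:
            node["time"] += time.perf_counter() - start
            self._stack.pop()

    def report(self, node=None, depth=0):
        """Return indented 'name: X ms' lines, with children listed under their parent."""
        node = self.root if node is None else node
        lines = []
        for child in node["children"].values():
            lines.append(f"{'  ' * depth}{child['name']}: {child['time'] * 1000:.3f} ms")
            lines.extend(self.report(child, depth + 1))
        return lines


profiler = HierarchicalProfiler()
with profiler.scope("shadows"):
    time.sleep(0.001)
with profiler.scope("lighting"):
    with profiler.scope("ssao"):
        time.sleep(0.001)
print("\n".join(profiler.report()))
```

Wrapping each pipeline stage in `with profiler.scope(...)` produces an indented per-frame breakdown. Nested scopes are included in their parent's total, so each child's share can be read directly against its parent.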
