Sensor Drift vs Instrument Accuracy

MAR 27, 2026 | 9 MIN READ

Generate Your Research Report Instantly with AI Agent

PatSnap Eureka helps you evaluate technical feasibility & market potential.

Sensor Drift Background and Accuracy Goals

Sensor drift represents one of the most persistent challenges in modern instrumentation systems, fundamentally affecting the reliability and precision of measurement devices across diverse industrial applications. This phenomenon occurs when sensors gradually deviate from their original calibration parameters over time, leading to systematic errors that compound measurement uncertainty and compromise data integrity.

The evolution of sensor technology has been driven by the perpetual quest for enhanced accuracy and stability. Early mechanical sensors of the mid-20th century exhibited significant drift characteristics due to material fatigue and environmental susceptibility. The transition to electronic sensors in the 1970s marked a pivotal advancement, introducing solid-state components that demonstrated improved stability profiles. Subsequently, the integration of digital signal processing and smart sensor architectures in the 1990s enabled real-time drift compensation mechanisms.

Contemporary sensor systems face increasingly stringent accuracy requirements as industries demand higher precision for critical applications. Aerospace flight-control instrumentation can call for measurement uncertainties below 0.01%, and pharmaceutical process validation may require sensor accuracy within 0.05%. Specifications this tight demand a thorough understanding of drift mechanisms and of the strategies available to mitigate them.

The primary technical objective centers on establishing quantitative relationships between drift characteristics and achievable instrument accuracy. This involves developing predictive models that correlate environmental factors, operational duration, and sensor design parameters with long-term stability performance. Advanced characterization techniques must identify drift patterns across different sensor technologies, enabling optimization of calibration intervals and uncertainty budgets.

Current research focuses on adaptive calibration algorithms and self-diagnostic capabilities within sensor systems. Machine learning approaches show promise for predicting drift behavior from historical performance data and operating conditions. In addition, reference-grade sensors with ultra-low drift coefficients serve as benchmarks for evaluating commercial sensor performance.

The ultimate goal is to create sensor systems that maintain their specified accuracy throughout their operational lifetime while minimizing maintenance. Achieving this requires advances in sensor materials, packaging technologies, and signal processing methods. Success in this domain would enable next-generation instrumentation capable of autonomous operation in challenging environments, delivering consistent, traceable measurement results that meet evolving industrial standards and regulatory requirements.

Market Demand for High-Precision Instrumentation

The global market for high-precision instrumentation is growing strongly, driven by the increasing complexity of modern industrial processes and by stringent quality requirements across multiple sectors. Industries such as aerospace, automotive manufacturing, pharmaceutical production, and semiconductor fabrication demand measurement systems with exceptional accuracy and long-term stability. The trade-off between sensor drift and instrument accuracy has become a critical factor in purchasing decisions and operational efficiency.

Manufacturing sectors are particularly sensitive to measurement uncertainties, as even minor deviations can result in significant quality issues and costly production delays. The automotive industry's transition toward electric vehicles and autonomous systems has intensified the need for precise sensor technologies that maintain calibration over extended periods. Similarly, the pharmaceutical sector's regulatory compliance requirements mandate instruments capable of delivering consistent, traceable measurements throughout their operational lifecycle.

The semiconductor industry represents one of the most demanding markets for precision instrumentation, where nanometer-scale manufacturing processes require measurement systems with extraordinary stability. Process control equipment must maintain accuracy specifications over months or years of continuous operation, making sensor drift mitigation a paramount concern for equipment manufacturers and end users alike.

Emerging applications in renewable energy systems, particularly wind and solar installations, are creating new market segments for precision instrumentation. These applications often operate in harsh environmental conditions where traditional calibration approaches are impractical, driving demand for self-compensating measurement systems that can address drift-related accuracy degradation autonomously.

The industrial Internet of Things expansion has amplified the importance of long-term measurement reliability, as remote monitoring systems must provide trustworthy data over extended periods without frequent manual intervention. This trend is particularly evident in oil and gas exploration, environmental monitoring, and smart city infrastructure projects.

Market research indicates strong growth potential for instrumentation solutions that effectively address the sensor drift challenge through advanced compensation algorithms, self-calibration capabilities, and predictive maintenance features. End users increasingly prioritize total cost of ownership considerations, including calibration frequency and maintenance requirements, when evaluating precision measurement systems.

Current Sensor Drift Issues and Accuracy Limitations

Sensor drift represents one of the most pervasive challenges in modern instrumentation systems, fundamentally compromising measurement reliability across diverse industrial applications. This phenomenon manifests as gradual, systematic changes in sensor output over time, even when measuring identical physical parameters under constant environmental conditions. The drift typically occurs due to material aging, component degradation, thermal cycling effects, and chemical interactions between sensing elements and their surrounding environment.

Contemporary sensor technologies face significant accuracy limitations stemming from multiple interconnected factors. Temperature variations constitute a primary contributor, causing thermal expansion of sensing materials and altering electronic component characteristics. Humidity fluctuations introduce moisture-related drift in capacitive and resistive sensors, while electromagnetic interference disrupts signal integrity in sensitive measurement circuits. Additionally, mechanical stress from vibration and pressure changes leads to structural deformation of sensing elements, particularly in MEMS-based devices.
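A common first-order way to remove the temperature contribution is to correct the reading with a zero (offset) coefficient and a gain (span) coefficient. The sketch below illustrates this approach. It assumes a linear thermal model, a 25 °C reference temperature, and a hypothetical function name `compensate_temperature`. Real sensors may need higher-order models or lookup tables.

```python
def compensate_temperature(raw, temp_c, ref_temp_c=25.0, offset_tc=0.0, span_tc=0.0):
    """Remove a first-order temperature effect from a raw sensor reading.

    Assumed (hypothetical) sensor model, with dT = temp_c - ref_temp_c:
        raw = true_value * (1 + span_tc * dT) + offset_tc * dT
    offset_tc: zero shift per degree C, in output units.
    span_tc:   fractional gain change per degree C.
    """
    d_t = temp_c - ref_temp_c
    # Undo the temperature-induced zero shift first...
    without_offset = raw - offset_tc * d_t
    # ...then undo the temperature-induced change in gain.
    return without_offset / (1.0 + span_tc * d_t)


if __name__ == "__main__":
    # Simulate a true value of 100.0 measured at 45 degC, then correct it.
    d_t = 45.0 - 25.0
    raw = 100.0 * (1 + 0.0005 * d_t) + 0.02 * d_t
    print(compensate_temperature(raw, 45.0, offset_tc=0.02, span_tc=0.0005))
```

In practice the two coefficients would be characterized per sensor, for example in a temperature chamber. This model corrects only reversible thermal effects. It does not address the irreversible aging drift described elsewhere in this section.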

Chemical contamination presents another critical challenge, especially in harsh industrial environments where sensors encounter corrosive substances, particulate matter, or reactive gases. These contaminants gradually alter surface properties of sensing elements, creating irreversible changes in calibration characteristics. Power supply instabilities further exacerbate accuracy issues by introducing noise and offset variations that compound with inherent drift phenomena.

Current accuracy limitations are particularly pronounced in long-term monitoring applications where sensors operate continuously for months or years without recalibration. Studies indicate that typical industrial sensors experience drift rates ranging from 0.1% to 5% of full-scale output annually, depending on technology type and operating conditions. Gas sensors demonstrate the highest drift susceptibility, often requiring monthly recalibration to maintain acceptable accuracy levels.
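If drift accumulates roughly linearly, an annual drift rate r_drift translates directly into a maximum recalibration interval T_cal for a given allowable drift error e_allow. The 0.5 %FS budget used below is a hypothetical figure. In practice it would come from the instrument's uncertainty budget.

```latex
T_{\mathrm{cal}} \le \frac{e_{\mathrm{allow}}}{r_{\mathrm{drift}}},
\qquad
\frac{0.5\,\%\mathrm{FS}}{0.1\,\%\mathrm{FS/yr}} = 5\ \mathrm{yr},
\qquad
\frac{0.5\,\%\mathrm{FS}}{5\,\%\mathrm{FS/yr}} = 0.1\ \mathrm{yr} \approx 36.5\ \mathrm{days}
```

At the 5 %FS/yr end of the quoted range, the interval shrinks to roughly five weeks. This is in line with the monthly recalibration often needed for gas sensors.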

The economic impact of sensor drift extends beyond measurement uncertainty, encompassing increased maintenance costs, production quality issues, and potential safety risks in critical applications. Traditional compensation methods, including periodic manual calibration and environmental correction algorithms, provide only partial solutions while introducing operational complexity and downtime requirements.

Emerging challenges include the integration of sensors in Internet of Things networks, where remote deployment scenarios make frequent recalibration impractical. The demand for autonomous operation over extended periods necessitates innovative approaches to drift mitigation and real-time accuracy assessment, driving research toward self-calibrating sensor architectures and advanced signal processing techniques.

Existing Drift Compensation Solutions

01 Calibration methods for drift compensation

Various calibration techniques can be employed to compensate for sensor drift and maintain accuracy over time. These methods include periodic recalibration using reference standards, automatic calibration routines, and self-calibration mechanisms. Calibration algorithms can adjust sensor readings based on known reference values or environmental conditions. Advanced calibration approaches may incorporate machine learning algorithms to predict and correct drift patterns based on historical data and operating conditions.

- Calibration methods for drift compensation: Calibration can be performed at predetermined intervals or triggered by detected drift conditions. Advanced calibration approaches may use stored calibration data, temperature compensation algorithms, and multi-point calibration to ensure consistent sensor performance across different operating conditions.

- Temperature compensation techniques: Temperature variations are a significant cause of sensor drift and reduced accuracy. Temperature compensation methods involve measuring ambient or sensor temperature and applying correction factors to the sensor output. These techniques may include using temperature coefficients, lookup tables, or mathematical models to adjust readings based on thermal effects. Some implementations incorporate dedicated temperature sensors and real-time compensation algorithms to maintain accuracy across wide temperature ranges.

- Signal processing and filtering algorithms: Advanced signal processing techniques can improve sensor accuracy and reduce the effects of drift. These include digital filtering methods such as Kalman filtering, moving average filters, and adaptive filtering algorithms. Signal processing approaches can distinguish between actual measurements and drift-induced errors by analyzing signal characteristics, trends, and noise patterns. Machine learning algorithms may also be employed to predict and compensate for drift based on historical data and operating conditions.

- Redundant sensor configurations: Implementing multiple sensors in redundant or complementary configurations can enhance overall system accuracy and reliability. This approach involves using sensor arrays, dual or triple redundant sensors, or combining different sensor types measuring the same parameter. Cross-validation between sensors allows for drift detection and correction. Voting algorithms or weighted averaging methods can be applied to determine the most accurate reading while identifying sensors experiencing drift or failure.

- Drift detection and diagnostic systems: Monitoring systems can be implemented to detect sensor drift and assess accuracy degradation. These systems track sensor performance over time, comparing current readings against expected values, historical trends, or reference measurements. Diagnostic algorithms can identify drift patterns, rate of drift, and predict when recalibration or sensor replacement is needed. Alert mechanisms notify users or trigger automatic corrective actions when drift exceeds acceptable thresholds, ensuring maintained accuracy throughout the sensor lifecycle.
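The multi-point calibration mentioned in the first item can be sketched as a least-squares fit of gain and offset against reference standards. This minimal sketch assumes a linear sensor response. The function names and example reference points are hypothetical, and a nonlinear sensor would need a higher-order fit or a lookup table.

```python
def fit_linear_calibration(raw_readings, reference_values):
    """Least-squares fit of reference = gain * raw + offset.

    raw_readings:     sensor outputs recorded while applying reference standards.
    reference_values: traceable values of those standards.
    Returns (gain, offset).
    """
    n = len(raw_readings)
    if n != len(reference_values) or n < 2:
        raise ValueError("need at least two paired calibration points")
    mean_x = sum(raw_readings) / n
    mean_y = sum(reference_values) / n
    s_xx = sum((x - mean_x) ** 2 for x in raw_readings)
    if s_xx == 0:
        raise ValueError("calibration points must span more than one raw value")
    s_xy = sum((x - mean_x) * (y - mean_y)
               for x, y in zip(raw_readings, reference_values))
    gain = s_xy / s_xx
    offset = mean_y - gain * mean_x
    return gain, offset


def apply_calibration(raw, gain, offset):
    """Convert a raw reading into a calibrated value."""
    return gain * raw + offset


if __name__ == "__main__":
    # Hypothetical 3-point check: the sensor reads 2% high with a +0.5 zero offset.
    refs = [0.0, 50.0, 100.0]
    raws = [0.5 + 1.02 * r for r in refs]
    gain, offset = fit_linear_calibration(raws, refs)
    print(apply_calibration(51.5, gain, offset))  # approximately 50.0
```

With more than two points, the fit residuals also reveal whether the sensor has become nonlinear. A gain and offset correction alone cannot fix nonlinearity.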

02 Temperature compensation techniques

Temperature variations are a major cause of sensor drift, and compensation techniques can significantly improve accuracy. These techniques involve measuring ambient temperature and applying correction factors to sensor readings. Temperature compensation can be achieved through hardware-based solutions using temperature-sensitive components or software algorithms that adjust readings based on temperature coefficients. Multi-point temperature calibration and real-time temperature monitoring enable dynamic compensation across different operating conditions.

03 Signal processing and filtering methods

Advanced signal processing techniques can reduce noise and improve sensor accuracy by filtering out drift-related errors. Digital filtering algorithms, including Kalman filters and adaptive filters, can distinguish between actual signal changes and drift-induced variations. Statistical methods and moving average calculations help smooth sensor data and identify long-term drift trends. These processing methods can be implemented in real time to provide continuous drift correction and enhanced measurement stability.

04 Redundant sensor arrays and cross-validation

Implementing multiple sensors in redundant configurations allows for cross-validation and improved accuracy through comparison and averaging. Redundant sensor systems can detect individual sensor drift by comparing readings from multiple sensors measuring the same parameter. Voting algorithms and statistical analysis of sensor array data enable identification of outliers and faulty sensors. This approach enhances overall system reliability and provides fault tolerance while maintaining measurement accuracy even when individual sensors experience drift.

05 Drift detection and diagnostic systems

Automated drift detection systems monitor sensor performance and identify when drift exceeds acceptable thresholds. These systems employ diagnostic algorithms that analyze sensor behavior patterns, response times, and output stability. Machine learning and artificial intelligence techniques can predict sensor degradation and estimate remaining useful life. Early detection of drift enables timely maintenance, recalibration, or sensor replacement before accuracy is significantly compromised. Diagnostic systems may also provide alerts and recommendations for corrective actions.
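Threshold-based drift detection of this kind is often implemented as a cumulative-sum (CUSUM) test on the residuals between the sensor and a reference or expected value. The sketch below is a generic two-sided tabular CUSUM, not any particular vendor's algorithm. The default slack `k` and decision threshold `h` are assumptions. The residuals are assumed to be pre-scaled by their in-control standard deviation.

```python
def cusum_drift_alarm(residuals, k=0.5, h=5.0, target=0.0):
    """Return the index of the first sample at which a two-sided CUSUM
    signals drift, or None if no alarm is raised.

    residuals: sensor reading minus reference/expected value, expressed in
               units of the in-control standard deviation (assumed pre-scaled).
    k: slack per sample; persistent shifts smaller than k are not accumulated.
    h: decision threshold on either accumulated sum.
    """
    s_high = 0.0  # evidence of upward drift
    s_low = 0.0   # evidence of downward drift
    for i, r in enumerate(residuals):
        s_high = max(0.0, s_high + (r - target) - k)
        s_low = max(0.0, s_low - (r - target) - k)
        if s_high > h or s_low > h:
            return i
    return None


if __name__ == "__main__":
    # Hypothetical residuals: in control for 10 samples, then a +1.5 sigma shift.
    residuals = [0.0] * 10 + [1.5] * 10
    print(cusum_drift_alarm(residuals))  # 15
```

Raising `h` reduces false alarms at the cost of a longer detection delay. Lowering `k` makes the test more sensitive to small, slow drifts.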

Key Players in Precision Sensor Industry

The field of sensor drift versus instrument accuracy is a mature technological domain with steady growth, driven by increasing demand for precision measurement in automotive, industrial, and medical applications. The market is large: established players such as Bosch, Siemens, and Honeywell lead in industrial sensor solutions, while specialized companies such as DexCom and Measurement Specialties focus on niche applications. Technology maturity varies considerably across segments. Companies such as Applied Materials and Sony leverage advanced semiconductor technologies for high-precision sensors, whereas traditional manufacturers such as Illinois Tool Works and Renishaw emphasize mechanical precision and calibration systems. The competitive landscape shows consolidation, with major conglomerates acquiring specialized sensor companies, while emerging players such as Biomech Sensor target specific applications. Academic institutions such as UNIST and the University of British Columbia contribute fundamental research that bridges theoretical understanding and the practical implementation of drift compensation techniques.

Siemens Industry, Inc.

Technical Solution: Siemens has developed the SITRANS sensor portfolio with integrated drift monitoring and compensation capabilities for process industries. Their solution utilizes digital signal processing algorithms that continuously analyze sensor output patterns to detect early signs of drift. The system employs reference standard comparisons and statistical process control methods to maintain measurement integrity. Siemens implements cloud-based analytics platforms that aggregate drift data across multiple installations to improve predictive models. Their approach includes automated calibration scheduling based on drift rate analysis and process criticality assessments, ensuring optimal balance between measurement accuracy and operational efficiency.

Strengths: Strong process industry expertise with comprehensive digital solutions. Weaknesses: Solutions may be over-engineered for simple measurement applications requiring basic drift compensation.
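Drift-rate-based calibration scheduling can be illustrated generically in two steps:

1. Estimate the drift rate from periodic check-standard comparisons.
2. Schedule the next calibration before the projected error reaches a tolerance that tightens with process criticality.

This is a hypothetical sketch, not Siemens' implementation. The `criticality` scaling, the function names, and the tolerance values are illustrative assumptions.

```python
def drift_trend(days, errors):
    """Least-squares (slope, intercept) of check-standard error versus time."""
    n = len(days)
    if n < 2 or n != len(errors):
        raise ValueError("need at least two paired check-standard comparisons")
    mean_t = sum(days) / n
    mean_e = sum(errors) / n
    s_tt = sum((t - mean_t) ** 2 for t in days)
    if s_tt == 0:
        raise ValueError("checks must be taken at different times")
    s_te = sum((t - mean_t) * (e - mean_e) for t, e in zip(days, errors))
    slope = s_te / s_tt
    return slope, mean_e - slope * mean_t


def days_until_recalibration(days, errors, tolerance, criticality=1.0):
    """Days after the last check until the fitted error trend reaches the limit.

    days:        check times in days, sorted ascending.
    tolerance:   allowed |error| for a non-critical loop (hypothetical value).
    criticality: >= 1; the effective limit is tolerance / criticality, so more
                 critical loops are scheduled for calibration earlier.
    """
    rate, intercept = drift_trend(days, errors)
    limit = tolerance / criticality
    current = intercept + rate * days[-1]  # fitted error at the last check
    if rate == 0:
        return float("inf")
    # Remaining margin in the direction the error is drifting.
    remaining = limit - current if rate > 0 else limit + current
    return max(0.0, remaining / abs(rate))


if __name__ == "__main__":
    # Hypothetical quarterly checks drifting by about 0.001 units per day.
    checks = [0, 30, 60, 90]
    errs = [0.0, 0.03, 0.06, 0.09]
    print(days_until_recalibration(checks, errs, tolerance=0.25))
    print(days_until_recalibration(checks, errs, tolerance=0.25, criticality=2.0))
```

In the example, doubling the criticality halves the effective tolerance. That pulls the next calibration from roughly 160 days to roughly 35 days after the last check.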

Robert Bosch GmbH

Technical Solution: Bosch has developed comprehensive sensor drift compensation algorithms and calibration methodologies for automotive applications. Their approach includes real-time drift detection using machine learning algorithms that continuously monitor sensor performance against expected baselines. The company implements multi-sensor fusion techniques to cross-validate measurements and identify drift patterns. Their MEMS sensor technology incorporates built-in self-test capabilities and temperature compensation mechanisms. Bosch's sensor management systems can automatically adjust calibration parameters based on environmental conditions and usage patterns, maintaining high accuracy over extended operational periods.

Strengths: Industry-leading MEMS technology with robust drift compensation. Weaknesses: Solutions primarily focused on automotive applications, limiting broader industrial applicability.
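Multi-sensor cross-validation can be illustrated with median voting, a generic technique. The median of redundant readings serves as the consensus value, and any sensor that deviates from it by more than a tolerance is flagged as suspect. This is not Bosch's algorithm. The sensor identifiers and the tolerance below are hypothetical.

```python
from statistics import median


def vote_and_flag(readings, tolerance):
    """Median-vote redundant readings and flag sensors that disagree.

    readings:  mapping of sensor id -> current reading (same measurand and units).
    tolerance: maximum allowed deviation from the consensus before a sensor is
               considered suspect (hypothetical, application-specific).
    Returns (consensus_value, sorted list of suspect sensor ids).
    """
    if len(readings) < 3:
        raise ValueError("median voting needs at least three sensors")
    consensus = median(readings.values())
    suspects = sorted(
        sensor for sensor, value in readings.items()
        if abs(value - consensus) > tolerance
    )
    return consensus, suspects


if __name__ == "__main__":
    print(vote_and_flag({"A": 20.1, "B": 20.0, "C": 21.4}, tolerance=0.5))
```

With three sensors, the median stays correct when any single sensor drifts. With only two sensors, a disagreement can be detected, but the drifting sensor cannot be identified.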

Core Innovations in Drift Mitigation Patents

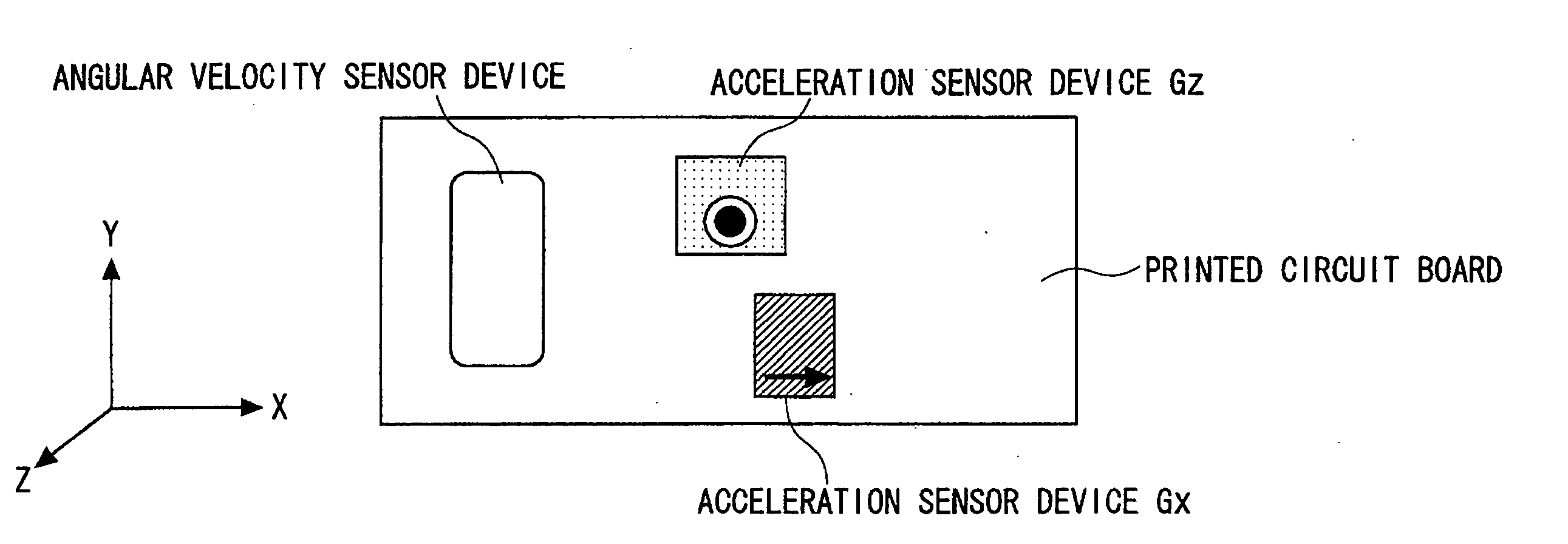

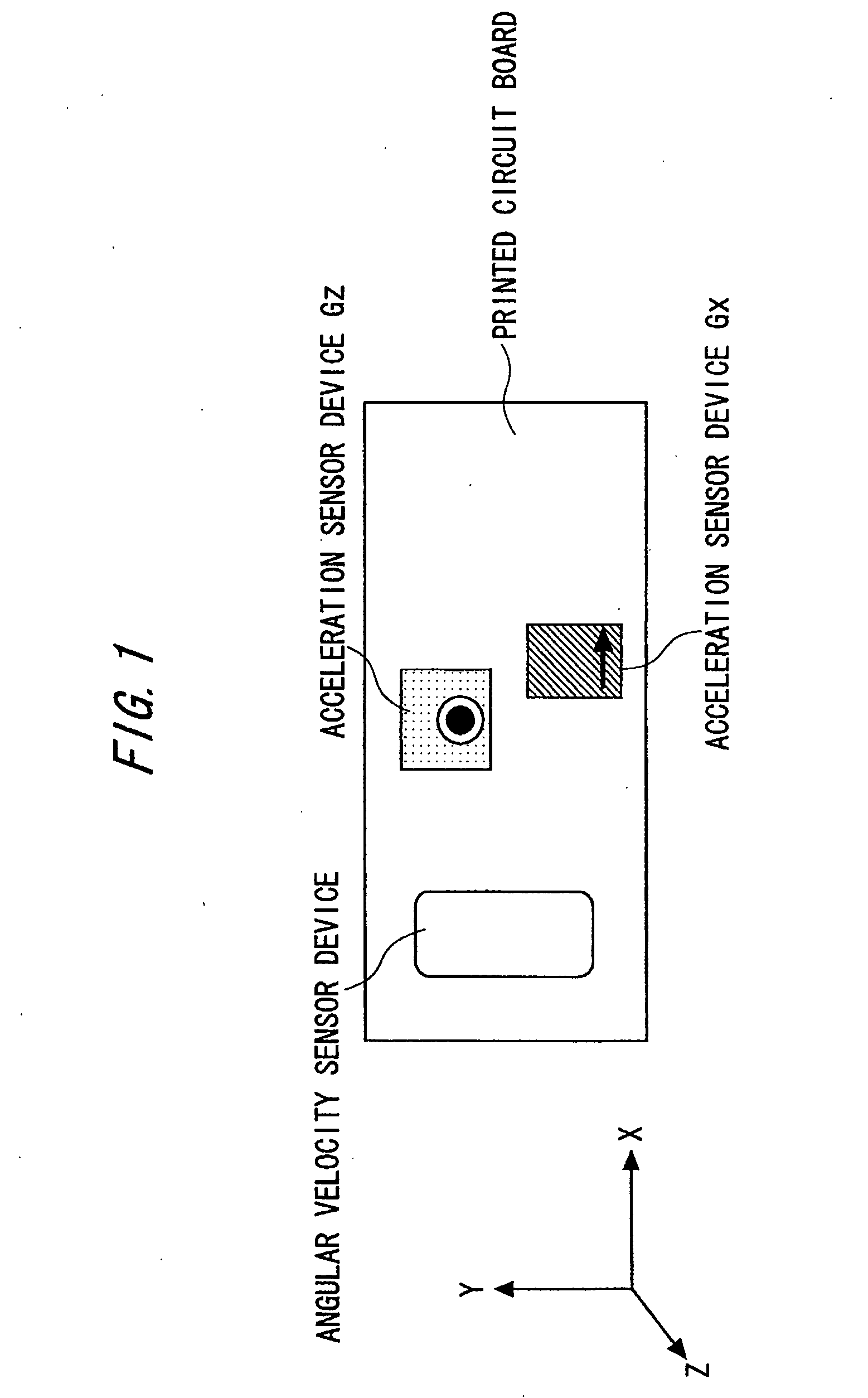

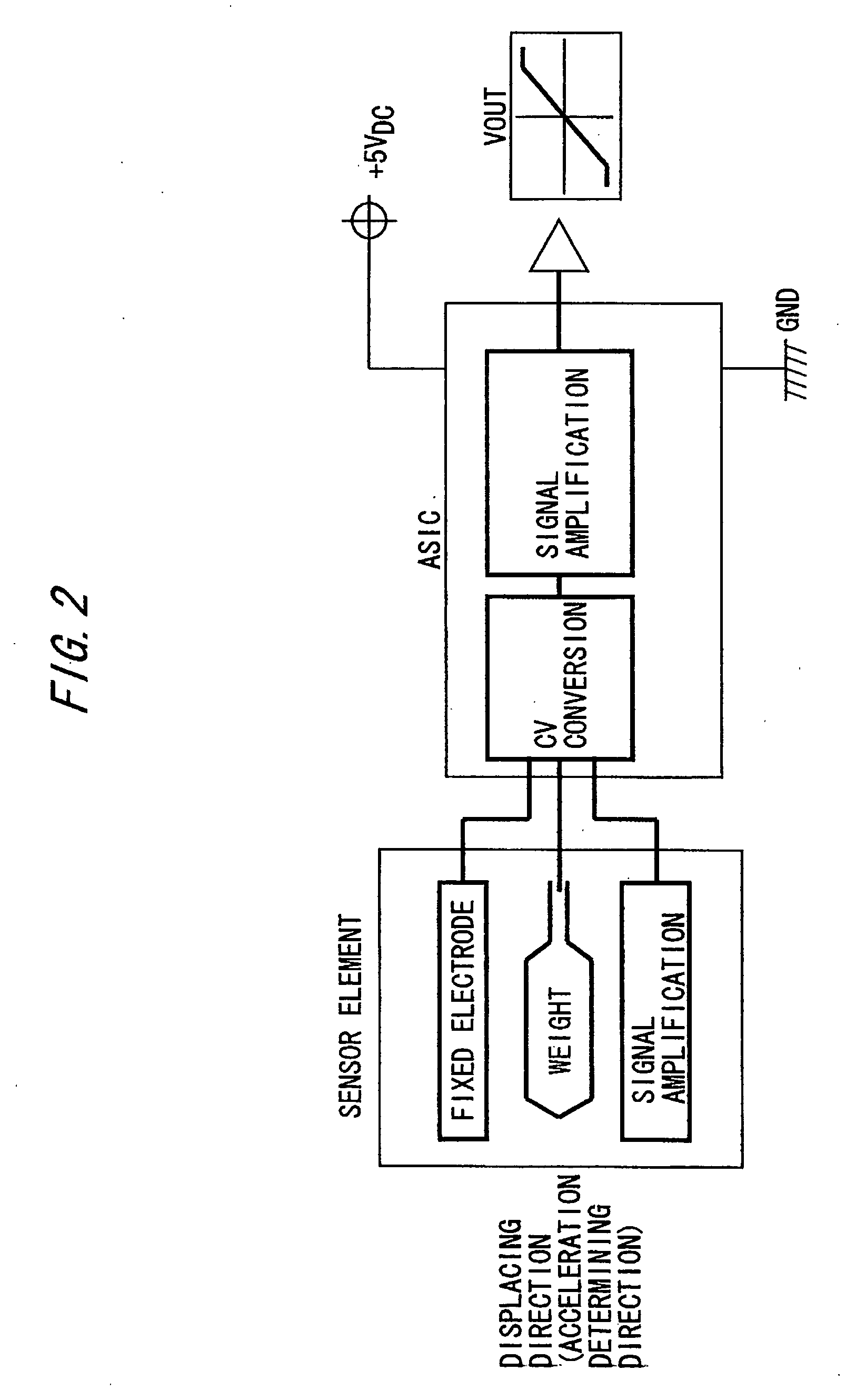

Difference correcting method for posture determining instrument and motion measuring instrument

Patent | Inactive | US20060161363A1

Innovation

- A motion capture system built around a compact six-degree-of-freedom inertial measuring instrument with 3-axis acceleration and angular velocity sensors. The system determines the direction of gravity and corrects drift errors by comparing the initial and post-motion gravity directions and adjusting the sensor data accordingly. This allows accurate motion measurement in various environments without spatial restrictions.
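The idea of using gravity as a drift-free reference can be sketched for a single axis in three steps:

1. Integrate the gyroscope rate to obtain a tilt angle.
2. Once the device is at rest after the motion, compare the integrated tilt with the tilt implied by the accelerometer's gravity reading.
3. Assuming a constant gyro bias, remove the discrepancy in proportion to elapsed time.

This is a simplified single-axis illustration, not the patented procedure. The function names and sample values are hypothetical.

```python
import math


def tilt_from_gravity(acc_x, acc_z):
    """Tilt angle (rad) about the y axis from a static accelerometer reading."""
    return math.atan2(acc_x, acc_z)


def correct_gyro_drift(rates, dt, start_acc, end_acc):
    """Integrate gyro rates and spread the end-point error linearly over time.

    rates:     angular-rate samples (rad/s) about the y axis during the motion.
    dt:        sample period in seconds.
    start_acc: (acc_x, acc_z) reading at rest before the motion.
    end_acc:   (acc_x, acc_z) reading at rest after the motion.
    Returns the drift-corrected angle after each sample.
    """
    angle = tilt_from_gravity(*start_acc)
    integrated = []
    for w in rates:
        angle += w * dt
        integrated.append(angle)
    # Accumulated drift: integrated end angle minus gravity-referenced end angle.
    error = integrated[-1] - tilt_from_gravity(*end_acc)
    n = len(integrated)
    # Constant-bias assumption: drift grows linearly, so remove error * (k+1)/n.
    return [a - error * (k + 1) / n for k, a in enumerate(integrated)]


if __name__ == "__main__":
    # True rotation of 0.5 rad/s for 1 s, plus a hypothetical 0.02 rad/s gyro bias.
    end = (math.sin(0.5), math.cos(0.5))
    print(correct_gyro_drift([0.52] * 10, 0.1, (0.0, 1.0), end)[-1])
```

A full implementation would work with 3D orientation, for example quaternions. It would also account for the fact that the accelerometer reads gravity cleanly only while the device is stationary.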

System and method for monitoring a field

Patent | Active | US20200271822A1

Innovation

- A system and method using permanently installed seafloor sensors, each with its own drift function. Compensating for sensor drift through a drift function that models linear changes reduces operational costs, enables more frequent monitoring, and reduces the need for frequent calibration surveys.
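A linear drift function of this kind can be sketched by anchoring each permanent sensor's offset at two calibration surveys and interpolating between them. Readings are then corrected by subtracting the modeled drift at their timestamps. The two-survey linear model, the function names, and the example numbers are assumptions for illustration, not the patent's exact formulation.

```python
def linear_drift_function(t0, offset0, t1, offset1):
    """Return d(t) = offset0 + rate * (t - t0), built from two calibration surveys.

    offset0, offset1: sensor reading minus reference value observed at the
    surveys carried out at times t0 and t1 (t1 > t0).
    """
    if t1 <= t0:
        raise ValueError("second survey must be later than the first")
    rate = (offset1 - offset0) / (t1 - t0)

    def drift(t):
        return offset0 + rate * (t - t0)

    return drift


def correct_series(times, readings, drift):
    """Subtract the modeled drift from each time-stamped reading."""
    return [r - drift(t) for t, r in zip(times, readings)]


if __name__ == "__main__":
    # Hypothetical surveys: offset 0.0 at day 0 and 2.0 at day 100.
    d = linear_drift_function(0.0, 0.0, 100.0, 2.0)
    print(correct_series([0.0, 50.0, 100.0], [10.0, 11.0, 12.0], d))
```

Beyond the last survey the function extrapolates. That extrapolation is only as reliable as the assumption that the drift stays linear.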

Metrological Standards for Sensor Accuracy

Metrological standards for sensor accuracy establish the fundamental framework for quantifying and validating sensor performance in measurement systems. These standards define the criteria and methodologies used to assess how closely sensor outputs correspond to true measured values, providing essential benchmarks for instrument calibration and validation processes.

The International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) have developed comprehensive standards that address sensor accuracy requirements across various applications. ISO/IEC Guide 99 establishes the International Vocabulary of Metrology, defining accuracy as the closeness of agreement between a measured quantity value and a true quantity value of a measurand. This definition forms the foundation for all subsequent accuracy assessments and calibration procedures.

Key metrological standards include the ISO 5725 series, which addresses the accuracy (trueness and precision) of measurement methods and results, and the IEC 61298 series, which specifically targets the performance evaluation of process measurement and control equipment. These standards establish systematic approaches for evaluating measurement performance, supporting uncertainty estimation, traceability requirements, and acceptable accuracy tolerances for different sensor categories and applications.
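In the ISO 5725 sense, accuracy combines two properties:

- Trueness: how close the mean of repeated results is to a reference value.
- Precision: how widely those results spread.

The sketch below computes the two basic estimates from repeated readings of a reference standard. It is a simplified single-laboratory illustration that omits the experimental design and outlier tests ISO 5725 specifies. The readings are hypothetical.

```python
from statistics import fmean, stdev


def trueness_and_precision(readings, reference_value):
    """Return (bias, standard deviation) of repeated readings of a reference.

    bias: mean reading minus the accepted reference value (trueness estimate).
    standard deviation: sample standard deviation of the readings, a
    repeatability-style precision estimate under fixed conditions.
    """
    if len(readings) < 2:
        raise ValueError("need at least two repeated readings")
    bias = fmean(readings) - reference_value
    return bias, stdev(readings)


if __name__ == "__main__":
    print(trueness_and_precision([10.1, 10.2, 10.0, 10.1], reference_value=10.0))
```

Repeating this estimate at successive calibrations and tracking the bias over time separates drift, a trend in trueness, from random scatter, which shows up in precision.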

ISO/IEC 17025 defines the general competence requirements for testing and calibration laboratories, ensuring that sensor accuracy assessments are performed under controlled conditions with traceable reference standards. It requires laboratories to calibrate their equipment under a defined calibration programme, control environmental conditions, and document their procedures, all of which directly affect sensor drift characterization and accuracy validation.

National metrology institutes, including NIST, PTB, and NPL, provide the primary reference standards that anchor the measurement traceability chain for sensor calibration. These institutes realize the SI units and maintain primary standards and certified reference materials that serve as the ultimate accuracy benchmarks for industrial sensors and measurement instruments.

Emerging standards address modern sensor technologies, including smart sensors with digital interfaces and wireless communication capabilities. The IEC 61508 functional safety standards set requirements for safety-critical applications that bear on sensor accuracy and drift, such as the diagnostic coverage needed to detect dangerous failures. IEEE standards address specific sensor types such as accelerometers, pressure sensors, and temperature measurement devices, establishing accuracy classes and performance specifications that account for long-term stability and drift characteristics.

Cost-Benefit Analysis of Drift Correction Methods

The economic evaluation of drift correction methods requires a comprehensive assessment of implementation costs versus performance benefits. Initial investment costs vary significantly across different correction approaches, ranging from software-based algorithmic solutions costing $10,000-50,000 to hardware-intensive recalibration systems requiring $100,000-500,000 in equipment and infrastructure. These upfront expenses must be weighed against the long-term operational benefits and accuracy improvements each method delivers.

Software-based drift correction methods typically offer the most favorable cost-benefit ratio for moderate accuracy requirements. Machine learning algorithms and statistical filtering techniques require minimal hardware modifications while providing 30-60% improvement in measurement stability. The primary costs involve software development, validation testing, and periodic algorithm updates, with total implementation expenses generally under $75,000 for most industrial applications.

Hardware-intensive approaches, including automatic recalibration systems and reference standard integration, demonstrate superior performance gains but require substantial capital investment. These methods can achieve 70-90% drift reduction and maintain accuracy within ±0.1% over extended periods. However, the initial costs often exceed $200,000, plus ongoing maintenance expenses of $20,000-40,000 annually for calibration standards and system upkeep.

The return on investment timeline varies considerably based on application criticality and accuracy requirements. High-precision manufacturing environments typically recover drift correction investments within 12-18 months through reduced product defects and quality control costs. Process industries may require 24-36 months for full cost recovery, while research applications often justify expenses through improved data reliability rather than direct financial returns.
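The payback periods quoted above follow from a simple payback calculation. The worked example below uses the hardware-intensive figures from this section:

- a $200,000 initial cost;
- $30,000 per year of upkeep, the midpoint of the quoted range;
- a hypothetical $190,000 per year in avoided defect and calibration costs.

```latex
T_{\mathrm{payback}} = \frac{C_0}{S - M}
= \frac{\$200{,}000}{\$190{,}000/\mathrm{yr} - \$30{,}000/\mathrm{yr}}
= 1.25\ \mathrm{yr} = 15\ \mathrm{months}
```

Simple payback ignores discounting and assumes constant annual savings. For the longer 24 to 36 month horizons, a net-present-value comparison gives a more faithful picture.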

Operational cost savings emerge from reduced manual calibration frequency, decreased instrument downtime, and improved process efficiency. Organizations implementing comprehensive drift correction report 40-70% reduction in calibration-related maintenance costs and 25-45% improvement in overall system availability. These operational benefits often exceed the initial technology investment within the first operational cycle.
