Seamless Rate vs System Latency: Optimization Techniques

MAR 2, 2026 | 9 MIN READ

Seamless Rate and System Latency Background and Objectives

The relationship between seamless rate and system latency represents a fundamental trade-off in modern computing and communication systems. Seamless rate refers to the continuous, uninterrupted throughput that a system can maintain while delivering consistent performance to end users. System latency encompasses the total time delay from input to output, including processing, transmission, and queuing delays across all system components.
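The two metrics defined above are linked by Little's law: for a stable system in steady state, the average number of items in flight equals throughput multiplied by mean latency. The two helper functions below are hypothetical names, shown only to turn that relation into numbers, for example the queuing delay implied by a buffer backlog.

```python
def items_in_flight(throughput_per_s: float, mean_latency_s: float) -> float:
    """Little's law L = lambda * W, valid for a stable system in steady state."""
    return throughput_per_s * mean_latency_s


def queuing_delay_s(backlog_items: float, drain_rate_per_s: float) -> float:
    """Little's law rearranged, W = L / lambda: delay implied by a backlog."""
    if drain_rate_per_s <= 0:
        raise ValueError("drain rate must be positive")
    return backlog_items / drain_rate_per_s


# 1,000 req/s at 50 ms mean latency implies about 50 requests in flight;
# a 500-item buffer drained at 1,000 items/s implies about 0.5 s of queuing delay.
print(items_in_flight(1_000, 0.050))
print(queuing_delay_s(500, 1_000))
```

The same relation explains why larger buffers raise latency: at a fixed drain rate, every extra queued item adds its share of waiting time.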

This optimization challenge has evolved significantly since the emergence of real-time computing systems in the 1960s. Early mainframe computers prioritized computational accuracy over speed, but the advent of interactive computing and networked systems shifted focus toward minimizing response times. The proliferation of internet-based services in the 1990s introduced new complexities, as systems needed to balance high throughput with low latency across distributed architectures.

The digital transformation era has sharply intensified these challenges. Modern applications spanning cloud computing, edge computing, autonomous vehicles, financial trading systems, and real-time gaming demand both high seamless rates and ultra-low latency simultaneously. The emergence of 5G networks, Internet of Things devices, and artificial intelligence applications has created unprecedented performance requirements that traditional optimization approaches struggle to address.

Contemporary systems face the inherent tension between these two metrics because optimizations that improve one often degrade the other. Increasing buffer sizes and batch processing can enhance throughput but introduce additional latency. Conversely, reducing processing delays through simplified algorithms may compromise the system's ability to maintain consistent high-throughput performance under varying load conditions.
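A toy model makes the batching trade-off concrete. It assumes, hypothetically, a fixed per-batch overhead (for example a system call or kernel launch) plus a per-item cost, and ignores network and scheduling effects; the class and parameter names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BatchModel:
    """Toy batching model; all parameters are hypothetical, for illustration."""
    arrival_rate: float        # items per second arriving at the batcher
    per_batch_overhead: float  # fixed seconds paid once per batch
    per_item_cost: float       # seconds of work per item

    def service_time(self, batch_size: int) -> float:
        return self.per_batch_overhead + batch_size * self.per_item_cost

    def throughput(self, batch_size: int) -> float:
        """Maximum items per second a single worker sustains at this batch size."""
        return batch_size / self.service_time(batch_size)

    def is_stable(self, batch_size: int) -> bool:
        """False means arrivals outpace service and the queue grows without bound."""
        return self.throughput(batch_size) >= self.arrival_rate

    def worst_case_latency(self, batch_size: int) -> float:
        """First item waits for the batch to fill, then for the batch to be served.
        Only meaningful when is_stable(batch_size) is True."""
        fill_wait = (batch_size - 1) / self.arrival_rate
        return fill_wait + self.service_time(batch_size)


model = BatchModel(arrival_rate=10_000, per_batch_overhead=0.001, per_item_cost=0.00001)
for b in (1, 16, 64):
    print(b, model.is_stable(b), round(model.throughput(b)),
          round(model.worst_case_latency(b) * 1e3, 2))  # latency in ms
```

With these made-up numbers, a batch size of 1 cannot keep up with 10,000 items/s because the per-batch overhead dominates, while larger batches keep up but make the first item in each batch wait longer.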

The primary objective of seamless rate versus system latency optimization is to identify the optimal operating point where both metrics achieve acceptable performance levels for specific application requirements. This involves developing adaptive algorithms that can dynamically adjust system parameters based on real-time conditions, workload characteristics, and performance constraints.
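Under a similar toy batching model (fixed per-batch overhead, per-item cost, steady arrivals, all hypothetical), "acceptable performance levels" can be written as constraints, and the operating point as the batch size that maximizes throughput subject to them. The exhaustive search below is a sketch, not a production tuner; real systems usually adjust such parameters online as conditions change.

```python
from typing import Optional


def throughput(b: int, overhead: float, per_item: float) -> float:
    return b / (overhead + b * per_item)


def worst_case_latency(b: int, arrival_rate: float, overhead: float, per_item: float) -> float:
    # Wait for the batch to fill, then serve the whole batch.
    return (b - 1) / arrival_rate + overhead + b * per_item


def pick_batch_size(arrival_rate: float, overhead: float, per_item: float,
                    latency_budget: float, max_batch: int = 1024) -> Optional[int]:
    """Highest-throughput batch size that keeps up with arrivals and meets the budget.
    Returns None when no batch size satisfies both constraints."""
    best: Optional[int] = None
    for b in range(1, max_batch + 1):
        if throughput(b, overhead, per_item) < arrival_rate:
            continue  # unstable: the queue would grow without bound
        if worst_case_latency(b, arrival_rate, overhead, per_item) > latency_budget:
            continue  # violates the latency requirement
        if best is None or throughput(b, overhead, per_item) > throughput(best, overhead, per_item):
            best = b
    return best


print(pick_batch_size(10_000, 0.001, 0.00001, latency_budget=0.005))
```

With these example numbers, batch sizes below 12 cannot keep up with arrivals and sizes above 37 exceed the 5 ms budget, so the search returns 37.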

Advanced optimization techniques aim to break traditional trade-off limitations through innovative approaches including predictive resource allocation, intelligent caching strategies, parallel processing architectures, and machine learning-driven performance tuning. The ultimate goal is achieving near-optimal performance across both dimensions while maintaining system stability and scalability.

Market Demand for Low-Latency High-Throughput Systems

Global demand for low-latency, high-throughput systems has grown rapidly across multiple industry verticals, driven by digital transformation and real-time application requirements. The financial services sector is one of the most demanding markets: the most latency-sensitive algorithmic trading platforms target microsecond-scale, and in some cases sub-microsecond, latency for competitive advantage. High-frequency trading firms continue to invest in infrastructure that can process millions of transactions per second while keeping response times consistently below 100 microseconds.

Telecommunications infrastructure has emerged as another critical demand driver, particularly with the rollout of 5G networks and edge computing architectures. Network function virtualization and software-defined networking solutions require systems capable of handling massive data throughput while meeting strict latency requirements for real-time services. The growing adoption of Internet of Things devices and autonomous systems further amplifies this demand, as these applications cannot tolerate delays that could compromise safety or operational efficiency.

Gaming and entertainment industries have witnessed explosive growth in demand for low-latency systems, especially with the rise of cloud gaming platforms and virtual reality applications. These services require seamless streaming capabilities with minimal buffering, pushing the boundaries of what current systems can deliver. The competitive gaming market has become particularly sensitive to latency issues, where even millisecond delays can determine tournament outcomes.

Industrial automation and manufacturing sectors are increasingly adopting real-time control systems that demand both high throughput for sensor data processing and ultra-low latency for safety-critical operations. Smart factory implementations require systems that can process vast amounts of sensor data while maintaining deterministic response times for robotic control and quality assurance processes.

The emergence of artificial intelligence and machine learning applications has created new market segments demanding real-time inference capabilities. Edge AI deployments require systems that can process complex algorithms with minimal delay while handling continuous data streams from multiple sources simultaneously.

Market research indicates that enterprises are willing to invest significantly in infrastructure upgrades to achieve optimal latency-throughput balance, recognizing that system performance directly impacts revenue generation and competitive positioning in their respective markets.

Current State and Challenges in Rate-Latency Optimization

The optimization of seamless rate versus system latency represents a fundamental challenge in modern computing systems, where achieving optimal performance requires balancing throughput maximization with minimal response delays. Current implementations across various domains demonstrate significant disparities in their approaches and effectiveness, revealing both technological achievements and persistent limitations.

Contemporary rate-latency optimization techniques primarily rely on adaptive algorithms that dynamically adjust processing parameters based on real-time system conditions. These solutions typically employ predictive models to anticipate workload patterns and preemptively modify resource allocation strategies. However, existing approaches often struggle with the inherent trade-off between maintaining consistent data flow rates and minimizing end-to-end latency, particularly under varying network conditions and computational loads.
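One simple form of the predictive models mentioned above is an exponentially weighted moving average (EWMA) of recent load, used to provision capacity ahead of demand. The sketch assumes a hypothetical per-worker capacity and target utilization; production systems typically use richer forecasters (seasonality, trend) and guard against forecast error.

```python
import math
from typing import Optional


class EwmaForecaster:
    """Exponentially weighted moving average of an observed rate."""

    def __init__(self, alpha: float) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha  # higher alpha reacts faster but is noisier
        self.estimate: Optional[float] = None

    def update(self, observed_rate: float) -> float:
        if self.estimate is None:
            self.estimate = observed_rate  # seed with the first observation
        else:
            self.estimate = self.alpha * observed_rate + (1.0 - self.alpha) * self.estimate
        return self.estimate


def workers_needed(forecast_rate: float, per_worker_capacity: float,
                   target_utilization: float = 0.7) -> int:
    """Provision enough workers that each runs at or below target utilization,
    leaving headroom so queuing delay stays low."""
    return max(1, math.ceil(forecast_rate / (per_worker_capacity * target_utilization)))


forecaster = EwmaForecaster(alpha=0.5)
for observed in (100.0, 200.0, 200.0):  # requests per second, per interval
    forecast = forecaster.update(observed)
print(forecast, workers_needed(forecast, per_worker_capacity=50.0))
```

Keeping utilization below 1 matters because queuing delay rises steeply as a server approaches saturation, which is exactly the rate-latency tension described above.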

The geographical distribution of technological advancement in this field shows concentrated development in North America and East Asia, with leading research institutions and technology companies driving innovation. European contributions focus primarily on theoretical frameworks and standardization efforts, while emerging markets demonstrate growing interest in practical implementations for telecommunications and cloud computing applications.

Current technical challenges center around the complexity of multi-dimensional optimization problems where traditional linear programming approaches prove insufficient. The non-linear relationship between rate and latency parameters creates optimization landscapes with multiple local minima, making it difficult to achieve globally optimal solutions. Additionally, the dynamic nature of modern distributed systems introduces temporal dependencies that complicate prediction accuracy and control system stability.

Hardware limitations present another significant constraint, particularly in edge computing environments where processing power and memory resources are restricted. The increasing demand for real-time processing capabilities conflicts with energy efficiency requirements, creating additional optimization dimensions that must be simultaneously addressed. Legacy system integration further complicates implementation efforts, as newer optimization techniques must maintain compatibility with existing infrastructure.

Measurement and monitoring capabilities remain inadequate for comprehensive rate-latency optimization, with current instrumentation often introducing overhead that affects the very metrics being optimized. The lack of standardized benchmarking methodologies across different application domains makes it challenging to compare and validate optimization approaches effectively.
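To limit the measurement overhead described above, one common pattern is to take only raw timestamps on the hot path and defer aggregation, such as tail percentiles, to reporting time. The sketch uses Python's `time.perf_counter_ns` and the nearest-rank percentile definition; the class and function names are hypothetical, and a real system would also bound memory, for example with a histogram or reservoir sampling.

```python
import math
import time
from typing import Callable, Dict, List, Sequence, TypeVar

T = TypeVar("T")


def nearest_rank_percentile(samples: Sequence[int], p: float) -> int:
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p / 100 * len(ordered))
    return ordered[rank - 1]


class LatencyRecorder:
    """The hot path takes two timestamps and appends one integer; sorting waits for report()."""

    def __init__(self) -> None:
        self._samples_ns: List[int] = []

    def measure(self, fn: Callable[..., T], *args, **kwargs) -> T:
        start = time.perf_counter_ns()
        result = fn(*args, **kwargs)
        self._samples_ns.append(time.perf_counter_ns() - start)
        return result

    def report(self, percentiles: Sequence[float] = (50, 99)) -> Dict[float, int]:
        return {p: nearest_rank_percentile(self._samples_ns, p) for p in percentiles}


recorder = LatencyRecorder()
for _ in range(1_000):
    recorder.measure(sum, range(100))
print(recorder.report())  # nanoseconds; values vary by machine
```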

Existing Rate-Latency Optimization Solutions

01 Adaptive rate control mechanisms for latency optimization

Systems employ adaptive rate control techniques that dynamically adjust transmission rates based on real-time latency measurements and network conditions. These mechanisms monitor system performance metrics and automatically modify data flow rates to maintain seamless operation while minimizing latency. The adaptive approach ensures optimal balance between throughput and responsiveness across varying network conditions.
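As one well-known instance of adaptive rate control, the sketch below applies additive-increase/multiplicative-decrease (AIMD), the scheme behind classic TCP congestion control, to a latency signal: it raises the sending rate gradually while measured latency stays under a target and cuts it sharply when latency exceeds the target. The class name, parameters, and defaults are illustrative assumptions, not taken from any system cited here.

```python
class LatencyAimdController:
    """AIMD rate controller driven by latency samples (illustrative sketch)."""

    def __init__(self, initial_rate: float, target_latency_s: float,
                 additive_step: float, decrease_factor: float = 0.5,
                 min_rate: float = 1.0, max_rate: float = float("inf")) -> None:
        if not 0.0 < decrease_factor < 1.0:
            raise ValueError("decrease_factor must be in (0, 1)")
        self.rate = initial_rate
        self.target_latency_s = target_latency_s
        self.additive_step = additive_step
        self.decrease_factor = decrease_factor
        self.min_rate = min_rate
        self.max_rate = max_rate

    def on_latency_sample(self, measured_latency_s: float) -> float:
        if measured_latency_s > self.target_latency_s:
            # Latency over target: back off multiplicatively to drain queues quickly.
            self.rate = max(self.min_rate, self.rate * self.decrease_factor)
        else:
            # Headroom available: probe for more throughput linearly.
            self.rate = min(self.max_rate, self.rate + self.additive_step)
        return self.rate


ctrl = LatencyAimdController(initial_rate=100.0, target_latency_s=0.010, additive_step=10.0)
for sample in (0.005, 0.006, 0.020, 0.008):
    print(ctrl.on_latency_sample(sample))
```

This prints 110.0, 120.0, 60.0 and 70.0: two gentle increases, a halving when latency overshoots, then renewed probing. The asymmetry trades some throughput for fast recovery from latency spikes.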

02 Buffer management and queue optimization for seamless transitions

Advanced buffer management strategies are implemented to handle data flow during rate transitions without introducing perceptible delays. These systems utilize intelligent queuing algorithms and buffer sizing techniques to ensure smooth handoffs between different transmission rates. The optimization focuses on maintaining continuous data delivery while adapting to changing latency requirements.

03 Predictive latency compensation and rate adjustment

Predictive algorithms analyze historical latency patterns and network behavior to proactively adjust transmission rates before degradation occurs. These systems use machine learning or statistical models to forecast latency changes and preemptively modify rates to maintain seamless performance. The predictive approach minimizes reactive adjustments and reduces transition artifacts.

04 Multi-layer rate synchronization protocols

Coordinated rate adjustment protocols operate across multiple system layers to ensure synchronized transitions that preserve low latency. These protocols manage timing relationships between different processing stages and communication layers to prevent bottlenecks during rate changes. The synchronization mechanisms enable seamless rate adaptation without introducing cumulative delays.

05 Real-time feedback control for latency-aware rate scaling

Closed-loop feedback systems continuously monitor end-to-end latency and adjust transmission rates in real-time to meet performance targets. These control systems implement rapid response mechanisms that detect latency variations and execute rate modifications within strict timing constraints. The feedback approach ensures that rate changes maintain system responsiveness and user experience quality.
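The closed-loop feedback described above can be sketched as a proportional-integral (PI) controller that steers the sending rate so measured latency tracks a target. This is a generic textbook control loop, not the method of any specific patent; the gains, rate limits, and class name are hypothetical, and the conditional-integration step is one simple anti-windup choice among several.

```python
class PiRateController:
    """PI controller: positive error (latency below target) raises the rate."""

    def __init__(self, target_latency_s: float, kp: float, ki: float,
                 base_rate: float, min_rate: float, max_rate: float) -> None:
        self.target_latency_s = target_latency_s
        self.kp = kp                # proportional gain (rate units per second of error)
        self.ki = ki                # integral gain
        self.base_rate = base_rate  # operating rate when error and integral are zero
        self.min_rate = min_rate
        self.max_rate = max_rate
        self.integral = 0.0

    def update(self, measured_latency_s: float, dt_s: float) -> float:
        error = self.target_latency_s - measured_latency_s
        candidate_integral = self.integral + error * dt_s
        raw_rate = self.base_rate + self.kp * error + self.ki * candidate_integral
        rate = min(self.max_rate, max(self.min_rate, raw_rate))
        # Anti-windup: only accumulate the integral while the output is unsaturated.
        if rate == raw_rate:
            self.integral = candidate_integral
        return rate


ctrl = PiRateController(target_latency_s=0.010, kp=1000.0, ki=500.0,
                        base_rate=100.0, min_rate=10.0, max_rate=200.0)
for latency in (0.020, 0.015, 0.010, 0.008):
    print(round(ctrl.update(latency, dt_s=0.1), 2))
```

The proportional term reacts immediately to each latency sample, while the integral term removes persistent offset from the target; the clamp keeps the rate inside safe bounds during large disturbances.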

Key Players in High-Performance Computing and Optimization

The seamless rate versus system latency optimization landscape is a mature technological domain that continues to evolve rapidly, driven by 5G, edge computing, and real-time applications. The market is substantial: telecommunications operators such as China Mobile, NTT Docomo, and China Unicom drive infrastructure demand, while semiconductor leaders including Intel, Qualcomm, AMD, and Texas Instruments advance processing capabilities. Technology maturity varies significantly across segments: established players such as Samsung Electronics, Apple, and IBM draw on decades of system optimization expertise, while emerging companies such as Groq focus on specialized AI inference acceleration. Competition reflects convergence among traditional hardware manufacturers, cloud infrastructure providers such as Amazon Technologies, and specialized optimization vendors such as Volumez Technologies. The result is a fragmented but consolidating market in which latency-sensitive applications demand increasingly sophisticated rate-latency trade-off solutions.

Intel Corp.

Technical Solution: Intel implements advanced processor architectures with dynamic frequency scaling and Turbo Boost technology to manage the trade-off between seamless data rates and system latency. Its approach includes intelligent cache hierarchies, branch prediction, and hardware-accelerated packet processing engines that can adjust processing priorities based on workload characteristics. The company's latest Xeon processors feature specialized low-latency modes that can reduce processing delays by up to 40% while maintaining high throughput through parallel execution units and optimized memory controllers.

Strengths: Industry-leading processor performance and extensive optimization experience. Weaknesses: High power consumption and complex implementation requirements.

QUALCOMM, Inc.

Technical Solution: Qualcomm's optimization approach focuses on mobile and wireless communication systems, utilizing advanced signal processing algorithms and adaptive modulation techniques. Their Snapdragon platforms implement sophisticated quality of service (QoS) management that dynamically balances data throughput with latency requirements. The company employs machine learning-based traffic prediction algorithms that can preemptively adjust system parameters, reducing average latency by 25-35% while maintaining seamless connectivity. Their solutions include advanced antenna technologies and baseband processing optimizations specifically designed for real-time applications.

Strengths: Excellent wireless communication expertise and mobile optimization capabilities. Weaknesses: Limited applicability outside mobile and wireless domains.

Core Innovations in Seamless Rate Enhancement Techniques

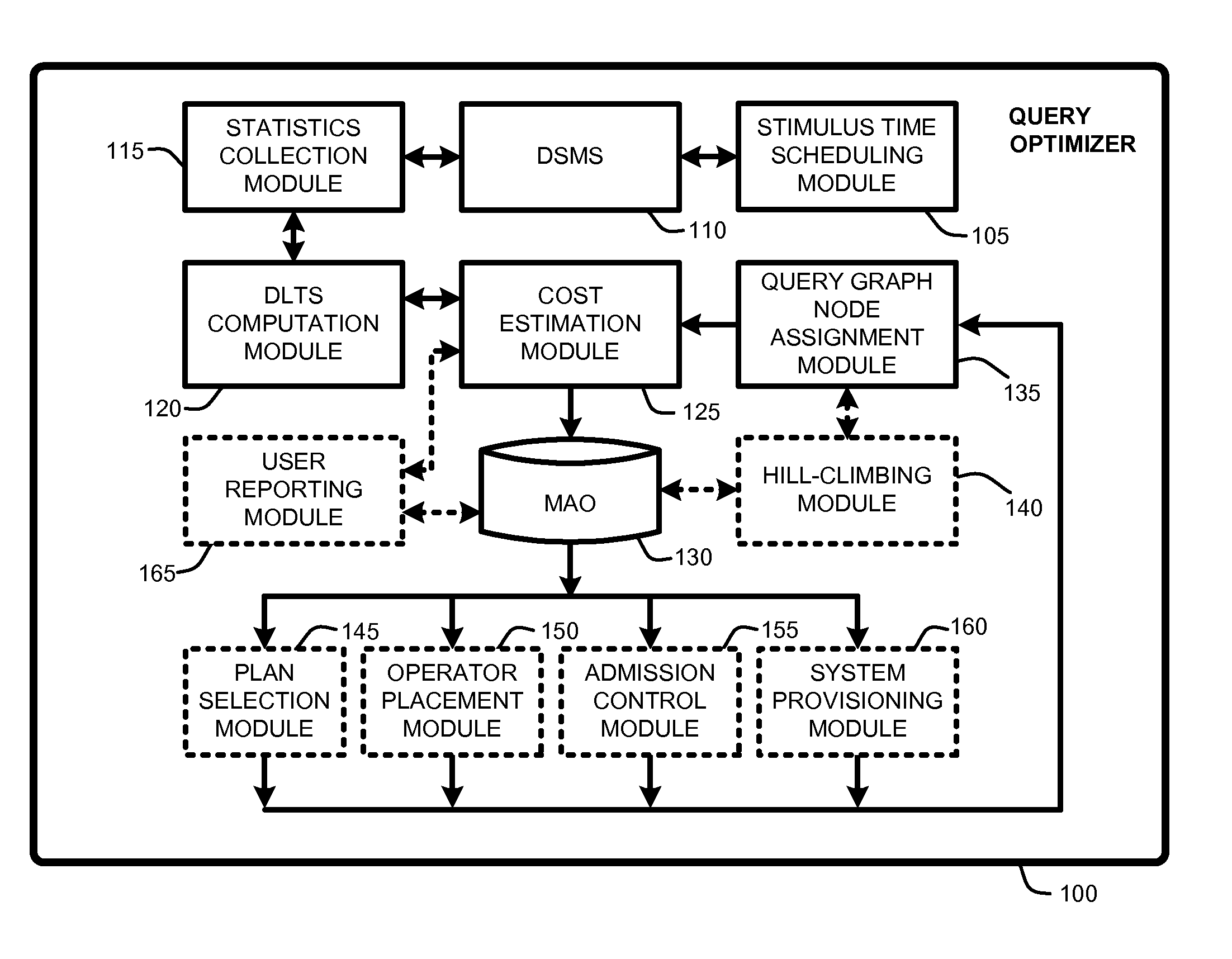

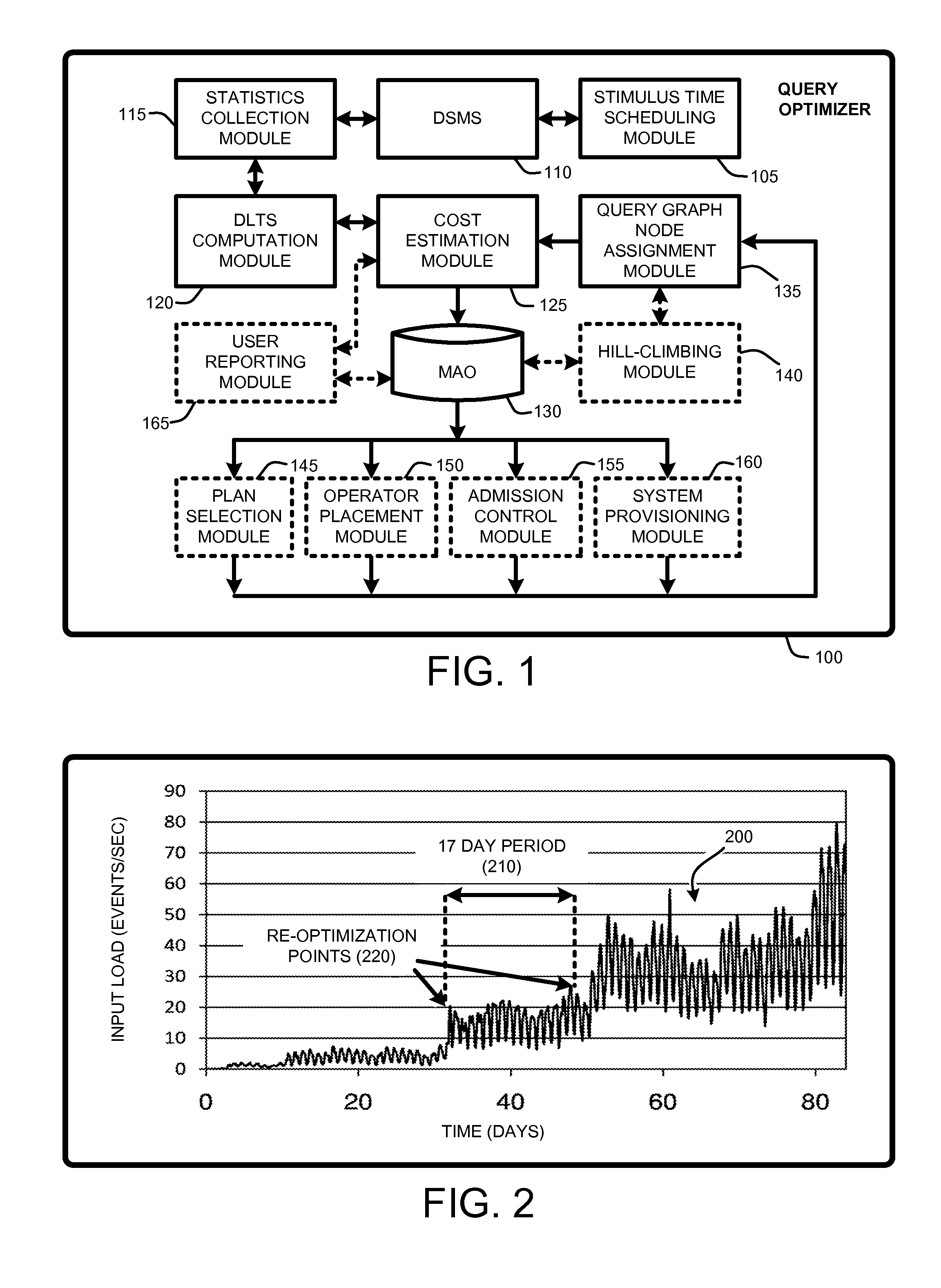

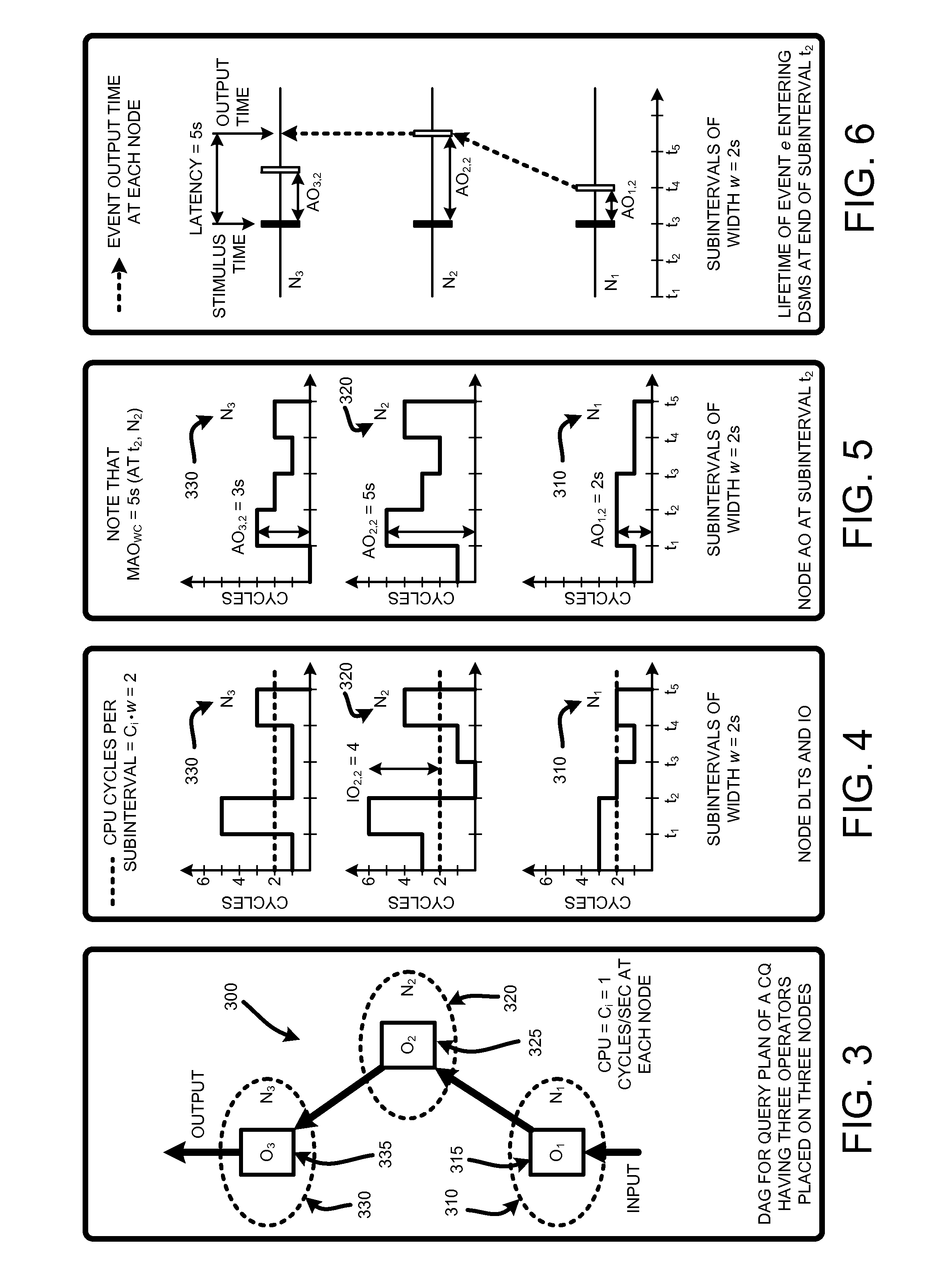

Estimating latencies for query optimization in distributed stream processing

Patent | Inactive | US20100030896A1

Innovation

- The Query Optimizer computes a cost estimation metric called Maximum Accumulated Overload (MAO), which is approximately equivalent to worst-case latency, using minimal parameters such as operator selectivity and event arrival workload, allowing for pre-computation and periodic re-computation to optimize latency-based operations.

Method and apparatus for speculative response arbitration to improve system latency

Patent | Inactive | US6976106B2

Innovation

- Implementing a speculative response arbitration method where the arbitration circuit dynamically selects a default winner based on internal information such as expected delays and system behavior, allowing for immediate access without the need for a preceding request.

Real-Time System Performance Standards and Compliance

Real-time systems operating in critical environments must adhere to stringent performance standards that define acceptable boundaries for seamless rate delivery and system latency. Frameworks such as IEC 61508 for functional safety and DO-178C for airborne software require that timing requirements be specified and verified, so that system response times stay within predetermined thresholds and operational integrity is maintained. The numeric limits themselves come from each application rather than from these standards, and in practice range from microseconds in high-frequency trading systems to milliseconds in automotive safety applications.

Compliance frameworks for real-time performance cover multidimensional metrics beyond simple latency measurements. The ITU-T G.1010 recommendation provides guidelines on end-to-end delay, jitter, and loss targets for different application categories, while the IEEE 802.1 Time-Sensitive Networking (TSN) standards define mechanisms for time synchronization and bounded latency in industrial automation and automotive networks. These frameworks establish quantitative benchmarks for jitter tolerance, packet loss rates, and deterministic delivery guarantees that systems must consistently achieve.
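Jitter, one of the metrics these frameworks bound, is commonly estimated with the interarrival jitter formula from RFC 3550 (RTP): for consecutive packets, D = (R_i - R_{i-1}) - (S_i - S_{i-1}), where S and R are send and receive times, and the running estimate is updated as J += (|D| - J) / 16. The sketch below applies that formula to timestamps in a common unit; the function name is hypothetical, and RFC 3550 itself works in RTP timestamp units.

```python
from typing import Sequence


def interarrival_jitter(send_times: Sequence[float], recv_times: Sequence[float]) -> float:
    """RFC 3550-style smoothed interarrival jitter (same unit as the inputs)."""
    if len(send_times) != len(recv_times):
        raise ValueError("send_times and recv_times must have the same length")
    jitter = 0.0
    for i in range(1, len(send_times)):
        # Change in one-way transit time between consecutive packets.
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        jitter += (abs(d) - jitter) / 16.0  # 1/16 gain smooths out noise
    return jitter


# Packets sent every 20 ms; one-way transit alternates between 5 and 9 ms.
sent = [0.0, 20.0, 40.0, 60.0]
received = [5.0, 29.0, 45.0, 69.0]
print(interarrival_jitter(sent, received))
```

A constant transit time yields zero jitter regardless of its size, which is why jitter and latency budgets are specified separately.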

Regulatory compliance in safety-critical domains requires continuous monitoring and validation of performance parameters against established baselines. AUTOSAR Adaptive Platform specifications define execution management and scheduling mechanisms intended to keep execution patterns predictable, while FDA expectations for medical device software include specifying and verifying response-time requirements for life-critical functions. Compliance verification involves statistical analysis of performance data over extended operational periods to demonstrate sustained adherence to specified standards.

Modern compliance methodologies integrate automated testing frameworks that continuously assess system performance against regulatory benchmarks. Verification processes under RTCA DO-254 (airborne electronic hardware) and DO-178C (airborne software) require comprehensive evidence that hardware and software meet their performance requirements, while ISO 26262 compliance requires demonstrable evidence of timing behavior under various operational scenarios. These approaches help ensure that optimization techniques preserve regulatory compliance while improving overall system efficiency.

Emerging standards development focuses on adaptive compliance mechanisms that accommodate dynamic performance optimization while maintaining safety guarantees. The ongoing evolution of 5G URLLC specifications and Industry 4.0 requirements drives the establishment of new performance standards that balance ultra-low latency demands with reliability constraints, creating frameworks for next-generation real-time system compliance.

Energy Efficiency Considerations in Rate-Latency Trade-offs

Energy efficiency has emerged as a critical design constraint in modern communication systems, particularly when optimizing the delicate balance between seamless data rates and system latency. The fundamental challenge lies in the inherent trade-off where achieving higher data throughput or lower latency typically demands increased power consumption, creating a three-dimensional optimization problem that requires sophisticated energy-aware design strategies.

Power consumption in rate-latency optimization manifests across multiple system components, with processing units, memory subsystems, and communication interfaces each contributing distinct energy profiles. Dynamic voltage and frequency scaling techniques enable real-time adjustment of computational resources based on current rate and latency requirements, allowing systems to operate at optimal energy points while maintaining performance targets. Advanced power management algorithms can predict traffic patterns and preemptively adjust system configurations to minimize energy waste during low-demand periods.
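A minimal sketch of the DVFS decision: given a task's cycle count and deadline, choose the voltage/frequency operating point that meets the deadline with the least active energy (power multiplied by execution time). The operating-point table is made up, and the model ignores static/idle power and transition costs, which in practice can favor finishing fast and idling ("race to idle").

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class OperatingPoint:
    freq_hz: float
    active_power_w: float  # hypothetical power draw while running at freq_hz


def pick_operating_point(points: Sequence[OperatingPoint], cycles: float,
                         deadline_s: float) -> Optional[OperatingPoint]:
    """Lowest-energy point that finishes `cycles` within `deadline_s`, or None."""
    feasible = [p for p in points if cycles / p.freq_hz <= deadline_s]
    if not feasible:
        return None
    # Active energy = power * execution time; static and idle power are ignored.
    return min(feasible, key=lambda p: p.active_power_w * cycles / p.freq_hz)


table = [OperatingPoint(1e9, 1.0), OperatingPoint(2e9, 3.0), OperatingPoint(3e9, 7.0)]
print(pick_operating_point(table, cycles=1e9, deadline_s=1.0))  # relaxed deadline
print(pick_operating_point(table, cycles=1e9, deadline_s=0.6))  # tighter deadline
```

Because power rises faster than frequency in this table, the relaxed deadline selects the 1 GHz point, while the tighter deadline forces the 2 GHz point: the latency target directly sets the energy cost.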

The relationship between transmission power and data rate is non-linear: at a fixed bandwidth, the Shannon capacity bound implies that the minimum required signal power grows exponentially with the data rate, so marginal increases in rate can demand disproportionately more energy. Energy-efficient modulation schemes and adaptive coding techniques provide mechanisms to optimize this relationship by dynamically selecting the most power-efficient transmission parameters based on channel conditions and latency constraints. These approaches enable systems to maintain seamless operation while minimizing unnecessary energy expenditure.
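The exponential relationship can be made concrete with the Shannon capacity of an additive white Gaussian noise (AWGN) channel, R = B log2(1 + P / (N0 B)). Solving for P gives P_min = N0 B (2^(R/B) - 1): at fixed bandwidth B and noise density N0, each additional bit/s/Hz of spectral efficiency roughly doubles the required signal power at the receiver (and, for a fixed path loss, the transmit power). This is an idealized theoretical bound; the function name is hypothetical.

```python
def min_signal_power_w(rate_bps: float, bandwidth_hz: float, noise_psd_w_per_hz: float) -> float:
    """Shannon-bound minimum received signal power for a target rate on an AWGN channel."""
    if bandwidth_hz <= 0:
        raise ValueError("bandwidth must be positive")
    spectral_efficiency = rate_bps / bandwidth_hz  # bits/s/Hz
    return noise_psd_w_per_hz * bandwidth_hz * (2.0 ** spectral_efficiency - 1.0)


# Normalized example (N0 = 1 W/Hz, B = 1 Hz): 1, 2, 3, 4 bit/s/Hz need 1, 3, 7, 15 W.
for r in (1.0, 2.0, 3.0, 4.0):
    print(r, min_signal_power_w(r, 1.0, 1.0))
```

The same bound shows why widening bandwidth, rather than raising power, is often the more energy-efficient way to increase rate.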

Latency reduction strategies frequently involve parallel processing architectures and redundant pathways, both of which inherently increase power consumption. Energy-aware scheduling algorithms address this challenge by intelligently distributing workloads across available resources, considering both temporal constraints and power efficiency metrics. Machine learning-based predictive models can optimize these scheduling decisions by learning from historical performance patterns and energy consumption profiles.
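One simple energy-aware heuristic, sketched below under stated assumptions: all tasks are ready at time zero, they are processed in earliest-deadline-first order, and each is placed on the core that meets its deadline with the least active energy. The core speeds and power figures are hypothetical, the greedy choice is not guaranteed optimal, and a learned model, as described above, could replace the fixed energy estimates.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Core:
    name: str
    speed: float    # work units per second (hypothetical)
    power_w: float  # active power while busy (hypothetical)


def schedule(tasks: List[Tuple[str, float, float]], cores: List[Core]) -> Dict[str, str]:
    """Greedy EDF placement: tasks are (name, work, absolute_deadline_s).
    Each task goes to the core that meets its deadline with the least active energy."""
    busy_until = {core.name: 0.0 for core in cores}
    plan: Dict[str, str] = {}
    for name, work, deadline in sorted(tasks, key=lambda t: t[2]):
        best = None  # (core_name, energy_j, finish_time_s)
        for core in cores:
            runtime = work / core.speed
            finish = busy_until[core.name] + runtime
            if finish > deadline:
                continue  # this core cannot meet the deadline
            energy = core.power_w * runtime
            if best is None or energy < best[1]:
                best = (core.name, energy, finish)
        if best is None:
            raise ValueError(f"task {name!r} cannot meet its deadline on any core")
        plan[name] = best[0]
        busy_until[best[0]] = best[2]
    return plan


cores = [Core("little", speed=1.0, power_w=0.5), Core("big", speed=4.0, power_w=4.0)]
print(schedule([("a", 2.0, 3.0), ("b", 2.0, 3.5)], cores))
```

Here the little core is cheaper per unit of work, so task a runs there; task b would then miss its deadline on the little core and is placed on the faster, more power-hungry big core.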

Battery-powered and mobile systems face additional constraints where energy efficiency directly impacts operational lifetime and user experience. Adaptive quality-of-service mechanisms enable these systems to gracefully degrade performance parameters when energy reserves become critical, ensuring continued operation while preserving essential functionality. Energy harvesting technologies and advanced battery management systems provide complementary approaches to extend operational capabilities in energy-constrained environments.
