Simulation-Driven Design in Applying Machine Vision Technologies

MAR 6, 2026 · 9 MIN READ

Simulation-Driven Machine Vision Background and Objectives

Machine vision technology has undergone remarkable evolution since its inception in the 1960s, transitioning from simple pattern recognition systems to sophisticated AI-powered visual perception platforms. The integration of simulation-driven design methodologies represents a paradigm shift in how machine vision systems are conceived, developed, and deployed across industries. This convergence addresses the growing complexity of modern visual recognition tasks while reducing development costs and time to market.

The historical trajectory of machine vision reveals distinct phases of technological advancement. Early systems relied on basic geometric pattern matching and threshold-based image processing. The introduction of digital signal processing in the 1980s enabled more sophisticated feature extraction algorithms. The subsequent integration of neural networks in the 1990s marked a significant leap forward, though computational limitations constrained practical applications.

Contemporary machine vision systems leverage deep learning architectures, particularly convolutional neural networks, enabling unprecedented accuracy in object detection, classification, and scene understanding. However, traditional development approaches often require extensive real-world data collection, manual annotation, and iterative testing cycles that can span months or years.

Simulation-driven design emerges as a transformative methodology that addresses these limitations by creating virtual environments for system development and validation. This approach enables rapid prototyping, systematic testing under controlled conditions, and exploration of edge cases that might be difficult or expensive to reproduce in physical settings.

The primary objective of implementing simulation-driven design in machine vision applications is to accelerate development cycles while maintaining or improving system reliability. By creating photorealistic virtual environments, developers can generate large datasets with exact ground truth annotations, largely eliminating the time-intensive manual labeling process. This synthetic data generation capability is particularly valuable for rare event detection, hazardous scenario testing, and applications requiring diverse environmental conditions.

Another critical objective involves cost reduction throughout the development lifecycle. Traditional machine vision system development often requires expensive hardware setups, controlled testing environments, and extensive field trials. Simulation platforms can replicate these conditions virtually, allowing multiple design iterations without physical infrastructure investments.

The methodology also aims to enhance system robustness by enabling comprehensive testing across parameter spaces that would be impractical to explore through physical experimentation alone. Virtual environments can systematically vary lighting conditions, object orientations, occlusion patterns, and environmental factors to identify potential failure modes before deployment.
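To make the idea of a systematic sweep concrete, the sketch below enumerates a small grid of lighting, orientation, and occlusion settings and records the combinations where detection confidence drops below a threshold. The `toy_detector_confidence` function is a hypothetical stand-in: in a real pipeline each condition would be rendered by the simulator and passed to the actual vision model. The grid values and the 0.5 threshold are illustrative assumptions.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class Condition:
    illuminance_lux: float
    rotation_deg: float
    occlusion_frac: float


def toy_detector_confidence(c: Condition) -> float:
    """Hypothetical stand-in for rendering a frame under `c` and running a real detector on it."""
    light = min(c.illuminance_lux / 500.0, 1.0)                  # dim scenes lower confidence
    rotation = 1.0 - 0.5 * min(abs(c.rotation_deg) / 90.0, 1.0)  # up to 50% loss at 90 degrees
    visibility = 1.0 - c.occlusion_frac                          # occluded area is unseen
    return light * rotation * visibility


def find_failure_modes(lux_values, rotations, occlusions, threshold=0.5):
    """Evaluate every combination on the grid and return the conditions below threshold."""
    failures = []
    for lux, rot, occ in itertools.product(lux_values, rotations, occlusions):
        cond = Condition(lux, rot, occ)
        if toy_detector_confidence(cond) < threshold:
            failures.append(cond)
    return failures


failures = find_failure_modes([100, 300, 800], [0, 45, 90], [0.0, 0.3, 0.6])
print(f"{len(failures)} of 27 conditions fall below threshold")
```

With these values, 21 of the 27 conditions are flagged, including every 100 lux case. An exhaustive grid grows multiplicatively with each added parameter, so larger studies may need random or adaptive sampling instead.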

Furthermore, simulation-driven approaches facilitate collaborative development by providing standardized testing environments that can be shared across distributed teams. This standardization ensures consistent evaluation criteria and enables more effective knowledge transfer between development phases.

Market Demand for Simulation-Based Vision Solutions

Demand for simulation-based vision solutions is growing across multiple industrial sectors, driven by the increasing complexity of manufacturing processes and the need for enhanced quality control. Traditional machine vision systems often require extensive physical prototyping and iterative testing, which significantly increases development costs and time to market. Simulation-driven approaches address these challenges by enabling virtual validation and optimization before physical implementation.

Manufacturing industries represent the largest market segment for simulation-based vision solutions, particularly in automotive, electronics, and pharmaceutical sectors. These industries face mounting pressure to achieve zero-defect production while maintaining high throughput rates. Simulation-based vision systems enable manufacturers to model various defect scenarios, lighting conditions, and product variations without disrupting production lines or consuming physical samples.

The automotive industry demonstrates particularly strong demand for these solutions, especially in quality inspection of complex assemblies and surface defect detection. Electric vehicle manufacturing has further intensified this demand, as battery cell inspection and electronic component verification require extremely high precision levels that benefit significantly from simulation-based optimization.

Emerging applications in robotics and autonomous systems are creating new market opportunities for simulation-based vision solutions. As robots become more prevalent in unstructured environments, the ability to simulate diverse operational scenarios becomes crucial for developing robust vision algorithms. This trend is particularly evident in logistics, agriculture, and service robotics sectors.

The pharmaceutical and medical device industries are increasingly adopting simulation-based vision solutions for regulatory compliance and validation purposes. These sectors require extensive documentation and validation of inspection processes, making simulation-based approaches attractive for demonstrating system reliability and performance under various conditions.

Cost reduction pressures across industries are driving adoption of simulation-based solutions as organizations seek to minimize physical testing requirements and accelerate product development cycles. The ability to validate vision system performance virtually before deployment significantly reduces implementation risks and associated costs.

Geographic demand patterns show strong growth in Asia-Pacific regions, particularly in countries with expanding manufacturing capabilities and increasing automation adoption. European markets demonstrate steady demand driven by stringent quality standards and regulatory requirements, while North American markets focus on advanced applications in aerospace and medical device manufacturing.

Current State of Simulation-Driven Vision Technologies

Simulation-driven design in machine vision has reached a significant level of maturity, with multiple commercial platforms and open-source frameworks now supporting comprehensive virtual testing environments. Current implementations leverage physics engines, ray-tracing algorithms, and synthetic data generation to create realistic visual scenarios that closely mirror real-world conditions. Major technology providers have developed simulation suites that integrate with existing computer vision development workflows.

The technology landscape is dominated by several key approaches, including photorealistic rendering engines, procedural content generation, and domain randomization techniques. These methods enable developers to create vast datasets of annotated training images without the extensive costs and time requirements associated with traditional data collection. Current simulation platforms can accurately model complex lighting conditions, material properties, surface textures, and environmental variables that significantly impact machine vision system performance.

Industry adoption has accelerated particularly in autonomous vehicle development, robotics, and industrial automation sectors. Leading automotive manufacturers now routinely employ simulation-driven approaches to validate perception algorithms across millions of virtual driving scenarios before physical testing. Similarly, robotics companies utilize simulated environments to train vision systems for object recognition, manipulation tasks, and navigation challenges across diverse operational contexts.

Technical capabilities have evolved to support real-time simulation with hardware-accelerated rendering, enabling iterative design processes and continuous integration workflows. Modern platforms incorporate machine learning-based domain adaptation techniques to bridge the sim-to-real gap, addressing historical concerns about transferability of simulation-trained models to physical deployments.
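Learned domain adaptation methods are beyond a short example, but their underlying goal, making synthetic images statistically closer to real ones, can be illustrated with a much simpler, non-learned baseline: matching the per-channel mean and standard deviation of a synthetic image to a real reference image. This is a swapped-in technique for illustration, not the ML-based adaptation the platforms above use, and it assumes float images in [0, 1] with shape HxWxC.

```python
import numpy as np


def match_channel_stats(synthetic: np.ndarray, real_reference: np.ndarray) -> np.ndarray:
    """Shift and scale each channel of `synthetic` so its mean and standard deviation
    match those of `real_reference`. Both inputs are HxWxC float images in [0, 1]."""
    syn = synthetic.astype(np.float64)
    ref = real_reference.astype(np.float64)
    syn_mean, syn_std = syn.mean(axis=(0, 1)), syn.std(axis=(0, 1))
    ref_mean, ref_std = ref.mean(axis=(0, 1)), ref.std(axis=(0, 1))
    # Standardize the synthetic channels, then re-express them in the real image's statistics.
    adapted = (syn - syn_mean) / (syn_std + 1e-8) * ref_std + ref_mean  # 1e-8 guards flat channels
    return np.clip(adapted, 0.0, 1.0)


rng = np.random.default_rng(0)
synthetic = rng.uniform(0.4, 0.6, size=(64, 64, 3))              # overly uniform "rendered" image
real = np.clip(rng.normal(0.3, 0.1, size=(64, 64, 3)), 0.0, 1.0)  # darker, noisier "camera" image
adapted = match_channel_stats(synthetic, real)
print(adapted.mean(axis=(0, 1)), real.mean(axis=(0, 1)))
```

Global statistics matching cannot fix structural differences such as missing reflections or unrealistic textures, which is why learned adaptation methods are used in practice.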

However, significant challenges persist in achieving perfect fidelity between simulated and real-world visual data. Current limitations include computational complexity for high-fidelity simulations, difficulties in modeling certain material properties and optical phenomena, and the ongoing need for domain-specific calibration. Despite these constraints, simulation-driven design has become an established methodology, with continued advancement in rendering technologies, physics modeling, and synthetic data quality driving broader industry adoption across diverse machine vision applications.

Existing Simulation-Driven Machine Vision Solutions

01 Image processing and analysis systems

Machine vision technologies employ advanced image processing algorithms to capture, analyze, and interpret visual data from cameras and sensors. These systems utilize techniques such as edge detection, pattern recognition, and feature extraction to process images in real time. The technology enables automated inspection, measurement, and quality control in various industrial applications by converting visual information into actionable data.

- Image processing and analysis systems: Machine vision technologies utilize advanced image processing algorithms to capture, analyze, and interpret visual data from cameras and sensors. These systems employ techniques such as edge detection, pattern recognition, and feature extraction to identify objects, defects, or specific characteristics in images. The processed visual information enables automated decision-making in various industrial and commercial applications, improving accuracy and efficiency in quality control, inspection, and monitoring tasks.

- Deep learning and neural network-based vision systems: Modern machine vision technologies incorporate artificial intelligence and deep learning algorithms to enhance recognition capabilities and adaptability. Neural networks are trained on large datasets to recognize complex patterns, classify objects, and detect anomalies with high precision. These intelligent systems can learn from experience and improve performance over time, enabling applications such as facial recognition, autonomous navigation, and predictive maintenance in manufacturing environments.

- 3D vision and depth sensing technologies: Three-dimensional machine vision systems utilize stereo cameras, structured light, or time-of-flight sensors to capture depth information and create spatial representations of objects. These technologies enable precise measurement of dimensions, volume calculation, and position detection in three-dimensional space. Applications include robotic guidance, bin picking, quality inspection of complex geometries, and augmented reality systems that require accurate spatial understanding.

- Real-time vision processing and embedded systems: High-speed machine vision systems are designed to process visual information in real-time using specialized hardware and optimized algorithms. These systems employ embedded processors, field-programmable gate arrays, or dedicated vision processors to achieve low latency and high throughput. Real-time processing capabilities are essential for applications requiring immediate response, such as high-speed production line inspection, traffic monitoring, and safety systems in autonomous vehicles.

- Multi-spectral and hyperspectral imaging systems: Advanced machine vision technologies extend beyond visible light to capture information across multiple wavelengths, including infrared, ultraviolet, and other spectral bands. These systems analyze material composition, detect hidden defects, and identify substances based on their spectral signatures. Applications include agricultural monitoring, food quality inspection, medical diagnostics, and security screening where conventional imaging cannot provide sufficient information.
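For the stereo-camera approach in the 3D vision item above, depth follows from the standard relation for a rectified stereo pair, Z = f·B/d, where f is the focal length in pixels, B the baseline between the cameras, and d the disparity in pixels. The sketch below applies it to a disparity map; the focal length and baseline in the usage are hypothetical, and computing the disparity map itself (stereo matching) is assumed to happen upstream.

```python
import numpy as np


def disparity_to_depth(disparity_px, focal_length_px: float, baseline_m: float) -> np.ndarray:
    """Convert a disparity map (pixels) from a rectified stereo pair into depth (metres)
    using Z = f * B / d. Pixels with no valid match (d <= 0) are set to infinity."""
    d = np.asarray(disparity_px, dtype=np.float64)
    depth = np.full_like(d, np.inf)
    valid = d > 0
    depth[valid] = focal_length_px * baseline_m / d[valid]
    return depth


# Hypothetical rig: 700 px focal length, 12 cm baseline, so f * B = 84 px*m.
depth = disparity_to_depth([[84, 42], [0, 21]], focal_length_px=700.0, baseline_m=0.12)
print(depth)  # 84/84, 84/42, no match, 84/21: about 1 m, 2 m, inf, 4 m
```

Because depth is inversely proportional to disparity, a one-pixel disparity error costs far more depth accuracy at long range than at short range, which is one reason stereo rigs for distant scenes use wider baselines.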

02 Object detection and recognition

Machine vision systems incorporate sophisticated algorithms for detecting and recognizing objects within images or video streams. These technologies use deep learning models, neural networks, and computer vision techniques to identify, classify, and track objects with high accuracy. The systems can distinguish between different object types, detect defects, and perform automated sorting and classification tasks in manufacturing and logistics environments.

03 3D vision and depth sensing

Advanced machine vision technologies incorporate three-dimensional imaging capabilities to capture depth information and spatial relationships. These systems utilize stereo vision, structured light, time-of-flight sensors, or laser scanning to create detailed 3D models of objects and environments. The technology enables precise measurements, volume calculations, and robotic guidance in complex manufacturing and assembly operations.

04 Illumination and lighting systems

Machine vision applications require specialized illumination systems to enhance image quality and ensure consistent results. These lighting solutions include LED arrays, structured lighting, backlighting, and multi-spectral illumination techniques designed to highlight specific features, reduce shadows, and improve contrast. Proper illumination is critical for accurate defect detection, surface inspection, and measurement applications across various industrial settings.

05 Integration with automation and robotics

Machine vision technologies are integrated with automated systems and robotic platforms to enable intelligent manufacturing and quality control processes. These integrated solutions provide real-time feedback for robotic guidance, pick-and-place operations, and adaptive manufacturing processes. The systems combine vision sensors, processing units, and control interfaces to create closed-loop automation systems that improve productivity, accuracy, and flexibility in industrial operations.
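The closed-loop idea can be sketched as a proportional correction: the vision system reports where a part appears in the image, the offset from the target position is converted to millimetres, and the robot moves a clamped fraction of that distance each cycle. The sketch assumes a single calibrated mm-per-pixel scale, camera axes aligned with the robot axes, and no lens distortion; `VisionGuidance`, the gain, and the step limit are hypothetical names and values, and the simulated camera is idealised.

```python
from dataclasses import dataclass


@dataclass
class VisionGuidance:
    """Hypothetical proportional controller driven by a vision measurement."""
    mm_per_px: float          # calibrated image scale, assumed uniform across the field of view
    gain: float = 0.6         # fraction of the measured error corrected per cycle
    max_step_mm: float = 5.0  # per-cycle move limit for safety

    def correction(self, target_px, observed_px):
        """Return the per-axis robot move (mm) that reduces the observed pixel offset."""
        moves = []
        for target, observed in zip(target_px, observed_px):
            error_mm = (target - observed) * self.mm_per_px
            step = self.gain * error_mm
            moves.append(max(-self.max_step_mm, min(self.max_step_mm, step)))
        return tuple(moves)


def simulate(start_mm, target_mm, guidance, cycles=20):
    """Idealised loop: the camera sees the part at position / mm_per_px with no noise or offset."""
    position = list(start_mm)
    target_px = [t / guidance.mm_per_px for t in target_mm]
    for _ in range(cycles):
        observed_px = [p / guidance.mm_per_px for p in position]
        for axis, move in enumerate(guidance.correction(target_px, observed_px)):
            position[axis] += move
    return position


guidance = VisionGuidance(mm_per_px=0.1)
final = simulate(start_mm=(0.0, 0.0), target_mm=(12.0, -8.0), guidance=guidance)
print([round(p, 3) for p in final])
```

A gain below 1 trades speed for stability: once the step limit no longer applies, each cycle removes 60% of the remaining error, so the residual shrinks geometrically.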

Key Players in Vision Simulation and AI Industry

Simulation-driven design for machine vision is a rapidly evolving market, currently in its growth phase and driven by increasing automation demands across the automotive, manufacturing, and aerospace sectors. The market shows significant expansion potential, with global estimates in the billions and applications ranging from autonomous vehicles to industrial quality control. Technology maturity varies considerably among key players: established leaders such as Cognex Corp., FANUC Corp., and Siemens AG offer mature, commercially proven solutions, while automotive companies such as Tesla and Ford Global Technologies integrate advanced vision systems into next-generation vehicles. Emerging players such as Zoox and DataRobot push technological boundaries with AI-driven approaches, producing a competitive landscape in which traditional machine vision companies compete with technology innovators and automotive manufacturers for market leadership.

Cognex Corp.

Technical Solution: Cognex develops comprehensive simulation-driven machine vision solutions that integrate advanced deep learning algorithms with virtual testing environments. Their approach combines physics-based simulation models with real-world data to optimize vision system performance before deployment. The company's VisionPro software suite incorporates simulation capabilities that allow engineers to test various lighting conditions, object orientations, and environmental factors virtually. Their PatMax pattern matching technology is enhanced through simulated training scenarios, enabling robust object recognition across diverse manufacturing conditions. The simulation framework supports iterative design optimization, reducing physical prototyping costs while improving system reliability and accuracy in industrial automation applications.

Strengths: Industry-leading machine vision expertise, robust simulation tools, and an extensive library of pre-trained models. Weaknesses: Higher cost than competitors, and a primary focus on industrial applications that limits broader market reach.

Tesla, Inc.

Technical Solution: Tesla employs simulation-driven design methodologies for its Autopilot and Full Self-Driving computer vision systems. Its approach combines large-scale neural network training with simulated environments that replicate real-world driving scenarios. The company's simulation platform generates millions of synthetic driving situations, including edge cases and adverse weather conditions, to train its vision algorithms. Tesla's shadow mode runs candidate vision models passively in customer vehicles and compares their outputs with real driving behavior, providing continuous validation without the models controlling the car. Its end-to-end neural network architecture is reportedly optimized through reinforcement learning in simulated environments, enabling rapid iteration and testing of vision-based autonomous driving capabilities beyond the limits of physical road testing.

Strengths: Massive real-world data collection capabilities, cutting-edge neural network architectures, continuous learning systems. Weaknesses: Limited to automotive applications, regulatory challenges in autonomous driving deployment.

Core Innovations in Vision Simulation Technologies

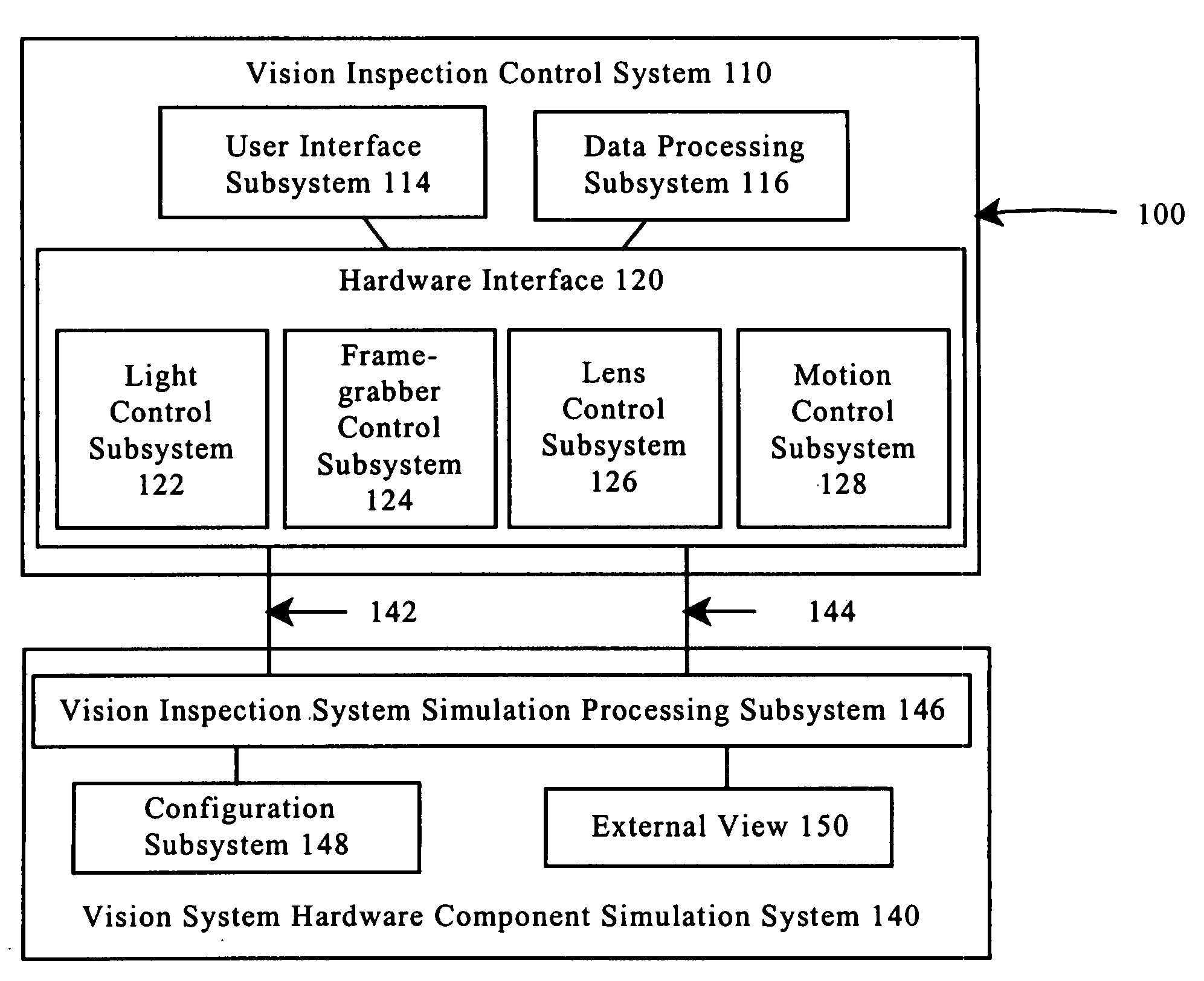

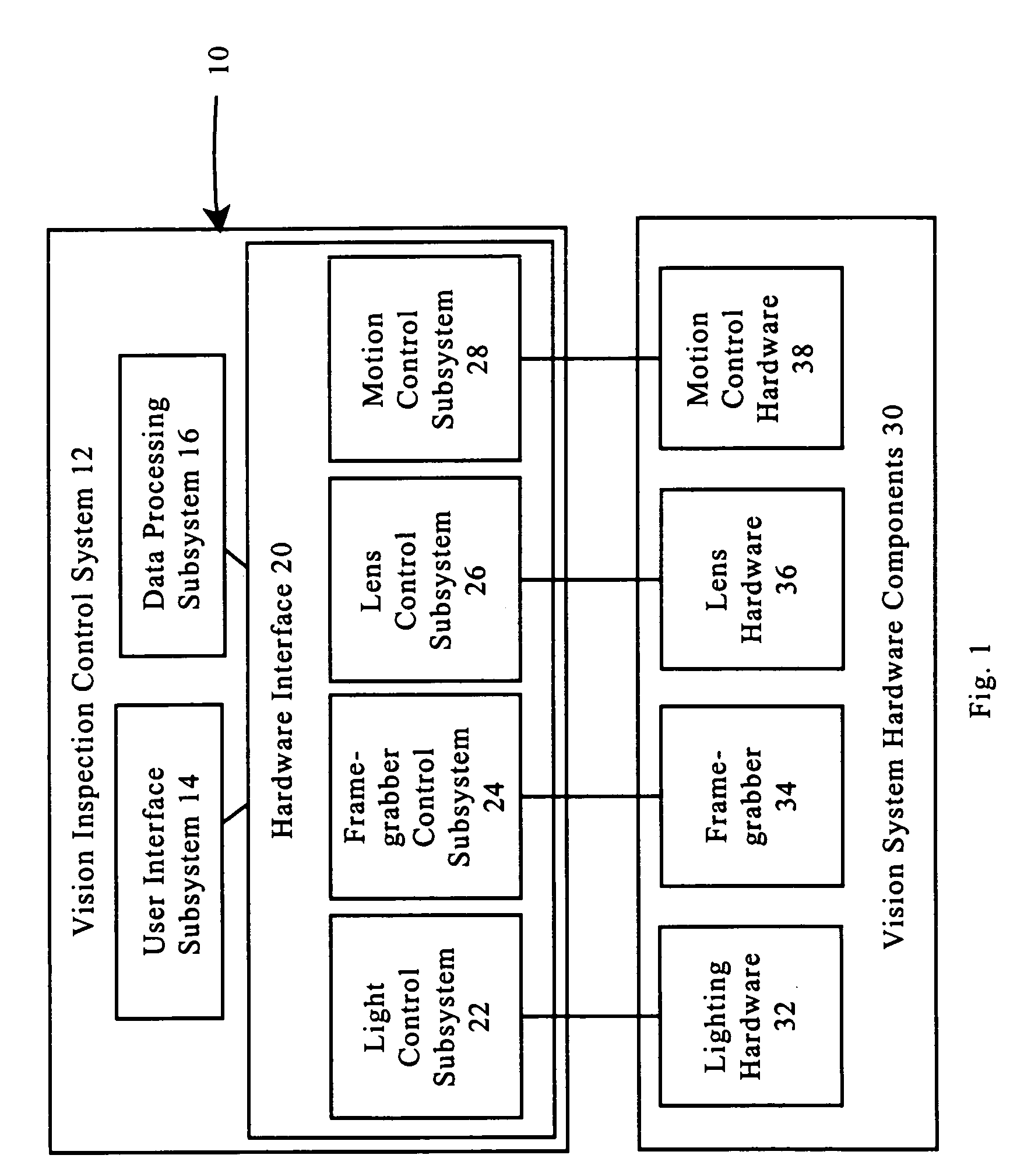

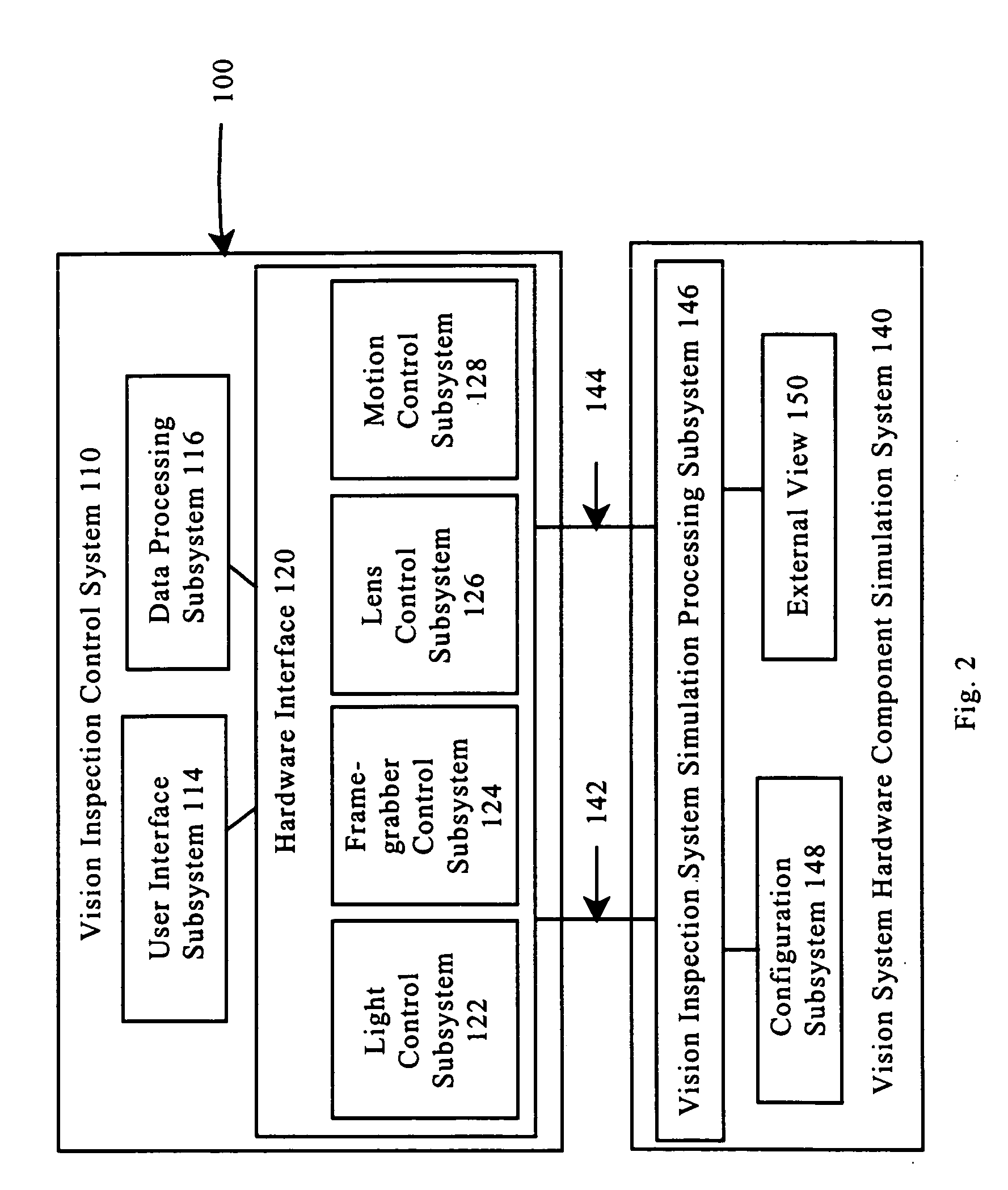

Hardware simulation systems and methods for vision inspection systems

Patent · Inactive · US7092860B1

Innovation

- The use of 3D graphical models and simulation systems that generate synthetic images comparable to those produced by a camera in a vision inspection system, allowing for off-line programming and emulation of physical components like cameras, lighting, and motion control platforms, integrated with user interfaces to create a software-only version of vision inspection systems.
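The geometric core of generating a synthetic camera image from a 3D model is projection. The sketch below projects 3D model points through an ideal pinhole camera (intrinsic matrix K, rotation R, translation t) and marks the resulting pixels. It is not the patented system's method: the camera parameters are hypothetical, and a real emulator would rasterise surfaces and model shading, lighting, lens distortion, and sensor noise.

```python
import numpy as np


def project_points(points_world, K, R, t):
    """Project Nx3 world points to pixel coordinates with an ideal pinhole camera.
    Returns (uv, in_front); uv is NaN for points at or behind the camera plane."""
    pts = np.asarray(points_world, dtype=np.float64)
    cam = pts @ R.T + t                       # world frame -> camera frame
    in_front = cam[:, 2] > 0                  # only points in front of the camera are visible
    uv = np.full((len(pts), 2), np.nan)
    uvw = cam[in_front] @ K.T                 # apply intrinsics (homogeneous pixel coords)
    uv[in_front] = uvw[:, :2] / uvw[:, 2:3]   # perspective divide
    return uv, in_front


def render_points(points_world, K, R, t, width, height):
    """Mark each visible projected point in a binary image: a crude stand-in for a renderer."""
    uv, visible = project_points(points_world, K, R, t)
    image = np.zeros((height, width), dtype=np.uint8)
    for (u, v), vis in zip(uv, visible):
        if not vis:
            continue
        col, row = int(round(u)), int(round(v))
        if 0 <= col < width and 0 <= row < height:
            image[row, col] = 255
    return image


# Hypothetical camera: 800 px focal length, principal point at image centre, 0.5 m from the part.
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.array([0.0, 0.0, 0.5])
square = [(-0.05, -0.05, 0.0), (0.05, -0.05, 0.0), (0.05, 0.05, 0.0), (-0.05, 0.05, 0.0)]
uv, _ = project_points(square, K, R, t)
image = render_points(square, K, R, t, width=640, height=480)
print(uv)
```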

Computer vision through simulated hardware optimization

Patent · WO2019217126A1

Innovation

- A synthetic world interface is used to model environments, sensors, and platforms, generating synthetic experiment data through simulation services to systematically sweep parameters and identify desirable design configurations efficiently, reducing the need for physical prototyping and accelerating the design process.
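A parameter sweep of this kind can be illustrated with a common vision design question: which lens focal length and working distance give a field of view that covers the part while still meeting a resolution target? The sketch uses the thin-lens approximation FOV ≈ sensor width × working distance / focal length, valid when the working distance is much larger than the focal length. The sensor dimensions, candidate lenses, and requirements are hypothetical, and this is a simplified illustration rather than the method described in the patent.

```python
import itertools

SENSOR_WIDTH_MM = 7.2    # hypothetical sensor width
SENSOR_WIDTH_PX = 2448   # hypothetical horizontal pixel count


def field_of_view_mm(focal_mm: float, distance_mm: float) -> float:
    """Horizontal field of view under the thin-lens approximation (distance >> focal length)."""
    return SENSOR_WIDTH_MM * distance_mm / focal_mm


def feasible_designs(focals_mm, distances_mm, part_width_mm, max_mm_per_px):
    """Sweep every lens/distance pair and keep those covering the part at the required resolution."""
    designs = []
    for focal, distance in itertools.product(focals_mm, distances_mm):
        fov = field_of_view_mm(focal, distance)
        mm_per_px = fov / SENSOR_WIDTH_PX
        if fov >= part_width_mm and mm_per_px <= max_mm_per_px:
            designs.append((focal, distance, round(fov, 1), round(mm_per_px, 4)))
    # Prefer the most compact setup: shortest working distance, then shortest focal length.
    return sorted(designs, key=lambda d: (d[1], d[0]))


designs = feasible_designs(
    focals_mm=[8, 12, 16, 25, 35],
    distances_mm=[150, 200, 300, 400, 500],
    part_width_mm=100.0,
    max_mm_per_px=0.06,
)
for focal, distance, fov, res in designs:
    print(f"f={focal} mm, WD={distance} mm -> FOV {fov} mm, {res} mm/px")
```

With these inputs six configurations pass, and the 8 mm lens at 150 mm is the most compact. A simulation-based sweep would replace the closed-form FOV model with rendered images and measured detection performance.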

Digital Twin Integration in Machine Vision Systems

Digital twin technology represents a paradigm shift in machine vision system development, creating virtual replicas that mirror physical vision systems in real time. This integration supports system optimization, predictive maintenance, and performance enhancement through continuous bidirectional data exchange between physical sensors and their digital counterparts.

The convergence of digital twin frameworks with machine vision architectures facilitates comprehensive system modeling that encompasses optical components, image processing algorithms, and environmental variables. These virtual representations capture the complete operational context of vision systems, including lighting conditions, camera positioning, lens characteristics, and processing pipeline parameters. The digital twin continuously ingests sensor data, environmental measurements, and performance metrics to maintain synchronization with its physical counterpart.

Advanced simulation capabilities within digital twin environments enable real-time optimization of machine vision parameters without disrupting production processes. The virtual system can simulate various operational scenarios, test algorithm modifications, and predict system behavior under different conditions. This capability proves particularly valuable for quality control applications where vision system performance directly impacts product quality and manufacturing efficiency.

Machine learning algorithms embedded within digital twin architectures analyze historical performance data to identify patterns, predict potential failures, and recommend preventive maintenance actions. The integration supports adaptive calibration procedures that automatically adjust vision system parameters based on changing environmental conditions or component degradation patterns detected through continuous monitoring.
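Continuous monitoring of component degradation can start from something as simple as tracking image brightness. The sketch below smooths the mean grey level of each frame with an exponentially weighted moving average (EWMA), flags drift once it exceeds a tolerance, and proposes an exposure correction. The target level, smoothing factor, tolerance, and the linear exposure-to-intensity assumption are all hypothetical; a production system would track more signals than brightness alone.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BrightnessMonitor:
    target: float                 # mean grey level recorded at commissioning (hypothetical)
    alpha: float = 0.2            # EWMA smoothing factor
    tolerance: float = 10.0       # allowed drift in grey levels before recalibrating
    ewma: Optional[float] = None  # smoothed brightness, initialised on the first frame

    def update(self, frame_mean: float) -> bool:
        """Fold one frame's mean intensity into the EWMA; return True if drift exceeds tolerance."""
        if self.ewma is None:
            self.ewma = frame_mean
        else:
            self.ewma = self.alpha * frame_mean + (1.0 - self.alpha) * self.ewma
        return abs(self.ewma - self.target) > self.tolerance

    def exposure_scale(self) -> float:
        """Exposure multiplier that would restore the target level, assuming intensity
        scales linearly with exposure time and nothing saturates."""
        if self.ewma is None or self.ewma <= 0:
            raise ValueError("need a positive smoothed brightness first")
        return self.target / self.ewma


monitor = BrightnessMonitor(target=128.0)
flagged_at = None
for frame in range(30):
    lamp_level = 128.0 - frame  # simulated lamp dimming by one grey level per frame
    if monitor.update(lamp_level):
        flagged_at = frame
        break
print(flagged_at, round(monitor.exposure_scale(), 3))
```

With a lamp that dims by one grey level per frame, the monitor flags drift at frame 14. The EWMA lag is the price of ignoring single-frame noise: a smaller `alpha` suppresses more noise but reacts later.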

The implementation of digital twin integration requires robust data infrastructure capable of handling high-frequency sensor data streams, real-time processing capabilities, and secure communication protocols. Edge computing architectures often serve as intermediary layers, performing initial data processing and filtering before transmitting relevant information to cloud-based digital twin platforms.
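Edge-side filtering can be sketched as reducing each batch of per-frame inspection results to a compact summary plus only the anomalous frames before anything is sent upstream. The record schema (`frame_id`, `score`) and the threshold are hypothetical, and transport to the cloud platform is out of scope.

```python
import json
import statistics


def edge_payload(records, anomaly_threshold):
    """Reduce a batch of per-frame inspection results to summary statistics plus anomalous frames."""
    scores = [r["score"] for r in records]
    return {
        "summary": {
            "count": len(scores),
            "mean_score": statistics.fmean(scores),
            "max_score": max(scores),
        },
        "anomalies": [r for r in records if r["score"] > anomaly_threshold],
    }


# Hypothetical batch: 100 frames with anomaly scores cycling from 0.0 to 0.9.
records = [{"frame_id": i, "score": 0.1 * (i % 10)} for i in range(100)]
payload = edge_payload(records, anomaly_threshold=0.75)
print(len(json.dumps(records)), "->", len(json.dumps(payload)), "bytes")
```

Only 20 of the 100 records leave the edge node in this example, while the summary statistics still let the digital twin track the batch as a whole.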

Standardization efforts focus on developing interoperable frameworks that support seamless integration across different machine vision hardware platforms and software environments. These standards address data format specifications, communication protocols, and security requirements essential for enterprise-scale deployments.

The technology demonstrates significant potential for enhancing system reliability, reducing downtime, and optimizing performance across diverse industrial applications where machine vision systems play critical operational roles.

Synthetic Data Generation for Vision Model Training

Synthetic data generation has emerged as a transformative approach in machine vision model training, addressing critical challenges in data acquisition, annotation costs, and dataset diversity. This methodology leverages computational simulation to create artificial datasets that mimic real-world visual scenarios, enabling robust model development without extensive manual data collection efforts.

Synthetic images are produced with rendering engines, procedural generation algorithms, and physics-based simulation, and may aim for photorealism or for a domain-specific appearance. Modern pipelines combine 3D modeling environments, ray tracing, and simulated material properties to produce high-fidelity images that closely approximate real-world conditions.
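Every rendering pipeline ultimately maps 3D scene geometry onto the image plane through a camera model. The sketch below assumes an ideal pinhole camera with no lens distortion and hypothetical intrinsics (`fx`, `fy`, `cx`, `cy`, all in pixels). It also shows why simulation yields exact labels: projecting the corners of a known box gives its 2D bounding box directly.

```python
from dataclasses import dataclass


@dataclass
class PinholeCamera:
    fx: float  # focal length in pixels, x direction
    fy: float  # focal length in pixels, y direction
    cx: float  # principal point, x
    cy: float  # principal point, y

    def project(self, x: float, y: float, z: float) -> tuple[float, float]:
        """Project a point in camera coordinates (z pointing forward) to pixel coordinates."""
        if z <= 0:
            raise ValueError("point is not in front of the camera")
        return (self.fx * x / z + self.cx, self.fy * y / z + self.cy)


def project_box_corners(cam: PinholeCamera, corners) -> tuple[float, float, float, float]:
    """Return (x_min, y_min, x_max, y_max) of the projected corners. For a box fully in
    front of the camera, this is the exact 2D bounding box of its image."""
    pts = [cam.project(*c) for c in corners]
    xs = [p[0] for p in pts]
    ys = [p[1] for p in pts]
    return (min(xs), min(ys), max(xs), max(ys))


if __name__ == "__main__":
    cam = PinholeCamera(fx=800.0, fy=800.0, cx=320.0, cy=240.0)
    # A 0.2 m cube centred 2 m in front of the camera.
    corners = [(x, y, z) for x in (-0.1, 0.1) for y in (-0.1, 0.1) for z in (1.9, 2.1)]
    print(project_box_corners(cam, corners))
```

A production renderer adds lens distortion, occlusion, and shading on top of this mapping, but the label comes from the same known geometry.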

Domain randomization is a cornerstone technique in synthetic data generation. It systematically varies environmental parameters such as lighting conditions, object textures, camera angles, and background composition. Exposing the model to this wide range of variation during training improves generalization: ideally, real-world images then look like just another variation of the training distribution, including variations that would be difficult or expensive to capture in real-world datasets.
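Domain randomization amounts to drawing fresh scene parameters for every rendered sample. A minimal sketch, assuming uniform sampling and hypothetical parameter ranges and asset counts (`RANGES`, `NUM_TEXTURES`, `NUM_BACKGROUNDS`). The resulting `SceneParams` would be handed to a renderer, which is not shown.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    light_intensity: float       # relative units
    light_azimuth_deg: float
    texture_id: int              # index into a texture library
    camera_elevation_deg: float
    camera_distance_m: float
    background_id: int           # index into a background library


# Hypothetical ranges; real ones are chosen per application.
RANGES = {
    "light_intensity": (0.3, 1.5),
    "light_azimuth_deg": (0.0, 360.0),
    "camera_elevation_deg": (15.0, 75.0),
    "camera_distance_m": (0.5, 2.0),
}
NUM_TEXTURES = 50
NUM_BACKGROUNDS = 200


def sample_scene(rng: random.Random) -> SceneParams:
    """Draw one randomized scene configuration."""
    def uniform(key: str) -> float:
        low, high = RANGES[key]
        return rng.uniform(low, high)

    return SceneParams(
        light_intensity=uniform("light_intensity"),
        light_azimuth_deg=uniform("light_azimuth_deg"),
        texture_id=rng.randrange(NUM_TEXTURES),
        camera_elevation_deg=uniform("camera_elevation_deg"),
        camera_distance_m=uniform("camera_distance_m"),
        background_id=rng.randrange(NUM_BACKGROUNDS),
    )


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed makes the dataset reproducible
    scenes = [sample_scene(rng) for _ in range(1000)]
    print(scenes[0])
```

Uniform sampling is only the simplest choice; any parameter could be given a different distribution without changing the structure of the sampler.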

Procedural content generation algorithms automate the creation of large datasets with controlled variation in object properties, spatial arrangements, and environmental conditions. Because the generator knows the exact geometry and identity of everything in each scene, it can emit precise ground-truth annotations (bounding boxes, semantic segmentation masks, depth information, and optical flow) at no additional labeling cost, which makes datasets of millions of labeled samples practical.
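The key property of procedural generation, exact annotations by construction, can be shown with a toy generator that paints random rectangles and records which object owns each pixel. This is a deliberately simple stand-in for a real renderer: `generate_sample` is a hypothetical name, it uses only the standard library, and it assumes `width` and `height` are at least 6 pixels. Boxes are derived from the instance mask, so occluded parts are excluded and fully hidden objects get no box.

```python
import random


def generate_sample(width: int, height: int, max_objects: int, rng: random.Random):
    """Hypothetical procedural generator. Returns (image, instance_mask, boxes) where
    image is a grayscale grid, instance_mask[y][x] is 0 for background or k for object k,
    and boxes maps k to its visible (x_min, y_min, x_max, y_max), inclusive."""
    image = [[0] * width for _ in range(height)]
    mask = [[0] * width for _ in range(height)]
    n = rng.randint(1, max_objects)
    for k in range(1, n + 1):
        w = rng.randint(3, width // 2)
        h = rng.randint(3, height // 2)
        x0 = rng.randint(0, width - w)
        y0 = rng.randint(0, height - h)
        intensity = rng.randint(50, 255)  # nonzero, so objects differ from background
        for y in range(y0, y0 + h):
            for x in range(x0, x0 + w):
                image[y][x] = intensity
                mask[y][x] = k  # later objects occlude earlier ones
    # Derive boxes from the mask so they describe visible pixels only.
    boxes = {}
    for y in range(height):
        for x in range(width):
            k = mask[y][x]
            if k:
                x_min, y_min, x_max, y_max = boxes.get(k, (x, y, x, y))
                boxes[k] = (min(x_min, x), min(y_min, y), max(x_max, x), max(y_max, y))
    return image, mask, boxes


if __name__ == "__main__":
    image, mask, boxes = generate_sample(32, 24, 5, random.Random(1))
    for k, box in sorted(boxes.items()):
        print(f"object {k}: box {box}")
```

The same mask doubles as a semantic segmentation label; a 3D generator would similarly read depth and flow straight from the scene state.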

Advanced simulation platforms now model physically accurate material properties, realistic lighting, and complex scene dynamics to narrow the domain gap between synthetic and real data. Neural rendering, generative adversarial networks (GANs), and differentiable rendering are increasingly integrated to improve photorealism and ease the transfer of models trained on synthetic data to real-world images.
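As a small illustration of physically motivated rendering, the sketch below combines the Lambertian (ideal diffuse) reflectance model with a simple camera sensor model. Lambertian shading is the simplest lighting model and far from the advanced material models mentioned above. The sensor parameters (read noise, gain, bit depth) are assumptions, and shot noise uses the Gaussian approximation to Poisson noise, which is reasonable at the signal levels used here.

```python
import math
import random


def lambertian(albedo: float, normal, light_dir, light_intensity: float) -> float:
    """Diffuse reflection: albedo * intensity * max(0, n . l), with unit vectors n and l."""
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir))
    return albedo * light_intensity * max(0.0, n_dot_l)


def apply_sensor_model(signal_electrons: float, rng: random.Random,
                       read_noise_e: float = 2.0, gain_dn_per_e: float = 0.25,
                       bits: int = 8) -> int:
    """Hypothetical camera model: shot noise (variance equal to the signal), additive
    Gaussian read noise, conversion gain, then rounding and clipping to the ADC range."""
    shot = rng.gauss(0.0, math.sqrt(max(signal_electrons, 0.0)))
    read = rng.gauss(0.0, read_noise_e)
    dn = round((signal_electrons + shot + read) * gain_dn_per_e)
    return min(max(dn, 0), 2 ** bits - 1)


if __name__ == "__main__":
    rng = random.Random(0)
    # Surface facing the light head-on: 0.8 * 1000 = 800 electrons, about 200 DN.
    signal = lambertian(0.8, (0.0, 0.0, 1.0), (0.0, 0.0, 1.0), 1000.0)
    pixels = [apply_sensor_model(signal, rng) for _ in range(5)]
    print(signal, pixels)
```

Noise-free renders are a common source of the synthetic-to-real gap; even a crude sensor model like this makes synthetic pixels statistically closer to camera output.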

Quality assessment frameworks for synthetic datasets rely on statistical distribution matching, visual fidelity metrics, and evaluation of downstream task performance. Together, these checks help confirm that generated data has the complexity and statistical properties needed for effective model training, and help detect biases or rendering artifacts that could compromise learning outcomes.
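Statistical distribution matching can be illustrated with the squared Fréchet distance between Gaussians fitted to a real and a synthetic sample. The Fréchet Inception Distance (FID) applies the multivariate form of this distance to deep-network features; in one dimension it reduces to the closed form in the docstring below. The sketch assumes a scalar per-image statistic, such as mean brightness, has already been computed for both sets; `frechet_distance_1d` is a hypothetical name.

```python
import statistics


def frechet_distance_1d(a, b) -> float:
    """Squared Frechet (2-Wasserstein) distance between Gaussians fitted to samples a and b:
        d^2 = (mu_a - mu_b)^2 + (sigma_a - sigma_b)^2
    This is the one-dimensional case of ||mu_a - mu_b||^2 + Tr(S_a + S_b - 2 (S_a S_b)^(1/2)),
    the expression FID evaluates on network feature vectors."""
    mu_a, mu_b = statistics.fmean(a), statistics.fmean(b)
    sd_a, sd_b = statistics.pstdev(a), statistics.pstdev(b)
    return (mu_a - mu_b) ** 2 + (sd_a - sd_b) ** 2


if __name__ == "__main__":
    # Identical distributions give 0; a pure shift of 2 gives 2^2 = 4.
    print(frechet_distance_1d([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))
    print(frechet_distance_1d([1.0, 2.0, 3.0], [3.0, 4.0, 5.0]))
```

A small distance only says the two sets agree on that one statistic; it does not guarantee downstream accuracy, which is why downstream task evaluation remains part of the assessment.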
