Active Memory Expansion for Real-Time Analysis: Key Metrics

MAR 7, 2026 | 9 MIN READ

Active Memory Expansion Background and Objectives

Active memory expansion addresses the growing computational demands of real-time data analysis systems. As modern applications generate unprecedented volumes of data that require immediate processing, traditional memory architectures run into limits on capacity, bandwidth, and latency. The technology stems from the need to bridge the performance gap between processor speeds and memory access times, particularly where data sets exceed available physical memory.

The historical development of memory expansion techniques has progressed through several distinct phases, beginning with basic virtual memory systems in the 1960s, advancing through sophisticated caching mechanisms in the 1980s and 1990s, and culminating in today's intelligent memory management solutions. Contemporary approaches leverage advanced algorithms, machine learning techniques, and hardware acceleration to dynamically optimize memory utilization patterns based on real-time workload characteristics.

Current technological trends indicate a convergence toward hybrid memory architectures that combine multiple storage tiers, including high-speed volatile memory, persistent memory technologies, and intelligent storage-class memory solutions. These systems employ predictive algorithms to anticipate data access patterns, enabling proactive memory allocation and reducing latency-critical bottlenecks that traditionally impede real-time analysis performance.
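As a rough illustration of proactive placement across tiers, the sketch below promotes a key into a small fast tier once it has been read often enough. It uses a plain access-count heuristic as a stand-in for the predictive algorithms described above; the class name `TieredStore`, the `promote_after` parameter, and the dictionary-based tiers are all hypothetical.

```python
from collections import Counter


class TieredStore:
    """Toy two-tier store: a small 'fast' tier and an unbounded 'slow' tier."""

    def __init__(self, fast_capacity: int, promote_after: int = 3):
        self.fast_capacity = fast_capacity
        self.promote_after = promote_after  # reads before promotion (assumed policy)
        self.fast: dict[str, bytes] = {}
        self.slow: dict[str, bytes] = {}
        self.hits = Counter()

    def put(self, key: str, value: bytes) -> None:
        # New or rewritten data lands in the slow tier and must earn promotion.
        self.fast.pop(key, None)
        self.slow[key] = value

    def get(self, key: str) -> bytes:
        if key in self.fast:
            self.hits[key] += 1
            return self.fast[key]
        value = self.slow[key]  # raises KeyError for unknown keys
        self.hits[key] += 1
        if self.hits[key] >= self.promote_after:
            self._promote(key, value)
        return value

    def _promote(self, key: str, value: bytes) -> None:
        if self.fast_capacity <= 0:
            return
        if len(self.fast) >= self.fast_capacity:
            # Demote the least-read resident key back to the slow tier.
            coldest = min(self.fast, key=lambda k: self.hits[k])
            self.slow[coldest] = self.fast.pop(coldest)
        self.fast[key] = value
        del self.slow[key]

    def tier_of(self, key: str) -> str:
        return "fast" if key in self.fast else "slow"
```

A production system would draw on hardware counters or operating-system page statistics rather than per-key counts, but the promote-and-demote loop has the same shape.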

The primary objective of active memory expansion technology centers on achieving seamless scalability of memory resources without compromising system responsiveness or analytical accuracy. This involves developing sophisticated algorithms capable of intelligently managing memory hierarchies, optimizing data placement strategies, and maintaining consistent performance levels regardless of dataset size or complexity variations.

Key performance targets include achieving sub-millisecond memory access times for frequently accessed data, maintaining linear scalability as memory requirements grow, and ensuring fault-tolerant operation under varying workload conditions. Additionally, the technology aims to minimize power consumption while maximizing throughput, addressing both performance and sustainability concerns in large-scale deployment scenarios.

The ultimate goal is to create adaptive memory systems that automatically adjust their configuration and behavior to real-time analysis requirements, so that organizations can process larger datasets more efficiently while keeping the responsiveness needed for time-critical decision-making across diverse application domains.

Market Demand for Real-Time Analysis Solutions

The market demand for real-time analysis solutions has experienced unprecedented growth across multiple industries, driven by the exponential increase in data generation and the critical need for instantaneous decision-making capabilities. Organizations across sectors including financial services, telecommunications, healthcare, manufacturing, and e-commerce are increasingly recognizing that traditional batch processing approaches are insufficient for meeting modern operational requirements.

Financial institutions represent one of the most demanding segments, where millisecond delays in transaction processing, fraud detection, and algorithmic trading can result in significant financial losses. High-frequency trading platforms, risk management systems, and real-time compliance monitoring have become essential components of competitive financial operations, creating substantial demand for advanced memory expansion technologies that can support continuous data stream processing.

The telecommunications industry faces similar pressures with the proliferation of 5G networks, IoT devices, and edge computing applications. Network operators require real-time analytics for traffic optimization, quality of service monitoring, and predictive maintenance. The massive volume of network telemetry data generated continuously necessitates memory architectures capable of handling concurrent read-write operations while maintaining low-latency access patterns.

Healthcare organizations are increasingly adopting real-time monitoring systems for patient care, medical device management, and clinical decision support. Electronic health records, continuous patient monitoring, and medical imaging applications generate substantial data streams that require immediate processing capabilities. The critical nature of healthcare decisions amplifies the importance of reliable, high-performance memory systems.

Manufacturing sectors are embracing Industry 4.0 initiatives that rely heavily on real-time analytics for predictive maintenance, quality control, and supply chain optimization. Smart factory implementations require continuous monitoring of production lines, equipment performance, and environmental conditions, generating massive datasets that demand immediate analysis capabilities.

E-commerce platforms face growing pressure to deliver personalized experiences through real-time recommendation engines, dynamic pricing algorithms, and fraud prevention systems. The ability to process customer behavior data, inventory updates, and transaction patterns in real time directly affects revenue and customer satisfaction.

The convergence of artificial intelligence and machine learning with real-time analytics has further intensified market demand. Organizations seek to implement intelligent systems capable of learning and adapting from streaming data, requiring memory architectures that can support both analytical processing and model training simultaneously.

Cloud service providers are responding to this demand by developing specialized offerings for real-time analytics workloads, creating additional market opportunities for memory expansion technologies that can scale dynamically based on processing requirements.

Current State of Memory Expansion Technologies

Memory expansion technologies for real-time analysis applications have evolved significantly over the past decade, driven by the exponential growth in data processing requirements across industries. Current implementations primarily focus on three core approaches: hardware-based memory scaling, software-defined memory management, and hybrid cloud-edge memory architectures.

Hardware-based solutions dominate the enterprise market, with technologies such as Intel Optane DC Persistent Memory and High Bandwidth Memory (HBM) from vendors such as Samsung leading the charge. Persistent memory provides direct capacity expansion, from 128GB per module to several terabytes per node, while HBM primarily raises bandwidth rather than capacity. Latency remains a critical challenge: persistent memory access times are typically 200-400 nanoseconds, several times the roughly 80-100 nanoseconds of a DRAM access as seen by the processor. Intel announced in 2022 that it was winding down its Optane business, which limits the long-term availability of that option.
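To see how latencies from two tiers combine, a weighted average is a useful first approximation: the fraction of accesses served from the fast tier pays the fast latency, and the remainder pays the slow one. The function name and the numbers in the usage note are placeholders for illustration, not measurements.

```python
def effective_latency_ns(hit_ratio: float, fast_ns: float, slow_ns: float) -> float:
    """Average access latency when `hit_ratio` of accesses hit the fast tier."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * fast_ns + (1.0 - hit_ratio) * slow_ns
```

For example, `effective_latency_ns(0.9, 100.0, 300.0)` evaluates to about 120 ns: with 90% of accesses served from the fast tier, the slow tier adds 20 ns to the average. This model ignores queuing and bandwidth contention, so it is a lower bound rather than a prediction.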

Software-defined memory management systems have gained traction through solutions like Redis Enterprise, Apache Ignite, and Hazelcast. These platforms implement distributed memory grids that can scale horizontally across multiple nodes while maintaining sub-millisecond response times for frequently accessed data. Current implementations achieve memory utilization rates of 85-92% while supporting concurrent read operations exceeding 1 million requests per second.

Hybrid architectures combining on-premises memory with cloud-based expansion represent an emerging trend. Managed services such as Amazon ElastiCache, Azure Cache for Redis, and Google Cloud Memorystore provide memory scaling on demand, with latency optimization through edge computing integration. These solutions typically maintain 95th percentile latencies below 5 milliseconds for geographically distributed deployments.
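A 95th percentile latency figure can be checked directly from raw samples. The sketch below uses the nearest-rank definition of a percentile, which is one of several common conventions; the function name and the sample data are illustrative.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical latency samples in milliseconds.
latencies_ms = [1.2, 0.9, 4.1, 1.1, 1.0, 0.8, 6.3, 1.3, 1.0, 0.9]
meets_target = percentile(latencies_ms, 95) < 5.0
```

Here the single 6.3 ms outlier is enough to miss a 5 ms target, because with only ten samples the 95th percentile is the largest value. Percentile targets are only meaningful over a sample large enough to resolve the tail.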

The primary technical constraints affecting current memory expansion technologies include memory coherence protocols, data consistency guarantees, and thermal management limitations. NUMA (Non-Uniform Memory Access) architectures introduce complexity in memory allocation strategies, while maintaining ACID properties across distributed memory systems requires sophisticated consensus algorithms that can impact performance by 15-25%.

Recent advancements in memory fabric technologies, including the Gen-Z and OpenCAPI standards (both since folded into the Compute Express Link (CXL) consortium), are addressing bandwidth bottlenecks by providing memory-semantic access to remote memory and storage resources. These protocols support memory expansion ratios of up to 64:1 while maintaining application transparency through hardware abstraction layers.

Current Active Memory Expansion Solutions

01 Memory performance monitoring and optimization metrics

Systems and methods for monitoring memory performance through key metrics such as access latency, bandwidth utilization, and throughput. These metrics enable real-time assessment of memory subsystem efficiency and identification of performance bottlenecks. Advanced monitoring techniques track memory access patterns, cache hit rates, and data transfer speeds to optimize overall system performance.

02 Memory usage and capacity metrics tracking

Techniques for measuring and analyzing memory capacity utilization, including tracking active memory pages, memory allocation patterns, and available memory resources. These metrics help in understanding memory consumption trends, predicting memory requirements, and preventing memory exhaustion. The tracking systems cover both physical and virtual memory usage and provide detailed insights into memory footprint and allocation efficiency.

03 Memory quality and reliability metrics

Methods for assessing memory reliability through metrics such as bit error rates, data integrity checks, and failure prediction indicators. These quality metrics monitor memory health, detect potential failures before they affect system operation, and ensure data consistency. The systems implement error correction mechanisms, monitor error patterns, temperature effects, and aging characteristics, and provide early warning signals for memory degradation.

04 Memory access pattern and behavior analytics

Analytical frameworks for evaluating memory access patterns, including read-write ratios, sequential versus random access metrics, and temporal locality. These behavioral metrics characterize workloads, enable prediction of future memory access needs, and guide prefetching and caching strategies. The analysis helps in identifying hot spots and improving cache efficiency.

05 Memory power consumption and efficiency metrics

Systems for measuring memory power consumption, energy efficiency, and thermal characteristics. These metrics track power usage per memory operation, idle power consumption, power states, voltage levels, and energy-performance trade-offs. The monitoring enables optimization of memory configurations for reduced power consumption while maintaining performance requirements.
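Two of the workload metrics named above, the read-write mix and the share of sequential accesses, can be derived from a simple access trace. The sketch below assumes a trace of `(op, address)` records and counts an access as sequential when it touches the same or the next 64-byte block as the previous one; the record type, block size, and that definition are all assumptions made for illustration.

```python
from typing import NamedTuple


class Access(NamedTuple):
    op: str       # "R" for read, "W" for write
    address: int  # byte address


def characterize(trace: list[Access], block_size: int = 64) -> dict[str, float]:
    """Return the read fraction and sequential-access fraction of a trace."""
    if not trace:
        raise ValueError("trace must not be empty")
    reads = sum(1 for a in trace if a.op == "R")
    sequential = 0
    for prev, cur in zip(trace, trace[1:]):
        step = cur.address // block_size - prev.address // block_size
        if step in (0, 1):  # same block or the immediately following block
            sequential += 1
    transitions = len(trace) - 1
    return {
        "read_fraction": reads / len(trace),
        "sequential_fraction": sequential / transitions if transitions else 0.0,
    }
```

A high sequential fraction suggests that prefetching will pay off, while a low one points toward larger caches or better data placement.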

Key Players in Memory and Real-Time Analytics

The active memory expansion technology for real-time analysis represents an emerging market segment within the broader memory and computing infrastructure industry, currently in its early-to-mid development stage. The market demonstrates significant growth potential driven by increasing demands for real-time data processing across telecommunications, financial services, and cloud computing sectors. Technology maturity varies considerably among key players, with established semiconductor companies like Micron Technology and IBM leading in foundational memory technologies, while telecommunications giants such as SK Telecom and China Telecom drive application-specific implementations. Research institutions including Tsinghua University and HRL Laboratories contribute to advanced algorithmic development, while technology integrators like Ping An Technology and Lenovo focus on commercial deployment solutions. The competitive landscape shows a convergence of hardware manufacturers, software developers, and system integrators working to address scalability challenges in real-time memory management systems.

International Business Machines Corp.

Technical Solution: IBM has developed advanced active memory expansion technologies through their Power Systems architecture, featuring dynamic memory allocation and real-time workload optimization. Their solution incorporates intelligent memory tiering that automatically moves frequently accessed data to faster memory layers while maintaining transparent access patterns. The system provides comprehensive performance metrics including memory bandwidth utilization, cache hit ratios, and latency measurements across different memory tiers. IBM's approach integrates machine learning algorithms to predict memory access patterns and proactively expand memory pools before bottlenecks occur, ensuring consistent application performance during peak workloads.

Strengths: Enterprise-grade reliability and extensive integration capabilities with existing infrastructure. Weaknesses: High implementation costs and complexity requiring specialized expertise for deployment and maintenance.

Micron Technology, Inc.

Technical Solution: Micron has pioneered memory expansion solutions through 3D XPoint technology (co-developed with Intel) and advanced DRAM architectures. Their active memory expansion system utilizes intelligent memory controllers that dynamically allocate memory resources based on real-time application demands. The technology features automated memory pool scaling with sub-microsecond response times and comprehensive monitoring of key performance indicators including memory throughput, error correction rates, and thermal management metrics. Micron's solution provides detailed analytics on memory utilization patterns, enabling predictive scaling and optimization of memory resources across distributed computing environments while maintaining data integrity and system stability.

Strengths: Leading-edge memory technology with superior performance characteristics and energy efficiency. Weaknesses: Limited software ecosystem compared to established players and higher costs for cutting-edge memory solutions.

Core Innovations in Dynamic Memory Management

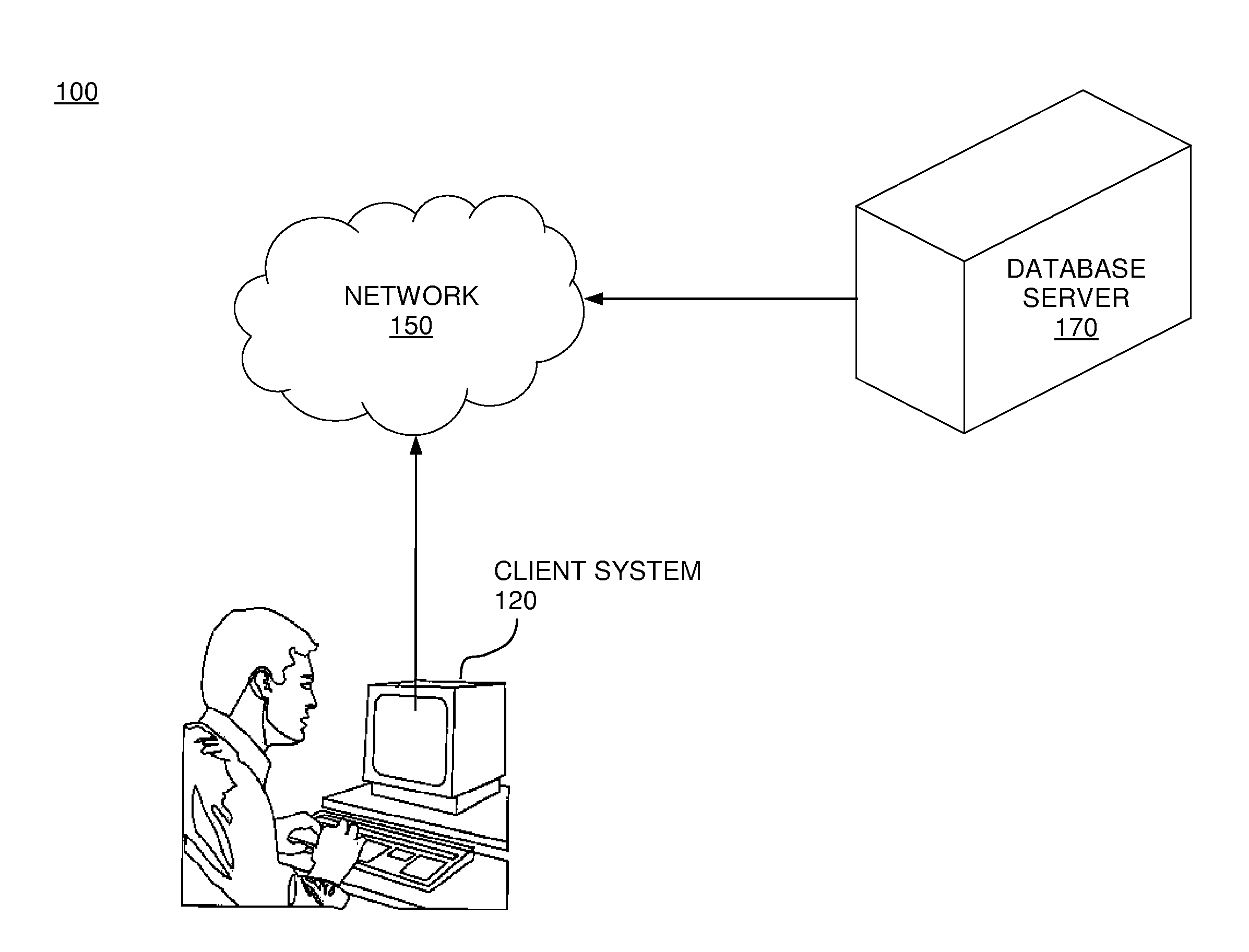

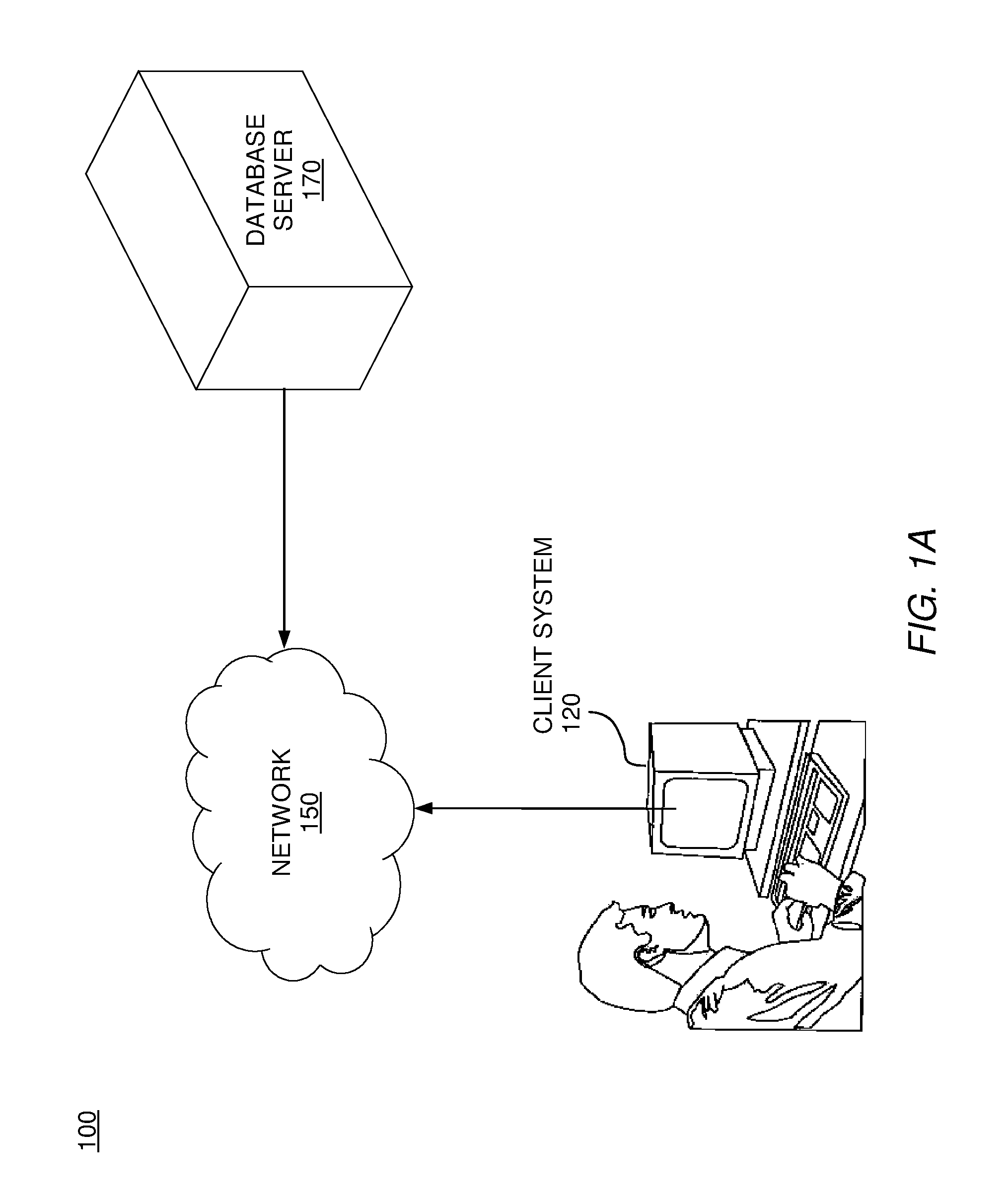

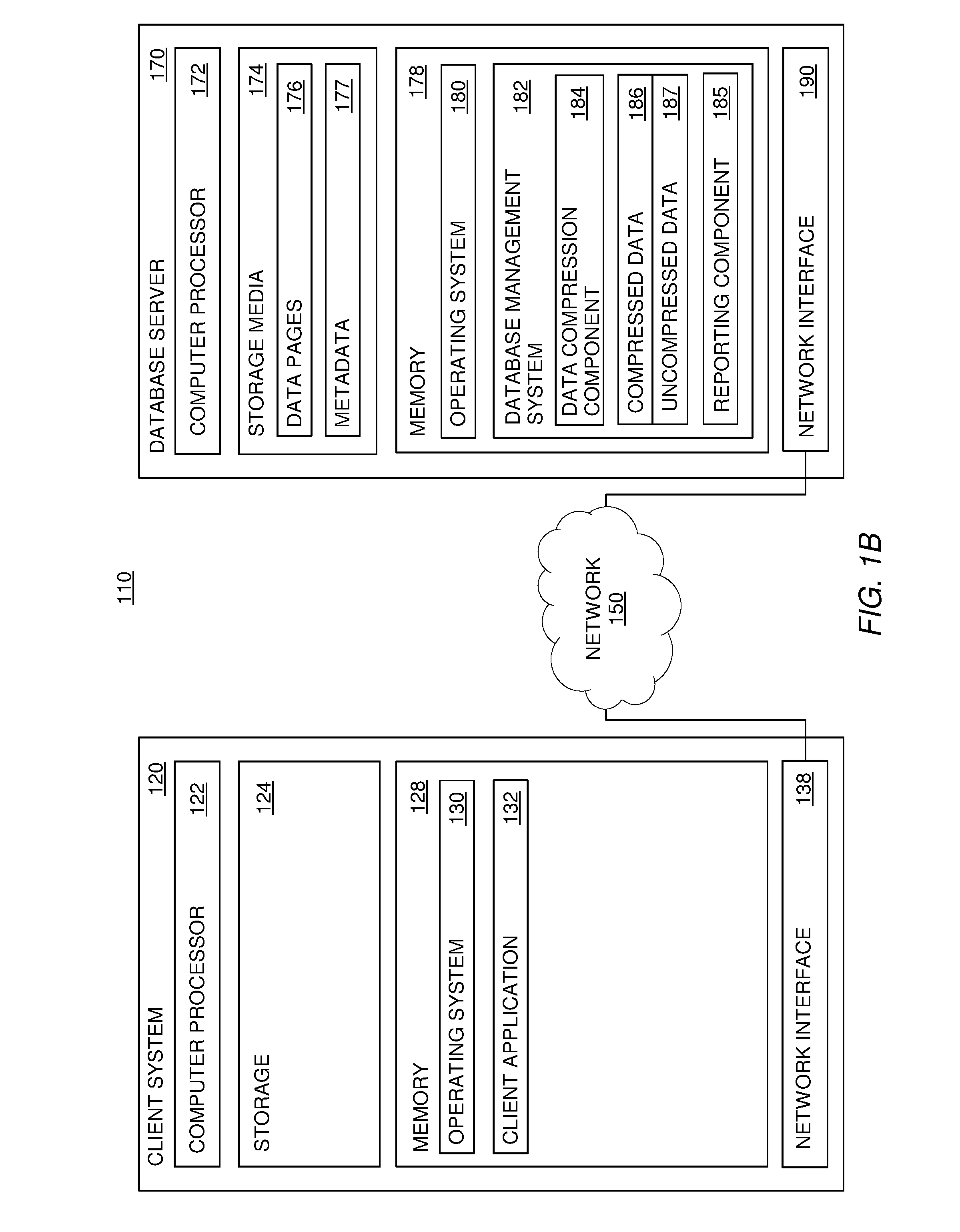

Active memory expansion and RDBMS meta data and tooling

Patent | Inactive | US20120109908A1

Innovation

- Implement a method that identifies indicatory data associated with retrieved data to determine whether to compress it, using compression criteria to selectively compress data based on metadata, query types, and access frequencies, thereby optimizing memory usage and reducing processing time.
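The idea of compressing selectively can be sketched as a small decision function in front of the store. The criteria below (minimum size, access frequency, and a text flag) and every name in the code are hypothetical stand-ins for the patent's indicatory data and compression criteria, which are not spelled out here; `zlib` is used only because it ships with Python.

```python
import zlib
from dataclasses import dataclass


@dataclass
class Indicator:
    accesses_per_min: float  # how often the data is read (assumed metadata)
    is_text: bool            # whether the payload is likely to compress well


def should_compress(ind: Indicator, size: int,
                    hot_threshold: float = 60.0, min_size: int = 256) -> bool:
    """Skip tiny or frequently read data, where CPU cost outweighs the savings."""
    if size < min_size:
        return False
    if ind.accesses_per_min >= hot_threshold:
        return False
    return ind.is_text


def store(data: bytes, ind: Indicator) -> tuple[bool, bytes]:
    """Return (compressed?, payload), falling back to raw data if no gain."""
    if should_compress(ind, len(data)):
        packed = zlib.compress(data)
        if len(packed) < len(data):
            return True, packed
    return False, data


def load(compressed: bool, payload: bytes) -> bytes:
    return zlib.decompress(payload) if compressed else payload
```

Keeping the decision separate from the storage path makes it easy to swap in other criteria, such as query type, without touching the compression code.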

Memory elastic expansion and contraction method and device, equipment, medium and product

Patent | Pending | CN118193200A

Innovation

- Predict future memory needs through a machine learning model based on memory demand time series data, dynamically perform expansion or contraction operations, and adjust memory parameters to achieve adaptive elastic scaling.
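The expand-or-contract loop can be illustrated with a much simpler forecaster than the machine learning model the patent describes. The sketch below substitutes a least-squares linear trend over recent usage samples and compares the one-step-ahead forecast against utilization thresholds; the function names and the 85% and 50% thresholds are assumptions.

```python
def forecast_next(usage_gb: list[float]) -> float:
    """Extrapolate one step ahead with a least-squares straight line."""
    n = len(usage_gb)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(usage_gb) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(usage_gb))
    slope = sxy / sxx
    return mean_y + slope * (n - mean_x)


def scaling_decision(usage_gb: list[float], allocated_gb: float,
                     high: float = 0.85, low: float = 0.50) -> str:
    """Return 'expand', 'contract', or 'hold' from predicted utilization."""
    ratio = forecast_next(usage_gb) / allocated_gb
    if ratio > high:
        return "expand"
    if ratio < low:
        return "contract"
    return "hold"
```

With usage samples of 10, 12, 14, and 16 GB against a 20 GB allocation, the forecast is 18 GB, or 90% utilization, so the decision is to expand before the limit is reached.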

Performance Metrics and Benchmarking Standards

Establishing comprehensive performance metrics for active memory expansion in real-time analysis systems requires a multi-dimensional approach that encompasses both quantitative and qualitative measurements. The primary metrics focus on memory utilization efficiency, access latency, throughput capacity, and system responsiveness under varying workloads. These fundamental indicators provide the foundation for evaluating how effectively memory expansion technologies can support real-time analytical operations.

Memory access latency serves as a critical performance indicator, typically measured in nanoseconds for cache operations and microseconds for extended memory access. Industry benchmarks establish acceptable latency thresholds at sub-100 nanosecond levels for tier-one memory access and sub-10 microsecond levels for expanded memory regions. Throughput measurements evaluate data transfer rates between memory tiers, with current standards targeting minimum bandwidth of 100 GB/s for primary memory interfaces and 25 GB/s for expansion interfaces.

Scalability metrics assess system performance degradation as memory capacity increases beyond traditional boundaries. Key measurements include memory allocation efficiency ratios, fragmentation rates, and garbage collection overhead percentages. Benchmarking standards typically require less than 5% performance degradation when expanding memory capacity by 10x, with fragmentation rates maintained below 15% during sustained operations.
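The two scalability thresholds quoted above translate into a short check. Throughput degradation is measured relative to the baseline, and fragmentation is taken here as the share of free memory that lies outside the largest free block, which is one common definition among several; the function names are hypothetical.

```python
def degradation_pct(baseline_ops: float, expanded_ops: float) -> float:
    """Throughput lost after expansion, as a percentage of the baseline."""
    return (baseline_ops - expanded_ops) / baseline_ops * 100.0


def fragmentation_pct(free_bytes: int, largest_free_block: int) -> float:
    """External fragmentation: free memory not in the largest free block."""
    if free_bytes == 0:
        return 0.0
    return (1.0 - largest_free_block / free_bytes) * 100.0


def meets_scalability_targets(baseline_ops: float, expanded_ops: float,
                              free_bytes: int, largest_free_block: int) -> bool:
    """Apply the under-5% degradation and under-15% fragmentation targets."""
    return (degradation_pct(baseline_ops, expanded_ops) < 5.0
            and fragmentation_pct(free_bytes, largest_free_block) < 15.0)
```

A system that drops from 1,000 to 960 operations per second after a 10x expansion shows 4% degradation and passes the first test; at 900 operations per second it would fail.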

Real-time responsiveness metrics evaluate system behavior under time-critical constraints, measuring deadline miss rates, jitter variance, and worst-case execution times. Industry standards mandate 99.9% deadline compliance for real-time analytical workloads, with maximum jitter variance below 10% of target response times. These metrics ensure that memory expansion does not compromise the deterministic behavior required for real-time analysis applications.
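Deadline compliance and jitter can both be computed from a list of measured response times. In the sketch below, jitter is interpreted as the population standard deviation of response times divided by the target response time; that interpretation, along with the function names, is an assumption, since the text does not define the measure precisely.

```python
import statistics


def deadline_compliance(response_ms: list[float], deadline_ms: float) -> float:
    """Fraction of responses that finished at or before the deadline."""
    if not response_ms:
        raise ValueError("response_ms must not be empty")
    met = sum(1 for r in response_ms if r <= deadline_ms)
    return met / len(response_ms)


def jitter_ratio(response_ms: list[float], target_ms: float) -> float:
    """Standard deviation of response times relative to the target."""
    if not response_ms:
        raise ValueError("response_ms must not be empty")
    return statistics.pstdev(response_ms) / target_ms
```

One late response in a thousand is exactly the 99.9% compliance boundary, so a workload with that profile has no margin left for additional misses.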

Power efficiency and thermal management metrics have become increasingly important as memory systems scale. Benchmarking standards evaluate performance-per-watt ratios, thermal throttling frequency, and energy consumption patterns across different memory tiers. Current industry targets aim for less than 20% increase in power consumption when doubling effective memory capacity through expansion technologies.
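The power target above implies a minimum gain in capacity per watt, which a few lines of arithmetic make explicit. The function names are illustrative.

```python
def power_increase_pct(base_watts: float, expanded_watts: float) -> float:
    """Extra power drawn after expansion, as a percentage of the baseline."""
    return (expanded_watts - base_watts) / base_watts * 100.0


def capacity_per_watt_gain(capacity_factor: float,
                           base_watts: float, expanded_watts: float) -> float:
    """How many times more capacity each watt supports after expansion."""
    return capacity_factor / (expanded_watts / base_watts)
```

Doubling capacity while drawing exactly 20% more power yields `capacity_per_watt_gain(2.0, 100.0, 120.0)`, roughly 1.67, so staying under the 20% target means each watt supports at least about two thirds more capacity than before.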

Reliability and fault tolerance metrics assess system stability during extended operations, measuring error correction overhead, memory scrubbing efficiency, and graceful degradation capabilities. Standard benchmarks require bit error rates below 10^-17 for mission-critical applications, with automatic error recovery mechanisms maintaining system availability above 99.99% during memory tier transitions.
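Reliability targets are easier to reason about once they are converted into concrete budgets. The sketch below turns an availability figure into allowed downtime and a bit error rate into an expected error count for a given transfer volume; the function names are hypothetical, and the error model assumes independent bit errors.

```python
def allowed_downtime_minutes(availability: float,
                             period_hours: float = 365 * 24) -> float:
    """Downtime budget in minutes over the period (default: one year)."""
    return (1.0 - availability) * period_hours * 60.0


def expected_bit_errors(bytes_transferred: float, bit_error_rate: float) -> float:
    """Expected number of bit errors, assuming independent errors."""
    return bytes_transferred * 8 * bit_error_rate
```

An availability of 99.99% leaves about 52.6 minutes of downtime per year. At a bit error rate of 10^-17, a link sustaining 100 GB/s for a full day moves about 6.9 x 10^16 bits and would be expected to see fewer than one bit error.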

System Integration and Compatibility Challenges

Active memory expansion systems for real-time analysis face significant integration challenges when deployed within existing enterprise infrastructures. Legacy systems often operate on outdated architectures that lack the necessary bandwidth and processing capabilities to support dynamic memory scaling operations. These compatibility gaps create bottlenecks that can severely impact the performance benefits that active memory expansion is designed to deliver.

Hardware compatibility represents a fundamental challenge, particularly regarding memory controller interfaces and bus architectures. Modern active memory expansion solutions require advanced memory management units and high-speed interconnects that may not be present in older systems. The mismatch between new memory expansion technologies and existing hardware platforms often necessitates costly infrastructure upgrades or the implementation of complex adapter solutions that can introduce additional latency.

Software integration complexities arise from the need to modify existing applications and operating systems to effectively utilize expanded memory resources. Real-time analysis applications must be redesigned to recognize and leverage dynamically allocated memory pools, requiring significant changes to memory management routines and data structure implementations. This process becomes particularly challenging when dealing with proprietary software systems where source code modifications are not feasible.

Cross-platform compatibility issues emerge when organizations operate heterogeneous computing environments spanning different operating systems, processor architectures, and virtualization platforms. Active memory expansion solutions must maintain consistent performance characteristics across diverse system configurations while ensuring seamless interoperability between different technology stacks. The complexity increases exponentially in cloud-hybrid environments where workloads may migrate between on-premises and cloud-based infrastructure.

API standardization remains a critical challenge as different vendors implement proprietary interfaces for memory expansion control and monitoring. The absence of universal standards complicates integration efforts and creates vendor lock-in scenarios that limit organizational flexibility. Additionally, security considerations become paramount when integrating active memory systems, as expanded attack surfaces require comprehensive security protocols that must be compatible with existing cybersecurity frameworks while maintaining real-time performance requirements.
