Comparing Processing Capabilities of Machine Vision Systems

APR 3, 2026 · 9 MIN READ

Machine Vision Processing Evolution and Performance Goals

Machine vision technology has undergone remarkable transformation since its inception in the 1960s, evolving from simple binary image processing systems to sophisticated AI-powered visual recognition platforms. The early systems were primarily designed for basic quality control tasks in manufacturing environments, utilizing rudimentary pattern matching algorithms and limited computational resources. These foundational systems established the groundwork for what would become one of the most rapidly advancing fields in industrial automation.

The evolution trajectory of machine vision processing has been characterized by exponential improvements in computational speed, accuracy, and versatility. From the initial frame rates of 30 frames per second with basic edge detection capabilities, modern systems now achieve processing speeds exceeding 1000 frames per second while simultaneously performing complex multi-object recognition, classification, and tracking tasks. This dramatic enhancement reflects the convergence of advanced semiconductor technology, optimized algorithms, and specialized processing architectures.

Contemporary machine vision systems demonstrate unprecedented processing capabilities through the integration of deep learning frameworks and parallel computing architectures. Graphics Processing Units (GPUs) and specialized Vision Processing Units (VPUs) have revolutionized the field by enabling real-time execution of computationally intensive neural networks. These advancements have expanded application domains from traditional manufacturing inspection to autonomous vehicles, medical imaging, and augmented reality systems.

The performance goals driving current machine vision development focus on achieving human-level visual perception while maintaining industrial-grade reliability and speed. Key metrics include sub-millisecond latency for critical applications, 99.9% accuracy rates in object detection and classification tasks, and the ability to process multiple high-resolution video streams simultaneously. Additionally, energy efficiency has emerged as a crucial performance parameter, particularly for edge computing applications where power consumption directly impacts system viability.

Future performance targets emphasize the development of adaptive processing capabilities that can dynamically adjust computational resources based on scene complexity and application requirements. The integration of neuromorphic computing principles and event-driven processing architectures represents the next frontier in machine vision evolution, promising to deliver unprecedented efficiency and real-time responsiveness for increasingly complex visual analysis tasks.

Market Demand for Advanced Machine Vision Processing Systems

The global machine vision market is experiencing unprecedented growth driven by the increasing demand for automation across manufacturing, automotive, electronics, and pharmaceutical industries. Organizations are seeking advanced processing capabilities to handle complex visual inspection tasks, quality control processes, and real-time decision-making applications that traditional systems cannot adequately address.

Manufacturing sectors are particularly driving demand for high-performance machine vision systems capable of processing multiple data streams simultaneously. The rise of Industry 4.0 initiatives has created substantial market pressure for vision systems that can integrate seamlessly with existing production lines while delivering enhanced processing speeds and accuracy. Companies require systems that can handle diverse imaging modalities, from standard RGB cameras to hyperspectral and thermal imaging devices.

The automotive industry represents a significant growth segment, with autonomous vehicle development and advanced driver assistance systems requiring sophisticated real-time image processing capabilities. These applications demand vision systems that can process high-resolution video streams at extremely low latencies while maintaining exceptional reliability under varying environmental conditions.

Electronics manufacturing has emerged as another key market driver, where miniaturization trends necessitate vision systems capable of detecting microscopic defects and performing precise measurements on increasingly complex components. The semiconductor industry specifically requires processing capabilities that can handle wafer-level inspection tasks with nanometer-scale precision.

Healthcare and medical device sectors are expanding their adoption of machine vision technologies for diagnostic imaging, surgical guidance, and pharmaceutical quality assurance. These applications require specialized processing capabilities that can handle medical imaging standards while ensuring regulatory compliance and patient safety requirements.

The food and beverage industry is increasingly implementing advanced vision systems for contamination detection, packaging verification, and nutritional analysis. These applications require processing systems capable of handling multiple inspection criteria simultaneously while maintaining high throughput rates essential for commercial food production.

Emerging applications in robotics, logistics, and smart city infrastructure are creating new market segments that demand versatile processing architectures capable of adapting to diverse operational requirements. The convergence of artificial intelligence with machine vision is particularly driving demand for systems with enhanced computational capabilities and flexible processing frameworks.

Current Processing Capabilities and Performance Bottlenecks

Modern machine vision systems demonstrate varying processing capabilities across different architectural implementations. CPU-based systems typically achieve 30-100 frames per second (FPS) on standard-resolution images, while GPU-accelerated platforms can reach 200-500 FPS for similar workloads. FPGA-based solutions offer deterministic processing with latencies as low as 1-5 milliseconds, making them suitable for real-time industrial applications. Edge computing devices with dedicated AI accelerators, such as the Intel Movidius or NVIDIA Jetson series, provide 50-200 FPS within a power budget of roughly 10-30 watts.

Processing performance varies significantly based on algorithm complexity and image resolution. Basic feature detection algorithms like edge detection or blob analysis can process 4K images at 60+ FPS on mid-range hardware. However, complex deep learning models for object recognition or semantic segmentation may reduce throughput to 5-15 FPS on the same systems. Multi-threading and parallel processing techniques can improve performance by 2-4x, though diminishing returns occur beyond optimal thread counts.
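The diminishing returns from adding threads can be illustrated with Amdahl's law, which the text does not name but which is the standard model for this effect. The sketch below assumes a hypothetical pipeline in which 80% of the per-frame work can run in parallel; that fraction is an illustration, not a measurement.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Upper bound on speedup when only part of the work parallelizes."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if workers < 1:
        raise ValueError("workers must be >= 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    # Assumed value: 80% of per-frame work is parallelizable.
    for n in (1, 2, 4, 8, 16):
        print(f"{n:>2} threads -> {amdahl_speedup(0.8, n):.2f}x")
```

Under this assumption the bound is 2.5x at 4 threads and 4x at 16 threads, and it can never exceed 5x however many threads are added, which is consistent with the 2-4x range quoted above.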

Memory bandwidth emerges as a critical bottleneck in high-throughput applications. Systems processing high-resolution images or video streams often encounter limitations when transferring data between memory subsystems and processing units. DDR4 memory systems with 25-50 GB/s bandwidth may saturate when handling multiple 4K video streams simultaneously. This constraint becomes particularly pronounced in multi-camera setups or applications requiring real-time processing of large image datasets.
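A rough budget makes the saturation point concrete. The sketch below estimates the memory traffic of an uncompressed stream and how many such streams fit in a given bandwidth. The number of times each frame crosses the memory bus (`passes`) and the share of peak bandwidth that is usable in practice (`usable_fraction`) are assumptions chosen for illustration.

```python
def stream_bandwidth_gb_s(width, height, bytes_per_pixel, fps, passes=1):
    """Memory traffic in GB/s (10^9 bytes) for one uncompressed video stream.

    `passes` counts how often each frame crosses the memory bus, for
    example one write from capture plus one read per pipeline stage.
    """
    return width * height * bytes_per_pixel * fps * passes / 1e9


def max_streams(memory_bandwidth_gb_s, per_stream_gb_s, usable_fraction=0.6):
    """Streams that fit if only part of the peak bandwidth is usable (assumed)."""
    return int(memory_bandwidth_gb_s * usable_fraction // per_stream_gb_s)


if __name__ == "__main__":
    # 4K RGB at 60 FPS, assumed to be touched four times per frame.
    per_stream = stream_bandwidth_gb_s(3840, 2160, 3, 60, passes=4)
    print(f"per stream: {per_stream:.2f} GB/s")
    for peak in (25, 50):
        print(f"{peak} GB/s memory -> {max_streams(peak, per_stream)} streams")
```

A single pass over a 4K RGB stream at 60 FPS costs about 1.5 GB/s, so with four passes per frame the assumed budget leaves room for only two streams at 25 GB/s and five at 50 GB/s.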

Computational bottlenecks manifest differently across processing architectures. CPU-based systems face limitations in parallel processing of pixel-level operations, while GPU implementations may encounter memory allocation overhead when switching between different algorithm types. FPGA solutions, despite offering low latency, often struggle with algorithm flexibility and require significant development time for optimization.

Thermal management presents another significant constraint, particularly in embedded and industrial environments. High-performance processors generate substantial heat during intensive image processing tasks, leading to thermal throttling that can reduce performance by 20-40% under sustained loads. This limitation is especially critical in compact form factors where cooling solutions are restricted.

Network bandwidth and data transmission delays create additional bottlenecks in distributed vision systems. Gigabit Ethernet connections may become saturated when transmitting uncompressed high-resolution images, while wireless connections introduce variable latency that can disrupt real-time processing requirements. These limitations necessitate careful consideration of data compression and transmission protocols in system design.
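The Gigabit Ethernet limit can be checked with simple arithmetic. This sketch computes the highest uncompressed frame rate a link can carry; the 90% `efficiency` factor is an assumed allowance for protocol overhead, not a measured figure.

```python
def max_fps_on_link(width, height, bits_per_pixel, link_mbit_s, efficiency=0.9):
    """Highest uncompressed frame rate a network link can carry.

    `efficiency` is an assumed allowance for protocol overhead.
    """
    frame_bits = width * height * bits_per_pixel
    usable_bits_per_s = link_mbit_s * 1_000_000 * efficiency
    return usable_bits_per_s / frame_bits


if __name__ == "__main__":
    print(f"1080p mono 8-bit: {max_fps_on_link(1920, 1080, 8, 1000):.1f} FPS")
    print(f"4K RGB 24-bit:    {max_fps_on_link(3840, 2160, 24, 1000):.1f} FPS")
```

With these assumptions a 1 Gbit/s link carries roughly 54 FPS of 8-bit monochrome 1080p video but fewer than 5 FPS of uncompressed 24-bit 4K video, which is why compression or a faster interface becomes necessary at high resolutions.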

Mainstream Processing Architectures and Implementation Methods

01 Real-time image processing and analysis

Machine vision systems incorporate advanced processing capabilities for real-time image acquisition, analysis, and interpretation. These systems utilize high-speed processors and specialized algorithms to capture visual data and extract meaningful information instantly. The processing architecture enables simultaneous handling of multiple image streams, feature detection, pattern recognition, and object identification with minimal latency. Enhanced computational power allows for complex image transformations, filtering operations, and data extraction to support automated decision-making processes.

- Multi-sensor fusion and data integration: Advanced machine vision systems combine data from multiple sensors and imaging modalities to create comprehensive scene understanding. The processing capabilities include synchronization of various input sources, calibration of different sensor types, and fusion of heterogeneous data streams. This multi-modal approach enhances system robustness, provides redundancy for critical applications, and enables three-dimensional reconstruction and spatial mapping. The integrated processing framework handles diverse data formats and coordinates information from cameras, depth sensors, thermal imaging, and other sensing technologies.

- Edge computing and distributed processing: Machine vision systems increasingly utilize edge computing architectures to distribute processing tasks across multiple nodes. This approach reduces latency by performing computations closer to data sources, minimizes bandwidth requirements for data transmission, and enables scalable system designs. The distributed processing framework supports parallel computation, load balancing, and efficient resource utilization. Edge-based processing capabilities allow for autonomous operation in environments with limited connectivity while maintaining high performance and responsiveness.

- Adaptive processing and dynamic optimization: Contemporary machine vision systems feature adaptive processing capabilities that dynamically adjust computational resources and algorithms based on operating conditions. These systems monitor performance metrics, environmental factors, and task requirements to optimize processing parameters in real-time. The adaptive framework includes automatic exposure control, dynamic resolution adjustment, computational load management, and algorithm selection based on scene complexity. This flexibility ensures consistent performance across varying conditions while maximizing efficiency and minimizing power consumption.
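Dynamic resolution adjustment, one of the adaptive mechanisms listed above, can be sketched as a small feedback controller. The class name, thresholds, and step size below are hypothetical choices for illustration and do not describe any specific product.

```python
from dataclasses import dataclass


@dataclass
class AdaptiveScaler:
    """Hypothetical controller that trades input resolution for frame time."""
    budget_ms: float          # target per-frame processing time
    scale: float = 1.0        # current fraction of full resolution per axis
    min_scale: float = 0.25   # never shrink below a quarter of full size
    step: float = 0.125       # how much to change the scale per update
    headroom: float = 0.7     # scale up only when well under the budget

    def update(self, measured_ms: float) -> float:
        """Adjust the scale from the last frame's measured processing time."""
        if measured_ms > self.budget_ms:
            self.scale = max(self.min_scale, self.scale - self.step)
        elif measured_ms < self.headroom * self.budget_ms:
            self.scale = min(1.0, self.scale + self.step)
        return self.scale


if __name__ == "__main__":
    scaler = AdaptiveScaler(budget_ms=10.0)
    for frame_time in (14.0, 12.0, 9.0, 5.0):
        print(frame_time, "->", scaler.update(frame_time))
```

With a 10 ms budget, frame times of 14, 12, 9, and 5 ms move the scale to 0.875, 0.75, 0.75, and 0.875 in turn. The gap between the budget and the headroom threshold keeps the controller from oscillating when the load sits near the limit.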

02 Deep learning and neural network integration

Modern machine vision systems leverage deep learning frameworks and neural network architectures to enhance processing capabilities. These systems employ convolutional neural networks, recurrent neural networks, and other artificial intelligence models to improve accuracy in object detection, classification, and segmentation tasks. The integration of machine learning algorithms enables adaptive learning from training data, continuous improvement of recognition performance, and handling of complex visual scenarios that traditional rule-based systems cannot address effectively.

03 Multi-sensor fusion and data integration

Advanced machine vision systems combine data from multiple sensors and imaging modalities to create comprehensive scene understanding. The processing capabilities include synchronization of various input sources, calibration of different sensor types, and fusion of heterogeneous data streams. These systems can integrate information from cameras, depth sensors, thermal imaging devices, and other detection equipment to generate enriched representations of the environment. The multi-modal processing enhances robustness, accuracy, and reliability of visual perception in diverse operating conditions.

04 Edge computing and distributed processing

Machine vision systems increasingly utilize edge computing architectures to distribute processing tasks across multiple computational nodes. This approach enables local data processing at or near the point of image capture, reducing latency and bandwidth requirements. The distributed processing framework allows for scalable system designs, parallel computation of vision algorithms, and efficient resource utilization. Edge-based processing capabilities support real-time applications, reduce dependency on cloud connectivity, and enhance system responsiveness for time-critical operations.

05 3D reconstruction and spatial analysis

Machine vision systems incorporate sophisticated processing capabilities for three-dimensional scene reconstruction and spatial analysis. These systems employ stereo vision, structured light, time-of-flight, or photogrammetry techniques to generate depth information and volumetric representations. The processing algorithms handle point cloud generation, surface reconstruction, dimensional measurement, and geometric analysis. Advanced computational methods enable accurate spatial localization, pose estimation, and understanding of three-dimensional relationships between objects in the scene.

Leading Companies in Machine Vision Processing Solutions

The market for machine vision processing is growing rapidly, driven by increasing automation demands across the manufacturing, automotive, and healthcare sectors. The industry is mature: established leaders such as Cognex Corp. and Banner Engineering Corp. dominate traditional industrial applications, while technology giants such as Samsung Electronics, Sony Group Corp., and Canon Inc. leverage their imaging expertise to advance processing capabilities. The range of participants reflects that maturity, from specialized automation companies like Zebra Technologies and Datalogic IP Tech to semiconductor leaders including Texas Instruments and comprehensive solution providers like IBM and NEC Corp. Academic institutions such as Tsinghua University, Peking University, and the University of Michigan contribute foundational research, while emerging players like LeapMind focus on AI-optimized processing architectures, indicating continued innovation potential in edge computing and real-time processing applications.

Cognex Corp.

Technical Solution: Cognex develops advanced machine vision systems with proprietary PatMax pattern matching technology and VisionPro software suite. Their systems utilize deep learning algorithms for defect detection, optical character recognition, and assembly verification with processing speeds up to 1000 parts per minute. The company's In-Sight vision systems integrate high-resolution cameras with embedded processors capable of handling complex image analysis tasks in real-time manufacturing environments.

Strengths: Industry-leading pattern matching accuracy and robust software ecosystem. Weaknesses: Higher cost compared to competitors and complex setup requirements.

International Business Machines Corp.

Technical Solution: IBM's Watson Machine Learning platform provides cloud-based machine vision processing with scalable compute resources and pre-trained models for industrial applications. Their system supports distributed processing across multiple nodes, handling up to 10,000 concurrent image analysis tasks with automatic load balancing. The platform integrates computer vision APIs with enterprise databases, enabling real-time decision making and predictive maintenance capabilities for manufacturing operations.

Strengths: Highly scalable cloud infrastructure and comprehensive enterprise integration. Weaknesses: Dependency on internet connectivity and subscription-based pricing model.

Key Innovations in High-Performance Vision Processing Units

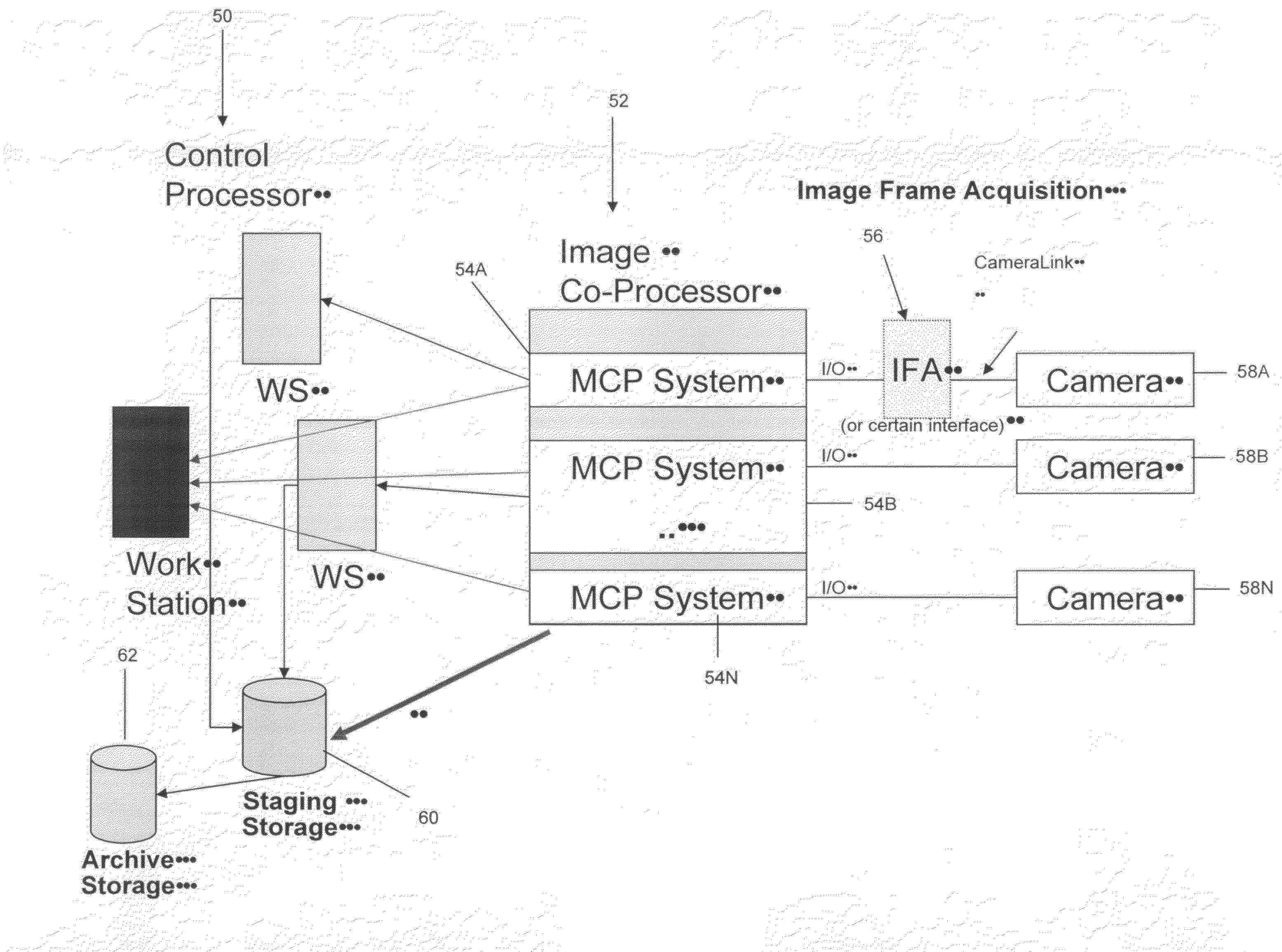

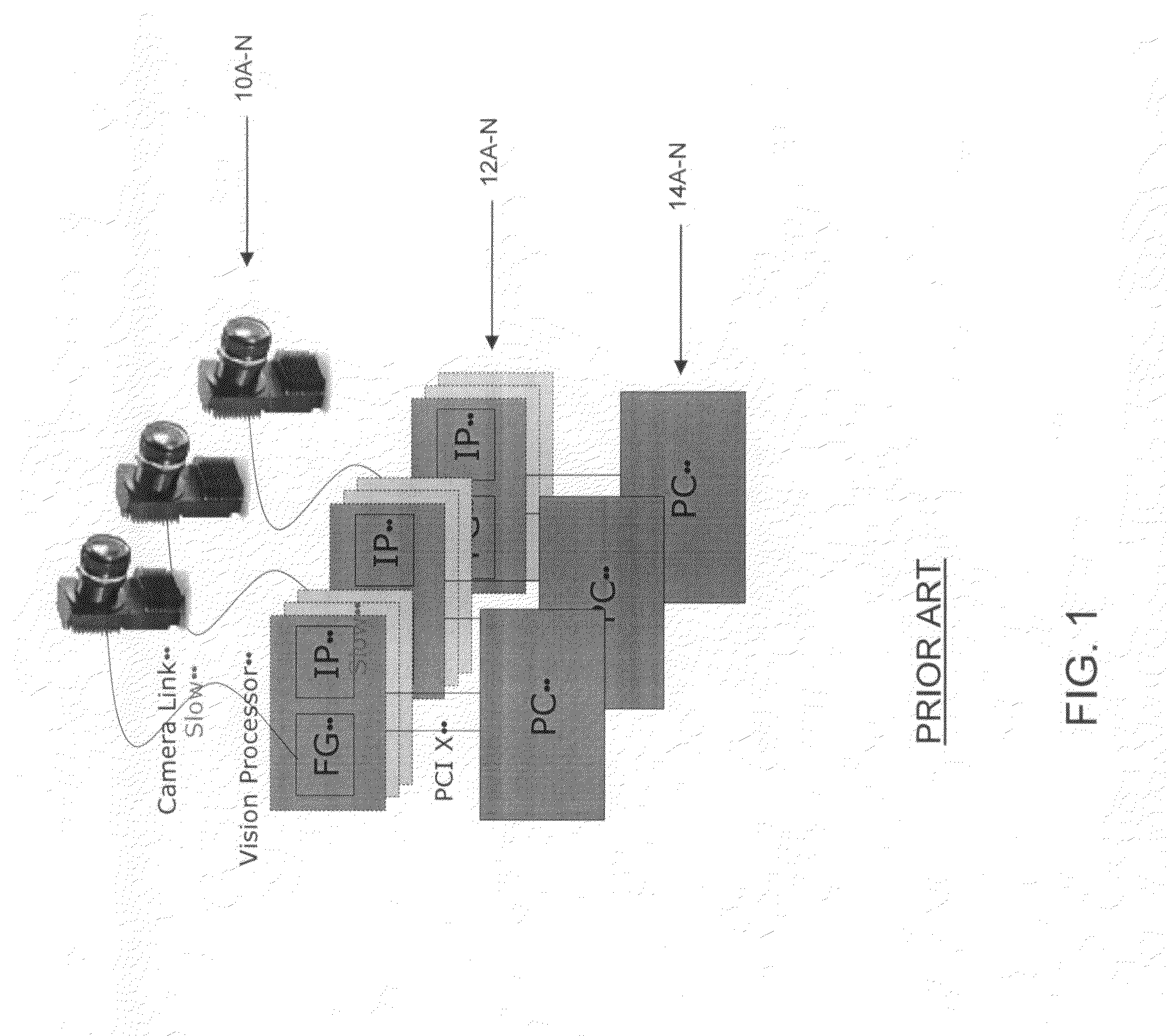

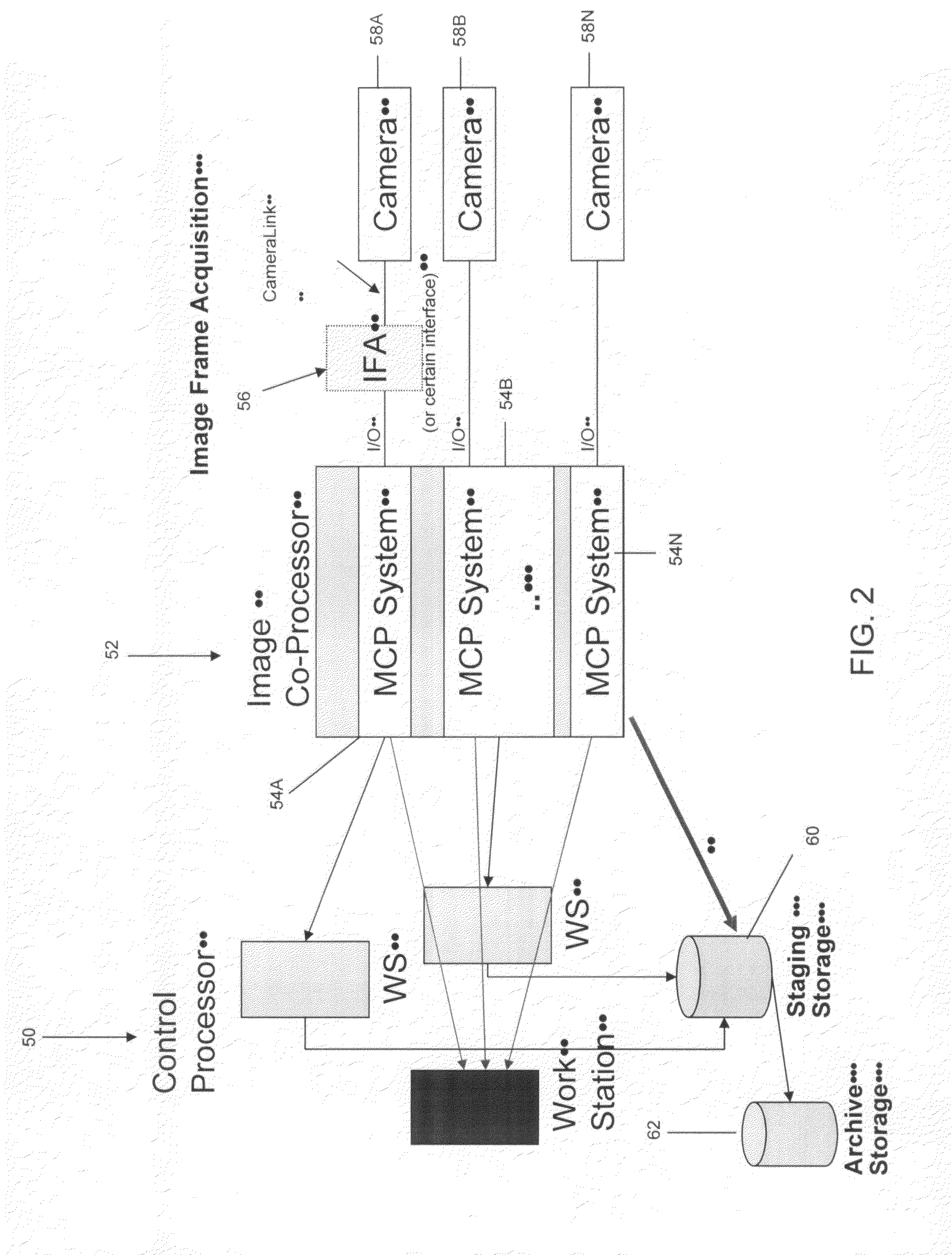

System and method of distributed processing for machine-vision analysis

Patent · Active · US20150339812A1

Innovation

- A distributed processing system that utilizes multiple computing devices connected by data networks to offload machine-vision algorithms from a single processor to a worker, allowing for parallel processing and load balancing by selecting algorithms based on processing time estimates and rankings to meet production timing criteria.
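The idea of choosing which algorithms to offload from time estimates and rankings can be sketched as a greedy planner. This is an illustrative reading of the summary above, not the patented method: the `Algorithm` fields, the ranking convention, and the example timings are all assumptions.

```python
from typing import NamedTuple


class Algorithm(NamedTuple):
    name: str
    est_ms: float   # estimated processing time on the local controller
    rank: int       # assumed convention: higher rank = offload sooner


def plan_offload(algorithms, cycle_budget_ms):
    """Move the highest-ranked algorithms to a worker until local time fits."""
    local = sorted(algorithms, key=lambda a: a.rank)
    offloaded = []
    while local and sum(a.est_ms for a in local) > cycle_budget_ms:
        offloaded.append(local.pop())   # last item has the highest rank
    return local, offloaded


if __name__ == "__main__":
    # Hypothetical inspection steps with made-up time estimates.
    steps = [
        Algorithm("read_barcode", 5.0, 1),
        Algorithm("measure_gap", 12.0, 2),
        Algorithm("surface_defects", 40.0, 3),
    ]
    local, offloaded = plan_offload(steps, cycle_budget_ms=20.0)
    print("local:", [a.name for a in local])
    print("worker:", [a.name for a in offloaded])
```

In this example the 40 ms defect check is sent to the worker, leaving 17 ms of local work inside the 20 ms cycle budget. A real system would also have to account for transfer time to the worker, which this sketch ignores.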

Heterogeneous image processing system

Patent · Active · US20080260296A1

Innovation

- A multi-core processor system is introduced, allowing for distributed and fine-grained execution of image processing applications, utilizing a management processor, general-purpose processors, special-purpose accelerators, and a network-connected infrastructure to offload image processing workloads and reuse existing infrastructure.

Standardization and Benchmarking Frameworks for Vision Systems

The establishment of standardized benchmarking frameworks for machine vision systems has become increasingly critical as the technology proliferates across diverse industrial applications. Current evaluation methodologies lack consistency, making it challenging to objectively compare processing capabilities across different vision platforms and architectures.

Several international organizations have initiated efforts to develop comprehensive benchmarking standards. The IEEE Computer Society has proposed standardized metrics for evaluating vision system performance, including processing speed, accuracy rates, and resource utilization efficiency. Similarly, ISO and IEC have published ISO/IEC 23053, a framework for describing AI systems that use machine learning; it supplies common terminology for such systems rather than vision-specific performance tests.

Existing benchmarking frameworks primarily focus on algorithm-level performance metrics such as detection accuracy, classification precision, and inference latency. However, these approaches often fail to capture system-level characteristics including memory bandwidth utilization, power consumption, and thermal management capabilities. MLPerf, the benchmark suite maintained by MLCommons, attempts to address these limitations: its inference benchmarks include vision workloads such as image classification and object detection, and results are reported for specific hardware configurations.
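A minimal harness shows what the algorithm-level metrics named here look like in practice. It is a sketch, not part of any standard: the function name and the reported fields are assumptions, and it measures only wall-clock latency and throughput, not power or memory bandwidth.

```python
import statistics
import time


def benchmark(fn, inputs, warmup=3):
    """Time `fn` on each input and summarize latency and throughput.

    `inputs` must be a list with at least two items.
    """
    for item in inputs[:warmup]:
        fn(item)                       # warm caches before timing
    latencies_ms = []
    start = time.perf_counter()
    for item in inputs:
        t0 = time.perf_counter()
        fn(item)
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed = time.perf_counter() - start
    # 99 cut points; the last one is the 99th percentile.
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {
        "frames": len(inputs),
        "throughput_fps": len(inputs) / elapsed,
        "p50_ms": statistics.median(latencies_ms),
        "p99_ms": cuts[98],
    }


if __name__ == "__main__":
    # Stand-in workload: summing a fake "frame" of 1000 pixel values.
    frames = [list(range(1000)) for _ in range(50)]
    print(benchmark(sum, frames))
```

Reporting a tail percentile alongside the median matters for real-time systems, because a pipeline with a good average can still miss deadlines on its slowest frames.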

Industry-specific standardization efforts are emerging across various sectors. The automotive industry uses the ASAM OpenX standards, such as OpenDRIVE and OpenSCENARIO, to describe simulation scenarios against which perception systems for autonomous driving can be tested, while manufacturing sectors are adopting MTConnect, a data-exchange standard for shop-floor equipment, for vision system integration and performance monitoring. These domain-specific frameworks provide more relevant evaluation criteria but create fragmentation in overall standardization efforts.

Current challenges in standardization include the rapid evolution of hardware architectures, from traditional CPUs to specialized vision processing units and neuromorphic chips. Benchmarking frameworks struggle to maintain relevance as new processing paradigms emerge, requiring continuous updates to evaluation methodologies and performance metrics.

Future standardization initiatives must address edge computing scenarios, real-time processing requirements, and multi-modal sensor fusion capabilities. The development of adaptive benchmarking frameworks that can accommodate emerging technologies while maintaining backward compatibility represents a critical research direction for enabling fair and comprehensive comparison of machine vision system processing capabilities.

Edge Computing Integration in Machine Vision Processing

Edge computing integration represents a paradigmatic shift in machine vision processing architectures, fundamentally altering how computational resources are distributed and utilized across vision systems. This integration addresses the growing demand for real-time processing capabilities while reducing dependency on centralized cloud infrastructure, particularly crucial for applications requiring immediate response times and enhanced data privacy.

The convergence of edge computing with machine vision systems enables distributed processing architectures where computational tasks are performed closer to data sources. Modern edge devices equipped with specialized processors, including GPUs, TPUs, and dedicated AI accelerators, can execute complex vision algorithms locally. This distributed approach significantly reduces latency from hundreds of milliseconds in cloud-based systems to single-digit milliseconds in edge implementations.

Processing capability distribution across edge nodes creates hierarchical computing structures where different complexity levels of vision tasks are allocated based on available computational resources. Simple preprocessing operations such as image filtering and basic feature extraction occur at sensor-level edge devices, while more complex tasks like object recognition and scene understanding are handled by more powerful edge computing units.
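The hierarchical allocation described here can be sketched as a first-fit placement from the sensor outward. The tier names, capacities, and per-task compute figures are invented for illustration, and real schedulers would weigh latency and bandwidth as well.

```python
def place_tasks(tasks, tiers):
    """Assign each task to the first (closest-to-sensor) tier that can run it.

    tasks: dict of task name -> required compute in GOPS (assumed figures)
    tiers: list of (tier name, available GOPS), ordered from sensor outward
    """
    remaining = {name: capacity for name, capacity in tiers}
    placement = {}
    for task, cost in tasks.items():
        for name, _ in tiers:
            if remaining[name] >= cost:
                remaining[name] -= cost
                placement[task] = name
                break
        else:
            placement[task] = None    # no tier has enough headroom
    return placement


if __name__ == "__main__":
    tiers = [("smart_camera", 5), ("edge_gateway", 100)]
    tasks = {
        "noise_filter": 1,
        "edge_extraction": 2,
        "object_detection": 40,
        "scene_understanding": 55,
    }
    for task, tier in place_tasks(tasks, tiers).items():
        print(f"{task:>20} -> {tier}")
```

With these made-up numbers the two preprocessing steps stay on the camera, while object detection and scene understanding land on the more powerful gateway, mirroring the split described in the text.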

Network bandwidth optimization emerges as a critical advantage of edge integration, as raw image data processing occurs locally, transmitting only processed results or compressed representations to central systems. This approach reduces network traffic by up to 90% compared to traditional cloud-centric architectures, enabling deployment in bandwidth-constrained environments.

Real-time decision-making capabilities are enhanced through edge computing integration, particularly beneficial for autonomous systems, industrial automation, and surveillance applications. Local processing eliminates network round-trip delays, enabling immediate responses to detected events or anomalies within the visual field.

Scalability considerations in edge-integrated machine vision systems involve dynamic load balancing across distributed computing nodes. Advanced implementations utilize federated learning approaches where edge devices collaboratively improve vision models while maintaining data locality, creating self-improving distributed intelligence networks that enhance overall system performance without compromising individual node autonomy.
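The text does not say which federated learning scheme is meant. One common choice is federated averaging, in which a coordinator combines per-device model parameters weighted by how much local data each device trained on. The sketch below assumes that scheme and represents each model as a flat list of floats.

```python
def federated_average(updates):
    """Weighted average of per-device parameter vectors.

    updates: list of (num_local_samples, parameters) pairs, where
    parameters is a list of floats of equal length on every device.
    Only parameters are shared; the raw images stay on each device.
    """
    total = sum(n for n, _ in updates)
    if total == 0:
        raise ValueError("no training samples reported")
    size = len(updates[0][1])
    averaged = [0.0] * size
    for n, params in updates:
        if len(params) != size:
            raise ValueError("parameter vectors must have equal length")
        for i, value in enumerate(params):
            averaged[i] += value * n / total
    return averaged


if __name__ == "__main__":
    # Two hypothetical edge devices with 100 and 300 local samples.
    print(federated_average([(100, [1.0, 0.0]), (300, [0.0, 4.0])]))
```

In the example the device with three times as much data pulls the result three times as hard, giving `[0.25, 3.0]`.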
