Optimize Algorithm Fusion for Machine Vision System Cohesion

APR 3, 2026 · 9 MIN READ

Algorithm Fusion Background and Vision System Goals

Machine vision systems have evolved from simple image capture devices to sophisticated multi-algorithmic platforms capable of real-time decision making across diverse industrial applications. The historical development traces back to early pattern recognition systems in the 1960s, progressing through rule-based approaches in the 1980s, statistical methods in the 1990s, and culminating in today's deep learning-powered architectures. This evolution has consistently demonstrated that isolated algorithms, while individually powerful, often fail to address the complex, multi-faceted challenges encountered in real-world vision applications.

The contemporary landscape of machine vision demands unprecedented levels of accuracy, speed, and adaptability. Modern applications spanning autonomous vehicles, medical imaging, industrial quality control, and robotics require systems that can simultaneously handle multiple visual tasks such as object detection, classification, tracking, and scene understanding. Single-algorithm approaches frequently exhibit limitations in robustness, generalization capability, and computational efficiency when confronted with varying environmental conditions, lighting changes, or diverse object characteristics.

Algorithm fusion represents a paradigm shift toward creating synergistic combinations of complementary computational approaches. This methodology leverages the strengths of different algorithmic families while mitigating their individual weaknesses. For instance, combining traditional computer vision techniques with deep learning models can provide both interpretability and high-performance feature extraction. Similarly, integrating multiple neural network architectures can enhance system resilience and accuracy across varied operational scenarios.

The primary technical objectives driving algorithm fusion optimization include achieving superior accuracy through ensemble learning effects, reducing computational overhead through intelligent load distribution, and enhancing system reliability through redundancy and cross-validation mechanisms. Additionally, fusion approaches aim to improve real-time performance by parallelizing complementary algorithms and optimizing resource utilization across available hardware platforms.

Vision system cohesion represents the ultimate goal of creating seamlessly integrated platforms where multiple algorithms operate as unified entities rather than disparate components. This cohesion encompasses data flow optimization, synchronized processing pipelines, and intelligent decision-making frameworks that can dynamically select or combine algorithmic outputs based on contextual requirements and performance metrics.
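
One way to picture "dynamically select or combine" is a two-stage cascade, where a cheap classical cue decides whether an expensive learned model needs to run at all. The sketch below is only illustrative: `edge_energy`, `cascade_inspect`, the `deep_model` callable, and the `energy_threshold` value are hypothetical names and settings, not part of any specific product.

```python
from typing import Callable, Dict, Sequence

Image = Sequence[Sequence[float]]  # grayscale rows with intensities in [0, 1]


def edge_energy(image: Image) -> float:
    """Mean absolute horizontal gradient: a cheap, interpretable classical cue."""
    total, count = 0.0, 0
    for row in image:
        for left, right in zip(row, row[1:]):
            total += abs(right - left)
            count += 1
    return total / count if count else 0.0


def cascade_inspect(image: Image,
                    deep_model: Callable[[Image], float],
                    energy_threshold: float = 0.05) -> Dict[str, object]:
    """Run the deep model only when the classical cue finds enough structure.

    energy_threshold is an assumed tuning value, not a recommended setting.
    """
    energy = edge_energy(image)
    if energy < energy_threshold:
        # Featureless frame: skip the expensive stage entirely.
        return {"defect_score": 0.0, "stage": "classical", "edge_energy": energy}
    return {"defect_score": deep_model(image), "stage": "deep", "edge_energy": energy}
```

The design choice here is that the classical stage stays inspectable (a single gradient statistic) while the learned stage is reserved for frames that warrant it, which is one route to the reduced computational overhead mentioned above.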

Market Demand for Advanced Machine Vision Solutions

The global machine vision market is experiencing unprecedented growth driven by the increasing demand for automation, quality control, and intelligent manufacturing across diverse industries. Manufacturing sectors, particularly automotive, electronics, and pharmaceuticals, are actively seeking advanced machine vision solutions to enhance production efficiency and maintain stringent quality standards. The integration of artificial intelligence and machine learning algorithms into vision systems has become a critical requirement for modern industrial applications.

Automotive manufacturers represent one of the largest consumer segments for advanced machine vision technologies. These companies require sophisticated algorithm fusion capabilities to handle complex inspection tasks, including surface defect detection, dimensional measurement, and assembly verification. The demand extends beyond traditional quality control to encompass autonomous vehicle development, where multiple vision algorithms must work cohesively to process real-time environmental data.

The electronics industry demonstrates substantial appetite for optimized algorithm fusion in machine vision systems. Component miniaturization and increasing complexity of electronic devices necessitate highly precise inspection capabilities that can seamlessly integrate multiple detection algorithms. Semiconductor fabrication facilities particularly value systems that can coordinate various inspection methodologies while maintaining high throughput rates.

Healthcare and pharmaceutical sectors are emerging as significant growth drivers for advanced machine vision solutions. These industries require systems capable of fusing multiple algorithmic approaches for drug inspection, medical device quality control, and diagnostic imaging applications. The regulatory compliance requirements in these sectors demand robust and reliable algorithm integration that ensures consistent performance across different operational conditions.

Food and beverage industries increasingly adopt machine vision systems with advanced algorithm fusion capabilities to meet safety standards and consumer expectations. These applications require coordinated deployment of multiple detection algorithms for contamination identification, packaging integrity verification, and nutritional content analysis.

The logistics and e-commerce boom has created substantial demand for machine vision systems in warehouse automation and package sorting applications. These environments require algorithm fusion solutions that can handle diverse object recognition tasks while maintaining high processing speeds and accuracy rates across varying lighting conditions and package types.

Emerging applications in agriculture, construction, and security sectors are expanding the market scope for advanced machine vision solutions, creating new opportunities for algorithm fusion optimization technologies.

Current Challenges in Multi-Algorithm Integration

Multi-algorithm integration in machine vision systems faces significant architectural challenges that impede optimal performance and system cohesion. The primary obstacle lies in the heterogeneous nature of different algorithms, each designed with distinct computational paradigms, data structures, and processing requirements. Traditional computer vision algorithms such as edge detection, feature extraction, and pattern recognition operate on fundamentally different mathematical foundations, making seamless integration complex and resource-intensive.

Data flow synchronization represents another critical challenge in multi-algorithm environments. Vision systems typically process high-frequency image streams where algorithms must coordinate their execution timing to maintain real-time performance. Misaligned data pipelines create bottlenecks, leading to frame drops, processing delays, and inconsistent output quality. The temporal dependencies between sequential algorithms further complicate this synchronization, as downstream processes rely on timely upstream results.
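
As a rough illustration of aligning two algorithm outputs that arrive at different rates, the following sketch pairs each result from a primary stream with the nearest-in-time result from a secondary stream, and leaves a gap when nothing falls within a tolerance. The function name `align_by_timestamp` and the tolerance value are assumptions made for the example.

```python
from bisect import bisect_left
from typing import Any, List, Optional, Tuple

Stamped = Tuple[float, Any]  # (timestamp in seconds, payload)


def align_by_timestamp(primary: List[Stamped],
                       secondary: List[Stamped],
                       tolerance_s: float) -> List[Tuple[Any, Optional[Any]]]:
    """Pair each primary payload with the closest secondary payload in time.

    Both lists must be sorted by timestamp. A primary item with no secondary
    item within tolerance_s is paired with None instead of a stale result.
    """
    sec_times = [t for t, _ in secondary]
    pairs = []
    for t, payload in primary:
        i = bisect_left(sec_times, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(secondary)]
        best = min(candidates, key=lambda j: abs(sec_times[j] - t), default=None)
        if best is not None and abs(sec_times[best] - t) <= tolerance_s:
            pairs.append((payload, secondary[best][1]))
        else:
            pairs.append((payload, None))
    return pairs
```

Returning `None` rather than the last known value makes a missed synchronization explicit, so a downstream stage can decide whether to skip, interpolate, or wait.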

Memory management and resource allocation pose substantial technical hurdles in integrated systems. Different algorithms exhibit varying memory footprints and computational demands, creating dynamic resource contention scenarios. GPU memory fragmentation becomes particularly problematic when multiple algorithms compete for limited video memory, while CPU-GPU data transfer overhead significantly impacts overall system throughput.
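
A common mitigation for allocation churn is to preallocate frame buffers once and recycle them. The sketch below shows the idea for host memory only, using a hypothetical `FrameBufferPool` class; GPU memory would need the allocator of whichever framework is in use, which is not shown here.

```python
from typing import List, Optional


class FrameBufferPool:
    """Fixed set of preallocated buffers shared by all algorithms (illustrative)."""

    def __init__(self, buffer_count: int, buffer_bytes: int) -> None:
        self.buffer_bytes = buffer_bytes
        self._free: List[bytearray] = [bytearray(buffer_bytes) for _ in range(buffer_count)]

    def acquire(self) -> Optional[bytearray]:
        """Return a free buffer, or None when the pool is exhausted.

        Returning None lets the caller drop or defer a frame instead of
        allocating more memory under load.
        """
        return self._free.pop() if self._free else None

    def release(self, buffer: bytearray) -> None:
        """Hand a buffer back to the pool for reuse."""
        if len(buffer) != self.buffer_bytes:
            raise ValueError("buffer does not belong to this pool")
        self._free.append(buffer)

    @property
    def available(self) -> int:
        return len(self._free)
```

Because the pool never grows, total memory use is bounded by `buffer_count * buffer_bytes`, and contention shows up as an explicit `None` rather than as fragmentation.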

Algorithm compatibility and interface standardization remain persistent challenges across the industry. Legacy algorithms often lack standardized APIs, requiring extensive wrapper development and custom integration layers. Version compatibility issues arise when updating individual algorithms within integrated systems, potentially breaking existing functionality and requiring comprehensive system retesting.
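
The wrapper development described above often takes the form of an adapter that translates a legacy routine's output into one shared result type. The following is a minimal sketch under that assumption; `VisionResult`, `VisionAlgorithm`, `LegacyAdapter`, and `legacy_blob_check` are all hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable, Protocol


@dataclass(frozen=True)
class VisionResult:
    """Common output record shared by every wrapped algorithm (assumed schema)."""
    label: str
    confidence: float  # normalized to [0, 1]


class VisionAlgorithm(Protocol):
    """Interface the fusion layer depends on, instead of concrete algorithms."""
    name: str

    def process(self, frame: Any) -> VisionResult: ...


class LegacyAdapter:
    """Wraps a legacy callable and converts its raw output to VisionResult."""

    def __init__(self, name: str,
                 legacy_fn: Callable[[Any], Any],
                 translate: Callable[[Any], VisionResult]) -> None:
        self.name = name
        self._legacy_fn = legacy_fn
        self._translate = translate

    def process(self, frame: Any) -> VisionResult:
        return self._translate(self._legacy_fn(frame))


def legacy_blob_check(frame):
    """Stand-in for an old routine that returns (status_code, score_percent)."""
    return (1, 87.0) if sum(frame) > 2 else (0, 95.0)


blob_adapter = LegacyAdapter(
    "blob_check",
    legacy_blob_check,
    lambda raw: VisionResult("defect" if raw[0] == 1 else "ok", raw[1] / 100.0),
)
```

With this shape, updating or replacing a legacy algorithm only touches its `translate` function, which limits how much of the integrated system needs retesting.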

Performance optimization across heterogeneous algorithm combinations presents complex trade-off scenarios. Individual algorithms may perform optimally in isolation but exhibit degraded performance when integrated due to shared resource constraints and interdependencies. Load balancing becomes critical as different algorithms have varying computational complexities and execution times, making it difficult to achieve uniform system utilization.

Debugging and error handling in multi-algorithm systems introduce additional complexity layers. Identifying the source of errors becomes challenging when multiple algorithms interact, and traditional debugging tools often lack visibility into cross-algorithm dependencies. Error propagation through algorithm chains can cascade failures throughout the entire vision system, requiring sophisticated fault isolation mechanisms.
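
A simple form of fault isolation is to run each stage of an algorithm chain behind its own error boundary, record which stage failed, and substitute a declared fallback. The sketch below assumes a linear chain and hypothetical names (`run_chain`, `StageError`); real systems may need richer recovery policies.

```python
from typing import Any, Callable, List, NamedTuple, Tuple


class StageError(NamedTuple):
    stage: str
    error: str


# Each stage is (name, function, fallback). The fallback receives the same
# input the failed function received and must return a usable value.
Stage = Tuple[str, Callable[[Any], Any], Callable[[Any], Any]]


def run_chain(stages: List[Stage], data: Any) -> Tuple[Any, List[StageError]]:
    """Run stages in order, isolating failures to the stage that raised them."""
    errors: List[StageError] = []
    for name, fn, fallback in stages:
        try:
            data = fn(data)
        except Exception as exc:  # boundary: one stage must not take down the chain
            errors.append(StageError(name, repr(exc)))
            data = fallback(data)
    return data, errors
```

The returned error list names the failing stage directly, which addresses the attribution problem described above without requiring a debugger that understands cross-algorithm dependencies.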

Existing Multi-Algorithm Fusion Frameworks

01 Multi-algorithm fusion for data processing and analysis

Systems that integrate multiple algorithms to process and analyze data from various sources. These fusion systems combine different computational methods to improve accuracy and reliability of results. The approach enables comprehensive data interpretation by leveraging strengths of individual algorithms while compensating for their limitations.

- Multi-algorithm fusion architecture for system integration: Systems that integrate multiple algorithms through fusion architectures to enhance overall system cohesion and performance. These architectures enable different algorithms to work together seamlessly, combining their strengths to achieve better results than individual algorithms. The fusion approach allows for dynamic algorithm selection and weighted combination based on specific operational requirements and contexts.

- Data fusion techniques for algorithm coordination: Methods for coordinating multiple algorithms through data fusion techniques that improve system cohesion. These techniques involve combining data from different sources and processing it through various algorithms to generate unified outputs. The approach enhances decision-making accuracy and system reliability by leveraging complementary information from multiple algorithmic processes.

- Adaptive weighting mechanisms for algorithm fusion: Systems employing adaptive weighting mechanisms to dynamically adjust the contribution of different algorithms in a fused system. These mechanisms automatically optimize algorithm weights based on performance metrics, environmental conditions, or specific task requirements. The adaptive approach ensures optimal system cohesion by balancing algorithm contributions in real-time.

- Hierarchical fusion frameworks for modular algorithm integration: Hierarchical frameworks that organize algorithms in layered structures to achieve better system cohesion. These frameworks enable modular integration where algorithms at different levels perform specialized tasks while maintaining overall system coordination. The hierarchical approach facilitates scalability and maintainability of complex algorithm fusion systems.

- Performance optimization through algorithm fusion evaluation: Methods for evaluating and optimizing the performance of fused algorithm systems to enhance cohesion. These methods include metrics for measuring fusion effectiveness, techniques for identifying bottlenecks, and strategies for improving inter-algorithm communication. The optimization process ensures that the fused system operates more efficiently than its individual components.
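
To make "measuring fusion effectiveness" concrete, one simple metric is the accuracy gain of a fused prediction over the best single algorithm. The sketch below uses majority voting as the fusion rule purely as an example; `majority_vote`, `accuracy`, and `fusion_gain` are hypothetical helper names, and the labels in the usage note are made up.

```python
from collections import Counter
from typing import List, Sequence


def majority_vote(votes: Sequence[str]) -> str:
    """Most frequent label; ties go to the label that appeared first in votes."""
    return Counter(votes).most_common(1)[0][0]


def accuracy(predicted: Sequence[str], truth: Sequence[str]) -> float:
    return sum(p == t for p, t in zip(predicted, truth)) / len(truth)


def fusion_gain(per_algorithm: List[Sequence[str]], truth: Sequence[str]) -> float:
    """Fused accuracy minus the best individual accuracy.

    A positive value means fusion helped on this data; zero or negative
    means the extra algorithms added cost without benefit.
    """
    fused = [majority_vote(votes) for votes in zip(*per_algorithm)]
    best_single = max(accuracy(p, truth) for p in per_algorithm)
    return accuracy(fused, truth) - best_single
```

For instance, three algorithms that are each right on three of four samples, but wrong on different samples, yield a fused accuracy of 1.0 and a gain of 0.25, because their errors do not overlap.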

02 Sensor fusion and multi-modal data integration

Technologies that fuse data from multiple sensors or different modalities to create a unified representation. The systems process heterogeneous data streams and combine them using fusion algorithms to enhance perception and decision-making capabilities. This approach is particularly useful in applications requiring comprehensive environmental awareness.

03 Machine learning model ensemble and fusion

Methods for combining multiple machine learning models to improve prediction accuracy and robustness. These systems employ various fusion strategies to integrate outputs from different models, creating a more reliable and accurate final result. The ensemble approach reduces individual model biases and enhances overall system performance.

04 Distributed system architecture for algorithm integration

Architectural frameworks designed to support the integration and coordination of multiple algorithms across distributed computing environments. These systems provide mechanisms for algorithm communication, data sharing, and result aggregation. The architecture ensures scalability and efficient resource utilization while maintaining system cohesion.

05 Adaptive fusion strategies and dynamic algorithm selection

Systems that dynamically adjust fusion strategies based on runtime conditions and performance metrics. These approaches include mechanisms for selecting appropriate algorithms and adjusting their weights in the fusion process. The adaptive nature allows the system to optimize performance across varying operational scenarios and data characteristics.
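
One plausible way to adjust weights at runtime is to track a smoothed error per algorithm and weight each algorithm by the inverse of that error. The sketch below assumes a reference value is occasionally available (for example from a calibration target); the class name `AdaptiveFusion`, the smoothing factor, and the initial error of 1.0 are illustrative assumptions.

```python
from typing import Dict, Iterable


class AdaptiveFusion:
    """Inverse-error weighting with exponentially smoothed error estimates."""

    def __init__(self, names: Iterable[str], smoothing: float = 0.2,
                 eps: float = 1e-6) -> None:
        self.smoothing = smoothing
        self.eps = eps
        # Assumed starting point: every algorithm begins with the same error.
        self.errors: Dict[str, float] = {name: 1.0 for name in names}

    def weights(self) -> Dict[str, float]:
        """Normalized weights; lower recent error gives higher weight."""
        inverse = {n: 1.0 / (e + self.eps) for n, e in self.errors.items()}
        total = sum(inverse.values())
        return {n: v / total for n, v in inverse.items()}

    def fuse(self, estimates: Dict[str, float]) -> float:
        """Weighted average over the algorithms that reported this frame."""
        inverse = {n: 1.0 / (self.errors[n] + self.eps) for n in estimates}
        total = sum(inverse.values())
        return sum(inverse[n] * value for n, value in estimates.items()) / total

    def update(self, estimates: Dict[str, float], reference: float) -> None:
        """Blend the newest absolute error into each running error estimate."""
        for name, value in estimates.items():
            observed = abs(value - reference)
            self.errors[name] = ((1.0 - self.smoothing) * self.errors[name]
                                 + self.smoothing * observed)
```

A larger `smoothing` value reacts faster to changing conditions but makes the weights noisier, so the setting is a trade-off rather than a fixed recommendation.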

Leading Companies in Machine Vision Algorithm Development

The machine vision algorithm fusion landscape represents a rapidly evolving market in the growth phase, driven by increasing demand for intelligent automation across industries. Major technology leaders like NVIDIA, Samsung Electronics, and Qualcomm are advancing GPU-accelerated processing and mobile vision chips, while automotive giants including BMW, Toyota, and specialized firms like Motional and TORC Robotics push autonomous driving applications. Academic institutions such as Tsinghua University, Beihang University, and Xidian University contribute foundational research in computer vision algorithms. The technology demonstrates high maturity in established sectors like surveillance (Dahua Technology) and medical imaging (Siemens Medical, Brainlab), while emerging applications in robotics and defense (Helsing, US Air Force) show promising growth potential, creating a competitive ecosystem spanning hardware manufacturers, software developers, and research institutions.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung implements algorithm fusion optimization through their Exynos processors with integrated Neural Processing Units (NPU) designed for mobile and edge vision applications. Their approach focuses on heterogeneous computing architectures that enable parallel execution of multiple vision algorithms across CPU, GPU, and NPU cores. The company's algorithm fusion strategy emphasizes memory bandwidth optimization and power efficiency, utilizing advanced memory hierarchies and data flow optimization techniques. Samsung's vision processing units support real-time fusion of traditional computer vision algorithms with deep learning models, achieving up to 26 TOPS performance while maintaining low power consumption for mobile applications.

Strengths: Power-efficient mobile solutions, integrated hardware-software optimization, cost-effective mass production. Weaknesses: Limited ecosystem compared to NVIDIA, primarily focused on mobile applications, less flexibility for custom implementations.

Robert Bosch GmbH

Technical Solution: Bosch develops algorithm fusion solutions specifically for automotive and industrial machine vision applications through their proprietary Vision Processing Unit (VPU) technology. Their approach combines traditional computer vision algorithms with AI-based methods for applications like advanced driver assistance systems and industrial automation. The company's algorithm fusion framework optimizes processing pipelines by sharing computational resources and intermediate results between different vision algorithms, reducing overall system latency and power consumption. Bosch's solutions integrate sensor fusion capabilities, combining camera data with radar and lidar inputs through unified algorithm processing chains that achieve real-time performance in safety-critical applications.

Strengths: Automotive industry expertise, safety-critical system experience, robust industrial-grade solutions. Weaknesses: Limited general-purpose applications, higher costs for non-automotive markets, proprietary ecosystem limitations.

Core Patents in Vision System Algorithm Optimization

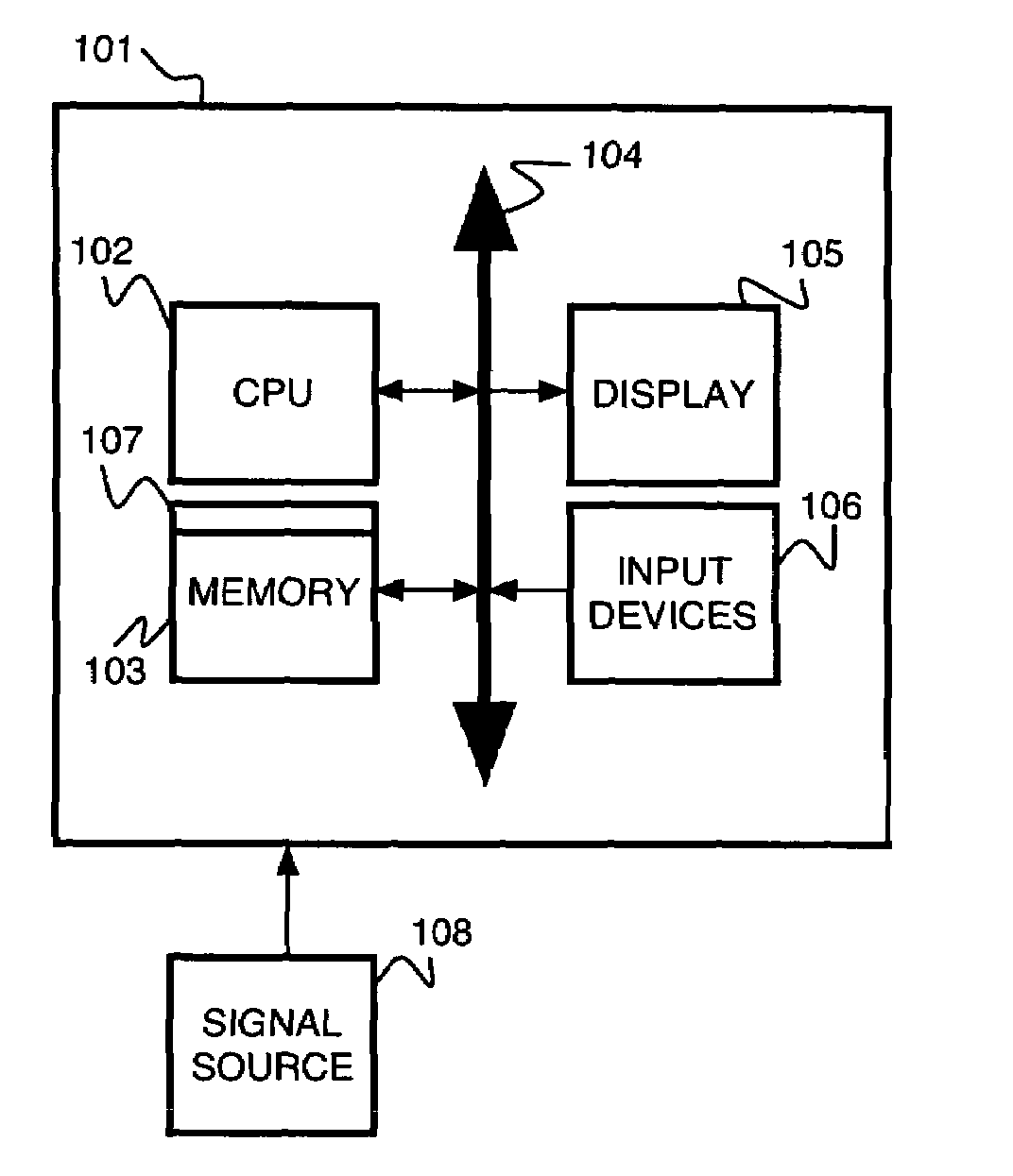

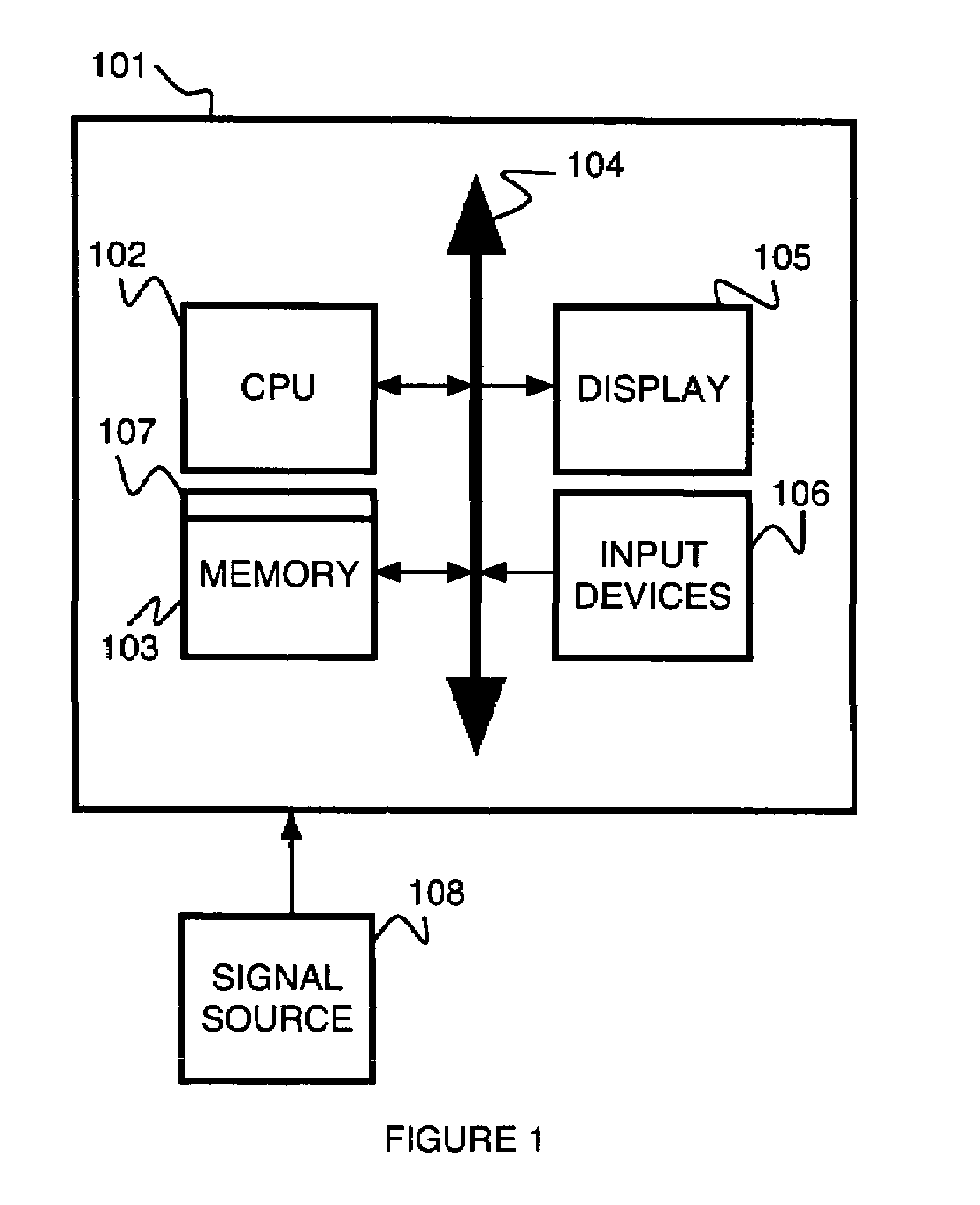

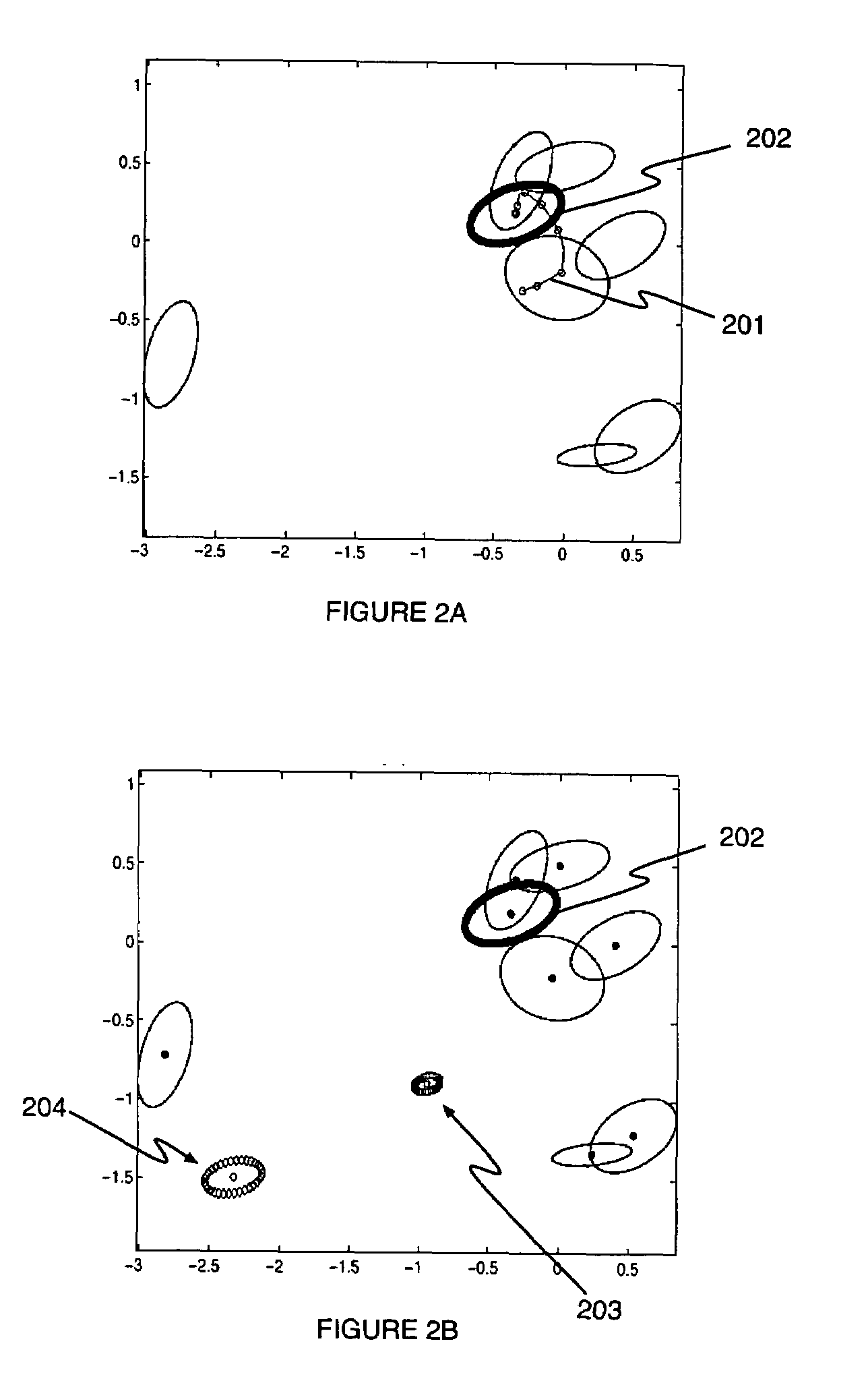

Density estimation-based information fusion for multiple motion computation

Patent: US7298868B2 (Inactive)

Innovation

- The Variable-Bandwidth Density-based Fusion (VBDF) method determines a density-based fusion estimate by tracking the most significant mode across scales, using a convex combination of covariances with weights optimized based on data uncertainty, and employs a variable-bandwidth kernel density estimate to handle multiple source models and outliers.
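
The general idea of mode tracking across scales can be illustrated with a heavily simplified, one-dimensional sketch. This is not the patented procedure: it assumes scalar estimates with known variances, uses a Gaussian kernel, and omits the convex combination of covariances mentioned above. The function name `variable_bandwidth_mode` and the choice of scales are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def variable_bandwidth_mode(estimates: List[Tuple[float, float]],
                            scales: Sequence[float],
                            iterations: int = 100,
                            tol: float = 1e-9) -> float:
    """Track a density mode from coarse to fine scale (1-D simplification).

    estimates: (value, variance) pairs from different sources.
    scales: analysis scales, given from largest to smallest. Each source's
            squared bandwidth is its own variance plus the squared scale.
    """
    x = sum(value for value, _ in estimates) / len(estimates)  # start at the mean
    for alpha in scales:
        bandwidths = [variance + alpha * alpha for _, variance in estimates]
        for _ in range(iterations):
            numerator = denominator = 0.0
            for (value, _), h in zip(estimates, bandwidths):
                # Gaussian kernel weight of this source at the current position.
                w = math.exp(-0.5 * (x - value) ** 2 / h) / math.sqrt(h)
                numerator += w * value / h
                denominator += w / h
            new_x = numerator / denominator  # variable-bandwidth mean-shift step
            converged = abs(new_x - x) < tol
            x = new_x
            if converged:
                break
    return x
```

With three consistent estimates near 1.0 and one outlier at 5.0, the plain mean is 2.0, while this procedure settles near 1.0 because the outlier's kernel weight vanishes as the scale shrinks.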

Fusion-based object-recognition

Patent: US20150363643A1 (Active)

Innovation

- A data fusion system that combines the results of multiple image capture devices and travel time information using a Bayesian fusion algorithm, operating on learned statistical models to improve recognition accuracy by calculating probability values and incorporating credibility and transition models.
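
As a generic illustration of Bayesian fusion across several capture devices, the sketch below multiplies a prior by each device's likelihood and renormalizes. It assumes the devices are conditionally independent given the true class, and it does not reproduce the credibility or transition models mentioned above; `bayes_fuse` and the probability tables in the usage note are hypothetical.

```python
from typing import Dict, List


def bayes_fuse(prior: Dict[str, float],
               likelihoods: List[Dict[str, float]]) -> Dict[str, float]:
    """Posterior over classes from a prior and per-device likelihoods.

    prior: P(class).
    likelihoods: one dict per device giving P(observation | class).
    Assumes device observations are conditionally independent given the class.
    """
    posterior = dict(prior)
    for device in likelihoods:
        for label in posterior:
            posterior[label] *= device[label]
    total = sum(posterior.values())
    if total == 0.0:
        raise ValueError("all classes have zero probability")
    return {label: p / total for label, p in posterior.items()}
```

For example, with an even prior over "car" and "truck", one camera reporting likelihoods 0.8 and 0.2 and a second reporting 0.6 and 0.4 give a fused posterior of 6/7 for "car", higher than either camera alone would support.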

Performance Standards for Industrial Vision Systems

Industrial vision systems require rigorous performance standards to ensure reliable operation across diverse manufacturing environments. These standards encompass multiple dimensions including accuracy, speed, robustness, and consistency metrics that directly impact production quality and efficiency. The establishment of comprehensive performance benchmarks becomes particularly critical when implementing algorithm fusion techniques for enhanced system cohesion.

Accuracy standards typically define acceptable error rates for object detection, classification, and measurement tasks. For high-precision applications such as semiconductor inspection, accuracy requirements may demand sub-pixel precision with error tolerances below 0.1%. Meanwhile, general manufacturing applications might accept accuracy levels within 1-2% deviation from ground truth measurements.

Processing speed requirements vary significantly based on production line velocities and real-time constraints. High-speed packaging lines may require frame processing rates exceeding 1000 fps, while quality inspection systems might operate effectively at 30-60 fps. Latency specifications often mandate end-to-end processing times under 10 milliseconds for critical applications.
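
The relationship between frame rate and per-frame time budget is simple arithmetic, sketched below. The helper names and the stage timings used in the usage note are illustrative assumptions; the distinction drawn is that a pipelined system's throughput is limited by its slowest stage, while its latency is the sum of all stages.

```python
from typing import Dict, Sequence


def frame_period_ms(fps: float) -> float:
    """Time available per frame at a given frame rate."""
    return 1000.0 / fps


def check_budget(stage_ms: Sequence[float], fps: float,
                 latency_limit_ms: float) -> Dict[str, object]:
    """Check throughput and latency for a pipelined chain of stages.

    Throughput holds if the slowest stage fits inside one frame period.
    Latency holds if the sum of all stages fits inside the latency limit.
    """
    period = frame_period_ms(fps)
    return {
        "frame_period_ms": period,
        "throughput_ok": max(stage_ms) <= period,
        "latency_ms": sum(stage_ms),
        "latency_ok": sum(stage_ms) <= latency_limit_ms,
    }
```

At 1000 fps the frame period is 1 ms, so assumed stages of 0.4, 0.8, and 0.5 ms keep up only if they run as a pipeline, while their combined latency of about 1.7 ms still sits well inside a 10 ms limit.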

Robustness standards address system performance under varying environmental conditions including lighting fluctuations, temperature changes, vibration, and electromagnetic interference. Systems must maintain specified accuracy levels across temperature ranges from -10°C to 60°C and operate reliably under industrial lighting variations of ±30%.

Consistency metrics evaluate system repeatability and reproducibility over extended operational periods. Standards typically require measurement repeatability within 0.5% coefficient of variation and long-term stability with less than 2% drift over 1000 operating hours without recalibration.
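
The two consistency figures above can be computed directly from repeated measurements. The sketch below uses Python's standard `statistics` module; the function names and the sample readings in the usage note are made up for illustration.

```python
import statistics
from typing import Sequence


def coefficient_of_variation_pct(samples: Sequence[float]) -> float:
    """Sample standard deviation as a percentage of the mean."""
    return 100.0 * statistics.stdev(samples) / statistics.mean(samples)


def drift_pct(baseline: float, later: float) -> float:
    """Absolute change of a later reading relative to the baseline, in percent."""
    return 100.0 * abs(later - baseline) / abs(baseline)
```

Five repeated readings of 10.00, 10.02, 9.98, 10.01, and 9.99 give a coefficient of variation of roughly 0.16%, inside a 0.5% limit, and a reading that moves from 10.0 to 10.15 over time corresponds to 1.5% drift, inside a 2% limit.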

Integration compatibility standards ensure seamless communication with existing factory automation systems through standardized protocols such as GigE Vision, USB3 Vision, or industrial Ethernet interfaces. These specifications guarantee interoperability and facilitate algorithm fusion implementations across heterogeneous hardware platforms while maintaining real-time performance requirements.

Real-time Processing Requirements and Constraints

Real-time processing in machine vision systems demands stringent temporal constraints that fundamentally shape algorithm fusion strategies. Modern industrial applications typically require processing latencies below 10-50 milliseconds for critical decision-making tasks, while maintaining frame rates of 30-120 fps depending on the application domain. These temporal boundaries create a complex optimization landscape where computational efficiency must be balanced against algorithmic accuracy and system reliability.

Memory bandwidth limitations represent a critical bottleneck in real-time algorithm fusion architectures. Contemporary machine vision systems often process high-resolution image streams exceeding 4K resolution, generating data throughput rates of several gigabytes per second. The fusion of multiple algorithms compounds this challenge, as intermediate processing results must be efficiently transferred between processing units while maintaining cache coherency and minimizing memory access conflicts.
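
The "several gigabytes per second" figure follows from straightforward arithmetic, sketched below. The function name and the `passes` parameter (how many times the frame is read or written by separate algorithms) are assumptions for illustration.

```python
def stream_bandwidth_bytes_per_s(width: int, height: int, bytes_per_pixel: int,
                                 fps: float, passes: int = 1) -> float:
    """Raw memory traffic for an uncompressed image stream.

    passes counts how many times each frame crosses the memory bus, for
    example once per algorithm that reads the full frame independently.
    """
    return width * height * bytes_per_pixel * fps * passes
```

A 3840 x 2160 stream at 3 bytes per pixel and 60 fps is about 1.49 GB/s for a single pass, so three algorithms that each read the full frame separately already approach 4.5 GB/s before any intermediate results are counted.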

Processing unit heterogeneity introduces additional complexity layers in real-time fusion implementations. Modern systems typically integrate CPU cores, GPU compute units, and specialized vision processing units (VPUs) or field-programmable gate arrays (FPGAs). Each processing element exhibits distinct performance characteristics, power consumption profiles, and memory access patterns. Effective algorithm fusion must orchestrate workload distribution across these heterogeneous resources while respecting their individual constraints and capabilities.
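
Workload distribution across heterogeneous units can be approximated with a greedy heuristic: take the most expensive tasks first and place each on the unit where it would finish earliest. The sketch below assumes independent tasks and known per-unit cost estimates; `assign_tasks` and the cost table in the usage note are hypothetical, and the heuristic is not guaranteed to be optimal.

```python
from typing import Dict, List, Tuple


def assign_tasks(task_costs: Dict[str, Dict[str, float]],
                 units: List[str]) -> Tuple[Dict[str, str], float]:
    """Greedy earliest-finish assignment of independent tasks to units.

    task_costs maps task -> {unit: estimated milliseconds}. A unit missing
    from a task's dict cannot run that task. Returns the plan and makespan.
    """
    busy_until = {unit: 0.0 for unit in units}
    plan: Dict[str, str] = {}
    # Place the most expensive tasks first, judged by their best-case cost.
    order = sorted(task_costs, key=lambda t: min(task_costs[t].values()), reverse=True)
    for task in order:
        costs = task_costs[task]
        unit = min(costs, key=lambda u: busy_until[u] + costs[u])
        busy_until[unit] += costs[unit]
        plan[task] = unit
    return plan, max(busy_until.values())
```

With assumed costs where a CNN detector takes 4 ms on the GPU or 20 ms on the CPU, OCR takes 3 ms on the GPU or 6 ms on the CPU, and an edge filter takes 0.5 ms on an FPGA, the heuristic sends the detector to the GPU, OCR to the CPU, and the filter to the FPGA, finishing in 6 ms rather than serializing everything on one device.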

Power consumption constraints significantly impact algorithm fusion design decisions, particularly in embedded and mobile vision applications. Real-time processing demands often conflict with thermal management requirements, necessitating dynamic performance scaling and intelligent workload scheduling. Advanced fusion architectures must implement adaptive processing strategies that can gracefully degrade performance under thermal stress while maintaining critical functionality thresholds.
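
Graceful degradation can be as simple as a table of operating profiles keyed by temperature. The sketch below is illustrative only: the profile names, temperature thresholds, and frame rates are assumed values, not vendor specifications.

```python
import math
from typing import Dict, List

# Hypothetical profiles, ordered from coolest to hottest operating range.
PROFILES: List[Dict[str, object]] = [
    {"max_temp_c": 70.0, "name": "full", "fps": 60, "optional_algorithms": True},
    {"max_temp_c": 85.0, "name": "reduced", "fps": 30, "optional_algorithms": False},
    {"max_temp_c": math.inf, "name": "critical_only", "fps": 15,
     "optional_algorithms": False},
]


def select_profile(temp_c: float,
                   profiles: List[Dict[str, object]] = PROFILES) -> Dict[str, object]:
    """Pick the first profile whose temperature ceiling covers the reading."""
    for profile in profiles:
        if temp_c <= profile["max_temp_c"]:
            return profile
    return profiles[-1]  # fall back to the most conservative profile
```

A production governor would normally add hysteresis, stepping back up only after the temperature has fallen some margin below the threshold, so that the system does not oscillate between profiles.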

Synchronization overhead emerges as a fundamental challenge when fusing algorithms with disparate processing requirements. Different vision algorithms exhibit varying computational complexities and execution patterns, creating potential pipeline stalls and resource underutilization. Effective fusion strategies must implement sophisticated scheduling mechanisms that minimize synchronization bottlenecks while ensuring deterministic processing behavior essential for real-time operation.
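
One widely used pattern for keeping a slow stage from stalling a fast one is a small bounded queue that discards the oldest frame when full, which caps how stale any processed frame can be. The sketch below uses the standard library's `collections.deque` and `threading.Lock`; the class name `LatestFrameQueue` is hypothetical.

```python
import threading
from collections import deque
from typing import Any, Optional


class LatestFrameQueue:
    """Bounded hand-off between stages that drops the oldest frame when full."""

    def __init__(self, capacity: int) -> None:
        self._frames = deque(maxlen=capacity)
        self._lock = threading.Lock()
        self.dropped = 0  # count of frames discarded to stay within capacity

    def put(self, frame: Any) -> None:
        """Never blocks: a full queue silently loses its oldest entry."""
        with self._lock:
            if len(self._frames) == self._frames.maxlen:
                self.dropped += 1
            self._frames.append(frame)

    def get(self) -> Optional[Any]:
        """Return the oldest remaining frame, or None if the queue is empty."""
        with self._lock:
            return self._frames.popleft() if self._frames else None
```

Because the producer never waits, the upstream stage keeps its timing, and the `dropped` counter gives a direct measure of how often the downstream stage fell behind.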
