How to Operate Multi-Threaded Environments on Microcontrollers

FEB 25, 2026 | 9 MIN READ

Multi-Threading MCU Background and Objectives

Multi-threaded computing has evolved from a luxury reserved for high-performance systems to an essential capability across diverse computing platforms. The journey began in the 1960s with mainframe computers implementing time-sharing systems, progressing through the development of symmetric multiprocessing in the 1980s, and reaching modern embedded systems where resource constraints demand innovative approaches to concurrent execution.

Microcontrollers traditionally operated under single-threaded paradigms due to their limited processing power, memory constraints, and real-time requirements. However, the increasing complexity of embedded applications has created a compelling need for concurrent task execution. Modern IoT devices, automotive control systems, and industrial automation platforms must simultaneously handle sensor data acquisition, communication protocols, user interfaces, and control algorithms while maintaining deterministic behavior.

The evolution toward multi-threaded microcontroller environments represents a fundamental shift in embedded system architecture. Early attempts focused on cooperative multitasking through simple task schedulers, while contemporary solutions incorporate preemptive scheduling, priority-based task management, and sophisticated synchronization mechanisms. This progression reflects the growing sophistication of embedded applications and the availability of more powerful microcontroller architectures.
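To make the cooperative model concrete, the following is a minimal sketch of a round-robin cooperative scheduler in C. The names `coop_task`, `coop_run`, and `count_slice` are hypothetical and chosen for illustration. Each task is assumed to do a small slice of work and return promptly, since returning is the only way control passes to the next task.

```c
#include <assert.h>
#include <stddef.h>

/* A task is a plain function that does a small slice of work and returns.
   Returning is the "yield": nothing preempts a task in the middle of a slice. */
typedef void (*task_fn)(void *ctx);

typedef struct {
    task_fn run;   /* work function, expected to return quickly */
    void   *ctx;   /* per-task state, owned by the caller */
} coop_task;

/* Run every task once per pass, in table order, for `passes` passes.
   Firmware would normally loop forever; the bound keeps the sketch testable. */
static void coop_run(const coop_task *tasks, size_t count, unsigned passes)
{
    assert(tasks != NULL || count == 0);
    for (unsigned p = 0; p < passes; ++p) {
        for (size_t i = 0; i < count; ++i) {
            tasks[i].run(tasks[i].ctx);
        }
    }
}

/* Hypothetical example task: counts how many slices it has been given. */
static void count_slice(void *ctx)
{
    unsigned *counter = ctx;
    ++*counter;
}
```

A preemptive kernel differs in that a timer or other interrupt can suspend a task at almost any instruction, which is why it needs per-thread stacks and the synchronization mechanisms discussed later.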

Current technological objectives center on achieving efficient resource utilization while preserving the real-time characteristics essential to embedded systems. The primary goal involves implementing thread management systems that minimize context switching overhead, optimize memory usage, and maintain predictable execution timing. These systems must balance the benefits of concurrent execution against the inherent complexity introduced by shared resource management and inter-thread communication.

The strategic importance of multi-threaded microcontroller operation extends beyond immediate performance gains. Organizations seek to leverage threading capabilities to reduce development complexity, improve code modularity, and enhance system maintainability. By letting independent tasks run concurrently as separate threads, multi-threading facilitates cleaner separation of concerns and more intuitive software architectures.

Future objectives encompass the development of lightweight threading frameworks specifically optimized for resource-constrained environments. These frameworks must provide robust synchronization primitives, efficient memory management, and comprehensive debugging capabilities while maintaining minimal overhead. The ultimate goal involves creating threading solutions that democratize concurrent programming for embedded developers, making multi-threaded design patterns accessible without requiring deep expertise in concurrent programming complexities.

Market Demand for Real-Time MCU Applications

The real-time microcontroller applications market has experienced substantial growth driven by the increasing complexity of embedded systems across multiple industries. Industrial automation represents the largest segment, where multi-threaded MCU operations enable simultaneous control of sensors, actuators, and communication protocols while maintaining deterministic response times. Manufacturing facilities require precise timing coordination between production line components, making real-time multi-threading capabilities essential for operational efficiency.

Automotive electronics constitute another major demand driver, particularly with the proliferation of advanced driver assistance systems and electric vehicle technologies. Modern vehicles integrate numerous ECUs that must handle concurrent tasks such as engine management, safety monitoring, and infotainment processing. The automotive industry's shift toward autonomous driving technologies further amplifies the need for sophisticated real-time processing capabilities on resource-constrained microcontrollers.

The Internet of Things ecosystem has created unprecedented demand for intelligent edge devices capable of local processing while maintaining network connectivity. Smart home systems, wearable devices, and industrial IoT sensors require multi-threaded architectures to balance power consumption with responsive user interactions and reliable data transmission. These applications often demand real-time operating system capabilities on low-power microcontrollers.

Medical device manufacturers increasingly rely on real-time MCU applications for patient monitoring systems, implantable devices, and diagnostic equipment. Regulatory requirements mandate strict timing guarantees and fault tolerance, driving adoption of advanced multi-threading techniques that ensure critical functions remain operational even under system stress.

Telecommunications infrastructure modernization, particularly with 5G network deployment, has created substantial demand for edge computing solutions built on high-performance microcontrollers. Base stations and network equipment require real-time packet processing capabilities while managing multiple communication protocols simultaneously.

The defense and aerospace sectors continue expanding their reliance on embedded real-time systems for navigation, communication, and control applications. These markets prioritize reliability and deterministic behavior over cost considerations, creating opportunities for advanced multi-threading solutions that can guarantee mission-critical performance requirements under extreme operating conditions.

Current MCU Multi-Threading Limitations and Challenges

Microcontrollers face fundamental architectural constraints that limit their multi-threading capabilities compared to general-purpose computing platforms. The primary limitation stems from the single-core design and modest processing power of most parts: threads are interleaved on one core rather than executed in true parallel. Most MCUs operate with clock frequencies ranging from a few MHz to hundreds of MHz, which creates bottlenecks when many concurrent tasks compete for CPU time.

Memory constraints represent another critical challenge in MCU multi-threading implementations. Typical microcontrollers possess severely limited RAM, often ranging from a few kilobytes to several hundred kilobytes. This scarcity forces developers to size each thread's stack carefully, as creating too many threads can quickly exhaust available memory. Many MCUs also lack a memory management unit, and although some provide a simpler memory protection unit (MPU), parts without either have no hardware-based memory protection, which increases the risk of one thread corrupting another's memory.

Real-time operating system overhead imposes performance penalties in resource-constrained environments. Context switches, which save and restore processor state between threads, consume CPU cycles and memory bandwidth. How often they occur directly affects system responsiveness, which is particularly problematic for time-critical applications that require deterministic behavior.
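The cost of context switching can be estimated with simple arithmetic: the share of CPU time lost is the number of switches per second multiplied by the cycles per switch, divided by the clock frequency. The sketch below computes that share in parts per million. The function name and the example figures are assumptions for illustration, not measurements of any particular MCU or kernel.

```c
#include <assert.h>
#include <stdint.h>

/* Share of CPU time spent on context switches, in parts per million (ppm).
   overhead = (switches_per_sec * cycles_per_switch) / cpu_hz */
static uint32_t ctx_switch_overhead_ppm(uint32_t cpu_hz,
                                        uint32_t switches_per_sec,
                                        uint32_t cycles_per_switch)
{
    assert(cpu_hz > 0);
    /* 64-bit intermediate avoids overflow for realistic embedded values. */
    uint64_t cycles = (uint64_t)switches_per_sec * cycles_per_switch;
    return (uint32_t)((cycles * 1000000u) / cpu_hz);
}
```

With assumed figures of a 48 MHz clock, 1,000 switches per second, and 200 cycles per switch, the function returns 4166 ppm, or roughly 0.4% of CPU time. The same switch rate on a 1 MHz part at 100 cycles per switch would cost 10%.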

Synchronization mechanisms add further complexity in MCU environments. Traditional primitives such as mutexes, semaphores, and message queues require careful implementation to avoid priority inversion and deadlock. Many low-end MCUs lack sophisticated hardware support for atomic operations, so shared data is often protected by briefly disabling interrupts around a critical section, which can introduce timing uncertainties and complicate system design.
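An interrupt-based critical section can be sketched as follows. Because the real mechanism is architecture-specific, `irq_save_and_disable` and `irq_restore` here are hypothetical stand-ins that only track a flag on a host machine; on hardware they would map to the port's interrupt-mask instructions. The pattern saves the previous state and restores it, rather than unconditionally re-enabling, so that nested critical sections behave correctly.

```c
#include <assert.h>
#include <stdint.h>

/* Stand-in for the CPU's global interrupt-enable state (assumption: on real
   hardware this would be a status register, not a variable). */
static int irq_enabled = 1;

/* Hypothetical port hooks: disable interrupts and return the previous state. */
static int irq_save_and_disable(void)
{
    int prev = irq_enabled;
    irq_enabled = 0;
    return prev;
}

/* Restore whatever state was in effect before the matching save. */
static void irq_restore(int prev)
{
    irq_enabled = prev;
}

static volatile uint32_t shared_ticks;

/* Read-modify-write on shared data, protected by masking interrupts. */
static uint32_t ticks_add(uint32_t delta)
{
    int state = irq_save_and_disable();
    assert(irq_enabled == 0);
    shared_ticks += delta;        /* cannot be interrupted in this window */
    uint32_t now = shared_ticks;
    irq_restore(state);
    return now;
}
```

The time spent inside such a section adds directly to worst-case interrupt latency, which is the timing uncertainty noted above, so the protected region should stay as short as possible.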

Power consumption considerations further complicate multi-threading implementations on battery-powered devices. Thread scheduling algorithms must balance performance requirements with energy efficiency, as frequent context switches and active thread management can significantly impact battery life. Sleep mode coordination across multiple threads becomes particularly challenging when attempting to maintain system responsiveness while minimizing power consumption.

Debugging and testing multi-threaded MCU applications present substantial difficulties due to limited debugging resources and tools. Race conditions, timing-dependent bugs, and resource conflicts become increasingly difficult to identify and resolve in resource-constrained environments. The lack of sophisticated profiling tools specifically designed for MCU multi-threading further complicates performance optimization efforts.

Hardware peripheral access coordination represents another significant challenge, as most MCU peripherals lack built-in multi-threading support. Developers must implement careful resource sharing mechanisms to prevent conflicts when multiple threads attempt to access the same hardware resources simultaneously, adding complexity to system architecture and potentially reducing overall performance.

Existing MCU Multi-Threading Solutions

01 Thread synchronization and locking mechanisms

Multi-threaded environments require proper synchronization mechanisms to prevent race conditions and ensure data consistency. Various locking techniques including mutex locks, semaphores, and read-write locks can be implemented to coordinate access to shared resources. These mechanisms help manage concurrent thread execution and prevent conflicts when multiple threads attempt to access or modify the same data simultaneously.

- Thread pool management and scheduling: Efficient management of thread pools is essential for optimizing performance in multi-threaded applications. Thread pool implementations allow for reuse of worker threads, reducing the overhead of thread creation and destruction. Advanced scheduling algorithms can be employed to distribute tasks among available threads, balance workload, and improve overall system throughput while minimizing resource consumption.

- Concurrent data structure access and memory management: Managing concurrent access to data structures requires specialized techniques to maintain consistency and prevent corruption. Lock-free and wait-free data structures can be implemented to allow multiple threads to access shared data without traditional locking overhead. Memory barriers and atomic operations ensure proper ordering of memory operations across different threads, while garbage collection and memory allocation strategies must account for concurrent access patterns.

- Deadlock detection and prevention: Deadlock situations occur when threads are waiting for resources held by each other, creating a circular dependency. Detection mechanisms can identify potential deadlock conditions by analyzing resource allocation graphs and thread dependencies. Prevention strategies include resource ordering, timeout mechanisms, and deadlock avoidance algorithms that ensure safe resource allocation patterns to maintain system progress.

- Parallel processing and task decomposition: Effective utilization of multi-threaded environments involves decomposing complex tasks into smaller parallel units that can be executed concurrently. Work-stealing algorithms and fork-join frameworks enable dynamic load balancing across threads. Techniques for identifying parallelizable code sections and managing dependencies between parallel tasks are crucial for achieving optimal performance gains from multi-core processors.
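The mutex locking and resource-ordering ideas above can be combined in one short sketch. It uses POSIX `pthread_mutex_t` as a host-side stand-in for an RTOS mutex, which is an assumption; the type `guarded_counter` and the function `guarded_move` are hypothetical. When two locks are needed, they are always taken in ascending address order, so two threads can never each hold one lock while waiting for the other.

```c
#include <assert.h>
#include <pthread.h>
#include <stdint.h>

/* A shared value protected by its own mutex. */
typedef struct {
    pthread_mutex_t lock;
    int32_t         value;
} guarded_counter;

/* Move `amount` from one counter to another while holding both locks.
   Locks are acquired in ascending address order regardless of the
   direction of the move, which rules out a circular wait. */
static void guarded_move(guarded_counter *from, guarded_counter *to,
                         int32_t amount)
{
    assert(from != to);
    guarded_counter *first  = ((uintptr_t)from < (uintptr_t)to) ? from : to;
    guarded_counter *second = (first == from) ? to : from;

    pthread_mutex_lock(&first->lock);
    pthread_mutex_lock(&second->lock);
    from->value -= amount;
    to->value   += amount;
    pthread_mutex_unlock(&second->lock);   /* release in reverse order */
    pthread_mutex_unlock(&first->lock);
}
```

Ordering by address is only one possible convention. A fixed numeric rank per resource works equally well, as long as every thread follows the same order.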

02 Thread pool management and scheduling

Efficient management of thread pools is essential for optimizing performance in multi-threaded applications. Thread pool implementations allow for reuse of worker threads, reducing the overhead of thread creation and destruction. Advanced scheduling algorithms can be employed to distribute tasks among available threads, balance workload, and improve overall system throughput while minimizing resource consumption.

03 Concurrent data structure operations

Specialized data structures designed for concurrent access enable multiple threads to perform operations simultaneously without corruption. Lock-free and wait-free data structures provide high performance by minimizing contention. These structures include concurrent queues, hash maps, and lists that support atomic operations and ensure thread-safe access patterns in multi-threaded environments.

04 Deadlock detection and prevention

Deadlock situations occur when threads wait indefinitely for resources held by each other. Various strategies can be implemented to detect potential deadlocks through resource allocation graphs and dependency analysis. Prevention techniques include resource ordering, timeout mechanisms, and deadlock avoidance algorithms that ensure safe execution states and maintain system responsiveness.

05 Memory consistency and cache coherence

Multi-threaded systems must address memory consistency issues to ensure that all threads observe a coherent view of shared memory. Memory barriers and fence instructions can be used to enforce ordering constraints on memory operations. Cache coherence protocols ensure that modifications made by one thread are properly propagated to other threads, preventing stale data reads and maintaining data integrity across processor cores.

Key Players in MCU and RTOS Industry

The multi-threaded microcontroller environment represents a rapidly evolving technological landscape currently in its growth phase, driven by increasing demand for real-time processing in IoT and embedded systems. The market demonstrates significant expansion potential as applications require more sophisticated concurrent processing capabilities. Technology maturity varies considerably across industry players, with established semiconductor giants like Intel Corp., Qualcomm, and Texas Instruments leading through advanced multi-core architectures and real-time operating system integration. Companies such as ARM Limited and AMD provide foundational processor designs enabling multi-threading capabilities, while emerging players like Ceremorphic and Simplex Micro introduce innovative RISC-V based solutions. Traditional tech leaders including Microsoft, IBM, and Samsung contribute through software frameworks and system-level integration. The competitive landscape shows a clear division between hardware innovators developing specialized multi-threading capable microcontrollers and software providers creating development tools and operating systems that enable efficient thread management in resource-constrained environments.

Intel Corp.

Technical Solution: Intel's approach to multi-threading on microcontrollers focuses on their x86-based embedded processors and IoT platforms. They provide hardware-level support for simultaneous multithreading (SMT) and multi-core processing capabilities even in low-power embedded systems. Intel's Real-Time Systems Toolkit includes optimized threading libraries, deterministic scheduling algorithms, and performance profiling tools specifically designed for microcontroller applications. Their solution incorporates Intel Threading Building Blocks (TBB) adapted for embedded systems, offering parallel programming patterns, task-based threading models, and automatic load balancing. The platform supports both preemptive and cooperative threading models with advanced debugging capabilities for multi-threaded embedded applications.

Strengths: High-performance processing capabilities with robust development tools and extensive software ecosystem. Weaknesses: Higher power consumption and cost compared to traditional ARM-based microcontrollers, limiting use in battery-powered applications.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung's multi-threading solution for microcontrollers is integrated into their Exynos IoT and ARTIK platforms, featuring a custom RTOS kernel optimized for their ARM-based microcontroller units. Their approach emphasizes energy-efficient thread scheduling algorithms that dynamically adjust processor frequency and voltage based on thread priority and workload characteristics. Samsung implements hardware-accelerated context switching mechanisms and provides specialized threading libraries for IoT applications including sensor data processing, wireless communication handling, and device management tasks. The platform supports hierarchical thread scheduling, allowing for complex multi-level priority systems and includes built-in security features for thread isolation and secure inter-thread communication in connected device applications.

Strengths: Strong integration with IoT ecosystem and advanced power management features with built-in security capabilities. Weaknesses: Limited availability outside Samsung's ecosystem and relatively newer platform with smaller developer community.

Core RTOS and Scheduler Innovations

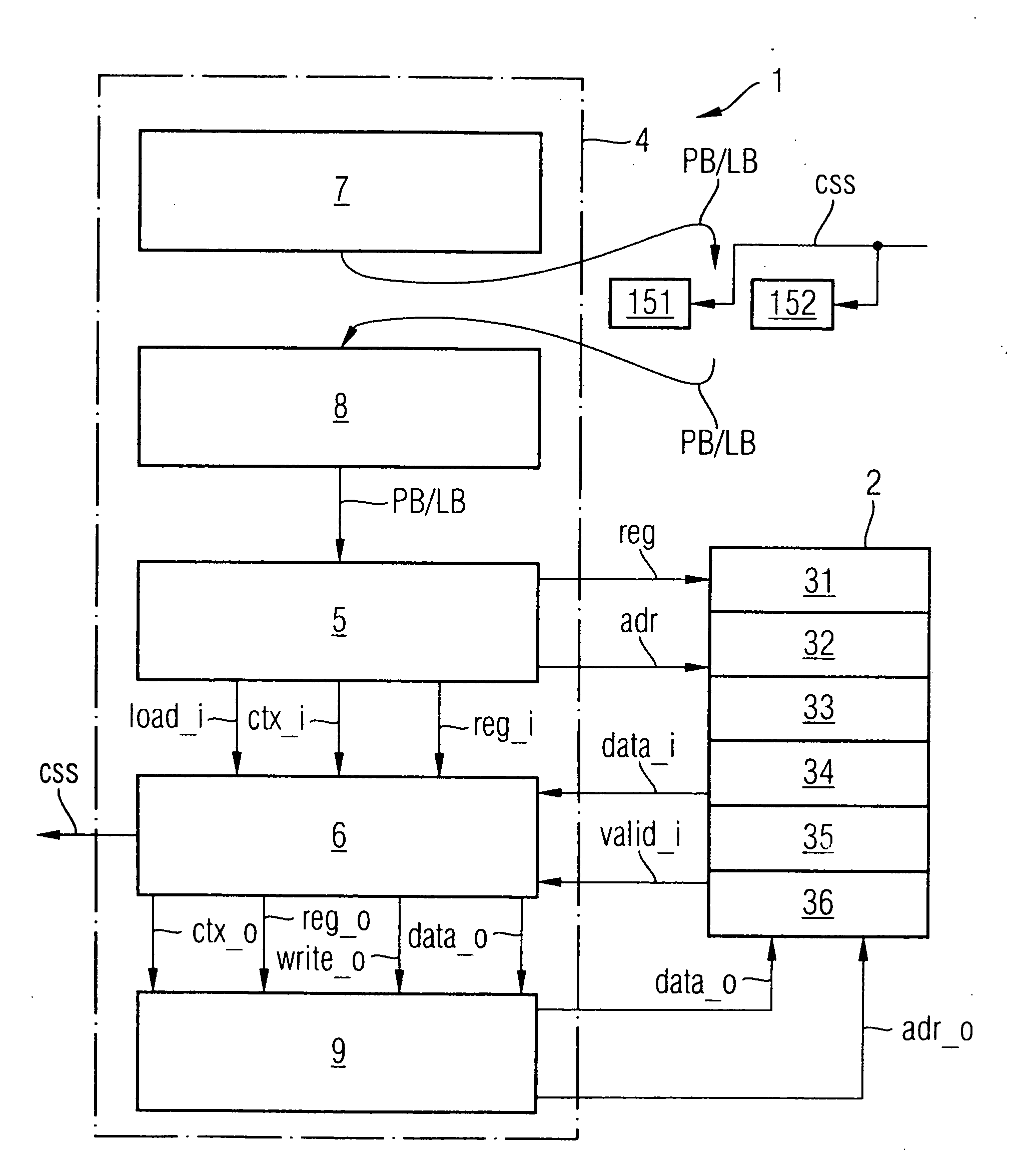

Hardware multithreading systems and methods

Patent (Inactive): EP1817663A2

Innovation

- A multithreaded microcontroller with special-purpose registers and thread control logic that executes multithreading system call instructions, including wait condition, signal, and mutex lock instructions, to manage thread states and facilitate efficient inter-thread communication.

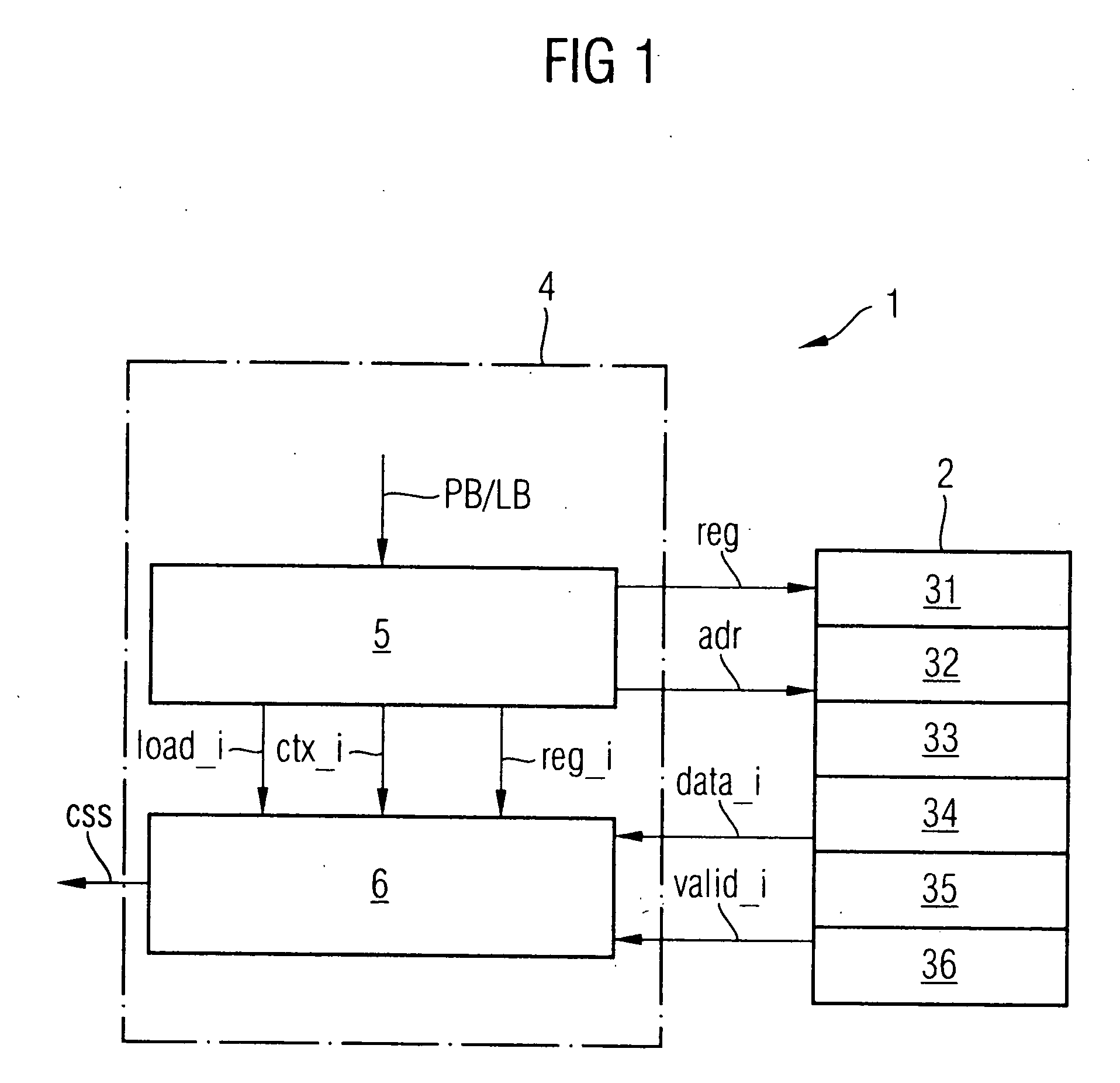

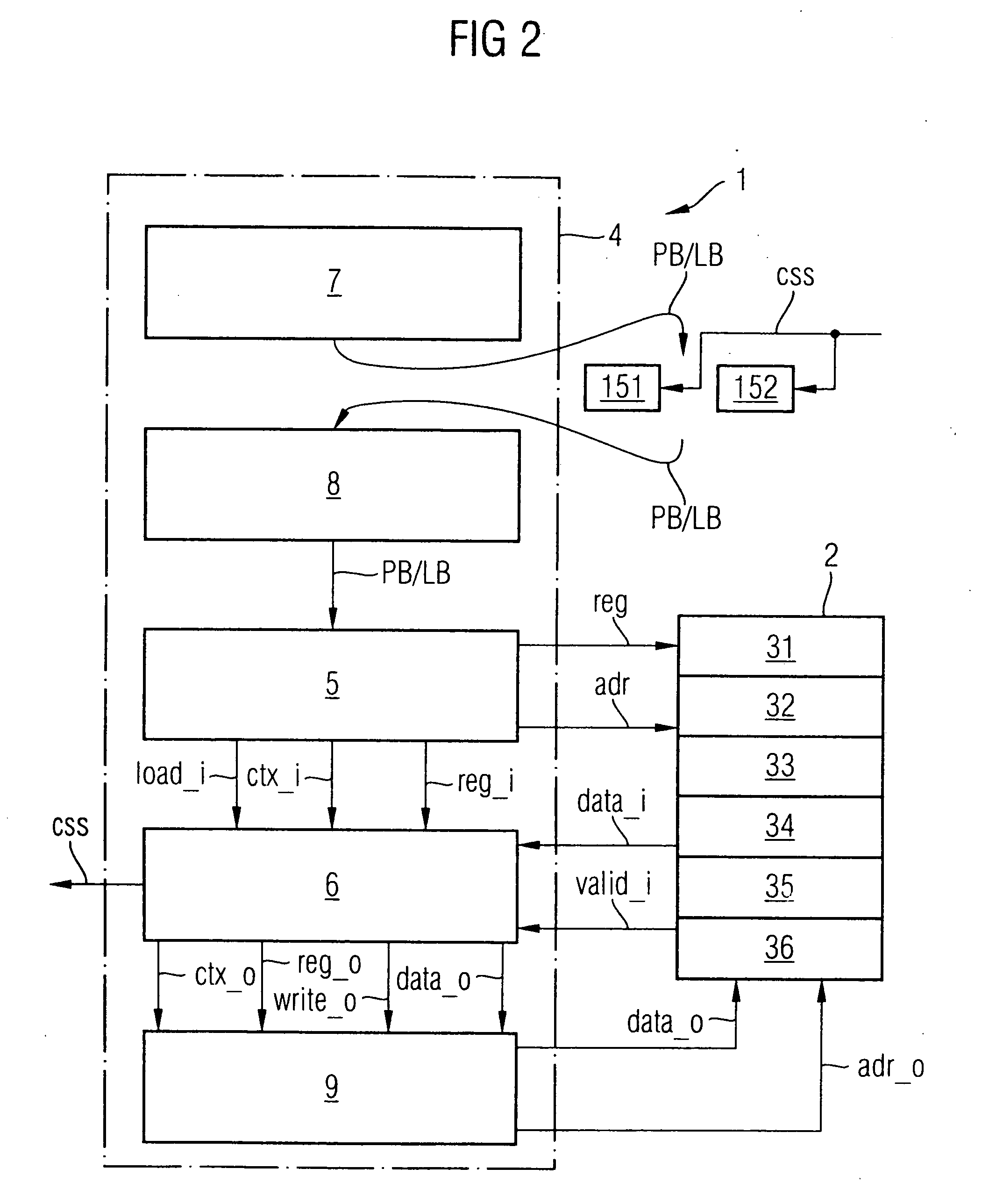

Multi-thread processor and method for operating such a processor

Patent (Inactive): US20060230258A1

Innovation

- A multi-thread processor with a synchronization unit that generates a memory-triggered context switch signal by loading and buffering context identifiers and memory values synchronously, allowing for reduced command buffering by arranging buffers above the memory read access unit and using a logic circuit to generate a context switch signal based on specific signal levels.

Memory Management in Resource-Constrained Systems

Memory management represents one of the most critical challenges when implementing multi-threaded environments on microcontrollers. These embedded systems typically operate with severely limited RAM resources, often ranging from a few kilobytes to several hundred kilobytes, making efficient memory allocation and deallocation strategies essential for successful multi-threaded operation.

Stack management constitutes the primary memory concern in multi-threaded microcontroller environments. Each thread requires its own dedicated stack space to store local variables, function parameters, and return addresses. Traditional desktop operating systems can dynamically allocate large stack spaces, but microcontrollers must pre-allocate fixed-size stacks for each thread. This approach demands careful stack size calculation to prevent overflow while avoiding excessive memory waste. Stack overflow detection mechanisms become crucial, because an undetected overflow can cause system crashes or unpredictable behavior in resource-constrained environments.
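One widely used way to size stacks and catch near-overflows is to paint each stack with a known pattern before the thread starts, then measure how much of the pattern survives. The sketch below shows the idea on a plain byte array. The names and the fill value `0xA5` are assumptions, and the scan direction assumes a descending stack, where the untouched region sits at the lowest addresses.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define STACK_FILL 0xA5u   /* arbitrary pattern unlikely to occur by chance */

/* Fill a thread's stack region with the pattern before the thread runs. */
static void stack_paint(uint8_t *stack, size_t size)
{
    assert(stack != NULL);
    memset(stack, STACK_FILL, size);
}

/* Count bytes that still hold the pattern, scanning up from the lowest
   address. On a descending stack this is the space never used so far. */
static size_t stack_unused_bytes(const uint8_t *stack, size_t size)
{
    size_t n = 0;
    while (n < size && stack[n] == STACK_FILL) {
        ++n;
    }
    return n;
}
```

A result close to zero during testing suggests the stack is too small. A large result after exercising worst-case paths suggests RAM can be reclaimed. The measurement is only a lower bound on real usage, since it reflects the paths actually executed.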

Heap management presents additional complexity in multi-threaded microcontroller systems. Dynamic memory allocation through malloc and free operations can lead to memory fragmentation, which is particularly problematic when available RAM is limited. Many embedded systems adopt memory pool allocation strategies, where fixed-size memory blocks are pre-allocated and managed through pool managers. This approach reduces fragmentation and provides more predictable memory allocation behavior, essential for real-time applications.
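A fixed-size block pool of the kind described above can be sketched in a few lines. The names `block_pool`, `pool_alloc`, and `pool_free`, and the block size and count, are assumptions for illustration. Free blocks are linked through their own storage, so the pool needs no extra bookkeeping memory, and both allocation and release run in constant time.

```c
#include <assert.h>
#include <stddef.h>

#define POOL_BLOCK_SIZE  32u
#define POOL_BLOCK_COUNT 4u

/* While a block is free, its first bytes hold the link to the next free
   block; while allocated, the whole block belongs to the caller. */
typedef union pool_block {
    union pool_block *next;
    unsigned char     bytes[POOL_BLOCK_SIZE];
} pool_block;

typedef struct {
    pool_block  storage[POOL_BLOCK_COUNT];   /* statically sized backing store */
    pool_block *free_list;
} block_pool;

/* Thread every block onto the free list. */
static void pool_init(block_pool *p)
{
    assert(p != NULL);
    p->free_list = NULL;
    for (size_t i = 0; i < POOL_BLOCK_COUNT; ++i) {
        p->storage[i].next = p->free_list;
        p->free_list = &p->storage[i];
    }
}

/* Pop one block, or return NULL when the pool is exhausted. */
static void *pool_alloc(block_pool *p)
{
    pool_block *b = p->free_list;
    if (b != NULL) {
        p->free_list = b->next;
    }
    return b;
}

/* Push a block previously obtained from pool_alloc back onto the list. */
static void pool_free(block_pool *p, void *ptr)
{
    assert(ptr != NULL);
    pool_block *b = ptr;
    b->next = p->free_list;
    p->free_list = b;
}
```

Because every block has the same size, the pool cannot fragment. This sketch is not thread-safe on its own: in a multi-threaded system, calls would need to be wrapped in a mutex or a short critical section.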

Shared memory protection mechanisms must be implemented efficiently without consuming excessive resources. Traditional memory management units found in larger processors are often absent in microcontrollers, requiring software-based protection schemes. Memory barriers and atomic operations become critical for ensuring data consistency across multiple threads accessing shared memory regions.

Static memory allocation strategies often prove more suitable for microcontroller environments than dynamic allocation. By determining memory requirements at compile time, developers can eliminate runtime allocation overhead and fragmentation issues. This approach requires careful system design but provides better predictability and reliability in resource-constrained scenarios.

Memory optimization techniques specific to multi-threaded microcontroller environments include thread-local storage, in which frequently accessed data is kept in a dedicated memory region for each thread. This reduces contention between threads and can improve performance while keeping memory use within the limits of the available RAM.

Power Optimization for Multi-Threaded MCU Operations

Power optimization in multi-threaded microcontroller operations represents a critical design consideration that directly impacts system performance, battery life, and thermal management. As microcontrollers increasingly support concurrent thread execution, the energy consumption patterns become more complex, requiring sophisticated power management strategies to maintain efficiency while preserving computational capabilities.

The fundamental challenge lies in balancing thread scheduling with power states. Traditional single-threaded MCUs could easily enter deep sleep modes during idle periods, but multi-threaded environments must coordinate multiple execution contexts before transitioning to low-power states. This coordination overhead can paradoxically increase power consumption if not properly managed through intelligent thread synchronization and power-aware scheduling algorithms.
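One simple way to coordinate sleep across threads is a shared bitmask of "stay awake" votes that the idle thread checks before entering a low-power state. The following sketch assumes at most 32 threads, each with a small integer ID; the function names are hypothetical. Atomic read-modify-write operations keep the mask consistent without a lock.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

/* Bit n set means thread n currently needs the system to stay awake. */
static atomic_uint_fast32_t busy_mask;

/* A thread calls this before starting work that must not be interrupted
   by deep sleep (for example an ongoing transfer). */
static void sleep_block(unsigned thread_id)
{
    assert(thread_id < 32u);
    atomic_fetch_or(&busy_mask, (uint_fast32_t)1u << thread_id);
}

/* A thread calls this once it no longer needs the system awake. */
static void sleep_allow(unsigned thread_id)
{
    assert(thread_id < 32u);
    atomic_fetch_and(&busy_mask, ~((uint_fast32_t)1u << thread_id));
}

/* Called from the idle thread: deep sleep is permitted only when no
   thread holds a vote. */
static bool can_enter_deep_sleep(void)
{
    return atomic_load(&busy_mask) == 0u;
}
```

The check itself costs one atomic load, which keeps the coordination overhead small compared with the energy saved by sleeping. On real hardware the idle thread would still need to guard against a wake-up event arriving between the check and the sleep instruction.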

Dynamic voltage and frequency scaling emerges as a primary optimization technique for multi-threaded MCU operations. By adjusting clock frequencies and supply voltages based on thread workload characteristics, systems can achieve significant power reductions. Advanced implementations monitor thread execution patterns in real-time, automatically scaling performance parameters to match computational demands while minimizing energy waste during periods of reduced activity.
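The selection step of such a scheme can be sketched as a lookup over a table of operating points. The table values, the headroom parameter, and the names `op_point` and `dvfs_select` are all hypothetical; real frequency and voltage pairs come from the device datasheet. The function picks the slowest point that still covers the measured demand plus a safety margin, which matters because dynamic power in CMOS logic scales roughly with voltage squared times frequency.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef struct {
    uint32_t freq_khz;     /* core clock at this operating point */
    uint32_t millivolts;   /* supply voltage required for that clock */
} op_point;

/* Hypothetical operating points, sorted by ascending frequency. */
static const op_point op_table[] = {
    {  8000u,  900u },
    { 24000u, 1000u },
    { 48000u, 1100u },
    { 96000u, 1200u },
};
#define OP_COUNT (sizeof op_table / sizeof op_table[0])

/* Return the lowest operating point whose clock covers the demand
   (in thousands of cycles per second) plus `headroom_pct` percent.
   If nothing is fast enough, saturate at the fastest point. */
static const op_point *dvfs_select(uint32_t demand_khz, uint32_t headroom_pct)
{
    assert(headroom_pct <= 100u);
    uint64_t needed = (uint64_t)demand_khz * (100u + headroom_pct) / 100u;
    for (size_t i = 0; i < OP_COUNT; ++i) {
        if (op_table[i].freq_khz >= needed) {
            return &op_table[i];
        }
    }
    return &op_table[OP_COUNT - 1u];
}
```

For example, with this table a demand of 20,000 kHz and 25% headroom needs 25,000 kHz, so the 48 MHz point is selected. In a real-time system the headroom must be large enough that deadlines still hold at the reduced clock.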

Thread-aware power gating strategies offer another important optimization avenue. Many modern MCUs can selectively disable unused processing units, memory banks, and peripheral interfaces based on what the active threads require. This granular control lets a system keep essential threads running while powering down resources that no active thread needs. The wake-up latency of each gated resource must be accounted for so that responsiveness and real-time deadlines are preserved.
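
One way to derive gating decisions from thread state is to have each thread declare the power domains it needs and to take the union over all active threads. The domain names and functions below are hypothetical and serve only to illustrate the bookkeeping; the actual register writes that switch a domain on or off are device-specific and omitted.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical power domains, one bit each. */
#define DOMAIN_ADC        (UINT32_C(1) << 0)
#define DOMAIN_RADIO      (UINT32_C(1) << 1)
#define DOMAIN_SRAM_BANK1 (UINT32_C(1) << 2)

typedef struct {
    bool     active;            /* thread is runnable or running */
    uint32_t required_domains;  /* domains this thread depends on */
} thread_power_req_t;

/* Union of the domains needed by all active threads. */
uint32_t domains_required(const thread_power_req_t *threads, size_t count)
{
    uint32_t mask = 0u;
    for (size_t i = 0; i < count; i++) {
        if (threads[i].active) {
            mask |= threads[i].required_domains;
        }
    }
    return mask;
}

/* Domains that are currently powered but no longer needed by any thread. */
uint32_t domains_to_gate(uint32_t powered, uint32_t required)
{
    return powered & ~required;
}
```

For example, if only threads that use the ADC and the second SRAM bank are active, the radio domain shows up in the result of `domains_to_gate` and can be switched off.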

Cache management and memory access optimization also affect multi-threaded power efficiency on MCUs that include caches or multiple cores. Placing threads so that they do not evict each other's working sets reduces cache conflicts and the number of accesses to slower, more power-hungry memory, which lowers power consumption. Additionally, thread-local storage and carefully designed data sharing patterns can reduce memory subsystem power draw while maintaining thread isolation and data integrity.

Peripheral power management becomes increasingly complex in multi-threaded environments, where different threads may need the same hardware resources at different times. Advanced power optimization frameworks address this with resource pooling and sharing mechanisms. Coordinated scheduling of shared peripherals lets multiple threads use them efficiently while minimizing both activation overhead and idle power consumption.
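
A reference count is one simple sharing mechanism of this kind: the peripheral is powered on when its first user arrives and powered off when its last user leaves. The structure and function names below are hypothetical, the `powered` flag stands in for a real clock-enable or power register, and on a real system the count updates would need to be protected against concurrent access.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

typedef struct {
    uint8_t  users;           /* number of threads currently using it */
    bool     powered;         /* stand-in for the hardware enable bit */
    uint32_t power_on_count;  /* how many times it was actually switched on */
} shared_peripheral_t;

/* Called by a thread before it uses the peripheral. */
void peripheral_acquire(shared_peripheral_t *p)
{
    if (p->users == 0u) {
        p->powered = true;    /* first user pays the activation cost */
        p->power_on_count++;
    }
    p->users++;
}

/* Called by a thread when it has finished with the peripheral. */
void peripheral_release(shared_peripheral_t *p)
{
    assert(p->users > 0u);
    p->users--;
    if (p->users == 0u) {
        p->powered = false;   /* last user out switches it off */
    }
}
```

With this scheme, two threads whose use of the peripheral overlaps in time cause only a single activation, and the peripheral never stays powered while nobody is using it.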
