Optimizing Notch Filter Throughput in Dense Networks

MAR 17, 2026 | 9 MIN READ

Dense Network Notch Filter Background and Objectives

Dense networks have emerged as a critical infrastructure component in modern telecommunications, characterized by high node density, complex interference patterns, and stringent performance requirements. The proliferation of wireless devices, Internet of Things sensors, and next-generation communication systems has created environments where thousands of devices operate within confined geographical areas, generating unprecedented levels of electromagnetic interference and signal congestion.

The evolution of dense network architectures began with traditional cellular networks but has accelerated dramatically with the advent of 5G, Wi-Fi 6, and beyond. Early network designs relied on simple frequency allocation and power control mechanisms, which proved inadequate for managing interference in high-density deployments. The transition from sparse to dense network topologies introduced fundamental challenges in signal processing, particularly in maintaining signal integrity while maximizing spectral efficiency.

Notch filtering technology has historically served as a cornerstone solution for interference mitigation in communication systems. Traditional notch filters were designed for relatively static interference scenarios with predictable frequency characteristics. However, dense network environments present dynamic, multi-source interference patterns that challenge conventional filtering approaches. The interference landscape in these networks is characterized by rapid temporal variations, wide frequency spreads, and complex spatial distributions.

The primary technical objective centers on developing adaptive notch filtering mechanisms capable of maintaining high throughput performance while effectively suppressing interference in dense network scenarios. This involves creating filters that can dynamically adjust their frequency response characteristics based on real-time interference analysis, while minimizing the impact on desired signal components. The challenge lies in achieving optimal trade-offs between interference suppression depth, filter selectivity, and processing latency.
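The "real-time interference analysis" step above can be illustrated with a minimal sketch. It assumes the interferer lies on one of a few known candidate frequencies and uses the Goertzel recurrence (a single-bin DFT) to pick the strongest one; the function names, sample rate, and tone frequencies are hypothetical choices for illustration, not part of any system described here.

```python
import math

def goertzel_power(samples, f_hz, fs_hz):
    """Squared DFT magnitude of `samples` at f_hz via the Goertzel recurrence."""
    coeff = 2.0 * math.cos(2.0 * math.pi * f_hz / fs_hz)
    s1 = s2 = 0.0
    for x in samples:
        s0 = x + coeff * s1 - s2
        s2, s1 = s1, s0
    return s1 * s1 + s2 * s2 - coeff * s1 * s2

def strongest_interferer(samples, candidates_hz, fs_hz):
    """Return the candidate frequency carrying the most power."""
    return max(candidates_hz, key=lambda f: goertzel_power(samples, f, fs_hz))

# Hypothetical scenario: weak desired tone at 1 kHz, strong interferer at 7 kHz.
FS = 48_000.0
N = 480  # 10 ms block; every candidate below completes whole cycles in it
block = [0.2 * math.sin(2 * math.pi * 1_000 * n / FS)
         + 1.0 * math.sin(2 * math.pi * 7_000 * n / FS) for n in range(N)]

# The selected frequency would then be handed to the notch as its centre.
notch_target_hz = strongest_interferer(block, [3_000, 7_000, 12_000], FS)
```

Checking a handful of candidate bins costs far less than a full FFT, which matters when the analysis has to be repeated every few milliseconds.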

Performance optimization in dense networks requires addressing multiple competing objectives simultaneously. Throughput maximization demands minimal signal distortion and rapid filter adaptation, while interference suppression necessitates precise frequency domain control and sufficient attenuation depth. The temporal dynamics of dense networks further complicate these requirements, as interference patterns can change within milliseconds, requiring filter responses that operate at comparable timescales.

Contemporary research focuses on intelligent filtering architectures that leverage machine learning algorithms, adaptive signal processing techniques, and distributed coordination mechanisms. These approaches aim to predict interference patterns, optimize filter parameters in real-time, and coordinate filtering strategies across multiple network nodes to achieve system-wide performance improvements while maintaining individual link quality standards.

Market Demand for High-Throughput Network Filtering

The telecommunications industry is experiencing unprecedented demand for high-throughput network filtering solutions, driven by the exponential growth in data traffic and the proliferation of connected devices. Mobile network operators worldwide are grappling with capacity constraints as 5G deployments accelerate and Internet of Things applications multiply across industrial and consumer sectors. This surge in network density has created critical bottlenecks where traditional filtering mechanisms struggle to maintain signal quality while processing massive data volumes.

Enterprise networks represent another significant demand driver, particularly in sectors requiring ultra-low latency communications such as financial trading, autonomous vehicle systems, and industrial automation. These applications cannot tolerate the signal degradation that occurs when conventional notch filters become overwhelmed in dense network environments. The market is actively seeking solutions that can maintain filtering precision while scaling throughput capabilities to match modern network demands.

Cloud service providers and data center operators constitute a rapidly expanding customer segment for advanced network filtering technologies. As edge computing architectures proliferate, these operators require filtering solutions capable of handling diverse signal types simultaneously without compromising performance. The shift toward software-defined networking has further intensified requirements for adaptive filtering systems that can dynamically optimize throughput based on real-time network conditions.

The wireless infrastructure market shows particularly strong demand for optimized notch filtering solutions. Base station manufacturers and network equipment vendors are prioritizing technologies that can enhance spectral efficiency while managing interference in increasingly crowded frequency bands. Regulatory pressures to maximize spectrum utilization have made high-throughput filtering capabilities a competitive necessity rather than merely a technical advantage.

Emerging applications in satellite communications and military communications systems are creating specialized demand segments where traditional throughput limitations pose operational risks. These sectors require filtering solutions that maintain performance integrity under extreme density conditions while supporting mission-critical reliability standards. The convergence of these diverse market pressures has established high-throughput network filtering as a strategic technology priority across multiple industries.

Current Notch Filter Limitations in Dense Network Environments

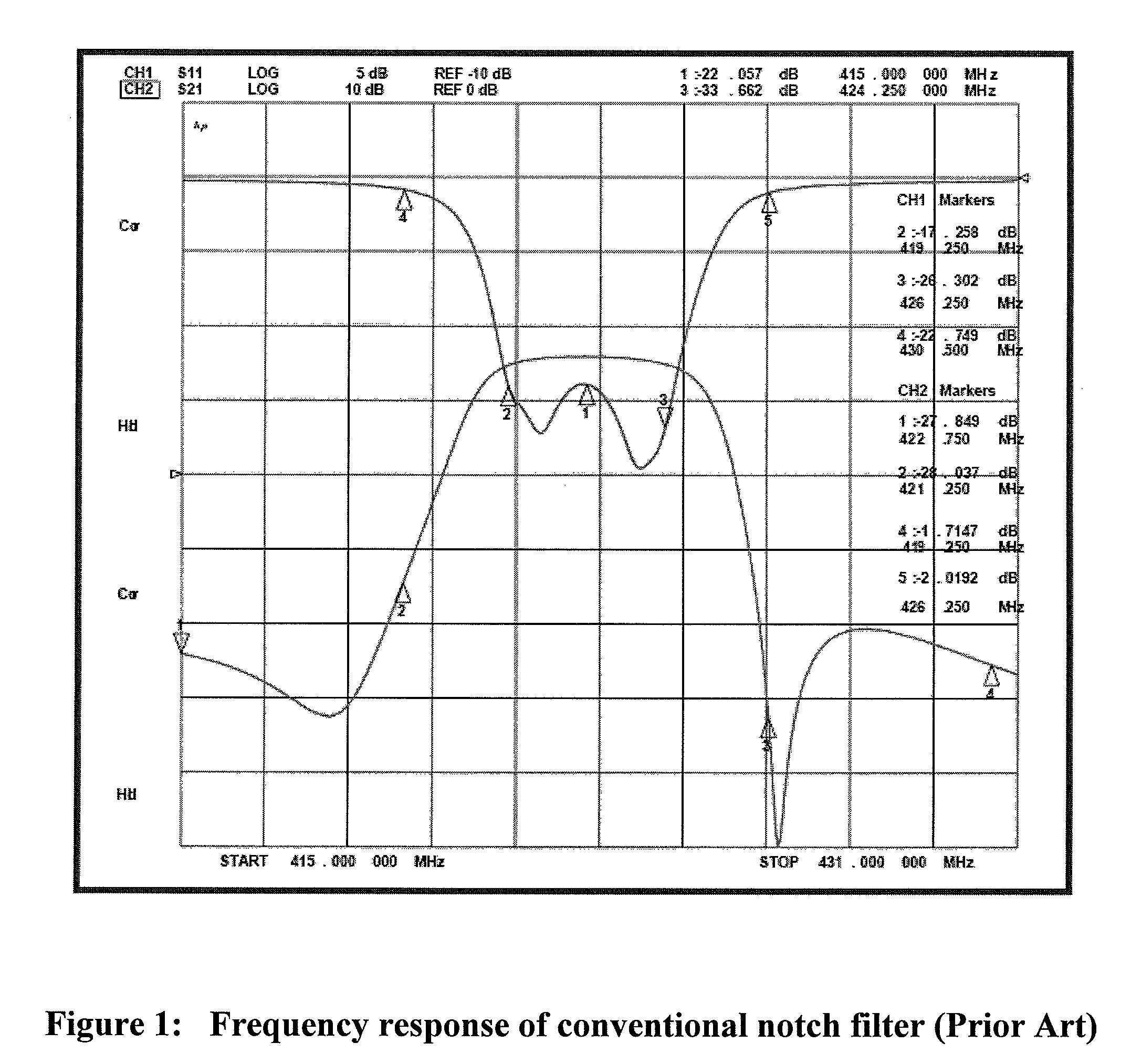

Dense network environments present significant challenges for notch filter implementation, primarily stemming from the fundamental limitations of traditional filtering architectures when confronted with high-density signal scenarios. Current notch filter designs exhibit substantial throughput degradation as network density increases, with performance bottlenecks becoming particularly pronounced when processing multiple simultaneous interference signals across overlapping frequency bands.

The primary constraint lies in the sequential processing nature of conventional notch filter implementations. Traditional designs typically employ cascaded filter stages that process signals in a linear fashion, creating inherent latency accumulation as network complexity grows. This sequential approach becomes increasingly problematic in dense environments where rapid signal adaptation is crucial for maintaining network performance.
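A minimal sketch of such a cascade, assuming each stage is a second-order IIR notch in direct form I (an illustrative choice; the class and function names are hypothetical). Every input sample must pass through all stages in order, so per-sample work grows linearly with the number of interferers being suppressed.

```python
import math

class NotchStage:
    """One second-order IIR notch (direct form I) centred on f0_hz."""
    def __init__(self, f0_hz, fs_hz, r=0.98):
        c = math.cos(2.0 * math.pi * f0_hz / fs_hz)
        self.b = (1.0, -2.0 * c, 1.0)       # zeros on the unit circle
        self.a = (-2.0 * r * c, r * r)      # a1, a2 (a0 = 1), poles at radius r
        self.x1 = self.x2 = self.y1 = self.y2 = 0.0

    def step(self, x):
        y = (self.b[0] * x + self.b[1] * self.x1 + self.b[2] * self.x2
             - self.a[0] * self.y1 - self.a[1] * self.y2)
        self.x2, self.x1 = self.x1, x
        self.y2, self.y1 = self.y1, y
        return y

def run_cascade(stages, samples):
    """Push each sample through every stage in series."""
    out = []
    for x in samples:
        for stage in stages:                # strictly sequential
            x = stage.step(x)
        out.append(x)
    return out

def rms(values):
    return math.sqrt(sum(v * v for v in values) / len(values))

# Hypothetical test signal: desired 1 kHz tone plus interferers at 5 and 9 kHz.
FS = 48_000.0
signal = [sum(math.sin(2 * math.pi * f * n / FS) for f in (1_000, 5_000, 9_000))
          for n in range(9_600)]
cleaned = run_cascade([NotchStage(5_000, FS), NotchStage(9_000, FS)], signal)
residual_rms = rms(cleaned[2_400:])         # skip the start-up transient
```

The stages here are not gain-normalised, so the surviving 1 kHz tone comes out a few percent above its original level; a production design would normally correct for that.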

Bandwidth limitations represent another critical constraint affecting notch filter effectiveness in dense networks. Current filter designs often struggle to keep the notch narrow while maintaining adequate rejection depth, particularly when multiple closely spaced interfering signals require simultaneous suppression. The trade-off between filter selectivity and processing speed creates operational limitations that become more severe as signal density increases.
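The selectivity versus speed trade-off can be put in rough numbers. The sketch below assumes a second-order IIR notch whose poles sit at radius r just inside the unit circle (an assumption for illustration): the notch width shrinks in proportion to 1 - r, while the transient, which decays as r**n, lasts correspondingly longer. The function names are hypothetical, and the bandwidth formula is an approximation that holds only for r close to 1.

```python
import math

def notch_bandwidth_hz(r, fs_hz):
    """Approximate -3 dB notch width for pole radius r (valid for r near 1)."""
    return (1.0 - r) * fs_hz / math.pi

def settling_samples(r, fraction=0.01):
    """Samples until the transient envelope r**n decays to `fraction`."""
    return math.ceil(math.log(fraction) / math.log(r))

FS = 1_000_000.0  # hypothetical 1 MHz sample rate
for r in (0.9, 0.99, 0.999):
    print(f"r={r}: width ~{notch_bandwidth_hz(r, FS):.0f} Hz, "
          f"settles in ~{settling_samples(r)} samples")
```

Making the notch ten times narrower costs roughly ten times the settling time, which is why very selective notches are slow to follow a moving interferer.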

Power consumption constraints further compound these limitations, as traditional notch filter implementations require significant computational resources to maintain performance standards in dense environments. The energy overhead associated with real-time filter coefficient updates and multiple simultaneous filtering operations creates scalability challenges that limit practical deployment in resource-constrained network nodes.

Dynamic adaptation capabilities of existing notch filter solutions remain insufficient for rapidly changing dense network conditions. Current implementations typically rely on relatively slow feedback mechanisms that cannot adequately track fast-changing interference patterns characteristic of high-density network environments. This adaptation lag results in suboptimal interference suppression and reduced overall network throughput.
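Adaptation lag can be seen in a small sketch of an LMS adaptive notch. This is the classic two-weight noise canceller with a sine and cosine reference at the interferer frequency, chosen here for illustration rather than taken from any implementation described above. The step size `mu` sets how quickly the weights lock onto the interferer; all names and values are hypothetical.

```python
import math

def lms_notch(samples, f0_hz, fs_hz, mu):
    """Cancel a tone at f0_hz with a two-weight LMS canceller; returns the error signal."""
    w_cos = w_sin = 0.0
    out = []
    for n, d in enumerate(samples):
        phase = 2.0 * math.pi * f0_hz * n / fs_hz
        ref_c, ref_s = math.cos(phase), math.sin(phase)
        e = d - (w_cos * ref_c + w_sin * ref_s)   # input minus interferer estimate
        w_cos += 2.0 * mu * e * ref_c             # LMS weight updates
        w_sin += 2.0 * mu * e * ref_s
        out.append(e)
    return out

def rms(values):
    return math.sqrt(sum(v * v for v in values) / len(values))

# Hypothetical input: a lone 5 kHz interferer with unknown phase.
FS, F0 = 48_000.0, 5_000.0
x = [math.sin(2 * math.pi * F0 * n / FS + 0.7) for n in range(4_000)]

fast = lms_notch(x, F0, FS, mu=0.01)    # converges in a few hundred samples
slow = lms_notch(x, F0, FS, mu=0.001)   # roughly ten times slower
```

A larger `mu` tracks changes sooner but also widens the effective notch and lets more of the desired signal leak into the weight updates, so the step size is itself a throughput trade-off.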

Manufacturing tolerances and component variations introduce additional limitations in dense network applications. Traditional analog notch filter implementations suffer from temperature drift and component aging effects that become more problematic when precise frequency targeting is required across multiple interference sources. These physical constraints limit the reliability and consistency of filter performance in demanding operational environments.

The integration complexity of current notch filter solutions with existing network infrastructure presents implementation challenges that restrict widespread adoption in dense network scenarios. Legacy compatibility requirements and standardization constraints often prevent the deployment of more advanced filtering techniques that could potentially address throughput limitations more effectively.

Existing Throughput Optimization Solutions for Notch Filters

01 Optical notch filter design for high throughput

Notch filters can be designed with specific optical characteristics to maximize light throughput while maintaining narrow rejection bands. These designs optimize the filter structure, coating layers, and substrate materials to achieve high transmission efficiency in pass bands while effectively blocking unwanted wavelengths. Advanced multi-layer thin film coatings and interference-based designs enable precise control over spectral characteristics.

- Optical notch filter design for high throughput: Notch filters can be designed with specific optical characteristics to maximize light throughput while maintaining narrow rejection bands. These designs optimize the filter structure, coating layers, and substrate materials to achieve high transmission efficiency in pass bands while effectively blocking unwanted wavelengths. Advanced thin-film deposition techniques and multi-layer interference coatings enable precise control over spectral characteristics and throughput performance.

- Tunable notch filter systems with adjustable throughput: Tunable notch filter configurations allow dynamic adjustment of the rejection wavelength while maintaining optimal throughput characteristics. These systems incorporate mechanisms for real-time wavelength tuning through various methods including mechanical adjustment, temperature control, or electro-optical modulation. The tunable designs enable adaptive filtering across different spectral ranges without compromising transmission efficiency in the pass bands.

- Multi-stage notch filter arrays for enhanced performance: Multiple notch filters can be arranged in series or parallel configurations to achieve improved rejection characteristics and overall system throughput. These array designs distribute the filtering function across multiple stages, reducing individual filter requirements and minimizing insertion loss. The cascaded or parallel arrangement enables broader rejection bands or multiple discrete notch frequencies while maintaining high transmission in desired spectral regions.

- Integrated notch filter modules with signal processing: Notch filter systems can be integrated with signal processing circuits and control electronics to optimize throughput performance. These integrated modules combine filtering elements with amplification, compensation, and feedback mechanisms to maintain consistent throughput across varying operating conditions. The integration enables real-time monitoring and adjustment of filter parameters to maximize signal transmission while maintaining effective noise rejection.

- Compact notch filter structures for space-constrained applications: Miniaturized notch filter designs enable high throughput performance in compact form factors suitable for portable and integrated systems. These structures utilize advanced fabrication techniques, novel materials, and optimized geometries to achieve effective filtering in reduced physical dimensions. The compact designs maintain spectral performance while minimizing footprint and enabling integration into space-limited applications without sacrificing throughput efficiency.

02 Tunable notch filter systems with adjustable throughput

Tunable notch filter configurations allow dynamic adjustment of the rejection wavelength and bandwidth, enabling optimization of throughput for different applications. These systems incorporate mechanical, electro-optical, or acousto-optical tuning mechanisms that can shift the notch frequency while maintaining high transmission efficiency. The adjustability provides flexibility in managing signal throughput across varying operational conditions.

03 Digital signal processing for notch filter throughput enhancement

Digital notch filtering techniques employ algorithms and processing methods to improve signal throughput by selectively attenuating specific frequency components while preserving desired signals. These approaches utilize adaptive filtering, fast Fourier transforms, and real-time processing to achieve high data rates and minimal signal degradation. Implementation in hardware or software enables efficient handling of high-bandwidth signals.

04 Cascaded notch filter architectures for improved performance

Multiple notch filters arranged in series or parallel configurations can enhance overall system throughput by distributing filtering tasks and reducing individual filter stress. These architectures allow for broader rejection bands or multiple discrete notch frequencies while maintaining high transmission in pass bands. The cascaded approach enables better impedance matching and reduced insertion loss.

05 Integrated notch filter devices with optimized coupling

Integrated notch filter implementations incorporate optimized coupling mechanisms and miniaturized designs to maximize throughput in compact form factors. These devices utilize waveguide structures, resonator coupling, and impedance matching techniques to minimize losses and reflections. Integration with other components enables system-level optimization of signal flow and processing efficiency.
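For the digital approach listed under 03, a minimal sketch of how a single notch "selectively attenuates specific frequency components while preserving desired signals" is given below. It assumes a standard second-order IIR notch with zeros on the unit circle and poles at radius r at the same angle; the function names and numeric values are hypothetical and are not drawn from any of the solutions summarized in this section.

```python
import cmath
import math

def notch_coefficients(f0_hz, fs_hz, r):
    """Return (b, a) for a second-order IIR notch centred on f0_hz.

    r is the pole radius (0 < r < 1); values closer to 1 narrow the notch.
    """
    c = math.cos(2.0 * math.pi * f0_hz / fs_hz)
    b = [1.0, -2.0 * c, 1.0]          # zeros on the unit circle at +/- f0
    a = [1.0, -2.0 * r * c, r * r]    # poles just inside, at the same angle
    return b, a

def gain_db(b, a, f_hz, fs_hz):
    """Magnitude response in dB at f_hz (floored to avoid log of zero)."""
    z1 = cmath.exp(-1j * 2.0 * math.pi * f_hz / fs_hz)   # z^-1 on the unit circle
    num = b[0] + b[1] * z1 + b[2] * z1 * z1
    den = a[0] + a[1] * z1 + a[2] * z1 * z1
    return 20.0 * math.log10(max(abs(num / den), 1e-12))

FS = 1_000_000.0                       # hypothetical 1 MHz sample rate
b, a = notch_coefficients(100_000.0, FS, r=0.99)
at_notch_db = gain_db(b, a, 100_000.0, FS)   # deep null at the interferer
far_away_db = gain_db(b, a, 300_000.0, FS)   # close to 0 dB in the passband
```

Retuning the notch only requires recomputing one cosine, which is what makes this structure attractive when the centre frequency has to follow a moving interferer.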

Key Players in Network Equipment and Filter Technology

The competitive landscape for optimizing notch filter throughput in dense networks represents a mature yet rapidly evolving sector driven by increasing network density demands and 5G/6G deployment requirements. The market spans telecommunications infrastructure, semiconductor solutions, and defense applications, with significant growth potential as network complexity intensifies. Technology maturity varies considerably across players, with established semiconductor giants like Texas Instruments, NXP Semiconductors, and STMicroelectronics leading in component-level solutions, while telecommunications leaders including Huawei, Ericsson, and Juniper Networks focus on system-level implementations. Research institutions such as University of Electronic Science & Technology of China and Xi'an Jiaotong University contribute fundamental advances, while defense contractors like Lockheed Martin and Raytheon drive specialized applications requiring high-performance filtering in challenging environments.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed advanced notch filter optimization techniques for dense 5G networks, implementing adaptive filtering algorithms that dynamically adjust filter parameters based on real-time network conditions. Their solution incorporates machine learning-based interference prediction to proactively optimize notch filter placement and bandwidth allocation. The system is reported to achieve up to a 40% improvement in spectral efficiency while maintaining signal quality in high-density deployment scenarios. Their approach includes a distributed processing architecture that enables parallel filter optimization across multiple network nodes, reducing computational overhead and improving overall network throughput performance.

Strengths: Leading 5G infrastructure expertise, comprehensive end-to-end solutions. Weaknesses: Limited market access due to geopolitical restrictions in some regions.

Texas Instruments Incorporated

Technical Solution: Texas Instruments provides high-performance analog and digital signal processing solutions for notch filter implementation in dense networks. Their DSP chips feature dedicated hardware accelerators for real-time filter coefficient calculation and adaptive filtering operations. The company's solution includes optimized algorithms that can process multiple notch filters simultaneously with minimal latency impact. Their integrated circuits support up to 16 concurrent notch filters with automatic gain control and dynamic range optimization, enabling efficient interference suppression in crowded spectrum environments while maintaining system throughput.

Strengths: Proven semiconductor expertise, low-power efficient designs. Weaknesses: Primarily hardware-focused, limited software optimization capabilities.

Core Innovations in High-Performance Notch Filter Design

Method and apparatus for monitoring channel performance in dense wavelength division multiplexed (DWDM) optical networks

Patent EP1443685A1 (inactive)

Innovation

- A method and apparatus using a tunable optical channel selection filter and a tunable optical notch filter with controlled bandwidth, coupled with an optical signal to electrical converter and signal processing means, allowing for simultaneous channel performance monitoring, including BER and spectral analyses without the need for parallel paths.

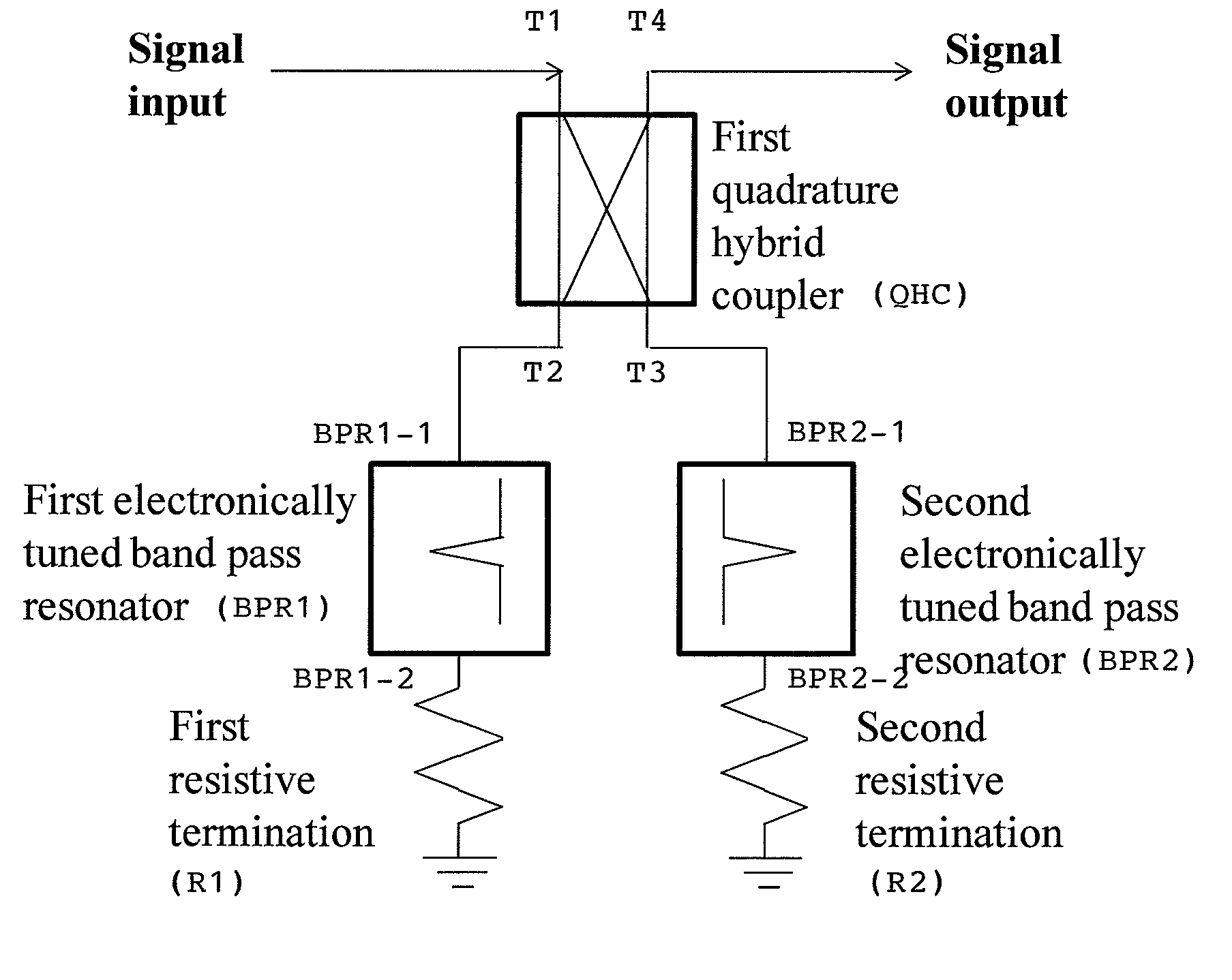

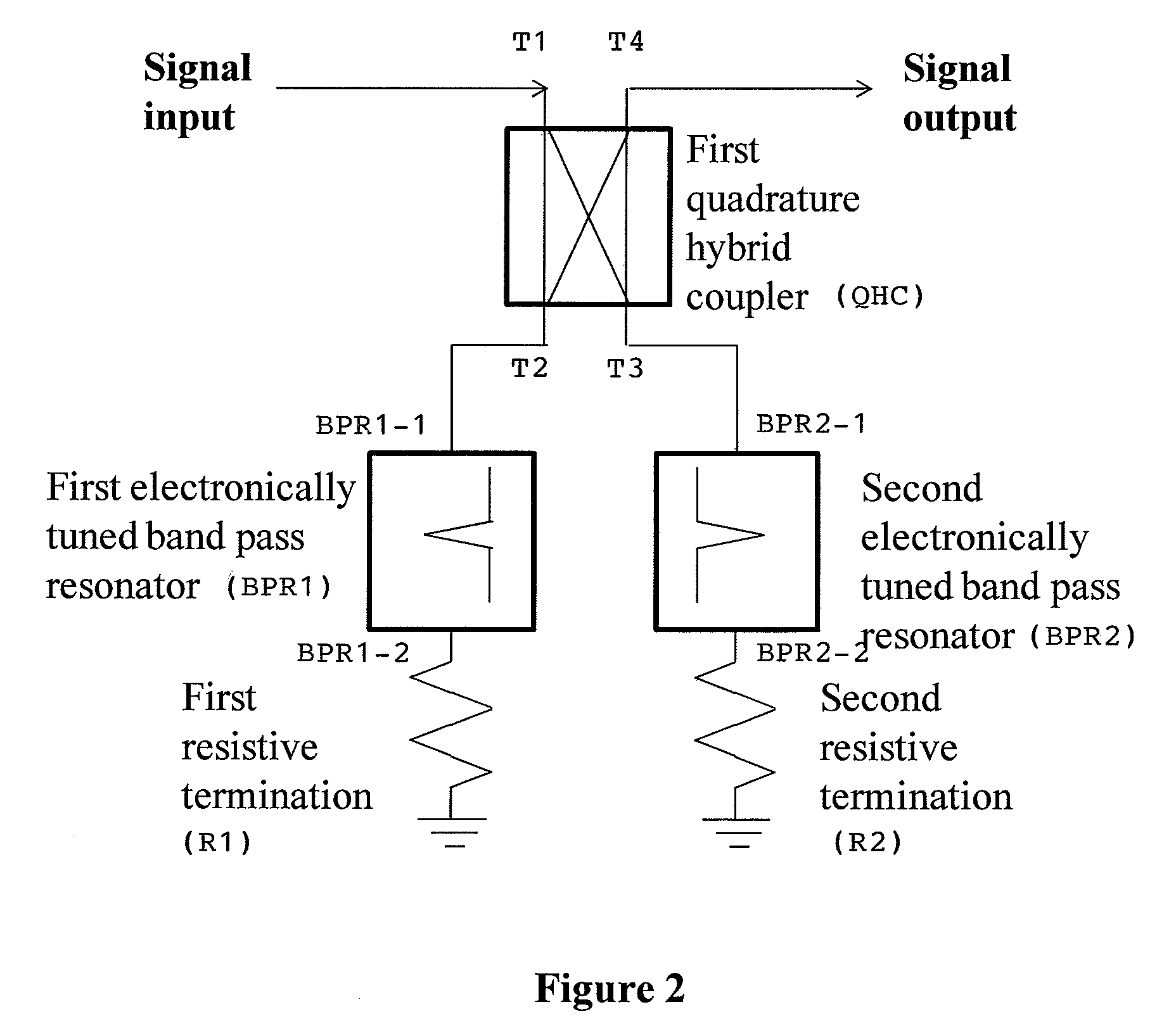

Electronically tunable, absorptive, low-loss notch filter

Patent US8013690B2 (inactive)

Innovation

- An electronically tunable, absorptive notch filter design utilizing a quadrature hybrid coupler with series-only electronically tunable band pass resonators and resistive terminations, which absorbs signals at the notch frequency and reflects out-of-notch frequencies with minimal loss, enabling high power handling and fast tuning speeds.

Network Performance Standards and Compliance Requirements

Network performance standards for notch filter optimization in dense networks are primarily governed by international telecommunications standards organizations including the International Telecommunication Union (ITU), Institute of Electrical and Electronics Engineers (IEEE), and 3rd Generation Partnership Project (3GPP). These bodies establish fundamental requirements for signal quality, interference mitigation, and spectral efficiency that directly impact notch filter design parameters.

The ITU-R recommendations specify stringent adjacent channel interference rejection ratios, typically requiring 60 dB to 80 dB of suppression for dense frequency allocations. IEEE 802.11 standards mandate specific out-of-band emission limits that notch filters must achieve to maintain coexistence in crowded spectrum environments. These requirements directly influence filter steepness, stopband attenuation depth, and transition bandwidth specifications.
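To make the decibel figures concrete, the short sketch below converts a suppression level into linear ratios and computes the interferer level left after the notch. The helper names and the example levels are hypothetical; only the standard dB definitions are assumed.

```python
def db_to_amplitude_ratio(db):
    """Convert a level in dB to a linear amplitude (voltage) ratio."""
    return 10.0 ** (db / 20.0)

def db_to_power_ratio(db):
    """Convert a level in dB to a linear power ratio."""
    return 10.0 ** (db / 10.0)

def residual_level_dbm(interferer_dbm, suppression_db):
    """Interferer level after a notch providing `suppression_db` of rejection."""
    return interferer_dbm - suppression_db

def meets_requirement(measured_suppression_db, required_suppression_db):
    """True when the measured rejection is at least the required rejection."""
    return measured_suppression_db >= required_suppression_db

# 60 dB of suppression leaves one thousandth of the amplitude,
# or one millionth of the power.
amplitude_left = db_to_amplitude_ratio(-60.0)
power_left = db_to_power_ratio(-60.0)
```

For example, a hypothetical interferer arriving at -30 dBm and notched by 60 dB would remain at -90 dBm.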

Compliance with electromagnetic compatibility (EMC) standards such as CISPR and FCC Part 15 regulations establishes mandatory spurious emission limits. Dense network deployments must demonstrate adherence to these standards through rigorous testing protocols that validate notch filter performance under various operating conditions. The standards specify measurement methodologies, test equipment calibration requirements, and acceptable tolerance margins for filter characteristics.

Quality of Service (QoS) frameworks in the ITU-T Y.1540 series define performance parameters for packet loss, delay variation, and throughput. Notch filter implementations must maintain these service levels while providing interference suppression, creating design constraints that balance filtering effectiveness with signal integrity preservation.

Regional regulatory compliance adds complexity through varying spectral masks and power density limitations. European Telecommunications Standards Institute (ETSI) harmonized standards differ from Federal Communications Commission (FCC) requirements, necessitating adaptive filter designs capable of meeting multiple regulatory frameworks simultaneously.

Emerging 5G and beyond standards introduce additional compliance challenges through ultra-low latency requirements and massive MIMO implementations. These specifications demand notch filter solutions that maintain nanosecond-level timing accuracy while processing multiple signal paths concurrently, pushing traditional filter architectures toward innovative digital signal processing approaches.

Energy Efficiency Considerations in Dense Network Filtering

Energy efficiency has emerged as a critical design parameter in dense network filtering systems, particularly when implementing notch filters for interference mitigation. The proliferation of wireless devices and the increasing density of network deployments have created scenarios where power consumption directly impacts both operational costs and system sustainability. Traditional notch filtering approaches often prioritize performance metrics such as selectivity and rejection ratios while overlooking the energy implications of their implementation.

The relationship between filter complexity and power consumption becomes particularly pronounced in dense network environments. Higher-order notch filters, while offering superior frequency selectivity, typically require more computational resources and active components, leading to increased energy consumption. This trade-off becomes especially challenging when multiple notch filters operate simultaneously to address various interference sources within the same network infrastructure.
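The link between filter order and compute load can be sketched with simple arithmetic. The estimate below assumes each notch is built from direct-form second-order sections costing five multiply-accumulates per sample; the energy-per-operation figure is a placeholder parameter that the caller must supply, not a measured value, and the function names are hypothetical.

```python
def macs_per_second(num_notches, sections_per_notch, fs_hz, macs_per_section=5):
    """Multiply-accumulates per second for a bank of cascaded biquad notches."""
    return num_notches * sections_per_notch * macs_per_section * fs_hz

def filter_power_watts(mac_rate, joules_per_mac):
    """Arithmetic power for a given MAC rate; joules_per_mac is an assumed input."""
    return mac_rate * joules_per_mac

# Hypothetical node: four 4th-order notches (two sections each) at 1 MS/s.
load = macs_per_second(num_notches=4, sections_per_notch=2, fs_hz=1_000_000)
```

Doubling either the filter order or the number of simultaneous notches doubles the arithmetic load, which is the scaling behind the trade-off described above.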

Digital signal processing implementations of notch filters present unique energy efficiency opportunities and challenges. Adaptive algorithms that dynamically adjust filter parameters based on real-time interference conditions can optimize energy usage by activating filtering functions only when necessary. However, the continuous monitoring and adjustment processes themselves consume additional power, requiring careful balance between adaptive capability and energy overhead.

Hardware acceleration techniques, including dedicated filtering processors and field-programmable gate arrays, offer potential solutions for reducing energy consumption while maintaining high throughput. These specialized implementations can achieve significant power savings compared to general-purpose processors, particularly in scenarios requiring parallel processing of multiple frequency bands or simultaneous operation of numerous notch filters.

Power management strategies specific to dense network filtering include dynamic voltage scaling, clock gating, and selective filter activation based on traffic patterns and interference levels. These approaches enable systems to reduce energy consumption during periods of low activity while maintaining rapid response capabilities when interference mitigation becomes critical.
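Selective filter activation can be sketched as a threshold gate with hysteresis, so that the notch is powered only while measured interference justifies it and does not chatter near the threshold. The class name, thresholds, and power trace are hypothetical illustrations rather than values from any deployed system.

```python
class NotchGate:
    """Enable the notch above on_threshold; disable it again below off_threshold."""
    def __init__(self, on_threshold, off_threshold):
        if off_threshold >= on_threshold:
            raise ValueError("off_threshold must be below on_threshold")
        self.on_threshold = on_threshold
        self.off_threshold = off_threshold
        self.active = False

    def update(self, interference_power):
        """Feed one interference power measurement; returns the new filter state."""
        if not self.active and interference_power >= self.on_threshold:
            self.active = True
        elif self.active and interference_power <= self.off_threshold:
            self.active = False
        return self.active

def duty_cycle(gate, power_trace):
    """Fraction of measurements during which the notch was running."""
    states = [gate.update(p) for p in power_trace]
    return sum(states) / len(states)

# Hypothetical interference power measurements (arbitrary linear units).
trace = [0.1, 0.8, 1.2, 0.9, 0.6, 0.4, 0.7, 1.1]
active_fraction = duty_cycle(NotchGate(on_threshold=1.0, off_threshold=0.5), trace)
```

The gap between the two thresholds trades energy savings against the risk of switching the filter off while an interferer is merely fading.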

The integration of machine learning algorithms for predictive interference management represents an emerging approach to energy-efficient notch filtering. By anticipating interference patterns and pre-positioning filter resources, these systems can minimize reactive power consumption while maintaining optimal network performance in dense deployment scenarios.
