Photonic Hardware Acceleration for Transformer Models

MAR 11, 2026 | 9 MIN READ

Photonic AI Acceleration Background and Objectives

The convergence of photonic computing and artificial intelligence represents a paradigm shift in computational architecture, driven by the exponential growth in AI model complexity and the physical limitations of traditional electronic processors. Transformer models, which have revolutionized natural language processing and computer vision, demand unprecedented computational resources that strain current hardware capabilities. The attention mechanism at the heart of these models requires massive matrix multiplications and parallel processing, creating bottlenecks that photonic systems are uniquely positioned to address.

Photonic computing uses the physical properties of light to carry out computation, chiefly analog linear operations, with signals that propagate at the speed of light in the waveguide and dissipate little energy in transit. Whereas electronic interconnects are limited by resistive loss and heat generation, photonic processors can execute many operations simultaneously through wavelength division multiplexing and spatial parallelism. This parallelism maps well onto transformer architectures, where attention heads operate independently and matrix operations can be distributed across optical channels.
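As a rough illustration of how wavelength division multiplexing maps onto a matrix-vector product, the sketch below treats each input element as the optical power on its own wavelength channel, each weight as a per-channel transmission factor between 0 and 1, and each photodetector as a sum over channels. The function name `wdm_matvec` and the non-negative, intensity-only model are assumptions made for illustration; real devices also have to handle signed values, noise, and crosstalk.

```python
import numpy as np

def wdm_matvec(transmission, channel_power):
    """Idealized WDM matrix-vector product (illustrative model, not a device simulation).

    transmission[i, k]: fraction of wavelength k passed to detector i (0..1).
    channel_power[k]:   optical power carried on wavelength k (>= 0).
    """
    transmission = np.asarray(transmission, dtype=float)
    channel_power = np.asarray(channel_power, dtype=float)
    if np.any((transmission < 0) | (transmission > 1)):
        raise ValueError("passive weights must lie in [0, 1]")
    # Each wavelength is attenuated independently (elementwise product) ...
    weighted = transmission * channel_power[np.newaxis, :]
    # ... and each photodetector sums the power of all wavelengths it receives.
    return weighted.sum(axis=1)

weights = np.array([[0.5, 0.25, 1.0],
                    [0.0, 1.0, 0.5]])
x = np.array([2.0, 4.0, 1.0])
y = wdm_matvec(weights, x)
```

In this idealized model the detector outputs equal `weights @ x`; all channels are weighted at once, which is the parallelism the paragraph above refers to.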

The historical evolution of photonic AI acceleration traces back to early optical computing research in the 1980s, progressing through analog optical processors to modern integrated photonic circuits. Recent breakthroughs in silicon photonics, neuromorphic photonic chips, and optical neural networks have demonstrated the feasibility of large-scale photonic AI systems. The integration of photonic and electronic components has matured to enable hybrid architectures that combine the speed of light-based computation with the precision of digital control.

Current technological objectives focus on developing photonic hardware specifically optimized for transformer model inference and training. Key targets include achieving sub-nanosecond latency for attention computations, reducing energy consumption by orders of magnitude compared to GPU-based systems, and scaling to support models with trillions of parameters. The primary goal is to create photonic accelerators capable of handling the quadratic complexity of self-attention mechanisms while maintaining the numerical precision required for accurate model performance.

The strategic importance of photonic AI acceleration extends beyond performance improvements to address fundamental scalability challenges in artificial intelligence. As transformer models continue to grow in size and complexity, traditional computing approaches face insurmountable power and thermal constraints. Photonic solutions offer a pathway to sustainable AI scaling, enabling the deployment of sophisticated models in edge computing environments and reducing the environmental impact of large-scale AI training and inference operations.

Market Demand for Transformer Model Acceleration Solutions

The global artificial intelligence market is experiencing unprecedented growth, with transformer models serving as the backbone for numerous breakthrough applications across natural language processing, computer vision, and multimodal AI systems. Large language models such as GPT, BERT, and their variants have demonstrated remarkable capabilities in text generation, translation, summarization, and reasoning tasks, driving widespread adoption across industries including healthcare, finance, education, and entertainment.

Enterprise demand for transformer-based solutions continues to surge as organizations seek to integrate advanced AI capabilities into their operations. Cloud service providers report exponential increases in computational workloads dedicated to transformer inference and training, with many enterprises requiring real-time or near-real-time processing capabilities for customer-facing applications. The proliferation of conversational AI, automated content generation, and intelligent document processing has created substantial market pressure for more efficient acceleration solutions.

Current hardware acceleration approaches face significant limitations in meeting escalating performance requirements. Traditional GPU-based solutions, while effective, encounter bottlenecks in memory bandwidth, energy consumption, and cost-effectiveness when scaling to larger model sizes and higher throughput demands. The attention mechanism, fundamental to transformer architectures, presents particular computational challenges due to its quadratic complexity and memory-intensive operations.
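To make the quadratic scaling concrete, the following sketch counts the multiply-accumulate operations and the score-matrix memory for one self-attention layer on a single sequence. The function name `attention_cost` and the 2-byte value size are assumptions for illustration, and the count ignores the Q, K, V, and output projections.

```python
def attention_cost(seq_len, d_model, bytes_per_value=2):
    """Rough cost of one self-attention layer on a single sequence.

    Returns (macs, score_bytes):
      macs        - multiply-accumulates for Q @ K^T and scores @ V, summed over all heads
      score_bytes - memory for one head's seq_len x seq_len score matrix
    """
    # Q @ K^T and scores @ V each take about seq_len^2 * d_model multiply-accumulates.
    macs = 2 * seq_len * seq_len * d_model
    score_bytes = seq_len * seq_len * bytes_per_value
    return macs, score_bytes

for n in (1024, 2048, 4096):
    macs, score_bytes = attention_cost(n, d_model=768)
    print(f"seq_len={n}: {macs:,} MACs, {score_bytes:,} bytes per head")
```

Doubling the sequence length quadruples both figures, which is why long-context inference stresses memory bandwidth as much as arithmetic throughput.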

Data centers worldwide are grappling with the enormous energy costs associated with transformer model deployment. The computational intensity of these models has led to substantial increases in operational expenses, prompting organizations to seek more energy-efficient acceleration alternatives. This economic pressure has intensified interest in novel hardware approaches that can deliver superior performance-per-watt ratios.

The market opportunity for photonic hardware acceleration emerges from these converging demands for higher performance, lower energy consumption, and improved cost-effectiveness. Photonic computing offers unique advantages for transformer workloads, including massive parallelism, reduced energy dissipation, and potential for ultra-high bandwidth operations. The linear algebraic operations central to transformer computations align well with photonic processing capabilities, particularly matrix multiplications and convolution operations.

Industry analysts project substantial growth in the AI accelerator market, with specialized hardware solutions gaining increasing market share. The demand spans multiple deployment scenarios, from edge devices requiring efficient inference capabilities to large-scale data centers managing extensive training workloads. This diverse market landscape presents significant opportunities for innovative acceleration technologies that can address specific performance and efficiency requirements across different use cases.

Current State of Photonic Computing for AI Workloads

Photonic computing for AI workloads has emerged as a promising paradigm to address the computational bottlenecks inherent in traditional electronic processors. Current photonic AI accelerators leverage the unique properties of light, including massive parallelism, high bandwidth, and low-latency signal propagation, to perform matrix-vector multiplications and convolution operations that are fundamental to neural network computations.

Several leading research institutions and companies have developed functional photonic neural network accelerators. MIT's photonic tensor processing unit demonstrates significant energy efficiency improvements over electronic counterparts, achieving up to 100x reduction in power consumption for specific inference tasks. Lightmatter has commercialized photonic AI chips that integrate seamlessly with existing data center infrastructure, focusing primarily on convolutional neural networks and fully connected layers.

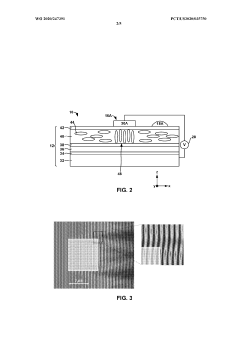

The technology landscape reveals distinct approaches to photonic AI acceleration. Coherent photonic systems utilize interferometric structures and phase modulation to perform complex mathematical operations, offering high precision but requiring sophisticated control mechanisms. Incoherent systems, conversely, rely on intensity-based computations that are more robust to environmental variations but may sacrifice computational precision.
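One common interferometric building block in coherent designs is the Mach-Zehnder interferometer (MZI). The sketch below builds the 2x2 transfer matrix of an idealized, lossless MZI from two 50:50 beam splitters and two phase shifters. The parameter names `theta` and `phi` and the placement of the phase shifters are one conventional choice, assumed here for illustration rather than taken from any specific device.

```python
import numpy as np

# Ideal 50:50 beam splitter (lossless directional coupler).
BEAM_SPLITTER = np.array([[1, 1j], [1j, 1]]) / np.sqrt(2)

def mzi(theta, phi):
    """2x2 transfer matrix of an ideal MZI: external phase phi, internal phase theta."""
    internal = np.diag([np.exp(1j * theta), 1.0])
    external = np.diag([np.exp(1j * phi), 1.0])
    # Light passes the external shifter first, then splitter, internal shifter, splitter.
    return BEAM_SPLITTER @ internal @ BEAM_SPLITTER @ external

u = mzi(theta=np.pi / 2, phi=0.3)
# Lossless optics: the matrix is unitary, so total optical power is conserved.
is_unitary = np.allclose(u.conj().T @ u, np.eye(2))
# Light enters the top port only; theta sets how power divides between the outputs.
out_power = np.abs(u @ np.array([1.0, 0.0])) ** 2
```

Meshes of such 2x2 blocks can realize larger unitary matrices, which is where the need for precise phase control noted above comes from: every phase error perturbs the implemented weights.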

Current implementations face several technical constraints that limit their applicability to transformer architectures. Most existing photonic accelerators excel at linear operations but struggle with the non-linear activation functions and attention mechanisms central to transformer models. The softmax operations in attention layers, normalization procedures, and residual connections present particular challenges for photonic implementation.
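The division of labor described above can be sketched numerically: matrix products are sent to a stand-in for the analog optical core, modeled here simply as an exact product plus Gaussian read-out noise, while softmax is computed digitally. The function names, the noise model, and the 1% noise level are all assumptions for illustration, not measurements of any real accelerator.

```python
import numpy as np

def noisy_matmul(a, b, rng, noise_std=0.01):
    """Stand-in for an analog optical matmul: exact product plus Gaussian read-out noise."""
    exact = a @ b
    scale = np.abs(exact).max() if exact.size else 1.0
    return exact + rng.normal(0.0, noise_std * scale, size=exact.shape)

def softmax(x):
    """Row-wise softmax, computed digitally (the electronic part of the hybrid)."""
    shifted = x - x.max(axis=-1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=-1, keepdims=True)

def hybrid_attention(q, k, v, rng, noise_std=0.01):
    """Single-head attention with linear steps on the noisy core and softmax in electronics."""
    scores = noisy_matmul(q, k.T, rng, noise_std) / np.sqrt(q.shape[-1])  # "photonic"
    weights = softmax(scores)                                             # electronic
    return noisy_matmul(weights, v, rng, noise_std)                       # "photonic"

rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((8, 16)) for _ in range(3))
approx = hybrid_attention(q, k, v, rng, noise_std=0.01)
exact = hybrid_attention(q, k, v, rng, noise_std=0.0)
max_error = np.abs(approx - exact).max()
```

Each hand-off between the two domains in `hybrid_attention` corresponds to an optical-electrical conversion in hardware, so the softmax step costs conversion latency and energy in addition to its own computation.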

Manufacturing scalability remains a critical bottleneck. While laboratory demonstrations show impressive performance metrics, transitioning to commercial-scale production requires overcoming fabrication tolerances, thermal stability issues, and integration complexities with electronic control systems. Silicon photonics platforms offer the most mature manufacturing ecosystem, though they impose wavelength and bandwidth limitations.

Recent advances in neuromorphic photonics and optical memory systems are beginning to address some transformer-specific requirements. Programmable photonic processors with reconfigurable interconnects show promise for implementing the dynamic attention patterns characteristic of transformer models, though current implementations remain limited in scale and flexibility compared to the computational demands of large language models.

Existing Photonic Solutions for Neural Network Acceleration

01 Photonic integrated circuits for neural network acceleration

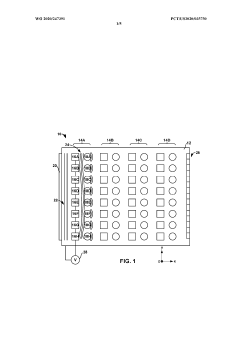

Photonic integrated circuits can be designed to accelerate neural network computations by utilizing optical components such as waveguides, modulators, and photodetectors. These circuits leverage the high bandwidth and low latency of photonic signals to perform matrix multiplications and other neural network operations at significantly higher speeds compared to traditional electronic circuits. The integration of photonic elements enables parallel processing of multiple data streams, making them particularly suitable for deep learning and artificial intelligence applications.

- Photonic integrated circuits for neural network acceleration: Photonic integrated circuits can be designed to accelerate neural network computations by utilizing optical components such as waveguides, modulators, and photodetectors. These circuits leverage the high bandwidth and low latency of photonic signals to perform matrix multiplications and other neural network operations at significantly higher speeds than traditional electronic circuits. The integration of photonic components enables parallel processing of multiple data streams, making them particularly suitable for deep learning and artificial intelligence applications.

- Optical computing architectures for data processing acceleration: Optical computing architectures utilize light-based components to perform computational tasks, offering advantages in speed and energy efficiency over conventional electronic processors. These architectures employ optical switches, modulators, and interconnects to process and transmit data using photonic signals. By exploiting the parallelism inherent in optical systems, these architectures can handle large-scale data processing tasks with reduced power consumption and improved throughput, making them suitable for high-performance computing applications.

- Photonic tensor processing units for machine learning: Photonic tensor processing units are specialized hardware accelerators designed to perform tensor operations commonly used in machine learning algorithms. These units utilize optical components to execute matrix multiplications, convolutions, and other tensor operations at high speeds with low power consumption. The photonic approach enables massive parallelism and reduces the bottlenecks associated with electronic data transfer, significantly accelerating training and inference processes in machine learning models.

- Hybrid photonic-electronic systems for computational acceleration: Hybrid systems combine photonic and electronic components to leverage the strengths of both technologies for computational acceleration. These systems use photonic elements for high-speed data transmission and parallel processing, while electronic components handle control logic and digital signal processing. The integration of photonic interconnects with electronic processors reduces latency and power consumption in data-intensive applications, providing a balanced approach to hardware acceleration that optimizes both speed and functionality.

- Optical interconnects and switching for accelerated data communication: Optical interconnects and switching technologies enable high-speed data communication between processing units by using light signals instead of electrical signals. These technologies reduce signal degradation, increase bandwidth, and minimize power consumption in data centers and high-performance computing systems. Optical switches can dynamically route data between multiple processors or memory units, facilitating efficient resource allocation and reducing communication bottlenecks in parallel computing environments.

02 Optical computing architectures for data processing acceleration

Optical computing architectures utilize light-based components to perform computational tasks, offering advantages in speed and energy efficiency. These architectures employ optical switches, beam splitters, and interferometers to manipulate light signals for data processing operations. By exploiting the properties of photons, such as superposition and parallelism, these systems can execute complex algorithms more efficiently than conventional electronic processors. The technology is particularly beneficial for applications requiring high-throughput data processing and real-time computation.

03 Photonic tensor core units for machine learning operations

Photonic tensor core units are specialized hardware components designed to accelerate tensor operations commonly used in machine learning algorithms. These units utilize optical elements to perform high-dimensional matrix operations with reduced power consumption and increased computational speed. The photonic approach enables massive parallelism in tensor computations, allowing for faster training and inference in deep learning models. Integration with existing electronic systems provides a hybrid computing platform that combines the strengths of both technologies.

04 Wavelength division multiplexing for parallel photonic processing

Wavelength division multiplexing technology enables multiple data channels to be transmitted simultaneously through a single optical medium by using different wavelengths of light. This approach significantly increases the data throughput and processing capacity of photonic hardware accelerators. By assigning different computational tasks to distinct wavelengths, the system can perform parallel operations without interference, leading to substantial improvements in overall processing speed. The technique is particularly effective for applications requiring simultaneous processing of multiple data streams or parallel execution of independent computational tasks.

05 Hybrid photonic-electronic integration for accelerated computing

Hybrid integration combines photonic and electronic components on a single platform to leverage the advantages of both technologies. This approach uses photonic elements for high-speed data transmission and certain computational operations, while electronic circuits handle control functions and complex logic operations. The integration enables seamless data transfer between optical and electrical domains, minimizing conversion losses and latency. Such hybrid systems are designed to optimize performance for specific applications, balancing the speed advantages of photonics with the flexibility and maturity of electronic processing.

Key Players in Photonic AI and Hardware Acceleration

The photonic hardware acceleration for transformer models represents an emerging technology sector in its early development stage, characterized by significant growth potential but limited commercial deployment. The market remains nascent with substantial research investment from both academic institutions and technology corporations, indicating strong future prospects as AI computational demands continue escalating. Technology maturity varies considerably across players, with established electronics giants like Sony Group Corp., Samsung Electronics, Canon Inc., and Huawei Technologies leveraging their existing optical and semiconductor expertise to explore photonic computing applications. Specialized companies such as Shanghai Xizhi Technology demonstrate focused advancement in photonic chip prototypes for AI processing, while leading research universities including Beijing Institute of Technology, Nanjing University, Tianjin University, and Huazhong University of Science & Technology contribute fundamental research breakthroughs. The competitive landscape suggests a convergence of traditional semiconductor manufacturers, AI infrastructure companies, and academic research institutions working toward commercializing photonic acceleration solutions for transformer architectures.

Sony Group Corp.

Technical Solution: Sony has developed optical computing architectures specifically designed for accelerating vision transformer models used in their imaging and camera systems. Their photonic hardware utilizes coherent optical processing to perform convolution-like operations and attention mechanisms efficiently. The company's approach combines their expertise in optical sensors with photonic computing elements, creating hybrid systems that can process visual data directly in the optical domain before conversion to digital signals. Sony's implementation focuses on real-time video processing applications where transformer models are used for object detection and scene understanding.

Strengths: Strong optical technology background, proven expertise in imaging systems, established market presence in consumer electronics. Weaknesses: Limited focus on general-purpose transformer acceleration, primarily application-specific solutions.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has invested in photonic neural network processors that utilize Mach-Zehnder interferometer arrays for implementing transformer model computations. Their technology focuses on analog optical computing for matrix-vector multiplications, which are fundamental operations in transformer attention layers. The company's approach integrates photonic processing units with their advanced semiconductor manufacturing capabilities, enabling on-chip optical interconnects for high-bandwidth data movement. Samsung's photonic accelerators target edge AI applications where power efficiency is critical, particularly for mobile transformer model deployment.

Strengths: Advanced semiconductor manufacturing capabilities, strong integration with memory technologies, significant investment in AI hardware. Weaknesses: Early stage development, limited proven performance metrics for large transformer models.

Core Innovations in Photonic Transformer Architectures

Photonic neural network

Patent WO2020247391A1

Innovation

- A photonic neural network device featuring a planar waveguide with a layer of changeable refractive index and programmable electrodes that apply configurable voltages to induce amplitude or phase modulation of light, enabling reconfigurable and scalable neural network architectures with thousands of neurons and connections.

Techniques to support transformer models in analog compute-in-memory hardware

Patent Pending US20260065046A1

Innovation

- Implementing multi-layer perceptrons to approximate non-vector-matrix multiplication operations, such as layer normalization, softmax, and GELU activation functions, within an analog compute-in-memory architecture using crossbar arrays that store weight values as conductance or capacitance quantities, and utilizing shift, shift-scale, and dense neural networks to decompose complex mathematical functions into linear transformations.
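The general idea of replacing a non-linear function with a small network whose evaluation reduces to shifts, a simple rectifier, and one matrix-vector product can be sketched as follows. This is not the patented method: the one-hidden-layer ReLU network, the evenly spaced shifts in `knots`, and the least-squares fit of the output weights are all assumptions chosen to keep the example short and deterministic.

```python
import math
import numpy as np

def gelu(x):
    """Exact GELU: 0.5 * x * (1 + erf(x / sqrt(2)))."""
    return np.array([0.5 * t * (1.0 + math.erf(t / math.sqrt(2.0))) for t in x])

def hidden_layer(x, knots):
    """Shift each input by every knot, apply ReLU, and append linear and bias columns."""
    relu = np.maximum(x[:, None] - knots[None, :], 0.0)
    return np.hstack([relu, x[:, None], np.ones((x.size, 1))])

# Hypothetical choice: 31 evenly spaced shifts strictly inside the fitted range [-4, 4].
knots = np.linspace(-4.0, 4.0, 33)[1:-1]
x_train = np.linspace(-4.0, 4.0, 801)
# Only the output weights are fitted; at inference they form a single weight vector
# that an analog array could apply as one matrix-vector product.
w_out, *_ = np.linalg.lstsq(hidden_layer(x_train, knots), gelu(x_train), rcond=None)

x_test = np.linspace(-4.0, 4.0, 257)
approx = hidden_layer(x_test, knots) @ w_out
max_error = np.abs(approx - gelu(x_test)).max()
```

The approximation is only valid inside the fitted input range; a hardware implementation would need to clamp or rescale activations accordingly.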

Energy Efficiency Analysis of Photonic vs Electronic AI

Energy consumption is one of the most important differentiators between photonic and electronic approaches to AI acceleration, particularly for transformer models. In electronic processors, energy dissipation grows with the number of operations and the volume of data moved, and in self-attention both scale quadratically with sequence length. This creates substantial power overhead as transformer models grow in size, depth, and context length.

Photonic computing systems offer energy efficiency advantages through their inherent parallelism and reduced heat generation. Optical operations can be performed at significantly lower energy cost than electronic switching, with photonic matrix multiplications reported to consume far less power than their electronic counterparts. Light propagating in a waveguide does not incur the resistive losses of electrical wires, although lasers, modulators, photodetectors, and data converters still draw electrical power.

Quantitative analysis reveals that photonic accelerators can achieve energy efficiency improvements of 10-100x over conventional GPUs for specific transformer operations. Matrix-vector multiplications, which dominate transformer inference workloads, show particularly pronounced benefits. Photonic systems typically operate at sub-picojoule energy levels per operation, whereas electronic accelerators typically spend on the order of picojoules per operation, and more when off-chip memory access is included.

The energy profile differs significantly between training and inference scenarios. Electronic systems maintain relatively consistent power consumption regardless of utilization, while photonic systems exhibit more dynamic energy scaling. During transformer inference, photonic accelerators can reduce total system power consumption by 60-80% compared to state-of-the-art electronic accelerators, primarily due to elimination of data movement energy costs.

Thermal management considerations further widen the energy efficiency gap. Electronic AI accelerators require substantial cooling infrastructure, often consuming 30-40% additional energy for thermal management. Photonic cores dissipate far less heat, which reduces cooling demand and overall system energy consumption, although lasers and thermally tuned optical components still require temperature stabilization.

However, energy efficiency advantages vary significantly across different transformer operations. While linear transformations and attention computations show dramatic improvements, non-linear activation functions still require electronic processing, creating hybrid energy profiles that must be carefully optimized for maximum efficiency gains.
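The effect of such a hybrid energy profile can be estimated with an Amdahl-style calculation: only the share of energy spent in operations moved to photonics benefits from the photonic gain, while the rest is unchanged. The function name `hybrid_energy_gain` and the example figures (90% of baseline energy in linear operations, 100x gain on those operations) are illustrative assumptions, not measurements.

```python
def hybrid_energy_gain(photonic_fraction, photonic_gain):
    """Overall energy reduction factor when only part of the workload is accelerated.

    photonic_fraction: share of baseline energy spent in ops moved to photonics (0..1).
    photonic_gain:     energy reduction factor for those ops alone (> 0).
    """
    if not 0.0 <= photonic_fraction <= 1.0 or photonic_gain <= 0:
        raise ValueError("fraction must be in [0, 1] and gain must be positive")
    # Energy left after acceleration, as a fraction of the baseline.
    remaining = (1.0 - photonic_fraction) + photonic_fraction / photonic_gain
    return 1.0 / remaining

gain = hybrid_energy_gain(photonic_fraction=0.9, photonic_gain=100.0)
```

Under these assumed figures the overall gain is roughly 9x rather than 100x, because the 10% of energy that stays in electronics dominates once the linear operations become cheap. Conversion overhead between domains, which this sketch omits, would lower the figure further.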

Integration Challenges with Existing AI Infrastructure

The integration of photonic hardware acceleration systems with existing AI infrastructure presents multifaceted challenges that span technical, operational, and architectural domains. Current AI ecosystems are predominantly designed around electronic processors, creating fundamental compatibility gaps when introducing photonic computing elements.

Interface standardization represents a critical bottleneck in photonic-electronic integration. Existing AI frameworks such as TensorFlow and PyTorch, along with GPU programming platforms such as CUDA, are optimized for electronic architectures and require substantial modifications to accommodate photonic processing units. The lack of standardized APIs and communication protocols between photonic accelerators and conventional processors creates significant development overhead and limits seamless integration.

Data format conversion poses another substantial challenge. Electronic systems process discrete digital signals, while photonic systems often operate with analog optical signals or hybrid analog-digital representations. Moving data between the two domains requires digital-to-analog and analog-to-digital conversion, which adds latency and can lose information through limited converter resolution, offsetting some of the performance advantages of photonic acceleration.
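The information loss from conversion can be illustrated with a simplified model of a single analog dot product. This is a sketch under stated assumptions: ideal uniform converters, no optical noise, and an ADC range scaled to the largest possible sum. The functions `quantize` and `analog_dot`, the bit widths, and the sample values are all hypothetical.

```python
def quantize(value: float, bits: int, full_scale: float = 1.0) -> float:
    """Round `value` to the nearest level of an ideal uniform `bits`-bit
    converter spanning [-full_scale, +full_scale]; out-of-range values clip."""
    if full_scale == 0.0:
        return 0.0
    levels = 2 ** bits - 1
    step = 2.0 * full_scale / levels
    clipped = max(-full_scale, min(full_scale, value))
    return round((clipped + full_scale) / step) * step - full_scale


def analog_dot(weights, inputs, dac_bits: int, adc_bits: int,
               full_scale: float = 1.0) -> float:
    """Dot product where inputs pass through a DAC and the sum through an ADC.

    Weights are treated as exact here, which is a simplification.
    """
    dac_out = [quantize(x, dac_bits, full_scale) for x in inputs]
    analog_sum = sum(w * x for w, x in zip(weights, dac_out))
    # Assumed: the ADC range is scaled to the largest sum the weights allow.
    adc_range = full_scale * sum(abs(w) for w in weights)
    return quantize(analog_sum, adc_bits, adc_range)


weights = [0.5, -0.25, 0.75, 0.1]   # illustrative values
inputs = [0.3, -0.8, 0.55, 0.9]
exact = sum(w * x for w, x in zip(weights, inputs))

for bits in (4, 6, 8):
    approx = analog_dot(weights, inputs, dac_bits=bits, adc_bits=bits)
    print(f"{bits}-bit converters: result {approx:.5f}, "
          f"error {abs(approx - exact):.5f}")
```

In this model the worst-case error is bounded by the ADC range times the sum of the two converters' half-step sizes, so each extra bit of resolution roughly halves the bound, at the cost of higher converter energy and latency in real hardware.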

Power delivery and thermal management systems in existing data centers are calibrated for electronic components. Photonic systems require different power profiles, including specialized laser power supplies and optical component cooling requirements. Retrofitting existing infrastructure to support these requirements involves considerable capital investment and operational complexity.

Software stack compatibility issues extend beyond basic interfacing. Existing debugging tools, performance monitoring systems, and deployment pipelines lack native support for photonic components. This creates blind spots in system optimization and troubleshooting, requiring development of entirely new toolchains and methodologies.

Network architecture modifications become necessary when integrating photonic accelerators into distributed AI systems. Traditional interconnect fabrics may not efficiently support the unique communication patterns and bandwidth requirements of photonic processing units, potentially creating bottlenecks that limit overall system performance.

The heterogeneous nature of photonic-electronic systems also complicates workload scheduling and resource allocation. Existing orchestration systems lack the intelligence to optimally distribute computational tasks between photonic and electronic components, requiring sophisticated new algorithms for hybrid system management.
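One simple form such a placement policy could take is a greedy, cost-based rule applied per operation. The sketch below is hypothetical and does not describe any existing orchestration system: the `Op` type, the set of offloadable operation kinds, and all energy constants are assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumed: only linear operations can run on the photonic unit.
OFFLOADABLE_KINDS = {"matmul", "attention_scores"}


@dataclass(frozen=True)
class Op:
    name: str
    kind: str       # e.g. "matmul", "softmax", "gelu"
    macs: int       # multiply-accumulate count
    io_values: int  # values that cross the electronic-optical boundary


def place(op: Op,
          photonic_pj_per_mac: float = 0.5,
          electronic_pj_per_mac: float = 10.0,
          conversion_pj_per_value: float = 5.0) -> str:
    """Return "photonic" or "electronic" for one operation.

    All energy constants are illustrative placeholders, not measurements.
    """
    if op.kind not in OFFLOADABLE_KINDS:
        return "electronic"  # non-linear ops stay on the electronic side
    photonic_cost = (op.macs * photonic_pj_per_mac
                     + op.io_values * conversion_pj_per_value)
    electronic_cost = op.macs * electronic_pj_per_mac
    return "photonic" if photonic_cost < electronic_cost else "electronic"


ops = [
    Op("qkv_projection", "matmul", macs=4096 * 4096, io_values=8192),
    Op("softmax", "softmax", macs=0, io_values=4096),
    Op("tiny_matmul", "matmul", macs=64, io_values=128),
]
plan = {op.name: place(op) for op in ops}
print(plan)
```

With these assumed constants, the large projection is offloaded, while the small matrix product stays electronic because its conversion cost exceeds the compute savings. A production scheduler would also need to account for latency, queueing, and data locality, which this per-operation rule ignores.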
