RISC Overhead Examination for Improved Energy Performance

MAR 26, 2026 · 9 MIN READ

RISC Architecture Energy Goals and Background

RISC (Reduced Instruction Set Computer) architecture emerged in the 1980s as a revolutionary approach to processor design, fundamentally challenging the prevailing Complex Instruction Set Computer (CISC) paradigm. The core philosophy behind RISC architecture centers on simplifying instruction sets to achieve higher performance through faster execution cycles and more efficient pipeline utilization. This architectural approach has evolved significantly over the decades, transitioning from purely performance-focused designs to encompass comprehensive energy efficiency considerations.

The historical development of RISC architecture can be traced through several distinct phases. Initially, pioneers like IBM's 801 project and Stanford's MIPS processor demonstrated that simplified instruction sets could deliver superior performance per clock cycle. The 1990s witnessed widespread adoption of RISC principles in workstations and servers, with architectures like SPARC, PowerPC, and Alpha gaining prominence. The mobile computing revolution of the 2000s marked a pivotal shift, as ARM's RISC-based processors became dominant in smartphones and tablets, primarily due to their exceptional energy efficiency characteristics.

Contemporary RISC architecture development is increasingly driven by energy performance optimization goals. Modern processors face stringent power consumption constraints across diverse application domains, from battery-powered mobile devices to large-scale data centers where energy costs significantly impact operational expenses. The traditional RISC advantages of simplified decode logic, regular instruction formats, and predictable execution patterns naturally align with energy-efficient design principles.

Current energy optimization objectives in RISC architecture encompass multiple dimensions. Static power reduction focuses on minimizing leakage currents through advanced manufacturing processes and power gating techniques. Dynamic power optimization targets reducing switching activity through intelligent instruction scheduling, clock gating, and voltage scaling mechanisms. Additionally, architectural innovations such as heterogeneous multi-core designs, specialized accelerators, and adaptive frequency scaling represent contemporary approaches to achieving optimal energy-performance trade-offs.
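
The trade-off between dynamic and static power described above can be made concrete with the standard CMOS switching-power relation, P_dyn = α · C · V² · f. The following is a minimal sketch, not a model of any particular processor: the activity factor, capacitance, leakage power, and the two operating points are illustrative assumptions, and leakage is treated as constant even though it depends on voltage and temperature in practice.

```python
def dynamic_power(alpha, c_eff, vdd, freq_hz):
    """Switching power in watts: alpha * C * V^2 * f."""
    return alpha * c_eff * vdd ** 2 * freq_hz


def task_energy(cycles, alpha, c_eff, vdd, freq_hz, p_leak):
    """Energy in joules to run `cycles` clock cycles at one operating point."""
    runtime_s = cycles / freq_hz
    return (dynamic_power(alpha, c_eff, vdd, freq_hz) + p_leak) * runtime_s


# Illustrative numbers only (assumptions, not measurements).
ALPHA = 0.2             # average switching activity factor
C_EFF = 1e-9            # effective switched capacitance in farads
P_LEAK = 0.05           # leakage power in watts, assumed constant
CYCLES = 1_000_000_000  # work to be done, in clock cycles

fast = task_energy(CYCLES, ALPHA, C_EFF, vdd=1.0, freq_hz=2.0e9, p_leak=P_LEAK)
slow = task_energy(CYCLES, ALPHA, C_EFF, vdd=0.8, freq_hz=1.0e9, p_leak=P_LEAK)

print(f"fast point: {fast:.3f} J")
print(f"slow point: {slow:.3f} J")
```

With these assumed values the slower, lower-voltage point uses less energy for the same work, because the V² term falls faster than the runtime grows. A larger leakage term would shift the balance back toward finishing quickly and power gating the core.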

The emergence of open-source RISC-V architecture has further accelerated innovation in energy-efficient RISC design. This modular, extensible instruction set architecture enables customized implementations tailored for specific energy performance requirements, fostering rapid experimentation and deployment across diverse computing segments from IoT devices to high-performance computing systems.

Market Demand for Energy-Efficient RISC Processors

The global semiconductor industry is experiencing unprecedented demand for energy-efficient processing solutions, driven by the convergence of mobile computing, Internet of Things deployments, and sustainability imperatives across enterprise infrastructure. RISC processors have emerged as a critical technology segment within this landscape, offering inherent architectural advantages that align with contemporary energy optimization requirements.

Mobile device manufacturers represent the largest market segment driving demand for energy-efficient RISC processors. Smartphone and tablet vendors continuously seek processing solutions that deliver enhanced computational performance while extending battery life. The proliferation of always-on applications, real-time AI processing, and high-resolution multimedia content has intensified the need for processors that minimize energy overhead without compromising functionality.

Data center operators constitute another significant demand driver, as cloud service providers face mounting pressure to reduce operational costs and meet environmental sustainability targets. Energy consumption represents a substantial portion of data center operational expenses, making energy-efficient RISC architectures increasingly attractive for server deployments. The shift toward edge computing further amplifies this demand, as distributed processing nodes require processors capable of delivering consistent performance within strict power budgets.

The automotive industry presents a rapidly expanding market opportunity for energy-efficient RISC processors. Advanced driver assistance systems, autonomous vehicle platforms, and electric vehicle control systems require processing solutions that maintain reliable operation while minimizing power consumption. Electric vehicle manufacturers particularly value energy-efficient processors as they directly impact vehicle range and battery performance.

Industrial IoT applications generate substantial demand for low-power RISC processors capable of operating in resource-constrained environments. Manufacturing automation, smart city infrastructure, and environmental monitoring systems require processors that can function reliably for extended periods while consuming minimal energy. These applications often operate in remote locations where power availability is limited, making energy efficiency a critical selection criterion.

Emerging applications in wearable technology, medical devices, and smart home systems continue expanding the addressable market for energy-efficient RISC processors. These applications demand processors that deliver adequate computational capability while operating within extremely tight power envelopes, often requiring battery life measured in months or years rather than hours.

Current RISC Overhead Issues and Energy Challenges

RISC-V processors, despite their open-source advantages and simplified instruction set architecture, face overhead challenges that directly affect energy efficiency in modern computing systems. One primary source is instruction fetch. In the base ISA every instruction is 32 bits wide, so code is typically less dense than on variable-length instruction sets, which raises instruction memory bandwidth requirements. The optional compressed (C) extension adds 16-bit encodings that narrow this gap, but it is not present in every implementation. Lower code density results in higher energy consumption during instruction cache operations and memory subsystem activity.
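
The effect of instruction width on fetch traffic can be estimated with simple arithmetic. The sketch below assumes a mix of 32-bit instructions and 16-bit encodings of the kind provided by RISC-V's optional compressed (C) extension. The 50% compressed fraction and the energy-per-byte figure are hypothetical placeholders, not measured values.

```python
def fetched_bytes(instruction_count, compressed_fraction):
    """Bytes fetched when a fraction of instructions use 16-bit encodings."""
    if not 0.0 <= compressed_fraction <= 1.0:
        raise ValueError("compressed_fraction must be between 0 and 1")
    compressed = round(instruction_count * compressed_fraction)
    full_width = instruction_count - compressed
    return compressed * 2 + full_width * 4


def fetch_energy_nj(total_bytes, energy_per_byte_nj):
    """Fetch energy under a flat, assumed per-byte cost."""
    return total_bytes * energy_per_byte_nj


ENERGY_PER_BYTE_NJ = 0.01  # hypothetical instruction-fetch cost per byte

base = fetched_bytes(1_000_000, 0.0)   # all 32-bit instructions
mixed = fetched_bytes(1_000_000, 0.5)  # assumed 50% 16-bit instructions
saving = 1 - mixed / base

print(f"32-bit only: {base} bytes, {fetch_energy_nj(base, ENERGY_PER_BYTE_NJ):.0f} nJ")
print(f"mixed:       {mixed} bytes, {fetch_energy_nj(mixed, ENERGY_PER_BYTE_NJ):.0f} nJ")
print(f"fetch traffic saved: {saving:.0%}")
```

A flat per-byte cost ignores cache line granularity and alignment effects, so this is only a first-order estimate of how code density feeds into fetch energy.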

Pipeline stalls represent another critical overhead source in RISC-V implementations. The load-use hazards inherent in the RISC architecture frequently cause pipeline bubbles, particularly in scenarios involving memory-intensive operations. These stalls not only degrade performance but also increase dynamic power consumption as pipeline stages remain active while waiting for data dependencies to resolve. The energy cost becomes particularly pronounced in embedded systems where power budgets are constrained.
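
To illustrate how load-use hazards turn into bubbles, the sketch below counts stalls in a short instruction trace. It assumes a classic five-stage in-order pipeline with full forwarding, where an instruction that reads a register loaded by the immediately preceding load costs one bubble. The `Instr` type and both traces are made up for illustration.

```python
from typing import NamedTuple, Optional, Sequence, Tuple


class Instr(NamedTuple):
    op: str                 # mnemonic, e.g. "lw" or "add"
    dest: Optional[str]     # destination register, if any
    srcs: Tuple[str, ...]   # source registers


def count_load_use_stalls(trace: Sequence[Instr]) -> int:
    """Count one-cycle bubbles caused by back-to-back load-use pairs."""
    stalls = 0
    for prev, cur in zip(trace, trace[1:]):
        is_load = prev.op == "lw" and prev.dest not in (None, "x0")
        if is_load and prev.dest in cur.srcs:
            stalls += 1
    return stalls


# Each load is consumed by the very next instruction.
unscheduled = [
    Instr("lw", "a0", ("s0",)),
    Instr("add", "a1", ("a0", "a2")),
    Instr("lw", "a3", ("s0",)),
    Instr("add", "a4", ("a3", "a1")),
]

# Same work, with the second load hoisted to separate producers from consumers.
scheduled = [
    Instr("lw", "a0", ("s0",)),
    Instr("lw", "a3", ("s0",)),
    Instr("add", "a1", ("a0", "a2")),
    Instr("add", "a4", ("a3", "a1")),
]

print("unscheduled stalls:", count_load_use_stalls(unscheduled))
print("scheduled stalls:  ", count_load_use_stalls(scheduled))
```

Reordering independent instructions removes the bubbles in this example, which is the kind of work a compiler's instruction scheduler does. Cache misses and deeper pipelines would add stalls that this simple model does not capture.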

Branch prediction mechanisms in RISC-V processors can exhibit suboptimal energy characteristics, particularly in small cores whose microarchitecture favors simple predictors over prediction accuracy. Mispredicted branches trigger pipeline flushes and instruction re-fetching, leading to wasted energy in both the execution units and memory hierarchy. The energy penalty is amplified in applications with irregular control flow patterns, where traditional two-level predictors struggle to maintain high accuracy rates.
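
The sensitivity of misprediction counts to control flow regularity can be seen with a very small simulation. The sketch below models a single 2-bit saturating counter, which is simpler than the two-level predictors mentioned above and is used here only because it fits in a few lines. The branch histories and the flush penalty are illustrative assumptions.

```python
def count_mispredictions(outcomes, initial_state=2):
    """Simulate one 2-bit saturating counter over a branch outcome history.

    States 0 and 1 predict not taken; states 2 and 3 predict taken.
    """
    state = initial_state
    misses = 0
    for taken in outcomes:
        predicted_taken = state >= 2
        if predicted_taken != taken:
            misses += 1
        # Move toward the observed outcome, saturating at 0 and 3.
        state = min(state + 1, 3) if taken else max(state - 1, 0)
    return misses


FLUSH_PENALTY_CYCLES = 3  # hypothetical cost of one pipeline flush

loop_branch = [True] * 9 + [False]  # loop back-edge: taken 9 times, then exit
alternating = [True, False] * 5     # irregular-looking pattern for this predictor

for name, history in (("loop", loop_branch), ("alternating", alternating)):
    misses = count_mispredictions(history)
    print(f"{name}: {misses} mispredictions, "
          f"{misses * FLUSH_PENALTY_CYCLES} wasted cycles")
```

The regular loop mispredicts only on exit, while the alternating pattern mispredicts on every other branch. Each misprediction carries the flush and re-fetch cost, so the second pattern wastes several times more cycles for the same number of branches.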

Register file access patterns in RISC-V create additional energy overhead challenges. The 32-register architecture, while providing programming flexibility, increases the bit width required for register addressing and can lead to unnecessary register file accesses. Multi-ported register files consume significant static power, and the energy per access scales with the number of read and write ports required for superscalar implementations.

Cache coherency protocols in multi-core RISC-V systems introduce substantial energy overhead through increased interconnect traffic and cache state transitions. The weak memory ordering model, while offering performance benefits, requires additional synchronization instructions that consume energy without contributing directly to computational progress. These overheads become particularly problematic in heterogeneous computing environments where RISC-V cores interact with specialized accelerators.

Compiler-generated code inefficiencies further exacerbate energy overhead issues. The RISC philosophy of moving complexity from hardware to software often results in longer instruction sequences for complex operations, increasing both dynamic energy consumption and instruction cache pressure. Optimization challenges arise particularly in floating-point operations and SIMD workloads where the base RISC-V instruction set requires multiple instructions to accomplish tasks that other architectures handle with single instructions.

Existing RISC Overhead Reduction Solutions

01 RISC processor architecture optimization for reduced power consumption

RISC (Reduced Instruction Set Computer) architectures can be optimized to minimize energy consumption through simplified instruction sets and efficient execution pipelines. These designs reduce the number of clock cycles required per instruction and minimize transistor switching activity, leading to lower dynamic power consumption. Architectural features such as streamlined decode logic and reduced complexity in control units contribute to overall energy efficiency improvements.

- Dynamic voltage and frequency scaling techniques: Energy performance in RISC processors can be enhanced through dynamic adjustment of operating voltage and clock frequency based on workload demands. These techniques allow the processor to operate at lower power states during periods of reduced computational requirements, significantly reducing energy consumption without sacrificing performance when needed. Implementation involves monitoring system activity and adjusting power parameters in real-time.

- Power management through instruction scheduling and pipeline control: Advanced instruction scheduling algorithms and pipeline management strategies can improve energy efficiency by reducing idle cycles and optimizing resource utilization. These methods involve intelligent reordering of instructions to minimize pipeline stalls and reduce unnecessary power consumption in functional units. Clock gating and selective activation of processor components based on instruction requirements further enhance energy performance.

- Cache memory optimization for energy efficiency: Cache hierarchy design and management strategies play a crucial role in RISC processor energy performance. Optimized cache structures reduce memory access latency and minimize energy-intensive off-chip memory transactions. Techniques include adaptive cache sizing, intelligent prefetching mechanisms, and low-power cache access modes that balance performance requirements with energy conservation goals.

- Hardware-software co-design for energy-aware computing: Integrated approaches combining hardware features with software-level optimizations enable comprehensive energy management in RISC systems. These solutions involve compiler optimizations that generate energy-efficient code, operating system support for power management policies, and hardware mechanisms that expose energy-related information to software layers. The synergy between hardware capabilities and software intelligence maximizes overall system energy efficiency.

02 Dynamic voltage and frequency scaling techniques

Energy performance in RISC processors can be enhanced through dynamic adjustment of operating voltage and clock frequency based on workload demands. These techniques allow the processor to operate at lower power states during periods of reduced computational requirements, significantly reducing energy consumption without sacrificing performance during peak loads. Implementation involves monitoring system activity and adjusting power parameters in real time.

03 Power gating and clock gating mechanisms

Advanced power management strategies include selectively disabling unused functional units and clock distribution networks within RISC processors. These mechanisms prevent unnecessary power dissipation in idle circuit blocks by cutting off power supply or clock signals to inactive components. Fine-grained control over power domains enables significant reduction in both static and dynamic power consumption.

04 Instruction-level parallelism and pipeline optimization

Energy efficiency can be improved through enhanced instruction-level parallelism and optimized pipeline designs that maximize computational throughput per unit of energy consumed. Techniques include superscalar execution, out-of-order processing, and branch prediction mechanisms that reduce pipeline stalls and wasted clock cycles. These optimizations ensure that energy is utilized effectively for productive computation.

05 Memory hierarchy and cache optimization for energy efficiency

RISC processor energy performance is significantly influenced by memory subsystem design, including cache hierarchies and memory access patterns. Optimizations include implementing low-power cache designs, reducing memory access frequency through improved locality, and utilizing energy-efficient memory technologies. Proper cache sizing and replacement policies minimize energy-intensive off-chip memory accesses.
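
The benefit of reducing off-chip accesses can be sketched with an average-energy-per-access estimate, analogous to average memory access time. The model below assumes a single cache level in which every access pays the cache lookup and each miss additionally pays an off-chip access. Both energy figures and both miss rates are hypothetical.

```python
def energy_per_access_nj(miss_rate, e_hit_nj, e_miss_nj):
    """Average energy per memory access for a single-level cache.

    Every access pays the cache lookup (e_hit_nj); a miss additionally
    pays the off-chip access (e_miss_nj).
    """
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return e_hit_nj + miss_rate * e_miss_nj


E_HIT_NJ = 0.1    # hypothetical on-chip cache access energy
E_MISS_NJ = 10.0  # hypothetical off-chip memory access energy

before = energy_per_access_nj(0.05, E_HIT_NJ, E_MISS_NJ)
after = energy_per_access_nj(0.02, E_HIT_NJ, E_MISS_NJ)

print(f"5% miss rate: {before:.2f} nJ per access")
print(f"2% miss rate: {after:.2f} nJ per access")
```

Because the assumed off-chip cost is two orders of magnitude above the hit cost, a few percentage points of miss rate dominate the average. A larger cache lowers the miss rate but usually raises the per-hit energy, which is the sizing trade-off referred to above.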

Key Players in RISC Processor and Energy Tech Industry

The RISC overhead examination for improved energy performance represents an emerging field within the broader energy optimization landscape, currently in its early development stage with significant growth potential. The market is characterized by increasing demand for energy-efficient computing solutions, driven by sustainability concerns and operational cost reduction needs. Technology maturity varies considerably across different applications, with established players like Google LLC and Hitachi Ltd. demonstrating advanced capabilities in processor optimization and energy management systems. Academic institutions including Tianjin University, Xi'an Jiaotong University, and Southeast University are conducting foundational research, while energy sector companies such as State Grid Corp. of China and NARI Technology Co., Ltd. are exploring practical implementations. The competitive landscape shows a convergence of traditional energy companies, technology giants, and research institutions, indicating the interdisciplinary nature of RISC-based energy optimization solutions and the nascent but promising market opportunities.

Xi'an Jiaotong University

Technical Solution: Xi'an Jiaotong University has developed innovative RISC-V processor designs that address instruction overhead through novel micro-architectural techniques and compiler optimizations. Their research includes implementation of speculative execution mechanisms and advanced branch prediction algorithms that reduce pipeline stalls and improve energy efficiency by 15-25%. The university has contributed to RISC-V instruction set extensions for energy-aware computing and developed simulation frameworks for analyzing instruction-level energy consumption. Their work encompasses both hardware and software co-design approaches to minimize RISC-V execution overhead in various application domains.

Strengths: Comprehensive research approach, strong collaboration with industry partners, focus on practical implementations. Weaknesses: Limited large-scale deployment experience, primarily academic research environment.

Southeast University

Technical Solution: Southeast University has conducted extensive research on RISC-V instruction set optimization for energy-efficient computing, developing novel techniques for reducing execution overhead through instruction fusion and micro-architectural enhancements. Their research focuses on implementing adaptive instruction scheduling algorithms that can reduce energy consumption by 20-35% in embedded systems. The university has published significant work on RISC-V pipeline optimization, branch prediction improvements, and memory hierarchy enhancements specifically targeting energy performance. Their contributions include open-source tools for RISC-V performance analysis and energy profiling that help identify and eliminate instruction execution bottlenecks.

Strengths: Strong academic research foundation, open-source contributions, focus on energy-efficient computing. Weaknesses: Limited commercial implementation experience, primarily theoretical research focus.

Core Innovations in RISC Energy Performance Patents

Method and system for reducing power consumption in a computing device when the computing device executes instructions in a tight loop

Patent (Inactive): US6993668B2

Innovation

- The system employs a previous effective address register and comparator to disable data cache directory and TLB translations by recognizing a tight loop, reducing access to the instruction cache, and dividing the branching logic unit into clock domains to only enable necessary components during the loop.

Energy efficient processing device

Patent (Inactive): US20090228686A1

Innovation

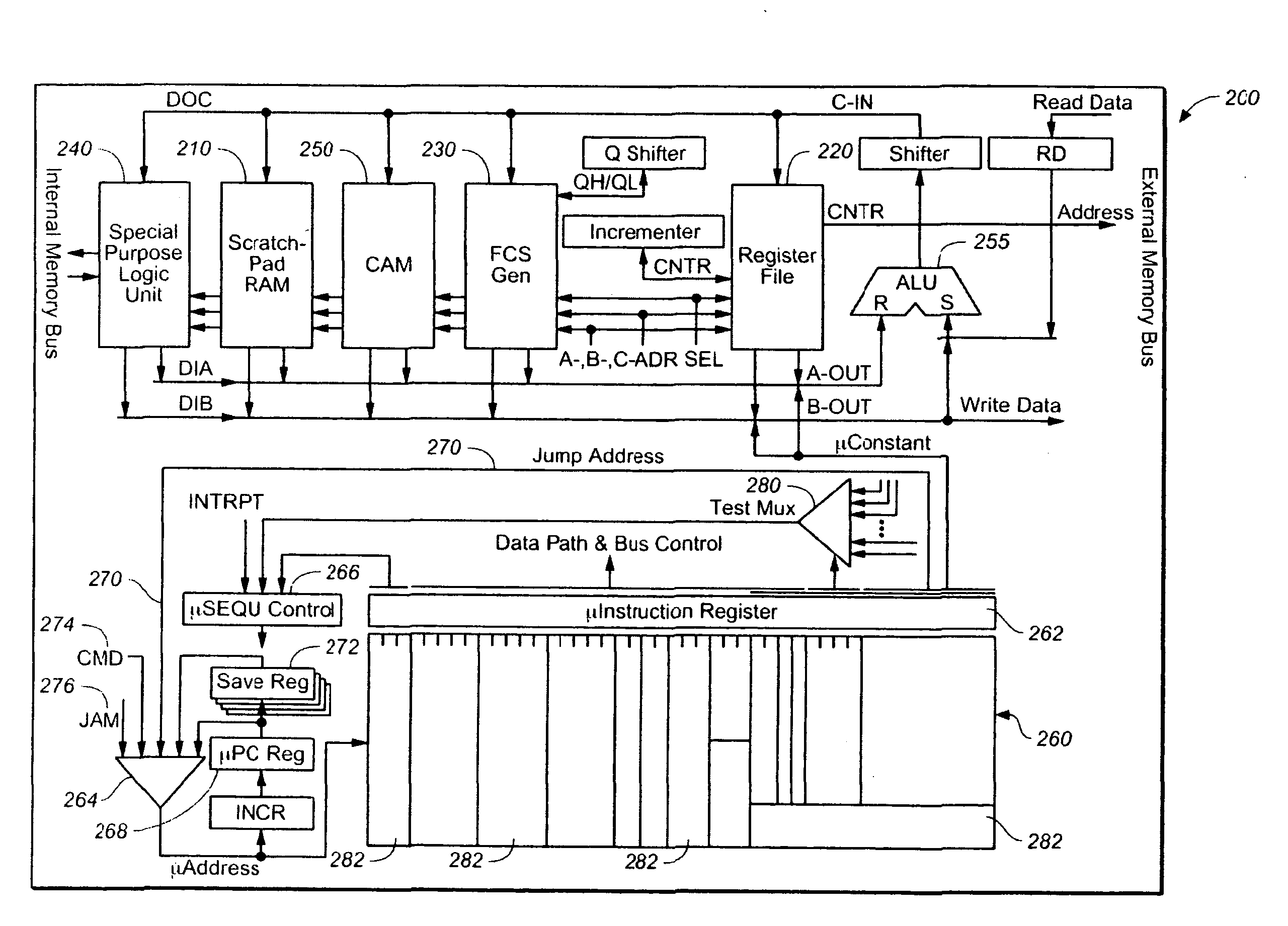

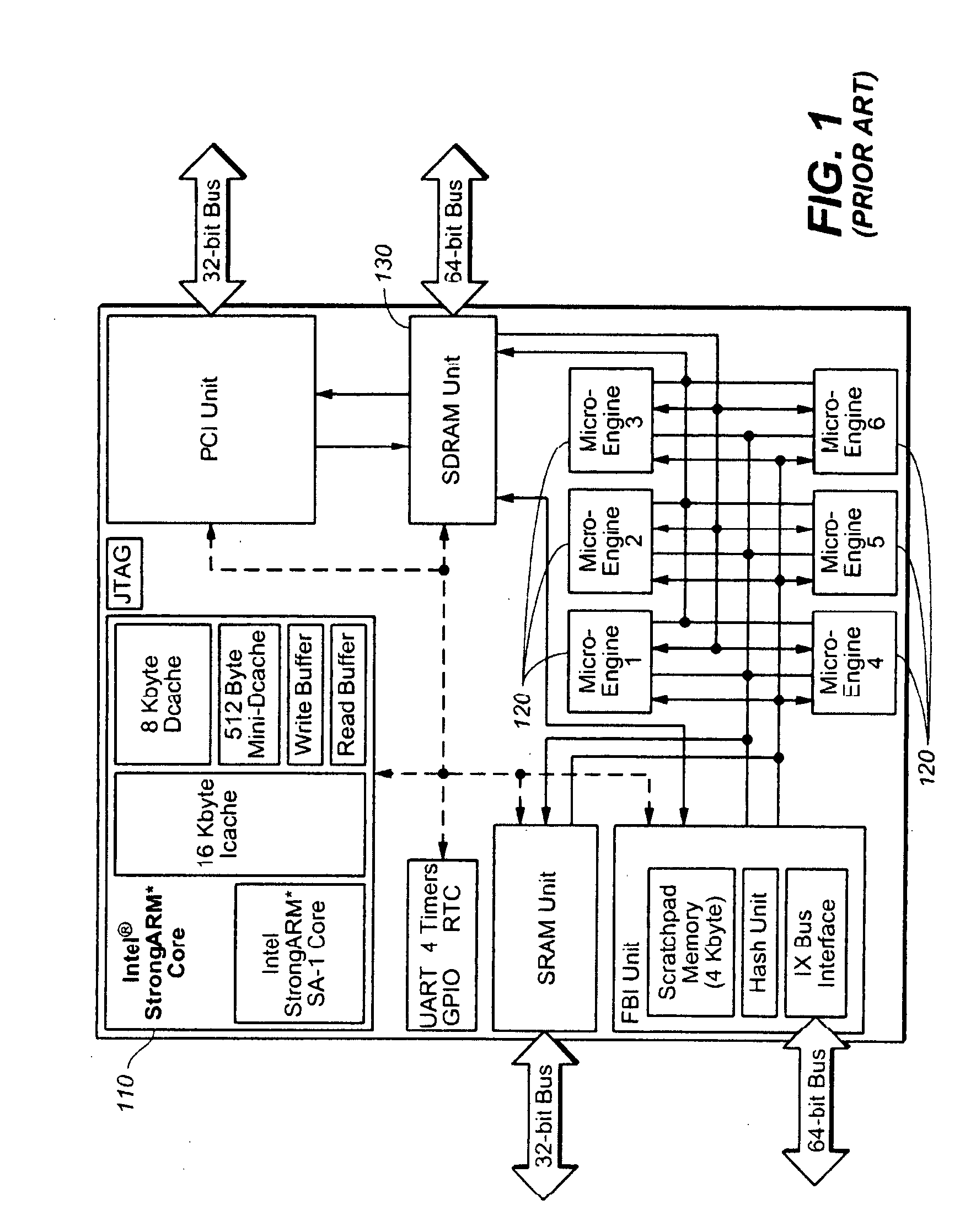

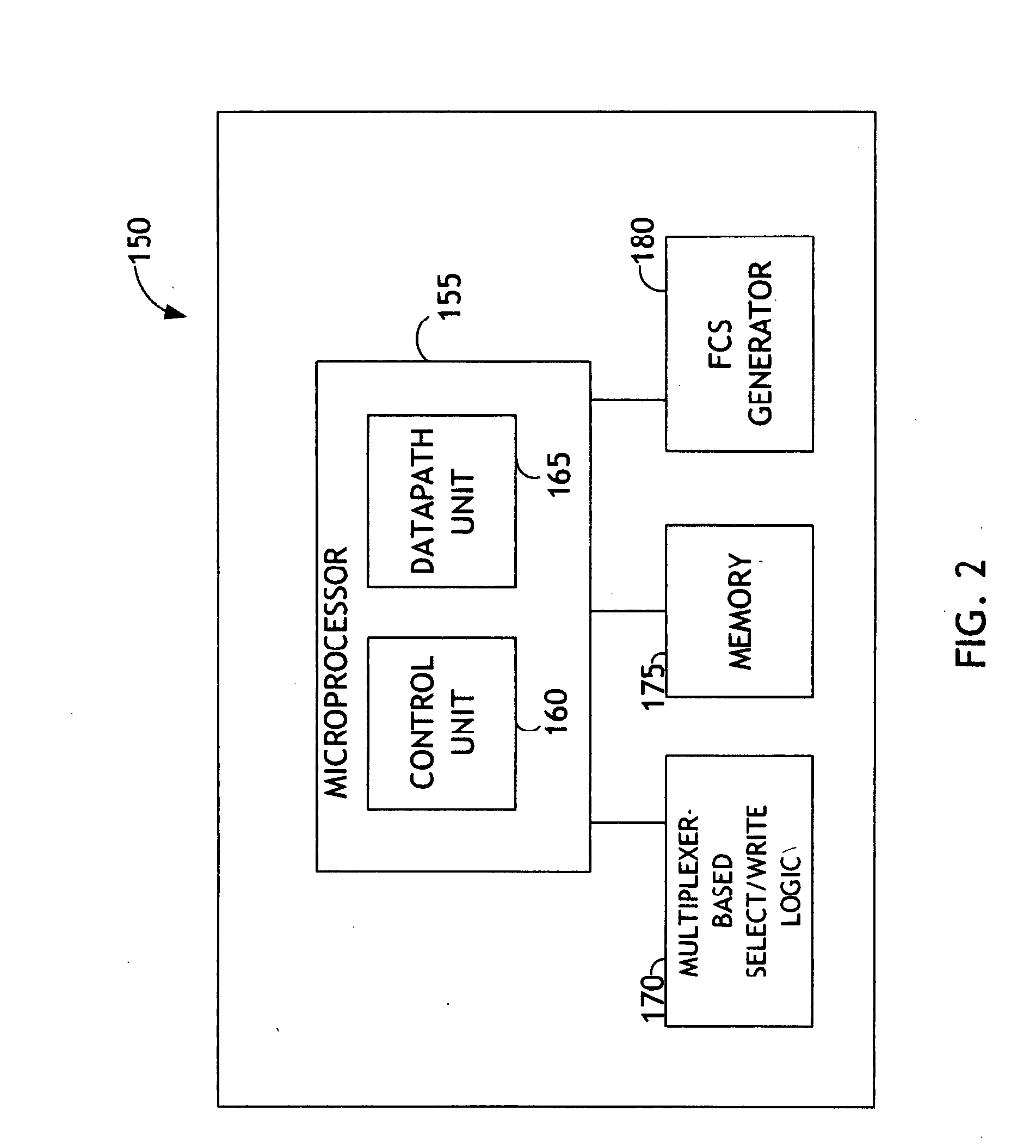

- A network processor with a microcoded architecture employing non-opcode-oriented, fully decoded microcode instructions that do not require an instruction decoder, utilizing a programmable microsequencer for state management and control, and a data manipulation subsystem controlled by fully decoded microinstructions, resulting in reduced power consumption and compact size.

RISC Power Management Standards and Protocols

The standardization of power management protocols for RISC architectures has become increasingly critical as energy efficiency demands intensify across computing platforms. Current industry standards primarily revolve around the Advanced Configuration and Power Interface (ACPI) specification, which provides a comprehensive framework for power state management in RISC-based systems. However, ACPI's original design for x86 architectures presents adaptation challenges for RISC processors, necessitating specialized extensions and modifications.

The ARM Power State Coordination Interface (PSCI) represents a significant advancement in RISC-specific power management standardization. This protocol defines standardized interfaces for power state management across ARM-based systems, enabling consistent power control mechanisms regardless of the underlying hardware implementation. PSCI facilitates coordination between different processing elements and power domains, ensuring optimal energy distribution while maintaining system stability and performance requirements.

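RISC-V approaches power management through a combination of specifications rather than a single framework. The RISC-V Privileged Architecture specification provides the WFI (wait for interrupt) instruction, which hints that a hart can idle until an interrupt arrives, and the Supervisor Binary Interface (SBI) specification defines a Hart State Management (HSM) extension for starting, stopping, and suspending harts. Power and clock gating of individual functional units is left to each implementation, which allows modular RISC-V designs to apply fine-grained power control based on workload characteristics and thermal constraints.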

The IEEE 1801 Unified Power Format (UPF) has gained traction as a cross-platform standard for describing power management intent in RISC designs. UPF enables designers to specify power domains, isolation strategies, and retention policies at the register-transfer level, facilitating automated power optimization during synthesis and implementation phases.

Emerging protocols focus on dynamic voltage and frequency scaling (DVFS) coordination, with standards like the System Control and Management Interface (SCMI) providing vendor-neutral approaches to runtime power management. These protocols enable real-time adjustment of operating parameters based on workload analysis and thermal feedback, maximizing energy efficiency while preserving computational throughput.
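
The policy side of DVFS, as opposed to the interface used to request a change, can be sketched as choosing the slowest operating point that still meets a deadline. The code below is a minimal illustration and does not call SCMI or any other real interface. The `OperatingPoint` type, the table of points, and the workload numbers are all hypothetical.

```python
from typing import NamedTuple, Sequence


class OperatingPoint(NamedTuple):
    freq_hz: float
    vdd: float


def select_operating_point(
    points: Sequence[OperatingPoint], cycles_needed: float, deadline_s: float
) -> OperatingPoint:
    """Pick the lowest-frequency point that finishes the work in time.

    Falls back to the fastest point when no point can meet the deadline.
    """
    feasible = [p for p in points if cycles_needed / p.freq_hz <= deadline_s]
    if not feasible:
        return max(points, key=lambda p: p.freq_hz)
    return min(feasible, key=lambda p: p.freq_hz)


# Hypothetical voltage/frequency table for one performance domain.
POINTS = [
    OperatingPoint(0.5e9, 0.7),
    OperatingPoint(1.0e9, 0.8),
    OperatingPoint(2.0e9, 1.0),
]

light = select_operating_point(POINTS, cycles_needed=8e6, deadline_s=0.010)
heavy = select_operating_point(POINTS, cycles_needed=30e6, deadline_s=0.010)

print(f"light workload -> {light.freq_hz / 1e9:.1f} GHz at {light.vdd} V")
print(f"heavy workload -> {heavy.freq_hz / 1e9:.1f} GHz at {heavy.vdd} V")
```

Choosing the slowest feasible point assumes that lower frequency also permits lower voltage, so that energy per cycle falls. A real governor would also account for transition latency, thermal feedback, and leakage, which can make finishing early and idling the better choice.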

The integration of these standards requires careful consideration of inter-protocol communication mechanisms and compatibility layers to ensure seamless operation across heterogeneous RISC environments.

Thermal Design Considerations for RISC Systems

Thermal management represents a critical design challenge in RISC-based systems, particularly when optimizing for energy performance. As RISC processors operate at increasingly higher frequencies and integrate more cores, the relationship between thermal characteristics and energy efficiency becomes paramount. Effective thermal design directly impacts power consumption patterns, processor throttling behavior, and overall system reliability.

The fundamental thermal considerations for RISC systems begin with understanding heat generation patterns across different instruction types and execution units. RISC architectures, while generally more energy-efficient per instruction, can generate significant thermal loads during intensive computational tasks. The simplified instruction set allows for more predictable thermal profiles, enabling designers to implement targeted cooling strategies that align with specific workload characteristics.

Package-level thermal design plays a crucial role in maintaining optimal operating temperatures. Modern RISC processors require sophisticated heat spreader designs and thermal interface materials that can efficiently conduct heat away from the die. The choice of packaging technology, including flip-chip configurations and advanced substrate materials, directly influences thermal resistance and heat dissipation capabilities.

System-level thermal management strategies must account for the interaction between RISC processors and surrounding components. Proper airflow design, heat sink selection, and thermal zone management ensure that processors can maintain peak performance without triggering thermal throttling mechanisms. Advanced thermal solutions may incorporate dynamic cooling adjustments based on real-time temperature monitoring and workload prediction.

Temperature-aware design methodologies are increasingly important for RISC systems targeting energy optimization. These approaches involve implementing thermal sensors, dynamic voltage and frequency scaling mechanisms, and intelligent task scheduling algorithms that consider thermal constraints. By proactively managing thermal conditions, systems can maintain consistent performance while minimizing energy consumption and extending component lifespan.
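
The interaction between thermal sensing and throttling can be sketched with a lumped thermal RC model, C · dT/dt = P − (T − T_ambient) / R, combined with a simple hysteresis policy. This is a rough illustration under stated assumptions: the thermal resistance, thermal capacitance, power levels, and temperature thresholds are invented values, and real parts have multiple sensors and more elaborate control loops.

```python
def step_temperature(temp_c, power_w, dt_s, r_th=2.0, c_th=5.0, ambient_c=25.0):
    """One explicit-Euler step of a lumped thermal RC model.

    r_th is in degrees C per watt, c_th in joules per degree C (assumed values).
    """
    heat_flow_out_w = (temp_c - ambient_c) / r_th
    return temp_c + dt_s * (power_w - heat_flow_out_w) / c_th


def simulate(steps, dt_s=0.1, high_power_w=30.0, low_power_w=10.0,
             throttle_c=70.0, resume_c=65.0):
    """Run a hysteresis throttling policy; return (peak temp, throttled steps)."""
    temp_c = 25.0
    throttled = False
    peak_c = temp_c
    throttled_steps = 0
    for _ in range(steps):
        # Throttle at the upper threshold, resume only below the lower one.
        if temp_c >= throttle_c:
            throttled = True
        elif temp_c <= resume_c:
            throttled = False
        if throttled:
            throttled_steps += 1
        power_w = low_power_w if throttled else high_power_w
        temp_c = step_temperature(temp_c, power_w, dt_s)
        peak_c = max(peak_c, temp_c)
    return peak_c, throttled_steps


peak_c, throttled_steps = simulate(steps=2000)
print(f"peak temperature: {peak_c:.2f} C")
print(f"steps spent throttled: {throttled_steps} of 2000")
```

With these assumed parameters the unthrottled steady state would be 85 °C, so the policy has to intervene to hold the temperature near the 70 °C threshold. The gap between the throttle and resume thresholds prevents rapid toggling between power levels.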

The integration of thermal modeling tools during the design phase enables engineers to predict hotspot formation and optimize component placement for improved heat distribution across the system architecture.
