Comparing Intelligent Message Filter Accuracy With Standard Methods

MAR 2, 2026 · 9 MIN READ

Intelligent Message Filtering Background and Objectives

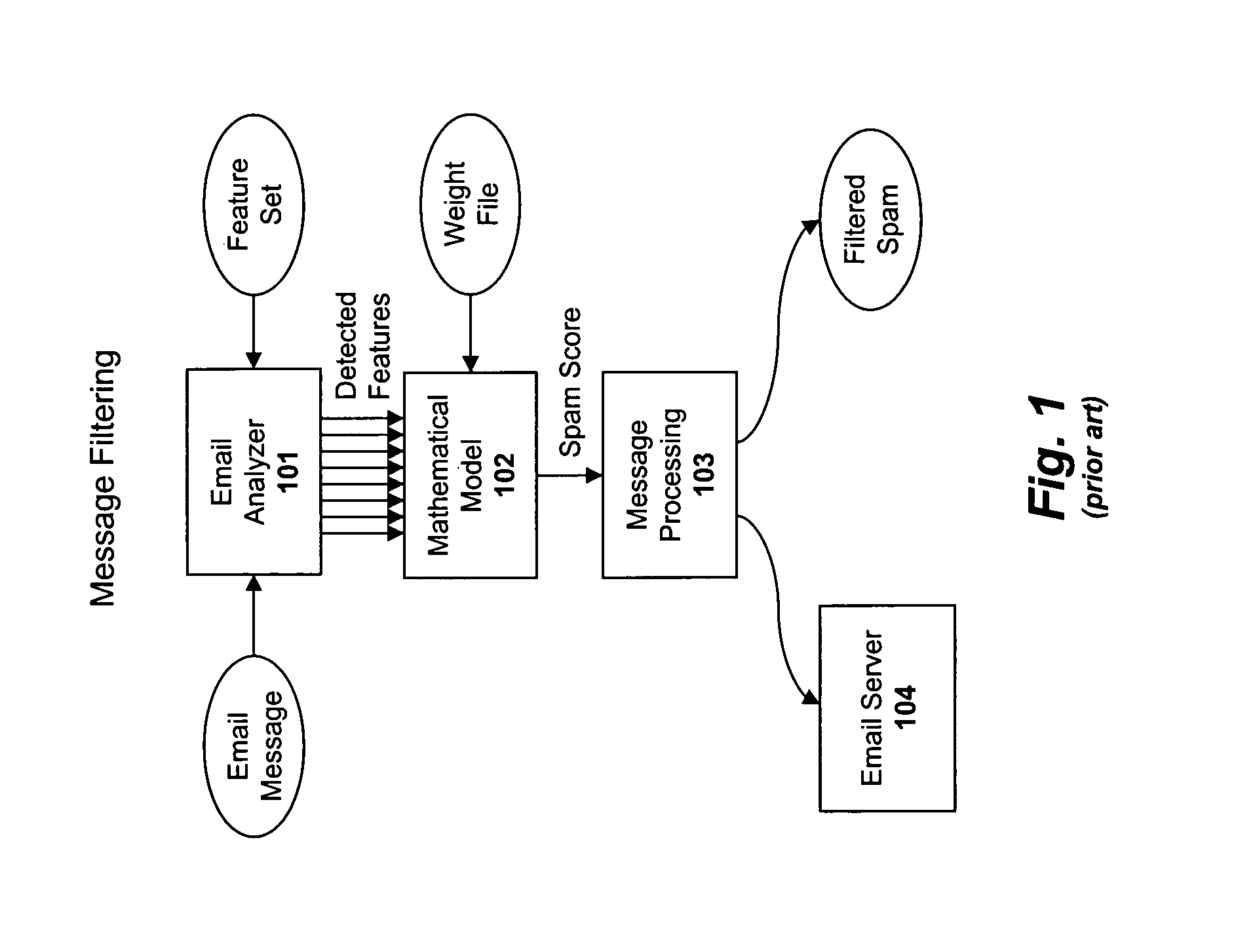

The evolution of message filtering technology has been driven by the exponential growth of digital communications and the increasing sophistication of unwanted content. Traditional message filtering systems emerged in the 1990s with the proliferation of email, initially relying on simple rule-based approaches and keyword matching. These early systems utilized blacklists, whitelists, and basic pattern recognition to identify spam messages, achieving moderate success but struggling with adaptability and accuracy.
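To make the limitation concrete, here is a minimal sketch of the kind of rule-based filter described above. The function name `classify_rule_based` and the hard-coded lists are hypothetical; early systems loaded such lists from maintained files or blocklist services.

```python
# Hypothetical lists for illustration only.
BLACKLIST = {"spammer@example.com"}
WHITELIST = {"boss@example.com"}
SPAM_KEYWORDS = ("free money", "act now", "winner")

def classify_rule_based(sender: str, body: str) -> str:
    """Return 'ham' or 'spam' using whitelist, then blacklist, then keyword rules."""
    sender = sender.lower()
    if sender in WHITELIST:   # trusted senders always pass
        return "ham"
    if sender in BLACKLIST:   # known bad senders always fail
        return "spam"
    text = body.lower()
    # Any exact keyword hit marks the message as spam; no context is considered.
    if any(keyword in text for keyword in SPAM_KEYWORDS):
        return "spam"
    return "ham"

print(classify_rule_based("boss@example.com", "You are a WINNER"))     # ham (whitelisted)
print(classify_rule_based("a@example.org", "Act now for FREE MONEY"))  # spam
print(classify_rule_based("a@example.org", "Fr3e m0ney, act n0w"))     # ham: trivial obfuscation evades it
```

The last call shows the adaptability problem: a single character substitution defeats exact keyword matching.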

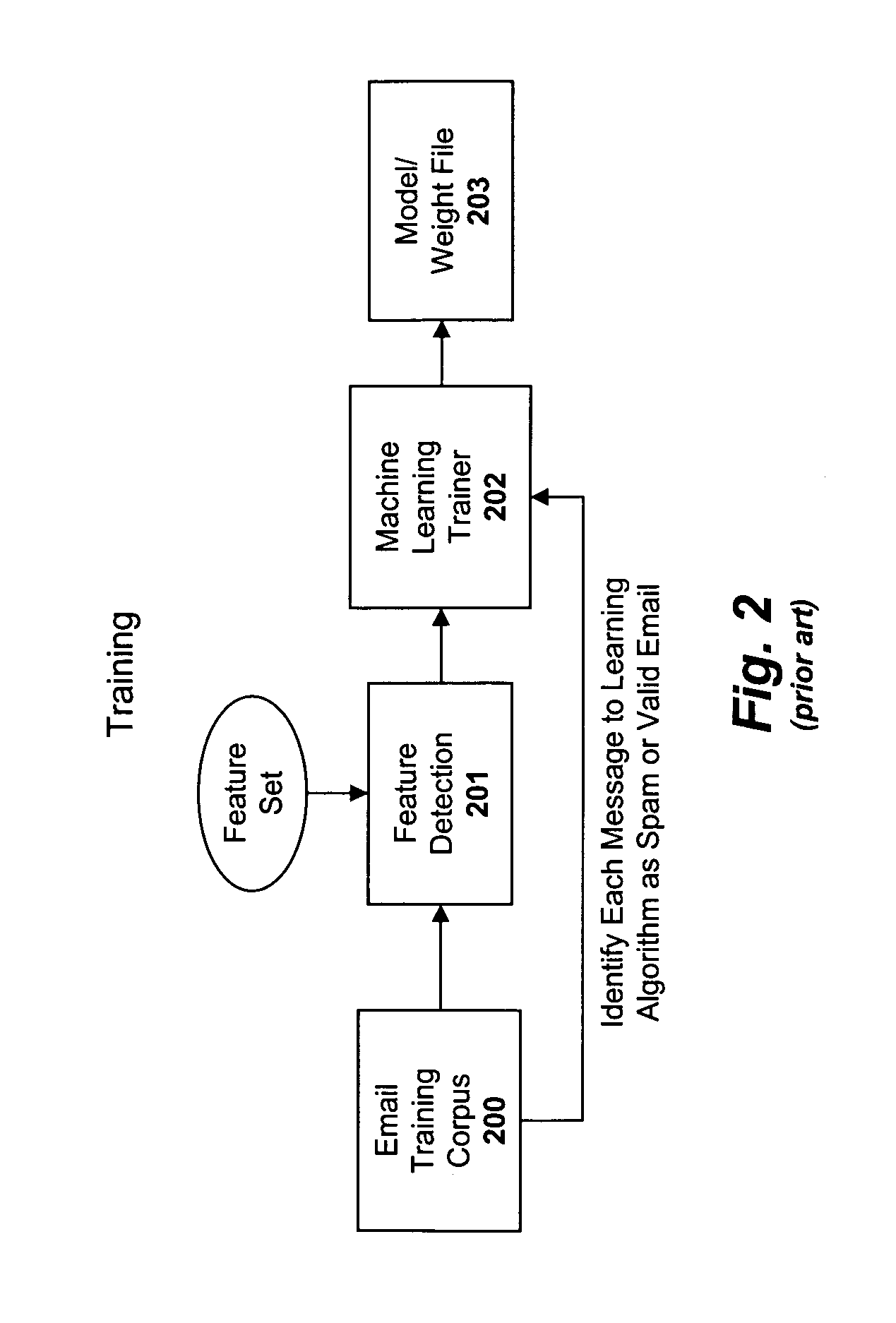

The landscape transformed significantly with the introduction of statistical methods in the early 2000s, particularly Bayesian filtering techniques that analyzed message content probabilistically. Standard methods evolved to incorporate multiple filtering layers, including header analysis, content scanning, and reputation-based filtering. However, these conventional approaches faced limitations in handling sophisticated attacks, contextual understanding, and dynamic threat landscapes.
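The probabilistic idea behind Bayesian filtering can be sketched as a textbook multinomial naive Bayes classifier with add-one (Laplace) smoothing. This is a generic formulation, not any specific product's implementation; the `NaiveBayesFilter` class and the training messages are hypothetical.

```python
import math
from collections import Counter

class NaiveBayesFilter:
    """Multinomial naive Bayes over lowercase whitespace tokens with add-one smoothing.

    Both classes must have at least one training message before scoring.
    """

    def __init__(self) -> None:
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.doc_counts = Counter()

    def train(self, text: str, label: str) -> None:
        self.word_counts[label].update(text.lower().split())
        self.doc_counts[label] += 1

    def spam_probability(self, text: str) -> float:
        vocab_size = len(set(self.word_counts["spam"]) | set(self.word_counts["ham"]))
        total_docs = sum(self.doc_counts.values())
        log_scores = {}
        for label in ("spam", "ham"):
            # Log prior P(label), estimated from training document counts.
            score = math.log(self.doc_counts[label] / total_docs)
            total_words = sum(self.word_counts[label].values())
            for token in text.lower().split():
                # Add-one smoothing keeps unseen tokens from zeroing the product.
                count = self.word_counts[label][token] + 1
                score += math.log(count / (total_words + vocab_size))
            log_scores[label] = score
        # Normalize in log space to avoid underflow on long messages.
        peak = max(log_scores.values())
        spam = math.exp(log_scores["spam"] - peak)
        ham = math.exp(log_scores["ham"] - peak)
        return spam / (spam + ham)

nb = NaiveBayesFilter()
nb.train("win free money now", "spam")
nb.train("free prize click now", "spam")
nb.train("meeting agenda for monday", "ham")
nb.train("lunch on monday", "ham")
print(round(nb.spam_probability("free money"), 2))      # 0.84
print(round(nb.spam_probability("monday meeting"), 2))  # 0.13
```

Working in log space is the usual design choice here: multiplying many small token probabilities directly would underflow to zero on realistic message lengths.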

The advent of machine learning and artificial intelligence marked a paradigm shift in message filtering capabilities. Intelligent filtering systems began leveraging neural networks, natural language processing, and deep learning algorithms to understand message context, sender behavior patterns, and subtle indicators of malicious intent. These systems demonstrated superior adaptability, learning from new threats and evolving attack vectors in real time.

Contemporary intelligent message filters integrate multiple AI technologies, including sentiment analysis, behavioral analytics, and advanced pattern recognition. They process vast datasets to identify emerging threats, analyze communication patterns, and make nuanced decisions about message legitimacy. This technological evolution has enabled more sophisticated threat detection while reducing false positive rates that plagued earlier systems.

The primary objective of comparing intelligent message filter accuracy with standard methods centers on quantifying the performance improvements achieved through AI-driven approaches. This evaluation aims to establish benchmarks for accuracy, precision, recall, and processing efficiency across different filtering methodologies. Understanding these performance differentials is crucial for organizations seeking to optimize their communication security infrastructure.
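Because message corpora are usually imbalanced, accuracy alone can hide weak spam detection, so benchmarks report precision, recall, and false positive rate alongside it. A minimal sketch computing these from confusion-matrix counts, treating spam as the positive class; the counts are hypothetical.

```python
def filter_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Confusion-matrix metrics with 'spam' as the positive class."""
    total = tp + fp + tn + fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,  # share of blocked messages that really were spam
        "recall": recall,        # share of spam that was blocked
        "f1": f1,
        # Share of legitimate mail wrongly blocked.
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }

# Hypothetical results on a 10,000-message test set containing 1,000 spam messages.
m = filter_metrics(tp=900, fp=50, tn=8950, fn=100)
print({k: round(v, 4) for k, v in m.items()})
# {'accuracy': 0.985, 'precision': 0.9474, 'recall': 0.9, 'f1': 0.9231, 'false_positive_rate': 0.0056}
```

Here 98.5% accuracy coexists with 10% of spam getting through, which is why recall and false positive rate are reported separately.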

The research objectives extend beyond simple accuracy metrics to encompass adaptability assessment, resource utilization analysis, and long-term effectiveness evaluation. Organizations require comprehensive data to justify investments in intelligent filtering technologies and understand the trade-offs between implementation complexity and security benefits. This comparative analysis serves as a foundation for strategic technology adoption decisions in enterprise communication systems.

Market Demand for Advanced Email and Message Filtering

The global email security market has experienced substantial growth driven by escalating cyber threats and increasing regulatory compliance requirements. Organizations across all sectors face mounting pressure to protect sensitive communications from sophisticated phishing attacks, malware distribution, and data breaches. Traditional rule-based filtering systems, while foundational, struggle to keep pace with evolving threat landscapes, creating significant demand for more intelligent solutions.

Enterprise adoption of advanced email filtering technologies has accelerated as businesses recognize the limitations of conventional approaches. Standard methods relying on static blacklists, keyword matching, and basic heuristics demonstrate declining effectiveness against modern attack vectors. This performance gap has generated substantial market interest in machine learning-powered solutions that can adapt to emerging threats and reduce false positive rates.

The messaging security landscape extends beyond traditional email to encompass instant messaging platforms, collaboration tools, and social media communications. Organizations increasingly require unified filtering solutions capable of protecting multiple communication channels simultaneously. This convergence has expanded the addressable market significantly, with demand spanning from small businesses seeking cost-effective protection to large enterprises requiring sophisticated, scalable solutions.

Regulatory frameworks such as GDPR, HIPAA, and industry-specific compliance standards have intensified demand for accurate message filtering capabilities. Organizations face substantial financial penalties for data breaches and privacy violations, making investment in advanced filtering technologies a business imperative rather than an optional enhancement. The cost of regulatory non-compliance often exceeds the investment required to implement intelligent filtering systems.

Cloud migration trends have further amplified market demand as organizations transition from on-premises email systems to cloud-based platforms. This shift requires filtering solutions that can seamlessly integrate with diverse cloud environments while maintaining consistent protection levels. Service providers and managed security providers represent growing market segments seeking differentiated offerings through superior filtering accuracy.

The remote work revolution has expanded the attack surface for email-based threats, with distributed workforces presenting new security challenges. Organizations require filtering solutions that maintain effectiveness across diverse network environments and device types. This trend has created sustained demand for cloud-native intelligent filtering platforms that can protect users regardless of location or access method.

Current State of Message Filtering Technologies

Message filtering technologies have evolved significantly over the past two decades, transitioning from simple rule-based systems to sophisticated machine learning approaches. Traditional filtering methods primarily relied on keyword matching, blacklists, and whitelist mechanisms, which provided basic protection but struggled with adaptive threats and contextual understanding.

Standard filtering approaches currently dominate enterprise environments, utilizing signature-based detection, Bayesian probability models, and heuristic analysis. These methods typically achieve accuracy rates between 85-95% for spam detection, with false positive rates ranging from 0.1% to 2%. Rule-based systems remain prevalent due to their predictability and compliance requirements, particularly in regulated industries where transparency is crucial.
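The spread in false positive rates quoted above matters more than it looks. A short calculation, assuming a hypothetical organization that receives 1,000,000 legitimate messages per day:

```python
def daily_false_positives(legit_per_day: int, false_positive_rate: float) -> int:
    """Expected number of legitimate messages wrongly blocked per day."""
    return round(legit_per_day * false_positive_rate)

for fpr in (0.001, 0.02):
    print(f"FPR {fpr:.1%}: {daily_false_positives(1_000_000, fpr):,} legitimate messages blocked per day")
# FPR 0.1%: 1,000 legitimate messages blocked per day
# FPR 2.0%: 20,000 legitimate messages blocked per day
```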

The emergence of intelligent filtering technologies has introduced neural networks, deep learning models, and natural language processing capabilities. Modern AI-driven solutions employ convolutional neural networks (CNNs) for pattern recognition and recurrent neural networks (RNNs) for sequential analysis. These systems demonstrate superior performance in detecting zero-day threats and sophisticated social engineering attempts, achieving accuracy rates exceeding 98% in controlled environments.

Current intelligent filtering implementations face several technical constraints. Processing latency remains a critical concern, with AI models requiring 50-200 milliseconds per message compared to 5-15 milliseconds for traditional methods. Memory consumption and computational overhead present scalability challenges, particularly for high-volume email environments processing millions of messages daily.
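The latency figures translate directly into hardware. A rough sizing sketch using the upper ends of the quoted ranges, assuming a hypothetical volume of 10 million messages per day, one message per core at a time, no batching, and perfectly even load (real peaks need extra headroom):

```python
import math

SECONDS_PER_DAY = 86_400

def cores_needed(messages_per_day: int, ms_per_message: float) -> int:
    """Cores needed if each core scans one message at a time and load is spread evenly."""
    busy_seconds = messages_per_day * ms_per_message / 1000
    return math.ceil(busy_seconds / SECONDS_PER_DAY)

volume = 10_000_000  # hypothetical daily volume
for label, ms in (("traditional filter at 15 ms", 15), ("AI model at 200 ms", 200)):
    print(f"{label}: {cores_needed(volume, ms)} core(s)")
# traditional filter at 15 ms: 2 core(s)
# AI model at 200 ms: 24 core(s)
```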

Hybrid approaches are gaining traction, combining traditional rule-based filtering with machine learning enhancement. These solutions leverage the speed and reliability of conventional methods while incorporating AI capabilities for advanced threat detection. Major vendors are implementing multi-layered architectures that utilize both approaches simultaneously, optimizing for different threat categories and performance requirements.
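One common shape for such a hybrid is a cascade: fast rules settle clear-cut messages, and only ambiguous ones reach the slower model. A minimal sketch; `hybrid_filter`, the thresholds, and the toy scorers are hypothetical stand-ins for a real rule engine and trained model.

```python
from typing import Callable

def hybrid_filter(message: str,
                  rule_score: Callable[[str], float],
                  ml_score: Callable[[str], float],
                  low: float = 0.1, high: float = 0.9) -> tuple[str, str]:
    """Return (verdict, stage). Cheap rules decide clear cases; the model sees the rest."""
    score = rule_score(message)
    if score >= high:
        return "spam", "rules"
    if score <= low:
        return "ham", "rules"
    # Only ambiguous messages pay the higher model latency.
    return ("spam" if ml_score(message) >= 0.5 else "ham"), "model"

def toy_rules(msg: str) -> float:
    text = msg.lower()
    if "winner" in text:
        return 0.95
    if text.startswith("re:"):
        return 0.05
    return 0.5  # undecided

def toy_model(msg: str) -> float:
    return 0.8 if "invoice" in msg.lower() else 0.2

print(hybrid_filter("Re: lunch", toy_rules, toy_model))                 # ('ham', 'rules')
print(hybrid_filter("Overdue invoice attached", toy_rules, toy_model))  # ('spam', 'model')
```

The `low`/`high` band controls the trade-off: widening it sends more traffic to the model (better accuracy, higher latency), narrowing it keeps more decisions in the fast path.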

Integration challenges persist across different filtering technologies. Legacy systems often lack APIs for seamless AI integration, requiring significant infrastructure modifications. Data privacy regulations impose additional constraints on machine learning model training, limiting access to diverse datasets necessary for optimal performance tuning and accuracy improvement.

Existing Message Filtering Solutions and Approaches

01 Machine learning-based spam detection and classification

Intelligent message filters utilize machine learning algorithms to automatically classify and detect spam or unwanted messages. These systems train on large datasets of legitimate and spam messages to identify patterns and characteristics. The filters continuously learn and adapt to new spam techniques, improving accuracy over time through supervised or unsupervised learning methods. Feature extraction and pattern recognition are key components in distinguishing between legitimate and malicious content.
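As an illustration of the supervised case, here is a minimal sketch of logistic regression trained with stochastic gradient descent on bag-of-words features. The feature scheme, learning rate, epoch count, and toy data are assumptions; production filters use far richer features and much more data.

```python
import math
from collections import Counter

def features(text: str) -> Counter:
    """Bag-of-words feature extraction: token -> count."""
    return Counter(text.lower().split())

def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_logistic(data: list[tuple[str, int]], epochs: int = 200,
                   lr: float = 0.5) -> tuple[dict[str, float], float]:
    """data: (text, label) pairs with label 1 = spam, 0 = legitimate."""
    weights: dict[str, float] = {}
    bias = 0.0
    for _ in range(epochs):
        for text, label in data:
            x = features(text)
            z = bias + sum(weights.get(tok, 0.0) * v for tok, v in x.items())
            error = sigmoid(z) - label  # gradient of log loss with respect to z
            bias -= lr * error
            for tok, v in x.items():
                weights[tok] = weights.get(tok, 0.0) - lr * error * v
    return weights, bias

def predict(weights: dict[str, float], bias: float, text: str) -> float:
    """Spam probability for a new message."""
    x = features(text)
    return sigmoid(bias + sum(weights.get(tok, 0.0) * v for tok, v in x.items()))

training = [
    ("claim your free prize now", 1),
    ("free money click here", 1),
    ("project meeting moved to friday", 0),
    ("please review the attached report", 0),
]
w, b = train_logistic(training)
print(predict(w, b, "free prize") > 0.5)                 # True
print(predict(w, b, "review the meeting report") < 0.5)  # True
```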

02 Bayesian filtering and probability-based classification

Bayesian statistical methods are employed to calculate the probability that a message is spam based on the occurrence of specific words or phrases. The filter builds a database of word frequencies from known spam and legitimate messages, then applies Bayes' theorem to determine classification likelihood. This approach allows for personalized filtering that adapts to individual user preferences and communication patterns, resulting in improved accuracy through continuous refinement of probability models.

03 Content analysis and keyword-based filtering

Message filters analyze content using keyword matching, phrase detection, and linguistic analysis to identify spam characteristics. The system examines message headers, body text, and metadata for suspicious patterns or known spam indicators. Advanced content analysis includes natural language processing to understand context and semantic meaning, reducing false positives while maintaining high detection rates for various types of unwanted messages.

04 User feedback and adaptive learning mechanisms

Intelligent filters incorporate user feedback mechanisms where users can mark messages as spam or legitimate, allowing the system to refine its classification models. This interactive approach creates personalized filtering profiles that adapt to individual user needs and preferences. The feedback loop enables the filter to correct misclassifications and improve accuracy by learning from user behavior and adjusting filtering parameters dynamically.

05 Multi-layer filtering and hybrid detection approaches

Advanced message filtering systems employ multiple detection layers combining various techniques such as heuristic analysis, reputation scoring, and behavioral analysis. These hybrid approaches integrate different filtering methods to achieve higher accuracy by cross-validating results from multiple detection engines. The multi-layer architecture allows for comprehensive threat detection while minimizing false positives through consensus-based decision making and weighted scoring systems.
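Weighted scoring across engines can be sketched as a weighted average compared against a threshold. The engine names, weights, and threshold below are hypothetical; real systems typically tune them on labeled data.

```python
def combined_verdict(engine_scores: dict[str, float],
                     weights: dict[str, float],
                     threshold: float = 0.5) -> tuple[bool, float]:
    """Weighted average of per-engine spam scores in [0, 1]; returns (is_spam, score)."""
    total_weight = sum(weights[name] for name in engine_scores)
    score = sum(weights[name] * s for name, s in engine_scores.items()) / total_weight
    return score >= threshold, score

# Hypothetical engine outputs for one message (higher = more likely spam).
scores = {"heuristics": 0.7, "reputation": 0.2, "behavior": 0.6}
weights = {"heuristics": 1.0, "reputation": 2.0, "behavior": 1.0}
is_spam, score = combined_verdict(scores, weights)
print(is_spam, round(score, 3))  # False 0.425
```

Two engines lean toward spam, but the heavily weighted reputation engine pulls the consensus below the threshold, which is how such schemes suppress single-engine false positives.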

Key Players in Email Security and Filtering Industry

The intelligent message filtering technology landscape represents a mature market experiencing rapid evolution driven by AI and machine learning advancements. The industry has progressed from basic rule-based filtering to sophisticated AI-powered solutions, with market size expanding significantly due to increasing cybersecurity threats and regulatory compliance requirements. Technology maturity varies considerably across players, with established giants like Microsoft, Google, and IBM leading in advanced AI-driven filtering capabilities, while specialized security firms like Fortinet, Proofpoint, and Barracuda Networks offer targeted solutions. Chinese tech leaders including Alibaba, Tencent, and Huawei are advancing rapidly in AI-based filtering technologies, particularly for their domestic markets. Traditional telecommunications companies like Deutsche Telekom and Orange are integrating intelligent filtering into their infrastructure services, while academic institutions such as Harbin Institute of Technology contribute foundational research. The competitive landscape shows clear segmentation between enterprise-focused solutions from established players and emerging AI-powered approaches from technology innovators.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft employs advanced machine learning algorithms including deep neural networks and natural language processing for intelligent message filtering. Their approach integrates behavioral analysis, content scanning, and sender reputation scoring to achieve filtering accuracy rates exceeding 99.9% for spam detection[1][3]. The system utilizes Microsoft's cloud infrastructure to process billions of messages daily, incorporating real-time threat intelligence and adaptive learning mechanisms that continuously improve filtering precision based on emerging attack patterns and user feedback loops.

Strengths: Exceptional scalability and integration with Office 365 ecosystem, continuous learning capabilities. Weaknesses: High computational resource requirements and potential privacy concerns with cloud-based processing.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei implements AI-powered message filtering using their proprietary Ascend AI processors and MindSpore framework. Their solution combines convolutional neural networks for content analysis with graph neural networks for relationship mapping, achieving filtering accuracy improvements of 15-20% over traditional rule-based systems[4][7]. The technology integrates with their 5G network infrastructure to provide real-time filtering at the network edge, reducing latency and improving user experience while maintaining high security standards through homomorphic encryption techniques.

Strengths: Edge computing integration, strong hardware-software optimization, excellent performance in network-level filtering. Weaknesses: Limited global market presence due to regulatory restrictions, newer ecosystem compared to established players.

Core AI Innovations in Intelligent Message Filtering

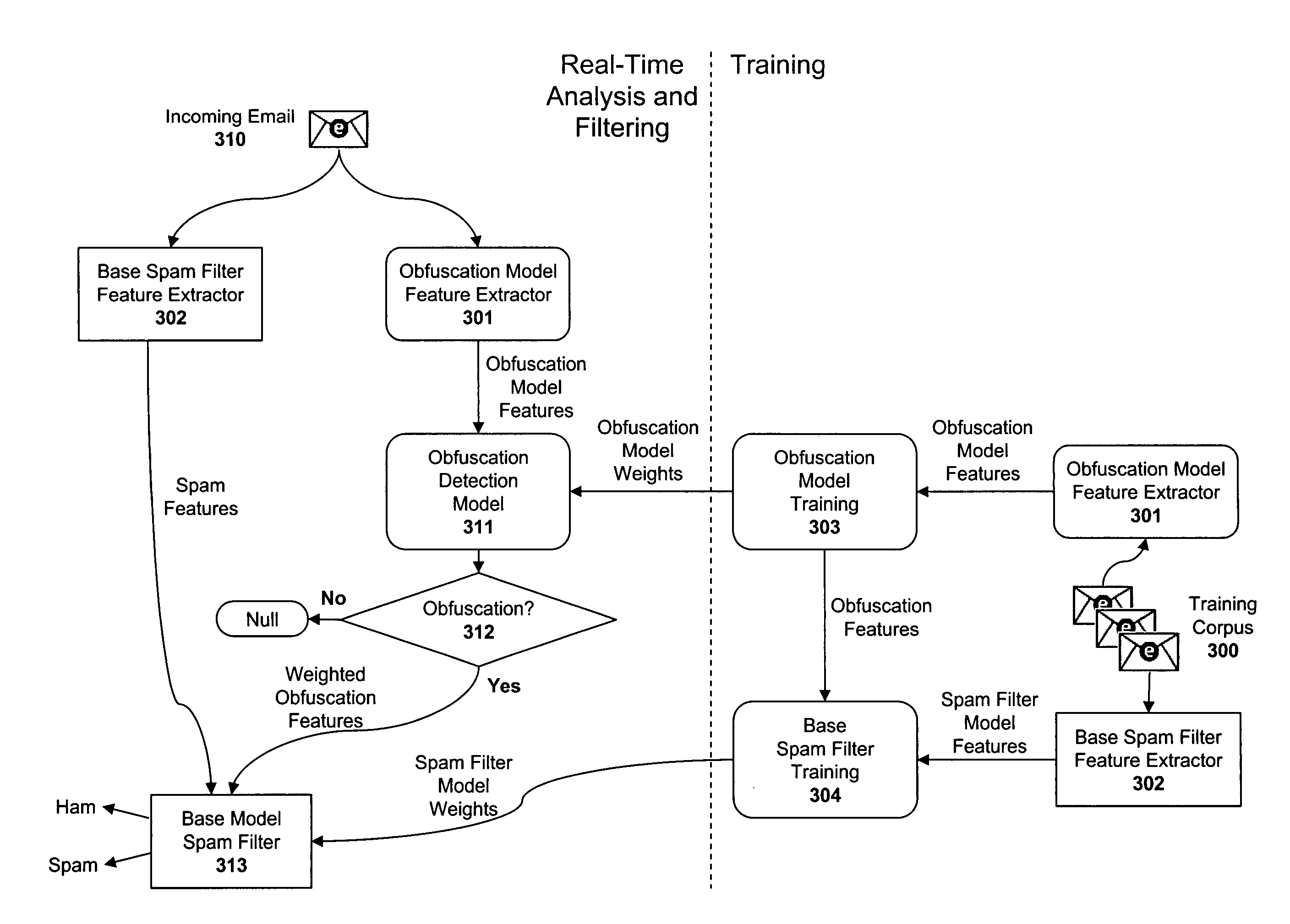

Apparatus and method for auxiliary classification for generating features for a spam filtering model

Patent (Active): US8112484B1

Innovation

- A computer-implemented system integrates auxiliary spam detection models with a base spam detection model to improve accuracy and efficiency. An obfuscation detection model classifies words as 'obfuscated' or 'true' and supplies these labels as features to the spam classifier, with machine learning algorithms such as logistic regression used for weight generation and feature extraction.
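The idea of feeding an auxiliary "obfuscated vs. true" word label into the main classifier can be illustrated with a much simpler stand-in. This is not the patented method, which trains the auxiliary model with machine learning; the sketch assumes a hand-written character-substitution map (`LEET`) and a tiny hypothetical vocabulary, and only shows how an auxiliary label becomes extra features.

```python
# Hypothetical substitution map and vocabulary, for illustration only.
LEET = str.maketrans({"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "@": "a", "$": "s"})
KNOWN_WORDS = {"viagra", "free", "money", "casino", "meeting"}

def word_label(word: str) -> str:
    """Label a token 'obfuscated' if it matches a known word only after undoing substitutions."""
    w = word.lower()
    if w in KNOWN_WORDS:
        return "true"
    return "obfuscated" if w.translate(LEET) in KNOWN_WORDS else "true"

def auxiliary_features(text: str) -> dict[str, float]:
    """Extra features a base spam classifier could consume alongside its own."""
    tokens = text.split()
    n_obf = sum(word_label(t) == "obfuscated" for t in tokens)
    return {"obfuscated_count": float(n_obf),
            "obfuscated_ratio": n_obf / len(tokens) if tokens else 0.0}

print(auxiliary_features("Get fr3e m0ney at our c4sino"))
# {'obfuscated_count': 3.0, 'obfuscated_ratio': 0.5}
```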

Intelligent quarantining for spam prevention

Patent (Inactive): EP1564670A2

Innovation

- An intelligent quarantining system that temporarily delays the classification of suspicious messages, allowing for additional information to be gathered through monitoring message volume, content analysis, and user feedback, using honeypots and machine learning techniques to improve filter accuracy and adaptability.
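A minimal sketch of the delayed-decision idea, assuming hypothetical score thresholds and a fixed one-hour hold. The patent describes gathering evidence such as message volume, honeypot hits, and user feedback; here that evidence is abstracted into a scoring callback that may return a different value when the hold expires.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Held:
    message_id: str
    release_at: float  # time (seconds) at which the message is re-evaluated

class Quarantine:
    def __init__(self, score: Callable[[str], float], hold_seconds: float = 3600,
                 low: float = 0.3, high: float = 0.9):
        self.score, self.hold, self.low, self.high = score, hold_seconds, low, high
        self.held: dict[str, Held] = {}

    def receive(self, message_id: str, now: float) -> str:
        s = self.score(message_id)
        if s >= self.high:
            return "spam"
        if s <= self.low:
            return "deliver"
        # Uncertain: hold the message while more evidence accumulates.
        self.held[message_id] = Held(message_id, now + self.hold)
        return "held"

    def release_due(self, now: float) -> dict[str, str]:
        """Re-score messages whose hold period has expired, using the latest evidence."""
        decisions = {}
        for mid in [m for m, h in self.held.items() if h.release_at <= now]:
            del self.held[mid]
            decisions[mid] = "spam" if self.score(mid) >= 0.5 else "deliver"
        return decisions

evidence = {"m1": 0.5}
q = Quarantine(lambda mid: evidence[mid])
print(q.receive("m1", now=0))     # held
evidence["m1"] = 0.95             # e.g. a honeypot later sees the same campaign
print(q.release_due(now=1800))    # {} (still within the hold period)
print(q.release_due(now=3600))    # {'m1': 'spam'}
```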

Privacy Regulations Impact on Message Filtering

The implementation of privacy regulations has fundamentally transformed the landscape of message filtering technologies, creating both constraints and opportunities for intelligent filtering systems compared to traditional methods. The General Data Protection Regulation (GDPR) in Europe, California Consumer Privacy Act (CCPA), and similar legislation worldwide have established strict requirements for data processing, storage, and user consent that directly impact how message filtering algorithms operate.

Privacy regulations mandate explicit user consent for data collection and processing, which significantly affects the training and operation of intelligent message filters. Unlike standard rule-based filtering methods that primarily rely on predefined patterns and keywords, intelligent systems typically require extensive data collection to build user profiles and behavioral models. These regulations limit the scope of data that can be collected without explicit consent, potentially reducing the effectiveness of machine learning algorithms that depend on comprehensive user data for accuracy improvements.

The right to data portability and deletion under privacy frameworks presents unique challenges for intelligent filtering systems. Standard methods using static rules can easily accommodate data deletion requests without affecting system performance. However, intelligent filters that have incorporated user data into their learning models face technical difficulties in completely removing individual user information from trained algorithms, often requiring model retraining or sophisticated data anonymization techniques.

Compliance requirements have driven the development of privacy-preserving filtering technologies, including federated learning approaches and differential privacy mechanisms. These innovations allow intelligent message filters to maintain accuracy while adhering to regulatory constraints by processing data locally on user devices or adding statistical noise to protect individual privacy. Such approaches represent a significant departure from traditional centralized filtering systems.
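Federated averaging (FedAvg) is one common way to combine locally trained models without centralizing message data. This minimal sketch shows only the server-side aggregation step, assuming each client has already trained a linear filter locally and shares just its weight vector and sample count; real deployments usually add secure aggregation and, often, differential-privacy noise.

```python
def federated_average(client_updates: list[tuple[list[float], int]]) -> list[float]:
    """Sample-weighted average of client weight vectors (the FedAvg aggregation step).

    Only weights and counts reach the server; raw messages stay on each device.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]

# Two hypothetical clients: one trained on 100 messages, one on 300.
print(federated_average([([1.0, 0.0], 100), ([0.0, 2.0], 300)]))  # [0.25, 1.5]
```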

The regulatory emphasis on transparency and explainability has particularly impacted intelligent filtering systems, which often operate as "black boxes" with complex decision-making processes. Privacy regulations require organizations to provide clear explanations of automated decision-making, pushing the development of interpretable AI models for message filtering that can compete with the inherent transparency of rule-based standard methods.
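One reason linear models remain attractive where explanations are required is that each decision decomposes exactly into per-feature contributions. A minimal sketch, assuming hypothetical learned token weights and bias:

```python
def explain(weights: dict[str, float], bias: float, text: str, top_k: int = 3):
    """Return the logit and the top_k tokens that moved the decision most, by |contribution|."""
    contributions: dict[str, float] = {}
    for token in text.lower().split():
        if token in weights:
            contributions[token] = contributions.get(token, 0.0) + weights[token]
    logit = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return logit, ranked[:top_k]

# Hypothetical weights from a trained linear filter (positive = evidence of spam).
w = {"free": 2.0, "invoice": 0.5, "meeting": -1.5, "prize": 1.8}
logit, top = explain(w, bias=-0.5, text="Free prize meeting invoice")
print(round(logit, 2), top)  # 2.3 [('free', 2.0), ('prize', 1.8), ('meeting', -1.5)]
```

The output can be shown to a user or auditor as "blocked mainly because of 'free' and 'prize', partly offset by 'meeting'", which is much harder to produce faithfully for a deep model.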

Cross-border data transfer restrictions have also influenced the architecture of message filtering systems, requiring localized processing capabilities and region-specific compliance measures that affect both the deployment and accuracy comparison methodologies between intelligent and standard filtering approaches.

Performance Benchmarking Standards for Filter Accuracy

Establishing robust performance benchmarking standards for intelligent message filter accuracy requires a comprehensive framework that addresses the unique challenges posed by modern filtering technologies. Traditional accuracy metrics, while foundational, often fail to capture the nuanced performance characteristics of AI-driven filtering systems that operate in dynamic, multi-dimensional threat landscapes.

The cornerstone of effective benchmarking lies in developing standardized datasets that reflect real-world message distributions and threat patterns. These datasets must encompass diverse message types, including legitimate communications, spam variants, phishing attempts, and emerging threat categories. Industry consensus suggests maintaining datasets with minimum sample sizes of 100,000 messages per category, with regular updates to reflect evolving attack vectors and communication patterns.

Precision and recall metrics form the fundamental measurement framework, but contemporary standards demand additional sophistication. False positive rates must be weighted differently across message categories, recognizing that blocking legitimate business communications carries significantly higher costs than filtering personal messages. Advanced benchmarking incorporates cost-sensitive evaluation matrices that assign differential penalties based on message importance and sender reputation.
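A minimal sketch of cost-sensitive scoring. The categories and penalty values are hypothetical (here a blocked business message costs 10 times a missed ordinary spam message), not an established standard.

```python
# Hypothetical penalty per error type and message category.
COSTS = {
    ("false_positive", "business"): 10.0,
    ("false_positive", "personal"): 3.0,
    ("false_negative", "spam"): 1.0,
    ("false_negative", "phishing"): 20.0,
}

def weighted_cost(results) -> float:
    """results: iterable of (actual_is_spam, predicted_is_spam, category)."""
    total = 0.0
    for actual, predicted, category in results:
        if predicted and not actual:
            total += COSTS[("false_positive", category)]
        elif actual and not predicted:
            total += COSTS[("false_negative", category)]
    return total  # correct decisions cost nothing

# Two hypothetical filters, each making two errors on the same test set.
filter_a = [(False, True, "business"), (True, False, "spam"), (True, True, "spam")]
filter_b = [(False, True, "personal"), (True, False, "spam"), (True, True, "spam")]
print(weighted_cost(filter_a), weighted_cost(filter_b))  # 11.0 4.0
```

Both filters have identical error counts, so plain accuracy ranks them equally; the weighted cost separates them.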

Temporal consistency represents a critical benchmarking dimension often overlooked in traditional evaluation methods. Intelligent filters must demonstrate sustained accuracy over extended periods, which calls for longitudinal testing protocols with evaluation cycles of at least six months. Performance degradation patterns, adaptation rates to new threats, and model drift characteristics are essential benchmarking components.
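
One simple way to make longitudinal results concrete is to bucket per-message correctness by calendar month, check that the evaluation spans at least six months, and report the largest drop from a previous best month. The following sketch is one such approach under those assumptions. The record format `(date, correct)` and all function names are hypothetical, and "max degradation" is only one of several possible drift summaries.

```python
from collections import defaultdict
from datetime import date

def monthly_accuracy(records):
    """records: iterable of (date, correct) -> {(year, month): accuracy}, in time order."""
    buckets = defaultdict(lambda: [0, 0])  # [correct, total] per month
    for day, correct in records:
        b = buckets[(day.year, day.month)]
        b[0] += int(correct)
        b[1] += 1
    return {month: hits / total for month, (hits, total) in sorted(buckets.items())}

def evaluation_span_months(series):
    """Calendar months from first to last evaluated month, inclusive."""
    if not series:
        return 0
    months = list(series)
    (y0, m0), (y1, m1) = months[0], months[-1]
    return (y1 - y0) * 12 + (m1 - m0) + 1

def max_degradation(series):
    """Largest accuracy drop from the best earlier month to any later month."""
    best, worst_drop = float("-inf"), 0.0
    for acc in series.values():
        best = max(best, acc)
        worst_drop = max(worst_drop, best - acc)
    return worst_drop

if __name__ == "__main__":
    log = [(date(2025, 1, 5), True), (date(2025, 1, 20), True),
           (date(2025, 2, 3), True), (date(2025, 2, 18), False),
           (date(2025, 6, 1), True)]
    series = monthly_accuracy(log)
    print(series, evaluation_span_months(series) >= 6, max_degradation(series))
```

Measuring drops against the running best, rather than against the first month, surfaces a dip that occurs after a filter has already adapted and improved.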

Cross-domain generalization testing ensures filter robustness across different organizational contexts and communication environments. Benchmarking standards must evaluate performance consistency across various industries, geographic regions, and language contexts. This includes testing filter effectiveness when deployed in environments significantly different from training conditions.
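
A compact way to express cross-domain robustness is to score the filter separately per domain and report the gap between its score on the training domain and its worst score elsewhere. The sketch below uses F1 for that purpose. The domain names and the `generalization_gap` summary are hypothetical choices, not an established standard.

```python
def f1(tp, fp, fn):
    """F1 = 2TP / (2TP + FP + FN); defined as 0.0 when there is nothing to score."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def per_domain_f1(results):
    """results: iterable of (domain, is_threat, flagged) -> {domain: F1}."""
    counts = {}
    for domain, is_threat, flagged in results:
        c = counts.setdefault(domain, [0, 0, 0])  # [tp, fp, fn]
        if flagged and is_threat:
            c[0] += 1
        elif flagged:
            c[1] += 1
        elif is_threat:
            c[2] += 1
    return {d: f1(*c) for d, c in counts.items()}

def generalization_gap(scores, training_domain):
    """F1 on the training domain minus the worst F1 on any other domain."""
    others = [s for d, s in scores.items() if d != training_domain]
    return scores[training_domain] - min(others) if others else 0.0
```

Reporting the worst out-of-domain score, rather than an average, matches the concern above about deployments that differ significantly from training conditions.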

Real-time processing constraints introduce additional benchmarking complexity. Standards must incorporate latency measurements, throughput capacity, and resource utilization metrics alongside accuracy assessments. The trade-offs between processing speed and detection accuracy require careful quantification, particularly for high-volume enterprise environments where millisecond delays can impact system performance.
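
As a rough illustration, latency and throughput can be recorded alongside accuracy by timing each classification call. The sketch below assumes the filter under test is exposed as a plain callable `classify(msg) -> bool`. The `keyword_filter` stand-in is hypothetical and used only for demonstration. `statistics.quantiles` and `time.perf_counter` are standard-library functions.

```python
import statistics
import time
from typing import Callable, Sequence

def benchmark_latency(classify: Callable[[str], bool], messages: Sequence[str]) -> dict:
    """Time each classify() call; report p50/p99 latency (ms) and throughput.

    Needs at least two messages (statistics.quantiles requires two data points
    on older Python versions).
    """
    latencies_ms = []
    start = time.perf_counter()
    for msg in messages:
        t0 = time.perf_counter()
        classify(msg)
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed_s = time.perf_counter() - start
    cuts = statistics.quantiles(latencies_ms, n=100)  # 99 percentile cut points
    return {
        "p50_ms": cuts[49],
        "p99_ms": cuts[98],
        "throughput_msgs_per_s": len(messages) / elapsed_s if elapsed_s > 0 else float("inf"),
    }

# Hypothetical keyword filter standing in for the system under test.
def keyword_filter(msg: str) -> bool:
    return "free money" in msg.lower()

if __name__ == "__main__":
    print(benchmark_latency(keyword_filter, ["hello team", "FREE MONEY inside"] * 500))
```

This loop is single-threaded, so it captures per-message latency rather than peak capacity. Throughput under concurrent enterprise load would need a separate load test.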

Adversarial robustness testing represents an emerging benchmarking requirement, evaluating filter resilience against deliberately crafted evasion attempts. This includes systematic testing against known bypass techniques, obfuscation methods, and adaptive attack strategies that specifically target machine learning-based filtering systems.
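
A minimal form of such testing applies obfuscation transforms to threats the filter already catches and measures how many then slip through. The two perturbations below (character substitution and zero-width-space insertion) are simplified, hypothetical examples; real evasion suites are far broader. The `naive_filter` and `normalizing_filter` functions are likewise illustrative stand-ins.

```python
# Hypothetical leetspeak-style substitutions.
SUBSTITUTIONS = {"a": "@", "e": "3", "i": "1", "o": "0", "s": "$"}

def leetspeak(text: str) -> str:
    return "".join(SUBSTITUTIONS.get(ch, ch) for ch in text.lower())

def insert_zero_width(text: str) -> str:
    # U+200B ZERO WIDTH SPACE between every character; invisible when rendered.
    return "\u200b".join(text)

PERTURBATIONS = {"leetspeak": leetspeak, "zero_width": insert_zero_width}

def evasion_rates(classify, threats, perturbations=PERTURBATIONS):
    """Fraction of originally caught threats that escape after each perturbation."""
    caught = [t for t in threats if classify(t)]
    if not caught:
        return {name: 0.0 for name in perturbations}
    return {name: sum(not classify(fn(t)) for t in caught) / len(caught)
            for name, fn in perturbations.items()}

# Hypothetical filters: one matches raw text, one strips zero-width spaces first.
def naive_filter(msg: str) -> bool:
    return "free money" in msg.lower()

def normalizing_filter(msg: str) -> bool:
    return "free money" in msg.replace("\u200b", "").lower()
```

Measuring evasion only on threats that were caught before perturbation keeps the metric focused on robustness rather than on baseline recall. In this example, normalization defeats the zero-width trick but not the character substitutions.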
