How to Develop Multi-Core Microcontroller Applications

FEB 25, 2026 · 9 MIN READ

Multi-Core MCU Development Background and Objectives

Multi-core microcontroller technology has emerged as a critical solution to address the growing computational demands of modern embedded systems. The evolution from single-core to multi-core architectures represents a fundamental shift in embedded system design philosophy, driven by the need to handle increasingly complex real-time applications while maintaining power efficiency and cost-effectiveness.

Multi-core MCUs gained momentum in the 2000s, when single-core processors ran into physical limits on clock-speed scaling and power consumption. Semiconductor manufacturers recognized that parallel processing architectures could deliver better performance per watt, which led to heterogeneous and homogeneous multi-core designs tailored to embedded applications.

Current market trends point to strong growth in applications that must execute multiple tasks simultaneously under differing real-time constraints. Industrial IoT systems, automotive electronics, advanced motor control, and edge AI processing are key domains where multi-core MCUs offer clear advantages over single-core solutions. These applications must handle communication protocols, sensor data processing, control algorithms, and user interface management concurrently.

The primary technical objectives driving multi-core MCU development include achieving deterministic real-time performance through task isolation, maximizing system throughput via parallel execution, and optimizing power consumption through dynamic core management. Advanced objectives encompass implementing fault-tolerant systems through redundant core utilization and enabling scalable software architectures that can adapt to varying computational loads.

Many modern multi-core MCUs use heterogeneous designs that pair a high-performance core for computationally intensive tasks with a low-power core for background operations (for example, a Cortex-M7 alongside a Cortex-M4), although homogeneous parts with identical cores are also common. The heterogeneous approach lets developers optimize both performance and energy efficiency by mapping each application function to the most suitable processing element.

The strategic importance of multi-core MCU technology extends beyond immediate performance gains, positioning organizations to address future challenges in autonomous systems, machine learning inference at the edge, and complex real-time control applications that will define the next generation of embedded systems.

Market Demand for Multi-Core Embedded Systems

The global embedded systems market is growing rapidly, driven by the proliferation of Internet of Things (IoT) devices, autonomous vehicles, industrial automation, and smart infrastructure. Multi-core microcontrollers have become a key enabler for these applications, meeting increasing computational demands while preserving power efficiency and real-time performance.

The automotive sector is one of the most significant demand drivers for multi-core embedded systems. Advanced driver assistance systems (ADAS), electric vehicle control units, and autonomous driving platforms must handle many concurrent tasks, such as sensor fusion, image processing, and real-time decision making. The transition toward software-defined vehicles further increases the need for multi-core architectures that can support over-the-air updates and complex software stacks.

Industrial automation and Industry 4.0 initiatives are creating substantial market opportunities for multi-core microcontrollers. Smart manufacturing systems demand real-time processing of sensor data, predictive maintenance algorithms, and seamless connectivity with cloud platforms. Multi-core architectures enable the separation of safety-critical control functions from communication and data processing tasks, ensuring system reliability while supporting advanced analytics capabilities.

The consumer electronics segment continues to drive innovation in multi-core embedded systems, particularly in smart home devices, wearables, and mobile accessories. These applications require efficient multitasking capabilities to handle user interfaces, wireless communication protocols, and sensor data processing simultaneously while maintaining low power consumption for extended battery life.

Edge computing applications are emerging as a transformative market segment, where multi-core microcontrollers enable local processing of artificial intelligence and machine learning workloads. This trend reduces latency, improves data privacy, and decreases bandwidth requirements for cloud connectivity, making it particularly attractive for applications in healthcare monitoring, smart cities, and industrial IoT deployments.

The telecommunications infrastructure sector is experiencing growing demand for multi-core embedded systems to support 5G network equipment, base stations, and network edge devices. These applications require high-performance processing capabilities to handle massive data throughput while maintaining strict timing requirements and energy efficiency standards.

Current State and Challenges of Multi-Core MCU Development

Multi-core microcontroller development has reached a point where the technology shows significant potential but still faces substantial implementation barriers. Many current multi-core MCUs are built on Arm Cortex-M cores, while automotive devices also use architectures such as Infineon TriCore and Power Architecture. Dual-core devices are now common, and higher core counts are increasingly available for automotive, industrial automation, and IoT applications. Leading semiconductor manufacturers, including STMicroelectronics, NXP, Infineon, and Microchip, offer multi-core solutions, yet adoption remains moderate because of development complexity.

The heterogeneous computing approach has gained traction, combining high-performance cores with low-power auxiliary processors to balance computational capability and energy efficiency. Multi-core MCUs typically share memory between cores, often alongside per-core caches or tightly coupled memories; because hardware cache coherency between cores is frequently absent, software must keep shared data consistent. However, expertise remains concentrated in established semiconductor hubs, creating knowledge gaps in emerging markets.

Software development complexity represents the most significant challenge in multi-core MCU adoption. Traditional embedded developers often lack experience with parallel programming paradigms, concurrent task management, and inter-core communication protocols. Debugging multi-threaded applications across multiple cores requires sophisticated toolchains that many development teams find prohibitively expensive or technically challenging to implement effectively.

Memory management and synchronization issues create additional barriers to widespread adoption. Shared resource conflicts, cache coherency problems, and race conditions frequently emerge during development, requiring deep understanding of hardware architecture and advanced programming techniques. The lack of standardized development frameworks across different vendor platforms further complicates the development process, forcing teams to invest significant time in platform-specific optimization.
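On parts where one core has a data cache that nothing keeps coherent with the other core, a common pattern is to clean the writer's cache before handing a shared buffer over and to invalidate the reader's cache before reading it. Cache maintenance works on whole lines, so the address range must be widened to line boundaries. The sketch below assumes 32-byte lines (the Cortex-M7 L1 data-cache line size). `cache_range_t`, `cache_range_for`, and `is_line_aligned` are illustrative names. On a Cortex-M7 target, the resulting range would typically be passed to the CMSIS functions `SCB_CleanDCache_by_Addr` or `SCB_InvalidateDCache_by_Addr`.

```c
#include <stddef.h>
#include <stdint.h>

/* Assumption: 32-byte data-cache lines, as on the Cortex-M7 L1 D-cache. */
#define CACHE_LINE_SIZE 32u

/* A range of whole cache lines covering [addr, addr + len). */
typedef struct {
    uintptr_t start; /* start of the first cache line touched */
    size_t size;     /* always a multiple of CACHE_LINE_SIZE */
} cache_range_t;

static cache_range_t cache_range_for(uintptr_t addr, size_t len)
{
    const uintptr_t mask = (uintptr_t)CACHE_LINE_SIZE - 1u;
    uintptr_t first = addr & ~mask;               /* round start down */
    uintptr_t last = (addr + len + mask) & ~mask; /* round end up */
    cache_range_t r = { first, (size_t)(last - first) };
    return r;
}

/* Nonzero if the buffer occupies whole lines, so invalidating it
 * cannot discard unrelated data that shares a line with it. */
static int is_line_aligned(uintptr_t addr, size_t len)
{
    return ((addr | (uintptr_t)len) & ((uintptr_t)CACHE_LINE_SIZE - 1u)) == 0u;
}
```

Invalidating a widened range can discard neighbouring data that happens to share a line. For this reason, shared buffers are usually aligned and padded to the line size, which is the condition `is_line_aligned` checks.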

Real-time determinism remains a critical concern, particularly in safety-critical applications where timing predictability is paramount. Balancing workload across cores while meeting strict timing requirements is an ongoing challenge that current development tools address only partially. Power management also becomes considerably more complex in multi-core designs, and optimizing performance per watt requires careful coordination between cores.
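One common way to make workload distribution analyzable is partitioned scheduling. Each task is statically assigned to one core, and each core is then checked with a single-core schedulability test. The sketch below uses first-fit decreasing bin packing with the preemptive EDF utilization bound (total C/T at most 1.0 per core). That bound is exact only for independent periodic tasks whose deadlines equal their periods, and it ignores scheduling overhead and shared-resource blocking. The `rt_task_t` type, `partition_ffd`, and all timing values are hypothetical.

```c
#include <stddef.h>

#define MAX_CORES 8

typedef struct {
    const char *name;
    unsigned wcet_us;   /* worst-case execution time C */
    unsigned period_us; /* period T, assumed equal to the deadline */
    int core;           /* output: assigned core, or -1 if unplaced */
} rt_task_t;

static double utilization(const rt_task_t *t)
{
    return (double)t->wcet_us / (double)t->period_us;
}

/* First-fit decreasing partitioning under the per-core EDF bound U <= 1.0.
 * Reorders tasks by descending utilization. Returns 0 if every task was
 * placed, -1 otherwise. */
static int partition_ffd(rt_task_t *tasks, size_t n, int num_cores)
{
    double load[MAX_CORES] = { 0.0 };
    int result = 0;

    if (num_cores < 1 || num_cores > MAX_CORES) return -1;

    /* Insertion sort, largest utilization first (stable for ties). */
    for (size_t i = 1; i < n; i++) {
        rt_task_t key = tasks[i];
        size_t j = i;
        while (j > 0 && utilization(&tasks[j - 1]) < utilization(&key)) {
            tasks[j] = tasks[j - 1];
            j--;
        }
        tasks[j] = key;
    }

    /* Place each task on the first core that still passes the test. */
    for (size_t i = 0; i < n; i++) {
        double u = utilization(&tasks[i]);
        tasks[i].core = -1;
        for (int c = 0; c < num_cores; c++) {
            if (load[c] + u <= 1.0) {
                load[c] += u;
                tasks[i].core = c;
                break;
            }
        }
        if (tasks[i].core < 0) result = -1;
    }
    return result;
}
```

Under fixed-priority scheduling, the per-core check would instead use a fixed-priority test, such as the Liu and Layland bound n(2^(1/n) - 1) or response-time analysis.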

The current ecosystem suffers from fragmented toolchain support and limited educational resources. While hardware capabilities continue advancing rapidly, software development methodologies and supporting infrastructure lag significantly behind, creating a substantial gap between theoretical multi-core potential and practical implementation reality in embedded systems development.

Existing Multi-Core Application Development Solutions

01 Multi-core processor architecture and core coordination

Multi-core microcontrollers feature multiple processing cores integrated on a single chip, enabling parallel processing capabilities. The architecture includes mechanisms for coordinating operations between cores, managing shared resources, and distributing workloads efficiently. Core coordination involves synchronization protocols, inter-core communication pathways, and arbitration logic to ensure coherent operation across all processing units.

- Memory architecture and cache coherency: Multi-core microcontrollers employ sophisticated memory hierarchies including shared and distributed cache systems. Cache coherency protocols ensure data consistency across multiple cores accessing shared memory spaces. The memory architecture includes mechanisms for managing memory access conflicts, implementing cache synchronization, and optimizing data transfer between cores and memory subsystems.

- Task scheduling and load balancing: Efficient task scheduling algorithms distribute computational workloads across available cores to maximize throughput and minimize latency. Load balancing mechanisms monitor core utilization and dynamically reassign tasks to prevent bottlenecks. The scheduling system considers factors such as task priority, dependencies, real-time constraints, and core availability to optimize overall system performance.

- Inter-core communication and data transfer: Dedicated communication infrastructure enables efficient data exchange between processor cores. This includes high-speed interconnect fabrics, message passing interfaces, shared memory regions, and direct memory access channels. The communication system supports various data transfer modes, implements buffering mechanisms, and provides low-latency pathways for inter-core signaling and synchronization.

02 Power management and energy efficiency in multi-core systems

Advanced power management techniques are implemented to optimize energy consumption across multiple cores. These include dynamic voltage and frequency scaling, selective core activation and deactivation, power gating strategies, and intelligent workload distribution to minimize power consumption while maintaining performance. The power management system can independently control each core's power state based on processing demands.

03 Inter-core communication and data sharing mechanisms

Multi-core microcontrollers incorporate specialized communication infrastructure to enable efficient data exchange between cores. This includes shared memory architectures, message passing interfaces, dedicated communication buses, and cache coherency protocols. These mechanisms ensure fast and reliable data transfer while maintaining data integrity across multiple processing cores operating simultaneously.

04 Task scheduling and load balancing across cores

Sophisticated scheduling algorithms distribute computational tasks across available cores to maximize throughput and minimize latency. The scheduling system considers factors such as task priority, core availability, processing requirements, and real-time constraints. Load balancing mechanisms dynamically adjust task allocation to prevent core overutilization and ensure optimal system performance.

05 Debug and monitoring capabilities for multi-core systems

Multi-core microcontrollers include comprehensive debugging and monitoring features that provide visibility into the operation of individual cores and the system as a whole. These capabilities include performance counters, trace mechanisms, breakpoint management across cores, and real-time monitoring of core states. The debug infrastructure enables developers to analyze inter-core interactions and identify performance bottlenecks.

Key Players in Multi-Core MCU and Development Tools

The multi-core microcontroller application development landscape represents a rapidly maturing market driven by increasing demand for high-performance embedded systems across automotive, IoT, and industrial automation sectors. The industry is transitioning from early adoption to mainstream deployment, with market growth fueled by AI edge computing and real-time processing requirements. Technology maturity varies significantly among key players: established semiconductor giants like Intel, AMD, Texas Instruments, and Samsung Electronics lead with comprehensive multi-core architectures and robust development ecosystems, while specialized firms such as Microchip Technology and MediaTek focus on targeted applications. Emerging players like Feiteng Information Technology represent regional innovation hubs. The competitive landscape shows consolidation around platform-based solutions, with companies like IBM, Synopsys, and Continental Automotive driving software toolchain advancement and automotive-specific implementations, indicating a shift toward integrated hardware-software development environments.

Advanced Micro Devices, Inc.

Technical Solution: AMD supports multi-core embedded development through its Ryzen Embedded and EPYC Embedded processor families. These are embedded x86 processors rather than microcontrollers, featuring up to 64 cores with simultaneous multithreading. Its development approach uses the ROCm (Radeon Open Compute) platform for heterogeneous computing, enabling developers to use CPU and GPU cores together. The toolset includes profiling tools such as AMD μProf for multi-threaded performance analysis, support for industry-standard parallel programming models including OpenCL and HIP, and optimized libraries for compute-intensive workloads. AMD's solution emphasizes high-performance computing with efficient power management and scalable multi-core architectures.

Strengths: Exceptional multi-core performance and competitive pricing, strong support for heterogeneous computing. Weaknesses: Smaller market share in embedded space, limited ecosystem compared to Intel and ARM solutions.

Texas Instruments Incorporated

Technical Solution: TI offers multi-core microcontroller solutions primarily through their Sitara ARM processors and C2000 real-time control MCUs with dual-core architectures. Their Code Composer Studio IDE provides integrated multi-core debugging capabilities, allowing simultaneous debugging of multiple cores with shared memory management. The development framework includes SYS/BIOS real-time operating system optimized for multi-core synchronization, inter-processor communication (IPC) libraries, and resource management APIs. TI's approach emphasizes real-time performance with deterministic task scheduling and low-latency inter-core communication protocols specifically designed for industrial automation and automotive applications.

Strengths: Excellent real-time performance and industrial-grade reliability, comprehensive documentation and support. Weaknesses: Limited to specific application domains, smaller ecosystem compared to general-purpose processors.

Core Technologies in Multi-Core Programming and Debugging

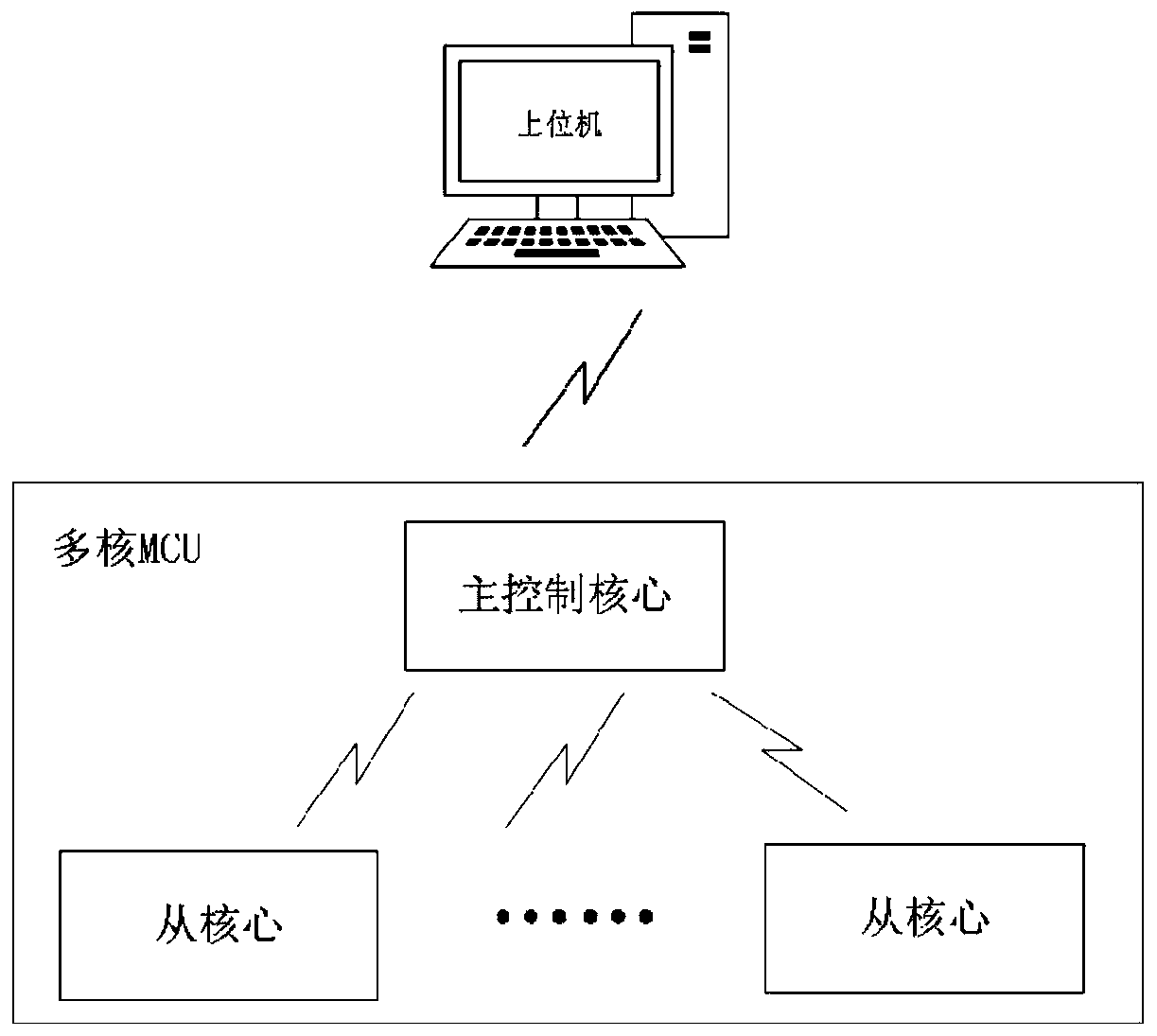

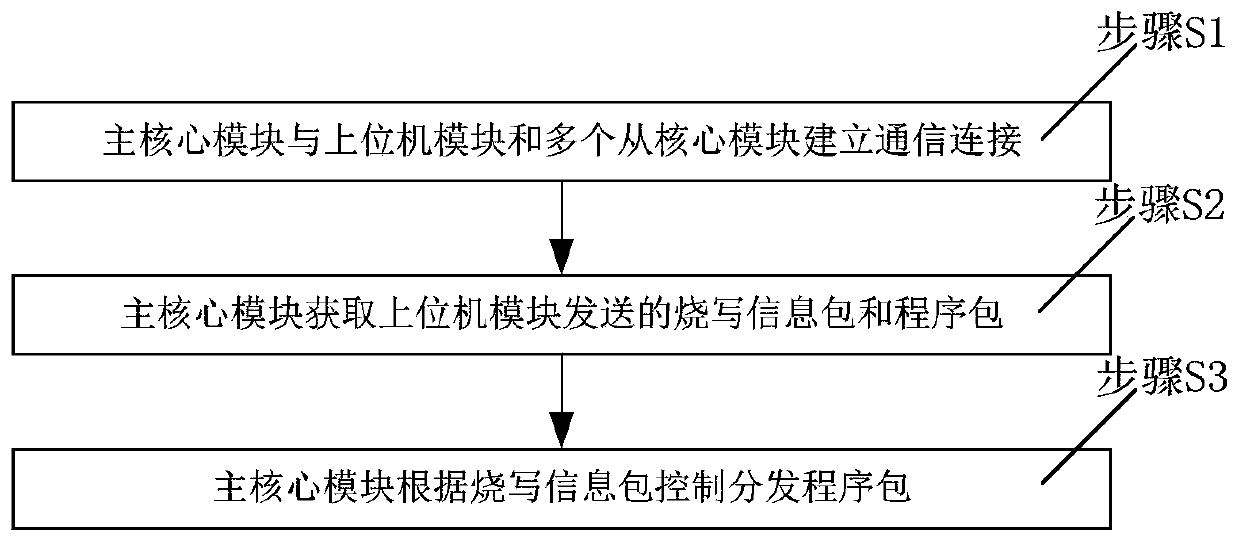

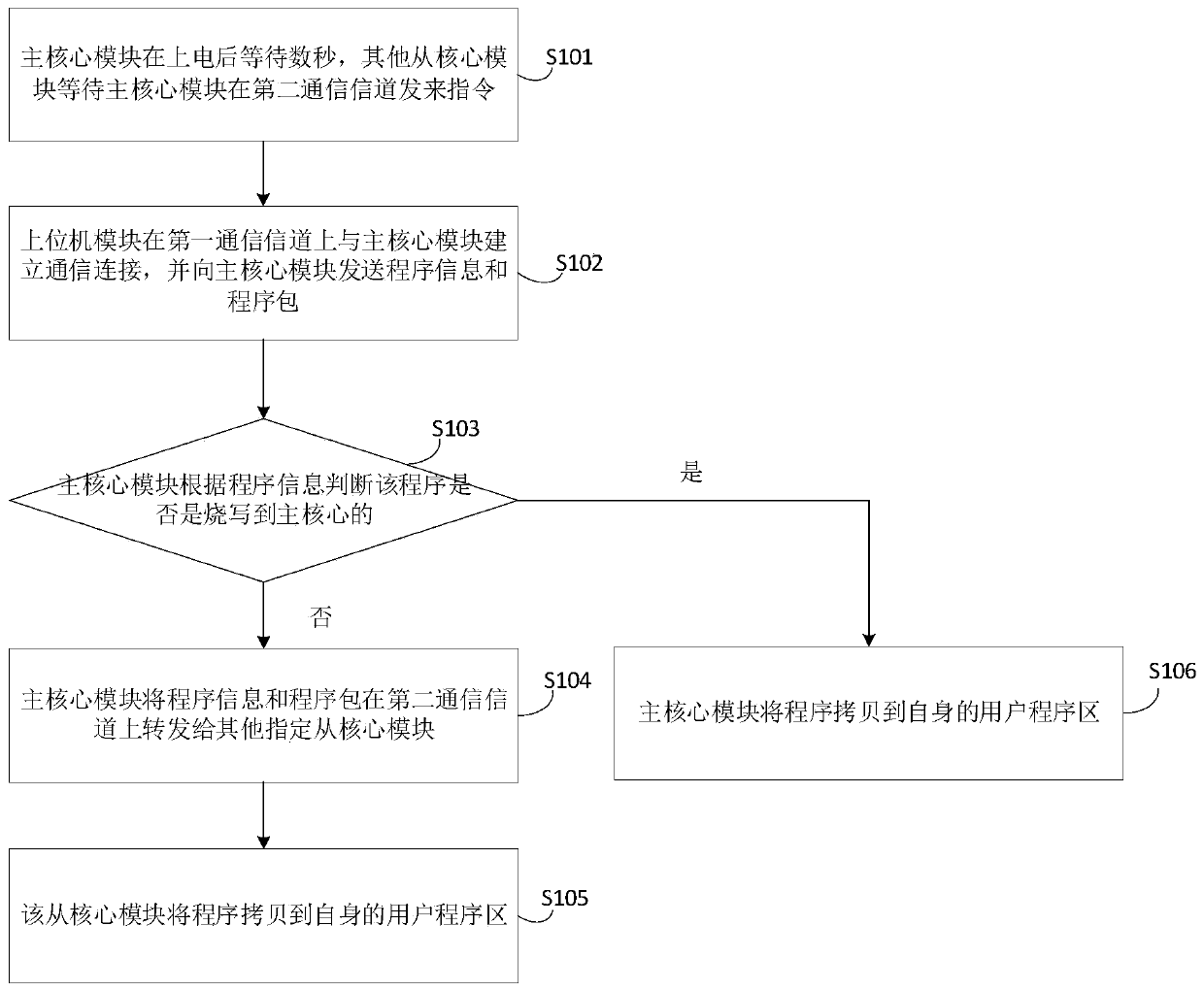

Program development method and system for multi-core programmable controller

Patent (active): CN110187891A

Innovation

- Communication links connect the host computer module, the main core module, and the slave core module of the multi-core programmable controller. The program package is distributed over these links. The programming information in the package determines whether it targets the main core module or the slave core module, and that module then performs the corresponding program copying and programming operations.

Method for designing an application task architecture of an electronic control unit with one or more virtual cores

Patent: WO2019145632A1

Innovation

- The method involves designing an application task architecture that uses virtual cores, allowing for abstraction and grouping of cores for separate computing domains, enabling a common architecture across multiple microcontrollers, with virtual cores assigned to specific tasks and associated with real cores, facilitating easier adaptation and reuse.
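The virtual-core idea can be illustrated with a lookup table. Application tasks are written against logical computing domains, and each target MCU supplies its own table that maps those domains to physical cores. This is a minimal sketch of the general idea, not the method claimed in WO2019145632A1. The domain names, platform tables, and function names are hypothetical.

```c
/* Hypothetical computing domains ("virtual cores") used by the application. */
typedef enum { VCORE_SAFETY = 0, VCORE_CONTROL, VCORE_COMMS, VCORE_COUNT } vcore_t;

/* Per-MCU table: which physical core hosts each virtual core. */
typedef struct {
    const char *mcu_name;
    unsigned num_cores;
    int vcore_to_core[VCORE_COUNT];
} platform_map_t;

static const platform_map_t MAP_3CORE = { "example_3core_mcu", 3u, { 0, 1, 2 } };
static const platform_map_t MAP_2CORE = { "example_2core_mcu", 2u, { 0, 1, 1 } };

typedef struct {
    const char *name;
    vcore_t vcore; /* tasks name a virtual core, never a physical one */
} app_task_t;

/* Resolve a task to a physical core on a given platform; -1 if the table is invalid. */
static int physical_core_for(const platform_map_t *map, const app_task_t *task)
{
    if ((unsigned)task->vcore >= (unsigned)VCORE_COUNT) return -1;
    int core = map->vcore_to_core[task->vcore];
    return (core >= 0 && (unsigned)core < map->num_cores) ? core : -1;
}
```

Porting to a part with fewer cores changes only the table. In this sketch, the communication domain shares core 1 with the control domain on the two-core target.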

Real-Time Operating Systems for Multi-Core MCUs

Real-time operating systems represent a critical enabling technology for multi-core microcontroller applications, providing the essential software infrastructure to manage concurrent tasks, inter-core communication, and deterministic timing requirements. The evolution from single-core to multi-core MCU architectures has fundamentally transformed RTOS design paradigms, necessitating sophisticated scheduling algorithms and resource management mechanisms that can effectively utilize parallel processing capabilities while maintaining real-time guarantees.

Traditional RTOS architectures designed for single-core systems face significant challenges when adapted to multi-core environments. Key technical hurdles include maintaining cache coherency across cores, implementing efficient inter-processor communication mechanisms, and ensuring predictable task execution timing in the presence of shared resource contention. Modern multi-core RTOS solutions address these challenges through asymmetric multiprocessing (AMP) and symmetric multiprocessing (SMP) approaches, each offering distinct advantages for different application scenarios.

Asymmetric multiprocessing configurations allow independent RTOS instances to run on separate cores, with each core dedicated to specific functional domains. This approach simplifies system design and provides strong isolation between subsystems, making it particularly suitable for safety-critical applications where fault containment is paramount. Communication between cores typically occurs through shared memory regions, message queues, or dedicated inter-processor communication peripherals.
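A shared-memory message queue between two AMP cores is often built as a single-producer, single-consumer ring buffer, in which each index is written by only one core. The sketch below uses C11 atomics with release/acquire ordering, so the payload write becomes visible before the updated index. It assumes two things: the ring sits in memory that both cores see coherently (for example, a non-cacheable region), and the compiler maps these atomics to lock-free instructions on both cores. Signalling the consumer, for example through an inter-processor interrupt, is omitted. All names are illustrative.

```c
#include <stdatomic.h>
#include <stdbool.h>
#include <stdint.h>

#define RING_CAPACITY 8u /* must be a power of two */

typedef struct { uint32_t payload; } msg_t;

typedef struct {
    _Atomic uint32_t head; /* written only by the producer core */
    _Atomic uint32_t tail; /* written only by the consumer core */
    msg_t slots[RING_CAPACITY];
} spsc_ring_t;

static void ring_init(spsc_ring_t *r)
{
    atomic_init(&r->head, 0u);
    atomic_init(&r->tail, 0u);
}

/* Producer side. Returns false if the ring is full. */
static bool ring_push(spsc_ring_t *r, msg_t m)
{
    uint32_t head = atomic_load_explicit(&r->head, memory_order_relaxed);
    uint32_t tail = atomic_load_explicit(&r->tail, memory_order_acquire);
    if (head - tail == RING_CAPACITY) return false; /* full; counters wrap safely */
    r->slots[head & (RING_CAPACITY - 1u)] = m;
    /* Release: the slot write is visible before the consumer sees the new head. */
    atomic_store_explicit(&r->head, head + 1u, memory_order_release);
    return true;
}

/* Consumer side. Returns false if the ring is empty. */
static bool ring_pop(spsc_ring_t *r, msg_t *out)
{
    uint32_t tail = atomic_load_explicit(&r->tail, memory_order_relaxed);
    uint32_t head = atomic_load_explicit(&r->head, memory_order_acquire);
    if (head == tail) return false; /* empty */
    *out = r->slots[tail & (RING_CAPACITY - 1u)];
    /* Release: the slot is read before the producer may reuse it. */
    atomic_store_explicit(&r->tail, tail + 1u, memory_order_release);
    return true;
}
```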

Symmetric multiprocessing implementations enable a single RTOS instance to manage all available cores dynamically, distributing tasks across the multi-core architecture based on real-time priorities and system load. SMP systems require sophisticated kernel designs that support atomic operations, spinlocks, and priority inheritance protocols to prevent priority inversion scenarios that could compromise real-time performance guarantees.
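The spinlocks mentioned here can be sketched with a C11 `atomic_flag`. Test-and-set with acquire ordering takes the lock, and clear with release ordering publishes the writes made inside the critical section. This minimal illustration assumes cores that provide exclusive-access or atomic read-modify-write instructions. Parts without them, such as Cortex-M0+ based devices, typically rely on a hardware semaphore peripheral instead. Production SMP kernels also disable local interrupts while holding a spinlock. Spinlocks provide no priority inheritance; that is normally handled by the kernel's mutexes. The `core_spinlock_t` type and function names are illustrative.

```c
#include <stdatomic.h>

typedef struct {
    atomic_flag locked;
} core_spinlock_t;

#define SPINLOCK_INIT { ATOMIC_FLAG_INIT }

static void spin_lock(core_spinlock_t *l)
{
    /* Acquire: accesses in the critical section cannot move before the lock. */
    while (atomic_flag_test_and_set_explicit(&l->locked, memory_order_acquire)) {
        /* Busy-wait. On Arm, a WFE/SEV pair is often used here to save power. */
    }
}

/* Returns nonzero if the lock was taken without waiting. */
static int spin_trylock(core_spinlock_t *l)
{
    return !atomic_flag_test_and_set_explicit(&l->locked, memory_order_acquire);
}

static void spin_unlock(core_spinlock_t *l)
{
    /* Release: writes made while holding the lock are visible to the next owner. */
    atomic_flag_clear_explicit(&l->locked, memory_order_release);
}
```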

Contemporary multi-core RTOS solutions incorporate advanced features such as CPU affinity controls, allowing critical tasks to be bound to specific cores for predictable execution patterns. Load balancing algorithms distribute computational workloads across available processing resources while respecting real-time constraints and inter-task dependencies. Memory management subsystems implement non-uniform memory access (NUMA) awareness to optimize data locality and minimize cross-core memory access latencies.
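CPU affinity is commonly expressed as a bitmask in which bit n permits core n. For example, FreeRTOS SMP exposes `vTaskCoreAffinitySet()` when `configUSE_CORE_AFFINITY` is enabled. The scheduler-side decision can be sketched as choosing the least-loaded core that the mask allows. `pick_core`, the load metric, and the four-core configuration are hypothetical.

```c
#include <stdint.h>

#define NUM_CORES 4

/* Returns the least-loaded core permitted by affinity_mask (bit n = core n),
 * or -1 if the mask permits none of the existing cores. */
static int pick_core(uint32_t affinity_mask, const unsigned load[NUM_CORES])
{
    int best = -1;
    for (int c = 0; c < NUM_CORES; c++) {
        if (!(affinity_mask & (1u << c))) continue; /* core not permitted */
        if (best < 0 || load[c] < load[best]) best = c;
    }
    return best;
}
```

A single-bit mask pins a task to one core for predictable timing, while a wider mask lets the scheduler balance load.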

The integration of hardware abstraction layers specifically designed for multi-core architectures enables RTOS portability across different MCU families while providing standardized interfaces for core management, interrupt handling, and peripheral access coordination. These abstraction mechanisms facilitate application development by hiding low-level hardware complexities while exposing essential multi-core capabilities through well-defined APIs.

Power Management Strategies in Multi-Core Applications

Power management is one of the most critical challenges in multi-core microcontroller applications, because multiple processing units operate simultaneously while the system must remain energy-efficient and thermally stable. Since each core can run at a different performance level, coordinating the cores to minimize overall power consumption adds considerable complexity.

Dynamic Voltage and Frequency Scaling (DVFS) is a cornerstone of multi-core power management. It adjusts operating voltage and clock frequency to match the computational workload, per core where the silicon provides separate clock and voltage domains. Advanced implementations use predictive algorithms that analyze task characteristics and change power states proactively, reducing response latency while maintaining energy efficiency.

Core-level power gating is another fundamental strategy, in which unused processing units are shut down completely during idle periods. Modern multi-core architectures implement hierarchical power domains, allowing fine-grained control over individual cores, cache subsystems, and peripheral interfaces. The challenge is to keep wake-up latency low while maximizing power savings during dormant states.
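Dynamic CMOS power scales roughly with C·V²·f, so running at the lowest operating point that still meets demand saves energy. A simple reactive governor can be sketched as follows. It estimates demand from the busy fraction measured at the current clock, then picks the lowest operating performance point (OPP) that keeps the predicted busy fraction under a headroom target. The OPP table values and function names are hypothetical; real parts publish their supported voltage and frequency pairs in the datasheet. A predictive governor would replace the measured busy percentage with a forecast.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical operating performance points, lowest first. */
typedef struct { uint32_t freq_mhz; uint32_t mv; } opp_t;

static const opp_t OPPS[] = {
    {  64u,  900u },
    { 120u, 1000u },
    { 240u, 1100u },
    { 480u, 1200u },
};
#define NUM_OPPS (sizeof OPPS / sizeof OPPS[0])

/* busy_pct: busy time (0-100) measured over the last window at cur_mhz.
 * target_pct: maximum busy percentage wanted after the change (headroom).
 * Returns the lowest OPP index meeting the target, else the highest OPP. */
static size_t dvfs_select(uint32_t cur_mhz, uint32_t busy_pct, uint32_t target_pct)
{
    /* Demand in MHz x percent: the clock rate actually consumed by work. */
    uint64_t demand = (uint64_t)cur_mhz * busy_pct;
    for (size_t i = 0; i < NUM_OPPS; i++) {
        if (demand <= (uint64_t)OPPS[i].freq_mhz * target_pct)
            return i;
    }
    return NUM_OPPS - 1u; /* saturated: run as fast as possible */
}
```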
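Whether gating a core pays off can be decided with a break-even calculation. Gating saves (P_idle - P_gated) for every unit of idle time but costs a fixed transition energy E_transition. It therefore helps only when the idle period exceeds T_be = E_transition / (P_idle - P_gated), and only if the wake-up latency fits within the available timing slack. The sketch uses integer units (microwatts, nanojoules, microseconds). The parameter names and the notion of a timing-slack input are assumptions for illustration.

```c
#include <stdbool.h>
#include <stdint.h>

typedef struct {
    uint32_t p_idle_uw;       /* power of an idle but powered core, microwatts */
    uint32_t p_gated_uw;      /* residual leakage when power-gated, microwatts */
    uint32_t e_transition_nj; /* energy to save state, gate, and restore, nanojoules */
    uint32_t wake_latency_us; /* time until the core is usable again */
} gate_params_t;

/* T_be = E_transition / (P_idle - P_gated), rounded up.
 * Units: 1 nJ = 1000 uW*us, so T_be[us] = E[nJ] * 1000 / P[uW]. */
static uint64_t break_even_us(const gate_params_t *p)
{
    if (p->p_idle_uw <= p->p_gated_uw) return UINT64_MAX; /* gating never pays off */
    uint64_t saved_uw = (uint64_t)p->p_idle_uw - p->p_gated_uw;
    return ((uint64_t)p->e_transition_nj * 1000u + saved_uw - 1u) / saved_uw;
}

/* Gate only if the predicted idle time beats break-even and the
 * wake-up latency fits in the available timing slack. */
static bool should_power_gate(const gate_params_t *p, uint64_t predicted_idle_us,
                              uint64_t slack_us)
{
    return predicted_idle_us > break_even_us(p) && p->wake_latency_us <= slack_us;
}
```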

Workload-aware task scheduling plays a pivotal role in power optimization across multi-core systems. Intelligent schedulers analyze task dependencies, execution patterns, and power characteristics to distribute computational loads efficiently. This approach considers both performance requirements and thermal constraints, preventing hotspot formation while maintaining system responsiveness.

Thermal management integration becomes increasingly important as core density increases. Advanced power management systems incorporate real-time temperature monitoring and implement thermal-aware scheduling algorithms. These systems can dynamically migrate tasks between cores, adjust performance levels, or trigger emergency throttling to prevent thermal damage.
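Thermal-aware control is often implemented as a small state machine with hysteresis, so the system does not oscillate around a single threshold. The sketch below assumes three states and hypothetical thresholds in degrees Celsius; real limits come from the device datasheet. On entering each state, firmware would apply the corresponding action, such as lowering the operating point, migrating tasks, or performing an emergency shutdown of cores.

```c
typedef enum { THERM_NORMAL, THERM_THROTTLED, THERM_EMERGENCY } therm_state_t;

/* Hypothetical thresholds in degrees C. */
#define T_THROTTLE_ON  95
#define T_THROTTLE_OFF 85 /* hysteresis: must cool below this to recover */
#define T_EMERGENCY    110

/* Compute the next state from the current state and a temperature sample. */
static therm_state_t thermal_step(therm_state_t s, int temp_c)
{
    if (temp_c >= T_EMERGENCY) return THERM_EMERGENCY;
    switch (s) {
    case THERM_NORMAL:
        return (temp_c >= T_THROTTLE_ON) ? THERM_THROTTLED : THERM_NORMAL;
    case THERM_THROTTLED:
    case THERM_EMERGENCY:
        /* Stay throttled until the temperature drops below the lower threshold. */
        return (temp_c < T_THROTTLE_OFF) ? THERM_NORMAL : THERM_THROTTLED;
    }
    return s;
}
```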

Cache coherency protocols significantly impact power consumption in multi-core architectures. Optimized coherency mechanisms reduce unnecessary memory transactions and minimize inter-core communication overhead. Power-aware cache management strategies include selective cache line invalidation, adaptive cache sizing, and intelligent prefetching algorithms that balance performance with energy consumption.

Emerging techniques use machine learning-based power prediction models that adapt to application-specific usage patterns. These systems learn from historical power consumption data to guide future power management decisions. They can improve energy efficiency over static approaches while still meeting real-time requirements.
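A full machine-learning model is beyond a short example. However, the underlying idea of learning from history can be illustrated with a much simpler adaptive predictor: an exponentially weighted moving average (EWMA) of observed idle periods, which a power manager could feed into a gating decision. This is a deliberately simple stand-in, not an ML model. The smoothing factor of 1/8 and the names are assumptions.

```c
#include <stdint.h>

/* EWMA of observed idle periods with smoothing factor alpha = 1/8. */
typedef struct {
    uint32_t avg_us; /* current prediction of the next idle period */
    int primed;      /* zero until the first observation arrives */
} idle_predictor_t;

static void idle_predictor_update(idle_predictor_t *p, uint32_t observed_us)
{
    if (!p->primed) {
        p->avg_us = observed_us; /* seed with the first sample */
        p->primed = 1;
        return;
    }
    /* avg += (observed - avg) / 8, computed in signed arithmetic */
    int64_t diff = (int64_t)observed_us - (int64_t)p->avg_us;
    p->avg_us = (uint32_t)((int64_t)p->avg_us + diff / 8);
}
```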
