Microcontroller Vs SoC: Efficiency in Data Processing

FEB 25, 2026 | 9 MIN READ

MCU vs SoC Data Processing Background and Objectives

The evolution of embedded computing has witnessed a fundamental shift from simple microcontrollers to sophisticated System-on-Chip architectures, driven by escalating demands for computational efficiency and processing capabilities. This technological progression reflects the industry's response to increasingly complex applications requiring real-time data processing, from IoT devices to autonomous systems. The distinction between microcontrollers and SoCs has become critical as engineers face decisions that directly impact system performance, power consumption, and development costs.

Microcontrollers emerged in the 1970s as integrated solutions combining CPU, memory, and peripherals on a single chip, primarily targeting control-oriented applications with modest computational requirements. Their design philosophy emphasized simplicity, low power consumption, and cost-effectiveness, making them ideal for embedded systems with deterministic processing needs. Traditional MCUs excel in applications where predictable timing, minimal power draw, and straightforward programming models are paramount.

System-on-Chip architectures represent a paradigm shift toward heterogeneous computing platforms that integrate multiple processing units, advanced memory hierarchies, and specialized accelerators. Modern SoCs incorporate ARM Cortex-A series processors, GPU cores, DSP units, and dedicated AI processing elements, enabling parallel execution and sophisticated data manipulation capabilities. This architectural complexity allows SoCs to handle multimedia processing, machine learning inference, and high-throughput data analytics that would overwhelm traditional microcontrollers.

The primary objective of this technical investigation centers on quantifying the efficiency differentials between MCUs and SoCs across various data processing scenarios. Key performance metrics include computational throughput measured in operations per second, power efficiency expressed as performance per watt, memory bandwidth utilization, and real-time response characteristics. Understanding these metrics enables informed architectural decisions based on specific application requirements and constraints.
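As a rough illustration of how these metrics relate to one another, the sketch below derives throughput, performance per watt, and energy per operation from a single benchmark run. The `Measurement` class and all figures in it are hypothetical placeholders chosen to exercise the formulas; they are not measurements of any real device.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    """One benchmark run on a device (hypothetical container, values user-supplied)."""
    name: str
    operations: float       # operations completed during the run
    seconds: float          # wall-clock duration of the run
    avg_power_watts: float  # average power drawn during the run

    @property
    def throughput_ops_per_s(self) -> float:
        # Computational throughput: operations per second.
        return self.operations / self.seconds

    @property
    def ops_per_watt(self) -> float:
        # Performance per watt: throughput divided by average power.
        return self.throughput_ops_per_s / self.avg_power_watts

    @property
    def joules_per_op(self) -> float:
        # Energy per operation: total energy divided by operations completed.
        return (self.avg_power_watts * self.seconds) / self.operations


# Hypothetical numbers, not taken from any datasheet.
mcu = Measurement("example-mcu", operations=1e8, seconds=1.0, avg_power_watts=0.05)
soc = Measurement("example-soc", operations=1e10, seconds=1.0, avg_power_watts=4.0)

for m in (mcu, soc):
    print(f"{m.name}: {m.throughput_ops_per_s:.3g} ops/s, "
          f"{m.ops_per_watt:.3g} ops/s per W, {m.joules_per_op:.3g} J/op")
```

Performance per watt and energy per operation are reciprocals of each other, so either one can be reported; the point of keeping raw throughput alongside them is that a device can be efficient per operation and still too slow for the workload.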

Contemporary embedded applications increasingly demand sophisticated data processing capabilities, including sensor fusion, signal processing, computer vision, and predictive analytics. These requirements challenge traditional MCU architectures while highlighting the advantages of SoC platforms with their parallel processing capabilities and specialized computational units. The objective extends beyond raw performance comparison to encompass total system efficiency, development complexity, and long-term scalability considerations.

Market Demand for Efficient Data Processing Solutions

The global demand for efficient data processing solutions has experienced unprecedented growth across multiple industry sectors, driven by the proliferation of Internet of Things devices, edge computing applications, and real-time analytics requirements. Organizations are increasingly seeking processing architectures that can deliver optimal performance while maintaining energy efficiency and cost-effectiveness.

Industrial automation represents one of the most significant demand drivers, where manufacturing facilities require real-time sensor data processing, predictive maintenance analytics, and automated control systems. These applications demand processing solutions that can handle multiple data streams simultaneously while operating in harsh environmental conditions with minimal power consumption.

The automotive industry has emerged as another major market segment, particularly with the advancement of autonomous driving technologies and connected vehicle systems. Modern vehicles generate massive amounts of data from cameras, lidar, radar, and various sensors that require immediate processing for safety-critical decisions. The choice between microcontroller and SoC architectures directly impacts system responsiveness, power efficiency, and overall vehicle performance.

Healthcare and medical device markets are experiencing substantial growth in demand for portable diagnostic equipment, wearable health monitors, and remote patient monitoring systems. These applications require processing solutions that can perform complex signal analysis and data interpretation while maintaining extended battery life and compact form factors.

Smart city infrastructure development has created significant opportunities for efficient data processing solutions in traffic management systems, environmental monitoring networks, and public safety applications. These deployments often involve thousands of interconnected devices that must process and transmit data reliably while operating on limited power budgets.

Consumer electronics continue to drive demand for more sophisticated processing capabilities in smartphones, smart home devices, and wearable technology. Users expect seamless performance, extended battery life, and advanced features such as artificial intelligence processing and multimedia capabilities.

The telecommunications sector requires efficient data processing for network infrastructure, particularly with the deployment of 5G networks and edge computing nodes. These applications demand high-throughput processing capabilities while maintaining energy efficiency to reduce operational costs and environmental impact.

Market research indicates that organizations are increasingly prioritizing processing solutions that offer flexibility in handling diverse workloads, scalability for future requirements, and integration capabilities with existing systems. The decision between microcontroller and SoC architectures has become critical in meeting these evolving market demands while balancing performance, power consumption, and cost considerations.

Current State of MCU and SoC Processing Capabilities

Microcontrollers currently dominate the embedded systems landscape with their optimized architecture for real-time control applications. Many modern MCUs are built around Arm Cortex-M cores running at roughly 48 MHz to 800 MHz, with integrated peripherals including ADCs, timers, and communication interfaces. Leading MCU families such as STM32 and Nordic nRF (Cortex-M based) and ESP32 (Xtensa or RISC-V based) demonstrate processing capabilities sufficient for sensor data acquisition, basic signal processing, and IoT connectivity tasks.

Contemporary MCUs excel in deterministic processing scenarios where predictable response times are critical. Many use a Harvard-style architecture with separate instruction and data paths, which allows simultaneous instruction fetch and data access, while dedicated hardware accelerators for cryptography and digital signal processing enhance computational efficiency. Power consumption remains exceptionally low, with sleep modes drawing currents in the microampere range and active processing often requiring only milliwatts.
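To make the sleep-versus-active distinction concrete, the following sketch estimates average current and idealized battery life for a duty-cycled device. The function names and every number in the usage lines are assumptions for illustration; real designs must also account for regulator losses, self-discharge, and wake-up overhead.

```python
def average_current_ma(active_ma: float, sleep_ua: float, duty_cycle: float) -> float:
    """Time-weighted average current (mA) for a device that alternates
    between an active state and a sleep state."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    sleep_ma = sleep_ua / 1000.0  # convert microamperes to milliamperes
    return active_ma * duty_cycle + sleep_ma * (1.0 - duty_cycle)


def battery_life_hours(capacity_mah: float, avg_current_ma: float) -> float:
    """Idealized battery life; ignores self-discharge, regulator losses,
    and capacity derating."""
    if avg_current_ma <= 0.0:
        raise ValueError("avg_current_ma must be positive")
    return capacity_mah / avg_current_ma


# Hypothetical values: 10 mA active, 2 uA asleep, awake 1% of the time,
# powered from a 220 mAh cell.
avg = average_current_ma(active_ma=10.0, sleep_ua=2.0, duty_cycle=0.01)
hours = battery_life_hours(220.0, avg)
print(f"average current: {avg:.5f} mA, estimated life: {hours / 24:.1f} days")
```

With a low duty cycle the active term usually dominates the average, which is why shortening the awake time often matters more than shaving the sleep current further.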

System-on-Chip solutions represent a significant leap in processing capability, integrating multi-core application processors (Arm Cortex-A or custom Arm-compatible cores) with GPUs, neural processing units, and high-speed memory controllers. Current SoC families like Qualcomm Snapdragon, Apple Silicon, and NVIDIA Jetson deliver processing performance measured in TOPS (tera operations per second) for AI workloads and GFLOPS for general computing tasks.

Modern SoCs incorporate heterogeneous computing architectures combining high-performance cores for intensive tasks with efficiency cores for background operations. Advanced process nodes at 5nm and 7nm enable higher transistor density and improved power efficiency. Integrated AI accelerators, such as Google's TPU and Apple's Neural Engine, provide specialized hardware for machine learning inference with significantly reduced power consumption compared to general-purpose processors.

The processing gap between MCUs and SoCs continues to widen as SoCs integrate more specialized compute units. Current flagship SoCs process complex algorithms including computer vision, natural language processing, and real-time video encoding at frame rates exceeding 60fps in 4K resolution. Meanwhile, MCUs maintain their advantage in ultra-low power scenarios and real-time deterministic processing requirements.

Memory bandwidth represents another critical differentiator, with SoCs supporting LPDDR5 memory interfaces delivering over 50GB/s bandwidth, while MCUs typically operate with on-chip SRAM and external flash memory with significantly lower bandwidth requirements. This architectural difference fundamentally shapes their respective data processing capabilities and application suitability.
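A back-of-the-envelope calculation helps put the bandwidth figures in context. The sketch below computes the raw bandwidth needed to move an uncompressed 4K stream at 60 fps once, and what share of a 50 GB/s memory interface that represents. The 4 bytes per pixel is an assumption; real pipelines often use more compact pixel formats and read or write each frame several times.

```python
def stream_bandwidth_bytes_per_s(width: int, height: int,
                                 bytes_per_pixel: int, fps: int) -> int:
    """Raw (uncompressed) bandwidth needed to move every frame exactly once."""
    return width * height * bytes_per_pixel * fps


def bus_utilization(required_bytes_per_s: float, peak_bytes_per_s: float) -> float:
    """Fraction of a memory interface's peak bandwidth one stream would consume."""
    return required_bytes_per_s / peak_bytes_per_s


# 4K UHD (3840 x 2160) at 60 fps, assuming 4 bytes per pixel.
required = stream_bandwidth_bytes_per_s(3840, 2160, 4, 60)
share = bus_utilization(required, 50e9)
print(f"required: {required / 1e9:.2f} GB/s")
print(f"share of a 50 GB/s interface: {share:.1%}")
```

A single pass over the stream needs about 2 GB/s, which is a small fraction of a 50 GB/s interface but well beyond what an MCU with on-chip SRAM and external flash is designed to sustain.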

Existing Data Processing Optimization Solutions

01 Power management and low-power operation modes

Microcontrollers and SoCs can achieve improved efficiency through advanced power management techniques including multiple power domains, dynamic voltage and frequency scaling, and various sleep modes. These techniques allow the system to reduce power consumption during idle periods or low-activity states while maintaining quick wake-up capabilities. Intelligent power gating and clock gating further reduce static and dynamic power consumption by shutting down unused circuit blocks.

02 Integrated peripheral optimization and resource sharing

Efficiency improvements can be achieved by optimizing the integration and operation of peripheral components within the SoC architecture. This includes intelligent bus arbitration, shared memory architectures, unified bus systems, and efficient DMA controllers that reduce CPU intervention. Consolidating multiple functions into a single chip reduces external component requirements and improves overall system efficiency through reduced interconnect overhead and more efficient data transfer between functional blocks.

03 Processing architecture and instruction set optimization

Enhanced processing efficiency is achieved through optimized instruction set architectures, pipeline designs, and execution units tailored for specific applications. This includes hardware accelerators for common tasks, efficient cache hierarchies, and branch prediction mechanisms. These improvements reduce the number of clock cycles required per operation, so more work is completed per unit of energy. Specialized processing units handle specific workloads more efficiently than general-purpose cores, offloading the main processor and improving performance per watt.

04 Clock management and synchronization techniques

Efficient clock distribution and management contribute significantly to overall SoC efficiency. This includes multiple clock domains, adaptive clock scaling based on workload requirements, and phase-locked loop configurations that minimize jitter and power consumption, allowing different subsystems to run at frequencies appropriate to their workload. Advanced clock gating selectively disables clock signals to inactive modules while maintaining synchronization across operational domains, which preserves system reliability and reduces dynamic power consumption.

05 Thermal management and efficiency monitoring

Thermal management systems integrated within microcontrollers and SoCs monitor operating temperatures and adjust performance parameters to maintain efficiency while preventing overheating. This includes on-chip temperature sensors, thermal throttling, dynamic thermal management algorithms, and feedback mechanisms that adjust voltage and frequency based on thermal conditions. Real-time efficiency monitoring circuits track power consumption and performance metrics, enabling adaptive optimization that balances performance requirements with energy efficiency across varying workloads and environmental conditions.
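The benefit of dynamic voltage and frequency scaling mentioned above follows from the standard first-order CMOS switching-power approximation, P = a * C * V^2 * f. The sketch below applies that textbook model; it is not taken from any vendor's power management documentation, it ignores leakage (static) power, and the operating points are hypothetical.

```python
def dynamic_power_watts(activity: float, capacitance_f: float,
                        voltage_v: float, frequency_hz: float) -> float:
    """First-order CMOS switching-power approximation: P = a * C * V^2 * f."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


def scaled_power_ratio(v_ratio: float, f_ratio: float) -> float:
    """Dynamic power relative to nominal after scaling voltage and frequency."""
    return v_ratio ** 2 * f_ratio


def scaled_energy_ratio(v_ratio: float) -> float:
    """Dynamic energy for a fixed amount of work relative to nominal.
    Run time scales as 1 / f_ratio, so frequency cancels out."""
    return v_ratio ** 2


# Hypothetical operating point: halve the clock and drop the supply to 80%.
power_ratio = scaled_power_ratio(v_ratio=0.8, f_ratio=0.5)
energy_ratio = scaled_energy_ratio(v_ratio=0.8)
print(f"dynamic power: {power_ratio:.0%} of nominal")
print(f"dynamic energy per task: {energy_ratio:.0%} of nominal")
```

In this model, lowering frequency alone reduces power but not the dynamic energy per task; the energy saving comes from the voltage reduction that the lower frequency makes possible.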

Major Players in MCU and SoC Market Landscape

The microcontroller versus SoC efficiency debate reflects a mature semiconductor industry experiencing rapid evolution driven by IoT expansion and edge computing demands. The market, valued at hundreds of billions globally, showcases distinct technological trajectories where traditional microcontroller leaders like Texas Instruments, STMicroelectronics, and Renesas Electronics maintain strong positions in power-efficient, cost-sensitive applications, while SoC innovators including Qualcomm, Samsung Electronics, and Intel dominate performance-intensive processing scenarios. Advanced players like ARM Limited provide foundational IP architectures spanning both domains, while emerging companies such as Ambiq Micro pioneer ultra-low-power solutions and Ampere Computing targets cloud-optimized processors. The competitive landscape demonstrates high technical maturity with established giants like AMD and specialized firms like Silicon Laboratories driving innovation in their respective niches, indicating a bifurcated market where application-specific optimization determines technological superiority rather than universal solutions.

Texas Instruments Incorporated

Technical Solution: Texas Instruments offers both microcontroller and SoC solutions, providing a comprehensive comparison perspective. Their microcontrollers like the MSP430 series focus on ultra-low power consumption for simple control tasks, while their Sitara SoCs integrate ARM Cortex processors with specialized peripherals for more complex applications. TI's approach emphasizes real-time processing capabilities and deterministic behavior. Their solutions feature integrated analog components, power management units, and communication interfaces. The company's microcontrollers excel in battery-powered applications requiring long operational life, while their SoCs provide better performance for applications requiring multimedia processing, networking, or advanced user interfaces. TI's development ecosystem supports both architectures with comprehensive tools and libraries.

Strengths: Broad portfolio covering both technologies, excellent power efficiency, strong real-time capabilities. Weaknesses: Limited high-performance computing capabilities compared to leading SoC vendors.

QUALCOMM, Inc.

Technical Solution: QUALCOMM develops advanced SoC architectures that integrate multiple processing units including CPU, GPU, DSP, and dedicated AI accelerators on a single chip. Their Snapdragon series demonstrates superior data processing efficiency through heterogeneous computing, where different processing units handle specific tasks optimally. The company's approach leverages dynamic voltage and frequency scaling (DVFS) to balance performance and power consumption. Their SoCs incorporate advanced memory hierarchies with multiple cache levels and high-bandwidth memory interfaces, enabling efficient data movement and reduced latency. QUALCOMM's unified memory architecture allows seamless data sharing between processing units, significantly improving overall system efficiency compared to discrete microcontroller solutions.

Strengths: Industry-leading integration capabilities, advanced power management, strong ecosystem support. Weaknesses: Higher cost compared to simple microcontrollers, complex development requirements.

Core Technologies in MCU vs SoC Processing Efficiency

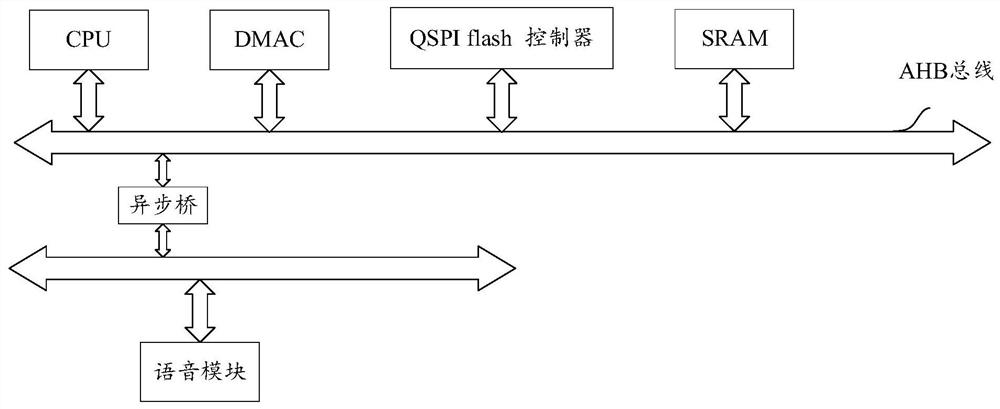

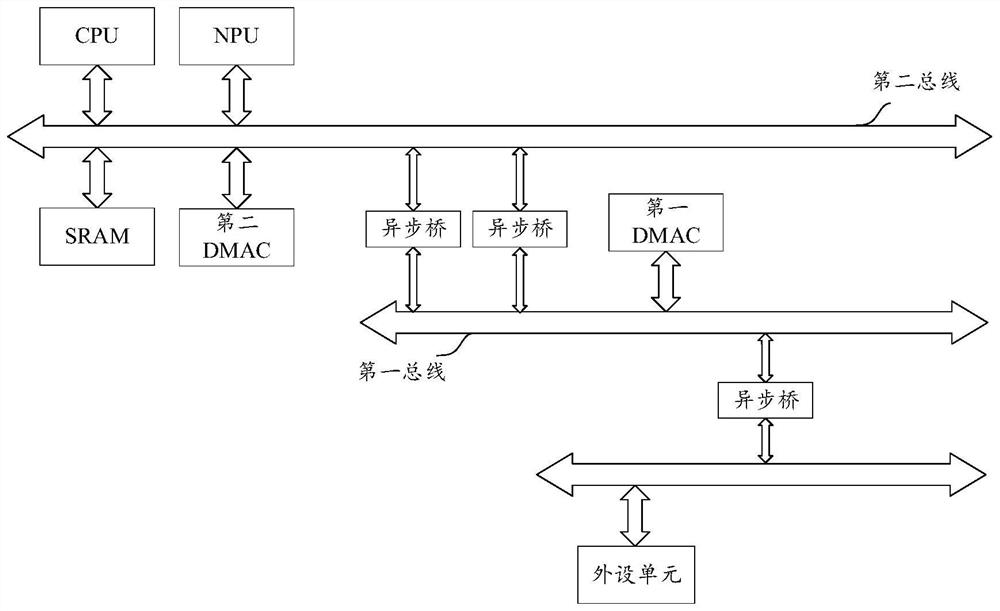

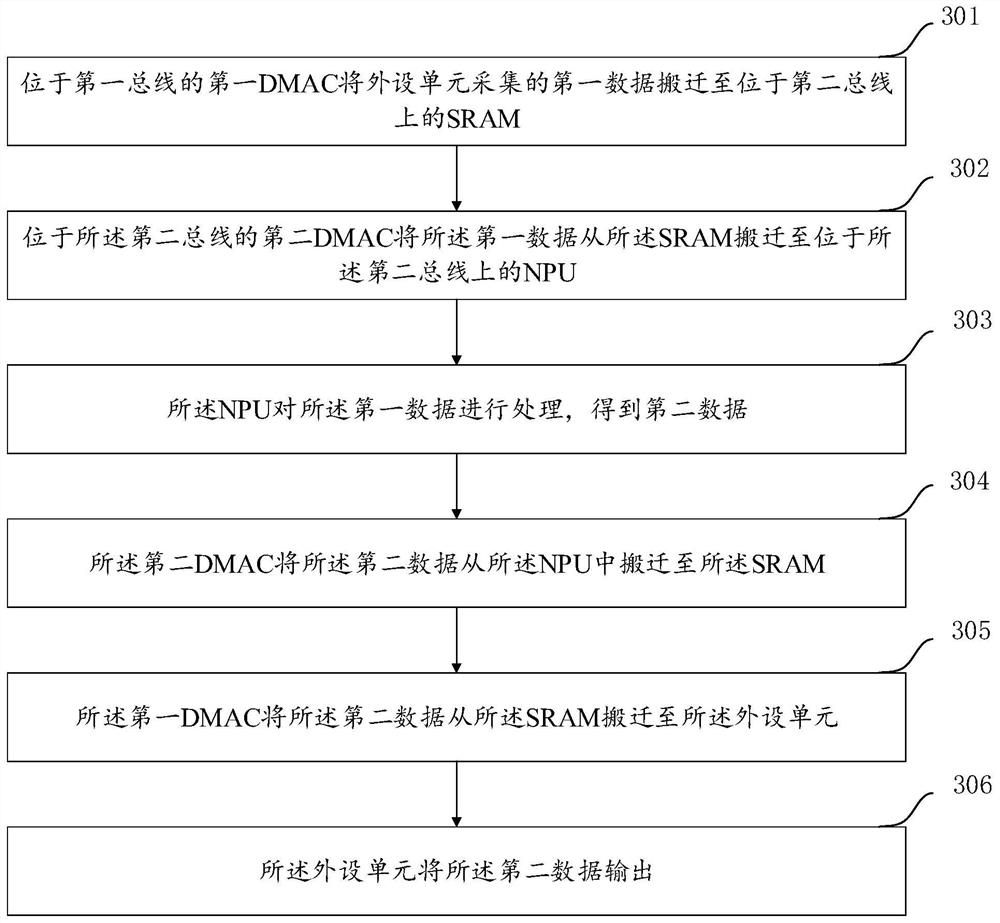

System-on-chip SoC and data processing method suitable for SoC

Patent | Active | CN112035398A

Innovation

- Design a system-on-chip (SoC) that uses an asynchronous bridge to connect two buses. The first bus serves the peripheral units and a DMAC; the second bus serves the neural network processor (NPU) and SRAM. The second bus has a larger data bit width, and its read and write channels are separated. Data processing is performed by the NPU, and two DMACs set up on the two buses work together to improve data transfer efficiency.

Energy efficient microprocessor with index selected hardware architecture

Patent | Active | US20220188264A1

Innovation

- The Stella SoC implements a software-controlled, virtual ASIC architecture with programmable switches and compute blocks, allowing dynamic reconfiguration of hardware structures to optimize computation and data flow, mimicking ASIC efficiency while maintaining general-purpose flexibility.

Power Consumption Standards and Regulations

Power consumption standards and regulations play a crucial role in determining the efficiency characteristics of microcontrollers and System-on-Chip (SoC) solutions for data processing applications. These regulatory frameworks establish mandatory requirements and voluntary guidelines that directly influence design decisions, manufacturing processes, and deployment strategies across different market segments.

The Energy Star program, administered by the U.S. Environmental Protection Agency, is a voluntary labeling program that sets power efficiency criteria for computing products, which in turn constrain the embedded components used in them. These criteria define power consumption limits for operating states such as idle and sleep. Microcontrollers typically fit within such budgets easily due to their inherently lower power consumption profiles, often operating within 10-100 milliwatts during active data processing tasks.

International Electrotechnical Commission (IEC) standards are also relevant. IEC 62430 addresses environmentally conscious design of products, and IEC 62623 defines how energy consumption is measured for desktop and notebook computers. Neither targets embedded processors directly, but both illustrate the kind of methodology needed for a fair comparison between microcontroller and SoC solutions: power must be reported per operational mode, under a defined test procedure that accounts for dynamic power scaling, thermal behavior, and processing load variations.

The European Union's Ecodesign Directive 2009/125/EC establishes a framework for energy efficiency requirements on energy-related products, which can include equipment built around embedded computing systems in industrial and consumer applications. Implementing measures adopted under the directive set maximum standby power consumption limits and require power management features that automatically reduce consumption during periods of low activity. SoC solutions often incorporate advanced power management units to meet these regulatory requirements, while microcontrollers achieve compliance through simpler but effective sleep mode implementations.

The Federal Communications Commission (FCC) Part 15 regulations in the United States do not regulate power consumption directly, but they limit conducted and radiated emissions from wireless-enabled and other digital devices, and emissions tend to rise with switching activity and clock speed. Microcontrollers with integrated wireless capabilities must balance processing efficiency with electromagnetic compatibility requirements, often resulting in more conservative power management strategies compared to SoC solutions that can implement sophisticated interference mitigation techniques.

Functional safety standards in the automotive and industrial sectors, such as ISO 26262 and IEC 61508, do not set power limits as such, but they bring power and thermal behavior into the safety analysis. Excessive power consumption can lead to thermal failures and system instability, potentially compromising data processing reliability and safety.

Cost-Performance Trade-offs in Processing Architecture

The cost-performance trade-offs between microcontrollers and System-on-Chip (SoC) architectures represent a fundamental decision point in embedded system design, with implications extending far beyond initial procurement costs. These trade-offs encompass multiple dimensions including processing capability, power consumption, development complexity, and long-term scalability requirements.

From a pure unit-cost perspective, microcontrollers typically offer significant advantages, especially in high-volume applications where per-unit price dominates the bill of materials. Entry-level 8-bit and 16-bit microcontrollers can cost less than one dollar in quantity, while even sophisticated 32-bit ARM Cortex-M series processors often remain under five dollars. In contrast, application processors and SoCs generally start at around ten dollars and can exceed fifty dollars for high-performance variants with integrated graphics processing units and advanced connectivity features.

However, performance capabilities complicate simple cost comparisons. Modern SoCs typically run multi-core architectures at gigahertz clock rates, while most microcontrollers run a single core in the tens to hundreds of megahertz. Clock rate alone does not determine throughput, but combined with more cores, wider execution units, and deeper memory hierarchies it gives SoCs a large advantage in data processing throughput. As a result, SoCs can handle complex algorithms, real-time video processing, and machine learning inference tasks that would overwhelm traditional microcontroller architectures.
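The gap described above can be approximated with a first-order peak estimate: cores times clock rate times operations per cycle. The sketch below is only an illustration; the function name and the two device profiles are hypothetical and do not describe any specific part, and real throughput will be lower once memory stalls and I/O are accounted for.

```python
def peak_ops_per_second(cores: int, clock_hz: int, ops_per_cycle: int) -> int:
    """First-order upper bound on throughput: cores x clock x ops per cycle."""
    if min(cores, clock_hz, ops_per_cycle) <= 0:
        raise ValueError("all inputs must be positive")
    return cores * clock_hz * ops_per_cycle


# Hypothetical device profiles, chosen only for illustration.
mcu = peak_ops_per_second(cores=1, clock_hz=100_000_000, ops_per_cycle=1)
soc = peak_ops_per_second(cores=4, clock_hz=1_500_000_000, ops_per_cycle=2)
ratio = soc / mcu

print(f"MCU peak: {mcu:.2e} ops/s")
print(f"SoC peak: {soc:.2e} ops/s")
print(f"Peak ratio: {ratio:.0f}x")
```

With these assumed figures the clock ratio is 15x, but the peak throughput ratio is 120x once core count and per-cycle width are included, which is why clock frequency alone understates the difference.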

Power consumption considerations add another layer of complexity to cost-performance analysis. SoCs draw significantly more power during active operation, but energy per task is the product of power and execution time, so their higher throughput can result in shorter duty cycles for equivalent computational tasks. Whether that yields a net saving depends on how much longer the microcontroller would run and on each device's idle power. Advanced power management features in modern SoCs, including dynamic voltage and frequency scaling, can further reduce energy consumption by matching operating points to workload requirements.
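The duty-cycle argument can be made concrete with a simple energy model: energy over one reporting period is active power times task time plus sleep power times the remaining time. This is a minimal sketch under stated assumptions; the function name and all power and timing figures are hypothetical, not measurements of any device.

```python
def energy_per_period_mj(active_mw: float, sleep_mw: float,
                         task_ms: float, period_ms: float) -> float:
    """Energy in millijoules over one period: an active burst, then sleep."""
    if not 0 <= task_ms <= period_ms:
        raise ValueError("task time must fit within the period")
    active_s = task_ms / 1000.0
    sleep_s = (period_ms - task_ms) / 1000.0
    # mW multiplied by seconds gives mJ.
    return active_mw * active_s + sleep_mw * sleep_s


# Hypothetical figures: the same task runs once per second on each device.
mcu_mj = energy_per_period_mj(active_mw=30.0, sleep_mw=0.01,
                              task_ms=200.0, period_ms=1000.0)
soc_mj = energy_per_period_mj(active_mw=1500.0, sleep_mw=5.0,
                              task_ms=5.0, period_ms=1000.0)

print(f"MCU: {mcu_mj:.3f} mJ per period")
print(f"SoC: {soc_mj:.3f} mJ per period")
```

With these assumed numbers the SoC finishes 40 times faster yet still uses about twice the energy per period (roughly 12.5 mJ against 6.0 mJ), mostly because of its higher sleep floor. Changing the inputs shows how a shorter duty cycle only pays off when the idle power is low enough or the task is heavy enough.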

Development and integration costs are hidden factors that substantially affect total cost of ownership. SoC-based designs typically require more sophisticated printed circuit board layouts, external memory components, and complex software stacks including operating systems and middleware. These demands increase both development time and the engineering expertise needed, potentially adding months to product development cycles.

The scalability dimension reveals long-term cost implications often overlooked in initial architecture decisions. SoC platforms generally provide greater flexibility for feature expansion and performance upgrades through software updates, potentially extending product lifecycles and reducing the need for hardware redesigns. Microcontroller-based systems may require complete architectural overhauls to accommodate significant functionality increases.

Manufacturing volume thresholds significantly influence the cost-performance equation. Low-volume applications favor microcontroller solutions because development costs are lower and supply chain management is simpler. High-volume products can amortize the SoC's higher development cost across larger production runs, so the per-unit premium shrinks toward the difference in component price. At that point the superior performance capabilities can become economically viable despite higher per-unit costs and development investments.
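The amortization effect can be sketched by spreading a one-time development cost over the production volume and adding it to the component price. The function name and every cost figure below are hypothetical assumptions chosen for illustration; the unit prices simply fall within the ranges quoted earlier in this section.

```python
def amortized_unit_cost(unit_cost: float, development_cost: float,
                        volume: int) -> float:
    """Per-unit cost once one-time development cost is spread over volume."""
    if volume <= 0:
        raise ValueError("volume must be positive")
    return unit_cost + development_cost / volume


# Hypothetical costs in dollars: (component price, one-time development cost).
MCU = (3.0, 150_000.0)
SOC = (15.0, 600_000.0)

for volume in (1_000, 100_000, 1_000_000):
    mcu_cost = amortized_unit_cost(*MCU, volume)
    soc_cost = amortized_unit_cost(*SOC, volume)
    print(f"{volume:>9,} units: MCU ${mcu_cost:8.2f}  SoC ${soc_cost:8.2f}  "
          f"premium ${soc_cost - mcu_cost:7.2f}")
```

Under these assumptions the SoC premium falls from several hundred dollars per unit at 1,000 units to a little over the 12 dollar difference in component price at one million units. The SoC never becomes cheaper on cost alone; the volume only determines how much of a premium its extra performance must justify.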
