Reducing Network Jitter through Adaptive Control Protocols

MAR 18, 2026 | 9 MIN READ

Network Jitter Control Background and Objectives

Network jitter, defined as the variation in packet delay across a network connection, has emerged as a critical performance metric in modern digital communications. This phenomenon occurs when data packets experience inconsistent transmission delays due to network congestion, routing changes, buffer overflow, or varying processing times at intermediate nodes. The unpredictable nature of jitter significantly impacts real-time applications such as voice over IP, video conferencing, online gaming, and streaming services, where consistent timing is essential for optimal user experience.
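To make the definition concrete, the following minimal Python sketch computes per-packet delay and the delay variation between consecutive packets. The timestamps are hypothetical, and the function name `delay_variation` is an illustrative choice rather than part of any standard API. It assumes sender and receiver clocks share a time base; a constant clock offset would shift the delays but leave the variation unchanged.

```python
from statistics import mean


def delay_variation(send_times, recv_times):
    """Return per-packet delays and the change in delay between neighbours."""
    if len(send_times) != len(recv_times):
        raise ValueError("need one receive time per send time")
    delays = [r - s for s, r in zip(send_times, recv_times)]
    # Difference in delay between each packet and the one before it.
    variation = [b - a for a, b in zip(delays, delays[1:])]
    return delays, variation


# Hypothetical timestamps in milliseconds: one packet sent every 20 ms.
send = [0, 20, 40, 60, 80]
recv = [50, 72, 88, 115, 130]

delays, variation = delay_variation(send, recv)
mean_abs_jitter = mean(abs(v) for v in variation)  # average size of the swings
peak_to_peak = max(delays) - min(delays)           # worst-case spread of delay

print(delays, variation, mean_abs_jitter, peak_to_peak)
```

Although the packets leave at a steady 20 ms spacing, they arrive with delays between 48 ms and 55 ms, and that spread is what a receiver has to absorb.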

The evolution of network infrastructure has paradoxically intensified jitter-related challenges. While bandwidth capacity has increased substantially, the complexity of modern networks with multiple routing paths, diverse hardware configurations, and dynamic traffic patterns has created new sources of timing variability. Traditional static buffering and fixed-rate transmission protocols, designed for simpler network architectures, prove inadequate for managing contemporary jitter scenarios.

Adaptive control protocols represent a paradigm shift from reactive to proactive jitter management. These intelligent systems continuously monitor network conditions, analyze traffic patterns, and dynamically adjust transmission parameters to minimize timing variations. Unlike conventional approaches that apply uniform buffering strategies, adaptive protocols implement real-time decision-making algorithms that respond to changing network states, optimizing performance based on current conditions rather than predetermined configurations.

Implementing adaptive control protocols for jitter reduction serves three main technical goals. The first is to achieve consistent packet delivery timing through dynamic buffer management and intelligent queuing mechanisms. The second is to maintain quality of service across varying network conditions while minimizing added latency. The third is to develop predictive algorithms capable of anticipating network congestion and preemptively adjusting transmission strategies.

Furthermore, these protocols aim to establish seamless interoperability across heterogeneous network environments, ensuring consistent performance regardless of underlying infrastructure variations. The ultimate goal involves creating self-optimizing network systems that automatically adapt to traffic fluctuations, hardware limitations, and environmental changes without requiring manual intervention or service interruption.

Market Demand for Low-Latency Network Solutions

The global demand for low-latency network solutions has experienced unprecedented growth across multiple industry verticals, driven by the proliferation of real-time applications and mission-critical services. Financial trading platforms represent one of the most demanding sectors, where microsecond delays can translate to significant financial losses. High-frequency trading firms require network infrastructures capable of maintaining consistent sub-millisecond latencies, making adaptive jitter control protocols essential for maintaining competitive advantages.

Gaming and interactive entertainment industries constitute another major demand driver, with online gaming platforms serving millions of concurrent users who expect seamless, responsive experiences. The rise of cloud gaming services has further intensified requirements for stable, low-jitter connections, as any network inconsistency directly impacts user experience and retention rates. Esports tournaments and competitive gaming environments particularly demand ultra-reliable network performance.

Telecommunications carriers face mounting pressure to deliver consistent Quality of Service across their networks, especially with the deployment of 5G infrastructure. Network operators must guarantee service level agreements for enterprise customers while managing increasingly complex traffic patterns. The convergence of voice, video, and data services over IP networks has created stringent latency requirements that traditional static protocols struggle to meet.

Industrial automation and Internet of Things applications represent rapidly expanding market segments requiring deterministic network behavior. Manufacturing systems, autonomous vehicles, and smart grid infrastructure depend on predictable network performance for safety-critical operations. These applications cannot tolerate the variable delays caused by network jitter, creating substantial demand for adaptive control mechanisms.

Healthcare technology adoption has accelerated demand for reliable low-latency networks, particularly in telemedicine and remote surgery applications. Medical professionals require guaranteed network performance for real-time patient monitoring and diagnostic procedures, where communication delays could impact patient outcomes.

The enterprise video conferencing market has grown substantially, with organizations demanding consistent audio and video quality for business communications. Remote work trends have amplified requirements for stable network performance across diverse connection types and geographic locations.

Cloud service providers increasingly compete on network performance metrics, driving investment in advanced traffic management technologies. Content delivery networks and edge computing platforms require sophisticated jitter control mechanisms to maintain service quality across distributed infrastructures, creating substantial market opportunities for adaptive protocol solutions.

Current Jitter Issues and Adaptive Protocol Limitations

Network jitter represents one of the most persistent challenges in modern communication systems, manifesting as irregular variations in packet delay that significantly impact real-time applications. Current jitter issues primarily stem from network congestion, routing inconsistencies, and buffer management inefficiencies across diverse network infrastructures. These variations create substantial problems for voice over IP communications, video conferencing, and streaming services, where consistent timing is critical for maintaining quality of service.

Traditional jitter mitigation approaches rely heavily on static buffering mechanisms and fixed-size jitter buffers that attempt to smooth out delay variations. However, these conventional methods often prove inadequate in dynamic network environments where traffic patterns and congestion levels fluctuate rapidly. The static nature of these solutions frequently results in either excessive latency due to oversized buffers or packet loss from undersized buffer configurations.
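The trade-off described above can be illustrated with a small Python sketch. It assumes a simplified model in which each packet is played out at a fixed offset after the smallest observed delay, and any packet arriving after that deadline is discarded. The delay values and the function name `fixed_buffer_outcome` are hypothetical.

```python
def fixed_buffer_outcome(delays_ms, buffer_ms):
    """Evaluate a fixed playout buffer against observed network delays.

    Assumed model: the playout deadline is (minimum delay + buffer_ms);
    a packet whose delay exceeds the deadline arrives too late to be played.
    """
    deadline = min(delays_ms) + buffer_ms
    late = sum(1 for d in delays_ms if d > deadline)
    return {
        "added_latency_ms": buffer_ms,
        "late_fraction": late / len(delays_ms),
    }


# Hypothetical one-way delays in milliseconds with two congestion spikes.
delays = [40, 42, 41, 75, 43, 40, 90, 44, 41, 42]

small = fixed_buffer_outcome(delays, 5)    # low latency, but spikes are lost
large = fixed_buffer_outcome(delays, 60)   # no loss, but 60 ms added to every packet

print(small)
print(large)
```

Neither setting is right for the whole trace: the small buffer drops the two delayed packets, while the large one charges every packet for spikes that occur only occasionally.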

Adaptive control protocols have emerged as promising solutions to address these limitations, yet they face significant implementation challenges. Current adaptive mechanisms struggle with accurate real-time network condition assessment, often relying on outdated or insufficient metrics to make control decisions. The complexity of predicting optimal buffer sizes and adjustment timing creates substantial computational overhead that can paradoxically contribute to the very latency issues these protocols aim to resolve.

Existing adaptive protocols also encounter difficulties in balancing responsiveness with stability. Overly aggressive adaptation can lead to oscillatory behavior where the system continuously overcorrects, while conservative approaches may fail to respond adequately to rapid network changes. This challenge is particularly pronounced in heterogeneous network environments where different segments exhibit varying characteristics and performance requirements.
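One way to see the responsiveness and stability tension is to run the same exponentially weighted estimator with two different gains. This is a sketch under stated assumptions: the gain values and delay traces are hypothetical, and real protocols may use quite different control laws.

```python
def ewma(samples, gain):
    """Exponentially weighted moving average; higher gain reacts faster."""
    estimate = samples[0]
    history = []
    for x in samples:
        estimate += gain * (x - estimate)
        history.append(estimate)
    return history


def swing(values):
    """Peak-to-peak movement of an estimate over a trace."""
    return max(values) - min(values)


# Hypothetical traces (ms): steady-state noise, and a sudden congestion step.
noisy_steady = [20, 30, 20, 30, 20, 30, 20, 30]
step_change = [20, 20, 20, 20, 60, 60, 60, 60]

AGGRESSIVE, CONSERVATIVE = 0.8, 0.1  # illustrative gains, not recommendations

noise_swing_fast = swing(ewma(noisy_steady, AGGRESSIVE))
noise_swing_slow = swing(ewma(noisy_steady, CONSERVATIVE))
step_end_fast = ewma(step_change, AGGRESSIVE)[-1]
step_end_slow = ewma(step_change, CONSERVATIVE)[-1]

print(noise_swing_fast, noise_swing_slow)  # fast gain chases the noise
print(step_end_fast, step_end_slow)        # slow gain lags the real change
```

The aggressive setting follows the step almost immediately but swings widely on noise alone, while the conservative setting stays calm on noise but is still far from the new delay level four packets after the change.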

Furthermore, current adaptive control implementations often lack comprehensive integration with broader network management systems. Many protocols operate in isolation, making localized decisions without considering network-wide optimization opportunities. This fragmented approach limits the effectiveness of jitter reduction efforts and can create conflicting behaviors when multiple adaptive systems operate simultaneously within the same network infrastructure.

The scalability limitations of existing adaptive protocols present additional constraints, particularly in large-scale deployments where centralized control becomes impractical and distributed decision-making introduces coordination challenges that can exacerbate rather than alleviate jitter-related issues.

Existing Adaptive Control Solutions for Jitter Reduction

01 Dynamic buffer management for jitter control

Adaptive control protocols employ dynamic buffer management techniques to mitigate network jitter. These methods involve adjusting buffer sizes based on real-time network conditions, monitoring packet arrival patterns, and implementing adaptive playout delays. The buffer dynamically expands or contracts to accommodate varying packet inter-arrival times, ensuring smooth data delivery while minimizing latency. This approach helps maintain quality of service in real-time applications by compensating for network variability.

- Dynamic buffer management for jitter control: Adaptive control protocols employ dynamic buffer management techniques to mitigate network jitter. These methods involve adjusting buffer sizes based on real-time network conditions, monitoring packet arrival patterns, and implementing adaptive playout strategies. The buffer dynamically expands or contracts to accommodate varying packet delays, ensuring smooth data delivery while minimizing latency. This approach helps maintain quality of service in real-time applications by compensating for variable network delays.

- Packet scheduling and prioritization mechanisms: Advanced packet scheduling algorithms are utilized to manage network jitter through intelligent prioritization of data packets. These mechanisms classify packets based on their sensitivity to delay variations and assign appropriate transmission priorities. The protocols implement queue management strategies that reorder packets, adjust transmission timing, and allocate bandwidth resources to minimize jitter effects on critical traffic flows. This ensures time-sensitive data receives preferential treatment during network congestion.

- Adaptive rate control and bandwidth allocation: Rate control mechanisms dynamically adjust transmission rates based on detected jitter levels and network capacity. These protocols monitor network performance metrics and adaptively modify data transmission speeds to match available bandwidth. The system implements feedback loops that continuously assess jitter characteristics and adjust encoding rates, packet sizes, or transmission intervals accordingly. This adaptive approach optimizes throughput while maintaining acceptable jitter levels for quality-sensitive applications.

- Timestamp-based synchronization and delay compensation: Protocols utilize precise timestamp mechanisms to measure and compensate for jitter-induced delays. These systems embed timing information in packets, enabling receivers to calculate delay variations and reconstruct proper packet timing. The approach implements clock synchronization algorithms and delay estimation techniques to maintain temporal relationships between packets. Compensation algorithms adjust playout timing based on calculated jitter metrics, ensuring synchronized delivery of time-critical data streams.

- Predictive jitter modeling and proactive adaptation: Advanced protocols employ predictive models to anticipate jitter patterns and proactively adjust control parameters. These systems analyze historical network behavior, traffic patterns, and statistical characteristics to forecast future jitter conditions. Machine learning algorithms or statistical models predict delay variations, enabling preemptive adjustments to transmission strategies. The proactive adaptation reduces reaction time to changing network conditions and improves overall stability of real-time communications.
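The dynamic buffer management idea in the list above can be sketched as a playout-delay estimator that tracks the average delay and its deviation, then sets the buffer target to the average plus a safety multiple of the deviation. This is a common textbook-style scheme, not the method of any specific vendor or patent discussed here. The class name, the smoothing factor `alpha`, and the multiplier `safety` are assumptions chosen for illustration.

```python
class AdaptivePlayout:
    """Tracks mean delay and delay deviation with exponential averages."""

    def __init__(self, alpha=0.9, safety=4.0):
        self.alpha = alpha        # weight given to history (assumed value)
        self.safety = safety      # deviations of headroom (assumed value)
        self.mean_delay = None
        self.deviation = 0.0

    def observe(self, delay_ms):
        """Fold one measured packet delay into the running estimates."""
        if self.mean_delay is None:
            self.mean_delay = float(delay_ms)
            return
        a = self.alpha
        self.mean_delay = a * self.mean_delay + (1 - a) * delay_ms
        self.deviation = a * self.deviation + (1 - a) * abs(delay_ms - self.mean_delay)

    def playout_delay(self):
        """Target playout delay: grows with jitter, shrinks when it subsides."""
        if self.mean_delay is None:
            raise ValueError("no delay samples observed yet")
        return self.mean_delay + self.safety * self.deviation


def run(buffer, delays):
    for d in delays:
        buffer.observe(d)
    return buffer.playout_delay()


buf = AdaptivePlayout()
calm = run(buf, [40, 41, 40, 42, 41] * 4)       # hypothetical quiet period
bursty = run(buf, [40, 70, 45, 80, 50] * 4)     # hypothetical congestion
recovered = run(buf, [40, 41, 40, 42, 41] * 12)  # quiet again

print(calm, bursty, recovered)
```

The target expands during the bursty period and contracts once delays settle, which is the expand-or-contract behavior the text describes. A real receiver would typically apply such changes only at talk-spurt or frame boundaries to avoid audible artifacts.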

02 Packet scheduling and transmission rate adaptation

Network jitter can be controlled through intelligent packet scheduling algorithms and adaptive transmission rate mechanisms. These protocols analyze network congestion levels, packet loss rates, and delay variations to adjust transmission parameters accordingly. The system modifies packet sending rates, prioritizes time-sensitive data, and implements traffic shaping techniques to reduce jitter effects. This ensures consistent packet delivery timing and improves overall network performance.

03 Timestamp-based synchronization and clock recovery

Adaptive protocols utilize timestamp mechanisms and clock recovery algorithms to address network jitter issues. These techniques involve embedding timing information in packets, implementing phase-locked loops, and using reference clocks to reconstruct original timing at the receiver. The system compensates for variable network delays by analyzing timestamp data and adjusting playback timing, which is particularly important for multimedia streaming and voice communications.

04 Predictive jitter estimation and compensation

Advanced control protocols implement predictive algorithms to estimate and compensate for network jitter before it affects application performance. These methods use statistical analysis, machine learning models, and historical network data to forecast jitter patterns. The system proactively adjusts parameters such as buffer depths, encoding rates, and error correction levels based on predictions, enabling preemptive mitigation of jitter-related quality degradation.

05 Quality of Service (QoS) mechanisms for jitter reduction

Network protocols incorporate QoS mechanisms specifically designed to reduce jitter for sensitive traffic types. These include priority queuing, traffic classification, bandwidth reservation, and differentiated services. The protocols identify jitter-sensitive applications and allocate network resources accordingly, ensuring consistent packet delivery intervals. Implementation may involve router configurations, network policy enforcement, and end-to-end QoS guarantees across multiple network segments.
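As one concrete instance of traffic classification for differentiated services, an application can mark its own packets with a DSCP code point so that routers configured for it can place them in a priority queue. The sketch below uses Python's standard `socket` module and the Expedited Forwarding code point (46). The helper names are hypothetical, `IP_TOS` is not available or honored on every platform, and whether any network along the path respects the marking depends entirely on operator policy.

```python
import socket

EF_DSCP = 46  # Expedited Forwarding, commonly used for voice traffic


def dscp_to_tos(dscp):
    """Place a 6-bit DSCP value in the upper bits of the IP TOS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must fit in 6 bits")
    return dscp << 2


def open_marked_udp_socket(dscp=EF_DSCP):
    """Create a UDP socket and request DSCP marking on outgoing packets."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))
    except (AttributeError, OSError):
        # The platform does not expose or permit IP_TOS; traffic stays unmarked.
        pass
    return sock


print(dscp_to_tos(EF_DSCP))
```

Marking at the endpoint only expresses a request. The jitter benefit comes from the queuing and scheduling configuration on the devices that read the mark.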

Key Players in Network Infrastructure and Protocol Industry

Network jitter reduction through adaptive control protocols is an established technology domain that is still growing, driven by increasing demand for real-time applications and 5G deployment. The market is large, with established telecommunications infrastructure providers leading development. Technology maturity varies significantly across players: Qualcomm, Intel, and Huawei are advancing sophisticated adaptive algorithms for mobile and wireless networks, while traditional telecom vendors such as Ericsson, Nokia Technologies, and Cisco Technology focus on carrier-grade solutions. Samsung Electronics and Avago Technologies contribute specialized semiconductor implementations, whereas operators such as AT&T, British Telecommunications, and Charter Communications drive deployment requirements. The competitive landscape shows convergence between hardware manufacturers, software developers, and service providers, indicating a maturing ecosystem in which adaptive control protocols are becoming standardized components rather than differentiating technologies.

QUALCOMM, Inc.

Technical Solution: Qualcomm develops adaptive control protocols for wireless networks through their Snapdragon platforms and 5G modem solutions that implement dynamic spectrum management and intelligent power control mechanisms. Their technology features adaptive beamforming and MIMO optimization algorithms that adjust transmission parameters based on channel conditions to minimize packet delay variations. The solution includes advanced interference mitigation techniques and adaptive coding schemes that respond to network congestion in real-time. Qualcomm's wireless solutions incorporate machine learning-based traffic prediction models that enable proactive network optimization and resource allocation. Their adaptive control protocols feature distributed coordination mechanisms that optimize network performance across multiple devices while maintaining low-latency communication through intelligent scheduling and prioritization algorithms.

Strengths: Leading wireless technology expertise with strong mobile device ecosystem integration and advanced 5G capabilities. Weaknesses: Primarily focused on wireless solutions with limited wired networking capabilities and dependency on device manufacturer adoption.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei develops intelligent network control protocols using AI-driven traffic prediction and adaptive resource allocation mechanisms. Their CloudFabric solution implements dynamic buffer management and intelligent packet scheduling algorithms that adjust transmission parameters in real-time based on network conditions. The technology incorporates deep packet inspection capabilities combined with machine learning models to identify traffic patterns and optimize routing decisions. Huawei's 5G network infrastructure includes advanced jitter control mechanisms through precise timing synchronization and adaptive modulation schemes. Their solution features distributed control architecture that enables rapid response to network changes while maintaining low-latency communication paths through predictive congestion avoidance algorithms.

Strengths: Strong R&D capabilities in 5G and AI-driven networking with cost-effective solutions for global markets. Weaknesses: Geopolitical restrictions limit market access in certain regions, and regulatory compliance remains a challenge.

Core Innovations in Adaptive Network Control Algorithms

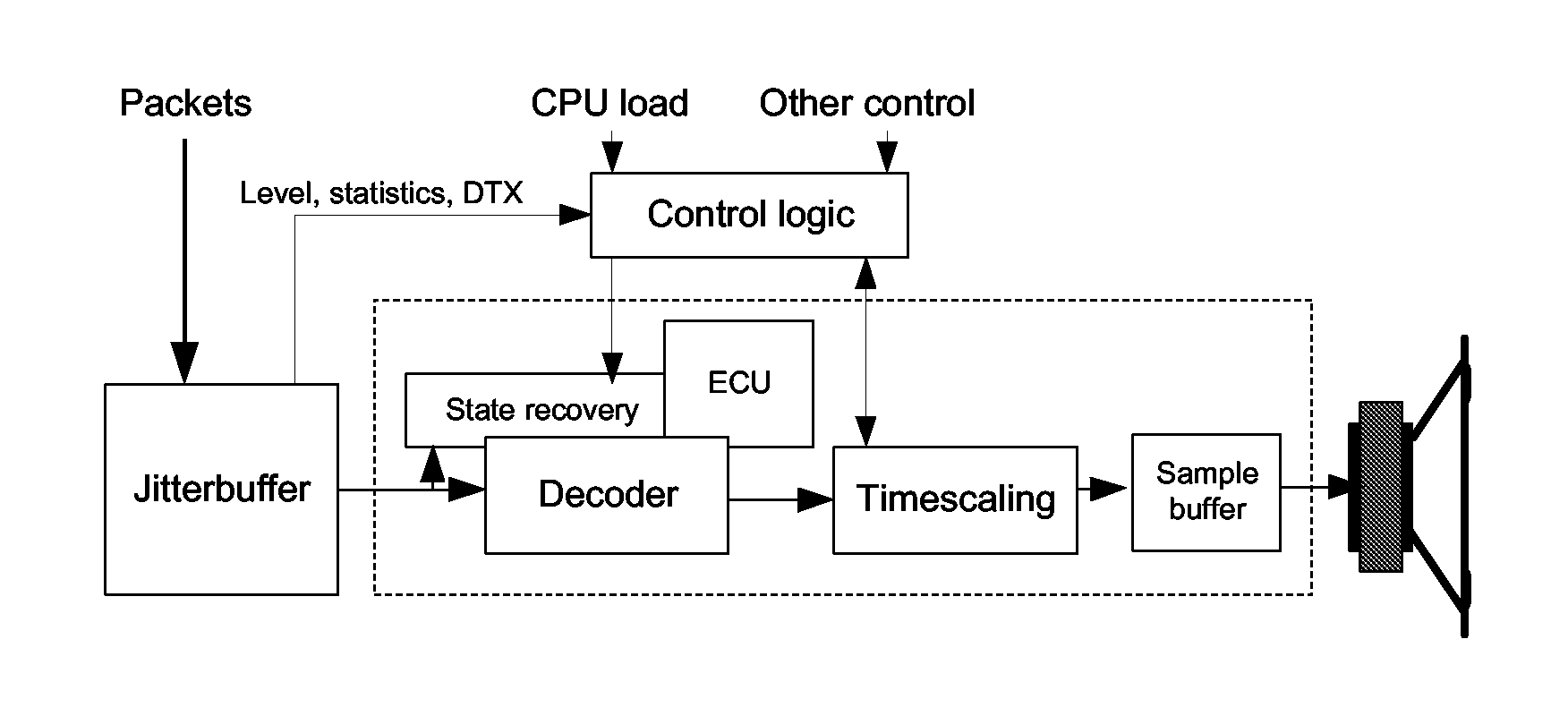

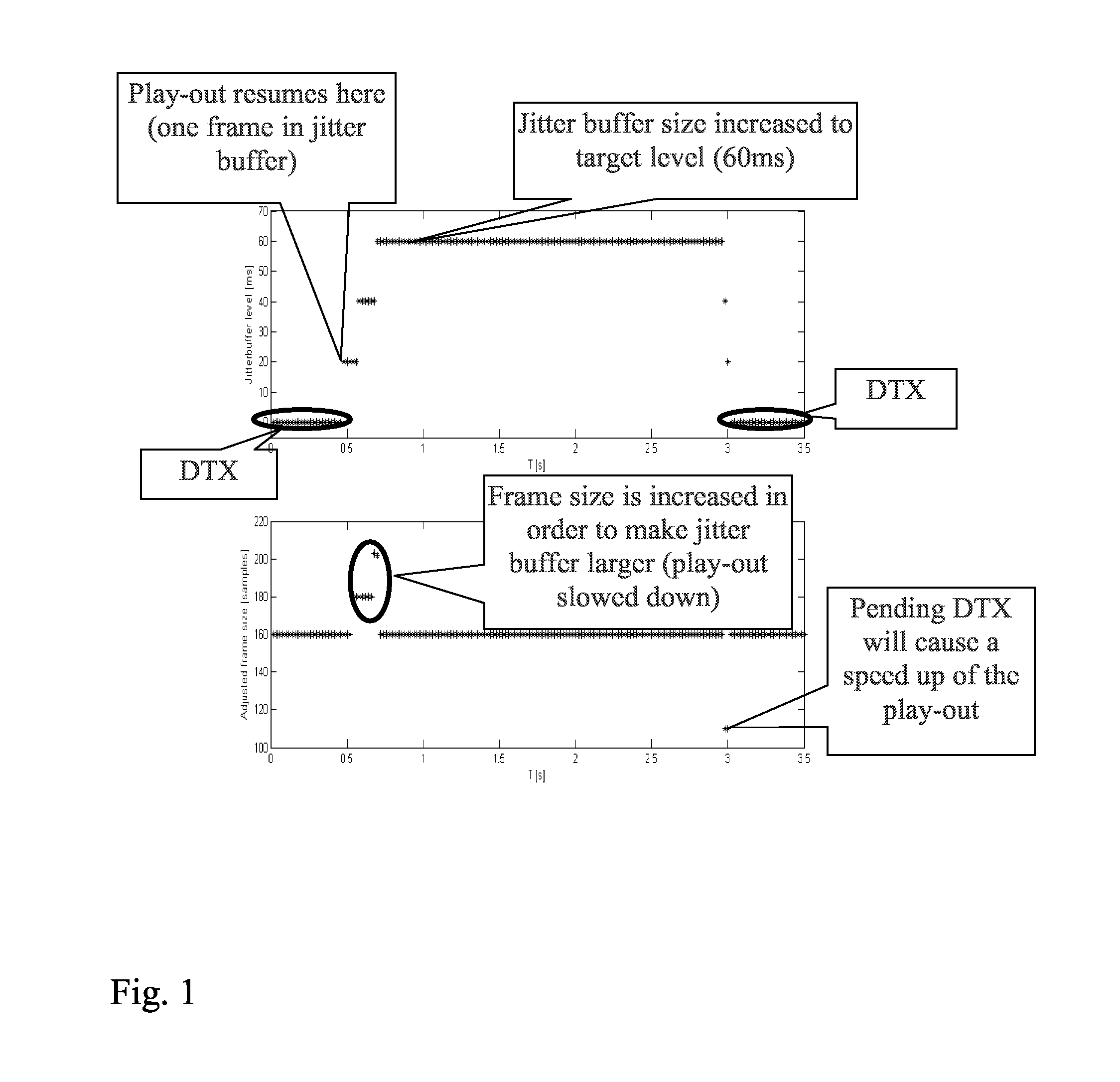

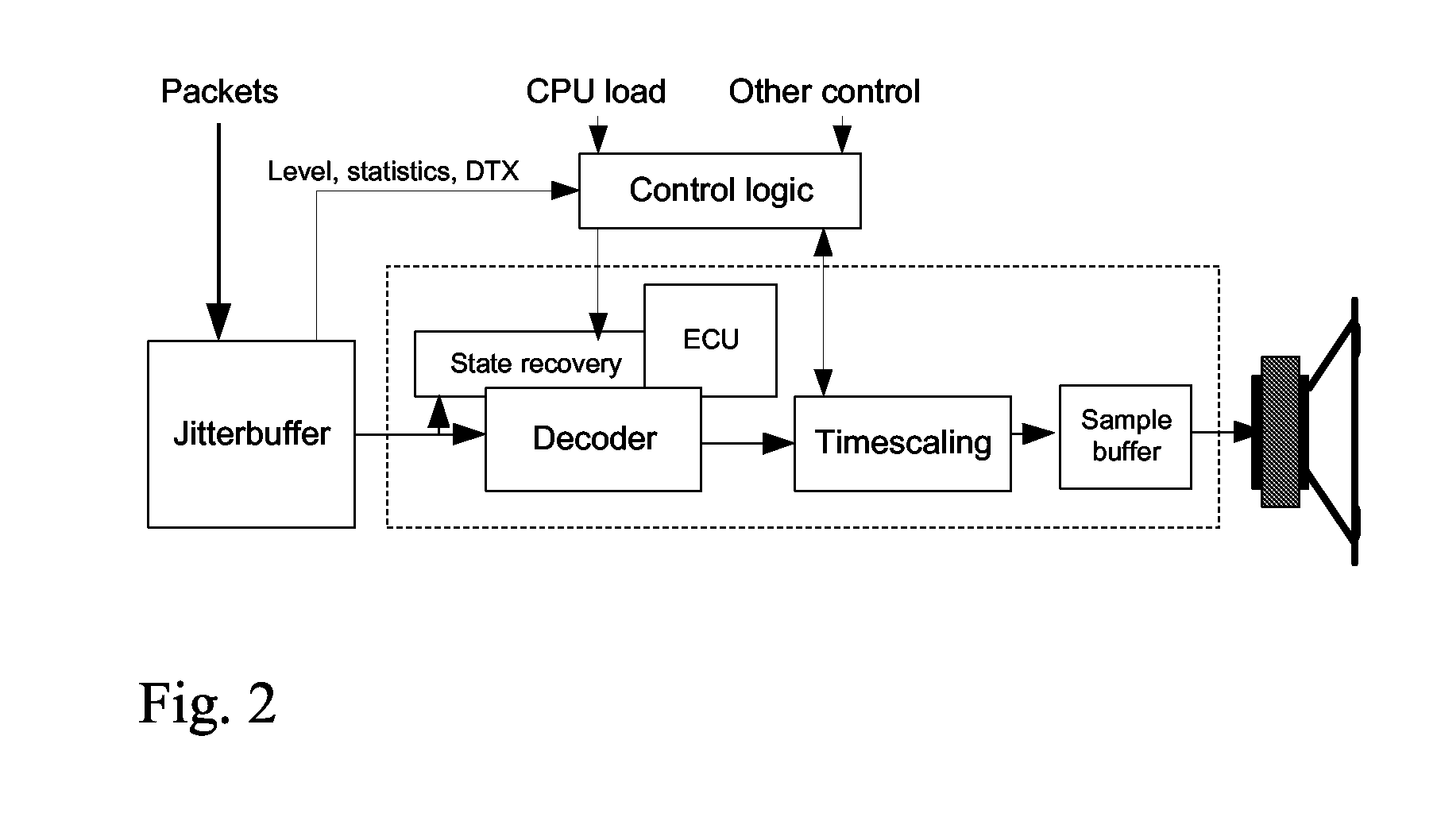

Control mechanism for adaptive play-out with state recovery

Patent | Active | US7864814B2

Innovation

- A control logic means that adaptively controls initial buffering time and time scaling, utilizing information from the jitter buffer, decoder, and state recovery means to improve interactivity and speech quality, allowing for aggressive time scaling while minimizing distortions and CPU load.

A self-adaptive jitter buffer adjustment method for packet-switched network

Patent | Inactive | EP1517466A3

Innovation

- A self-adaptive jitter buffer adjustment method that dynamically adjusts buffer parameters based on packet sequence numbers and filling level changes, using RTP/RTCP for data transmission, without considering packet dropping rate, to effectively track and absorb network jitter, reducing complexity and computational costs.

Network Protocol Standards and Compliance Requirements

Network protocol standards play a fundamental role in establishing the framework for adaptive jitter control mechanisms. The Internet Engineering Task Force (IETF) has developed several key standards that directly impact jitter management, including RFC 3550 for Real-time Transport Protocol (RTP), RFC 3611 for RTP Control Protocol Extended Reports, and RFC 5506 for Support for Reduced-Size RTCP. These standards define the baseline requirements for timestamp accuracy, packet sequencing, and control message formats that adaptive protocols must adhere to when implementing jitter reduction techniques.
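RFC 3550 also specifies how a receiver estimates interarrival jitter for RTCP reports: it compares the change in transit time between consecutive packets and smooths the absolute difference with a gain of 1/16. The Python sketch below follows that formula. The class name is an illustrative choice, both timestamps are assumed to be expressed in the same RTP clock units, and 32-bit timestamp wraparound is deliberately not handled.

```python
class Rfc3550Jitter:
    """Running interarrival jitter estimate in RTP timestamp units."""

    def __init__(self):
        self.jitter = 0.0
        self._prev_transit = None

    def update(self, rtp_timestamp, arrival_timestamp):
        """Fold one packet into the estimate and return the current jitter."""
        # Relative transit time; any constant clock offset cancels in the difference.
        transit = arrival_timestamp - rtp_timestamp
        if self._prev_transit is not None:
            d = abs(transit - self._prev_transit)
            # J(i) = J(i-1) + (|D(i-1,i)| - J(i-1)) / 16
            self.jitter += (d - self.jitter) / 16.0
        self._prev_transit = transit
        return self.jitter


# Hypothetical packets: (RTP timestamp, arrival time in the same units).
estimator = Rfc3550Jitter()
j1 = estimator.update(0, 100)     # first packet, nothing to compare against
j2 = estimator.update(160, 276)   # transit grew by 16 units
j3 = estimator.update(320, 420)   # transit shrank by 16 units

print(j1, j2, j3)
```

The 1/16 gain makes the reported value a slow-moving average, which is useful for monitoring but too sluggish to drive a playout buffer on its own.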

Quality of Service (QoS) compliance requirements significantly influence the design of adaptive control protocols for jitter reduction. ITU-T Recommendation G.114 gives guidance on acceptable one-way transmission delay for voice communications, which in turn limits how much delay a jitter buffer can add, while IEEE 802.1p provides traffic prioritization mechanisms that adaptive protocols can leverage. Compliance with these standards ensures that jitter control mechanisms operate within acceptable performance boundaries while maintaining interoperability across diverse network infrastructures.

Regulatory compliance frameworks impose additional constraints on adaptive jitter control implementations. The Federal Communications Commission (FCC) regulations for Voice over Internet Protocol (VoIP) services mandate specific performance metrics that directly affect protocol design decisions. Similarly, European Telecommunications Standards Institute (ETSI) requirements for network performance monitoring create obligations for adaptive protocols to provide standardized reporting mechanisms and maintain audit trails of control decisions.

Emerging standards for Software-Defined Networking (SDN) and Network Function Virtualization (NFV) are reshaping compliance landscapes for adaptive jitter control. OpenFlow protocol specifications and ETSI NFV architectural frameworks introduce new requirements for protocol flexibility and programmability. These evolving standards demand that adaptive control mechanisms support dynamic reconfiguration while maintaining compliance with traditional networking protocols and performance requirements.

Cross-protocol compatibility requirements present significant challenges for adaptive jitter control implementations. Protocols must maintain backward compatibility with legacy systems while supporting modern adaptive features. This includes ensuring proper interaction with existing routing protocols, maintaining compliance with security standards such as IPSec and TLS, and supporting various media codecs without violating their respective specifications or performance characteristics.

Quality of Service Impact Assessment and Metrics

Network jitter significantly impacts Quality of Service (QoS) across various application domains, with different services exhibiting varying degrees of sensitivity to timing variations. Real-time applications such as Voice over IP (VoIP) and video conferencing demonstrate the highest vulnerability to jitter effects, where variations exceeding 20-30 milliseconds can result in noticeable degradation in user experience. Interactive gaming applications similarly require stringent jitter control, typically demanding sub-10 millisecond consistency to maintain responsive gameplay.

The quantitative assessment of jitter impact relies on several key performance indicators that collectively determine service quality. Mean Opinion Score (MOS) serves as a fundamental metric for voice applications, correlating directly with jitter levels and packet delay variation. For video services, Peak Signal-to-Noise Ratio (PSNR) and structural similarity indices provide measurable indicators of quality degradation attributable to network timing inconsistencies.

Adaptive control protocols demonstrate measurable improvements in QoS metrics through dynamic adjustment mechanisms. Buffer management strategies within these protocols typically reduce jitter-induced packet loss by 15-25% compared to static approaches. Latency compensation algorithms show effectiveness in maintaining consistent end-to-end delay characteristics, particularly beneficial for applications requiring predictable response times.

Service Level Agreement (SLA) compliance represents a critical business metric directly influenced by jitter control effectiveness. Organizations implementing adaptive protocols report improved SLA adherence rates, with typical improvements ranging from 12-18% in meeting guaranteed service parameters. Network availability metrics also benefit from reduced jitter-related service interruptions.

Jitter-related service degradation also carries substantial economic cost. Enterprises lose less productivity in their communications systems when jitter stays within acceptable thresholds. Customer satisfaction indices correlate strongly with consistent service delivery, directly linking jitter control effectiveness to business outcomes and customer retention rates in service provider environments.
