Spatial Computing Architectures for Mixed Reality Systems

MAR 17, 2026 | 9 MIN READ

Spatial Computing Background and MR System Goals

Spatial computing represents a paradigm shift in how digital information interacts with physical environments, fundamentally transforming the relationship between virtual content and real-world spaces. This computational approach enables systems to understand, map, and manipulate three-dimensional environments in real time, integrating the digital and physical realms. The evolution of spatial computing has been driven by advances in computer vision, sensor technologies, and processing capabilities, establishing the foundation for sophisticated mixed reality applications.

The historical development of spatial computing can be traced from early computer graphics research in the 1960s through augmented reality experiments in the 1990s, culminating in today's advanced mixed reality platforms. Key technological milestones include the development of simultaneous localization and mapping algorithms, depth sensing technologies, and real-time 3D reconstruction methods. These innovations have progressively enhanced the accuracy and responsiveness of spatial understanding systems.

Mixed reality systems represent the convergence of spatial computing capabilities with immersive user experiences, enabling applications that blend virtual objects with physical environments. Unlike traditional virtual or augmented reality approaches, mixed reality systems require sophisticated spatial awareness to maintain consistent object placement, occlusion handling, and environmental interaction. This demands robust architectural frameworks capable of processing multiple data streams simultaneously while maintaining low-latency performance.

The primary technical objectives for mixed reality spatial computing architectures encompass several critical areas. Real-time environmental mapping and tracking constitute fundamental requirements, enabling systems to continuously update their understanding of dynamic physical spaces. Accurate pose estimation and object registration ensure virtual content remains properly aligned with real-world references, while efficient rendering pipelines maintain visual fidelity across varying computational constraints.
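The registration requirement can be illustrated with a minimal sketch. It assumes the tracker reports the device pose as a rotation matrix and a translation in a world frame; the names `Pose`, `world_to_device`, and `device_to_world`, and the example numbers, are hypothetical and not taken from any particular SDK. A world-anchored point is re-expressed in device coordinates each frame so that virtual content stays fixed relative to the room:

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Tuple[Vec3, Vec3, Vec3]


@dataclass(frozen=True)
class Pose:
    """Device pose in the world frame: p_world = R @ p_device + t (assumed convention)."""
    rotation: Mat3       # row-major 3x3 rotation matrix
    translation: Vec3    # device origin expressed in world coordinates


def yaw_rotation(angle_rad: float) -> Mat3:
    """Rotation about the vertical (y) axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return ((c, 0.0, s), (0.0, 1.0, 0.0), (-s, 0.0, c))


def device_to_world(pose: Pose, p_device: Vec3) -> Vec3:
    """Apply the rigid transform: R @ p + t."""
    r, t = pose.rotation, pose.translation
    return tuple(sum(r[row][col] * p_device[col] for col in range(3)) + t[row]
                 for row in range(3))


def world_to_device(pose: Pose, p_world: Vec3) -> Vec3:
    """Invert the rigid transform: R^T @ (p_world - t)."""
    d = tuple(p - t for p, t in zip(p_world, pose.translation))
    r = pose.rotation
    # Multiplying by R^T is a dot product with each column of R.
    return tuple(sum(r[row][col] * d[row] for row in range(3)) for col in range(3))


# Hypothetical frame: device at x = 1 m, turned 90 degrees about the vertical axis.
pose = Pose(yaw_rotation(math.pi / 2), (1.0, 0.0, 0.0))
anchor_world = (1.0, 0.0, -2.0)
anchor_device = world_to_device(pose, anchor_world)  # approximately (2.0, 0.0, 0.0)
```

Because the anchor is stored in world coordinates, only the pose changes from frame to frame; errors in the estimated pose show up directly as misalignment of the rendered object.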

Performance optimization represents another crucial goal, as mixed reality applications must achieve consistent frame rates while processing computationally intensive spatial analysis tasks. This requires architectural designs that effectively balance processing loads across available hardware resources, including specialized processors, graphics units, and dedicated spatial computing accelerators. Energy efficiency considerations become particularly important for mobile and wearable mixed reality devices.

Scalability and adaptability objectives focus on creating architectures capable of handling diverse environmental conditions and application requirements. Systems must accommodate varying spatial complexities, from simple indoor environments to complex outdoor scenarios with dynamic lighting and weather conditions. Additionally, architectural flexibility enables support for different interaction modalities and content types across various mixed reality applications.

Market Demand for Mixed Reality Spatial Computing

The mixed reality spatial computing market is experiencing unprecedented growth driven by convergent technological advances and evolving user expectations across multiple industry verticals. Enterprise adoption represents the primary demand driver, with manufacturing, healthcare, education, and retail sectors increasingly recognizing spatial computing's transformative potential for operational efficiency and user engagement.

Manufacturing industries demonstrate substantial appetite for mixed reality spatial computing solutions that enable real-time visualization of complex assembly processes, remote expert assistance, and immersive training environments. Automotive manufacturers and aerospace companies particularly value systems that can overlay digital instructions onto physical components, reducing assembly errors and accelerating workforce onboarding.

Healthcare sector demand centers on surgical planning, medical education, and patient treatment applications. Medical institutions seek spatial computing architectures capable of rendering high-fidelity anatomical models, enabling surgeons to visualize patient-specific data in three-dimensional space while maintaining sterile operating environments. The precision requirements in healthcare applications drive demand for architectures with minimal latency and exceptional tracking accuracy.

Educational institutions increasingly request mixed reality systems that transform traditional learning methodologies through immersive historical recreations, scientific simulations, and collaborative virtual laboratories. The demand emphasizes scalable architectures supporting multiple simultaneous users while maintaining consistent performance across diverse hardware configurations.

Consumer market demand focuses on entertainment, social interaction, and productivity applications. Gaming enthusiasts seek seamless integration between physical and virtual environments, while remote workers demand spatial computing solutions that enhance collaboration through shared virtual workspaces and intuitive gesture-based interfaces.

Retail and e-commerce sectors drive demand for spatial computing architectures enabling virtual product visualization, allowing customers to preview furniture placement, clothing fitting, or product customization in real-world contexts. These applications require robust computer vision capabilities and accurate environmental understanding.

The growing demand necessitates spatial computing architectures that address fundamental challenges including computational efficiency, power consumption, thermal management, and seamless integration with existing infrastructure. Market requirements emphasize modular, scalable solutions capable of adapting to diverse deployment scenarios while maintaining consistent user experiences across varying environmental conditions and hardware specifications.

Current State of Spatial Computing Architecture Challenges

Spatial computing architectures for mixed reality systems face significant computational bottlenecks in real-time processing. Current architectures struggle to handle complex 3D scene reconstruction, object tracking, and rendering simultaneously while staying under the sub-20 ms latency threshold necessary for comfortable user experiences. The computational overhead of simultaneous localization and mapping (SLAM) algorithms, combined with advanced computer vision processing, often exceeds the capabilities of mobile processors, forcing compromises in visual quality or frame rate.
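A rough budget calculation shows how quickly the 20 ms threshold is consumed. The sketch below is illustrative only: the stage names and per-stage timings are hypothetical placeholders, not measurements from any device, and the model simply sums sequential stages.

```python
from typing import Dict

LATENCY_BUDGET_MS = 20.0  # threshold cited in the text


def frame_period_ms(refresh_hz: float) -> float:
    """Time available per displayed frame at a given refresh rate."""
    return 1000.0 / refresh_hz


def latency_headroom_ms(stage_ms: Dict[str, float],
                        budget_ms: float = LATENCY_BUDGET_MS) -> float:
    """Budget minus the sum of sequential pipeline stages (negative means over budget)."""
    return budget_ms - sum(stage_ms.values())


# Hypothetical per-stage timings in milliseconds.
stages = {"sensor_capture": 4.0, "tracking": 3.0, "render": 7.0, "display_scanout": 3.0}

headroom = latency_headroom_ms(stages)        # 3.0 ms left in this example
stages_with_spike = dict(stages, tracking=8.0)  # e.g. a costly relocalization frame
over_budget = latency_headroom_ms(stages_with_spike) < 0
render_fits_frame = stages["render"] <= frame_period_ms(90.0)  # about 11.1 ms at 90 Hz
```

With these assumed numbers, a single 5 ms spike in the tracking stage is enough to push the pipeline past the threshold, which is why designs often trade visual quality or frame rate for latency headroom.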

Memory bandwidth limitations represent another critical constraint in existing spatial computing implementations. Mixed reality applications require rapid access to large datasets including 3D meshes, texture maps, sensor data streams, and tracking information. Current memory architectures cannot efficiently support the concurrent read-write operations demanded by real-time spatial understanding algorithms, leading to performance degradation and increased power consumption.

Sensor fusion complexity poses substantial integration challenges across different hardware platforms. Modern mixed reality systems incorporate multiple sensor types including RGB cameras, depth sensors, inertial measurement units, and environmental sensors. The heterogeneous nature of these data streams requires sophisticated synchronization mechanisms and calibration procedures that current architectures handle inefficiently, resulting in tracking drift and reduced spatial accuracy.
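One small piece of the synchronization problem can be sketched in code. Cameras and inertial measurement units usually sample at different rates, so a fusion stage needs an IMU reading at the camera's exposure timestamp. The sketch below assumes both sensors are already stamped on a common clock and uses plain linear interpolation; the function name `interpolate_imu` and the sample values are hypothetical.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple

ImuSample = Tuple[float, Tuple[float, float, float]]  # (timestamp_s, gyro xyz in rad/s)


def interpolate_imu(samples: Sequence[ImuSample], t_query: float) -> Tuple[float, ...]:
    """Linearly interpolate a non-empty, time-sorted list of IMU samples at t_query."""
    times: List[float] = [t for t, _ in samples]
    if not times[0] <= t_query <= times[-1]:
        raise ValueError("query time outside IMU sample range")
    i = bisect_left(times, t_query)      # first sample at or after t_query
    if times[i] == t_query:
        return samples[i][1]
    (t0, v0), (t1, v1) = samples[i - 1], samples[i]
    w = (t_query - t0) / (t1 - t0)       # fraction of the way from t0 to t1
    return tuple(a + w * (b - a) for a, b in zip(v0, v1))


# Hypothetical 200 Hz gyro samples and a camera frame captured between two of them.
imu = [(0.000, (0.0, 0.0, 0.0)), (0.005, (1.0, 2.0, -1.0)), (0.010, (1.0, 2.0, -1.0))]
gyro_at_frame = interpolate_imu(imu, 0.0025)
```

Real systems also have to estimate the clock offset between sensors and the extrinsic calibration between them; errors in either accumulate as the tracking drift described above.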

Power efficiency constraints severely limit the computational capabilities of standalone mixed reality devices. The energy requirements for continuous spatial processing, high-resolution displays, and wireless connectivity push current battery technologies to their limits. Existing architectures lack specialized processing units optimized for spatial computing workloads, forcing reliance on general-purpose processors that consume excessive power for these specific tasks.

Scalability issues emerge when spatial computing systems attempt to handle large-scale environments or multiple concurrent users. Current architectures demonstrate poor performance scaling as scene complexity increases, with processing requirements growing exponentially rather than linearly. The lack of distributed processing frameworks specifically designed for spatial computing limits the ability to leverage cloud resources effectively.

Standardization gaps across different mixed reality platforms create fragmentation in spatial computing implementations. The absence of unified APIs and data formats forces developers to create platform-specific solutions, hindering interoperability and limiting the development of robust spatial computing ecosystems that could benefit from shared computational resources and standardized processing pipelines.

Existing Spatial Computing Architecture Solutions

01 Distributed computing architectures for spatial data processing

Spatial computing systems use distributed computing architectures to process large-scale spatial data efficiently. Multiple processing nodes work in parallel to handle complex spatial computations, coordinate transformations, and real-time data analysis. The distributed approach enables scalable processing of three-dimensional spatial information and supports concurrent operations across multiple spatial domains.

02 Hardware acceleration and specialized processing units for spatial computing

Dedicated hardware components and acceleration units are integrated into spatial computing architectures to speed up spatial calculations and rendering operations. These include custom processors optimized for geometric calculations, spatial indexing, coordinate system transformations, and real-time visualization. Hardware acceleration enables real-time processing of spatial data streams and provides the low latency that interactive applications require.

03 Memory management and data organization for spatial information

Spatial computing architectures implement specialized memory hierarchies and data organization schemes to store and retrieve spatial data structures efficiently. These systems use hierarchical data structures, spatial indexing mechanisms, optimized caching strategies, and memory allocation schemes tailored to three-dimensional coordinate systems and volumetric data. The result is rapid access to spatial relationships and efficient querying of multidimensional spatial datasets, with lower access latency and higher throughput for spatial queries and updates.

04 Integration of sensor data and spatial mapping systems

Architectures incorporate frameworks for integrating multiple sensor inputs to construct and update spatial maps in real time. These systems process data from various sensing modalities to build comprehensive spatial models, perform simultaneous localization and mapping, and maintain accurate environmental representations. The architecture supports fusion of sensor data streams, spatial registration, and dynamic updating of spatial models as the environment changes, which enables adaptive computing based on changing spatial contexts.

05 Communication protocols and interfaces for spatial computing networks

Spatial computing architectures define communication protocols and interface standards for exchanging spatial information between components and across networked systems. These protocols support efficient transmission of spatial data, coordination between distributed spatial computing nodes, synchronization of spatial reference frames, sharing of spatial computation results, and interoperability between different spatial computing devices. The architecture supports both local and remote spatial data access with optimized bandwidth utilization, so that spatial computing capabilities can be integrated into broader computing ecosystems.
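As one concrete instance of the spatial indexing mentioned under memory management and data organization, the sketch below implements a uniform spatial hash grid. This is a generic textbook structure chosen for illustration, not the scheme used by any system described here; the class name `SpatialHashGrid`, the cell size, and the object names are assumptions.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]
Cell = Tuple[int, int, int]


class SpatialHashGrid:
    """Buckets points into cubic cells so radius queries only scan nearby cells."""

    def __init__(self, cell_size: float) -> None:
        if cell_size <= 0:
            raise ValueError("cell_size must be positive")
        self.cell_size = cell_size
        self._cells: Dict[Cell, List[Tuple[str, Vec3]]] = defaultdict(list)

    def _key(self, p: Vec3) -> Cell:
        return tuple(math.floor(c / self.cell_size) for c in p)

    def insert(self, item_id: str, position: Vec3) -> None:
        self._cells[self._key(position)].append((item_id, position))

    def query_radius(self, center: Vec3, radius: float) -> List[str]:
        """Return ids of all items within `radius` of `center`."""
        lo = self._key(tuple(c - radius for c in center))
        hi = self._key(tuple(c + radius for c in center))
        hits = []
        for ix in range(lo[0], hi[0] + 1):
            for iy in range(lo[1], hi[1] + 1):
                for iz in range(lo[2], hi[2] + 1):
                    # .get avoids creating empty buckets for cells never written to.
                    for item_id, pos in self._cells.get((ix, iy, iz), ()):
                        if math.dist(center, pos) <= radius:
                            hits.append(item_id)
        return hits


grid = SpatialHashGrid(cell_size=1.0)            # 1 m cells, an arbitrary choice
grid.insert("lamp", (0.2, 1.0, -0.4))
grid.insert("chair", (3.0, 0.0, 3.0))
nearby = grid.query_radius((0.0, 1.0, 0.0), 0.5)  # only "lamp" is within 0.5 m
```

A hash grid keeps query cost tied to the number of cells the query touches rather than the total number of stored items. Hierarchical structures such as octrees make a different trade-off and cope better with very uneven point density.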

Key Players in Spatial Computing and MR Industry

The market for spatial computing architectures in mixed reality systems is a rapidly evolving competitive landscape, currently in its growth phase, with expansion driven by enterprise adoption and consumer interest. Major technology investments from industry leaders point to substantial scale potential. Technology maturity varies considerably across players: established giants such as Apple, Microsoft, and Qualcomm leverage advanced hardware capabilities, while specialized companies such as Magic Leap and Snap focus on innovative AR/VR solutions. Meta Platforms Technologies and Alibaba Group contribute robust software platforms, whereas emerging players such as VirtaMed target niche applications. Chinese companies including Tencent Technology and Beijing Zitiao Network Technology represent growing regional competition. The fragmented landscape suggests the technology remains at an early-to-mid maturity stage, with no single dominant standard yet established across spatial computing architectures.

Magic Leap, Inc.

Technical Solution: Magic Leap has developed a unique spatial computing architecture centered around their "Digital Lightfield" technology and waveguide display system. Their approach combines multiple depth sensors, cameras, and IMUs to create what they call a "Persistent Coordinate Frame" that maintains spatial understanding across sessions. The Magic Leap 2 architecture employs advanced computer vision algorithms for dense spatial mapping, enabling precise occlusion handling and realistic light estimation for virtual objects. Their spatial computing platform includes the Lumin OS, specifically designed for mixed reality interactions, supporting natural hand gestures, eye tracking, and voice commands. The system utilizes machine learning models running on dedicated neural processing units to understand spatial context, recognize objects, and predict user intentions. Magic Leap's architecture emphasizes comfort and natural interaction, with lightweight optics and intuitive spatial interfaces that allow users to manipulate digital content as if it were physically present in their environment.

Strengths: Advanced waveguide display technology, comfortable form factor, precise spatial tracking, enterprise-focused applications. Weaknesses: Limited consumer market presence, high price point, smaller ecosystem compared to competitors.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft's HoloLens platform represents a pioneering approach to spatial computing architecture for mixed reality systems. The architecture is built around the Holographic Processing Unit (HPU), a custom silicon chip designed specifically for processing spatial data and holographic rendering. The system employs advanced spatial mapping techniques using time-of-flight depth sensors and multiple cameras to create detailed 3D meshes of the environment in real time. Microsoft's spatial computing framework includes the Mixed Reality Toolkit (MRTK) and Azure Spatial Anchors, enabling persistent holographic content across sessions and devices. The architecture supports spatial audio processing, gaze tracking, gesture recognition, and voice commands through integrated AI processing. Their approach emphasizes enterprise applications with robust tracking accuracy and stability, utilizing cloud computing for complex spatial computations and collaborative mixed reality experiences across multiple users and locations.

Strengths: Enterprise-focused solutions, robust tracking accuracy, strong cloud integration, comprehensive development ecosystem. Weaknesses: High cost, limited field of view, relatively heavy form factor.

Core Innovations in MR Spatial Computing Patents

3D spatial mapping in a 3D coordinate system of an AR headset using 2D images

Patent WO2024097249A1

Innovation

- 2D images from medical imaging devices such as X-ray and ultrasound devices are co-registered and overlaid in the 3D coordinate system of an AR headset. Reference points, lines, or areas identified in that 3D space allow precise navigation and alignment of medical instruments, while optical codes and image visible markers maintain accurate positioning even if the patient moves.

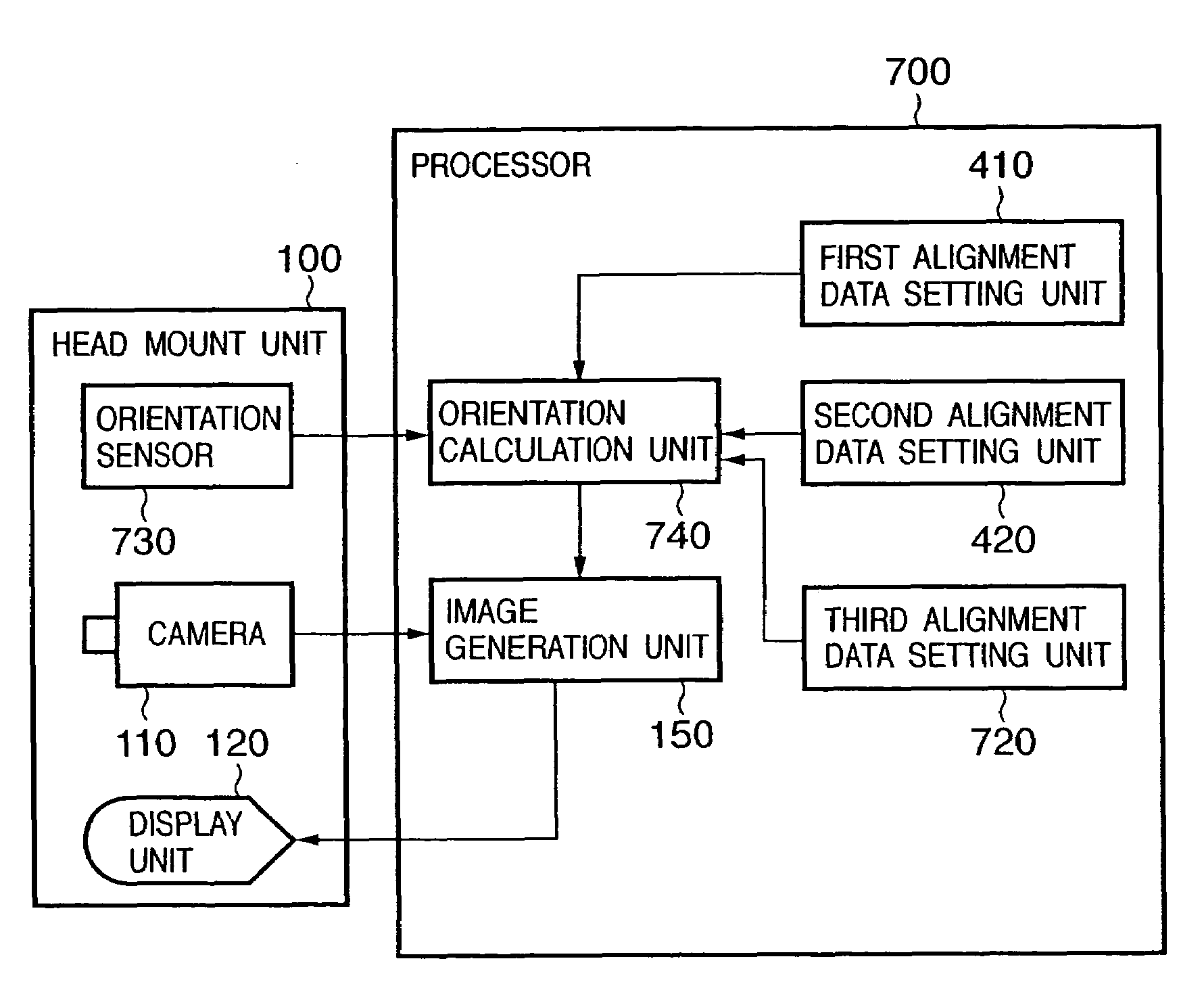

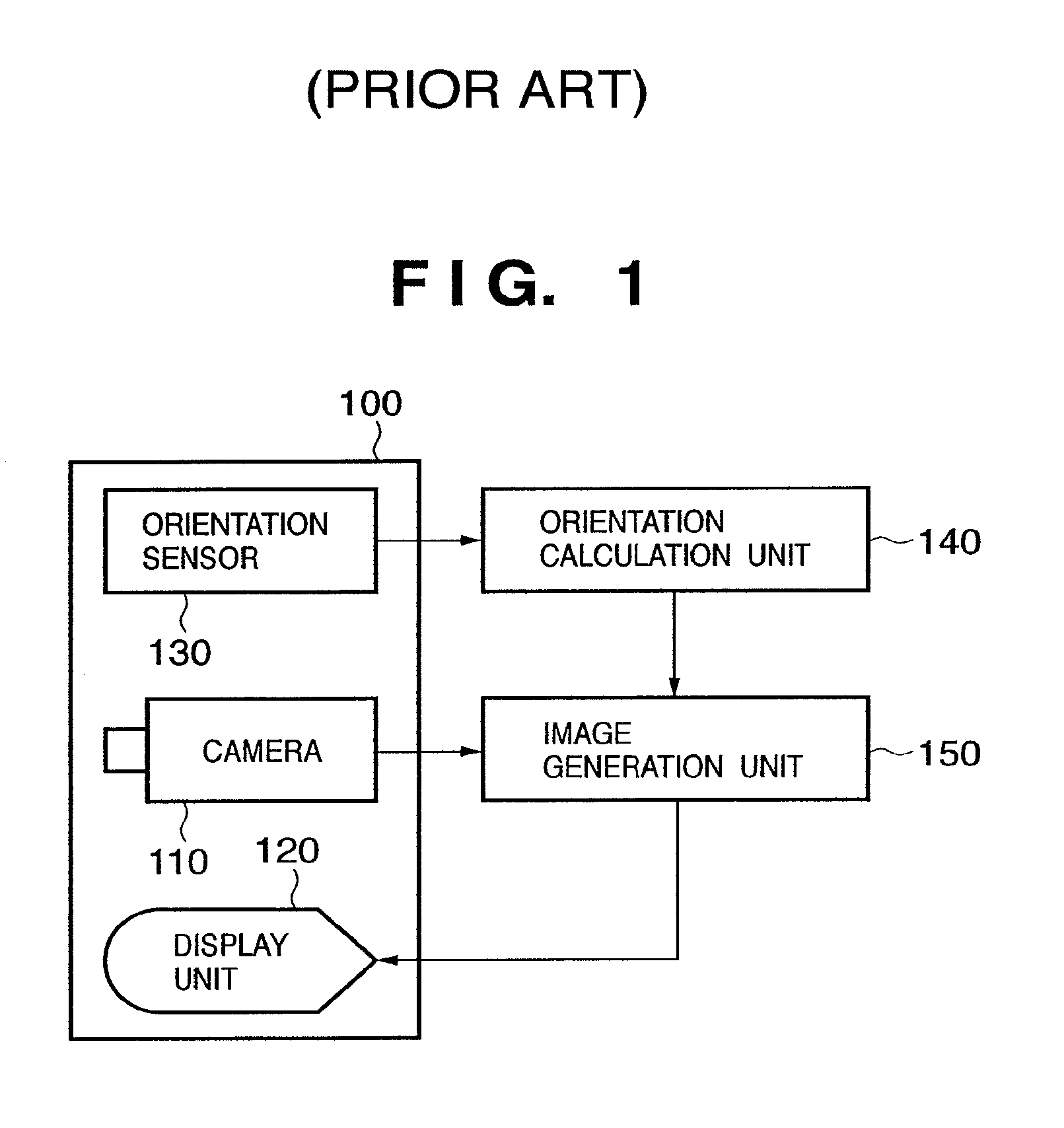

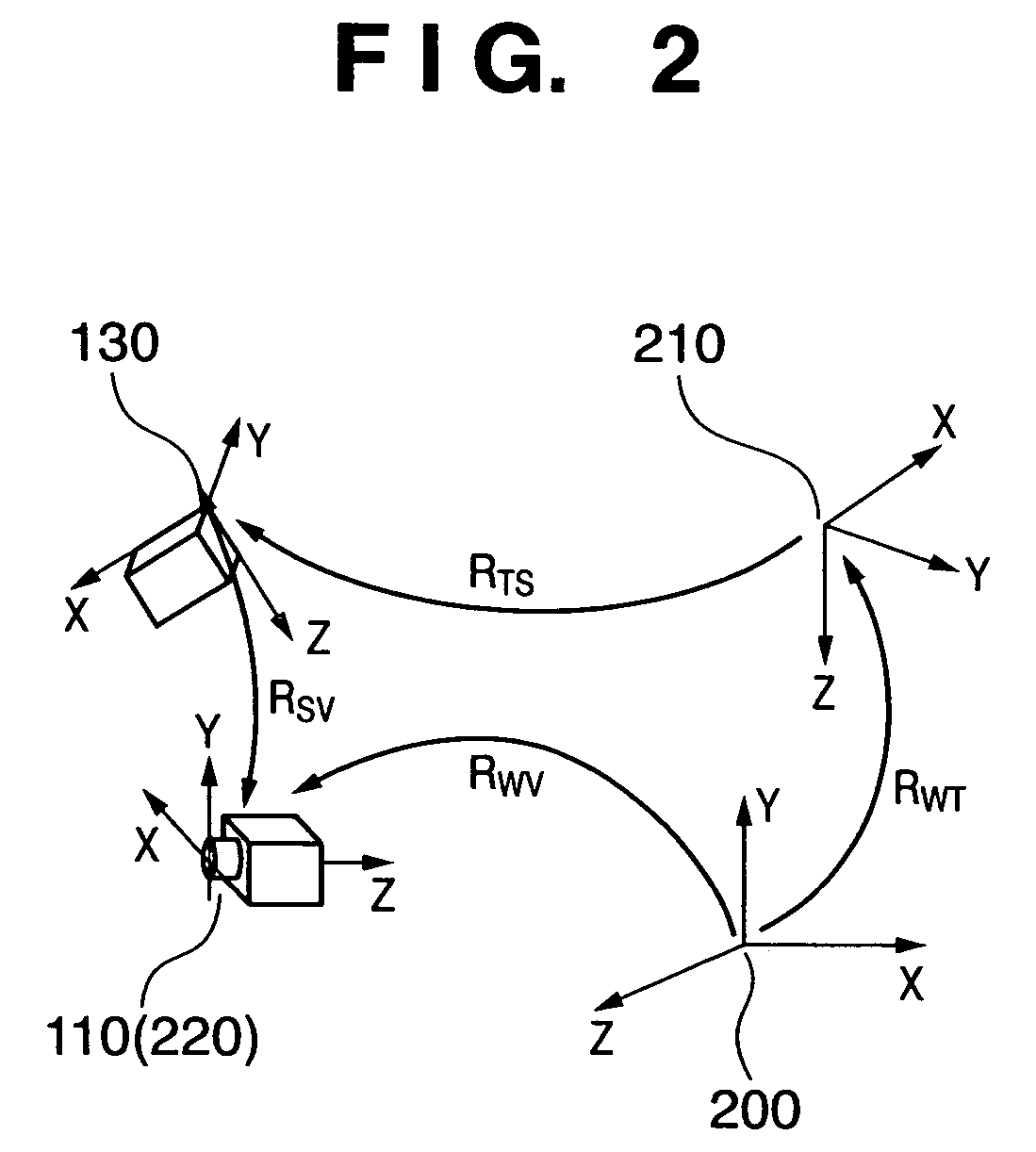

Data conversion method and apparatus, and orientation measurement apparatus

Patent US7414596B2 (Active)

Innovation

- A data conversion method that sets first alignment data representing the gravitational direction in the reference coordinate system, calculates second alignment data for the difference angle in the azimuth direction, and uses both, together with orientation data from the sensor coordinate system, to convert orientation measurements into the reference coordinate system. This allows the alignment data to be re-derived easily without restricting the design of the reference coordinate system.

Privacy and Security in Spatial Computing Systems

Privacy and security considerations represent critical challenges in spatial computing architectures for mixed reality systems, as these platforms collect, process, and store unprecedented amounts of sensitive user data. The immersive nature of MR environments necessitates continuous monitoring of user movements, biometric data, environmental mapping, and behavioral patterns, creating substantial privacy vulnerabilities that traditional computing paradigms have not encountered.

Spatial computing systems inherently require extensive data collection to function effectively, including real-time tracking of eye movements, hand gestures, facial expressions, and spatial positioning. This biometric information, combined with environmental scanning data, creates detailed digital profiles that could potentially reveal intimate personal information about users' physical spaces, daily routines, and behavioral preferences. The persistent nature of this data collection raises significant concerns about user consent, data minimization, and the potential for unauthorized surveillance.

Authentication and access control mechanisms in spatial computing present unique challenges due to the distributed nature of MR systems. Traditional password-based authentication becomes impractical in immersive environments, necessitating biometric authentication methods such as iris scanning, voice recognition, or gesture-based authentication. However, these methods introduce additional attack vectors and require robust encryption protocols to protect biometric templates from unauthorized access or spoofing attacks.

Data transmission security becomes particularly complex in spatial computing architectures due to the real-time processing requirements and the involvement of multiple computing nodes, including edge devices, cloud servers, and local processing units. End-to-end encryption protocols must be optimized to maintain low latency while ensuring data integrity across distributed computing environments. The challenge intensifies when considering cross-platform interoperability and the need for secure data sharing between different MR applications and services.
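The integrity half of this requirement can be sketched with Python's standard library. The example below attaches an HMAC-SHA256 tag to a small pose packet so that a receiver can reject tampered data. It covers integrity only, not confidentiality, and the packet layout, the function names `pack_pose` and `unpack_pose`, and the hard-coded key are hypothetical; a real deployment would obtain keys through a proper key-exchange protocol.

```python
import hashlib
import hmac
import struct
from typing import Tuple

POSE_FORMAT = "<I3f"   # assumed layout: uint32 sequence number + three float32 coordinates
TAG_SIZE = 32          # SHA-256 digest length in bytes


def pack_pose(seq: int, position: Tuple[float, float, float], key: bytes) -> bytes:
    """Serialize a pose sample and append an HMAC-SHA256 tag."""
    payload = struct.pack(POSE_FORMAT, seq, *position)
    tag = hmac.new(key, payload, hashlib.sha256).digest()
    return payload + tag


def unpack_pose(packet: bytes, key: bytes) -> Tuple[int, Tuple[float, float, float]]:
    """Verify the tag in constant time, then decode the payload."""
    payload, tag = packet[:-TAG_SIZE], packet[-TAG_SIZE:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("pose packet failed integrity check")
    seq, x, y, z = struct.unpack(POSE_FORMAT, payload)
    return seq, (x, y, z)


key = b"demo-key-for-illustration-only"
packet = pack_pose(7, (0.5, 1.25, -2.0), key)
seq, position = unpack_pose(packet, key)
```

The tag adds 32 bytes to a 16-byte payload in this layout, which illustrates the overhead trade-off for high-rate, low-latency streams.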

Privacy-preserving computation techniques, including differential privacy, homomorphic encryption, and federated learning, emerge as essential components for protecting user data while maintaining system functionality. These approaches enable spatial computing systems to perform necessary computations on user data without exposing raw personal information, though implementation complexity and computational overhead remain significant considerations for real-time MR applications.
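Of the techniques listed, differential privacy is the simplest to sketch. The example below applies the Laplace mechanism to a count query, such as how many users looked at a given region of a shared scene. The scenario, the function names, and the chosen epsilon are illustrative assumptions; Laplace noise is drawn as the difference of two exponential samples using only the standard library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Laplace mechanism: add noise with scale = sensitivity / epsilon.

    A count changes by at most 1 when one user's data is added or removed,
    so the sensitivity defaults to 1.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(2026)                                # seeded only for repeatability
noisy = private_count(true_count=42, epsilon=1.0, rng=rng)
```

Smaller values of epsilon give stronger privacy but noisier answers. That accuracy cost sits alongside the computational overhead noted above.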

Regulatory compliance adds another layer of complexity, as spatial computing systems must adhere to evolving privacy regulations such as GDPR, CCPA, and emerging legislation specifically targeting immersive technologies. The global nature of many MR platforms requires navigation of multiple jurisdictional requirements while maintaining consistent security standards across different deployment environments.

Hardware-Software Integration for MR Architectures

Hardware-software integration is the cornerstone of effective mixed reality architectures: how well computational components and physical devices are coordinated determines system performance and the quality of the user experience. Modern MR systems require integration strategies that tie together high-performance processing units, specialized sensors, and real-time rendering while keeping power consumption and heat within acceptable limits.

The integration architecture typically employs a multi-layered approach, where dedicated hardware accelerators work in conjunction with software abstraction layers to handle computationally intensive tasks such as simultaneous localization and mapping, object recognition, and real-time graphics rendering. Graphics processing units and specialized AI chips are increasingly integrated with custom silicon designed specifically for spatial computing workloads, enabling parallel processing of multiple data streams from cameras, depth sensors, and inertial measurement units.

Software frameworks play a crucial role in orchestrating hardware resources, with real-time operating systems providing deterministic scheduling for time-critical operations while higher-level middleware manages resource allocation and inter-component communication. Advanced driver architectures ensure low-latency data flow between sensors and processing units, while sophisticated memory management systems optimize data sharing between different computational domains.

Power management integration presents unique challenges in MR systems, requiring dynamic scaling of processing capabilities based on computational demands and thermal constraints. Adaptive algorithms continuously monitor system performance and automatically adjust hardware configurations to maintain optimal balance between processing power and energy consumption, ensuring sustained operation within portable form factors.
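A minimal sketch of such an adaptive policy is a step governor that moves one frequency level at a time in response to measured frame time and temperature. The frequency levels, the 11.1 ms frame budget (roughly 90 Hz), and the 45 °C thermal limit are illustrative assumptions, not figures from any specific headset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GovernorConfig:
    levels_mhz: tuple = (400, 800, 1200, 1600)  # assumed clock steps
    frame_budget_ms: float = 11.1               # assumed ~90 Hz target
    thermal_limit_c: float = 45.0               # assumed thermal ceiling
    headroom: float = 0.7                       # step down below 70% of budget


def next_level(level: int, frame_time_ms: float, temp_c: float,
               cfg: GovernorConfig) -> int:
    """Pick the next frequency level index from the latest measurements."""
    top = len(cfg.levels_mhz) - 1
    # Thermal protection takes priority over performance.
    if temp_c >= cfg.thermal_limit_c:
        return max(level - 1, 0)
    # Missing the frame budget: raise the clock if possible.
    if frame_time_ms > cfg.frame_budget_ms:
        return min(level + 1, top)
    # Comfortable headroom: lower the clock to save energy.
    if frame_time_ms < cfg.headroom * cfg.frame_budget_ms:
        return max(level - 1, 0)
    return level
```

Called once per frame or per control interval, `next_level` returns an index into `levels_mhz`. The gap between the step-up and step-down thresholds acts as a simple hysteresis band, which keeps the governor from oscillating between two levels when the load sits near the budget.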

The emergence of edge computing integration further enhances MR capabilities by distributing computational loads between local hardware and cloud-based resources. This hybrid approach enables complex spatial computing tasks to leverage both immediate local processing for latency-sensitive operations and remote computational power for resource-intensive algorithms, creating more capable and responsive mixed reality experiences while managing hardware limitations effectively.
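The local-versus-remote split can be sketched as a latency estimate per task. The cost model below (round-trip time plus transfer time plus remote compute time) and all names and numbers are simplifying assumptions; a real scheduler would also account for energy, link variability, and privacy constraints.

```python
from typing import NamedTuple


class Placement(NamedTuple):
    target: str            # "local" or "remote"
    estimated_ms: float
    meets_deadline: bool


def choose_target(local_ms: float, remote_compute_ms: float, rtt_ms: float,
                  payload_kb: float, bandwidth_mbps: float,
                  deadline_ms: float) -> Placement:
    """Choose where to run a task by comparing estimated completion times."""
    # 1 Mbps moves 1 kilobit per millisecond, so kB * 8 / Mbps is in ms.
    transfer_ms = payload_kb * 8.0 / bandwidth_mbps
    remote_ms = rtt_ms + transfer_ms + remote_compute_ms
    # Ties go to local execution, which avoids the network entirely.
    if local_ms <= remote_ms:
        return Placement("local", local_ms, local_ms <= deadline_ms)
    return Placement("remote", remote_ms, remote_ms <= deadline_ms)
```

Under these assumptions a per-frame tracking update (4 ms locally, 20 ms round trip) stays on the device, while a large reconstruction job (900 ms locally, 500 kB upload over a 100 Mbps link, 50 ms of remote compute) is offloaded.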
