Spatial Computing Platforms for Scientific Visualization

MAR 17, 2026 · 9 MIN READ

Spatial Computing Background and Scientific Visualization Goals

Spatial computing represents a paradigm shift in human-computer interaction, changing how users perceive, interact with, and manipulate digital information within three-dimensional environments. The field encompasses augmented reality (AR), virtual reality (VR), and mixed reality (MR), often grouped under the umbrella term extended reality (XR), and creates immersive experiences that blend the physical and digital worlds.

The evolution of spatial computing traces back to early virtual reality experiments in the 1960s, progressing through decades of incremental advances in display technology, tracking systems, and computational power. Key milestones include the development of head-mounted displays, motion tracking sensors, and real-time 3D rendering capabilities. Recent breakthroughs in computer vision, machine learning, and miniaturized hardware have accelerated spatial computing adoption across various industries.

Contemporary spatial computing platforms leverage sophisticated algorithms for spatial mapping, object recognition, and environmental understanding. These systems create persistent digital overlays that respond to user gestures, voice commands, and environmental changes, enabling intuitive interaction paradigms that transcend traditional screen-based interfaces.

Scientific visualization has emerged as a critical application domain for spatial computing technologies, addressing longstanding challenges in data comprehension and analysis. Traditional scientific visualization relies on two-dimensional displays to represent complex multidimensional datasets, often resulting in information loss and cognitive barriers for researchers attempting to understand intricate relationships within their data.

The primary goal of integrating spatial computing with scientific visualization is to enhance researchers' ability to explore, analyze, and communicate complex scientific phenomena through immersive three-dimensional representations. This integration aims to provide intuitive manipulation of volumetric datasets, enabling scientists to examine molecular structures, astronomical phenomena, climate models, and biological systems with unprecedented clarity and depth.

Furthermore, spatial computing platforms for scientific visualization seek to facilitate collaborative research environments where multiple researchers can simultaneously interact with shared datasets regardless of geographical constraints. These platforms aspire to democratize access to advanced visualization tools, reducing technical barriers and enabling domain experts to focus on scientific discovery rather than technical implementation challenges.

The longer-term objective is to create adaptive visualization systems that use artificial intelligence to optimize data presentation automatically based on user expertise, research context, and analytical goals, thereby accelerating scientific discovery and improving research productivity across disciplines.

Market Demand for Immersive Scientific Data Visualization

The scientific research community is experiencing an unprecedented surge in data complexity and volume, driving substantial demand for advanced visualization technologies that can effectively represent multidimensional datasets. Traditional two-dimensional visualization methods are increasingly inadequate for handling complex scientific phenomena such as molecular dynamics, climate modeling, astronomical observations, and fluid dynamics simulations. Researchers across disciplines are seeking immersive visualization solutions that enable intuitive exploration and analysis of their data in three-dimensional space.

Academic institutions and research laboratories represent the primary market segment for immersive scientific data visualization platforms. Universities with strong STEM programs, national laboratories, and government research facilities are actively investing in spatial computing technologies to enhance their research capabilities. These institutions require sophisticated visualization tools that can handle large-scale datasets while providing collaborative environments for interdisciplinary research teams.

The pharmaceutical and biotechnology sectors demonstrate particularly strong demand for immersive visualization platforms, especially for molecular modeling, drug discovery, and protein structure analysis. Companies in these industries are leveraging spatial computing to accelerate research timelines and improve decision-making processes. The ability to visualize complex molecular interactions in three-dimensional space provides researchers with enhanced understanding of biological processes and drug mechanisms.

Engineering and manufacturing industries are increasingly adopting immersive scientific visualization for computational fluid dynamics, structural analysis, and materials science applications. These sectors require real-time visualization capabilities that can support design optimization and performance analysis. The demand extends to aerospace, automotive, and energy companies that rely on complex simulations for product development and safety analysis.

Healthcare and medical research institutions represent another significant market segment, particularly for applications involving medical imaging, surgical planning, and anatomical education. Integrating spatial computing platforms with medical datasets can support more precise diagnosis and treatment planning, which is driving adoption across hospitals and medical schools.

The market demand is further amplified by the growing emphasis on data-driven research methodologies and the need for effective science communication. Researchers require visualization tools that not only support analysis but also facilitate presentation of findings to diverse audiences, including policymakers, funding agencies, and the general public.

Current State and Challenges of Spatial Computing Platforms

Spatial computing platforms for scientific visualization have reached a point where technological capabilities are expanding rapidly while significant implementation challenges persist. Current platforms vary in maturity: general-purpose engines such as Unity and Unreal Engine provide robust foundations for immersive scientific data representation, while specialized frameworks such as ParaView VR and NVIDIA Omniverse offer domain-specific advantages for complex scientific datasets.

The hardware ecosystem is fragmented across many competing devices and runtimes. High-end VR headsets deliver impressive visual fidelity, but standalone devices such as the Meta Quest Pro are limited by on-device processing power when handling large-scale scientific datasets, while tethered headsets such as the HTC Vive Pro series depend on a capable host workstation. Mixed reality devices, including Microsoft HoloLens 2 and Magic Leap 2, offer compelling spatial anchoring capabilities but are constrained by narrow fields of view and limited on-board compute, which restrict real-time rendering of complex molecular structures or fluid dynamics simulations.

Software architecture challenges represent the most significant barrier to widespread adoption. Current platforms lack standardized APIs for scientific data ingestion, forcing researchers to develop custom integration solutions for each visualization scenario. The absence of unified data format support creates substantial friction when transitioning between different spatial computing environments, particularly when dealing with multi-dimensional datasets common in climate modeling, genomics, and particle physics research.
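As an illustration of what a shared ingestion layer could look like, the sketch below registers one reader per file format and normalizes every input into a single in-memory volume type that downstream renderers consume. It is a minimal sketch under stated assumptions: `VolumeData`, `register_reader`, `load_volume`, and both file formats (`.raw32`, `.txtvol`) are hypothetical and do not correspond to any existing platform API.

```python
import struct
from dataclasses import dataclass
from pathlib import Path
from typing import Callable, Dict, List, Tuple


@dataclass
class VolumeData:
    """Hypothetical common representation handed to every renderer."""
    dims: Tuple[int, int, int]
    spacing: Tuple[float, float, float]
    values: List[float]  # flattened, x varies fastest


# Hypothetical registry: file extension -> reader returning VolumeData.
_READERS: Dict[str, Callable[[Path], VolumeData]] = {}


def register_reader(ext: str):
    """Decorator that registers a reader for one file extension."""
    def decorator(fn: Callable[[Path], VolumeData]) -> Callable[[Path], VolumeData]:
        _READERS[ext.lower()] = fn
        return fn
    return decorator


def load_volume(path: str) -> VolumeData:
    """Dispatch to the registered reader; callers never see format details."""
    p = Path(path)
    reader = _READERS.get(p.suffix.lower())
    if reader is None:
        raise ValueError(f"no reader registered for {p.suffix!r}")
    return reader(p)


@register_reader(".raw32")
def read_raw32(p: Path) -> VolumeData:
    # Hypothetical binary format: three little-endian int32 dims, then float32 samples.
    data = p.read_bytes()
    nx, ny, nz = struct.unpack_from("<3i", data, 0)
    count = nx * ny * nz
    values = list(struct.unpack_from(f"<{count}f", data, 12))
    return VolumeData((nx, ny, nz), (1.0, 1.0, 1.0), values)


@register_reader(".txtvol")
def read_txtvol(p: Path) -> VolumeData:
    # Hypothetical text format: whitespace-separated "nx ny nz" followed by the samples.
    tokens = p.read_text().split()
    nx, ny, nz = (int(t) for t in tokens[:3])
    values = [float(t) for t in tokens[3:3 + nx * ny * nz]]
    return VolumeData((nx, ny, nz), (1.0, 1.0, 1.0), values)
```

With this structure, supporting a new format means registering one more reader rather than modifying every visualization pipeline that consumes the data.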

Performance optimization remains critically underdeveloped across existing platforms. Real-time rendering of volumetric data, essential for applications like medical imaging and atmospheric modeling, frequently exceeds current hardware capabilities. Most platforms resort to aggressive data decimation or pre-processing techniques that compromise scientific accuracy, creating a fundamental tension between visual fidelity and computational feasibility.
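The accuracy cost of decimation is easy to demonstrate on a one-dimensional signal. The sketch below compares three generic downsampling strategies (plain subsampling, block averaging, and block maximum) on a flat background containing one narrow feature; the function names are illustrative, and real volume pipelines apply the same ideas in three dimensions.

```python
from typing import List


def decimate_stride(values: List[float], factor: int) -> List[float]:
    """Keep every `factor`-th sample (naive subsampling)."""
    return values[::factor]


def decimate_mean(values: List[float], factor: int) -> List[float]:
    """Average each block of `factor` samples (box-filter downsampling)."""
    return [sum(values[i:i + factor]) / len(values[i:i + factor])
            for i in range(0, len(values), factor)]


def decimate_max(values: List[float], factor: int) -> List[float]:
    """Keep the maximum of each block, preserving peak magnitudes."""
    return [max(values[i:i + factor]) for i in range(0, len(values), factor)]


# A flat background with one narrow feature (e.g. a shock front or hot spot).
signal = [0.0] * 16
signal[5] = 10.0

print(max(decimate_stride(signal, 4)))  # 0.0  -> the feature disappears entirely
print(max(decimate_mean(signal, 4)))    # 2.5  -> the feature survives but is smeared
print(max(decimate_max(signal, 4)))     # 10.0 -> the peak survives, its shape does not
```

No single strategy is lossless: each preserves a different property of the data, which is why the choice of decimation method is itself a scientific decision.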

Collaboration and multi-user functionality add further complexity. While platforms such as Spatial and Mozilla Hubs (which Mozilla discontinued in 2024) enable shared virtual environments, they lack the precise tracking and data synchronization required for collaborative scientific analysis. Network latency compounds these challenges, particularly when researchers at distributed locations manipulate the same dataset simultaneously.
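One common way to keep shared view parameters (an isosurface threshold, a clipping plane, a dataset transform) consistent across participants is a last-writer-wins register ordered by Lamport timestamps. The sketch below is a hypothetical design, not the mechanism used by any platform named above: each replica applies local edits immediately, broadcasts them, and resolves concurrent edits by comparing (timestamp, site id) pairs, so every replica converges to the same value regardless of message arrival order.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Tuple

Message = Tuple[str, int, int, Any]  # (key, lamport_time, site_id, value)


@dataclass
class Replica:
    """One participant's copy of shared scene state (hypothetical design)."""
    site_id: int
    clock: int = 0
    # key -> (lamport_time, site_id, value); tuple order gives a total order on writes
    state: Dict[str, Tuple[int, int, Any]] = field(default_factory=dict)

    def local_update(self, key: str, value: Any) -> Message:
        self.clock += 1
        entry = (self.clock, self.site_id, value)
        self.state[key] = entry
        return (key, *entry)  # message to broadcast to the other replicas

    def receive(self, msg: Message) -> None:
        key, ts, site, value = msg
        self.clock = max(self.clock, ts) + 1  # Lamport receive rule
        current = self.state.get(key)
        # Last-writer-wins: the higher (timestamp, site_id) pair takes precedence.
        if current is None or (ts, site) > current[:2]:
            self.state[key] = (ts, site, value)

    def value(self, key: str) -> Any:
        return self.state[key][2]
```

Last-writer-wins silently discards one of two concurrent edits, which is acceptable for view parameters but not for annotations or data edits that must all be preserved; those usually call for richer conflict-free replicated data types (CRDTs) or operational transformation.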

Integration with existing scientific workflows represents another substantial hurdle. Current spatial computing platforms operate largely in isolation from established research tools like MATLAB, Python scientific libraries, and domain-specific simulation software. This disconnection forces researchers to export data through multiple conversion steps, introducing potential errors and significantly extending analysis timelines.
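One way to shorten the conversion chain is to write analysis results directly from Python into a format that visualization tools already read. The sketch below emits the ASCII legacy VTK format (a `STRUCTURED_POINTS` dataset), which ParaView can open; the function name and its defaults are hypothetical, and binary or XML-based VTK formats would be preferable for large volumes.

```python
from typing import Sequence, Tuple


def write_vtk_structured_points(path: str,
                                dims: Tuple[int, int, int],
                                values: Sequence[float],
                                spacing: Tuple[float, float, float] = (1.0, 1.0, 1.0),
                                origin: Tuple[float, float, float] = (0.0, 0.0, 0.0),
                                name: str = "scalars") -> None:
    """Write a scalar volume as an ASCII legacy-format VTK file.

    `values` must be flattened with x varying fastest, then y, then z,
    which is the point ordering the STRUCTURED_POINTS dataset expects.
    """
    nx, ny, nz = dims
    n = nx * ny * nz
    if len(values) != n:
        raise ValueError(f"expected {n} values, got {len(values)}")
    with open(path, "w") as f:
        f.write("# vtk DataFile Version 3.0\n")
        f.write("volume exported from a Python analysis script\n")
        f.write("ASCII\n")
        f.write("DATASET STRUCTURED_POINTS\n")
        f.write(f"DIMENSIONS {nx} {ny} {nz}\n")
        f.write("ORIGIN {} {} {}\n".format(*origin))
        f.write("SPACING {} {} {}\n".format(*spacing))
        f.write(f"POINT_DATA {n}\n")
        f.write(f"SCALARS {name} float 1\n")
        f.write("LOOKUP_TABLE default\n")
        for v in values:
            f.write(f"{v}\n")
```

If the volume lives in a NumPy array indexed as `a[z, y, x]`, passing `a.ravel().tolist()` yields the required x-fastest ordering, because C-order flattening varies the last index fastest.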

Despite these challenges, emerging solutions show promise in addressing core limitations. Cloud-based rendering services are beginning to offload computational demands from local hardware, while advances in spatial tracking algorithms improve precision for scientific measurement applications. However, the gap between current capabilities and the demanding requirements of scientific visualization workflows remains substantial, requiring continued innovation across hardware, software, and integration methodologies.

Existing Spatial Computing Solutions for Scientific Applications

01 Augmented Reality and Virtual Reality Integration

Spatial computing platforms integrate augmented reality (AR) and virtual reality (VR) technologies to create immersive experiences. These platforms utilize head-mounted displays, sensors, and tracking systems to overlay digital content onto the physical world or create fully virtual environments. The integration enables users to interact with three-dimensional digital objects in real time, providing enhanced visualization and interaction capabilities for applications including gaming, training, and design.

- Augmented Reality and Virtual Reality Integration: Spatial computing platforms integrate augmented reality (AR) and virtual reality (VR) technologies to create immersive user experiences. These platforms enable the overlay of digital content onto physical environments or the creation of fully virtual spaces. The systems utilize advanced rendering techniques, head-mounted displays, and spatial tracking to provide seamless interaction between users and digital objects in three-dimensional space.

- Spatial Mapping and Environment Recognition: Advanced spatial computing platforms employ sophisticated mapping and environment recognition technologies to understand and interpret physical spaces. These systems use sensors, cameras, and depth-sensing technologies to create detailed three-dimensional maps of surroundings. The platforms can identify surfaces, objects, and spatial relationships, enabling accurate placement of virtual content and facilitating natural interaction within mixed reality environments.

- Gesture and Motion Tracking Systems: Spatial computing platforms incorporate gesture recognition and motion tracking capabilities to enable intuitive user interaction without traditional input devices. These systems utilize computer vision, machine learning algorithms, and sensor fusion to detect and interpret hand movements, body gestures, and eye tracking. The technology allows users to manipulate virtual objects, navigate interfaces, and interact with digital content through natural movements and gestures.

- Multi-User Collaboration and Shared Experiences: Modern spatial computing platforms support multi-user collaboration by enabling multiple participants to share the same virtual or augmented space simultaneously. These systems synchronize spatial data, user positions, and interactions across different devices and locations. The platforms facilitate collaborative work, social interactions, and shared experiences by maintaining consistent spatial references and enabling real-time communication between users in mixed reality environments.

- Cloud-Based Processing and Edge Computing: Spatial computing platforms leverage cloud-based processing and edge computing architectures to handle computationally intensive tasks such as real-time rendering, spatial analysis, and data processing. These distributed computing approaches enable platforms to offload processing from local devices, reduce latency, and provide scalable performance. The systems balance computational loads between edge devices and cloud servers to optimize responsiveness while maintaining high-quality spatial computing experiences.
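The load-balancing decision described in the last bullet can be reduced, in its simplest form, to comparing estimated end-to-end latency at each candidate site. The sketch below uses a deliberately simple, hypothetical cost model (round trip + upload + compute + download) and ignores queueing, jitter, and video encoding time; the `Task` and `Site` fields and all numbers in the example are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Task:
    compute_gflop: float   # work for one request (e.g. rendering one frame)
    input_mb: float        # data uploaded if the task runs remotely (pose, parameters)
    output_mb: float       # result downloaded if remote (e.g. an encoded frame)


@dataclass
class Site:
    name: str
    gflops: float              # sustained throughput available to this task
    is_local: bool = False     # True for the headset or an on-premises edge box
    uplink_mbps: float = 0.0   # megabits per second, device -> site
    downlink_mbps: float = 0.0
    rtt_ms: float = 0.0        # network round-trip time


def estimated_latency_ms(task: Task, site: Site) -> float:
    compute_ms = task.compute_gflop / site.gflops * 1000.0
    if site.is_local:
        return compute_ms  # no network transfer for on-device work
    upload_ms = task.input_mb * 8.0 / site.uplink_mbps * 1000.0
    download_ms = task.output_mb * 8.0 / site.downlink_mbps * 1000.0
    return site.rtt_ms + upload_ms + compute_ms + download_ms


def choose_site(task: Task, sites: List[Site]) -> Site:
    """Pick the site with the lowest estimated latency for this task."""
    return min(sites, key=lambda s: estimated_latency_ms(task, s))


headset = Site("headset", gflops=50.0, is_local=True)
cloud = Site("cloud", gflops=5000.0, uplink_mbps=50.0, downlink_mbps=100.0, rtt_ms=30.0)

heavy = Task(compute_gflop=20.0, input_mb=0.1, output_mb=0.5)
light = Task(compute_gflop=0.5, input_mb=0.1, output_mb=0.5)
print(choose_site(heavy, [headset, cloud]).name)  # cloud   (400 ms local vs 90 ms remote)
print(choose_site(light, [headset, cloud]).name)  # headset (10 ms local vs ~86 ms remote)
```

The same comparison explains the hybrid pattern: heavy volume rendering is worth the network round trip, while latency-critical work such as head tracking stays on the device.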

02 Spatial Mapping and Environment Recognition

Advanced spatial computing platforms employ sophisticated mapping technologies to scan, recognize, and understand physical environments. These systems use depth sensors, cameras, and computer vision algorithms to create detailed three-dimensional maps of surroundings. The platforms can identify surfaces, objects, and spatial relationships, enabling accurate placement of virtual content and facilitating natural interaction between digital and physical elements. This capability is essential for applications requiring precise spatial awareness and environmental understanding.

03 Multi-User Collaboration and Shared Experiences

Spatial computing platforms support collaborative environments where multiple users can simultaneously interact within shared virtual or mixed reality spaces. These systems synchronize user positions, gestures, and interactions across networked devices, enabling real-time collaboration regardless of physical location. The platforms facilitate shared visualization of data, collaborative design sessions, and interactive meetings, enhancing teamwork and communication through spatially aware interfaces.

04 Gesture and Motion-Based Input Systems

These platforms incorporate advanced input mechanisms that recognize and interpret user gestures, hand movements, and body motions as control commands. Using computer vision, depth sensing, and machine learning algorithms, the systems track user movements in three-dimensional space and translate them into meaningful interactions with virtual content. This natural input method eliminates the need for traditional controllers and enables intuitive manipulation of digital objects within spatial computing environments.

05 Spatial Audio and Haptic Feedback Integration

Spatial computing platforms enhance immersion through integrated spatial audio systems and haptic feedback mechanisms. These technologies provide directional sound that corresponds to virtual object positions and tactile sensations that simulate physical interactions. The platforms process audio signals to create three-dimensional soundscapes and coordinate haptic actuators to deliver touch-based feedback, creating more realistic and engaging user experiences in virtual and mixed reality environments.
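A minimal sketch of the two simplest ingredients of directional sound, assuming a single listener on a horizontal plane: inverse-distance attenuation and a constant-power stereo pan law. Production spatial audio engines typically use head-related transfer functions (HRTFs), which also convey elevation and front/back cues that stereo panning cannot; the function name and the `ref_distance` parameter here are illustrative.

```python
import math
from typing import Tuple


def spatialize(source_xy: Tuple[float, float],
               listener_xy: Tuple[float, float] = (0.0, 0.0),
               ref_distance: float = 1.0) -> Tuple[float, float]:
    """Return (left_gain, right_gain) for a source on the horizontal plane.

    Assumes the listener faces +y, so +x is to the listener's right.
    """
    dx = source_xy[0] - listener_xy[0]
    dy = source_xy[1] - listener_xy[1]
    distance = math.hypot(dx, dy)
    # Inverse-distance law, clamped so sources closer than ref_distance are not boosted.
    attenuation = ref_distance / max(distance, ref_distance)
    # Azimuth relative to straight ahead, mapped to pan in [-1 (left), +1 (right)].
    # Sources behind the listener are clamped to fully left or right.
    azimuth = math.atan2(dx, dy)
    pan = max(-1.0, min(1.0, azimuth / (math.pi / 2)))
    # Constant-power pan law: left^2 + right^2 == attenuation^2 at every pan position.
    theta = (pan + 1.0) * math.pi / 4.0
    return attenuation * math.cos(theta), attenuation * math.sin(theta)
```

The constant-power law is used instead of linear crossfading so that perceived loudness does not dip when a source passes directly in front of the listener.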

Key Players in Spatial Computing and Scientific Software Industry

The market for spatial computing platforms for scientific visualization is an emerging sector in its early growth phase, with substantial room for technological advancement and expanding commercial opportunities. Players range from established technology companies such as NVIDIA, Microsoft (through its patent-holding entity Microsoft Technology Licensing), IBM, and Adobe to specialized visualization companies such as Ziosoft and Luminary Cloud. Academic institutions including Yale University, UNC Chapel Hill, and Beijing Institute of Technology contribute foundational research, while aerospace leaders such as Boeing and defense contractors drive enterprise adoption. Healthcare visualization is advancing through companies such as Siemens Healthineers and Philips, alongside early mixed reality pioneers such as Osterhout Group. Technology maturity varies widely across segments: GPU computing and cloud platforms have reached commercial viability, while spatial computing integration is still in development, leaving room for both incremental improvements and breakthrough innovations.

International Business Machines Corp.

Technical Solution: IBM's approach to spatial computing focuses on quantum visualization and AI-enhanced scientific data analysis through its Watson and quantum computing initiatives. It combines traditional high-performance computing with quantum simulation, enabling visualization of quantum states and molecular interactions. IBM Cloud Pak for Data provides spatial analytics tools that use machine learning algorithms to process and visualize large-scale scientific datasets. The platform offers specialized modules for materials science, drug discovery, and climate modeling, with an emphasis on handling multi-dimensional data structures and supporting interactive exploration by researchers.

Strengths: Advanced AI integration, quantum computing expertise, enterprise-grade security and scalability. Weaknesses: Complex implementation requirements, high licensing costs, limited consumer market presence.

Adobe, Inc.

Technical Solution: Adobe's approach builds on its Creative Cloud ecosystem, adapting its 3D modeling and visualization tools to scientific applications. The Substance 3D suite provides photorealistic material rendering useful for scientific visualization, while Adobe Aero enables augmented reality experiences for education and research. The platform integrates Adobe's AI-powered Sensei technology to automate complex visualization tasks and aid data interpretation, and its cloud-based collaboration tools let research teams share and iterate on 3D scientific models with version control and real-time feedback.

Strengths: Intuitive user interface, strong creative tools integration, excellent rendering quality. Weaknesses: Limited scientific-specific features, subscription-based pricing model, less suitable for real-time simulation.

Core Technologies in Immersive Scientific Data Rendering

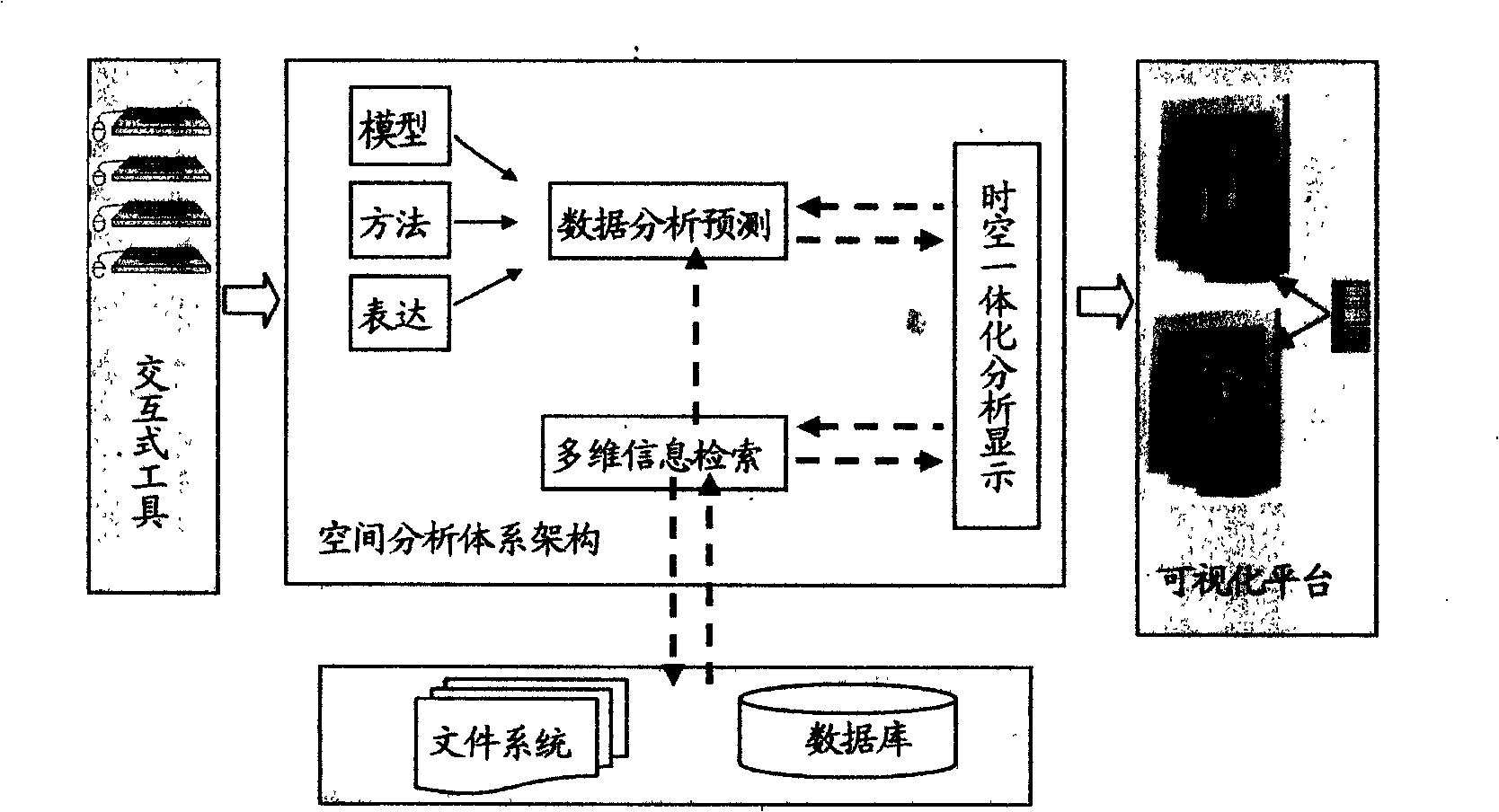

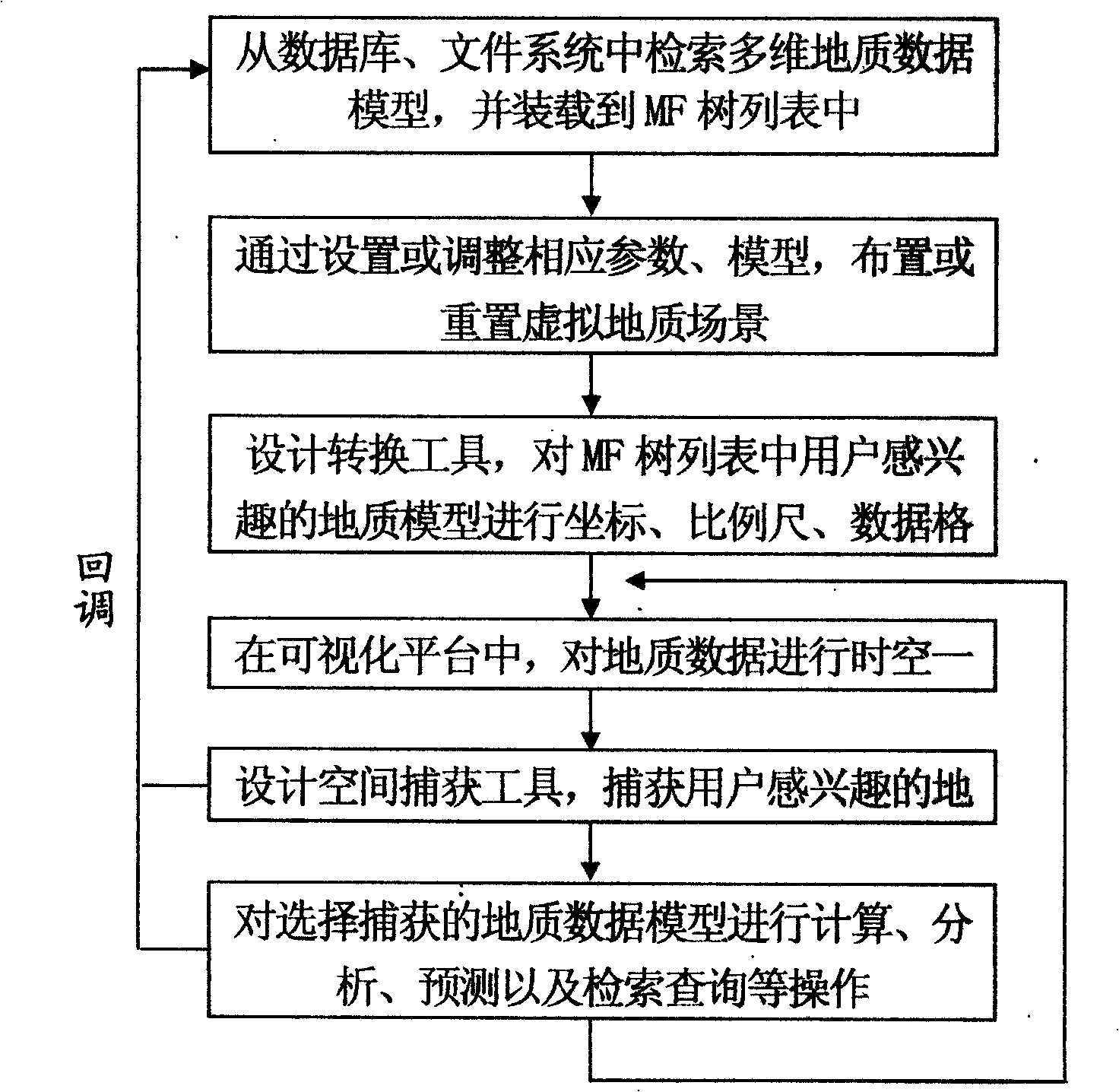

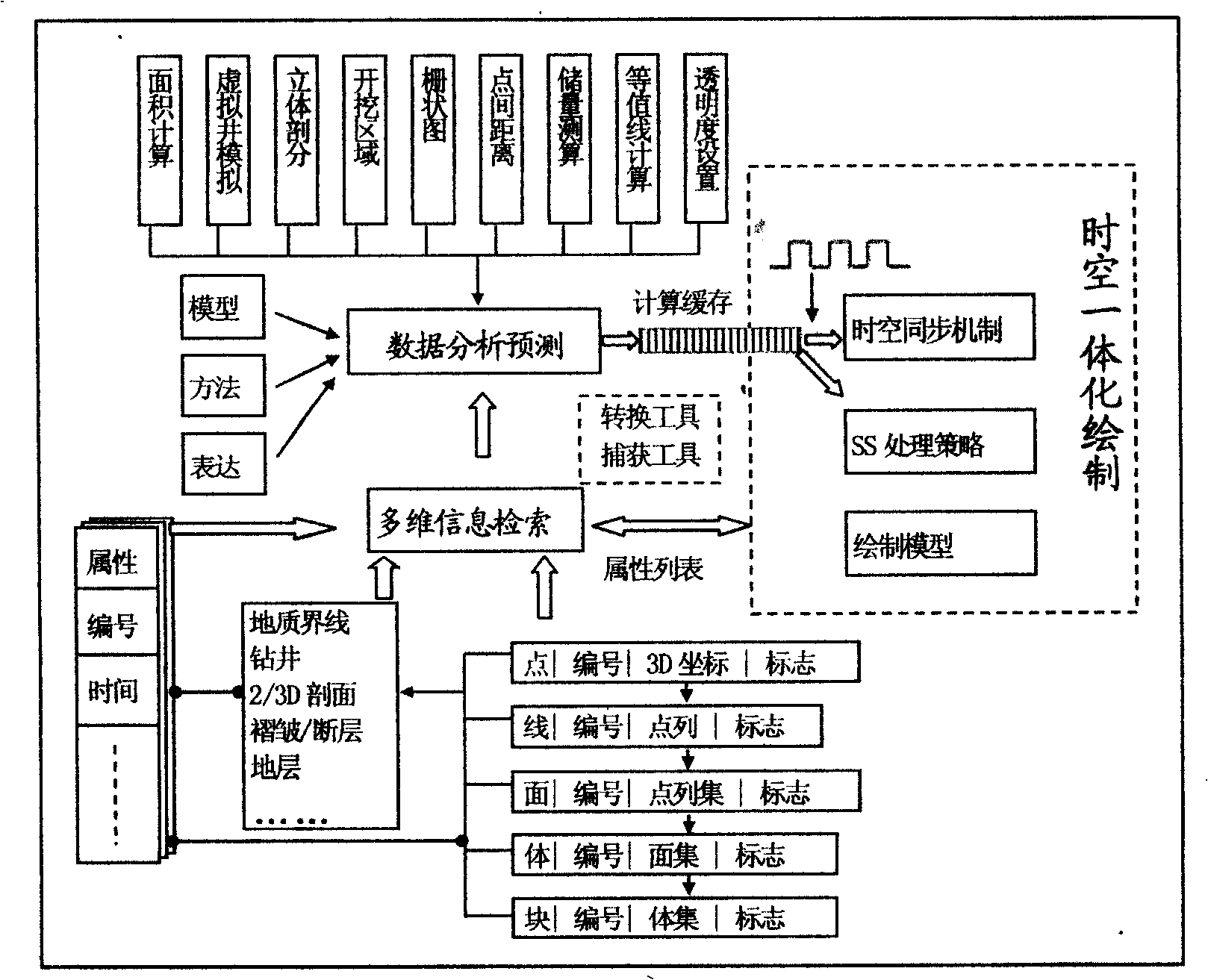

Visual analyzing and predicting method based on a virtual geological model

Patent · Inactive · CN101515372A

Innovation

- Uses interactive visualization tools built on a virtual geological model to achieve integrated spatio-temporal mapping and analysis: the method retrieves multi-dimensional geological data, provides conversion tools, spatio-temporal synchronization mechanisms, and SS strategies, and offers spatial capture tools for calculation and prediction, enabling fast and accurate processing and display of 3D geological data.

Spatial data visualization

Patent · Active · US9799143B2

Innovation

- A system and method for spatial data visualization that includes a path bundle processing module to normalize and aggregate data from multiple sources, an analytics module to perform computations and identify spatial patterns, and a visualization module to generate heatmaps and trajectory paths, enabling efficient analysis and user interaction tracking.
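The heatmap stage of such a pipeline can be illustrated by binning trajectory points from several sources into a regular grid. This is a generic sketch of grid binning, not the method claimed in US9799143B2; the function name, the bounds convention, and the rule of dropping out-of-range points are assumptions.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def heatmap(points: Iterable[Tuple[float, float]],
            bounds: Tuple[float, float, float, float],
            nx: int, ny: int) -> List[List[int]]:
    """Count points per cell of an nx-by-ny grid covering bounds=(xmin, ymin, xmax, ymax).

    Returns rows indexed by y cell, each row listing counts by x cell.
    """
    xmin, ymin, xmax, ymax = bounds
    if xmax <= xmin or ymax <= ymin:
        raise ValueError("bounds must have positive width and height")
    counts: Counter = Counter()
    for x, y in points:
        if not (xmin <= x <= xmax and ymin <= y <= ymax):
            continue  # drop points outside the region of interest
        # Map to a cell index; points exactly on the max edge go into the last cell.
        i = min(int((x - xmin) / (xmax - xmin) * nx), nx - 1)
        j = min(int((y - ymin) / (ymax - ymin) * ny), ny - 1)
        counts[(i, j)] += 1
    return [[counts[(i, j)] for i in range(nx)] for j in range(ny)]
```

Trajectories from multiple sources can be aggregated by passing a combined iterable, for example `itertools.chain(track_a, track_b)`, once their coordinates have been normalized to a common frame.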

Data Privacy and Security in Cloud-Based Spatial Platforms

Cloud-based spatial computing platforms for scientific visualization face serious data privacy and security challenges because of the sensitivity of research data and the distributed architecture of cloud environments. Scientific datasets often contain proprietary research findings, personal information from human-subject studies, or classified government research that requires stringent protection. The multi-tenant nature of cloud platforms adds complexity, making data isolation and access control critical to maintaining confidentiality.

Data encryption is the foundational security layer for cloud-based spatial platforms. Encryption in transit and at rest protects scientific datasets during transmission and storage, with AES-256 widely treated as an industry benchmark. However, conventional encryption does not protect data while it is being processed: datasets must be decrypted before they can be rendered or analyzed, which creates potential vulnerability windows. Homomorphic encryption is an emerging answer, allowing computation on encrypted data without exposing the underlying values, although fully homomorphic schemes currently add overhead of several orders of magnitude, which rules them out for real-time rendering today.
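To make "computation on encrypted data" concrete, the sketch below implements textbook Paillier encryption, a partially (additively) homomorphic scheme: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. It is a toy with tiny, hard-coded primes and is completely insecure; it is not fully homomorphic encryption, which supports arbitrary computation at far higher cost, and it is not a recommendation for any production system.

```python
import math
import secrets

# Toy key with tiny primes. INSECURE: real Paillier keys use primes of 1024+ bits each.
P, Q = 1009, 1013
N = P * Q
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(p-1, q-1)
MU = pow(LAM, -1, N)                                # valid shortcut because g = N + 1


def encrypt(m: int) -> int:
    """Encrypt 0 <= m < N using fresh randomness r coprime to N."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (pow(N + 1, m, N2) * pow(r, N, N2)) % N2


def decrypt(c: int) -> int:
    L = (pow(c, LAM, N2) - 1) // N
    return (L * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the plaintexts (mod N) without decrypting."""
    return (c1 * c2) % N2


def scale_encrypted(c: int, k: int) -> int:
    """Raising a ciphertext to the power k multiplies its plaintext by k (mod N)."""
    return pow(c, k, N2)
```

In a hypothetical collaboration, two institutions could each encrypt a local statistic (for example, a voxel count above a threshold) and an untrusted aggregator could combine them with `add_encrypted` without ever seeing either value.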

Access control mechanisms in cloud-based spatial platforms require sophisticated identity and access management systems tailored to scientific collaboration workflows. Role-based access control (RBAC) and attribute-based access control (ABAC) frameworks provide granular permissions management, allowing researchers to share specific datasets or visualization components while maintaining overall data sovereignty. Multi-factor authentication and zero-trust security models are increasingly adopted to verify user identities and validate access requests continuously.
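The sketch below layers the two models: a role-based check decides whether an action is permitted at all, and attribute-based conditions (project membership, clearance versus dataset sensitivity, MFA status for exports) are then evaluated on every request, in the spirit of zero-trust verification. The roles, the 0 to 3 sensitivity scale, and the MFA rule are hypothetical policy choices for illustration.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Set


@dataclass(frozen=True)
class User:
    user_id: str
    roles: FrozenSet[str]
    projects: FrozenSet[str]
    clearance: int          # hypothetical scale: 0 = public ... 3 = restricted
    mfa_verified: bool


@dataclass(frozen=True)
class Dataset:
    dataset_id: str
    project: str
    sensitivity: int        # same hypothetical scale as clearance


# RBAC layer: which actions each (hypothetical) role may perform at all.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "viewer": {"view"},
    "analyst": {"view", "annotate"},
    "owner": {"view", "annotate", "export", "share"},
}


def is_allowed(user: User, action: str, ds: Dataset) -> bool:
    # 1. RBAC: at least one of the user's roles must grant the action.
    if not any(action in ROLE_PERMISSIONS.get(r, set()) for r in user.roles):
        return False
    # 2. ABAC: attribute conditions evaluated on every request.
    if ds.project not in user.projects:
        return False
    if user.clearance < ds.sensitivity:
        return False
    if action in {"export", "share"} and not user.mfa_verified:
        return False
    return True
```

Keeping role grants coarse and pushing fine-grained conditions into attribute checks lets a collaboration add new datasets or projects without creating new roles.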

Data residency and sovereignty present significant challenges for international scientific collaborations. Regulatory frameworks such as GDPR and HIPAA, along with various national data protection laws, impose strict requirements on where data may be transferred and how it is processed. Cloud-based spatial platforms must therefore restrict storage and processing to approved regions (geo-fencing) and provide transparent data lineage tracking, so that collaborations remain compliant in every jurisdiction while keeping the benefits of cloud computing.
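A geo-fencing check can be as simple as intersecting the regions allowed by every jurisdiction tag attached to a dataset before a job is scheduled. In this sketch the policy table, tag names, and region names are hypothetical institutional choices, not requirements taken from any specific regulation; unknown or missing tags deny by default.

```python
from typing import Dict, List, Optional, Set

# Hypothetical institutional policy: jurisdiction tag -> regions where data may be stored and processed.
RESIDENCY_POLICY: Dict[str, Set[str]] = {
    "EU-PERSONAL": {"eu-west", "eu-central"},
    "US-ONLY": {"us-east", "us-west"},
    "UNRESTRICTED": {"eu-west", "eu-central", "us-east", "us-west", "ap-south"},
}


def allowed_regions(jurisdictions: List[str]) -> Set[str]:
    """Regions permitted by every tag on a dataset; untagged or unknown tags permit nothing."""
    if not jurisdictions:
        return set()
    sets = [RESIDENCY_POLICY.get(tag, set()) for tag in jurisdictions]
    return set.intersection(*sets)


def pick_region(jurisdictions: List[str], candidates: List[str]) -> Optional[str]:
    """Return the first candidate (e.g. ordered by latency) that satisfies residency, else None."""
    allowed = allowed_regions(jurisdictions)
    for region in candidates:
        if region in allowed:
            return region
    return None
```

A dataset that merges records under two incompatible policies yields an empty intersection, which surfaces the conflict at scheduling time instead of after the data has already moved.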

Emerging security technologies such as confidential computing and secure enclaves offer promising solutions for protecting sensitive scientific data during processing. These hardware-based security features create isolated execution environments within cloud infrastructure, enabling secure computation on sensitive datasets without exposing data to cloud providers or other tenants. Integration of blockchain technology for audit trails and data provenance tracking is also gaining traction in scientific computing environments.
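The tamper-evidence property that makes blockchain-style ledgers attractive for audit trails comes from hash chaining, which can be sketched without any distributed ledger at all. In this minimal sketch (function names and record fields are hypothetical), each entry stores the SHA-256 hash of the previous entry's hash concatenated with its own canonical JSON, so altering any past record breaks verification unless every later hash is recomputed as well.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal records always hash identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(log: List[Dict[str, Any]], record: Dict[str, Any]) -> None:
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev, "hash": _digest(prev, record)})


def verify(log: List[Dict[str, Any]]) -> bool:
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["record"]):
            return False
        prev = entry["hash"]
    return True
```

A single hash chain only proves tampering if its latest hash is also recorded somewhere the log's operator cannot rewrite, such as a partner institution or a public ledger; that external anchoring is what distributed ledgers add.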

Data encryption represents the foundational security layer for cloud-based spatial platforms. End-to-end encryption protocols ensure that scientific datasets remain protected during transmission and storage, with advanced encryption standards like AES-256 becoming industry benchmarks. However, the computational intensity of spatial visualization processing creates unique challenges, as encrypted data must be decrypted for processing, creating potential vulnerability windows. Homomorphic encryption techniques are emerging as promising solutions, enabling computation on encrypted data without exposing the underlying information.

Access control mechanisms in cloud-based spatial platforms require sophisticated identity and access management systems tailored to scientific collaboration workflows. Role-based access control (RBAC) and attribute-based access control (ABAC) frameworks provide granular permissions management, allowing researchers to share specific datasets or visualization components while maintaining overall data sovereignty. Multi-factor authentication and zero-trust security models are increasingly adopted to verify user identities and validate access requests continuously.

Data residency and sovereignty concerns present significant challenges for international scientific collaborations. Regulatory frameworks like GDPR, HIPAA, and various national data protection laws impose strict requirements on data location and processing. Cloud-based spatial platforms must implement geo-fencing capabilities and provide transparent data lineage tracking to ensure compliance with jurisdictional requirements while maintaining the collaborative benefits of cloud computing.

Emerging security technologies such as confidential computing and secure enclaves offer promising protection for sensitive scientific data during processing. These hardware-based features create isolated execution environments within cloud infrastructure whose memory is encrypted and inaccessible to the host, allowing computation on sensitive datasets with reduced exposure to the cloud provider and to other tenants. Blockchain-based ledgers for audit trails and data provenance tracking are also attracting interest in scientific computing environments.
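The tamper-evidence that blockchain audit trails rely on comes from hash chaining: each log entry embeds the hash of its predecessor, so altering any past entry breaks every later link. This minimal Python sketch implements only that hash chain, with no distribution or consensus. Because anyone able to rewrite the entire log could recompute every hash, a real deployment would also anchor the latest hash somewhere the log's operator cannot modify. The event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys) so the same event always hashes identically.
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list[dict], event: dict) -> None:
    """Add an event linked to the hash of the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": _digest(prev, event)})


def verify(log: list[dict]) -> bool:
    """Recompute every link; any edited entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True


if __name__ == "__main__":
    log: list[dict] = []
    append(log, {"user": "alice", "action": "read", "dataset": "sst-2025"})
    append(log, {"user": "bob", "action": "export", "dataset": "sst-2025"})
    print(verify(log))                 # True
    log[0]["event"]["user"] = "mallory"
    print(verify(log))                 # False
```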

Hardware Requirements and Performance Optimization Strategies

Spatial computing platforms for scientific visualization demand hardware capable of processing massive datasets while maintaining real-time rendering performance. The core requirement is a high-performance GPU with substantial video memory; complex volumetric datasets often call for 16 GB of VRAM or more. Multi-core CPUs with high clock speeds handle data preprocessing and spatial transformation calculations, while system memory of 64 GB or more allows multi-dimensional scientific datasets to be handled efficiently.
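A quick footprint estimate shows why VRAM and system memory fill up quickly. This Python sketch computes the raw size of a regular-grid volume, assuming uncompressed values and ignoring gradients, acceleration structures, and rendering buffers, all of which add to the real total. At 32-bit floats, a single 2048³ scalar field already needs 32 GiB, more than a 16 GB GPU can hold.

```python
GIB = 1024**3


def volume_bytes(nx: int, ny: int, nz: int, bytes_per_value: int = 4,
                 variables: int = 1, timesteps: int = 1) -> int:
    """Raw size of a regular-grid volume (no compression, no extra buffers)."""
    return nx * ny * nz * bytes_per_value * variables * timesteps


if __name__ == "__main__":
    for dim in (512, 1024, 2048):
        print(f"{dim}^3 float32: {volume_bytes(dim, dim, dim) / GIB:.1f} GiB")
    # 512^3 float32: 0.5 GiB
    # 1024^3 float32: 4.0 GiB
    # 2048^3 float32: 32.0 GiB
    four_vars = volume_bytes(1024, 1024, 1024, variables=4)
    print(f"1024^3, 4 float32 variables: {four_vars / GIB:.1f} GiB")  # 16.0 GiB
```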

Storage infrastructure is a critical bottleneck in spatial computing workflows. Arrays of high-speed NVMe SSDs striped with RAID (for example RAID 0, or RAID 10 when redundancy is also needed) provide the bandwidth needed to stream large-scale simulation data. The network must likewise support high-throughput data transfer, particularly when rendering is distributed across multiple nodes or data is pulled from remote scientific databases.
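Whether a storage array can keep up with time-varying playback comes down to simple arithmetic: bytes per timestep multiplied by timesteps per second. The sketch below also estimates how many drives to stripe across; the per-drive throughput and the striping efficiency factor are assumptions that should be replaced with measured values for actual hardware.

```python
import math

GIB = 1024**3


def required_throughput(bytes_per_timestep: int, timesteps_per_second: float) -> float:
    """Sustained read rate (bytes/s) needed to play back a time series."""
    return bytes_per_timestep * timesteps_per_second


def drives_needed(throughput: float, per_drive: float, efficiency: float = 0.8) -> int:
    """Drives to stripe across, assuming each sustains `per_drive` bytes/s and
    striping reaches only `efficiency` of the ideal aggregate (assumed value)."""
    return math.ceil(throughput / (per_drive * efficiency))


if __name__ == "__main__":
    timestep = 512**3 * 4                       # one 512^3 float32 field = 512 MiB
    rate = required_throughput(timestep, 10)    # 10 timesteps per second
    print(f"{rate / GIB:.1f} GiB/s")            # 5.0 GiB/s
    # Hypothetical drive sustaining 3 GiB/s of sequential reads:
    print(drives_needed(rate, 3 * GIB))         # 3
```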

Performance optimization strategies focus on parallel processing, using GPU compute shaders and CUDA kernels. Level-of-detail (LOD) algorithms adjust rendering complexity according to each region's distance from the viewer and the viewing angle, significantly reducing computational overhead. Temporal coherence techniques cache intermediate results between frames, exploiting the fact that during interactive exploration the view usually changes only slightly from one frame to the next.

Memory management relies on caching hierarchies that keep frequently accessed data regions in faster memory. Out-of-core rendering visualizes datasets larger than available system memory by streaming in only the data segments needed for the current viewport. Compression algorithms designed for scientific data types reduce the memory footprint while preserving numerical accuracy, either losslessly or within a user-specified error bound.
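One common building block for out-of-core rendering is a least-recently-used (LRU) cache of volume bricks bounded by a memory budget. In this Python sketch, `load_brick` is a hypothetical callback standing in for a disk or network read, and `prefetch` lets the viewer request the bricks the current viewport needs before drawing. A real hierarchy would stack several such levels (GPU memory, system memory, local SSD).

```python
from collections import OrderedDict
from typing import Callable, Iterable


class BrickCache:
    """LRU cache of volume bricks bounded by a byte budget."""

    def __init__(self, capacity_bytes: int, load_brick: Callable[[tuple], bytes]):
        self.capacity = capacity_bytes
        self.load_brick = load_brick     # hypothetical disk/network reader
        self.used = 0
        self.misses = 0
        self.bricks: OrderedDict = OrderedDict()

    def get(self, key: tuple) -> bytes:
        if key in self.bricks:
            self.bricks.move_to_end(key)  # mark as most recently used
            return self.bricks[key]
        self.misses += 1
        data = self.load_brick(key)
        self.bricks[key] = data
        self.used += len(data)
        # Evict least recently used bricks until back under budget,
        # never evicting the brick that was just loaded.
        while self.used > self.capacity and len(self.bricks) > 1:
            _, old = self.bricks.popitem(last=False)
            self.used -= len(old)
        return data

    def prefetch(self, keys: Iterable[tuple]) -> None:
        """Pull in the bricks the current viewport is about to need."""
        for key in keys:
            self.get(key)


if __name__ == "__main__":
    cache = BrickCache(capacity_bytes=2048, load_brick=lambda key: bytes(1024))
    cache.prefetch([(0, 0, 0), (0, 0, 1)])
    cache.get((0, 0, 0))                 # hit: (0, 0, 1) is now the LRU brick
    cache.get((0, 0, 2))                 # miss: evicts (0, 0, 1)
    print(list(cache.bricks))            # [(0, 0, 0), (0, 0, 2)]
```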

Distributed computing strategies partition rendering workloads across multiple processing nodes, allowing scientific visualization applications to scale to large deployments. Load-balancing algorithms redistribute tasks dynamically based on real-time performance metrics, improving resource utilization across heterogeneous hardware configurations.
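As one simple illustration of load balancing, the greedy longest-processing-time-first (LPT) heuristic below assigns each brick, largest estimated cost first, to the node with the least accumulated work. Re-running it each frame with per-brick render times measured on the previous frame gives a basic form of dynamic rebalancing. The brick names, node names, and cost values are hypothetical.

```python
import heapq


def assign_bricks(costs: dict[str, float], nodes: list[str]) -> dict[str, list[str]]:
    """Greedy LPT: hand the most expensive remaining brick to the least-loaded node."""
    heap = [(0.0, node) for node in nodes]   # (accumulated load, node name)
    heapq.heapify(heap)
    plan: dict[str, list[str]] = {node: [] for node in nodes}
    for brick, cost in sorted(costs.items(), key=lambda kv: kv[1], reverse=True):
        load, node = heapq.heappop(heap)
        plan[node].append(brick)
        heapq.heappush(heap, (load + cost, node))
    return plan


if __name__ == "__main__":
    # Hypothetical per-brick render times (ms) measured on the previous frame.
    costs = {"b0": 8.0, "b1": 7.0, "b2": 6.0, "b3": 5.0, "b4": 4.0}
    print(assign_bricks(costs, ["gpu-a", "gpu-b"]))
    # {'gpu-a': ['b0', 'b3', 'b4'], 'gpu-b': ['b1', 'b2']}
```

With these costs, LPT gives gpu-a a load of 17 and gpu-b a load of 13, whereas a perfect split is 15 and 15 ({b0, b1} versus {b2, b3, b4}). Greedy heuristics like this improve balance cheaply but do not guarantee an optimal assignment.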
