RISC vs CISC: Which Supports Scalability in Cloud Environments?

MAR 26, 2026 | 9 MIN READ

RISC vs CISC Architecture Evolution and Cloud Computing Goals

The evolution of processor architectures has been fundamentally shaped by the contrasting philosophies of Reduced Instruction Set Computing (RISC) and Complex Instruction Set Computing (CISC). RISC architecture emerged in the 1980s as a response to the growing complexity of CISC processors, advocating for simplified instruction sets that could execute faster and more efficiently. This architectural paradigm emphasized the principle that simpler instructions, executed at higher frequencies, could outperform complex instructions that required multiple clock cycles.

CISC architecture, exemplified by Intel's x86 family, dominated the computing landscape for decades through its comprehensive instruction sets designed to minimize the semantic gap between high-level programming languages and machine code. These processors incorporated complex addressing modes and multi-step operations within single instructions, optimizing for code density and programmer productivity in an era when memory was expensive and compiler technology was less sophisticated.

The historical trajectory of these architectures reveals distinct evolutionary paths driven by different optimization priorities. RISC processors, including ARM and SPARC designs, focused on pipeline efficiency, uniform instruction formats, and simplified decode logic. Meanwhile, CISC architectures evolved through microcode optimization, superscalar execution, and sophisticated branch prediction mechanisms to maintain performance competitiveness.

The advent of cloud computing has fundamentally altered the performance requirements and optimization targets for processor architectures. Cloud environments demand exceptional scalability, energy efficiency, and multi-tenant workload management capabilities. These requirements have shifted the architectural evaluation criteria from traditional metrics like single-threaded performance to more complex considerations including power consumption per operation, thermal design constraints, and parallel processing efficiency.

Modern cloud computing goals encompass horizontal scalability across distributed systems, vertical scalability within individual nodes, and dynamic resource allocation based on workload characteristics. The architecture's ability to support virtualization technologies, container orchestration, and microservices deployment has become paramount. Additionally, cloud providers increasingly prioritize total cost of ownership, which encompasses not only initial hardware costs but also operational expenses related to power consumption, cooling requirements, and data center space utilization.

The convergence of these architectural evolution trends with cloud computing objectives has created new evaluation frameworks for assessing processor suitability in large-scale distributed environments, fundamentally reshaping the RISC versus CISC debate in contemporary computing contexts.

Market Demand for Scalable Cloud Infrastructure Solutions

The global cloud infrastructure market has experienced unprecedented growth driven by digital transformation initiatives across industries. Organizations are increasingly migrating from traditional on-premises systems to cloud-based solutions, creating substantial demand for scalable computing architectures. This shift has intensified focus on the underlying processor architectures that power cloud data centers, particularly the debate between RISC and CISC designs for optimal scalability performance.

Enterprise demand for elastic computing resources has become a critical factor in cloud adoption decisions. Modern businesses require infrastructure that can dynamically scale to handle varying workloads, from baseline operations to peak traffic scenarios. This scalability requirement extends beyond simple capacity expansion to include performance consistency, energy efficiency, and cost optimization across different load conditions.

The rise of containerization and microservices architectures has further amplified the need for processor designs that excel in multi-tenant environments. Cloud service providers must support thousands of concurrent virtual machines and containers while maintaining isolation and performance guarantees. This operational complexity places unique demands on processor architectures, favoring designs that can efficiently handle context switching, memory management, and parallel processing tasks.

Data-intensive applications including artificial intelligence, machine learning, and big data analytics have emerged as significant drivers of cloud infrastructure demand. These workloads require processors capable of handling both compute-intensive operations and high-throughput data processing. The architectural choice between RISC and CISC becomes particularly relevant when considering how different instruction set designs impact performance for these emerging application categories.

Cost optimization remains a primary concern for cloud infrastructure operators managing large-scale deployments. Energy efficiency directly impacts operational expenses, while processor utilization rates affect capital expenditure efficiency. Organizations increasingly evaluate processor architectures based on their ability to deliver consistent performance per watt and performance per dollar metrics across diverse workload patterns.
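As a rough illustration of the performance-per-dollar comparison described above, the sketch below converts an hourly instance price and a measured throughput into a cost per million requests. The instance labels, prices, and throughput figures are hypothetical placeholders, not vendor data; substitute measurements from your own workload.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceMeasurement:
    """One benchmark result for one instance type (all values hypothetical)."""
    name: str
    hourly_price_usd: float
    requests_per_second: float


def cost_per_million_requests(m: InstanceMeasurement) -> float:
    """Dollars spent to serve one million requests at the measured throughput."""
    if m.requests_per_second <= 0:
        raise ValueError("throughput must be positive")
    requests_per_hour = m.requests_per_second * 3600
    return m.hourly_price_usd / requests_per_hour * 1_000_000


def cheapest(measurements: list[InstanceMeasurement]) -> InstanceMeasurement:
    """Return the measurement with the lowest cost per million requests."""
    return min(measurements, key=cost_per_million_requests)


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    candidates = [
        InstanceMeasurement("arm-example", hourly_price_usd=0.30, requests_per_second=950.0),
        InstanceMeasurement("x86-example", hourly_price_usd=0.36, requests_per_second=1000.0),
    ]
    for c in candidates:
        print(f"{c.name}: ${cost_per_million_requests(c):.4f} per million requests")
    print("lowest cost:", cheapest(candidates).name)
```

The same structure works for performance per watt if the hourly price is replaced with measured energy use.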

The growing emphasis on edge computing and distributed cloud architectures has created additional market pressure for scalable processor solutions. As computing moves closer to data sources and end users, the demand for architectures that can scale efficiently across different deployment scenarios continues to expand, influencing long-term infrastructure investment decisions.

Current State of RISC and CISC in Cloud Deployments

RISC and CISC architectures currently dominate different segments of cloud computing infrastructure, each demonstrating distinct advantages in specific deployment scenarios. The contemporary cloud landscape reveals a clear architectural divide, with RISC-based processors gaining significant momentum in hyperscale data centers while CISC architectures maintain their stronghold in enterprise and hybrid cloud environments.

ARM-based RISC processors have achieved remarkable penetration in cloud deployments, particularly following Amazon Web Services' introduction of Graviton processors. Major cloud providers including AWS, Google Cloud, and Microsoft Azure now offer ARM-based instances, with AWS reporting up to 40% better price-performance ratios for certain workloads. This adoption trend reflects RISC's inherent advantages in power efficiency and thermal management, critical factors for large-scale cloud operations.

Intel and AMD's x86 CISC processors continue to dominate the overall cloud infrastructure market, holding an estimated 85% share across global data centers. Their established ecosystem, broad software compatibility, and strong single-threaded performance make them the default choice for legacy applications and many compute-intensive workloads. Enterprise customers particularly favor x86 architectures for their proven reliability and extensive toolchain support.

The current deployment patterns reveal architectural specialization based on workload characteristics. RISC processors excel in containerized environments, microservices architectures, and web-scale applications where parallel processing and energy efficiency are paramount. Cloud-native applications increasingly leverage ARM instances for cost optimization and environmental sustainability goals.

Conversely, CISC deployments remain prevalent in database systems, high-performance computing clusters, and applications requiring complex instruction processing. Financial services, scientific computing, and enterprise resource planning systems continue to rely heavily on x86 architectures due to their mature optimization frameworks and predictable performance characteristics.

Geographic distribution shows interesting patterns, with Asian cloud providers more aggressively adopting ARM-based solutions, while North American and European markets maintain stronger x86 preferences. This divergence reflects different market priorities, regulatory requirements, and customer expectations across regions.

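The emergence of custom silicon initiatives by major cloud providers signals a shift toward specialized RISC implementations. Amazon's Graviton series is the clearest example, and Google and Microsoft have since announced their own Arm-based server CPUs (Axion and Cobalt). Google's TPUs are machine-learning accelerators rather than general-purpose RISC CPUs, and Apple's M-series processors target client devices rather than cloud servers, but both reflect the same move toward in-house silicon and reduced dependency on traditional CISC vendors.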

Existing RISC and CISC Solutions for Cloud Scalability

01 Hybrid RISC-CISC architecture for improved scalability

Processor architectures that combine RISC and CISC principles to achieve better scalability by leveraging the simplicity of RISC instruction sets while maintaining compatibility with complex instruction sets. This hybrid approach allows for efficient instruction execution and improved performance scaling across different workload types through dynamic instruction translation and optimization.

- Hybrid RISC-CISC architecture for improved scalability: Processor architectures that combine RISC and CISC principles to achieve better scalability by leveraging the simplicity of RISC instruction sets with the flexibility of CISC operations. These hybrid approaches allow for efficient instruction execution while maintaining compatibility with complex instruction sets, enabling better performance scaling across different workload types.

- Instruction set optimization for scalable processing: Techniques for optimizing instruction sets to enhance processor scalability, including methods for reducing instruction complexity, improving instruction decode efficiency, and enabling parallel execution. These optimizations allow processors to scale performance more effectively by streamlining instruction processing pipelines and reducing execution bottlenecks.

- Multi-core and parallel processing scalability: Architectural approaches for scaling processor performance through multi-core designs and parallel processing capabilities. These solutions address scalability by distributing workloads across multiple processing units, enabling efficient resource utilization and improved throughput for both RISC and CISC based systems.

- Memory hierarchy and cache optimization for scalability: Design strategies for memory systems and cache architectures that support scalable processor performance. These approaches focus on optimizing data access patterns, reducing memory latency, and improving bandwidth utilization to enable effective scaling of both RISC and CISC processor architectures across different performance levels.

- Dynamic instruction translation and execution optimization: Methods for dynamically translating and optimizing instruction execution to improve scalability across different processor architectures. These techniques enable efficient execution of both simple and complex instructions through runtime optimization, instruction reordering, and adaptive execution strategies that scale with varying computational demands.

02 Instruction set translation and emulation techniques

Methods for translating between RISC and CISC instruction sets to enable scalability across different processor architectures. These techniques involve converting complex instructions into simpler operations or vice versa, allowing processors to execute code originally designed for different instruction set architectures while maintaining performance and scalability benefits.

03 Parallel processing and multi-core scalability

Scalability improvements through parallel execution units and multi-core architectures that can be implemented in both RISC and CISC designs. These approaches focus on distributing workloads across multiple processing cores or execution units to achieve better performance scaling, with considerations for instruction-level parallelism and thread-level parallelism specific to each architecture type.

04 Pipeline optimization for scalable instruction execution

Pipeline design techniques that enhance scalability in processor architectures by optimizing instruction flow and execution stages. These methods address the different pipeline requirements of RISC and CISC architectures, including techniques for handling variable-length instructions, branch prediction, and out-of-order execution to improve throughput and scalability.

05 Cache hierarchy and memory management for scalability

Memory subsystem designs that support scalability in both RISC and CISC architectures through optimized cache hierarchies and memory management strategies. These approaches focus on reducing memory access latency and improving bandwidth utilization to support scaling across different performance levels, including techniques for cache coherency and memory addressing specific to each instruction set architecture.

Key Players in Cloud Processor and Architecture Market

The RISC vs CISC scalability debate in cloud environments represents a mature technology landscape experiencing significant transformation driven by cloud-native demands. The market, valued in the hundreds of billions globally, shows established players like IBM, AMD, and Oracle maintaining strong positions with CISC-based solutions, while emerging companies such as Loongson Technology and Alibaba Cloud are advancing RISC architectures. Technology maturity varies significantly: traditional CISC implementations from Microsoft Technology Licensing and Hewlett Packard Enterprise demonstrate proven enterprise scalability, whereas RISC innovations from Huawei Technologies and Chinese cloud providers like Inspur Cloud are rapidly evolving. The competitive landscape indicates a shift toward hybrid approaches, with companies like Fujitsu and Hitachi integrating both architectures to optimize cloud workload performance and energy efficiency in distributed computing environments.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft uses both RISC and CISC architectures in its Azure cloud infrastructure, offering ARM-based virtual machines alongside Intel x86 systems. The Azure platform uses ARM-based virtual machines for cost-effective workloads while maintaining x86 compatibility for legacy applications. Microsoft's approach focuses on workload-specific architecture selection, deploying RISC-based ARM processors for web services and microservices that benefit from power efficiency, while using CISC x86 processors for compute-intensive applications that depend on the x86 instruction set. Its cloud scalability strategy involves dynamic resource allocation across heterogeneous processor architectures, with the aim of improving performance per watt.

Strengths: Flexible multi-architecture support, strong ecosystem integration, excellent power efficiency optimization. Weaknesses: Complex management overhead, potential compatibility issues between architectures, higher development costs for multi-platform support.

International Business Machines Corp.

Technical Solution: IBM's cloud scalability approach centers on its POWER architecture, a RISC design optimized for enterprise workloads. POWER processors feature simultaneous multithreading supporting up to 8 threads per core, enabling high throughput for cloud applications. IBM's cloud infrastructure utilizes POWER's reduced instruction complexity to achieve better performance per core in virtualized environments. The company's scalability strategy involves vertical scaling through high-core-count processors and horizontal scaling through efficient inter-processor communication. Its OpenPOWER initiative promotes RISC-based solutions for cloud computing, emphasizing the architecture's advantages in parallel processing and energy efficiency for large-scale deployments.

Strengths: Superior multithreading capabilities, excellent enterprise-grade reliability, strong performance in virtualized environments. Weaknesses: Limited market ecosystem compared to x86, higher initial costs, reduced software compatibility with mainstream applications.

Core Innovations in Processor Design for Cloud Environments

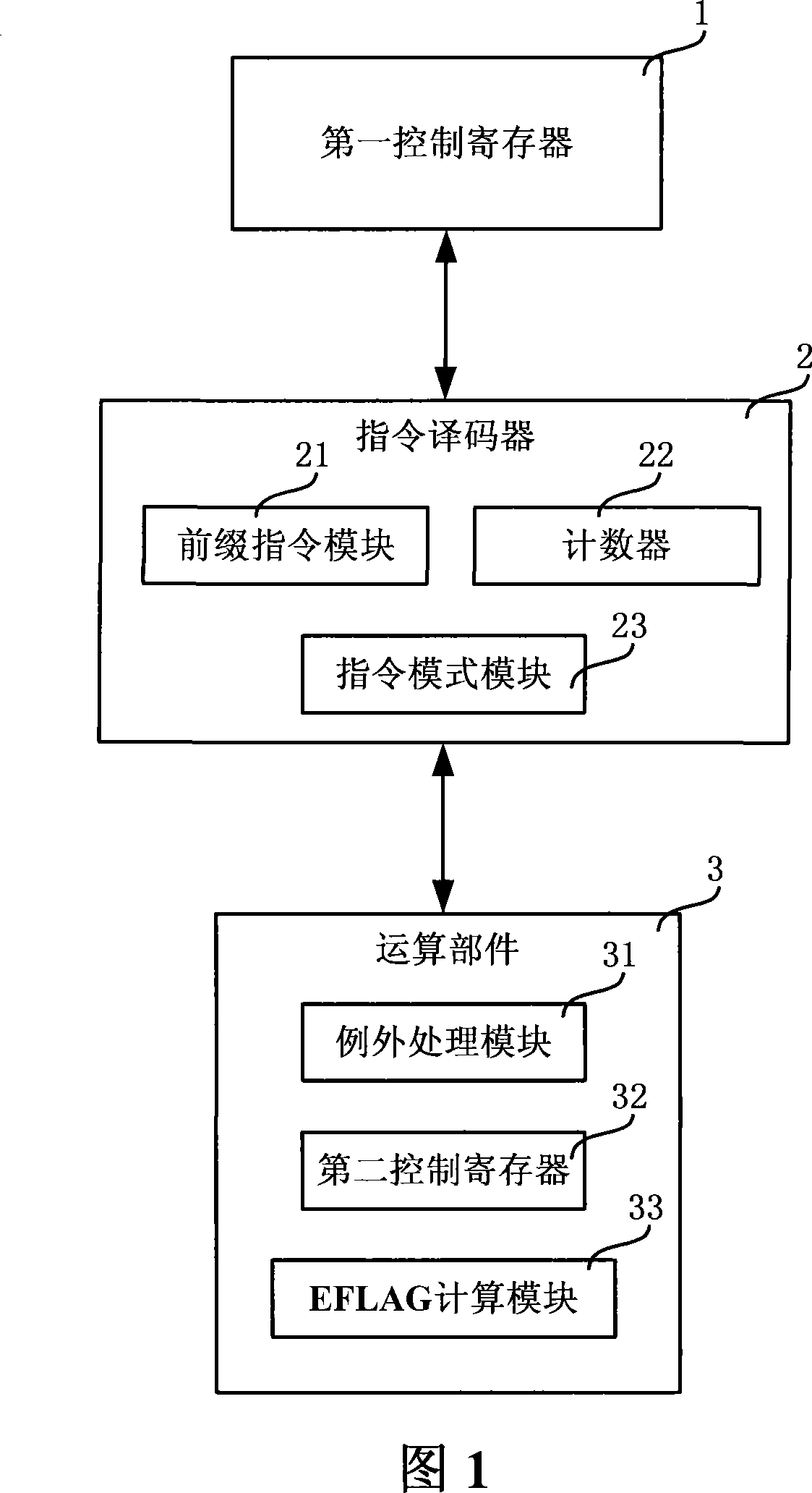

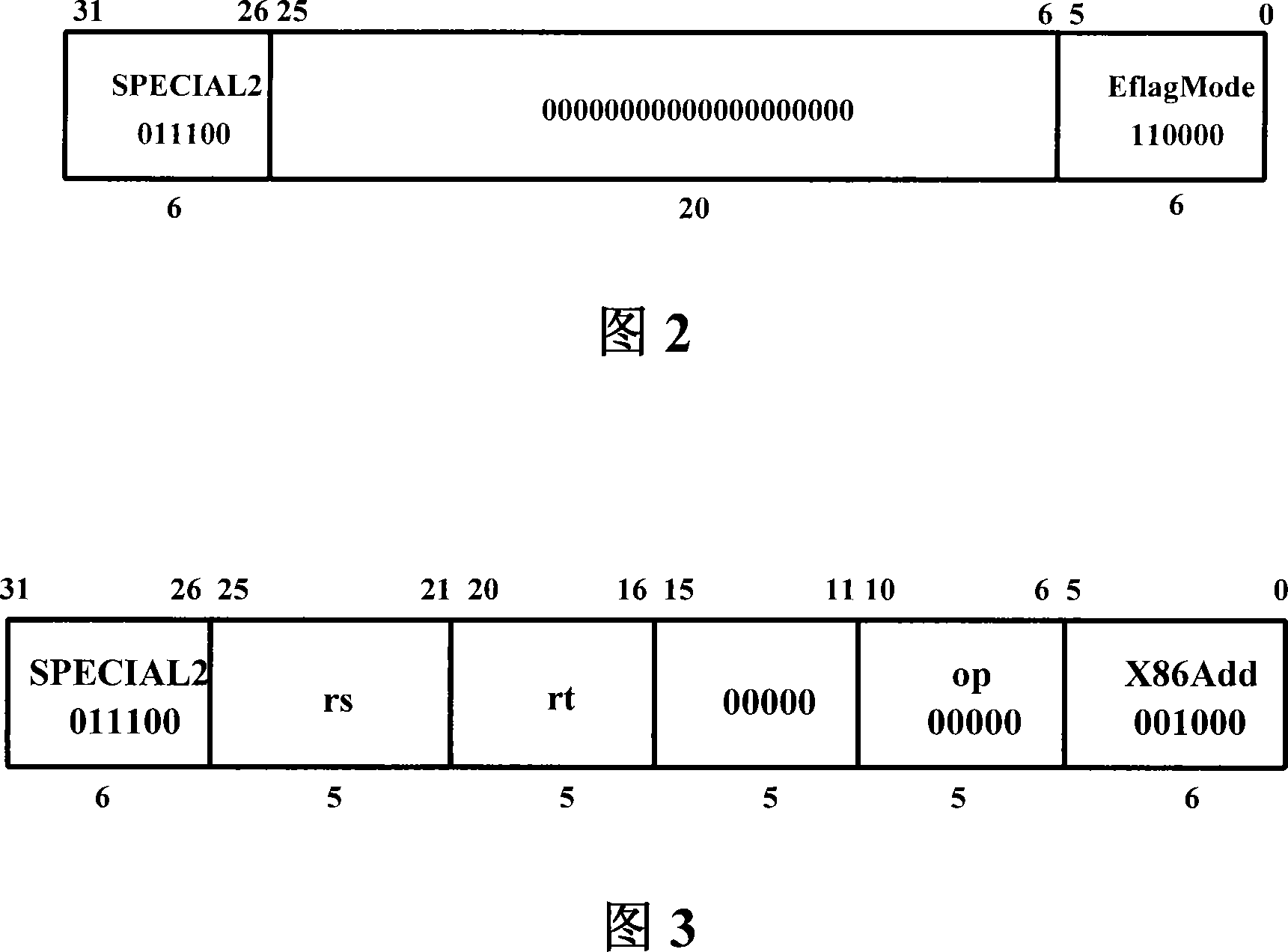

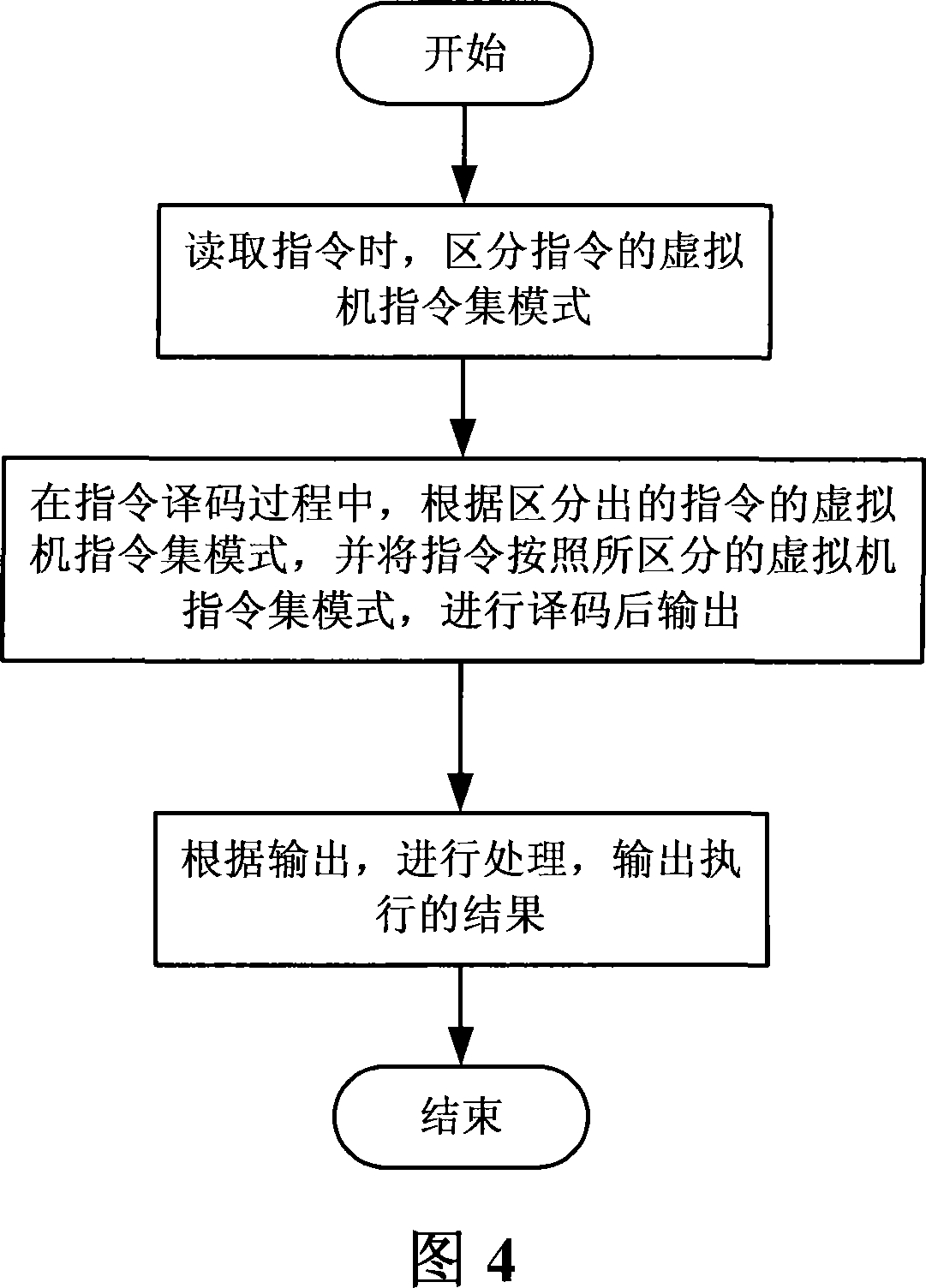

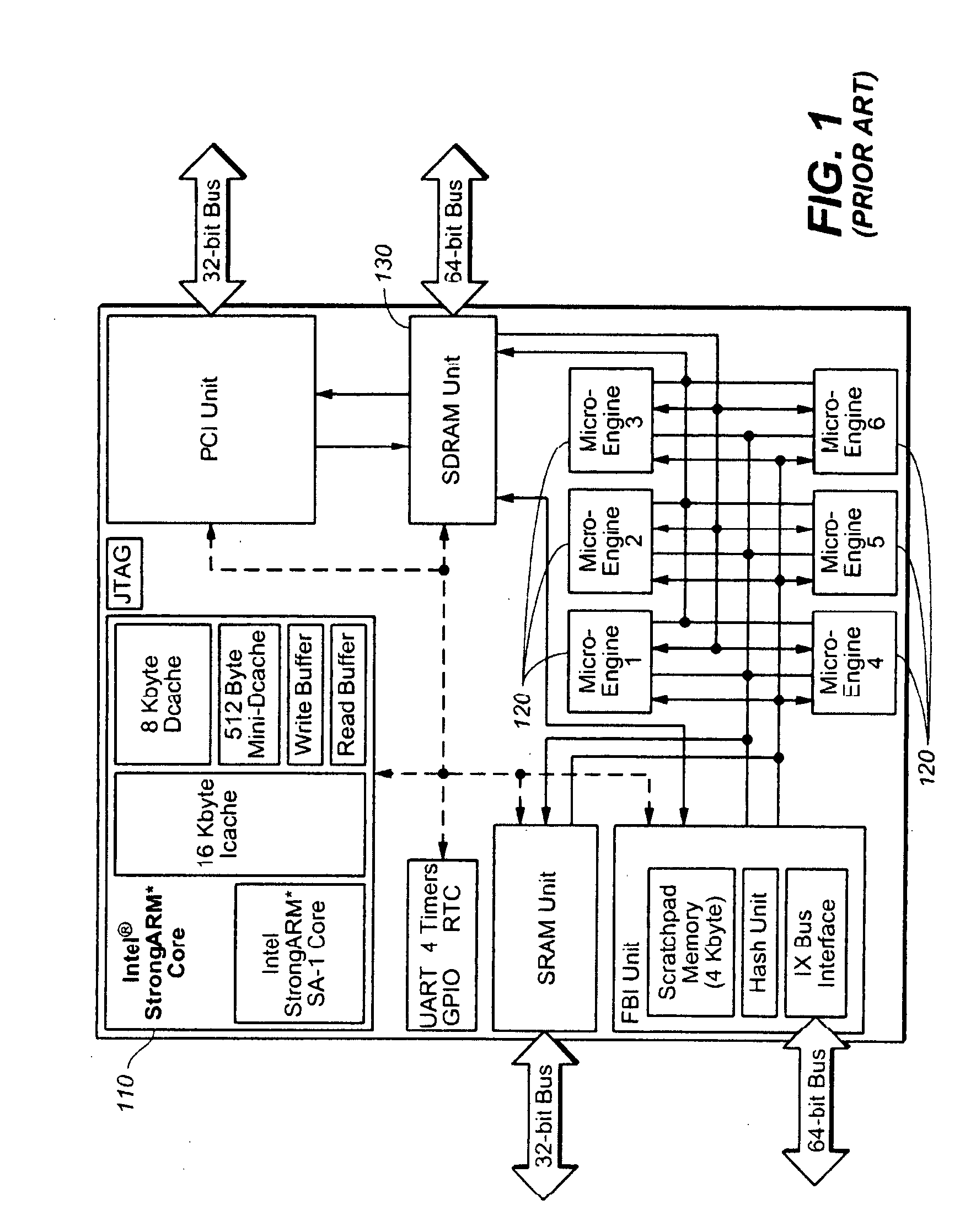

RISC processor device and multi-mode data processing method

Patent: CN101187858B (Active)

Innovation

- A RISC processor device comprising a judgment module, an instruction decoder, and an arithmetic unit. The judgment module identifies which virtual machine instruction set mode an instruction belongs to, the instruction decoder decodes the instruction according to that mode, and the arithmetic unit executes it. A control register and a prefix instruction module are used to optimize instruction execution and to calculate the EFLAG flag.

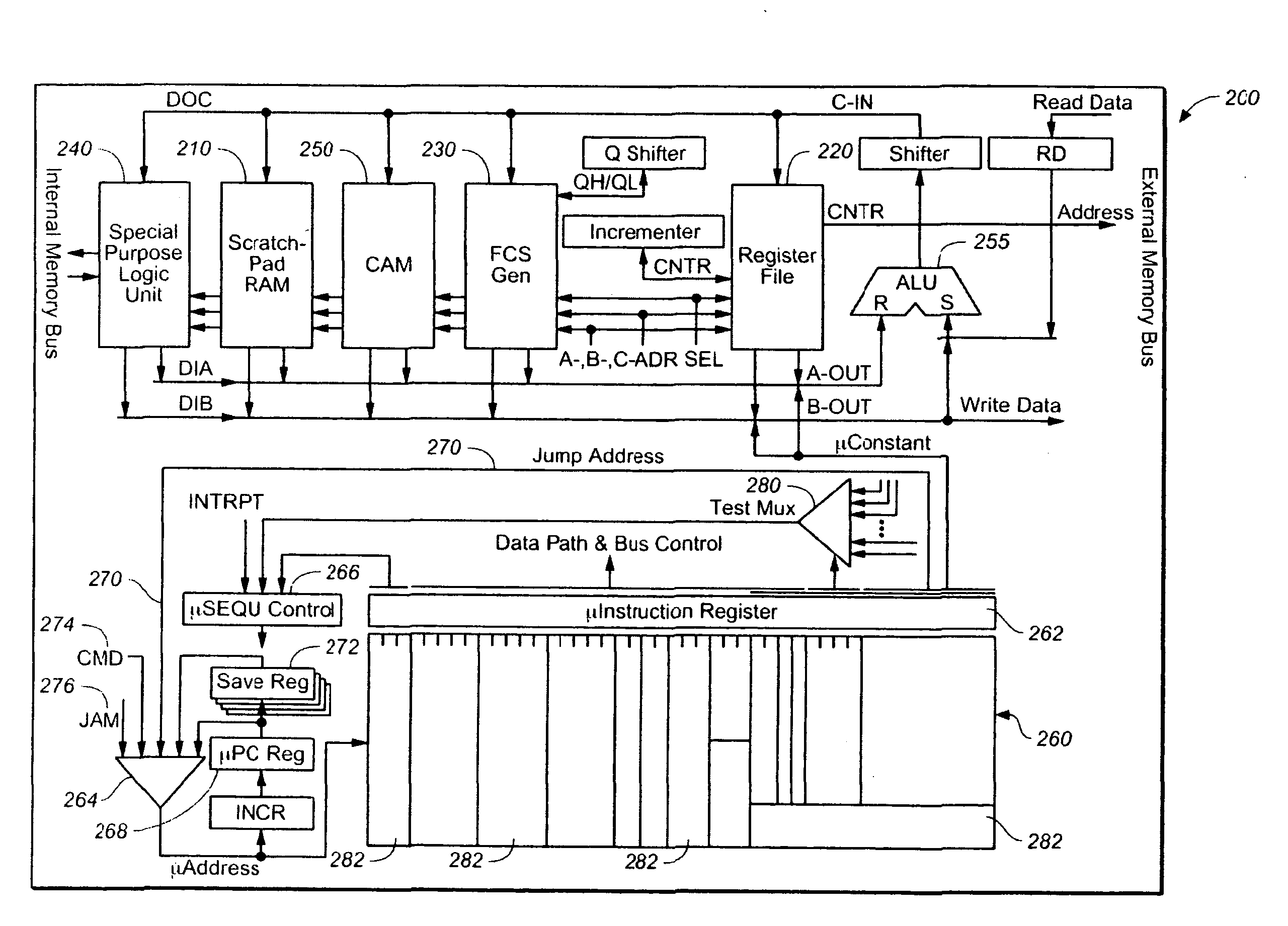

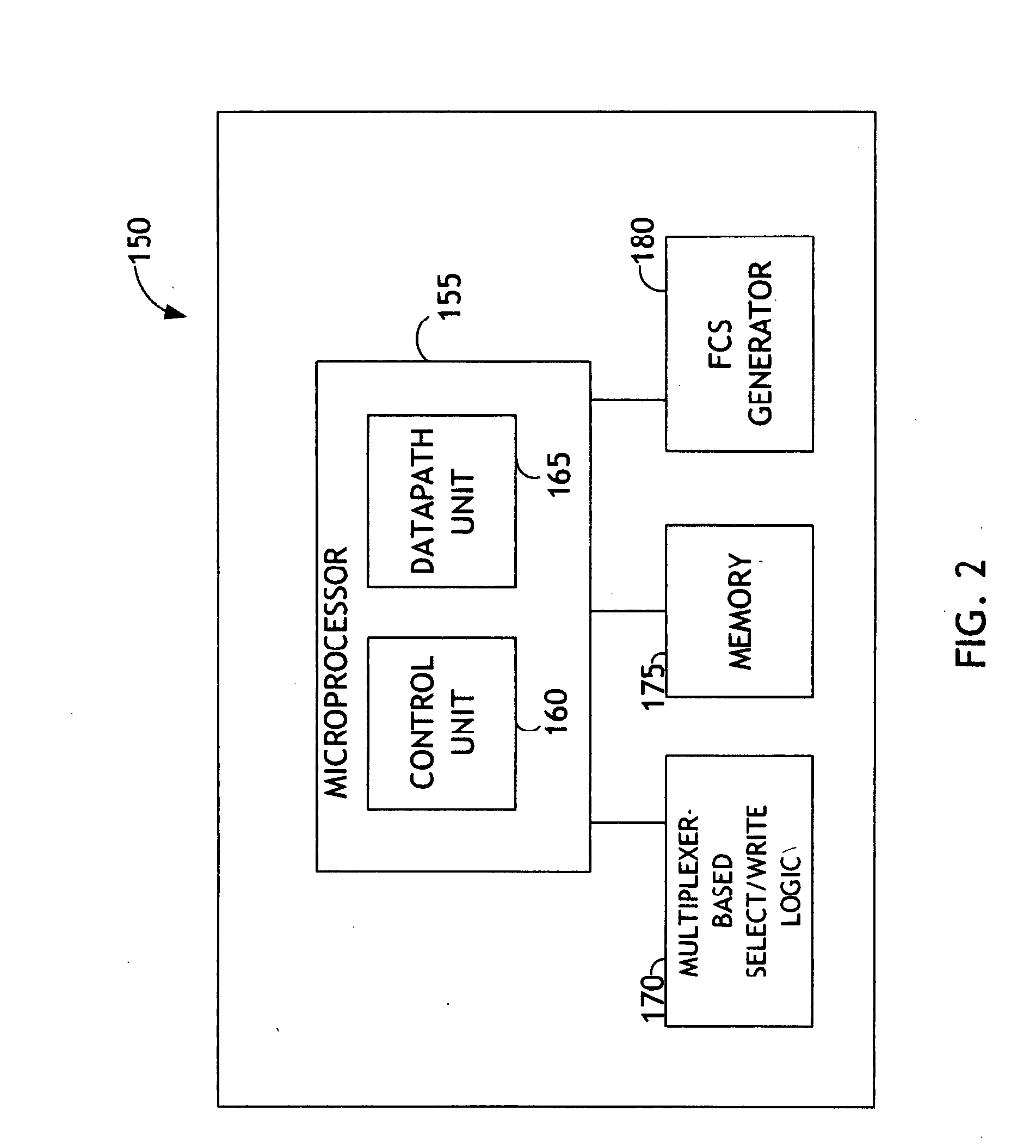

Energy efficient processing device

Patent: US20090228686A1 (Inactive)

Innovation

- A network processor with a microcoded architecture employing non-opcode-oriented, fully decoded microcode instructions that do not require an instruction decoder, utilizing a programmable microsequencer for state management and control, and a data manipulation subsystem controlled by fully decoded microinstructions, resulting in reduced power consumption and compact size.

Energy Efficiency Standards for Cloud Data Centers

Energy efficiency has become a critical consideration in cloud data center operations, particularly when evaluating the scalability potential of RISC versus CISC architectures. The growing demand for cloud services has intensified focus on power consumption standards that directly impact operational costs and environmental sustainability.

Current energy efficiency standards for cloud data centers are primarily governed by metrics such as Power Usage Effectiveness (PUE), developed by The Green Grid consortium. PUE measures the ratio of total facility energy consumption to IT equipment energy consumption, with leading data centers achieving PUE values below 1.2. Additionally, the Energy Star program provides certification standards specifically for data centers, while the European Union's Code of Conduct for Energy Efficiency in Data Centres establishes voluntary guidelines for operators.
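The PUE ratio described above is simple enough to compute directly. The sketch below is a minimal illustration; the function names and the energy figures in the example are hypothetical and not taken from any real facility.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Energy spent on cooling, power distribution, lighting, and so on."""
    return total_facility_kwh - it_equipment_kwh


if __name__ == "__main__":
    # Hypothetical monthly figures for illustration only.
    total, it = 1200.0, 1000.0
    print(f"PUE = {pue(total, it):.2f}, overhead = {overhead_kwh(total, it):.0f} kWh")
```

A PUE of 1.0 would mean every unit of energy reaches IT equipment; the value can never fall below that.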

RISC architectures are often credited with advantages in meeting these efficiency targets because of their simpler instruction decoding. ARM-based processors, exemplifying RISC design principles, are reported to consume roughly 20-30% less power per unit of computation than comparable x86 CISC processors, although the gap depends heavily on the specific chips and workloads compared. Lower IT power draw reduces total facility energy and cooling load; PUE itself, being a ratio of facility energy to IT energy, does not necessarily improve as a result. The practical benefit is that data centers can deploy more compute within the same power and cooling envelope while still meeting energy standards.

The scalability implications of these efficiency standards become apparent when considering large-scale cloud deployments. RISC processors' lower thermal design power (TDP) allows for higher server density within existing power and cooling constraints. Major cloud providers have recognized this advantage, with Amazon's Graviton processors and Google's custom silicon initiatives demonstrating significant energy savings at scale.

Initiatives and standards such as the Climate Neutral Data Centre Pact and the ISO 50001 energy management standard are pushing the industry toward more stringent efficiency requirements. These requirements favor architectures that can deliver computational performance while minimizing energy consumption, positioning RISC designs as increasingly attractive for future cloud infrastructure investments.

The convergence of regulatory pressure, operational cost considerations, and environmental responsibility continues to drive adoption of energy-efficient computing architectures in cloud environments, making compliance with these standards a key factor in architectural decision-making processes.

Performance Benchmarking Methodologies for Cloud Processors

Performance benchmarking methodologies for cloud processors require specialized approaches that account for the unique characteristics of both RISC and CISC architectures in distributed computing environments. Traditional single-node benchmarking frameworks prove insufficient when evaluating processor scalability across cloud infrastructures, necessitating comprehensive methodologies that capture multi-dimensional performance metrics.

Standardized benchmark suites such as SPEC CPU, CoreMark, and Dhrystone provide foundational performance baselines but must be adapted for cloud-specific workloads. Cloud-native benchmarking frameworks like CloudSuite, TailBench, and BigDataBench offer more relevant test scenarios that simulate real-world distributed applications, microservices architectures, and containerized workloads that dominate modern cloud environments.

Scalability assessment methodologies focus on measuring how performance degrades as workload intensity increases. Linear scalability testing involves incrementally adding processing threads, virtual machines, or container instances while monitoring throughput, latency, and resource utilization. RISC processors are often reported to scale more predictably, though this owes as much to core-level design choices (for example, many Arm server parts omit simultaneous multithreading, so each vCPU maps to a full physical core) as to the instruction set itself. CISC processors may exhibit non-linear performance characteristics under heavy loads, particularly when sibling threads share a core.
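One common way to quantify the scaling behavior described above is scaling efficiency: measured throughput divided by the throughput that perfect linear scaling from the smallest configuration would give. The sketch below assumes throughput has already been measured at several worker counts; the numbers in the example are hypothetical.

```python
def scaling_efficiency(throughput_by_workers: dict[int, float]) -> dict[int, float]:
    """Map each worker count to measured / ideal-linear throughput.

    The smallest worker count is the baseline, so its efficiency is 1.0.
    Values below 1.0 indicate sub-linear scaling.
    """
    if not throughput_by_workers:
        raise ValueError("need at least one measurement")
    base_workers = min(throughput_by_workers)
    if base_workers <= 0:
        raise ValueError("worker counts must be positive")
    per_worker_baseline = throughput_by_workers[base_workers] / base_workers
    if per_worker_baseline <= 0:
        raise ValueError("baseline throughput must be positive")
    return {
        n: throughput_by_workers[n] / (n * per_worker_baseline)
        for n in sorted(throughput_by_workers)
    }


if __name__ == "__main__":
    # Hypothetical requests/second at 1, 2, 4, and 8 worker threads.
    measured = {1: 100.0, 2: 190.0, 4: 340.0, 8: 560.0}
    for workers, eff in scaling_efficiency(measured).items():
        print(f"{workers} workers: {eff:.0%} of linear")
```

Plotting this curve for each processor under test makes it easier to see where scaling starts to flatten than comparing raw throughput alone.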

Multi-tenant performance evaluation represents a critical benchmarking dimension for cloud processors. Methodologies must assess how processor architectures handle resource contention, memory bandwidth competition, and cache interference when multiple workloads execute simultaneously. Isolation testing measures performance consistency across varying tenant loads, revealing architectural advantages in maintaining quality-of-service guarantees.
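A simple isolation test along these lines compares tail latency when a workload runs alone against tail latency when it shares the host with other tenants. The sketch below uses a nearest-rank percentile and reports the ratio; the function names are illustrative, and the choice of the 99th percentile is an assumption rather than something the text prescribes.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile of samples, for p in (0, 100]."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def tail_slowdown(solo_ms: list[float], colocated_ms: list[float], p: float = 99.0) -> float:
    """Ratio of co-located to solo latency at percentile p.

    1.0 means no measurable interference; larger values mean worse isolation.
    """
    return percentile(colocated_ms, p) / percentile(solo_ms, p)


if __name__ == "__main__":
    # Hypothetical latency samples in milliseconds.
    solo = [float(x) for x in range(1, 101)]
    colocated = [x * 1.5 for x in solo]
    print(f"p99 slowdown under contention: {tail_slowdown(solo, colocated):.2f}x")
```

Repeating the measurement at several tenant counts shows how quickly quality of service degrades as contention grows.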

Energy efficiency benchmarking has become increasingly important for cloud deployments, requiring methodologies that correlate performance metrics with power consumption patterns. Performance-per-watt measurements across different utilization levels help determine optimal processor selection for specific cloud workload profiles and sustainability objectives.
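Performance per watt at a given load is throughput divided by average power draw, which works out to operations per joule. The sketch below computes that figure at several utilization levels; the sample values are hypothetical and would normally come from a power meter or platform telemetry.

```python
def ops_per_joule(ops_per_second: float, watts: float) -> float:
    """Performance per watt: (ops/s) / (J/s) = operations per joule."""
    if watts <= 0:
        raise ValueError("power draw must be positive")
    return ops_per_second / watts


def efficiency_curve(samples: list[tuple[float, float, float]]) -> dict[float, float]:
    """Map utilization level to ops per joule.

    Each sample is (utilization fraction, ops per second, average watts).
    """
    return {util: ops_per_joule(ops, watts) for util, ops, watts in samples}


if __name__ == "__main__":
    # Hypothetical measurements: idle power makes low utilization less efficient.
    samples = [(0.25, 250.0, 35.0), (0.50, 500.0, 40.0), (1.00, 1000.0, 50.0)]
    for util, eff in efficiency_curve(samples).items():
        print(f"{util:.0%} utilization: {eff:.1f} ops/J")
```

Because servers draw significant power even when idle, comparing processors at the utilization level they will actually run at matters more than comparing peak figures.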

Automated benchmarking frameworks utilizing infrastructure-as-code principles enable consistent, repeatable performance evaluations across heterogeneous cloud environments. These methodologies incorporate statistical analysis techniques to account for performance variability inherent in shared cloud infrastructures, ensuring reliable architectural comparisons between RISC and CISC processor implementations.
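To make the statistical step concrete, the sketch below summarizes repeated benchmark runs with a mean, a coefficient of variation, and an approximate confidence interval, then checks whether two processors' intervals overlap. It uses a normal approximation, which is a simplifying assumption and is crude for small sample counts; a t-distribution or bootstrap may be more appropriate in practice. The run data is hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def summarize(samples: list[float], confidence: float = 0.95) -> dict[str, float]:
    """Mean, coefficient of variation, and normal-approximation CI of the mean."""
    if len(samples) < 2:
        raise ValueError("need at least two runs to estimate variability")
    m = mean(samples)
    s = stdev(samples)  # sample standard deviation
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    half_width = z * s / math.sqrt(len(samples))
    return {"mean": m, "cv": s / m, "ci_low": m - half_width, "ci_high": m + half_width}


def intervals_overlap(a: dict[str, float], b: dict[str, float]) -> bool:
    """True if the two confidence intervals share any point."""
    return a["ci_low"] <= b["ci_high"] and b["ci_low"] <= a["ci_high"]


if __name__ == "__main__":
    # Hypothetical throughput from five repeated runs on each processor.
    proc_a = summarize([100.0, 102.0, 98.0, 101.0, 99.0])
    proc_b = summarize([120.0, 121.0, 119.0, 122.0, 118.0])
    print("A:", proc_a)
    print("B:", proc_b)
    print("difference likely real:", not intervals_overlap(proc_a, proc_b))
```

Overlapping intervals suggest that more runs are needed before drawing a conclusion about either architecture.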
