How to Implement Compression Wave Calibrations for Results

MAR 9, 2026 | 9 MIN READ

Compression Wave Tech Background and Calibration Goals

Compression wave technology has emerged as a fundamental component in various industrial applications, ranging from non-destructive testing to seismic exploration and material characterization. The evolution of this technology traces back to the early 20th century when researchers first recognized the potential of acoustic waves for subsurface investigation and material property assessment. Over the decades, compression wave applications have expanded significantly, driven by advances in signal processing, sensor technology, and computational capabilities.

The historical development of compression wave technology can be divided into several key phases. The initial phase focused on basic wave propagation theory and manual interpretation methods. The second phase introduced electronic instrumentation and automated data acquisition systems. The current phase emphasizes digital signal processing, real-time analysis, and integration with artificial intelligence algorithms for enhanced interpretation accuracy.

Modern compression wave systems face increasing demands for precision and reliability across diverse operating conditions. The technology has evolved from simple time-of-flight measurements to sophisticated multi-parameter analysis incorporating wave amplitude, frequency content, and phase characteristics. This evolution reflects the growing complexity of applications and the need for more detailed subsurface or material information.

The primary technical objectives for compression wave calibration systems center on achieving consistent and accurate measurement results across varying environmental conditions and material properties. Calibration goals encompass establishing reliable reference standards, minimizing measurement uncertainties, and ensuring traceability to international standards. These objectives are critical for maintaining data quality and enabling meaningful comparisons between different measurement campaigns or equipment configurations.

Contemporary calibration approaches aim to address systematic errors, temperature dependencies, and coupling variations that can significantly impact measurement accuracy. The development of automated calibration procedures represents a key advancement, reducing human error and improving measurement repeatability. Additionally, the integration of machine learning algorithms for calibration optimization has shown promising results in enhancing system performance and reducing calibration time requirements.

Future technical goals include developing adaptive calibration systems that can automatically adjust to changing operational conditions, implementing real-time quality assurance protocols, and establishing standardized calibration procedures for emerging compression wave applications. These objectives align with industry demands for increased automation, improved data reliability, and reduced operational costs while maintaining the highest standards of measurement accuracy and precision.

Market Demand for Accurate Compression Wave Measurements

The global market for accurate compression wave measurements has experienced substantial growth driven by increasing demands across multiple industrial sectors. Oil and gas exploration represents the largest market segment, where precise seismic wave analysis is critical for reservoir characterization and drilling optimization. The energy sector's continuous expansion, particularly in unconventional resource extraction, has intensified the need for high-fidelity compression wave measurement systems that can deliver reliable subsurface imaging and geological interpretation.

Manufacturing industries, especially aerospace and automotive sectors, demonstrate growing demand for compression wave measurement technologies in non-destructive testing applications. Quality assurance protocols increasingly require precise ultrasonic testing capabilities to detect material defects, measure thickness variations, and ensure structural integrity. The automotive industry's shift toward lightweight materials and electric vehicle components has created new requirements for advanced compression wave calibration systems.

Medical imaging and healthcare sectors contribute significantly to market demand, particularly in ultrasound diagnostics and therapeutic applications. The aging global population and increasing healthcare accessibility drive continuous growth in medical ultrasound equipment markets. Advanced compression wave measurement accuracy directly impacts diagnostic quality and treatment effectiveness, creating sustained demand for calibration technologies.

Geophysical research and environmental monitoring applications represent emerging market segments with substantial growth potential. Climate change research, earthquake monitoring, and geological hazard assessment require increasingly sophisticated compression wave measurement capabilities. Academic institutions and government research organizations invest heavily in advanced seismic instrumentation with precise calibration requirements.

The construction and infrastructure development sectors show increasing adoption of compression wave measurement technologies for concrete testing, structural health monitoring, and foundation analysis. Smart city initiatives and aging infrastructure maintenance programs worldwide drive demand for reliable non-destructive evaluation methods.

Regional market dynamics reveal strong growth in Asia-Pacific regions, driven by industrial expansion and infrastructure development. North American markets maintain steady demand through energy sector activities and technological innovation. European markets emphasize precision manufacturing and environmental monitoring applications, creating specialized demand for high-accuracy compression wave measurement systems with stringent calibration requirements.

Current Calibration Challenges in Compression Wave Systems

Compression wave calibration systems face significant technical challenges that impede accurate measurement and reliable data acquisition across various industrial applications. The primary obstacle stems from the inherent complexity of wave propagation dynamics, where multiple variables simultaneously influence measurement accuracy. Temperature fluctuations, material property variations, and environmental conditions create substantial calibration drift, requiring frequent recalibration cycles that disrupt operational efficiency.

Signal processing limitations represent another critical challenge in compression wave systems. Traditional calibration methods struggle with noise interference and signal degradation, particularly in harsh industrial environments. The analog-to-digital conversion process introduces quantization errors, while electromagnetic interference from nearby equipment can corrupt calibration signals. These factors collectively compromise the precision of baseline measurements essential for accurate compression wave analysis.
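The quantization error mentioned above can be estimated with the textbook figures for an ideal converter. The sketch below is illustrative only: the 2 V full-scale range and 12-bit resolution are assumed values, not parameters from any system described here, and real converters perform worse than the ideal figures.

```python
import math


def quantization_stats(full_scale_volts: float, bits: int) -> dict:
    """Ideal ADC quantization figures (assumes a full-scale sine input)."""
    lsb = full_scale_volts / (2 ** bits)   # size of one quantization step
    rms_noise = lsb / math.sqrt(12)        # RMS of an error uniform over one step
    snr_db = 6.02 * bits + 1.76            # ideal SNR for a full-scale sine wave
    return {"lsb": lsb, "rms_noise": rms_noise, "snr_db": snr_db}


# Hypothetical example: 12-bit converter with a 2 V full-scale range.
stats = quantization_stats(full_scale_volts=2.0, bits=12)
print(stats)
```

Each additional bit halves the step size and adds roughly 6 dB of ideal signal-to-noise ratio, which gives a lower bound on the noise floor a calibration baseline has to tolerate.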

Hardware-related constraints further complicate calibration procedures. Sensor aging and mechanical wear affect transducer response characteristics over time, leading to gradual calibration parameter drift. The physical mounting and coupling of sensors to test specimens introduces variability that standard calibration protocols often fail to address adequately. Additionally, the limited bandwidth of conventional measurement systems restricts the frequency range available for comprehensive calibration verification.

Standardization gaps present ongoing challenges for compression wave calibration implementation. Current industry standards lack comprehensive guidelines for calibration intervals, acceptable tolerance ranges, and verification procedures across different application domains. This absence of unified standards results in inconsistent calibration practices between organizations and measurement systems, hindering data comparability and reliability assessment.

Real-time calibration verification poses technical difficulties due to computational limitations and processing speed requirements. Existing systems typically rely on offline calibration procedures that cannot account for dynamic changes in operating conditions. The lack of automated calibration adjustment mechanisms forces operators to manually intervene, introducing human error and reducing measurement consistency.

Integration challenges arise when implementing calibration systems across diverse measurement platforms and legacy equipment. Compatibility issues between modern calibration software and existing hardware infrastructure create implementation barriers. The complexity of integrating multiple calibration parameters while maintaining system stability requires sophisticated control algorithms that many current systems lack, resulting in suboptimal calibration performance and reduced measurement accuracy.

Existing Calibration Solutions for Compression Wave Systems

01 Calibration methods using reference standards and compression wave generation

Calibration accuracy for compression wave systems can be improved through the use of reference standards and controlled compression wave generation. These methods involve generating known compression waves with specific characteristics and comparing the measured response against expected values. The calibration process typically includes establishing baseline measurements, applying correction factors, and validating the system's response across different operating conditions to ensure measurement accuracy.

- Calibration methods using reference standards and known compression wave properties: Calibration accuracy can be improved by utilizing reference standards with known compression wave characteristics. These methods involve comparing measured compression wave parameters against established reference values to determine and correct systematic errors. The calibration process typically includes generating controlled compression waves with predetermined properties and using these as benchmarks for adjusting measurement systems to ensure accurate readings.

- Automated calibration systems with real-time adjustment capabilities: Advanced calibration systems incorporate automated mechanisms that continuously monitor and adjust calibration parameters during operation. These systems use feedback loops and adaptive algorithms to maintain calibration accuracy over time, compensating for environmental changes and equipment drift. The automated approach reduces manual intervention requirements and ensures consistent measurement precision throughout extended operational periods.

- Multi-point calibration techniques for enhanced accuracy: Calibration accuracy is enhanced through multi-point calibration approaches that establish calibration curves across multiple compression wave amplitude or frequency ranges. This technique involves measuring system response at several discrete calibration points and interpolating between them to create comprehensive calibration profiles. The method accounts for non-linear system behaviors and provides improved accuracy across the entire operational range of the measurement device.

- Temperature compensation and environmental correction in calibration: Calibration procedures incorporate temperature compensation mechanisms and environmental correction factors to maintain accuracy under varying operational conditions. These methods account for the effects of temperature, pressure, and other environmental variables on compression wave propagation and sensor response. Correction algorithms adjust calibration parameters dynamically based on measured environmental conditions to ensure consistent accuracy regardless of operating environment.

- Digital signal processing and error correction algorithms for calibration optimization: Modern calibration approaches employ sophisticated digital signal processing techniques and error correction algorithms to enhance measurement accuracy. These methods analyze compression wave signals to identify and compensate for various error sources including noise, signal distortion, and timing inaccuracies. Advanced filtering, pattern recognition, and machine learning algorithms are applied to refine calibration parameters and improve overall system performance.
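Two of the approaches listed above, multi-point calibration and temperature compensation, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the calibration table, the linear temperature model, and the coefficient value are all hypothetical, and the function names are not taken from any product or patent.

```python
from bisect import bisect_right

# Hypothetical calibration table: (raw instrument reading, reference value),
# sorted by raw reading. Values could be times of flight in microseconds
# measured through reference blocks of known thickness.
CAL_POINTS = [(10.2, 10.0), (20.5, 20.0), (41.3, 40.0), (82.9, 80.0)]


def apply_calibration(raw: float, points=CAL_POINTS) -> float:
    """Correct a raw reading by piecewise-linear interpolation between points."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    # Find the segment containing raw; readings outside the table reuse the
    # first or last segment, i.e. they are extrapolated linearly.
    i = min(max(bisect_right(xs, raw) - 1, 0), len(xs) - 2)
    slope = (ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i])
    return ys[i] + slope * (raw - xs[i])


def compensate_temperature(value: float, temp_c: float,
                           ref_temp_c: float = 20.0,
                           coeff_per_c: float = -0.0005) -> float:
    """Refer a reading taken at temp_c back to ref_temp_c.

    Assumes the reading scales as 1 + coeff_per_c * (temp_c - ref_temp_c);
    the coefficient is a placeholder, not a measured material property.
    """
    return value / (1.0 + coeff_per_c * (temp_c - ref_temp_c))


corrected = compensate_temperature(apply_calibration(30.9), temp_c=35.0)
print(round(corrected, 3))
```

Piecewise-linear interpolation is the simplest way to follow a non-linear response; a polynomial or spline fit is a common alternative when the calibration points are noisy.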

02 Digital signal processing and error correction algorithms

Advanced digital signal processing techniques and error correction algorithms are employed to enhance calibration accuracy in compression wave measurements. These approaches involve analyzing the acquired signals, identifying systematic errors, and applying mathematical corrections to compensate for measurement deviations. The methods include filtering techniques, noise reduction algorithms, and adaptive calibration procedures that continuously adjust for environmental variations and system drift.

03 Multi-point calibration and interpolation techniques

Multi-point calibration strategies involve measuring compression wave characteristics at multiple reference points across the operational range. Interpolation and curve-fitting techniques are then applied to establish accurate calibration curves. This approach accounts for non-linear system responses and provides improved accuracy across the entire measurement range. The calibration data is stored and used to correct subsequent measurements automatically.

04 Temperature compensation and environmental factor correction

Calibration accuracy is significantly affected by environmental factors such as temperature, pressure, and humidity. Advanced calibration systems incorporate temperature compensation mechanisms and environmental factor correction algorithms. These systems monitor environmental conditions in real-time and apply dynamic corrections to maintain calibration accuracy across varying operating conditions. The compensation methods may include temperature-dependent calibration coefficients and environmental modeling.

05 Automated calibration verification and self-diagnostic systems

Modern compression wave calibration systems incorporate automated verification procedures and self-diagnostic capabilities to ensure ongoing calibration accuracy. These systems perform periodic self-checks, compare current performance against stored calibration data, and alert operators when recalibration is needed. The automated approach reduces human error, ensures consistent calibration quality, and maintains measurement accuracy over extended periods. Self-diagnostic features can identify sensor degradation, signal path issues, and other factors affecting calibration.
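A periodic self-check of the kind described here can be reduced to comparing a fresh reading on a reference target against the stored calibration value. The sketch below assumes hypothetical warning and failure thresholds of 0.5 % and 1.0 %; appropriate limits depend on the application and are not specified in this report.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    deviation_pct: float
    status: str  # "ok", "warn", or "recalibrate"


def self_check(measured: float, stored_reference: float,
               warn_pct: float = 0.5, fail_pct: float = 1.0) -> CheckResult:
    """Compare a reading on a reference target against stored calibration data."""
    deviation = abs(measured - stored_reference) / abs(stored_reference) * 100.0
    if deviation >= fail_pct:
        status = "recalibrate"   # drift is large enough to alert the operator
    elif deviation >= warn_pct:
        status = "warn"          # drift is visible but still within tolerance
    else:
        status = "ok"
    return CheckResult(deviation, status)


# Hypothetical example: stored velocity of 5900 m/s in a steel reference block.
for reading in (5920.0, 5940.0, 5960.0):
    print(reading, self_check(reading, 5900.0).status)
```

Keeping a warning band below the failure limit lets a system log gradual sensor degradation before it forces a recalibration.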

Key Players in Compression Wave Technology Industry

The compression wave calibration technology landscape represents a mature yet evolving sector spanning defense, consumer electronics, and industrial applications. Major technology corporations like Samsung Electronics, Sony Group, LG Electronics, and Qualcomm drive innovation in consumer-facing compression applications, while specialized firms such as Thales SA, HENSOLDT Sensors, and Leidos Holdings focus on defense and aerospace implementations. Academic institutions including Xidian University, Harbin Engineering University, and Zhejiang University contribute fundamental research advancements. The market demonstrates strong growth potential, particularly in automotive applications through companies like DENSO Corp, and marine/acoustic sensing via specialized firms like Navico and Ocean Applied Acoustic-Tech. Technology maturity varies significantly across segments, with consumer electronics showing high standardization while emerging applications in IoT and autonomous systems present substantial development opportunities for calibration methodologies and precision enhancement.

QUALCOMM, Inc.

Technical Solution: Qualcomm implements compression wave calibration in their ultrasonic fingerprint sensing technology and acoustic communication systems. Their approach involves precise calibration of piezoelectric transducers used in under-display fingerprint sensors, where compression waves must be accurately characterized to distinguish between different fingerprint ridge patterns. The calibration process includes establishing reference wave propagation models for various display materials and thicknesses. Qualcomm's system employs adaptive signal processing algorithms that continuously adjust calibration parameters based on environmental factors such as temperature and humidity. Their implementation features real-time compensation mechanisms that account for manufacturing variations in display assemblies and ensure consistent biometric recognition performance across different device configurations and usage conditions.

Strengths: Innovative consumer electronics applications with high-volume manufacturing experience. Weaknesses: Specialized focus on mobile device applications limits broader industrial applicability.

Huawei Technologies Co., Ltd.

Technical Solution: Huawei has developed compression wave calibration techniques for their telecommunications infrastructure, particularly in fiber optic systems and 5G base station components. Their approach focuses on acoustic wave-based sensing and calibration for structural health monitoring of communication towers and equipment housings. The system utilizes distributed acoustic sensing (DAS) technology combined with compression wave analysis to detect structural anomalies and perform preventive maintenance. Huawei's calibration protocol involves establishing baseline compression wave signatures for different materials and environmental conditions, then using AI-powered algorithms to continuously compare real-time measurements against these references. Their implementation includes temperature compensation algorithms and multi-frequency analysis to improve calibration accuracy across varying operational conditions.

Strengths: Advanced AI integration and robust environmental adaptation capabilities. Weaknesses: Primarily focused on telecommunications applications with limited cross-industry applicability.

Core Innovations in Wave Calibration Algorithms

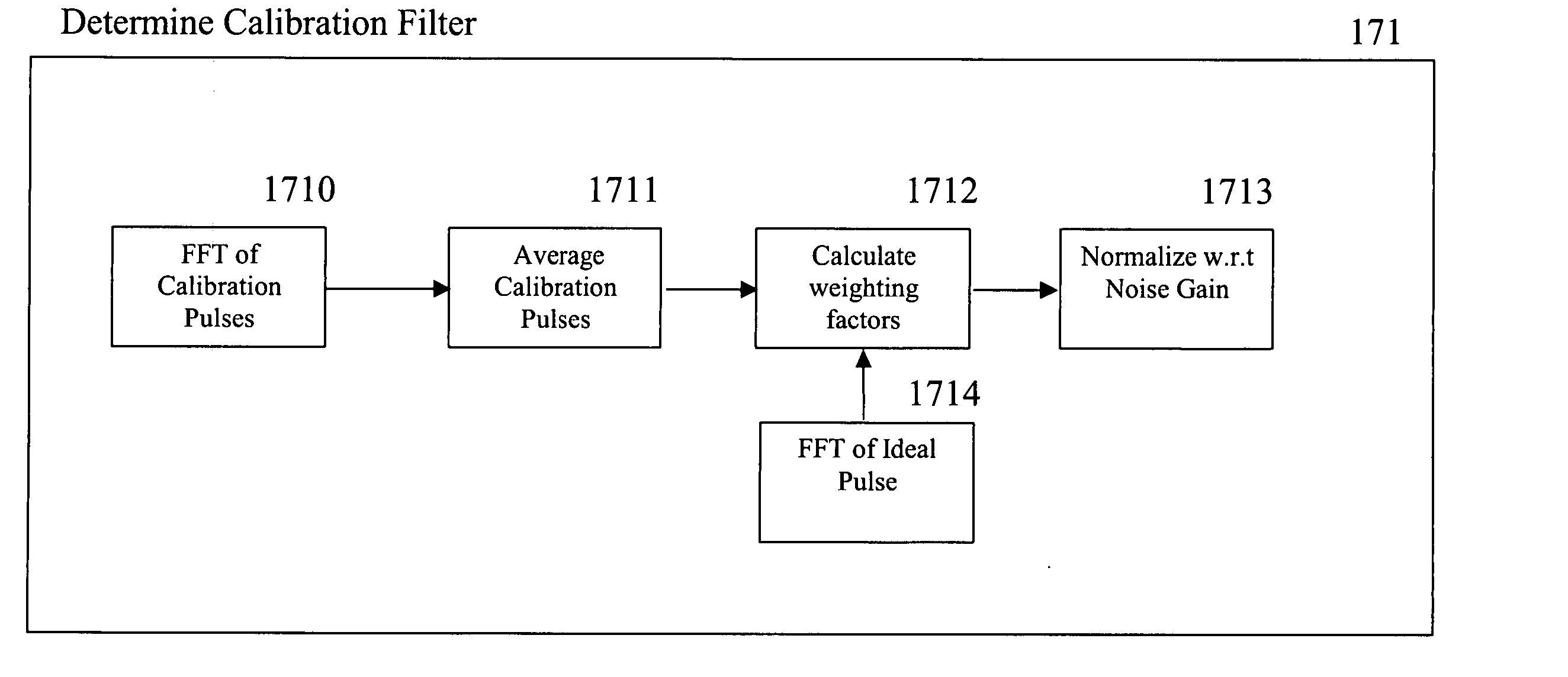

RF channel calibration for non-linear FM waveforms

Patent (Inactive): US20050190100A1

Innovation

- A self-calibration system for pulse-compression radar signals that generates calibration pulses and uses them to create frequency domain weighting factors, which are normalized to create a calibration filter applied to received signals, adapting to component changes and non-linearities in the transmitter.
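The general idea of deriving frequency-domain weights from a calibration pulse can be illustrated as follows. This is a generic sketch, not the patented procedure: the weight definition (ideal spectrum divided by measured spectrum), the peak normalization, and all function names are assumptions made for illustration, and a plain DFT is used to keep the example dependency-free.

```python
import cmath


def dft(x):
    """Direct discrete Fourier transform (O(n^2), adequate for short pulses)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n)
                for k in range(n)) / n
            for t in range(n)]


def calibration_weights(ideal_pulse, measured_pulse, floor=1e-6):
    """Per-bin weights that map the measured calibration pulse onto the ideal one."""
    ideal, meas = dft(ideal_pulse), dft(measured_pulse)
    # Bins where the measured pulse has almost no energy are zeroed, not inverted.
    weights = [i / m if abs(m) > floor else 0j for i, m in zip(ideal, meas)]
    peak = max(abs(w) for w in weights) or 1.0
    return [w / peak for w in weights]  # largest weight has magnitude 1


def apply_filter(received, weights):
    """Apply the calibration filter to a received signal of the same length."""
    spectrum = dft(received)
    return idft([s * w for s, w in zip(spectrum, weights)])


# Hypothetical example: an ideal impulse that the hardware smears into two samples.
ideal = [1.0] + [0.0] * 7
measured = [0.8, 0.3] + [0.0] * 6
restored = apply_filter(measured, calibration_weights(ideal, measured))
print([round(abs(v), 6) for v in restored])
```

Because the weights are normalized, the filtered signal recovers the shape of the ideal pulse up to a constant scale factor.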

Method for determining parameters of a compression filter and associated multichannel radar

Patent: WO2016096250A1

Innovation

- A method for determining parameters of a pulse compression filter with finite impulse response, involving calibration signals, transfer function calculation, and relative gain measurement across reception channels to balance and equalize channel responses, allowing for the use of matched filters and relative gains to optimize signal processing.
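Relative gain measurement across reception channels can be illustrated with a least-squares estimate taken from a common calibration signal. The sketch below is an assumption-laden illustration rather than the method claimed in the patent: it models each channel as the calibration signal multiplied by one complex gain, and the function name is hypothetical.

```python
def equalizing_gains(channels, ref_index=0):
    """Complex factor per channel that best maps it onto the reference channel.

    Each channel is a list of complex samples recorded while the same
    calibration signal is injected. The factor is the least-squares solution
    of gain * channel = reference.
    """
    ref = channels[ref_index]
    gains = []
    for ch in channels:
        num = sum(r * c.conjugate() for r, c in zip(ref, ch))
        den = sum(abs(c) ** 2 for c in ch)
        gains.append(num / den)
    return gains


# Hypothetical example: channel 1 has half the amplitude and a 90 degree phase lead.
signal = [1 + 0j, 0.5j, -1 + 0j, -0.5j]
channel_0 = signal
channel_1 = [0.5j * s for s in signal]
gains = equalizing_gains([channel_0, channel_1])
balanced = [gains[1] * s for s in channel_1]
print(gains[1], balanced == channel_0)
```

Multiplying each channel by its factor balances amplitude and phase against the reference, after which the same matched filter can be applied to every channel.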

Standardization Requirements for Wave Calibration Protocols

The establishment of standardization requirements for wave calibration protocols represents a critical foundation for ensuring consistency, reliability, and interoperability across compression wave measurement systems. These requirements must address the fundamental parameters that govern calibration procedures, including frequency response characteristics, amplitude accuracy specifications, and temporal resolution standards that enable reproducible results across different measurement environments and equipment configurations.

International standardization bodies have recognized the necessity for comprehensive protocols that define minimum performance criteria for wave calibration systems. These standards typically encompass calibration signal generation requirements, specifying acceptable waveform characteristics such as rise time, settling accuracy, and harmonic distortion limits. Additionally, environmental conditioning parameters must be standardized to account for temperature, humidity, and pressure variations that can significantly impact calibration accuracy and measurement repeatability.

Traceability requirements form another essential component of standardization protocols, establishing clear chains of measurement authority that link field calibrations to national or international measurement standards. This includes documentation requirements for calibration certificates, uncertainty budgets, and measurement procedures that ensure compliance with metrological principles. The protocols must also define acceptable calibration intervals and drift specifications that maintain measurement integrity over extended operational periods.

Verification and validation procedures represent critical elements within standardization frameworks, requiring systematic approaches to confirm that calibration systems meet specified performance criteria. These procedures must include statistical methods for evaluating calibration uncertainty, acceptance criteria for system performance validation, and protocols for handling non-conforming measurements or equipment failures during calibration processes.

The standardization requirements must also address interoperability concerns, ensuring that calibration protocols developed by different organizations or implemented on various equipment platforms can produce comparable results. This includes standardized data formats, communication protocols, and measurement units that facilitate data exchange and comparison across different systems and applications, ultimately supporting the broader goal of measurement harmonization in compression wave analysis applications.

Quality Assurance Framework for Calibration Results

Establishing a comprehensive quality assurance framework for compression wave calibration results requires systematic implementation of validation protocols that ensure measurement accuracy and reliability. The framework must encompass multiple verification layers, including reference standard validation, measurement repeatability assessment, and cross-validation procedures that collectively guarantee the integrity of calibration outcomes.

The foundation of quality assurance lies in implementing standardized calibration procedures that follow internationally recognized protocols such as ISO/IEC 17025 and applicable ASTM standards. These procedures establish clear guidelines for equipment setup, environmental conditions, and measurement protocols that minimize systematic errors and ensure consistent results across different testing scenarios. Documentation requirements must include detailed records of calibration parameters, environmental conditions, and operator qualifications.

Measurement uncertainty analysis forms a critical component of the quality framework, requiring comprehensive evaluation of all potential error sources including equipment limitations, environmental variations, and human factors. Statistical analysis methods must be employed to quantify uncertainty budgets and establish confidence intervals for calibration results. This analysis enables proper interpretation of measurement data and supports decision-making processes regarding calibration validity.
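A minimal sketch of an uncertainty budget follows. It assumes the components are uncorrelated standard uncertainties already expressed in the units of the result, so they combine by root-sum-of-squares, and it uses a coverage factor of k = 2 for the expanded uncertainty. The component names and values are invented for illustration.

```python
import math


def combined_standard_uncertainty(components):
    """Root-sum-of-squares of uncorrelated standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


def expanded_uncertainty(components, k=2.0):
    """Combined standard uncertainty multiplied by coverage factor k."""
    return k * combined_standard_uncertainty(components)


# Hypothetical budget for a compression wave velocity result, in m/s.
budget = {
    "timing_resolution": 3.0,
    "path_length": 4.0,
    "temperature_effect": 12.0,
}

u_c = combined_standard_uncertainty(budget)
U = expanded_uncertainty(budget)

# Share of the total variance contributed by each component.
variance_share = {name: (u ** 2) / (u_c ** 2) for name, u in budget.items()}
```

The variance shares show which error source dominates the budget, which is usually the most useful output when deciding where to improve the measurement. Correlated components would need covariance terms that this sketch leaves out.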

Real-time monitoring systems should be integrated to assess calibration performance continuously through automated data collection and analysis. These systems can detect drift patterns, identify anomalous readings, and trigger corrective actions when measurements exceed predetermined tolerance limits. Control charts and statistical process control methods support proactive quality management and help catch problems before they lead to calibration failures.
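The control chart idea can be sketched as follows, assuming a simple chart with limits set at three sample standard deviations around the mean of a baseline run, plus a basic run check as a drift indicator. The function names and readings are hypothetical, and production charts often estimate sigma differently, for example from moving ranges.

```python
import statistics


def control_limits(baseline, n_sigma=3.0):
    """Return (lower, center, upper) limits from baseline readings."""
    center = statistics.mean(baseline)
    sigma = statistics.stdev(baseline)
    return center - n_sigma * sigma, center, center + n_sigma * sigma


def out_of_control(readings, lower, upper):
    """Indices of readings that fall outside the control limits."""
    return [i for i, x in enumerate(readings) if x < lower or x > upper]


def longest_run_one_side(readings, center):
    """Longest run of consecutive readings strictly on one side of center."""
    longest = current = 0
    last_side = 0
    for x in readings:
        side = (x > center) - (x < center)  # +1 above, -1 below, 0 on center
        if side != 0 and side == last_side:
            current += 1
        elif side != 0:
            current = 1
        else:
            current = 0
        last_side = side
        longest = max(longest, current)
    return longest


# Hypothetical reference-block velocity readings, in m/s.
baseline = [5900.0, 5902.0, 5898.0, 5901.0, 5899.0]
lower, center, upper = control_limits(baseline)

new_readings = [5900.5, 5899.0, 5910.0]
flagged = out_of_control(new_readings, lower, upper)
run_length = longest_run_one_side([5901.0, 5902.0, 5901.0, 5899.0, 5900.5], center)
```

A long run on one side of the center line can indicate drift even when every individual reading is still inside the limits. The run length that should trigger action is a policy choice for the laboratory.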

Validation protocols must include inter-laboratory comparison programs and proficiency testing to verify calibration accuracy against external references. Regular participation in round-robin testing exercises provides independent verification of measurement capabilities and identifies potential systematic biases. Cross-validation with alternative measurement techniques strengthens confidence in calibration results and supports method validation requirements.
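One statistic commonly used to score inter-laboratory comparisons is the normalized error, E_n, which divides the difference between a laboratory's result and the reference value by the root-sum-of-squares of their expanded uncertainties. An absolute value of 1 or less is conventionally treated as satisfactory. The sketch below assumes both uncertainties are expanded uncertainties at the same coverage level, and the numbers are invented.

```python
import math


def en_number(lab_value, lab_U, ref_value, ref_U):
    """Normalized error between a lab result and a reference value.

    lab_U and ref_U are expanded uncertainties in the same units as the values.
    """
    return (lab_value - ref_value) / math.sqrt(lab_U ** 2 + ref_U ** 2)


def is_satisfactory(en):
    """Conventional acceptance criterion: |E_n| <= 1."""
    return abs(en) <= 1.0


# Hypothetical round-robin results for one reference block, in m/s.
reference_value, reference_U = 5900.0, 6.0
en_lab_a = en_number(5906.0, 8.0, reference_value, reference_U)
en_lab_b = en_number(5915.0, 8.0, reference_value, reference_U)
```

The sign of E_n shows the direction of a bias, so a laboratory whose scores are consistently positive or negative across rounds may have a systematic offset even if each score passes.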

The framework should incorporate automated data integrity checks and audit trails that ensure traceability and make data manipulation detectable. Digital signatures, timestamp verification, and change control procedures maintain the authenticity of calibration records and support regulatory compliance. Regular internal audits and management reviews drive continuous improvement of quality assurance processes and keep them aligned with organizational objectives.
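A tamper-evident audit trail can be sketched with a hash chain, in which each entry stores the SHA-256 hash of its own payload combined with the previous entry's hash. This is an illustrative assumption rather than a prescribed design. It makes later edits detectable but does not prevent them, and it is not a substitute for digital signatures. The entry layout and function names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(payload, previous_hash):
    """SHA-256 over the payload and the previous entry's hash."""
    body = json.dumps({"payload": payload, "prev": previous_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a payload to the chain, linking it to the last entry."""
    prev = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({"payload": payload, "prev": prev, "hash": record_hash(payload, prev)})


def verify_chain(chain):
    """Return True only if every entry matches its stored hash and link."""
    prev = GENESIS_HASH
    for entry in chain:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != record_hash(entry["payload"], prev):
            return False
        prev = entry["hash"]
    return True


# Hypothetical calibration log entries.
audit_chain = []
append_record(audit_chain, {"event": "calibration", "velocity_m_per_s": 5900.0})
append_record(audit_chain, {"event": "adjustment", "offset_m_per_s": -1.5})
```

Because each hash depends on the one before it, changing any earlier payload invalidates every entry from that point on, which is what `verify_chain` checks.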
