How to Integrate Multiple Sensors for Mobile Manipulation

APR 24, 2026 · 9 MIN READ

Multi-Sensor Mobile Manipulation Background and Objectives

Mobile manipulation represents a convergence of robotics, artificial intelligence, and sensor technologies that has evolved significantly over the past two decades. The field emerged from the fundamental need to create autonomous systems capable of navigating complex environments while simultaneously performing precise manipulation tasks. Early developments in the 1990s focused primarily on stationary manipulators or simple mobile platforms, but the integration of these capabilities remained largely theoretical due to computational and sensor limitations.

The evolution of mobile manipulation has been driven by advances in several key technological domains. Computer vision systems have progressed from basic edge detection algorithms to sophisticated deep learning models capable of real-time object recognition and scene understanding. Simultaneously, sensor miniaturization and cost reduction have enabled the deployment of multiple high-quality sensors on single platforms. The development of robust simultaneous localization and mapping algorithms has provided the foundational capability for mobile platforms to operate in unknown environments.

Current technological trends indicate a shift toward heterogeneous sensor architectures that combine complementary sensing modalities. LiDAR systems provide precise geometric measurements and obstacle detection capabilities, while RGB-D cameras offer rich visual information and depth perception. Inertial measurement units contribute essential motion sensing, and tactile sensors enable sophisticated manipulation feedback. The challenge lies not merely in collecting this diverse sensor data, but in creating unified representations that enable coherent decision-making.

The primary technical objective centers on developing robust sensor fusion frameworks that can handle the inherent uncertainties and temporal misalignments present in multi-modal sensor streams. This requires addressing fundamental challenges in data synchronization, coordinate frame transformation, and uncertainty propagation across different sensor modalities. The system must maintain real-time performance while processing high-dimensional sensor data and generating appropriate control commands for both navigation and manipulation subsystems.
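
Coordinate frame transformation, one of the challenges named above, can be sketched in a few lines. The example below is a minimal planar (SE(2)) illustration, not a production transform library; the poses `world_T_base` and `base_T_camera` and all numeric values are hypothetical.

```python
import math

def make_transform(x, y, theta):
    """Return a 3x3 homogeneous SE(2) transform as nested lists."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, x],
            [s,  c, y],
            [0.0, 0.0, 1.0]]

def compose(a, b):
    """Matrix product a @ b for 3x3 nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def apply(t, point):
    """Map a 2D point through the transform t."""
    px, py = point
    return (t[0][0] * px + t[0][1] * py + t[0][2],
            t[1][0] * px + t[1][1] * py + t[1][2])

# Hypothetical poses: robot base in the world frame, camera mounted on the base.
world_T_base = make_transform(2.0, 1.0, math.pi / 2)
base_T_camera = make_transform(0.3, 0.0, 0.0)
world_T_camera = compose(world_T_base, base_T_camera)

# An object detected 1 m straight ahead of the camera, expressed in the world frame.
object_in_world = apply(world_T_camera, (1.0, 0.0))  # approximately (2.0, 2.3)
```

Chaining transforms this way keeps every sensor's data expressible in one common frame, which is the precondition for fusing it.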

Secondary objectives include achieving adaptive sensor selection based on task requirements and environmental conditions. The system should demonstrate graceful degradation when individual sensors fail or provide unreliable data. Additionally, the integration framework must support scalable architectures that can accommodate new sensor types without requiring complete system redesign, ensuring long-term technological viability and adaptability to emerging sensing technologies.
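
One simple way to picture graceful degradation is a staleness check that maps the set of healthy sensors to an operating mode. The sketch below is an assumption-laden illustration: the sensor names, the `max_age` thresholds, and the three modes are hypothetical and are not taken from any particular platform.

```python
from dataclasses import dataclass

@dataclass
class SensorStatus:
    name: str
    last_stamp: float  # time of the most recent sample, in seconds
    max_age: float     # samples older than this are treated as stale

def healthy(sensor, now):
    """A sensor counts as healthy if its latest sample is recent enough."""
    return (now - sensor.last_stamp) <= sensor.max_age

def select_mode(sensors, now):
    """Pick an operating mode from the set of currently healthy sensors."""
    ok = {s.name for s in sensors if healthy(s, now)}
    if {"lidar", "camera", "imu"} <= ok:
        return "full_fusion"
    # One exteroceptive sensor plus the IMU: keep moving, but conservatively.
    if {"lidar", "imu"} <= ok or {"camera", "imu"} <= ok:
        return "reduced_speed"
    return "safe_stop"

# Hypothetical snapshot at t = 10 s in which the camera stream has stalled.
sensors = [
    SensorStatus("lidar", 9.95, 0.2),
    SensorStatus("camera", 9.0, 0.1),
    SensorStatus("imu", 9.99, 0.05),
]
mode = select_mode(sensors, now=10.0)  # "reduced_speed"
```

Adding a new sensor type then means extending the mode table rather than redesigning the pipeline.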

Market Demand for Advanced Mobile Manipulation Systems

The global market for advanced mobile manipulation systems is experiencing unprecedented growth driven by the increasing demand for automation across multiple industries. Manufacturing sectors are leading this transformation, seeking robotic solutions that can seamlessly integrate complex sensor arrays to perform precise manipulation tasks in dynamic environments. The automotive industry particularly demands systems capable of handling delicate assembly operations while maintaining high accuracy and reliability standards.

Healthcare applications represent another significant growth driver, where mobile manipulation systems equipped with sophisticated sensor integration capabilities are revolutionizing surgical procedures, patient care, and pharmaceutical operations. The need for sterile, precise, and adaptive robotic systems in medical environments has created substantial market opportunities for advanced sensor fusion technologies.

Logistics and warehousing sectors are rapidly adopting mobile manipulation systems to address labor shortages and improve operational efficiency. E-commerce growth has intensified the demand for robots capable of handling diverse objects with varying shapes, weights, and fragility levels. These applications require robust multi-sensor integration to ensure reliable object recognition, grasping, and manipulation in cluttered environments.

The agricultural sector is emerging as a promising market segment, driven by the need for precision farming and automated harvesting solutions. Mobile manipulation systems with integrated sensor arrays can perform selective picking, quality assessment, and handling of agricultural products, addressing both labor challenges and productivity requirements.

Service robotics applications in hospitality, retail, and domestic environments are creating new market segments. These applications demand highly sophisticated sensor integration capabilities to safely interact with humans and navigate complex, unpredictable environments while performing manipulation tasks.

Market growth is further accelerated by technological advancements in artificial intelligence, computer vision, and sensor miniaturization, making advanced mobile manipulation systems more accessible and cost-effective. Government initiatives promoting industrial automation and smart manufacturing are providing additional market momentum, particularly in developed economies seeking to maintain competitive advantages through technological innovation.

Current State and Challenges in Sensor Integration

The current landscape of sensor integration for mobile manipulation presents a complex technological ecosystem where multiple sensing modalities must work cohesively to enable autonomous robotic systems. Contemporary mobile manipulation platforms typically incorporate vision sensors, LiDAR, IMUs, force/torque sensors, tactile sensors, and proprioceptive encoders. However, achieving seamless integration remains challenging due to fundamental differences in sensor characteristics, data formats, and temporal synchronization requirements.

Existing sensor fusion architectures predominantly rely on centralized processing approaches, where raw sensor data streams converge at a single computational unit. This methodology creates significant bottlenecks in real-time applications, particularly when processing high-bandwidth sensors like RGB-D cameras and 3D LiDAR simultaneously. Current systems struggle with latency issues, often experiencing delays of 50-200 milliseconds between sensor acquisition and actionable output, which severely impacts dynamic manipulation tasks.

Calibration and spatial registration represent persistent technical hurdles in multi-sensor integration. Maintaining accurate extrinsic calibration between heterogeneous sensors mounted on mobile platforms proves challenging due to mechanical vibrations, thermal expansion, and long-term drift. Traditional calibration methods require controlled environments and periodic recalibration, limiting operational flexibility and increasing maintenance overhead.

Data association and correspondence matching across different sensor modalities remain computationally intensive processes. Current algorithms struggle to establish reliable correlations between visual features, point cloud data, and tactile feedback in dynamic environments. This challenge becomes particularly acute when dealing with occlusions, varying lighting conditions, and objects with similar geometric properties but different material characteristics.

Temporal synchronization issues plague existing sensor integration frameworks, as different sensors operate at varying sampling rates and exhibit distinct latency profiles. Vision sensors typically operate at 30-60 Hz, while tactile sensors may sample at kilohertz frequencies, creating temporal misalignment that degrades fusion accuracy. Current timestamp-based synchronization methods often prove insufficient for precise manipulation tasks requiring sub-millisecond coordination.
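
A common software-side mitigation for rate mismatch is to resample the fast stream at the timestamps of the slow one. The sketch below linearly interpolates a kilohertz-rate tactile signal at a camera frame time. It assumes both streams are already stamped against the same clock, and the sample values are made up for illustration.

```python
from bisect import bisect_left

def interpolate_at(stamps, values, t):
    """Linearly interpolate a sampled signal at time t.

    stamps must be sorted in ascending order. Returns None when t lies
    outside the sampled interval, so callers never extrapolate silently.
    """
    if not stamps or t < stamps[0] or t > stamps[-1]:
        return None
    i = bisect_left(stamps, t)
    if stamps[i] == t:
        return values[i]
    t0, t1 = stamps[i - 1], stamps[i]
    w = (t - t0) / (t1 - t0)
    return values[i - 1] + w * (values[i] - values[i - 1])

# Hypothetical 1 kHz tactile samples (seconds, newtons).
tactile_stamps = [0.000, 0.001, 0.002, 0.003]
tactile_force = [0.0, 0.2, 0.4, 0.6]

# Force estimate at a camera frame captured at t = 1.5 ms (about 0.3 N).
force_at_frame = interpolate_at(tactile_stamps, tactile_force, 0.0015)
```

Interpolation only aligns the data; it does not remove the per-sensor latency, which still has to be measured and subtracted from the timestamps beforehand.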

Processing power limitations constrain the sophistication of sensor fusion algorithms deployable on mobile platforms. Battery-powered robots must balance computational complexity with energy efficiency, often forcing compromises in algorithm sophistication. Edge computing solutions show promise but introduce additional complexity in distributed processing architectures and wireless communication reliability.

Environmental robustness remains a significant challenge, as sensor performance degrades under adverse conditions such as dust, moisture, extreme temperatures, and electromagnetic interference. Current integration approaches lack adaptive mechanisms to dynamically adjust sensor weighting and fusion strategies based on real-time environmental assessment and individual sensor reliability metrics.
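
Adaptive sensor weighting of the kind described as missing here can be illustrated with inverse-variance fusion, where a reliability score inflates the variance of a degraded sensor. This is a minimal sketch under stated assumptions: the `reliability` score in (0, 1] and all measurement values are hypothetical, and how such a score would be estimated is left open.

```python
def fuse(estimates):
    """Inverse-variance weighted fusion of (value, variance) pairs."""
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total

def inflate(variance, reliability):
    """Scale variance up as reliability drops; 1.0 means fully trusted."""
    return variance / reliability

# Hypothetical range readings (metres, variance) from a LiDAR and a depth camera.
lidar = (2.00, 0.01)
camera = (2.10, 0.04)

nominal, nominal_var = fuse([lidar, camera])  # about 2.02 m, variance 0.008

# Same readings, but the camera is judged only 25% reliable (e.g. dust on the lens).
degraded_camera = (camera[0], inflate(camera[1], 0.25))
degraded, degraded_var = fuse([lidar, degraded_camera])  # pulled toward the LiDAR
```

Because the weight of each sensor falls smoothly with its reliability, a degraded sensor is discounted rather than abruptly dropped.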

Existing Multi-Sensor Fusion Solutions for Mobile Robots

01 Multi-sensor fusion for robotic manipulation

Integration of multiple sensor types such as vision sensors, force sensors, tactile sensors, and proximity sensors to enable robots to perceive and interact with their environment more effectively. Sensor fusion algorithms combine data from different sensors to improve object detection, localization, and manipulation accuracy. This approach enhances the robot's ability to handle complex manipulation tasks by providing comprehensive environmental awareness.

- Multi-sensor fusion for robotic manipulation: Integration of multiple sensor types such as vision sensors, force sensors, and proximity sensors to enable robots to perceive and interact with their environment more effectively. The fusion of data from different sensors allows for improved object detection, recognition, and manipulation accuracy. This approach enhances the robot's ability to handle complex tasks by combining complementary information from various sensing modalities.

- Vision-based mobile manipulation systems: Utilization of camera systems and image processing techniques to guide mobile manipulators in performing tasks. These systems employ visual feedback to identify objects, determine their positions and orientations, and plan appropriate manipulation strategies. Advanced vision algorithms enable real-time tracking and adaptive control during manipulation operations.

- Tactile and force sensing for manipulation control: Implementation of tactile sensors and force-torque sensors in robotic grippers and end-effectors to provide haptic feedback during manipulation tasks. These sensors enable the robot to detect contact forces, measure grip strength, and adjust manipulation parameters in real-time. This capability is essential for handling delicate objects and performing precision assembly operations.

- Sensor-guided autonomous navigation for mobile platforms: Employment of multiple sensors including lidar, ultrasonic sensors, and inertial measurement units to enable autonomous navigation of mobile manipulation platforms. The sensor array provides environmental mapping, obstacle detection, and localization capabilities. This allows mobile manipulators to navigate safely in dynamic environments while positioning themselves optimally for manipulation tasks.

- Coordinated control systems for multi-sensor mobile manipulators: Development of integrated control architectures that coordinate information from multiple sensors to achieve synchronized motion of mobile base and manipulator arm. These systems process sensor data to generate coordinated trajectories and ensure stable manipulation during platform movement. The control framework manages the coupling between mobility and manipulation to optimize task performance.

02 Vision-based mobile manipulation systems

Utilization of camera systems and computer vision algorithms to guide mobile manipulators in performing tasks. These systems employ image processing techniques for object recognition, pose estimation, and trajectory planning. Visual servoing methods enable real-time adjustment of manipulator movements based on visual feedback, allowing robots to adapt to dynamic environments and perform precise manipulation operations.

03 Force and tactile sensing for manipulation control

Implementation of force and tactile sensors in robotic grippers and end-effectors to enable compliant manipulation and safe interaction with objects. These sensors provide feedback on contact forces, slip detection, and object properties, allowing for adaptive grasp control and delicate handling of fragile items. The sensory information is used to adjust grip strength and manipulation strategies in real-time.

04 Autonomous navigation with manipulation capabilities

Mobile platforms equipped with navigation sensors such as lidar, ultrasonic sensors, and inertial measurement units that enable autonomous movement while performing manipulation tasks. These systems integrate localization, mapping, and path planning algorithms to navigate complex environments while coordinating arm movements for object manipulation. The combination allows robots to perform tasks that require both mobility and dexterous manipulation.

05 Sensor-based grasp planning and execution

Advanced algorithms that utilize sensor data to plan and execute optimal grasping strategies for various objects. These systems analyze object geometry, surface properties, and environmental constraints using multiple sensor modalities to determine the best approach for manipulation. Real-time sensor feedback enables adaptive grasp adjustment and error recovery during manipulation operations, improving success rates in unstructured environments.

Key Players in Mobile Robotics and Sensor Integration

The mobile manipulation sensor integration field is an emerging, rapidly evolving landscape with significant growth potential and diverse market participation. The industry is in its early-to-mid development stage, with market expansion driven by increasing automation demands across manufacturing, logistics, and service sectors. Technology maturity varies considerably among participants. Established companies such as Boston Dynamics, Google LLC, and DJI demonstrate advanced integration capabilities through their commercial robotic platforms. Academic institutions including Tsinghua University, HKUST, and Southeast University contribute foundational research, while component suppliers such as QUALCOMM and OMRON provide processing and sensor hardware. Specialized robotics companies like Vision Robotics Corp. and Shenzhen 3irobotix focus on application-specific solutions. The result is a fragmented but innovative competitive environment in which the convergence of AI, sensor fusion, and robotic control is accelerating market maturation and commercial viability.

SZ DJI Technology Co., Ltd.

Technical Solution: DJI has developed comprehensive sensor integration solutions primarily for aerial manipulation platforms, combining visual-inertial odometry (VIO), ultrasonic sensors, GPS, and gimbal-mounted cameras. Their multi-sensor fusion approach uses Kalman filtering and deep learning algorithms to enable precise positioning and obstacle avoidance during flight operations. The company's mobile manipulation systems integrate force/torque sensors with visual servoing capabilities, allowing drones to perform delicate manipulation tasks such as cargo delivery and inspection operations. Their sensor suite includes redundant IMU systems, barometric pressure sensors, and advanced computer vision modules that work together to maintain stable flight while executing manipulation commands.
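
Kalman filtering, mentioned above as part of the fusion approach, is easiest to see in its scalar form. The sketch below is a generic textbook filter with a constant-state model; it is not DJI's implementation, and the noise parameters `q` and `r` and the altitude readings are hypothetical.

```python
def kalman_step(x, p, z, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    x, p: prior state estimate and its variance
    z:    new measurement
    q, r: process and measurement noise variances
    """
    p = p + q            # predict: uncertainty grows between measurements
    k = p / (p + r)      # Kalman gain: how much to trust the measurement
    x = x + k * (z - x)  # update the estimate toward the measurement
    p = (1.0 - k) * p    # updated uncertainty shrinks
    return x, p

# Hypothetical noisy altitude readings in metres.
estimate, variance = 0.0, 1.0
for reading in [1.1, 0.9, 1.05, 0.98]:
    estimate, variance = kalman_step(estimate, variance, reading, q=0.001, r=0.1)
```

Real multi-sensor systems use the vector form with a motion model, but the gain computation follows the same pattern: the less noisy source gets the larger weight.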

Strengths: Mature aerial platform technology, excellent flight stability and control systems. Weaknesses: Limited to aerial applications, payload constraints affect manipulation capabilities.

OMRON Corp.

Technical Solution: OMRON has developed industrial mobile manipulation systems that integrate multiple sensors including vision systems, force sensors, proximity sensors, and encoders for factory automation applications. Their sensor integration approach uses their SYSMAC platform to coordinate data from various sensors through industrial Ethernet networks, enabling precise control of mobile manipulator arms in manufacturing environments. The system combines 3D vision sensors with tactile feedback and position encoders to perform assembly operations, quality inspection, and material handling tasks. OMRON's multi-sensor integration framework includes safety-rated sensors and redundant systems to ensure reliable operation in industrial settings, with real-time processing capabilities for high-speed manipulation operations.

Strengths: Proven industrial automation expertise, robust safety systems, reliable performance in manufacturing environments. Weaknesses: Primarily focused on structured industrial environments, limited adaptability to unstructured settings.

Core Technologies in Sensor Integration Algorithms

Adaptive sensor position determination for multiple mobile sensors

Patent (Active): US12225499B2

Innovation

- A method that builds a spatio-temporal representation of sensor measurements and applies it to an environment state prediction model and a sensor position determination model. The position determination model selects new positions for the mobile sensors based on predicted future measurements and uncertainty values, facilitating adaptive sensor repositioning.

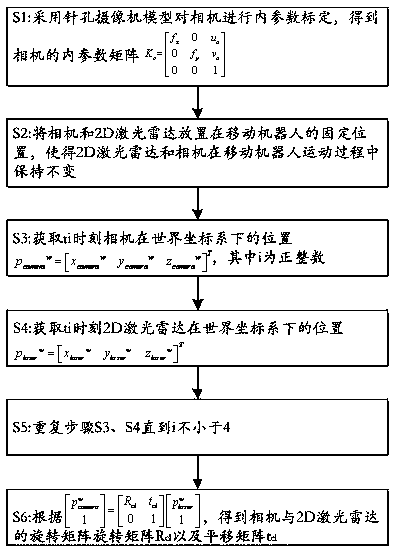

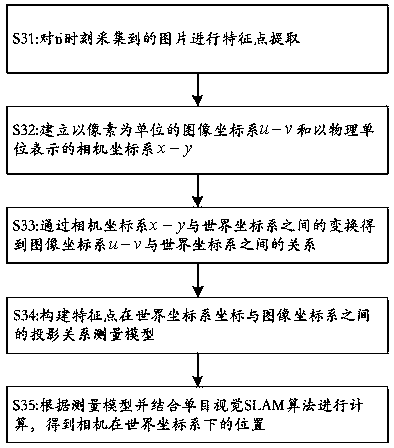

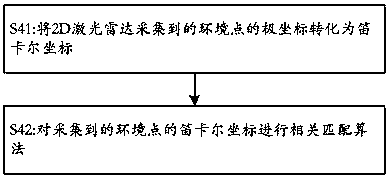

Combined calibration method for multiple sensors of mobile robot

Patent (Active): CN105758426A

Innovation

- The camera is described with the pinhole camera model, and the camera and 2D lidar remain fixed on the mobile robot while it moves. Data are collected at multiple time points and processed through feature point extraction, transformation between the image coordinate system and the world coordinate system, and the ICP algorithm. The resulting rotation matrix and translation vector provide a joint calibration of the camera and 2D lidar.
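
Two building blocks named in this summary can be sketched briefly: pinhole projection, and the closed-form rigid alignment that ICP repeats at each iteration once point correspondences are chosen. This is an illustrative planar sketch, not the patented procedure; the intrinsics `fx, fy, cx, cy` and the sample points are hypothetical, and correspondences are assumed known.

```python
import math

def project(point, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame 3D point (X, Y, Z) to pixels (u, v)."""
    x, y, z = point
    return (fx * x / z + cx, fy * y / z + cy)

def align_2d(source, target):
    """Least-squares rotation and translation mapping source points onto target.

    Points are paired by index. Returns (theta, (tx, ty)) such that
    target is approximately R(theta) * source + t.
    """
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy   # source point relative to its centroid
        bx, by = qx - tx, qy - ty   # target point relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, (tx - (c * sx - s * sy), ty - (s * sx + c * sy))

# Hypothetical intrinsics: a point 2 m in front of the camera lands at (470, 315).
pixel = project((0.5, 0.25, 2.0), fx=600.0, fy=600.0, cx=320.0, cy=240.0)
```

A full ICP loop would alternate between re-pairing each source point with its nearest target point and calling `align_2d` until the transform stops changing.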

Safety Standards for Mobile Manipulation Systems

Safety standards for mobile manipulation systems represent a critical framework that governs the development and deployment of multi-sensor integrated robotic platforms. These standards establish comprehensive guidelines for ensuring human safety, environmental protection, and system reliability when robots operate in dynamic environments alongside humans. The integration of multiple sensors significantly impacts safety considerations, as sensor fusion creates complex interdependencies that must be carefully managed to prevent catastrophic failures.

International safety standards such as ISO 10218 for industrial robots and ISO 13482 for personal care robots provide foundational requirements that mobile manipulation systems must satisfy. These standards mandate specific safety functions including emergency stop capabilities, collision detection, and fail-safe behaviors. When multiple sensors are integrated, compliance becomes more complex as each sensor modality must contribute to overall system safety while maintaining redundancy for critical safety functions.

Functional safety frameworks such as IEC 61508 and, for road vehicles, ISO 26262 demand rigorous hazard analysis and risk assessment for sensor integration architectures. Integrity levels (SIL under IEC 61508, ASIL under ISO 26262) must be assigned based on risk, including potential harm severity, with higher levels requiring more robust sensor redundancy and fault detection mechanisms. Multi-sensor systems must implement systematic approaches to handle sensor degradation, occlusion, and failure scenarios without compromising operational safety.
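
Sensor redundancy with fault detection is often illustrated by median voting across three channels. The sketch below shows only the idea; it is not a certified safety function, and the `tolerance` threshold and readings are hypothetical.

```python
from statistics import median

def vote(readings, tolerance):
    """Median voting over redundant readings of the same quantity.

    Returns (voted_value, faulty_indices), where a channel is flagged as
    faulty when it deviates from the median by more than the tolerance.
    """
    m = median(readings)
    faulty = [i for i, r in enumerate(readings) if abs(r - m) > tolerance]
    return m, faulty

# Three hypothetical proximity channels in metres; the third has failed high.
distance, faulty = vote([1.00, 1.02, 3.50], tolerance=0.1)
# distance is 1.02 and faulty is [2]
```

With three channels, a single faulty reading cannot move the median outside the range of the two healthy ones, which is why triple redundancy tolerates one failure.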

Risk assessment methodologies specifically address sensor fusion challenges in mobile manipulation contexts. Hazard identification processes must consider sensor interference, data latency issues, and computational failures that could lead to incorrect environmental perception. Safety standards require comprehensive testing protocols that validate sensor performance under various environmental conditions, including lighting variations, weather effects, and electromagnetic interference.

Certification processes for mobile manipulation systems involve extensive documentation of sensor integration safety measures. Regulatory bodies require proof of compliance through formal verification methods, simulation testing, and real-world validation scenarios. The certification framework ensures that multi-sensor integration enhances rather than compromises system safety, establishing clear accountability for sensor-related safety functions throughout the system lifecycle.

Real-Time Processing Requirements for Sensor Integration

Real-time processing represents a critical bottleneck in multi-sensor integration for mobile manipulation systems, where sensor data must be processed within strict temporal constraints to enable effective robotic control. The fundamental challenge lies in achieving deterministic processing latencies while maintaining high data throughput across heterogeneous sensor modalities including vision, LiDAR, tactile, and proprioceptive sensors.

Processing latency requirements vary significantly across different sensor types and manipulation tasks. Vision-based object recognition typically demands processing cycles within 50-100 milliseconds, while tactile feedback for fine manipulation requires sub-millisecond response times. Force-torque sensors used in contact-rich tasks necessitate processing frequencies exceeding 1 kHz to maintain stable control loops. These varying temporal requirements create complex scheduling challenges for real-time systems.
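
The scheduling problem can be pictured as a single base loop that runs slower tasks on every Nth tick. The sketch below assumes a 1 kHz base rate and hypothetical task rates chosen to divide it evenly; a real system would rely on a real-time scheduler rather than a Python loop.

```python
BASE_RATE_HZ = 1000  # assumed base loop rate (one tick per millisecond)

# Hypothetical task rates in Hz; each must divide the base rate evenly.
TASKS = {
    "force_control": 1000,  # contact-rich control loop
    "imu_update": 200,
    "vision_update": 20,    # one cycle every 50 ms
}

def tasks_due(tick):
    """Return the names of the tasks that should run on this base-loop tick."""
    due = []
    for name, rate in TASKS.items():
        period = BASE_RATE_HZ // rate  # ticks between runs of this task
        if tick % period == 0:
            due.append(name)
    return due

# Count how often each task runs during one second of ticks.
runs = {name: 0 for name in TASKS}
for tick in range(BASE_RATE_HZ):
    for name in tasks_due(tick):
        runs[name] += 1
```

Ticks on which every task is due (tick 0, 50, 100, and so on) are the worst case for the timing budget, so those are the ticks to profile.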

Memory bandwidth and computational resource allocation emerge as primary constraints in real-time sensor integration. High-resolution cameras generating data streams at 30-60 fps, combined with dense point clouds from LiDAR sensors, can saturate available memory bandwidth. Efficient data structures and memory management strategies become essential to prevent buffer overflows and maintain consistent processing performance.
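
A back-of-the-envelope estimate makes the bandwidth pressure concrete. The resolutions, pixel sizes, and LiDAR point rate below are hypothetical example values, and the calculation covers raw, uncompressed data only.

```python
def stream_mb_per_s(width, height, bytes_per_pixel, fps):
    """Raw data rate of an uncompressed image stream in megabytes per second."""
    return width * height * bytes_per_pixel * fps / 1e6

# Hypothetical sensor suite.
rgb = stream_mb_per_s(1920, 1080, 3, 30)   # 1080p colour at 30 fps: 186.624 MB/s
depth = stream_mb_per_s(640, 480, 2, 30)   # 16-bit depth at 30 fps: 18.432 MB/s
lidar = 300_000 * 16 / 1e6                 # 300k points/s at 16 bytes each: 4.8 MB/s

total = rgb + depth + lidar                # roughly 210 MB/s before any copies
```

Each extra copy of a frame between pipeline stages multiplies this figure, which is why zero-copy buffers and in-place processing matter.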

Synchronization mechanisms play a crucial role in ensuring temporal coherence across multiple sensor streams. Hardware-level synchronization using external trigger signals provides the most precise timing control, while software-based synchronization introduces variable delays that can compromise real-time performance. Time-stamping accuracy and clock drift compensation become critical factors in maintaining sensor fusion quality.
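
Clock drift compensation can be approximated in software by fitting a line between a sensor's timestamps and the host clock. The sketch below uses ordinary least squares on paired timestamps; the pairs are synthetic, and it assumes the transport delay between sensor and host is constant, which real links only approximate.

```python
def fit_clock(sensor_times, host_times):
    """Least-squares fit of host_time = offset + scale * sensor_time."""
    n = len(sensor_times)
    mean_s = sum(sensor_times) / n
    mean_h = sum(host_times) / n
    sxx = sum((s - mean_s) ** 2 for s in sensor_times)
    sxy = sum((s - mean_s) * (h - mean_h)
              for s, h in zip(sensor_times, host_times))
    scale = sxy / sxx                 # 1.0 means no drift
    offset = mean_h - scale * mean_s  # constant offset between the clocks
    return offset, scale

def to_host(sensor_time, offset, scale):
    """Convert a sensor timestamp to the host clock using the fitted model."""
    return offset + scale * sensor_time

# Synthetic pairs: the sensor clock starts 0.5 s behind and runs 100 ppm slow.
sensor_stamps = [0.0, 1.0, 2.0, 3.0]
host_stamps = [0.5 + 1.0001 * s for s in sensor_stamps]
offset, scale = fit_clock(sensor_stamps, host_stamps)
```

Refitting over a sliding window keeps the model current as temperature and load change the drift rate.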

Edge computing architectures are increasingly adopted to distribute processing loads and reduce communication latencies. Dedicated processing units for specific sensor types, such as GPU acceleration for vision processing and FPGA implementations for low-latency control loops, enable parallel processing while meeting real-time constraints. This distributed approach requires careful orchestration to maintain overall system coherence.

Adaptive processing strategies offer promising solutions for managing variable computational loads. Dynamic algorithm selection based on available processing time, progressive refinement techniques that provide increasingly accurate results over time, and predictive resource allocation help maintain real-time performance under varying operational conditions while preserving manipulation task effectiveness.
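
Dynamic algorithm selection can be sketched as choosing the most accurate option that fits the remaining time budget. The algorithm names, runtimes, and accuracy scores below are hypothetical placeholders rather than measurements.

```python
# (name, expected runtime in ms, relative accuracy): all values hypothetical.
ALGORITHMS = [
    ("dense_registration", 40.0, 0.95),
    ("sparse_features", 12.0, 0.85),
    ("centroid_only", 2.0, 0.60),
]

def select_algorithm(budget_ms):
    """Return the most accurate algorithm whose runtime fits the budget.

    Returns None when nothing fits, so the caller can skip this cycle
    or reuse the previous result.
    """
    feasible = [a for a in ALGORITHMS if a[1] <= budget_ms]
    if not feasible:
        return None
    return max(feasible, key=lambda a: a[2])[0]

choice_relaxed = select_algorithm(50.0)  # "dense_registration"
choice_tight = select_algorithm(15.0)    # "sparse_features"
```

In practice the runtime column would be updated from online measurements, so the selection adapts as the load on the processor changes.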
