How To Validate Kalman Filter Models With Experimental Data

SEP 5, 2025 · 9 MIN READ

Kalman Filter Validation Background and Objectives

Kalman filtering, introduced by Rudolf E. Kalman in 1960, was a significant breakthrough in estimation theory and has become a cornerstone of state estimation in dynamic systems. Its first major application was in aerospace, most famously in the navigation system of the Apollo program, and the recursive algorithm has since spread to robotics, autonomous vehicles, financial forecasting, and industrial control systems. Its fundamental principle, combining a mathematical model of the system with noisy real-world measurements to produce state estimates that are optimal in the minimum mean-square-error sense for linear systems with Gaussian noise, continues to drive its widespread adoption in modern engineering applications.

The validation of Kalman filter models against experimental data has become increasingly critical as these filters are deployed in safety-critical and high-reliability systems. This validation process ensures that theoretical models accurately represent real-world dynamics and that filter performance meets operational requirements under varying conditions. The growing complexity of modern systems, coupled with heightened performance expectations, has elevated the importance of robust validation methodologies.

Current validation approaches often suffer from inconsistency and lack standardization across different application domains. Engineers frequently rely on ad hoc methods that may not comprehensively evaluate filter performance or identify potential failure modes. This technical gap presents significant challenges for system certification, particularly in regulated industries where formal verification is mandatory.

The primary objective of this technical investigation is to establish a systematic framework for validating Kalman filter implementations using experimental data. This framework aims to bridge the gap between theoretical filter design and practical implementation by providing quantifiable metrics and methodologies that can be consistently applied across various application domains.

Specifically, this investigation seeks to: (1) identify key performance indicators that effectively measure Kalman filter accuracy, stability, and robustness; (2) develop standardized testing protocols that can reveal filter limitations under diverse operating conditions; (3) establish statistical methods for quantifying the confidence levels in filter estimates; and (4) create guidelines for tuning filter parameters based on experimental validation results.

Recent technological advancements in sensor technology, computational capabilities, and data analytics have created new opportunities for more sophisticated validation techniques. These developments enable more comprehensive testing regimes and real-time performance monitoring that were previously impractical due to computational constraints. By leveraging these capabilities, this investigation aims to advance the state of practice in Kalman filter validation and contribute to more reliable estimation systems across industries.

Market Applications and Demand Analysis for Validated Filters

The market for validated Kalman filter models spans numerous high-value industries where accurate state estimation and prediction are critical operational components. The autonomous vehicle sector is one of the fastest-growing markets, with one projection putting it at $556.67 billion by 2026, and validated Kalman filters serve as essential components in its sensor fusion systems for reliable navigation and obstacle detection. Properly validated filters directly affect safety ratings and regulatory approval processes, making them indispensable for market entry.

Aerospace and defense applications constitute another significant market segment, where validated Kalman filters enable precise tracking of aircraft, satellites, and military assets. The increasing deployment of unmanned aerial vehicles (UAVs) for both commercial and defense purposes has further amplified demand for robust filtering solutions that can operate reliably in dynamic environments with varying levels of measurement noise.

Industrial automation and robotics represent a third major market, with manufacturing facilities increasingly adopting advanced sensing and control systems that rely on validated Kalman filters for real-time process monitoring and quality control. The Industry 4.0 transformation has accelerated this trend, with predictive maintenance applications particularly benefiting from accurate state estimation to forecast equipment failures before they occur.

Consumer electronics manufacturers have also emerged as significant stakeholders, incorporating Kalman filters in smartphones, wearables, and augmented reality devices to enhance motion sensing, image stabilization, and location-based services. Market research indicates that validated filters providing superior performance can command premium pricing in these competitive markets.

Financial institutions represent an emerging application area, using Kalman filters for algorithmic trading, risk assessment, and economic forecasting. Validating these models against historical market data is a prerequisite for relying on them in trading and risk management, which drives demand for sophisticated validation methodologies.

Healthcare applications are experiencing rapid growth, with medical imaging, patient monitoring systems, and robotic surgery all benefiting from validated Kalman filter implementations. Regulatory requirements in this sector are particularly stringent, necessitating comprehensive validation protocols to ensure patient safety and treatment efficacy.

Market analysis reveals that organizations are increasingly willing to invest in advanced validation tools and methodologies that can reduce development cycles and improve filter performance. This trend is particularly evident in safety-critical applications where the cost of filter failure far exceeds the investment in thorough validation processes.

Current Validation Methodologies and Technical Challenges

The validation of Kalman filter models with experimental data currently relies on several established methodologies, each with specific strengths and limitations. The most widely adopted is innovation-based validation, which analyzes the statistical properties of the innovation sequence, that is, the difference between each measurement and the filter's prediction of it. When the model and noise statistics are correct, this sequence should be zero-mean white noise whose covariance matches the innovation covariance predicted by the filter. Deviations from these properties indicate model inadequacies or incorrect filter tuning.
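
A minimal sketch of the whiteness check, assuming a scalar random-walk model with hypothetical noise variances `q_true` and `r_true` chosen only for illustration. It estimates the sample autocorrelation of the innovations at lags 1 to 20 and counts how many fall outside the approximate 95% band ±1.96/√N that applies to white noise for large N; roughly 5% of lags are expected outside for a well-tuned filter. The helper names are illustrative, not from any library, and the zero-mean and covariance checks would be separate tests.

```python
import numpy as np

def scalar_kf_innovations(z, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter (hypothetical helper); returns the innovation sequence."""
    x, p = x0, p0
    innov = np.empty(len(z))
    for k, zk in enumerate(z):
        p = p + q               # predict: x_k = x_{k-1} + w_k, w_k ~ N(0, q)
        nu = zk - x             # innovation: measurement minus predicted measurement
        gain = p / (p + r)      # Kalman gain, with innovation variance S = p + r
        x = x + gain * nu       # measurement update of the state
        p = (1.0 - gain) * p    # measurement update of the variance
        innov[k] = nu
    return innov

def whiteness_test(innov, max_lag=20, z_crit=1.96):
    """Sample autocorrelation at lags 1..max_lag and the fraction outside +/- z_crit/sqrt(N)."""
    e = innov - innov.mean()
    n = len(e)
    c0 = np.dot(e, e) / n
    rho = np.array([np.dot(e[:-k], e[k:]) / (n * c0) for k in range(1, max_lag + 1)])
    frac_outside = float(np.mean(np.abs(rho) > z_crit / np.sqrt(n)))
    return rho, frac_outside

# Synthetic data from a known model (hypothetical values)
rng = np.random.default_rng(0)
q_true, r_true, n = 0.01, 0.25, 2000
x_true = np.cumsum(rng.normal(0.0, np.sqrt(q_true), n))
z = x_true + rng.normal(0.0, np.sqrt(r_true), n)

_, frac_ok = whiteness_test(scalar_kf_innovations(z, q_true, r_true))
_, frac_bad = whiteness_test(scalar_kf_innovations(z, q_true / 100, r_true))  # q badly underestimated
print(f"lags outside the 95% band: tuned={frac_ok:.2f}, mistuned={frac_bad:.2f}")
```

Underestimating the process noise makes the filter lag the true state, so consecutive innovations share the same tracking error and the autocorrelation stays far outside the band.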

Residual analysis is another fundamental validation technique, in which the differences between actual measurements and the measurements predicted or reconstructed by the filter are systematically examined. This approach typically applies statistical tests such as chi-square tests to determine whether the residuals, normalized by their predicted covariance, fall within expected confidence bounds. However, practitioners often struggle to choose appropriate thresholds for accepting or rejecting models, particularly in systems with complex noise characteristics.
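
One common form of the chi-square test uses the normalized innovation squared, NIS_k = ν_kᵀ S_k⁻¹ ν_k. If the filter is consistent and the innovations are independent, each NIS_k is χ² with m degrees of freedom (m = measurement dimension), so N times the time-averaged NIS is χ² with Nm degrees of freedom, which yields a two-sided acceptance region. The sketch below assumes a scalar random-walk model with hypothetical parameters and uses `scipy.stats.chi2.ppf` for the quantiles; the function names are illustrative.

```python
import numpy as np
from scipy.stats import chi2

def scalar_kf_nis(z, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter (hypothetical helper) returning NIS_k = nu_k^2 / S_k."""
    x, p = x0, p0
    nis = np.empty(len(z))
    for k, zk in enumerate(z):
        p += q                   # predicted variance
        s = p + r                # predicted innovation variance S_k
        nu = zk - x              # innovation nu_k
        gain = p / s
        x += gain * nu
        p *= 1.0 - gain
        nis[k] = nu * nu / s     # scalar form of nu^T S^-1 nu
    return nis

def time_averaged_nis_test(nis, meas_dim=1, alpha=0.05):
    """Two-sided test: N * mean(NIS) ~ chi2(N * meas_dim) for a consistent filter."""
    n = len(nis)
    dof = n * meas_dim
    lo = chi2.ppf(alpha / 2, dof) / n
    hi = chi2.ppf(1 - alpha / 2, dof) / n
    avg = float(nis.mean())
    return avg, (lo, hi), bool(lo <= avg <= hi)

rng = np.random.default_rng(1)
q_true, r_true, n = 0.01, 0.25, 1000
x_true = np.cumsum(rng.normal(0.0, np.sqrt(q_true), n))
z = x_true + rng.normal(0.0, np.sqrt(r_true), n)

avg_ok, bounds, ok = time_averaged_nis_test(scalar_kf_nis(z, q_true, r_true))
avg_bad, _, ok_bad = time_averaged_nis_test(scalar_kf_nis(z, q_true, 10 * r_true))  # r overstated
print(f"95% region [{bounds[0]:.3f}, {bounds[1]:.3f}]; "
      f"tuned {avg_ok:.3f} -> {ok}; mistuned {avg_bad:.3f} -> {ok_bad}")
```

Overstating r makes the filter report innovation variances that are too large, so the average NIS falls well below the lower bound.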

Cross-validation has gained prominence in recent years: the data are partitioned into training and validation sets, the filter is calibrated on the training data, and it is then evaluated on the validation set to assess generalization performance. Because filter inputs are time series, the partitions are normally contiguous blocks in time rather than randomly shuffled samples. While effective, this method requires substantial data volumes and may not be feasible for systems with limited experimental observations.
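
A minimal sketch of chronological cross-validation, assuming a scalar random-walk model in which the measurement-noise variance r is known and only the process-noise variance q is calibrated. The calibration step picks q from a grid by minimizing the Gaussian negative log-likelihood computed from the innovations on the first 70% of the record; the held-out 30% is then scored with the average NIS, which should be near 1 for a consistent filter. Maximum likelihood is one calibration criterion among several, and the split ratio, grid, and names are hypothetical.

```python
import numpy as np

def innovations(z, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter (hypothetical helper); returns nu_k and S_k."""
    x, p = x0, p0
    nus, ss = np.empty(len(z)), np.empty(len(z))
    for k, zk in enumerate(z):
        p += q
        s = p + r
        nu = zk - x
        gain = p / s
        x += gain * nu
        p *= 1.0 - gain
        nus[k], ss[k] = nu, s
    return nus, ss

def neg_log_likelihood(z, q, r):
    """Gaussian negative log-likelihood via the innovation (prediction-error) decomposition."""
    nus, ss = innovations(z, q, r)
    return 0.5 * float(np.sum(np.log(2.0 * np.pi * ss) + nus**2 / ss))

rng = np.random.default_rng(2)
q_true, r_true, n = 0.01, 0.25, 3000
x_true = np.cumsum(rng.normal(0.0, np.sqrt(q_true), n))
z = x_true + rng.normal(0.0, np.sqrt(r_true), n)

# Chronological split: the first 70% calibrates, the remaining 30% validates
split = int(0.7 * n)
q_grid = np.logspace(-4, 0, 41)
nll = [neg_log_likelihood(z[:split], q, r_true) for q in q_grid]
q_hat = float(q_grid[int(np.argmin(nll))])

# Run over the full record so the filter state carries into the validation block,
# but score only the held-out innovations
nus, ss = innovations(z, q_hat, r_true)
val_nis = float(np.mean(nus[split:] ** 2 / ss[split:]))
print(f"calibrated q = {q_hat:.4f}; held-out average NIS = {val_nis:.3f}")
```

Running the calibrated filter across the whole record lets the validation segment start from a converged state, while only the held-out innovations contribute to the score.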

Monte Carlo simulations serve as a complementary validation approach, enabling the assessment of filter performance across numerous synthetic scenarios. These simulations help establish confidence in filter robustness but face challenges in accurately representing real-world conditions and unexpected disturbances that may occur in practical applications.
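
A Monte Carlo sketch under stated assumptions: a two-state constant-velocity model with position-only measurements and illustrative noise levels, where the truth is simulated with process noise Q while the filter may use a scaled copy of it. At each time step the NEES is averaged over M independent runs (the ANEES); for a consistent filter, M times the ANEES is χ² with Mn degrees of freedom (n = 2 states), which gives a per-step 95% band. All function names and parameter values are hypothetical.

```python
import numpy as np
from scipy.stats import chi2

def cv_model(dt=1.0, q=0.1, r=1.0):
    """Constant-velocity model with position-only measurements (illustrative parameters)."""
    F = np.array([[1.0, dt], [0.0, 1.0]])
    Q = q * np.array([[dt**3 / 3, dt**2 / 2], [dt**2 / 2, dt]])  # white-noise acceleration
    H = np.array([[1.0, 0.0]])
    R = np.array([[r]])
    return F, Q, H, R

def nees_run(rng, F, Q, H, R, steps, q_scale=1.0):
    """One synthetic run: the truth uses Q, the filter uses q_scale * Q."""
    x_est, P = np.zeros(2), np.eye(2)
    x_true = np.linalg.cholesky(P) @ rng.standard_normal(2)  # truth drawn from the filter's prior
    Lq, Lr = np.linalg.cholesky(Q), np.linalg.cholesky(R)
    Qf = q_scale * Q
    nees = np.empty(steps)
    for k in range(steps):
        # Simulate the true system and a noisy position measurement
        x_true = F @ x_true + Lq @ rng.standard_normal(2)
        z = H @ x_true + Lr @ rng.standard_normal(1)
        # Kalman filter predict / update
        x_est = F @ x_est
        P = F @ P @ F.T + Qf
        S = H @ P @ H.T + R
        K = np.linalg.solve(S, H @ P).T                  # K = P H^T S^-1 (P, S symmetric)
        x_est = x_est + K @ (z - H @ x_est)
        I_KH = np.eye(2) - K @ H
        P = I_KH @ P @ I_KH.T + K @ R @ K.T              # Joseph-form covariance update
        e = x_true - x_est
        nees[k] = e @ np.linalg.solve(P, e)              # NEES_k = e^T P^-1 e
    return nees

def monte_carlo_anees(runs=50, steps=100, q_scale=1.0, alpha=0.05, seed=0):
    """Average NEES over independent runs, with the per-step two-sided chi-square band."""
    rng = np.random.default_rng(seed)
    F, Q, H, R = cv_model()
    nees = np.array([nees_run(rng, F, Q, H, R, steps, q_scale) for _ in range(runs)])
    anees = nees.mean(axis=0)
    n_states = F.shape[0]
    lo = chi2.ppf(alpha / 2, runs * n_states) / runs
    hi = chi2.ppf(1 - alpha / 2, runs * n_states) / runs
    frac_inside = float(np.mean((anees >= lo) & (anees <= hi)))
    return anees, (lo, hi), frac_inside

_, bounds, frac_ok = monte_carlo_anees(q_scale=1.0)
anees_bad, _, frac_bad = monte_carlo_anees(q_scale=0.01)  # process noise underestimated 100x
print(f"band [{bounds[0]:.2f}, {bounds[1]:.2f}]; steps inside: tuned {frac_ok:.2f}, Q/100 {frac_bad:.2f}")
```

About 95% of time steps should fall inside the band for a consistent filter; underestimating Q by a factor of 100 pushes the ANEES well above it once the initial transient has passed.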

A significant technical challenge in Kalman filter validation is the handling of nonlinearities and non-Gaussian noise distributions. Traditional validation methods often assume linear system dynamics and Gaussian noise characteristics, which rarely hold true in complex real-world applications. This discrepancy leads to validation results that may not accurately reflect operational performance.

Parameter sensitivity analysis presents another challenge, as Kalman filter performance can vary dramatically with small changes in noise covariance matrices and initial state estimates. Determining optimal parameter values remains largely heuristic, with limited systematic approaches for validation across parameter spaces.
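
A minimal sensitivity sweep, assuming a scalar random-walk model with known truth and hypothetical nominal variances: the process- and measurement-noise variances are each scaled by factors from 0.1 to 10, and the RMSE of every combination is reported relative to the nominal setting. The helper name and all values are illustrative.

```python
import numpy as np

def scalar_kf_estimates(z, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter (hypothetical helper) returning filtered estimates."""
    x, p = x0, p0
    est = np.empty(len(z))
    for k, zk in enumerate(z):
        p += q                   # predict
        gain = p / (p + r)
        x += gain * (zk - x)     # update with the innovation
        p *= 1.0 - gain
        est[k] = x
    return est

rng = np.random.default_rng(3)
q_true, r_true, n = 0.01, 0.25, 5000
x_true = np.cumsum(rng.normal(0.0, np.sqrt(q_true), n))
z = x_true + rng.normal(0.0, np.sqrt(r_true), n)

scales = np.array([0.1, 0.3, 1.0, 3.0, 10.0])
rmse = np.empty((len(scales), len(scales)))   # rows: q multiplier, columns: r multiplier
for i, sq in enumerate(scales):
    for j, sr in enumerate(scales):
        est = scalar_kf_estimates(z, sq * q_true, sr * r_true)
        rmse[i, j] = np.sqrt(np.mean((est - x_true) ** 2))

rel = rmse / rmse[2, 2]   # relative to the nominal (1.0, 1.0) setting
print(np.round(rel, 2))
```

For this scalar model the steady-state Kalman gain depends only on the ratio q/r, so scaling both by the same factor leaves the estimates almost unchanged (the diagonal of the table is nearly flat), while changing the ratio degrades accuracy. Sweeps like this show which parameter combinations, rather than which individual parameters, the filter is sensitive to.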

Real-time validation poses additional difficulties, particularly for embedded systems with computational constraints. Validation methods that work well in offline analysis may be impractical for continuous monitoring during operation, creating a disconnect between development-phase validation and operational performance assessment.

The integration of multi-sensor data introduces further complications, as inconsistencies between different measurement sources can significantly impact validation results. Current methodologies often lack robust frameworks for validating filters that fuse heterogeneous sensor inputs with varying reliability and update rates.

Established Experimental Validation Frameworks

01 Validation techniques for Kalman filter models

Various techniques are employed to validate Kalman filter models, ensuring their accuracy and reliability. These techniques include statistical testing, comparison with ground truth data, and performance metrics evaluation. Validation processes help to verify that the filter's state estimation is accurate and that the model assumptions are appropriate for the application context. These validation methods are crucial for confirming that the Kalman filter performs as expected in real-world scenarios.

- Validation techniques for Kalman filter models: Validation processes verify that the filter correctly estimates states and uncertainties, with methods such as residual analysis, consistency checks, and cross-validation being commonly used to assess model performance.

- Application-specific validation approaches: Different applications require specialized validation approaches for Kalman filter models. In navigation systems, validation focuses on position accuracy and trajectory smoothness. For financial forecasting, validation examines prediction errors and risk metrics. In signal processing, validation evaluates noise reduction and signal recovery quality. These application-specific approaches ensure that Kalman filters meet the particular requirements of their intended use.

- Real-time validation and adaptive filtering: Real-time validation methods for Kalman filters involve continuous monitoring of filter performance during operation. These methods include innovation sequence monitoring, adaptive parameter tuning, and fault detection algorithms. When deviations from expected behavior are detected, the filter parameters can be automatically adjusted to maintain optimal performance. This adaptive approach ensures robustness in dynamic environments where system characteristics may change over time.

- Model validation through simulation and testing: Comprehensive validation of Kalman filter models often involves simulation and testing under controlled conditions. Monte Carlo simulations help assess filter performance across various scenarios and noise conditions. Hardware-in-the-loop testing validates the filter in near-operational environments. Sensitivity analysis identifies critical parameters affecting filter performance. These approaches provide confidence in the filter's behavior before deployment in real-world applications.

- Validation metrics and performance evaluation: Specific metrics are used to quantitatively evaluate Kalman filter performance during validation. These include root mean square error (RMSE), normalized estimation error squared (NEES), and innovation consistency tests. Convergence rates, stability measures, and computational efficiency are also assessed. These metrics provide objective measures of filter quality and help compare different filter implementations or parameter settings to determine optimal configurations.
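
The innovation monitoring and adaptive parameter tuning described in the list above can be sketched as a scalar filter that re-estimates its measurement-noise variance R from a sliding window of innovations, using the innovation-based relation E[ν²] = P⁻ + R, so that R̂ is the windowed mean of ν² minus P⁻. This is one simple variant of innovation-based adaptive estimation; the window length, floor value, random-walk model, and function name are assumptions, and practical versions usually add smoothing and sanity bounds.

```python
from collections import deque

import numpy as np

def adaptive_r_filter(z, q, r0, window=200, r_floor=1e-6, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter (hypothetical) that re-estimates R from recent innovations.

    R_hat = mean(nu^2 over the window) - P_pred, using the current predicted variance
    as an approximation for every sample in the window.
    """
    x, p, r = x0, p0, r0
    sq_innov = deque(maxlen=window)
    r_hist = np.empty(len(z))
    for k, zk in enumerate(z):
        p += q                                   # predict
        nu = zk - x
        sq_innov.append(nu * nu)
        if len(sq_innov) == window:              # adapt only once the window is full
            r = max(float(np.mean(sq_innov)) - p, r_floor)
        gain = p / (p + r)
        x += gain * nu                           # update with the adapted R
        p *= 1.0 - gain
        r_hist[k] = r
    return r_hist

rng = np.random.default_rng(4)
n, q_true = 3000, 0.01
r_true = np.where(np.arange(n) < n // 2, 0.25, 1.0)   # measurement noise steps up halfway
x_true = np.cumsum(rng.normal(0.0, np.sqrt(q_true), n))
z = x_true + rng.normal(0.0, np.sqrt(r_true))
r_hist = adaptive_r_filter(z, q_true, r0=0.5)
print(f"R estimate at k=1400: {r_hist[1400]:.3f}, at the end: {r_hist[-1]:.3f}")
```

When the true measurement noise quadruples halfway through the record, the estimate follows the change after roughly one window length.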

02 Kalman filter validation in navigation and positioning systems

Kalman filters are extensively used in navigation and positioning systems, where validation is critical for ensuring accurate location tracking. Validation methods in this context include comparing filter outputs with reference trajectories, analyzing residual errors, and testing under various environmental conditions. The validation process ensures that the filter can handle sensor noise, maintain stability during dynamic movements, and provide reliable position estimates even in challenging scenarios.

03 Cross-validation and adaptive techniques for Kalman filters

Cross-validation and adaptive techniques are employed to enhance the performance and reliability of Kalman filter models. These approaches involve dynamically adjusting filter parameters based on observed data, using multiple model validation, and implementing adaptive noise covariance estimation. By continuously validating and adapting the filter parameters, these techniques ensure optimal performance across varying conditions and help prevent filter divergence when system dynamics change.

04 Validation of Kalman filters in communication systems

In communication systems, Kalman filter validation focuses on ensuring accurate channel estimation, signal tracking, and noise reduction. Validation methods include bit error rate analysis, signal-to-noise ratio measurements, and comparison with alternative estimation techniques. These validation approaches help confirm that the Kalman filter effectively handles the unique challenges of communication channels, such as fading, interference, and rapid signal variations.

05 Simulation-based validation frameworks for Kalman filters

Simulation-based frameworks provide comprehensive validation environments for Kalman filter models before deployment in real-world applications. These frameworks include Monte Carlo simulations, scenario-based testing, and sensitivity analysis to validate filter performance under various conditions. By simulating different noise profiles, system dynamics, and failure scenarios, these validation approaches help identify potential weaknesses in the filter design and ensure robust performance across a wide range of operating conditions.

Leading Research Groups and Industrial Implementers

Kalman filter validation with experimental data is a mature field with a growing market, driven by increasing demand for accurate sensor fusion and state estimation across industries. Established players such as Robert Bosch GmbH, Honeywell International, and Lockheed Martin lead implementation in the automotive, aerospace, and defense sectors, while academic institutions such as Northwestern Polytechnical University and Johns Hopkins University contribute substantial research advancements. Companies like Siemens Healthineers and NEC Corp are expanding applications into healthcare and telecommunications, and specialized firms like TWAICE Technologies focus on battery analytics. The competitive landscape balances large industrial conglomerates with extensive resources against specialized technology providers offering domain-specific implementations.

Robert Bosch GmbH

Technical Solution: Bosch has pioneered a systematic approach to Kalman filter validation for automotive sensor fusion applications. Their methodology centers on a three-tiered validation framework: component-level testing, system-level integration, and vehicle-level validation. At the component level, Bosch employs sensor characterization to accurately model noise parameters, which are then incorporated into the filter design. For system validation, they utilize reference measurement systems with higher accuracy than the sensors being validated, establishing ground truth for comparison. Bosch's approach includes residual monitoring techniques that analyze the statistical properties of innovation sequences to detect model inconsistencies. They have developed specialized test tracks with precisely mapped features to validate localization algorithms under controlled conditions. Additionally, Bosch implements adaptive threshold techniques that automatically adjust validation parameters based on driving conditions, enhancing robustness in diverse environments. Their validation process incorporates both offline batch processing of collected data and real-time performance evaluation during test drives.

Strengths: Highly structured validation methodology that scales from laboratory to real-world testing. Strong focus on automotive-specific challenges like varying environmental conditions and sensor degradation over time. Weaknesses: Heavy reliance on expensive reference systems for ground truth establishment. The validation approach may be overly conservative, potentially rejecting valid measurements in edge cases, which could limit performance in unusual scenarios.

Honeywell International Technologies Ltd.

Technical Solution: Honeywell has developed a comprehensive Kalman filter validation framework specifically designed for aerospace and industrial control systems. Their approach combines theoretical analysis with practical testing across multiple validation stages. Initially, they employ sensitivity analysis to identify critical parameters that most significantly impact filter performance. Honeywell's methodology includes residual whiteness testing using autocorrelation functions and chi-square tests to verify filter consistency with actual measurement statistics. For aerospace applications, they utilize high-fidelity simulation environments that incorporate detailed sensor models and environmental factors before proceeding to flight testing. A key aspect of their validation process is the implementation of fault detection and isolation (FDI) algorithms that continuously monitor filter performance during operation, allowing for real-time validation against expected behavior. Honeywell also employs multi-model adaptive estimation techniques where multiple Kalman filter models run concurrently, with validation metrics determining which model best matches current conditions. Their validation framework includes extensive documentation of filter performance across various operational scenarios, building a comprehensive performance envelope.

Strengths: Robust validation methodology that addresses both theoretical consistency and practical performance. Strong integration with fault detection systems enhances operational reliability. Extensive experience across multiple industries provides diverse validation techniques. Weaknesses: Validation process can be time-consuming and resource-intensive. Some techniques require specialized expertise in statistical analysis that may not be readily available in all engineering teams.

Critical Research Papers and Validation Benchmarks

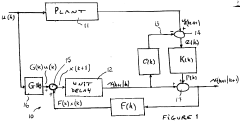

Data model hybrid driven Kalman filter design method and system

Patent · Inactive · CN118017976A

Innovation

- A hybrid data- and model-driven Kalman filter design method that configures input feature vectors, builds a neural network model incorporating the Transformer architecture, performs supervised training with hyperparameters such as learning rate, weight decay, and batch size, adjusts the learning rate with a cosine annealing schedule, defines evaluation indicators, and verifies the model through simulation experiments.

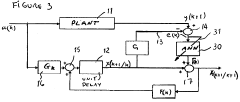

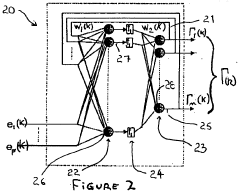

A self-tuning Kalman filter

Patent · Inactive · CA2266282A1

Innovation

- Replacing the Kalman gain with an artificial neural network (ANN) that learns to minimize estimation errors, allowing the filter to adapt and tune itself without prior information on noise or model parameters, while maintaining the same filter structure.

Statistical Performance Metrics and Evaluation Criteria

Validating Kalman filter models requires robust statistical performance metrics to quantitatively assess how well the filter's estimates align with experimental data. The Root Mean Square Error (RMSE) serves as a fundamental metric, providing a comprehensive measure of the average magnitude of estimation errors. RMSE is particularly valuable for Kalman filter validation as it penalizes larger errors more heavily, making it sensitive to outliers that might indicate filter divergence or model inadequacies.

The Normalized Estimation Error Squared (NEES) test offers critical insights into filter consistency by evaluating whether the filter's estimated covariance accurately reflects the actual error distribution. Because it requires the true state, NEES is usually computed in simulation or against a high-accuracy reference system. For a consistent Kalman filter, NEES values follow a chi-square distribution with degrees of freedom equal to the state dimension. Persistently high values indicate overconfidence (underestimated covariance), and persistently low values indicate excessive conservatism (overestimated covariance) in the filter's uncertainty estimates.
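
The consistency check can be sketched independently of any particular filter: given true-minus-estimated errors and the covariances the filter reported, compute NEES_k = e_kᵀ P_k⁻¹ e_k, average it over N steps, and compare the average with the two-sided region from χ²(Nn)/N, where n is the state dimension. The error data below are synthetic draws from a hypothetical covariance, with the filter simulated as reporting half, exactly, or twice that covariance; `scipy.stats.chi2.ppf` supplies the quantiles, and the function name is illustrative.

```python
import numpy as np
from scipy.stats import chi2

def nees_consistency(errors, covs, alpha=0.05):
    """Time-averaged NEES with a two-sided chi-square acceptance region (hypothetical helper).

    errors: (N, n) array of true-minus-estimated states (requires ground truth)
    covs:   (N, n, n) array of the filter's reported covariances
    """
    n_steps, n = errors.shape
    # NEES_k = e_k^T P_k^-1 e_k, using a batched linear solve instead of explicit inverses
    weighted = np.linalg.solve(covs, errors[..., None])[..., 0]
    nees = np.sum(errors * weighted, axis=1)
    avg = float(nees.mean())
    lo = chi2.ppf(alpha / 2, n_steps * n) / n_steps
    hi = chi2.ppf(1 - alpha / 2, n_steps * n) / n_steps
    if avg > hi:
        verdict = "overconfident (covariance too small)"
    elif avg < lo:
        verdict = "conservative (covariance too large)"
    else:
        verdict = "consistent"
    return avg, (lo, hi), verdict

rng = np.random.default_rng(5)
P_true = np.array([[2.0, 0.3], [0.3, 0.5]])   # hypothetical actual error covariance
N = 500
errors = rng.multivariate_normal(np.zeros(2), P_true, size=N)
results = {}
for scale in (0.5, 1.0, 2.0):                   # the filter reports scale * P_true
    covs = np.tile(scale * P_true, (N, 1, 1))
    results[scale] = nees_consistency(errors, covs)
    print(scale, results[scale])
```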

Innovation consistency tests examine the filter's prediction residuals, which should ideally be zero-mean white noise with covariance matching the filter's predicted measurement covariance. Autocorrelation analysis of these residuals can reveal temporal dependencies that indicate unmodeled dynamics or incorrect process noise assumptions. Similarly, the Normalized Innovation Squared (NIS) metric helps assess whether the filter's predicted measurement covariance accurately captures the actual innovation statistics.

For state estimation accuracy, Mean Absolute Error (MAE) provides a straightforward measure of average error magnitude without the squared penalty of RMSE. This makes MAE less sensitive to occasional large errors and potentially more representative of typical performance in applications where outliers are less concerning than consistent accuracy.
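
A small illustration of the difference, using hypothetical error values: replacing one unit error with an error of 10 raises the RMSE far more than the MAE. With `(N, n)` arrays and `axis=0`, both illustrative helpers return one value per state component.

```python
import numpy as np

def rmse(estimates, truth, axis=0):
    """Root mean square error; squaring weights large errors more heavily."""
    err = np.asarray(estimates, dtype=float) - np.asarray(truth, dtype=float)
    return np.sqrt(np.mean(err**2, axis=axis))

def mae(estimates, truth, axis=0):
    """Mean absolute error; every error is weighted linearly."""
    err = np.asarray(estimates, dtype=float) - np.asarray(truth, dtype=float)
    return np.mean(np.abs(err), axis=axis)

truth = np.zeros(5)
typical = np.array([1.0, -1.0, 1.0, -1.0, 1.0])        # hypothetical errors, no outlier
with_outlier = np.array([1.0, -1.0, 1.0, -1.0, 10.0])  # same, but one large error

for name, est in (("typical", typical), ("one outlier", with_outlier)):
    print(f"{name}: RMSE={rmse(est, truth):.2f}, MAE={mae(est, truth):.2f}")
# typical: RMSE=1.00, MAE=1.00
# one outlier: RMSE=4.56, MAE=2.80
```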

Time-domain performance metrics such as settling time, overshoot, and steady-state error offer practical insights into the filter's dynamic response characteristics. These metrics are particularly relevant for control applications where the filter's transient behavior significantly impacts system performance.
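
These time-domain metrics can be computed from an estimate and a known step in the true state. The sketch assumes a 2% settling band (a common convention, adjustable via `band`), defines overshoot as a fraction of the step size, and feeds noise-free step measurements to a scalar random-walk filter with hypothetical parameters so that only the filter's own dynamics are measured. Function names are illustrative.

```python
import numpy as np

def scalar_kf_estimates(z, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter (hypothetical helper) returning filtered estimates."""
    x, p = x0, p0
    est = np.empty(len(z))
    for k, zk in enumerate(z):
        p += q
        gain = p / (p + r)
        x += gain * (zk - x)
        p *= 1.0 - gain
        est[k] = x
    return est

def step_response_metrics(t, est, step_time, initial, final, band=0.02, tail=50):
    """Overshoot (fraction of the step), settling time, and steady-state error of an
    estimate responding to a step in the true state from `initial` to `final`."""
    step = final - initial
    after = t >= step_time
    t_a, e_a = t[after], est[after]
    normalized = (e_a - final) / step             # > 0 means beyond the final value
    overshoot = max(0.0, float(np.max(normalized)))
    outside = np.abs(e_a - final) > band * abs(step)
    if not outside.any():
        settling = 0.0
    elif outside[-1]:
        settling = np.inf                          # never settles within the record
    else:
        last_out = int(np.nonzero(outside)[0][-1])
        settling = float(t_a[last_out + 1] - step_time)
    steady_state_error = float(np.mean(e_a[-tail:] - final))
    return overshoot, settling, steady_state_error

t = np.arange(200.0)
truth = np.where(t >= 50, 1.0, 0.0)
est = scalar_kf_estimates(truth, q=0.01, r=0.25)   # noise-free measurements isolate the dynamics
overshoot, settling, ss_err = step_response_metrics(t, est, step_time=50, initial=0.0, final=1.0)
print(f"overshoot={overshoot:.3f}, settling time={settling}, steady-state error={ss_err:.2e}")
```

A random-walk filter responds like a first-order lag, so no overshoot is expected here; higher-order models such as constant-velocity filters can overshoot, which is where that metric becomes informative.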

Cross-validation techniques strengthen evaluation robustness by partitioning experimental data into training and validation sets. This approach helps detect overfitting and ensures the filter performs well on previously unseen data. Additionally, sensitivity analysis quantifies how parameter variations affect filter performance, identifying which model components most critically influence accuracy and stability.

The Normalized Estimation Error Squared (NEES) test offers critical insights into filter consistency by evaluating whether the filter's estimated covariance accurately reflects the actual error distribution. A properly tuned Kalman filter should produce NEES values that follow a chi-square distribution. Persistent deviations suggest either overconfidence (underestimated covariance) or excessive conservatism (overestimated covariance) in the filter's uncertainty estimates.

Innovation consistency tests examine the filter's prediction residuals, which should ideally be zero-mean white noise with covariance matching the filter's predicted measurement covariance. Autocorrelation analysis of these residuals can reveal temporal dependencies that indicate unmodeled dynamics or incorrect process noise assumptions. Similarly, the Normalized Innovation Squared (NIS) metric helps assess whether the filter's predicted measurement covariance accurately captures the actual innovation statistics.

For state estimation accuracy, Mean Absolute Error (MAE) provides a straightforward measure of average error magnitude without the squared penalty of RMSE. This makes MAE less sensitive to occasional large errors and potentially more representative of typical performance in applications where outliers are less concerning than consistent accuracy.

Time-domain performance metrics such as settling time, overshoot, and steady-state error offer practical insights into the filter's dynamic response characteristics. These metrics are particularly relevant for control applications where the filter's transient behavior significantly impacts system performance.

Computational Resources and Implementation Considerations

Implementing Kalman filter validation requires careful planning of computational resources to keep results both efficient and accurate. Filters for complex systems with high-dimensional state spaces or nonlinear dynamics can be computationally demanding. Covariance propagation and update involve dense matrix products whose cost grows roughly cubically with the state dimension. Organizations should evaluate whether standard workstations are sufficient or whether dedicated high-performance computing resources are needed. This matters most when processing large experimental datasets or running Monte Carlo simulations for validation.
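Monte Carlo validation cost can often be reduced substantially by propagating all runs together as arrays instead of looping over runs in Python. The sketch below does this for a scalar random-walk model with synthetic truth and measurements. At each step it computes the run-averaged NEES and its 95% chi-square bounds. The model, the parameter values and the function name are assumptions chosen for illustration.

```python
import numpy as np
from scipy.stats import chi2

def monte_carlo_anees(runs=500, steps=200, q=0.01, r=0.25, p0=1.0, seed=0):
    """Average NEES per step over `runs` Monte Carlo runs of a scalar random-walk filter.

    All runs are propagated together as NumPy arrays instead of looping over runs
    in Python, which keeps large Monte Carlo studies tractable on a workstation.
    """
    rng = np.random.default_rng(seed)
    x_true = rng.normal(0.0, np.sqrt(p0), runs)   # initial truth consistent with p0
    x_est = np.zeros(runs)
    p = p0                                        # covariance is identical across runs
    anees = np.empty(steps)
    for k in range(steps):
        x_true = x_true + rng.normal(0.0, np.sqrt(q), runs)   # propagate truth
        z = x_true + rng.normal(0.0, np.sqrt(r), runs)        # simulate measurements
        p = p + q                                             # predict
        gain = p / (p + r)
        x_est = x_est + gain * (z - x_est)                    # update
        p = (1.0 - gain) * p
        anees[k] = np.mean((x_true - x_est) ** 2 / p)
    return anees

runs = 500
anees = monte_carlo_anees(runs=runs)
# 95% bounds for the run-averaged NEES of a 1-state filter: chi-square with `runs` dof, divided by `runs`.
lo, hi = chi2.ppf([0.025, 0.975], df=runs) / runs
fraction_inside = np.mean((anees >= lo) & (anees <= hi))
```

For a consistent filter, roughly 95% of the steps should fall inside the bounds. A much lower fraction, or a systematic drift above or below them, points to mis-specified noise parameters.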

Memory is another critical consideration, because storing large covariance matrices and historical state estimates can quickly consume available RAM. A history of n×n covariance matrices grows with the square of the state dimension and with the number of time steps. For real-time applications, memory optimization techniques such as sparse matrix representations or reduced-order models may be essential to stay within hardware constraints. Specialized hardware accelerators such as GPUs or FPGAs can also substantially speed up the matrix operations central to Kalman filtering, with gains of 10-100x for certain implementations. For small state dimensions, however, data-transfer and launch overhead can offset much of this benefit.
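A back-of-the-envelope estimate helps decide how much covariance history is worth keeping. The hypothetical helper below counts bytes for three storage options: dense matrices, symmetric-packed matrices and diagonal-only storage. Packing the upper triangle saves memory by exploiting symmetry. It complements the sparse and reduced-order techniques mentioned above rather than replacing them.

```python
import numpy as np

def covariance_history_bytes(n, steps, store="full", itemsize=8):
    """Bytes needed to keep one n x n covariance per time step.

    store="full": dense matrices; "upper": packed upper triangle, n(n+1)/2 entries,
    exploiting symmetry; "diag": variances only (loses cross-covariances).
    itemsize=8 corresponds to float64.
    """
    per_step = {"full": n * n, "upper": n * (n + 1) // 2, "diag": n}[store]
    return per_step * steps * itemsize

def pack_upper(P):
    """Store a symmetric matrix as its upper triangle (row-major order)."""
    return P[np.triu_indices(P.shape[0])]

def unpack_upper(v, n):
    """Rebuild the full symmetric matrix from pack_upper output."""
    P = np.zeros((n, n), dtype=v.dtype)
    P[np.triu_indices(n)] = v
    return P + np.triu(P, 1).T

# Example: 100 states logged for 100,000 steps (about 17 minutes at 100 Hz).
for mode in ("full", "upper", "diag"):
    print(mode, covariance_history_bytes(100, 100_000, store=mode) / 1e9, "GB")
# ≈ 8.0, 4.04 and 0.08 GB respectively
```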

Software architecture decisions significantly impact validation workflows. Modular designs that separate filter algorithms from data handling and visualization components facilitate more efficient testing and comparison against experimental data. Many organizations benefit from leveraging established numerical computing environments such as MATLAB, Python with NumPy/SciPy, or Julia, which provide robust matrix operation libraries and visualization tools essential for validation processes.
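One way to achieve this separation in Python is sketched below. The filter class knows nothing about files or metrics, every metric function shares one signature, and a small `validate` function composes the pieces. All names are hypothetical, and a synthetic dataset stands in for a loaded experimental log.

```python
from dataclasses import dataclass
import numpy as np

@dataclass
class LinearKF:
    """Filter algorithm only: no file I/O, plotting or metric code lives here."""
    F: np.ndarray
    H: np.ndarray
    Q: np.ndarray
    R: np.ndarray

    def run(self, zs, x0, P0):
        x, P = x0.copy(), P0.copy()
        xs, Ps = [], []
        for z in zs:
            x = self.F @ x                                  # predict
            P = self.F @ P @ self.F.T + self.Q
            S = self.H @ P @ self.H.T + self.R
            K = np.linalg.solve(S, self.H @ P).T            # K = P H^T S^-1
            x = x + K @ (z - self.H @ x)                    # update
            P = P - K @ S @ K.T
            xs.append(x)
            Ps.append(P)
        return np.array(xs), np.array(Ps)

# Metrics share one signature so they can be swapped without touching the filter.
def rmse_metric(x_true, x_est, P_est):
    return float(np.sqrt(np.mean((x_true - x_est) ** 2)))

def mean_nees_metric(x_true, x_est, P_est):
    e = x_true - x_est
    y = np.linalg.solve(P_est, e[..., None])[..., 0]
    return float(np.mean(np.einsum("ti,ti->t", e, y)))

def validate(kf, dataset, metrics, x0, P0):
    """Glue layer: run a filter on a dataset and apply every metric."""
    x_est, P_est = kf.run(dataset["z"], x0, P0)
    return {name: fn(dataset["x_true"], x_est, P_est) for name, fn in metrics.items()}

# Synthetic dataset standing in for a loaded experimental log.
rng = np.random.default_rng(3)
x_true = np.cumsum(rng.normal(0.0, 0.1, (2000, 1)), axis=0)
dataset = {"x_true": x_true, "z": x_true + rng.normal(0.0, 0.5, x_true.shape)}
kf = LinearKF(F=np.eye(1), H=np.eye(1), Q=np.array([[0.01]]), R=np.array([[0.25]]))
results = validate(kf, dataset, {"rmse": rmse_metric, "mean_nees": mean_nees_metric},
                   x0=np.zeros(1), P0=np.eye(1))
```

With this structure, swapping in a different filter variant or adding a metric requires no change to the other components.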

Scalability considerations become paramount when moving from prototype to production implementations. Validation procedures that work well for small-scale tests may become prohibitively expensive at full scale. Incremental validation approaches that progressively increase complexity can help identify computational bottlenecks early. For embedded systems applications, additional constraints around power consumption, memory footprint, and real-time performance requirements must be factored into validation protocols.
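Incremental scaling tests can start with a simple timing harness that increases the state dimension in stages. This sketch times a standard linear covariance-form predict/update step for a random-walk model with random measurement matrices. The dimensions, the step count and the helper names are assumptions. For small n, interpreter overhead dominates, so the expected roughly cubic growth only shows up at larger sizes. Absolute timings depend on the machine and on the BLAS library.

```python
import time
import numpy as np

def kf_step(x, P, z, F, H, Q, R):
    """One linear Kalman predict + update step in covariance form."""
    x = F @ x
    P = F @ P @ F.T + Q
    S = H @ P @ H.T + R
    K = np.linalg.solve(S, H @ P).T        # K = P H^T S^-1 (P and S symmetric)
    x = x + K @ (z - H @ x)
    P = P - K @ S @ K.T
    return x, P

def seconds_per_step(n, m, steps=50, seed=0):
    """Average wall-clock time of kf_step for an n-state, m-measurement random-walk model."""
    rng = np.random.default_rng(seed)
    F, Q = np.eye(n), 0.01 * np.eye(n)
    H, R = rng.standard_normal((m, n)), 0.1 * np.eye(m)
    x, P = np.zeros(n), np.eye(n)
    zs = rng.standard_normal((steps, m))
    start = time.perf_counter()
    for z in zs:
        x, P = kf_step(x, P, z, F, H, Q, R)
    return (time.perf_counter() - start) / steps

# Grow the problem in stages; once overhead is negligible, a roughly 8x jump
# per doubling of n is consistent with cubic cost.
timings = {n: seconds_per_step(n, m=max(1, n // 2)) for n in (25, 50, 100, 200)}
for n, sec in timings.items():
    print(f"n={n:4d}: {sec * 1e3:.3f} ms/step")
```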

Version control and reproducibility infrastructure represent often overlooked but critical components of effective validation workflows. Maintaining precise records of filter configurations, experimental data preprocessing steps, and validation metrics ensures that results can be reproduced and compared across different implementation iterations. Organizations should establish standardized benchmarking procedures that include computational performance metrics alongside accuracy measures to support comprehensive evaluation of Kalman filter implementations.
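A lightweight reproducibility record can fingerprint the exact configuration and input data and store them alongside the metrics and runtime. The sketch uses only the standard library plus NumPy. The field names, the configuration keys and the placeholder metric value are hypothetical.

```python
import hashlib
import json
import platform
import numpy as np

def config_fingerprint(config):
    """SHA-256 of a JSON-serializable configuration; key order does not matter."""
    blob = json.dumps(config, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(blob).hexdigest()

def data_fingerprint(array):
    """SHA-256 over dtype, shape and raw bytes of a NumPy array."""
    a = np.ascontiguousarray(array)
    h = hashlib.sha256()
    h.update(str(a.dtype).encode())
    h.update(str(a.shape).encode())
    h.update(a.tobytes())
    return h.hexdigest()

def validation_record(config, data, metrics, runtime_s):
    """One reproducibility entry: what was run, on which data, with what result and cost."""
    return {
        "config_sha256": config_fingerprint(config),
        "data_sha256": data_fingerprint(data),
        "metrics": metrics,
        "runtime_s": runtime_s,
        "python": platform.python_version(),
        "numpy": np.__version__,
    }

# Hypothetical configuration and placeholder values, for illustration only.
config = {"Q": 0.01, "R": 0.25, "seed": 0, "preprocessing": ["detrend", "resample_100Hz"]}
record = validation_record(config, np.zeros((10, 2)), {"rmse": 0.21}, runtime_s=1.5)
print(json.dumps(record, indent=2))
```

Keeping such records next to the version-controlled filter code shows whether two results differ because of the code, the configuration or the data.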
