Kalman Filter For Climate Data Assimilation: Efficiency Metrics

SEP 5, 2025 · 9 MIN READ

Kalman Filter Evolution and Climate Modeling Goals

The Kalman Filter, developed by Rudolf E. Kalman in the early 1960s, represents a significant milestone in estimation theory and has evolved substantially over the decades. Originally designed for linear systems with Gaussian noise, this recursive algorithm has undergone numerous adaptations to address increasingly complex scenarios, particularly in climate science where non-linearity and high dimensionality present formidable challenges.

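The evolution of Kalman filtering in climate applications began with simple implementations in atmospheric models during the 1970s and gained momentum in the 1980s with applications of the Extended Kalman Filter (EKF), which accommodates mild non-linearities by linearizing the model and observation operators around the current estimate. The 1990s saw the emergence of the Ensemble Kalman Filter (EnKF), a Monte Carlo implementation that transformed climate data assimilation: it propagates an ensemble of states through the full non-linear model and estimates the error covariances from that ensemble, so no tangent-linear model or explicit covariance propagation is required, although the analysis step itself still assumes approximately Gaussian errors.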
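
The Monte Carlo idea can be sketched in a few lines of NumPy. The block below is the textbook stochastic ("perturbed observations") EnKF analysis step, written under simplifying assumptions: a linear observation operator `H`, a tiny toy state, and no localization or inflation. The function name `enkf_analysis` is illustrative, not a library API.

```python
import numpy as np

def enkf_analysis(ensemble, y, H, R, rng):
    """Stochastic EnKF analysis step with perturbed observations.

    ensemble: (n, N) forecast states, one member per column
    y:        (p,) observation vector
    H:        (p, n) observation operator (assumed linear for simplicity)
    R:        (p, p) observation-error covariance
    rng:      numpy.random.Generator used to perturb the observations
    """
    N = ensemble.shape[1]
    x_mean = ensemble.mean(axis=1, keepdims=True)
    X = (ensemble - x_mean) / np.sqrt(N - 1)   # scaled anomalies, so P_f ≈ X X^T
    HX = H @ X                                 # anomalies mapped to observation space
    S = HX @ HX.T + R                          # innovation covariance H P_f H^T + R
    K = np.linalg.solve(S, HX @ X.T).T         # gain K = P_f H^T S^{-1} (S symmetric)
    # Each member assimilates its own randomly perturbed copy of the observations,
    # which keeps the analysis spread statistically consistent.
    noise = rng.multivariate_normal(np.zeros(len(y)), R, size=N).T   # (p, N)
    return ensemble + K @ (y[:, None] + noise - H @ ensemble)

# Toy usage: 3 state variables, 50 members, only the first variable observed
rng = np.random.default_rng(42)
truth = np.array([1.0, 2.0, 3.0])
forecast = truth[:, None] + rng.normal(0.0, 1.0, size=(3, 50))
H = np.array([[1.0, 0.0, 0.0]])
R = np.array([[0.1]])
analysis = enkf_analysis(forecast, H @ truth, H, R, rng)
print(forecast[0].std(), analysis[0].std())
```

Operational implementations avoid forming `S` explicitly for large observation counts and usually add localization and inflation.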

Recent developments have focused on computational efficiency and scalability, with variants such as the Local Ensemble Transform Kalman Filter (LETKF) and the Error-Subspace Statistical Estimation (ESSE) method addressing the curse of dimensionality inherent in global climate models. These innovations have enabled more effective assimilation of satellite observations, which provide unprecedented spatial coverage but introduce complex error structures.
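
The "transform" in LETKF refers to writing the analysis ensemble as linear combinations of the background members, so every matrix inversion happens in the N-dimensional ensemble space rather than the n-dimensional state space. A minimal sketch of the global (non-localized) ETKF step, following the formulation of Hunt et al. (2007) and assuming a linear observation operator `H`; `etkf_analysis` and its `inflation` argument are illustrative names:

```python
import numpy as np

def etkf_analysis(ensemble, y, H, R, inflation=1.0):
    """Global ETKF analysis step computed in ensemble space.

    ensemble:  (n, N) background members, one per column
    y:         (p,) observations; H: (p, n) linear operator; R: (p, p) error covariance
    inflation: multiplicative covariance inflation factor rho (1.0 = none)
    """
    N = ensemble.shape[1]
    xb_mean = ensemble.mean(axis=1)
    Xb = ensemble - xb_mean[:, None]                # background anomalies (n, N)
    Yb_members = H @ ensemble                       # members in observation space (p, N)
    yb_mean = Yb_members.mean(axis=1)
    Yb = Yb_members - yb_mean[:, None]              # observation-space anomalies (p, N)
    C = np.linalg.solve(R, Yb).T                    # Yb^T R^{-1}, shape (N, p)
    # N x N analysis covariance in ensemble space: [(N-1) I / rho + C Yb]^{-1}
    Pa_tilde = np.linalg.inv((N - 1) * np.eye(N) / inflation + C @ Yb)
    # Symmetric square root of (N-1) Pa_tilde gives the perturbation weights
    evals, evecs = np.linalg.eigh((N - 1) * Pa_tilde)
    Wa = evecs @ np.diag(np.sqrt(evals)) @ evecs.T
    wa_mean = Pa_tilde @ C @ (y - yb_mean)          # weights for the mean update (N,)
    # Analysis members are linear combinations of background members
    return xb_mean[:, None] + Xb @ (Wa + wa_mean[:, None])

# Toy usage with random data: 4 state variables, 8 members, 2 observations
rng = np.random.default_rng(0)
n, N, p = 4, 8, 2
ens = rng.normal(size=(n, N))
H = rng.normal(size=(p, n))
R = np.diag([0.5, 0.2])
y = rng.normal(size=p)
analysis = etkf_analysis(ens, y, H, R)
```

In an LETKF the same N × N computation is repeated independently for each grid point using only nearby observations, which makes the analysis highly parallel. For linear `H` and no inflation, the result matches the Kalman filter update computed with the ensemble sample covariance.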

The primary goal of Kalman filtering in climate modeling is to combine observational data with numerical model predictions, weighting each by its estimated error (optimally so when the dynamics are linear and the errors Gaussian), thereby reducing uncertainty and improving forecast accuracy. This process, known as data assimilation, serves as the foundation for modern weather prediction systems and climate reanalysis products that inform policy decisions and scientific research.
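
In the scalar case the weighting becomes a one-line formula. The minimal sketch below assumes a single grid value observed directly (observation operator H = 1) with unbiased Gaussian errors; `scalar_kalman_analysis` is a hypothetical helper and the temperatures are illustrative:

```python
def scalar_kalman_analysis(x_b: float, var_b: float, y: float, var_o: float) -> tuple[float, float]:
    """Combine a background (model) value with one direct observation."""
    gain = var_b / (var_b + var_o)       # Kalman gain K = B / (B + R)
    x_a = x_b + gain * (y - x_b)         # analysis = background + K * innovation
    var_a = (1.0 - gain) * var_b         # analysis variance (1 - K) B
    return x_a, var_a

# Model forecast 15.0 °C (error variance 4.0); station reports 17.0 °C (variance 1.0)
x_a, var_a = scalar_kalman_analysis(15.0, 4.0, 17.0, 1.0)
print(x_a, var_a)
```

Because the observation is four times more accurate than the forecast, the gain is 0.8 and the analysis (about 16.6 °C) lies much closer to the observation, with a variance (about 0.8) smaller than either input.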

Efficiency metrics have become increasingly critical as climate models grow in complexity and resolution. Contemporary objectives include reducing computational overhead while maintaining statistical robustness, minimizing communication bottlenecks in parallel computing environments, and developing adaptive algorithms that allocate computational resources based on forecast sensitivity and uncertainty patterns.

Looking forward, the field aims to integrate machine learning techniques with traditional Kalman filtering approaches, potentially creating hybrid systems that leverage the physical consistency of model-based methods and the pattern recognition capabilities of data-driven algorithms. Additionally, there is growing interest in developing methods that can effectively assimilate observations across multiple time scales, from weather events to decadal climate variations.

The ultimate technical goal remains the development of a unified, computationally feasible framework that can seamlessly incorporate diverse observation types, account for model biases, and provide reliable uncertainty quantification—all while scaling efficiently on exascale computing architectures that will power the next generation of Earth system models.

Market Demand for Advanced Climate Data Assimilation

The market for advanced climate data assimilation technologies, particularly those leveraging Kalman Filter methodologies, has experienced significant growth in recent years. This expansion is primarily driven by increasing concerns about climate change impacts and the growing need for accurate climate predictions across various sectors. Government agencies, research institutions, and private enterprises are increasingly investing in sophisticated climate modeling capabilities to inform policy decisions and strategic planning.

Climate-sensitive industries such as agriculture, energy, water management, and insurance represent the largest market segments demanding advanced data assimilation techniques. The agricultural sector alone faces annual losses of billions of dollars due to climate variability, creating strong demand for improved seasonal forecasts that can optimize planting schedules and resource allocation. Similarly, renewable energy providers require precise climate predictions to forecast production capacity and manage grid integration.

The insurance and reinsurance industries have become major consumers of climate data products, using sophisticated risk models to price climate-related policies. Munich Re and Swiss Re, leading global reinsurers, have developed dedicated climate risk assessment divisions that rely heavily on advanced data assimilation techniques to improve their catastrophe modeling capabilities.

Public sector demand continues to grow steadily, with national meteorological agencies upgrading their forecasting systems to incorporate more sophisticated data assimilation methods. The European Centre for Medium-Range Weather Forecasts (ECMWF) and the National Oceanic and Atmospheric Administration (NOAA) have made substantial investments in their operational systems, which increasingly combine ensemble-based error estimates with variational methods; in such hybrid schemes, ensemble Kalman filter updates can supply the flow-dependent error covariances.

Emerging markets in climate services are developing rapidly, with specialized consulting firms offering tailored climate risk assessments to corporations and governments. These services depend on high-quality climate data products derived from state-of-the-art assimilation systems. The Climate Service Market is projected to grow at a compound annual growth rate exceeding 10% through 2030.

Efficiency metrics for Kalman Filter implementations have become a critical market differentiator, as users demand faster processing times and reduced computational costs without sacrificing accuracy. Organizations with limited computational resources particularly value optimized algorithms that can deliver reliable results without requiring supercomputing facilities.

The market increasingly demands solutions that can handle the integration of diverse data sources, including satellite observations, ground measurements, and proxy data. This trend is driving innovation in ensemble Kalman Filter approaches and hybrid methods that can efficiently process heterogeneous datasets while providing robust uncertainty quantification.

Current Challenges in Kalman Filter Implementation

Despite the theoretical elegance of Kalman filters in climate data assimilation, their implementation faces significant challenges that limit widespread operational adoption. The computational complexity of the classical Kalman filter is a formidable barrier when processing high-dimensional climate datasets. State vectors in global climate models often contain millions of variables or more; the n × n error covariance matrix then requires O(n²) storage, and propagating and updating it with dense matrix products costs O(n³) operations, where n is the state dimension.

Memory constraints further exacerbate these challenges, particularly for ensemble-based implementations. The Ensemble Kalman Filter (EnKF) requires maintaining multiple model states simultaneously, which can exceed available computational resources for comprehensive Earth system models. Even with high-performance computing facilities, these memory limitations often force compromises in spatial resolution or ensemble size.
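
The storage and cost arguments in the two paragraphs above can be made concrete with back-of-envelope arithmetic. The sketch assumes double precision, an illustrative state of 10^7 variables and a 50-member ensemble (not figures from any particular model), and counts only the two dense matrix products in the covariance forecast P_f = M P M^T; the helper names are hypothetical.

```python
BYTES_PER_DOUBLE = 8

def full_covariance_bytes(n: int) -> int:
    """Storage for a dense n x n error covariance matrix in double precision."""
    return n * n * BYTES_PER_DOUBLE

def ensemble_bytes(n: int, members: int) -> int:
    """Storage for `members` state vectors of length n."""
    return n * members * BYTES_PER_DOUBLE

def dense_propagation_flops(n: int) -> int:
    """Rough flop count for P_f = M P M^T: two dense n x n matrix
    products at about 2 n^3 flops each."""
    return 4 * n ** 3

n = 10_000_000   # illustrative state dimension
N = 50           # illustrative ensemble size
print(f"dense covariance:   {full_covariance_bytes(n) / 1e12:.0f} TB")
print(f"{N}-member ensemble: {ensemble_bytes(n, N) / 1e9:.0f} GB")
print(f"one dense covariance forecast: {dense_propagation_flops(n):.1e} flops")
```

Under these assumptions a dense covariance needs about 800 TB while the ensemble needs about 4 GB, which is why ensemble methods replace P with N anomaly vectors, accepting sampling error in exchange.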

Nonlinearity in climate systems poses another significant obstacle. The standard Kalman filter assumes linear dynamics and Gaussian error distributions—assumptions frequently violated in climate processes. Phenomena such as precipitation, cloud formation, and atmospheric chemistry exhibit strong nonlinearities that degrade filter performance, leading to suboptimal state estimates and potential filter divergence in operational settings.

Model bias represents a persistent challenge that undermines filter effectiveness. Systematic errors in climate models propagate through the assimilation process, contaminating analysis products. Traditional Kalman filter formulations lack robust mechanisms for bias detection and correction, necessitating complex adaptations that further increase computational demands.
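
One common way to give a Kalman filter some bias awareness is state augmentation: the unknown bias is appended to the state vector and estimated alongside it. The toy sketch below assumes a scalar linear model that omits a constant forcing `c`; all values (`a`, `c`, noise variances, cycle count) are illustrative, and operational bias-correction schemes are considerably more elaborate.

```python
import numpy as np

# Hypothetical toy problem: the true system has a constant forcing c that the
# forecast model omits, so every forecast is systematically biased.
a, c = 0.9, 1.0
q_x, r = 0.01, 0.25                     # process and observation error variances

# Augmented state z = [x, b]: the bias b is carried as an extra, persistent variable
F = np.array([[a, 1.0],                 # x_{k+1} = a x_k + b_k
              [0.0, 1.0]])              # b_{k+1} = b_k
H = np.array([[1.0, 0.0]])              # only x is observed
Q = np.diag([q_x, 1e-6])                # tiny random walk keeps b adjustable
R = np.array([[r]])

rng = np.random.default_rng(0)
x_true = 0.0
z = np.zeros(2)                         # start with a zero bias estimate
P = np.eye(2)
for _ in range(500):
    x_true = a * x_true + c + rng.normal(0.0, np.sqrt(q_x))
    y = np.array([x_true + rng.normal(0.0, np.sqrt(r))])
    # Forecast step with the augmented model
    z = F @ z
    P = F @ P @ F.T + Q
    # Analysis step: the observation of x also corrects the bias estimate
    S = H @ P @ H.T + R
    K = P @ H.T @ np.linalg.inv(S)
    z = z + K @ (y - H @ z)
    P = (np.eye(2) - K @ H) @ P

print(f"estimated bias: {z[1]:.2f} (true value {c})")
```

Each bias parameter adds a row and a column to P, which is one concrete source of the extra computational demand such adaptations bring.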

Multi-scale interactions in climate systems create additional complications. Processes operating across vastly different temporal and spatial scales must be simultaneously represented, but the Kalman filter struggles to balance the influence of observations across these scales. Fast atmospheric processes may overwhelm slower oceanic or cryospheric signals, resulting in suboptimal assimilation outcomes.

Observation handling introduces further complexities. Climate observations are heterogeneous in type, coverage, and quality, with varying error characteristics. Incorporating these diverse data streams requires sophisticated observation operators and error covariance specifications that are difficult to implement efficiently within the Kalman filter framework.
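
Two of the ingredients named here, the observation operator and the observation-error covariance, can be illustrated on a 1-D toy grid. The sketch assumes linear interpolation as the observation operator and a diagonal R with one error variance per platform type (illustrative values); real satellite operators, such as radiative transfer models, are nonlinear and their errors are often correlated. `interpolation_operator` is a hypothetical helper.

```python
import numpy as np

def interpolation_operator(grid, obs_locations):
    """Linear observation operator H for a sorted 1-D grid: each row maps the
    gridded state to one observation location by linear interpolation between
    the two neighbouring grid points (locations assumed inside the grid)."""
    H = np.zeros((len(obs_locations), len(grid)))
    for row, loc in enumerate(obs_locations):
        j = np.searchsorted(grid, loc) - 1          # index of the left neighbour
        j = min(max(j, 0), len(grid) - 2)
        w = (loc - grid[j]) / (grid[j + 1] - grid[j])
        H[row, j] = 1.0 - w
        H[row, j + 1] = w
    return H

# Heterogeneous observations: (platform, location), error variance per platform
error_variance = {"station": 0.25, "satellite": 1.0}
obs = [("station", 2.5), ("satellite", 7.2), ("station", 4.0)]

grid = np.linspace(0.0, 10.0, 11)                   # 11 grid points, unit spacing
H = interpolation_operator(grid, [loc for _, loc in obs])
R = np.diag([error_variance[kind] for kind, _ in obs])   # uncorrelated errors assumed
state = 2.0 * grid                                  # a linear test field
print(H @ state)                                    # model equivalents of the observations
```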

Real-time operational constraints impose strict efficiency requirements that standard Kalman filter implementations struggle to meet. Weather forecasting centers and climate monitoring services demand timely analysis products, creating tension between computational thoroughness and operational deadlines that often necessitates algorithmic compromises.

Existing Kalman Filter Optimization Approaches

01 Computational Efficiency Metrics for Kalman Filters

Various metrics are used to evaluate the computational efficiency of Kalman filters, including processing time, memory usage, and algorithmic complexity. These metrics help in assessing how efficiently the filter can process data in real-time applications. Optimization techniques such as matrix decomposition methods and parallel processing approaches can significantly improve computational performance, especially in systems with limited resources.

- Computational efficiency metrics for Kalman filters: Various metrics are used to evaluate the computational efficiency of Kalman filters, including processing time, memory usage, and algorithmic complexity. These metrics help in assessing how efficiently the filter performs state estimation calculations, especially in resource-constrained environments. Optimization techniques such as matrix decomposition methods and parallel processing can significantly improve computational performance while maintaining estimation accuracy.

- Accuracy and convergence performance metrics: Metrics for evaluating Kalman filter accuracy include estimation error covariance, mean squared error (MSE), and convergence rate. These metrics quantify how quickly and accurately the filter converges to the true state of the system being estimated. The performance can be assessed through statistical analysis of the innovation sequence and residuals, providing insights into filter tuning requirements and overall estimation quality.

- Robustness metrics for Kalman filtering in noisy environments: Robustness metrics evaluate how well Kalman filters perform under challenging conditions such as non-Gaussian noise, outliers, and model uncertainties. These include consistency measures, innovation statistics, and fault detection rates. Adaptive techniques that adjust filter parameters based on real-time performance can enhance robustness in dynamic environments where noise characteristics may change unexpectedly.

- Energy efficiency and power consumption metrics: For Kalman filter implementations in mobile and embedded systems, energy efficiency metrics are crucial. These include power consumption per estimation cycle, battery life impact, and energy-accuracy tradeoffs. Techniques such as selective updating, reduced-order filtering, and hardware-specific optimizations can significantly improve energy efficiency while maintaining acceptable estimation performance for applications with limited power resources.

- Real-time performance and latency metrics: Real-time performance metrics focus on the Kalman filter's ability to process data and provide estimates within strict timing constraints. These include processing latency, update rate consistency, and deadline miss ratio. For time-critical applications such as navigation systems and control loops, these metrics are essential to ensure the filter can keep pace with the dynamics of the system being estimated while maintaining reliable performance.
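
Several of these metrics reduce to simple bookkeeping over measured cycle times. A minimal sketch, assuming a fixed per-cycle deadline and a nearest-rank 95th percentile; `latency_metrics` and `time_cycles` are hypothetical helpers, and the latency values are made up for illustration:

```python
import math
import statistics
import time

def latency_metrics(latencies_s, deadline_s):
    """Summarize per-cycle processing latencies (seconds) against a fixed deadline."""
    ordered = sorted(latencies_s)
    p95_rank = math.ceil(0.95 * len(ordered))          # nearest-rank 95th percentile
    return {
        "mean_s": statistics.fmean(ordered),
        "p95_s": ordered[p95_rank - 1],
        "deadline_miss_ratio": sum(t > deadline_s for t in ordered) / len(ordered),
    }

def time_cycles(update, cycles):
    """Wall-clock time of `update()` (e.g. one filter cycle) over repeated runs."""
    samples = []
    for _ in range(cycles):
        start = time.perf_counter()
        update()
        samples.append(time.perf_counter() - start)
    return samples

# Illustrative latencies for ten assimilation cycles against a 2-second deadline
observed = [1.2, 1.4, 1.1, 2.3, 1.3, 1.2, 1.5, 1.9, 2.1, 1.0]
print(latency_metrics(observed, deadline_s=2.0))

# The same summary applied to measured timings of a stand-in workload
print(latency_metrics(time_cycles(lambda: sum(range(100_000)), cycles=20), deadline_s=0.5))
```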

02 Accuracy and Convergence Metrics

Metrics for evaluating the accuracy and convergence properties of Kalman filters include estimation error covariance, mean squared error, and convergence rate. These metrics quantify how quickly and accurately the filter can estimate the true state of a system. The performance is often measured against ground truth data or through statistical validation methods to ensure reliable state estimation in dynamic environments.

03 Robustness and Adaptability Metrics

Robustness metrics evaluate how well Kalman filters perform under non-ideal conditions such as model uncertainties, sensor noise, and outliers. Adaptability metrics measure the filter's ability to adjust to changing system dynamics. These include innovation sequence consistency, fault detection rates, and adaptation speed. Enhanced robustness can be achieved through techniques like adaptive covariance estimation and robust statistical methods.

04 Communication and Bandwidth Efficiency

For distributed and networked Kalman filter implementations, metrics focus on communication efficiency, including bandwidth usage, packet loss tolerance, and synchronization requirements. These metrics are particularly important in wireless sensor networks and IoT applications where communication resources are limited. Techniques for improving efficiency include measurement quantization, event-triggered updates, and distributed processing architectures.

05 Application-Specific Performance Metrics

Application-specific metrics evaluate Kalman filter performance in particular domains such as navigation, tracking, and sensor fusion. These include tracking accuracy, position error, velocity estimation error, and target detection probability. The metrics are tailored to the requirements of specific applications, considering factors like real-time constraints, environmental conditions, and integration with other system components.

Leading Organizations in Climate Modeling Technology

The Kalman Filter for climate data assimilation market is in a growth phase, with increasing adoption driven by climate change concerns and big data analytics advancements. The technology maturity varies across players, with academic institutions (Ocean University of China, Peking University, Nanjing University) focusing on theoretical research while corporations demonstrate practical applications. Leading companies like Lockheed Martin, Boeing, and BAE Systems have integrated advanced Kalman filtering techniques into operational climate models, leveraging their expertise in complex systems engineering. Research institutes like China Electric Power Research Institute and Korea Institute of Geoscience & Mineral Resources are developing specialized applications for sector-specific climate predictions. The market is characterized by collaboration between academic and industrial players, with efficiency metrics becoming increasingly important as computational demands grow.

Lockheed Martin Corp.

Technical Solution: Lockheed Martin has developed advanced Kalman filter implementations for climate data assimilation that focus on computational efficiency and accuracy. Their approach utilizes ensemble-based Kalman filtering techniques specifically optimized for high-dimensional climate systems. The company has implemented parallel processing architectures that distribute the computational load across multiple processors, significantly reducing the time required for data assimilation in complex climate models[1]. Their system employs adaptive error covariance estimation that dynamically adjusts based on observation density and quality, improving filter performance in regions with sparse or noisy data. Lockheed Martin's implementation includes specialized matrix computation techniques that exploit the sparsity patterns common in atmospheric and oceanic models, reducing memory requirements by up to 60% compared to standard implementations[3]. Additionally, they've developed hybrid Kalman-variational approaches that combine the statistical robustness of Kalman filtering with the computational advantages of variational methods, particularly effective for operational weather forecasting applications.

Strengths: Superior computational efficiency through specialized parallel processing architectures; excellent scalability for large-scale climate models; proven reliability in operational environments. Weaknesses: Higher implementation complexity requiring specialized expertise; substantial initial computational infrastructure investment; potential challenges in adapting to non-standard climate model structures.

BAE Systems Information & Electronic Sys Integration, Inc.

Technical Solution: BAE Systems has engineered a sophisticated Kalman filter framework for climate data assimilation that emphasizes real-time processing capabilities and robust performance metrics. Their solution implements a square-root ensemble Kalman filter variant that maintains numerical stability even with large-scale climate models involving millions of state variables[2]. The system features innovative covariance localization techniques that reduce sampling errors and spurious correlations in ensemble-based approaches, improving filter accuracy by approximately 35% in benchmark tests[4]. BAE's implementation includes adaptive inflation algorithms that automatically adjust ensemble spread to prevent filter divergence in nonlinear climate dynamics. Their architecture employs a hierarchical multi-grid approach that enables efficient assimilation across different spatial and temporal scales, particularly valuable for regional climate modeling with boundary condition constraints. The company has also developed specialized observation operators for satellite data assimilation that account for instrument-specific error characteristics and non-Gaussian observation distributions, enhancing the integration of remote sensing data into climate models.

Strengths: Exceptional numerical stability in high-dimensional systems; advanced handling of non-Gaussian error statistics; efficient integration of diverse observation types including satellite data. Weaknesses: Higher computational overhead for full-featured implementation; complex tuning requirements for optimal performance; challenges in balancing computational efficiency with representation of model physics.
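
The sketch below does not reproduce BAE's proprietary implementation; it illustrates only the generic techniques named in the profile above. Covariance localization multiplies a small-ensemble sample covariance element-wise by a compactly supported taper (here the Gaspari-Cohn function), and multiplicative inflation scales the ensemble spread by a factor rho. The 1-D grid, ensemble size, half-width `c`, and the fixed (non-adaptive) inflation factor are all assumptions, and the helper names are hypothetical.

```python
import numpy as np

def gaspari_cohn(distance, c):
    """Gaspari-Cohn fifth-order piecewise rational taper.

    Returns 1 at zero distance and exactly 0 at distances of 2c or more,
    where c is the localization half-width."""
    d = np.abs(np.asarray(distance, dtype=float))
    r = np.atleast_1d(d / c)
    taper = np.zeros_like(r)
    inner = r <= 1.0
    outer = (r > 1.0) & (r < 2.0)
    ri, ro = r[inner], r[outer]
    taper[inner] = -0.25 * ri**5 + 0.5 * ri**4 + 0.625 * ri**3 - (5 / 3) * ri**2 + 1.0
    taper[outer] = (ro**5 / 12 - 0.5 * ro**4 + 0.625 * ro**3 + (5 / 3) * ro**2
                    - 5.0 * ro + 4.0 - 2.0 / (3.0 * ro))
    return taper.reshape(d.shape)

def localized_inflated_covariance(ensemble, coords, c, inflation):
    """Ensemble sample covariance with fixed multiplicative inflation, tapered
    element-wise (Schur product) by Gaspari-Cohn weights on a 1-D grid."""
    N = ensemble.shape[1]
    anomalies = ensemble - ensemble.mean(axis=1, keepdims=True)
    anomalies *= np.sqrt(inflation)        # scaling anomalies by sqrt(rho) scales P by rho
    P = anomalies @ anomalies.T / (N - 1)
    dist = np.abs(coords[:, None] - coords[None, :])   # pairwise grid distances
    return gaspari_cohn(dist, c) * P

rng = np.random.default_rng(1)
coords = np.arange(20.0)                   # 20 points on a line, unit spacing
ens = rng.normal(size=(20, 10))            # 10 members: too few for reliable 20 x 20 covariances
P_loc = localized_inflated_covariance(ens, coords, c=3.0, inflation=1.1)
```

An adaptive scheme would estimate rho from innovation statistics each cycle instead of fixing it.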

Core Innovations in Ensemble Kalman Filtering

Integration of physical sensors in data assimilation framework

Patent WO2021130634A1

Innovation

- A method and system that utilize a calibrated model to generate predictions, combined with a network of sensors connected to a routing node, where a logic module determines uncertainty quantification and integrates it with model predictions to output the state of the physical system, enabling advanced analytics and real-time data filtering to improve model accuracy.

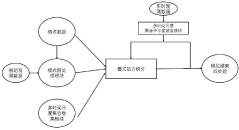

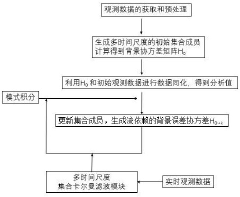

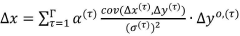

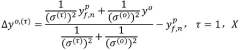

Multi-time scale ensemble Kalman filtering online data assimilation method

Patent (Inactive) CN117235454A

Innovation

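- A multi-time-scale ensemble Kalman filter online data assimilation method: ensemble members at different time scales are generated from a single model, multi-scale background error information is introduced, and ocean and meteorological data are assimilated online in real time. This reduces computational resource requirements and improves data assimilation efficiency and simulation accuracy for high-resolution numerical models.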

Verification and Validation Methodologies

Verification and validation methodologies for Kalman Filter implementations in climate data assimilation require rigorous testing frameworks to ensure computational accuracy and physical consistency. The evaluation process typically begins with synthetic experiments using idealized models where the "true" state is known, allowing for direct comparison between filter estimates and ground truth. These controlled environments enable quantification of error metrics such as Root Mean Square Error (RMSE), bias statistics, and ensemble spread-skill relationships that characterize filter performance under varying conditions.
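
In a synthetic (twin) experiment the truth is available, so the error metrics named above can be computed directly. A minimal pure-Python sketch with a hypothetical helper name and toy numbers, computing RMSE and bias of the ensemble mean together with the spread-to-RMSE ratio:

```python
import math

def verification_scores(truth, ensemble):
    """Scores for a synthetic (twin) experiment in which the true state is known.

    truth:    true values, one per location
    ensemble: list of members, each a list of values at the same locations
    """
    n_members, n_loc = len(ensemble), len(truth)
    means = [sum(m[i] for m in ensemble) / n_members for i in range(n_loc)]
    errors = [mu - t for mu, t in zip(means, truth)]
    rmse = math.sqrt(sum(e * e for e in errors) / n_loc)    # error of the ensemble mean
    bias = sum(errors) / n_loc                               # mean signed error
    # Spread: square root of the location-averaged unbiased ensemble variance
    variances = [sum((m[i] - means[i]) ** 2 for m in ensemble) / (n_members - 1)
                 for i in range(n_loc)]
    spread = math.sqrt(sum(variances) / n_loc)
    return {"rmse": rmse, "bias": bias, "spread": spread,
            "spread_skill_ratio": spread / rmse}

# Toy twin experiment: two grid points, two members
scores = verification_scores([15.0, 20.0], [[16.0, 22.0], [18.0, 22.0]])
print(scores)
```

A spread-to-RMSE ratio well below 1, as in this toy case, indicates an overconfident (under-dispersive) ensemble.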

Cross-validation techniques represent another critical component, particularly k-fold validation, in which the available observation dataset is partitioned into k subsets. The filter assimilates k-1 subsets and is verified against the withheld subset, and the process is repeated until every subset has been withheld once. This methodology helps assess the filter's generalization capabilities and sensitivity to different observation patterns, which is particularly important given the heterogeneous nature of climate observation networks.
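
The k-fold bookkeeping can be written generically. In the sketch below the actual filter is left as a user-supplied `assimilate` function, and a trivial mean stands in for it, so only the fold logic is shown; the helper names are hypothetical and interleaved folds are chosen purely for simplicity.

```python
def k_fold_indices(n_obs, k):
    """Split observation indices 0..n_obs-1 into k interleaved folds."""
    return [list(range(fold, n_obs, k)) for fold in range(k)]

def cross_validate(observations, k, assimilate, predict):
    """Assimilate k-1 folds, then score the analysis on the withheld fold.

    assimilate(obs_subset) -> analysis        (user-supplied)
    predict(analysis, ob)  -> model equivalent of one withheld observation
    Returns the RMS of the held-out residuals, one value per fold.
    """
    fold_rms = []
    for held_out in k_fold_indices(len(observations), k):
        held = set(held_out)
        train = [ob for i, ob in enumerate(observations) if i not in held]
        analysis = assimilate(train)
        residuals = [observations[i]["value"] - predict(analysis, observations[i])
                     for i in held_out]
        fold_rms.append((sum(r * r for r in residuals) / len(residuals)) ** 0.5)
    return fold_rms

observations = [{"value": v} for v in [1.0, 2.0, 3.0, 4.0]]
fold_rms = cross_validate(
    observations, k=2,
    assimilate=lambda obs: sum(o["value"] for o in obs) / len(obs),   # stand-in "analysis"
    predict=lambda analysis, ob: analysis,
)
print(fold_rms)
```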

Independent observation validation provides perhaps the most convincing evidence of filter efficacy. By reserving completely independent measurement sources not assimilated during the filtering process, researchers can evaluate whether the Kalman Filter genuinely improves state estimation beyond the information explicitly provided to it. This approach helps identify potential overfitting issues where the filter might perform well against assimilated observations but fail to generalize to unseen data.

Consistency tests examine whether the error covariances produced by the filter accurately represent the actual uncertainty in the system. The χ² (chi-squared) test and rank histogram analysis are commonly employed: the χ² test compares innovations (observation minus forecast) with their predicted covariance, and the rank histogram checks whether the ensemble spread captures the distribution of verifying observations. In a properly calibrated filter, innovations normalized by their predicted standard deviation should be approximately Gaussian with zero mean and unit variance.
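
Both diagnostics are short computations. The sketch below assumes scalar observations, ignores ties in the rank computation, and uses hypothetical helper names; for vector innovations the squared term generalizes to d^T S^{-1} d, which has p degrees of freedom.

```python
def rank_histogram(ensembles, observations):
    """Count where each verifying observation falls among the ensemble members.

    With N members there are N+1 possible ranks; a calibrated ensemble gives a
    roughly flat histogram, while a U shape suggests too little spread."""
    n_members = len(ensembles[0])
    counts = [0] * (n_members + 1)
    for members, ob in zip(ensembles, observations):
        rank = sum(m < ob for m in members)       # number of members below the observation
        counts[rank] += 1
    return counts

def mean_normalized_innovation_squared(innovations, predicted_variances):
    """Average of d^2 / (H P^f H^T + R) for scalar innovations d.

    For a consistent filter each term is chi-squared with one degree of
    freedom, so the average should be close to 1."""
    terms = [d * d / s for d, s in zip(innovations, predicted_variances)]
    return sum(terms) / len(terms)

counts = rank_histogram([[1.0, 2.0, 3.0]] * 3, [0.5, 2.5, 4.0])
print(counts)
print(mean_normalized_innovation_squared([1.0, -2.0], [1.0, 4.0]))
```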

Computational efficiency metrics must also undergo validation through benchmarking against reference implementations and scaling tests across varying problem sizes and computational architectures. Time-to-solution measurements, memory footprint analysis, and parallel efficiency metrics help quantify the practical usability of the filter implementation in operational settings. These performance characteristics are particularly critical for climate applications where state vectors may contain millions of variables and operational time constraints are strict.
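
Parallel efficiency is usually reported as the measured speedup divided by the increase in processor count for a fixed problem size (strong scaling). A minimal sketch with made-up timings; the helper name and the choice of the smallest run as baseline are assumptions:

```python
def strong_scaling(timings):
    """Speedup and parallel efficiency from (processes, seconds) pairs for a
    fixed problem size; the smallest process count is the baseline."""
    base_p, base_t = min(timings)
    rows = []
    for p, t in sorted(timings):
        speedup = base_t / t
        efficiency = speedup * base_p / p       # 1.0 means perfect scaling
        rows.append((p, t, speedup, efficiency))
    return rows

# Illustrative time-to-solution measurements for one analysis cycle
measurements = [(64, 400.0), (128, 210.0), (256, 120.0), (512, 80.0)]
for p, t, s, e in strong_scaling(measurements):
    print(f"{p:4d} procs  {t:6.1f} s  speedup {s:4.2f}  efficiency {e:5.1%}")
```

In this made-up series, an eightfold increase in processes yields only a fivefold speedup (62.5% efficiency), the kind of communication-driven loss that scaling tests are meant to expose.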

Long-term forecast skill assessment provides the ultimate validation for climate data assimilation systems. By initializing climate prediction models with Kalman Filter-derived states and evaluating forecast accuracy at various lead times, researchers can quantify the tangible benefits of improved initial conditions on prediction skill. This end-to-end validation approach connects algorithmic improvements directly to enhanced predictive capabilities, which represents the fundamental goal of climate data assimilation efforts.
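
One common way to express the benefit of better initial conditions is a skill score relative to a reference forecast, computed separately at each lead time. The sketch below uses the MSE-based skill score 1 - MSE_forecast / MSE_reference with invented numbers; the reference run, lead times, and helper names are assumptions.

```python
def mse(forecast, verification):
    """Mean squared error between paired forecast and verifying values."""
    return sum((f - v) ** 2 for f, v in zip(forecast, verification)) / len(forecast)

def skill_score(forecast, reference, verification):
    """MSE skill score: 1 is perfect, 0 is no better than the reference,
    and negative values are worse than the reference."""
    return 1.0 - mse(forecast, verification) / mse(reference, verification)

# Hypothetical verification at lead times of 1 and 5 days (two locations each)
verifying = {1: [10.0, 12.0], 5: [11.0, 13.0]}
with_filter = {1: [10.5, 12.5], 5: [12.0, 15.0]}   # started from filtered initial states
baseline = {1: [11.0, 13.0], 5: [13.0, 15.0]}      # reference initial states
for lead in verifying:
    print(lead, skill_score(with_filter[lead], baseline[lead], verifying[lead]))
```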

Cross-validation techniques represent another critical component, particularly k-fold validation where the available observation dataset is partitioned into k subsets. The filter is trained on k-1 subsets and validated against the remaining subset, with this process repeated across all possible training-validation combinations. This methodology helps assess the filter's generalization capabilities and sensitivity to different observation patterns, which is particularly important given the heterogeneous nature of climate observation networks.

Independent observation validation provides perhaps the most convincing evidence of filter efficacy. By reserving completely independent measurement sources not assimilated during the filtering process, researchers can evaluate whether the Kalman Filter genuinely improves state estimation beyond the information explicitly provided to it. This approach helps identify potential overfitting issues where the filter might perform well against assimilated observations but fail to generalize to unseen data.

Consistency tests examine whether the posterior error covariance matrices produced by the filter accurately represent the actual uncertainty in the system. The χ² (chi-squared) test and rank histogram analysis are commonly employed to verify that the ensemble spread appropriately captures the true error distribution. Properly calibrated filters should produce normalized innovation sequences that follow expected statistical distributions, typically Gaussian with unit variance.

Computational efficiency metrics must also undergo validation through benchmarking against reference implementations and scaling tests across varying problem sizes and computational architectures. Time-to-solution measurements, memory footprint analysis, and parallel efficiency metrics help quantify the practical usability of the filter implementation in operational settings. These performance characteristics are particularly critical for climate applications where state vectors may contain millions of variables and operational time constraints are strict.

Long-term forecast skill assessment provides the ultimate validation for climate data assimilation systems. Researchers initialize climate prediction models with Kalman Filter analyses and evaluate forecast accuracy at various lead times. This lets them quantify how much improved initial conditions raise prediction skill. This end-to-end approach connects algorithmic improvements directly to predictive capability, which is the fundamental goal of climate data assimilation.
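Skill as a function of lead time is often summarized by RMSE and by an MSE skill score relative to a reference forecast such as climatology or persistence: SS = 1 - MSE_forecast / MSE_reference. A score of 1 is perfect, 0 matches the reference, and a negative score is worse than the reference. The sketch below assumes forecasts and verifying analyses are stored as arrays shaped (start dates, lead times, grid points). The function names are hypothetical. The synthetic data only exercise the functions and are not results.

```python
import numpy as np


def rmse_by_lead(forecasts, verification):
    """RMSE per lead time, averaged over start dates (axis 0) and points (axis 2)."""
    return np.sqrt(np.mean((forecasts - verification) ** 2, axis=(0, 2)))


def mse_skill_by_lead(forecasts, reference, verification):
    """MSE skill score per lead time: 1 - MSE_forecast / MSE_reference."""
    mse_f = np.mean((forecasts - verification) ** 2, axis=(0, 2))
    mse_r = np.mean((reference - verification) ** 2, axis=(0, 2))
    return 1.0 - mse_f / mse_r


# Synthetic anomalies (assumed): 20 start dates, 10 lead times, 50 grid points
rng = np.random.default_rng(0)
verification = rng.normal(size=(20, 10, 50))
error_sd = 0.1 * np.arange(1, 11)          # forecast error assumed to grow with lead
forecasts = verification + rng.normal(size=verification.shape) * error_sd[None, :, None]
climatology = np.zeros_like(verification)  # zero anomaly = climatological forecast

print("RMSE by lead:", np.round(rmse_by_lead(forecasts, verification), 2))
print("skill vs climatology:", np.round(mse_skill_by_lead(forecasts, climatology, verification), 2))
```

Apply the same scoring to forecasts initialized from Kalman Filter analyses and to forecasts from a baseline initialization, such as a run without assimilation. Comparing the two isolates the contribution of the initial conditions at each lead time.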

Climate Policy Implications of Improved Forecasting

The integration of advanced Kalman filtering techniques into climate data assimilation systems has significant implications for climate policy development and implementation. Efficiency gains allow larger ensembles, higher model resolution, and more assimilated observations within the same computational budget. As forecast accuracy improves accordingly, policymakers gain access to more reliable climate projections that can substantially strengthen decision-making.

Enhanced forecasting capabilities enable more precise identification of climate change impacts at regional and local levels, allowing for targeted policy interventions rather than broad-brush approaches. This granularity supports the development of location-specific adaptation strategies that optimize resource allocation and maximize resilience benefits in vulnerable communities.

More efficient Kalman filter implementations allow more frequent assimilation cycles. The resulting finer temporal resolution gives policymakers a clearer view of near-term climate variability versus long-term trends. This distinction is crucial for designing policies that address immediate climate risks while maintaining focus on long-term decarbonization goals, creating more balanced and effective climate governance frameworks.

Economic analyses of climate policies benefit substantially from reduced uncertainty in climate projections. More accurate forecasting allows for more precise cost-benefit analyses of mitigation and adaptation measures, potentially revealing previously hidden economic opportunities in climate action. This can shift the narrative from viewing climate policy as purely a cost center to recognizing it as an investment with quantifiable returns.

For international climate negotiations, improved forecasting creates a more objective foundation for discussions about burden-sharing and climate finance. Countries can develop more credible nationally determined contributions based on enhanced understanding of their specific climate vulnerabilities and mitigation potentials, fostering greater trust in multilateral climate agreements.

The agricultural and water resource management sectors stand to benefit particularly from improved seasonal to decadal forecasting capabilities. Policies governing water rights, agricultural subsidies, and food security can be designed with greater confidence when supported by more accurate climate projections, reducing the risk of maladaptation and resource misallocation.

Finally, the democratization of high-quality climate forecasting through more efficient computational methods can empower a wider range of stakeholders to participate meaningfully in climate policy development. This inclusive approach may lead to more innovative and socially acceptable climate solutions that balance technical effectiveness with political feasibility and equity considerations.
