Neuromorphic materials and their effect on predictive modeling

SEP 19, 2025 · 9 MIN READ

Neuromorphic Materials Evolution and Research Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. The field has passed several milestones since the late 1980s, when Carver Mead introduced the concept. Early neuromorphic systems were implemented mainly in conventional silicon CMOS technology, which offered only a limited ability to mimic the complex, adaptive behavior of biological neural networks.

Development has accelerated dramatically in the past decade with the emergence of novel neuromorphic materials. These materials exhibit properties that more closely resemble biological neural systems, including spike-based communication, synaptic plasticity, and inherent parallelism. Memristive devices, phase-change materials, and organic electrochemical transistors have shown remarkable potential for implementing neuromorphic architectures with significantly improved energy efficiency and computational capabilities.

Current research on neuromorphic materials focuses on three key areas: enhancing the scalability of neuromorphic systems so they can handle increasingly complex computational tasks while remaining energy efficient; improving the reliability and durability of the materials so that performance stays consistent over long operating periods; and developing materials with richer adaptive properties that can support more sophisticated learning algorithms and predictive modeling.

The integration of neuromorphic materials with predictive modeling is a particularly promising research direction. Traditional predictive modeling approaches often struggle with complex, nonlinear systems and require substantial computational resources. Neuromorphic materials offer an alternative by enabling systems that learn and adapt to patterns in data, with potential applications in weather forecasting, financial market analysis, and healthcare diagnostics.

A critical research objective involves bridging the gap between material properties and algorithmic implementations. This includes developing new programming paradigms and computational frameworks specifically designed to leverage the unique characteristics of neuromorphic materials. Such frameworks would need to accommodate the inherent variability and stochasticity of these materials while harnessing their parallel processing capabilities.

Looking forward, the field aims to build truly brain-inspired computing systems that combine the efficiency and adaptability of biological neural networks with the precision and reliability of electronic systems. Such a convergence could enable more sophisticated predictive models that operate with minimal energy consumption and adapt to changing conditions in real time.

Market Analysis for Brain-Inspired Computing Solutions

The brain-inspired computing market is growing rapidly, driven by increasing demand for efficient processing of complex data patterns and by the limitations of traditional von Neumann computing architectures. Current market valuations place neuromorphic computing at approximately $2.5 billion, with projections indicating a compound annual growth rate (CAGR) of 20-25% over the next five years, potentially reaching $7-8 billion by 2028.
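As a quick consistency check on these projections, the compound-growth formula V_n = V_0(1 + r)^n can be evaluated directly. The minimal Python sketch below assumes the $2.5 billion valuation is the base-year value and compounds it over the five years the paragraph mentions; the function names are hypothetical.

```python
def project_market(base_value: float, cagr: float, years: int) -> float:
    """Compound a base-year value forward: V_n = V_0 * (1 + r) ** n."""
    return base_value * (1.0 + cagr) ** years


def implied_cagr(base_value: float, final_value: float, years: int) -> float:
    """Invert the compound-growth formula: r = (V_n / V_0) ** (1 / n) - 1."""
    return (final_value / base_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    base = 2.5  # USD billions, the current valuation quoted in the text
    for rate in (0.20, 0.25):
        print(f"CAGR {rate:.0%} for 5 years: ${project_market(base, rate, 5):.2f}B")
    for target in (7.0, 8.0):
        print(f"${target:.0f}B in 5 years needs a CAGR of {implied_cagr(base, target, 5):.1%}")
```

Under these assumptions, 20-25% annual growth compounds to roughly $6.2-7.6 billion after five years, while reaching $7-8 billion requires about 23-26% a year, so the quoted endpoint sits at or slightly above the top of the stated range. If the base year were 2025 (this article's date), a 2028 endpoint would allow only three years of compounding and would imply a considerably higher rate.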

Key market segments adopting neuromorphic technologies include autonomous vehicles, robotics, healthcare diagnostics, and advanced security systems. The automotive sector represents the fastest-growing segment, with major manufacturers investing heavily in neuromorphic solutions for real-time sensor processing and decision-making capabilities that traditional computing struggles to deliver efficiently.

Healthcare applications are emerging as another significant market driver, particularly in medical imaging analysis and patient monitoring, where predictive modeling based on neuromorphic materials promises improvements in both accuracy and energy efficiency. Such systems have been reported to cut power consumption by up to 90% compared with GPU-based solutions while matching or exceeding their pattern recognition performance.

Geographically, North America currently dominates the market with approximately 40% share, followed by Europe and Asia-Pacific. However, the Asia-Pacific region is expected to witness the highest growth rate, fueled by substantial investments from countries like China, Japan, and South Korea in neuromorphic research and manufacturing capabilities.

From a customer perspective, the market exhibits a dual nature: high-end enterprise applications requiring maximum performance regardless of cost, and an emerging mid-market segment seeking balanced solutions with reasonable efficiency gains at moderate price points. This bifurcation is creating opportunities for diverse business models and product positioning strategies.

Market barriers include high initial development costs, limited standardization across platforms, and integration challenges with existing systems. Additionally, the specialized expertise required for implementing neuromorphic solutions creates adoption friction in organizations without dedicated AI teams.

Competitive dynamics reveal three distinct player categories: established semiconductor giants pivoting toward neuromorphic offerings, specialized neuromorphic startups with innovative materials and architectures, and research-focused entities developing next-generation solutions. This fragmented landscape suggests potential consolidation through acquisitions as the technology matures and clear leaders emerge in specific application domains.

Current Neuromorphic Materials Landscape and Barriers

The neuromorphic materials landscape is currently dominated by several key material categories, each with distinct properties and applications in brain-inspired computing systems. Memristive materials, including metal oxides such as HfO₂, TiO₂, and Ta₂O₅, account for a large share of research because they can mimic synaptic plasticity through changes in resistance. Phase-change materials like Ge₂Sb₂Te₅ (GST) offer non-volatile memory with excellent scalability and switching speed, making them valuable for neuromorphic architectures that require persistent states.
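To make "mimicking synaptic plasticity through resistance changes" concrete, the sketch below uses the linear ion-drift memristor model (Strukov et al., 2008), a textbook idealization originally proposed for TiO₂ devices rather than a faithful model of any particular HfO₂ or Ta₂O₅ device. Its parameter values and the class and method names are illustrative assumptions. Positive voltage pulses lower the resistance (potentiation) and negative pulses raise it (depression), which is the behavior a synaptic weight needs.

```python
from dataclasses import dataclass


@dataclass
class LinearDriftMemristor:
    """Linear ion-drift memristor model (Strukov et al., 2008).

    x = w / D is the normalized width of the doped, low-resistance region.
    All default parameter values are illustrative assumptions.
    """
    r_on: float = 100.0       # ohm, resistance when fully doped (x = 1)
    r_off: float = 16_000.0   # ohm, resistance when undoped (x = 0)
    d: float = 10e-9          # m, oxide film thickness D
    mu_v: float = 1e-14       # m^2 s^-1 V^-1, dopant mobility
    x: float = 0.1            # initial normalized state

    def resistance(self) -> float:
        # M(x) = R_on * x + R_off * (1 - x)
        return self.r_on * self.x + self.r_off * (1.0 - self.x)

    def apply_pulse(self, voltage: float, duration: float, steps: int = 1000) -> None:
        """Forward-Euler integration of dx/dt = mu_v * R_on / D**2 * i(t),
        with the state clipped to the physical range [0, 1]."""
        dt = duration / steps
        k = self.mu_v * self.r_on / self.d ** 2
        for _ in range(steps):
            current = voltage / self.resistance()
            self.x = min(1.0, max(0.0, self.x + k * current * dt))


if __name__ == "__main__":
    m = LinearDriftMemristor()
    print(f"initial resistance: {m.resistance():.0f} ohm")
    for _ in range(5):
        m.apply_pulse(+1.0, 10e-3)  # potentiating pulses lower the resistance
    print(f"after 5 positive pulses: {m.resistance():.0f} ohm")
    for _ in range(5):
        m.apply_pulse(-1.0, 10e-3)  # depressing pulses raise it again
    print(f"after 5 negative pulses: {m.resistance():.0f} ohm")
```

Because the drift rate depends on the current, and the current depends on the present resistance, the response to identical pulses is not perfectly symmetric, an early hint of the nonlinear behavior real devices show.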

Ferroelectric materials, particularly hafnium-based compounds like HfZrO₂, have emerged as promising candidates due to their CMOS compatibility and low power consumption characteristics. Meanwhile, 2D materials including graphene, MoS₂, and h-BN are being explored for their unique electronic properties and potential for ultra-thin, flexible neuromorphic devices. Organic and polymer-based materials represent another growing segment, offering biocompatibility and mechanical flexibility advantages over traditional inorganic counterparts.

Despite significant advances, the field faces substantial technical barriers. Material stability and endurance remain critical challenges, with many neuromorphic materials exhibiting performance degradation after repeated switching cycles—a serious limitation for systems requiring long-term reliability. Variability between devices presents another major obstacle, as inconsistent behavior across identical components complicates large-scale integration and predictable modeling outcomes.
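Device-to-device variability can be made concrete with a small Monte Carlo experiment. The sketch below models one column of a resistive crossbar that computes a weighted sum of input voltages and assumes, for illustration only, that each programmed conductance lands at its target multiplied by a lognormal factor exp(N(0, sigma²)). The function names, read voltage, and conductance targets are hypothetical.

```python
import math
import random
import statistics


def column_current(voltages, conductances):
    """Ideal crossbar column current: I = sum_i V_i * G_i (Ohm's law per device,
    Kirchhoff's current law at the column wire)."""
    return sum(v * g for v, g in zip(voltages, conductances))


def mean_relative_error(voltages, target_g, sigma, trials=2000, seed=0):
    """Monte Carlo estimate of the mean relative output error when every device
    lands at target * exp(N(0, sigma^2)) instead of its target conductance.
    The lognormal variability model is an assumption for illustration."""
    rng = random.Random(seed)
    ideal = column_current(voltages, target_g)
    errors = []
    for _ in range(trials):
        actual = [g * math.exp(rng.gauss(0.0, sigma)) for g in target_g]
        errors.append(abs(column_current(voltages, actual) - ideal) / abs(ideal))
    return statistics.mean(errors)


if __name__ == "__main__":
    voltages = [0.2] * 64                                # read voltage per row, V
    target_g = [1e-5 * (1 + i % 4) for i in range(64)]   # hypothetical targets, S
    for sigma in (0.0, 0.05, 0.1, 0.3):
        err = mean_relative_error(voltages, target_g, sigma)
        print(f"sigma={sigma:.2f}: mean relative error {err:.2%}")
```

Independent device errors partially cancel in the column sum, so the output error grows more slowly than sigma. The lognormal factor also has a mean of exp(sigma²/2), slightly above 1, which adds a systematic bias that does not average out; correlated variation or drift behaves similarly, which is one reason variability has to be modeled explicitly rather than assumed away.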

Fabrication complexity poses significant scaling challenges, with many promising materials requiring specialized deposition techniques incompatible with standard CMOS processes. This manufacturing incompatibility creates barriers to industrial adoption and commercialization. Energy efficiency, while improved compared to traditional computing architectures, still falls short of biological neural systems by several orders of magnitude, limiting applications in edge computing and mobile devices.

The interface between materials science and computational modeling represents perhaps the most significant barrier. Current predictive models struggle to accurately capture the complex, non-linear behaviors of neuromorphic materials, particularly their stochastic properties and temporal dynamics. This modeling gap creates a disconnect between material development and system-level implementation, slowing progress toward practical applications.
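The stochastic and temporal behavior mentioned above can be illustrated with a deliberately simple, hypothetical model of a volatile synaptic device: each pulse adds a random conductance increment, and the conductance then relaxes back toward rest. The model form, the function name, and every parameter value are assumptions chosen for illustration, not fitted to any real material.

```python
import math
import random


def simulate_volatile_synapse(pulse_times, t_end, dt=1e-3, g_rest=1.0,
                              dg_mean=0.5, dg_std=0.1, tau=0.05, seed=0):
    """Hypothetical phenomenological model of a volatile (short-term) synapse.

    Each input pulse adds a random increment drawn from N(dg_mean, dg_std^2),
    standing in for cycle-to-cycle stochasticity. Between pulses the
    conductance relaxes exponentially toward g_rest with time constant tau,
    standing in for the device's temporal dynamics. Units are arbitrary.
    """
    rng = random.Random(seed)
    pulse_steps = {round(t / dt) for t in pulse_times}
    decay = math.exp(-dt / tau)  # exact relaxation factor for one time step
    g = g_rest
    trace = []
    for step in range(round(t_end / dt)):
        g = g_rest + (g - g_rest) * decay
        if step in pulse_steps:
            g += max(0.0, rng.gauss(dg_mean, dg_std))  # clip: no negative increments
        trace.append(g)
    return trace


if __name__ == "__main__":
    close = simulate_volatile_synapse([0.01, 0.02, 0.03], t_end=0.5)
    spread = simulate_volatile_synapse([0.01, 0.20, 0.40], t_end=0.5)
    print(f"peak with 10 ms spacing:   {max(close):.2f}")
    print(f"peak with ~200 ms spacing: {max(spread):.2f}")
```

The same three pulses produce a higher peak when they arrive close together, because each pulse lands before the previous increment has decayed. The response therefore depends on pulse history as well as on random draws, which is what makes such devices hard to capture with static, deterministic models.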

Geographically, research leadership in neuromorphic materials is concentrated in North America, Europe, and East Asia, with the United States, China, Germany, Japan, and South Korea hosting the most active research institutions. This distribution reflects both historical strengths in semiconductor research and strategic national investments in neuromorphic computing as a next-generation technology.

Contemporary Neuromorphic Materials for Predictive Modeling

01 Neuromorphic computing systems and architectures

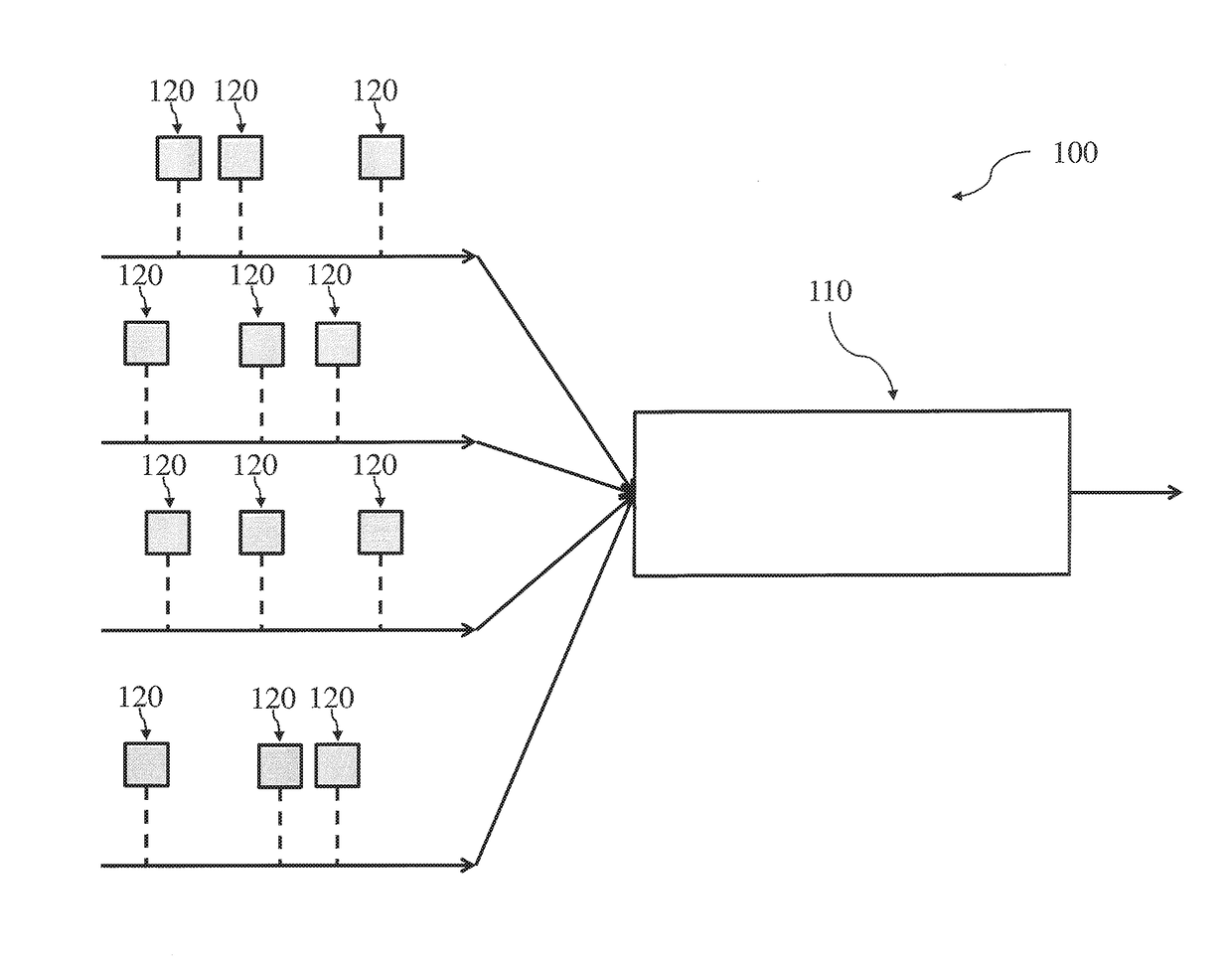

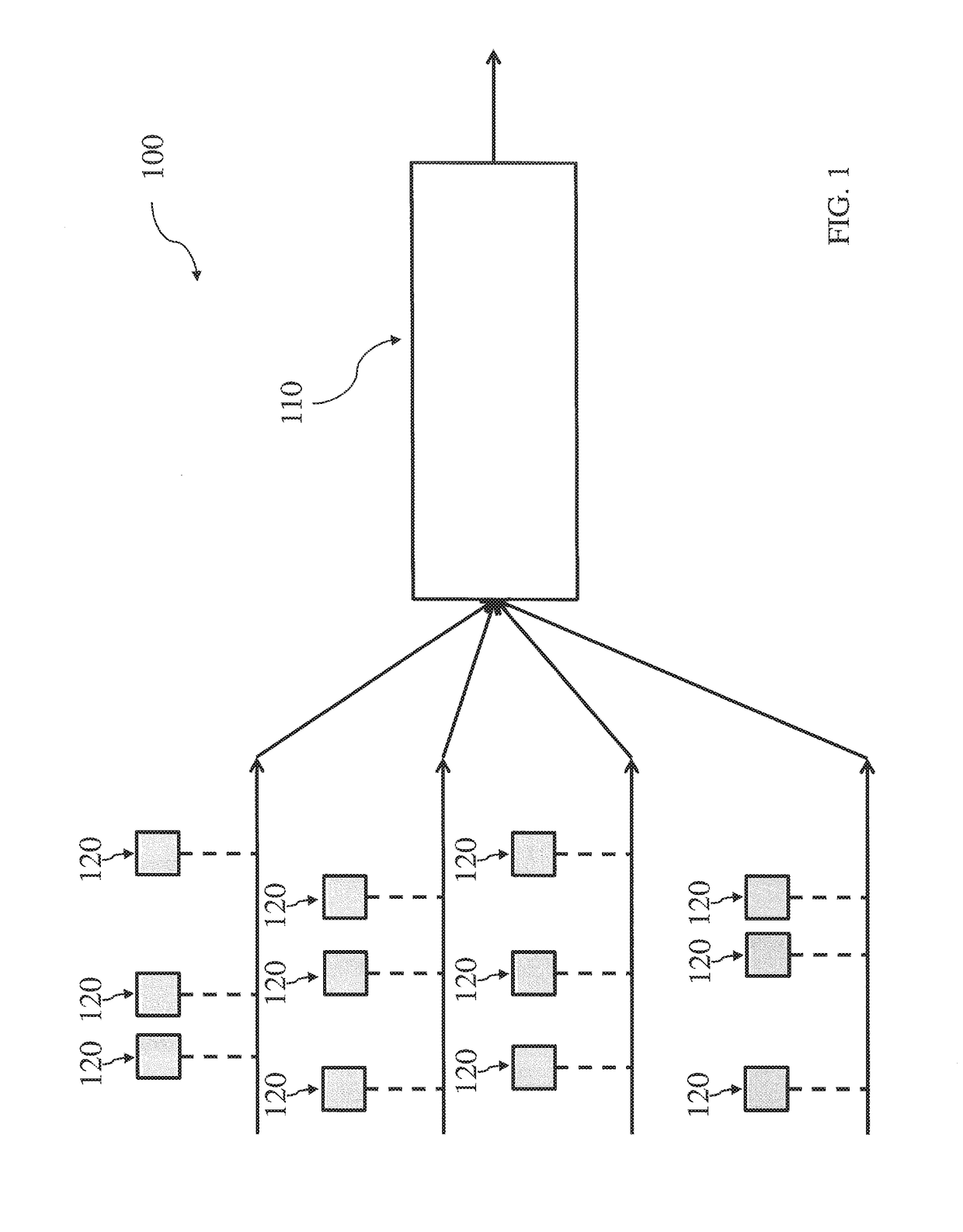

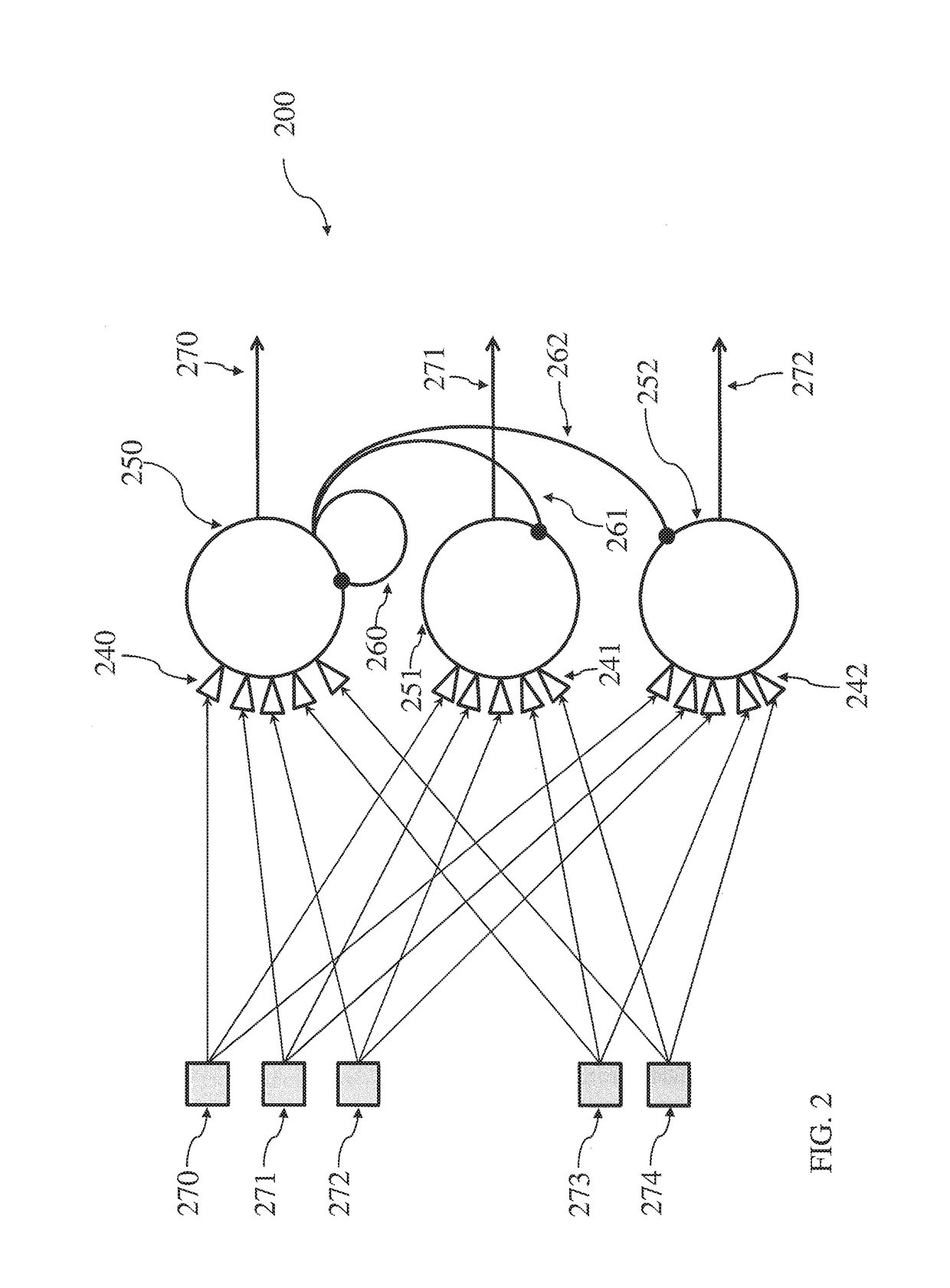

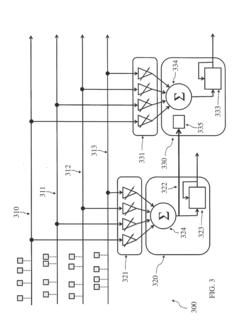

Neuromorphic computing systems mimic the structure and function of the human brain to process information more efficiently. These systems incorporate specialized materials and architectures that enable brain-like parallel processing, learning, and adaptation. Predictive modeling techniques are used to design and optimize these neuromorphic architectures, allowing for more efficient implementation of artificial neural networks in hardware form.

- Materials for neuromorphic devices and memristors: Advanced materials play a crucial role in developing neuromorphic devices, particularly memristors that can mimic synaptic behavior. These materials exhibit properties such as variable resistance states, non-volatility, and plasticity that are essential for implementing neural network functions in hardware. Predictive modeling helps identify and characterize suitable materials with desired electrical, thermal, and mechanical properties for neuromorphic applications.

- Machine learning for neuromorphic material design: Machine learning algorithms are employed to predict the properties and performance of potential neuromorphic materials before physical synthesis. These predictive models analyze vast datasets of material properties to identify promising candidates for neuromorphic applications. The approach accelerates material discovery by reducing the need for extensive experimental testing and enables the design of custom materials with specific neuromorphic characteristics.

- Simulation and modeling of neuromorphic systems: Simulation tools and computational models are developed to predict the behavior of neuromorphic systems under various conditions. These models incorporate material properties, device physics, and system-level architectures to optimize performance before physical implementation. The simulations help identify potential issues in neuromorphic designs and allow for iterative improvements without costly hardware prototyping.

- Applications of neuromorphic materials in predictive systems: Neuromorphic materials are integrated into systems designed for predictive analytics and real-time decision making. These applications span various domains including financial forecasting, healthcare diagnostics, autonomous vehicles, and industrial process control. The energy efficiency and parallel processing capabilities of neuromorphic materials make them particularly suitable for edge computing applications where predictive models must operate with limited power and computational resources.
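The machine-learning screening idea in the list above (predicting properties before synthesis) can be sketched with k-nearest-neighbour regression, a deliberately simple stand-in for the richer models used in practice. The training data are synthetic: an invented function toy_property(a, b) = 2a - b over two abstract descriptors, not measurements of real materials. All names and candidate values are hypothetical.

```python
import math


def knn_predict(train_x, train_y, query, k=3):
    """k-nearest-neighbour regression: average the targets of the k training
    descriptor vectors closest to the query (Euclidean distance)."""
    ranked = sorted(range(len(train_x)), key=lambda i: math.dist(train_x[i], query))
    nearest = ranked[:k]
    return sum(train_y[i] for i in nearest) / len(nearest)


def toy_property(a, b):
    """Invented ground truth used only to generate illustrative data."""
    return 2.0 * a - b


if __name__ == "__main__":
    # Synthetic "already characterized" materials on an 11 x 11 descriptor grid.
    grid = [i / 10 for i in range(11)]
    train_x = [(a, b) for a in grid for b in grid]
    train_y = [toy_property(a, b) for a, b in train_x]

    # Screen hypothetical untested candidates and rank them by predicted property.
    candidates = [(0.33, 0.71), (0.82, 0.15), (0.55, 0.40)]
    ranked = sorted(candidates, key=lambda c: knn_predict(train_x, train_y, c), reverse=True)
    for c in ranked:
        print(c, f"predicted={knn_predict(train_x, train_y, c):.3f}",
              f"true={toy_property(*c):.3f}")
```

In a real workflow the descriptors would come from measured or computed material properties, and only the highest-ranked candidates would be sent for synthesis and testing.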

02 Materials for neuromorphic devices and memristors

Advanced materials play a crucial role in developing neuromorphic devices, particularly memristors that can mimic synaptic behavior. These materials exhibit properties such as variable resistance states, non-volatility, and plasticity that are essential for brain-inspired computing. Predictive modeling helps identify and characterize suitable materials with desired electrical, thermal, and mechanical properties for neuromorphic applications, enabling more efficient and reliable neuromorphic hardware.

03 Machine learning for material property prediction

Machine learning algorithms are employed to predict the properties and behaviors of neuromorphic materials without extensive experimental testing. These predictive models analyze large datasets of material characteristics to identify patterns and correlations that can guide material selection and optimization. By leveraging techniques such as neural networks, genetic algorithms, and statistical methods, researchers can accelerate the discovery and development of novel materials for neuromorphic computing applications.

04 Simulation and modeling of neuromorphic systems

Computational simulation and modeling techniques are used to predict the behavior of neuromorphic systems before physical implementation. These models incorporate material properties, device physics, and system-level architectures to optimize performance parameters such as power consumption, processing speed, and learning capabilities. Advanced simulation frameworks enable researchers to test different design configurations and material combinations virtually, reducing development time and costs for neuromorphic computing solutions.

05 Integration of neuromorphic materials in practical applications

Neuromorphic materials and predictive modeling techniques are being integrated into practical applications across various domains. These applications include edge computing devices, autonomous systems, pattern recognition, and data analytics platforms that benefit from brain-inspired computing capabilities. Predictive models help optimize the deployment of neuromorphic materials in specific use cases, considering constraints such as energy efficiency, form factor, and environmental conditions to ensure optimal performance in real-world scenarios.

Leading Organizations in Neuromorphic Materials Research

Neuromorphic materials are advancing rapidly in the predictive modeling landscape, and the market is currently in its growth phase. The global market is expanding, driven by increasing demand for AI applications that require efficient, brain-inspired computing architectures. Although the technology is still maturing, several key players are driving innovation. IBM leads with significant neuromorphic computing patents and research initiatives, followed by Samsung Electronics, which is developing specialized memory solutions. Other notable contributors include Dassault Systèmes, focusing on simulation software; SK hynix, advancing memory technologies; and Boeing, exploring aerospace applications. Academic institutions such as KAIST and Zhejiang University are collaborating with industry partners to bridge fundamental research and commercial applications, accelerating the field's development.

International Business Machines Corp.

Technical Solution: IBM has pioneered neuromorphic hardware, most prominently with TrueNorth, a digital CMOS chip developed under the DARPA SyNAPSE program that integrates over 1 million neurons and 256 million synapses while consuming only about 70 mW of power[1]. Like its subsequent neuromorphic chip architectures, TrueNorth places memory and processing in the same physical location, enabling efficient spike-based neural computation without shuttling data to a separate memory. In parallel, IBM research has explored phase-change memory (PCM) and other memristive devices as analog synapses: these materials can maintain multiple resistance states, allowing analog-like computation that more closely replicates biological neural networks, and they can implement spike-timing-dependent plasticity (STDP) learning directly in hardware. For predictive modeling, IBM's neuromorphic systems are reported to excel at pattern recognition, anomaly detection, and time-series forecasting with significantly lower energy consumption than traditional von Neumann architectures[3].

Strengths: Extremely low power consumption (orders of magnitude less than conventional systems); ability to perform real-time sensory processing; inherent parallelism for certain AI workloads. Weaknesses: Limited software ecosystem compared to traditional computing platforms; challenges in programming paradigms that differ from conventional computing; still requires specialized knowledge to fully leverage the architecture's capabilities.
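Spike-timing-dependent plasticity, mentioned in IBM's entry above, is commonly written as a pair-based exponential rule: a presynaptic spike shortly before a postsynaptic spike strengthens the synapse, and the reverse order weakens it. The Python sketch below implements that textbook form; the amplitudes, time constants, function names, and the [0, 1] weight window (standing in for a device's conductance range) are illustrative assumptions, not IBM device parameters.

```python
import math


def stdp_delta(dt_ms, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Pair-based exponential STDP with dt_ms = t_post - t_pre in milliseconds.
    Pre-before-post (dt > 0) potentiates; post-before-pre (dt < 0) depresses."""
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


def apply_stdp(weight, pre_spikes, post_spikes, w_min=0.0, w_max=1.0):
    """Sum the updates over all pre/post spike pairs, then clip the weight to
    the device's conductance window [w_min, w_max]."""
    total = sum(stdp_delta(t_post - t_pre)
                for t_pre in pre_spikes for t_post in post_spikes)
    return min(w_max, max(w_min, weight + total))


if __name__ == "__main__":
    for dt in (-40, -10, -1, 1, 10, 40):
        print(f"t_post - t_pre = {dt:+d} ms -> dw = {stdp_delta(dt):+.5f}")
    w = apply_stdp(0.5, pre_spikes=[0.0, 50.0], post_spikes=[5.0, 55.0])
    print(f"weight after mostly causal pairings: {w:.4f}")
```

Setting a_minus slightly larger than a_plus is a common choice that keeps uncorrelated spiking from steadily driving weights upward; in a device implementation, the clipping step reflects the finite range of achievable conductances.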

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced neuromorphic materials focusing on resistive random-access memory (RRAM) and magnetoresistive random-access memory (MRAM) technologies for brain-inspired computing. Their approach integrates these materials into 3D stacked architectures that enable massively parallel processing similar to biological neural networks. Samsung's neuromorphic chips utilize specialized oxide-based memristive materials that can maintain multiple resistance states, allowing for efficient implementation of synaptic weights in artificial neural networks. Their research demonstrates that these materials can achieve up to 4-5 bits of precision per synapse while consuming only picowatts of power per synaptic operation[2]. For predictive modeling applications, Samsung has demonstrated neuromorphic systems capable of online learning and adaptation, with particular success in time-series prediction, anomaly detection in sensor networks, and pattern recognition tasks. Their neuromorphic hardware accelerates certain machine learning tasks by 2-3 orders of magnitude compared to conventional processors while using a fraction of the energy[4].

Strengths: Excellent energy efficiency for edge AI applications; hardware-accelerated learning capabilities; tight integration with Samsung's memory technology ecosystem. Weaknesses: Still in relatively early stages of commercialization; requires specialized programming approaches different from traditional deep learning frameworks; challenges in scaling to very large network sizes while maintaining precision.
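Multi-bit synapses of the kind described for Samsung's devices store each weight as one of a finite set of conductance levels; 4-5 bits corresponds to 16-32 levels. The sketch below shows how quantization error shrinks as levels are added, assuming ideal, evenly spaced levels on a [-1, 1] weight range. Real devices have unevenly spaced, noisy levels, and the function names and test weights are hypothetical.

```python
import math


def quantize_to_levels(weights, bits, w_min=-1.0, w_max=1.0):
    """Snap each weight to the nearest of 2**bits evenly spaced levels spanning
    [w_min, w_max], a stand-in for programming an ideal multi-level cell."""
    n_intervals = 2 ** bits - 1
    step = (w_max - w_min) / n_intervals
    quantized = []
    for w in weights:
        w = min(w_max, max(w_min, w))  # values outside the window saturate
        quantized.append(w_min + round((w - w_min) / step) * step)
    return quantized


def rms_error(reference, approx):
    """Root-mean-square difference between two equal-length sequences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(reference, approx)) / len(reference))


if __name__ == "__main__":
    weights = [math.sin(0.37 * i) for i in range(200)]  # deterministic stand-in weights
    for bits in (2, 3, 4, 5, 8):
        q = quantize_to_levels(weights, bits)
        print(f"{bits} bits ({2 ** bits} levels): RMS error {rms_error(weights, q):.4f}")
```

Each extra bit roughly halves the step between levels, and with it the quantization error, which is why keeping precision as arrays grow (one of the weaknesses noted above) matters for accuracy.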

Critical Patents and Breakthroughs in Neuromorphic Computing

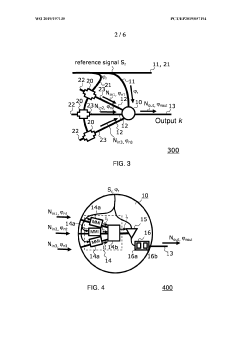

Neuromorphic architecture with multiple coupled neurons using internal state neuron information

Patent · Active · US20170372194A1

Innovation

- A neuromorphic architecture featuring interconnected neurons with internal state information links, allowing for the transmission of internal state information across layers to modify the operation of other neurons, enhancing the system's performance and capability in data processing, pattern recognition, and correlation detection.

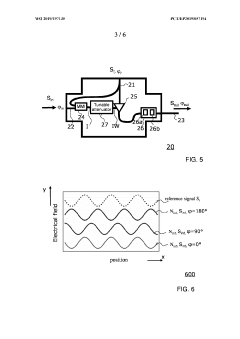

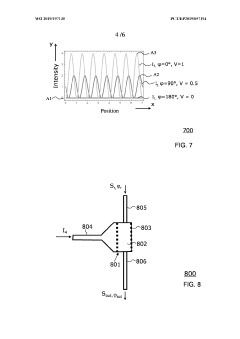

Optical synapse

Patent · WO2019197135A1

Innovation

- An integrated optical circuit that processes phase-encoded optical signals to emulate synapse functionality by applying weights to the phase of the input signal, allowing for signal restoration and efficient implementation in both phase and amplitude domains, using components like optical interferometers, tunable attenuators, and phase-shifting devices with nonlinear materials.

Energy Efficiency Implications of Neuromorphic Computing

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the human brain's neural networks to create more efficient processing systems. The energy efficiency implications of this approach are profound and multifaceted, offering significant advantages over traditional von Neumann architectures.

Traditional computing systems consume substantial power due to their separation of memory and processing units, resulting in the well-known "von Neumann bottleneck." In contrast, neuromorphic systems integrate memory and computation, dramatically reducing energy requirements for data transfer. Current estimates suggest neuromorphic chips can achieve energy efficiencies 100-1000 times greater than conventional processors for certain tasks, particularly those involving pattern recognition and sensory processing.
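One common way to co-locate memory and computation is a resistive crossbar: weights are stored as device conductances, input values are applied as row voltages, and each column wire sums the resulting currents (Ohm's law per device, Kirchhoff's current law per column), so a matrix-vector product happens where the weights are stored. The sketch below is an idealized model that ignores wire resistance, sneak paths, noise, and converter precision. It encodes signed weights as differential pairs of non-negative conductances, one common scheme among several; g_max and the function names are assumptions.

```python
def crossbar_mvm(voltages, conductance):
    """Ideal analog matrix-vector multiply: voltages[i] drives row i and
    conductance[i][j] is the device at row i, column j, so column j collects
    I_j = sum_i V_i * G_ij without the weights ever leaving the array."""
    n_cols = len(conductance[0])
    return [sum(v * row[j] for v, row in zip(voltages, conductance))
            for j in range(n_cols)]


def signed_mvm_via_differential_pairs(x, weights, g_max=100e-6):
    """Compute y_j = sum_i x_i * W_ij using non-negative conductances.

    Each signed weight is split across two devices, G+ and G-, scaled so the
    largest |W| maps to g_max (in siemens). Subtracting the two column
    currents recovers the sign; dividing by the scale converts back to
    weight units.
    """
    w_abs_max = max(abs(w) for row in weights for w in row)
    scale = g_max / w_abs_max
    g_pos = [[scale * max(w, 0.0) for w in row] for row in weights]
    g_neg = [[scale * max(-w, 0.0) for w in row] for row in weights]
    i_pos = crossbar_mvm(x, g_pos)
    i_neg = crossbar_mvm(x, g_neg)
    return [(p - n) / scale for p, n in zip(i_pos, i_neg)]


if __name__ == "__main__":
    x = [0.1, 0.2]  # input voltages, V
    weights = [[1.0, -2.0, 0.5],
               [-0.5, 1.5, 3.0]]
    print(signed_mvm_via_differential_pairs(x, weights))
```

The digital equivalent of this result would require fetching all six weights from memory; in the crossbar the multiply-accumulate is a physical side effect of reading the array, which is where the data-transfer savings come from.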

The materials science underpinning neuromorphic computing contributes significantly to these efficiency gains. Memristive materials, phase-change memory, and spintronic devices enable persistent state changes with minimal energy input, allowing for efficient implementation of synaptic-like functions. These materials can maintain computational states without continuous power consumption, similar to biological neural networks that maintain connectivity patterns with minimal metabolic energy.

For predictive modeling applications, the energy efficiency advantages become particularly pronounced for complex, data-intensive tasks. Traditional machine learning approaches often require massive computational resources and correspondingly high energy consumption. Neuromorphic systems, by contrast, can process sensory data streams and perform inference with orders of magnitude less power, making them well suited to edge computing applications with tight energy constraints.

Scalability adds another efficiency dimension. Because neuromorphic systems are event-driven, their energy consumption tends to track activity (the number of spikes and synaptic events) rather than the total size of the network, so large networks with sparse activity can cost far less than on conventional hardware, which evaluates every connection. This characteristic becomes increasingly valuable as predictive models expand to incorporate more parameters and process larger datasets.
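The activity-driven scaling argument can be illustrated by counting synaptic operations, assuming each operation costs roughly the same energy. The sketch compares a dense evaluation, which touches every synapse every time step, with an event-driven one, which touches only the fan-out of neurons that spike. Network sizes, fan-out, spike rates, and function names are hypothetical, and the count ignores static power and routing overhead.

```python
def dense_synaptic_ops(n_neurons: int, fan_out: int) -> float:
    """Operations per time step when every synapse is evaluated, as in a dense
    matrix-vector product on conventional hardware."""
    return float(n_neurons * fan_out)


def event_driven_synaptic_ops(n_neurons: int, fan_out: int, spike_rate: float) -> float:
    """Expected operations per time step when only spiking neurons (a fraction
    spike_rate of the population) propagate events to their fan-out."""
    return n_neurons * spike_rate * fan_out


if __name__ == "__main__":
    fan_out = 1000
    # Hypothetical scenarios: the network grows 10x while the number of neurons
    # active per step stays fixed (sparser coding), so spike_rate drops 10x.
    for n, rate in ((1_000_000, 0.01), (10_000_000, 0.001)):
        dense = dense_synaptic_ops(n, fan_out)
        events = event_driven_synaptic_ops(n, fan_out, rate)
        print(f"N={n:>10,}  dense={dense:.1e}  event-driven={events:.1e}  "
              f"ratio={dense / events:,.0f}x")
```

In this toy scenario the dense cost grows tenfold with the network while the event-driven cost stays flat; if activity grew in proportion to size instead, both counts would grow linearly and only the constant-factor advantage would remain.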

Looking forward, integrating novel neuromorphic materials with advanced fabrication techniques promises to further enhance energy efficiency. Emerging two-dimensional materials and nanoscale fabrication approaches may enable neuromorphic systems that operate at femtojoule-per-operation levels. That is still roughly five orders of magnitude above the Landauer limit for erasing a bit (kT ln 2, about 3 × 10⁻²¹ J at room temperature), but it would bring hardware closer to the human brain, which performs complex cognitive functions on roughly 20 watts.
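To put femtojoule-per-operation figures in context, the Landauer limit k_B T ln 2 gives the minimum energy needed to erase one bit of information. The sketch below computes it at 300 K; the function and constant names are illustrative, and because the bound strictly applies to logically irreversible bit operations, comparing it with an analog synaptic update is only indicative.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value of k_B since the 2019 redefinition


def landauer_limit_joules(temperature_k: float) -> float:
    """Minimum energy to erase one bit at the given temperature: k_B * T * ln 2."""
    return BOLTZMANN_J_PER_K * temperature_k * math.log(2)


if __name__ == "__main__":
    e_min = landauer_limit_joules(300.0)
    femtojoule = 1e-15
    print(f"Landauer limit at 300 K: {e_min:.3e} J")
    print(f"1 fJ is about {femtojoule / e_min:.1e} times this limit")
```

The ratio of about 3.5 × 10⁵ is why femtojoule operation, while a major improvement over today's hardware, should not be described as approaching the thermodynamic floor.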

Interdisciplinary Applications Across Scientific Domains

Neuromorphic materials are opening interdisciplinary scientific applications that cross traditional domain boundaries. In healthcare, these materials enable advanced neural interfaces that enhance brain-computer interaction, allowing more precise monitoring of neurological conditions and improved prosthetic control systems. Predictive models running on neuromorphic architectures are being explored to improve diagnostic accuracy in medical imaging, particularly for early detection of degenerative diseases, where pattern recognition is crucial.

In environmental science, neuromorphic systems could support climate modeling by processing large datasets with high energy efficiency. They may also reduce the computational overhead of complex ecological simulations, helping researchers predict environmental changes more accurately and develop more effective conservation strategies. The adaptive learning capabilities inherent in neuromorphic materials allow predictive models to be refined continuously as new environmental data becomes available.
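The continuous refinement described in this paragraph can be illustrated with an online delta (least-mean-squares) rule. This is a generic stand-in, not a method the source attributes to any neuromorphic system; it is chosen because each update is local (a weight changes using only its own input and a shared error signal), the kind of rule often discussed for on-chip learning hardware. The function name `online_delta_rule`, the learning rate, and the synthetic data stream are hypothetical.

```python
from typing import Iterable, List, Sequence, Tuple


def online_delta_rule(
    stream: Iterable[Tuple[Sequence[float], float]],
    n_features: int,
    lr: float = 0.05,
) -> List[float]:
    """Refine a linear predictor one sample at a time (LMS / delta rule).

    Returns the weights; the last entry is the bias.
    """
    w = [0.0] * (n_features + 1)
    for x, target in stream:
        xb = list(x) + [1.0]  # constant input that drives the bias weight
        pred = sum(wi * xi for wi, xi in zip(w, xb))
        err = target - pred
        # Each weight changes using only its own input and the shared error signal.
        w = [wi + lr * err * xi for wi, xi in zip(w, xb)]
    return w


# Synthetic stream following y = 2x + 1, arriving one reading at a time.
stream = (([x / 10], 2 * (x / 10) + 1) for _ in range(500) for x in range(10))
weights = online_delta_rule(stream, n_features=1)
# weights converges toward [2.0, 1.0]
```

The model never stores the full dataset; each new reading nudges the weights, which is what lets predictions track data that keeps arriving.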

Astronomy is exploring neuromorphic computing for processing the enormous data streams from radio telescopes and space observatories. Such systems could support real-time analysis of celestial phenomena and help detect subtle patterns in cosmic background radiation. Predictive models built on neuromorphic architectures may also improve space weather forecasting, which is critical for satellite operations and for protecting communications infrastructure.

In materials science, neuromorphic systems could accelerate the discovery of novel compounds by predicting molecular behaviors and interactions, reducing the need for exhaustive laboratory testing. This approach could shorten research timelines for developing advanced materials with specific properties, such as superconductors and photovoltaic materials. The self-optimizing nature of neuromorphic algorithms may prove particularly valuable in navigating complex chemical spaces where traditional computational methods struggle.

Agriculture has emerged as an unexpected beneficiary of neuromorphic technology. Precision farming systems can use neuromorphic hardware to analyze soil conditions, crop health, and weather patterns simultaneously. The resulting predictive models can help optimize resource allocation, reducing water usage and chemical inputs while maximizing yield. This integration could significantly advance sustainable agricultural practices, particularly in regions facing climate uncertainty.

Quantum physics research is exploring neuromorphic materials for modeling quantum systems that are difficult to simulate with conventional computational approaches. Proponents argue that the massive parallelism of neuromorphic architectures suits certain quantum simulations, which could accelerate quantum computing research and deepen our understanding of fundamental physical processes.
