Neuromorphic materials enabling next-gen computational paradigms

SEP 19, 2025 | 9 MIN READ

Neuromorphic Materials Background and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural systems. This field has evolved significantly since the 1980s when Carver Mead first introduced the concept, progressing from theoretical frameworks to practical implementations that leverage specialized materials with brain-like properties. The fundamental goal is to overcome the limitations of traditional von Neumann architectures, which separate memory and processing units, creating bottlenecks in data-intensive applications.

The development of neuromorphic materials has accelerated dramatically in the past decade, driven by the growing demands of artificial intelligence applications and by the slowdown of conventional semiconductor scaling as Moore's Law approaches physical limits. These materials aim to emulate the brain's remarkable energy efficiency, parallel processing capabilities, and adaptive learning mechanisms at the hardware level.

Current neuromorphic computing objectives focus on creating systems that approach the brain's efficiency: the human brain consumes approximately 20 watts while performing complex cognitive tasks. For certain applications, particularly pattern recognition, sensory processing, and decision-making under uncertainty, this is orders of magnitude more efficient than conventional computing systems.

Materials science plays a crucial role in this technological evolution, with research spanning various categories including phase-change materials, memristive systems, spintronic devices, and organic electronics. Each material class offers unique properties that can be harnessed to implement different aspects of neuronal and synaptic functions, such as spike generation, plasticity, and memory retention.

The technical trajectory aims to develop materials that can simultaneously address multiple requirements: non-volatile memory capabilities, analog computation, scalable fabrication, compatibility with existing semiconductor processes, and long-term reliability. Additionally, these materials must support the implementation of learning algorithms directly in hardware, enabling systems that can adapt to new information without explicit programming.

Beyond the immediate technical goals, neuromorphic materials research seeks to establish a new computing paradigm that could fundamentally transform how we approach complex computational problems. This includes applications in edge computing, autonomous systems, biomedical devices, and environmental monitoring, where energy constraints and real-time processing requirements are particularly stringent.

The convergence of materials science innovations with neuromorphic architectural principles represents a promising frontier for addressing the computational challenges of the coming decades. It could enable artificial intelligence systems that approach biological efficiency while matching or exceeding the performance of conventional computing systems on specialized tasks.

Market Analysis for Brain-Inspired Computing Solutions

The brain-inspired computing market is experiencing unprecedented growth, driven by the limitations of traditional von Neumann architectures in handling complex AI workloads. Current market valuations place neuromorphic computing at approximately $3.1 billion in 2023, with projections indicating a compound annual growth rate of 24.7% through 2030, potentially reaching $19.8 billion.
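The three figures quoted above (a 2023 base, a growth rate, and a 2030 value) can be cross-checked with simple compound-growth arithmetic. The sketch below assumes annual compounding over the seven periods from 2023 to 2030; the base year and the number of periods are assumptions, since the text does not state the forecast window precisely. Under those assumptions, 24.7% growth yields roughly $14.5 billion, and reaching $19.8 billion would require a rate of about 30%, so the underlying source may use a different base year or window.

```python
# Cross-check of the market figures quoted in the text.
# Assumption: growth compounds annually from a 2023 base to 2030 (7 periods).

def project(value, rate, years):
    """Future value after compounding `rate` annually for `years` periods."""
    return value * (1.0 + rate) ** years


def implied_cagr(start, end, years):
    """Annual growth rate that takes `start` to `end` in `years` periods."""
    return (end / start) ** (1.0 / years) - 1.0


base_2023 = 3.1       # USD billions, from the text
target_2030 = 19.8    # USD billions, from the text
quoted_rate = 0.247   # from the text
periods = 2030 - 2023  # assumed forecast window

projected = project(base_2023, quoted_rate, periods)
required = implied_cagr(base_2023, target_2030, periods)

print(f"Projected 2030 value at {quoted_rate:.1%}: ${projected:.1f}B")
print(f"Rate implied by ${base_2023}B -> ${target_2030}B: {required:.1%}")
```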

Demand is primarily concentrated in four key sectors. The healthcare industry represents the largest market segment, utilizing neuromorphic systems for medical imaging analysis, patient monitoring, and drug discovery processes that require complex pattern recognition. Financial services follow closely, implementing these solutions for fraud detection, algorithmic trading, and risk assessment models that benefit from event-driven processing capabilities.

Autonomous systems constitute the fastest-growing segment, with automotive and robotics companies investing heavily in neuromorphic hardware to enable real-time decision-making with significantly lower power consumption than traditional computing approaches. Defense and aerospace applications represent the fourth major market, where neuromorphic computing addresses needs for intelligent surveillance, signal processing, and autonomous navigation in resource-constrained environments.

Geographically, North America currently dominates the market with approximately 42% share, supported by substantial research funding and the presence of key industry players. Asia-Pacific represents the fastest-growing region, with China, Japan, and South Korea making significant investments in neuromorphic research and manufacturing capabilities.

Customer requirements across these markets consistently emphasize three priorities: energy efficiency (with demands for 100-1000x improvement over conventional systems), real-time processing capabilities, and adaptive learning features that enable systems to evolve without extensive retraining.

Market barriers include high development costs, limited standardization across neuromorphic platforms, and integration challenges with existing technology stacks. The specialized programming paradigms required for neuromorphic systems also present adoption hurdles for organizations with established software development practices.

Industry analysts identify the development of application-specific neuromorphic materials as a critical inflection point for market acceleration. Materials that can effectively mimic synaptic plasticity while maintaining manufacturing scalability are positioned to capture significant market share, particularly in edge computing applications where power constraints are most severe.

Current Neuromorphic Materials Landscape and Barriers

The neuromorphic materials landscape is currently dominated by several key material categories, each with distinct properties and limitations. Memristive materials, including metal oxides like TiO2 and HfO2, demonstrate promising resistance switching capabilities essential for synaptic weight implementation. These materials exhibit excellent scalability and compatibility with CMOS fabrication processes, making them attractive for commercial integration. However, they still face challenges in switching uniformity, endurance limitations, and device-to-device variability that impede reliable large-scale deployment.
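To make the idea of resistance switching as a synaptic weight more concrete, the following is a minimal phenomenological sketch, not a model of any specific TiO2 or HfO2 device. It assumes a "soft-bounds" update rule, in which each programming pulse moves the conductance a fixed fraction of the remaining distance to its bound, and it models cycle-to-cycle variability as multiplicative Gaussian noise. The function names, conductance range, and parameter values are all hypothetical.

```python
import random


def apply_pulse(g, potentiate, g_min=1e-6, g_max=1e-4, alpha=0.1,
                noise=0.0, rng=random):
    """Return the conductance (siemens) after one programming pulse.

    Soft-bounds rule (assumption): the step shrinks as g nears its bound,
    giving the nonlinear, saturating update often seen in memristive devices.
    """
    if potentiate:
        step = alpha * (g_max - g)
    else:
        step = -alpha * (g - g_min)
    # Cycle-to-cycle variability as multiplicative Gaussian noise (assumption).
    step *= 1.0 + rng.gauss(0.0, noise)
    # Clamp to the physically allowed conductance window.
    return min(g_max, max(g_min, g + step))


def pulse_train(n_pulses, potentiate=True, g0=1e-6, **kwargs):
    """Conductance trace for n identical pulses starting from g0."""
    trace = [g0]
    for _ in range(n_pulses):
        trace.append(apply_pulse(trace[-1], potentiate, **kwargs))
    return trace


if __name__ == "__main__":
    ideal = pulse_train(20)
    noisy = pulse_train(20, noise=0.3, rng=random.Random(0))
    for i in (0, 1, 5, 20):
        print(f"pulse {i:2d}: ideal {ideal[i]:.2e} S, noisy {noisy[i]:.2e} S")
```

Comparing the ideal and noisy traces gives a rough feel for why switching uniformity matters: the same pulse train leaves nominally identical devices at different weights.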

Phase-change materials (PCMs), such as Ge2Sb2Te5, represent another significant category that leverages crystalline-to-amorphous state transitions to mimic synaptic plasticity. While PCMs offer non-volatility and multi-level storage capabilities, they require substantial energy for phase transitions and suffer from drift phenomena that affect long-term stability of stored values, presenting barriers to energy-efficient implementation.
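The drift phenomenon mentioned above is commonly described with an empirical power law, R(t) = R0 · (t / t0)^ν, where ν is a drift exponent that tends to be larger for more amorphous (higher-resistance) states. The sketch below applies that relation to three programmed levels; the resistance values and exponents are illustrative assumptions, not measurements of Ge2Sb2Te5.

```python
def drifted_resistance(r0_ohm, t_s, t0_s=1.0, nu=0.05):
    """Resistance at time t_s given r0_ohm measured at t0_s (power-law drift)."""
    if t_s < t0_s:
        raise ValueError("t_s must be >= t0_s")
    return r0_ohm * (t_s / t0_s) ** nu


# Hypothetical programmed levels: (resistance at t0 in ohms, drift exponent).
LEVELS = {"low": (1e4, 0.005), "mid": (1e5, 0.03), "high": (1e6, 0.08)}

if __name__ == "__main__":
    for label, seconds in (("1 s", 1.0), ("1 hour", 3600.0), ("1 day", 86400.0)):
        readings = {
            name: drifted_resistance(r0, seconds, nu=nu)
            for name, (r0, nu) in LEVELS.items()
        }
        print(label, {k: f"{v:.3g} ohm" for k, v in readings.items()})
```

Because each level drifts at its own rate, fixed read thresholds chosen at programming time can become inaccurate later, which is one reason drift complicates multi-level weight storage.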

Ferroelectric materials, including hafnium zirconium oxide (HZO) and lead zirconate titanate (PZT), have emerged as promising candidates due to their polarization-dependent conductivity. These materials enable low-power operation but face challenges in scaling down while maintaining ferroelectric properties and managing fatigue effects during repeated switching operations.

Magnetic materials, particularly those exhibiting spintronic effects, offer unique advantages in non-volatility and energy efficiency. However, their integration with conventional electronics remains complex, and achieving reliable spin-transfer torque with low current densities continues to be a significant technical hurdle.

Two-dimensional materials like graphene and transition metal dichalcogenides (TMDs) represent the cutting edge of neuromorphic materials research. Their atomically thin structures provide exceptional tunability and novel quantum effects that could revolutionize neuromorphic computing. Nevertheless, manufacturing challenges, including precise control of defects and large-scale production consistency, currently limit their practical application.

A critical barrier across all material platforms is the gap between material properties and the biological neural functions they aim to emulate. Most current materials can only approximate limited aspects of biological synapses and neurons, lacking the complete functionality and adaptability of biological systems. Additionally, the interface between these novel materials and conventional computing architectures presents significant integration challenges.

From a geographical perspective, research leadership in neuromorphic materials is distributed across North America, Europe, and East Asia, with notable concentrations in the United States, Germany, China, Japan, and South Korea. This distribution reflects both academic research strengths and strategic industrial investments in next-generation computing technologies.

State-of-the-Art Neuromorphic Material Implementations

01 Memristive materials for neuromorphic computing

Memristive materials are being developed for neuromorphic computing applications due to their ability to mimic synaptic behavior. These materials can change their resistance based on the history of applied voltage or current, similar to how biological synapses change their strength. This property makes them ideal for implementing artificial neural networks in hardware, enabling more efficient and brain-like computation compared to traditional von Neumann architectures.

- Memristive materials for neuromorphic computing: Memristive materials are used to create artificial synapses and neurons for neuromorphic computing systems. These materials can change their resistance based on the history of applied voltage or current, mimicking the behavior of biological synapses. This property allows for the implementation of learning algorithms and memory functions in hardware, enabling more efficient neural network computations compared to traditional von Neumann architectures.

- Spiking neural network hardware implementations: Specialized hardware architectures designed to implement spiking neural networks (SNNs) that more closely mimic biological neural systems. These implementations use event-driven processing where neurons communicate through discrete spikes rather than continuous values, resulting in energy-efficient computation. The hardware designs incorporate specialized circuits for spike generation, propagation, and synaptic plasticity mechanisms that enable online learning capabilities.

- Phase-change materials for cognitive computing: Phase-change materials that can rapidly switch between amorphous and crystalline states are utilized to create non-volatile memory elements for neuromorphic systems. These materials exhibit multiple resistance states that can be precisely controlled, allowing them to store synaptic weights in neural networks. The ability to maintain these states without power consumption makes them particularly suitable for energy-efficient cognitive computing applications that require persistent memory.

- Quantum neuromorphic computing paradigms: Integration of quantum computing principles with neuromorphic architectures to create hybrid systems that leverage quantum effects for enhanced computational capabilities. These paradigms utilize quantum materials and phenomena such as superposition and entanglement to implement neural network operations with potentially exponential speedups for certain problems. Quantum neuromorphic approaches offer new ways to represent and process information beyond classical binary logic.

- Bio-inspired computational materials and structures: Materials and structural designs directly inspired by biological neural systems, including dendrite-like branching structures and self-organizing materials that mimic neural development. These approaches incorporate principles from neuroscience to create computing substrates with inherent parallelism and adaptability. Bio-inspired computational materials often feature hierarchical organization and emergent properties that enable complex information processing with minimal energy consumption.
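The synaptic plasticity mechanisms mentioned in the list above are often illustrated with pair-based spike-timing-dependent plasticity (STDP), in which the sign and size of a weight change depend on the time difference between a presynaptic and a postsynaptic spike. The following is a minimal sketch of that textbook rule; the amplitudes and time constants are hypothetical and are not taken from any device or system described in this report.

```python
import math


def stdp_delta_w(dt_ms, a_plus=0.01, a_minus=0.012,
                 tau_plus=20.0, tau_minus=20.0):
    """Weight change for one spike pair, with dt_ms = t_post - t_pre.

    Pre-before-post (dt_ms > 0) potentiates; post-before-pre depresses.
    The magnitude decays exponentially with the timing difference.
    """
    if dt_ms > 0:
        return a_plus * math.exp(-dt_ms / tau_plus)
    if dt_ms < 0:
        return -a_minus * math.exp(dt_ms / tau_minus)
    return 0.0


def apply_stdp(weight, spike_pairs, w_min=0.0, w_max=1.0):
    """Accumulate updates over (t_pre, t_post) pairs, clipped to [w_min, w_max]."""
    for t_pre, t_post in spike_pairs:
        weight += stdp_delta_w(t_post - t_pre)
        weight = min(w_max, max(w_min, weight))
    return weight


if __name__ == "__main__":
    for dt in (-40, -10, -1, 1, 10, 40):
        print(f"dt = {dt:+4d} ms -> delta_w = {stdp_delta_w(dt):+.5f}")
```

In hardware, the same dependence is typically obtained by shaping overlapping pre- and postsynaptic voltage pulses across a device rather than by evaluating an exponential explicitly.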

02 Spiking neural network implementations

Spiking neural networks (SNNs) represent a computational paradigm that more closely mimics the behavior of biological neurons by processing information through discrete spikes rather than continuous values. These networks can be implemented using specialized neuromorphic materials and architectures that support temporal information processing. SNNs offer advantages in terms of energy efficiency and real-time processing for applications such as pattern recognition and sensory processing.

03 Phase-change materials for cognitive computing

Phase-change materials (PCMs) are being utilized in neuromorphic computing systems due to their ability to rapidly switch between amorphous and crystalline states. This property allows PCMs to serve as artificial synapses with multiple resistance states, enabling multi-level memory capabilities essential for neural network weight storage. These materials support computational paradigms that can perform both memory and processing functions in the same physical location, reducing the energy consumption associated with data movement.

04 Neuromorphic hardware architectures

Novel hardware architectures are being developed specifically for neuromorphic computing, moving away from traditional von Neumann designs. These architectures integrate specialized neuromorphic materials with circuit designs that support parallel processing and local memory-compute integration. Such systems enable efficient implementation of neural networks by reducing power consumption and increasing processing speed for AI applications, while better accommodating the unique properties of neuromorphic materials.

05 Quantum-inspired neuromorphic computing

Quantum-inspired approaches to neuromorphic computing utilize materials and systems that leverage quantum mechanical effects to enhance computational capabilities. These systems combine principles from quantum computing with neuromorphic architectures to create novel computational paradigms. By exploiting quantum properties such as superposition and entanglement in specialized materials, these approaches aim to solve complex problems more efficiently than classical neuromorphic systems while maintaining brain-inspired processing characteristics.
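The spike-based processing described under item 02 above can be illustrated with a leaky integrate-and-fire (LIF) neuron, one of the simplest spiking neuron models. The sketch below is a plain software simulation using forward-Euler integration; it is not tied to any material or chip in this report, and the time constant, threshold, and input values are hypothetical.

```python
def simulate_lif(input_current, dt=1e-3, tau=20e-3, r=1.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Forward-Euler simulation of a leaky integrate-and-fire neuron.

    input_current: one input value per time step (arbitrary units).
    Returns (membrane voltage trace, list of step indices where spikes occur).
    """
    v = v_rest
    trace, spikes = [], []
    for step, i_in in enumerate(input_current):
        # Membrane dynamics: tau * dv/dt = -(v - v_rest) + r * i_in
        v += dt * (-(v - v_rest) + r * i_in) / tau
        if v >= v_thresh:
            # Emit a discrete event and reset; nothing is output otherwise.
            spikes.append(step)
            v = v_reset
        trace.append(v)
    return trace, spikes


if __name__ == "__main__":
    for level in (0.5, 1.5, 2.0, 4.0):
        _, spikes = simulate_lif([level] * 200)
        print(f"input {level}: {len(spikes)} spikes in 200 steps")
```

Inputs below threshold produce no output events at all, while stronger inputs produce more frequent spikes, which is the basis of the event-driven efficiency argument.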

Leading Organizations in Neuromorphic Computing Research

The neuromorphic materials market is in its early growth phase, characterized by significant research activity but limited commercial deployment. Current market size is modest but projected to expand rapidly as brain-inspired computing gains traction in AI applications. The technological landscape shows varying maturity levels, with established players like IBM, Samsung Electronics, and Western Digital developing advanced neuromorphic architectures, while specialized entities such as Syntiant and Beijing Lingxi Technology focus on edge computing implementations. Academic institutions including MIT, Tsinghua University, and KAIST are driving fundamental research breakthroughs. The competitive environment features collaboration between industry and academia, with major semiconductor companies investing heavily in neuromorphic R&D to address growing computational demands while reducing energy consumption in next-generation AI systems.

International Business Machines Corp.

Technical Solution: IBM has pioneered neuromorphic computing through its TrueNorth and subsequent neuromorphic chip architectures. Their approach focuses on developing brain-inspired hardware that mimics neural structures using phase-change memory (PCM) materials as artificial synapses. IBM's neuromorphic systems implement spiking neural networks (SNNs) that process information in an event-driven manner, similar to biological neurons. Their materials innovation includes the use of non-volatile memory technologies that can simultaneously store and process information, eliminating the traditional von Neumann bottleneck. IBM has demonstrated neuromorphic chips containing millions of "neurons" and billions of "synapses" that consume significantly less power than conventional processors while performing cognitive tasks[1]. Their recent advancements include the development of analog memory devices using chalcogenide-based materials that can maintain multiple resistance states, enabling efficient implementation of neural network weights in hardware[3].

Strengths: Industry-leading expertise in neuromorphic architecture design; extensive patent portfolio in phase-change memory materials; proven energy efficiency (1000x more efficient than traditional architectures for certain tasks).

Weaknesses: Specialized programming requirements limit widespread adoption; challenges in scaling manufacturing of novel materials; still requires complementary traditional computing for certain operations.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed advanced neuromorphic computing solutions based on resistive random-access memory (RRAM) and magnetoresistive random-access memory (MRAM) technologies. Their approach integrates these memory technologies directly into computational units, creating highly efficient in-memory computing architectures. Samsung's neuromorphic materials research focuses on metal-oxide-based memristors that can emulate synaptic plasticity, enabling spike-timing-dependent plasticity (STDP) learning mechanisms similar to biological neural systems. Their neuromorphic chips utilize crossbar arrays of these memristive devices to perform parallel matrix operations essential for neural network computations with minimal energy consumption[2]. Samsung has also pioneered the development of 3D stacking techniques for neuromorphic hardware, allowing for higher integration density and more complex neural architectures. Recent demonstrations have shown their neuromorphic systems achieving up to 100x improvement in energy efficiency compared to conventional digital implementations for AI workloads[4].

Strengths: Vertical integration capabilities from materials research to device manufacturing; strong expertise in memory technologies; established production facilities that can be adapted for neuromorphic materials.

Weaknesses: Less specialized in neuromorphic algorithms than pure AI companies; must balance neuromorphic development against mainstream semiconductor business priorities; a more recent entrant to neuromorphic computing than IBM.
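The crossbar-based matrix operations mentioned in the technical solution above rest on a simple physical principle: applying voltages to the rows of a crossbar produces column currents equal to the sum of voltage times conductance (Ohm's law plus Kirchhoff's current law). The following sketch is an idealized illustration of that principle, not Samsung's implementation; it ignores wire resistance, sneak paths, and device nonlinearity. The differential-pair mapping for signed weights and all parameter values are assumptions.

```python
def crossbar_mvm(conductances, voltages):
    """Column currents of an ideal crossbar: I_j = sum_i V_i * G[i][j].

    conductances: rows are input lines, columns are output lines (siemens).
    voltages: one applied voltage per row (volts).
    """
    n_rows, n_cols = len(conductances), len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("need one voltage per crossbar row")
    return [
        sum(voltages[i] * conductances[i][j] for i in range(n_rows))
        for j in range(n_cols)
    ]


def to_differential(weights, g_min=1e-6, g_max=1e-4):
    """Map signed weights in [-1, 1] onto two non-negative conductance arrays."""
    span = g_max - g_min
    g_plus = [[g_min + span * max(w, 0.0) for w in row] for row in weights]
    g_minus = [[g_min + span * max(-w, 0.0) for w in row] for row in weights]
    return g_plus, g_minus


def signed_mvm(weights, voltages, g_min=1e-6, g_max=1e-4):
    """Compute voltages @ weights by subtracting the currents of two crossbars."""
    g_plus, g_minus = to_differential(weights, g_min, g_max)
    i_plus = crossbar_mvm(g_plus, voltages)
    i_minus = crossbar_mvm(g_minus, voltages)
    span = g_max - g_min
    # Rescale the current difference back into weight units.
    return [(p - m) / span for p, m in zip(i_plus, i_minus)]


if __name__ == "__main__":
    weights = [[0.5, -1.0], [0.25, 0.0]]  # hypothetical 2x2 weight matrix
    print(signed_mvm(weights, [0.2, 0.4]))
```

All multiply-accumulate operations for one input vector happen in a single read step, which is why the array is said to compute in parallel and in memory.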

Breakthrough Patents in Brain-Inspired Computing Materials

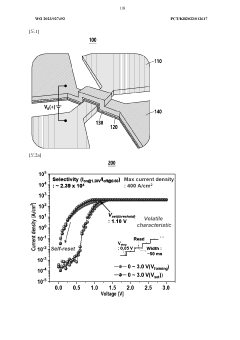

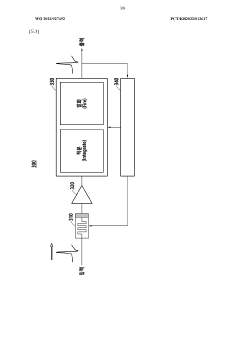

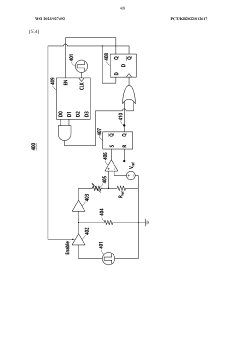

Neuromorphic device based on memristor device, and neuromorphic system using same

Patent WO2023027492A1

Innovation

- A neuromorphic device using a memristor with a switching layer of amorphous germanium sulfide and a source layer of copper telluride, allowing for both artificial neuron and synapse characteristics to be implemented, with a crossbar-type structure that adjusts current density for volatility or non-volatility, enabling efficient memory operations and paired pulse facilitation.

Energy Efficiency Considerations for Neuromorphic Systems

Energy efficiency represents a critical consideration in the development and deployment of neuromorphic systems. Traditional von Neumann computing architectures face fundamental limitations in power efficiency due to the physical separation between memory and processing units, creating the well-known "memory wall" bottleneck. Neuromorphic systems, inspired by biological neural networks, offer promising alternatives by integrating memory and computation within the same physical structures, potentially reducing energy consumption by several orders of magnitude.

Current neuromorphic materials demonstrate remarkable energy efficiency metrics. For instance, memristive devices can operate at femtojoule-per-operation levels, compared to picojoule-per-operation in conventional CMOS technologies. This efficiency stems from their ability to maintain state without continuous power supply and perform computations with minimal energy transfer. Phase-change materials and spintronic devices similarly exhibit ultra-low power consumption characteristics essential for large-scale neuromorphic implementations.
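The femtojoule-versus-picojoule comparison above can be turned into a quick back-of-the-envelope calculation. The sketch below takes the two orders of magnitude at face value (exactly 1 fJ and 1 pJ per operation, which is an assumption) and relates them to the roughly 20 W power figure quoted earlier for the brain. The workload size is hypothetical, and the result covers device-level switching energy only, not peripheral circuits or interconnect.

```python
FEMTOJOULE = 1e-15
PICOJOULE = 1e-12


def energy_joules(n_ops, joules_per_op):
    """Total energy for n_ops operations at a fixed energy per operation."""
    return n_ops * joules_per_op


def sustained_ops_per_second(power_watts, joules_per_op):
    """Operations per second that a given power budget can sustain."""
    return power_watts / joules_per_op


n_ops = 1e12  # hypothetical workload size
e_memristive = energy_joules(n_ops, FEMTOJOULE)
e_cmos = energy_joules(n_ops, PICOJOULE)

print(f"memristive: {e_memristive:.1e} J, CMOS: {e_cmos:.1e} J, "
      f"ratio: {e_cmos / e_memristive:.0f}x")
print(f"ops/s within 20 W at 1 fJ/op: {sustained_ops_per_second(20.0, FEMTOJOULE):.1e}")
print(f"ops/s within 20 W at 1 pJ/op: {sustained_ops_per_second(20.0, PICOJOULE):.1e}")
```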

The energy landscape of neuromorphic systems must be evaluated across multiple operational dimensions. Static power consumption during idle states represents a significant factor in overall efficiency, particularly for edge computing applications where devices may remain dormant for extended periods. Dynamic power requirements during learning and inference operations vary substantially based on the specific neuromorphic material properties and circuit design approaches.
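The interplay between idle and active power described above can be sketched with a duty-cycle average: average power is the time-weighted mix of active and idle power. The numbers below are hypothetical and serve only to show how idle power can dominate when a device is mostly dormant.

```python
def average_power(p_active_w, p_idle_w, duty_cycle):
    """Time-averaged power for a device active a fraction `duty_cycle` of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be in [0, 1]")
    return duty_cycle * p_active_w + (1.0 - duty_cycle) * p_idle_w


def idle_share(p_active_w, p_idle_w, duty_cycle):
    """Fraction of the average power that is spent while idle."""
    total = average_power(p_active_w, p_idle_w, duty_cycle)
    return (1.0 - duty_cycle) * p_idle_w / total


if __name__ == "__main__":
    p_active = 10e-3  # hypothetical: 10 mW while processing
    duty = 0.01       # hypothetical: active 1% of the time
    for p_idle in (10e-6, 100e-6, 1e-3):  # hypothetical idle power levels
        avg = average_power(p_active, p_idle, duty)
        share = idle_share(p_active, p_idle, duty)
        print(f"idle {p_idle:.0e} W -> average {avg:.2e} W, idle share {share:.0%}")
```

At a 1% duty cycle, even an idle power 10 times lower than the active power accounts for most of the energy budget, which is why non-volatile state retention is attractive for edge devices.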

Thermal management presents another crucial aspect of energy efficiency considerations. The concentration of computational elements in neuromorphic architectures can create localized heating issues that impact both performance and reliability. Advanced materials with superior thermal conductivity properties and innovative cooling strategies are being explored to address these challenges while maintaining the compact form factors that make neuromorphic systems attractive.

Scaling neuromorphic systems from laboratory demonstrations to practical applications introduces additional energy efficiency challenges. As system size increases, interconnect energy consumption often becomes dominant, necessitating novel approaches to signal transmission and network topology optimization. Three-dimensional integration techniques offer promising solutions by minimizing connection distances while maximizing computational density.

Energy harvesting capabilities represent an emerging frontier for neuromorphic systems, particularly for autonomous edge devices. Materials that can simultaneously perform computation and harvest ambient energy from thermal, vibrational, or electromagnetic sources could enable self-powered neuromorphic systems for applications in remote sensing, environmental monitoring, and distributed intelligence scenarios.

Interdisciplinary Applications of Neuromorphic Computing

Neuromorphic computing systems are rapidly expanding beyond traditional computing domains, creating transformative opportunities across multiple disciplines. In healthcare, these brain-inspired systems are revolutionizing medical imaging analysis, enabling real-time processing of complex diagnostic data with significantly reduced power consumption compared to conventional computing architectures. The pattern recognition capabilities inherent in neuromorphic systems make them particularly valuable for detecting subtle anomalies in medical scans that might otherwise be missed.

The integration of neuromorphic computing with autonomous vehicles represents another promising frontier. These systems can process sensory data from multiple sources simultaneously, mimicking the human brain's ability to make split-second decisions based on visual, auditory, and tactile inputs. This parallel processing capability allows for more efficient obstacle detection and navigation in complex environments, potentially improving safety while reducing computational overhead.

Environmental monitoring networks are being transformed through neuromorphic implementations that can operate continuously on minimal power. These systems can detect environmental changes, from air quality fluctuations to early warning signs of natural disasters, processing sensor data locally rather than requiring constant cloud connectivity. This edge computing approach significantly reduces data transmission requirements and enables deployment in remote locations.

In robotics, neuromorphic materials and computing paradigms are enabling more adaptive and responsive systems. Unlike traditional robots programmed with explicit rules, neuromorphic robots can learn from their environments, adapting their behaviors based on experience. This capability is particularly valuable in unstructured environments where predefined programming is insufficient, such as disaster response scenarios or assistive care settings.

Financial technology represents another emerging application area, where neuromorphic systems excel at detecting patterns in market data and identifying anomalous transactions that might indicate fraud. The ability to process streaming data in real time while consuming minimal power makes these systems well suited to continuous monitoring applications in the financial sector.

Educational technologies are also benefiting from neuromorphic approaches, with adaptive learning systems that can recognize individual learning patterns and adjust teaching strategies accordingly. These systems can process multiple inputs—from eye tracking to voice recognition—to gauge student engagement and comprehension, creating more personalized learning experiences.

The interdisciplinary nature of these applications highlights the versatility of neuromorphic materials and computing paradigms, suggesting that their impact will continue to expand as the technology matures and becomes more accessible to researchers and developers across diverse fields.
