Energy Efficiency in Neuromorphic Computing Systems

MAR 11, 2026 · 9 MIN READ

Neuromorphic Computing Energy Efficiency Background and Objectives

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the human brain's neural networks to create energy-efficient processing systems. This field emerged from the recognition that traditional von Neumann architectures face fundamental limitations in power consumption and parallel processing capabilities, particularly when handling cognitive tasks such as pattern recognition, sensory processing, and adaptive learning.

The historical development of neuromorphic computing traces back to Carver Mead's pioneering work in the 1980s, when he proposed using analog circuits to mimic neural behavior. Since then, the field has evolved through several key phases, beginning with basic analog neural circuits, progressing to mixed-signal implementations, and advancing to current large-scale neuromorphic processors. Each stage has been driven by the pursuit of brain-like efficiency: the human brain delivers remarkable computational capabilities while consuming only about 20 watts of power.

Current technological trends indicate a convergence toward spike-based processing architectures that emulate the brain's event-driven communication mechanisms. Unlike conventional digital systems, which evaluate data on every clock cycle regardless of activity, neuromorphic systems operate on sparse, asynchronous spike trains and perform work only when a spike arrives, which reduces unnecessary computation and power consumption. This approach has gained significant momentum with the development of specialized neuromorphic chips such as Intel's Loihi and IBM's TrueNorth, alongside a range of memristive devices.
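
The saving from event-driven operation can be illustrated by counting synaptic operations. The sketch below is a simplified model, not a description of any particular chip: the function names, layer sizes, and firing probability are all assumptions chosen for illustration. It also treats operation count as a stand-in for energy, which ignores spike routing and other overheads.

```python
import random


def dense_ops(num_inputs, num_outputs, num_steps):
    """Synaptic operations when every connection is evaluated at every time step."""
    return num_inputs * num_outputs * num_steps


def event_driven_ops(spike_trains, num_outputs):
    """Synaptic operations when work happens only for inputs that spiked.

    spike_trains holds one list per time step with the indices of the
    input neurons that fired during that step.
    """
    return sum(len(active_inputs) * num_outputs for active_inputs in spike_trains)


def random_spike_trains(num_inputs, num_steps, firing_probability, seed=0):
    """Generate illustrative spike trains with independent random firing."""
    rng = random.Random(seed)
    return [
        [i for i in range(num_inputs) if rng.random() < firing_probability]
        for _ in range(num_steps)
    ]


if __name__ == "__main__":
    # Hypothetical layer: 256 inputs, 128 outputs, 2% of inputs firing per step.
    trains = random_spike_trains(num_inputs=256, num_steps=100, firing_probability=0.02)
    dense = dense_ops(256, 128, 100)
    sparse = event_driven_ops(trains, 128)
    print(f"dense: {dense} ops, event-driven: {sparse} ops")
```

In this model the event-driven count scales with the fraction of inputs that fire, so the benefit shrinks as activity becomes denser.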

The primary technical objectives driving neuromorphic computing development center on achieving ultra-low power consumption while maintaining high computational performance for cognitive tasks. Specific targets include reducing energy consumption by several orders of magnitude compared to conventional processors, enabling real-time learning and adaptation capabilities, and supporting massively parallel processing architectures that can scale efficiently.

Energy efficiency remains the cornerstone objective, with researchers aiming to approach the brain's estimated energy efficiency, on the order of 10^-15 joules (about one femtojoule) per synaptic operation. This goal necessitates fundamental innovations in device physics, circuit design, and system architecture. Additionally, the field seeks to enable autonomous learning systems that can adapt and evolve without external programming, supporting applications in robotics, autonomous vehicles, and intelligent sensor networks where power constraints are critical.
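
As a rough consistency check, the two figures quoted in this section (about 20 watts and about 10^-15 joules per synaptic operation) can be combined into an implied operation rate. This is back-of-the-envelope arithmetic under the simplifying assumption that all of the power goes to synaptic operations, so the result is an upper bound. The 1 pJ device used for comparison is a hypothetical value, not a measurement.

```python
BRAIN_POWER_W = 20.0               # figure quoted in the text
ENERGY_PER_SYNAPTIC_OP_J = 1e-15   # figure quoted in the text


def implied_ops_per_second(power_w, energy_per_op_j):
    """Upper bound on operations per second if all power went to these operations."""
    return power_w / energy_per_op_j


def energy_gap(device_energy_per_op_j, target_energy_per_op_j=ENERGY_PER_SYNAPTIC_OP_J):
    """How many times more energy per operation a device uses than the target."""
    return device_energy_per_op_j / target_energy_per_op_j


if __name__ == "__main__":
    rate = implied_ops_per_second(BRAIN_POWER_W, ENERGY_PER_SYNAPTIC_OP_J)
    print(f"implied upper bound: {rate:.1e} synaptic operations per second")
    # Hypothetical device spending 1 pJ per synaptic operation.
    print(f"gap for a 1 pJ/op device: {energy_gap(1e-12):.0f}x")
```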

Market Demand for Low-Power Neuromorphic Solutions

The global semiconductor industry is experiencing unprecedented demand for energy-efficient computing solutions, driven by the exponential growth of edge computing applications and the increasing deployment of Internet of Things devices. Traditional von Neumann architectures face fundamental limitations in power efficiency, particularly when processing sensory data and running artificial intelligence workloads at the edge. This creates a substantial market opportunity for neuromorphic computing systems that can deliver orders of magnitude improvements in energy efficiency.

Mobile and wearable device manufacturers represent a primary market segment driving demand for low-power neuromorphic solutions. These devices require continuous sensory processing capabilities while maintaining extended battery life, making energy efficiency a critical design constraint. Neuromorphic processors can enable always-on functionality for voice recognition, gesture detection, and environmental sensing without significantly impacting battery performance.

The autonomous vehicle industry presents another significant market opportunity, where neuromorphic systems can process real-time sensor data from cameras, lidar, and radar systems with minimal power consumption. The ability to perform local processing reduces latency and bandwidth requirements while maintaining the energy budgets necessary for extended vehicle operation.

Industrial automation and smart manufacturing sectors are increasingly adopting edge AI solutions that require robust, low-power processing capabilities. Neuromorphic systems can enable predictive maintenance, quality control, and process optimization applications while operating in challenging industrial environments with limited power infrastructure.

Healthcare and biomedical applications represent an emerging market segment where ultra-low power consumption is essential. Implantable medical devices, continuous health monitoring systems, and portable diagnostic equipment can benefit from neuromorphic processors that extend operational lifetime while maintaining sophisticated signal processing capabilities.

The data center industry is also exploring neuromorphic solutions to address growing energy consumption concerns. While most neuromorphic applications target ultra-low-power scenarios, the architectures also show promise for specific AI inference workloads where energy efficiency translates directly into operational cost savings and progress toward environmental sustainability goals.

Market adoption faces challenges including limited software ecosystems, integration complexity, and the need for specialized development expertise. However, increasing awareness of energy efficiency requirements and growing investment in neuromorphic research indicate strong long-term market potential across multiple application domains.

Current Energy Challenges in Neuromorphic Computing Systems

Neuromorphic computing systems face significant energy consumption challenges that limit their practical deployment and scalability. Despite their bio-inspired architecture designed to mimic the energy-efficient neural networks of the human brain, current implementations struggle to achieve the theoretical energy advantages promised by this paradigm.

The primary energy challenge stems from the analog-digital interface bottleneck. Most neuromorphic systems require frequent conversions between analog spike signals and digital processing units, leading to substantial energy overhead. These conversion processes can consume up to 60% of the total system energy, negating many of the efficiency gains from spike-based computation.

Memory access patterns present another critical energy constraint. Unlike biological neural networks where computation and memory are co-located, current neuromorphic architectures often rely on separate memory hierarchies. The constant data movement between processing elements and memory units creates significant energy penalties, particularly when implementing large-scale neural networks that exceed on-chip memory capacity.

Leakage current issues plague many neuromorphic implementations, especially those using analog circuits to emulate synaptic behavior. Subthreshold leakage in transistors operating in weak inversion regions can account for 40-70% of total power consumption in idle states. This challenge becomes more pronounced as device scaling continues and process variations increase.

Clock distribution and synchronization overhead represents an additional energy burden. While neuromorphic systems aim to operate asynchronously, many implementations still require global timing signals for coordination between different processing units. The energy cost of distributing these timing signals across large chip areas can be substantial, particularly in multi-core neuromorphic processors.

Peripheral circuit energy consumption often exceeds the core neuromorphic processing energy. Support circuits including bias generators, reference voltage sources, and communication interfaces can consume 2-3 times more power than the actual neural computation elements. This overhead becomes particularly problematic in battery-powered edge computing applications where every milliwatt matters.
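
One consequence of this overhead is a ceiling on what core-only optimization can achieve. The sketch below works through the arithmetic for the 2-3x ratio quoted above. It assumes peripheral power stays fixed while the core improves, which is a simplification, and the function names are illustrative.

```python
def core_fraction(peripheral_to_core_ratio):
    """Share of total power used by the neural core when peripherals use ratio x the core."""
    return 1.0 / (1.0 + peripheral_to_core_ratio)


def relative_total_power(peripheral_to_core_ratio, core_improvement):
    """Total power relative to baseline (1.0) when only the core becomes
    core_improvement times more efficient and peripheral power is unchanged."""
    core = core_fraction(peripheral_to_core_ratio)
    peripheral = 1.0 - core
    return peripheral + core / core_improvement


if __name__ == "__main__":
    for ratio in (2.0, 3.0):
        share = core_fraction(ratio)
        after = relative_total_power(ratio, core_improvement=10.0)
        print(f"ratio {ratio}: core share {share:.2f}, "
              f"total power after 10x core gain {after:.3f} of baseline")
```

With peripherals at three times the core power, the core accounts for a quarter of the total, so even an arbitrarily efficient core cannot cut total power by more than 25% in this model.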

Process variation sensitivity creates additional energy challenges by forcing designers to include large safety margins in operating voltages and currents. These margins ensure reliable operation across process corners but result in significant energy waste during typical operating conditions, reducing the overall system efficiency by 20-40% compared to ideal scenarios.
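
The cost of a voltage margin can be estimated from the standard relation that dynamic switching energy scales with the square of the supply voltage (E = C V^2). The sketch below models only that term. Leakage, current margins, and frequency effects are deliberately left out, so it is an illustration of the trend rather than a reproduction of the 20-40% figure above.

```python
import math


def dynamic_energy_overhead(voltage_margin):
    """Fractional increase in switching energy from raising the supply voltage
    by voltage_margin (0.10 means 10% above the nominal voltage)."""
    return (1.0 + voltage_margin) ** 2 - 1.0


def margin_for_overhead(energy_overhead):
    """Voltage margin that produces a given fractional switching-energy overhead."""
    return math.sqrt(1.0 + energy_overhead) - 1.0


if __name__ == "__main__":
    for margin in (0.05, 0.10, 0.15):
        overhead = dynamic_energy_overhead(margin)
        print(f"{margin:.0%} voltage margin -> {overhead:.1%} extra switching energy")
```

Because of the quadratic dependence, a 10% voltage margin already costs about 21% in switching energy in this model.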

Existing Energy Optimization Solutions for Neuromorphic Chips

01 Spiking Neural Network Architectures for Energy Reduction

Neuromorphic computing systems can utilize spiking neural network (SNN) architectures that process information through discrete spike events rather than continuous signals. This event-driven approach significantly reduces energy consumption by activating computations only when spikes occur, minimizing idle power usage. SNNs mimic biological neural processing more closely, enabling sparse computation patterns that inherently consume less energy compared to traditional artificial neural networks.

- Memristor-Based Synaptic Devices for Low-Power Operation: Implementation of memristor technology as artificial synapses provides energy-efficient weight storage and computation in neuromorphic systems. These devices enable in-memory computing by performing analog multiplication operations directly within memory elements, eliminating energy-intensive data transfers between memory and processing units. The non-volatile nature of memristors also reduces static power consumption while maintaining synaptic weights.

- Adaptive Power Management and Dynamic Voltage Scaling: Energy efficiency can be enhanced through intelligent power management techniques that dynamically adjust voltage and clock frequencies based on computational workload. These systems monitor neural activity levels and scale power delivery accordingly, reducing energy waste during periods of low activity. Adaptive mechanisms can selectively power down inactive neural processing units while maintaining critical pathways, optimizing the trade-off between performance and energy consumption.

- Hierarchical Processing and Event-Driven Communication: Neuromorphic architectures employing hierarchical processing structures with event-driven communication protocols minimize unnecessary data movement and computation. By organizing neural layers in hierarchical arrangements and transmitting only significant events through asynchronous communication channels, these systems reduce both dynamic and static power consumption. This approach eliminates clock-driven synchronous operations that continuously consume energy regardless of computational necessity.

- Analog and Mixed-Signal Circuit Implementations: Utilizing analog and mixed-signal circuits for neuromorphic computation provides inherent energy advantages over purely digital implementations. Analog circuits can perform neural computations such as multiplication and accumulation using physical properties of transistors, consuming significantly less energy per operation. Mixed-signal designs combine the efficiency of analog processing with the precision and programmability of digital control, optimizing overall system energy efficiency while maintaining computational accuracy.
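
The in-memory analog multiplication mentioned in the list above can be sketched with an idealized crossbar model: each device passes a current equal to its conductance times the applied voltage (Ohm's law), and the currents on a shared column wire add up (Kirchhoff's current law). The function name and the conductance and voltage values are assumptions for illustration. Real arrays also have wire resistance, sneak paths, and device nonlinearity, none of which are modeled here.

```python
def crossbar_column_currents(conductances, row_voltages):
    """Ideal crossbar read-out.

    conductances[i][j] is the conductance in siemens of the device at row i,
    column j. row_voltages[i] is the voltage applied to row i. Returns the
    current in amperes collected on each column, which is a
    vector-matrix product computed by the array itself.
    """
    if len(conductances) != len(row_voltages):
        raise ValueError("one voltage is required per crossbar row")
    num_columns = len(conductances[0])
    currents = [0.0] * num_columns
    for row, voltage in zip(conductances, row_voltages):
        for column, conductance in enumerate(row):
            # Ohm's law per device, summed along the column wire.
            currents[column] += conductance * voltage
    return currents


if __name__ == "__main__":
    # Hypothetical 2x2 array with microsiemens-range weights.
    weights = [[1e-6, 2e-6],
               [3e-6, 4e-6]]
    inputs = [0.1, 0.2]
    print(crossbar_column_currents(weights, inputs))
```

The weights never leave the array during the computation, which is the source of the data-movement savings attributed to memristive synapses.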

02 Memristor-Based Synaptic Devices for Low-Power Operation

Implementation of memristor technology as artificial synapses provides energy-efficient weight storage and computation in neuromorphic systems. These devices enable in-memory computing by performing synaptic operations directly within memory elements, eliminating energy-intensive data transfers between memory and processing units. Memristive devices offer non-volatile storage with low switching energy, enabling neuromorphic chips to achieve significant power savings while maintaining computational performance.

03 Adaptive Power Management and Dynamic Voltage Scaling

Energy efficiency in neuromorphic systems can be enhanced through adaptive power management techniques that dynamically adjust voltage and clock frequencies based on computational demands. These systems monitor neural activity levels and scale power consumption accordingly, reducing energy waste during periods of low activity. Dynamic voltage and frequency scaling techniques optimize the trade-off between performance and power consumption, enabling neuromorphic processors to operate efficiently across varying workload conditions.

04 Analog and Mixed-Signal Circuit Implementations

Neuromorphic computing systems employing analog or mixed-signal circuits can achieve superior energy efficiency compared to purely digital implementations. Analog circuits naturally perform neural computations such as multiplication and accumulation with minimal energy overhead. Mixed-signal designs combine the energy benefits of analog processing with the precision and programmability of digital control, enabling neuromorphic chips to execute complex neural algorithms while maintaining low power consumption profiles.

05 Hierarchical and Distributed Processing Architectures

Energy-efficient neuromorphic systems can be designed with hierarchical and distributed processing architectures that localize computation and reduce data movement. By organizing neural processing into multiple layers or modules with local connectivity, these architectures minimize long-distance communication that consumes significant energy. Distributed processing enables parallel execution of neural computations across multiple low-power cores, improving overall system efficiency while reducing bottlenecks associated with centralized processing.

Key Players in Neuromorphic Computing Industry

The neuromorphic computing industry is in its early commercialization phase, transitioning from research-driven development to practical applications. The market shows significant growth potential, driven by increasing demand for energy-efficient AI processing at the edge. Technology maturity varies considerably across players, with established semiconductor giants like IBM, Intel, and Samsung Electronics leading in foundational research and chip development capabilities. Academic institutions including Tsinghua University, University of Tokyo, and Peking University contribute crucial theoretical advances, while specialized startups like Syntiant, Deepx, and GrAI Matter Labs focus on application-specific neuromorphic solutions. Research organizations such as CEA and NTT Research bridge the gap between fundamental science and commercial viability. The competitive landscape reflects a collaborative ecosystem where traditional computing companies, emerging AI chip specialists, and academic researchers collectively advance neuromorphic architectures toward mainstream adoption in ultra-low-power applications.

International Business Machines Corp.

Technical Solution: IBM has developed the TrueNorth neuromorphic chip architecture, featuring 1 million programmable neurons and 256 million synapses with an ultra-low power consumption of 70 mW during active operation[1]. The chip utilizes event-driven computation and asynchronous communication protocols to minimize energy waste. IBM's neuromorphic systems employ spike-based neural networks that only consume power when processing events, achieving energy efficiency improvements of up to 1000x compared to traditional von Neumann architectures[3]. Their research focuses on memristive devices and crossbar arrays for in-memory computing, reducing data movement energy costs significantly[5].

Strengths: Pioneer in neuromorphic computing with proven chip implementations and extensive research portfolio. Weaknesses: Limited commercial availability and scalability challenges for large-scale deployment.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed neuromorphic memory solutions using resistive RAM (ReRAM) and phase-change memory (PCM) technologies for energy-efficient neural network implementations[2]. Their approach integrates memory and processing units to eliminate the von Neumann bottleneck, achieving 10x energy reduction in neural network inference tasks[4]. Samsung's neuromorphic systems utilize crossbar array architectures with analog computing capabilities, enabling parallel matrix operations with minimal energy overhead. The company focuses on developing low-power neuromorphic processors for mobile and IoT applications, targeting sub-milliwatt power consumption levels[7].

Strengths: Strong memory technology foundation and manufacturing capabilities for large-scale production. Weaknesses: Limited software ecosystem and algorithm optimization compared to specialized neuromorphic companies.

Core Innovations in Ultra-Low Power Neuromorphic Design

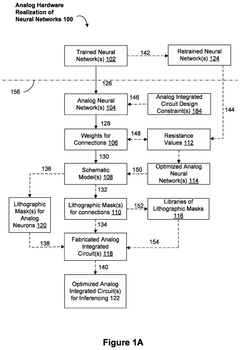

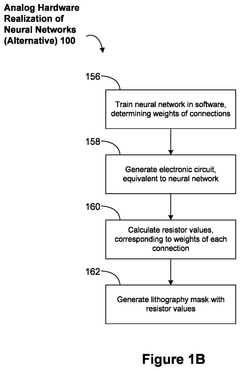

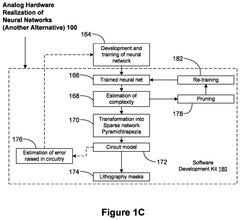

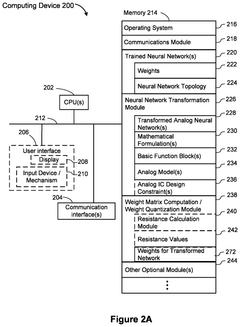

Systems and methods for optimizing energy efficiency of analog neuromorphic circuits

Patent · Active · US12393833B2

Innovation

- Analog neuromorphic circuits are designed to model trained neural networks, using operational amplifiers and resistors to represent neurons and connections, allowing for efficient hardware realization and mass production, with minimal power consumption and resilience to noise.

Neuromorphic computing system and current estimation method using the same

Patent · Active · US20190138881A1

Innovation

- The output channel of the synapse array is electrically connected to a first terminal or a second terminal in a switchable manner, allowing only limited or no current to flow, with the sum-of-product current estimated based on the voltage difference between these terminals, reducing energy dissipation.

Hardware-Software Co-design for Energy Optimization

Hardware-software co-design represents a paradigm shift in neuromorphic computing system development, where energy optimization becomes a holistic endeavor rather than isolated hardware or software improvements. This integrated approach recognizes that energy efficiency emerges from the synergistic interaction between computational architectures and algorithmic implementations, requiring simultaneous consideration of both domains during the design process.

The co-design methodology begins with establishing energy budgets that cascade from system-level requirements down to individual component specifications. Hardware designers must consider software execution patterns, memory access behaviors, and computational workload characteristics when architecting neuromorphic processors. Simultaneously, software developers optimize algorithms, data structures, and execution flows to leverage specific hardware capabilities such as event-driven processing, sparse connectivity patterns, and analog computation units.

Memory hierarchy optimization exemplifies successful co-design implementation in neuromorphic systems. Hardware architects design multi-level memory structures with varying access latencies and energy costs, while software frameworks implement intelligent data placement strategies that minimize high-energy memory transactions. This coordination reduces overall system energy consumption by orders of magnitude compared to traditional approaches where hardware and software are optimized independently.
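
A data placement strategy of this kind can be sketched as a greedy assignment: the buffers accessed most often per byte go into the cheapest memory level that still has room. This is one simple heuristic, not a method attributed to any specific framework. The class names, level names, capacities, and per-access energies below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MemoryLevel:
    name: str
    capacity_bytes: int
    energy_per_access_pj: float  # hypothetical cost, for illustration only


@dataclass
class Buffer:
    name: str
    size_bytes: int
    accesses: int


def place_buffers(buffers, levels):
    """Greedy placement: hottest buffers (accesses per byte) go to the
    lowest-energy level that still has enough free capacity."""
    levels_by_cost = sorted(levels, key=lambda level: level.energy_per_access_pj)
    free = {level.name: level.capacity_bytes for level in levels_by_cost}
    placement = {}
    hottest_first = sorted(buffers, key=lambda b: b.accesses / b.size_bytes, reverse=True)
    for buf in hottest_first:
        for level in levels_by_cost:
            if free[level.name] >= buf.size_bytes:
                free[level.name] -= buf.size_bytes
                placement[buf.name] = level.name
                break
        else:
            raise ValueError(f"no memory level can hold {buf.name}")
    return placement


def total_energy_pj(buffers, levels, placement):
    """Total access energy for a given placement."""
    cost = {level.name: level.energy_per_access_pj for level in levels}
    return sum(buf.accesses * cost[placement[buf.name]] for buf in buffers)


if __name__ == "__main__":
    levels = [MemoryLevel("local_sram", 100, 1.0), MemoryLevel("shared_sram", 1000, 10.0)]
    buffers = [Buffer("synaptic_weights", 100, 1000), Buffer("debug_log", 100, 10)]
    placement = place_buffers(buffers, levels)
    print(placement, total_energy_pj(buffers, levels, placement), "pJ")
```

A greedy pass like this is not guaranteed to be optimal, but it shows how software knowledge of access patterns and hardware knowledge of per-level energy cost combine into a single decision.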

Event-driven processing represents another critical co-design opportunity, where hardware provides asynchronous processing capabilities while software algorithms are restructured to maximize temporal sparsity. The hardware implements clock-gating mechanisms, power islands, and dynamic voltage scaling, while software ensures that computational tasks are distributed to minimize simultaneous active components and maximize idle periods for aggressive power management.
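
The interaction between activity monitoring and voltage scaling can be sketched as a small controller that picks an operating point from the observed spike rate. The operating points, thresholds, and names below are hypothetical. The power estimate uses the standard proportionality of dynamic power to V^2 times f and ignores leakage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    min_events_per_s: float  # lowest spike rate at which this point is used
    voltage_v: float
    clock_mhz: float


# Hypothetical operating points, ordered from lowest to highest activity.
OPERATING_POINTS = [
    OperatingPoint(0.0, 0.5, 10.0),
    OperatingPoint(1_000.0, 0.7, 100.0),
    OperatingPoint(100_000.0, 0.9, 400.0),
]


def select_operating_point(events_per_s, points=OPERATING_POINTS):
    """Pick the most capable point whose activity threshold has been reached."""
    if events_per_s < 0:
        raise ValueError("spike rate cannot be negative")
    eligible = [p for p in points if events_per_s >= p.min_events_per_s]
    return max(eligible, key=lambda p: p.min_events_per_s)


def relative_dynamic_power(point, reference):
    """Dynamic power of point relative to reference, using P proportional to V^2 * f."""
    voltage_ratio = point.voltage_v / reference.voltage_v
    clock_ratio = point.clock_mhz / reference.clock_mhz
    return voltage_ratio ** 2 * clock_ratio


if __name__ == "__main__":
    peak = OPERATING_POINTS[-1]
    for rate in (50.0, 5_000.0, 2_000_000.0):
        point = select_operating_point(rate)
        share = relative_dynamic_power(point, peak)
        print(f"{rate:>10.0f} events/s -> {point.voltage_v} V, "
              f"{point.clock_mhz} MHz, {share:.3f} of peak dynamic power")
```

The more temporal sparsity the software exposes, the more time the controller can spend at the lowest operating point.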

Cross-layer optimization techniques enable dynamic adaptation of both hardware configurations and software execution strategies based on real-time workload characteristics and energy constraints. This includes runtime reconfiguration of processing elements, adaptive precision control, and workload migration between different computational units to maintain optimal energy efficiency across varying operational conditions.

The co-design approach also addresses the challenge of maintaining computational accuracy while pursuing aggressive energy optimization. Hardware provides multiple precision modes and error correction mechanisms, while software implements adaptive algorithms that can tolerate controlled precision degradation without compromising overall system performance, achieving significant energy savings through reduced computational complexity.

Benchmarking Standards for Neuromorphic Energy Performance

The establishment of standardized benchmarking frameworks for neuromorphic energy performance represents a critical gap in the current evaluation ecosystem. Unlike traditional computing systems that rely on well-established metrics such as FLOPS per watt, neuromorphic systems require fundamentally different assessment methodologies that account for their event-driven nature and temporal processing capabilities.

Current benchmarking efforts face significant challenges due to the heterogeneous nature of neuromorphic architectures. Different platforms employ varying spike encoding schemes, synaptic plasticity mechanisms, and network topologies, making direct performance comparisons extremely difficult. The absence of unified standards has led to fragmented evaluation approaches where each research group or commercial entity develops proprietary metrics, hindering meaningful cross-platform analysis.

Several emerging standardization initiatives are attempting to address these challenges. The IEEE Standards Association has begun preliminary discussions on neuromorphic computing standards, while academic consortiums are developing benchmark suites specifically designed for spiking neural networks. These efforts focus on establishing common datasets, standardized workloads, and consistent measurement protocols that can accommodate the diverse range of neuromorphic implementations.

Energy measurement standardization presents unique complexities in neuromorphic systems. Traditional power monitoring techniques may not capture the fine-grained, event-driven power consumption patterns characteristic of these architectures. New methodologies must account for dynamic power scaling, idle state efficiency, and the temporal correlation between input stimulus patterns and energy consumption. This requires developing specialized instrumentation and measurement protocols that can accurately characterize power consumption across different operational modes.
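
One way to turn a sampled power trace into a comparable metric is to integrate it over time and normalize by the number of events processed, optionally subtracting idle power to isolate the activity-dependent part. The sketch below uses trapezoidal integration. The function names and the choice of metric are assumptions, not part of any published standard.

```python
def integrate_energy_j(timestamps_s, power_w):
    """Trapezoidal integration of a sampled power trace, in joules."""
    if len(timestamps_s) != len(power_w) or len(timestamps_s) < 2:
        raise ValueError("need at least two samples and matching lengths")
    energy = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        energy += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return energy


def energy_per_event_j(timestamps_s, power_w, num_events, idle_power_w=0.0):
    """Activity-dependent energy per event: total energy minus the idle
    baseline over the same interval, divided by the number of events."""
    if num_events <= 0:
        raise ValueError("num_events must be positive")
    total = integrate_energy_j(timestamps_s, power_w)
    duration = timestamps_s[-1] - timestamps_s[0]
    return (total - idle_power_w * duration) / num_events


if __name__ == "__main__":
    # Hypothetical trace: 1 W idle with a burst to 3 W in the middle.
    times = [0.0, 1.0, 2.0]
    power = [1.0, 3.0, 1.0]
    print(integrate_energy_j(times, power), "J total")
    print(energy_per_event_j(times, power, num_events=4, idle_power_w=1.0), "J per event")
```

Reporting both the total and the idle-subtracted figure keeps idle-state efficiency visible instead of hiding it inside a single number.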

The development of comprehensive benchmarking standards must also consider real-world application scenarios. Standards should encompass various computational tasks including pattern recognition, sensory processing, and adaptive learning, each with distinct energy efficiency requirements. Furthermore, these standards need to address scalability concerns, ensuring that benchmarking methodologies remain valid across different system sizes and complexity levels, from edge devices to large-scale neuromorphic computing clusters.
