Programming Models for Neuromorphic AI Hardware

MAR 11, 2026 · 9 MIN READ

Neuromorphic AI Hardware Programming Evolution and Objectives

Neuromorphic computing represents a paradigm shift from traditional von Neumann architectures, drawing inspiration from the brain's neural networks to create hardware systems that process information through spike-based communication and distributed memory. This field emerged in the 1980s with Carver Mead's pioneering work on analog VLSI circuits that mimicked neural behavior, establishing the foundation for brain-inspired computing architectures.

The evolution of neuromorphic hardware has progressed through distinct phases, beginning with early analog implementations that replicated basic neural functions using continuous-time circuits. The field subsequently advanced to mixed-signal approaches combining analog computation with digital control, and more recently to fully digital implementations that maintain neuromorphic principles while leveraging conventional semiconductor processes.

Contemporary neuromorphic systems encompass diverse architectural approaches, including Intel's Loihi chips with their mesh-based connectivity, IBM's TrueNorth processors featuring million-neuron arrays, and SpiNNaker's massively parallel ARM-based architecture. These platforms demonstrate varying degrees of biological fidelity, from abstract neural network implementations to detailed biophysical neuron models.

The primary technical objectives driving neuromorphic AI hardware development center on achieving ultra-low power consumption through event-driven processing, where computation occurs only when spikes are present. This approach fundamentally differs from clock-driven systems, potentially reducing energy consumption by several orders of magnitude for sparse, temporal data processing tasks.
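
To make the contrast with clock-driven execution concrete, here is a minimal sketch that counts synaptic updates under both schemes. The workload, the `fan_out` value, and both function names are illustrative assumptions, and operation counts are only a rough proxy for energy.

```python
def clock_driven_ops(spike_trains, num_steps, fan_out):
    """Clock-driven: every neuron's synapses are visited on every time step."""
    return len(spike_trains) * num_steps * fan_out


def event_driven_ops(spike_trains, fan_out):
    """Event-driven: work happens only when a spike occurs."""
    total_spikes = sum(len(times) for times in spike_trains)
    return total_spikes * fan_out


# Hypothetical sparse workload: 4 neurons, 1000 time steps, 6 spikes in total.
spike_trains = [[10, 500], [42], [], [7, 300, 900]]
dense = clock_driven_ops(spike_trains, num_steps=1000, fan_out=100)
sparse = event_driven_ops(spike_trains, fan_out=100)
print(dense, sparse)  # 400000 600
```

The gap narrows as activity becomes denser, which is why the savings are usually claimed only for sparse, temporal workloads.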

Real-time processing capabilities represent another critical objective, as neuromorphic systems aim to process sensory data streams with minimal latency through parallel, distributed computation. The inherent parallelism of neural architectures enables simultaneous processing of multiple data channels without the bottlenecks associated with sequential processing paradigms.

Scalability objectives focus on creating systems that can accommodate millions to billions of artificial neurons while maintaining efficient communication and avoiding the memory wall problem. This requires innovative approaches to on-chip memory organization, inter-chip communication protocols, and hierarchical network topologies.

Adaptability and learning constitute fundamental goals, with hardware designed to support online learning algorithms that modify synaptic weights and network connectivity in response to input patterns. This capability enables autonomous adaptation to changing environments without external reprogramming, mimicking the brain's plasticity mechanisms.
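
One widely used family of online rules is spike-timing-dependent plasticity (STDP). The sketch below shows a simple pair-based variant; the learning rates, time constants, and weight bounds are assumed values chosen for illustration, not parameters of any particular chip.

```python
import math

A_PLUS, A_MINUS = 0.01, 0.012      # assumed potentiation / depression rates
TAU_PLUS, TAU_MINUS = 20.0, 20.0   # assumed time constants in ms
W_MIN, W_MAX = 0.0, 1.0            # assumed weight bounds


def stdp_update(weight, t_pre, t_post):
    """Return the new weight after one pre/post spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:        # pre fired before post: strengthen the synapse
        dw = A_PLUS * math.exp(-dt / TAU_PLUS)
    elif dt < 0:      # post fired before pre: weaken the synapse
        dw = -A_MINUS * math.exp(dt / TAU_MINUS)
    else:
        dw = 0.0
    # Clip so the weight stays inside the representable range.
    return min(W_MAX, max(W_MIN, weight + dw))


w = stdp_update(0.5, t_pre=10.0, t_post=15.0)  # causal pair, weight increases
```

Because the update depends only on the two spike times and the current weight, it can run locally at each synapse without external reprogramming.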

Market Demand for Neuromorphic Computing Solutions

The neuromorphic computing market is experiencing unprecedented growth driven by the increasing demand for energy-efficient artificial intelligence solutions across multiple industries. Traditional von Neumann architectures face significant limitations in handling the massive parallel processing requirements of modern AI workloads, creating substantial market opportunities for brain-inspired computing paradigms that can deliver superior performance per watt.

Edge computing applications represent the largest demand driver for neuromorphic solutions, particularly in autonomous vehicles, robotics, and Internet of Things devices where power consumption and real-time processing capabilities are critical. These applications require AI systems that can operate continuously under strict power budgets while maintaining high computational performance, making neuromorphic hardware an attractive alternative to conventional processors.

The healthcare and biomedical sectors demonstrate strong adoption potential for neuromorphic computing solutions, especially in medical imaging, prosthetics, and brain-computer interfaces. These applications benefit from the natural compatibility between neuromorphic architectures and biological signal processing, enabling more intuitive and efficient implementation of neural network algorithms for medical diagnostics and therapeutic devices.

Industrial automation and smart manufacturing sectors are increasingly seeking neuromorphic solutions for predictive maintenance, quality control, and adaptive manufacturing processes. The ability of neuromorphic systems to learn and adapt in real-time while consuming minimal power makes them ideal for deployment in distributed industrial environments where traditional cloud-based AI solutions may be impractical.

The defense and aerospace industries present significant market opportunities for neuromorphic computing, particularly in autonomous systems, surveillance, and signal processing applications. These sectors require robust, low-power AI solutions capable of operating in challenging environments while maintaining high reliability and performance standards.

Consumer electronics manufacturers are exploring neuromorphic integration for next-generation smartphones, wearables, and smart home devices. The demand for always-on AI capabilities with extended battery life drives interest in neuromorphic processors that can handle voice recognition, image processing, and sensor fusion tasks more efficiently than traditional architectures.

Research institutions and academic organizations constitute an important early-adopter market segment, driving demand for flexible neuromorphic platforms that support experimental programming models and algorithm development. This segment influences broader market adoption by validating neuromorphic approaches and developing the talent pipeline necessary for commercial deployment.

Current State of Neuromorphic Programming Model Challenges

The neuromorphic computing landscape faces significant programming model challenges that stem from the fundamental departure from traditional von Neumann architectures. Current neuromorphic hardware platforms, including Intel's Loihi, IBM's TrueNorth, and SpiNNaker, each implement distinct programming paradigms that lack standardization across the field. This fragmentation creates substantial barriers for developers attempting to create portable applications across different neuromorphic systems.

Programming abstraction represents one of the most pressing challenges in the current ecosystem. Unlike conventional processors, where high-level languages abstract away hardware complexities, neuromorphic systems require developers to understand intricate details of spike timing, synaptic plasticity, and network topology. Existing tools such as the NEST and Brian simulators and the simulator-independent PyNN interface make it possible to describe and simulate spiking networks, but they struggle to bridge the gap between algorithm design and efficient hardware implementation on neuromorphic chips.

The temporal dynamics inherent in neuromorphic computing introduce unprecedented complexity in program design and debugging. Traditional debugging tools and methodologies prove inadequate when dealing with asynchronous, event-driven computations where timing precision directly impacts functionality. Developers must navigate challenges related to spike timing dependencies, network synchronization, and the stochastic nature of neural computations, often without adequate toolchain support.

Memory management and data representation pose additional constraints in current programming models. Neuromorphic architectures typically feature distributed memory systems with limited capacity per processing element. Programming models must efficiently map neural networks onto these constrained resources while maintaining computational accuracy. The challenge intensifies when considering dynamic network reconfiguration and online learning scenarios that require real-time memory allocation and deallocation.
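
The mapping problem can be sketched as follows, assuming each core has a fixed neuron limit and a fixed synapse memory budget. The function name, the limits, and the layer sizes are hypothetical; real toolchains also account for routing, fan-out, and learning state.

```python
def map_to_cores(layer_sizes, fan_ins, max_neurons, max_synapses):
    """Split each layer into slices that fit on one core.

    Returns one (layer_index, start_neuron, stop_neuron) tuple per core.
    Assumes every fan-in value is a positive integer.
    """
    placement = []
    for layer, (size, fan_in) in enumerate(zip(layer_sizes, fan_ins)):
        # A core must store every incoming synapse of the neurons it hosts.
        chunk = min(max_neurons, max_synapses // fan_in)
        if chunk == 0:
            raise ValueError(f"layer {layer}: one neuron exceeds core synapse memory")
        for start in range(0, size, chunk):
            placement.append((layer, start, min(start + chunk, size)))
    return placement


# Hypothetical network: 784 inputs -> 300 hidden -> 10 outputs, densely connected.
placement = map_to_cores(
    layer_sizes=[300, 10],
    fan_ins=[784, 300],
    max_neurons=256,
    max_synapses=65536,
)
print(len(placement))  # 5
```

In this example the hidden layer is limited by synapse memory rather than by the neuron count, so it spreads over four cores even though 300 neurons would fit on two.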

Scalability limitations plague existing programming approaches as network sizes increase. Current models often rely on manual optimization and hardware-specific tuning to achieve acceptable performance. The lack of automated compilation and optimization tools forces developers to possess deep hardware knowledge, significantly limiting the accessibility of neuromorphic computing to broader research and development communities.

Integration with existing AI development workflows remains problematic due to incompatible data formats, limited interoperability with popular machine learning frameworks, and insufficient support for hybrid computing scenarios that combine neuromorphic and conventional processing elements.

Existing Neuromorphic Programming Frameworks and Tools

01 Neuromorphic hardware architecture design and implementation

Neuromorphic AI hardware utilizes specialized architectures that mimic biological neural networks, featuring interconnected processing elements that operate in parallel. These architectures incorporate novel circuit designs, memory structures, and computational units optimized for neural processing. The hardware implementations focus on energy-efficient computation through event-driven processing and asynchronous operations, enabling real-time neural network execution with reduced power consumption compared to traditional computing architectures.

- Spiking Neural Network Programming Frameworks: Programming models designed specifically for spiking neural networks (SNNs) that enable efficient mapping of neural computations onto neuromorphic hardware. These frameworks provide abstractions for defining neuron models, synaptic connections, and spike-based communication patterns. They support event-driven computation paradigms and temporal coding schemes that are fundamental to neuromorphic processing.

- Hardware-Software Co-design Programming Interfaces: Programming models that facilitate co-design between neuromorphic hardware architectures and software implementations. These interfaces provide APIs and toolchains that allow developers to optimize neural network models for specific hardware constraints such as memory hierarchy, interconnect topology, and power budgets. They enable seamless translation between high-level neural network descriptions and low-level hardware instructions.

- Dataflow and Graph-based Programming Models: Programming approaches that represent neural computations as dataflow graphs or computational graphs optimized for neuromorphic execution. These models enable parallel processing of neural operations and efficient resource allocation across neuromorphic cores. They support dynamic graph modifications and adaptive routing mechanisms that match the reconfigurable nature of neuromorphic hardware.

- Event-driven and Asynchronous Programming Paradigms: Programming models based on event-driven and asynchronous execution patterns that align with the inherent operation of neuromorphic systems. These paradigms handle spike events and temporal dynamics without requiring global clock synchronization. They provide mechanisms for managing asynchronous communication between neural processing elements and support real-time processing requirements.

- Learning and Training Frameworks for Neuromorphic Systems: Programming models that incorporate learning algorithms and training methodologies specifically adapted for neuromorphic hardware constraints. These frameworks support online learning, spike-timing-dependent plasticity, and other biologically-inspired learning rules. They provide tools for model conversion, quantization, and optimization to deploy trained networks onto neuromorphic platforms while maintaining accuracy and efficiency.
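
The neuron-model and event-driven items in the list above can be illustrated with a minimal sketch of a leaky integrate-and-fire (LIF) neuron that is updated only when an input spike arrives. The class name, parameter values, and input events are assumptions made for illustration and do not correspond to any specific framework's API.

```python
import math


class EventDrivenLIF:
    """Leaky integrate-and-fire neuron updated only on incoming spike events."""

    def __init__(self, tau=20.0, threshold=1.0, v_reset=0.0):
        self.tau = tau              # membrane time constant in ms (assumed)
        self.threshold = threshold  # firing threshold (assumed)
        self.v_reset = v_reset      # potential after a spike
        self.v = 0.0                # membrane potential, resting level is 0
        self.last_t = 0.0           # time of the previous event

    def receive(self, t, weight):
        """Process one input spike at time t; return True if the neuron fires."""
        # Apply the leak analytically over the silent interval, so no work
        # is done between events and no global clock is needed.
        self.v *= math.exp(-(t - self.last_t) / self.tau)
        self.last_t = t
        self.v += weight
        if self.v >= self.threshold:
            self.v = self.v_reset
            return True
        return False


neuron = EventDrivenLIF()
events = [(1.0, 0.6), (2.0, 0.6), (100.0, 0.6)]  # (time in ms, synaptic weight)
fired = [neuron.receive(t, w) for t, w in events]
print(fired)  # [False, True, False]
```

Two closely spaced inputs push the neuron over threshold, while the isolated input at 100 ms does not, which is the kind of temporal dependence these programming models must express.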

02 Programming frameworks and software interfaces for neuromorphic systems

Specialized programming models provide high-level abstractions and interfaces for developing applications on neuromorphic hardware. These frameworks include software development kits, application programming interfaces, and compilation tools that translate neural network models into hardware-executable formats. The programming environments support various neural network topologies and learning algorithms while managing the complexity of underlying hardware resources and enabling efficient mapping of computational tasks.

03 Spiking neural network implementation and event-based processing

Programming models incorporate spiking neural network paradigms that process information through discrete events or spikes, closely resembling biological neural communication. These models define temporal dynamics, synaptic plasticity rules, and spike-timing-dependent learning mechanisms. The event-based processing approach enables asynchronous computation where neurons and synapses activate only when receiving input spikes, significantly reducing computational overhead and power consumption while maintaining high processing capabilities.

04 Memory and data management for neuromorphic computing

Specialized memory architectures and data management strategies address the unique requirements of neuromorphic systems, including synaptic weight storage, state management, and efficient data routing. These approaches integrate memory elements closely with processing units to minimize data movement and latency. The programming models define methods for organizing, accessing, and updating neural network parameters while optimizing memory bandwidth utilization and supporting various learning and inference operations.

05 Training and learning algorithms for neuromorphic platforms

Programming models incorporate specialized training methodologies and learning algorithms adapted for neuromorphic hardware constraints and capabilities. These include online learning approaches, unsupervised learning techniques, and hardware-aware training methods that account for device characteristics and limitations. The models support various plasticity mechanisms and adaptation rules that enable networks to learn from data while executing on neuromorphic substrates, facilitating both supervised and unsupervised learning scenarios with efficient resource utilization.
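
One common hardware limitation is low-precision synaptic weight storage. As a rough sketch of how a deployment tool might handle it, the code below applies symmetric uniform quantization to a list of trained weights. The function names, the 8-bit width, and the sample weights are assumptions; actual platforms define their own weight formats.

```python
def quantize_weights(weights, bits=8):
    """Map float weights to signed integers that share one scale factor."""
    q_max = 2 ** (bits - 1) - 1                  # e.g. 127 for 8 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / q_max if max_abs > 0 else 1.0
    # Round to the nearest level and clamp to the representable range.
    quantized = [max(-q_max, min(q_max, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]                 # hypothetical trained weights
q, scale = quantize_weights(weights, bits=8)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

The worst-case rounding error is about half of `scale`, so hardware-aware training methods typically expose the model to this error during training rather than only after it.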

Key Players in Neuromorphic Hardware and Software Ecosystem

The neuromorphic AI hardware programming models field represents an emerging technology sector in its early development stage, characterized by significant growth potential but limited market maturity. The market remains relatively small compared to traditional AI hardware, with most applications still in research and prototype phases. Technology maturity varies considerably across players, with established tech giants like Samsung Electronics, IBM, Microsoft, and Huawei leveraging their extensive R&D capabilities and semiconductor expertise to advance neuromorphic computing architectures. Specialized companies such as Syntiant and Polyn Technology are pioneering ultra-low-power neuromorphic solutions for edge applications, while academic institutions including Zhejiang University and University of California contribute fundamental research. The competitive landscape shows a mix of hardware manufacturers, software developers, and research organizations working to establish standardized programming paradigms, though widespread commercial adoption remains limited by technical challenges and the nascent state of neuromorphic hardware platforms.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has invested in neuromorphic programming models through their advanced semiconductor division, developing programming frameworks for their neuromorphic memory technologies including RRAM and phase-change memory based systems. Their approach integrates in-memory computing paradigms with event-driven programming models, enabling efficient implementation of spiking neural networks directly in memory arrays. The programming model supports crossbar array architectures with analog computing capabilities, providing APIs for weight update mechanisms and spike-timing dependent plasticity implementations.

Strengths: Strong hardware-software integration with advanced memory technologies, excellent power efficiency for edge applications. Weaknesses: Limited software ecosystem compared to established players, focus primarily on memory-centric architectures.

Microsoft Technology Licensing LLC

Technical Solution: Microsoft has developed neuromorphic programming models through their Project Brainwave initiative, focusing on FPGA-based neuromorphic acceleration and software-hardware co-design approaches. Their programming framework emphasizes dataflow programming models that can efficiently map to both traditional and neuromorphic hardware platforms. The system provides automatic compilation from high-level neural network descriptions to optimized neuromorphic implementations, supporting both inference and online learning scenarios with adaptive resource allocation and dynamic reconfiguration capabilities.

Strengths: Strong cloud integration and developer tools ecosystem, excellent scalability across different hardware platforms. Weaknesses: Primary focus on FPGA rather than dedicated neuromorphic chips, limited support for biological plausibility.

Core Innovations in Spike-Based Programming Models

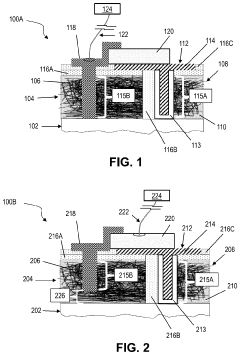

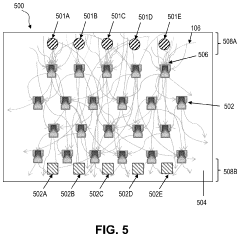

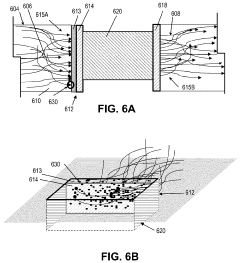

Neural network hardware device and system

Patent (pending): US20230422516A1

Innovation

- A neural network device comprising a mesh layer of randomly dispersed conductive nano-strands with memristor devices and modulating devices that enable electrical communication between a large number of individual nano-strands, mimicking dendritic communication and allowing for non-deterministic signal propagation, exceeding the interconnect capabilities of conventional lithographic methods.

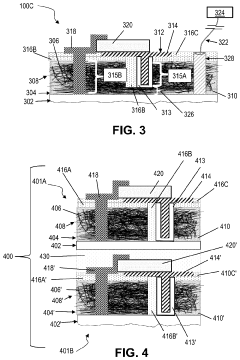

Neural network system with neurons including charge-trap transistors and neural integrators and methods therefor

Patent: WO2022119631A1

Innovation

- A neural network system utilizing charge-trap transistors and neural integrators in a crossbar architecture, which enables efficient and low-power neuromorphic computation by using charge-trap transistors to perform weighted sums and integrate currents, reducing computation latency and energy consumption.

Hardware-Software Co-design for Neuromorphic Systems

Hardware-software co-design represents a fundamental paradigm shift in neuromorphic system development, where traditional sequential design approaches give way to integrated, concurrent engineering methodologies. This approach recognizes that neuromorphic computing's unique characteristics—such as event-driven processing, distributed memory architectures, and temporal dynamics—require intimate coordination between hardware capabilities and software abstractions from the earliest design stages.

The co-design methodology addresses the inherent complexity of mapping neural algorithms onto specialized neuromorphic hardware architectures. Unlike conventional computing systems where software operates on standardized hardware interfaces, neuromorphic systems demand custom-tailored solutions that exploit specific hardware features such as memristive devices, analog computation units, and asynchronous communication protocols. This necessitates simultaneous optimization of both hardware resources and software programming models to achieve optimal performance and energy efficiency.

Contemporary co-design frameworks emphasize the development of unified design environments that enable cross-layer optimization. These frameworks typically incorporate hardware description languages extended with neuromorphic-specific constructs, allowing designers to specify both synaptic connectivity patterns and underlying circuit implementations within a single design flow. Advanced simulation environments provide cycle-accurate modeling of neuromorphic hardware while supporting high-level neural network descriptions, enabling rapid prototyping and validation.

The co-design process involves iterative refinement of hardware architectures based on software requirements and vice versa. For instance, the granularity of hardware parallelism directly influences the design of programming abstractions, while the complexity of neural algorithms drives decisions about on-chip memory hierarchies and interconnect topologies. This bidirectional optimization process often reveals unexpected design trade-offs that would remain hidden in traditional sequential design approaches.

Emerging co-design methodologies increasingly leverage machine learning techniques to automate design space exploration. These approaches use reinforcement learning and genetic algorithms to simultaneously optimize hardware parameters and software mapping strategies, potentially discovering novel architectural configurations that human designers might overlook. Such automated co-design tools are becoming essential as the complexity of neuromorphic systems continues to grow, enabling more efficient exploration of the vast design space inherent in these highly configurable computing platforms.
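
As a toy illustration of automated design space exploration, the sketch below runs a simple (1+1) evolutionary search over two hardware parameters. This is a much simpler stand-in for the genetic algorithms and reinforcement learning mentioned above, and the cost model, its coefficients, and the parameter ranges are entirely hypothetical.

```python
import random


def cost(config, total_neurons=10_000):
    """Hypothetical cost: core count stands in for area, per-core load for latency."""
    cores, neurons_per_core = config
    if cores * neurons_per_core < total_neurons:
        return float("inf")                      # the network does not fit
    return 1.0 * cores + 0.05 * neurons_per_core


def mutate(config, rng):
    """Randomly perturb both parameters, keeping them at least 1."""
    cores, neurons_per_core = config
    return (
        max(1, cores + rng.randint(-4, 4)),
        max(1, neurons_per_core + rng.randint(-64, 64)),
    )


def evolve(start, generations=500, seed=0):
    """Keep a single best design and accept a mutation only if it is no worse."""
    rng = random.Random(seed)                    # seeded for repeatable runs
    best, best_cost = start, cost(start)
    for _ in range(generations):
        candidate = mutate(best, rng)
        candidate_cost = cost(candidate)
        if candidate_cost <= best_cost:
            best, best_cost = candidate, candidate_cost
    return best, best_cost


start = (128, 1024)                              # assumed feasible starting design
best, best_cost = evolve(start)
```

A real co-design flow would replace `cost` with simulation or measurement results and would search over software mapping choices at the same time.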

Energy Efficiency Standards for Neuromorphic Applications

Energy efficiency standards for neuromorphic applications represent a critical framework for evaluating and optimizing the power consumption characteristics of brain-inspired computing systems. These standards establish quantitative metrics that enable fair comparison between different neuromorphic architectures and traditional computing approaches, particularly in AI workload scenarios.

The primary energy efficiency metric for neuromorphic systems is operations per joule (OPS/J), which measures how much computation is performed per unit of energy. Conventional processors are usually rated in floating-point operations per second (FLOPS), but neuromorphic systems need metrics that account for spike-based processing and event-driven computation patterns. Synaptic Operations Per Second per Watt (SOPS/W) has emerged as a common measure for neuromorphic hardware; because one watt is one joule per second, SOPS/W is numerically equal to synaptic operations per joule.
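
The relationship between these metrics can be checked with a short calculation. The measurement values below are made up for illustration, and the function names are not taken from any benchmark suite.

```python
import math


def sops_per_watt(synaptic_ops, duration_s, avg_power_w):
    """Synaptic operations per second, divided by average power in watts."""
    sops = synaptic_ops / duration_s
    return sops / avg_power_w


def synaptic_ops_per_joule(synaptic_ops, energy_j):
    """Synaptic operations divided by the total energy consumed."""
    return synaptic_ops / energy_j


# Hypothetical run: 2 million synaptic operations in 0.5 s at 0.1 W average power.
ops, duration, power = 2_000_000, 0.5, 0.1
energy = power * duration                        # 0.05 J

efficiency = sops_per_watt(ops, duration, power)
per_joule = synaptic_ops_per_joule(ops, energy)

# Both views describe the same quantity, roughly 4e7 in this example.
same = math.isclose(efficiency, per_joule)
```

Reporting the spike rate and network size alongside the figure matters, since the same chip can yield very different SOPS/W values on dense and sparse workloads.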

Reported benchmarks place state-of-the-art neuromorphic chips at roughly 1,000 to 10,000 SOPS/W for inference tasks, well ahead of traditional GPUs and CPUs in specific AI applications, although such figures depend heavily on workload sparsity and measurement method. Intel's Loihi chip has been reported to be approximately 1,000x more energy efficient than conventional processors for certain sparse, event-driven workloads, while IBM's TrueNorth achieves similar gains in pattern recognition tasks.

Standardization efforts focus on establishing consistent measurement methodologies across different neuromorphic platforms. Standards working groups and community benchmarking initiatives such as NeuroBench are working toward common terminology and performance metrics for neuromorphic computing, including energy efficiency benchmarks. These efforts must account for factors that change power consumption from one measurement to the next, such as varying spike rates, different neural network topologies, and diverse application requirements.
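A simple energy model shows why spike rate has to be fixed before efficiency figures can be compared. The sketch below assumes that chip power is a constant static term plus a fixed energy cost per synaptic operation. The model and every default value are illustrative assumptions, not data from a specific device.

```python
def efficiency_sops_per_watt(
    mean_rate_hz: float,
    neurons: int = 100_000,            # assumed network size
    fanout: int = 128,                 # assumed synapses driven per spike
    energy_per_op_j: float = 25e-12,   # assumed dynamic energy per synaptic op
    static_power_w: float = 0.02,      # assumed leakage and clocking power
) -> float:
    """Efficiency under the model: power = static + ops_per_second * energy_per_op."""
    sops = mean_rate_hz * neurons * fanout
    power_w = static_power_w + sops * energy_per_op_j
    return sops / power_w


# The same chip reports very different efficiency at different spike rates.
for rate in (1.0, 10.0, 100.0):
    print(f"{rate:6.1f} Hz -> {efficiency_sops_per_watt(rate):.3e} SOPS/W")
```

Under this model, efficiency rises with activity because static power is spread over more operations, and it approaches but never reaches 1 / `energy_per_op_j`. A benchmark that reports SOPS/W without stating the spike rate and network topology is therefore hard to interpret.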

Application-specific energy efficiency targets vary significantly across domains. Edge AI applications typically set the most demanding targets, because battery-powered operation leaves only a small power budget for computation. Data center deployments may accept lower efficiency in exchange for higher absolute performance. Autonomous vehicle applications demand real-time processing within energy budgets constrained by automotive power systems, which calls for efficiency targets tailored to safety-critical scenarios.
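An efficiency target for a given application can be derived from its workload and power budget. The sketch below shows the arithmetic for a hypothetical battery-powered device. The function names and sample numbers are assumptions for illustration, and the estimate ignores sensors, memory, radios and other system power.

```python
def required_sops_per_watt(workload_sops: float, power_budget_w: float) -> float:
    """Minimum chip efficiency needed to run the workload within the budget."""
    return workload_sops / power_budget_w


def battery_life_hours(
    battery_wh: float, workload_sops: float, chip_sops_per_watt: float
) -> float:
    """Hours of operation if the neuromorphic chip were the only load."""
    power_w = workload_sops / chip_sops_per_watt
    return battery_wh / power_w


# Hypothetical edge device: 1e9 synaptic ops per second on a 10 mW budget.
target = required_sops_per_watt(workload_sops=1e9, power_budget_w=0.01)
print(f"required efficiency: {target:.3e} SOPS/W")

# Hypothetical chip at 4e10 SOPS/W running from a 1 Wh battery.
hours = battery_life_hours(battery_wh=1.0, workload_sops=1e9, chip_sops_per_watt=4e10)
print(f"estimated battery life: {hours:.1f} h")
```

With these sample numbers the chip draws about 25 mW, which gives about 40 hours from the 1 Wh battery but exceeds the 10 mW budget. This is the kind of gap an application-specific target is meant to expose.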

Energy efficiency standards also need to cover dynamic power management techniques specific to neuromorphic systems. These include adaptive voltage scaling based on neural activity levels, selective activation of processing cores, and workload distribution across multiple neuromorphic chips. The aim is to improve overall system efficiency while maintaining computational accuracy and response times.
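Two of these techniques, activity-based voltage scaling and selective core activation, can be sketched as a simple per-core policy. The thresholds, voltage levels and function names below are hypothetical. A real controller would also have to model wake-up latency and the timing margin available at each operating point.

```python
from typing import List

# Hypothetical operating points: (max spikes per time window, supply voltage in volts).
VOLTAGE_LEVELS = [(100, 0.6), (1_000, 0.8)]
MAX_VOLTAGE = 1.0


def select_voltage(spikes_in_window: int) -> float:
    """Return 0.0 (power-gated) for an idle core, otherwise the lowest
    voltage level whose spike capacity covers the observed activity."""
    if spikes_in_window == 0:
        return 0.0
    for max_spikes, voltage in VOLTAGE_LEVELS:
        if spikes_in_window <= max_spikes:
            return voltage
    return MAX_VOLTAGE


def plan_power_states(core_activity: List[int]) -> List[float]:
    """Choose a supply voltage for every core from its recent spike count."""
    return [select_voltage(spikes) for spikes in core_activity]


print(plan_power_states([0, 40, 750, 5_000]))  # -> [0.0, 0.6, 0.8, 1.0]
```

Idle cores are switched off entirely, and lightly loaded cores run at reduced voltage. This is where event-driven hardware recovers most of its energy on sparse workloads.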
