Large-Scale Neuromorphic Networks for Cognitive Computing

MAR 11, 2026 | 10 MIN READ

Neuromorphic Computing Background and Cognitive Goals

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structural and functional principles of biological neural networks. This field emerged in the 1980s through the pioneering work of Carver Mead at Caltech, who envisioned silicon-based systems that could mimic the brain's energy-efficient processing capabilities. Unlike traditional von Neumann architectures that separate memory and processing units, neuromorphic systems integrate these functions within individual computational elements, enabling massively parallel and distributed processing.

The evolution of neuromorphic computing has been driven by the fundamental limitations of conventional digital systems in handling cognitive tasks. While traditional processors excel at sequential, rule-based computations, they struggle with pattern recognition, sensory processing, and adaptive learning tasks that biological systems perform effortlessly. This disparity becomes particularly pronounced when considering energy efficiency, as the human brain operates on approximately 20 watts while performing complex cognitive functions that would require megawatts in conventional supercomputers.

Large-scale neuromorphic networks represent the next evolutionary step, scaling beyond individual chips to interconnected systems capable of supporting sophisticated cognitive computing applications. These networks aim to replicate the brain's hierarchical organization, featuring multiple layers of processing units that can handle increasingly complex abstractions. The scalability challenge involves maintaining the brain-like properties of local connectivity, sparse activation, and event-driven processing across distributed hardware platforms.

The primary technical objectives for large-scale neuromorphic networks center on achieving brain-scale connectivity and processing capabilities. Current targets include supporting billions of artificial neurons with trillions of synaptic connections, while maintaining real-time processing speeds and ultra-low power consumption. These systems must demonstrate adaptive learning capabilities, enabling continuous improvement through experience without explicit reprogramming.

Cognitive computing goals encompass several key areas including real-time sensory processing, autonomous decision-making, and contextual understanding. The networks should exhibit emergent intelligence properties such as pattern completion, associative memory, and predictive modeling. Additionally, these systems aim to support neuroplasticity mechanisms that enable structural and functional adaptation based on input patterns and feedback signals.

The convergence of neuromorphic hardware advances and cognitive computing requirements is driving research toward hybrid architectures that combine analog and digital processing elements. These systems seek to bridge the gap between biological intelligence and artificial computation, potentially revolutionizing applications in robotics, autonomous systems, and brain-computer interfaces.

Market Demand for Brain-Inspired Computing Systems

The global market for brain-inspired computing systems is growing, driven by increasing demand for energy-efficient artificial intelligence solutions. Traditional von Neumann architectures face significant limitations in handling the massively parallel computations required for modern AI applications, creating substantial market opportunities for neuromorphic computing technologies. Industries ranging from autonomous vehicles to robotics are actively seeking alternatives that can deliver real-time processing while keeping power consumption low.

Enterprise applications represent a particularly lucrative segment, with organizations requiring advanced pattern recognition, sensory processing, and adaptive learning capabilities. Financial institutions are exploring neuromorphic systems for fraud detection and algorithmic trading, while healthcare providers seek brain-inspired solutions for medical imaging analysis and diagnostic support systems. The manufacturing sector demonstrates strong interest in neuromorphic networks for predictive maintenance, quality control, and autonomous production line optimization.

Edge computing applications constitute another rapidly expanding market segment. The proliferation of Internet of Things devices and smart sensors creates demand for localized processing capabilities that can operate efficiently under power and bandwidth constraints. Neuromorphic networks offer compelling advantages for edge deployment scenarios, including reduced latency, improved privacy through local processing, and enhanced reliability in disconnected environments.

The defense and aerospace sectors present significant market opportunities for large-scale neuromorphic networks. Military applications require robust cognitive computing systems capable of operating in challenging environments while processing complex sensory inputs for surveillance, reconnaissance, and autonomous navigation. Space exploration missions particularly benefit from neuromorphic architectures due to their fault tolerance and energy efficiency characteristics.

Consumer electronics manufacturers are increasingly incorporating brain-inspired computing elements into smartphones, wearable devices, and smart home systems. The growing emphasis on personalized user experiences and context-aware applications drives demand for neuromorphic solutions that can learn and adapt to individual usage patterns while maintaining acceptable battery life.

Research institutions and academic organizations represent an emerging market segment, requiring scalable neuromorphic platforms for cognitive computing research and educational purposes. The need for accessible, programmable brain-inspired computing systems continues to expand as interdisciplinary research programs proliferate across neuroscience, computer science, and engineering disciplines.

Current State of Large-Scale Neuromorphic Architectures

Large-scale neuromorphic architectures have evolved significantly over the past decade, with several breakthrough implementations demonstrating the feasibility of brain-inspired computing at unprecedented scales. Intel's Loihi chip represents a pivotal advancement, featuring 128 neuromorphic cores with over 130,000 artificial neurons and 130 million synapses. Each core operates asynchronously, enabling event-driven computation that mimics biological neural networks' sparse activation patterns.
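
The event-driven style of computation described above can be illustrated with a minimal sketch of a leaky integrate-and-fire neuron whose state is touched only when an input spike arrives. This is not Loihi's actual neuron model or API; the class name `EventDrivenLIF`, the time constant, and the threshold are all assumptions chosen for illustration.

```python
import math


class EventDrivenLIF:
    """Leaky integrate-and-fire neuron that is updated only on input events.

    Hypothetical illustration: parameters are placeholders, not values
    taken from any specific chip.
    """

    def __init__(self, tau_ms=20.0, threshold=1.0):
        self.tau_ms = tau_ms        # membrane time constant (assumed)
        self.threshold = threshold  # firing threshold (assumed)
        self.v = 0.0                # membrane potential
        self.last_t_ms = 0.0        # time of the previous input event

    def receive(self, t_ms, weight):
        """Process one input spike at time t_ms; return True if the neuron fires."""
        # Apply the leak lazily for the whole silent interval since the last event,
        # so no work is done while the neuron receives no input.
        dt = t_ms - self.last_t_ms
        self.v *= math.exp(-dt / self.tau_ms)
        self.last_t_ms = t_ms

        # Integrate the incoming spike.
        self.v += weight
        if self.v >= self.threshold:
            self.v = 0.0  # reset after firing
            return True
        return False


neuron = EventDrivenLIF()
# (time in ms, synaptic weight): two spikes close together, then one much later.
events = [(1.0, 0.6), (2.0, 0.6), (50.0, 0.6)]
fired = [neuron.receive(t, w) for t, w in events]
print(fired)
```

Because the leak is applied in one step per event, the cost of the simulation grows with the number of spikes rather than with elapsed time, which is the property that sparse activation exploits.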

IBM's TrueNorth architecture has established another milestone in the field, incorporating 4,096 cores with approximately 1 million programmable neurons and 256 million synapses. The chip operates with remarkable energy efficiency, consuming only 70 milliwatts during active operation. TrueNorth's architecture emphasizes real-time processing capabilities, making it particularly suitable for sensory data processing and pattern recognition applications.
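
As a rough consistency check on these figures, the sketch below assumes the commonly cited TrueNorth layout of 256 neurons per core with 256 inputs each. That per-core layout is an assumption not stated in this article; only the core count and the 70 milliwatt figure come from the text.

```python
CORES = 4096             # from the text
POWER_W = 0.070          # 70 milliwatts, from the text
NEURONS_PER_CORE = 256   # assumption: commonly cited per-core layout
INPUTS_PER_NEURON = 256  # assumption: 256 x 256 synaptic crossbar per core

total_neurons = CORES * NEURONS_PER_CORE
total_synapses = total_neurons * INPUTS_PER_NEURON
power_per_neuron_nw = POWER_W / total_neurons * 1e9

print(f"neurons:  {total_neurons:,}")
print(f"synapses: {total_synapses:,}")
print(f"power per neuron: {power_per_neuron_nw:.1f} nW")
```

Under these assumptions the totals come to 1,048,576 neurons and 268,435,456 synapses, which matches the rounded "1 million" and "256 million" quoted above, and the power budget works out to well under 100 nanowatts per neuron.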

SpiNNaker, developed by the University of Manchester, takes a different approach by using ARM processors configured to simulate biological neural networks. The system can scale up to 1 million ARM cores, theoretically capable of simulating portions of the human brain in real time. This architecture excels in modeling complex neural dynamics and supports various neural network models simultaneously.

Current neuromorphic architectures face several technical challenges that limit their widespread adoption. Memory wall issues persist wherever weights or neural state must be fetched from memory outside the core, the same data-movement cost that burdens traditional von Neumann architectures running neural computation. Some existing solutions employ hybrid approaches, combining analog and digital components to balance computational efficiency with programming flexibility.

Scalability remains a critical bottleneck, particularly in interconnect design and communication protocols between neuromorphic cores. Current architectures typically support thousands to millions of neurons, falling short of the billions required for human-level cognitive computing. Power consumption, while significantly lower than conventional processors for specific tasks, still presents challenges for battery-powered applications.

Programming paradigms for neuromorphic systems are still evolving, with most platforms requiring specialized knowledge of neural network principles. Standard software development tools and frameworks are limited, creating barriers for broader adoption across different application domains.

Geographically, neuromorphic research concentrates primarily in North America and Europe, with significant contributions from research institutions and technology companies. Asia-Pacific regions are increasingly investing in neuromorphic technologies, particularly in China and South Korea, where government initiatives support brain-inspired computing research.

The current technological landscape indicates that while large-scale neuromorphic architectures have demonstrated promising capabilities in specific domains such as sensory processing and pattern recognition, achieving general-purpose cognitive computing remains an ongoing challenge requiring continued innovation in hardware design, software frameworks, and system integration approaches.

Existing Large-Scale Neuromorphic Network Solutions

01 Neuromorphic hardware architectures and chip designs

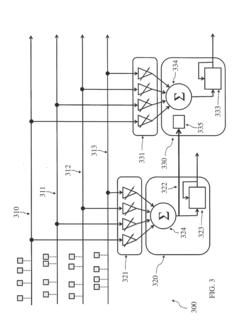

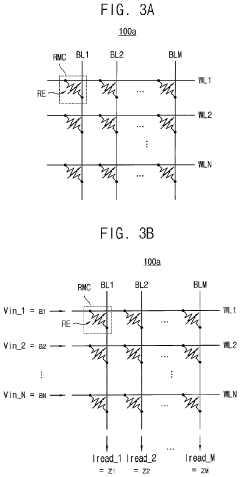

Large-scale neuromorphic networks require specialized hardware architectures that mimic biological neural systems. These architectures include custom chip designs with integrated circuits optimized for parallel processing of neural computations. The hardware implementations feature specialized components such as synaptic arrays, neuron circuits, and interconnection networks that enable efficient processing of spiking neural networks at scale. Advanced fabrication techniques and novel materials are employed to create energy-efficient neuromorphic processors capable of handling millions of artificial neurons and synapses.

- Neuromorphic hardware architectures and chip designs: Large-scale neuromorphic networks require specialized hardware architectures that mimic biological neural systems. These architectures include custom chip designs with integrated circuits optimized for parallel processing of neural computations. The hardware implementations feature specialized components such as synaptic arrays, neuron circuits, and interconnection networks that enable efficient processing of spiking neural networks at scale. Advanced fabrication techniques and circuit designs allow for high-density integration of neuromorphic processing elements.

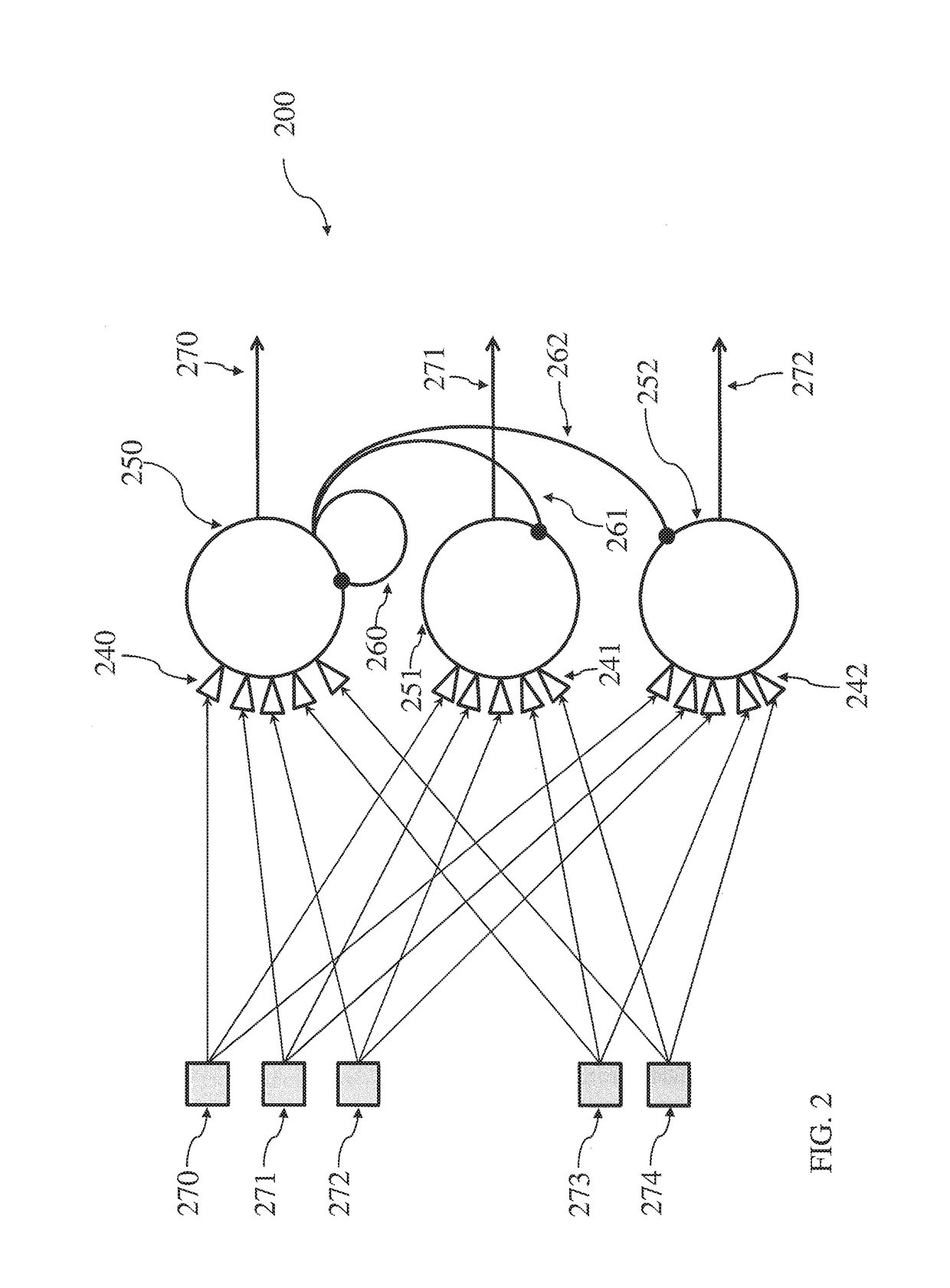

- Synaptic plasticity and learning mechanisms: Implementation of learning algorithms in large-scale neuromorphic systems involves synaptic weight adjustment mechanisms that enable adaptive behavior. These mechanisms include spike-timing-dependent plasticity and other biologically-inspired learning rules that allow the network to modify connection strengths based on neural activity patterns. The learning mechanisms are designed to operate efficiently at scale, enabling the network to adapt and improve performance through experience while maintaining low power consumption.

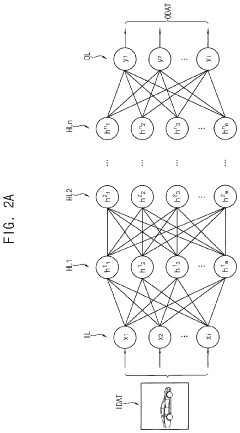

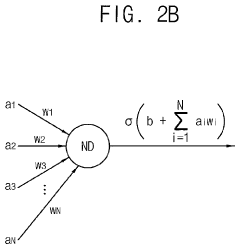

- Network topology and connectivity patterns: The organization and interconnection structure of large-scale neuromorphic networks involves specific topological arrangements that balance computational efficiency with biological plausibility. These patterns include hierarchical structures, recurrent connections, and modular organizations that enable complex information processing. The connectivity schemes are designed to support scalable implementations while maintaining the ability to represent and process diverse types of information across multiple layers and regions of the network.

- Power management and energy efficiency optimization: Energy-efficient operation of large-scale neuromorphic networks requires specialized power management strategies that minimize energy consumption while maintaining computational performance. These approaches include event-driven processing, dynamic voltage scaling, and power gating techniques that reduce energy usage during periods of low activity. The optimization methods take advantage of the sparse and asynchronous nature of neural computations to achieve significant power savings compared to traditional computing architectures.

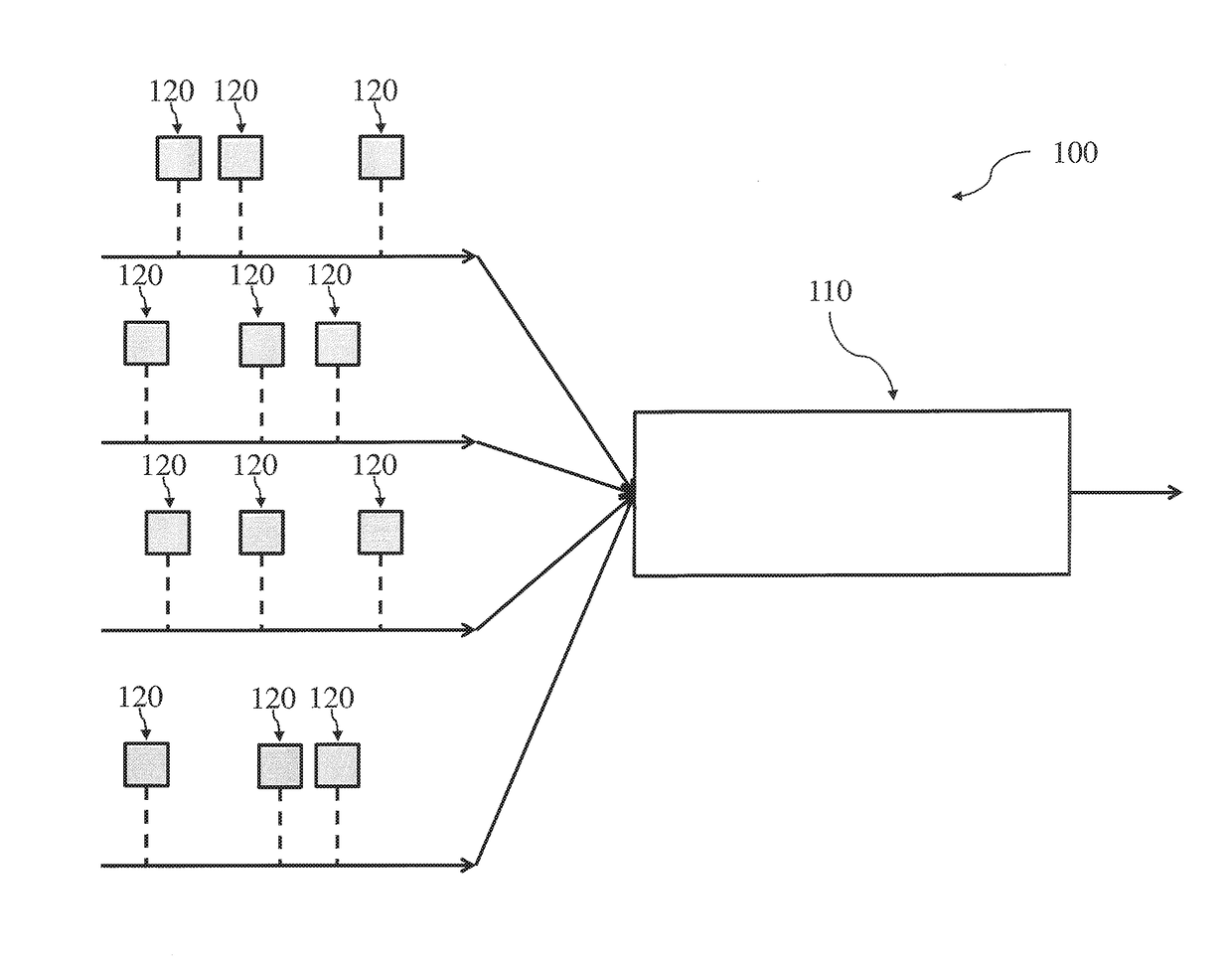

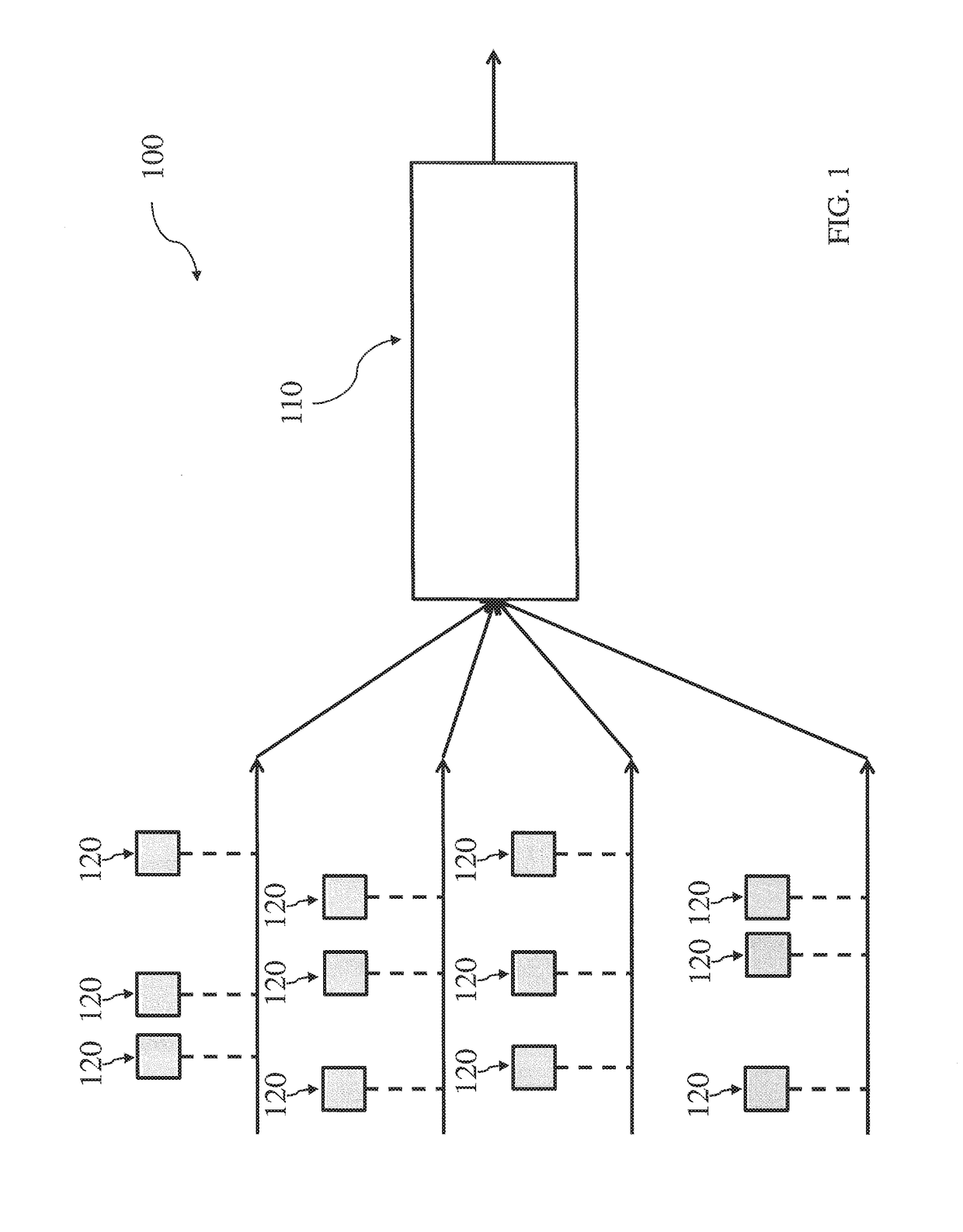

- Scalability and distributed processing frameworks: Scaling neuromorphic networks to large sizes requires distributed processing frameworks that enable coordination across multiple processing units or chips. These frameworks include communication protocols, routing mechanisms, and synchronization methods that allow different parts of the network to operate cohesively. The scalability solutions address challenges such as inter-chip communication latency, load balancing, and fault tolerance to enable the construction of networks with millions or billions of neurons and synapses.
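
The spike-timing-dependent plasticity rule mentioned in the list above can be sketched in its simplest pair-based form: a synapse is strengthened when the presynaptic spike precedes the postsynaptic spike and weakened otherwise, with an effect that decays exponentially with the time difference. The function names, amplitudes, and time constants below are illustrative assumptions, not parameters of any particular chip.

```python
import math


def stdp_delta(dt_ms, a_plus=0.01, a_minus=0.012,
               tau_plus_ms=20.0, tau_minus_ms=20.0):
    """Pair-based STDP weight change for dt_ms = t_post - t_pre.

    All parameter values are placeholders chosen for illustration.
    """
    if dt_ms > 0:
        # Pre fired before post: potentiation, weaker for larger gaps.
        return a_plus * math.exp(-dt_ms / tau_plus_ms)
    if dt_ms < 0:
        # Post fired before pre: depression, weaker for larger gaps.
        return -a_minus * math.exp(dt_ms / tau_minus_ms)
    return 0.0


def apply_stdp(weight, dt_ms, w_min=0.0, w_max=1.0):
    """Update one synaptic weight and clamp it to the range [w_min, w_max]."""
    return min(w_max, max(w_min, weight + stdp_delta(dt_ms)))


w = 0.5
w = apply_stdp(w, dt_ms=5.0)    # causal pairing strengthens the synapse
w = apply_stdp(w, dt_ms=-5.0)   # anti-causal pairing weakens it
print(round(w, 4))
```

The update depends only on the two spike times and the current weight, so it can be computed locally at the synapse, which is why rules of this kind suit event-driven hardware.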

02 Spiking neural network algorithms and learning mechanisms

Implementation of learning algorithms specifically designed for neuromorphic systems is crucial for large-scale networks. These mechanisms include spike-timing-dependent plasticity, unsupervised learning rules, and bio-inspired training methods that enable the network to adapt and learn from input patterns. The algorithms are optimized for event-driven computation and temporal coding schemes that leverage the asynchronous nature of spiking neurons. Various plasticity rules and synaptic weight update mechanisms are employed to achieve efficient learning in large-scale deployments.

03 Scalable interconnection and communication protocols

Efficient communication infrastructure is essential for connecting large numbers of neuromorphic processing elements. This includes hierarchical routing schemes, packet-based communication protocols, and network-on-chip architectures that minimize latency and power consumption. The interconnection systems support both local and global connectivity patterns found in biological neural networks, enabling flexible topology configurations. Advanced addressing schemes and data encoding methods facilitate the transmission of spike events across the network while maintaining temporal precision.

04 Power management and energy efficiency optimization

Energy efficiency is a critical consideration in large-scale neuromorphic systems. Various power management techniques are employed including dynamic voltage and frequency scaling, clock gating, and event-driven activation of processing elements. The designs incorporate low-power circuit techniques and optimize the trade-off between computational performance and energy consumption. Advanced power distribution networks and thermal management solutions ensure stable operation of densely packed neuromorphic chips while minimizing overall power requirements.

05 System integration and application frameworks

Practical deployment of large-scale neuromorphic networks requires comprehensive system integration approaches and software frameworks. These include development tools, simulation environments, and programming interfaces that facilitate the mapping of neural network models onto neuromorphic hardware. The frameworks provide abstraction layers that enable researchers and developers to design and deploy applications without detailed knowledge of underlying hardware. Integration with conventional computing systems and support for various application domains such as pattern recognition, sensory processing, and cognitive computing are also addressed.

Key Players in Neuromorphic and Cognitive Computing

The field of large-scale neuromorphic networks for cognitive computing is an emerging technology sector in its early growth stage, with significant market potential driven by increasing demand for brain-inspired computing architectures. The market demonstrates substantial growth prospects as organizations seek energy-efficient alternatives to traditional von Neumann architectures for AI workloads. Technology maturity varies considerably across players, with established technology giants like IBM, Intel, and Qualcomm leading advanced research and development efforts, while specialized companies such as Cambricon Technologies focus on dedicated neuromorphic chip solutions. Academic institutions including Zhejiang University, Fudan University, and University of California contribute foundational research, supported by research organizations like Fraunhofer-Gesellschaft and IMEC. The competitive landscape shows a mix of hardware manufacturers, software developers, and research entities collaborating to advance neuromorphic computing from laboratory concepts toward commercial applications, though widespread deployment remains several years away.

International Business Machines Corp.

Technical Solution: IBM has developed the TrueNorth neuromorphic chip architecture, featuring 1 million programmable neurons and 256 million synapses on a single chip and consuming only 70 milliwatts of power during real-time operation[1]. The company's neuromorphic computing platform integrates spike-based neural networks that can process sensory data in real time with ultra-low power consumption. IBM's approach focuses on event-driven computation where neurons only consume power when processing spikes, enabling massive parallelism for cognitive tasks like pattern recognition, sensory processing, and adaptive learning. Their neuromorphic systems can scale to support large networks through multi-chip integration while maintaining the brain-inspired computing paradigm[2].

Strengths: Ultra-low power consumption, proven scalability to million-neuron networks, strong research foundation. Weaknesses: Limited commercial availability, requires specialized programming paradigms, integration challenges with traditional computing systems.

Cambricon Technologies Corp. Ltd.

Technical Solution: Cambricon has developed brain-inspired computing architectures that combine neuromorphic principles with AI acceleration capabilities, focusing on spike-based neural network processing for cognitive applications[5]. Their neuromorphic processors support large-scale neural network deployment with specialized hardware optimizations for synaptic operations and neural dynamics simulation. The company's approach integrates traditional deep learning acceleration with neuromorphic computing paradigms, enabling hybrid cognitive systems that can process both conventional AI workloads and brain-inspired algorithms. Cambricon's neuromorphic solutions target applications in autonomous vehicles, robotics, and intelligent sensing systems where real-time cognitive processing is essential[6].

Strengths: Hybrid AI and neuromorphic capabilities, strong market presence in China, integrated hardware-software solutions. Weaknesses: Limited global market penetration, primarily focused on Chinese market, newer entrant in pure neuromorphic computing.

Core Innovations in Scalable Neuromorphic Architectures

Neuromorphic architecture with multiple coupled neurons using internal state neuron information

Patent | Active | US20170372194A1

Innovation

- A neuromorphic architecture featuring interconnected neurons with internal state information links, allowing for the transmission of internal state information across layers to modify the operation of other neurons, enhancing the system's performance and capability in data processing, pattern recognition, and correlation detection.

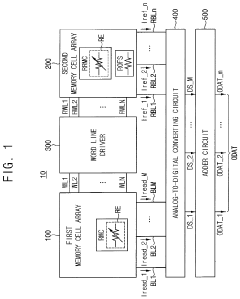

Neuromorphic computing device and method of designing the same

Patent | Active | US11881260B2

Innovation

- Incorporating a second memory cell array with offset resistors connected in parallel, using the same resistive material as the first memory cell array, to convert read currents into digital signals, thereby mitigating temperature and time dependency, and ensuring consistent resistance across offset resistors for enhanced sensing performance.

Hardware-Software Co-design for Neuromorphic Scaling

The successful deployment of large-scale neuromorphic networks for cognitive computing fundamentally depends on achieving seamless integration between specialized hardware architectures and adaptive software frameworks. This co-design approach represents a paradigm shift from traditional computing models, where hardware and software are developed independently, toward a unified methodology that optimizes both layers simultaneously for neuromorphic scaling challenges.

Hardware-software co-design in neuromorphic systems requires establishing unified abstraction layers that can efficiently map cognitive algorithms onto diverse neuromorphic substrates. These abstraction frameworks must accommodate the heterogeneous nature of neuromorphic processors, including memristive crossbars, spiking neural processing units, and analog-digital hybrid architectures. The co-design methodology enables dynamic resource allocation and workload distribution across different processing elements while maintaining computational coherence.

The scaling challenge necessitates developing hierarchical design methodologies that can address both intra-chip and inter-chip communication protocols. At the intra-chip level, co-design focuses on optimizing synaptic connectivity patterns, neuron placement strategies, and local memory hierarchies. Inter-chip scaling requires sophisticated routing algorithms and communication protocols that minimize latency while preserving the temporal dynamics essential for cognitive processing tasks.
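
One way to picture the split between intra-chip and inter-chip traffic is an address-event style packet, where each spike is transmitted as the address of the neuron that fired. The sketch below packs a hypothetical (chip, core, neuron) address into one integer and classifies where the event must travel. The field widths and function names are assumptions made for illustration and do not describe any specific platform's packet format.

```python
# Hypothetical field widths for a 32-bit spike address (assumptions).
CHIP_BITS, CORE_BITS, NEURON_BITS = 8, 12, 12


def pack_event(chip, core, neuron):
    """Pack a spike source address into a single integer word."""
    for value, bits, name in ((chip, CHIP_BITS, "chip"),
                              (core, CORE_BITS, "core"),
                              (neuron, NEURON_BITS, "neuron")):
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{name} id {value} does not fit in {bits} bits")
    return (chip << (CORE_BITS + NEURON_BITS)) | (core << NEURON_BITS) | neuron


def unpack_event(word):
    """Recover (chip, core, neuron) from a packed word."""
    neuron = word & ((1 << NEURON_BITS) - 1)
    core = (word >> NEURON_BITS) & ((1 << CORE_BITS) - 1)
    chip = word >> (CORE_BITS + NEURON_BITS)
    return chip, core, neuron


def route(word, local_chip, local_core):
    """Classify the hop a spike needs, seen from one router's position."""
    chip, core, _ = unpack_event(word)
    if chip != local_chip:
        return "inter-chip"   # must leave the chip: highest latency
    if core != local_core:
        return "intra-chip"   # stays on the on-chip network
    return "local"            # delivered within the same core


word = pack_event(chip=3, core=100, neuron=2047)
print(unpack_event(word), route(word, local_chip=3, local_core=7))
```

Placing the chip identifier in the high bits lets a router decide on the most expensive hop first by inspecting only a prefix of the address, which is one simple form of hierarchical routing.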

Software frameworks for neuromorphic scaling must incorporate hardware-aware compilation techniques that can automatically partition neural network models across distributed neuromorphic resources. These compilers need to understand the unique constraints of neuromorphic hardware, including limited precision arithmetic, event-driven processing paradigms, and energy consumption profiles. Advanced scheduling algorithms become crucial for managing the asynchronous nature of spike-based computations across large-scale deployments.
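
The partitioning step of such a compiler can be sketched, in heavily simplified form, as packing neuron populations onto cores with a fixed neuron capacity and splitting any population that does not fit. This is a first-fit sketch under assumed inputs, not the algorithm of any real neuromorphic toolchain; a practical compiler would also weigh synapse memory, fan-in limits, and communication cost. The `partition` function and the population names are hypothetical.

```python
def partition(populations, neurons_per_core):
    """Assign neuron populations to cores in order, splitting where needed.

    populations: list of (name, neuron_count) pairs.
    Returns a list of cores; each core is a list of (name, start, stop)
    slices, where stop is exclusive.
    """
    if neurons_per_core <= 0:
        raise ValueError("neurons_per_core must be positive")

    cores = [[]]
    free = neurons_per_core  # remaining capacity of the current core
    for name, size in populations:
        start = 0
        while start < size:
            if free == 0:
                # Current core is full: open a new one.
                cores.append([])
                free = neurons_per_core
            take = min(free, size - start)
            cores[-1].append((name, start, start + take))
            start += take
            free -= take
    return cores


# Hypothetical three-layer network mapped onto cores of 256 neurons each.
layout = partition([("input", 300), ("hidden", 500), ("output", 10)],
                   neurons_per_core=256)
for core_id, slices in enumerate(layout):
    print(core_id, slices)
```

Filling cores in population order keeps neighbouring neurons of the same layer on the same core, which tends to keep their traffic local, but it ignores connectivity between layers, so it should be read as a baseline rather than an optimized placement.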

The co-design approach also addresses critical challenges in fault tolerance and reliability for large-scale neuromorphic systems. Hardware redundancy mechanisms must be complemented by software-based error detection and correction strategies that can adapt to the probabilistic nature of neuromorphic computations. This includes developing resilient learning algorithms that can maintain performance despite hardware variations and component failures.

Emerging co-design methodologies are exploring adaptive reconfiguration capabilities that allow neuromorphic systems to dynamically adjust their hardware-software mapping based on workload characteristics and performance requirements. This adaptive approach enables efficient resource utilization while supporting diverse cognitive computing applications ranging from real-time sensory processing to complex reasoning tasks.

Energy Efficiency Optimization in Large Neural Networks

Energy consumption is a critical bottleneck in scaling neuromorphic networks for cognitive computing applications. Traditional von Neumann architectures suffer from the memory wall problem, where moving data between processing units and memory consumes significantly more energy than the computation itself. Neuromorphic systems address this challenge by co-locating memory and processing elements, mimicking the brain's energy-efficient architecture, in which synapses store information locally at the site of computation.

The primary energy consumption sources in large-scale neuromorphic networks include spike generation, synaptic transmission, and plasticity mechanisms. Spike-based communication inherently provides energy advantages over continuous analog signals, as neurons only consume power during active periods. However, as network scale increases, the cumulative energy from millions of concurrent synaptic operations becomes substantial, requiring sophisticated optimization strategies.
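
The way these three sources add up with network size can be sketched with a first-order energy model. The per-event energies passed in below are placeholder values chosen only to make the arithmetic visible, not measurements of any device. The function and parameter names are likewise assumptions.

```python
def network_energy_joules(n_neurons, mean_rate_hz, fan_out, duration_s,
                          e_spike_j, e_synop_j,
                          e_plasticity_j=0.0, plastic_fraction=0.0):
    """First-order estimate: energy = spikes + synaptic events + plasticity updates.

    plastic_fraction is the share of synaptic events that also trigger a
    weight update (an assumption of this sketch).
    """
    spikes = n_neurons * mean_rate_hz * duration_s
    synaptic_events = spikes * fan_out
    weight_updates = synaptic_events * plastic_fraction
    return (spikes * e_spike_j
            + synaptic_events * e_synop_j
            + weight_updates * e_plasticity_j)

# Placeholder per-event energies, for illustration only.
small = network_energy_joules(1_000, 10.0, 100, 1.0, e_spike_j=1e-11, e_synop_j=1e-12)
large = network_energy_joules(1_000_000, 10.0, 100, 1.0, e_spike_j=1e-11, e_synop_j=1e-12)
print(small, large)
```

Because every term is proportional to the number of spikes, the total grows linearly with neuron count at a fixed firing rate and fan-out, and in this model the synaptic term dominates once fan-out is large.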

Dynamic voltage and frequency scaling techniques have emerged as fundamental approaches for neuromorphic energy management. These methods adjust operating parameters based on computational workload, reducing power consumption during periods of low neural activity. Advanced implementations incorporate predictive algorithms that anticipate network activity patterns, enabling proactive energy scaling before computational demands change.
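
A minimal sketch of predictive scaling is shown below. It assumes an exponential moving average as the activity predictor and a hypothetical table of three operating points. The relative power figure uses the standard approximation that dynamic power scales with voltage squared times frequency.

```python
# Hypothetical operating points: (activity ceiling in events/ms, volts, MHz).
OPERATING_POINTS = [
    (100.0, 0.6, 50.0),
    (1000.0, 0.8, 200.0),
    (float("inf"), 1.0, 400.0),
]

class ActivityPredictor:
    """Exponential moving average of observed spike activity."""

    def __init__(self, alpha=0.5):
        self.alpha = alpha
        self.estimate = 0.0

    def update(self, observed_events_per_ms):
        self.estimate = (self.alpha * observed_events_per_ms
                         + (1.0 - self.alpha) * self.estimate)
        return self.estimate

def select_operating_point(predicted_events_per_ms):
    """Pick the lowest-power point whose activity ceiling covers the prediction."""
    for ceiling, volts, mhz in OPERATING_POINTS:
        if predicted_events_per_ms <= ceiling:
            return volts, mhz

def relative_dynamic_power(volts, mhz, v_ref=1.0, f_ref=400.0):
    """Dynamic power relative to the reference point, using P ~ V^2 * f."""
    return (volts / v_ref) ** 2 * (mhz / f_ref)

predictor = ActivityPredictor(alpha=0.5)
quiet = select_operating_point(predictor.update(200.0))    # estimate becomes 100.0
busy = select_operating_point(predictor.update(2000.0))    # estimate becomes 1050.0
print(quiet, relative_dynamic_power(*quiet), busy)
```

The smoothing factor `alpha` trades responsiveness against stability: a larger value reacts faster to bursts but switches operating points more often.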

Sparse connectivity patterns significantly impact energy efficiency in large neuromorphic networks. Biological neural networks typically exhibit connection densities below 10%, suggesting that artificial implementations can achieve substantial energy savings through selective connectivity pruning. Adaptive pruning algorithms dynamically eliminate weak synaptic connections while preserving critical pathways, reducing energy overhead without sacrificing cognitive performance.
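
A simple form of such pruning is magnitude thresholding with a protected set. In the sketch below, the weight dictionary, the `threshold` value, and the notion of a `protected` set of synapses are illustrative assumptions. An adaptive scheme would re-run this step periodically as weights change during learning.

```python
def prune_synapses(weights, threshold, protected=frozenset()):
    """Drop synapses with |weight| below threshold unless they are protected.

    weights:   {(pre, post): weight}
    protected: synapses on critical pathways that must never be removed.
    """
    return {syn: w for syn, w in weights.items()
            if abs(w) >= threshold or syn in protected}

def connection_density(weights, n_neurons):
    """Fraction of possible directed connections (no self-loops) that exist."""
    return len(weights) / (n_neurons * (n_neurons - 1))

weights = {(0, 1): 0.9, (0, 2): -0.05, (1, 2): 0.4, (2, 0): 0.01}
pruned = prune_synapses(weights, threshold=0.1, protected={(2, 0)})
print(sorted(pruned), connection_density(pruned, n_neurons=3))
```

Here the weak synapse `(0, 2)` is removed, while the equally weak but protected synapse `(2, 0)` is kept.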

Event-driven processing architectures offer another promising optimization avenue. Unlike traditional clocked systems, event-driven neuromorphic processors perform work only when input spikes arrive, which avoids most of the dynamic power that a clocked design spends while idle (static leakage still remains). This approach becomes increasingly beneficial as network size grows, since large portions of the network remain inactive during typical cognitive tasks.
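
The difference from a clocked design can be seen in a small event-queue simulation. The sketch below is an assumption-laden toy: leaky integrate-and-fire neurons, a fixed synaptic delay, and membrane decay applied lazily only when an event reaches a neuron. The count of neuron updates stands in for the work, and therefore the dynamic energy, actually spent.

```python
import heapq
import math

def run_event_driven(input_events, synapses, n_neurons,
                     threshold=1.0, tau_ms=20.0, delay_ms=1.0, t_end_ms=100.0):
    """Simulate leaky integrate-and-fire neurons, touching only neurons that receive events.

    input_events: list of (time_ms, target_neuron, weight)
    synapses:     {pre_neuron: [(post_neuron, weight), ...]}
    Returns (list of (time_ms, neuron) spikes, number of neuron updates performed).
    """
    potential = [0.0] * n_neurons
    last_update = [0.0] * n_neurons
    queue = list(input_events)
    heapq.heapify(queue)
    spikes, updates = [], 0

    while queue:
        t, target, weight = heapq.heappop(queue)
        if t > t_end_ms:
            break
        # Apply the leak for the whole silent interval in one step.
        potential[target] *= math.exp(-(t - last_update[target]) / tau_ms)
        last_update[target] = t
        potential[target] += weight
        updates += 1
        if potential[target] >= threshold:
            potential[target] = 0.0  # reset after firing
            spikes.append((t, target))
            for post, w in synapses.get(target, ()):
                heapq.heappush(queue, (t + delay_ms, post, w))
    return spikes, updates

# Neuron 0 drives neuron 1; neuron 2 never receives input.
spikes, updates = run_event_driven([(0.0, 0, 1.2)], {0: [(1, 1.5)]}, n_neurons=3)
clocked_updates = 3 * 100  # a 1 ms clocked loop would update all 3 neurons for 100 steps
print(spikes, updates, clocked_updates)
```

Only two neuron updates are performed here, whereas a clocked simulation of the same 100 ms window at 1 ms resolution would perform 300, and the gap widens as more of the network sits idle.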

Hierarchical power management strategies enable fine-grained energy control across different network regions. Critical pathways that maintain essential cognitive functions operate at higher power levels, while peripheral processing areas use aggressive power reduction techniques. This selective approach preserves cognitive performance while maximizing overall energy efficiency in large-scale deployments.
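
One possible shape for such a policy is a two-level plan, with per-core modes decided first and a chip-level mode derived from them. The mode names, the `idle_rate_hz` cutoff, and the region layout in the sketch below are hypothetical and serve only to illustrate the hierarchy.

```python
def assign_power_modes(chips, idle_rate_hz=1.0):
    """Build a two-level power plan.

    chips: {chip: {core: {"critical": bool, "rate_hz": float}}}
    Core level: critical cores stay at "full", near-silent cores are "gated",
                everything else runs "reduced".
    Chip level: a chip may "sleep" only if every one of its cores is gated.
    """
    plan = {}
    for chip, cores in chips.items():
        core_modes = {}
        for core, info in cores.items():
            if info["critical"]:
                core_modes[core] = "full"
            elif info["rate_hz"] < idle_rate_hz:
                core_modes[core] = "gated"
            else:
                core_modes[core] = "reduced"
        all_gated = all(mode == "gated" for mode in core_modes.values())
        plan[chip] = {"chip_mode": "sleep" if all_gated else "active",
                      "cores": core_modes}
    return plan

chips = {
    "chip0": {"attention": {"critical": True, "rate_hz": 40.0},
              "texture": {"critical": False, "rate_hz": 5.0}},
    "chip1": {"spare_a": {"critical": False, "rate_hz": 0.1},
              "spare_b": {"critical": False, "rate_hz": 0.0}},
}
plan = assign_power_modes(chips)
print(plan)
```

In this example the chip hosting the critical pathway stays active with one core at full power, while the chip whose cores are all near-silent can be put to sleep as a unit.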
