Neuromorphic Processors for Real-Time Pattern Recognition

MAR 11, 2026 · 9 MIN READ

Neuromorphic Computing Background and Pattern Recognition Goals

Neuromorphic computing represents a paradigm shift in computational architecture, drawing inspiration from the structure and function of biological neural networks. This field emerged in the 1980s when Carver Mead pioneered the concept of using analog circuits to mimic neural and sensory systems. Unlike traditional von Neumann architectures that separate memory and processing units, neuromorphic processors integrate these functions within the same physical substrate, enabling massively parallel, low-power computation that closely resembles brain-like information processing.

The evolution of neuromorphic computing has been driven by the fundamental limitations of conventional digital processors when handling complex, unstructured data in real-time scenarios. Traditional architectures struggle with the computational demands of pattern recognition tasks due to their sequential processing nature and high energy consumption. Neuromorphic systems address these challenges by implementing event-driven computation, where information is processed only when changes occur, dramatically reducing power consumption while maintaining high processing speeds.
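To make the event-driven idea concrete, the following minimal Python sketch converts a sampled signal into change events. The function name `to_events`, the threshold, and the test signal are illustrative assumptions, not part of any neuromorphic toolchain.

```python
def to_events(samples, threshold):
    """Emit (index, polarity) events only when the signal has moved by at
    least `threshold` since the last sample that triggered an event."""
    events = []
    reference = samples[0]
    for i, value in enumerate(samples[1:], start=1):
        delta = value - reference
        if abs(delta) >= threshold:
            events.append((i, 1 if delta > 0 else -1))
            reference = value  # later changes are measured from here
    return events


# A mostly static signal with one short burst of activity (made-up data).
signal = [0.0] * 50 + [0.5, 1.0, 1.5, 1.0, 0.5] + [0.5] * 45
events = to_events(signal, threshold=0.4)

print(f"samples: {len(signal)}, events: {len(events)}")
```

In this example a 100-sample signal yields five events, so downstream processing would be triggered only during the brief burst of activity rather than on every sample.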

Pattern recognition represents one of the most promising application domains for neuromorphic processors, encompassing visual recognition, auditory processing, sensory fusion, and cognitive tasks. The biological inspiration behind neuromorphic computing aligns naturally with pattern recognition objectives, as the human brain excels at recognizing complex patterns in noisy, dynamic environments with remarkable efficiency and speed.

The primary technical objectives for neuromorphic processors in pattern recognition include achieving real-time processing capabilities with sub-millisecond latency, maintaining ultra-low power consumption suitable for edge computing applications, and providing adaptive learning mechanisms that enable continuous improvement without external training cycles. These processors aim to handle streaming data from multiple sensors simultaneously while performing complex feature extraction and classification tasks.

Current development goals focus on scaling neuromorphic architectures to support increasingly complex pattern recognition tasks while maintaining their inherent advantages of low power consumption and real-time processing. The integration of spike-based neural networks, memristive devices, and novel learning algorithms represents the convergence of multiple technological advances toward creating truly brain-inspired computing systems capable of sophisticated pattern recognition in resource-constrained environments.

Market Demand for Real-Time Pattern Recognition Systems

The global demand for real-time pattern recognition systems has experienced unprecedented growth across multiple industry verticals, driven by the increasing need for instantaneous decision-making capabilities in complex environments. Traditional computing architectures face significant limitations in processing speed and energy efficiency when handling pattern recognition tasks, creating substantial market opportunities for neuromorphic processor solutions.

Autonomous vehicle systems are among the most demanding applications, requiring millisecond-level response times for object detection, pedestrian recognition, and traffic sign interpretation. Adoption is constrained in part by the processing latency of conventional processor-based systems, which motivates neuromorphic alternatives that aim to process visual patterns with efficiency closer to that of biological vision.

Healthcare diagnostics has emerged as another high-growth sector, particularly in medical imaging and real-time patient monitoring applications. Emergency care environments demand immediate pattern analysis for ECG interpretation, radiological screening, and vital sign anomaly detection. The market gap between required processing speeds and available solutions continues to widen as healthcare facilities adopt more sophisticated monitoring technologies.

Industrial automation and quality control systems increasingly require real-time defect detection and process optimization capabilities. Manufacturing environments generate massive volumes of sensor data that must be analyzed instantaneously to prevent production failures and maintain quality standards. Current solutions often struggle with the computational intensity required for continuous pattern analysis at industrial scales.

Security and surveillance markets demonstrate substantial demand for real-time facial recognition, behavioral analysis, and threat detection systems. Airport security, border control, and urban surveillance networks require processing capabilities that exceed traditional hardware limitations, particularly when analyzing multiple video streams simultaneously.

Edge computing applications across Internet of Things deployments create additional market pressure for efficient pattern recognition solutions. Smart city infrastructure, environmental monitoring systems, and distributed sensor networks require local processing capabilities that minimize latency while maintaining low power consumption profiles.

The convergence of artificial intelligence adoption and edge computing requirements has created a substantial market gap that neuromorphic processors are uniquely positioned to address, with demand spanning from consumer electronics to critical infrastructure applications.

Current State and Challenges of Neuromorphic Processors

Neuromorphic processors represent a paradigm shift in computing architecture, drawing inspiration from the human brain's neural networks to process information. Currently, the field has achieved significant milestones with several commercial and research implementations demonstrating practical capabilities. Intel's Loihi chip, IBM's TrueNorth, and BrainChip's Akida processor exemplify the current state of neuromorphic technology, each offering unique approaches to spike-based computing and event-driven processing.

The global distribution of neuromorphic research reveals concentrated efforts in the United States, Europe, and Asia. Leading research institutions include Stanford University, MIT, ETH Zurich, and various national laboratories, while companies like Intel, IBM, Qualcomm, and emerging startups drive commercial development. European initiatives such as the Human Brain Project and Asian investments in brain-inspired computing have created a competitive international landscape.

Despite promising advances, neuromorphic processors face substantial technical challenges that limit widespread adoption. Programming complexity remains a primary obstacle, as traditional software development paradigms are incompatible with spike-based neural architectures. Developers must master new programming models, specialized development tools, and neuromorphic algorithms, creating a steep learning curve that hinders mainstream implementation.

Hardware standardization presents another critical challenge. Unlike conventional processors with established instruction sets and interfaces, neuromorphic chips lack unified standards for communication protocols, memory architectures, and inter-chip connectivity. This fragmentation complicates system integration and limits scalability for complex applications requiring multiple processing units.

Manufacturing scalability and cost-effectiveness constrain commercial viability. Some neuromorphic processors, particularly memristor-based designs, require specialized fabrication processes or novel materials, resulting in higher production costs than conventional CMOS processors manufactured at high volume. Limited production volumes further exacerbate cost issues, creating a barrier to mass-market penetration.

Power efficiency, while theoretically superior to conventional processors, faces practical implementation challenges. Real-world deployments often require additional circuitry for interfacing with traditional systems, potentially negating some energy advantages. Additionally, the sparse, event-driven nature of neuromorphic computing may not always align with continuous data processing requirements in certain applications.

Integration with existing computing ecosystems remains problematic. Most software frameworks, operating systems, and development environments are designed for von Neumann architectures, necessitating significant adaptations or complete redesigns for neuromorphic platforms. This compatibility gap slows adoption and increases development costs for organizations considering neuromorphic solutions.

Existing Neuromorphic Solutions for Pattern Recognition

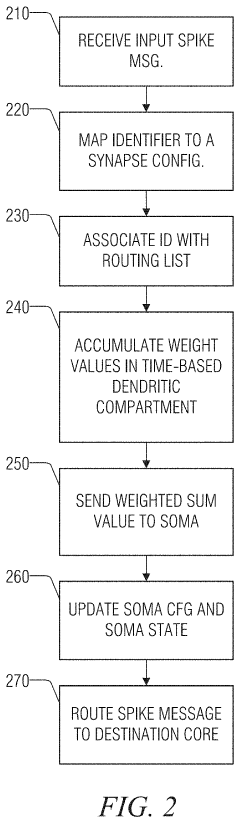

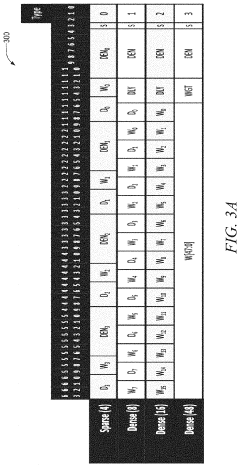

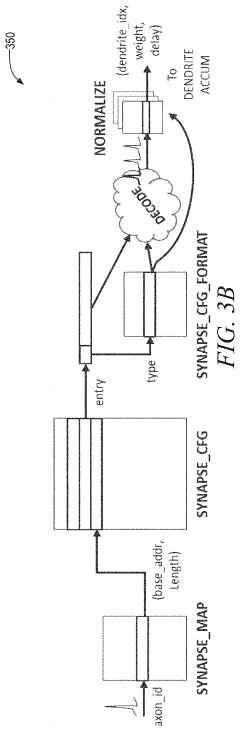

01 Spiking neural network architectures for pattern recognition

Neuromorphic processors utilize spiking neural network (SNN) architectures that mimic biological neural systems for pattern recognition tasks. These architectures process temporal information through spike-based communication, enabling efficient recognition of spatiotemporal patterns. The spiking neurons fire based on accumulated input signals, allowing for event-driven processing that reduces power consumption while maintaining high recognition accuracy.

- Spiking neural network architectures for neuromorphic pattern recognition: Neuromorphic processors utilize spiking neural network (SNN) architectures that mimic biological neural systems for pattern recognition tasks. These architectures employ event-driven processing where neurons communicate through discrete spikes, enabling efficient temporal pattern recognition. The spiking mechanisms allow for low-power computation while maintaining high accuracy in recognizing complex patterns across various domains including visual, auditory, and sensor data processing.

- Memristor-based synaptic devices for pattern learning: Memristive devices are integrated into neuromorphic processors to implement synaptic plasticity for pattern recognition applications. These devices provide analog weight storage and in-memory computing capabilities that enable efficient learning of patterns through mechanisms such as spike-timing-dependent plasticity. The memristor arrays facilitate parallel processing and reduce data movement between memory and processing units, significantly improving energy efficiency in pattern recognition tasks.

- Hardware acceleration units for convolutional neural networks: Specialized hardware acceleration units are designed within neuromorphic processors to optimize convolutional operations essential for pattern recognition. These units implement parallel processing architectures that efficiently handle convolution, pooling, and activation functions. The hardware accelerators enable real-time pattern recognition by reducing computational latency and power consumption compared to traditional von Neumann architectures.

- Adaptive learning algorithms for dynamic pattern classification: Neuromorphic processors incorporate adaptive learning algorithms that enable continuous learning and classification of evolving patterns without requiring complete retraining. These algorithms implement online learning mechanisms that adjust synaptic weights based on incoming data streams, allowing the system to adapt to new pattern variations. The adaptive approach enhances recognition accuracy in dynamic environments where pattern characteristics change over time.

- Multi-modal sensor fusion for enhanced pattern recognition: Neuromorphic architectures integrate multi-modal sensor inputs to improve pattern recognition performance through sensor fusion techniques. The processors combine data from various sensing modalities such as vision, audio, and tactile sensors, processing them through specialized neural pathways. This multi-modal approach enables more robust pattern recognition by leveraging complementary information from different sensor types, improving accuracy in complex recognition scenarios.
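The statement that spiking neurons fire based on accumulated input can be illustrated with a discrete-time leaky integrate-and-fire (LIF) neuron, one of the simplest spiking neuron models. This is a minimal sketch: the leak factor, threshold, and input values are assumptions chosen for illustration and do not describe any specific chip.

```python
def simulate_lif(input_current, leak=0.9, threshold=1.0, v_reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron.

    Each step the membrane potential decays by `leak`, adds the input,
    and emits a spike (then resets) once it reaches `threshold`.
    """
    v = 0.0
    spikes = []
    for current in input_current:
        v = leak * v + current
        if v >= threshold:
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
    return spikes


weak = simulate_lif([0.05] * 20)   # input too small to ever reach threshold
strong = simulate_lif([0.4] * 20)  # input large enough to fire periodically

print("weak input spikes:  ", sum(weak))
print("strong input spikes:", sum(strong))
```

With these parameters the weak input settles below threshold and never fires, while the strong input fires every third step. The neuron therefore produces output, and triggers downstream work, only when its input is strong enough.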

02 Memristor-based neuromorphic computing for pattern classification

Memristive devices are integrated into neuromorphic processors to implement synaptic weights and enable in-memory computing for pattern recognition. These devices provide analog storage and computation capabilities, allowing for efficient implementation of neural network operations. The memristor arrays can be configured to perform matrix-vector multiplications essential for pattern classification tasks, offering advantages in terms of energy efficiency and processing speed.

03 Hardware acceleration architectures for neural pattern processing

Specialized hardware architectures are designed to accelerate pattern recognition operations in neuromorphic processors. These architectures include parallel processing units, dedicated neural processing engines, and optimized data flow paths that enhance throughput for pattern matching and classification tasks. The hardware implementations support various neural network topologies and can be reconfigured to adapt to different pattern recognition applications.

04 Learning algorithms and training methods for neuromorphic pattern recognition

Advanced learning algorithms are employed to train neuromorphic processors for pattern recognition tasks. These methods include spike-timing-dependent plasticity, online learning mechanisms, and hybrid training approaches that combine supervised and unsupervised learning. The algorithms enable the neuromorphic systems to adapt and improve their pattern recognition capabilities through experience, supporting applications in dynamic environments where patterns may evolve over time.

05 Multi-modal sensory integration for enhanced pattern recognition

Neuromorphic processors integrate multiple sensory inputs to improve pattern recognition performance. These systems process data from various modalities such as visual, auditory, and tactile sensors simultaneously, enabling more robust and context-aware pattern detection. The multi-modal integration leverages the parallel processing capabilities of neuromorphic architectures to fuse information from different sources, resulting in improved recognition accuracy and reduced false positives in complex environments.

Key Players in Neuromorphic Processor Industry

The neuromorphic processors for real-time pattern recognition field represents an emerging technology sector transitioning from research to early commercialization phases. The market remains nascent with significant growth potential as demand for edge AI processing intensifies across IoT, automotive, and mobile applications. Technology maturity varies considerably among key players, with established semiconductor giants like Intel Corp. and IBM leading foundational research and prototype development, while Samsung Electronics Co., Ltd. and Micron Technology leverage their manufacturing capabilities for scalable solutions. Specialized startups such as Syntiant Corp. and Beijing Lingxi Technology focus on application-specific neuromorphic architectures, demonstrating promising commercial viability. Academic institutions including Tsinghua University, California Institute of Technology, and École Polytechnique Fédérale de Lausanne contribute fundamental research breakthroughs. The competitive landscape indicates a technology still in early adoption stages, with most solutions requiring further development before widespread market deployment, though recent advances suggest accelerating maturation toward practical real-time pattern recognition applications.

International Business Machines Corp.

Technical Solution: IBM has developed the TrueNorth neuromorphic processor, which integrates 1 million programmable neurons and 256 million synapses on a single chip. The architecture implements event-driven computation with a reported power consumption of about 70 mW during active operation. TrueNorth uses a distributed memory architecture in which each of its 4,096 cores contains 256 neurons with local memory, enabling parallel processing of sensory data. The system supports real-time pattern recognition through spike-based neural networks and is particularly effective for visual and auditory pattern detection. IBM's neuromorphic ecosystem includes specialized programming tools and simulation environments for developing brain-inspired applications in robotics, autonomous systems, and IoT devices.

Strengths: Proven scalability with million-neuron integration, extremely low power consumption, comprehensive development ecosystem. Weaknesses: Limited commercial availability, complex programming paradigm, restricted to specific application domains.

Intel Corp.

Technical Solution: Intel's Loihi neuromorphic research chip incorporates 128 neuromorphic cores with 131,072 artificial neurons and 130 million synapses. The architecture features asynchronous spike-based communication and on-chip learning through rules such as spike-timing-dependent plasticity (STDP). Intel has reported energy use up to 1,000x lower than conventional processors for certain AI workloads. The chip supports real-time adaptive learning and pattern recognition, so some models can be trained on-chip rather than relying solely on pre-trained weights. Loihi is programmed through Intel's NxSDK and the open-source Lava framework, and it is also supported by third-party tools such as Nengo (from Applied Brain Research) and the SLAYER training method, enabling researchers to implement spiking neural networks for applications like gesture recognition, robotic control, and sensory processing tasks.

Strengths: Advanced on-chip learning capabilities, exceptional energy efficiency, strong research community support. Weaknesses: Still in research phase, limited production scalability, requires specialized programming expertise.

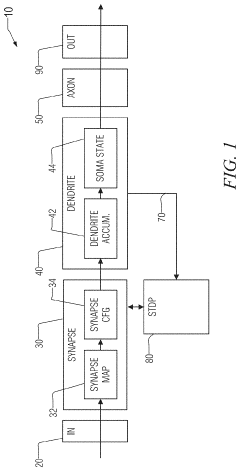

Core Innovations in Neuromorphic Pattern Processing

Trace-based neuromorphic architecture for advanced learning

Patent (Inactive): US20210304005A1

Innovation

- The proposed neuromorphic computing architecture employs exponentially-filtered spike trains, or traces, to compute rich temporal correlations, and a configurable learning engine that combines these trace variables using microcode to support a broad range of learning rules, allowing for efficient implementation of advanced neural network learning algorithms with minimal state storage and aggressive sharing of state across neural structures.
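The idea of learning from exponentially filtered spike trains can be illustrated with a generic pair-based STDP rule driven by traces. The sketch below is a textbook-style formulation, not the patented architecture or its microcode; the function name, learning rates, and time constant are assumptions.

```python
import math


def run_trace_stdp(pre_spikes, post_spikes, w=0.5,
                   a_plus=0.05, a_minus=0.05, tau=5.0):
    """Update one synaptic weight using exponentially decaying spike traces.

    x_pre and y_post are low-pass filtered copies of the pre- and
    postsynaptic spike trains; the weight change at each spike depends only
    on the current value of the opposite trace, so no spike history is stored.
    """
    decay = math.exp(-1.0 / tau)
    x_pre = 0.0
    y_post = 0.0
    for pre, post in zip(pre_spikes, post_spikes):
        x_pre *= decay
        y_post *= decay
        if pre:
            w -= a_minus * y_post  # pre arrives after post: depress
        if post:
            w += a_plus * x_pre    # post arrives after pre: potentiate
        x_pre += pre
        y_post += post
        w = min(1.0, max(0.0, w))  # keep the weight in [0, 1]
    return w


causal = run_trace_stdp([1, 0, 0, 0, 0], [0, 0, 1, 0, 0])       # pre, then post
anti_causal = run_trace_stdp([0, 0, 1, 0, 0], [1, 0, 0, 0, 0])  # post, then pre

print(f"pre-before-post weight: {causal:.4f}")
print(f"post-before-pre weight: {anti_causal:.4f}")
```

The weight rises when the presynaptic spike precedes the postsynaptic one and falls in the reverse order. Because each synapse needs only two trace values, this style of rule keeps state storage small, which is the property the patent summary emphasizes.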

Two-dimensional array-based neuromorphic processor and implementing method

Patent (Active): US11868874B2

Innovation

- A two-dimensional array-based neuromorphic processor design that includes axon circuits, synapse circuits, and neuron circuits, where synapse circuits store weights and output operation values based on time information, allowing for multi-bit operations through bit-wise AND operations and addition, enabling high-resolution processing without requiring additional peripheral circuits.
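One way to see how multi-bit arithmetic can be built from bit-wise AND and addition is a bit-serial dot product, in which the input is presented one bit plane per time step. The sketch below is an illustrative interpretation only and is not taken from the patent; the function name and 4-bit width are assumptions, and the shift simply weights each time step by its bit significance.

```python
def bit_serial_dot(weights, inputs, bits=4):
    """Dot product of unsigned integers using AND, addition, and shifts."""
    total = 0
    for bit in range(bits):
        # One binary plane of the input vector, as presented in one time step.
        plane = [(x >> bit) & 1 for x in inputs]
        # -b is all ones when b == 1 and zero when b == 0, so `w & -b`
        # passes the weight through or blocks it.
        partial = sum(w & -b for w, b in zip(weights, plane))
        # Later time steps carry more significant input bits.
        total += partial << bit
    return total


weights = [3, 5, 2]
inputs = [4, 1, 7]
print(bit_serial_dot(weights, inputs))          # bit-serial result
print(sum(w * x for w, x in zip(weights, inputs)))  # reference result
```

Both lines print the same value, showing that no multiplier is needed when inputs are serialized over time.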

Hardware-Software Co-design for Neuromorphic Systems

Hardware-software co-design represents a fundamental paradigm shift in neuromorphic system development, where traditional sequential design approaches give way to integrated, concurrent engineering methodologies. This approach recognizes that neuromorphic processors for real-time pattern recognition require intimate coordination between hardware architectures and software algorithms to achieve optimal performance, energy efficiency, and functionality.

The co-design methodology begins with establishing unified design objectives that simultaneously consider hardware constraints and software requirements. Unlike conventional processors where software adapts to fixed hardware architectures, neuromorphic systems benefit from hardware that can be tailored to specific algorithmic needs while maintaining flexibility for diverse pattern recognition tasks. This symbiotic relationship enables designers to optimize critical parameters such as synaptic connectivity patterns, neuron model complexity, and memory hierarchy organization based on target application requirements.

Memory architecture represents a crucial co-design consideration, as neuromorphic pattern recognition algorithms exhibit unique data access patterns that differ significantly from traditional von Neumann architectures. The integration of processing and memory elements requires careful coordination between hardware memory organization and software data structures. Co-design approaches optimize memory bandwidth, latency, and energy consumption by aligning hardware memory hierarchies with software algorithm characteristics, including synaptic weight distributions and neural activation patterns.

Communication protocols between hardware and software layers demand specialized attention in neuromorphic co-design. Real-time pattern recognition applications require low-latency interfaces that can handle asynchronous spike-based communications while maintaining temporal precision. Co-design methodologies establish standardized communication frameworks that enable efficient data exchange between software-defined neural network models and hardware spike processing units.
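A common way to carry asynchronous spikes across a hardware-software boundary is an address-event style encoding, in which each spike is sent as a small packet naming its source neuron and time. The sketch below packs such an event into a 32-bit word. The 12-bit address and 20-bit microsecond timestamp split is a hypothetical layout chosen for illustration, not a format defined by any chip discussed here.

```python
ADDR_BITS = 12   # hypothetical: up to 4,096 neuron addresses
TIME_BITS = 20   # hypothetical: timestamp in microseconds, wraps at 2**20
TIME_MASK = (1 << TIME_BITS) - 1


def pack_event(neuron_id, timestamp_us):
    """Pack one spike event into a single 32-bit word."""
    if not 0 <= neuron_id < (1 << ADDR_BITS):
        raise ValueError("neuron_id out of range")
    return (neuron_id << TIME_BITS) | (timestamp_us & TIME_MASK)


def unpack_event(word):
    """Recover (neuron_id, timestamp_us) from a packed word."""
    return word >> TIME_BITS, word & TIME_MASK


word = pack_event(neuron_id=1234, timestamp_us=56789)
print(hex(word), unpack_event(word))
```

Keeping the timestamp inside the packet preserves spike timing even if the transport layer delivers events with variable delay, at the cost of handling timestamp wrap-around on the receiving side.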

Optimization strategies in hardware-software co-design leverage cross-layer feedback mechanisms to achieve superior performance compared to isolated design approaches. Software profiling informs hardware architectural decisions, while hardware performance characteristics guide software algorithm optimization. This iterative refinement process enables designers to identify and eliminate bottlenecks that span both hardware and software domains, resulting in neuromorphic systems that can meet stringent real-time pattern recognition requirements while maintaining energy efficiency and scalability for diverse application scenarios.

Energy Efficiency Optimization in Neuromorphic Architectures

Energy efficiency represents the paramount challenge in neuromorphic processor design for real-time pattern recognition applications. Traditional von Neumann architectures consume substantial power due to continuous data movement between memory and processing units, whereas neuromorphic systems aim to achieve brain-like efficiency through event-driven computation and co-located memory-processing elements.

The fundamental approach to energy optimization in neuromorphic architectures centers on spike-based communication. Conventional clocked processors spend dynamic power on every clock cycle whether or not the data has changed, whereas neuromorphic chips transmit information as discrete spikes and draw little power during idle periods. This asynchronous operation lets circuits activate only when meaningful events occur, avoiding unnecessary computation.

Advanced power gating techniques have emerged as critical optimization strategies. Modern neuromorphic designs implement hierarchical power domains that can selectively shut down unused neural clusters while maintaining active regions for ongoing pattern recognition tasks. This granular control allows systems to scale power consumption proportionally with computational demands, achieving significant energy savings during low-activity periods.
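A toy model can make the hierarchical gating idea concrete. In the sketch below, a power domain with no busy clusters is switched off entirely, while idle clusters inside an active domain draw only a small retention power. The function name and the per-cluster power figures are hypothetical values chosen for illustration, not measurements from any device.

```python
from typing import Dict, List


def gated_power_mw(
    domains: Dict[str, List[bool]],
    active_mw: float = 10.0,     # assumed draw of a busy cluster
    retention_mw: float = 0.5,   # assumed draw of an idle cluster in a live domain
) -> float:
    """Estimate total power when idle domains and idle clusters are gated."""
    total = 0.0
    for clusters in domains.values():
        if not any(clusters):
            continue  # whole domain gated off; modeled here as zero draw
        for busy in clusters:
            total += active_mw if busy else retention_mw
    return total


# Example: one busy cluster in "vision", the "audio" domain fully idle.
example = {"vision": [True, False], "audio": [False, False]}
power = gated_power_mw(example)
```

With these assumed figures the example draws 10.5 mW, compared with 40 mW if all four clusters stayed active.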

Memory architecture optimization plays a crucial role in overall energy efficiency. Emerging neuromorphic processors integrate memristive devices and phase-change memory elements directly within neural processing units, so synaptic weights are stored where they are used and most energy-intensive transfers between separate memory and compute units are avoided. These in-memory computing approaches can reduce power consumption by orders of magnitude compared to traditional architectures while enabling parallel processing of multiple pattern recognition streams.
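In a memristive crossbar, in-memory computing is commonly described as an analog matrix-vector product: input voltages are applied to the rows, each device contributes a current equal to voltage times conductance, and the currents sum along each column. The sketch below models that idealized behavior in software; it ignores wire resistance, device variability, and noise, and the function name is an assumption for this example.

```python
from typing import List


def crossbar_mvm(voltages: List[float], conductances: List[List[float]]) -> List[float]:
    """Idealized crossbar: column current j = sum over rows i of V[i] * G[i][j]."""
    rows = len(conductances)
    cols = len(conductances[0])
    if len(voltages) != rows:
        raise ValueError("need one input voltage per crossbar row")
    return [
        sum(voltages[i] * conductances[i][j] for i in range(rows))
        for j in range(cols)
    ]


# Rows 0 and 2 receive a spike (1.0); row 1 is silent (0.0).
currents = crossbar_mvm([1.0, 0.0, 1.0], [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
```

The stored conductances play the role of synaptic weights, so the multiply-accumulate happens where the weights live and no weight data has to be fetched from a separate memory.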

Voltage scaling and adaptive frequency modulation represent additional optimization vectors. Neuromorphic processors can dynamically adjust operating voltages based on recognition accuracy requirements, trading computational precision for energy savings when appropriate. This adaptive approach proves particularly valuable in battery-powered edge devices where energy conservation directly impacts operational lifetime.
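The leverage of voltage scaling follows from the standard first-order model of dynamic switching power in CMOS logic, P = a * C * V^2 * f, where a is the activity factor, C the switched capacitance, V the supply voltage, and f the clock frequency. The sketch below evaluates that model; the function name and the component values are illustrative assumptions, and leakage power is not modeled.

```python
def dynamic_power_w(
    capacitance_f: float,
    voltage_v: float,
    frequency_hz: float,
    activity: float = 1.0,
) -> float:
    """First-order CMOS dynamic power: activity * C * V^2 * f (leakage ignored)."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


# Hypothetical operating points: 1 nF switched capacitance at 1 MHz.
nominal = dynamic_power_w(1e-9, 1.0, 1e6)
scaled = dynamic_power_w(1e-9, 0.5, 1e6)
ratio = scaled / nominal
```

Under this model, halving the supply voltage at a fixed frequency cuts dynamic power to one quarter, which is why trading some precision for a lower voltage can pay off in battery-powered devices.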

Circuit-level optimizations focus on minimizing leakage currents and optimizing transistor sizing for neuromorphic workloads. Specialized analog circuits designed for synaptic operations consume significantly less power than their digital equivalents while maintaining sufficient precision for pattern recognition tasks. These analog implementations leverage the inherent noise tolerance of neural algorithms to achieve remarkable energy efficiency gains.
