FinFET Reliability Tests In AI Computation

SEP 11, 2025

FinFET Reliability Background and Objectives

FinFET technology has evolved significantly since its introduction in the early 2000s, becoming a cornerstone of modern semiconductor manufacturing. Initially developed to overcome the limitations of planar transistors at sub-28nm nodes, FinFETs offer superior electrostatic control, reduced leakage current, and enhanced performance in smaller form factors. This three-dimensional structure has enabled the continued scaling of semiconductor devices in accordance with Moore's Law, despite increasing physical challenges.

In the context of artificial intelligence computation, FinFET reliability has become increasingly critical. AI workloads present unique challenges to semiconductor devices due to their intensive computational requirements, sustained high-performance operation, and variable power consumption patterns. As AI applications continue to proliferate across industries, from data centers to edge devices, the reliability of the underlying hardware becomes paramount to ensure consistent performance and longevity.

The evolution of FinFET technology has seen several generations of improvements, from 22nm to the current sub-7nm nodes. Each generation has introduced refinements in fin geometry, gate structure, and materials to enhance performance and reliability. However, as dimensions continue to shrink, long-standing reliability mechanisms have intensified, including hot carrier injection, bias temperature instability, time-dependent dielectric breakdown, and electromigration, all of which can significantly impact device lifetime and performance stability.

The primary objective of FinFET reliability testing in AI computation contexts is to develop comprehensive methodologies that accurately predict device behavior under AI-specific workloads. This includes characterizing failure mechanisms unique to AI computation patterns, establishing accelerated testing protocols that correlate with real-world AI applications, and developing models that can predict device degradation over time.

Another critical goal is to establish reliability standards specifically tailored to AI hardware, considering the diverse deployment environments from cloud data centers to edge devices. These standards must address both immediate performance requirements and long-term reliability concerns, balancing the need for cutting-edge performance with sustainable operation.

Furthermore, the research aims to bridge the gap between device-level reliability testing and system-level performance in AI applications. This holistic approach seeks to understand how transistor-level degradation translates to impacts on neural network accuracy, inference speed, and overall system efficiency, enabling more resilient AI hardware design.

Ultimately, the technological trajectory points toward developing FinFETs specifically optimized for AI workloads, with reliability characteristics engineered to withstand the unique stresses imposed by machine learning training and inference operations. This specialized approach may lead to divergent FinFET designs optimized for different computational paradigms, representing a significant shift in semiconductor development philosophy.

AI Computation Market Requirements Analysis

The AI computation market has witnessed exponential growth in recent years, driven primarily by the increasing adoption of artificial intelligence across various industries. This growth has placed unprecedented demands on semiconductor technologies, particularly FinFET architectures that power modern AI processors. Market analysis indicates that the global AI chip market reached $15 billion in 2022 and is projected to grow at a CAGR of 30% through 2028, highlighting the critical importance of reliable semiconductor solutions.
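
As a quick arithmetic check on the projection above, the sketch below compounds the quoted $15 billion base at 30% per year. The function name is illustrative, and the calculation assumes the growth rate applies uniformly to each of the six years from 2022 to 2028.

```python
def project_market_size(base_usd_bn: float, cagr: float, years: int) -> float:
    """Compound a base value at a constant annual growth rate."""
    return base_usd_bn * (1.0 + cagr) ** years


# Figures quoted in the text: $15 billion in 2022, 30% CAGR through 2028.
size_2028 = project_market_size(15.0, 0.30, 2028 - 2022)
print(f"Implied 2028 market size: ${size_2028:.1f} billion")
```

Under these assumptions the implied 2028 figure is roughly $72 billion, a nearly fivefold increase over the 2022 base.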

The reliability requirements for FinFET devices in AI computation contexts differ significantly from traditional computing applications. AI workloads, especially those involving deep learning training and inference, subject processors to sustained high-performance operations with intensive parallel computing demands. This creates unique stress conditions that can accelerate transistor degradation mechanisms such as Hot Carrier Injection (HCI), Bias Temperature Instability (BTI), and Time-Dependent Dielectric Breakdown (TDDB).

Industry surveys reveal that AI system developers prioritize three key reliability metrics for FinFET-based AI chips: operational longevity under high computational loads, consistent performance over time, and power efficiency stability. Cloud service providers, who represent the largest market segment for high-performance AI chips, require processors that can maintain 99.999% uptime while operating at near-maximum capacity for extended periods, often three to five years or more of continuous operation.

The automotive and edge AI segments present additional reliability challenges, with temperature ranges from -40°C to 150°C and requirements for fault tolerance that exceed traditional consumer electronics standards. These applications demand FinFET technologies that can maintain reliable operation despite thermal cycling, mechanical stress, and variable power conditions.

Market data shows that reliability-related failures in AI systems can result in significant financial impacts, with downtime costs for large-scale AI operations averaging $100,000 per hour. This economic reality has elevated reliability testing from a technical consideration to a critical business requirement, with 78% of enterprise AI adopters citing hardware reliability as a top-three concern in procurement decisions.
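
To put the uptime and downtime-cost figures quoted above side by side, the following sketch converts an availability target into an annual downtime budget and an implied cost. The helper names are hypothetical, and the calculation assumes the quoted $100,000 per hour applies uniformly to every hour of downtime.

```python
HOURS_PER_YEAR = 365.25 * 24  # average year length, leap years included


def allowed_downtime_hours(availability: float) -> float:
    """Annual downtime budget implied by an availability target (0..1)."""
    return (1.0 - availability) * HOURS_PER_YEAR


def annual_downtime_cost(availability: float, cost_per_hour: float) -> float:
    """Cost of using the whole downtime budget at a flat hourly rate."""
    return allowed_downtime_hours(availability) * cost_per_hour


# Compare three availability targets at the $100,000/hour figure from the text.
for target in (0.999, 0.9999, 0.99999):
    minutes = allowed_downtime_hours(target) * 60
    cost = annual_downtime_cost(target, 100_000)
    print(f"{target:.3%} uptime: {minutes:8.1f} min/year, ${cost:,.0f}/year")
```

At 99.999% availability the budget is only about five minutes per year, which is why even rare hardware failures matter at this service level.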

The competitive landscape further intensifies these requirements, as AI chip manufacturers compete not only on performance metrics but increasingly on reliability guarantees. Leading cloud providers now demand comprehensive reliability test data and accelerated life testing results as part of their vendor qualification processes, creating market pressure for more sophisticated FinFET reliability testing methodologies specifically tailored to AI computation patterns.

FinFET Reliability Challenges in AI Workloads

The integration of FinFET technology into AI computation systems has introduced significant reliability challenges that must be addressed to ensure optimal performance and longevity of these critical components. As AI workloads continue to intensify with more complex neural networks and larger datasets, FinFET transistors face unprecedented stress conditions that differ substantially from traditional computing patterns.

AI computation typically involves highly parallel operations with intensive memory access patterns and computational bursts. These workloads create unique stress profiles characterized by rapid thermal cycling, voltage fluctuations, and current density variations across the FinFET structures. The three-dimensional nature of FinFET devices, while beneficial for performance and power efficiency, introduces additional reliability concerns when subjected to these dynamic AI processing demands.

One primary challenge is Bias Temperature Instability (BTI), which manifests more severely under AI workloads due to the sustained high-utilization periods followed by idle states. This cycling pattern accelerates threshold voltage shifts in FinFETs, potentially leading to timing violations and computational errors in AI algorithms that require precise mathematical operations.
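
One common way to reason about threshold voltage drift is an empirical power law in stress time. The sketch below is a minimal illustration under that assumption: the prefactor, exponent, and duty-cycle handling are hypothetical placeholders rather than values from this article or from any specific process, and the model deliberately ignores recovery during idle periods, so it does not capture the cycling behavior described above.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def bti_vth_shift_mv(stress_s: float, prefactor_mv: float = 5.0,
                     exponent: float = 0.17, duty: float = 1.0) -> float:
    """Threshold-voltage shift (mV) after stress_s seconds of wall-clock time.

    Assumed model: shift = A * (duty * t) ** n, with no recovery term.
    All default parameter values are illustrative only.
    """
    return prefactor_mv * (duty * stress_s) ** exponent


def time_to_shift_s(target_mv: float, prefactor_mv: float = 5.0,
                    exponent: float = 0.17, duty: float = 1.0) -> float:
    """Invert the power law: wall-clock seconds until the shift reaches target_mv."""
    return (target_mv / prefactor_mv) ** (1.0 / exponent) / duty


# Compare a lightly used device with a heavily used accelerator over five years.
for duty in (0.3, 0.9):
    shift = bti_vth_shift_mv(5 * SECONDS_PER_YEAR, duty=duty)
    print(f"duty={duty:.1f}: shift after 5 years = {shift:.1f} mV")
```

In practice the parameters would be fitted to accelerated stress measurements, and a recovery term would be needed to study utilization patterns that alternate between heavy load and idle.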

Hot Carrier Injection (HCI) presents another significant concern, particularly in AI accelerator chips where certain computational units may experience continuous high-voltage operations. The narrow fin structure of FinFETs can exacerbate HCI effects, leading to degraded transistor performance over time and potentially affecting the accuracy of AI model execution.

Time-Dependent Dielectric Breakdown (TDDB) reliability is also challenged by AI workloads, as the sustained switching activity and elevated temperatures of parallel matrix multiplications and convolution operations keep the gate oxide under prolonged electric-field stress. This is particularly problematic in advanced FinFET nodes where oxide layers are extremely thin.

Electromigration risks are heightened in AI chips due to the non-uniform current distribution typical of neural network computations. Critical interconnect paths servicing frequently activated neurons may experience accelerated degradation, potentially creating performance bottlenecks or complete failures in the most utilized computational pathways.
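
Electromigration lifetime is often estimated with Black's equation, MTTF = A · J^(-n) · exp(Ea / kT). The sketch below uses it to compare a heavily used interconnect path against a reference path. The article does not name this model, so treat it as an assumption; the exponent and activation energy defaults are illustrative, not measured values.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5


def black_mttf_ratio(j_ref: float, j_hot: float,
                     temp_ref_c: float, temp_hot_c: float,
                     n: float = 2.0, ea_ev: float = 0.9) -> float:
    """MTTF(hot path) / MTTF(reference path) under Black's equation.

    The technology-dependent prefactor A cancels in the ratio, so only the
    current densities (same units for both) and temperatures are needed.
    """
    t_ref = temp_ref_c + 273.15
    t_hot = temp_hot_c + 273.15
    current_term = (j_hot / j_ref) ** (-n)
    thermal_term = math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_hot - 1.0 / t_ref))
    return current_term * thermal_term


# Hypothetical hot path: 1.5x the current density and 15 degrees C warmer.
ratio = black_mttf_ratio(j_ref=1.0, j_hot=1.5, temp_ref_c=85.0, temp_hot_c=100.0)
print(f"Hot path lifetime relative to reference: {ratio:.2f}x")
```

Because the ratio combines a power-law current term with an exponential thermal term, modest increases in both can shorten the expected lifetime of the busiest paths considerably.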

Self-heating effects within FinFET structures become more pronounced during intensive AI training sessions, creating thermal gradients that can accelerate various failure mechanisms. The confined geometry of fins limits heat dissipation capabilities, making thermal management a critical reliability factor for AI-focused FinFET implementations.

Additionally, the increasing deployment of AI systems in edge devices introduces environmental stressors such as variable ambient temperatures and power supply fluctuations that further complicate reliability predictions for FinFET-based AI accelerators.

Current FinFET Reliability Test Methodologies

01 Gate structure optimization for FinFET reliability

Optimizing the gate structure of FinFETs is crucial for enhancing device reliability. This includes modifications to gate materials, gate dielectrics, and gate geometry to reduce leakage current and improve threshold voltage stability. Advanced gate stacks with high-k dielectrics and metal gates help minimize electron trapping and reduce hot carrier effects, thereby extending device lifetime and improving performance under stress conditions.

- Gate structure optimization for FinFET reliability: Optimizing the gate structure of FinFETs is crucial for enhancing device reliability. This includes modifications to gate materials, gate dielectrics, and gate geometries to reduce leakage current and improve threshold voltage stability. Advanced gate stack engineering techniques help mitigate hot carrier injection effects and time-dependent dielectric breakdown, which are common reliability concerns in FinFET devices. These optimizations contribute to extended device lifetime and improved performance under various operating conditions.

- Stress management techniques in FinFET fabrication: Managing mechanical stress in FinFET structures is essential for reliability improvement. Various techniques are employed to control and utilize stress effects, including strain engineering, stress memorization techniques, and stress liner technologies. These approaches can enhance carrier mobility while preventing stress-induced defects that may lead to device degradation over time. Proper stress management contributes to consistent performance and improved reliability of FinFET devices under thermal and electrical stress conditions.

- Simulation and modeling for FinFET reliability prediction: Advanced simulation and modeling techniques are employed to predict and enhance FinFET reliability. These computational methods enable analysis of failure mechanisms, lifetime estimation, and optimization of device parameters before physical fabrication. Reliability models account for various degradation mechanisms including bias temperature instability, hot carrier injection, and electromigration. Simulation-based approaches allow for efficient design space exploration and reliability-aware circuit design, reducing development cycles and improving overall device robustness.

- Novel FinFET structures for enhanced reliability: Innovative FinFET architectures are developed to address reliability challenges. These include multi-bridge channel FETs, gate-all-around structures, and vertically stacked nanowire/nanosheet configurations. Such novel structures provide better electrostatic control, reduced short-channel effects, and improved resistance to various degradation mechanisms. By fundamentally altering the transistor geometry, these approaches achieve superior reliability characteristics while enabling continued device scaling according to Moore's Law.

- Process optimization for FinFET reliability improvement: Manufacturing process optimizations play a critical role in enhancing FinFET reliability. This includes refined etching techniques for fin formation, improved doping profiles, advanced annealing methods, and optimized cleaning processes. Careful control of process parameters helps minimize defect density, interface traps, and variability issues that impact device reliability. Post-fabrication treatments and passivation techniques are also employed to enhance resistance to environmental factors and extend device lifetime under operational conditions.

02 Fin structure engineering for improved reliability

Engineering the fin structure is essential for FinFET reliability. This involves optimizing fin height, width, and profile to enhance carrier mobility and reduce variability. Techniques such as strain engineering, fin sidewall treatments, and doping profile optimization help mitigate short channel effects and improve device stability under various operating conditions. Proper fin design also reduces surface roughness-induced scattering and improves electrostatic control.

03 Reliability simulation and modeling techniques

Advanced simulation and modeling techniques are employed to predict and enhance FinFET reliability. These include physics-based models that account for various degradation mechanisms such as bias temperature instability, hot carrier injection, and time-dependent dielectric breakdown. Simulation tools help in identifying reliability bottlenecks during the design phase, enabling proactive reliability engineering and accelerating the development of robust FinFET technologies.

04 Source/drain engineering for reliability enhancement

Optimizing source and drain regions is critical for FinFET reliability. This includes advanced epitaxial growth techniques, selective doping methods, and strain engineering to reduce contact resistance and improve carrier transport. Proper source/drain design helps minimize self-heating effects and electromigration, which are significant reliability concerns in scaled FinFET devices. Additionally, optimized source/drain regions contribute to better electrostatic integrity and reduced leakage currents.

05 Process integration and manufacturing techniques for reliable FinFETs

Advanced process integration and manufacturing techniques are essential for producing reliable FinFET devices. This includes precise control of critical dimensions, optimized etching processes, and improved cleaning methods to ensure fin uniformity and reduce defects. Novel deposition techniques for gate dielectrics and metal gates, along with optimized annealing processes, help minimize interface traps and improve device stability. Process monitoring and statistical control methods are also implemented to ensure consistent reliability across wafers.

Key Industry Players in FinFET Manufacturing

The FinFET reliability testing landscape for AI computation is currently in a growth phase, with the market expanding rapidly due to increasing AI chip demands. The technology maturity varies across key players, with TSMC and Samsung leading with advanced FinFET processes optimized for AI workloads. IBM contributes significant research in reliability physics, while SMIC and GlobalFoundries are advancing their capabilities. Huawei and Xilinx focus on AI-specific reliability enhancements. Research institutes such as IMEC, along with Chinese universities, collaborate with industry to address thermal and electrical stress challenges. The market is characterized by intense competition between established semiconductor manufacturers and emerging specialized AI chip designers, driving innovation in reliability methodologies for next-generation AI computing architectures.

Taiwan Semiconductor Manufacturing Co., Ltd.

Technical Solution: TSMC has developed comprehensive FinFET reliability testing methodologies specifically optimized for AI computation workloads. Their approach includes accelerated lifetime testing protocols that simulate the unique stress conditions experienced during intensive AI training and inference operations. TSMC's N5 and N3 FinFET processes incorporate specialized test structures to evaluate hot carrier injection (HCI), bias temperature instability (BTI), and time-dependent dielectric breakdown (TDDB) under AI-specific workload patterns. Their testing framework includes on-chip monitoring systems that can detect reliability degradation in real-time during AI computation tasks. TSMC has also implemented machine learning-based reliability prediction models that can forecast potential failures based on early indicators from their test data, allowing for proactive reliability management in AI chips. Their testing methodology accounts for the unique thermal profiles and power density challenges presented by AI accelerators, with particular focus on the reliability implications of rapid power state transitions common in AI workloads.

Strengths: Industry-leading process technology with extensive reliability data collection capabilities across multiple technology nodes. Advanced statistical analysis methods for correlating test results with field performance. Weaknesses: Testing methodologies may be optimized primarily for high-performance computing rather than specialized AI accelerators, potentially missing unique failure modes in custom AI architectures.

International Business Machines Corp.

Technical Solution: IBM has pioneered advanced FinFET reliability testing methodologies specifically designed for AI computation workloads. Their approach leverages their extensive experience in both semiconductor manufacturing and AI system development to create highly targeted reliability assessment techniques. IBM's testing framework incorporates workload-aware reliability characterization that simulates the unique computational patterns of neural network operations across different AI architectures. Their methodology includes specialized test structures that can evaluate reliability degradation under tensor operations, matrix multiplications, and other core AI computational primitives. IBM has developed proprietary on-chip monitoring systems that can track reliability parameters during actual AI computation tasks, providing real-time data on degradation under workload. Their testing protocols pay particular attention to the reliability implications of the heterogeneous integration approaches increasingly common in AI accelerators, evaluating how system-level factors impact FinFET reliability. IBM also employs advanced machine learning techniques to analyze reliability test data, identifying subtle patterns and correlations that might escape traditional statistical analysis. Their reliability testing framework includes accelerated aging tests specifically designed to evaluate the impact of AI workload patterns on FinFET lifetime.

Strengths: Deep integration between semiconductor research, manufacturing experience, and AI system development enables highly sophisticated reliability testing approaches. Extensive experience with high-performance computing workloads provides valuable insights into reliability requirements for large-scale AI systems. Weaknesses: More limited commercial semiconductor manufacturing footprint compared to pure-play foundries may result in less extensive field reliability data across diverse application scenarios.

Critical Patents in FinFET Reliability Testing

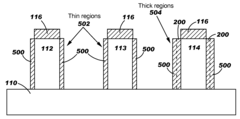

Cut-Fin Isolation Regions and Method Forming Same

Patent (Active): US20220059685A1

Innovation

- Cut-fin isolation regions are formed by etching the STI regions and filling the resulting recesses with a dielectric material, which eliminates exposed STI regions and thereby reduces leakage currents. The method includes forming semiconductor fins and gate stacks, replacing dummy gate stacks with metal gates, and then etching and filling the isolation regions to create a dielectric isolation structure that penetrates through the substrate.

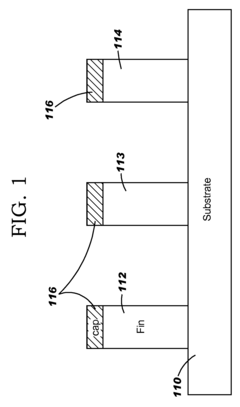

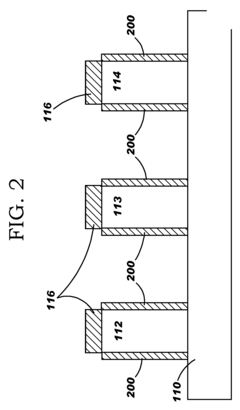

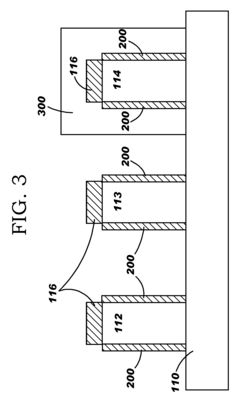

Multiple dielectric finfet structure and method

Patent (Inactive): US20070290250A1

Innovation

- A method of forming FinFETs with multiple gate dielectric thicknesses: fins are patterned and a first gate dielectric is applied, some fins are protected with a mask while the first gate dielectric is removed from the unprotected fins, and additional dielectric layers are then added to achieve varying thicknesses, allowing performance and reliability to be optimized across different regions.

Thermal Management Solutions for AI Chips

The thermal challenges in AI chips utilizing FinFET technology have become increasingly critical as computational demands grow exponentially. Modern AI accelerators generate significant heat during intensive workloads, with power densities often exceeding 500W/cm² in localized hotspots. This thermal concentration is particularly problematic for FinFET structures, where reliability degradation accelerates exponentially with temperature increases. Studies indicate that every 10°C rise above optimal operating temperatures can reduce a FinFET's lifespan by approximately 50%.
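
The "lifetime halves for every 10°C" figure above is a rule of thumb that can be related to the Arrhenius model widely used in accelerated life testing. The sketch below is a minimal illustration under that assumption: it computes the activation energy implied by a 2x acceleration over a 10°C step. The 85°C to 95°C window is chosen here for illustration and does not come from the article.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5


def acceleration_factor(ea_ev: float, temp_low_c: float, temp_high_c: float) -> float:
    """Arrhenius acceleration factor going from temp_low_c to temp_high_c."""
    t_low = temp_low_c + 273.15
    t_high = temp_high_c + 273.15
    return math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_low - 1.0 / t_high))


def implied_activation_energy_ev(factor: float, temp_low_c: float,
                                 temp_high_c: float) -> float:
    """Activation energy that yields the given acceleration factor."""
    t_low = temp_low_c + 273.15
    t_high = temp_high_c + 273.15
    return BOLTZMANN_EV_PER_K * math.log(factor) / (1.0 / t_low - 1.0 / t_high)


# Rule of thumb from the text: lifetime halves (factor of 2) per 10 degrees C.
ea = implied_activation_energy_ev(2.0, 85.0, 95.0)
print(f"Implied activation energy: {ea:.2f} eV")
print(f"Factor for a 20 degree rise: {acceleration_factor(ea, 85.0, 105.0):.2f}x")
```

Under these assumptions the implied activation energy is a little under 0.8 eV. Real failure mechanisms have their own activation energies, so the 10°C rule should be read as an approximation rather than a universal constant.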

Advanced cooling solutions have evolved significantly to address these thermal management challenges. Liquid cooling systems have demonstrated superior heat dissipation capabilities compared to traditional air cooling, removing three to five times more heat per unit area. Direct-to-chip liquid cooling technologies can maintain junction temperatures below 85°C even under sustained AI training workloads exceeding 400W per chip.

Microfluidic cooling channels integrated directly into silicon interposers represent a cutting-edge approach, allowing coolant to flow within microns of the active FinFET devices. These systems have demonstrated the ability to handle heat fluxes of up to 1000W/cm² in laboratory settings, though commercial implementations typically manage 600-700W/cm² effectively.

Phase-change materials (PCMs) incorporated into thermal interface materials have shown promising results for managing transient thermal loads common in AI inference workloads. These materials can absorb significant thermal energy during phase transition, effectively dampening temperature spikes during burst computational activities. Silicon-based PCMs with embedded metallic nanoparticles have demonstrated thermal conductivity improvements of 200-300% compared to conventional thermal interface materials.

3D packaging technologies present both challenges and opportunities for thermal management. While stacking multiple FinFET dies increases power density concerns, it also enables the integration of dedicated cooling layers between computational elements. Through-silicon vias (TSVs) filled with high thermal conductivity materials can serve dual purposes as electrical interconnects and thermal conduits, reducing junction-to-case thermal resistance by up to 30%.

Dynamic thermal management techniques have become essential components of AI chip design. Advanced algorithms that incorporate predictive workload modeling can preemptively adjust computational loads across different regions of the chip, preventing hotspot formation. These techniques, when combined with hardware-level thermal sensors distributed throughout the die, have demonstrated the ability to reduce peak temperatures by 15-20°C during intensive AI training operations while minimizing performance impact.
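
The idea of steering work away from hot regions can be sketched as a greedy placement loop. This is a simplified illustration, not a description of any vendor's scheduler: it assumes each task adds a fixed, known temperature rise to the region it runs on, and the function and parameter names are hypothetical.

```python
import heapq


def assign_tasks(region_temps_c, task_heat_c, limit_c):
    """Place each task on the currently coolest region, hottest tasks first.

    region_temps_c: sensor readings per region (degrees C).
    task_heat_c: assumed temperature rise each task adds to its region.
    Returns (placements, predicted_temps); a placement of None means the
    task is deferred because no region can take it without exceeding limit_c.
    """
    heap = [(temp, idx) for idx, temp in enumerate(region_temps_c)]
    heapq.heapify(heap)
    predicted = list(region_temps_c)
    placements = []
    for heat in sorted(task_heat_c, reverse=True):
        temp, idx = heapq.heappop(heap)  # coolest region right now
        if temp + heat > limit_c:
            # If the coolest region cannot take the task, no region can.
            placements.append((heat, None))
            heapq.heappush(heap, (temp, idx))
        else:
            predicted[idx] = temp + heat
            placements.append((heat, idx))
            heapq.heappush(heap, (predicted[idx], idx))
    return placements, predicted


placements, predicted = assign_tasks([70.0, 62.0, 66.0], [8.0, 5.0, 12.0, 3.0], 85.0)
print(placements)
print(predicted)
```

A production thermal manager would replace the fixed per-task temperature rise with a thermal model driven by the on-die sensors and would also account for heat spreading between neighboring regions.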

Advanced cooling solutions have evolved significantly to address these thermal management challenges. Liquid cooling systems have demonstrated superior heat dissipation capabilities compared to traditional air cooling, removing up to 3-5 times more heat per unit area. Direct-to-chip liquid cooling technologies can maintain junction temperatures below 85°C even under sustained AI training workloads exceeding 400W per chip.

Microfluidic cooling channels integrated directly into silicon interposers represent a cutting-edge approach, allowing coolant to flow within microns of the active FinFET devices. These systems have demonstrated the ability to handle heat fluxes of up to 1000W/cm² in laboratory settings, though commercial implementations typically manage 600-700W/cm² effectively.

Power Efficiency vs Reliability Trade-offs

The fundamental challenge in FinFET design for AI computation lies in balancing power efficiency against reliability concerns. As AI workloads become increasingly demanding, FinFET transistors are pushed to operate at higher frequencies and densities, creating significant power consumption challenges. The power envelope directly impacts thermal profiles, which in turn affects device reliability through mechanisms such as electromigration, bias temperature instability, and hot carrier injection.
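The link between temperature and lifetime is commonly modeled with the Arrhenius relation, and electromigration specifically with Black's equation, MTTF = A · J^(-n) · exp(Ea / kT). The sketch below uses those standard forms; the 0.7 eV activation energy and the exponent n = 2 are illustrative assumptions, since real values depend on the mechanism and the process.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration(ea_ev, t_use_c, t_stress_c):
    """Acceleration factor of a thermally activated mechanism at
    t_stress_c relative to t_use_c (both in °C)."""
    t_use_k = t_use_c + 273.15
    t_stress_k = t_stress_c + 273.15
    return math.exp(ea_ev / BOLTZMANN_EV_PER_K
                    * (1.0 / t_use_k - 1.0 / t_stress_k))


def black_mttf_ratio(j_ratio, ea_ev, t_ref_c, t_new_c, n=2.0):
    """MTTF(new) / MTTF(ref) under Black's equation when current density
    is scaled by j_ratio and temperature moves from t_ref_c to t_new_c."""
    return j_ratio ** (-n) / arrhenius_acceleration(ea_ev, t_ref_c, t_new_c)


# Assumed Ea = 0.7 eV: how much faster does degradation run at 125 °C
# than at 85 °C, and what does doubling current density do at fixed T?
af_85_to_125 = arrhenius_acceleration(0.7, 85.0, 125.0)
mttf_at_double_j = black_mttf_ratio(2.0, 0.7, 85.0, 85.0)
```

With n = 2, doubling current density at constant temperature cuts the modeled electromigration lifetime to one quarter, before any additional self-heating is taken into account.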

Current industry approaches demonstrate several strategic trade-offs. Dynamic voltage and frequency scaling (DVFS) techniques allow for real-time adjustments based on computational demands, reducing power consumption during less intensive operations while maintaining reliability margins. However, aggressive DVFS implementations can introduce voltage transients that potentially accelerate aging mechanisms in FinFET structures.
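A minimal sketch of a DVFS policy follows, based on the standard dynamic power relation P = a · C · V² · f. The operating-point table, effective capacitance, and activity factor are hypothetical values for illustration. Stepping one level at a time is shown as one possible way to limit voltage transients; it is an assumption of this sketch, not a method described in the text.

```python
from typing import NamedTuple


class OperatingPoint(NamedTuple):
    freq_ghz: float
    vdd_v: float


# Hypothetical voltage/frequency table, sorted by ascending frequency.
OPERATING_POINTS = [
    OperatingPoint(0.8, 0.60),
    OperatingPoint(1.4, 0.70),
    OperatingPoint(2.0, 0.80),
]


def dynamic_power_w(op, c_eff_nf=10.0, activity=0.5):
    """P = a * C * V^2 * f. With C in nF and f in GHz the result is in W."""
    return activity * c_eff_nf * op.vdd_v ** 2 * op.freq_ghz


def select_target_index(required_ghz):
    """Index of the slowest point that meets demand, else the fastest."""
    for idx, op in enumerate(OPERATING_POINTS):
        if op.freq_ghz >= required_ghz:
            return idx
    return len(OPERATING_POINTS) - 1


def step_toward(current_idx, target_idx, max_step=1):
    """Move at most max_step table entries per transition to bound the
    size of each voltage change."""
    delta = target_idx - current_idx
    delta = max(-max_step, min(max_step, delta))
    return current_idx + delta


low_power = dynamic_power_w(OPERATING_POINTS[0])
high_power = dynamic_power_w(OPERATING_POINTS[-1])
```

Because power scales with V², dropping from the highest to the lowest point in this table saves far more power than the frequency ratio alone would suggest.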

Architectural innovations present another dimension of this trade-off. Multi-threshold voltage designs incorporate transistors with different threshold voltages within the same chip, allowing critical paths to utilize low-threshold devices for performance while non-critical paths employ high-threshold transistors for leakage reduction. This approach optimizes power efficiency but introduces complexity in reliability testing due to the varied degradation rates across different threshold devices.
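The assignment rule described here can be illustrated with a simple slack-based sketch. The path names, slack values, and the 20 ps margin are hypothetical. Production flows perform this optimization per cell inside the synthesis and place-and-route tools rather than per named path.

```python
def assign_threshold_flavors(path_slack_ps, slack_margin_ps=20.0):
    """Map each path to 'LVT' (fast, leaky) when its timing slack is below
    the margin, otherwise to 'HVT' (slower, low leakage)."""
    return {
        path: "LVT" if slack < slack_margin_ps else "HVT"
        for path, slack in path_slack_ps.items()
    }


def group_for_reliability_test(assignment):
    """Group paths by threshold flavor so each group can be stressed and
    modeled separately, since degradation rates differ between flavors."""
    groups = {}
    for path, flavor in assignment.items():
        groups.setdefault(flavor, []).append(path)
    return groups


# Hypothetical timing slack per path, in picoseconds.
slacks = {"mac_array": 5.0, "accumulator": 12.0,
          "config_regs": 150.0, "debug_bus": 300.0}
assignment = assign_threshold_flavors(slacks)
groups = group_for_reliability_test(assignment)
```

Grouping by flavor makes the testing overhead concrete: each threshold class needs its own stress conditions and its own degradation model.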

The emergence of AI-specific FinFET designs has further complicated this landscape. Specialized transistor configurations optimized for matrix multiplication operations may achieve superior power efficiency for specific AI tasks but potentially sacrifice reliability margins when workloads deviate from expected patterns. Testing data indicates that such specialized designs may exhibit up to 30% better power efficiency but can show accelerated degradation under varied computational loads.

Recent reliability studies reveal that traditional worst-case design margins may be excessively conservative for AI applications with inherent error tolerance. Adaptive reliability techniques that dynamically adjust protection mechanisms based on computational criticality show promise in optimizing the power-reliability balance. These approaches permit controlled reliability degradation in non-critical neural network layers while maintaining strict reliability standards for critical operations.
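One way to picture criticality-based protection is a policy that maps each network layer to a protection level. The sketch below is purely illustrative: the criticality scores, the thresholds, and the three level names are assumptions made for the example and do not correspond to any specific published scheme.

```python
def protection_plan(layer_criticality, high=0.7, low=0.3):
    """Assign a protection level to each layer from its criticality score
    (0.0 = errors are harmless, 1.0 = errors are unacceptable)."""
    plan = {}
    for layer, score in layer_criticality.items():
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"criticality for {layer!r} must be in [0, 1]")
        if score >= high:
            plan[layer] = "full"      # e.g. full margins plus error correction
        elif score >= low:
            plan[layer] = "detect"    # e.g. error detection only
        else:
            plan[layer] = "relaxed"   # e.g. reduced margins, no checking
    return plan


# Hypothetical scores, for instance derived from fault-injection experiments.
scores = {"conv1": 0.9, "conv2": 0.5, "conv3": 0.2, "classifier": 0.8}
plan = protection_plan(scores)
```

In practice the scores would need to come from measurement, since the sensitivity of a layer to hardware errors depends on the model and the task.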

The industry is increasingly moving toward application-specific reliability targets rather than universal standards. This paradigm shift acknowledges that different AI applications have varying sensitivity to computational errors. For instance, inference applications may tolerate occasional computational inaccuracies, while training workloads require stricter reliability to prevent error propagation through the learning process. This nuanced approach enables more efficient power utilization while maintaining appropriate reliability levels for specific use cases.
