Signal Integrity vs Cable Loss

MAR 26, 2026 | 9 MIN READ

Signal Integrity and Cable Loss Background and Objectives

Signal integrity has emerged as one of the most critical challenges in modern electronic system design, fundamentally governing the reliable transmission of digital signals across interconnected components. As data rates continue to escalate exponentially, reaching speeds of 100 Gbps and beyond in contemporary high-performance computing systems, the preservation of signal quality throughout the transmission path has become paramount to system functionality and performance.

The evolution of signal integrity concerns parallels the advancement of digital technology itself. In early electronic systems operating at relatively low frequencies, signal degradation was primarily a secondary consideration. However, as clock speeds increased from megahertz to gigahertz ranges, engineers discovered that transmission line effects, previously negligible, began to dominate system behavior. This paradigm shift necessitated a fundamental rethinking of circuit design methodologies, transforming signal integrity from an afterthought into a primary design constraint.

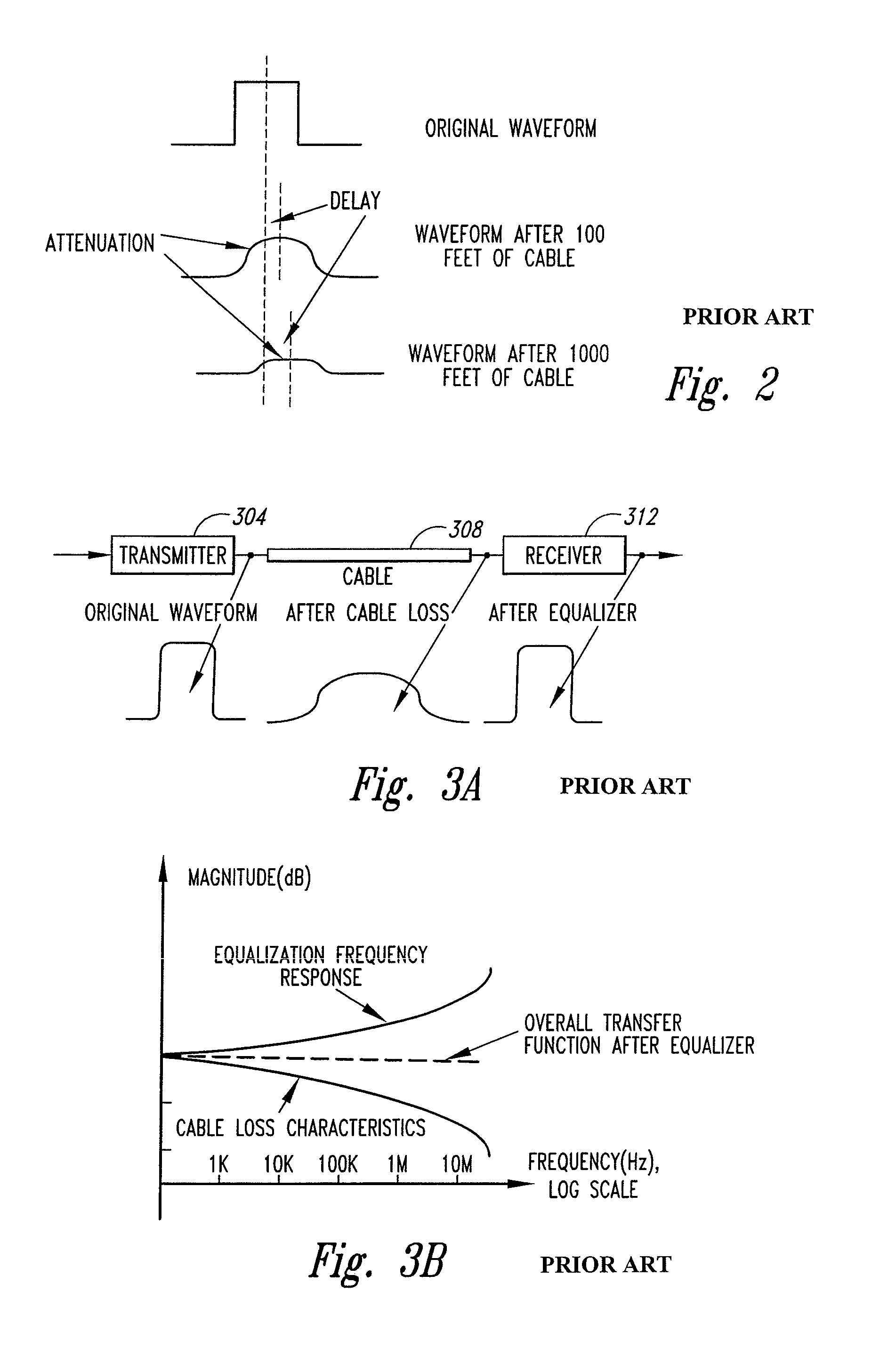

Cable loss represents a fundamental physical limitation that directly impacts signal integrity across all transmission media. It encompasses several loss mechanisms: conductor resistance, which rises with frequency because of the skin effect and proximity effect, and dielectric absorption, each contributing to signal attenuation and distortion. Because these losses are frequency-dependent, the higher frequency components of a digital signal are attenuated disproportionately, which leads to intersymbol interference and reduced signal-to-noise ratios.
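The frequency dependence described above is often approximated with a two-term model: a conductor term that grows with the square root of frequency (skin effect) and a dielectric term that grows linearly with frequency. The sketch below illustrates that shape only. The function name and both coefficients are hypothetical placeholders, not values for any real cable.

```python
import math

def cable_loss_db(freq_hz, length_m, k_skin, k_diel):
    """Approximate attenuation in dB for a cable of the given length.

    k_skin scales the sqrt(f) conductor (skin-effect) term,
    k_diel scales the linear-in-f dielectric term.
    """
    loss_per_metre = k_skin * math.sqrt(freq_hz) + k_diel * freq_hz
    return loss_per_metre * length_m

# Hypothetical coefficients chosen only to illustrate the trend.
K_SKIN = 2.0e-5   # dB per metre per sqrt(Hz)
K_DIEL = 1.0e-10  # dB per metre per Hz

for f_ghz in (1, 5, 10, 25):
    loss = cable_loss_db(f_ghz * 1e9, 3.0, K_SKIN, K_DIEL)
    print(f"{f_ghz:>2} GHz: {loss:5.1f} dB over 3 m")
```

Because loss rises with frequency, the fast edges of a data signal are attenuated more than its low-frequency content, which is the origin of the intersymbol interference mentioned above.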

The primary objective of addressing signal integrity versus cable loss challenges centers on developing comprehensive methodologies and technologies that enable reliable high-speed data transmission while minimizing signal degradation. This encompasses advancing equalization techniques, optimizing cable materials and geometries, implementing sophisticated error correction algorithms, and developing predictive modeling capabilities that can accurately forecast signal behavior across diverse operating conditions.

Contemporary research efforts focus on achieving seamless integration between theoretical signal integrity principles and practical cable loss mitigation strategies. The goal extends beyond merely compensating for losses to creating transmission systems that maintain signal fidelity across extended distances and varying environmental conditions. This includes developing adaptive compensation mechanisms that can dynamically adjust to changing channel characteristics and establishing standardized methodologies for characterizing and predicting signal integrity performance in complex multi-channel systems.

The ultimate technical objective involves establishing a comprehensive framework that enables engineers to design transmission systems capable of supporting next-generation data rates while maintaining acceptable bit error rates and power consumption levels, thereby ensuring the continued advancement of high-performance electronic systems.

Market Demand for High-Speed Signal Transmission Solutions

The global demand for high-speed signal transmission solutions has experienced unprecedented growth driven by the proliferation of data-intensive applications and emerging technologies. Cloud computing infrastructure, artificial intelligence workloads, and edge computing deployments require increasingly sophisticated signal integrity management to maintain performance standards across expanding network architectures.

Data centers represent the largest market segment for high-speed signal transmission solutions, where operators face mounting pressure to support bandwidth-intensive applications while minimizing signal degradation. The transition to higher data rates in server interconnects, storage networks, and switching fabrics has created substantial demand for advanced cable technologies and signal conditioning solutions that can effectively manage cable loss characteristics.

Telecommunications infrastructure modernization drives significant market demand as service providers upgrade networks to support enhanced mobile broadband and emerging applications. The deployment of advanced wireless technologies necessitates high-performance backhaul and fronthaul connections where signal integrity directly impacts network reliability and user experience quality.

Enterprise networking markets demonstrate growing appetite for high-speed transmission solutions as organizations adopt bandwidth-intensive technologies including video conferencing, collaborative platforms, and distributed computing architectures. These applications require consistent signal quality across various cable lengths and environmental conditions, creating opportunities for specialized signal integrity solutions.

Automotive and industrial automation sectors present emerging demand drivers as connected vehicle technologies and Industry 4.0 implementations require reliable high-speed data transmission in challenging environments. These applications demand robust solutions that maintain signal integrity despite electromagnetic interference and mechanical stress factors.

The consumer electronics market contributes additional demand through gaming systems, virtual reality platforms, and high-resolution display technologies that require pristine signal transmission for optimal user experiences. These applications often involve longer cable runs where managing cable loss becomes critical for maintaining performance standards.

Market growth trajectories indicate sustained expansion across all segments as digital transformation initiatives accelerate and new applications emerge. The increasing complexity of signal integrity requirements across diverse operating environments continues to drive innovation in transmission solutions and cable loss mitigation technologies.

Current State and Challenges in Cable Loss Mitigation

The current landscape of cable loss mitigation presents a complex array of technological approaches, each addressing different aspects of signal degradation in high-speed data transmission systems. Traditional methods primarily focus on material optimization, with low-loss dielectric materials such as expanded PTFE and advanced foam structures becoming industry standards for high-frequency applications. These materials typically achieve dielectric constants below 2.1 and loss tangents under 0.0009, representing significant improvements over conventional cable designs.
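To give a feel for what these dielectric figures mean in practice, the sketch below evaluates the standard TEM-line dielectric attenuation formula, alpha_d = pi * f * sqrt(eps_r) * tan(delta) / c (in nepers per metre), at the limits quoted above. The function name is hypothetical, and the formula assumes a homogeneous dielectric and ignores conductor loss entirely.

```python
import math

C0 = 299_792_458.0                 # speed of light in vacuum, m/s
NEPER_TO_DB = 20.0 / math.log(10)  # about 8.686 dB per neper

def dielectric_loss_db_per_m(freq_hz, eps_r, tan_delta):
    """Dielectric attenuation of a TEM line in dB per metre."""
    alpha_np = math.pi * freq_hz * math.sqrt(eps_r) * tan_delta / C0
    return alpha_np * NEPER_TO_DB

# Values quoted in the text: dielectric constant 2.1, loss tangent 0.0009.
for f_ghz in (1, 10, 25):
    loss = dielectric_loss_db_per_m(f_ghz * 1e9, 2.1, 0.0009)
    print(f"{f_ghz:>2} GHz: {loss:.3f} dB/m dielectric loss")
```

The result scales linearly with both frequency and loss tangent, so halving the loss tangent halves the dielectric contribution at every frequency.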

Advanced conductor technologies constitute another major area of development, with silver-plated copper conductors and specialized plating techniques demonstrating measurable improvements in high-frequency performance. Hollow conductor designs and optimized strand configurations have emerged as viable solutions for reducing skin effect losses, particularly in applications exceeding 10 GHz bandwidth requirements.
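The reason surface plating matters at these frequencies can be seen from the skin depth, delta = sqrt(rho / (pi * f * mu)). The sketch below is a minimal calculation using commonly quoted room-temperature resistivities for copper and silver. Those constants and the function name are assumptions of this example, not figures from the text.

```python
import math

MU_0 = 4.0e-7 * math.pi  # permeability of free space, H/m

def skin_depth_m(freq_hz, resistivity_ohm_m, mu_r=1.0):
    """Skin depth in metres for a good conductor."""
    return math.sqrt(resistivity_ohm_m / (math.pi * freq_hz * MU_0 * mu_r))

# Commonly quoted room-temperature resistivities (assumed values).
RHO_COPPER = 1.68e-8  # ohm * m
RHO_SILVER = 1.59e-8  # ohm * m

for name, rho in (("copper", RHO_COPPER), ("silver", RHO_SILVER)):
    depth_um = skin_depth_m(10e9, rho) * 1e6
    print(f"{name}: skin depth at 10 GHz is about {depth_um:.2f} um")
```

At 10 GHz the current is confined to well under a micrometre of the surface, so the properties of a thin plating layer, rather than the bulk conductor, largely determine the high-frequency resistance.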

Despite these material advances, several fundamental challenges continue to limit the effectiveness of current mitigation strategies. Manufacturing consistency remains a critical issue, as variations in dielectric thickness, conductor positioning, and impedance control directly impact signal integrity performance. Current production tolerances often struggle to meet the precision requirements of next-generation high-speed systems, particularly in applications demanding sub-picosecond timing accuracy.

Thermal management presents another significant constraint, as elevated operating temperatures substantially increase both dielectric and conductor losses. Existing thermal mitigation approaches, including improved heat dissipation designs and temperature-stable materials, add complexity and cost while providing only incremental performance gains.

The scalability of current solutions faces increasing pressure from evolving system requirements. As data rates continue advancing toward 100+ Gbps per channel, traditional cable loss mitigation techniques approach their theoretical limits. The relationship between cable length and achievable data rates becomes increasingly restrictive, forcing system designers to implement costly active compensation methods or accept reduced transmission distances.

Electromagnetic interference susceptibility remains inadequately addressed by current shielding technologies, particularly in dense installation environments where multiple high-speed cables operate in proximity. Existing shielding approaches often compromise flexibility and increase cable diameter, creating mechanical constraints that limit practical deployment scenarios.

Cost considerations significantly influence the adoption of advanced cable loss mitigation technologies. While high-performance materials and manufacturing processes can achieve superior electrical characteristics, their economic viability remains questionable for many applications, creating a persistent gap between technical capability and market accessibility.

Existing Solutions for Signal Loss Compensation

01 Cable design and construction for signal integrity improvement

Advanced cable designs incorporate specific structural elements such as shielding configurations, conductor arrangements, and dielectric materials to minimize signal degradation. These designs focus on controlling impedance, reducing crosstalk, and maintaining signal quality over transmission distances. Specialized cable geometries and material selections help preserve signal integrity by minimizing electromagnetic interference and maintaining consistent electrical characteristics throughout the cable length.

- Cable design and construction for signal integrity improvement: Optimizing cable physical structure, including conductor arrangement, shielding configurations, and dielectric materials, can significantly reduce signal loss and improve signal integrity. Advanced cable designs incorporate specific geometries and material compositions to minimize electromagnetic interference and maintain signal quality over transmission distances. These designs focus on controlling impedance characteristics and reducing crosstalk between adjacent conductors.

- Signal compensation and equalization techniques: Active and passive equalization methods are employed to compensate for signal degradation caused by cable loss. These techniques involve pre-emphasis, de-emphasis, and adaptive equalization circuits that adjust signal characteristics to counteract frequency-dependent attenuation. Signal processing algorithms can be implemented to restore signal integrity by boosting high-frequency components that are typically attenuated during transmission through cables.

- Impedance matching and termination strategies: Proper impedance matching between cables and connected devices is critical for maintaining signal integrity and minimizing reflections. Termination techniques, including resistive termination networks and controlled impedance designs, help reduce signal reflections and standing waves that contribute to signal degradation. These strategies ensure maximum power transfer and minimize signal distortion at connection points.

- High-frequency signal transmission optimization: Specialized techniques for maintaining signal integrity at high frequencies address skin effect, dielectric losses, and dispersion phenomena. These approaches include the use of low-loss dielectric materials, optimized conductor geometries, and frequency-dependent compensation methods. Advanced transmission line designs minimize attenuation of high-frequency components while maintaining consistent signal propagation characteristics across the frequency spectrum.

- Testing and measurement methods for signal integrity assessment: Comprehensive testing methodologies evaluate cable performance and signal integrity through time-domain and frequency-domain analysis. These methods include measurement of insertion loss, return loss, crosstalk, and eye diagram analysis to characterize signal quality. Diagnostic techniques enable identification of signal integrity issues and verification of cable performance against specified standards, facilitating optimization of transmission systems.
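The pre-emphasis and equalization item in the list above can be illustrated with a toy discrete-time model. In this sketch the cable is reduced to a two-tap pulse response (a main cursor plus one post-cursor of intersymbol interference) and a two-tap feed-forward filter partly cancels the post-cursor. The channel taps, filter taps, and helper names are all hypothetical, and real transmitters also rescale the taps to respect a fixed output swing, which is not modelled here.

```python
def convolve(a, b):
    """Full discrete convolution of two tap lists."""
    out = [0.0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] += x * y
    return out

def worst_case_eye(pulse):
    """Peak-distortion estimate: main cursor minus the sum of |ISI| taps."""
    main = max(abs(p) for p in pulse)
    isi = sum(abs(p) for p in pulse) - main
    return main - isi

# Hypothetical sampled pulse response of a lossy cable.
channel = [1.0, 0.4]
# Two-tap feed-forward filter whose second tap opposes the post-cursor.
ffe_taps = [1.0, -0.4]

equalized = convolve(ffe_taps, channel)
print("eye without equalization:", worst_case_eye(channel))
print("eye with equalization:   ", round(worst_case_eye(equalized), 2))
```

The filter removes the first post-cursor but creates a smaller second one, which is why practical equalizers use more taps or combine feed-forward and decision-feedback stages.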

02 Equalization and compensation techniques for cable loss

Signal processing methods are employed to compensate for attenuation and distortion that occurs during signal transmission through cables. These techniques include adaptive equalization algorithms, pre-emphasis, and de-emphasis circuits that adjust signal characteristics to counteract frequency-dependent losses. Digital and analog compensation methods work to restore signal amplitude and timing characteristics that degrade over cable length.

03 High-frequency signal transmission and loss mitigation

Specialized approaches address the challenges of transmitting high-frequency signals where cable losses are more pronounced. These solutions involve impedance matching techniques, termination strategies, and the use of low-loss materials optimized for high-frequency performance. Methods focus on reducing skin effect losses, dielectric losses, and reflection-related signal degradation that become significant at elevated frequencies.

04 Testing and measurement methods for signal integrity assessment

Diagnostic techniques and measurement systems are utilized to evaluate cable performance and signal integrity characteristics. These methods include time-domain reflectometry, frequency-domain analysis, and eye diagram measurements to quantify signal quality, identify sources of degradation, and verify compliance with performance specifications. Testing approaches enable characterization of insertion loss, return loss, and other critical parameters.

05 Connector and interface optimization for signal integrity

Interface designs and connector technologies are optimized to minimize signal integrity degradation at connection points. These solutions address impedance discontinuities, contact resistance, and electromagnetic coupling issues that occur at cable-to-device interfaces. Advanced connector designs incorporate features such as controlled impedance transitions, improved shielding continuity, and reduced parasitic effects to maintain signal quality across connections.
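The effect of an impedance discontinuity at a connector can be estimated from the reflection coefficient, Gamma = (Z_L - Z_0) / (Z_L + Z_0), and the corresponding return loss, -20 * log10(|Gamma|). The sketch below assumes purely resistive impedances, and the 100 ohm cable and 110 ohm connector are hypothetical example values.

```python
import math

def reflection_coefficient(z_load, z_line):
    """Voltage reflection coefficient at a step from z_line to z_load."""
    return (z_load - z_line) / (z_load + z_line)

def return_loss_db(z_load, z_line):
    """Return loss in dB; infinite when the impedances match exactly."""
    gamma = abs(reflection_coefficient(z_load, z_line))
    if gamma == 0.0:
        return math.inf
    return -20.0 * math.log10(gamma)

# Hypothetical example: 100 ohm differential cable into a 110 ohm connector.
gamma = reflection_coefficient(110.0, 100.0)
print(f"reflection coefficient: {gamma:.4f}")
print(f"return loss: {return_loss_db(110.0, 100.0):.1f} dB")
```

A higher return loss means less reflected energy, so tighter impedance control at the interface shows up directly as a larger return-loss figure in the measurements described under testing.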

Key Players in High-Speed Cable and Connector Industry

The signal integrity versus cable loss technology landscape represents a mature yet rapidly evolving market driven by increasing data transmission demands across telecommunications, computing, and automotive sectors. The industry is experiencing significant growth as 5G deployment, high-speed computing, and IoT applications intensify requirements for low-loss, high-fidelity signal transmission. Technology maturity varies significantly among key players, with established semiconductor leaders like Texas Instruments, Qualcomm, and Samsung Electronics driving advanced signal processing solutions, while infrastructure specialists including IBM, Huawei, and Samtec focus on high-performance interconnect systems. Companies like Amphenol and Belden leverage decades of connector expertise, whereas newer entrants like MediaTek and Lattice Semiconductor bring innovative FPGA and processing capabilities. The competitive landscape spans from component manufacturers to system integrators, reflecting the technology's critical role across multiple industries requiring reliable, high-speed data transmission with minimal signal degradation.

MARVELL ASIA PTE LTD

Technical Solution: Marvell specializes in high-performance connectivity solutions that address signal integrity and cable loss through advanced PHY (Physical Layer) technologies. Their approach combines adaptive equalization, clock and data recovery (CDR) circuits, and sophisticated signal processing algorithms to maintain data integrity across various cable types and lengths. The company's Ethernet PHY solutions feature advanced DSP-based equalization that can compensate for up to 40 dB of cable loss, supporting 10G, 25G, and 100G data rates. Their technology includes programmable pre-emphasis, continuous-time linear equalization, and decision feedback equalization to optimize signal quality in real time based on channel characteristics.

Strengths: Strong expertise in high-speed networking, comprehensive PHY solution portfolio, excellent power efficiency. Weaknesses: Limited presence in consumer markets, primarily focused on data center and enterprise applications.

Texas Instruments Incorporated

Technical Solution: TI develops advanced signal conditioning and equalization solutions to combat cable loss in high-speed digital communications. Their approach includes adaptive equalization algorithms, pre-emphasis drivers, and continuous-time linear equalizers (CTLE) that automatically adjust signal amplitude and timing to compensate for frequency-dependent losses in cables. The company's SerDes (Serializer/Deserializer) technology incorporates decision feedback equalizers (DFE) and feed-forward equalizers (FFE) to maintain signal integrity across various cable lengths and types, supporting data rates up to 28 Gbps and beyond.

Strengths: Industry-leading analog and mixed-signal expertise, comprehensive equalization portfolio, proven reliability in automotive and industrial applications. Weaknesses: Higher power consumption compared to some competitors, complex implementation requiring specialized design knowledge.

Core Innovations in Low-Loss Cable Design

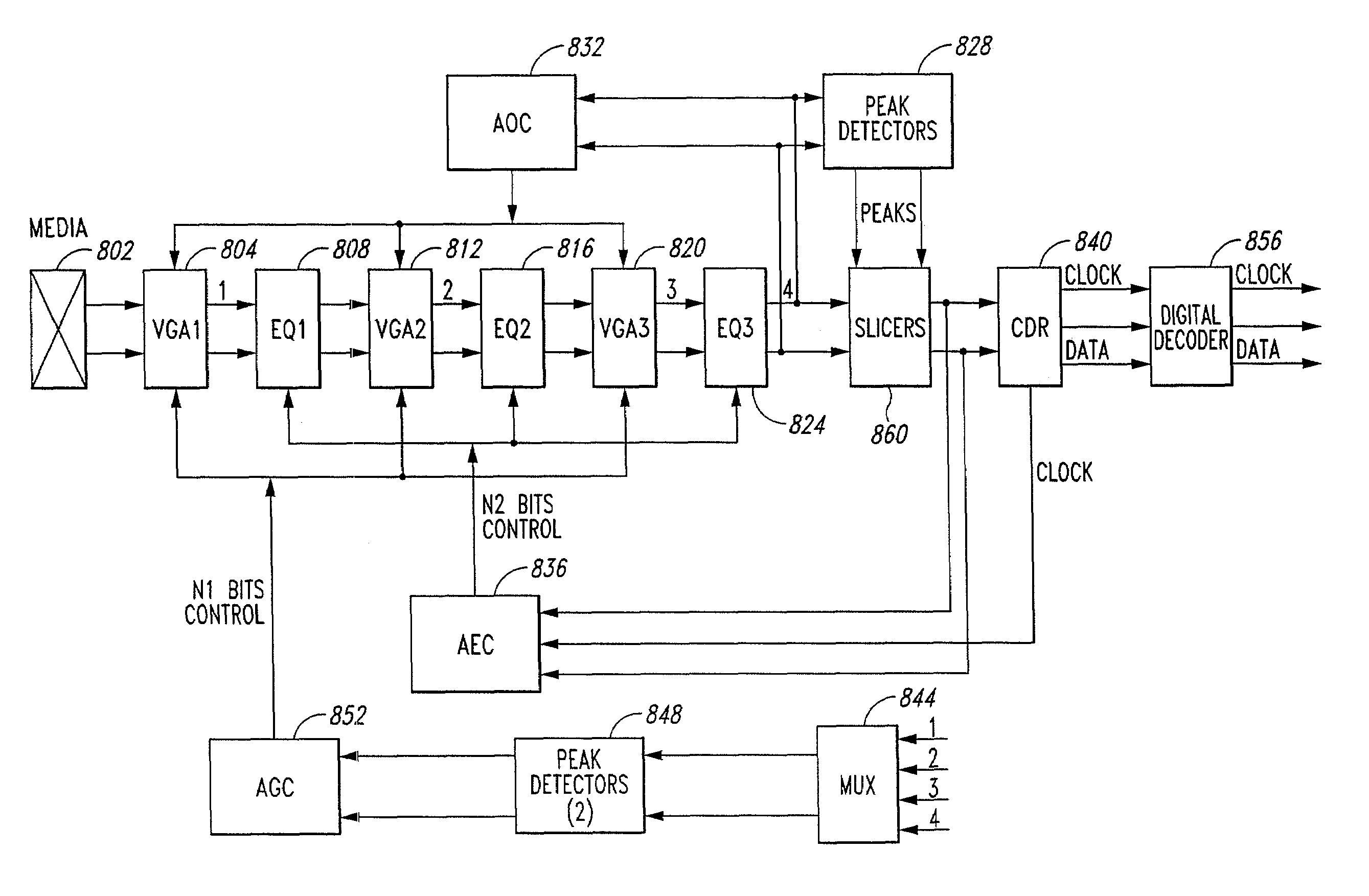

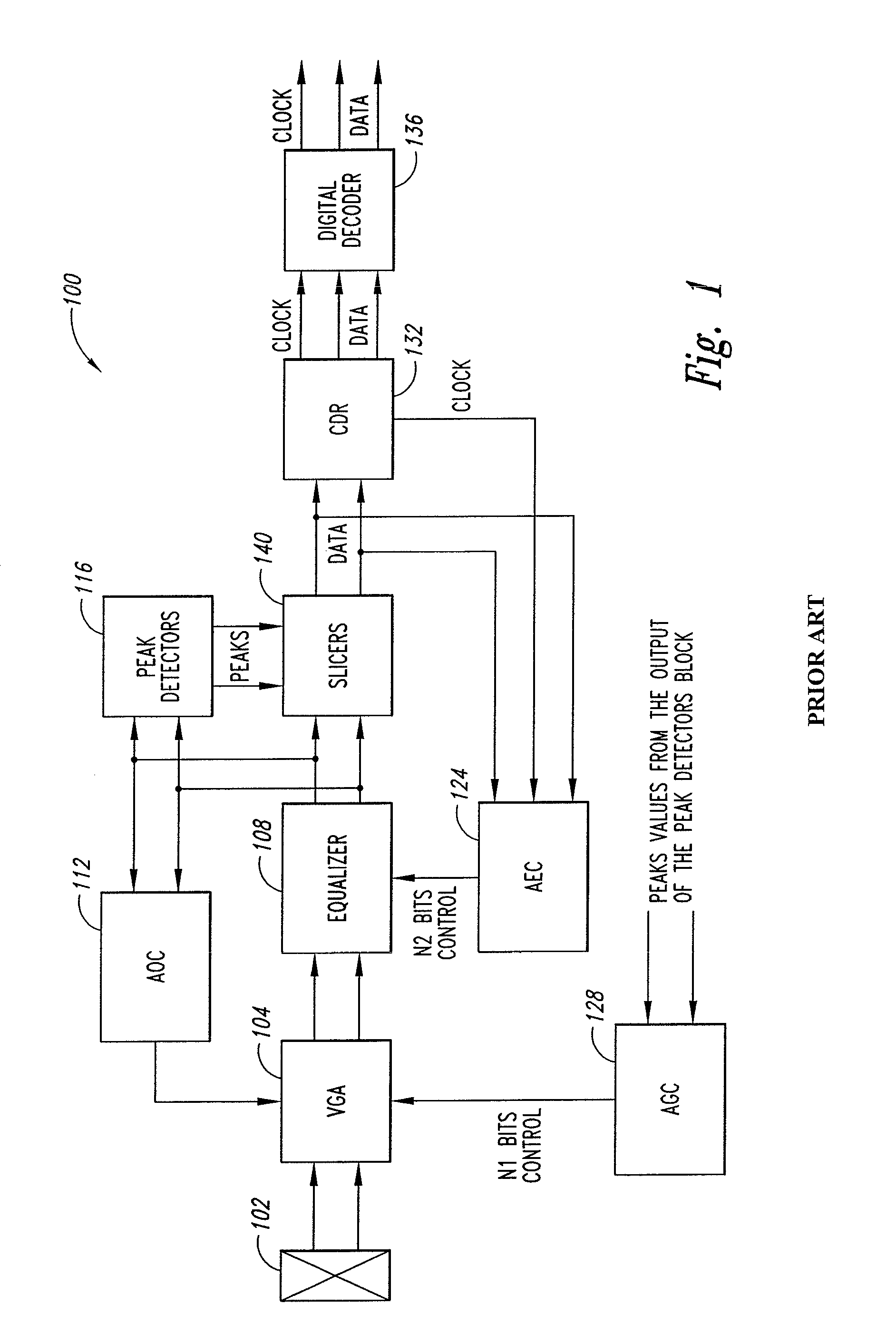

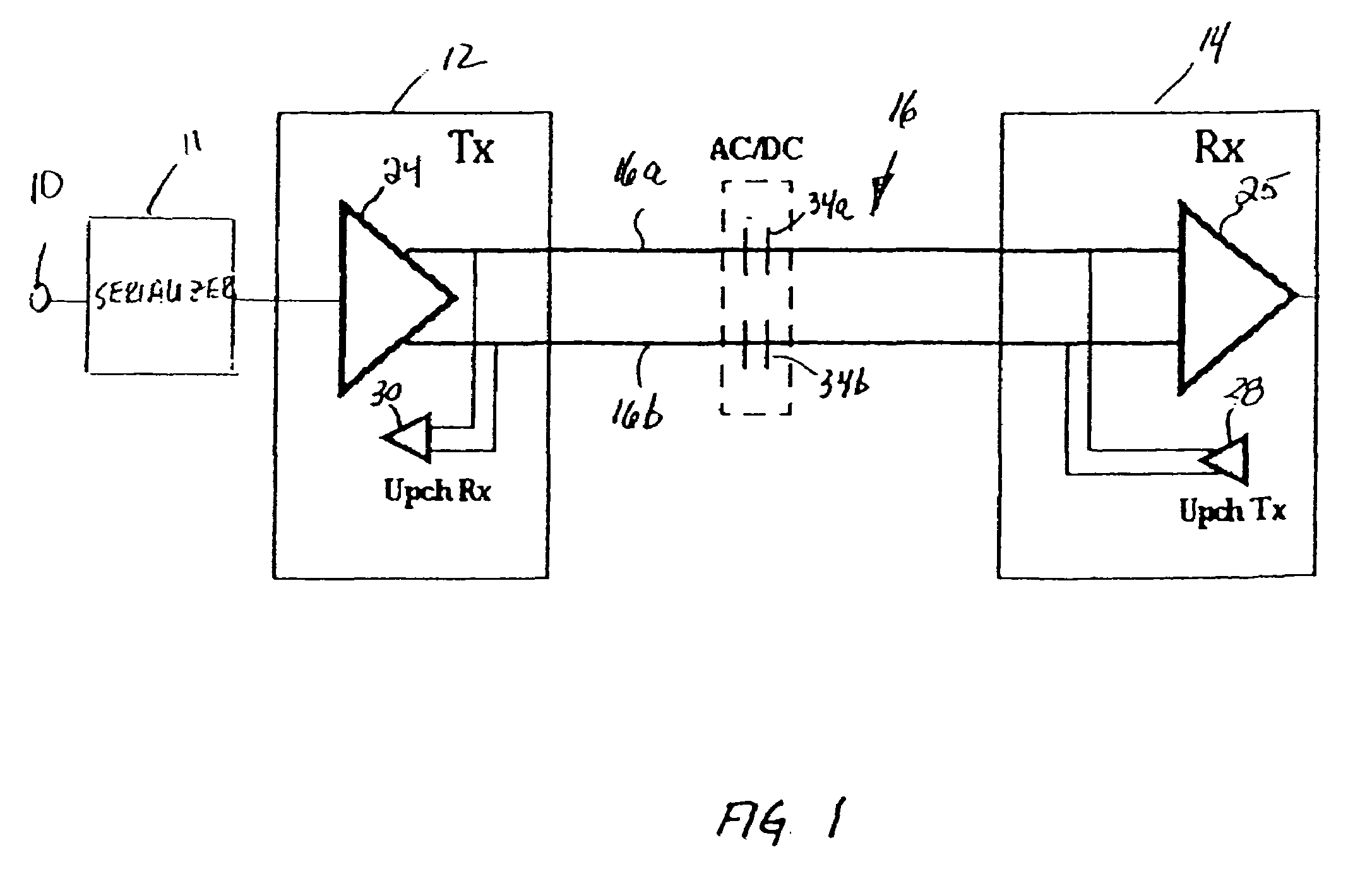

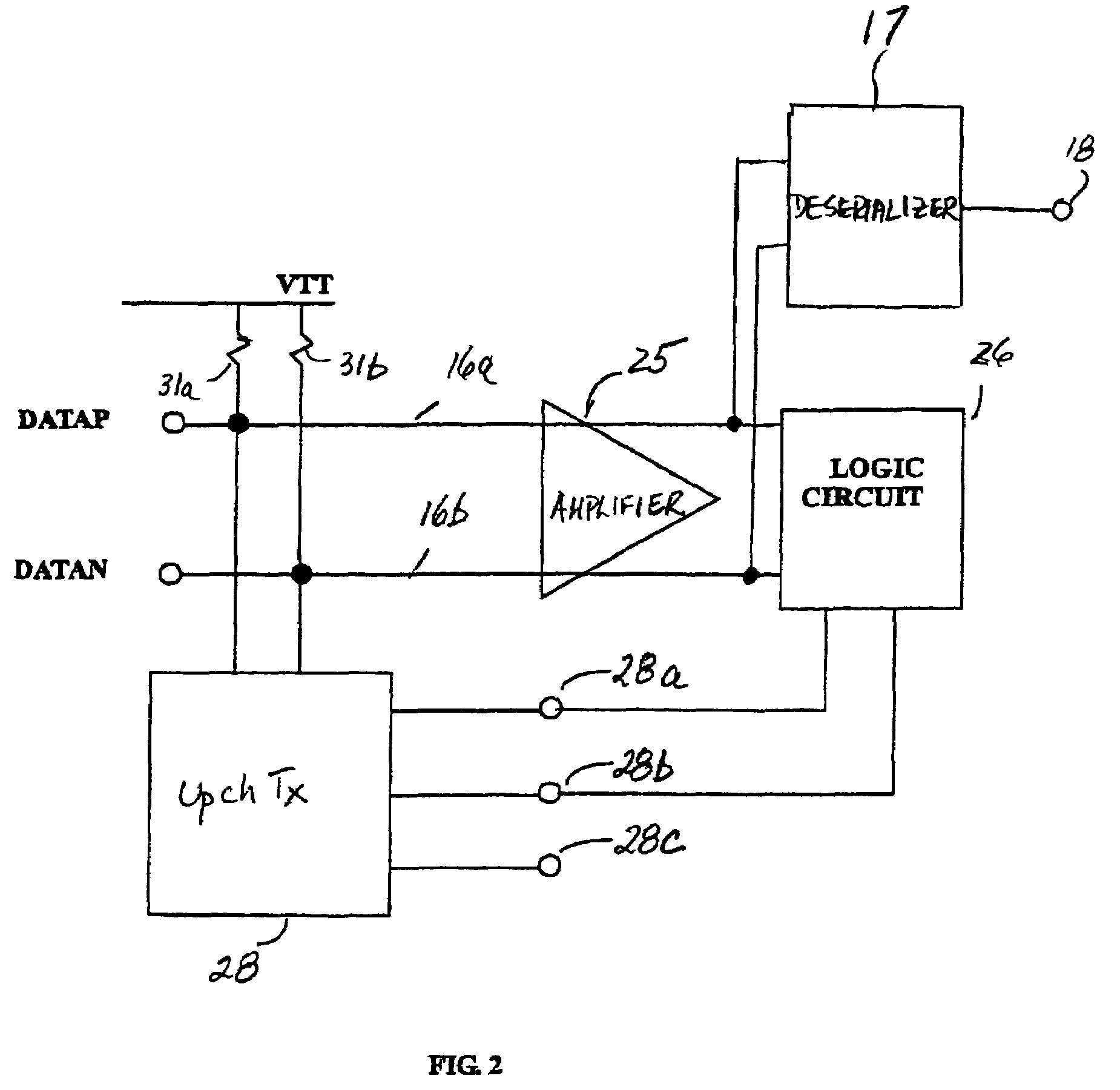

Dynamic range signal to noise optimization system and method for receiver

Patent | Active | US7440525B2

Innovation

- A dynamic range optimization system that employs a series connection of variable gain amplifiers (VGAs) and equalizers, with independent peak detectors and gain control circuits to adjust VGA gain and equalizer coefficients separately, ensuring optimal compensation for both frequency-dependent and independent losses without degrading the SNR.

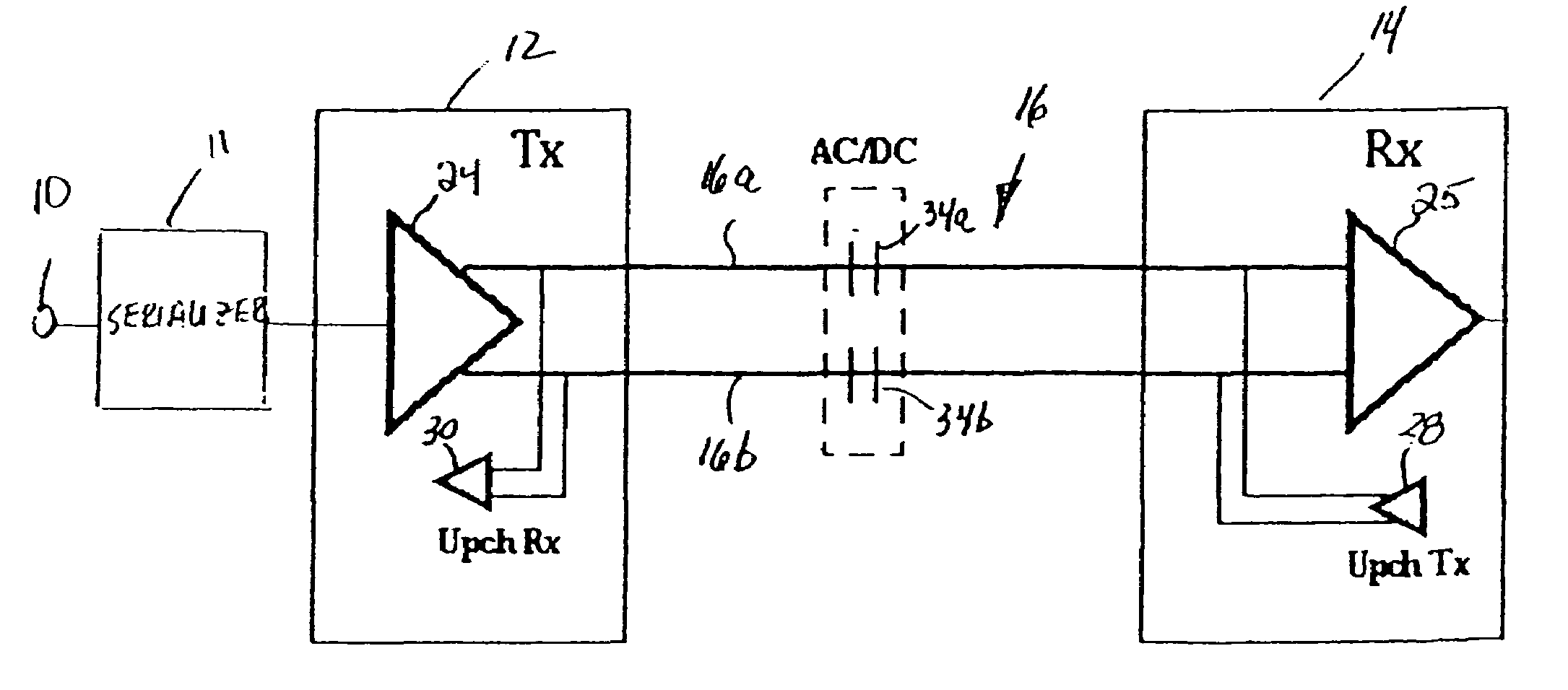

Data transceiver and method for equalizing the data eye of a differential input data signal

Patent | Inactive | US7352815B2

Innovation

- A data transceiver system that dynamically equalizes differential data signals by sensing signal integrity and adjusting amplification based on feedback from the receiver to control the data eye, using a variable frequency-selective gain driver circuit and logic circuit to determine and correct the Bit Error Rate.

Industry Standards and Compliance Requirements

Signal integrity and cable loss management are governed by a comprehensive framework of industry standards that ensure reliable high-speed data transmission across various applications. These standards establish critical parameters for acceptable signal degradation, insertion loss limits, and performance metrics that manufacturers and system integrators must adhere to when designing and implementing cable solutions.

The IEEE 802.3 Ethernet standards family provides fundamental specifications for cable performance in networking applications. IEEE 802.3bz defines requirements for 2.5GBASE-T and 5GBASE-T over existing Category 5e and Category 6 cabling, establishing specific insertion loss budgets and signal-to-noise ratio thresholds. For higher-speed applications, IEEE 802.3an specifies 10GBASE-T and IEEE 802.3bq specifies 25GBASE-T and 40GBASE-T, each with detailed alien crosstalk and return loss requirements.

TIA/EIA structured cabling standards, particularly the TIA-568 series, establish comprehensive performance categories for copper cabling systems. Category 6A is specified up to 500 MHz over a 100 m channel, while Category 8 extends the specified bandwidth to 2000 MHz over a much shorter 30 m channel. In both cases the permitted insertion loss at the upper band edge is on the order of tens of dB, and the exact limits should be taken from the current edition of the standard. These standards also define near-end crosstalk (NEXT) and far-end crosstalk (FEXT) requirements that directly impact signal integrity in multi-pair cable environments.

For fiber optic applications, the ITU-T G.652 through G.657 recommendations specify attenuation coefficients and dispersion characteristics for single-mode fiber. They typically require attenuation below 0.4 dB/km at 1310 nm and 0.3 dB/km at 1550 nm. Multimode fiber grades such as OM3 and OM4, defined in ISO/IEC 11801, establish modal bandwidth requirements that determine maximum transmission distances for various data rates.
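The attenuation coefficients above feed directly into a link loss estimate: fiber loss per kilometre times length, plus an allowance for each connector and splice. The sketch below is a minimal version of that arithmetic. The 0.4 dB/km figure is the 1310 nm limit from the text, while the per-connector and per-splice losses and the link layout are hypothetical placeholders.

```python
def fiber_link_loss_db(length_km, atten_db_per_km,
                       n_connectors, connector_loss_db,
                       n_splices, splice_loss_db):
    """Total passive loss of a fiber link in dB."""
    return (length_km * atten_db_per_km
            + n_connectors * connector_loss_db
            + n_splices * splice_loss_db)

# 0.4 dB/km is the 1310 nm limit quoted in the text.
# Connector and splice losses below are assumed example values.
loss = fiber_link_loss_db(
    length_km=10.0, atten_db_per_km=0.4,
    n_connectors=2, connector_loss_db=0.5,
    n_splices=4, splice_loss_db=0.1,
)
print(f"estimated link loss: {loss:.1f} dB")
```

Comparing this figure with the transceiver's power budget gives the remaining margin. On short links the connector allowance can outweigh the fiber attenuation itself.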

Compliance verification requires standardized testing methodologies defined by IEC 61935 series for copper cabling and IEC 61280 series for fiber optics. These standards specify measurement procedures, test equipment calibration requirements, and acceptable measurement uncertainties. Field testing must demonstrate compliance with channel and permanent link models, ensuring installed systems meet theoretical performance specifications under real-world conditions.

Cost-Performance Trade-offs in Cable Selection

Cable selection in high-speed digital systems represents a critical balance between performance requirements and economic constraints. The relationship between signal integrity and cable loss directly impacts the total cost of ownership, as higher-performance cables typically command premium pricing while delivering superior electrical characteristics. Organizations must carefully evaluate whether the additional investment in low-loss cables justifies the performance gains, particularly when considering system-wide deployment costs.

The cost spectrum for high-speed cables varies significantly based on construction quality and materials. Standard copper cables with basic shielding may cost 50-70% less than premium low-loss alternatives featuring advanced dielectric materials and precision manufacturing. However, this initial cost differential must be weighed against potential system-level expenses including signal conditioning equipment, repeaters, and increased power consumption required to compensate for higher cable losses.

Performance scaling demonstrates non-linear cost relationships across different cable categories. Moving from Category 6A to Category 8 cables can increase unit costs by 200-300%, yet the supported data rate rises only from 10GBASE-T to 25GBASE-T or 40GBASE-T, roughly a 2.5-4x increase, and only over channels of up to 30 m. Similarly, fiber optic solutions, while offering superior signal integrity, introduce additional costs for transceivers, connectors, and specialized installation expertise that can multiply the total implementation expense by factors of 4-6x compared to copper alternatives.

Economic optimization requires analyzing the total system cost rather than individual component pricing. Lower-cost cables with higher insertion loss may necessitate additional amplification stages, consuming more power and requiring additional cooling infrastructure. These operational expenses can accumulate over the system lifecycle, potentially exceeding the initial savings from selecting budget cable options.
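The total-system view can be made concrete with a simple per-link comparison that adds cable price, any signal conditioning hardware, and the energy that hardware consumes over the service life. Every number in the sketch below is a hypothetical placeholder chosen to show the arithmetic, not market data, and cooling overhead is left out for brevity.

```python
def total_link_cost(cable_cost, conditioning_cost,
                    extra_power_w, years, price_per_kwh):
    """Purchase cost plus lifetime energy cost of one link."""
    hours = years * 365 * 24
    energy_cost = extra_power_w / 1000.0 * hours * price_per_kwh
    return cable_cost + conditioning_cost + energy_cost

# Hypothetical inputs: a budget cable that needs a retimer drawing 1.5 W,
# versus a premium low-loss cable that needs no extra hardware.
budget = total_link_cost(cable_cost=20.0, conditioning_cost=30.0,
                         extra_power_w=1.5, years=5, price_per_kwh=0.12)
premium = total_link_cost(cable_cost=50.0, conditioning_cost=0.0,
                          extra_power_w=0.0, years=5, price_per_kwh=0.12)

print(f"budget cable, 5-year cost:  {budget:.2f}")
print(f"premium cable, 5-year cost: {premium:.2f}")
```

With these assumed inputs the cheaper cable ends up costing more over five years. Changing the power draw, energy price, or service life can reverse that result, which is why the comparison has to be run with figures from the actual deployment.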

Market dynamics further influence cost-performance calculations, as volume procurement can significantly reduce per-unit costs for premium cables. Large-scale deployments may achieve 30-40% cost reductions through bulk purchasing agreements, effectively narrowing the price gap between standard and high-performance options. Additionally, standardizing on higher-grade cables can reduce inventory complexity and maintenance costs while providing headroom for future bandwidth requirements.

The decision framework must incorporate application-specific requirements, deployment scale, and lifecycle considerations to identify the optimal cost-performance intersection point for each implementation scenario.
