Visual Servoing vs Smart Sensor Networks: A Detailed Comparison

APR 13, 2026 | 8 MIN READ

Visual Servoing and Smart Sensor Networks Background and Objectives

Visual servoing represents a sophisticated control methodology that integrates computer vision with robotic systems to achieve precise positioning and manipulation tasks. This technology emerged from the convergence of robotics, computer vision, and control theory, enabling robots to use visual feedback for real-time decision making and motion control. The fundamental principle involves processing visual information from cameras to generate control signals that guide robotic actuators toward desired positions or trajectories.
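As a minimal sketch of that principle, the classical image-based control law sets the camera velocity proportional to the error between the current and desired image features. The example below assumes a single point feature, a known constant depth `Z`, and camera motion restricted to x/y translation, so the interaction matrix reduces to `L = -(1/Z) * I`. The function names, gain, and time step are illustrative assumptions, not taken from any particular system.

```python
def ibvs_step(s, s_star, depth, gain):
    """Camera velocity (vx, vy) for one point feature, planar translation only."""
    ex, ey = s[0] - s_star[0], s[1] - s_star[1]
    # With L = -(1/Z) * I, the law v = -gain * L^{-1} * e becomes v = gain * Z * e.
    return (gain * depth * ex, gain * depth * ey)


def simulate(s0, s_star, depth=1.0, gain=2.0, dt=0.01, steps=500):
    """Euler-integrate the closed loop and return the final feature position."""
    s = list(s0)
    for _ in range(steps):
        vx, vy = ibvs_step(s, s_star, depth, gain)
        # Feature motion induced by the camera: s_dot = L v = -(1/Z) v.
        s[0] += dt * (-vx / depth)
        s[1] += dt * (-vy / depth)
    return tuple(s)


if __name__ == "__main__":
    # Feature starts offset from the image centre and is driven toward it.
    print(simulate((0.2, -0.1), (0.0, 0.0)))
```

Under these assumptions the feature error decays exponentially at a rate set by the gain. A full six-degree-of-freedom system would use the complete interaction matrix and its pseudo-inverse instead of this scalar shortcut.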

The evolution of visual servoing has been driven by advances in image processing algorithms, computational power, and sensor miniaturization. Early implementations focused on simple geometric tracking, while modern systems incorporate machine learning, deep neural networks, and advanced feature extraction techniques. This progression has expanded applications from industrial assembly lines to autonomous vehicles, medical robotics, and unmanned aerial systems.

Smart sensor networks constitute a distributed sensing paradigm that connects multiple intelligent sensors through wireless or wired communication protocols. These networks emerged from the intersection of sensor technology, wireless communications, and distributed computing. Each sensor node possesses computational capabilities, enabling local data processing, decision making, and collaborative information sharing across the network infrastructure.

The development trajectory of smart sensor networks has been shaped by miniaturization trends, energy efficiency improvements, and standardization of communication protocols. Initial deployments concentrated on environmental monitoring and industrial process control, but contemporary applications encompass smart cities, precision agriculture, healthcare monitoring, and Internet of Things ecosystems.

Both technologies share the objective of enhancing automation and improving system intelligence through sensing and processing. Visual servoing aims to achieve precise control through visual feedback loops, while smart sensor networks focus on comprehensive environmental awareness through collaborative sensing. The convergence of these technologies represents a significant opportunity for creating more robust and adaptive autonomous systems.

The primary technical objectives include developing real-time processing capabilities, ensuring system reliability under varying environmental conditions, and achieving seamless integration with existing automation infrastructure. Performance metrics encompass accuracy, response time, energy efficiency, and scalability across different operational scenarios.

Current research directions emphasize the integration of artificial intelligence, edge computing capabilities, and advanced communication protocols to enhance both visual servoing precision and sensor network coordination. These developments are establishing foundations for next-generation autonomous systems that combine visual intelligence with distributed sensing capabilities.

Current State and Challenges in Visual Servoing vs Smart Sensors

Visual servoing technology has reached significant maturity in controlled industrial environments, with established applications in robotic assembly, quality inspection, and automated manufacturing. Current systems demonstrate high precision in structured settings, achieving sub-millimeter accuracy for tasks such as pick-and-place operations and component alignment. However, the technology faces substantial limitations when deployed in dynamic or unstructured environments where lighting conditions vary, objects move unpredictably, or visual occlusions occur frequently.

Smart sensor networks have evolved from simple data collection systems to sophisticated distributed intelligence platforms capable of real-time processing and autonomous decision-making. Modern implementations leverage edge computing capabilities, enabling local data processing and reducing latency in critical applications. These networks excel in large-scale monitoring scenarios, environmental sensing, and infrastructure management, where distributed intelligence provides comprehensive situational awareness across extensive geographical areas.

The primary challenge confronting visual servoing lies in its dependency on consistent visual input and computational processing power. Real-time image processing requirements often necessitate high-performance computing resources, limiting deployment in resource-constrained environments. Additionally, visual servoing systems struggle with robustness issues when encountering unexpected visual scenarios, requiring extensive calibration and environmental adaptation procedures.

Smart sensor networks face distinct challenges related to network reliability, data synchronization, and energy management across distributed nodes. Maintaining consistent communication protocols while ensuring fault tolerance remains problematic, particularly in harsh environmental conditions. The complexity of coordinating multiple sensor inputs and managing data fusion algorithms presents ongoing technical hurdles that impact system reliability and response times.

Integration challenges emerge when attempting to combine visual servoing with smart sensor networks, as the two technologies operate on different temporal scales and data processing paradigms. Visual servoing requires high-frequency, low-latency feedback loops, while sensor networks typically operate on longer time horizons with emphasis on data aggregation and trend analysis. Bridging these operational differences requires sophisticated middleware solutions and standardized communication protocols.
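One simple way to bridge the two time scales is a non-blocking "latest value" buffer: the sensor network publishes whenever it has data, and the servo loop reads the most recent sample each cycle, rejecting it once it is too old. The sketch below is an assumption-laden illustration of that idea. The class name, the `max_age` threshold, and the 1 kHz / 1 Hz rates are hypothetical, and both sides are assumed to share a common clock.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Sample:
    value: float
    timestamp: float  # seconds, on a clock shared by both subsystems


class LatestValueBridge:
    """Holds the most recent slow-rate sample for a fast control loop."""

    def __init__(self, max_age: float):
        self.max_age = max_age
        self._latest: Optional[Sample] = None

    def publish(self, value: float, timestamp: float) -> None:
        # Called by the sensor-network side, e.g. once per second.
        self._latest = Sample(value, timestamp)

    def read(self, now: float) -> Optional[float]:
        # Called by the servo loop every cycle; never blocks.
        if self._latest is None or now - self._latest.timestamp > self.max_age:
            return None  # no data yet, or data too stale to trust
        return self._latest.value


def run_demo() -> list:
    bridge = LatestValueBridge(max_age=1.5)
    seen = []
    for tick in range(4000):  # 1 kHz loop for 4 s, counted in integer milliseconds
        now = tick / 1000.0
        # The network reports at t = 0 s and t = 1 s, then drops out.
        if tick % 1000 == 0 and tick < 2000:
            bridge.publish(value=20.0 + tick / 1000.0, timestamp=now)
        if tick % 500 == 0:  # record the loop's view every 0.5 s
            seen.append((now, bridge.read(now)))
    return seen
```

Returning `None` for stale data forces the control loop to decide explicitly how to degrade, for example by falling back to vision-only feedback, rather than silently acting on outdated network information.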

Current technological gaps include limited interoperability between visual servoing systems and existing sensor network infrastructures, insufficient standardization of data formats and communication protocols, and inadequate real-time processing capabilities for handling the massive data streams generated by integrated systems. These limitations constrain the development of truly autonomous systems that could leverage the complementary strengths of both technologies.

Existing Visual Servoing and Smart Sensor Solutions

01 Visual servoing control systems for robotic manipulation

Visual servoing techniques enable robots to use visual feedback from cameras to control their motion and perform precise manipulation tasks. These systems process image data in real-time to calculate position errors and adjust robot trajectories accordingly. The integration of visual sensors with control algorithms allows for adaptive positioning and tracking of objects in dynamic environments, improving accuracy and flexibility in automated manufacturing and assembly operations.

- Distributed sensor network architectures and communication protocols: Smart sensor networks utilize distributed architectures where multiple sensor nodes communicate and coordinate to collect and process environmental data. These networks employ efficient communication protocols to manage data transmission, reduce power consumption, and ensure reliable connectivity among nodes. The distributed approach enables scalability, fault tolerance, and collaborative sensing capabilities for monitoring large areas or complex systems.

- Image processing and feature extraction for visual guidance: Advanced image processing algorithms are employed to extract relevant features from visual data for guidance and navigation purposes. These techniques include edge detection, pattern recognition, and object identification to enable systems to interpret visual information and make decisions. The processed visual features serve as input for control systems, allowing for autonomous navigation and precise positioning in various applications.

- Sensor fusion and multi-modal data integration: Integration of data from multiple sensor types enhances the robustness and accuracy of perception systems. Sensor fusion techniques combine information from visual sensors, proximity sensors, and other modalities to create comprehensive environmental models. This multi-modal approach compensates for individual sensor limitations and provides redundancy, resulting in more reliable system performance under varying conditions.

- Adaptive learning and intelligent control in sensor networks: Machine learning and adaptive algorithms enable sensor networks to optimize their performance based on operational experience and changing conditions. These intelligent systems can adjust sensing parameters, reconfigure network topology, and improve decision-making over time. The incorporation of learning capabilities allows for autonomous optimization of resource allocation, energy management, and task scheduling in complex sensor network deployments.
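The sensor fusion item in the list above can be illustrated with inverse-variance weighting, one of the simplest fusion rules. This is a sketch under stated assumptions: each sensor reports an unbiased estimate of the same quantity with a known variance, and the errors are independent. The function name and the example numbers are hypothetical.

```python
def fuse(measurements):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance.
    """
    weights = [1.0 / var for _, var in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    return value, 1.0 / total


if __name__ == "__main__":
    camera = (1.02, 0.0004)     # distance in metres, variance in m^2
    proximity = (0.98, 0.0016)  # a noisier second sensor
    print(fuse([camera, proximity]))
```

The fused variance is always smaller than that of the best individual sensor, which is the redundancy benefit described above. Real systems with correlated or time-varying errors typically need a filter-based approach instead.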

02 Distributed sensor network architectures and communication protocols

Smart sensor networks utilize distributed architectures where multiple sensor nodes communicate and coordinate to collect and process environmental data. These networks employ efficient communication protocols to manage data transmission, reduce power consumption, and ensure reliable connectivity among nodes. The distributed approach enables scalable monitoring systems that can cover large areas while maintaining low latency and high data integrity through mesh networking and multi-hop communication strategies.

03 Image processing and feature extraction for visual tracking

Advanced image processing algorithms are employed to extract relevant features from visual data for object detection, recognition, and tracking purposes. These techniques include edge detection, pattern matching, and machine learning-based classification methods that enable systems to identify and follow targets in complex scenes. The processed visual information is used to generate control signals for servo systems or to update network nodes with spatial awareness data.

04 Sensor fusion and multi-modal data integration

Integration of multiple sensor types including visual, proximity, and environmental sensors provides comprehensive situational awareness in smart networks. Sensor fusion algorithms combine data from heterogeneous sources to improve measurement accuracy, reduce uncertainty, and enable robust decision-making. This multi-modal approach enhances system reliability by compensating for individual sensor limitations and providing redundant information channels for critical applications.

05 Adaptive control and machine learning for autonomous systems

Machine learning techniques and adaptive control strategies enable visual servoing and sensor networks to learn from experience and optimize their performance over time. These systems can automatically adjust parameters, predict system behavior, and adapt to changing environmental conditions without manual intervention. Neural networks and reinforcement learning algorithms are applied to improve tracking accuracy, optimize resource allocation in sensor networks, and enhance overall system intelligence for autonomous operation.

Key Players in Visual Servoing and Smart Sensor Industries

The combined visual servoing and smart sensor network landscape is technically mature yet still in a growth phase, with market expansion driven by automation and IoT adoption. Established players such as Sony, Samsung, Google, and Siemens have developed sophisticated imaging and sensor technologies. Industrial automation leaders including FANUC, Rockwell Automation, and Caterpillar showcase proven visual servoing implementations in manufacturing and robotics. On the smart sensor network side, companies such as Cisco, AT&T, Qualcomm, and IBM provide networking infrastructure and edge computing solutions. Research institutions such as Beihang University and South China University of Technology continue advancing both domains. The competitive landscape shows convergence between traditional automation companies and tech giants, while emerging players such as UISEE and Whisker Labs demonstrate specialized applications in autonomous systems and IoT sensing, indicating a market with diverse technological approaches.

Samsung Electronics Co., Ltd.

Technical Solution: Samsung has developed smart sensor network solutions for IoT applications, including their SmartThings platform that connects over 40 million devices worldwide. Their sensor networks utilize Zigbee 3.0 and Thread protocols, supporting mesh networking with automatic device discovery and configuration. The system includes edge computing capabilities with on-device AI processing, reducing cloud dependency by 60%. Their visual sensing solutions integrate with mobile devices and smart home systems, providing real-time monitoring and automated responses.

Strengths: Consumer electronics expertise, large ecosystem integration, cost-effective solutions for mass market. Weaknesses: Less specialized for industrial applications, limited high-precision visual servoing capabilities.

Google LLC

Technical Solution: Google has developed advanced visual servoing systems integrated with TensorFlow and computer vision APIs, enabling real-time object tracking and manipulation with sub-pixel accuracy. Their approach combines deep learning-based visual perception with adaptive control algorithms, achieving processing speeds of up to 30 FPS for robotic applications. The system utilizes cloud-based processing capabilities and edge computing to reduce latency to under 50ms, making it suitable for industrial automation and autonomous vehicle applications.

Strengths: Powerful AI integration, cloud infrastructure, real-time processing capabilities. Weaknesses: Dependency on internet connectivity, potential privacy concerns with cloud processing.

Core Innovations in Vision-Guided Control Technologies

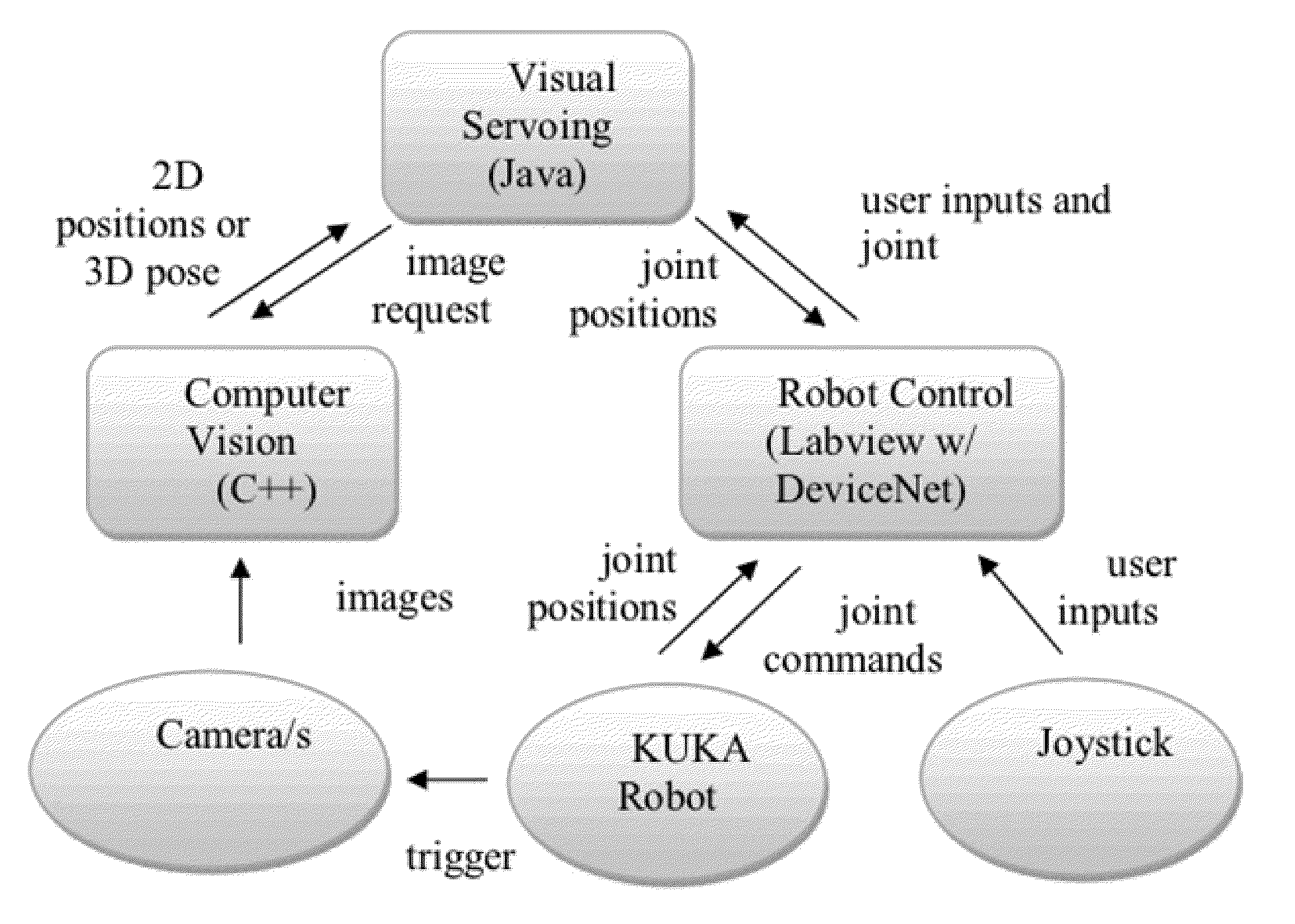

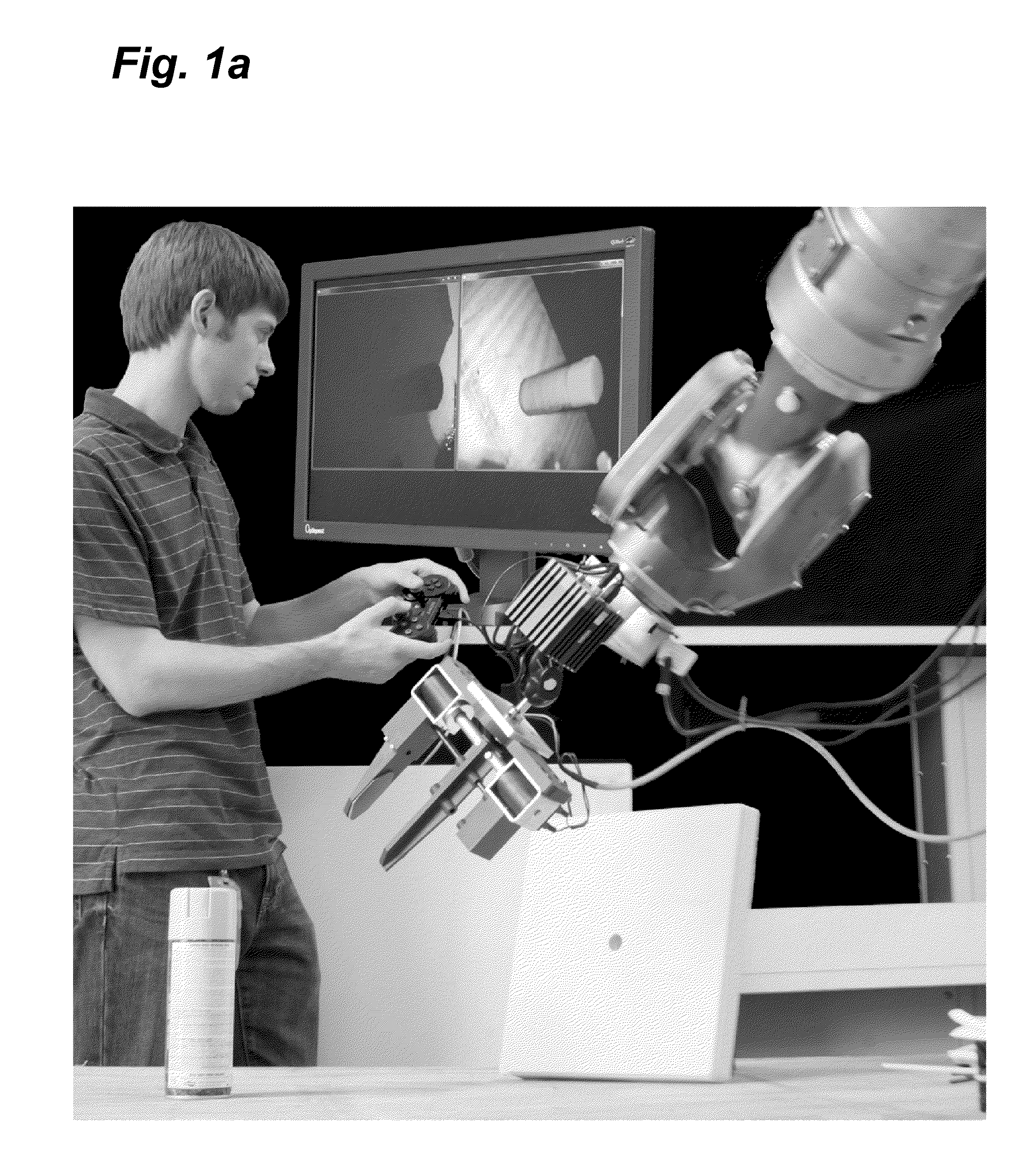

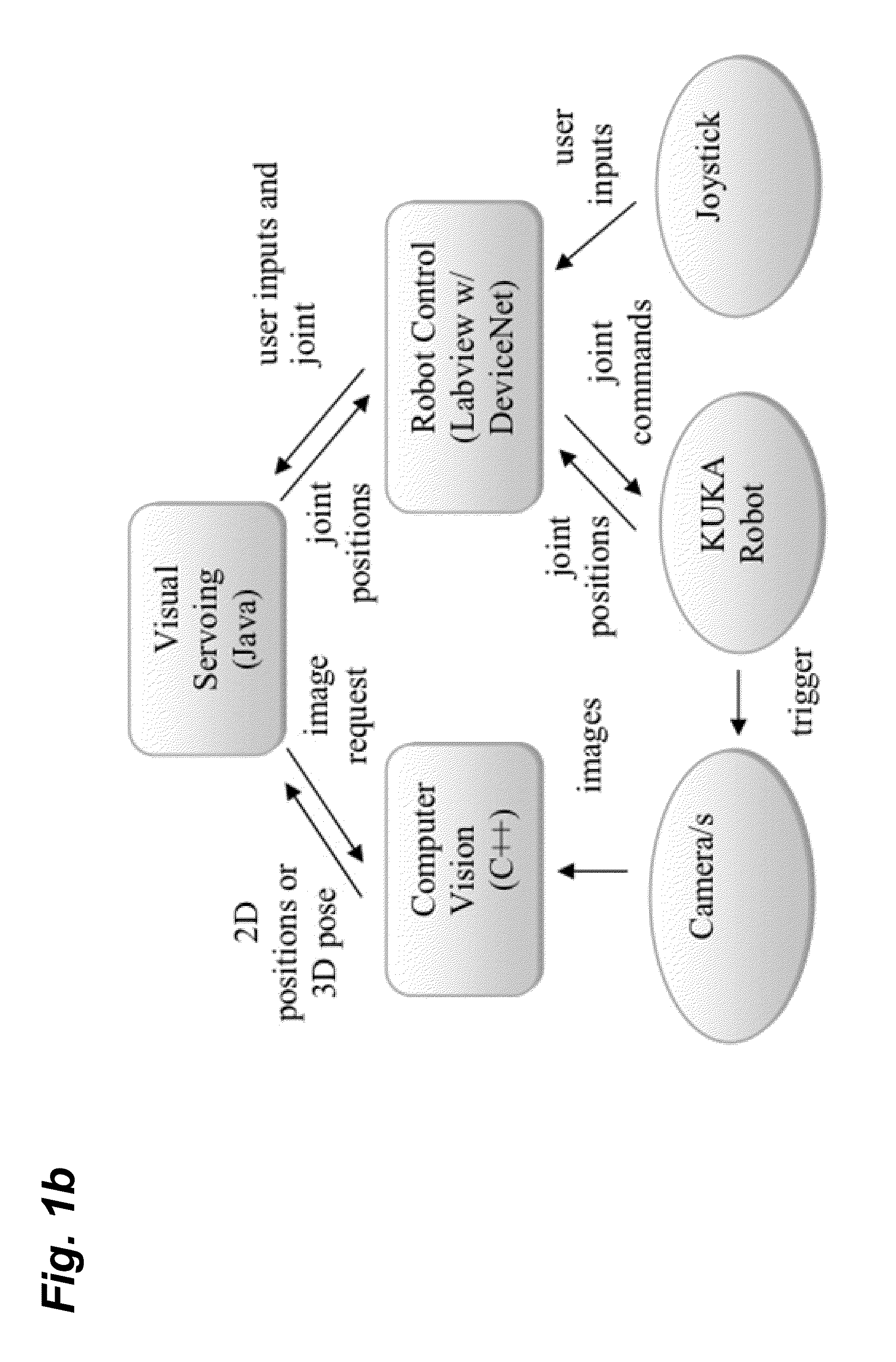

Systems and methods for operating robots using visual servoing

Patent (Inactive): US20130041508A1

Innovation

- The implementation of visual servoing systems that use onboard cameras and sensors to translate commanded movements into intended robot movements in 6D space, allowing intuitive control through a joystick or similar controller without requiring precise knowledge of robot kinematics, and utilizing algorithms like Image-Based and Position-Based visual servoing to build a control map for robot control.

An apparatus and a method for obtaining a registration error map representing a level of sharpness of an image

Patent: WO2016202946A1

Innovation

- An apparatus and method using four-dimensional light-field data to generate a registration error map by computing the intersection of a re-focusing surface from a three-dimensional model and a focal stack, determining the re-focusing distance for each pixel, and displaying a map representing the level of sharpness of pixels in the image, allowing for improved visual guidance and quality control.

Standardization Framework for Vision-Sensor Systems

The establishment of comprehensive standardization frameworks for vision-sensor systems represents a critical infrastructure requirement for advancing both visual servoing and smart sensor network technologies. Current standardization efforts face significant challenges due to the heterogeneous nature of vision sensors, varying communication protocols, and diverse application requirements across industrial automation, robotics, and distributed sensing domains.

Existing standardization initiatives primarily focus on isolated aspects such as camera interfaces, image formats, or network communication protocols. The IEEE 1588 Precision Time Protocol and GenICam standard provide foundational elements for vision system synchronization and camera control interfaces. However, these standards lack comprehensive integration frameworks that address the complex interoperability requirements between visual servoing systems and distributed sensor networks.
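To make the synchronization element concrete, the following sketch shows the basic delay request-response arithmetic that IEEE 1588 uses to estimate a clock's offset from the master. It assumes a symmetric network path and ignores refinements such as correction fields and hardware timestamping details. The function name and the example timestamps are illustrative.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Estimate clock offset and one-way delay from four PTP timestamps.

    t1: master sends Sync           (master clock)
    t2: slave receives Sync         (slave clock)
    t3: slave sends Delay_Req       (slave clock)
    t4: master receives Delay_Req   (master clock)
    Assumes the path delay is the same in both directions.
    """
    offset = ((t2 - t1) - (t4 - t3)) / 2.0  # slave clock minus master clock
    delay = ((t2 - t1) + (t4 - t3)) / 2.0   # one-way path delay
    return offset, delay


if __name__ == "__main__":
    # Timestamps in nanoseconds; the slave runs 5000 ns ahead, path delay is 2000 ns.
    print(ptp_offset_and_delay(100_000, 107_000, 120_000, 117_000))
```

A camera or sensor node would subtract the estimated offset from its local timestamps so that image frames and network readings can be aligned on a common time base. Any asymmetry in the path delay appears directly as an offset error.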

The development of unified standardization frameworks must address several key technical dimensions. Data format standardization requires establishing common protocols for image data exchange, sensor metadata transmission, and real-time performance metrics. Communication layer standardization involves defining consistent APIs for sensor discovery, configuration management, and distributed processing coordination. Quality assurance standards must encompass calibration procedures, performance benchmarking methodologies, and system validation protocols.
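As an illustration of what data format standardization could look like at the message level, the sketch below defines a small versioned record that both a camera pipeline and a sensor node could emit. Every field name here is a hypothetical choice made for the example and does not come from any published standard. JSON is used only because it is easy to inspect.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SensorReading:
    sensor_id: str
    modality: str       # e.g. "camera" or "temperature"
    timestamp_ns: int   # acquisition time on a shared clock
    value: list         # feature vector, or a scalar wrapped in a list
    unit: str
    schema_version: int = 1


def encode(reading: SensorReading) -> str:
    """Serialize a reading to a JSON string with stable key order."""
    return json.dumps(asdict(reading), sort_keys=True)


def decode(payload: str) -> SensorReading:
    """Parse a JSON payload, rejecting unknown schema versions."""
    data = json.loads(payload)
    if data.get("schema_version") != 1:
        raise ValueError("unsupported schema version")
    return SensorReading(**data)


if __name__ == "__main__":
    reading = SensorReading("cam-01", "camera", 1_700_000_000_000, [0.12, -0.03], "normalized")
    print(encode(reading))
```

Carrying an explicit schema version and unit in every message is one way to keep older installations readable as formats evolve. A production system would more likely use a compact binary encoding.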

Emerging standardization efforts are increasingly focusing on modular architecture approaches that enable flexible integration of heterogeneous vision sensors within larger networked systems. The Industrial Internet Consortium and IEEE Standards Association are developing frameworks that support both centralized visual servoing applications and distributed smart sensor network deployments through common middleware layers.

Future standardization frameworks must incorporate adaptive protocols that can accommodate evolving sensor technologies, machine learning integration requirements, and edge computing architectures. These frameworks should enable seamless interoperability between traditional visual servoing systems and next-generation smart sensor networks while maintaining backward compatibility with existing industrial installations.

The successful implementation of comprehensive standardization frameworks will significantly accelerate technology adoption, reduce integration costs, and enable more sophisticated hybrid systems that leverage the complementary strengths of both visual servoing precision and smart sensor network scalability across diverse application domains.

Performance Benchmarking Methodologies for Comparative Analysis

Establishing robust performance benchmarking methodologies is critical for conducting meaningful comparative analysis between visual servoing systems and smart sensor networks. The fundamental challenge lies in developing standardized metrics that can effectively evaluate both technologies across their diverse operational contexts while maintaining objectivity and reproducibility.

The primary benchmarking framework should encompass accuracy metrics, including positioning precision, tracking error rates, and target acquisition success rates. For visual servoing systems, pixel-level accuracy measurements and end-effector positioning errors provide quantitative baselines. Smart sensor networks require evaluation through data fusion accuracy, localization precision, and distributed sensing coherence metrics. Temporal performance indicators such as response time, processing latency, and real-time capability assessments form another crucial dimension.
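The accuracy and temporal metrics named above can be computed with a few lines of code. The sketch below is a minimal, assumption-based example: errors are signed scalar deviations in a single unit, success means the absolute error is within a tolerance, and latency is summarized with a nearest-rank percentile. The function names and sample values are hypothetical.

```python
import math


def rms_error(errors):
    """Root-mean-square of signed positioning or tracking errors."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def success_rate(errors, tolerance):
    """Fraction of trials whose absolute error is within the tolerance."""
    return sum(1 for e in errors if abs(e) <= tolerance) / len(errors)


def percentile(values, q):
    """Nearest-rank percentile, with q in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q / 100.0 * len(ordered)))
    return ordered[rank - 1]


if __name__ == "__main__":
    errors_mm = [0.3, -0.4, 0.0, 1.2]
    latencies_ms = [12, 15, 11, 40, 13]
    print(rms_error(errors_mm))
    print(success_rate(errors_mm, tolerance=0.5))
    print(percentile(latencies_ms, 95))
```

Reporting a high percentile of latency alongside the mean matters for real-time assessment, because a control loop is usually limited by its worst cycles and not by its typical ones.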

Standardized testing environments must be established to ensure fair comparison. This involves creating controlled scenarios with varying complexity levels, from simple static target tracking to dynamic multi-object environments with occlusions and interference. Environmental factors including lighting conditions, electromagnetic interference, and physical obstacles should be systematically varied to assess robustness across both technologies.

Scalability benchmarking requires progressive testing methodologies that evaluate performance degradation as system complexity increases. For visual servoing, this involves increasing the number of cameras, targets, and degrees of freedom. Smart sensor networks demand assessment of performance scaling with node density, network size, and data volume growth.

Resource utilization metrics provide essential comparative insights, encompassing computational overhead, power consumption, bandwidth requirements, and hardware costs. These measurements should be normalized against performance outcomes to establish efficiency ratios that enable direct technology comparison.

Statistical validation protocols must incorporate multiple trial runs, confidence interval calculations, and significance testing to ensure benchmark reliability. Cross-validation techniques and independent verification processes enhance the credibility of comparative results, supporting informed technology selection decisions.
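A minimal sketch of such a validation step, using only the Python standard library, is shown below. It computes a normal-approximation confidence interval for the mean of repeated trials and a large-sample two-sample z-test. Both are assumptions chosen for brevity: with only a handful of trials, a Student's t interval and Welch's t-test would be more appropriate. The function names and sample data are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev, variance


def mean_confidence_interval(samples, confidence=0.95):
    """Normal-approximation confidence interval for the mean of repeated trials."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two trials")
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    half_width = z * stdev(samples) / math.sqrt(n)
    m = mean(samples)
    return m - half_width, m + half_width


def two_sample_z_test(a, b):
    """Large-sample test of equal means; returns (z, two-sided p-value)."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    z = (mean(a) - mean(b)) / se
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return z, p


if __name__ == "__main__":
    servo_latency_ms = [10.1, 9.9, 10.0, 10.2, 9.8]
    network_latency_ms = [12.0, 12.1, 11.9, 12.2, 11.8]
    print(mean_confidence_interval(servo_latency_ms))
    print(two_sample_z_test(servo_latency_ms, network_latency_ms))
```

Reporting the interval together with the test result lets readers judge both the size of a difference and how reliably it was measured.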
