Comparing Quantum Mechanical Models: Efficiency Metrics

SEP 4, 2025 · 9 MIN READ

Quantum Mechanical Models Background and Objectives

Quantum mechanical models have evolved significantly since the early 20th century, beginning with the foundational work of Planck, Einstein, Bohr, and Schrödinger. These models were developed to explain phenomena that classical physics could not account for, such as blackbody radiation, the photoelectric effect, and atomic structure. The progression from the early quantum theory to modern computational quantum mechanics represents one of the most profound scientific revolutions in history.

The field has witnessed several key evolutionary trends, including the transition from analytical to numerical approaches, the development of increasingly sophisticated approximation methods, and the integration of quantum mechanics with other disciplines such as computer science and information theory. This evolution has been driven by both theoretical breakthroughs and technological advancements in computational capabilities.

Currently, multiple quantum mechanical models coexist, each with specific strengths and limitations. These include Density Functional Theory (DFT), Hartree-Fock methods, Quantum Monte Carlo simulations, Coupled Cluster approaches, and Configuration Interaction techniques. The diversity of these models reflects the complexity of quantum systems and the various trade-offs between computational efficiency and accuracy that must be considered.

The primary objective of comparing efficiency metrics across quantum mechanical models is to establish standardized benchmarks that enable researchers and industry practitioners to make informed decisions about which model best suits their specific applications. This comparison aims to quantify the computational resources required, the accuracy of results obtained, and the scalability of different approaches across various problem domains.

Additionally, this technical research seeks to identify optimization opportunities within existing models and potential convergence points where different approaches might be combined to leverage their respective strengths. Understanding the efficiency landscape is crucial for advancing quantum simulation capabilities in fields ranging from materials science and drug discovery to quantum computing and cryptography.

The long-term goal is to develop a comprehensive framework for evaluating quantum mechanical models that accounts for both theoretical rigor and practical implementation considerations. This framework would facilitate more rapid progress in quantum technologies by allowing researchers to focus their efforts on the most promising approaches for specific applications, ultimately accelerating innovation across multiple industries that stand to benefit from quantum mechanical insights.

Market Applications and Demand Analysis

The quantum computing market is growing rapidly: the global market was projected to reach $1.3 billion by 2023 and is expected to grow at a CAGR of 56.0% through 2030. This expansion is driven by increasing demand for efficient computational models across industries seeking solutions to complex problems beyond classical computing capabilities.

Efficiency metrics for quantum mechanical models have become a critical focus area as organizations evaluate which quantum approaches best suit their specific applications. Financial institutions represent one of the largest market segments, with major banks investing heavily in quantum technologies for portfolio optimization, risk assessment, and fraud detection algorithms that require comparing different quantum mechanical approaches for optimal performance.

Pharmaceutical and biotechnology companies constitute another significant market segment, utilizing quantum mechanical models for drug discovery and molecular simulation. These companies require robust efficiency metrics to determine which quantum approaches deliver the most accurate results within reasonable timeframes, directly impacting R&D costs and time-to-market for new therapies.

The logistics and transportation sector demonstrates growing demand for quantum optimization solutions, with companies seeking to evaluate different quantum mechanical models for route optimization, fleet management, and supply chain efficiency. The ability to compare efficiency metrics between quantum approaches translates directly to operational cost savings in this highly competitive industry.

Government and defense sectors represent substantial market demand, particularly for quantum encryption and security applications. These organizations require sophisticated efficiency metrics to evaluate quantum mechanical models for cryptographic applications, communications security, and threat detection systems.

Academic and research institutions form a foundational market segment, continuously advancing the theoretical frameworks for comparing quantum mechanical models. Their work establishes the standardized efficiency metrics that commercial applications subsequently adopt, creating a symbiotic relationship between theoretical advancement and practical implementation.

Cloud service providers have emerged as critical market enablers, offering quantum computing as a service (QCaaS) platforms that allow organizations to test and compare different quantum mechanical models without significant infrastructure investment. This accessibility has democratized access to quantum computing resources and accelerated adoption across industries previously limited by cost barriers.

The market demonstrates regional variations, with North America currently leading in quantum computing investments, followed by Europe and Asia-Pacific regions showing accelerated growth rates. China's national initiatives in quantum technologies are creating substantial market demand for efficiency metrics to evaluate progress against global competitors.

Current State and Challenges in Quantum Modeling

Quantum mechanical modeling has evolved significantly over the past decades, with various approaches developed to simulate quantum systems. Currently, the field encompasses several established methodologies including Density Functional Theory (DFT), Quantum Monte Carlo (QMC), Coupled Cluster methods, and emerging quantum computing algorithms. Each model offers distinct advantages and limitations in terms of accuracy, computational efficiency, and applicability to different physical systems.

The global landscape of quantum modeling research shows concentration in North America, Europe, and parts of Asia, particularly Japan and China. Research institutions like MIT, Caltech, Max Planck Institute, and companies such as IBM, Google, and Microsoft are at the forefront of advancing these technologies. This geographical distribution reflects both historical scientific leadership and recent strategic investments in quantum technologies.

Despite significant progress, quantum mechanical modeling faces several critical challenges. Computational complexity remains a fundamental obstacle, with accurate simulations of many-body quantum systems requiring exponentially increasing resources as system size grows. This "exponential wall" limits practical applications to relatively small molecular systems or requires significant approximations for larger systems.
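
To make the "exponential wall" concrete, the following minimal sketch counts the memory needed to store a full state vector. It assumes a system of n two-level degrees of freedom (spins or qubits) and 16 bytes per complex amplitude; the function name `statevector_bytes` is hypothetical and chosen only for illustration.

```python
def statevector_bytes(n_two_level: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold all 2**n complex amplitudes of an n-qubit-like system.

    Assumption: one double-precision complex number (16 bytes) per amplitude.
    """
    return (2 ** n_two_level) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 20, 30, 40, 50):
        gib = statevector_bytes(n) / 2 ** 30
        print(f"n = {n:2d}: {gib:.6g} GiB")
```

Each additional two-level degree of freedom doubles the storage, which is why exact treatments are confined to small systems unless approximations are introduced.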

Accuracy-efficiency tradeoffs present another major challenge. More accurate methods like Coupled Cluster with Single, Double, and perturbative Triple excitations (CCSD(T)) demand substantially greater computational resources than approximate methods like DFT, forcing researchers to balance precision against feasibility. Additionally, the treatment of strongly correlated systems remains problematic for many standard approaches.

Benchmarking and standardization issues complicate the comparison of different quantum mechanical models. The field lacks universally accepted efficiency metrics that simultaneously account for accuracy, computational cost, and scalability. This hampers objective evaluation of emerging methods against established approaches.
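
In the absence of a single accepted metric, one common way to compare methods on accuracy and cost together is to look for the Pareto front: the set of methods that no other method beats on both axes. The sketch below is illustrative only. The `Result` type, the method names, and every number in `demo` are placeholders, not measurements.

```python
from typing import NamedTuple


class Result(NamedTuple):
    method: str
    error: float  # e.g. mean absolute error against a reference, any consistent unit
    cost: float   # e.g. core-hours, any consistent unit


def pareto_front(results: list[Result]) -> list[Result]:
    """Return the results that are not dominated by any other result.

    A result is dominated if another one is no worse on both error and cost
    and strictly better on at least one of them.
    """
    front = []
    for r in results:
        dominated = any(
            o.error <= r.error and o.cost <= r.cost
            and (o.error < r.error or o.cost < r.cost)
            for o in results
        )
        if not dominated:
            front.append(r)
    return sorted(front, key=lambda r: r.cost)


# Placeholder values for illustration only; not benchmark data.
demo = [
    Result("method_A", error=5.0, cost=1.0),
    Result("method_B", error=2.0, cost=10.0),
    Result("method_C", error=3.0, cost=50.0),  # worse than method_B on both axes
    Result("method_D", error=0.5, cost=500.0),
]

if __name__ == "__main__":
    for r in pareto_front(demo):
        print(r)
```

A Pareto front does not rank the surviving methods against each other; it only removes options that are strictly worse, leaving the accuracy-versus-cost choice to the application.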

Technical implementation challenges include code optimization, parallelization strategies, and hardware-specific adaptations. As quantum computing hardware advances, bridging the gap between theoretical algorithms and practical implementation on noisy intermediate-scale quantum (NISQ) devices presents additional complications.

Data management represents an emerging challenge, with modern quantum simulations generating massive datasets that require sophisticated storage, analysis, and visualization techniques. The integration of machine learning approaches with quantum modeling is showing promise but introduces new methodological questions about validation and interpretability.

Addressing these challenges requires interdisciplinary collaboration between quantum physicists, computer scientists, materials scientists, and data specialists. Recent funding initiatives from national science foundations and technology companies have accelerated research, but significant theoretical and technical hurdles remain before quantum mechanical modeling can reach its full potential across scientific and industrial applications.

Existing Efficiency Metrics and Benchmarking Methods

01 Computational efficiency metrics for quantum mechanical models

Various metrics are used to evaluate the computational efficiency of quantum mechanical models, including execution time, resource utilization, and algorithmic complexity. These metrics help in assessing the performance of quantum simulations across different hardware platforms. Optimization techniques focus on reducing computational overhead while maintaining accuracy in quantum mechanical calculations, particularly for complex molecular systems and materials science applications.

- Quantum computing efficiency metrics and benchmarking: Various metrics and benchmarking approaches are used to evaluate the efficiency of quantum mechanical models in computing applications. These metrics help assess the performance of quantum algorithms, quantum processors, and quantum simulations. Benchmarking frameworks compare different quantum computing platforms and algorithms based on factors such as execution time, resource utilization, and accuracy of results. These metrics are essential for determining the practical viability of quantum computing solutions for specific applications.

- Quantum simulation optimization techniques: Optimization techniques for quantum simulations focus on improving computational efficiency while maintaining accuracy. These techniques include adaptive algorithms that dynamically adjust simulation parameters, resource allocation strategies that minimize quantum circuit depth, and hybrid quantum-classical approaches that leverage the strengths of both computing paradigms. By optimizing quantum simulations, researchers can model complex quantum systems with reduced computational overhead and improved performance metrics.

- Quantum error correction and mitigation strategies: Error correction and mitigation strategies are crucial for improving the efficiency of quantum mechanical models. These approaches address quantum decoherence and gate errors that can significantly impact computational accuracy. Techniques include error-correcting codes, error detection protocols, and noise-resilient algorithm design. By implementing effective error correction and mitigation strategies, quantum systems can achieve higher fidelity results and improved performance metrics even in the presence of noise and environmental interference.

- Hardware-specific quantum model optimization: Optimization of quantum mechanical models for specific hardware architectures involves tailoring algorithms and simulations to leverage the unique capabilities of different quantum processors. This includes mapping quantum circuits to specific qubit topologies, optimizing gate sequences for particular hardware constraints, and developing hardware-aware compilation techniques. Hardware-specific optimizations can significantly improve efficiency metrics such as execution time, energy consumption, and computational accuracy by accounting for the physical limitations and strengths of the target quantum system.

- Quantum-classical hybrid approaches for efficiency enhancement: Hybrid approaches combining quantum and classical computing techniques offer practical solutions for enhancing the efficiency of quantum mechanical models. These methods distribute computational tasks between quantum and classical processors based on their respective strengths. Variational quantum algorithms, quantum machine learning with classical pre-processing, and quantum-inspired classical algorithms are examples of hybrid approaches. By strategically integrating quantum and classical resources, these hybrid methods can achieve improved performance metrics while working within the constraints of current quantum hardware capabilities.

02 Quantum computing performance benchmarks

Standardized benchmarks have been developed to measure the performance of quantum computing systems implementing quantum mechanical models. These benchmarks evaluate metrics such as quantum volume, circuit depth capabilities, error rates, and coherence times. The efficiency metrics allow for comparative analysis between different quantum computing architectures and help identify bottlenecks in quantum information processing systems.

03 Quantum-classical hybrid model efficiency

Hybrid approaches combining quantum and classical computing elements have specific efficiency metrics to evaluate their performance. These metrics measure the effectiveness of workload distribution between quantum and classical processors, communication overhead, and overall speedup compared to purely classical or quantum approaches. The efficiency of these hybrid models is particularly important for near-term quantum applications where quantum resources are limited.

04 Quantum simulation accuracy-efficiency tradeoffs

Metrics have been developed to quantify the tradeoff between computational efficiency and simulation accuracy in quantum mechanical models. These metrics help researchers select appropriate approximation methods based on specific application requirements. The balance between precision and computational cost is particularly important in areas such as drug discovery, materials design, and quantum chemistry, where both accuracy and timely results are critical.

05 Hardware-specific quantum efficiency optimization

Efficiency metrics tailored to specific quantum hardware architectures help optimize quantum mechanical model performance. These metrics account for the unique characteristics of different quantum computing platforms, including superconducting qubits, trapped ions, and photonic systems. Hardware-aware optimization techniques focus on mapping quantum algorithms to specific hardware topologies, minimizing gate errors, and maximizing qubit utilization to improve overall computational efficiency.

Key Players in Quantum Computing Research

The landscape of efficiency metrics for quantum mechanical models is currently in an early growth phase, characterized by increasing research interest but limited commercial applications. The market is expanding rapidly and is estimated to reach several billion dollars by 2030 as quantum computing transitions from research to practical implementation. Technical maturity varies significantly across players, with established technology companies like IBM, Honeywell (through Quantinuum), and Oracle leading commercial development. Academic institutions (Chongqing University, Southeast University) are advancing theoretical frameworks, while specialized companies such as Zapata Computing and BenevolentAI are developing innovative applications. Research institutes such as Fraunhofer-Gesellschaft and Global Energy Interconnection Research Institute are bridging the gap between theoretical advancements and practical implementations, creating a competitive ecosystem where collaboration and specialization are equally important for advancement.

International Business Machines Corp.

Technical Solution: IBM has pioneered quantum efficiency metrics through its Qiskit framework (originally the Quantum Information Software Kit), which provides comprehensive tools for evaluating quantum mechanical models. Their approach centers on the Quantum Volume metric, a holistic measure they developed that accounts for both the number of qubits and their error rates to quantify a quantum computer's capability[2]. IBM's efficiency framework incorporates circuit layer operations per second (CLOPS), which measures the execution speed of quantum circuits, providing insights into the practical performance of quantum mechanical models. Their superconducting qubit technology has achieved coherence times exceeding 100 microseconds[3], enabling more complex quantum simulations before decoherence affects results. IBM has also developed specialized quantum error mitigation techniques like Zero Noise Extrapolation and Probabilistic Error Cancellation that improve the accuracy of quantum mechanical calculations without requiring full quantum error correction. Their quantum hardware roadmap includes processors with increasingly sophisticated architectures designed to reduce crosstalk and improve gate fidelities, directly enhancing the efficiency of quantum mechanical model implementations.
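
As a rough illustration of how a Quantum Volume figure is derived, the sketch below applies the commonly described pass criterion: random "square" circuits (width equal to depth) of width n pass when their heavy-output probability exceeds 2/3, and the reported value is 2 to the power of the largest passing width. This is a simplification that ignores the statistical confidence bound used in real benchmarking. The function name and all probabilities in `demo_hop` are hypothetical placeholders, not IBM data or Qiskit API calls.

```python
HEAVY_OUTPUT_THRESHOLD = 2 / 3


def quantum_volume(heavy_output_prob_by_width: dict[int, float]) -> int:
    """Return 2**n for the largest circuit width n that passes the heavy-output test.

    Simplification: a width passes when its measured heavy-output probability
    exceeds 2/3; confidence intervals are deliberately left out of this sketch.
    """
    passing = [
        width
        for width, hop in heavy_output_prob_by_width.items()
        if hop > HEAVY_OUTPUT_THRESHOLD
    ]
    return 2 ** max(passing) if passing else 1


# Placeholder heavy-output probabilities, for illustration only.
demo_hop = {2: 0.80, 3: 0.76, 4: 0.71, 5: 0.69, 6: 0.64}

if __name__ == "__main__":
    print(quantum_volume(demo_hop))
```

Because both width and depth must succeed together, the metric penalizes devices that have many qubits but high error rates, which is the "holistic" property described above.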

Strengths: Extensive quantum software ecosystem with Qiskit that provides comprehensive benchmarking tools; industry-standard Quantum Volume metric for comparing systems; large-scale quantum processors with increasing qubit counts. Weaknesses: Relatively short coherence times compared to trapped-ion systems; higher error rates in multi-qubit operations; requires extremely low operating temperatures that increase system complexity and operational costs.

Fraunhofer-Gesellschaft eV

Technical Solution: Fraunhofer-Gesellschaft has developed a comprehensive framework for evaluating quantum mechanical model efficiency through their Quantum Computing Initiative. Their approach focuses on application-specific benchmarking that assesses quantum algorithms based on their performance for practical use cases rather than abstract metrics alone. Fraunhofer's efficiency framework incorporates resource estimation tools that predict the quantum computing resources required for specific quantum mechanical simulations, allowing researchers to determine when quantum advantage might be achieved for particular problems[7]. They have pioneered hybrid quantum-classical algorithms that optimize the distribution of computational tasks between quantum and classical processors to maximize overall efficiency for quantum mechanical models. Their benchmarking methodology includes standardized test suites for comparing different quantum hardware platforms and software frameworks across multiple efficiency dimensions, including circuit depth, gate count, and runtime. Fraunhofer has also developed specialized quantum circuit optimization techniques that reduce the resources required for implementing quantum mechanical models while preserving simulation accuracy. Their research includes quantum error mitigation strategies tailored to specific application domains, improving the practical efficiency of quantum mechanical simulations on near-term quantum devices.

Strengths: Application-focused benchmarking methodology that provides practical efficiency assessments; comprehensive resource estimation tools; expertise in hybrid quantum-classical approaches that maximize current capabilities. Weaknesses: Limited proprietary quantum hardware development compared to commercial entities; dependence on partnerships for hardware access; primarily research-focused approach that may lag behind commercial implementation timelines.

Core Quantum Model Comparison Methodologies

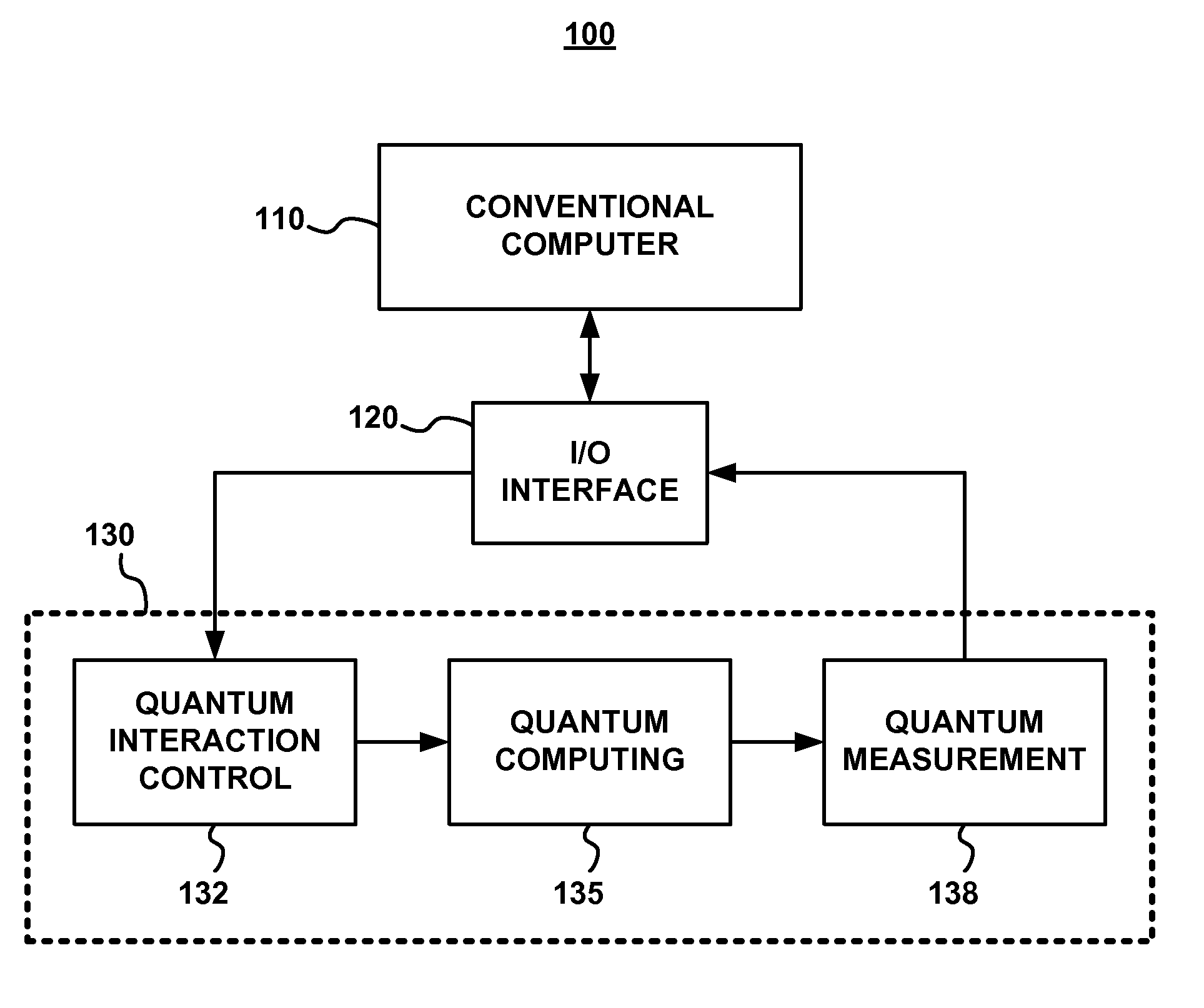

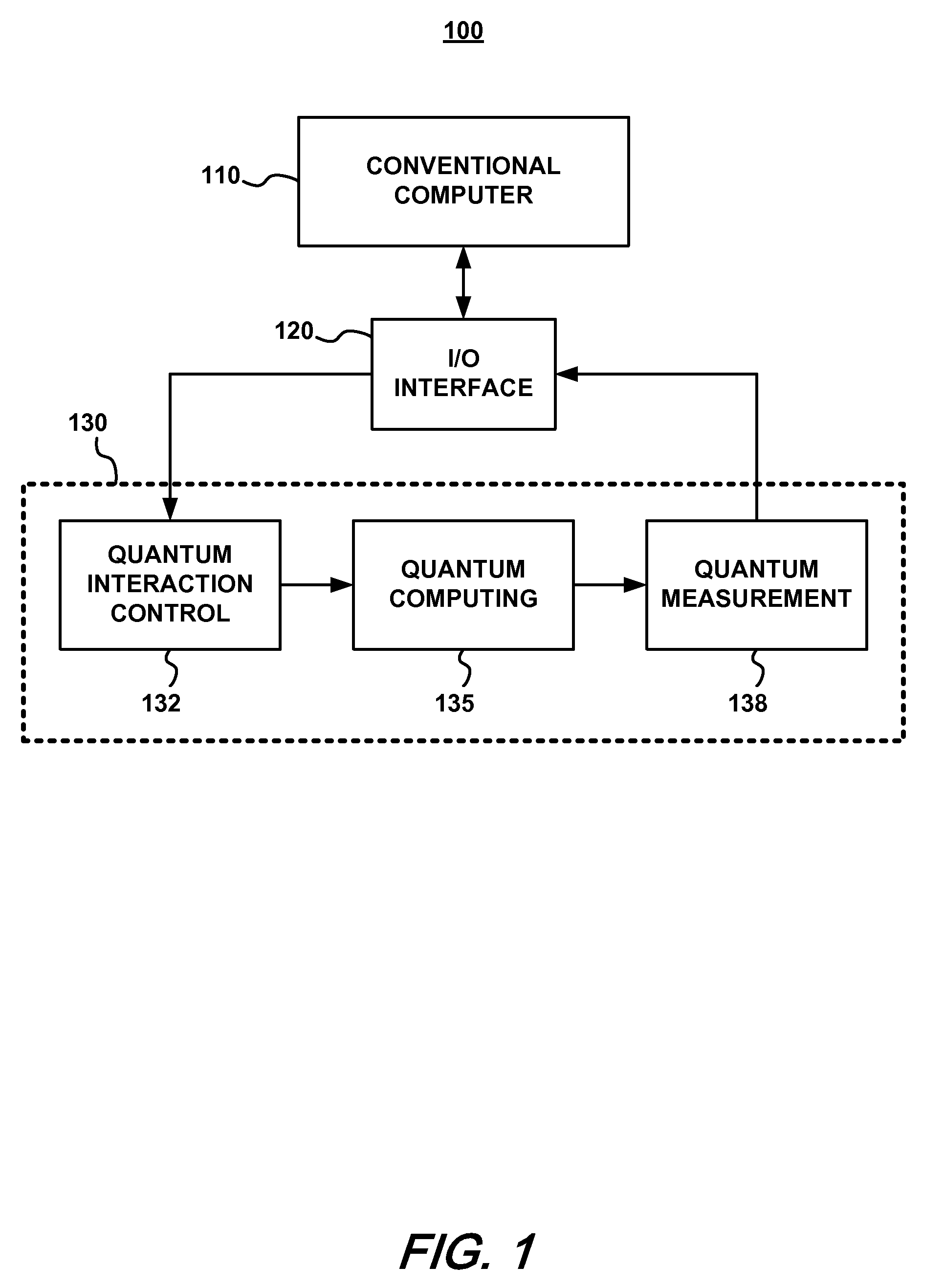

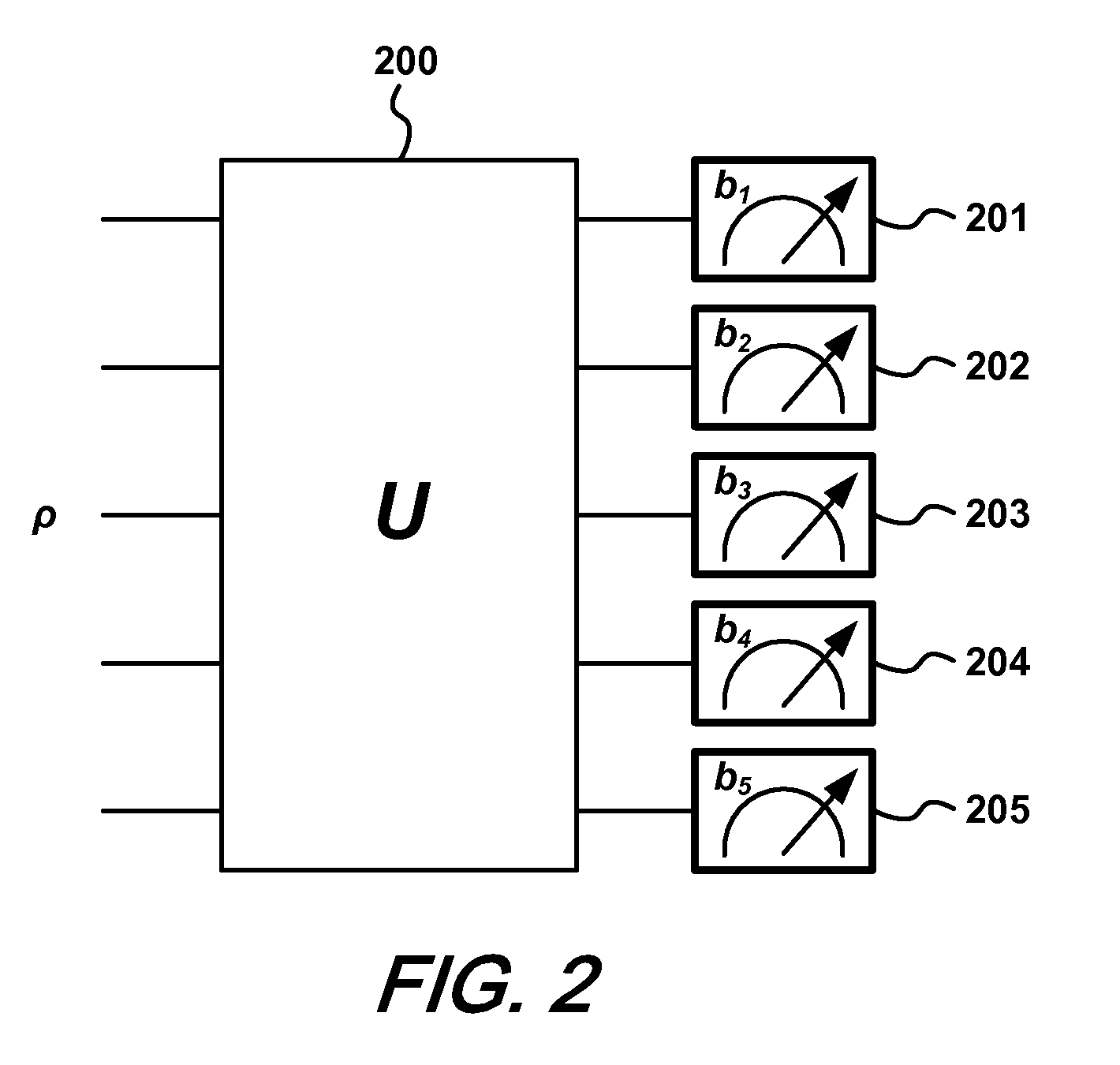

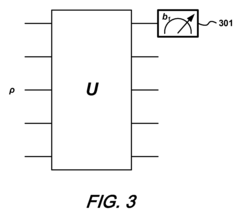

Estimating a quantum state of a quantum mechanical system

Patent · Inactive · US8315969B2

Innovation

- A method using yes/no measurements of a single qubit to estimate the quantum state by applying a series of unitary operations and reconstructing the state with the expression $\rho = \frac{2}{d}\sum_{i=1}^{d^2-1}\left[p_i P_i + (1-p_i)(1-P_i)\right] - \frac{d^2-2}{d}\, I_d$, where $d$ is the system dimension, $P_i$ are the measurement projectors, and $p_i$ are their probabilities, allowing for efficient characterization of the quantum state with minimal measurements.
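
The reconstruction expression, read as $\rho = \frac{2}{d}\sum_{i=1}^{d^2-1}\left[p_i P_i + (1-p_i)(1-P_i)\right] - \frac{d^2-2}{d} I_d$, can be checked numerically in the simplest case. The sketch below assumes a single qubit (d = 2) and takes the projectors to be $P_i = (I + \sigma_i)/2$ for the three Pauli matrices; that choice of projectors, the helper names, and the test state are assumptions made for illustration and are not taken from the patent text.

```python
def identity(d):
    return [[1 if r == c else 0 for c in range(d)] for r in range(d)]


def add(a, b):
    return [[a[r][c] + b[r][c] for c in range(len(a))] for r in range(len(a))]


def scale(s, a):
    return [[s * a[r][c] for c in range(len(a))] for r in range(len(a))]


def trace_product(a, b):
    """tr(a @ b) for square matrices stored as nested lists."""
    n = len(a)
    return sum(a[r][k] * b[k][r] for r in range(n) for k in range(n))


PAULIS = [
    [[0, 1], [1, 0]],      # sigma_x
    [[0, -1j], [1j, 0]],   # sigma_y
    [[1, 0], [0, -1]],     # sigma_z
]
# Assumed yes/no projectors: P_i = (I + sigma_i) / 2
PROJECTORS = [scale(0.5, add(identity(2), s)) for s in PAULIS]


def reconstruct(probabilities, projectors, d):
    """Apply rho = (2/d) * sum_i [p_i P_i + (1 - p_i)(I - P_i)] - ((d^2 - 2)/d) * I."""
    eye = identity(d)
    total = scale(0, eye)
    for p, proj in zip(probabilities, projectors):
        complement = add(eye, scale(-1, proj))
        total = add(total, add(scale(p, proj), scale(1 - p, complement)))
    return add(scale(2 / d, total), scale(-(d * d - 2) / d, eye))


# An arbitrary valid single-qubit density matrix used as the "unknown" state.
rho_true = [[0.75, 0.25 - 0.1j], [0.25 + 0.1j, 0.25]]
probs = [trace_product(rho_true, proj).real for proj in PROJECTORS]
rho_est = reconstruct(probs, PROJECTORS, d=2)

if __name__ == "__main__":
    print(rho_est)
```

With exact probabilities the estimate reproduces the input state; with finite measurement statistics the same formula would return an estimate whose quality depends on how well each $p_i$ is sampled.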

Frequency Domain Work Analysis of Machinery including Turbomachinery

Patent · Pending · US20240329619A1

Innovation

- The analysis of experimentally measured motor power versus speed data using a mathematical model that decomposes input power into output work and heat components, allowing for the characterization of efficiency and identification of frictional losses through frequency-domain analysis of motor current data.

Computational Resource Requirements Analysis

When evaluating quantum mechanical models, computational resource requirements represent a critical efficiency metric that directly impacts practical implementation feasibility. Current quantum mechanical simulations demand substantial computational power, with resource scaling varying dramatically across different models. For instance, full configuration interaction (FCI) methods scale exponentially with system size, making them prohibitive for systems beyond a few atoms or molecules. In contrast, density functional theory (DFT) implementations typically scale as O(N³) where N represents the number of basis functions, offering significantly improved efficiency for larger systems.
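
The difference between polynomial and exponential scaling can be sketched with a few lines of arithmetic. The O(N³) exponent for DFT comes from the text above; the exponents listed for Hartree-Fock, CCSD, and CCSD(T) are the usual textbook formal scalings and are added here as assumptions, since real implementations often do better. The FCI size is counted as the number of Slater determinants for a given number of orbitals and electrons.

```python
from math import comb

# Formal scaling exponents in the number of basis functions N.
# DFT is from the text; the others are textbook values assumed for illustration.
FORMAL_EXPONENT = {"DFT": 3, "HF": 4, "CCSD": 6, "CCSD(T)": 7}


def cost_growth(method: str, size_factor: float) -> float:
    """Factor by which formal cost grows when N is multiplied by size_factor."""
    return size_factor ** FORMAL_EXPONENT[method]


def fci_determinants(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of determinants in a full CI expansion (no symmetry reduction)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


if __name__ == "__main__":
    for method in FORMAL_EXPONENT:
        print(f"{method:8s}: doubling N multiplies formal cost by {cost_growth(method, 2):.0f}")
    for n_orb in (10, 20, 30):
        half = n_orb // 2
        print(f"FCI, {n_orb} orbitals at half filling: {fci_determinants(n_orb, half, half):,} determinants")
```

Doubling the system size costs a fixed factor for each polynomial method, whereas the FCI determinant count grows combinatorially, which is why it becomes prohibitive so quickly.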

Memory requirements constitute another crucial consideration in quantum mechanical calculations. Wave function-based methods like coupled-cluster approaches require storage of high-dimensional tensors, with CCSD(T) calculations necessitating terabytes of memory for moderately-sized molecular systems. Quantum Monte Carlo methods present different resource challenges, trading memory efficiency for increased computational time through statistical sampling approaches.
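
A back-of-the-envelope estimate shows where such memory figures come from. The sketch below sizes two dense four-index tensors in double precision: the doubles amplitudes (occupied² × virtual²) and the full two-electron integral array (N⁴). It deliberately ignores permutational symmetry, density fitting, and out-of-core storage, all of which reduce real requirements, and the function names and example sizes are hypothetical.

```python
def dense_tensor_bytes(dim_sizes, bytes_per_element: int = 8) -> int:
    """Bytes for a dense tensor with the given dimensions (float64 by default)."""
    total = bytes_per_element
    for size in dim_sizes:
        total *= size
    return total


def t2_amplitude_bytes(n_occupied: int, n_virtual: int) -> int:
    """Doubles amplitudes t_ij^ab stored without exploiting symmetry."""
    return dense_tensor_bytes((n_occupied, n_occupied, n_virtual, n_virtual))


def two_electron_integral_bytes(n_basis: int) -> int:
    """Full (pq|rs) array over n_basis functions, no symmetry or factorization."""
    return dense_tensor_bytes((n_basis,) * 4)


if __name__ == "__main__":
    print(f"T2, 50 occ / 950 virt: {t2_amplitude_bytes(50, 950) / 1e9:.2f} GB")
    print(f"Integrals, N = 1000:   {two_electron_integral_bytes(1000) / 1e12:.1f} TB")
```

The fourth-power growth means that doubling the basis-set size multiplies the naive integral storage by sixteen, so memory can become the limiting resource before runtime does.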

Hardware acceleration capabilities significantly influence resource utilization profiles. GPU acceleration has demonstrated 10-100x performance improvements for certain quantum chemistry algorithms, particularly those involving dense linear algebra operations common in DFT implementations. Emerging quantum computing platforms offer theoretical advantages for specific quantum simulations but currently face severe limitations in qubit count and coherence times, restricting practical applications.

Parallelization efficiency varies substantially between quantum mechanical models. Methods like DFT exhibit excellent strong scaling on high-performance computing clusters, maintaining efficiency up to thousands of processor cores for large systems. Conversely, methods with complex interdependencies between computational steps, such as certain post-Hartree-Fock approaches, demonstrate diminishing returns beyond several hundred cores due to communication overhead.
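
Diminishing returns of this kind are often modelled with Amdahl's law, used here as a simplified stand-in: the non-parallel fraction of the work absorbs serial steps and, loosely, communication overhead. The parallel fractions in the example are hypothetical and do not describe any specific method or code.

```python
def amdahl_speedup(parallel_fraction: float, n_cores: int) -> float:
    """Ideal strong-scaling speedup when only part of the work parallelizes."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_cores)


def parallel_efficiency(parallel_fraction: float, n_cores: int) -> float:
    """Speedup divided by core count; 1.0 means perfect scaling."""
    return amdahl_speedup(parallel_fraction, n_cores) / n_cores


if __name__ == "__main__":
    # Hypothetical parallel fractions, for illustration only.
    for fraction in (0.999, 0.99, 0.95):
        for cores in (100, 1000):
            eff = parallel_efficiency(fraction, cores)
            print(f"parallel fraction {fraction}: {cores:5d} cores -> efficiency {eff:.3f}")
```

Even a 1% non-parallel share caps the speedup at 100x regardless of core count, which is consistent with some methods ceasing to benefit beyond a few hundred cores.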

Time-to-solution metrics reveal significant variations across methodologies. Semi-empirical methods can produce results for large biomolecular systems within minutes on standard workstations, while accurate coupled-cluster calculations for the same systems might require weeks on supercomputing resources. This time-accuracy tradeoff represents a fundamental consideration when selecting appropriate quantum mechanical models for specific research or industrial applications.

Energy efficiency has emerged as an increasingly important metric, particularly for large-scale deployments. Recent benchmarks indicate that quantum chemistry calculations can consume between 500 and 5,000 kWh for complex molecular systems, highlighting the environmental and economic impacts of computational approach selection. Optimized algorithms and specialized hardware architectures have demonstrated potential to reduce energy requirements by 30-70% for equivalent calculations.

Standardization Efforts in Quantum Model Evaluation

The quantum computing field has recognized the critical need for standardized evaluation frameworks to compare different quantum mechanical models effectively. Several international organizations, including IEEE Quantum, the International Organization for Standardization (ISO), and the National Institute of Standards and Technology (NIST), have initiated collaborative efforts to establish common benchmarking protocols for quantum model evaluation.

IEEE Quantum's Working Group on Quantum Computing Performance Metrics has developed preliminary standards focusing on gate fidelity, coherence time measurements, and algorithmic performance benchmarks. These standards provide a foundation for comparing quantum models across different hardware implementations and theoretical frameworks.

ISO/IEC JTC 1/SC 42 is the subcommittee for artificial intelligence; quantum technologies are handled within ISO/IEC by separate bodies, notably the joint technical committee ISO/IEC JTC 3. Draft standards for quantum algorithm efficiency metrics in this space propose standardized methods for evaluating computational complexity, resource requirements, and error tolerance across various quantum mechanical models.

The Quantum Economic Development Consortium (QED-C) has established an industry-led initiative to develop practical benchmarks that address both theoretical performance and real-world implementation challenges. Their approach emphasizes metrics relevant to commercial applications, including scalability potential and integration capabilities with classical systems.

Academic institutions have contributed significantly through the Quantum Benchmarking Alliance, which focuses on creating open-source benchmarking suites that enable transparent comparison of quantum models. Their standardized test cases cover diverse application domains from quantum chemistry to optimization problems.

Recent progress includes the development of the Quantum Volume metric, which has gained traction as a holistic measure of quantum system capability. Additionally, the Quantum Circuit Robustness (QCR) standard provides a framework for evaluating model performance under noise and environmental interference.
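
For context, Quantum Volume is usually reported as 2^n, where n is the largest width of a "square" random circuit (depth equal to width) that a device runs with a heavy-output probability above 2/3. The sketch below applies only that threshold rule to a table of already measured probabilities. The full protocol also requires a statistical confidence bound on each measurement, which is omitted here, and the input values and function name are hypothetical.

```python
HEAVY_OUTPUT_THRESHOLD = 2.0 / 3.0


def quantum_volume(heavy_output_probs):
    """Return 2**n for the largest circuit width n whose heavy-output
    probability exceeds 2/3; return 1 if no width passes.

    `heavy_output_probs` maps circuit width (int) to the measured
    heavy-output probability for square circuits of that width.
    Simplification: no confidence-interval check is applied.
    """
    passing = [
        width
        for width, prob in heavy_output_probs.items()
        if prob > HEAVY_OUTPUT_THRESHOLD
    ]
    return 2 ** max(passing) if passing else 1


if __name__ == "__main__":
    # Hypothetical measurements, not from any real device.
    measured = {2: 0.80, 3: 0.75, 4: 0.70, 5: 0.62}
    print("Quantum Volume:", quantum_volume(measured))
```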

Cross-platform compatibility remains a significant challenge in standardization efforts. The Quantum Open Source Foundation is addressing this through the development of middleware solutions that enable consistent evaluation across different quantum programming frameworks and hardware architectures.

These standardization initiatives are gradually converging toward a comprehensive evaluation framework that balances theoretical performance metrics with practical implementation considerations, enabling more meaningful comparisons between quantum mechanical models and accelerating progress in the field.
