How to Benchmark Quantum Computing Models in Research

SEP 4, 2025 · 9 MIN READ

PatSnap Eureka helps you evaluate technical feasibility & market potential.

Quantum Computing Benchmarking Background and Objectives

Quantum computing has evolved significantly since its theoretical conception in the early 1980s by Richard Feynman and others. This revolutionary computational paradigm leverages quantum mechanical phenomena such as superposition and entanglement to perform calculations that would be intractable for classical computers. As quantum technologies advance from theoretical constructs to practical implementations, the need for standardized benchmarking methodologies has become increasingly critical for meaningful progress assessment.

The quantum computing landscape has witnessed accelerated development in recent years, with several hardware architectures emerging, including superconducting qubits, trapped ions, and photonic systems, while topological qubits remain at a much earlier experimental stage. Each architecture presents unique advantages and limitations, making comparative analysis challenging without robust benchmarking frameworks. The absence of standardized performance metrics has hindered objective evaluation of quantum computing advancements across different platforms and research groups.

Current benchmarking efforts often focus on isolated performance aspects such as qubit count, coherence times, or gate fidelities. However, these metrics alone fail to capture the holistic capabilities of quantum systems in solving practical computational problems. The quantum volume metric introduced by IBM represents an early attempt at comprehensive benchmarking, but the field requires more sophisticated and application-specific evaluation frameworks.

The primary objective of quantum computing benchmarking is to establish universally accepted methodologies for assessing quantum hardware and software performance across diverse architectures and implementations. These benchmarks must be platform-agnostic while remaining sensitive to the unique characteristics of quantum systems, including error rates, connectivity constraints, and coherence limitations.

Another critical goal is developing benchmarks that meaningfully correlate with practical quantum advantage in real-world applications. This includes standardized test suites for quantum algorithms in optimization, simulation, machine learning, and cryptography that can demonstrate quantum supremacy in specific domains while providing clear performance comparisons with classical alternatives.

Furthermore, benchmarking initiatives aim to track the industry's progress toward fault-tolerant quantum computing by establishing intermediate milestones that quantify advancements in error correction, logical qubit implementation, and quantum resource utilization efficiency. These benchmarks will serve as crucial indicators for the quantum computing roadmap, helping researchers and industry stakeholders make informed decisions about technology development priorities.

Ultimately, robust quantum computing benchmarks will facilitate transparent communication about capabilities across the quantum ecosystem, accelerate technological progress through healthy competition, and provide clarity for potential users regarding when and how quantum computing can deliver practical value for specific computational challenges.

Market Analysis for Quantum Computing Benchmarking Tools

The quantum computing benchmarking tools market is experiencing significant growth as quantum technologies transition from research laboratories to commercial applications. Current market estimates value the quantum computing benchmarking sector at approximately $50 million, with projections indicating expansion to $300 million by 2028, representing a compound annual growth rate of 43%. This growth is primarily driven by increasing investments in quantum research from both public and private sectors.
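The growth figures above can be sanity-checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below is only arithmetic on the quoted numbers ($50 million rising to $300 million at 43% per year); the function names are illustrative. Sixfold growth at 43% per year takes about five years, so the quoted rate implies a base year roughly five years before 2028; over a three-year horizon the same growth would require roughly 82% per year.

```python
import math


def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


def implied_years(start_value: float, end_value: float, rate: float) -> float:
    """Years needed to grow start_value into end_value at a constant annual rate."""
    return math.log(end_value / start_value) / math.log(1 + rate)


if __name__ == "__main__":
    # Market figures quoted in the text, in millions of USD.
    print(f"years implied by 43% CAGR: {implied_years(50, 300, 0.43):.2f}")  # 5.01
    print(f"CAGR over 5 years: {cagr(50, 300, 5):.1%}")  # 43.1%
    print(f"CAGR over 3 years: {cagr(50, 300, 3):.1%}")  # 81.7%
```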

The market segmentation reveals distinct customer categories with varying needs. Academic and research institutions constitute about 45% of the current market, focusing on theoretical performance metrics and algorithm testing. Corporate R&D departments represent 30%, emphasizing practical applications and integration capabilities. Government agencies and national laboratories account for 20%, prioritizing security features and standardized comparison methodologies. The remaining 5% consists of quantum startups seeking cost-effective solutions.

Geographically, North America dominates with 40% market share, led by substantial investments from the United States government and technology giants like IBM, Google, and Microsoft. Europe follows at 35%, with significant contributions from quantum initiatives in the UK, Germany, and France. The Asia-Pacific region holds 20%, with rapid growth observed in China, Japan, and Singapore. The remaining 5% is distributed across other regions.

Key market drivers include the intensifying quantum supremacy race among major technology companies, growing demand for standardized performance metrics, and increasing venture capital investments in quantum computing startups. The market also benefits from government initiatives worldwide, with the US National Quantum Initiative, EU Quantum Flagship, and China's national quantum program collectively allocating billions for quantum technology development.

Market challenges include the lack of universally accepted benchmarking standards, technical complexity requiring specialized expertise, and high implementation costs. Additionally, the rapidly evolving nature of quantum hardware creates difficulties in establishing consistent performance metrics across different quantum computing architectures.

Customer pain points reveal opportunities for market entrants. Users consistently report difficulties in comparing results across different quantum platforms, challenges in translating benchmark results to practical applications, and the steep learning curve associated with quantum benchmarking tools. There is also significant demand for benchmarking solutions that can effectively evaluate quantum advantage for specific industry applications rather than generic computational tasks.

Current Quantum Benchmarking Challenges and Limitations

Despite significant advancements in quantum computing, the field faces substantial challenges in establishing standardized benchmarking methodologies. Current quantum benchmarking approaches suffer from a lack of universality, making it difficult to compare results across different quantum computing platforms. This fragmentation stems from the diversity of quantum hardware architectures, including superconducting qubits, trapped ions, photonic systems, and topological qubits, each with unique error profiles and operational characteristics.

Noise and decoherence represent fundamental limitations in quantum benchmarking. Quantum systems are inherently sensitive to environmental interactions, leading to rapid degradation of quantum information. This sensitivity varies significantly across hardware implementations, complicating the development of standardized performance metrics that can be meaningfully applied across platforms.

The absence of consensus on benchmark suites presents another critical challenge. Unlike classical computing, which benefits from established benchmark suites like SPEC and LINPACK, quantum computing lacks widely accepted standardized test sets. Current efforts such as Quantum Volume, Circuit Layer Operations Per Second (CLOPS), and application-specific benchmarks provide valuable but incomplete perspectives on system performance.

Scale limitations further complicate benchmarking efforts. Current quantum systems operate with tens to a few hundred physical qubits, far fewer than the large-scale systems expected to be required for practical quantum advantage, which makes it difficult to extrapolate performance. Additionally, the overhead of error correction, which will be essential for practical quantum computing, is not adequately captured in most existing benchmarks.
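To make the overhead point concrete, consider the simplest error-correcting code, a distance-d repetition code, which spends d physical qubits on one logical bit and decodes by majority vote. The sketch below assumes independent bit flips with probability p and ignores phase errors and faulty measurements, so it is far more optimistic than the surface codes used in practice; the function name is hypothetical. It only illustrates the trade-off a benchmark would need to capture: for small p the logical error falls as d grows, while the qubit count grows linearly.

```python
from math import comb


def logical_error_rate(p: float, d: int) -> float:
    """Probability that majority-vote decoding fails for a distance-d repetition code.

    Assumes independent bit flips with probability p on each of the d physical
    qubits; decoding fails when more than half of them flip.
    """
    if d % 2 == 0:
        raise ValueError("use an odd distance so majority vote is unambiguous")
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(d // 2 + 1, d + 1))


if __name__ == "__main__":
    p = 0.01  # hypothetical physical bit-flip probability
    for d in (1, 3, 5, 7):
        print(f"d={d}: {d} physical qubits, logical error {logical_error_rate(p, d):.2e}")
```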

The rapidly evolving nature of quantum hardware creates a moving target for benchmarking standards. Hardware improvements often outpace the development of benchmarking methodologies, resulting in metrics that quickly become obsolete or irrelevant. This rapid evolution challenges the establishment of enduring performance standards.

Verification challenges also persist, as classical computers cannot efficiently simulate large quantum systems, making it difficult to verify the correctness of quantum computation results beyond certain scales. This verification gap undermines confidence in benchmark results for larger quantum systems.

Cross-platform comparability remains elusive due to varying qubit connectivity, gate fidelities, and coherence times across different quantum computing architectures. These hardware-specific characteristics make direct performance comparisons problematic and potentially misleading without appropriate normalization techniques.

Established Quantum Computing Benchmarking Methodologies

01 Quantum Computing Performance Benchmarking Methods

Various methods and systems for benchmarking quantum computing performance have been developed to evaluate and compare different quantum computing models. These benchmarking techniques involve standardized tests that measure factors such as quantum volume, error rates, coherence times, and computational efficiency. The benchmarks help in assessing the practical capabilities of quantum processors and provide metrics for comparing different quantum computing architectures.

- Quantum Computing Performance Benchmarking Methods: Standardized tests of quantum volume, error rates, coherence times, and computational efficiency give researchers consistent metrics for objectively assessing the capabilities of different quantum processors and architectures, enabling better comparison between competing quantum computing technologies.

- Quantum Circuit Optimization and Simulation: Techniques for optimizing quantum circuits and simulating quantum computing models have been developed to enhance performance benchmarking. These approaches involve methods for reducing gate complexity, minimizing error propagation, and efficiently simulating quantum algorithms on classical computers. By optimizing quantum circuits and creating accurate simulation environments, researchers can better predict and evaluate the performance of quantum computing models before implementation on actual quantum hardware.

- Quantum Error Correction and Mitigation: Error correction and mitigation techniques are crucial for benchmarking quantum computing models, as quantum systems are highly susceptible to noise and decoherence. These methods involve identifying, characterizing, and compensating for errors that occur during quantum computation. Advanced error correction codes and mitigation strategies enable more accurate performance assessment by distinguishing between the theoretical capabilities of quantum computing models and their practical implementation limitations.

- Quantum-Classical Hybrid Computing Benchmarks: Hybrid quantum-classical computing approaches combine quantum processors with classical computing resources to leverage the strengths of both paradigms. Benchmarking these hybrid models requires specialized metrics that evaluate how effectively the quantum and classical components interact and complement each other. These benchmarks assess factors such as data transfer efficiency, workload distribution optimization, and overall computational advantage compared to purely classical or purely quantum approaches.

- Industry-Specific Quantum Computing Benchmarks: Industry-specific benchmarks have been developed to evaluate quantum computing models for particular application domains such as finance, cryptography, materials science, and drug discovery. These specialized benchmarks assess how well different quantum computing approaches solve problems relevant to specific industries. By focusing on real-world use cases rather than abstract performance metrics, these benchmarks provide practical insights into which quantum computing models are most suitable for particular business and scientific applications.
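Zero-noise extrapolation (ZNE) is one widely used mitigation technique of the kind described above: the same circuit is run at deliberately amplified noise levels (for example by gate folding), and the measured expectation values are extrapolated back to zero noise. The minimal sketch below assumes a linear dependence on the noise scale factor and uses made-up expectation values purely for illustration; the function name is hypothetical, and other extrapolation models, such as Richardson or exponential fits, are also common.

```python
def linear_zero_noise_extrapolation(scales, values):
    """Fit values = a + b * scale by least squares and return a, the scale-0 estimate."""
    n = len(scales)
    if n < 2 or n != len(values):
        raise ValueError("need at least two (scale, value) pairs")
    mean_s = sum(scales) / n
    mean_v = sum(values) / n
    spread = sum((s - mean_s) ** 2 for s in scales)
    if spread == 0:
        raise ValueError("noise scale factors must not all be equal")
    slope = sum((s - mean_s) * (v - mean_v) for s, v in zip(scales, values)) / spread
    return mean_v - slope * mean_s


if __name__ == "__main__":
    # Hypothetical <Z> values measured at noise scale factors 1, 2, and 3.
    print(linear_zero_noise_extrapolation([1, 2, 3], [0.8, 0.6, 0.4]))
```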

02 Quantum Error Correction and Mitigation Techniques

Error correction and mitigation techniques are essential for improving the reliability of quantum computing models. These approaches include stabilizer codes, such as the surface code, and error detection algorithms that help identify and correct quantum errors resulting from decoherence and noise. Benchmarking these error correction methods involves measuring their effectiveness in preserving quantum information and extending the useful computation time of quantum systems.

03 Quantum-Classical Hybrid Computing Benchmarks

Hybrid quantum-classical computing models combine quantum processors with classical computing resources to leverage the strengths of both paradigms. Benchmarking these hybrid systems involves evaluating how effectively they partition computational tasks between quantum and classical components, measuring speedup over purely classical approaches, and assessing resource efficiency. These benchmarks are particularly important for near-term quantum applications where classical pre-processing and post-processing play significant roles.

04 Quantum Algorithm Performance Evaluation

Benchmarking the performance of quantum algorithms across different quantum computing models is crucial for understanding their practical utility. This involves measuring execution time, resource requirements, and solution quality for algorithms such as quantum phase estimation, quantum approximate optimization, and quantum machine learning. These benchmarks help identify which quantum computing architectures are best suited for specific algorithmic applications and guide the development of more efficient quantum software.

05 Quantum Hardware Architecture Comparison

Different quantum computing hardware architectures, including superconducting qubits, trapped ions, photonic systems, and topological qubits, require specialized benchmarking approaches. These benchmarks evaluate architecture-specific metrics such as qubit connectivity, gate fidelity, scalability potential, and operational stability. Comparative analysis of these metrics helps identify the strengths and limitations of each hardware approach and guides future quantum computer design.
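Connectivity is one of the architecture-specific metrics listed above. A crude way to compare coupling maps is the average number of SWAP gates needed to bring an arbitrary pair of qubits next to each other, approximated here as shortest-path distance minus one. This is a hypothetical proxy for illustration, not an established benchmark, and the function names are invented for the sketch; real compilers route whole circuits and usually do better than this pairwise estimate. The example compares a five-qubit linear chain with an all-to-all layout of the kind small trapped-ion systems offer.

```python
from collections import deque
from itertools import combinations


def shortest_paths(n, edges, source):
    """Breadth-first search distances from `source` on an undirected coupling map."""
    adj = {q: set() for q in range(n)}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    dist = {source: 0}
    queue = deque([source])
    while queue:
        q = queue.popleft()
        for nb in adj[q]:
            if nb not in dist:
                dist[nb] = dist[q] + 1
                queue.append(nb)
    return dist


def mean_swap_overhead(n, edges):
    """Average SWAPs (distance - 1) to make two qubits adjacent, over all pairs.

    Assumes the coupling map is connected.
    """
    pairs = list(combinations(range(n), 2))
    total = sum(shortest_paths(n, edges, a)[b] - 1 for a, b in pairs)
    return total / len(pairs)


line = [(i, i + 1) for i in range(4)]        # 0-1-2-3-4
all_to_all = list(combinations(range(5), 2))  # every pair directly coupled

if __name__ == "__main__":
    print("linear chain:", mean_swap_overhead(5, line))
    print("all-to-all:", mean_swap_overhead(5, all_to_all))
```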

Leading Organizations in Quantum Benchmarking Research

The quantum computing benchmarking landscape is evolving rapidly as the market moves from early research toward commercial applications. Estimates of the sector's current size vary widely, from roughly $50 million to approximately $500 million, and it is expected to grow significantly as quantum advantage becomes achievable. Leading players include established technology companies (Google, IBM, Alibaba) developing comprehensive benchmarking frameworks, specialized quantum companies (D-Wave, Rigetti, Zapata, IQM, Quantinuum) focusing on hardware-specific metrics, and academic institutions (Tsinghua University, Johns Hopkins, Beihang University) contributing foundational research. Technical maturity varies widely: standardized benchmarks are still emerging, and companies are competing to establish their own metrics as industry standards, particularly for NISQ-era (noisy intermediate-scale quantum) devices and application-specific performance measurements.

Google LLC

Technical Solution: Google's quantum benchmarking approach centers on its Quantum AI program and the Sycamore processor. Its methodology includes Quantum Volume (QV) measurements, quantum supremacy experiments, and the development of application-specific benchmarks. Google introduced cross-entropy benchmarking to validate quantum advantage claims; in 2019 it reported that its 53-qubit processor completed a specific sampling task in about 200 seconds that, by Google's estimate, would take a classical supercomputer 10,000 years[1]. Its benchmarking suite includes randomized benchmarking protocols to measure gate fidelities and error rates across different qubit configurations. Related protocols such as cycle benchmarking, which characterizes the error of entire layers ("cycles") of gates rather than individual gates, provide standardized metrics for comparing quantum hardware implementations across different architectures[2].

Strengths: Industry-leading quantum supremacy demonstrations with well-documented benchmarking methodologies; robust statistical validation techniques for quantum advantage claims. Weaknesses: Benchmarks often optimized for Google's specific quantum architecture; some controversy around quantum supremacy claims when compared to optimized classical algorithms.

International Business Machines Corp.

Technical Solution: IBM's quantum benchmarking framework revolves around their Qiskit platform and comprehensive performance metrics. Their approach includes Quantum Volume as a holistic hardware benchmark (introduced in 2019), measuring the maximum size of square quantum circuits that can be successfully implemented[3]. IBM developed randomized benchmarking protocols to characterize gate errors and coherence times across their quantum processors. Their benchmarking suite includes application-specific benchmarks for chemistry, optimization, and machine learning workloads. IBM's quantum benchmark methodology incorporates error mitigation techniques and circuit optimization to provide realistic performance assessments. They maintain a public quantum hardware roadmap with performance targets and regularly publish benchmark results for their quantum processors, allowing transparent comparison across generations of devices[4]. IBM also pioneered the Circuit Layer Operations Per Second (CLOPS) metric to measure circuit execution throughput on quantum systems.
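The Quantum Volume test described above can be sketched as follows. For each width n, random square circuits (n qubits, depth n) are run; an output bitstring is "heavy" if its ideal probability exceeds the median of the ideal distribution, and width n passes if the mean heavy-output probability exceeds 2/3 with a two-sigma margin. The sketch assumes the ideal probabilities come from a classical simulator and that shot counts are already collected; the function names are hypothetical, and the simple binomial σ estimate is an assumption of this sketch rather than IBM's exact statistical procedure, so the Qiskit ecosystem's own implementation should be preferred in practice.

```python
import math
from statistics import median


def heavy_output_probability(ideal_probs, counts):
    """Fraction of measured shots that landed on heavy outputs.

    ideal_probs: dict bitstring -> ideal probability, covering all 2**n outcomes.
    counts: dict bitstring -> observed shot count.
    """
    threshold = median(ideal_probs.values())
    heavy = {b for b, p in ideal_probs.items() if p > threshold}
    shots = sum(counts.values())
    return sum(c for b, c in counts.items() if b in heavy) / shots


def width_passes(hops):
    """Mean heavy-output probability above 2/3 with a two-sigma margin."""
    n = len(hops)
    mean = sum(hops) / n
    sigma = math.sqrt(mean * (1 - mean) / n)  # simplified binomial estimate (assumption)
    return mean - 2 * sigma > 2 / 3


def quantum_volume(hops_by_width):
    """Return 2**n for the largest width n whose square circuits pass, else 1."""
    passing = [n for n, hops in hops_by_width.items() if width_passes(hops)]
    return 2 ** max(passing) if passing else 1


if __name__ == "__main__":
    ideal = {"00": 0.4, "01": 0.3, "10": 0.2, "11": 0.1}  # toy distribution
    print(heavy_output_probability(ideal, {"00": 45, "01": 30, "10": 15, "11": 10}))
```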

Strengths: Comprehensive benchmarking ecosystem with open-source tools; industry-standard metrics like Quantum Volume widely adopted; transparent reporting of results across processor generations. Weaknesses: Some metrics favor IBM's superconducting qubit architecture; benchmarks may not fully translate to other quantum computing paradigms like trapped ions or photonic systems.

Key Quantum Benchmarking Protocols and Frameworks

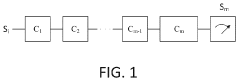

Method and system for fully randomized benchmarking for quantum circuits

Patent pending: JP2024516483A

Innovation

- A fully randomized benchmarking method that involves generating sequences of random unitary quantum gates with restoration gates to ensure equivalence to an identity operator, allowing for the measurement of qubit states before and after gate sequences to determine fidelity, thereby separating contributions from qubit decoherence and SPAM errors.
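The patent's fully randomized variant is not reproduced here. For orientation, the sketch below implements standard single-qubit Clifford randomized benchmarking, the baseline such methods build on: random Clifford sequences of length m are followed by a recovery gate that inverts them, and the survival probability of |0> is fit to a decay A·p^m + B. Assumptions, all hypothetical: a depolarizing noise channel with parameter 0.99 after every gate, exact density-matrix simulation instead of sampled shots, and no SPAM error, so B = 1/2 and the fit reduces to a straight line in log(P - 1/2). The average error per Clifford then follows as r = (1 - p)(d - 1)/d with d = 2.

```python
import math
import random

# 2x2 complex matrices are represented as nested tuples ((a, b), (c, d)).


def matmul(a, b):
    return tuple(
        tuple(sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


def dagger(a):
    return tuple(tuple(a[j][i].conjugate() for j in range(2)) for i in range(2))


def phase_key(u):
    """Hashable key identifying u up to a global phase."""
    flat = [u[0][0], u[0][1], u[1][0], u[1][1]]
    pivot = next(x for x in flat if abs(x) > 1e-9)
    phase = pivot / abs(pivot)
    return tuple((round((x / phase).real, 6), round((x / phase).imag, 6)) for x in flat)


def clifford_group():
    """Enumerate the 24 single-qubit Cliffords (mod phase) generated by H and S."""
    s2 = 1 / math.sqrt(2)
    gens = [((s2, s2), (s2, -s2)), ((1, 0), (0, 1j))]
    identity = ((1, 0), (0, 1))
    group = {phase_key(identity): identity}
    frontier = [identity]
    while frontier:
        new = []
        for u in frontier:
            for g in gens:
                v = matmul(g, u)
                key = phase_key(v)
                if key not in group:
                    group[key] = v
                    new.append(v)
        frontier = new
    return list(group.values())


def noisy_gate(rho, u, p):
    """Apply u, then a depolarizing channel rho -> p*rho + (1 - p)*I/2."""
    rho = matmul(matmul(u, rho), dagger(u))
    return tuple(
        tuple(p * rho[i][j] + (1 - p) * (0.5 if i == j else 0.0) for j in range(2))
        for i in range(2)
    )


def survival_probability(sequence, p):
    """Run the sequence plus its recovery gate on |0> and return P(measure 0)."""
    rho = ((1, 0), (0, 0))
    net = ((1, 0), (0, 1))
    for u in sequence:
        rho = noisy_gate(rho, u, p)
        net = matmul(u, net)
    rho = noisy_gate(rho, dagger(net), p)  # recovery gate inverts the whole sequence
    return rho[0][0].real


def fit_decay(lengths, survivals):
    """Least-squares slope of log(P - 1/2) versus m; returns the decay parameter p."""
    ys = [math.log(s - 0.5) for s in survivals]
    mx = sum(lengths) / len(lengths)
    my = sum(ys) / len(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(lengths, ys)) / sum(
        (x - mx) ** 2 for x in lengths
    )
    return math.exp(slope)


def run_rb(p=0.99, lengths=(1, 5, 10, 20, 50), samples=20, seed=7):
    rng = random.Random(seed)
    cliffords = clifford_group()
    means = []
    for m in lengths:
        runs = [
            survival_probability([rng.choice(cliffords) for _ in range(m)], p)
            for _ in range(samples)
        ]
        means.append(sum(runs) / samples)
    decay = fit_decay(list(lengths), means)
    error_per_clifford = (1 - decay) / 2  # r = (1 - p)(d - 1)/d with d = 2
    return len(cliffords), decay, error_per_clifford


if __name__ == "__main__":
    print(run_rb())
```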

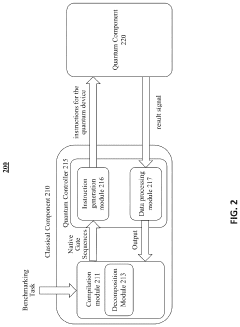

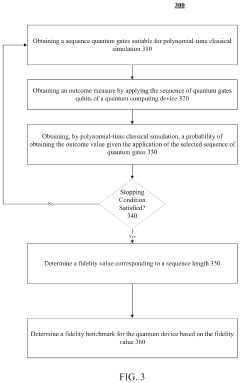

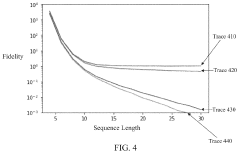

Polynomial-time linear cross-entropy benchmarking

Patent pending: US20230385679A1

Innovation

- Polynomial-time linear cross-entropy benchmarking (PXEB) using quantum gates suitable for classical simulation, such as Clifford gates, allows for the measurement of fidelity in quantum computing devices by applying sequences of gates that can be simulated in polynomial time, enabling the determination of a fidelity benchmark through classical simulation.
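The linear cross-entropy score at the heart of XEB-style benchmarks (including, by its name, PXEB) is computed from the ideal probabilities of the bitstrings a device actually returns: F_XEB = 2^n · mean(P_ideal(x_i)) - 1. The sketch below takes those ideal probabilities as an input dictionary; in PXEB they would come from an efficient classical (for example Clifford) simulation, which is not shown. The score's usual interpretation assumes Porter-Thomas-like output distributions from random circuits, where an ideal device scores close to 1 and a device emitting uniformly random bitstrings scores about 0. The function name and the toy one-qubit distribution are illustrative only.

```python
def linear_xeb_fidelity(ideal_probs, samples, n_qubits):
    """F_XEB = 2**n * mean(P_ideal(x_i)) - 1 over the measured bitstrings x_i.

    ideal_probs: dict bitstring -> ideal probability from a classical simulation.
    samples: list of bitstrings returned by the device.
    """
    mean_p = sum(ideal_probs.get(x, 0.0) for x in samples) / len(samples)
    return (2**n_qubits) * mean_p - 1


if __name__ == "__main__":
    ideal = {"0": 0.75, "1": 0.25}  # toy one-qubit distribution
    print(linear_xeb_fidelity(ideal, ["0", "1"], 1))  # 0.0 (uniform-looking samples)
    print(linear_xeb_fidelity(ideal, ["0", "0", "0", "1"], 1))  # 0.25
```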

Standardization Efforts in Quantum Benchmarking

The quantum computing industry has recognized the critical need for standardized benchmarking methodologies to enable meaningful comparisons between different quantum systems and algorithms. Several significant standardization initiatives have emerged in recent years, spearheaded by both industry consortia and academic institutions. The IEEE Quantum Computing Performance Metrics & Performance Benchmarking group has been developing standards for quantum volume, quantum computing architecture stack, and benchmarking methodologies since 2017, providing a foundation for consistent evaluation practices.

The Quantum Economic Development Consortium (QED-C), established in 2018, has formed dedicated working groups focused on creating industry-wide standards for performance metrics and benchmarking protocols. Their efforts aim to establish common terminology and measurement approaches that can be universally adopted across the quantum computing ecosystem.

Academic institutions have also made substantial contributions to standardization efforts. The Quantum Benchmarking collaboration between multiple universities has proposed the Quantum Performance Assessment Framework (QPAF), which offers a structured approach to evaluating quantum systems across various performance dimensions. This framework has gained traction among researchers seeking comprehensive evaluation methods.

In the commercial sector, IBM's Quantum Volume metric has emerged as a de facto standard for measuring the capability of quantum computers. This metric considers both the number of qubits and their quality, providing a single value that represents overall system performance. Google's Quantum Supremacy experiments have similarly established benchmarks for comparing classical and quantum computational capabilities.

International standards organizations have begun incorporating quantum benchmarking into their portfolios. ISO/IEC JTC 1, the joint technical committee of the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC), set up a working group on quantum computing in 2020, which is developing standards for quantum software quality and performance evaluation. Similarly, the National Institute of Standards and Technology (NIST) in the United States has launched initiatives to standardize quantum benchmarking methodologies.

These standardization efforts face significant challenges, including the rapid evolution of quantum hardware, the diversity of qubit technologies, and the varying requirements of different quantum applications. Despite these challenges, the convergence toward common benchmarking frameworks represents a crucial step in the maturation of quantum computing as a field, enabling more transparent evaluation and comparison of quantum systems across the industry.

Cross-Platform Quantum Benchmark Compatibility

One of the most significant challenges in quantum computing research is ensuring that benchmarks can be effectively applied across different quantum computing platforms. The diversity of quantum hardware architectures, from superconducting qubits to trapped ions, photonic systems, and topological qubits, creates substantial compatibility issues when attempting to establish standardized performance metrics. Each platform operates under different physical principles and exhibits unique error characteristics, making direct comparison problematic without carefully designed cross-platform benchmarking protocols.

The quantum computing ecosystem currently lacks universally accepted standards for cross-platform benchmarking, unlike classical computing, which benefits from established benchmarks such as SPEC and LINPACK. This absence hampers meaningful comparison between quantum systems from different vendors or research groups. Researchers must navigate proprietary benchmarks that often emphasize the strengths of specific platforms while downplaying their weaknesses, creating a fragmented evaluation landscape that impedes objective assessment.

Hardware-agnostic benchmarking frameworks have emerged as a promising solution to this challenge. Quantum Volume, developed by IBM, runs random square circuits (equal width and depth) and reports 2^n for the largest width n at which the system still produces "heavy" outputs (those more likely than the median under ideal simulation) more than two-thirds of the time. It therefore reflects qubit count, error rates, connectivity, and compiler quality together rather than qubit count alone. Similarly, the performance of the Quantum Approximate Optimization Algorithm (QAOA) across different problem instances serves as a functional benchmark that can be implemented across platforms. These approaches focus on computational capability rather than underlying hardware specifics.
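
The heavy-output test at the core of Quantum Volume can be sketched in a few functions. This is a simplified illustration, not IBM's implementation: it assumes the ideal output probabilities of each model circuit and the measured counts are already available as dictionaries, and it uses a two-sigma pass criterion (mean heavy-output probability minus two standard errors, with the standard error estimated as sqrt(h(1-h)/N) over N circuits) in simplified form. All function names and input formats are hypothetical.

```python
import math
import statistics


def heavy_outputs(ideal_probs):
    """Bitstrings whose ideal probability is strictly above the median."""
    median = statistics.median(ideal_probs.values())
    return {x for x, p in ideal_probs.items() if p > median}


def heavy_output_probability(ideal_probs, counts):
    """Fraction of measured shots that landed on heavy outputs."""
    heavy = heavy_outputs(ideal_probs)
    shots = sum(counts.values())
    return sum(c for x, c in counts.items() if x in heavy) / shots


def passes_qv_test(hops, threshold=2 / 3, z=2.0):
    """Simplified pass criterion: mean heavy-output probability minus z
    standard errors (sqrt(h(1-h)/N) over N circuits) must exceed 2/3."""
    mean = statistics.mean(hops)
    stderr = math.sqrt(mean * (1 - mean) / len(hops))
    return mean - z * stderr > threshold


def quantum_volume(hops_by_width):
    """hops_by_width maps width n -> heavy-output probabilities measured on
    n-qubit, depth-n random model circuits. Returns 2**n for the largest
    passing width (1 if none pass)."""
    passing = [n for n, hops in hops_by_width.items() if passes_qv_test(hops)]
    return 2 ** max(passing) if passing else 1


if __name__ == "__main__":
    ideal = {"00": 0.4, "01": 0.3, "10": 0.2, "11": 0.1}  # toy ideal distribution
    counts = {"00": 40, "01": 30, "10": 20, "11": 10}     # illustrative counts
    print(heavy_output_probability(ideal, counts))        # 0.7
    # Synthetic heavy-output probabilities, not measured results.
    print(quantum_volume({2: [0.85] * 200, 3: [0.80] * 200, 4: [0.60] * 200}))  # 8
```

Because the test only needs ideal probabilities and measured bitstrings, the same procedure applies to any gate-based platform, although the circuits must first be compiled to each device's native gates.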

Circuit layer operations per second (CLOPS), also introduced by IBM, represents another attempt at cross-platform compatibility: it measures how many layers of Quantum Volume-style circuits a system can execute per second, including classical overheads such as compilation and data transfer, and can in principle be measured on any underlying technology. However, translation between different native gate sets remains problematic, as each platform has optimized operations that may not have direct equivalents on other systems, potentially skewing performance comparisons.
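
As IBM proposed it, CLOPS counts layers from parameterized Quantum Volume circuits: M circuit templates, each re-run with K parameter updates and S shots, with D = log2(QV) layers per circuit, divided by the total wall-clock time. The sketch below encodes that arithmetic. The defaults (M = 100, K = 10, S = 100) reflect that proposal as we understand it and are exposed as parameters; the helper itself is hypothetical and not part of any SDK, and the 250-second timing in the example is made up for illustration.

```python
def clops(total_seconds, quantum_volume, templates=100, updates=10, shots=100):
    """Circuit layer operations per second (hypothetical helper):
    CLOPS = M * K * S * D / total_seconds, where D = log2(QV) is the number of
    QV layers per circuit, M the number of circuit templates, K the parameter
    updates per template and S the shots per circuit."""
    if quantum_volume < 2 or quantum_volume & (quantum_volume - 1):
        raise ValueError("quantum_volume must be a power of two >= 2")
    layers = quantum_volume.bit_length() - 1  # exact log2 for a power of two
    return templates * updates * shots * layers / total_seconds


if __name__ == "__main__":
    # Hypothetical run: QV 128 (7 layers per circuit) finishing in 250 s.
    print(clops(250.0, 128))  # 2800.0
```

Because the denominator is end-to-end wall-clock time, CLOPS rewards fast classical control and compilation as well as fast gates. The layer count still comes from Quantum Volume, however, so CLOPS inherits QV's dependence on how well circuits map onto each platform's native gates.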

The abstraction layers in quantum programming frameworks such as Qiskit, Cirq, and PyQuil have improved cross-platform compatibility by letting researchers write largely platform-agnostic circuit code. Their compilers (often called transpilers) translate circuits into platform-specific native instructions, though with varying levels of optimization efficiency. Further advances in this middleware will be crucial for establishing truly comparable benchmarks across the quantum computing landscape.
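
The translation step these frameworks automate can be illustrated with the textbook identity CNOT = (I ⊗ H) · CZ · (I ⊗ H), which lets a CNOT run on hardware whose native two-qubit gate is CZ. The sketch below verifies the identity numerically with plain Python matrices and applies it as a naive rewrite rule to a toy gate list. The tuple-based circuit format and all helper names are hypothetical; production compilers in frameworks like Qiskit or Cirq also optimize the result, for example by cancelling adjacent Hadamards.

```python
import math

INV_SQRT2 = 1 / math.sqrt(2)
H = [[INV_SQRT2, INV_SQRT2], [INV_SQRT2, -INV_SQRT2]]  # Hadamard
I2 = [[1, 0], [0, 1]]                                    # 1-qubit identity
CZ = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, -1]]
CNOT = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0]]  # control = first qubit


def kron(a, b):
    """Kronecker (tensor) product of two matrices given as nested lists."""
    return [[a[i][j] * b[k][m] for j in range(len(a[0])) for m in range(len(b[0]))]
            for i in range(len(a)) for k in range(len(b))]


def matmul(a, b):
    """Plain matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def allclose(a, b, tol=1e-12):
    """Element-wise comparison within a small floating-point tolerance."""
    return all(abs(x - y) < tol for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def cnot_via_cz():
    """(I ⊗ H) · CZ · (I ⊗ H): Hadamards on the target turn CZ into CNOT."""
    ih = kron(I2, H)
    return matmul(ih, matmul(CZ, ih))


def translate_to_cz(circuit):
    """Naive rewrite of a gate list into a {single-qubit, CZ} native set.
    Gates are hypothetical tuples: ("h", q) or ("cx", control, target)."""
    out = []
    for gate in circuit:
        if gate[0] == "cx":
            _, control, target = gate
            out += [("h", target), ("cz", control, target), ("h", target)]
        else:
            out.append(gate)
    return out


if __name__ == "__main__":
    print(allclose(cnot_via_cz(), CNOT))  # True
    circuit = [("h", 0), ("cx", 0, 1), ("cx", 1, 2)]
    native = translate_to_cz(circuit)
    print(len(circuit), len(native))  # 3 7
```

Even this trivial rewrite raises the gate count from 3 to 7 while leaving the two-qubit gate count at 2, which is one reason raw gate-count or depth figures are hard to compare across platforms with different native gate sets.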

Organizations such as the IEEE, through its P7131 project on quantum computing performance metrics and benchmarking, and the Quantum Economic Development Consortium (QED-C) are working toward industry-wide benchmarking protocols. These initiatives aim to create technology-neutral metrics that evaluate quantum systems fairly regardless of their underlying architecture, enabling researchers and industry stakeholders to make informed decisions about which quantum computing platforms best suit their specific computational needs.
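
A simple example of a technology-neutral metric is the Hellinger fidelity between a benchmark circuit's ideal output distribution p and the distribution q a device actually produces, F = (sum over x of sqrt(p(x) q(x)))^2. It depends only on measured bitstring counts, not on the qubit technology. The sketch below computes it, plus a normalized variant that maps a uniformly random device to 0 and a perfect one to 1, in the spirit of the normalization described for QED-C's application-oriented benchmarks. The exact normalization formula and all function names here are assumptions for illustration, and the noisy counts are invented, not measured data.

```python
import math


def hellinger_fidelity(p, q):
    """Squared Bhattacharyya coefficient: (sum_x sqrt(p(x) * q(x)))**2."""
    keys = set(p) | set(q)
    bc = sum(math.sqrt(p.get(x, 0.0) * q.get(x, 0.0)) for x in keys)
    return bc ** 2


def counts_to_probs(counts):
    """Convert shot counts {bitstring: count} to probabilities."""
    shots = sum(counts.values())
    return {x: c / shots for x, c in counts.items()}


def normalized_fidelity(ideal, measured_counts, n_qubits):
    """Rescale Hellinger fidelity so uniform noise scores 0 and a perfect match 1.

    Assumed normalization: (F - F_uni) / (1 - F_uni), clipped at 0, where F_uni
    is the fidelity of the uniform distribution against the ideal one.
    """
    measured = counts_to_probs(measured_counts)
    dim = 2 ** n_qubits
    uniform = {format(i, f"0{n_qubits}b"): 1 / dim for i in range(dim)}
    f = hellinger_fidelity(ideal, measured)
    f_uni = hellinger_fidelity(ideal, uniform)
    if math.isclose(f_uni, 1.0):
        raise ValueError("ideal distribution is uniform; normalization undefined")
    return max(0.0, (f - f_uni) / (1 - f_uni))


if __name__ == "__main__":
    bell = {"00": 0.5, "11": 0.5}  # ideal Bell-state output distribution
    noisy = {"00": 460, "01": 25, "10": 30, "11": 485}  # invented counts, not real data
    print(round(hellinger_fidelity(bell, counts_to_probs(noisy)), 3))
    print(round(normalized_fidelity(bell, noisy, 2), 3))
```

Normalizing against the uniform distribution matters because raw Hellinger fidelity is never zero for random output (it is 0.5 for the Bell state above), which would otherwise flatter noisy devices on small circuits.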
